1. Introduction

With the evolution of airborne LiDAR technology, numerous studies have been conducted on the use of airborne LiDAR height and intensity data for land cover classification [

1,

2,

3,

4]. Initial studies have combined LiDAR-derived height surfaces in the format of the Digital Surface Model (DSM) or the normalized Digital Surface Model (nDSM) with multispectral aerial/satellite imagery [

5,

6]. Other investigations have combined multispectral aerial/satellite imagery with LiDAR height and intensity data [

7,

8,

9]. Since most of the previous studies converted either LiDAR intensity or height data into 2D images, typical LiDAR images such as intensity [

2,

3,

4,

7,

8], multiple returns [

2,

7], DSM, the Digital Terrain Model (DTM) [

2,

4] and nDSM [

5,

6,

8] were created. Furthermore, when the LiDAR intensity data were combined with multispectral aerial/satellite imagery, the NDVI was used [

5,

6,

8]. Traditional supervised pixel-based classification techniques such as maximum likelihood [

5,

9], rule-based classification [

2,

3,

8] and the Gaussian mixture model [

7] were applied. Other studies accounted for the spatial coherence of different objects to avoid the noises in the pixel-based classification results by using object-orientated classification techniques [

4,

6].

Brennan and Webster [

2] used a rule-based classification approach for segmenting and classifying five bands, which were created from LiDAR data, into land cover classes. The five bands include DSM, digital terrain model, intensity, multiple returns and normalized height. The image pixels were first segmented into objects by applying threshold values on mean intensity, the standard deviation of intensity, mean DSM, mean normalized height and mean multiple returns. The segmentation process included four levels, where the image objects were separated in the first level into three classes, namely water, low land and elevated objects. The elevated objects were further discriminated into trees and buildings. The low land objects were separated into additional classes, such as low vegetation, roads and intertidal, until the image objects were segmented into ten classes by the fourth level. The overall classification accuracy was 94% and 98% for ten and seven classes, respectively. However, this study relied mainly on the height of LiDAR data. In addition, the threshold values, which were used in the classification rules, were applied to the object’s mean values (e.g., mean intensity). That was a source of error, especially where building edges and ground returns formed one image object in the normalized height band. Furthermore, some dense coniferous trees exhibited single returns as they could not be penetrated by the laser beam. Those trees were misclassified as buildings because the separation of trees from buildings primarily relied on the multiple returns’ band.

In [

3], a sensitive analysis on eight different LiDAR-derived features from height and intensity for three different sites was exhibited. First, image objects segmentation was conducted based on five generated bands from the LiDAR returns, namely bare soil, first returns, last returns, height and intensity. Second, six features based on height, including mean, standard deviation, homogeneity, contrast, entropy and correlation, in addition to mean intensity and compactness were computed. Finally, a decision tree was used to classify the image objects into five land cover classes and achieved more than 90% overall classification accuracy. It should be pointed out that the classification process relied on three features, mean height, height standard deviation and mean intensity, while the use of other features did not improve the land cover classifications. This might be due to that the tested sites were not complex landscapes (e.g., no interference between trees and buildings). Furthermore, the intensity band was assigned a weight of 0.1 in the segmentation process, while other bands were assigned an equal weight of one. This is because the recorded intensity was manually adjusted during the data acquisition. As a result, the intensity data were inconsistent along the different flight lines, meaning that the intensity data were not sufficiently used in this study.

Antonarakis et al. [

4] used a supervised object-orientated approach to classify the terrain into nine classes. Eight LiDAR-derived bands, namely the canopy surface model, terrain model, vegetation height model (VHM), intensity model, intensity difference model, skewness model, kurtosis model and percentage canopy model, were first created. A decision tree was then applied in order to classify three urban area datasets. The used approach brought out more than 93% overall accuracy for the three datasets. However, two essential classes were not considered in the presented approach (roads and buildings), even though some buildings were present in one of the three investigated sites. The VHM was calculated by subtracting the digital terrain model (created from the last return) from the canopy surface model (created from the first return). Some last return values had higher elevations than the first pulse return due to noise in the LiDAR receiver, which affected the calculation of VHM. As a result, this approach could not accurately distinguish between the ground and canopy tops. Another source of error is resulted from the triangulated irregular network interpolation of the LiDAR points to create images, whereas high elevations were recorded on the river surface.

Other investigations have explored the use of LiDAR-derived height surfaces, such as the nDSM with multispectral imagery in land cover classification. Huang et al. [

5] incorporated LiDAR-derived nDSM with high-resolution RGB aerial image and near-infrared band imagery. A pixel-based classification method, maximum likelihood, was used to obtain four land cover classes, namely buildings, trees, roads and grass, and achieved an overall accuracy of up to 88.3%. The classification accuracy was further improved up to 93.9% using a knowledge-based classification and correction systems. This technique was based on a set of threshold values applied to the height, height difference, smoothness, anisotropic smoothness, intensity, NDVI, transformed vegetation index, area and shape in order to detect the four land classes. Chen et al. [

6] incorporated LiDAR-derived nDSM with Quick-Bird images in order to classify the terrain. First, two bands were derived from Quick-Bird imagery, namely the Normalized Difference Water Index and NDVI, and then combined with nDSM. Second, a hierarchical object-oriented classification method was used, which included image segmentation, and then, threshold values to image objects were applied. The hierarchical classification process achieved an overall accuracy of 89.4% for nine land classes. However, this method could not separate road from vacant land, as these objects exhibit similar spectral and elevation characteristics. Both of the aforementioned studies did not incorporate LiDAR intensity data in their research work. The optical images were resampled to coarser resolution to be consistent with the created LiDAR images that resulted in mixed pixels. These pixels presented more than one land cover and caused classification errors.

Other studies used multispectral imagery with LiDAR data (height and intensity) to take advantage of reflectivity variation from spectrum ranges (e.g., visible and Near Infrared (NIR)) of different land objects. Charaniya et al. [

7] generated four LiDAR bands from height, intensity, height variation, multiple returns and the luminance band, measured in the visible spectrum, from aerial imagery. A Gaussian mixture model was then used to model the training data of four classes, including roads, grass, buildings and trees. The model parameters and the posterior probabilities were estimated using the expectation-maximization algorithm. Subsequently, those parameters were used to classify the tested dataset and resulted in overall accuracy of 85%. The results demonstrated that the height variation played an important role in classification, where the worst results were obtained by excluding the height band. Furthermore, the overall accuracy was decreased by excluding the aerial imagery. The use of the difference of multiple returns improved the classification of roads and buildings. However, it decreased the classification accuracy of other terrain covers, because of the misclassification of the grass patches.

Hartfield et al. [

8] combined LiDAR data with a 1-m resolution multispectral aerial image. Two LiDAR bands, namely intensity and nDSM, were generated from the LiDAR data, and NDVI was derived from the multispectral aerial image. Classification and regression tree were tested on the number of band combinations. The combination of LiDAR nDSM, multispectral image and NDVI produced the highest overall accuracy of 89.2% for eight land cover classes. The shadow in the aerial image affected significantly the classification results. In addition, misclassification between the bare ground and herbaceous (grass) classes occurred due to the use of the intensity data. This is because the intensity data needed to be calibrated as reported in [

8]. Singh et al. [

9] combined Landsat Thematic Mapper (TM) imagery with LiDAR-derived bands, which included intensity, the canopy height model and nDSM. The maximum likelihood classifier was applied to classify land cover into six classes. A number of band combinations was tested considering different resolutions of TM imagery such as 1 m, 5 m, 10 m, 15 m and 30 m. Classification of 1-m resolution TM imagery combined with the three LiDAR bands brought out the highest overall accuracy of 85%. The classification results were affected by two main sources of errors; first, the LiDAR data gaps that contributed to misinterpretation when creating 2D LiDAR images; second, the LiDAR intensity data were not normalized to a standard range.

The effects of radiometric correction of LiDAR intensity data on land cover classification accuracies have been recently demonstrated. Radiometric correction aims to convert the recorded intensity data into the spectral reflectance of the land objects. Based on the radar (range) equation, system and environmental parameters were studied such as flying height, range, incidence angle, sensor aperture size and atmospheric attenuation, to correct the LiDAR intensity data [

10,

11]. By using the radiometrically-corrected LiDAR intensity data, the overall classification accuracies of urban areas were improved by 7.4% [

12], 9.4–12.8% [

11] and 3.8–16.5% [

13].

2. Historical Development of Multispectral LiDAR Systems

In the past few years, numerous attempts have been conducted towards multispectral LiDAR systems. Laboratory-based multispectral LiDAR systems have been developed to collect data at different wavelengths [

14,

15,

16]. Analysis of multispectral LiDAR data, collected from Terrestrial Laser Scanning (TLS) platforms, was conducted in order to retrieve the biophysical and/or biochemical vegetation parameters [

17,

18,

19,

20,

21]. A few attempts have been reported on multispectral airborne LiDAR, which uses various airborne LiDAR systems and combines different flight missions of the same study area [

22,

23,

24].

Laboratory-based multispectral LiDAR systems have been developed to collect data at wavelengths of 531, 550, 660 and 780 nm [

14] and 556, 670, 700 and 780 nm [

15], in order to measure the 3D structure of forest canopies. Shi et al. [

16] developed a calibration method for the backscatter intensity from a laboratory-based multispectral LiDAR systems operating at wavelengths of 556, 670, 700 and 780 nm. This method accounted for the incidence angle and surface roughness. After that, different vegetation indices were defined and explored, in order to improve the classification accuracy.

Other investigations used TLS platforms to collect multispectral LiDAR data. For instance, a dual-wavelength full-waveform TLS platform was developed by [

18], operating at two wavelengths (NIR: 1063 nm; mid-infrared (MIR): 1545 nm). The platform was used to record the full-waveform returned from the forest canopies to measure their three-dimensional structure. The Finnish Geodetic Institute developed a Hyperspectral LiDAR (HSL) system transmitting a continuous spectrum of 400–2500 nm [

19]. An outdoor experiment was performed using seven wavelength bands ranging from 500–980 nm in order to discriminate between man-made targets and vegetation based on their spectral response [

20]. Douglas et al. [

21] designed a portable ground-based full-waveform TLS operating at 1064- and 1548-nm wavelengths. The system was used to collect data in the Sierra Nevada National Forest. Subsequently, and based on that the leaves absorb more strongly at 1548 nm compared to stems, the leaves were discriminated from the woody materials.

For multispectral airborne LiDAR attempts, Briese et al. [

22] proposed a practical radiometric calibration workflow of multi-wavelength airborne LiDAR data. Their approach was based on full waveform observations (range, amplitude and echo width), flight trajectory and in situ reference targets. The datasets used in this study were acquired by three flight missions based on the same flight plan within three months. Three RIEGL sensors, namely VQ-820-G (532 nm), VQ-580 (1064 nm) and LMS-Q680i (1550 nm), were utilized as one sensor for each mission. Important observations related to this study can be summarized as follows. First, the RIEGL VQ-820-G was mainly designed to survey seabeds, rivers or lakes, where its scan pattern is an arc-like pattern on the ground. As a result, the data collected using this sensor covered a smaller area with a curved boundary, compared to the other two sensors, which produced linear and parallel scan lines. Second, the in situ measurements of reference targets, which were used in the radiometric calibration, were performed using different sensors under specific conditions (i.e., dry condition at zero angle of incidence). Third, the LiDAR data and the in situ measurements of reference targets were collected at different times (in different seasons from August–December). Thus, the surface conditions at the individual flight missions were not identical. Consequently, the calibrated intensity values were affected, such as the calibrated reflectance 1064-nm wavelength showing higher values than other sensors.

Briese et al. [

23] calibrated multi-wavelength airborne LiDAR data acquired using the aforementioned three RIEGL sensors. The LiDAR data were acquired by two flight missions (both with an aircraft equipped with two sensors) within a short time period (i.e., four days) to ensure more stable reflectance behavior of the study site at all wavelengths. The calibrated intensity data collected at 532 nm were quite dark, and also, the data acquired at 1064 nm was brighter compared to the other wavelengths. In this study, no classification process is reported. In addition, the different viewing angle of the RIEGL VQ-820-G with respect to the other two nadir-looking sensors produced LiDAR data with different boundaries. Generally, the surface conditions at the individual flight mission were not identical due to temporal surface changes, atmospheric conditions and the influence of moisture content [

23].

Wang et al. [

24] demonstrated the potential use of dual-wavelength full waveform LiDAR data for land cover classification. The LiDAR data were acquired by two laser sensors, Optech ALTM Pegasus HD400 (Teledyne Optech, Vaughan, ON, Canada) and RIEGL LMS-Q680i operating (RIEGL Laser Measurment Systems, Horn, Austria) at 1064 nm and 1550 nm, respectively. A radiometric correction model was first applied to the LiDAR data acquired from both sensors. The LiDAR points were then converted into spectral images with 1-m resolution and combined for subsequent processing. Three features were then derived from Optech and RIEGL sensors’ data, namely amplitude (intensity), echo width and surface height. Finally, a supervised classification algorithm, the support vector machine, was used to classify the terrain into six classes, including soil, low vegetation, road and gravel, high vegetation, building roofs and water. Different feature combinations were tested, and overall accuracies of 84.3–97.4% were achieved. The conversion of the 3D LiDAR points into 2D spectral images affected the canopy reflectance information in the spectral images by the objects under the canopy, where the canopy could not be separated from the understory vegetation and soil. This study considered the first return only, extracted from each full waveform, for processing. However, land covers such as trees, building roofs or low vegetation may reflect more than one return. Furthermore, when the RIEGL and Optech amplitude information was tested, the building roofs were not completely separated from soil or low vegetation. One possible reason is that the intensity data came from different missions conducted at different times. Thus, the weather and/or surface conditions change over time, and hence, the same object exhibits different intensity values. As a result, the surface height and echo width were considered the major features for land cover discrimination, while the amplitude information was complementary information [

24].

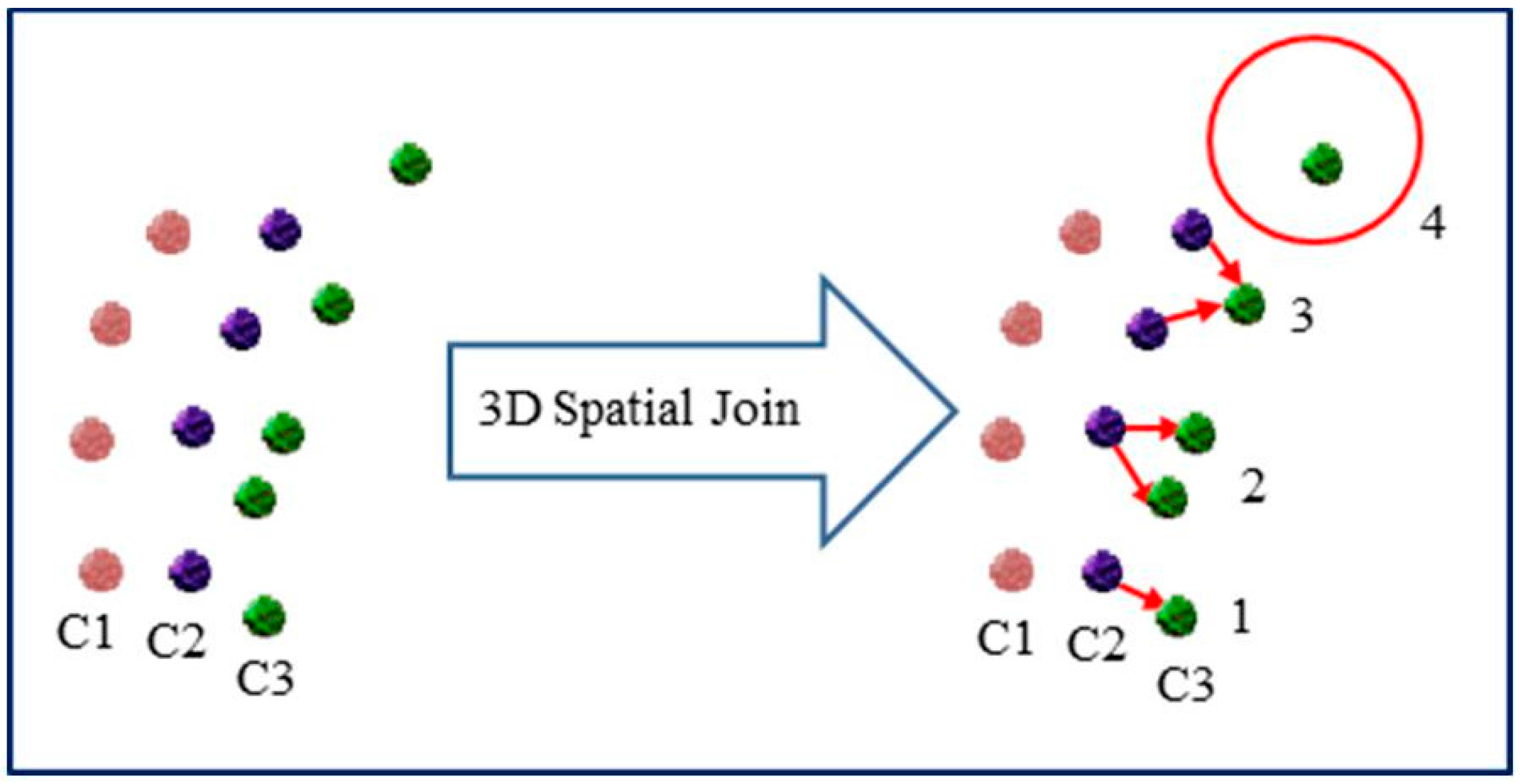

In 2014, Teledyne Optech (Vaughan, ON, Canada) developed the world’s first commercial airborne multispectral LiDAR sensor, which is known as the “Optech Titan”. The sensor offers the possibility of obtaining multispectral active data acquisition at day and night. This facilitates new applications and information extraction capabilities for LiDAR. The sensor operates simultaneously at three wavelengths and acquires point clouds in three channels with different looking angles, namely MIR (1550 nm) in C1 at 3.5° forward looking, NIR (1064 nm) in C2 at 0° nadir looking and green (532 nm) in C3 at 7° forward looking. Specifications of the Optech Titan sensor are provided in

Table 1 [

25].

Combining multispectral LiDAR data collected at three different wavelengths allows for a higher reliability and accuracy compared to the monochromatic wavelength LiDAR data. A few studies have been conducted on the use of multispectral LiDAR data collected by the Optech Titan for land cover classification. Wichmann et al. [

26] studied the spectral patterns of different classes and showed that the intensity values could potentially be used in land cover classification. Raster images were created from the LiDAR intensity and height data, and image classification techniques were then applied [

27].

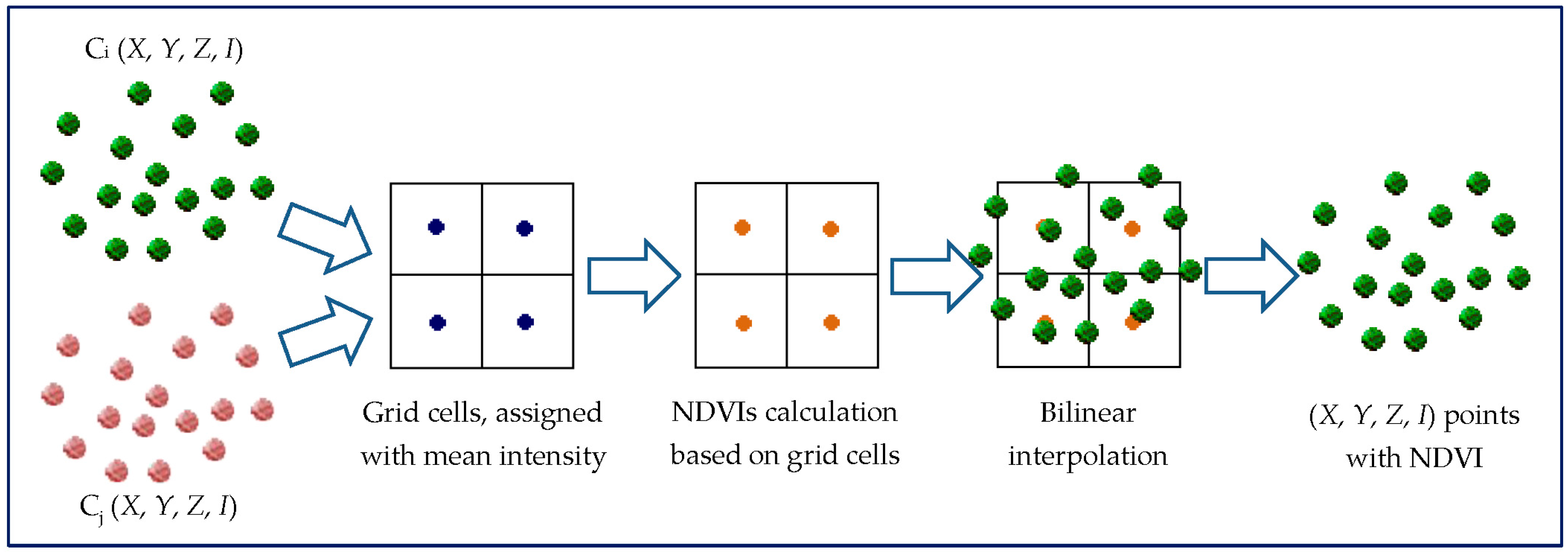

In a previous work conducted by the authors, the maximum likelihood classifier was applied to single intensity image, combined three-intensity images and combined three-intensity images with DSM [

28]. The overall accuracy of classifying the terrain into six classes was 65.5% when using the combined three-intensity images, compared to a 17% improvement when using single-intensity images. In addition, the overall classification accuracy was improved to 72.5% when using the combined three-intensity images with DSM. Moreover, we derived three spectral indices from the intensity values recorded in the three channels. The spectral indices were tested in an urban area and achieved an overall accuracy of up to 96% for separating the low and high vegetation from built-up areas [

29].

The combined use of LiDAR height and intensity data improved the results, in comparison with those obtained through either of the multispectral imagery alone or LiDAR height data (DSM) with high-resolution multispectral aerial/satellite imagery [

30]. Although LiDAR systems acquire 3D dense and accurate point clouds, most of the previous studies converted the 3D point clouds into 2D intensity and/or height images, so that image classification techniques can be applied. However, with such conversion, the data lose the third dimension (i.e., the z component), which leads to incomplete and potentially incorrect classification results. In this research work, we aim to present the capability of using multispectral airborne LiDAR data for land cover classification. The objectives of this study are: (1) explore the use of existing image classification techniques in classifying multispectral LiDAR data; (2) develop a method for merging multi-wavelengths LiDAR data; (3) develop an automated method for land cover classification from 3D multispectral LiDAR points; and (4) assess the effect of radiometric correction of multispectral LiDAR data on the land cover classification results. This paper is organized as follows: the methodology, including image- and point-based classification techniques, is explained in

Section 3;

Section 4 presents the study area and dataset; the land cover classification results are illustrated in

Section 5;

Section 6 discusses and analyzes the results, and finally, the conclusion is summarized in

Section 7.

4. Study Area and Dataset

The study area is located in Oshawa, Ontario, Canada. The Optech Titan multispectral LiDAR sensor was used to acquire LiDAR point for a single strip during a flight mission on 3 September 2014. Optech Titan acquired LiDAR points in three channels at 1075 m altitude, ±20° scan angle, 200 kHz/channel Pulse Repetition Frequency and 40-Hz scan frequency. The mean point density for each channel is 3.6/m2, with a point spacing of about 0.5 m. The acquired data consist of trajectory position data, as well as a time-tagged 3D point cloud with multiple returns (up to a maximum of 4 returns) in LASer file format (LAS) for each channel. The LAS data file contains xyz coordinates, raw intensity values, the scan angle and the GPS time of each LiDAR point.

A subset from the LiDAR strip was clipped with a dimension of 550 m by 380 m for testing. The study area covers a variety of land cover features on the ground such as buildings, roads, parking lots, shrubs, trees and open spaces with grass covered. The tested subset has 712,171, 763,507 and 595,387 points from C1, C2 and C3, respectively. The variation in the number of points recorded at different channels depends on the interaction of land objects with different wavelengths (e.g., greenness of the vegetation). An aerial image, captured simultaneously with the acquisition of the LiDAR data, was geo-referenced with the LiDAR data and was used to validate the land cover classification results, as shown in

Figure 5.

Since the 3D reference points are not available, a set of polygons was selected to extract the reference points for each class (i.e., buildings, trees, roads and grass). All points within a polygon drawn on classes, including roads, grass or buildings, were labeled as the same class, while the top layer of the trees class was used as reference points. The total number of points for the four classes was 45,618, distributed as shown in

Table 2.

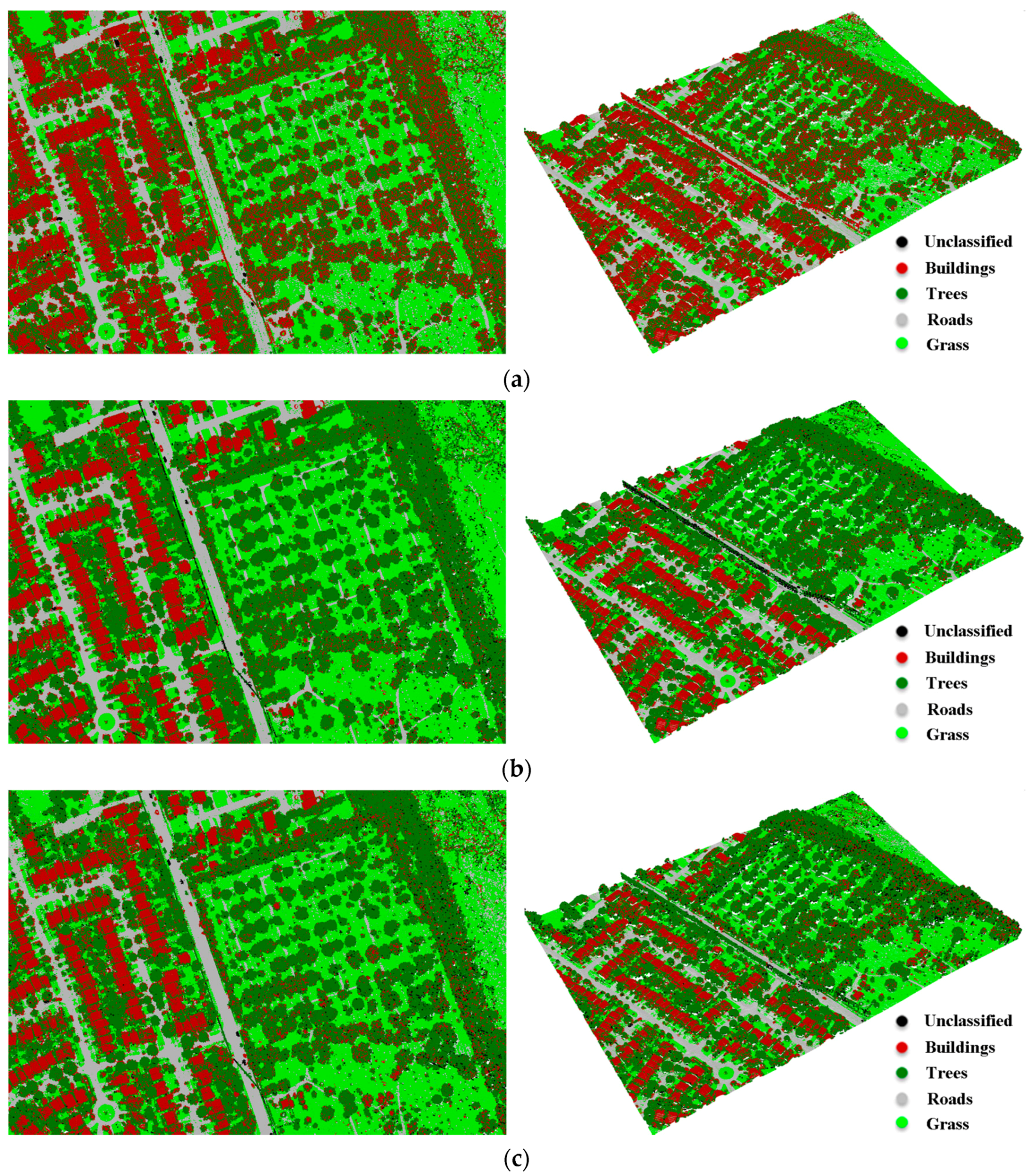

6. Discussion

Generally, the classification results have demonstrated the capability of using multispectral LiDAR data for classifying the terrain into four classes, namely buildings, trees, roads and grass. The image-based and point-based classification techniques achieved overall classification accuracies of up to 89.9% and 92.7%, respectively. Previous studies achieved overall classification accuracies from 85–89.5% for the same four land cover classes. They used multispectral aerial/satellite imagery combined with nDSM derived from LiDAR data [

5,

6] or combined with LiDAR height and intensity data [

7,

8,

9], while the presented work in this research used the LiDAR data only. Furthermore, the availability of the multispectral LiDAR data eliminates the need for multispectral aerial/satellite imagery for classification purposes.

Figure 9 summarizes the achieved overall accuracy from the two classification techniques.

In particular, the results demonstrated the importance of combining LiDAR intensity and height data, where the overall accuracy increased by more than 12% after the DSM was incorporated with the intensity images. This is clearly notable in

Figure 7c, where some pixels (marked by red rectangles) in the study area were misclassified as trees (i.e., high vegetation). Furthermore, many pixels that belong to the road surface were misclassified as buildings, while those pixels were correctly classified as grass (i.e., low vegetation) or roads, after DSM was incorporated as shown in

Figure 7d (marked by black rectangles). Significant improvements of the producer’s and user’s accuracies were achieved for the individual land covers when DSM was considered. For instant, an improvement was achieved for the producer’s accuracy of buildings, trees and roads classes by 29.7%, 9.1% and 13.3%, respectively. Furthermore, the user’s accuracy of trees and roads classes was improved by 15.4% and 28.8%, respectively.

The point-based classification technique produced various overall accuracies for land cover classification. Overall accuracies of 77.8%, 92.7% and 88.0% were achieved when using NDVINIR-MIR, NDVINIR-G and NDVIMIR-G, respectively. As previously mentioned, the unclassified points and the ground filtering are two sources of classification errors. The error of the unclassified points ranges from 0.0–2.3%, while the ground filtering errors range from 0.0–4.4%.

With the focus on the individual classes, about 35.6% of the tree points were omitted (64.4% producer accuracy), and those points were wrongly classified as buildings when NDVI

NIR-MIR was used. This omission caused a misclassification of the buildings class with about 37% (63% user accuracy). This is primarily due to the moisture content of the vegetation, where dry vegetation exhibits high intensity values in C1 [

38]. As a result, the NDVI

NIR-MIR yielded low values, and hence, the tree points were misclassified as buildings. Similarly, about 19.7% of the grass points were omitted (80.3% producer accuracy) and mainly classified as roads. Furthermore, about 31.1% and 14.5% of roads points were misclassified as grass when NDVI

NIR-MIR and NDVI

MIR-G, respectively, were used.

In addition, about 20.7% (79.3% producer accuracy) of the buildings’ points were wrongly classified as trees when NDVIMIR-G was used. This is mainly because some roof materials exhibit high intensity values in C1, and hence, the NDVIMIR-G increased, leading to the classification errors. As a result, this omission caused a misclassification of the tree class for about 14.4% (85.6% user accuracy).

Although previous attempts showed that radiometric correction and normalization can lead to the improvement of intensity homogeneity [

11,

12,

13], such phenomena cannot be observed in this research. This might be attributed to the fact that the multispectral LiDAR data, used in this research, were collected with a narrow scan angle, whereas the LiDAR data of the aforementioned studies were collected with a wide scan angle that caused obvious energy loss especially close to the edge of the scan line. In addition, the target-related parameters, including the range to the target, the target size, the laser beam incident angle and the illumination of the target material should not necessarily have similar influence on the LiDAR intensity data to the previous studies. Another reason is that the environmental parameters related to the collected data, such as aerosol and Rayleigh scattering, aerosol absorption and atmospheric attenuation, are not changed over the study area, and hence, they do not have the same effect on the LiDAR intensity data as the previous studies. Furthermore, the three channels of the Optech Titan sensor are well controlled by its in-house transfer function, where the recorded signal strength is linear and stable. As such, there is no significant loss of energy due to the signal transmission function. Consequently, radiometric correction may not always be required under similar conditions.

7. Conclusions

This research discussed the use of multispectral LiDAR data in land cover classification of an urban area. The multispectral data were collected by the Optech Titan sensor operating at three wavelengths of 1550 nm, 1064 nm and 532 nm. Two classification techniques were used to classify the multispectral LiDAR data into buildings, trees, roads and grass. The first technique is image-based classification, where the LiDAR intensity and height data were converted into images. Two band combinations were stacked including combined three-intensity images and combined three-intensity images with DSM. The maximum likelihood classifier was then applied to the two band combinations. This technique achieved an overall classification accuracy of about 77.32% from the LiDAR intensity data only. The classification accuracy was improved to 89.89% when DSM was incorporated with the LiDAR intensity data.

The second technique is point-based classification, where the 3D LiDAR points in the three channels were combined and three intensity values were assigned to each single LiDAR point as a pre-processing step. Ground filtering, using skewness balancing and slope-based change, was then applied to separate the LiDAR data into ground and non-ground points. Subsequently, the NDVIs were computed and threshold values were estimated using Jenks break optimization method to cluster the ground points into roads and grass, and the non-ground points into buildings and trees. This technique achieved overall accuracies of 77.83%, 92.70% and 88.02% when NDVINIR-MIR, NDVINIR-G and NDVIMIR-G were used, respectively. A physical model based on the radar range equation was used for radiometric correction of the intensity data. The correction considered the system parameters and the topographic effect. It has been noticed that there is no significant effect on the land covers’ classification results after applying the radiometric correction on this particular dataset. The presented work demonstrates the advantage of using multi-dimensional LiDAR data (intensity and height) over a single dimensional LiDAR data (intensity or height).