Uncertainty Comparison of Visual Sensing in Adverse Weather Conditions †

Abstract

1. Introduction

2. Image Segmentation in Environmental Application

3. Materials and Methods

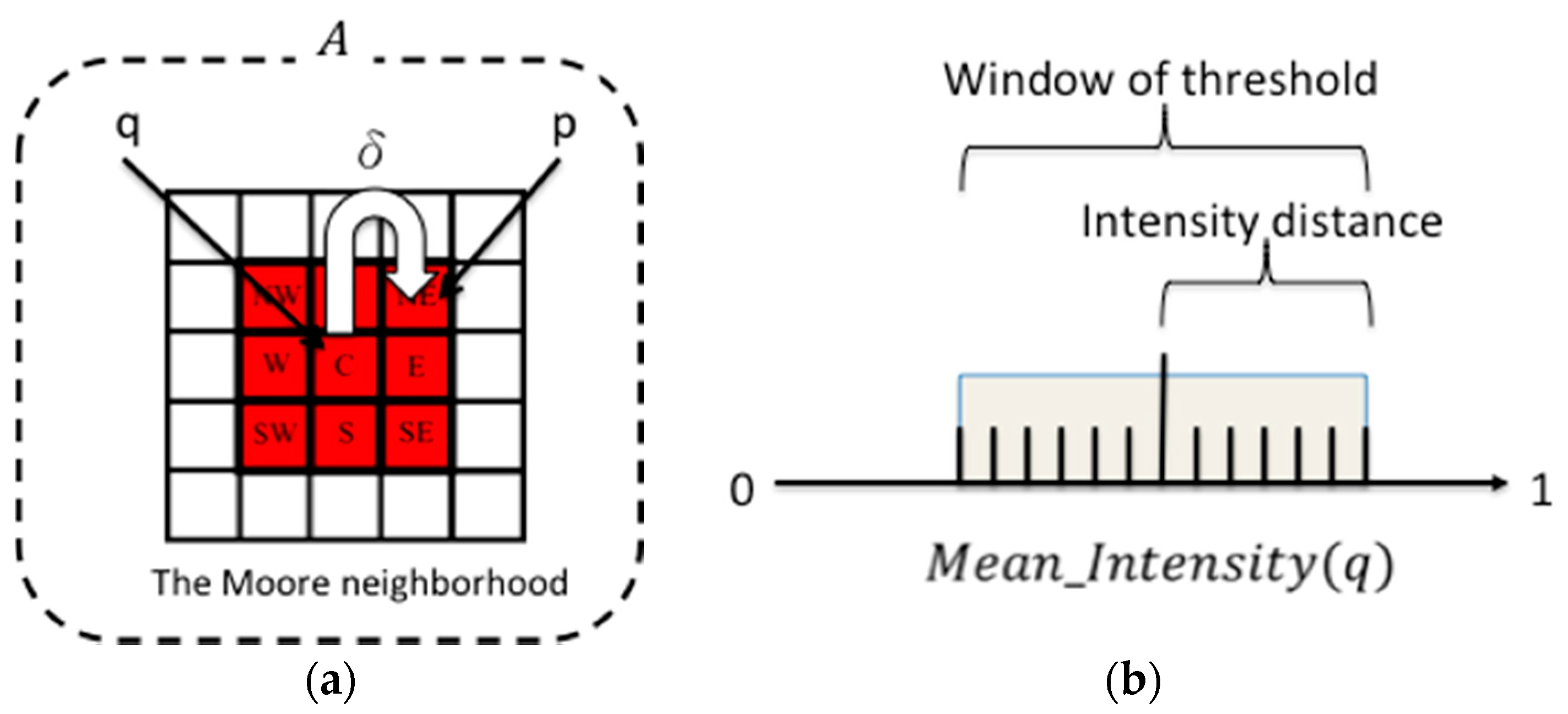

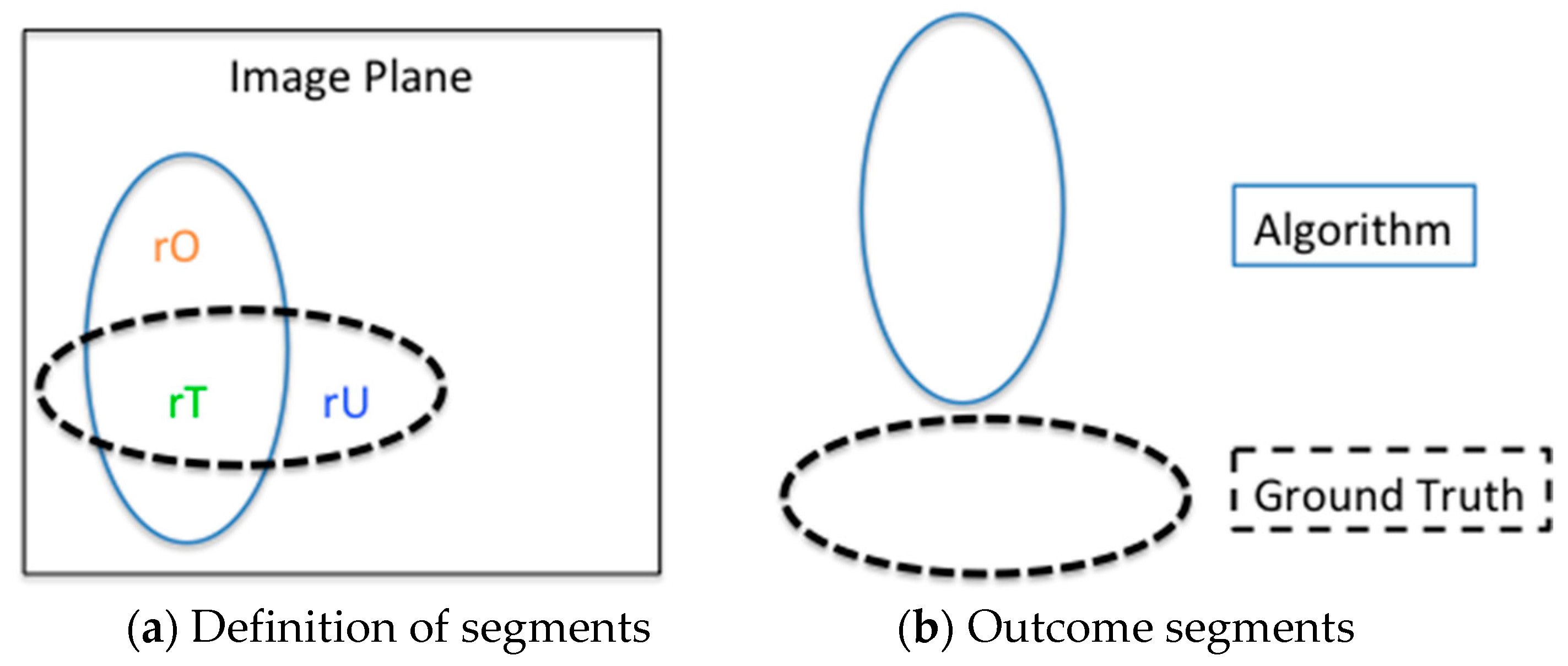

3.1. RegGro Method

| Algorithm 1: RegGro rule pseudocode. |
| --- |
| // For each pixel p |
| for all p in image A |
| // Copy the previous state of p and the mean intensity of all q |
| labels_new = labels; Mean_Intensity(q); |
| // All current q try to spread to neighbors p |
| for all neighbors q of p |
| if |
| // Successful growth spreads out to the current p |
| labels_new(p) = labels(q) |
| end if |
| end for |
| end for |
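The growth rule in Algorithm 1 can be sketched in Python as follows. This is a minimal illustration, not the authors' implementation: the growth test (absolute difference between the pixel intensity and the region mean, compared with a user-chosen `threshold`), the 4-connected neighborhood, the convention that label 0 means "unlabeled", and the function names `reggro_step` and `reggro` are all assumptions made for the example.

```python
import numpy as np

NEIGHBORS = ((-1, 0), (1, 0), (0, -1), (0, 1))  # assumed 4-connected neighborhood


def reggro_step(image, labels, threshold):
    """One synchronous growth pass; returns the updated label map."""
    labels_new = labels.copy()  # copy the previous state
    # Mean intensity of every currently labeled region (label 0 = unlabeled).
    means = {int(l): float(image[labels == l].mean())
             for l in np.unique(labels) if l != 0}
    height, width = image.shape
    for y in range(height):
        for x in range(width):
            if labels[y, x] != 0:
                continue  # only unlabeled pixels can be grown into
            for dy, dx in NEIGHBORS:
                ny, nx = y + dy, x + dx
                if not (0 <= ny < height and 0 <= nx < width):
                    continue
                q_label = int(labels[ny, nx])
                # Assumed growth test: p joins q's region when its intensity
                # is within `threshold` of that region's mean intensity.
                if q_label != 0 and abs(float(image[y, x]) - means[q_label]) <= threshold:
                    labels_new[y, x] = q_label
                    break
    return labels_new


def reggro(image, seeds, threshold, max_iter=1000):
    """Repeat growth passes until the label map stops changing."""
    labels = seeds.copy()
    for _ in range(max_iter):
        labels_new = reggro_step(image, labels, threshold)
        if np.array_equal(labels_new, labels):
            break
        labels = labels_new
    return labels


# Toy example: dark left half, bright right half, one seed in the dark part.
image = np.array([[10, 10, 200, 200]] * 4, dtype=float)
seeds = np.zeros((4, 4), dtype=int)
seeds[0, 0] = 1
region = reggro(image, seeds, threshold=20)
```

In the toy example the seed spreads over the two dark columns and stops at the bright half, because the bright pixels differ from the region mean by more than the threshold.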

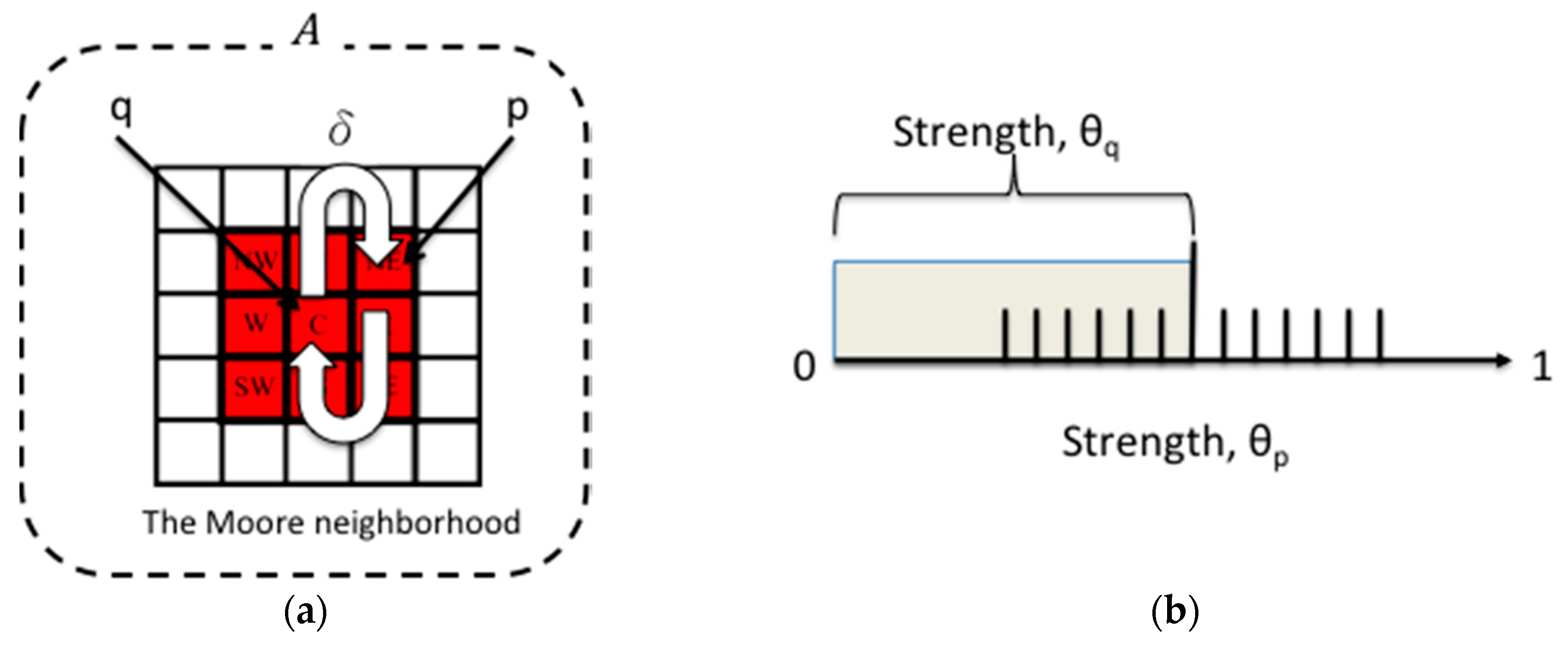

3.2. GrowCut Method

| Algorithm 2: GrowCut rule pseudocode. |
| --- |
| // For each cell p |
| for all p in image A |
| // Copy the previous state of p |
| // All current cells q try to attack p |
| for all neighbors q of p |
| if |
| // Successful attacks spread to neighbors p |
| end if |
| end for |
| end for |
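For orientation, the cellular-automaton rule behind Algorithm 2 can be sketched in Python along the lines of the GrowCut formulation by Vezhnevets and Konouchine: a neighbor q captures cell p when g(|C_p - C_q|) times the strength of q exceeds the strength of p, with g(x) = 1 - x / max|C|. The sketch below is an assumption-laden illustration rather than the implementation evaluated in this paper: it handles grayscale images only, uses a 4-connected neighborhood, lets the strongest attacker in a pass win, and the names `growcut_step` and `growcut` are hypothetical.

```python
import numpy as np

NEIGHBORS = ((-1, 0), (1, 0), (0, -1), (0, 1))  # assumed 4-connected neighborhood


def growcut_step(image, labels, strength):
    """One synchronous automaton pass; returns new labels and strengths."""
    labels_new = labels.copy()      # copy the previous state
    strength_new = strength.copy()
    max_norm = float(np.abs(image).max()) or 1.0  # avoid division by zero
    height, width = image.shape
    for y in range(height):
        for x in range(width):
            for dy, dx in NEIGHBORS:
                ny, nx = y + dy, x + dx
                if not (0 <= ny < height and 0 <= nx < width):
                    continue
                # g decreases from 1 to 0 as the intensity difference grows.
                g = 1.0 - abs(float(image[y, x]) - float(image[ny, nx])) / max_norm
                attack = g * strength[ny, nx]
                # Compared against the running best, so the strongest
                # attacker of this pass wins (an implementation choice).
                if attack > strength_new[y, x]:
                    labels_new[y, x] = labels[ny, nx]
                    strength_new[y, x] = attack
    return labels_new, strength_new


def growcut(image, seeds, max_iter=1000):
    """Iterate until neither labels nor strengths change."""
    labels = seeds.copy()
    strength = (seeds != 0).astype(float)  # seeds start at full strength
    for _ in range(max_iter):
        labels_new, strength_new = growcut_step(image, labels, strength)
        if (np.array_equal(labels_new, labels)
                and np.array_equal(strength_new, strength)):
            break
        labels, strength = labels_new, strength_new
    return labels


# Toy example: one dark and one bright segment, each with a single seed.
row = np.array([[10, 10, 10, 200, 200, 200]], dtype=float)
row_seeds = np.array([[1, 0, 0, 0, 0, 2]])
segments = growcut(row, row_seeds)
```

Because g never exceeds 1 and the comparison is strict, seed cells keep their labels, and each seed label spreads until it meets a cell that is defended more strongly than it can be attacked.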

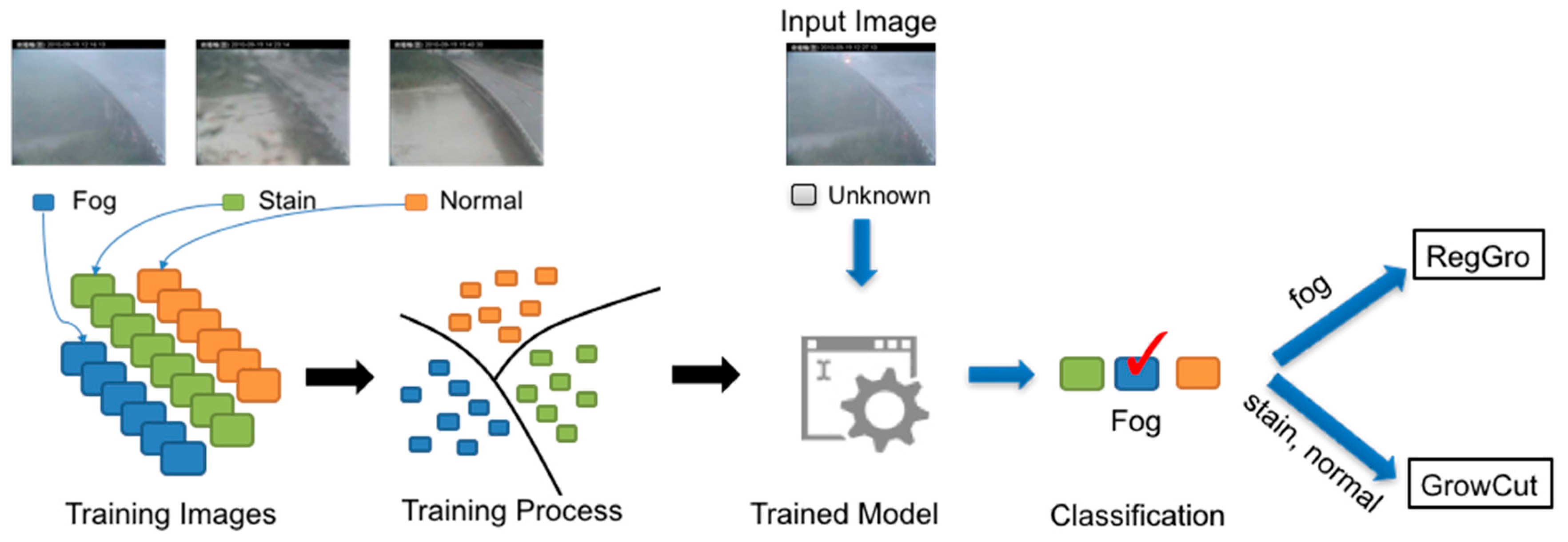

3.3. Hybrid RegGro and GrowCut

3.4. Image Set and Ground Truth

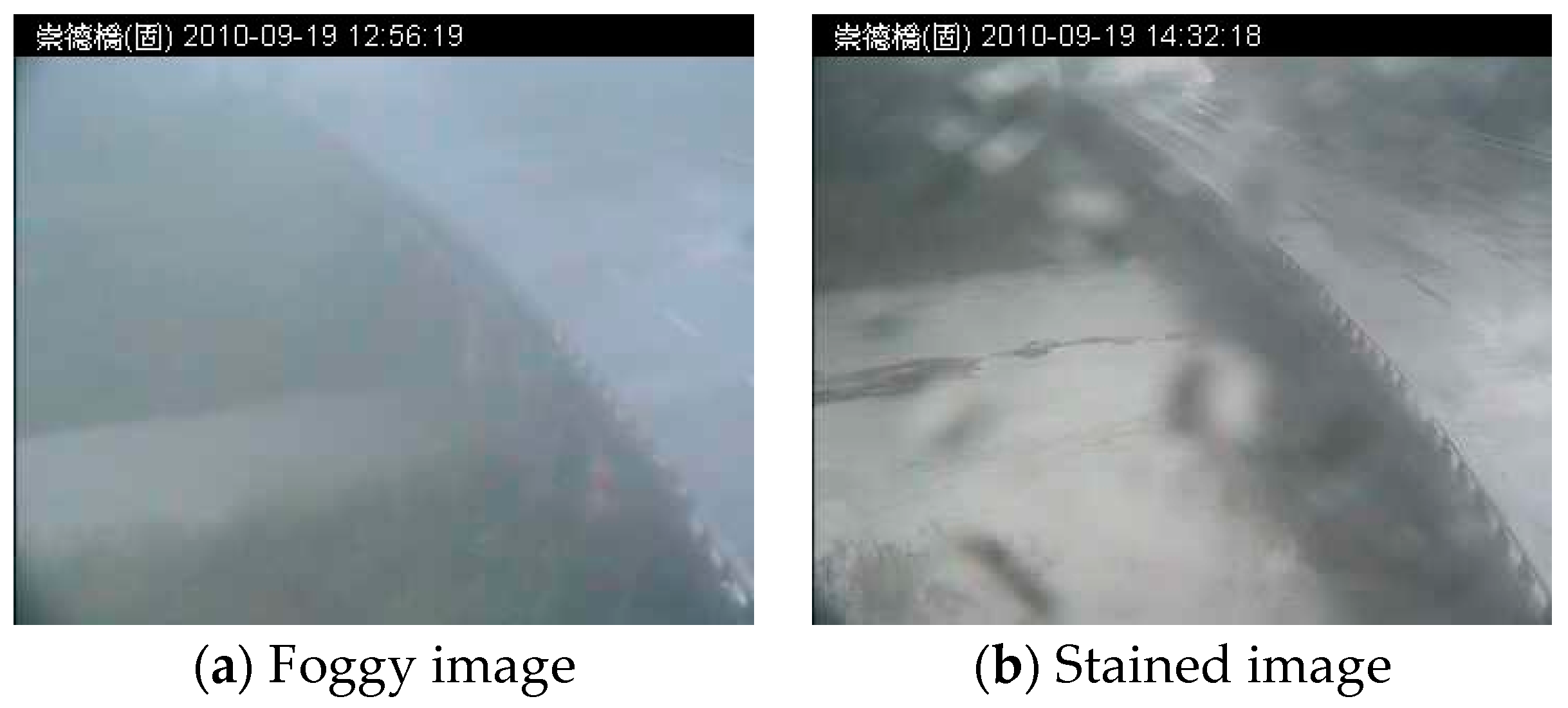

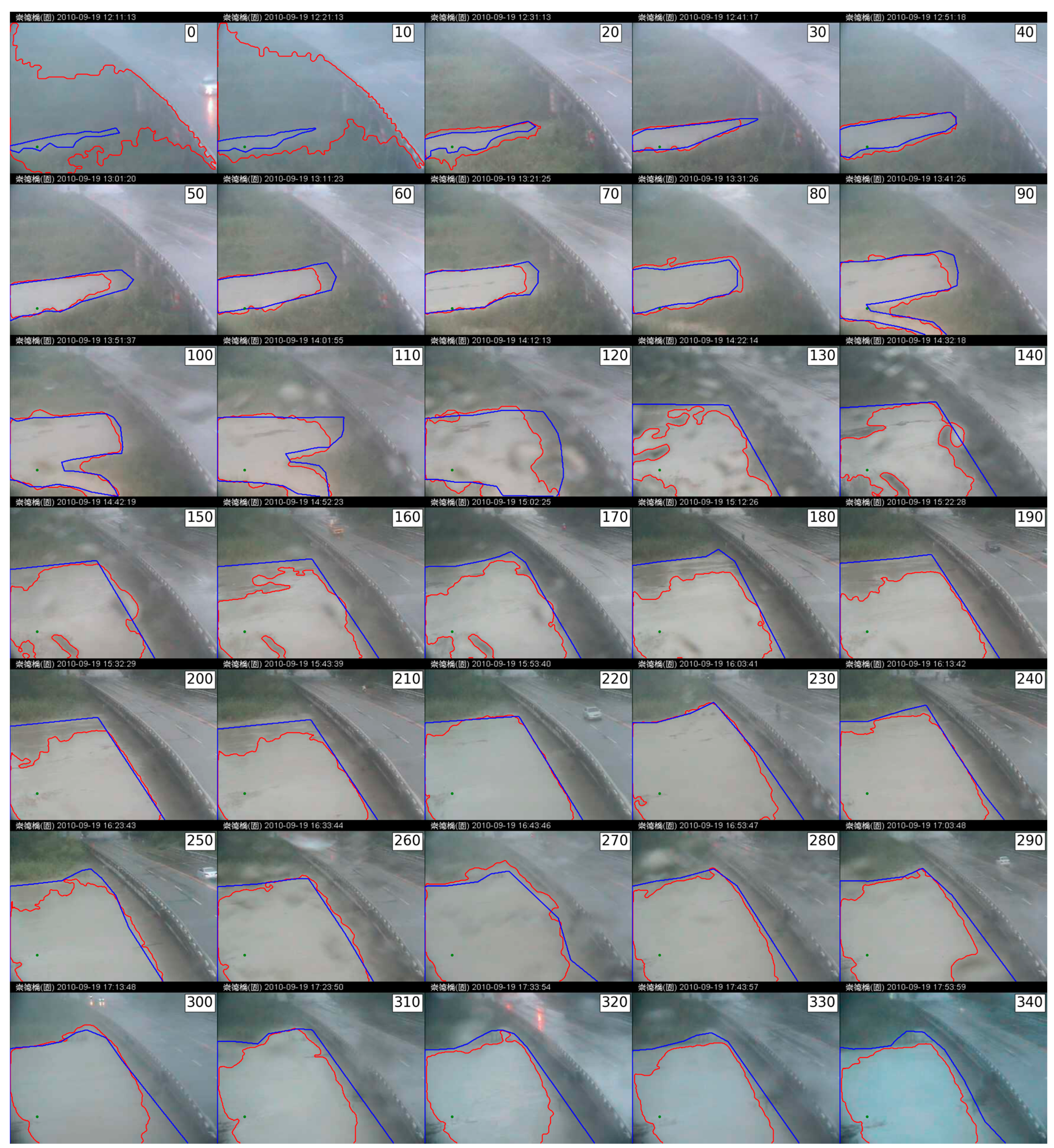

3.4.1. Typhoon Image Set

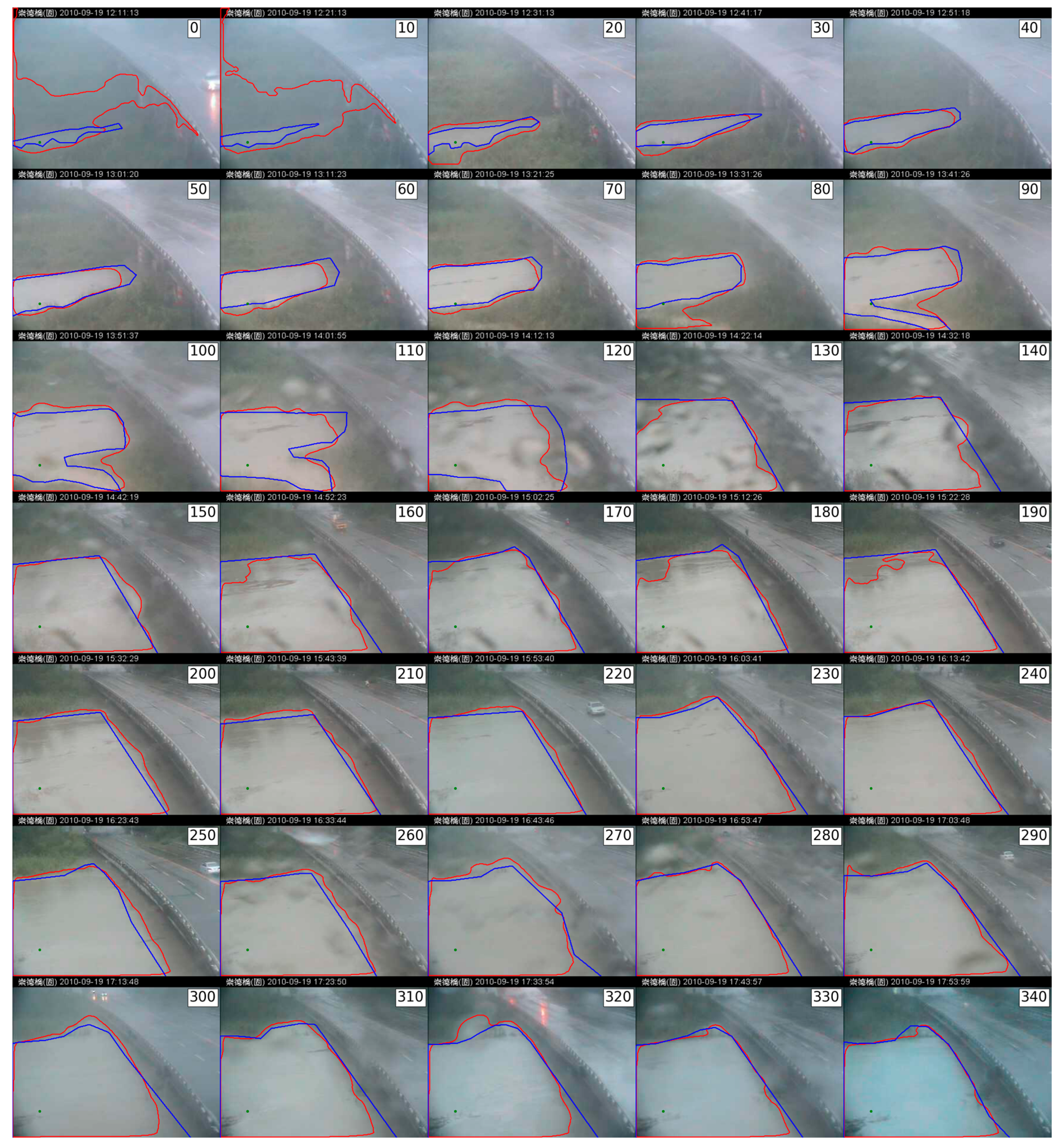

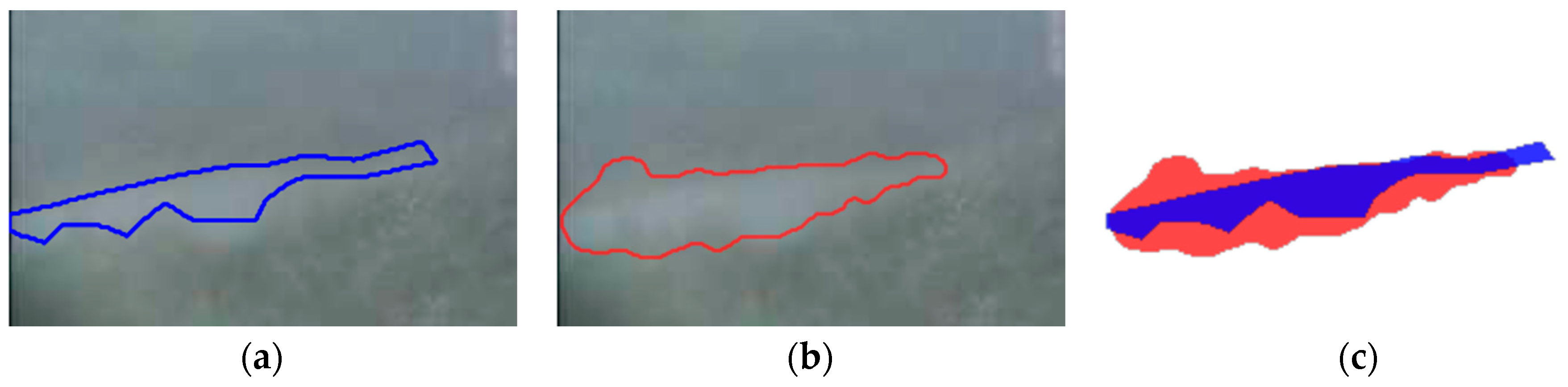

3.4.2. Ground Truth of Flood Segments

4. Results

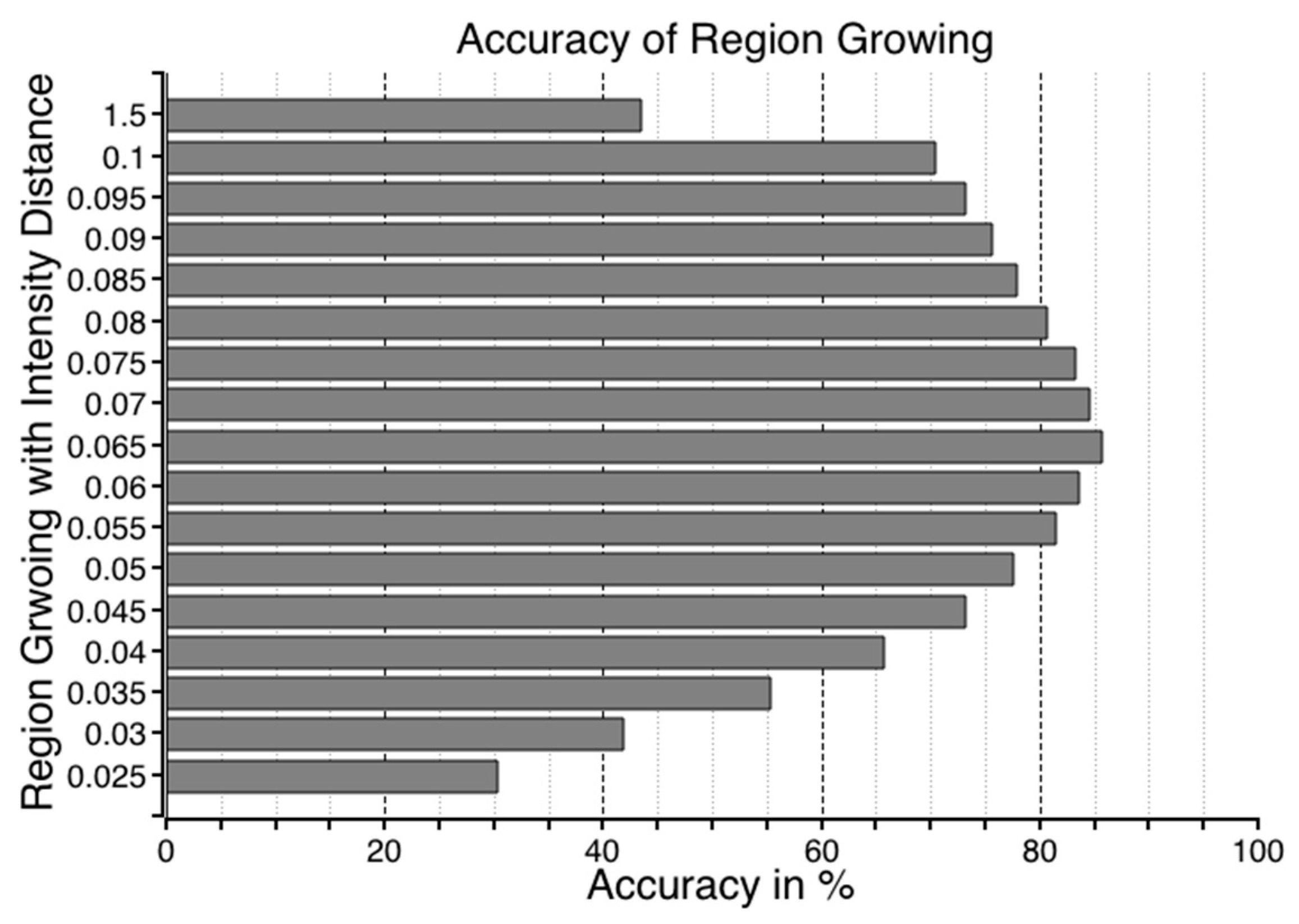

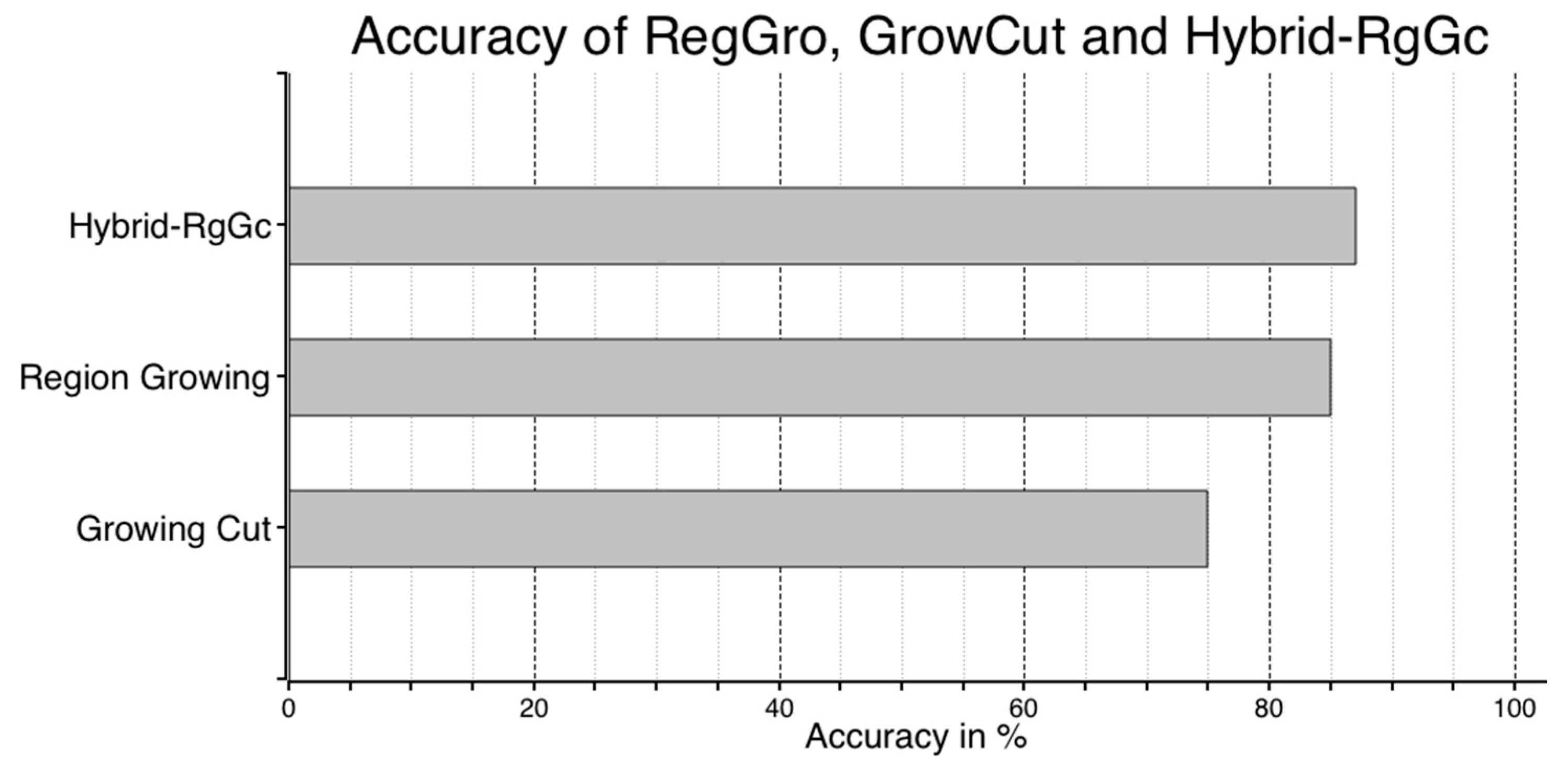

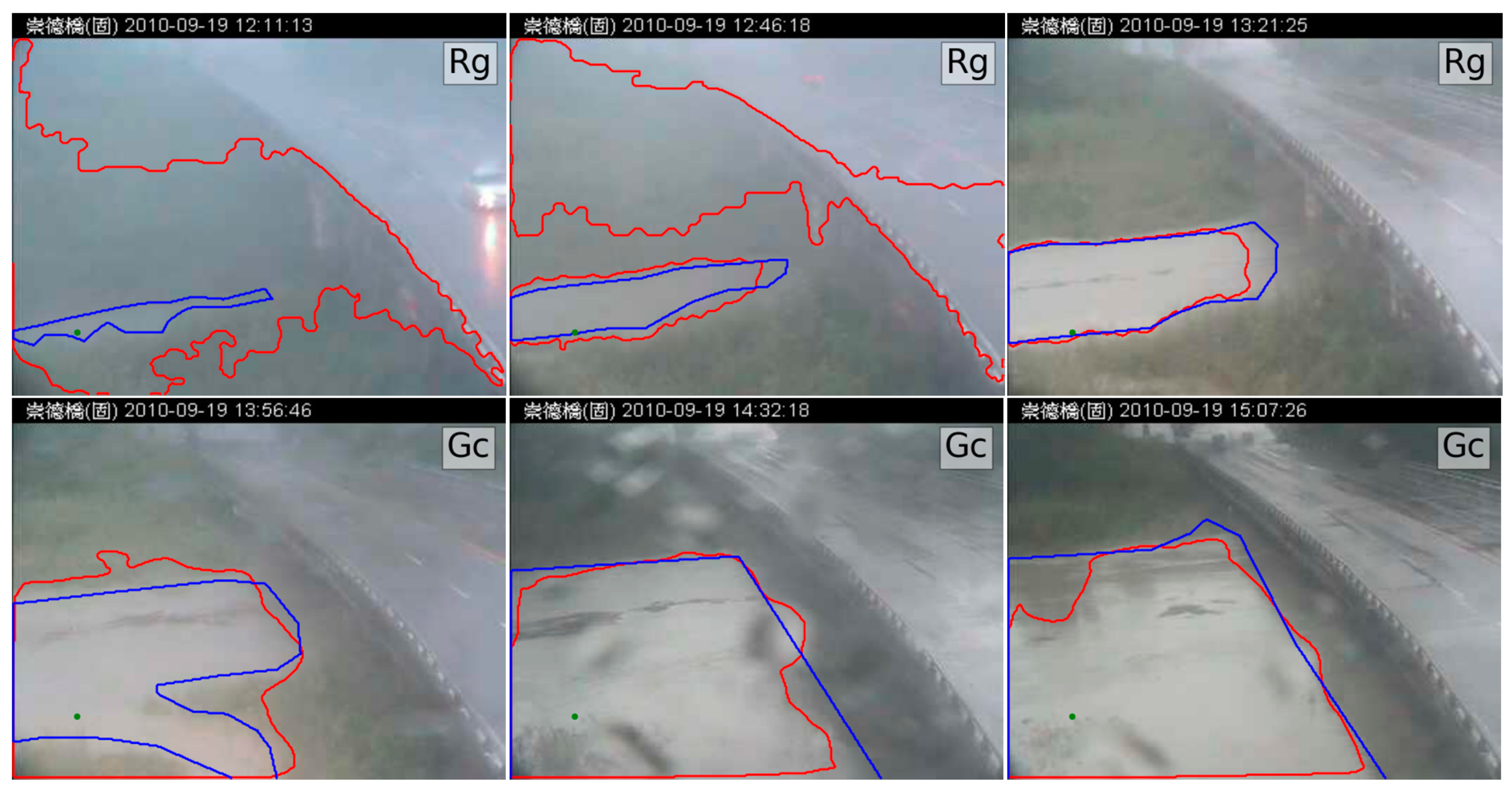

4.1. Performance of RegGro

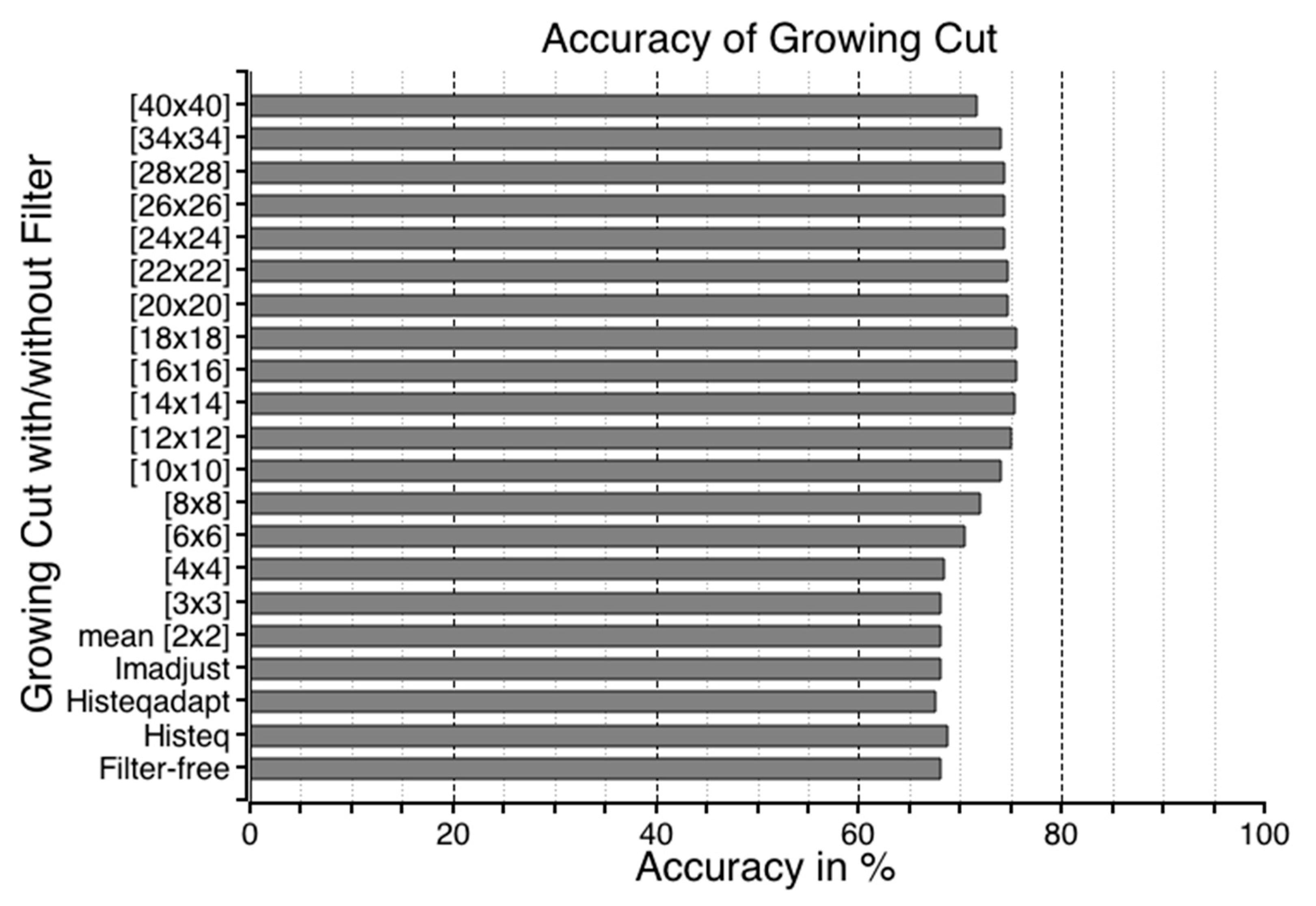

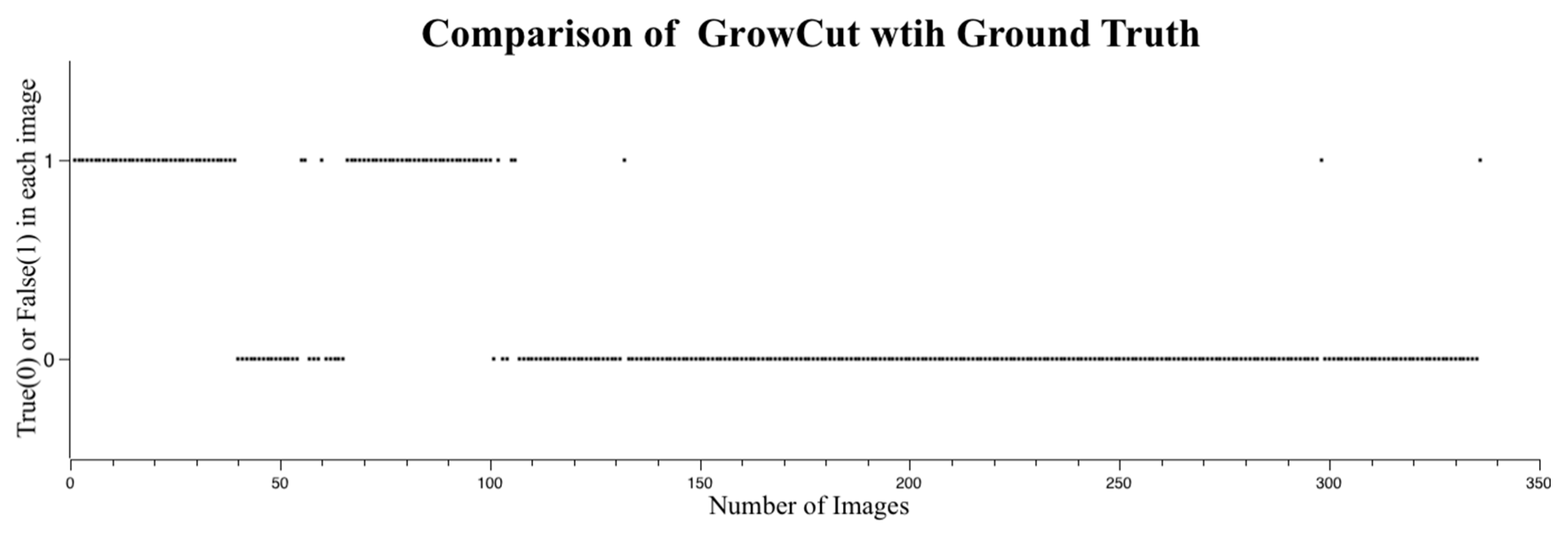

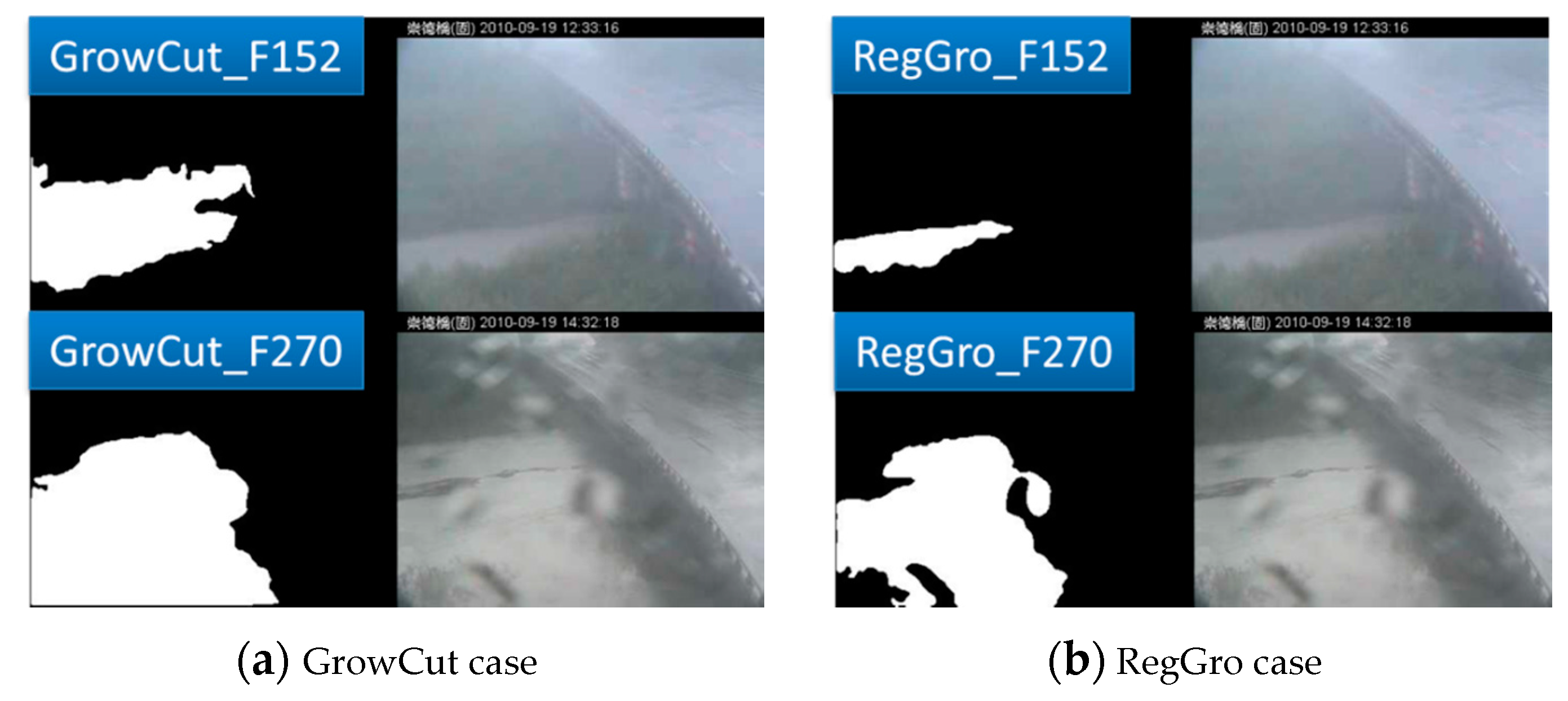

4.2. Performance of GrowCut

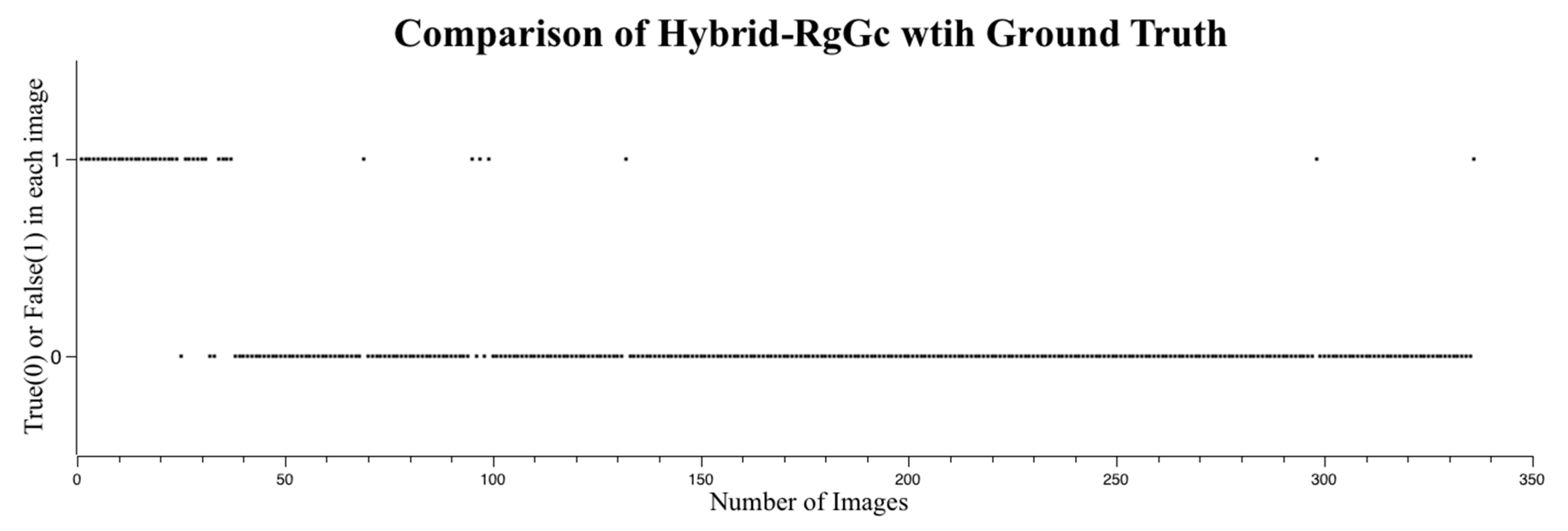

4.3. Performance of Hybrid RegGro and GrowCut

5. Discussion

5.1. Influence of Fog and Heavy Rainfall

5.2. Influence of Stains on Lenses

5.3. Ground Truth

6. Conclusions and Future Work

Acknowledgments

Author Contributions

Conflicts of Interest

© 2016 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC-BY) license (http://creativecommons.org/licenses/by/4.0/).

Lo, S.-W.; Wu, J.-H.; Chen, L.-C.; Tseng, C.-H.; Lin, F.-P.; Hsu, C.-H. Uncertainty Comparison of Visual Sensing in Adverse Weather Conditions. Sensors 2016, 16, 1125. https://doi.org/10.3390/s16071125