1. Introduction

For exploration tasks that rely on multi-agent systems, with complex, unstructured terrains, autonomy plays a key role to lower potential threats or tedious work for human operators, be it space exploration, disaster relief, or routine industrial facility inspections. The main objective here is to give a human operator more detailed information about the explored area, e.g., in terms of a map, and to support further decision making. While multiple agents do provide an increased sensing aperture and can potentially collect information more efficiently than a single-agent system, they have to rely more heavily on autonomy to compensate, e.g., possible large (or unreliable) communication delays [

1] or the complexity of teleoperating multiple agents.

One of the approaches to increase the autonomy of multi-agent systems consists of using in situ analysis of the collected data with the agents’ own computing resources to decide on future actions. In the context of mapping, such an approach is also known as active information gathering [

2,

3] or exploration. Note that mapping is generally not restricted to sensing with imaging sensors, such as cameras. The exploration of gas sources [

4] or of the magnetic field [

5] also falls in this category.

An approach for active information gathering lies in the focus of the presented work. In the following, we provide an overview of work related to the approach discussed in this paper, the arising challenges, and a proposed solution.

1.1. Related Work

The objective of active information gathering is to utilize the collected data, represented in terms of a parameterized model, to compute information content as a function of space. This can be done using heuristic approaches, as in [

6,

7], where the authors modify the random walk strategy by adjusting the movement steps of each robot such as to collect more information. Alternatively, information–theoretic approaches can be used. In [

8], the authors use a probabilistic description of the model to steer cameras mounted on multiple unmanned aerial vehicles (UAVs). In this case, the information metric can be computed directly based on statistics of the pixels. The resulting quantity is then used to autonomously coordinate UAVs in an optimal configuration. In [

9], the authors propose an

exploration driven by uncertainty by minimizing the determinant of the covariance matrix for an optimal camera placement for a 3D image. This approach essentially implements an optimal experiment design [

10], which in turn relates the determinant of the covariance matrixof the model parameters to the Shannon entropy of Gaussian random variables. This connection has been further explored in [

11], where the authors compare criteria for optimal experiment design with mutual information for Gaussian processes regression and sensor placement. This leads to a greedy algorithm that uses mutual information for finding optimal sensor placements. An extension of [

11] for multiple agents and a decentralized estimation of the mutual information is presented in [

2,

12]. In the latter, the authors also considered robotic aspects, such as optimal trajectory planning along with information gathering: an approach that has been further investigated in [

13].

One of the key elements in experiment design-based information gathering is the ability to compute the covariance structure of the model parameters as a function of space and evaluate it in a distributed fashion. In [

14], the authors studied the information-gathering approach for sparsity constrained models, i.e., under assumption that the model parameters are sparse. This required implementing non-smooth

constraints in the optimization problem, which in turn made the exact computation of the parameter covariance impossible. Instead, the covariance structure was approximated by locally smoothing the curvature of the objective function. In [

14], the method was applied to generalized linear models with sparsity constraints for a distributed computation with two versions of data splitting over agents: homogeneous splitting, also called splitting-over-examples (SOE), and heterogeneous splitting, also called splitting-over-features (SOF). However, despite the method yielding in simulations a better performance as compared to systematic or random exploration approaches, the used approximation has been derived with purely empirical arguments.

1.2. Paper Contribution

To address this, the exploration problem with sparsity constraints has been cast into a probabilistic framework, where the parameter covariance can be computed exactly. In [

15], we formulated a Bayesian approach toward cooperative sparse parameter estimation for SOF, and in [

16] for SOE data splitting. However, the distributed computation of the covariance matrix and information-driven exploration has not been considered so far. With this contribution, we close this gap and study an information-driven exploration strategy that is based on a Bayesian approach toward distributed sparse regression. Specifically,

We consider a distributed computation of the corresponding parameter covariance matrices for information-seeking exploration using a Bayesian formulation of the model, and

Validate the algorithm’s performance both in simulations as well as in an experiment with two robots exploring the magnetic field variations on a laboratory floor.

The rest of the paper is structured as follows. We begin with a model formulation and model learning in

Section 2. In

Section 3, we discuss a distributed computation of the exploration criterion for the considered regression problem. Afterwards, we outline the experimental setting, the collection of ground truth data, and the sensor calibration in

Section 4, as well as the overall system design in

Section 5. The experimental results are summarized in

Section 6, and

Section 7 concludes this work.

2. Distributed Sparse Bayesian Learning

2.1. Model Definition

We make use of a classical basis function regression [

17] to express an unknown scalar physical process

, with

and

. Typically, the process is

d-dimensional, with

. To represent the process

, a set of

basis functions

,

are used, where

is dependent on the used basis function and

s is a number of parameters per basis function.

Each basis function is parameterized with

,

, which can represent centers of corresponding basis functions, their width, etc. More formally, we assume that

where

are generally unknown weights in the representation.

To estimate

,

, we make

M observations of the process

at locations

. The corresponding

m-th measurement is then represented as

where

is an additive sample of white Gaussian noise with a known precision

. By collecting

M measurements in a vector

, we can reformulate (

2) in a vector-matrix notation. To this end, we define

which allows us to formulate the measurement model in a vectorized form

with

.

Based on (

7), we define the likelihood of the parameters

as follows

Often, the representation (

1) is selected such that

, i.e., it is underdetermined. This implies that there is an infinite number of possible solutions for

. A popular approach to restrict a set of solutions consists of introducing sparsity constraints on parameters. Within the Bayesian framework, this can be achieved by defining a prior over the parameter weights

. This leads to a class of probabilistic approaches referred to as Sparse Bayesian Learning (SBL).

The basic idea of SBL is to assign an appropriate prior to the

N-dimensional vector

such that the resulting maximum a posteriori (MAP) estimate

is sparse, i.e., many of its entries are zero. Typically, SBL specifies a hierarchical factorable prior

, where

,

[

18,

19,

20]. For each

, the hyperparameter

, also called sparsity parameter, regulates the width of

; the product

defines a Gaussian scale mixture (the authors in work [

21] extend this framework by generalizing

to be the probability density function (PDF) of a power exponential distribution, which makes the hierarchical prior a power exponential scale mixture distribution). Bayesian inference on a linear model with such a hierarchical prior is commonly realized via two types of techniques: MAP estimation of

(Type I estimation; note that many traditional “non-Bayesian” methods for learning sparse representations such as basis pursuit de-noising or re-weighted

-norm regressions [

22,

23,

24] can be interpreted as Type I estimation within the above Bayesian framework [

21]) or MAP estimation of

(Type II estimation, also called maximum evidence estimation, or empirical Bayes method). Type II estimation has proven (both theoretically and empirically) to perform consistently better than Type I estimation in the present application context. One reason is that the objective function of a Type II estimator typically exhibits significantly fewer local minima than that of the corresponding Type I estimator and promotes greater sparsity [

25]. The hyperprior

,

, is usually selected to be non-informative, i.e.,

[

26,

27,

28]. The motivation for this choice is twofold. First, the resulting inference schemes typically demonstrate superior (or similar) performance as compared to schemes derived based on other hyperprior selections [

21]. Second, very efficient inference algorithms can be constructed and studied [

26,

27,

28,

29,

30].

In the following, we consider only SBL Type II optimization as it leads to usually sparser parameter vectors

[

21], and we drop explicit dependencies on measurements

and basis function parameters

to simplify notation. The marginalized likelihood for SBL Type II optimization is therefore

where

,

, and

being the identity. Taking the negative logarithm of (

9), we obtain the objective function for SBL Type II optimization in the following form

An estimate of hyperparameters

is then found as

Once the estimate

is obtained, the posterior probability density function (PDF) of the the parameter weights

can be easily computed: it is known to be Gaussian

with the moments given as

where

(see also [

18]).

2.2. Sparse Bayesian Learning with the Automatic Relevance Determination

The key to a sparse estimate of

is a solution to (

11). There are a number of efficient schemes [

26,

27,

28] to solve this problem. The method that we use in this paper is based on [

26]. In the following, we shortly outline this algorithm.

In [

26], the authors introduced the reformulated automatic relevance determination (R-ARD) by using an auxiliary function that upper bounds the objective function

in (

10). Specifically, using the concavity of the log-determinant in (

10) with respect to

, the former can be represented using a Fenchel conjugate as

where

is a dual variable and

is the dual (or conjugate) function (see also [

31] (Chapter 5) or [

32]).

Using (

13), we can now upper-bound (

10) as follows

Note that for any , the bound becomes tight when minimized over . This fact is utilized for the numerical estimation of , which is the essence of the R-ARD algorithm.

R-ARD alternates between estimating

, which can be found in closed form as [

26,

31]

and estimating

as a solution to a convex optimization problem

In order to solve (

16), the authors in [

26] proposed to use yet another upper bound on

. Specifically, by noting that

the cost function in (

16) can be bounded with

The right-hand side of (

18) is convex both in

and

. As such, for any fixed

, the optimal solution for

can be easily found as

,

. By inserting the latter in (

18), we find the solution for

that minimizes the upper-bound

as

which can be recognized as a weighted least absolute shrinkage and selection operator (LASSO) cost function. Expression (

19) builds a basis for a distributed estimation learning of SBL parameters, since there exist techniques to optimize a LASSO function over a network, which are presented in the following section.

2.3. The Distributed Automated Relevance Determination Algorithm for SOF Data Splitting

The derivation of the distributed R-ARD (D-R-ARD) for SOF is shown in [

14]. Here, we would like to show the main aspects of the distribution paradigm and the resulting algorithm. The main aspect of heterogeneous data splitting is that each agent has its own model. Therefore, the parameter weights

are distributed among

agents as

and each agent has its part

, where

. Likewise, the matrix

is partitioned among

K agents as

where

. The SOF model is then formulated as

Similarly, the hyper-parameters are also partitioned as .

The solution to cooperative SOF inference then amounts to computing

from (

15) and optimizing the upper bound (

18) over a network of

K agents.

Unfortunately, in the case of the SOF model, the dual variable

in (

15) cannot be computed exactly. Instead it is upper-bounded [

14] as

, where

is computed for each agent:

with

and

. This approximation preserves the upper bound in (

18). Consequently, (

19) can be reformulated to fit for SOF as

which can be solved distributively via the alternating direction method of multipliers (ADMM) algorithm [

33] (Section 8.3). The D-R-ARD algorithm for SOF is summarized in Algorithm 1. When using ADMM to solve for

, the only communication between the agents takes place inside of the ADMM algorithm. The communication load of the ADMM algorithm for SOF is discussed in [

33] (Chapter 8).

| Algorithm 1 D-R-ARD for SOF |

- 1:

- 2:

while not converged do - 3:

▹ See ( 22); is solved distributively using - 4:

- 5:

- 6:

,

|

2.4. The Distributed Automated Relevance Determination Algorithm for SOE Data Splitting

For SOE, we will assume that measurements

at locations

are partitioned into

K disjoint subsets

, each associated with the corresponding agent in the network. Hence, each agent

k makes

observations

at locations

, such that

,

,

,

, and

. This allows us to rewrite (

7) in an equivalent form as

where we assumed that perturbations

,

, are independent between agents, i.e.,

To cast R-ARD in a distributed setting, we need to be able to solve (

19) and compute

in (

15) over a network of agents. To this end, let us define

where

, and

is an identity matrix of size

,

. We point out that

, or rather the last factor in (

24), can be computed over a network of agents using an averaged consensus algorithm [

34,

35].

Next, we apply the Woodbury identity to

to obtain

where

. Inserting (

25) and (

24) into (

15), we get

where

. Thus, once

becomes available,

can be found distributively using expression (

26).

To solve (

19) distributively, we first note that for the model (

23) the likelihood (

8) can be equivalently rewritten as

It is then straightforward to show that the upper bound (

18) will take the form

Similarly to (

18), for any

,

, the bound is minimized with respect to

at

,

. Inserting the latter in (

28), we obtain an objective function for estimating

Expression (

29) can be readily solved distributively using an ADMM algorithm (see e.g., [

33] (Chapter 8) and [

36]). Once

is found, optimal parameter values

are found as

,

.

In Algorithm 2, we now summarize the key steps of the resulting D-R-ARD algorithm for SOE. As we can see from Algorithm 2, D-R-ARD includes two optimizing loops. The inner optimization loop is an ADMM algorithm, which is guaranteed to converge to a solution [

33]. The convergence of the outer loop is basically the convergence of the R-ARD algorithm presented in [

26].

| Algorithm 2 D-R-ARD for SOE |

- 1:

- 2:

Compute using averaged consensus over as in ( 24) - 3:

while not converged do - 4:

▹ See ( 29); is solved distributively using ADMM [ 33, 36] - 5:

- 6:

- 7:

|

Communication Load of D-R-ARD

In the D-R-ARD algorithm, two communication steps are required. The first communication step involves the computation of the matrix , where we leverage an average consensus algorithm. There, each of the consensus steps requires the transmission of floats due to the symmetry of . Note that the number A of averaged consensus iterations can vary depending on the connectivity of the network.

The second communication step involves the iterative estimation of the model parameters. Assuming that the update loop of D-R-ARD requires iterations, the distributed estimation of parameters with ADMM iterations then scales up as . Thus, the total communication load of D-R-ARD algorithm behaves as . Please note also that for this estimation of the communication load, the network structure remains unchanged.

3. Distributed Entropy-Driven Exploration for Sparse Bayesian Learning

The learning algorithm described in the previous section estimates the parameters of the model and given the measurements and . In the following, we focus on the question of how a new measurement is acquired in an optimal fashion. As we will show, the main criterion for this purpose is the information or, more specifically, the entropy change as a function of a possible sampling location.

3.1. D-Optimality

One possible strategy to optimally select a new measurement location

is provided by the theory of optimal experiment design. Optimal experiment design aims at optimizing the variance of an estimator through a number of optimality criteria. One of these criteria is a so-called D-optimality: it measures the “size” of an estimator covariance matrix by computing the volume of the corresponding uncertainty ellipsoid. More specifically, a determinant (or rather the logarithm of a determinant) of the covariance matrix is computed. The latter can then be optimized with respect to the experiment parameter. In our case, the covariance matrix

of the model parameters

is readily given in (

12) as a second central moment of

. Thus, the D-optimality criterion can be formulated as

where the dependency of

on measurement locations

has been made explicit. Note that due to the normality of the posterior pdf

, the term

is proportional to the entropy of

; thus, minimization of the criterion (

30) would imply a reduction of the entropy of the parameter estimates. Note that in contrast to [

14], the covariance matrix is not approximated here, but it is computed exactly based on the resulting probabilistic inference model. Our intention is now to evaluate and optimize (

30) as a function of the new possible sampling location

.

Let us consider a modification of the model (

7) as a function of the location

. The incorporation of

into (

7) would imply that the design matrix

would be extended as

where

is a new parameterization of a function

based on the new location

—a new regression feature. Let us stress that in general, the potential measurement at

does not have to lead to a new column in (

31)—columns, i.e., basis functions in

can be fixed from the initial design of the problem. In the latter case,

would be extended only by a row vector

. However, a basis function with a currently zero parameter weight estimate might be useful for explaining the new measurement value at

and, thus, might be activated. Our next step is to consider how

3.1.1. Measurement Only-Update of the D-Optimality Criterion

We will begin with considering the update of the D-optimality criterion with respect to a new measurement location

assuming that only the number of rows in

grows, while the number of features stays constant. In this case, (

31) can be represented as

Based on (

32), the new covariance matrix

that accounts for the new measurement location

can be computed as

where

and

is the assumed noise precision at the potential measurement location. It is worth noting that we assume every measurement to be independent white Gaussian noise.

By combining terms that depend on

, we can represent (

33) as

As we see from (

34), an addition of a new measurement row causes a rank-1 perturbation of the information matrix

. Using matrix determinant lemma [

37], we can thus compute

Note that

is independent of

, and thus, only the second term on the right-hand side of (

36) is relevant for the estimation.

Finally, the D-optimality criterion with respect to a location

can be formulated as

where we have exchanged minimization with a maximization by changing the sign of the cost function.

3.1.2. Computation of the D-Optimality Criterion with Addition of a New Feature

The computation of the D-optimality criterion becomes more involved when a measurement at a location is associated with a new feature . This can happen if, e.g., is a center or location of a new basis function.

Then, based on (

31), the new covariance matrix

that accounts for

and

is formulated as

where

is a sparsity parameter associated with a new column

. By combining terms that depend on

, we can represent (

38) as

To simplify the notation, let us define

which can be inserted into (

39), leading to

The first term in (

41) describes how much the new feature column contributes to the covariance matrix, while the second term represents the contribution of a measurement at location

. Let us now insert (

41) into the D-optimality criterion in (

30). By applying the matrix determinant lemma [

37] to the resulting expression, we compute

Now, consider separately the contribution of the two terms in the right-hand side of (

42) to the D-optimality criterion. For the first term, we can use the Schur complement [

38]

such that the first logarithmic term can be reformulated as

Note that is independent of and of , which is a fact that will become useful later.

To simplify the second term in the right-hand side of (

42), we first apply inversion rules for structured matrices [

39], which allows us to write

and thus

Finally, after inserting (

43) and (

45) into (

42), the D-optimality criterion with respect to a location

can be formulated as

where we have exchanged minimization with a maximization by changing the sign of the cost function, and we dropped

as it is independent of

and

.

3.1.3. Distributed Computation of the D-Optimality Criterion for SOE

Let us begin first with evaluating the D-optimality criterion for the SOE case. Evaluating (

37) for this data splitting is easier as compared with SOF.

Since

is known to each agent, the vector

can be evaluated without any cooperation between the agents. The covariance

can then be evaluated distributively using averaged consensus as

, where

is computed using network-wide averaging. To compute (

46), a few more steps are needed. Specifically, in addition to

, we also need to compute the quantities

and

in (

40) to evaluate the criterion. These can already be computed using averaged consensus as

Then, using (

47) and (

48) as well as

computed distributively, the criterion (

46) can be easily evaluated by each agent.

It is worth noting that the choice of

in (

48) is the only parameter that can be set manually in this exploration criterion. Basically, it controls how much we know about the potential measurement location. If

is large, the criterion would yield that the potential measurement location is not informative. On the other side, if

, the criterion yields that the considered measurement location is potentially informative. We set

for all considered measurement locations, such that the current information in the model determines how informative a measurement location could be.

3.1.4. Distributed Computation of the D-Optimality Criterion for SOF

For SOF, (

37) is unsuited for a distributed computation such that some changes have to be made. First, we define the following terms to facilitate the distributed formulation

where

and

. All terms in (

49)–(

51) can then be computed by means of an averaged consensus [

40,

41]. Next, we reformulate

with the help of the matrix-inversion-lemma as

Now, (

37) can be reformulated in a distributed setting for SOF as

For the case when the criterion (

46) is used for evaluaton of the D-optimality, the variable

in (

46) and the second additive term there have to be reformulated in a form suitable for SOF data splitting. For the former, we utilize the definitions in (

49)–(

51), together with (

52) such that

The other term in (

46) is then reformulated similarly using the results (

49)–(

52) as

As a result, the exploration criterion can be re-formulated for SOF in the following form

with

defined in (

54) and

given in (

53).

4. Experimental Setup

This section describes definition of the experimental setup, calibration of the sensors, and collection of ground-truth data for performance evaluation.

4.1. Map Construction

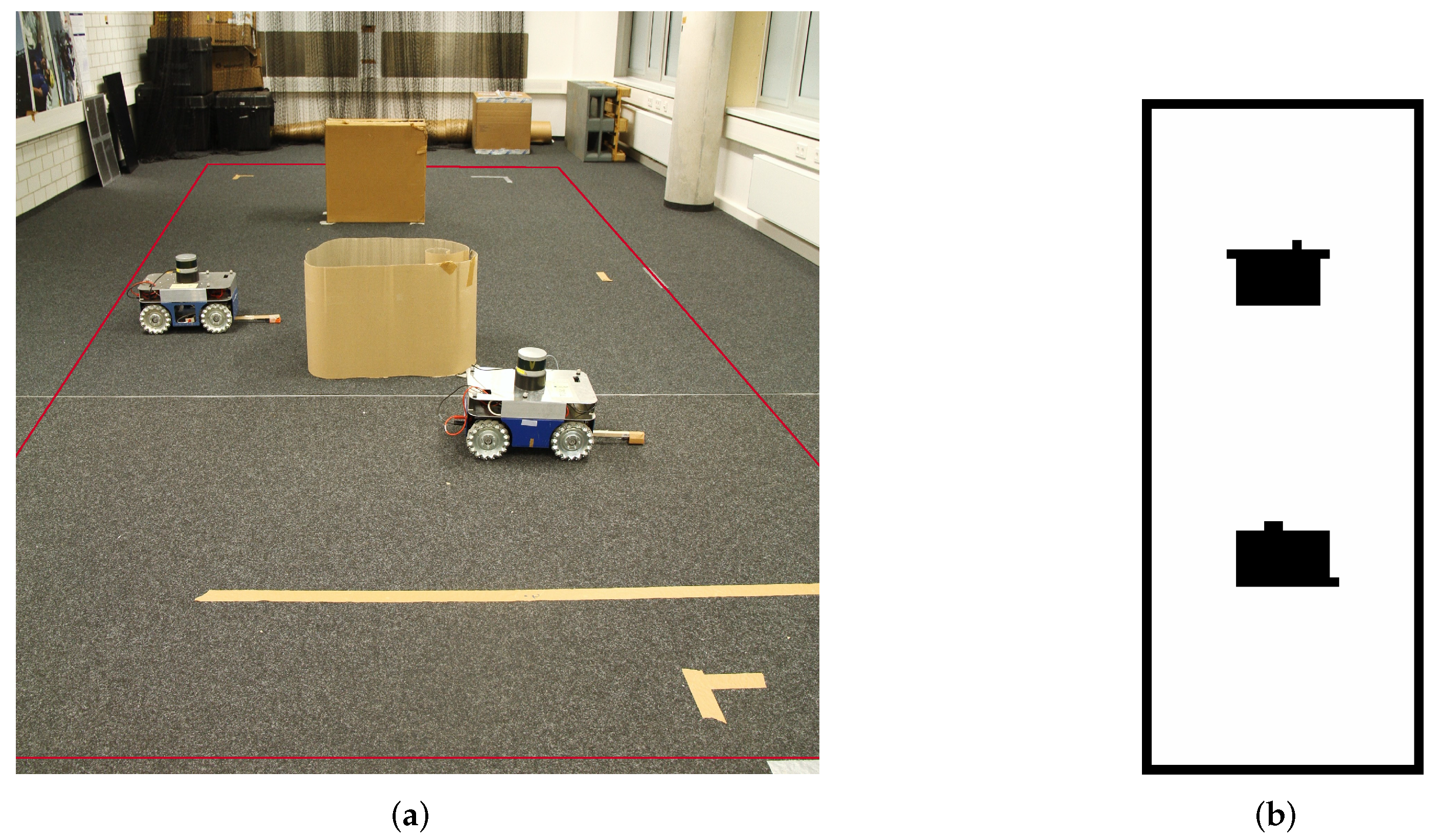

The following describes our experimental setup. We conducted the experiments indoor in our laboratory with two paper boxes as obstacles displayed in

Figure 1a. Red lines in the figure represent the borders of the experimental area. We use two Commonplace Robotics (

https://cpr-robots.com, accessed on 19 March 2022) ground-based robots with mecanum wheels; further in the text, we will refer to the robots as sliders due to their ability to move holonomically. To position the slider within the environment, the laboratory is equipped with 16 VICON (

https://www.vicon.com/, accessed on 19 March 2022) Bonita cameras. For the experiment itself, we assume that the map is a priori known to the system. Thus, we need to record the map before the experiment. So, a single slider is equipped with a light detection and ranging (LIDAR) sensor. We use a Velodyne (

https://velodynelidar.com/, accessed on 19 March 2022)

VLP-16 LIDAR and the corresponding robot operating system (ROS) package, which can be downloaded from the ROS repository. We construct the map while sending waypoints to the slider manually. The steering of the slider is done with the help of ROS’

navigation stack [

42] together with the

Teb Local Planner [

43]. The sensor output of the LIDAR and the slider position estimated by the VICON system are then used to generate a map with the

Octomap [

44] ROS package. Because we use the VICON position of the slider, which is accurate, this mapping procedure is simpler compared to simultaneous localization and mapping (SLAM) algorithms [

45,

46].

Figure 1b shows the constructed map, which is afterwards used in the experiment.

4.2. Sensor Calibration

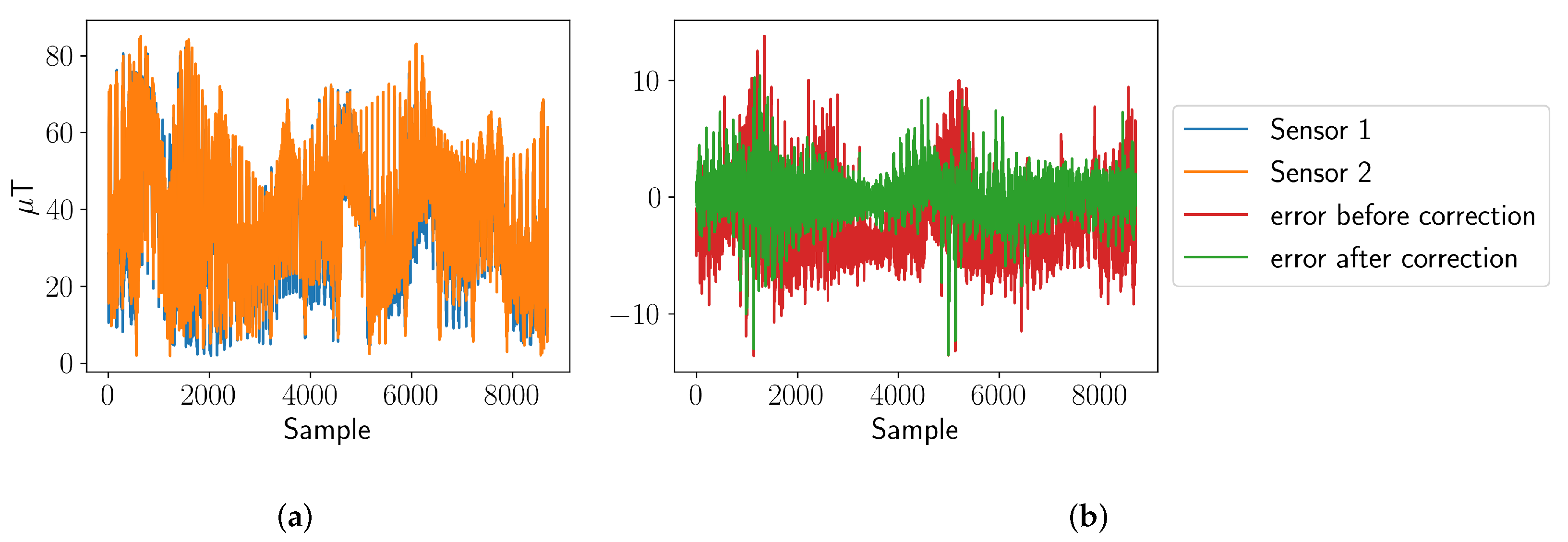

Each slider is equipped with a XSens MTw inertial measurement unit (IMU). The sensor comprises a three-axis magneto-resistive magnetometer, an accelerometer, gyroscopes, and a barometer. For the following experiment, we only use the magnetometer. The sensor is attached to a wooden stick to reduce the influence of the metal wheels on the measurement. Although the sliders are equipped with sensors from the same product line of the same manufacturer, their absolute perception differs. Additionally, the sensors can still perceive the metal in the wheels of the robots. Therefore, we need to calibrate the sensors relatively to each other to perceive the environment equally using the approach proposed in [

47].

The authors in [

47] assume that the sensor readings of one sensor can be expressed as another sensor’s reading through an affine transformation. To estimate the rotation and translation, multiple sensor readings of all sensors have to be acquired. These readings are then exploited to estimate the rotation and translation relative to one specific sensor by means of a least squares method. In this experiment, each magnetic field sensor reads at a position

one measurement of the magnetic field per Euclidean axis. During the estimation, absolute values of these measurements are used.

Figure 2a shows the absolute values of the sensor readings for multiple measurement locations of two sensors. The error of the sensor readings before and after calibration are presented in

Figure 2b. The correction thus reduces the bias and the standard deviation of the error between both sensors.

However, this calibration is only useful if the orientation of both sensors is constant during the experiment. As the sensors always measure in the same orientation, this assumption is fulfilled for our experiments. For further information on intrinsic calibration of inertial and magnetic sensors, the reader is referred to [

48].

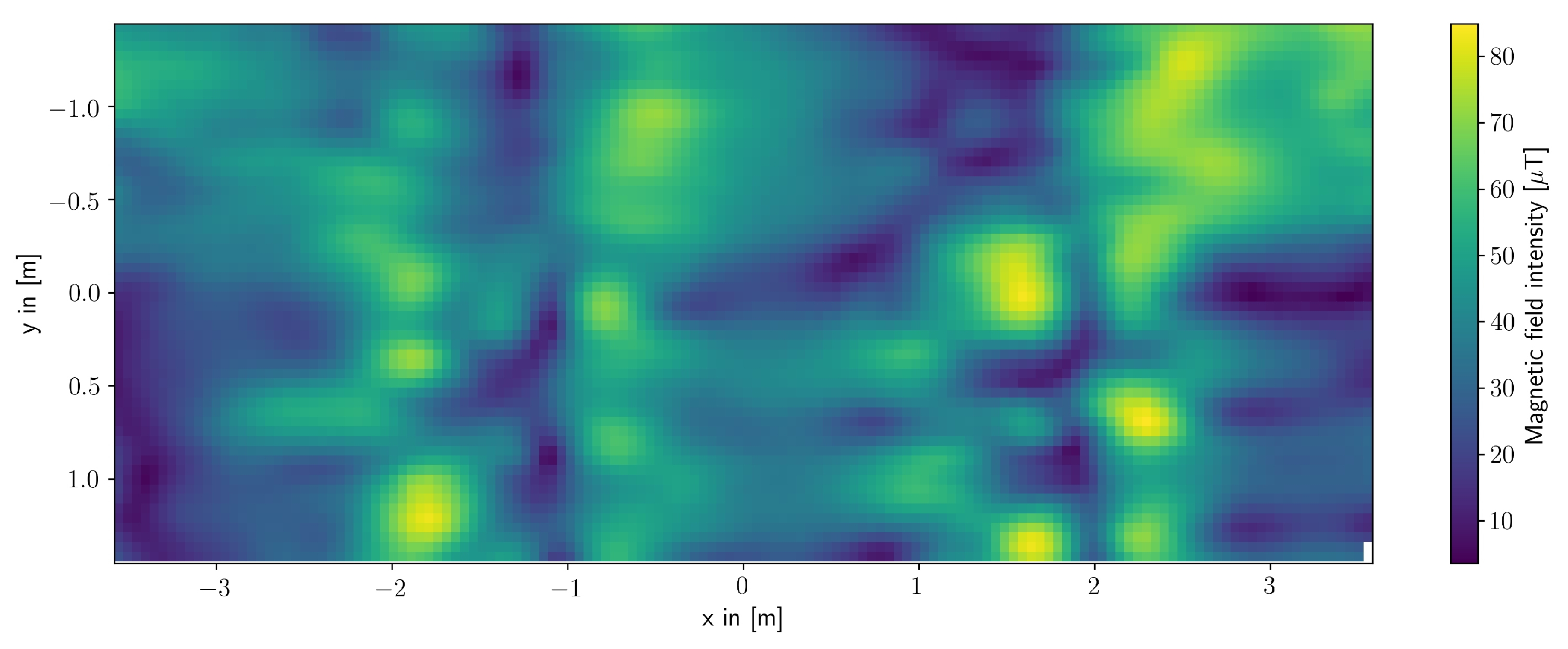

4.3. Collecting Ground Truth Data

In order to evaluate the performance of the distributed exploration, we also need to know the actual magnetic field in the laboratory—a ground truth data. For collecting the ground truth data, one slider measures the area of the Holodeck in a systematic fashion, where the distance between each measurement was set to be 5 cm such that in total, 8699 measurement points were collected. On each measurement position, multiple sensor readings are taken and averaged. The resulting ground truth is displayed in

Figure 3.

5. Experimental System Design

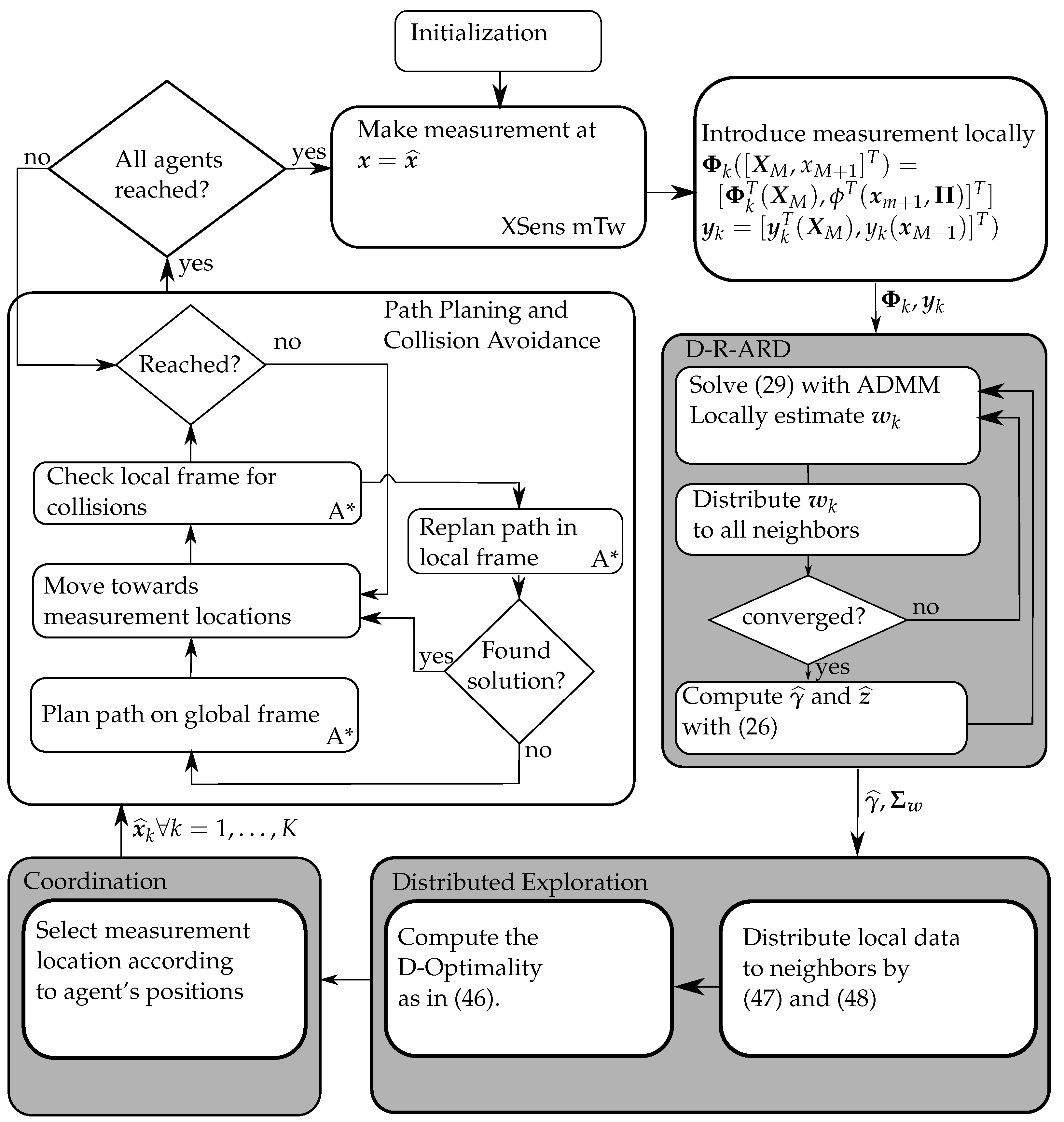

Our setup relies on ROS (

https://www.ros.org/, accessed on 19 March 2022), which manages the communication between all software modules called

nodes. On each slider, several ROS nodes are running such as the motor controller, which translates the measurement locations into velocity commands for each wheel, the path-planner, and the sensor.

As a path-planner, we use the popular A* [

49,

50]. We implemented the A* algorithm as a global and as a local planner, which is utilized for collision avoidance. Therefore, each slider does not only consider the global map but also a local map around its current position.

After receiving a new waypoint, the global path planner estimates a path in the global map from the current position to the goal avoiding the obstacles. If there is no other robotic system in its path, the goal is reached. However, if another slider enters the local frame while the robot is on its way toward the goal, the robot stops, and the path within the local frame is re-planned to avoid collisions. If the planner is not able to find a solution in the local frame within a given time, the global path planning is re-initiated, taking the current slider as an obstacle into account.

The whole system design for this experiment is shown in

Figure 4. The distributed exploration criterion uses the computed map excluding the locations of the obstacles. In addition, the map information is used by the path-planner to find an obstacle-free path to the estimated measurement location

.

Figure 4 also describes the process-flow of the whole system.

For comparison, we will use non-Bayesian SOF and SOE formulations as discussed in [

14]. As in these formulations, the ADMM algorithm [

33] was used for estimation, we will refer to them as ADMM for SOF and ADMM for SOE, respectively. For the Bayesian learning and algorithms discussed in this paper, we will refer to them as D-R-ARD for SOF and the D-R-ARD for SOE (see also

Table 1).

In the experiments, we will set the number of basis functions to

, which also determines the size of the vector

. The basis functions are distributed in a regular grid. We consider Gaussian basis functions with a width set to

such that

where

and

.

After initialization of the system, every agent takes a first measurement and incorporates it in its local measurement model to calculate the first estimate of the regression. Then, each algorithm requires that the intermediate estimated parameter weights are distributed to the neighbors (following

Figure 4) to do an average consensus [

40,

41]. Consequently, each agent can proceed to estimate with the regression using the averaged intermediate parameter weights. When the distributed regression converged, the agents use the estimated covariance matrix in the distributed exploration step. In this step, the agents propose candidate positions to their neighbors and receive information to compute the D-optimality criterion locally. When the best next measurement locations are chosen, they are passed to the coordination part [

51] to verify that all agents go to different positions. If the measurement location is considered as valid, an agent locally plans its path on the global frame to reach the goal. While approaching the goal, the agent checks if other agents entered into the local frame to avoid collisions. When all agents reached their goal, the agents take measurements and the process flow continues.

As evaluation metric, we chose the normalized mean square error (NMSE), which can be defined as

where

is the ground truth measured at

positions

. Here, we set

, and these locations are equal to the center positions of the Gaussian basis functions.

6. Experimental Validation

Figure 5 shows the NMSE of all conducted experiments with respect to time (top plot) and to the number of measurements (bottom plot). Each experimental run has a different duration, and the ROS system uses asynchronous interprocess communication resulting in asynchronous time-steps. Thus, all runs of one particular algorithm are visualized as a scatter plot. The number of measurements varies because the computation time for each measurement could be different. As a consequence, an averaging along multiple experimental runs along the time axis is not reasonable. For both ADMM algorithms, we conducted four experiments, whereas for each D-R-ARD algorithm, we conducted two experiments. The corresponding results are summarized in

Figure 5.

When looking at the top plot in

Figure 5, the D-R-ARD for SOE has the best performance because the NMSE is reduced faster compared to the other methods.

Regarding the ADMM algorithms, the SOE paradigm has a brief benefit until the 1200 s until SOF paradigm outperforms the SOE paradigm. The weak performance of D-R-ARD for SOF might result from the distributed structure of the algorithm, which requires the algorithm to compute a matrix inversion in each iteration together with the computational complex estimation of parameter weights and variances. In contrast to that, the corresponding algorithm with the SOE distribution paradigm is able to cache the matrix inversion, which drastically increases the performance. Yet, the D-R-ARD algorithms have generally a higher computational complexity compared to the ADMM algorithms. This is due to the fact that the Bayesian methods require the covariance to be computed in each iteration. The ADMM algorithm, in contrast, does not require this.

The plot at the bottom of

Figure 5 displays the NMSE with respect to the number of obtained measurements. There, the D-R-ARD for SOF and ADMM for SOF have almost the same performance. However, the ADMM for SOF is able to achieve substantially more measurements because it is computationally less complex. Consequently, the ADMM for SOF achieves not only more measurements but is on a par with the D-R-ARD for SOF when it comes to efficiency per measurement.

For the SOE distribution paradigm, on the contrary, it is beneficial to use the Bayesian methodology. In the experiments we present here, the D-R-ARD for SOE achieves a lower NMSE with fewer measurements compared to ADMM for SOE algorithm. This could be due to the fact that D-R-ARD for SOE computes the entropy of the parameter weights and does not approximate it. The computed entropy seems then to be better for the D-optimality criterion than the approximated version for the ADMM for SOE.

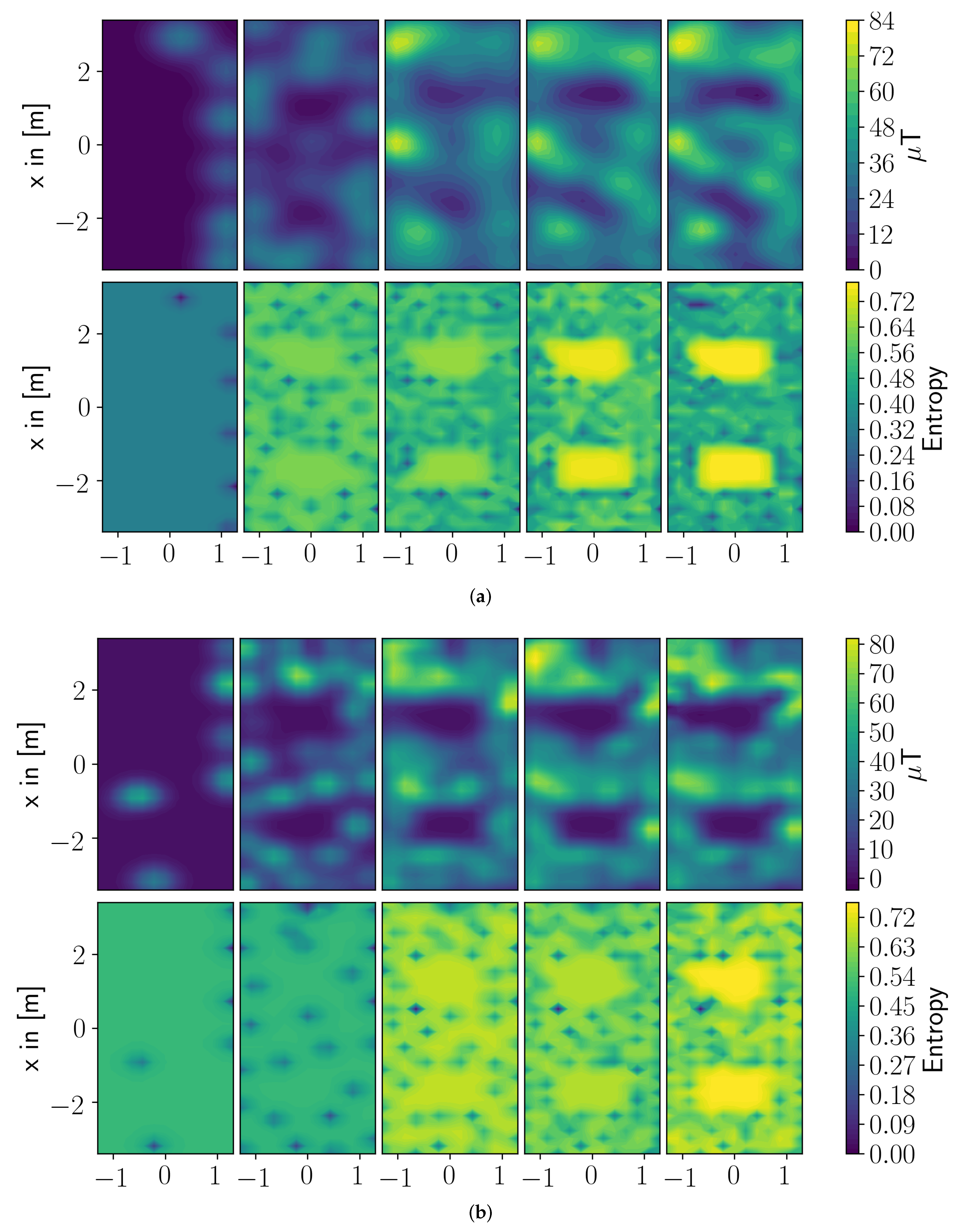

To support the claim that the Bayesian framework estimates a better covariance of the parameter weights when the SOE paradigm is applied,

Figure 6a,b present the estimated magnetic field and the estimated covariance at different timesteps. In both figures, the left most plots display the beginning of the experiment and the most right plots show the end result of the experiment. At the beginning of the experiments, both algorithms—ADMM and D-R-ARD for SOE—estimate a sparse covariance with not much difference. As the measurements increase, the approximated covariance becomes smoother, and the covariance estimated in the Bayesian framework stays sparse. This effect might result from the approximation of a covariance as introdcued in [

14], where a penalty parameter needs to be chosen as a compromise between sparsity and a reasonably well approximated covariance.

As a second remark, the ADMM algorithms involve a thresholding operator, which sets all not used basis functions to zero such that these basis functions can not be considered by the exploration step. This is controlled by a manually set penalty parameter and might be sub-optimal. The D-R-ARD for SOE, on the other side, estimates a hyper-parameter for each basis function based on the current data. Therefore, the influence of each basis function is addressed more individually and, hence, leads to a better covariance estimate. The way basis functions and parameter weights are introduced in the SOF paradigm makes this effect eventually not observable between the Bayesian and the Frequentist framework.

7. Conclusions

The presented paper proposes and validates a method for spatial regression using Sparse Bayesian Learning (SBL) and exploration, which are both implemented over a network of interconnected mobile agents. The spatial process of interest is described as a linear combination of parameterized basis functions; by constraining the weights of these functions in the final representation using a sparsifying prior, we find a model with only a few, relevant functions contributing to the model. The learning is implemented in a distributed fashion, such that no centralized processing unit is necessary. We also considered two conceptually different distribution paradigms splitting-over-features (SOF) and splitting-over-examples (SOE). To this end, a numerical algorithm based on alternating direction method of multipliers is used.

The learned representation is used to devise an information-driven optimal data collection approach. Specifically, the perturbation of the parameter covariance matrix with respect to a new measurement location is derived. This perturbation allows us to choose new measurement locations for agents such that the size of the resulting joint parameter uncertainty, as measured by the log-determinant of the covariance, is minimized. We show also how this criterion can be evaluated in a distributed fashion for both distribution paradigms in an SBL framework.

The resulting scheme thus includes two key steps: (i) cooperative sparse models that fit data collected by agents, and (ii) the cooperative identification of new measurement locations that optimizes the D-optimality criterion. To validate the performance of the scheme, we set up an experiment involving two mobile robots that navigated in an environment with obstacles. The robots were tasked with reconstructing the magnetic field which was measured on the floor of the laboratory by a magnetometer sensor. We tested the proposed scheme against a non-Bayesian sparse regression method and a similar exploration criterion.

The experimental results show that the Bayesian methods explore more efficiently than the benchmark algorithms. Efficiency is measured as the reduction of error over the number of measurements or the reduction of error over time. The reason is that the used Bayesian method directly computes the covariance matrix from the data and has fewer parameters that have to be manually adjusted. The exploration with these methods is therefore simpler to set up as compared with non-Bayesian inference approaches studied before. Yet, for the SOF distribution paradigm, the Bayesian method is computationally too demanding such that significantly fewer measurements can be collected in the same amount of time as compared with the non-Bayesian learning method.