Acceleration of Global Optimization Algorithm by Detecting Local Extrema Based on Machine Learning

Abstract

1. Introduction

2. Problem Statement

3. Methods

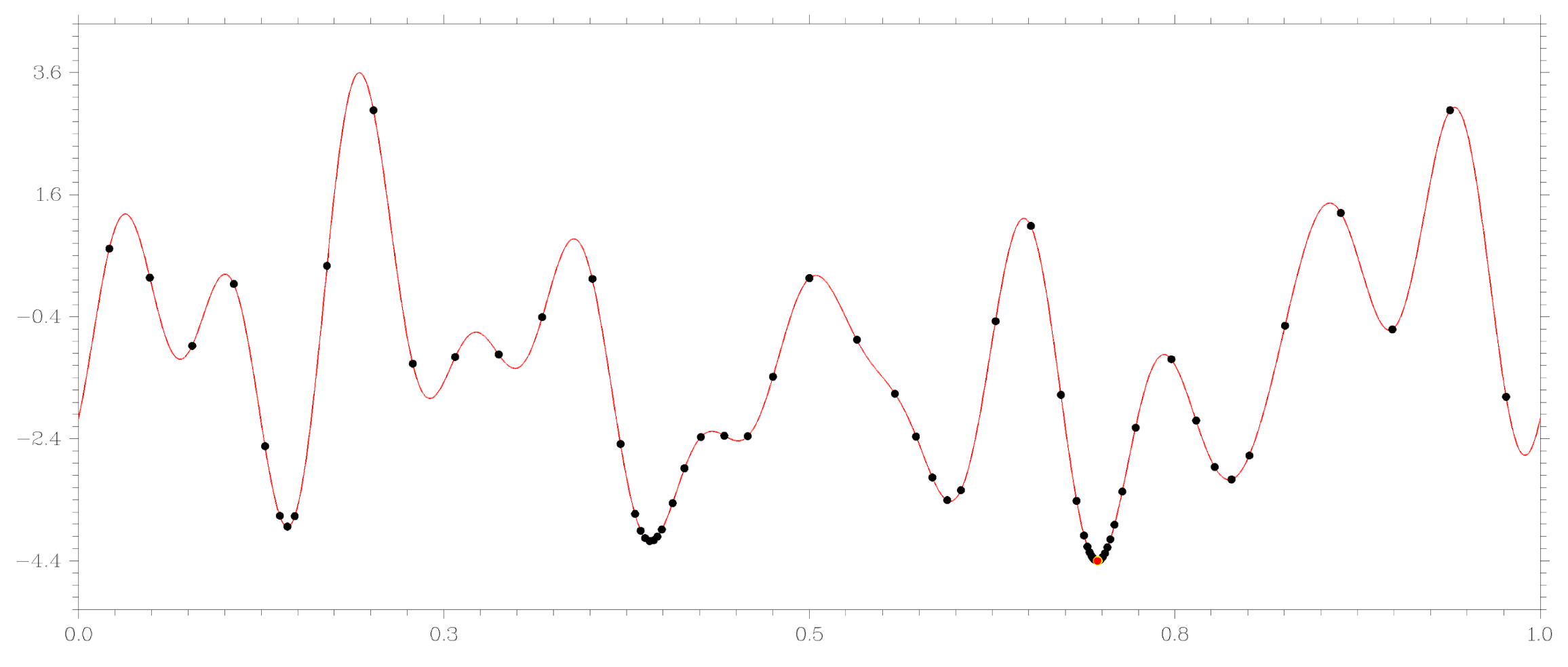

3.1. Core Global Search Algorithm

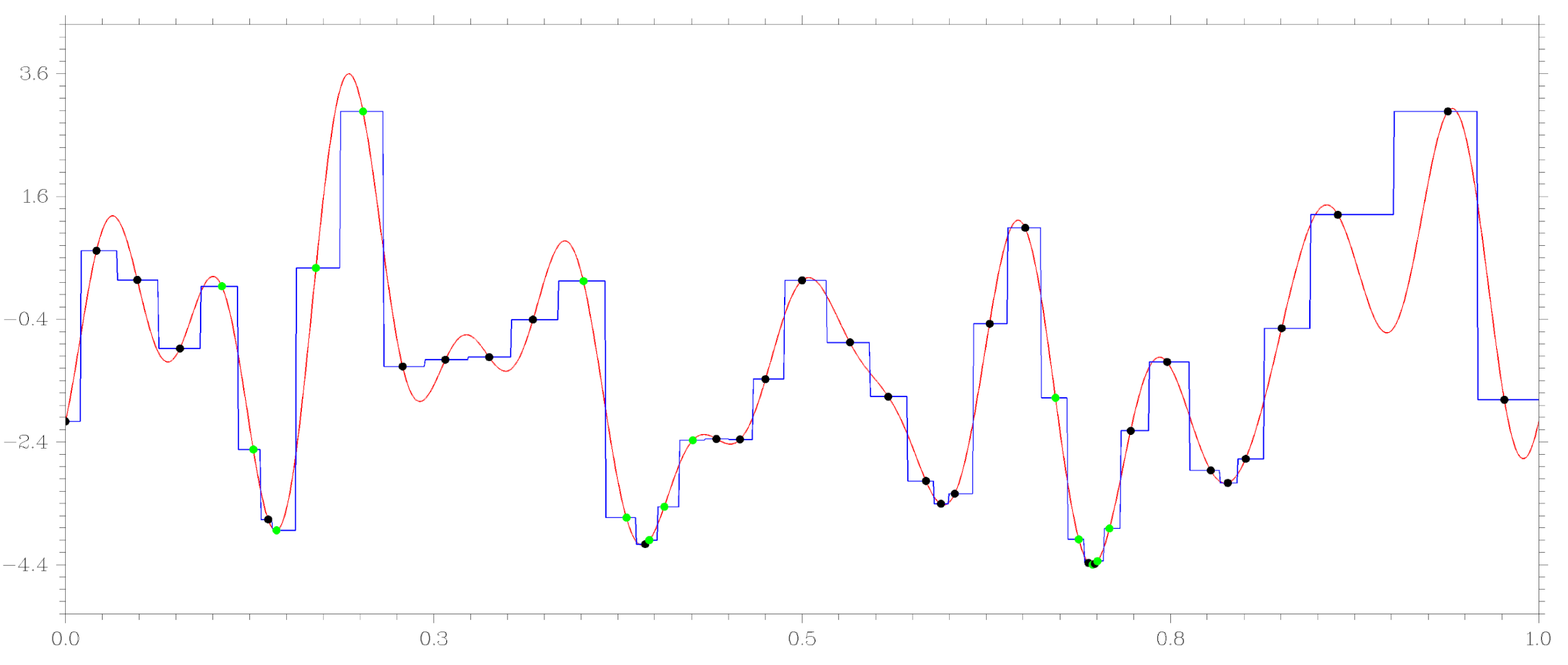

3.2. Machine Learning Regression as a Tool for Identifying Attraction Regions of Local Extrema

- Search domain $D$ is divided into $J$ non-overlapping subdomains $D_1, \dots, D_J$, provided that $D = \bigcup_{j=1}^{J} D_j$.

- Any point $y$ falling into the subdomain $D_j$, i.e., $y \in D_j$, is matched to the average of the function values over the training trials that fall into this subdomain.

- The number of trial points assigned to the node becomes less than the specified threshold value (we used 1).

- The sum of the squared deviations of the function values from the value assigned to this node becomes less than the set accuracy (we used ).

- ;

- and ;

- and ;
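The partition-and-average rule and the two stopping conditions listed earlier in this section can be illustrated with a minimal one-dimensional regression tree. This is only a sketch under stated assumptions, not the authors' implementation: the split criterion (least squares over candidate midpoints, as in standard CART), the names `Node`, `build_tree`, `predict`, and the default `accuracy` value are all hypothetical. The threshold rule is read here as "at most `min_points` points", since a node with fewer than one point cannot exist.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Node:
    """A leaf stores a constant; an inner node also stores a split."""
    value: float                       # average of the function values in the node
    threshold: Optional[float] = None  # split point, None for a leaf
    left: Optional["Node"] = None
    right: Optional["Node"] = None


def sse(zs: List[float]) -> float:
    """Sum of squared deviations of the values from their average."""
    mean = sum(zs) / len(zs)
    return sum((z - mean) ** 2 for z in zs)


def build_tree(points: List[Tuple[float, float]],
               min_points: int = 1,
               accuracy: float = 1e-8) -> Node:
    """Build a tree from trials given as (x, z) pairs.

    min_points and accuracy mirror the two stopping rules in the text;
    the value 1e-8 is an assumed placeholder, not taken from the paper.
    """
    points = sorted(points)
    zs = [z for _, z in points]
    node = Node(value=sum(zs) / len(zs))
    # Stopping rules: too few trials in the node, or the node is accurate enough.
    if len(points) <= min_points or sse(zs) < accuracy:
        return node
    # Assumed CART-style split: minimise the total squared deviation of both halves.
    best = None
    for i in range(1, len(points)):
        if points[i - 1][0] == points[i][0]:
            continue  # cannot split between identical coordinates
        cost = sse(zs[:i]) + sse(zs[i:])
        if best is None or cost < best[0]:
            best = (cost, i)
    if best is None:
        return node
    i = best[1]
    node.threshold = 0.5 * (points[i - 1][0] + points[i][0])
    node.left = build_tree(points[:i], min_points, accuracy)
    node.right = build_tree(points[i:], min_points, accuracy)
    return node


def predict(node: Node, x: float) -> float:
    """Return the average value of the subdomain that contains x."""
    while node.threshold is not None:
        node = node.left if x < node.threshold else node.right
    return node.value


trials = [(0.0, 1.0), (1.0, 1.0), (2.0, 5.0), (3.0, 5.0)]
tree = build_tree(trials)
print(predict(tree, 0.4))  # 1.0
print(predict(tree, 2.6))  # 5.0
```

In this toy run the tree splits once at 1.5, so the two leaves play the role of two subdomains, each returning the average of the trials it contains.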

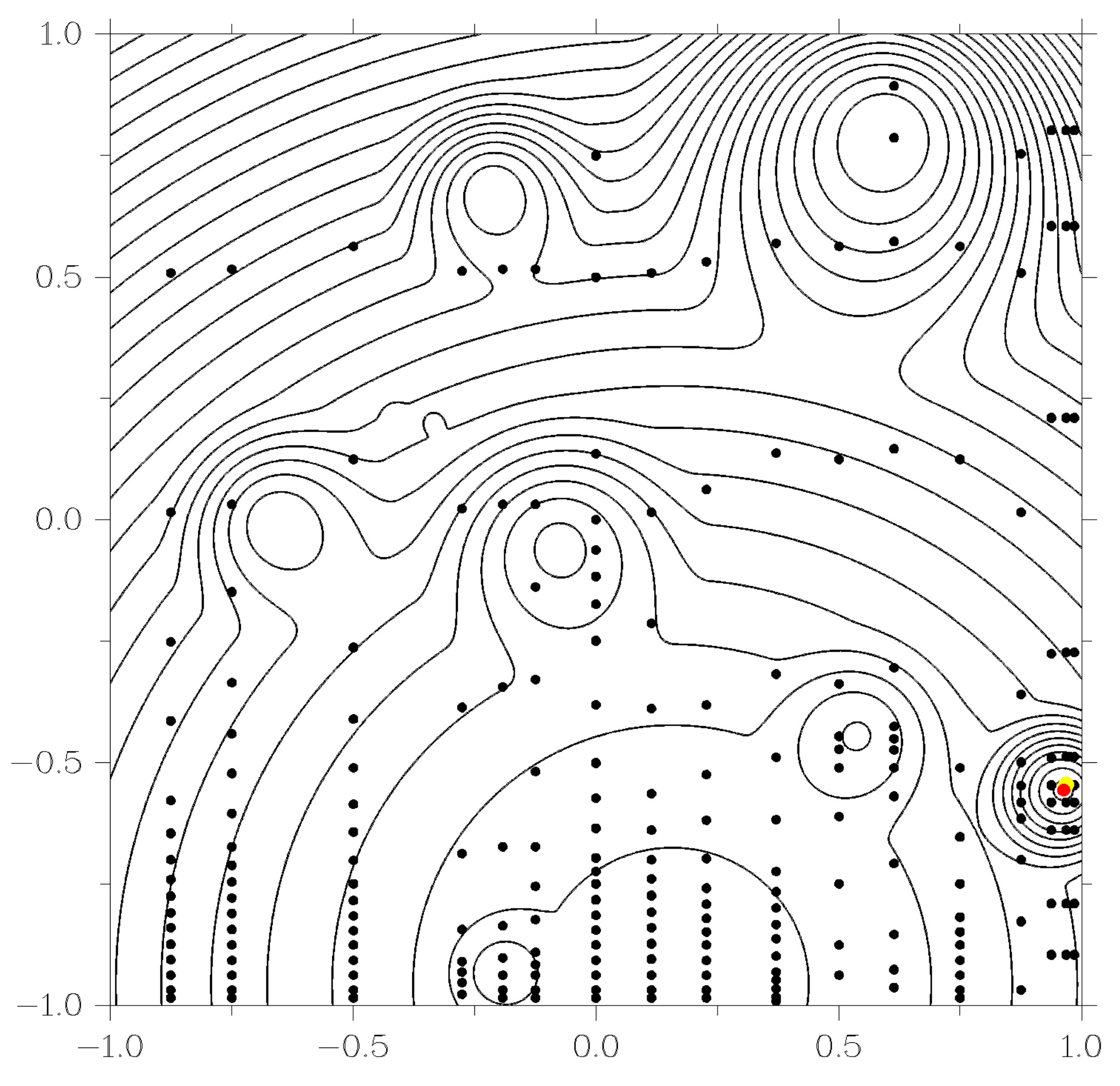

3.3. Adaptive Dimension Reduction Scheme

- to calculate the value of the i-th level function from (12), a new problem of the next level is generated, in which only one trial is carried out; the newly generated problem is then added to the set of existing problems to be solved;

- an iteration of the global search consists of choosing one (the most promising) problem from the set of available problems and carrying out one trial in it; the new trial point is determined by the basic global search algorithm from Section 3.1 or by the modified algorithm from Section 3.2;

- the minimum values of the functions from (12) are taken to be their current estimates, obtained from the accumulated search information.
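The bookkeeping described by the three rules above can be sketched as follows. This is a rough illustration, not the authors' algorithm: the trial-point rule (midpoint of the widest unexplored gap) and the ranking of problems (`score`, with the constant `SLOPE`) are deliberately simple stand-ins for the characteristics of the global search algorithm from Section 3.1, and the names `Problem`, `run_trial`, `minimize`, and the unit search interval are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

A, B = 0.0, 1.0  # assumed search interval for every coordinate
SLOPE = 4.0      # hypothetical constant, used only to rank problems


@dataclass
class Problem:
    """One-dimensional problem with the upper-level coordinates fixed."""
    fixed: Tuple[float, ...]
    points: List[float] = field(default_factory=list)
    values: Dict[float, float] = field(default_factory=dict)        # last level
    children: Dict[float, "Problem"] = field(default_factory=dict)  # upper levels

    def estimate(self) -> float:
        # Upper-level values are the *current* estimates of the child problems.
        return min(self.children[x].estimate() if x in self.children
                   else self.values[x] for x in self.points)

    def widest_gap(self) -> Tuple[float, float, float]:
        grid = [A] + sorted(self.points) + [B]
        return max((hi - lo, lo, hi) for lo, hi in zip(grid, grid[1:]))

    def next_point(self) -> float:
        _, lo, hi = self.widest_gap()
        return 0.5 * (lo + hi)  # stand-in for the GSA trial-point rule

    def score(self) -> float:
        # Lower is more promising: good estimate and a large unexplored gap.
        return self.estimate() - SLOPE * self.widest_gap()[0]


def run_trial(problem: Problem, f: Callable[[Tuple[float, ...]], float],
              dim: int, pool: List[Problem]) -> None:
    """Carry out exactly one trial in the given problem."""
    x = problem.next_point()
    problem.points.append(x)
    coords = problem.fixed + (x,)
    if len(coords) == dim:
        problem.values[x] = f(coords)   # last level: evaluate the objective
    else:
        child = Problem(fixed=coords)   # new problem of the next level
        problem.children[x] = child
        run_trial(child, f, dim, pool)  # only one trial is carried out in it
        pool.append(child)              # then it joins the set of problems


def minimize(f: Callable[[Tuple[float, ...]], float],
             dim: int, iterations: int) -> float:
    root = Problem(fixed=())
    pool = [root]
    run_trial(root, f, dim, pool)
    for _ in range(iterations):
        best = min(pool, key=Problem.score)  # choose the most promising problem
        run_trial(best, f, dim, pool)
    return root.estimate()


objective = lambda y: (y[0] - 0.25) ** 2 + (y[1] - 0.75) ** 2
print(minimize(objective, dim=2, iterations=60))
```

Because every problem keeps all of its trials, the estimate returned by `root.estimate()` can only improve or stay the same as iterations are added, which matches the idea that upper-level values are current estimates rather than exact minima.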

4. Experimental Results

| Method | | | |
|---|---|---|---|
| DIRECT | 64(1) | 34(6) | 20(17) |
| GSA | 106 | 53 | 31 |
| GSA-DT | 49 | 43 | 35 |

| Method | | | |
|---|---|---|---|
| DIRECT | 66(12) | 36(31) | 20(51) |
| GSA | 130 | 75 | 43 |
| GSA-DT | 64 | 59 | 50 |

- the dimensionality of the problem N;

- the number of local minima l;

- the value of the global minimum;

- the radius of the area of attraction of the global optimizer;

- the distance between the global optimizer and the vertex of the paraboloid.

| Method | | | |
|---|---|---|---|
| GSA | 937 | 12716 | 206869 |
| GSA-DT | 653 | 9204 | 156190 |

| Method | | | |
|---|---|---|---|
| GSA | 1489 | 69764 | 583903 |
| GSA-DT | 831 | 10776 | 173155 |

5. Conclusions and Future Work

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Conflicts of Interest


Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Barkalov, K.; Lebedev, I.; Kozinov, E. Acceleration of Global Optimization Algorithm by Detecting Local Extrema Based on Machine Learning. Entropy 2021, 23, 1272. https://doi.org/10.3390/e23101272
