2.1. Restricted Boltzmann Machines

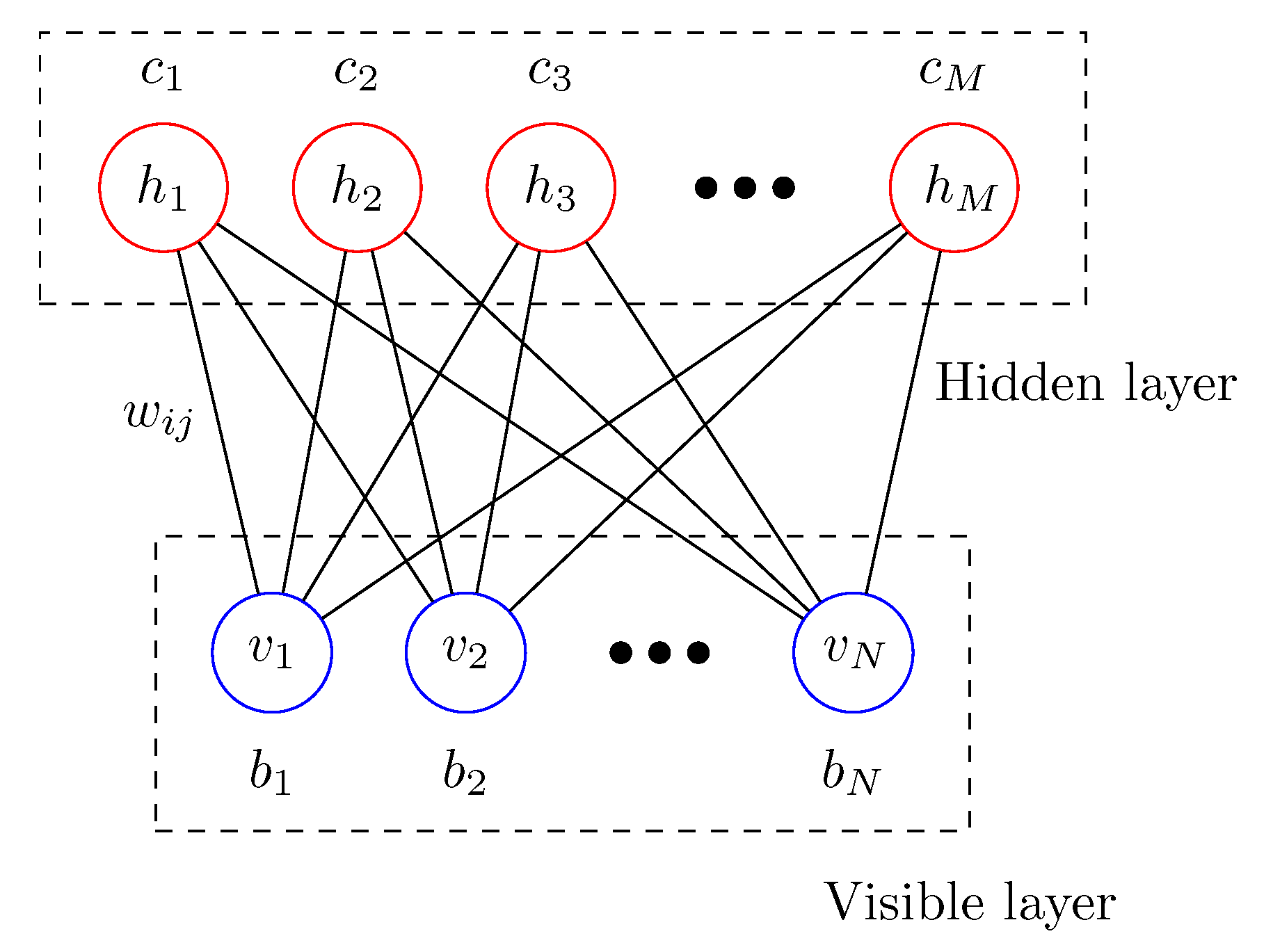

Let us start with a brief introduction to the RBM [1,2,3]. As shown in Figure 1, the RBM is composed of two layers: the visible layer and the hidden layer. Possible configurations of the visible and hidden layers are represented by the random binary vectors $\mathbf{v} = (v_1, \dots, v_N) \in \{0,1\}^N$ and $\mathbf{h} = (h_1, \dots, h_M) \in \{0,1\}^M$, respectively. The interaction between the visible and hidden layers is given by the so-called weight matrix $\mathbf{w}$, where the weight $w_{ij}$ is the connection strength between a visible unit $v_i$ and a hidden unit $h_j$. The biases $a_i$ and $b_j$ are applied to visible unit $i$ and hidden unit $j$, respectively. Given random vectors $\mathbf{v}$ and $\mathbf{h}$, the energy function of the RBM is written as an Ising-type Hamiltonian,

$$E(\mathbf{v},\mathbf{h};\theta) = -\sum_{i=1}^{N}\sum_{j=1}^{M} v_i w_{ij} h_j - \sum_{i=1}^{N} a_i v_i - \sum_{j=1}^{M} b_j h_j,$$

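where the set of model parameters is denoted by $\theta \equiv \{w_{ij}, a_i, b_j\}$. The joint probability of finding the visible and hidden layers of the RBM in a particular state $(\mathbf{v},\mathbf{h})$ is given by the Boltzmann distribution

$$p(\mathbf{v},\mathbf{h};\theta) = \frac{e^{-E(\mathbf{v},\mathbf{h};\theta)}}{Z(\theta)}, \tag{2}$$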

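where the partition function, $Z(\theta) = \sum_{\mathbf{v},\mathbf{h}} e^{-E(\mathbf{v},\mathbf{h};\theta)}$, is the sum over all possible configurations. The marginal probabilities $p(\mathbf{v};\theta)$ and $p(\mathbf{h};\theta)$ for the visible and hidden layers are obtained by summing over the hidden or visible variables, respectively,

$$p(\mathbf{v};\theta) = \sum_{\mathbf{h}} p(\mathbf{v},\mathbf{h};\theta), \qquad p(\mathbf{h};\theta) = \sum_{\mathbf{v}} p(\mathbf{v},\mathbf{h};\theta).$$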
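For a small RBM, the Boltzmann distribution and its marginals can be evaluated exactly by enumerating every configuration. The following is a minimal sketch under stated assumptions: the function names, the bias symbols `a` and `b`, and the random parameter values are illustrative and are not taken from the paper.

```python
import itertools
import numpy as np

def all_states(n):
    """All 2**n binary vectors of length n, one per row."""
    return np.array(list(itertools.product([0, 1], repeat=n)), dtype=float)

def energy(v, h, w, a, b):
    """Ising-type RBM energy of a single configuration (v, h)."""
    return -(v @ w @ h) - a @ v - b @ h

def joint_distribution(w, a, b):
    """Exact Boltzmann distribution p(v, h) and partition function Z."""
    V, H = all_states(w.shape[0]), all_states(w.shape[1])
    # E[k, l]: energy of the k-th visible and l-th hidden configuration.
    E = -(V @ w @ H.T) - (V @ a)[:, None] - (H @ b)[None, :]
    boltzmann = np.exp(-E)
    Z = boltzmann.sum()
    return boltzmann / Z, Z

# Illustrative parameters (hypothetical values, not from the paper).
rng = np.random.default_rng(0)
N, M = 4, 6
w = rng.normal(scale=0.1, size=(N, M))
a = rng.normal(scale=0.1, size=N)
b = rng.normal(scale=0.1, size=M)

p_vh, Z = joint_distribution(w, a, b)   # table of shape (2**N, 2**M)
p_v = p_vh.sum(axis=1)                  # marginal of the visible layer
p_h = p_vh.sum(axis=0)                  # marginal of the hidden layer
```

Brute-force enumeration is only practical when the number of units is small, since the table has $2^{N+M}$ entries.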

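The training of the RBM adjusts the model parameters $\theta$ such that the marginal probability of the visible layer, $p(\mathbf{v};\theta)$, becomes as close as possible to the unknown probability $q(\mathbf{v})$ that generates the training data. Given independently and identically sampled training data $\mathcal{D} = \{\mathbf{v}^{(1)}, \dots, \mathbf{v}^{(D)}\}$, the optimal model parameters $\theta$ can be obtained by maximizing the likelihood function of the parameters, $\mathcal{L}(\theta|\mathcal{D}) = \prod_{k=1}^{D} p(\mathbf{v}^{(k)};\theta)$, or equivalently by maximizing the log-likelihood function $\ln \mathcal{L}(\theta|\mathcal{D}) = \sum_{k=1}^{D} \ln p(\mathbf{v}^{(k)};\theta)$. Maximizing the likelihood function is equivalent to minimizing the Kullback–Leibler divergence or the relative entropy of $p(\mathbf{v};\theta)$ from $q(\mathbf{v})$ [15,16],

$$D_{\mathrm{KL}}(q\,\|\,p) = \sum_{\mathbf{v}} q(\mathbf{v}) \ln \frac{q(\mathbf{v})}{p(\mathbf{v};\theta)}, \tag{5}$$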

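where $q(\mathbf{v})$ is the unknown probability that generates the training data. Another method of monitoring the progress of training is the cross-entropy cost between the input visible vector $\mathbf{v}$ and a reconstructed visible vector $\bar{\mathbf{v}}$ of the RBM,

$$C = -\sum_{i=1}^{N} \left[ v_i \ln \bar{v}_i + (1 - v_i) \ln (1 - \bar{v}_i) \right]. \tag{6}$$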
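The two monitoring quantities can be written as short functions. This is a minimal sketch: the function names, the clipping constant `eps`, and the example distributions (a target that is uniform over 6 of 16 states, and a model that is uniform over all 16) are assumptions made for illustration only.

```python
import numpy as np

def kl_divergence(q, p):
    """D_KL(q || p) = sum_v q(v) ln[q(v) / p(v)], with the convention 0 ln 0 = 0."""
    q, p = np.asarray(q, dtype=float), np.asarray(p, dtype=float)
    mask = q > 0
    return float(np.sum(q[mask] * np.log(q[mask] / p[mask])))

def reconstruction_cross_entropy(v, v_rec, eps=1e-12):
    """Cross entropy between a binary input v and its reconstruction v_rec."""
    v, v_rec = np.asarray(v, dtype=float), np.asarray(v_rec, dtype=float)
    v_rec = np.clip(v_rec, eps, 1.0 - eps)  # avoid log(0)
    return float(-np.sum(v * np.log(v_rec) + (1 - v) * np.log(1 - v_rec)))

# Hypothetical example: target uniform over 6 of 16 states, model uniform over 16.
q = np.zeros(16)
q[[0, 3, 5, 10, 12, 15]] = 1.0 / 6.0
p = np.full(16, 1.0 / 16.0)
d_kl = kl_divergence(q, p)  # equals ln(16/6) for this choice
```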

The stochastic gradient ascent method for the log-likelihood function is used to train the RBM. Estimating the gradient of the log-likelihood function requires Monte-Carlo sampling from the model probability distribution. Well-known sampling methods are contrastive divergence, denoted by CD-$k$, and persistent contrastive divergence, denoted by PCD-$k$. For details of the RBM algorithm, please see References [2,3,4]. Here, we employ the CD-$k$ method.
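To make the training step concrete, the following is a minimal sketch of a CD-1 update (the $k = 1$ case) for binary units. It is not the authors' implementation: the learning rate, the full-batch update, the use of hidden probabilities in the gradient, and all function names are assumptions chosen for illustration.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def cd1_update(v0, w, a, b, lr, rng):
    """One CD-1 parameter update from a batch v0 of shape (batch, N)."""
    # Positive phase: hidden probabilities and samples given the data.
    ph0 = sigmoid(v0 @ w + b)
    h0 = (rng.random(ph0.shape) < ph0).astype(float)
    # Negative phase: one Gibbs step down to the visible layer and up again.
    pv1 = sigmoid(h0 @ w.T + a)
    v1 = (rng.random(pv1.shape) < pv1).astype(float)
    ph1 = sigmoid(v1 @ w + b)
    # Gradient ascent on the CD approximation of the log-likelihood gradient.
    n = v0.shape[0]
    w = w + lr * (v0.T @ ph0 - v1.T @ ph1) / n
    a = a + lr * (v0 - v1).mean(axis=0)
    b = b + lr * (ph0 - ph1).mean(axis=0)
    # Reconstructed cross entropy, averaged over the batch.
    eps = 1e-12
    C = -np.mean(np.sum(v0 * np.log(pv1 + eps)
                        + (1 - v0) * np.log(1 - pv1 + eps), axis=1))
    return w, a, b, C

def train(data, M, epochs, lr=0.1, seed=0):
    """Train an RBM with M hidden units; returns parameters and the history of C."""
    rng = np.random.default_rng(seed)
    N = data.shape[1]
    w = rng.normal(scale=0.01, size=(N, M))
    a, b = np.zeros(N), np.zeros(M)
    history = []
    for _ in range(epochs):
        w, a, b, C = cd1_update(data, w, a, b, lr, rng)
        history.append(C)
    return (w, a, b), history
```

Calling `train(data, M=6, epochs=3000)` on an array of binary training vectors returns the parameters together with the cross-entropy history, which can then be plotted against the epoch.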

2.2. Free Energy, Entropy, and Internal Energy

From a physics point of view, the RBM is a finite classical system composed of two subsystems, similar to an Ising spin system. The training of the RBM is considered the driving of the system from an initial equilibrium state to the target equilibrium state by switching the model parameters. It may be interesting to see how thermodynamic quantities such as free energy, entropy, internal energy, and work change as the training progresses.

It is straightforward to write down various thermodynamic quantities for the total system. The free energy $F$ is given by the logarithm of the partition function $Z$,

$$F(\theta) = -\ln Z(\theta). \tag{7}$$

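The internal energy $U$ is given by the expectation value of the energy function $E(\mathbf{v},\mathbf{h};\theta)$,

$$U(\theta) = \sum_{\mathbf{v},\mathbf{h}} E(\mathbf{v},\mathbf{h};\theta)\, p(\mathbf{v},\mathbf{h};\theta). \tag{9}$$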

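The entropy $S$ of the total system comprising the hidden and visible layers is given by

$$S(\theta) = -\sum_{\mathbf{v},\mathbf{h}} p(\mathbf{v},\mathbf{h};\theta) \ln p(\mathbf{v},\mathbf{h};\theta). \tag{10}$$

Here, the convention $0 \ln 0 = 0$ is employed if $p(\mathbf{v},\mathbf{h};\theta) = 0$ [17]. The free energy (7) is related to the difference between the internal energy (9) and the entropy (10),

$$F = U - TS,$$

where $T$ is set to 1.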
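The relation between the free energy, the internal energy, and the entropy can be checked numerically for a small system. The sketch below evaluates the three quantities by exact enumeration at $T = 1$; the function name and the energy shift used for numerical stability are assumptions of this example.

```python
import itertools
import numpy as np

def thermodynamics(w, a, b):
    """Return (F, U, S) of the total RBM system at T = 1 by exact enumeration."""
    N, M = w.shape
    V = np.array(list(itertools.product([0, 1], repeat=N)), dtype=float)
    H = np.array(list(itertools.product([0, 1], repeat=M)), dtype=float)
    E = -(V @ w @ H.T) - (V @ a)[:, None] - (H @ b)[None, :]
    # Shift by the minimum energy so that exp() cannot overflow.
    E_min = E.min()
    boltzmann = np.exp(-(E - E_min))
    Z_shifted = boltzmann.sum()
    p = boltzmann / Z_shifted
    F = E_min - np.log(Z_shifted)        # F = -ln Z
    U = float(np.sum(p * E))             # U = <E>
    nz = p > 0                           # convention 0 ln 0 = 0
    S = float(-np.sum(p[nz] * np.log(p[nz])))
    return F, U, S
```

For any parameter values, the returned triple should satisfy `F == U - S` up to rounding error.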

Generally, it is very challenging to calculate the thermodynamic quantities, even numerically. The number of possible configurations of $N$ visible units and $M$ hidden units grows exponentially as $2^{N+M}$. Here, for a feasible benchmark test, the $2 \times 2$ bar-and-stripe data are considered [18,19]. Figure 2 shows the 6 possible $2 \times 2$ bar-and-stripe patterns out of 16 possible configurations, which will be used as the training data in this work. We take the sizes of the visible and the hidden layers as $N = 4$ and $M = 6$, respectively. One may take a larger hidden layer, e.g., $M = 8$ or 10, but it does not make an appreciable difference in our results. The choice $M = 6$ is not a magic number but was used as an example since we were rather limited in our capacity for numerical computation. In order to understand better how the RBM is trained, the thermodynamic quantities are calculated numerically for this small benchmark system.
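The training set can be generated programmatically. The sketch below enumerates all $n \times n$ patterns whose rows are constant or whose columns are constant; the function name, the row-by-row flattening, and the integer labelling of the patterns are assumptions of this example.

```python
import itertools
import numpy as np

def bars_and_stripes(n):
    """Return the distinct n x n bar-and-stripe patterns, flattened row by row."""
    patterns = set()
    for bits in itertools.product([0, 1], repeat=n):
        line = np.array(bits)
        rows_constant = np.tile(line[:, None], (1, n))  # every row is uniform
        cols_constant = np.tile(line[None, :], (n, 1))  # every column is uniform
        patterns.add(tuple(int(x) for x in rows_constant.ravel()))
        patterns.add(tuple(int(x) for x in cols_constant.ravel()))
    return np.array(sorted(patterns))

data = bars_and_stripes(2)             # 6 patterns of 4 visible units
labels = data @ np.array([8, 4, 2, 1])  # read each pattern as a binary number
```

For $n = 2$ this yields 6 of the 16 possible visible configurations, in agreement with the count quoted above.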

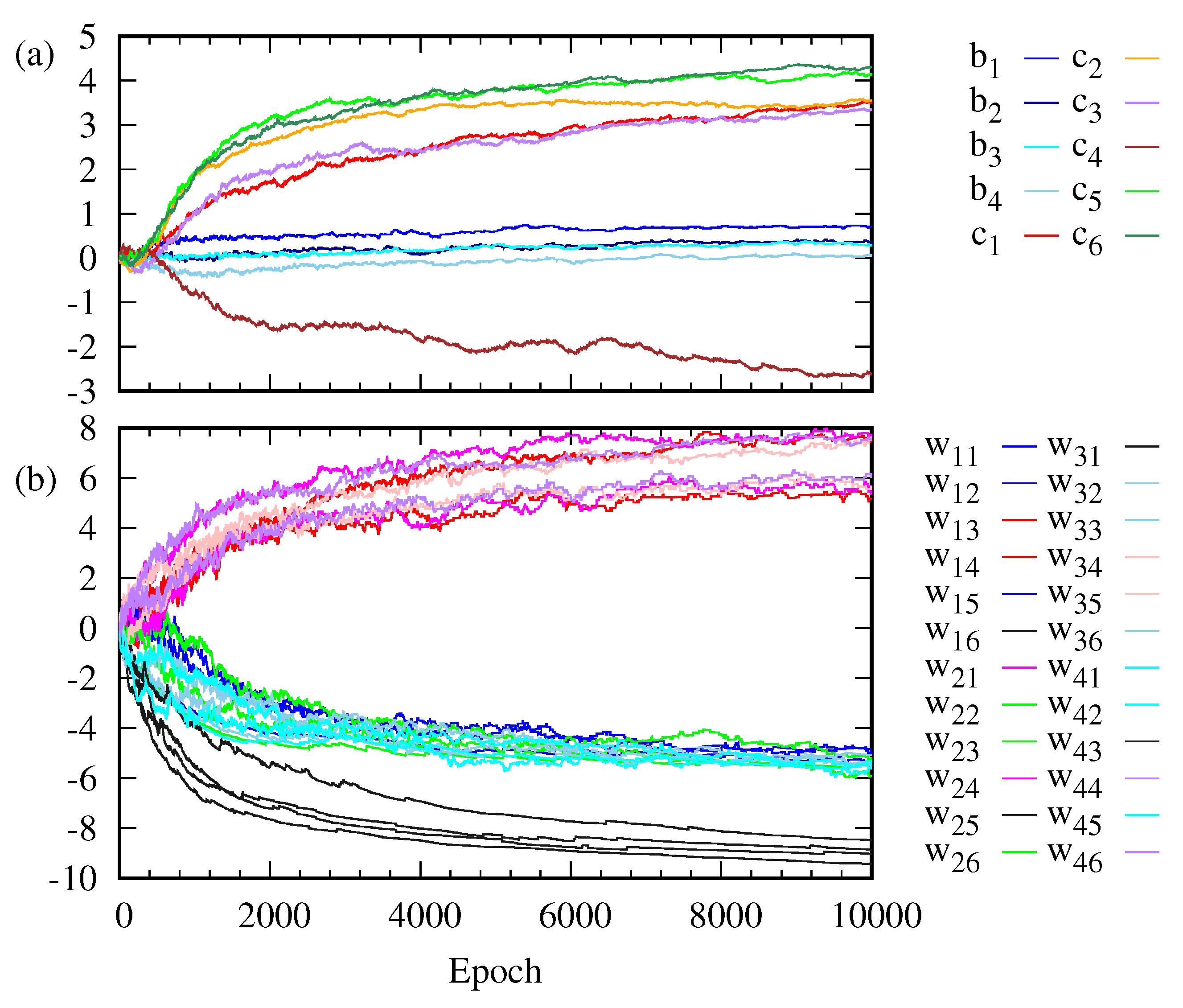

Figure 3 shows how the weights $w_{ij}$, the biases $a_i$ on the visible units $i$, and the biases $b_j$ on the hidden units $j$ change as the training goes on. The weights $w_{ij}$ are clustered into three classes. The evolution of the bias $a_i$ on the visible layer is somewhat different from that of the bias $b_j$

on the hidden layer. The change in

is larger than that in

.

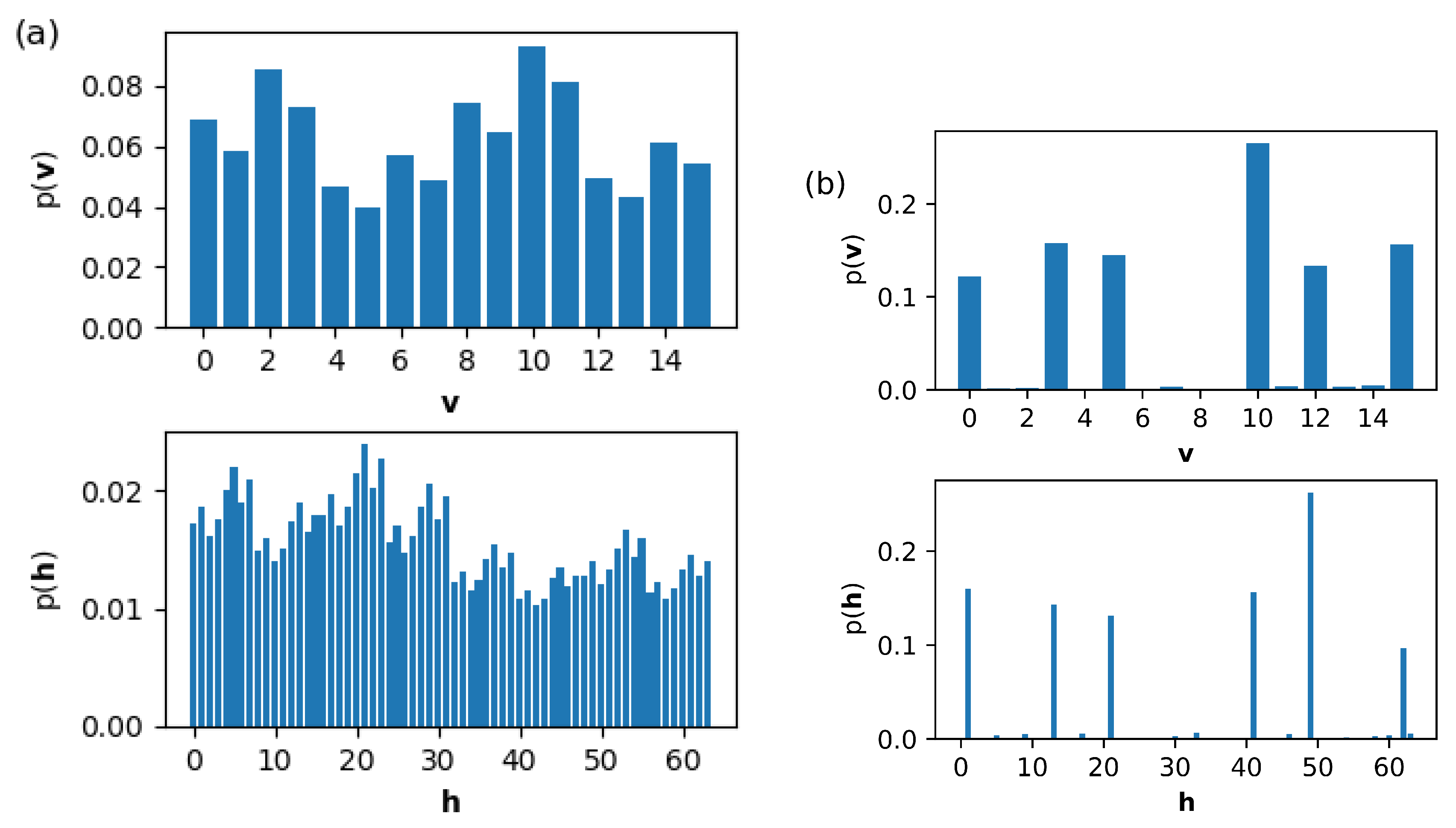

Figure 4 shows the change in the marginal probabilities $p(\mathbf{v};\theta)$ of the visible layer and $p(\mathbf{h};\theta)$ of the hidden layer before and after training. Note that the marginal probability $p(\mathbf{v};\theta)$ after training is not distributed exclusively over the six possible outcomes corresponding to the training data set in Figure 2.

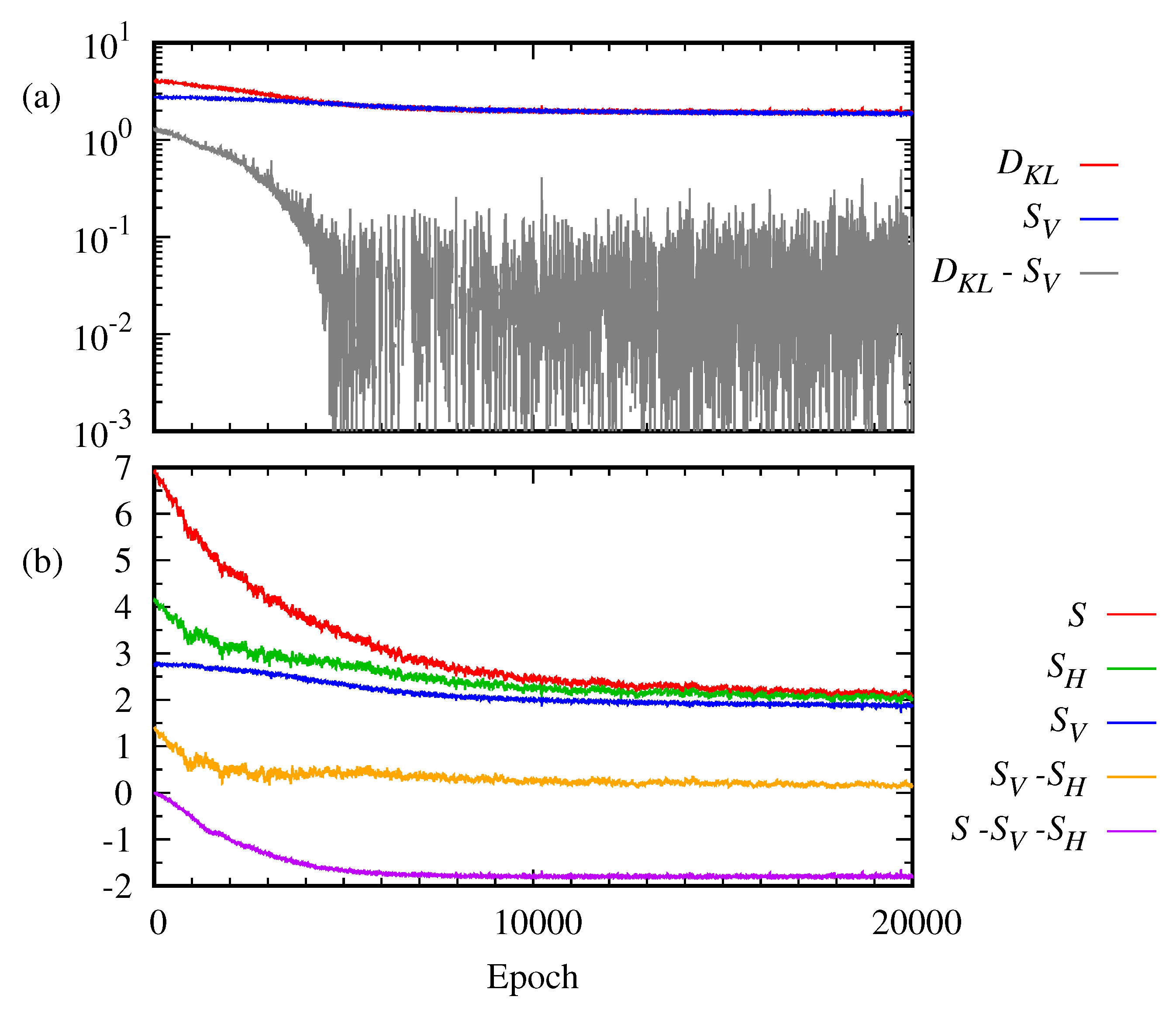

Typically, the progress of learning of the RBM is monitored by the loss function. Here, the Kullback–Leibler divergence, Equation (5), and the reconstructed cross entropy, Equation (6), are used.

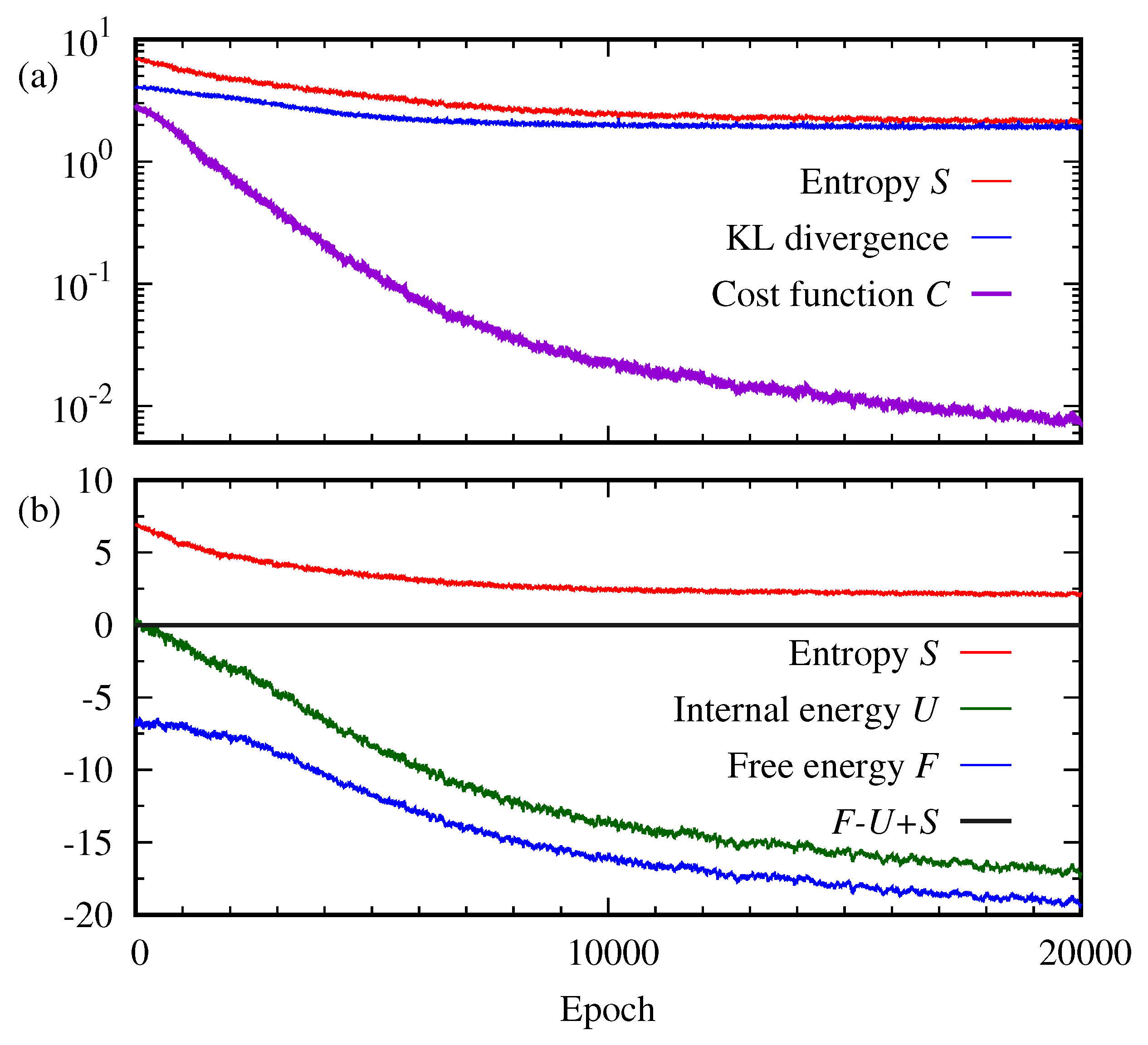

Figure 5 plots the reconstructed cross entropy $C$, the Kullback–Leibler divergence $D_{\mathrm{KL}}(q\,\|\,p)$, the entropy $S$, the free energy $F$, and the internal energy $U$ as a function of the epoch. As shown in Figure 5a, it is interesting to see that even after a large number of epochs, the cost function $C$ continues approaching zero while the entropy $S$ and the Kullback–Leibler divergence $D_{\mathrm{KL}}(q\,\|\,p)$ become steady. On the other hand, the free energy $F$ continues decreasing together with the internal energy $U$, as depicted in Figure 5b. The Kullback–Leibler divergence is a well-known indicator of the performance of RBMs. Our result thus implies that the entropy may be another good indicator for monitoring the progress of the RBM, while the other thermodynamic quantities may not be.

In addition to the thermodynamic quantities of the total system of the RBM, Equations (7)–(9), it is interesting to see how the two subsystems of the RBM evolve. Since the RBM has no intra-layer connection, the correlation between the visible layer and the hidden layer may increase as the training proceeds. The correlation between the visible layer and the hidden layer can be measured by the difference between the total entropy and the sum of the entropies of the two subsystems. The entropies of the visible and hidden layers are given by

$$S_V = -\sum_{\mathbf{v}} p(\mathbf{v};\theta) \ln p(\mathbf{v};\theta), \qquad S_H = -\sum_{\mathbf{h}} p(\mathbf{h};\theta) \ln p(\mathbf{h};\theta).$$

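The entropy $S_V$ of the visible layer is closely related to the Kullback–Leibler divergence of $p(\mathbf{v};\theta)$ to an unknown probability $q(\mathbf{v})$ which produces the data. Equation (5) is expanded as

$$D_{\mathrm{KL}}(q\,\|\,p) = \sum_{\mathbf{v}} q(\mathbf{v}) \ln q(\mathbf{v}) - \sum_{\mathbf{v}} q(\mathbf{v}) \ln p(\mathbf{v};\theta).$$

The second term depends on the parameter $\theta$. As the training proceeds, $p(\mathbf{v};\theta)$ becomes close to $q(\mathbf{v})$, so the behavior of the second term is very similar to that of the entropy of the visible layer. If the training is perfect, we have $p(\mathbf{v};\theta) = q(\mathbf{v})$, which leads to $D_{\mathrm{KL}}(q\,\|\,p) = 0$ while $S_V$ remains nonzero.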

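The difference between the total entropy and the sum of the entropies of subsystems is written as

$$S - (S_V + S_H) = \sum_{\mathbf{v},\mathbf{h}} p(\mathbf{v},\mathbf{h};\theta) \ln \frac{p(\mathbf{v};\theta)\, p(\mathbf{h};\theta)}{p(\mathbf{v},\mathbf{h};\theta)}. \tag{14}$$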

Equation (14) tells us that if the visible random vector $\mathbf{v}$ and the hidden random vector $\mathbf{h}$ are independent, i.e., $p(\mathbf{v},\mathbf{h};\theta) = p(\mathbf{v};\theta)\, p(\mathbf{h};\theta)$, then the entropy $S$ of the total system is the sum of the entropies of subsystems. In general, the entropy $S$ of the total system is always less than or equal to the sum of the entropy of the visible layer, $S_V$, and the entropy of the hidden layer, $S_H$ [20],

$$S \le S_V + S_H. \tag{15}$$

This is called the subadditivity of entropy, one of the basic properties of the Shannon entropy, which is also valid for the von Neumann entropy [17,21]. This property can be proved using the log inequality, $\ln x \le x - 1$. Alternatively, Equation (15) may be proved by using the log-sum inequality, which states that for two sets of nonnegative numbers, $x_1, \dots, x_n$ and $y_1, \dots, y_n$,

$$\sum_{k=1}^{n} x_k \ln \frac{x_k}{y_k} \ge \left( \sum_{k=1}^{n} x_k \right) \ln \frac{\sum_{k=1}^{n} x_k}{\sum_{k=1}^{n} y_k}.$$

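In other words, Equation (14) can be regarded as the negative of the relative entropy or Kullback–Leibler divergence of the joint probability $p(\mathbf{v},\mathbf{h};\theta)$ to the product probability $p(\mathbf{v};\theta)\, p(\mathbf{h};\theta)$,

$$S - (S_V + S_H) = -D_{\mathrm{KL}}\big(p(\mathbf{v},\mathbf{h};\theta)\,\|\,p(\mathbf{v};\theta)\, p(\mathbf{h};\theta)\big).$$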
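Subadditivity and its link to the Kullback–Leibler divergence can be verified numerically for a small RBM. The following is a minimal sketch; the function names and the random parameters are assumptions made for this illustration.

```python
import itertools
import numpy as np

def shannon_entropy(p):
    """Shannon entropy in nats, with the convention 0 ln 0 = 0."""
    p = np.asarray(p, dtype=float).ravel()
    p = p[p > 0]
    return float(-np.sum(p * np.log(p)))

def rbm_joint(w, a, b):
    """Exact joint table p_vh[v, h] of a small RBM."""
    N, M = w.shape
    V = np.array(list(itertools.product([0, 1], repeat=N)), dtype=float)
    H = np.array(list(itertools.product([0, 1], repeat=M)), dtype=float)
    E = -(V @ w @ H.T) - (V @ a)[:, None] - (H @ b)[None, :]
    boltzmann = np.exp(-(E - E.min()))
    return boltzmann / boltzmann.sum()

def entropies(p_vh):
    """Return (S, S_V, S_H) for a joint table p_vh[v, h]."""
    return (shannon_entropy(p_vh),
            shannon_entropy(p_vh.sum(axis=1)),
            shannon_entropy(p_vh.sum(axis=0)))

def correlation(p_vh):
    """D_KL(p(v,h) || p(v) p(h)): the mutual information of the two layers."""
    product = np.outer(p_vh.sum(axis=1), p_vh.sum(axis=0))
    mask = p_vh > 0
    return float(np.sum(p_vh[mask] * np.log(p_vh[mask] / product[mask])))

rng = np.random.default_rng(0)
p_vh = rbm_joint(rng.normal(size=(4, 6)), rng.normal(size=4), rng.normal(size=6))
S, S_V, S_H = entropies(p_vh)
gap = S - (S_V + S_H)   # never positive; equals -correlation(p_vh)
```

With all weights set to zero the two layers decouple, and the gap vanishes up to rounding error.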

For the $2 \times 2$ bar-and-stripe pattern, the entropies of the visible and hidden layers, $S_V$ and $S_H$, are calculated numerically. Figure 6 plots the entropies, $S_V$, $S_H$, $S$, and the Kullback–Leibler divergence $D_{\mathrm{KL}}(q\,\|\,p)$ as a function of the epoch. Figure 6a shows that the Kullback–Leibler divergence, $D_{\mathrm{KL}}(q\,\|\,p)$, becomes saturated, though above zero, as the training proceeds. Similarly, the entropy $S_V$ of the visible layer is saturated. This implies that the entropy of the visible layer, as well as the total entropy shown in Figure 5, can be a better indicator of learning than the reconstructed cross entropy $C$, Equation (6). The same can also be said about the entropy of the hidden layer, $S_H$. If information measures such as the entropy and the Kullback–Leibler divergence become steady, one may presume the training has been done.

The difference between the total entropy and the sum of the entropies of the two subsystems, $S - (S_V + S_H)$, becomes less than 0, as shown in Figure 6b. This demonstrates the subadditivity of entropy, i.e., the correlation that develops between the visible and the hidden layers as the training proceeds. Since this difference saturates after a large number of epochs, just as the total entropy and the entropies of the visible and hidden layers do, the correlation between the visible layer and the hidden layer can also be a good quantifier of the RBM progress.

2.3. Work, Free Energy, and Jarzynski Equality

The training of the RBM may be viewed as driving a finite classical spin system from an initial equilibrium state to a final equilibrium state by changing the system parameters $\theta$ slowly. If the parameters $\theta$ are switched infinitely slowly, the classical system remains in quasi-static equilibrium. In this case, the total work $W$ done on the system is equal to the Helmholtz free energy difference $\Delta F$ between the states before and after training,

$$W = \Delta F.$$

For switching $\theta$ at a finite rate, the system may not relax immediately to an equilibrium state, and the work done on the system depends on the specific path of the system in configuration space. Jarzynski [22,23] proved that for any switching rate, the free energy difference $\Delta F$ is related to the average of the exponential function of the amount of work $W$ over the paths,

$$e^{-\Delta F} = \left\langle e^{-W} \right\rangle, \tag{18}$$

where the temperature is set to $T = 1$ as before.

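The RBM is trained by changing the parameters $\theta$ through a sequence $\{\theta_0, \theta_1, \dots, \theta_\tau\}$, as shown in Figure 3. To calculate the work done during the training, we perform a Monte-Carlo simulation of the trajectory of a state $(\mathbf{v},\mathbf{h})$ of the RBM in configuration space. From the initial configuration, $(\mathbf{v}_0,\mathbf{h}_0)$, which is sampled from the initial Boltzmann distribution, Equation (2), the trajectory $(\mathbf{v}_0,\mathbf{h}_0) \to (\mathbf{v}_1,\mathbf{h}_1) \to \cdots \to (\mathbf{v}_\tau,\mathbf{h}_\tau)$ is obtained using the Metropolis–Hastings algorithm of the Markov chain Monte-Carlo method [24,25]. Assuming the evolution is Markovian, the probability of taking a specific trajectory is the product of the transition probabilities at each step,

$$P\big[(\mathbf{v}_0,\mathbf{h}_0) \to \cdots \to (\mathbf{v}_\tau,\mathbf{h}_\tau)\big] = \prod_{i=0}^{\tau-1} P\big[(\mathbf{v}_i,\mathbf{h}_i) \to (\mathbf{v}_{i+1},\mathbf{h}_{i+1});\, \theta_{i+1}\big].$$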

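The transition $(\mathbf{v}_i,\mathbf{h}_i) \to (\mathbf{v}_{i+1},\mathbf{h}_{i+1})$ can be implemented by the Metropolis–Hastings algorithm based on the detailed balance condition for the fixed parameter $\theta_{i+1}$,

$$\frac{P\big[(\mathbf{v}_i,\mathbf{h}_i) \to (\mathbf{v}_{i+1},\mathbf{h}_{i+1});\, \theta_{i+1}\big]}{P\big[(\mathbf{v}_{i+1},\mathbf{h}_{i+1}) \to (\mathbf{v}_i,\mathbf{h}_i);\, \theta_{i+1}\big]} = \frac{e^{-E(\mathbf{v}_{i+1},\mathbf{h}_{i+1};\theta_{i+1})}}{e^{-E(\mathbf{v}_i,\mathbf{h}_i;\theta_{i+1})}}.$$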

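The work done on the RBM at epoch $i$ may be given by

$$\delta W_i = E(\mathbf{v}_i,\mathbf{h}_i;\theta_{i+1}) - E(\mathbf{v}_i,\mathbf{h}_i;\theta_i).$$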

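The total work $W$ performed on the system over $\tau$ training epochs is written as [26]

$$W = \sum_{i=0}^{\tau-1} \left[ E(\mathbf{v}_i,\mathbf{h}_i;\theta_{i+1}) - E(\mathbf{v}_i,\mathbf{h}_i;\theta_i) \right].$$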

Given the sequence of the model parameters $\{\theta_i\}$, the Markov evolution of the visible and hidden vectors $(\mathbf{v},\mathbf{h})$ may be considered a discrete random walk. Random walkers move to the points with low energy in configuration space.
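The work along one stochastic trajectory can be sketched as follows. This is an illustration under stated assumptions rather than the authors' simulation: the single-unit-flip Metropolis proposal, the one relaxation step per epoch, the uniform initial state, and the hypothetical helper `linear_schedule` (which stands in for a recorded training history of parameters) are all choices made for this example.

```python
import numpy as np

def energy(v, h, theta):
    """RBM energy for parameters theta = (w, a, b)."""
    w, a, b = theta
    return -(v @ w @ h) - a @ v - b @ h

def metropolis_step(v, h, theta, rng):
    """Propose flipping one unit; accept with probability min(1, exp(-dE))."""
    n = v.size
    x_new = np.concatenate([v, h])
    k = rng.integers(x_new.size)
    x_new[k] = 1.0 - x_new[k]
    dE = energy(x_new[:n], x_new[n:], theta) - energy(v, h, theta)
    if dE <= 0 or rng.random() < np.exp(-dE):
        return x_new[:n], x_new[n:]
    return v, h

def work_along_path(thetas, rng):
    """Total work along one path driven through thetas[0], ..., thetas[tau]."""
    N, M = thetas[0][0].shape
    # Assumption: uniform initial state, which is the Boltzmann
    # distribution only when thetas[0] is identically zero.
    v = rng.integers(0, 2, size=N).astype(float)
    h = rng.integers(0, 2, size=M).astype(float)
    W = 0.0
    for i in range(len(thetas) - 1):
        # Work: energy change from switching the parameters at a fixed state.
        W += energy(v, h, thetas[i + 1]) - energy(v, h, thetas[i])
        # Relaxation: move the state at the new, fixed parameters.
        v, h = metropolis_step(v, h, thetas[i + 1], rng)
    return W

def linear_schedule(theta_final, tau):
    """Hypothetical protocol: scale the parameters linearly from zero in tau steps."""
    w1, a1, b1 = theta_final
    return [(s * w1, s * a1, s * b1) for s in np.linspace(0.0, 1.0, tau + 1)]
```

Repeating `work_along_path` many times with independent random numbers gives a sample of work values whose histogram and exponential average can be compared with the free energy difference.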

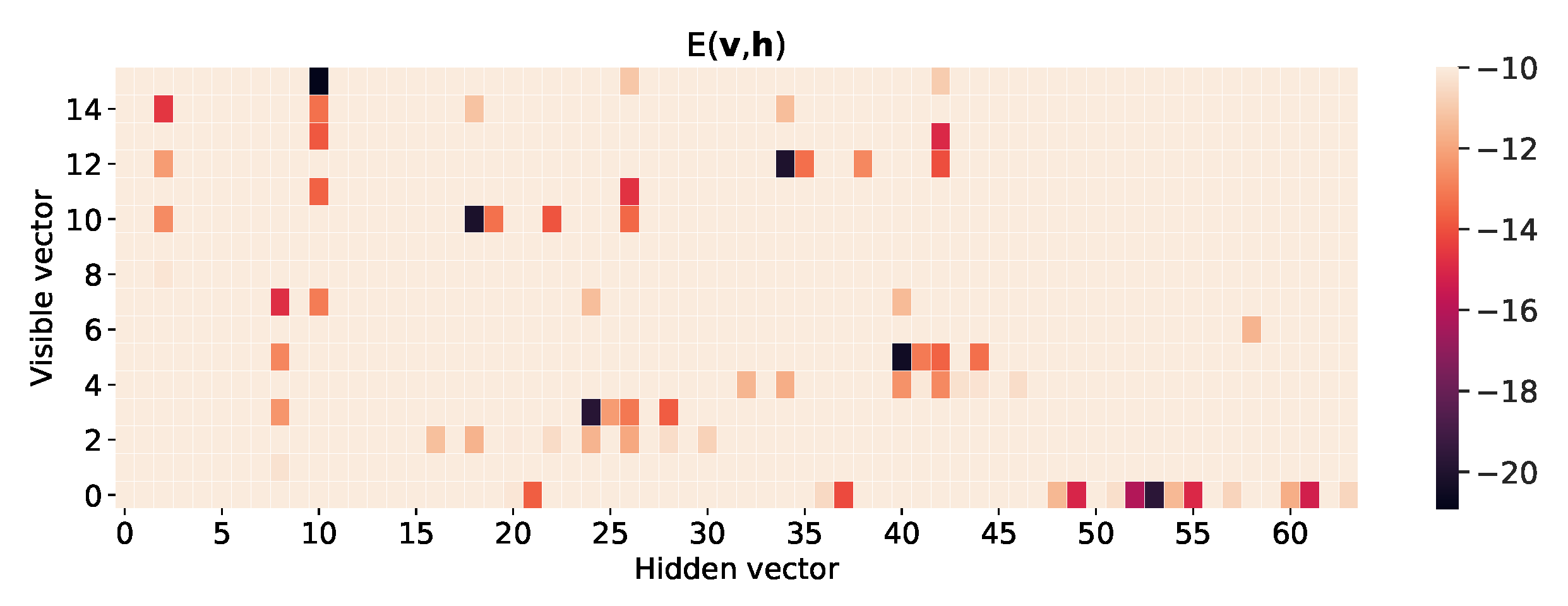

Figure 7 shows the heat map of the energy function $E(\mathbf{v},\mathbf{h};\theta)$ of the RBM for the $2 \times 2$ bar-and-stripe pattern after training. One can see the energy function has deep levels at the visible vectors corresponding to the bar-and-stripe patterns of the training data set in Figure 2, representing a high probability of generating the trained patterns. Furthermore, note that the energy function has many local minima.

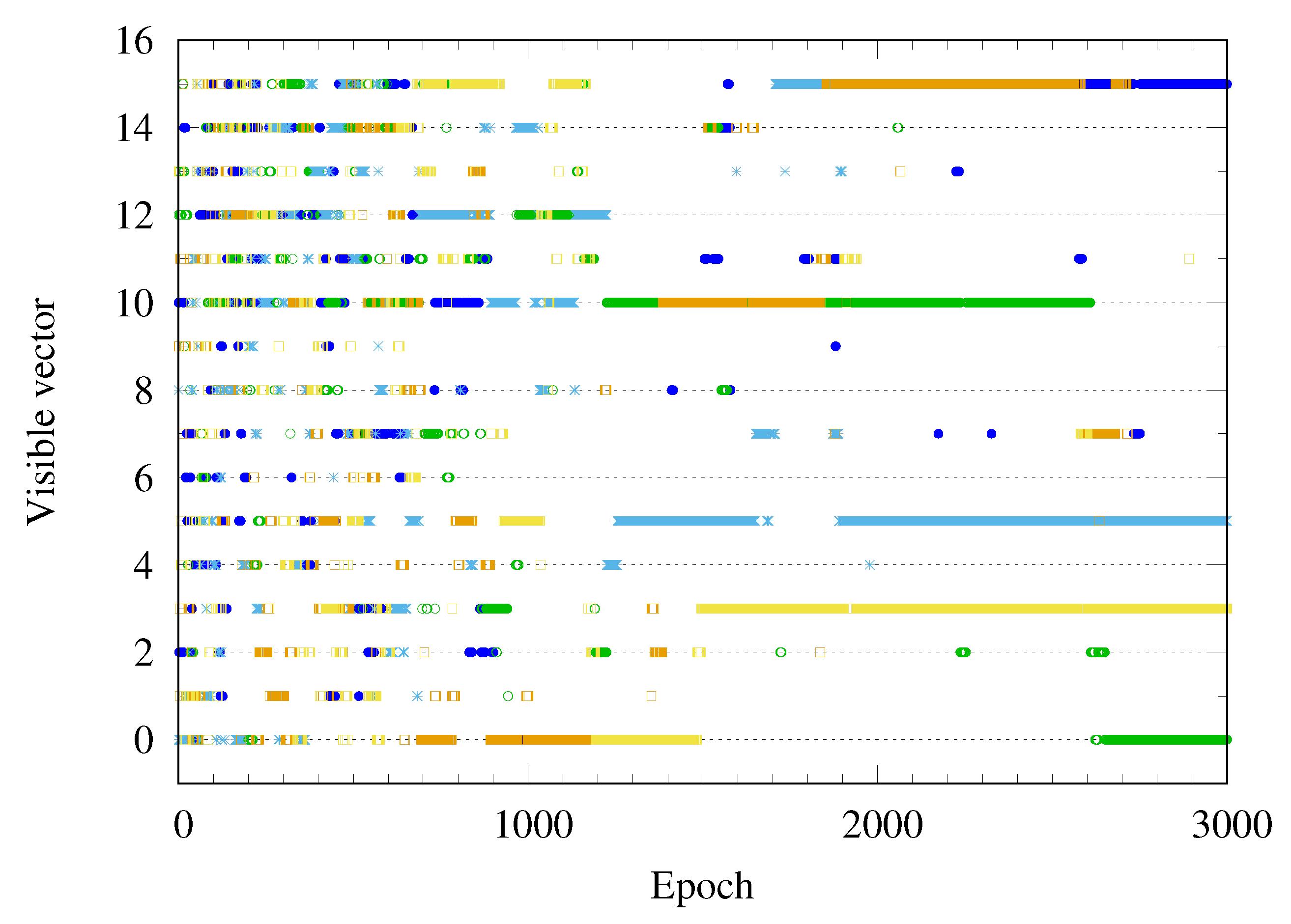

Figure 8 plots a few Monte-Carlo trajectories of the visible vector $\mathbf{v}$ as a function of the epoch. Before training, the visible vector $\mathbf{v}$ is distributed over all 16 possible configurations, each represented by a number. As the training progresses, the visible vector $\mathbf{v}$ becomes trapped in one of the six possible outcomes corresponding to the training patterns.

In order to examine the relation between the work done on the RBM during training and the free energy difference, a Monte-Carlo simulation is performed to calculate the average of the work over paths generated by the Metropolis–Hastings algorithm of the Markov chain Monte-Carlo method. Each path starts from an initial state sampled from the uniform distribution over the configuration space, as shown in Figure 4a. Since the work done on the system depends on the path, the distribution of the work is calculated by generating many trajectories. Figure 9 shows the distribution of the work over 50,000 paths at 5000 training epochs. The Monte-Carlo average of the work is

, and its standard deviation is

. The distribution of the work generated by the Monte-Carlo simulation is well fitted by a Gaussian distribution, as depicted by the red curve in Figure 9. This agrees with the statement in Reference [23] that, for slow switching of the model parameters, the probability distribution of the work is approximately Gaussian.

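We perform the Monte-Carlo calculation of the exponential average of work, $\langle e^{-W} \rangle$, to check the Jarzynski equality, Equation (18). The free energy difference can be estimated as

$$\Delta F \approx -\ln \left[ \frac{1}{N_{\mathrm{mc}}} \sum_{n=1}^{N_{\mathrm{mc}}} e^{-W_n} \right],$$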

where $N_{\mathrm{mc}}$ is the number of Monte-Carlo samples and $W_n$ is the work along the $n$-th path. At a small epoch number, the Monte-Carlo estimate of the free energy difference is close to $\Delta F$ calculated from the partition function. However, this Monte-Carlo calculation gives a poor estimate of the free energy difference if the epoch is greater than 5000. These numerical errors can be explained by the fact that the exponential average of the work is dominated by rare realizations [27,28,29,30,31]. As shown in Figure 9, the distribution of work is given by the Gaussian distribution $\rho(W)$ with the mean $\langle W \rangle$ and the standard deviation $\sigma$. If the standard deviation $\sigma$ becomes larger, the peak position of $\rho(W)\, e^{-W}$ moves to the long tail of the Gaussian distribution. So the main contribution to the integral $\int \rho(W)\, e^{-W}\, dW$ comes from the rare realizations. Figure 10 shows that the standard deviation $\sigma$ grows with the epoch, so the error of the Monte-Carlo estimation of the exponential average of the work grows quickly.

If $\sigma \ll 1$, the free energy is related to the average of work and its variance as

$$\Delta F = \langle W \rangle - \frac{\sigma^2}{2}.$$

Here, the case is the opposite: the spread of the values of work is large, i.e., $\sigma \gg 1$, so the central limit theorem does not work and the above equation cannot be applied [32].
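The dominance of rare realizations can be illustrated with synthetic Gaussian work values. The sketch below is not based on the paper's data: the mean, the standard deviations, and the sample size are hypothetical. For a Gaussian work distribution at $T = 1$ the exponential average is known in closed form, $-\ln \langle e^{-W} \rangle = \langle W \rangle - \sigma^2/2$, which gives a reference value for the sample estimate.

```python
import numpy as np

def jarzynski_estimate(work):
    """Estimate dF = -ln <exp(-W)> from sampled work values (T = 1)."""
    x = -np.asarray(work, dtype=float)
    m = x.max()
    # Log-mean-exp, shifted by the maximum for numerical stability.
    return float(-(m + np.log(np.mean(np.exp(x - m)))))

def compare(mean, sigma, n_samples, rng):
    """Return (sample estimate, closed-form value) for Gaussian work."""
    work = rng.normal(mean, sigma, size=n_samples)
    exact = mean - 0.5 * sigma ** 2
    return jarzynski_estimate(work), exact

rng = np.random.default_rng(0)
narrow = compare(mean=5.0, sigma=0.5, n_samples=50_000, rng=rng)
wide = compare(mean=5.0, sigma=10.0, n_samples=50_000, rng=rng)
```

For the narrow distribution the two numbers in `narrow` agree closely, whereas for the wide one the sample estimate in `wide` lies well above the closed-form value, because the paths with very negative work that dominate the average are essentially never sampled.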

Figure 10 shows how the average of work, $\langle W \rangle$, over the Markov chain Monte-Carlo paths changes as a function of the epoch. The standard deviation of the Gaussian distribution of the work also grows as a function of the training epoch. The free energy difference between the states before and after training is called the reversible work, $W_{\mathrm{rev}} = \Delta F$. The difference between the actual work and the reversible work is called the dissipative work, $W_{\mathrm{diss}} = W - W_{\mathrm{rev}}$ [26]. As depicted in Figure 10, the magnitude of the dissipative work grows with the training epoch.