1. Introduction

When searching for common foundations of cortical computation, more and more emphasis is being placed on information-theoretic descriptions of cognitive processing [1,2,3,4,5]. One of the core tasks in the analysis of cognitive processing is to follow the flow of information within the nervous system, by finding cause-effect components. Indeed, understanding causal relationships is considered to be fundamental to all natural sciences [6]. However, inferring causal relationships and separating them from mere correlations is difficult, and the subject of ongoing research [7,8,9,10,11]. The concept of Granger causality is an established statistical measure that aims to determine directed (causal) functional interactions among components or processes of a system. Schreiber [12] described Granger causality in terms of information theory by introducing the concept of transfer entropy (TE). The main idea is that if a process X is influencing process Y, then an observer can predict the future state of Y more accurately given the history of both X and Y (written as $X_t^{(k)}$ and $Y_t^{(\ell)}$, where k and ℓ determine how many states from the past of X and Y are taken into account) compared to only knowing the history of Y. According to Schreiber, the transfer entropy $TE_{X\to Y}$ quantifies the flow of information from process X to Y:

$$TE_{X\to Y} = I\big(Y_{t+1} : X_t^{(k)} \mid Y_t^{(\ell)}\big) = \sum_{y_{t+1},\, x_t^{(k)},\, y_t^{(\ell)}} p\big(y_{t+1}, x_t^{(k)}, y_t^{(\ell)}\big)\, \log \frac{p\big(y_{t+1} \mid x_t^{(k)}, y_t^{(\ell)}\big)}{p\big(y_{t+1} \mid y_t^{(\ell)}\big)}. \tag{1}$$

Here as before, $X_t^{(k)}$ and $Y_t^{(\ell)}$ refer to the history of the processes X and Y, while $Y_{t+1}$ refers to the variable at $t+1$ only. Further, $p(y_{t+1}, x_t^{(k)}, y_t^{(\ell)})$ is the joint probability of $Y_{t+1}$ and the histories $X_t^{(k)}$ and $Y_t^{(\ell)}$, while $p(y_{t+1} \mid x_t^{(k)}, y_t^{(\ell)})$ and $p(y_{t+1} \mid y_t^{(\ell)})$ are conditional probabilities.
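To make the definition concrete, the following is a minimal sketch of a plug-in (frequency-count) estimate of transfer entropy for discrete time series with history lengths k = ℓ = 1. The function name `transfer_entropy`, the toy coupling, and the sample size are illustrative assumptions, not part of the original analysis.

```python
import random
from collections import Counter
from math import log2

def transfer_entropy(source, target):
    """Plug-in estimate (bits) of TE from source to target with k = l = 1."""
    # Each sample is (target_{t+1}, source_t, target_t).
    samples = list(zip(target[1:], source[:-1], target[:-1]))
    n = len(samples)
    n_full = Counter(samples)
    n_src_tgt = Counter((s, y) for _, s, y in samples)
    n_next_tgt = Counter((y1, y) for y1, _, y in samples)
    n_tgt = Counter(y for _, _, y in samples)
    te = 0.0
    for (y1, s, y), count in n_full.items():
        p_given_both = count / n_src_tgt[(s, y)]       # p(y_{t+1} | x_t, y_t)
        p_given_self = n_next_tgt[(y1, y)] / n_tgt[y]  # p(y_{t+1} | y_t)
        te += (count / n) * log2(p_given_both / p_given_self)
    return te

# Hypothetical toy data: Y copies X with a one-step delay.
rng = random.Random(0)
x = [rng.randint(0, 1) for _ in range(5000)]
y = [0] + x[:-1]
te_xy = transfer_entropy(x, y)  # close to 1 bit: X drives Y
te_yx = transfer_entropy(y, x)  # close to 0 bits: Y does not drive X
```

With finite data the plug-in estimate is never negative and is slightly biased upward, so a small positive value in the uncoupled direction is expected.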

The transfer entropy (1) is a conditional mutual entropy, and quantifies what the process Y at time $t+1$ knows about the process X up to time t, given the history of Y up to time t (see [13] for a thorough introduction to the subject). Specifically, $TE_{X\to Y}$ measures “how much uncertainty about the future course of Y can be reduced by the past of X, given Y’s own past.” Transfer entropy reduces to Granger causality for so-called “auto-regressive processes” [14] (which encompass most biological dynamics), and has become one of the most widely used directed information measures, especially in neuroscience (see [5,13,15,16] and references cited therein).

While transfer entropy is sometimes used to infer causal influences between subsystems, it is important to point out that inferring causal relationships is different from inferring information flow [17]. In complex systems (for example, in computations that a brain performs to choose the correct action given a particular sensory experience) events in the sensory past can causally influence decisions significantly distant in time. Capturing such influences with the transfer entropy concept requires a careful analysis in which not only must the history lengths k and ℓ used in Equation (1) be optimized, but false influences due to linear mixing of signals (which can mimic causal influences) must also be corrected for [13,15]. In some sense, inferring information flow is a much simpler task than finding all causal influences, as we need only to identify (and quantify) the sources of information transferred to a particular variable. More precisely, for this application the pairwise transfer entropy is used to find candidate sources (in the immediate past) that account for the entropy of a particular neuron.

Using transfer entropy to search for and detect directed information was shown to lead to inaccurate assessments in simple case studies [

18,

19]. For instance, James et al. [

18] presented two examples in which TE underestimates the flow of information from inputs to output in one example, and overestimates it in the other. In the first example, they define a simple system with three binary variables

X,

Y, and

Z where

(⊕ is the exclusive OR logic operation) and variables

X and

Y take states 0 or 1 with equal probabilities, i.e.,

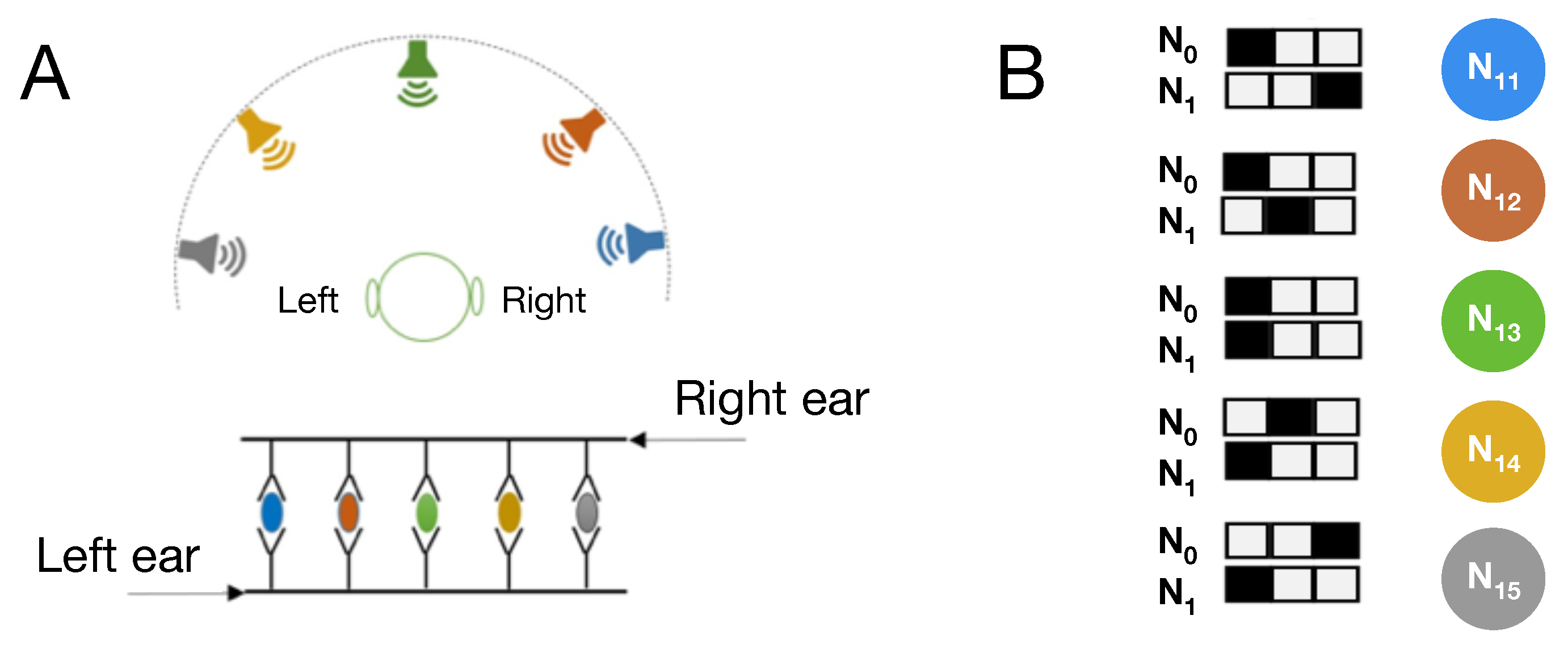

(this 2-to-1 relation is schematically shown in

Figure 1A). In this network,

whereas the entropy of the process

Z,

, and variables

X and

Y certainly influence the future state of

Z. In this example, the entropy of

Z can be reduced by 1 bit but the TE does not attribute this entropy to either variables

X or

Y and as a consequence the TE underestimates the flow of information from

X and

Y to

Z. In another example, they define a system with two binary variables

Y and

Z, where

and similar to the previous example,

(this feedback loop relation is schematically shown in

Figure 1B). In this scenario,

, which implies that the entire 1 bit of entropy in

Z is coming from process

Y. However, this is not correct since both

Y and

Z are equally contributing to determine the future state of

Z. In this example, TE overestimates the information flow from process

Y to

Z. It is also noteworthy that in this example the processed information (defined as

) vanishes, which again does not correctly detect the other source,

, from which the information is coming. As acknowledged by the authors in [

18], expecting that the entropy of the output

is given simply by the sum of the transfer entropy from each of the inputs independently is a naive interpretation of information flow. Indeed, this is generally not the case, even if the two sources are uncorrelated. Consider for example, the first system described above in which

. Suppose

f is a deterministic function of

and

, in which case the conditional entropy

. Then, the entropy

decomposes into the sum of an unconditional and a conditional transfer entropy

where the conditional transfer entropy is defined as (see [

13], Section 4.2.3)

Using this definition, it is easy to show that

and Equation (

2) can be rewritten in terms of transfer entropies only, or else conditional transfer entropies only, as

In light of Equation (

5), it then becomes clear that the naive sum of the transfer entropies

and

(or naive sum of conditional transfer entropies) must fail to account for the entropy of

Z whenever the term

is non-zero, and therefore will fail to fully and accurately quantify information transferred from sources

X and

Y. Therefore, the error in information flow estimate when using transfer entropy is simply given by the absolute value of

(same when using conditional transfer entropies).
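The decomposition for the feed-forward XOR example can be checked numerically. The sketch below is an illustration under stated assumptions: it works directly from the exact joint distribution of $(X_t, Y_t, Z_{t+1})$, uses the fact that Z is independent of its own past in this system (so each transfer entropy reduces to a mutual information with $Z_{t+1}$), and takes the three-variable shared information to be $I(X:Y:Z) = I(Y:Z) - I(Y:Z \mid X)$. The helper names `entropy` and `mutual_info` are hypothetical.

```python
from itertools import product
from math import log2

def entropy(joint, idx):
    """Shannon entropy (bits) of the marginal over the positions listed in idx."""
    marg = {}
    for state, p in joint.items():
        key = tuple(state[i] for i in idx)
        marg[key] = marg.get(key, 0.0) + p
    return -sum(p * log2(p) for p in marg.values() if p > 0)

def mutual_info(joint, a, b, cond=()):
    """I(A:B|C) = H(A,C) + H(B,C) - H(A,B,C) - H(C)."""
    return (entropy(joint, a + cond) + entropy(joint, b + cond)
            - entropy(joint, a + b + cond) - entropy(joint, cond))

X, Y, Z = (0,), (1,), (2,)  # positions of X_t, Y_t, Z_{t+1} in each state tuple
# Exact joint distribution for Z_{t+1} = X_t XOR Y_t with uniform, independent inputs.
joint = {(x, y, x ^ y): 0.25 for x, y in product((0, 1), repeat=2)}

te_x = mutual_info(joint, X, Z)              # TE_{X->Z}
te_y = mutual_info(joint, Y, Z)              # TE_{Y->Z}
te_y_given_x = mutual_info(joint, Y, Z, X)   # TE_{Y->Z|X}
shared = te_y - te_y_given_x                 # I(X:Y:Z)
h_z = entropy(joint, Z)                      # H(Z_{t+1})
```

For XOR both pairwise transfer entropies vanish while the conditional one carries the full bit, so the shared term is negative and its magnitude equals the amount of output entropy that the pairwise sum misses.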

Now consider the second example system with a feedback loop in which $Z_{t+1} = f(Y_t, Z_t)$, and again suppose f is a deterministic function, which implies $H(Z_{t+1} \mid Y_t, Z_t) = 0$. In this case, there is a similar information decomposition that now involves a shared entropy

$$H(Z_{t+1}) = I(Z_t : Z_{t+1}) + I(Y_t : Z_{t+1} \mid Z_t). \tag{6}$$

Here, the entropy $H(Z_{t+1})$ can be written in terms of transfer entropy and processed information (recall that $TE_{Y\to Z} = I(Y_t : Z_{t+1} \mid Z_t)$)

$$H(Z_{t+1}) = TE_{Y\to Z} + I(Z_t : Z_{t+1}). \tag{7}$$

While Equation (7) shows that the sum of transfer entropy $TE_{Y\to Z}$ and processed information $I(Z_t : Z_{t+1})$ accounts for all the entropy $H(Z_{t+1})$, these two terms do not always individually identify the sources of information flow correctly. For instance, we have seen that in the second example (where $Z_{t+1} = Y_t \oplus Z_t$) the processed information $I(Z_t : Z_{t+1})$ vanishes even though variable $Z_t$ most definitely influences the state of variable $Z_{t+1}$. As discussed earlier, all the information transferred to $Z_{t+1}$ in that case is attributed to variable $Y_t$

. Note that the processed information can be written as

where

and

.

Note that for the most general case where function

f can be non-deterministic and the network with or without feedback loop, the full entropy decomposition can be written as

There is also another key factor in the examples described above that results in misestimating information flow when using transfer entropy. In both examples, the input to output relation is implemented by an XOR function. For instance, in the first example ($Z_{t+1} = X_t \oplus Y_t$), the transfer entropy $TE_{X\to Z}$ considers X in isolation and independent of variable Y. We should make it clear that it is not the formulation of TE that is at the origin of mis-attributing the sources of the transferred information. Rather, by definition Shannon’s mutual information, $I(X:Y)$, is dyadic, and cannot capture polyadic correlations where more than one variable influences another. Consider for example a similar but time-independent process between binary variables X, Y, and Z where $Z = X \oplus Y$. As is well-known, the mutual information between X and Z, and also between Y and Z vanishes: $I(X:Z) = I(Y:Z) = 0$ (this corresponds to the one-time pad, or Vernam cipher [20], a common method of encryption that takes advantage of the fact that Z reveals nothing about X unless the key Y is known: $I(X:Z) = 0$ while $I(X:Z \mid Y) = 1$ bit). Thus, while the TE formulation aims to capture a directed dependency of information, Shannon information measures the undirected (correlational) dependency of two variables only. As a consequence, problems with TE measurements in detecting directed dependencies are unavoidable when using Shannon information, and do not stem from the formulation of transfer entropy [12] or similar measures such as causation entropy [10] to capture causal relations. Note that methods such as partial information decomposition have been proposed to take into account the synergistic influence of a set of variables on the others [21]. However, such higher-order calculations are more costly (possibly exponentially so) and require significantly more data in order to perform accurate measurements.

Given the observed error in measuring information flow using TE due to logic gates that encrypt, we now set out to examine how well TE measurements capture information flow when the function is implemented with Boolean functions other than XOR. In particular, we examine every first-order Markov process $Z_{t+1} = f(X_t, Y_t)$ where function f is implemented by all 16 possible 2-to-1 binary relations (Figure 1A) and quantify the error in information transfer estimate for each of them. Similar to previous examples, the state of variable Z is independent of its past, and inputs X and Y take states 0 and 1 with equal probabilities, i.e., $P(X=0) = P(X=1) = P(Y=0) = P(Y=1) = 0.5$.

Table 1 shows the results of transfer entropy measurements for all possible 2-to-1 logic gates and the error that would occur if TE measures are used to quantify the information flow from inputs to outputs. This error is the sum of misestimations in information flow quantified by pairwise transfer entropies $TE_{X\to Z}$ and $TE_{Y\to Z}$. As we discussed before, for the XOR relation the transfer entropies $TE_{X\to Z} = TE_{Y\to Z} = 0$, and $H(Z_{t+1}) = 1$ bit, which means that TE misestimates the information flow from inputs X and Y by 1 bit (the XNOR is exactly the same). We find that in all other polyadic relations where both X and Y influence the future state of Z, $TE_{X\to Z}$ and $TE_{Y\to Z}$ capture part of the information flow from inputs to outputs, but $TE_{X\to Z} + TE_{Y\to Z}$ is less than the entropy of the output Z by 0.19 bits ($TE_{X\to Z} = TE_{Y\to Z} \approx 0.31$ bits, $H(Z_{t+1}) \approx 0.81$ bits). In the remaining six relations where only one of the inputs or neither of them influences the output, the transfer entropies correctly capture the information flow. The difference between the sum of transfer entropies, $TE_{X\to Z} + TE_{Y\to Z}$, and the entropy of the output $H(Z_{t+1})$ in XOR and XNOR relations, stems from the fact that the information shared among all three variables is negative, $I(X_t : Y_t : Z_{t+1}) = -1$ bit, the tell-tale sign of encryption. Furthermore, while other polyadic gates do not implement perfect encryption, they still encrypt partially ($I(X_t : Y_t : Z_{t+1}) \approx -0.19$ bits), which we call obfuscation. It is this obfuscation that is at the heart of the TE error shown in Table 1.
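The per-gate errors quoted above can be reproduced with a short enumeration. This is a sketch under stated assumptions: inputs are uniform and independent, Z is independent of its past (so each pairwise transfer entropy equals the mutual information between one input and $Z_{t+1}$), and the error is taken to be $H(Z_{t+1}) - (TE_{X\to Z} + TE_{Y\to Z})$. The helper names are hypothetical.

```python
from collections import Counter
from itertools import product
from math import log2

def entropy(joint, idx):
    """Shannon entropy (bits) of the marginal over the positions listed in idx."""
    marg = {}
    for state, p in joint.items():
        key = tuple(state[i] for i in idx)
        marg[key] = marg.get(key, 0.0) + p
    return -sum(p * log2(p) for p in marg.values() if p > 0)

def mutual_info(joint, a, b):
    """I(A:B) = H(A) + H(B) - H(A,B)."""
    return entropy(joint, a) + entropy(joint, b) - entropy(joint, a + b)

inputs = list(product((0, 1), repeat=2))
errors = {}
for outputs in product((0, 1), repeat=4):      # all 16 two-input truth tables
    table = dict(zip(inputs, outputs))
    joint = {(x, y, table[(x, y)]): 0.25 for x, y in inputs}
    te_x = mutual_info(joint, (0,), (2,))      # TE_{X->Z}
    te_y = mutual_info(joint, (1,), (2,))      # TE_{Y->Z}
    h_z = entropy(joint, (2,))                 # H(Z_{t+1})
    errors[outputs] = h_z - (te_x + te_y)

# Number of gates at each error level, rounded to two decimals.
counts = Counter(round(e, 2) for e in errors.values())
```

The gates fall into three groups: six with no error, eight with an error of about 0.19 bits, and two (XOR and XNOR) with an error of 1 bit.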

We repeated similar calculations for the case of a feedback loop network where $Z_{t+1} = f(Y_t, Z_t)$ (Figure 1B) and function f can be any one of the 16 logic relations shown in Table 1. These simple calculations show that in all 16 relations, including XOR and XNOR, the sum of the transfer entropies, $TE_{Y\to Z} + TE_{Z\to Z}$ (the formulation for transfer entropy of a variable to itself reduces to processed information $I(Z_t : Z_{t+1})$), is equal to the entropy of the output $H(Z_{t+1})$ as was shown in Equation (7). However, in XOR and XNOR relations transfer entropy incorrectly attributes all the information to one of the input variables and no influence is attributed to the other. Furthermore, in the polyadic relations other than XOR and XNOR, the transfer entropies $TE_{Y\to Z}$ and $TE_{Z\to Z}$ differ in value while the input variables $Y_t$ and $Z_t$ equally influence the state of the output Z, which is why the TE error in these relations is 0.19 bits.
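For the XOR feedback loop the attribution problem can be made explicit. The sketch below assumes that $Y_t$ and $Z_t$ are uniform and independent (which is the stationary behavior of $Z_{t+1} = Y_t \oplus Z_t$ when Y is a fair coin) and computes the terms of the decomposition from the exact joint distribution; the helper names are hypothetical.

```python
from itertools import product
from math import log2

def entropy(joint, idx):
    """Shannon entropy (bits) of the marginal over the positions listed in idx."""
    marg = {}
    for state, p in joint.items():
        key = tuple(state[i] for i in idx)
        marg[key] = marg.get(key, 0.0) + p
    return -sum(p * log2(p) for p in marg.values() if p > 0)

def mutual_info(joint, a, b, cond=()):
    """I(A:B|C) = H(A,C) + H(B,C) - H(A,B,C) - H(C)."""
    return (entropy(joint, a + cond) + entropy(joint, b + cond)
            - entropy(joint, a + b + cond) - entropy(joint, cond))

Y, Z_NOW, Z_NEXT = (0,), (1,), (2,)  # positions of Y_t, Z_t, Z_{t+1}
joint = {(y, z, y ^ z): 0.25 for y, z in product((0, 1), repeat=2)}

te_y_to_z = mutual_info(joint, Y, Z_NEXT, Z_NOW)    # TE_{Y->Z} = I(Y_t:Z_{t+1}|Z_t)
processed = mutual_info(joint, Z_NOW, Z_NEXT)       # I(Z_t:Z_{t+1})
hidden_self = mutual_info(joint, Z_NOW, Z_NEXT, Y)  # I(Z_t:Z_{t+1}|Y_t)
h_next = entropy(joint, Z_NEXT)                     # H(Z_{t+1})
```

The transfer entropy and the processed information add up to the output entropy, yet the whole bit is credited to Y. The contribution of $Z_t$ only becomes visible once $Y_t$ is conditioned on.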

Pairwise TE measurements (which do not take into account higher-order conditional transfer entropies) fail to correctly identify the sources of information flow only in cryptographic gates, and show partial errors in quantifying information flow in the other polyadic relations. We therefore set out to determine how often these relations appear in networks that implement basic cognitive tasks, and how much error is introduced when measuring information flow using transfer entropy. If the total error in transfer entropy measurements of information flow in cognitive networks is significant, an analysis of pairwise directed information among neural components (neurons, voxels, cortical columns, etc.) using this concept is bound to be problematic. If, however, these errors are reasonably low within biological control structures because cryptographic logic is rarely used, then treatments using the TE concept can largely be trusted.

To answer this question, we use a new tool in computational cognitive neuroscience, namely computational models of cognitive processing that can explain task-performance in terms of plausible dynamic components [22]. In particular, we use Darwinian evolution to evolve artificial digital brains (also known as Markov Brains or MBs [23]) that can receive sensory stimuli from the environment, process this information, and take actions in response (In the following we refer to digital brains as “Brains”, while biological brains remain “brains”.). We evolve Markov Brains that perform two different cognitive tasks whose circuitry is thoroughly studied in neuroscience: visual motion detection [24], as well as sound localization [25,26]. Markov Brains have been shown to be a powerful platform that can unravel the information-theoretic correlates of fitness and network structure in neural networks [27,28,29,30,31]. This computational platform enables us to analyze structure, function, and circuitry of hundreds of evolved digital Brains. As a result, we can obtain statistics on the frequency of different types of relations in evolved circuits (as opposed to studying only a single evolutionary outcome), and further assess how crucial different operators are for each evolved task, by performing knockout experiments in order to measure an operator’s contribution to the task. In particular, we first investigate the composition of different types of logic gates in networks evolved for the two cognitive tasks, and then theoretically estimate how accurate transfer entropy measures could be when applied to quantify the pairwise information flow from one neuron to another in such simple cognitive networks. We then use transfer entropy measures as a statistic to identify information flow between neurons of evolved circuits using the time series of neural recordings obtained from behaving Brains engaged in their task, and evaluate how successful transfer entropy is in detecting this flow. While artificial evolution of control structures (“artificial Brains”) is not a substitute for the analysis of information flow in biological brains, this investigation should provide some insights on how accurate (or inaccurate) transfer entropy measures could be.

4. Discussion

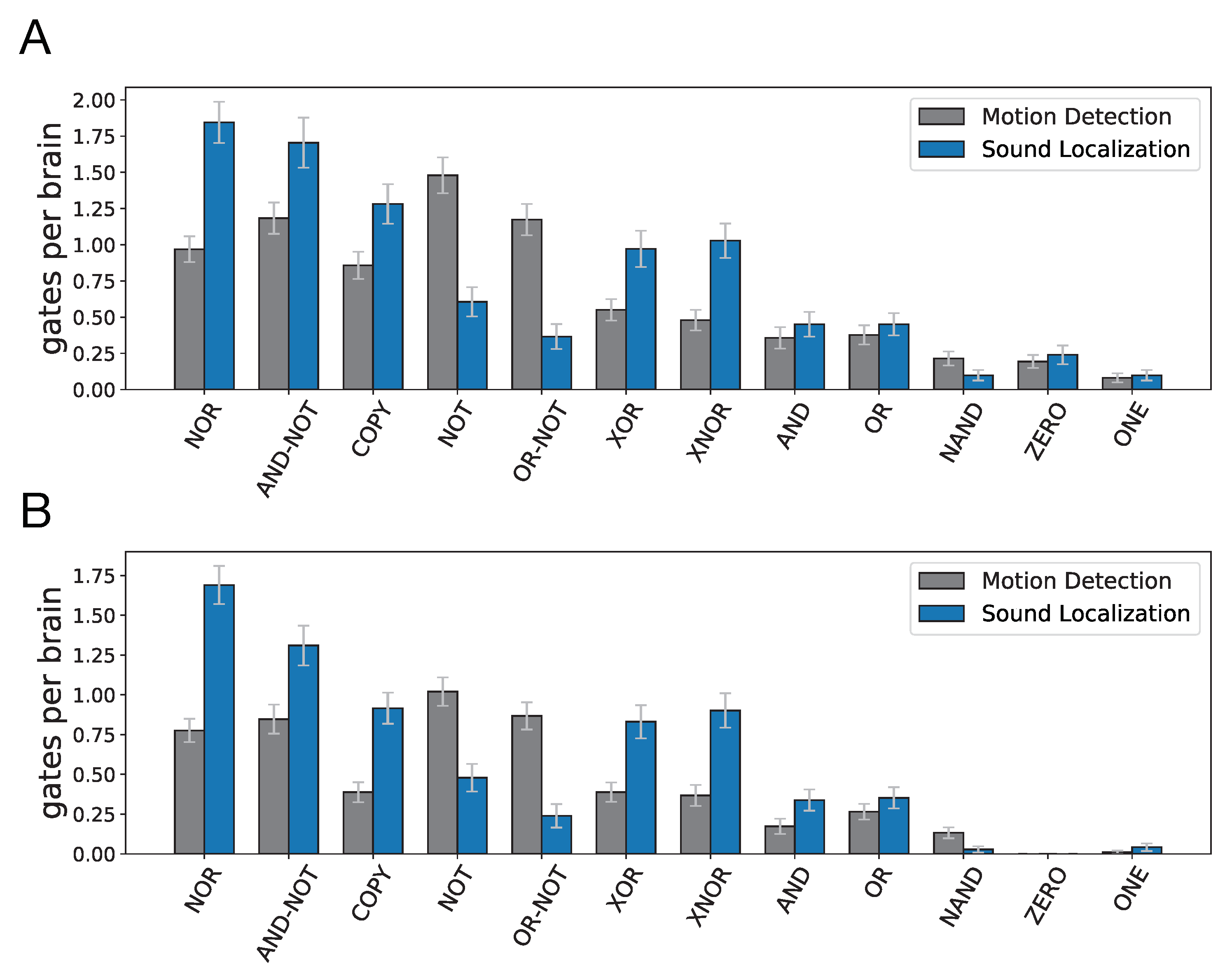

We used an agent-based evolutionary platform to create digital Brains so as to quantitatively evaluate the accuracy of transfer entropy measurements as a proxy for measuring information flow. To this end, we measured the frequency and significance of cryptographic and polyadic 2-to-1 logic gates in evolved digital Brains that perform two fundamental and well-studied cognitive tasks: visual motion detection and sound localization. We evolved 100 populations for each of the cognitive tasks and analyzed the Brain with the highest fitness at the end of each run. Markov Brains evolved a variety of neural architectures that vary in number of neurons and the number of logic gates, as well as the type of logic gates to perform each of the cognitive tasks. In fact, both modeling [41] and empirical [42] studies have shown that a wide variety of internal parameters in neural circuits can result in the same functionality [43]. Thus, it would be informative and perhaps necessary to examine a variety of circuits that perform the same cognitive task [34].

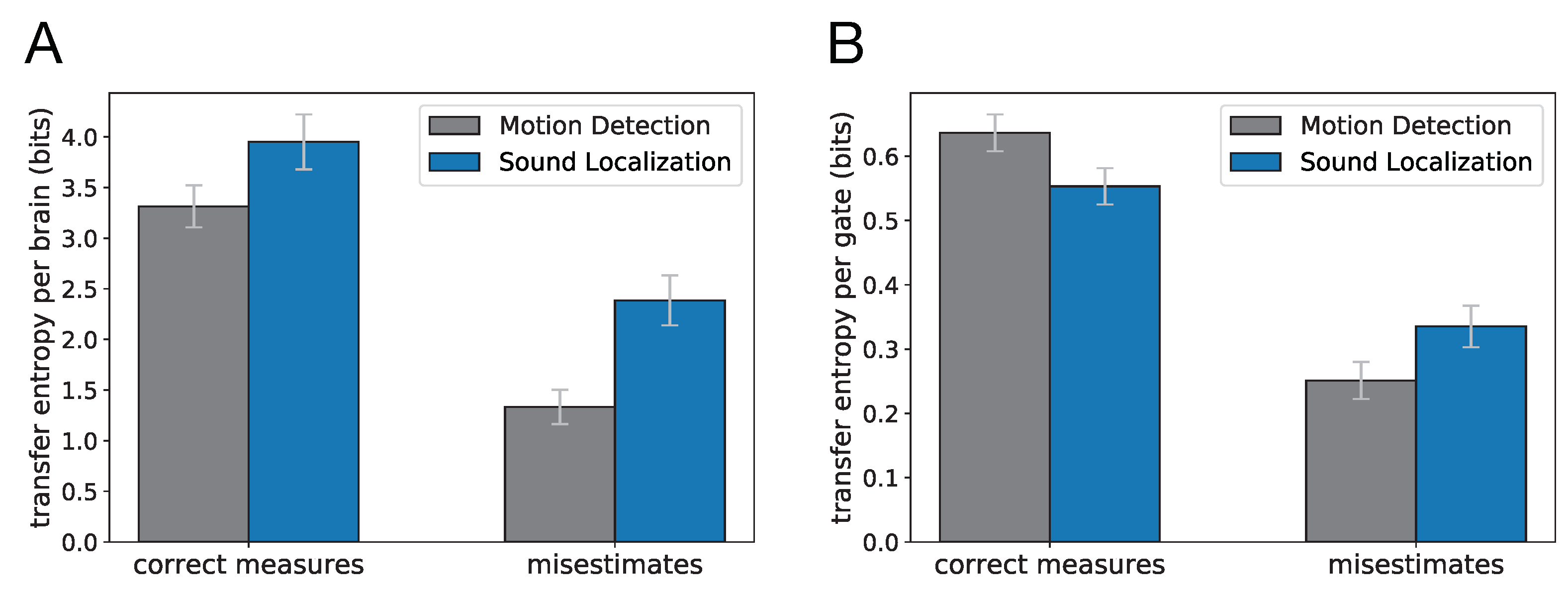

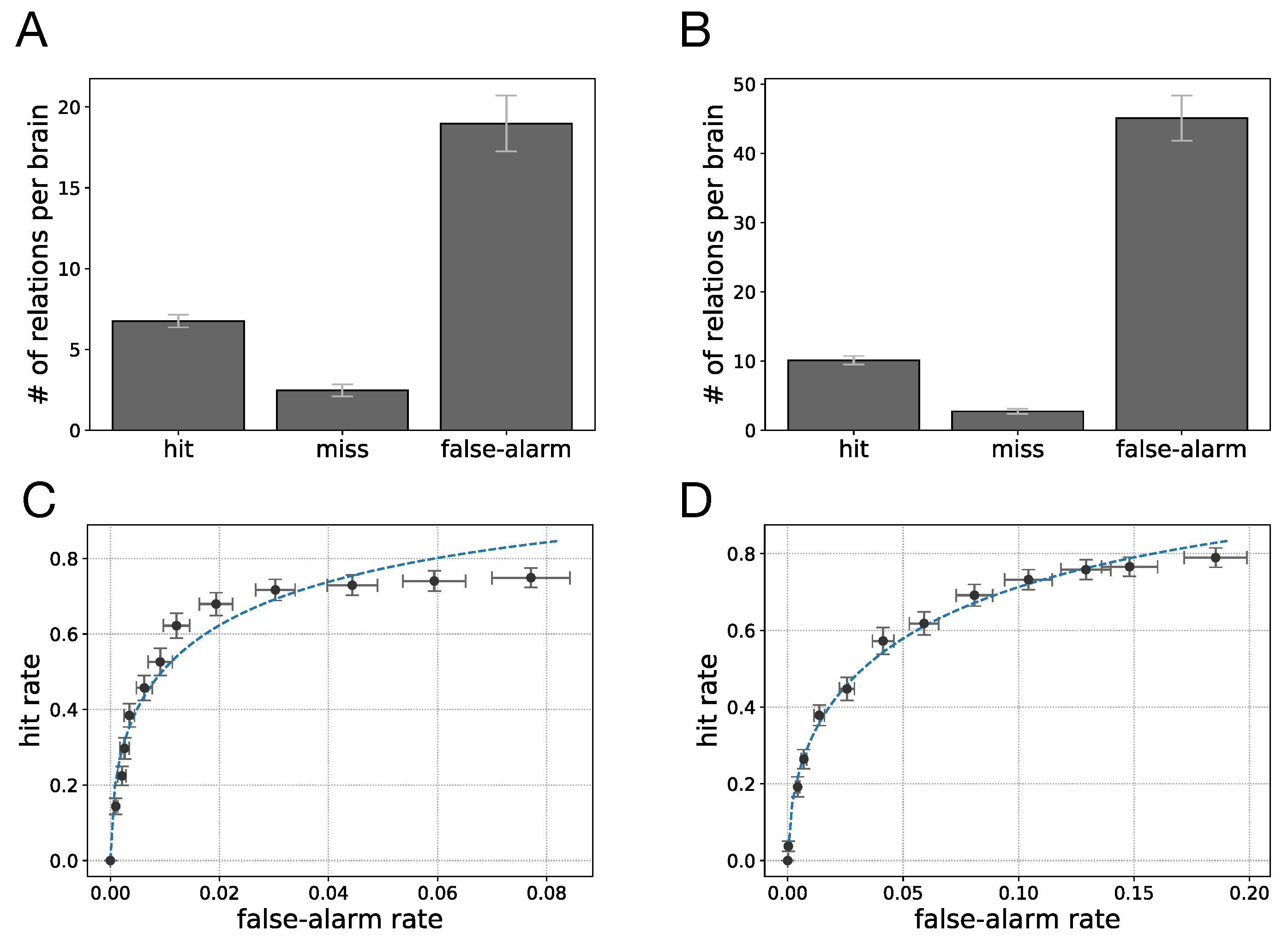

An analysis of the evolved Brains suggests that selecting for different cognitive tasks leads to significantly different gate type distributions. Using the error estimate for each particular gate due to encryption or polyadicity, we used the gate type distributions for each cognitive task to estimate the total error in information flow stemming from using transfer entropy as a statistic. The transfer entropy misestimate was 1.33 bits (SE = 0.08) per Brain on average for Brains evolved for motion detection, whereas in evolved Brains performing sound localization the misestimate was significantly higher: 2.39 bits (SE = 0.12) per Brain on average. More importantly, the inherent differences between the two tasks result in different levels of accuracy when using transfer entropy measures to identify information flow between neurons. It is important to note that in calculating these misestimates, we only accounted for the misestimates that result from TE measurements in polyadic or cryptographic gates. However, we commonly face several other challenges when applying the transfer entropy concept to components of nervous systems (neurons, voxels, etc.). These challenges range from intrinsic noise in neurons to inaccessibility of recording data for larger populations of neurons which we discuss in more detail later.

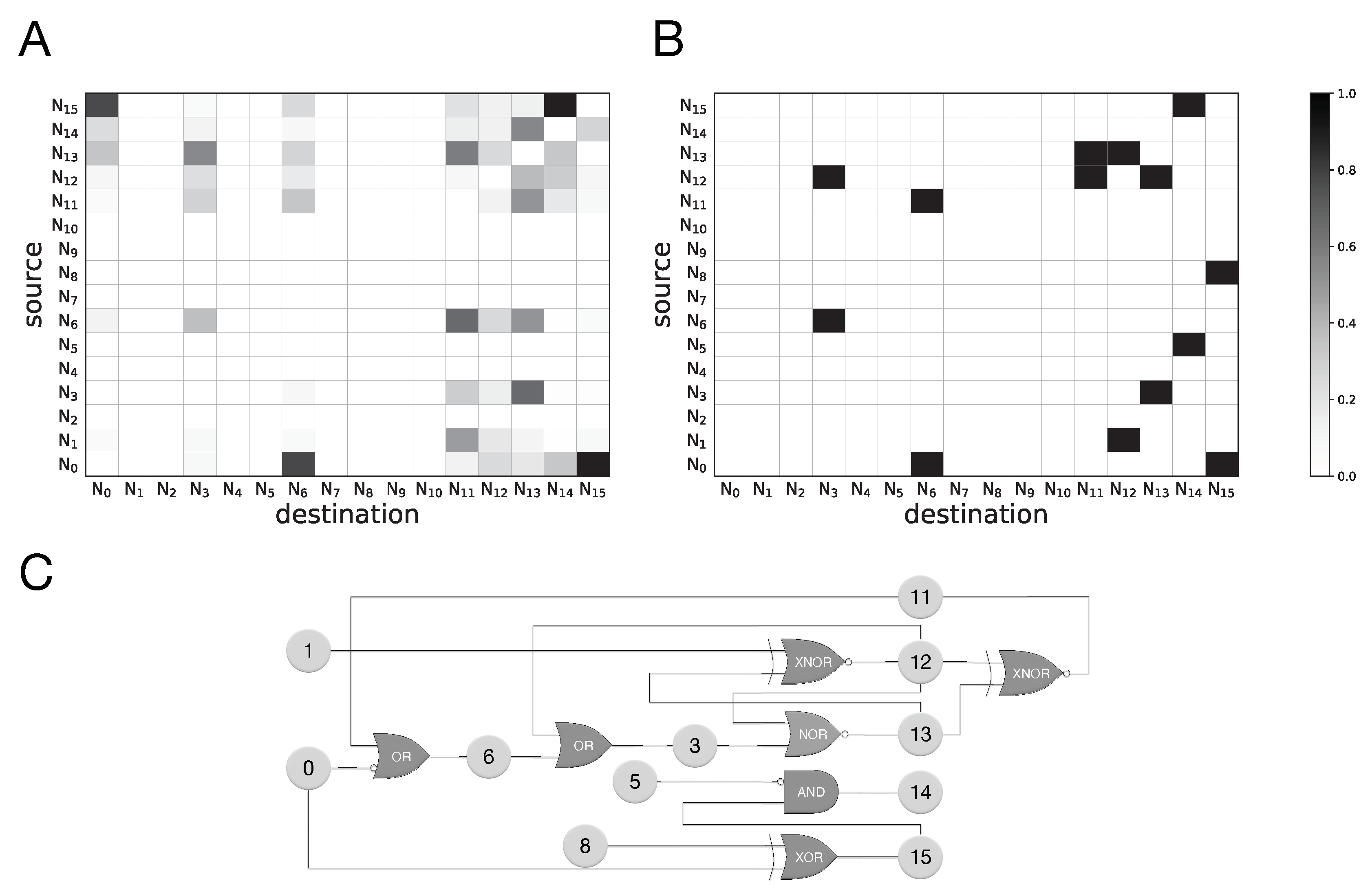

We also tested how well transfer entropy can identify the existence of information flow between any pair of neurons using the statistics of neural recordings at two subsequent time points only. Because a perfect model for the “ground truth” of information flow is difficult (if not impossible) to establish, we use an approximate ground truth that uses the connectivity of the network, along with information from the (simplified) logic function to provide a comparison. We find that TE captures many of the connections established by the ground truth model, with a true positive rate (hit rate) of 73.1% for motion detection and 78.7% for sound localization (assuming any non-zero value of transfer entropy implies causal relation). The TE measurements miss some relations from the established ground truth while also providing demonstrably false positives, with a false-alarm rate of 7.7% in motion detection and 18.5% for sound localization. For example, some of the information flow estimates in Figure 6 manifestly reverse the actual information flow, suggesting a backwards flow that is causally impossible. Such erroneous backwards influence is possible, for example, when the signal has a periodicity that creates accidental correlations with significant frequency. Besides these false positives, the false negatives (missed inferences) are due to the use of information-hiding (cryptographic or obfuscating) relations, as discussed earlier.
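The hit rate and false-alarm rate can be computed from pairwise TE values and a ground-truth connection map as in the following minimal sketch. The function name `detection_rates`, the neuron labels, and the TE values are hypothetical illustrations, not data from the evolved Brains. As in the text, any TE value above the threshold (zero by default) is counted as a detected connection.

```python
def detection_rates(te, truth, threshold=0.0):
    """Return (hit rate, false-alarm rate) for directed pairs.

    te:    dict mapping (source, target) -> transfer entropy in bits
    truth: dict mapping (source, target) -> True if the ground truth has a connection
    """
    tp = fp = fn = tn = 0
    for pair, connected in truth.items():
        detected = te.get(pair, 0.0) > threshold
        if connected and detected:
            tp += 1
        elif connected:
            fn += 1
        elif detected:
            fp += 1
        else:
            tn += 1
    hit_rate = tp / (tp + fn) if tp + fn else float("nan")
    false_alarm_rate = fp / (fp + tn) if fp + tn else float("nan")
    return hit_rate, false_alarm_rate

# Hypothetical three-neuron example.
te = {("a", "b"): 0.3, ("b", "a"): 0.1, ("a", "c"): 0.0,
      ("c", "a"): 0.0, ("b", "c"): 0.5, ("c", "b"): 0.0}
truth = {("a", "b"): True, ("b", "a"): False, ("a", "c"): True,
         ("c", "a"): False, ("b", "c"): True, ("c", "b"): False}
hit_rate, false_alarm_rate = detection_rates(te, truth)
```

In this toy case the missed connection ("a" to "c") plays the role of an information-hiding relation, and the spurious one ("b" to "a") plays the role of a backwards flow.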

It is noteworthy that in the transfer entropy measurements we performed, we benefited from multiple factors that are commonly great challenges in TE analysis of biological neural recordings. First, our TE measurement results were obtained using error-free recordings of noise-free neurons, while biological neurons are intrinsically noisy. We were also able to use the recordings from every neuron in the network, which presumably results in more accurate estimates. In contrast, in biological networks we only have the capacity to record from a finite number of neurons which, in turn, constrains our understanding of how information flows in the network.

Furthermore, by focusing only on information flow from one time step to the next we can evade the complex issues posed by estimating causal influence, which requires finding optimal time delays in transfer entropies. For example, while a signal may influence a neuron’s firing three time steps after it was perceived by a sensory neuron, it must be possible to follow this influence step-by-step in a first-order Markov process, as causal signals must be relayed physically (no action-at-a-distance). As a consequence, when using transfer entropy to detect and follow information flow, we can restrict ourselves to history lengths of 1 ($k = \ell = 1$), which significantly simplifies the analysis [17]. Furthermore, complications arising from discretizing continuous signals [15] do not arise, nor is there a choice in embedding the signal as all our neurons have discrete states. In principle, extending the history lengths (from $k = \ell = 1$ to higher) may be used to get rid of false positives in entropy estimates (even for a first-order Markov process), for the simple reason that the higher dimensionality of state space reduces accidental correlations, given a finite sample set. However, such an increase in dimensionality has a drawback: it makes the detection of true positives more difficult (it increases the rate of false negatives) unless the dataset size is also increased. In many dynamical systems such an increase in data size is not an issue, but it may be very difficult (if not impossible) for smaller systems such as the simple cognitive circuits that we evolve. For those, the number of different “sensory experiences” is extremely limited, and increasing the dataset size does not solve the problem because it would simply repeat the same data. In other words, unlike for large probabilistic systems where generating longer time series will almost invariably exhaustively sample the probability space, this is not the case for motion detection and sound localization. For such “small” systems, increasing the history lengths reduces false positives, but increases false negatives at the same time.

Finally, in order to precisely calculate transfer entropy from Equation (1), the summation should be performed over all possible states of variables $Y_{t+1}$, $X_t^{(k)}$, $Y_t^{(\ell)}$. Using only a subset of those states when calculating the entropy estimate may result in false positives, as well as false negatives. This is another common source of inaccuracy in TE measurements of neural recordings. Here we were able to generate neural recording data for all possible sensory input patterns and included them in our dataset, yet we still observe the described shortcomings in our results. This brings up another important point to notice, namely, even if we introduce every possible sensory pattern to the network, we do not necessarily observe every possible neural firing pattern in the network, and as a result, we do not necessarily sample the entire set of variable states $\{y_{t+1}, x_t^{(k)}, y_t^{(\ell)}\}$.
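How completely a recording samples the state space can be checked directly. The sketch below, for history lengths of 1 and binary neurons, reports the fraction of the eight possible joint states (next target state, current source state, current target state) that actually occur in a pair of time series. The function name `state_coverage` and the short example series are hypothetical.

```python
from itertools import product

def state_coverage(source, target, alphabet=(0, 1)):
    """Fraction of joint states (target_{t+1}, source_t, target_t) seen in the data,
    together with the list of states that never occur."""
    observed = set(zip(target[1:], source[:-1], target[:-1]))
    possible = set(product(alphabet, repeat=3))
    missing = sorted(possible - observed)
    return len(observed & possible) / len(possible), missing

# Hypothetical periodic recording: the target copies the source one step later.
x = [0, 1, 0, 1, 0, 1]
y = [0, 0, 1, 0, 1, 0]
coverage, missing = state_coverage(x, y)
```

A deterministic circuit driven by a small set of input patterns will typically leave many joint states unvisited, no matter how long the recording is.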