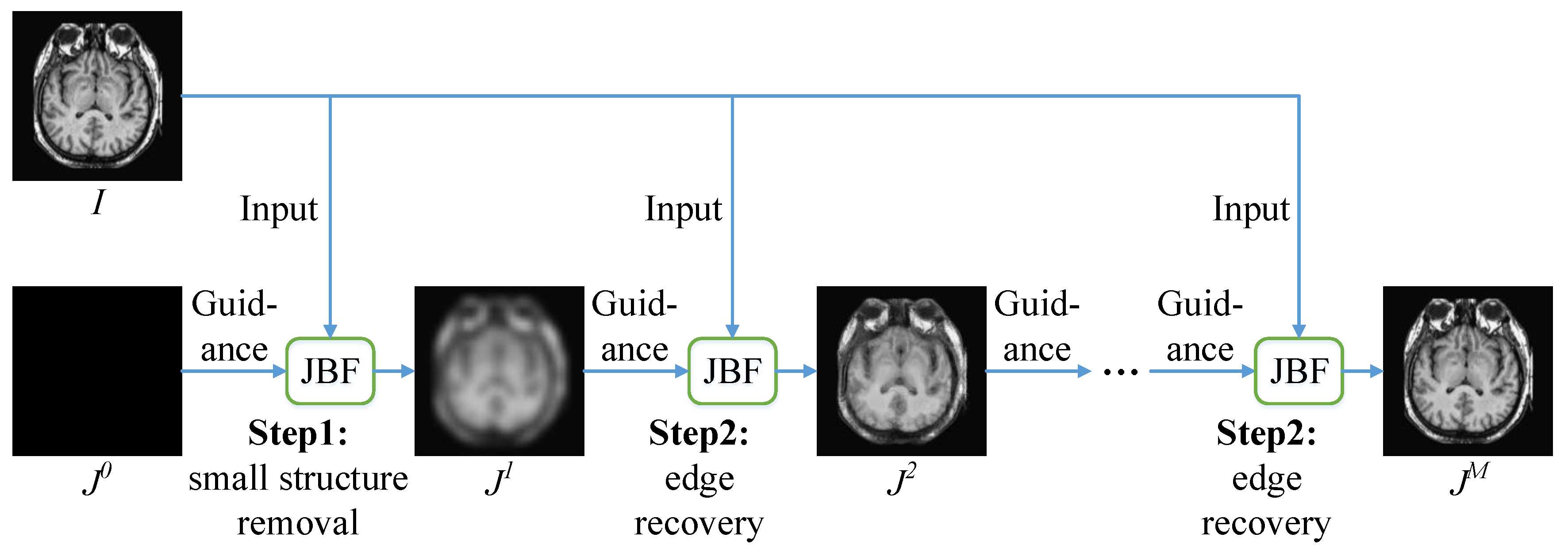

Figure 1.

Rolling guidance filtering.

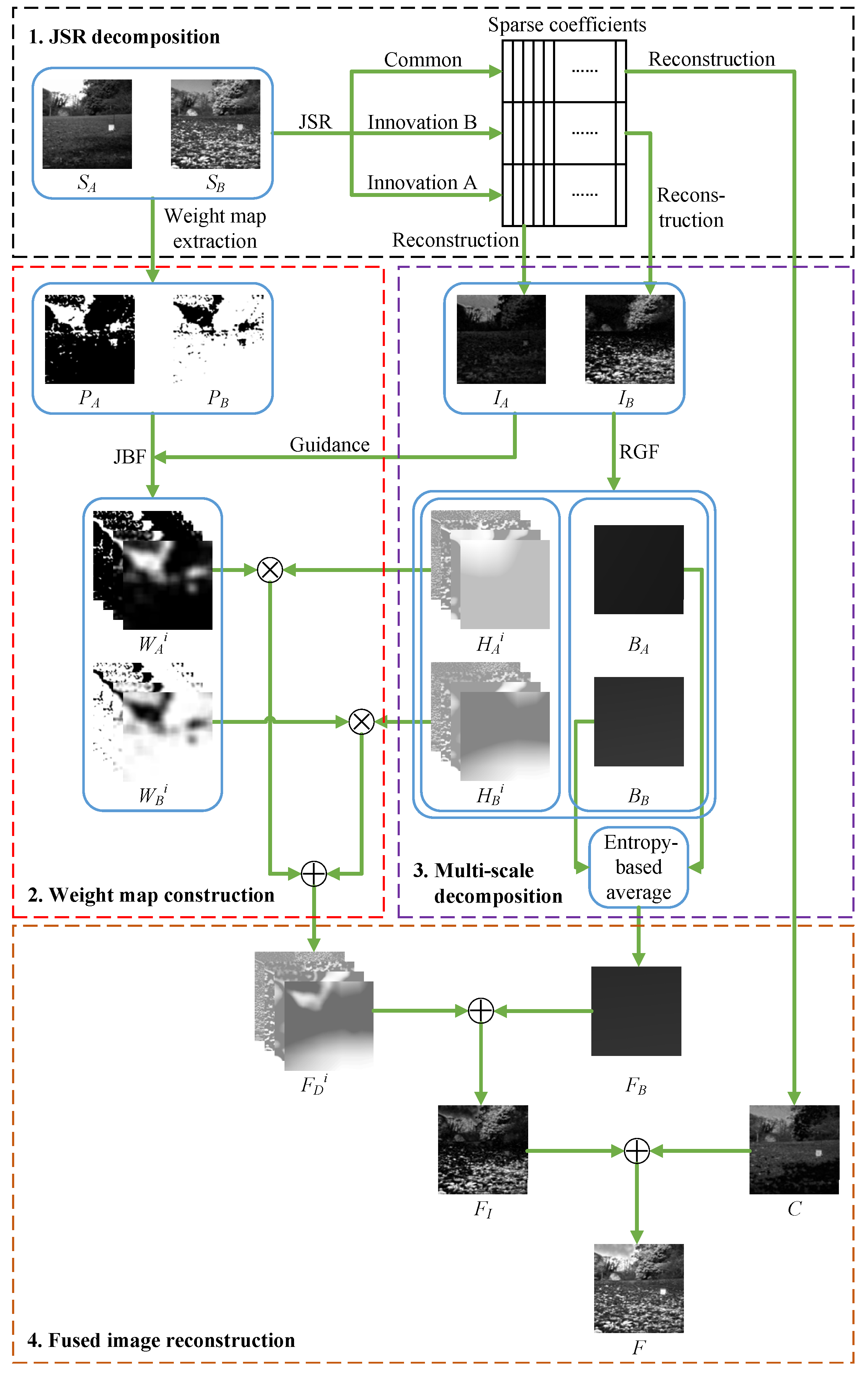

Figure 2.

The schematic diagram of our proposed fusion method.

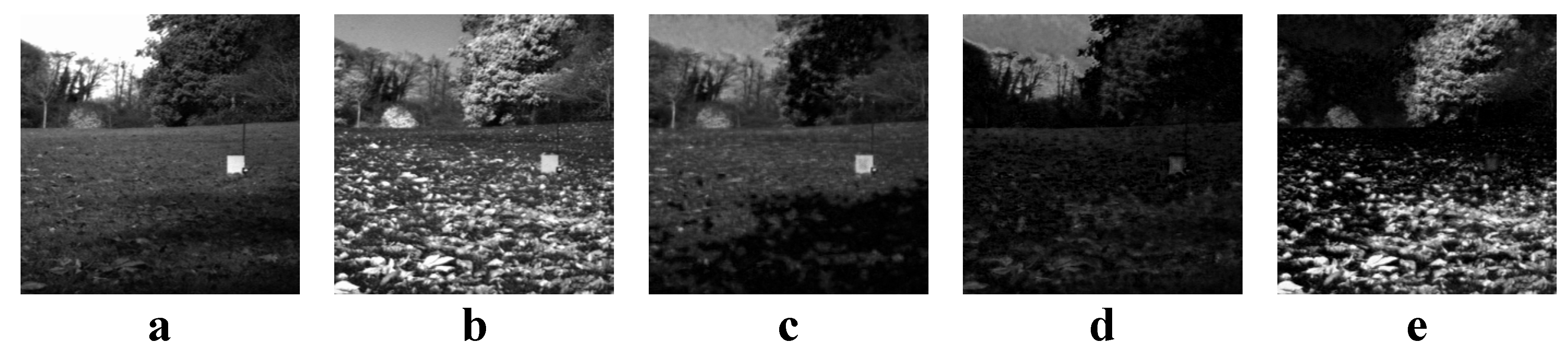

Figure 3.

An example of JSR decomposition. (a,b) Source images; (c) The common image; (d) The innovation image of (a); (e) The innovation image of (b).

Figure 4.

Infrared-visible image sets. (a–d) Four sets of infrared-visible source images.

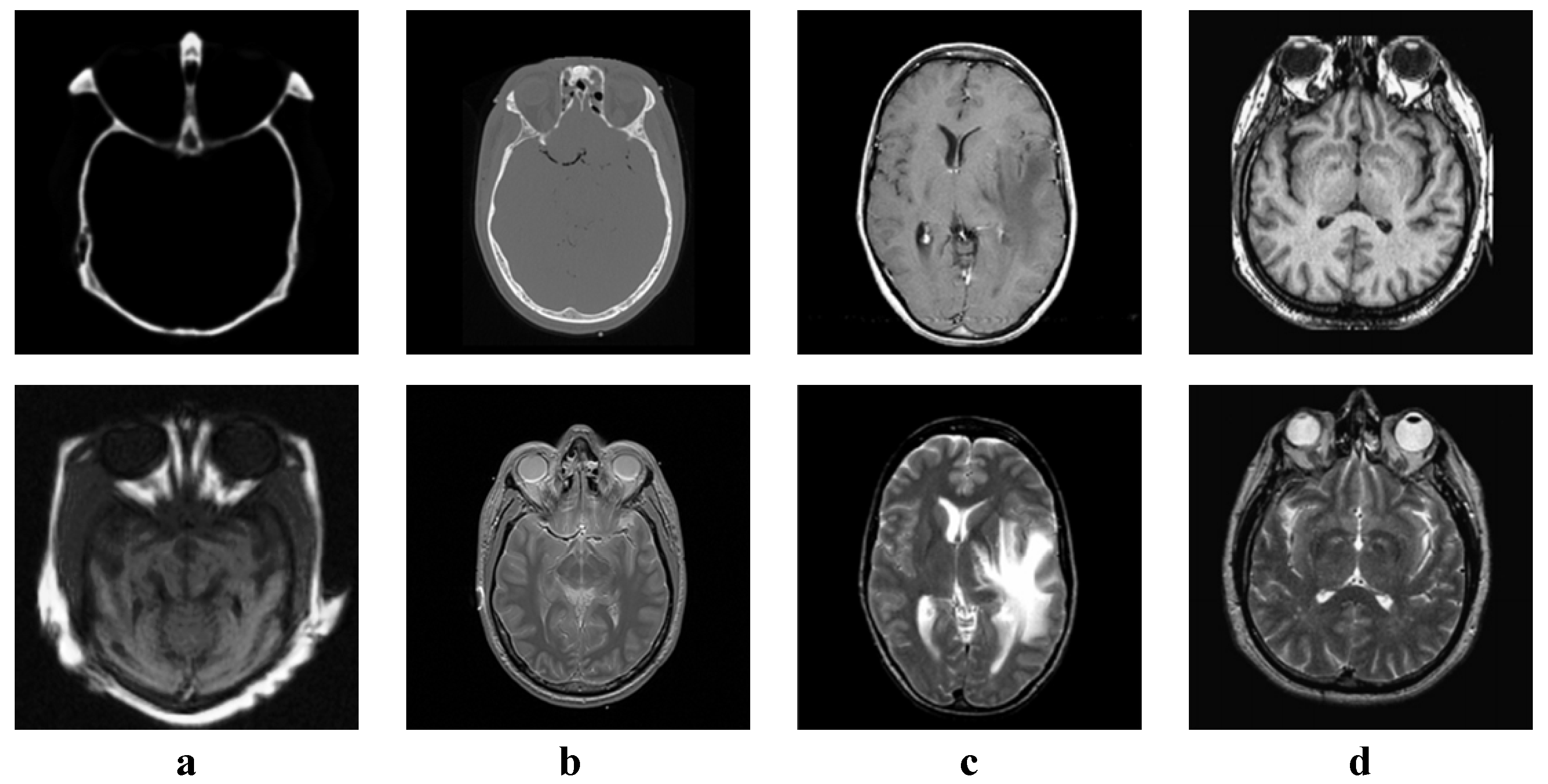

Figure 5.

Medical image sets. (a–d) Four sets of medical source images.

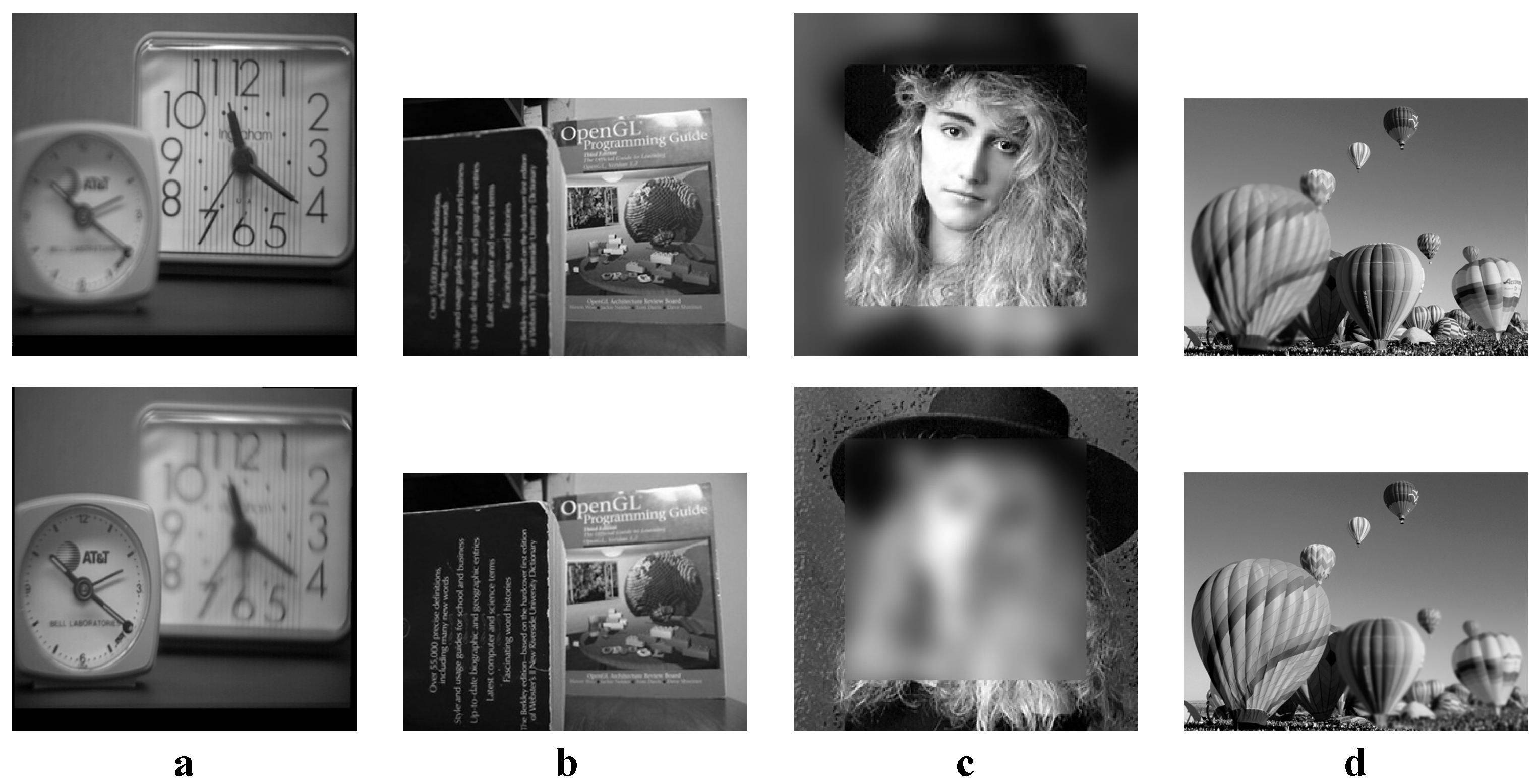

Figure 6.

Multi-focus image sets. (a–d) Four sets of multi-focus source images.

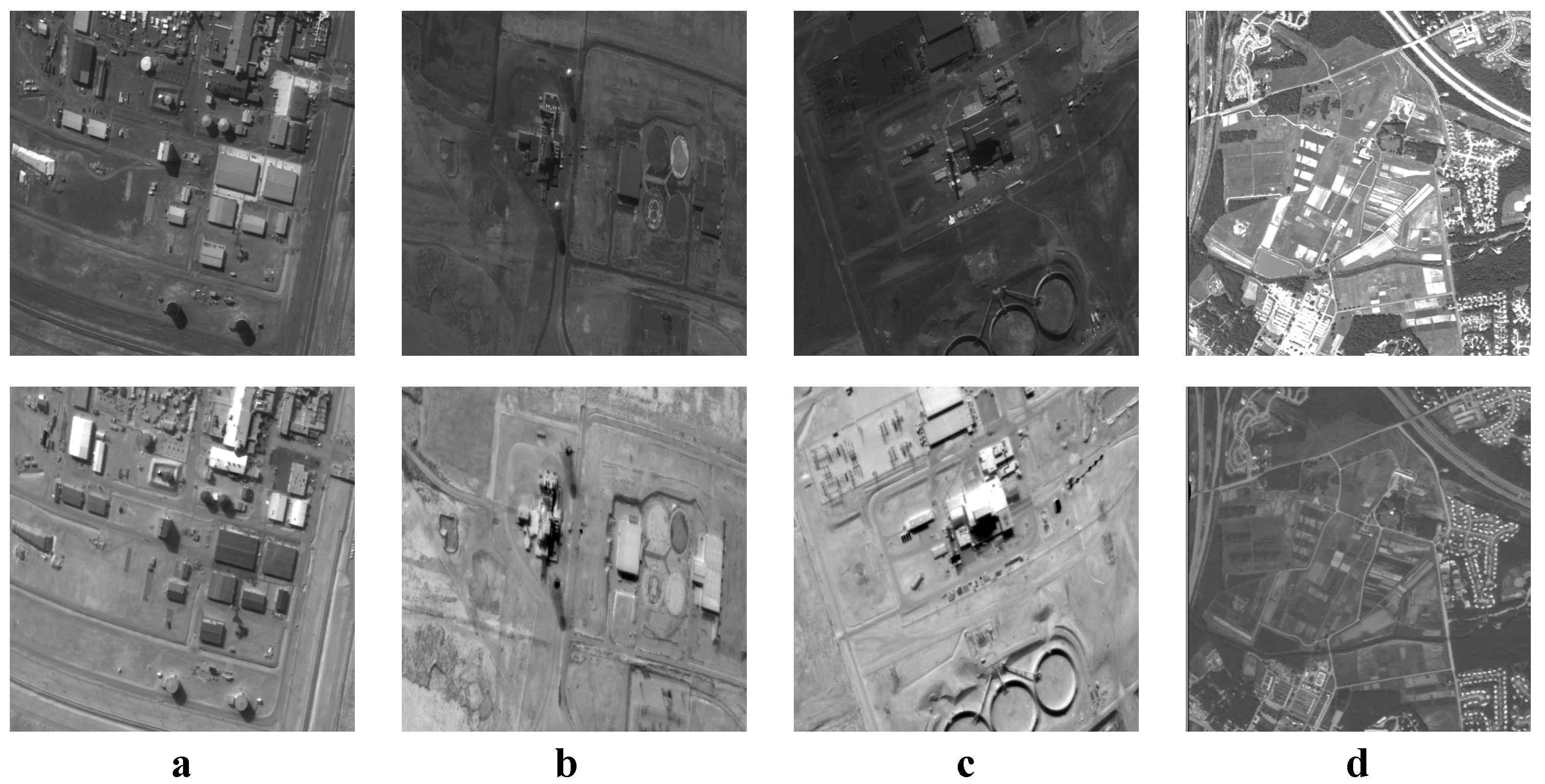

Figure 7.

Remote sensing image sets. (a–d) Four sets of remote sensing source images.

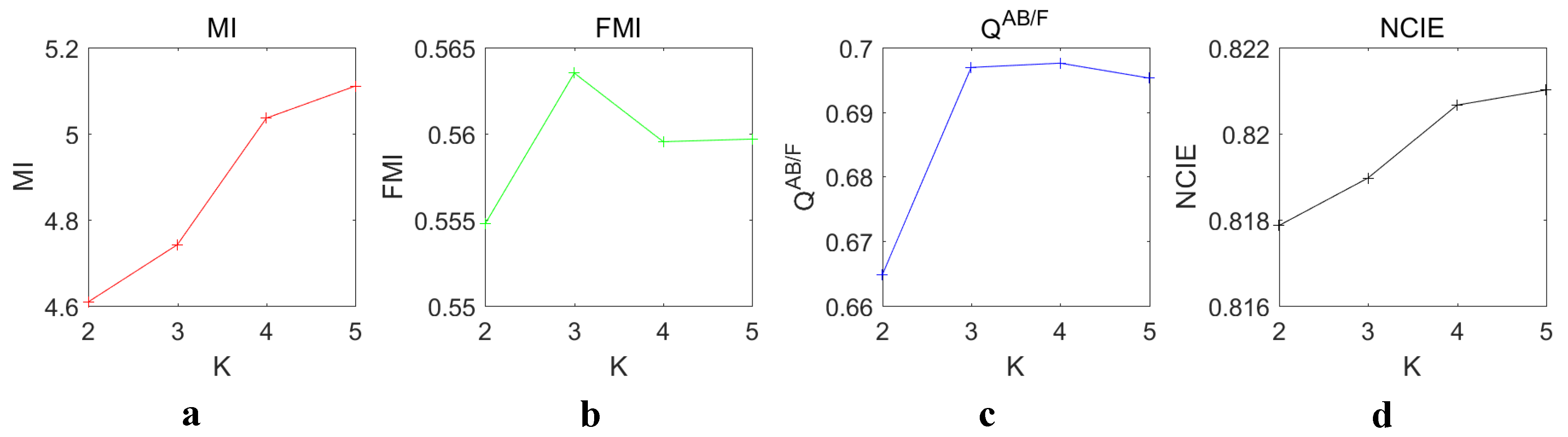

Figure 8.

Objective evaluation of different decomposition levels K. (a–d) The values of the four objective evaluation metrics, respectively.

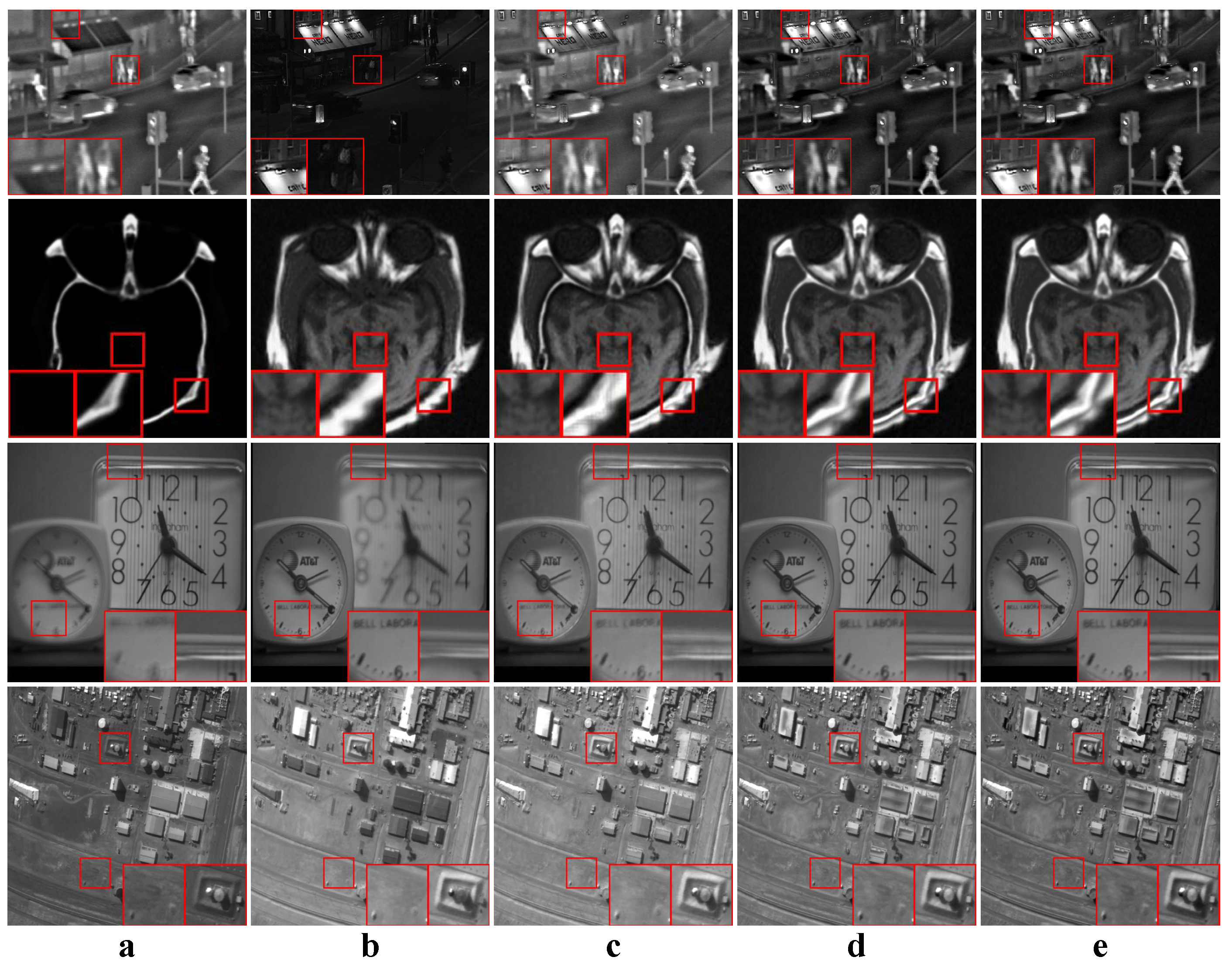

Figure 9.

Some fused images of JSR, RGF and our proposed method. (a,b) Source images; (c) The fused results of JSR; (d) The fused results of RGF; (e) The fused results of our proposed method.

Figure 10.

Examples of the fusion results of infrared-visible images. (a,b) Source images; (c–p) The fused results of ASR, CSR, CVT, DTCWT, GTF, H-MSD, CNN, LP, MSSR, MSVD, NSCT, WLS, FFIF, and our proposed method, respectively.

Figure 11.

Examples of the fusion results of medical images. (a,b) Source images; (c–p) The fused results of ASR, CSR, CVT, DTCWT, GTF, H-MSD, CNN, LP, MSSR, MSVD, NSCT, WLS, FFIF, and our proposed method, respectively.

Figure 12.

Examples of the fusion results of multi-focus images. (a,b) Source images; (c–p) The fused results of ASR, CSR, CVT, DTCWT, GTF, H-MSD, CNN, LP, MSSR, MSVD, NSCT, WLS, FFIF, and our proposed method, respectively.

Figure 13.

Examples of the fusion results of remote sensing images. (a,b) Source images; (c–p) The fused results of ASR, CSR, CVT, DTCWT, GTF, H-MSD, CNN, LP, MSSR, MSVD, NSCT, WLS, FFIF, and our proposed method, respectively.

Table 1.

Objective evaluation of different n values for the JSR dictionary. The best and second best results of each metric are marked in red and bold, respectively.

| Metric | | | |
|---|---|---|---|
| | 5.3075 | 5.3377 | 5.3179 |
| | 0.5650 | 0.5600 | 0.5556 |
| | 0.6971 | 0.6978 | 0.6974 |
| | 0.8224 | 0.8226 | 0.8225 |
| Time | 163.74 | 398.33 | 1182.71 |

Table 2.

Objective evaluation of different m values for the JSR dictionary. The best and second best results of each metric are marked in red and bold, respectively.

| Metric | | | |
|---|---|---|---|
| | 5.2805 | 5.3309 | 5.3377 |
| | 0.5538 | 0.5638 | 0.5600 |
| | 0.6936 | 0.6973 | 0.6978 |
| | 0.8223 | 0.8225 | 0.8226 |
| Time | 176.07 | 251.15 | 398.33 |

Table 3.

Time cost of different decomposition levels.

| K | 2 | 3 | 4 | 5 |
|---|---|---|---|---|
| Time | 374.70 | 376.97 | 381.58 | 398.33 |

Table 4.

Objective evaluation of JSR, RGF and our method. The best and second best results of each metric are marked in red and bold, respectively.

| Category | Metric | JSR | RGF | OURS |
|---|---|---|---|---|
| Infrared-visible | | 3.6597 | 3.7839 | 4.2105 |
| | | 0.4946 | 0.5456 | 0.5477 |
| | | 0.6130 | 0.6644 | 0.6652 |
| | | 0.8106 | 0.8113 | 0.8143 |
| Medical | | 4.1862 | 4.0194 | 4.2164 |
| | | 0.5439 | 0.5278 | 0.5228 |
| | | 0.6177 | 0.6768 | 0.6800 |
| | | 0.8133 | 0.8119 | 0.8130 |
| Multi-focus | | 6.9542 | 8.8911 | 8.9213 |
| | | 0.5475 | 0.6316 | 0.6324 |
| | | 0.7449 | 0.7890 | 0.7891 |
| | | 0.8316 | 0.8465 | 0.8467 |
| Remote sensing | | 2.9600 | 3.7494 | 4.0035 |
| | | 0.4555 | 0.5337 | 0.5370 |
| | | 0.5846 | 0.6508 | 0.6567 |
| | | 0.8082 | 0.8145 | 0.8165 |

Table 5.

Objective evaluation of infrared-visible image fusion. The best and second best results of each metric are marked in red and bold, respectively.

| Metric | ASR | CSR | CVT | DTCWT | GTF | H-MSD | CNN |
|---|---|---|---|---|---|---|---|
| | 2.7134 | 2.7878 | 2.2697 | 2.3902 | 2.5465 | 2.6970 | 2.9490 |
| | 0.5202 | 0.4510 | 0.4596 | 0.4892 | 0.4874 | 0.4324 | 0.4818 |
| | 0.5986 | 0.5890 | 0.5512 | 0.5796 | 0.4994 | 0.5686 | 0.6290 |
| | 0.8064 | 0.8066 | 0.8052 | 0.8055 | 0.8061 | 0.8064 | 0.8072 |

| Metric | LP | MSSR | MSVD | NSCT | WLS | FFIF | OURS |
|---|---|---|---|---|---|---|---|
| | 2.6575 | 3.4726 | 2.9739 | 2.4802 | 2.7887 | 4.9717 | 4.2105 |
| | 0.5003 | 0.5044 | 0.3972 | 0.4988 | 0.4339 | 0.5775 | 0.5477 |
| | 0.6366 | 0.6065 | 0.4123 | 0.6144 | 0.5574 | 0.6405 | 0.6652 |
| | 0.8062 | 0.8107 | 0.8072 | 0.8057 | 0.8065 | 0.8226 | 0.8143 |

Table 6.

Objective evaluation of medical image fusion. The best and second best results of each metric are marked in red and bold, respectively.

| Metric | ASR | CSR | CVT | DTCWT | GTF | H-MSD | CNN |
|---|---|---|---|---|---|---|---|
| | 3.4473 | 3.3705 | 2.6794 | 2.9084 | 2.9051 | 3.2624 | 3.5522 |
| | 0.5638 | 0.5087 | 0.3534 | 0.4478 | 0.5125 | 0.4738 | 0.5152 |
| | 0.6037 | 0.5976 | 0.5170 | 0.5488 | 0.4288 | 0.5639 | 0.6416 |
| | 0.8092 | 0.8090 | 0.8069 | 0.8075 | 0.8076 | 0.8086 | 0.8097 |

| Metric | LP | MSSR | MSVD | NSCT | WLS | FFIF | OURS |
|---|---|---|---|---|---|---|---|
| | 3.2668 | 3.6737 | 3.5279 | 3.1658 | 3.5519 | 4.6729 | 4.2164 |
| | 0.5243 | 0.5406 | 0.4731 | 0.5063 | 0.4907 | 0.6086 | 0.5228 |
| | 0.6384 | 0.6422 | 0.4713 | 0.6220 | 0.5914 | 0.6535 | 0.6800 |
| | 0.8085 | 0.8102 | 0.8097 | 0.8082 | 0.8097 | 0.8151 | 0.8130 |

Table 7.

Objective evaluation of multi-focus image fusion. The best and second best results of each metric are marked in red and bold, respectively.

| Metric | ASR | CSR | CVT | DTCWT | GTF | H-MSD | CNN |
|---|---|---|---|---|---|---|---|
| | 7.5714 | 7.6874 | 7.2197 | 7.4468 | 7.6793 | 7.5452 | 8.5647 |
| | 0.6022 | 0.4586 | 0.5643 | 0.5942 | 0.5963 | 0.5534 | 0.6065 |
| | 0.7746 | 0.7570 | 0.7571 | 0.7710 | 0.6210 | 0.7477 | 0.7835 |
| | 0.8367 | 0.8363 | 0.8343 | 0.8360 | 0.8397 | 0.8363 | 0.8441 |

| Metric | LP | MSSR | MSVD | NSCT | WLS | FFIF | OURS |
|---|---|---|---|---|---|---|---|
| | 7.9235 | 7.8695 | 6.5958 | 7.5796 | 7.3741 | 9.2663 | 8.9213 |
| | 0.6057 | 0.5987 | 0.4236 | 0.5963 | 0.5680 | 0.6558 | 0.6324 |
| | 0.7829 | 0.7807 | 0.6212 | 0.7795 | 0.7647 | 0.7403 | 0.7891 |
| | 0.8390 | 0.8385 | 0.8299 | 0.8367 | 0.8349 | 0.8533 | 0.8467 |

Table 8.

Objective evaluation of remote sensing image fusion. The best and second best results of each metric are marked in red and bold, respectively.

| Metric | ASR | CSR | CVT | DTCWT | GTF | H-MSD | CNN |
|---|---|---|---|---|---|---|---|
| | 2.0875 | 2.2285 | 1.8935 | 1.9541 | 1.7620 | 2.1677 | 3.6505 |
| | 0.5057 | 0.4519 | 0.4305 | 0.4590 | 0.4967 | 0.4141 | 0.4741 |
| | 0.5375 | 0.5908 | 0.5526 | 0.5802 | 0.4661 | 0.5428 | 0.6194 |
| | 0.8047 | 0.8052 | 0.8044 | 0.8045 | 0.8037 | 0.8055 | 0.8143 |

| Metric | LP | MSSR | MSVD | NSCT | WLS | FFIF | OURS |
|---|---|---|---|---|---|---|---|
| | 2.1795 | 3.1134 | 2.0510 | 2.0314 | 2.1937 | 4.5241 | 4.0035 |
| | 0.4784 | 0.4646 | 0.3010 | 0.4757 | 0.4199 | 0.5473 | 0.5370 |
| | 0.6173 | 0.5941 | 0.4055 | 0.6119 | 0.5389 | 0.6298 | 0.6567 |
| | 0.8051 | 0.8110 | 0.8044 | 0.8046 | 0.8050 | 0.8209 | 0.8165 |