Service-Oriented Model Encapsulation and Selection Method for Complex System Simulation Based on Cloud Architecture

Abstract

1. Introduction

2. Related Works

2.1. High-Level Architecture (HLA)-Based Simulation Model Development Specification

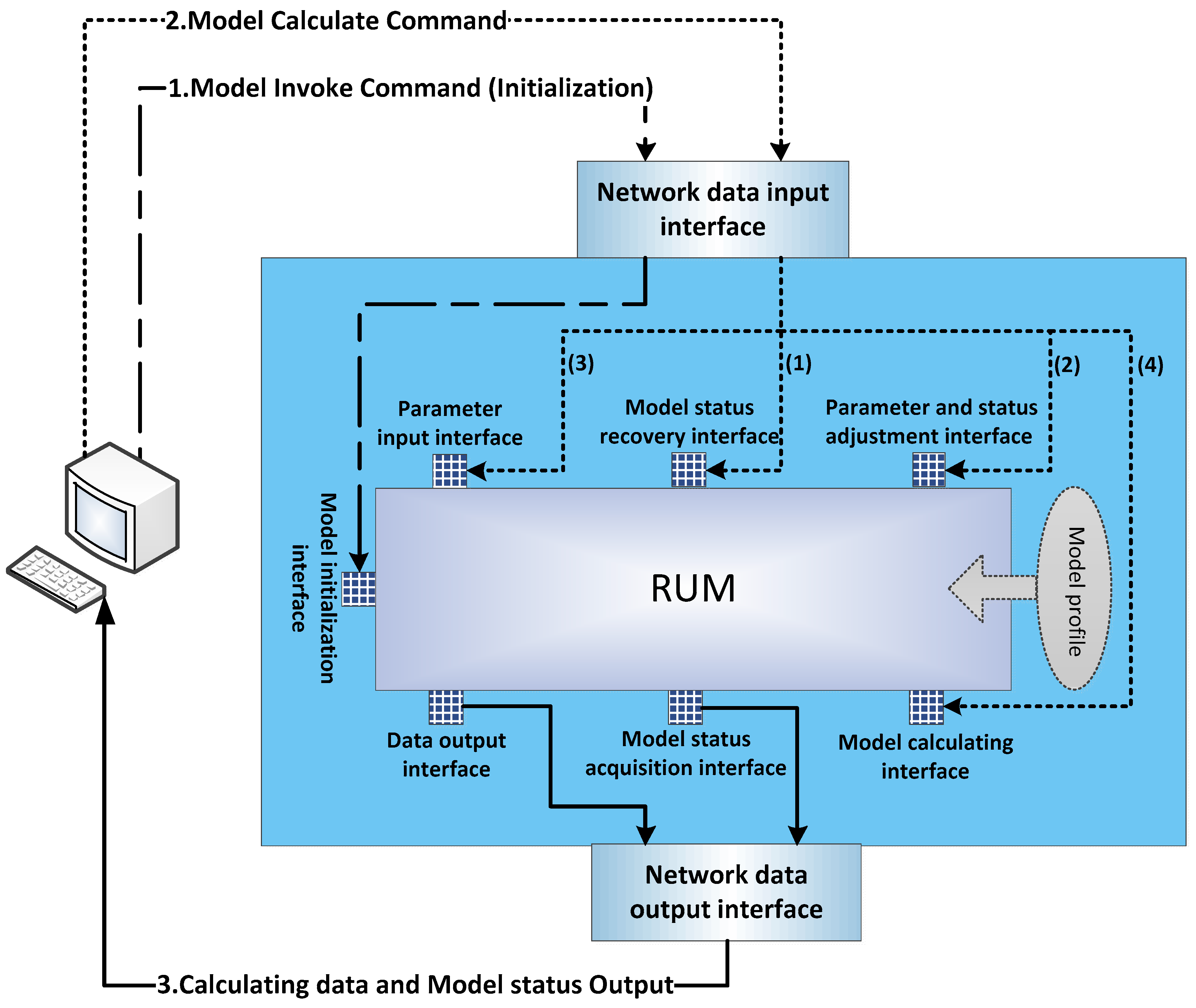

2.2. RUM Specification

2.3. OWL-Based Simulation Model Search Method

2.4. QoS-Based Simulation Model Selection Method

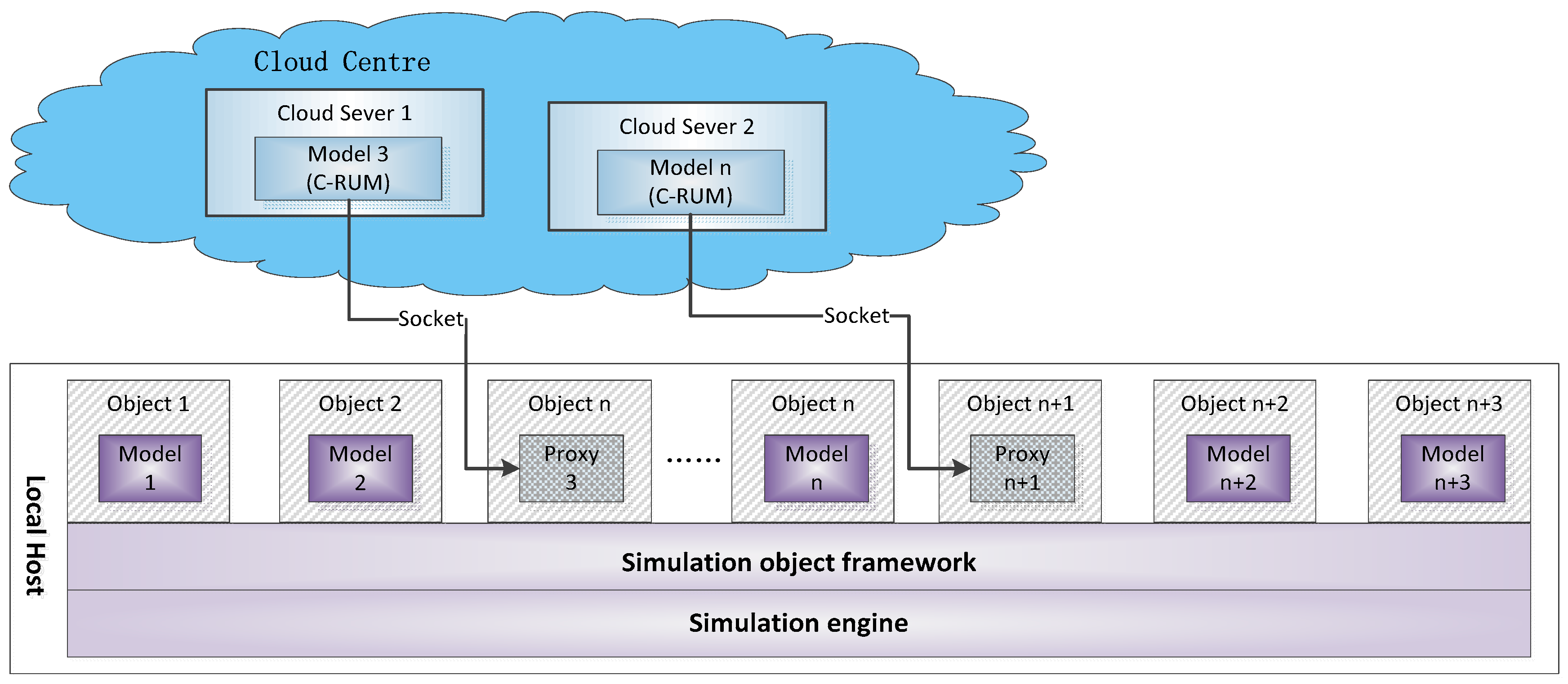

3. C-RUM Specification and Cloud-Based Simulation Model Service Framework

3.1. C-RUM Specification

3.2. Cloud-Based Simulation Model Service Framework

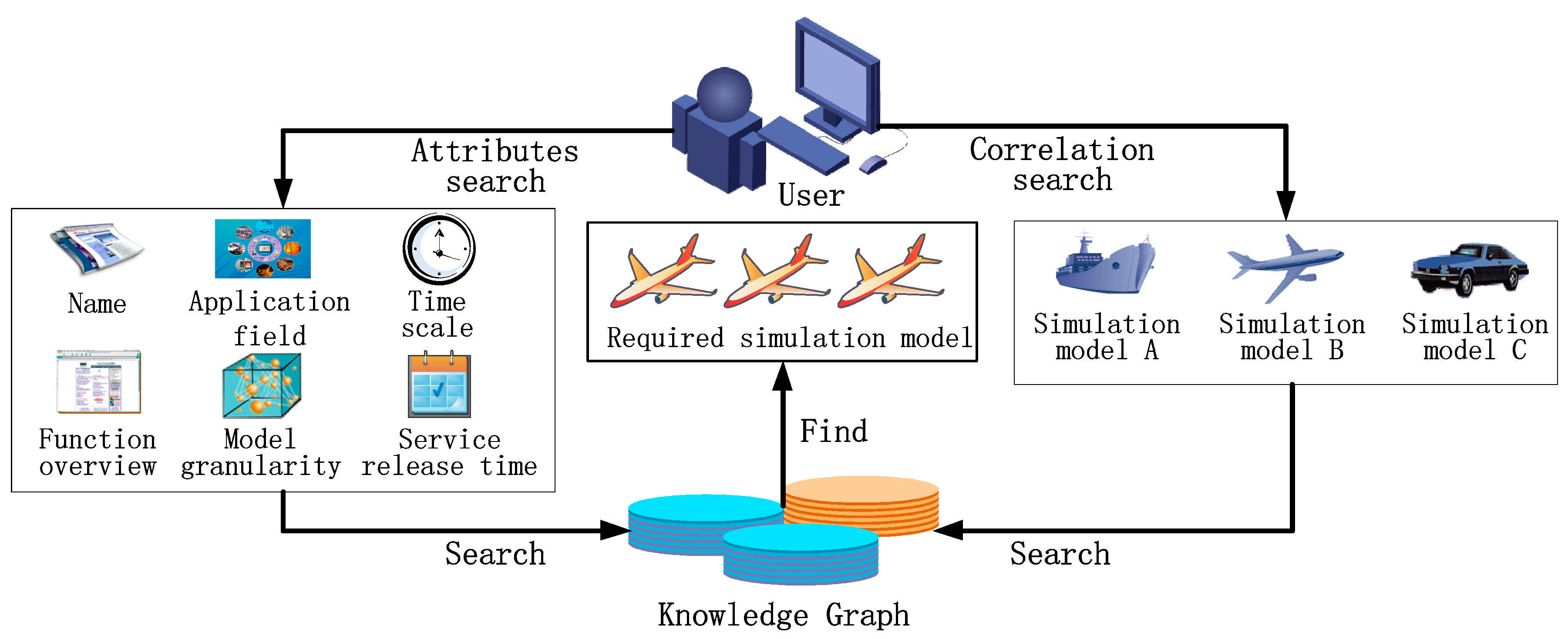

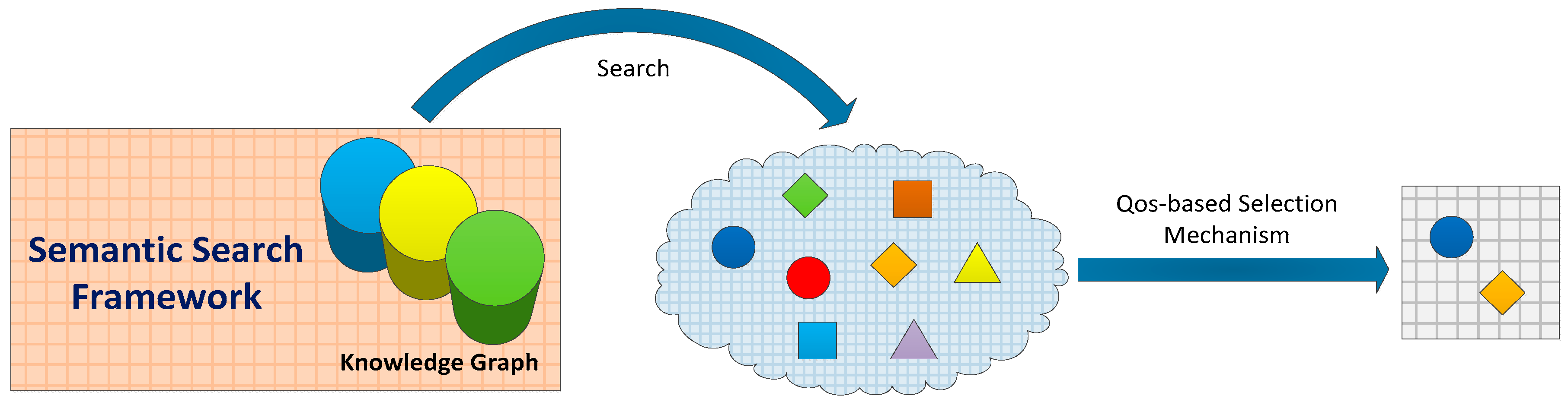

4. Simulation Model Selection Method Based on Semantic Search in Cloud Environment

4.1. Semantic Search Framework

| Algorithm 1 Semantic_Search |

| Input: Attributes_conditions[M], the vector storing the model attribute requirements; Correlated model; Relationship, the relationship with the correlated model; |

| Output: simulation model initial set; |

| 1: Boolean flag1, flag2; |

| 2: if (Attributes_search_conditions ≠ null)||(Relationship_search_conditions ≠ null) then |

| 3: for each model do // Loop over the simulation models |

| 4: for i = 1 to M do // Loop over the model attribute requirement conditions |

| 5: if then ; |

| 6: end for |

| 7: if |

| 8: if then ; |

| 9: else |

| 10: if (flag1 & flag2) then push_into_list (model, ); |

| 11: end for |

| 12: end if |

| 13: return |
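The filtering logic of Algorithm 1 can be sketched in Python as follows. This is a minimal illustration, not the authors' implementation: the `Model` class, the encoding of attribute requirements as predicates, and the `(relationship, correlated model)` pairs are all hypothetical. The sketch also assumes that `flag2` defaults to true when no relationship requirement is given, which the listing above does not state explicitly.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Optional, Sequence, Set, Tuple

# A condition is a predicate over a model's attribute dictionary
# (hypothetical encoding of one attribute requirement).
Condition = Callable[[Dict[str, Any]], bool]


@dataclass
class Model:
    """Hypothetical stand-in for one described simulation model."""
    name: str
    attributes: Dict[str, Any]
    # (relationship, correlated model name) pairs, e.g. ("controls", "Airliner")
    relations: Set[Tuple[str, str]] = field(default_factory=set)


def semantic_search(
    models: Sequence[Model],
    attribute_conditions: Optional[Sequence[Condition]] = None,
    correlated_model: Optional[str] = None,
    relationship: Optional[str] = None,
) -> List[Model]:
    """Return the initial set of models that pass both checks."""
    initial_set: List[Model] = []
    # Step 2: search only when at least one kind of condition is supplied.
    if not attribute_conditions and relationship is None:
        return initial_set
    for model in models:  # step 3
        # Steps 4-6: flag1 stays True only if every attribute condition holds.
        flag1 = all(cond(model.attributes) for cond in attribute_conditions or [])
        # Steps 7-9 (assumed): with no relationship requirement, flag2 is True.
        flag2 = (
            relationship is None
            or (relationship, correlated_model) in model.relations
        )
        if flag1 and flag2:  # step 10
            initial_set.append(model)
    return initial_set


# Illustrative data only; names and attributes are made up.
library = [
    Model("ATC-A", {"domain": "air traffic control"}, {("controls", "Airliner")}),
    Model("ATC-B", {"domain": "air traffic control"}),
    Model("Runway", {"domain": "airport"}, {("serves", "Airliner")}),
]
hits = semantic_search(
    library,
    [lambda attrs: attrs.get("domain") == "air traffic control"],
    correlated_model="Airliner",
    relationship="controls",
)
print([m.name for m in hits])
```

Encoding each requirement as a predicate keeps the loop independent of how attributes are stored; a graph-database query could replace the in-memory scan without changing the two-flag structure.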

4.2. QoS Weighted-Based Simulation Model Selection Method in Cloud Environment

- Model performance (QM) is determined by the model’s computational load: the more computation a simulation model requires, the lower its performance score.

- Communication capability (QC) reflects the quality of the link between the user’s terminal node and the cloud server.

- Availability (QA) is the probability that the simulation model can be called and used. It is defined by the mean time between failures (MTBF) and the mean time to repair (MTTR).

- Reliability (QR) is the execution success rate of the service, that is, the probability of obtaining the correct response to the user’s request within the maximum expected time.

- Security (QS) measures the data management capability of a model service and depends mainly on users’ historical experience. After using a service, end users score it in the range [0, 10] with regard to the confidentiality, integrity, authenticity, etc., of its data. The value of QS is the average of these scores, and it becomes more reliable as evaluations accumulate.
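Three of the indices above can be computed directly from operational records. The sketch below is illustrative only: the function names and the input validation are hypothetical, and the availability formula MTBF / (MTBF + MTTR) is the conventional steady-state definition, assumed here because the text says only that QA is defined by these two quantities.

```python
from statistics import mean
from typing import Sequence


def availability(mtbf: float, mttr: float) -> float:
    """QA as steady-state availability, MTBF / (MTBF + MTTR) (assumed form)."""
    if mtbf < 0 or mttr < 0 or mtbf + mttr == 0:
        raise ValueError("MTBF and MTTR must be non-negative and not both zero")
    return mtbf / (mtbf + mttr)


def reliability(correct_in_time: int, total_calls: int) -> float:
    """QR as the share of calls answered correctly within the expected time."""
    if total_calls <= 0 or not 0 <= correct_in_time <= total_calls:
        raise ValueError("need 0 <= correct_in_time <= total_calls, total_calls > 0")
    return correct_in_time / total_calls


def security(scores: Sequence[float]) -> float:
    """QS as the average of user scores, each in the range [0, 10]."""
    if not scores or any(s < 0 or s > 10 for s in scores):
        raise ValueError("scores must be non-empty and lie in [0, 10]")
    return mean(scores)


# Illustrative numbers only, not taken from the case study.
qa = availability(mtbf=980.0, mttr=20.0)
qr = reliability(correct_in_time=90, total_calls=100)
qs = security([9, 10, 8])
print(qa, qr, qs)
```

QA and QR already lie in [0, 1], while QS lies in [0, 10], so the indices need to be brought to a common scale before they are combined.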

| Algorithm 2 QoS Weighted-Based_Selection |

| Input: W, the simulation model QoS index weight vector; the simulation model initial set (from Algorithm 1); |

| Output: Φ, the ordered model candidate set; |

| 1: if then |

| 2: for each model do // Loop over the model initial set |

| 3: for i = 1 to 5 do // Loop over the 5 QoS indices |

| 4: // Calculate the standard value of the QoS index |

| 5: // Calculate the target QoS value of the simulation model |

| 6: push_into_list(<model, Q>, Φ) // Insert the pair <model, Q> into the set Φ |

| 7: end for |

| 8: end for |

| 9: rank_list_by () // Sort the elements in Φ by Q |

| 10: end if |

| 11: return Φ |
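Algorithm 2 can be sketched in Python under two assumptions that the listing does not spell out: the standard value of an index is obtained by linear scaling of the raw value onto [0, 1], and the target value Q is the weighted sum of the five standard values. The function name, the `RANGES` constant, and the dictionary layout are hypothetical. The weight vector (0.7, 0, 0.1, 0.1, 0.1) is not stated in this text; it was chosen because it reproduces the Q column of the first ATC candidate table in the case study. The sketch appends each pair once per model, after all five indices have been accumulated.

```python
from typing import Dict, List, Sequence, Tuple

# Value ranges of the five QoS indices, taken from the case-study table headers.
RANGES: List[Tuple[float, float]] = [(0, 100), (0, 100), (0, 1), (0, 1), (0, 10)]


def qos_weighted_selection(
    weights: Sequence[float],
    initial_set: Dict[str, Sequence[float]],
) -> List[Tuple[str, float]]:
    """Return (model, Q) pairs sorted by Q in descending order."""
    candidates: List[Tuple[str, float]] = []
    if not initial_set:  # step 1: nothing to rank
        return candidates
    for model, raw in initial_set.items():  # step 2
        if len(raw) != len(RANGES) or len(weights) != len(RANGES):
            raise ValueError("expected five QoS values and five weights")
        q_total = 0.0
        for w, value, (low, high) in zip(weights, raw, RANGES):  # step 3
            standard = (value - low) / (high - low)  # step 4 (assumed scaling)
            q_total += w * standard                  # step 5 (assumed weighted sum)
        candidates.append((model, q_total))          # step 6
    candidates.sort(key=lambda pair: pair[1], reverse=True)  # step 9
    return candidates


# Raw QoS values of the five ATC candidates from the case-study tables.
atc_models = {
    "ATC-A": (85, 83, 0.98, 0.90, 9),
    "ATC-B": (65, 55, 0.96, 0.92, 9),
    "ATC-C": (92, 52, 0.95, 0.92, 10),
    "ATC-D": (71, 70, 0.97, 0.93, 10),
    "ATC-E": (74, 65, 0.95, 0.91, 9),
}
assumed_weights = (0.7, 0.0, 0.1, 0.1, 0.1)  # inferred, not given in the text
ranking = qos_weighted_selection(assumed_weights, atc_models)
for name, q in ranking:
    print(f"{name}: {q:.3f}")
```

Changing only the weight vector changes the ordering of the candidate set, which is how a user expresses a preference for, say, communication capability over raw model performance.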

5. Case Study: Airport Operation Control System Simulation

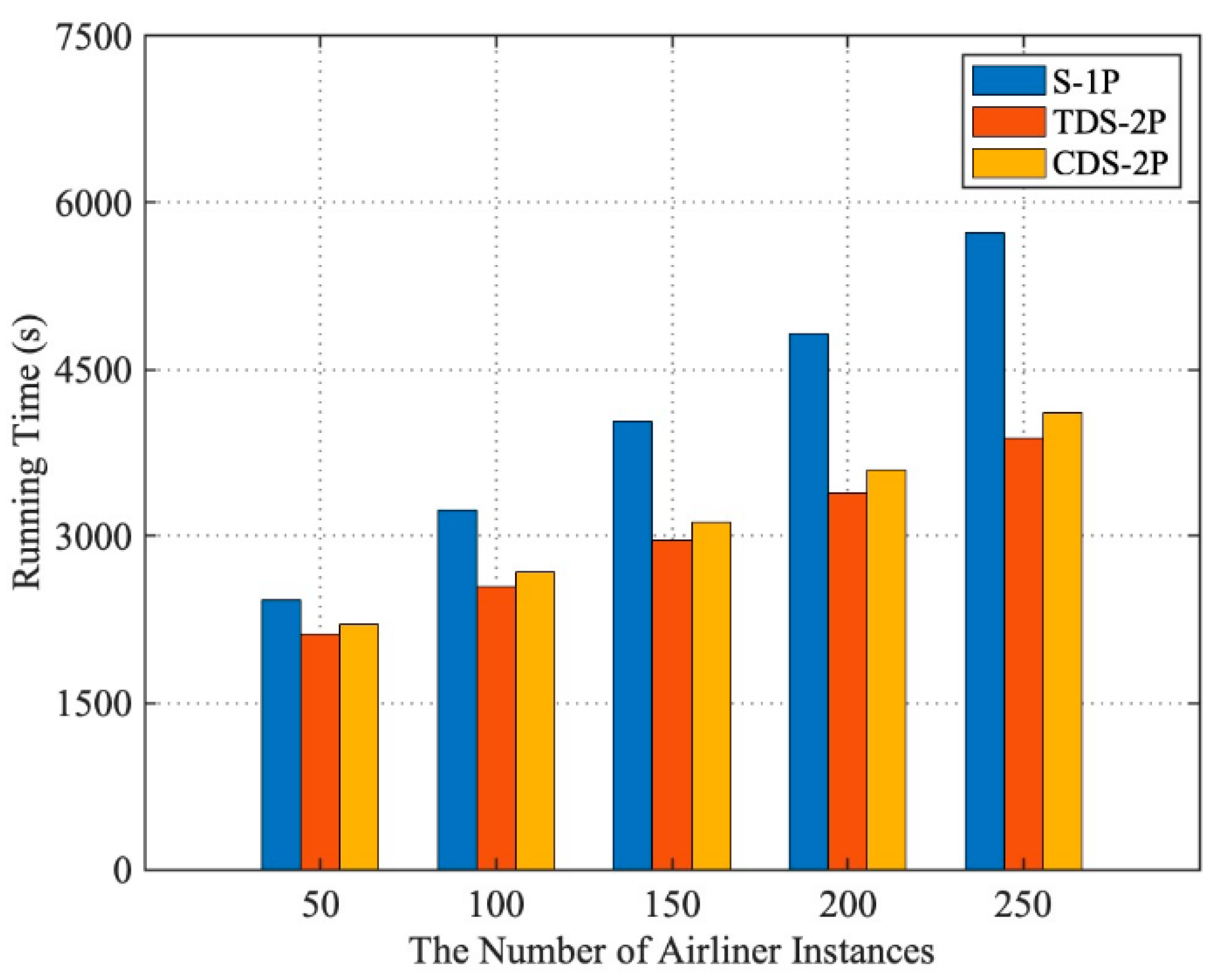

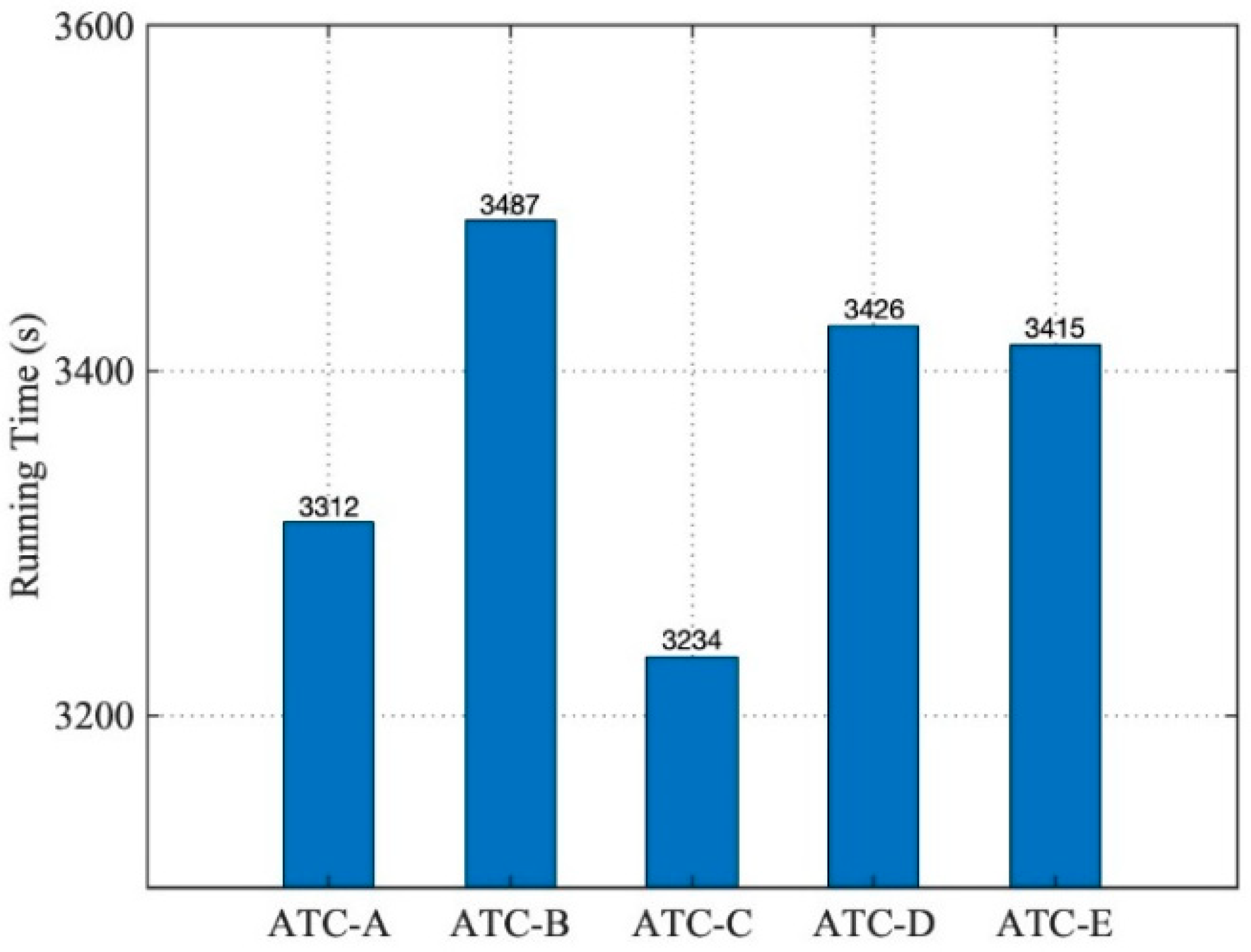

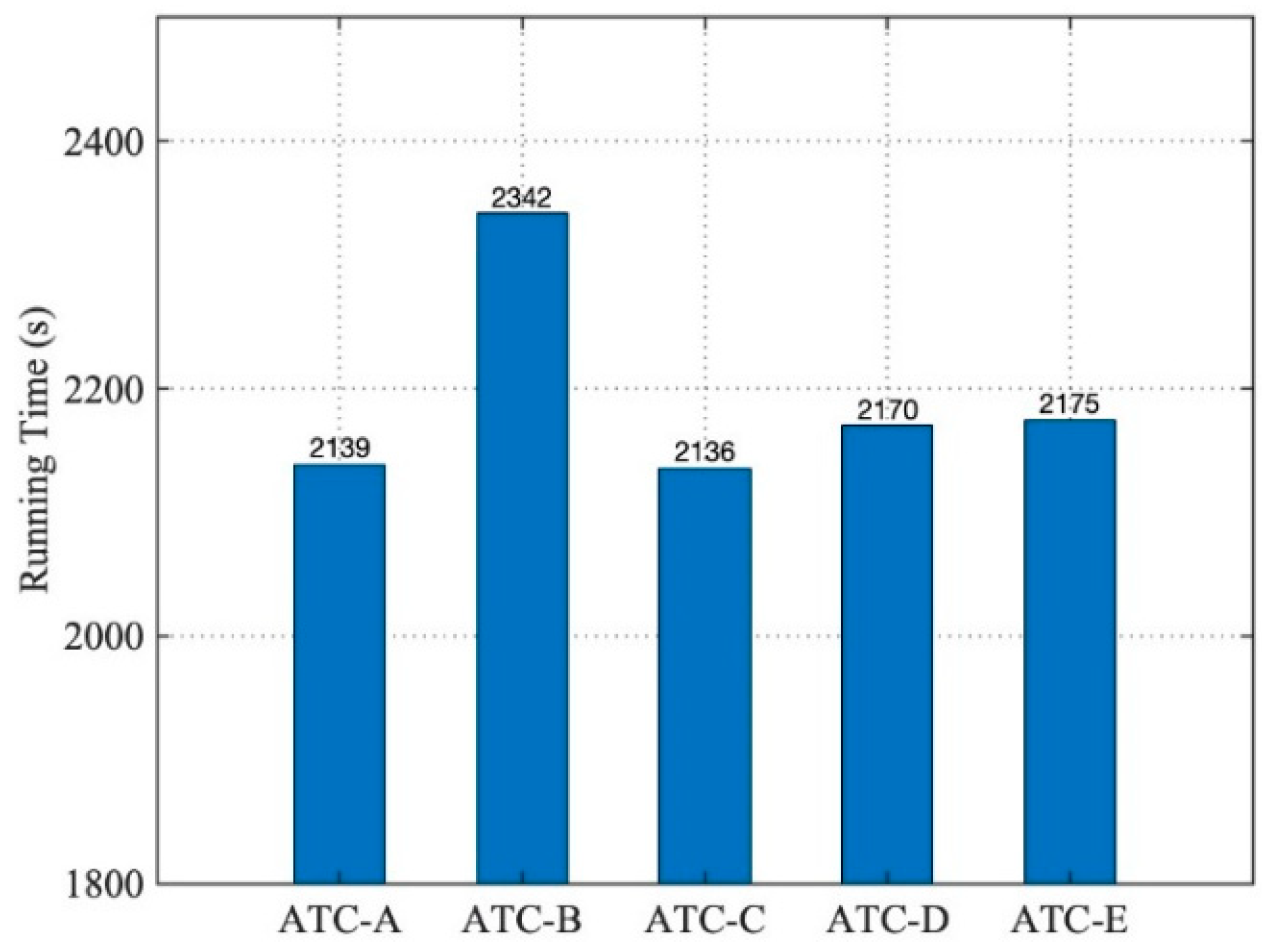

5.1. Performance Evaluation

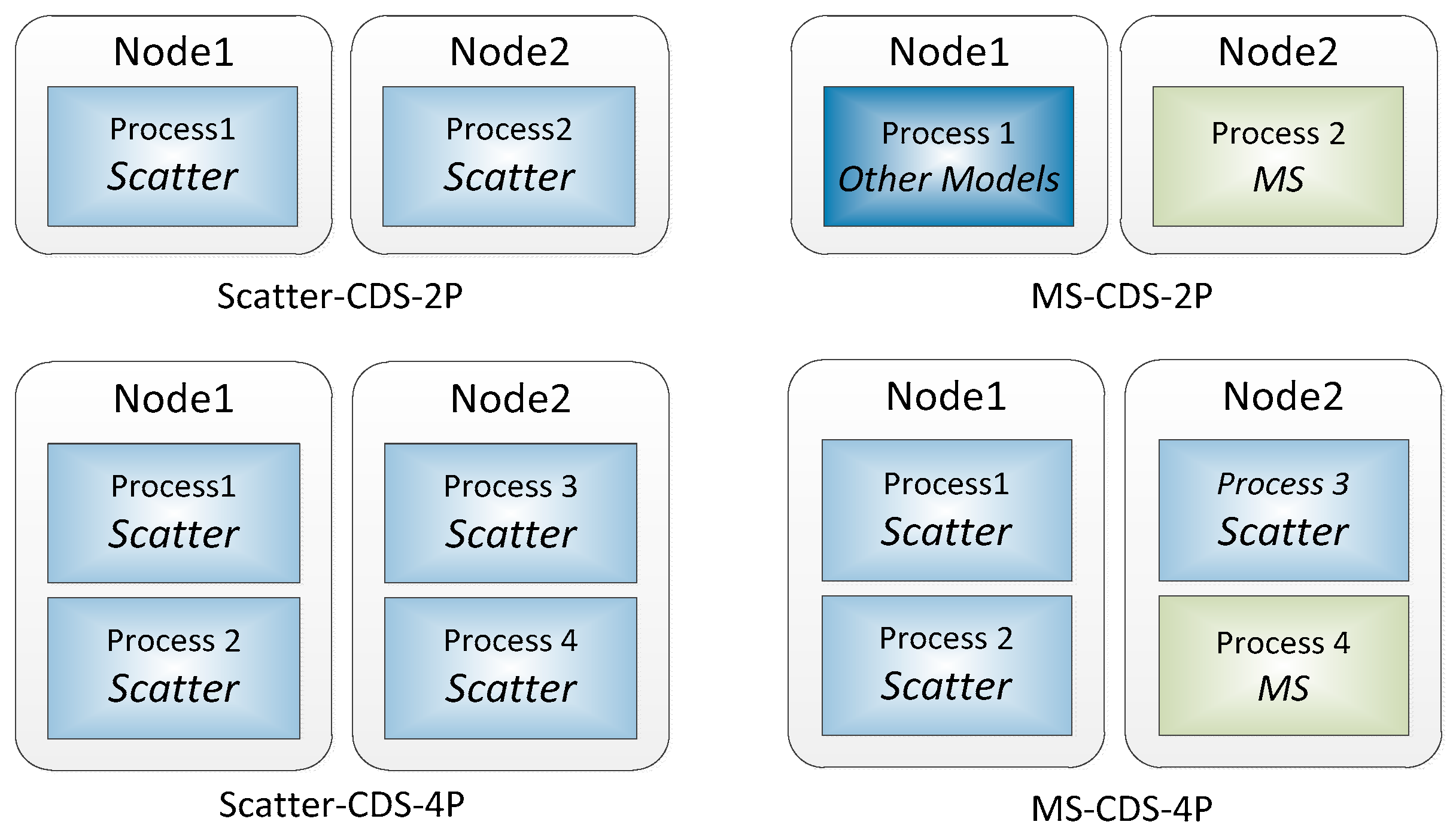

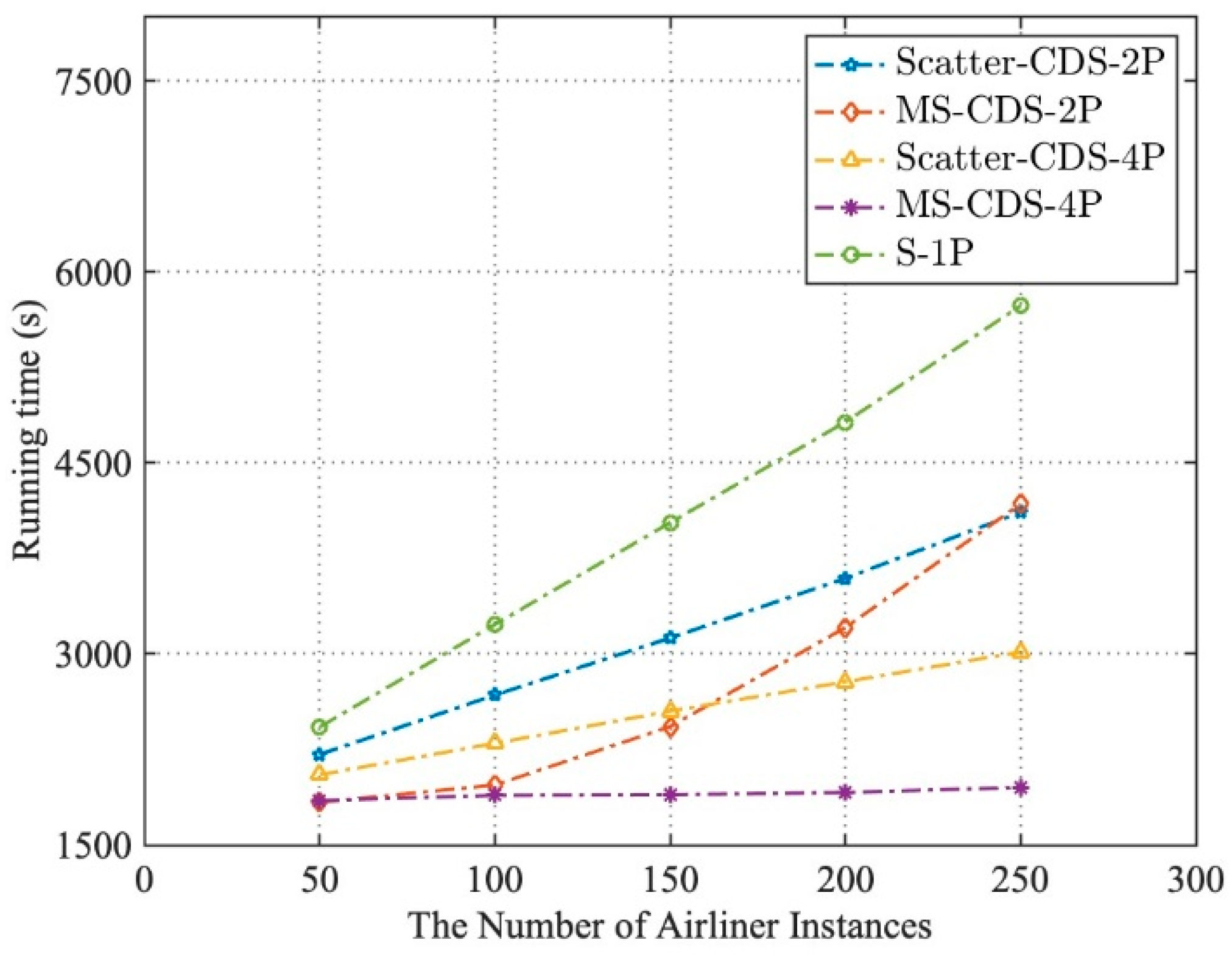

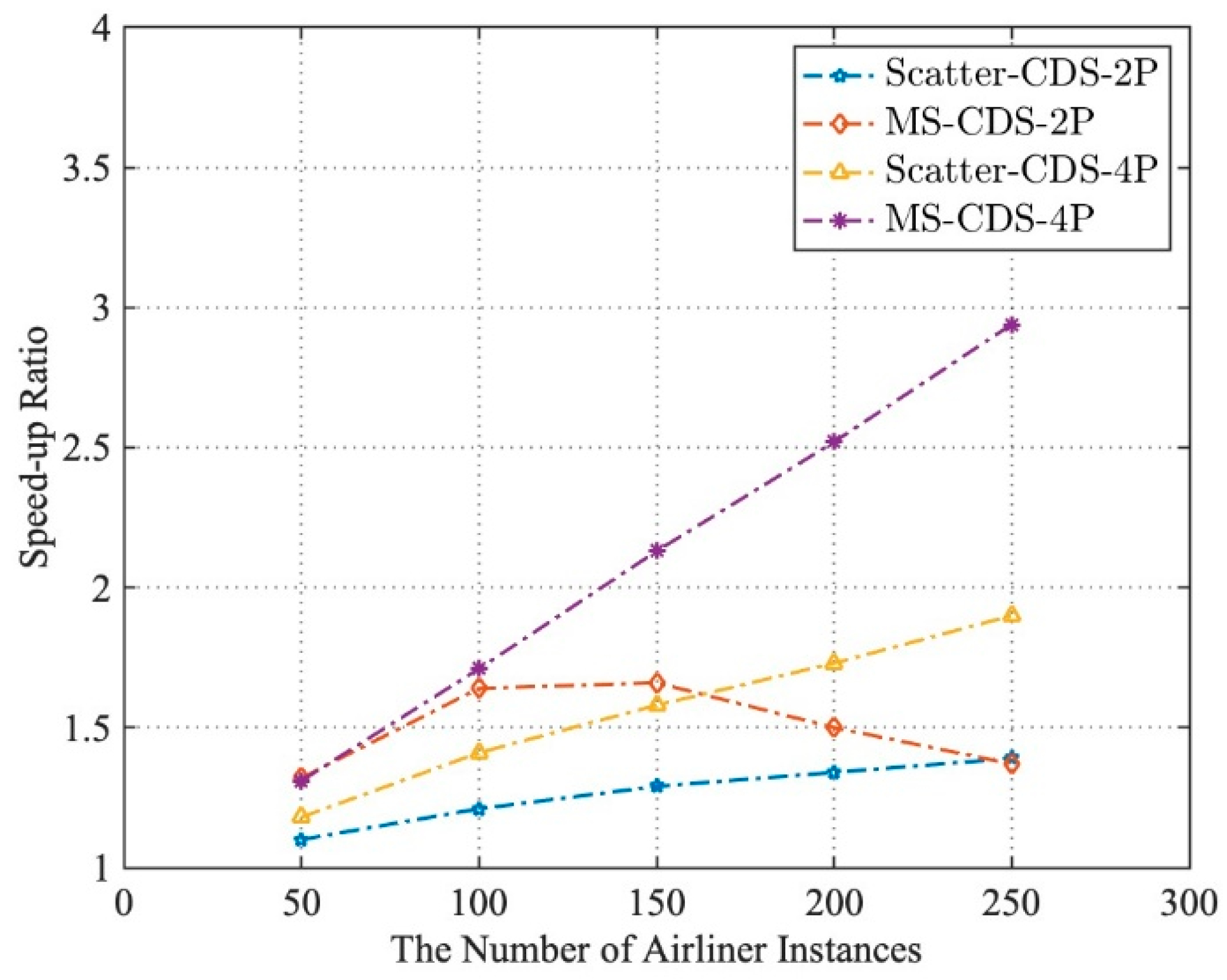

5.1.1. Cloud-Architecture-Based Distributed Simulation (CDS)

5.1.2. Model Servitization

5.1.3. Simulation Model Selection Method Based on Semantic Search

5.2. Discussion

6. Summary and Future Work

Author Contributions

Funding

Conflicts of Interest

| Experimental Parameters | Description/Value |
|---|---|
| Number of airliners | (50, 250) |
| Number of airport runways | 5 |
| Number of air traffic control centers | 1 |
| Scheduling policy | Punctuality prioritized |
| Simulation run time | 1008 |
| Model distribution mode | Scatter, model servitization |
| Degree of parallelism | 1, 2, 4 |

| Model | QoS1 [0,100] | QoS2 [0,100] | QoS3 [0,1] | QoS4 [0,1] | QoS5 [0,10] | Q |
|---|---|---|---|---|---|---|
| ATC-A | 85 (0.85) | 83 (0.83) | 0.98 | 0.9 | 9 (0.9) | 0.873 |
| ATC-B | 65 (0.65) | 55 (0.55) | 0.96 | 0.92 | 9 (0.9) | 0.733 |
| ATC-C | 92 (0.92) | 52 (0.52) | 0.95 | 0.92 | 10 (1) | 0.931 |
| ATC-D | 71 (0.71) | 70 (0.7) | 0.97 | 0.93 | 10 (1) | 0.787 |
| ATC-E | 74 (0.74) | 65 (0.65) | 0.95 | 0.91 | 9 (0.9) | 0.794 |

Ordered candidate set: {ATC-C, ATC-A, ATC-E, ATC-D, ATC-B}

| Model | QoS1 [0,100] | QoS2 [0,100] | QoS3 [0,1] | QoS4 [0,1] | QoS5 [0,10] | Q |
|---|---|---|---|---|---|---|
| ATC-A | 85 (0.85) | 83 (0.83) | 0.98 | 0.9 | 9 (0.9) | 0.866 |
| ATC-B | 65 (0.65) | 55 (0.55) | 0.96 | 0.92 | 9 (0.9) | 0.698 |
| ATC-C | 92 (0.92) | 52 (0.52) | 0.95 | 0.92 | 10 (1) | 0.847 |
| ATC-D | 71 (0.71) | 70 (0.7) | 0.97 | 0.93 | 10 (1) | 0.7835 |
| ATC-E | 74 (0.74) | 65 (0.65) | 0.95 | 0.91 | 9 (0.9) | 0.752 |

Ordered candidate set: {ATC-A, ATC-C, ATC-D, ATC-E, ATC-B}

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Xiong, S.; Zhu, F.; Yao, Y.; Tang, W.; Xiao, Y. Service-Oriented Model Encapsulation and Selection Method for Complex System Simulation Based on Cloud Architecture. Entropy 2019, 21, 891. https://doi.org/10.3390/e21090891
