1. Introduction

Classification is one of the most important tasks in machine learning. The basic problem of supervised classification is the induction of a model with feature set $\mathbf{X} = \{X_1, \ldots, X_n\}$ that classifies a testing instance (example) $\mathbf{x} = (x_1, \ldots, x_n)$ into one of several class labels $c$ of the class variable $C$. Bayesian network classifiers (BNCs) have many desirable properties compared with other classification models, such as model interpretability, ease of implementation, the ability to deal with multi-class classification problems, and comparable classification performance [1]. A BNC $B$ assigns to $\mathbf{x}$ the most probable label, i.e., the one with the maximum posterior probability, by calculating the posterior probability for each class label:

$$c^{*} = \arg\max_{c} P(c \mid \mathbf{x}, B),$$

where class label $c$ ranges over the values of $C$.

Although unrestricted BNCs are the least biased, the search space needed to train such a model grows exponentially with the number of features [2]. The resulting complexity limits the study of unrestricted BNCs and has led to the study of restricted BNCs, from 0-dependence naive Bayes (NB) [3,4,5] and 1-dependence tree-augmented naive Bayes (TAN) [6] to the $k$-dependence Bayesian classifier (KDB) [7]. These classifiers take the class variable as the common parent of all predictive features and use different learning strategies to explore the conditional dependence among features. KDB has several desirable characteristics in structure learning. For example, it achieves satisfactory classification accuracy when dealing with large quantities of data [2]. In addition, KDB uses a single parameter, $k$, to set the maximum number of parents for any feature and thus controls the structure complexity. KDB first determines the feature order by comparing MI. Suppose that the order is $\{X_1, \ldots, X_n\}$; then $X_i$ can select at most $k$, or more precisely $\min(i-1, k)$, features as parents from its candidates $\{X_1, \ldots, X_{i-1}\}$. These parents correspond to the $\min(i-1, k)$ largest CMI values.

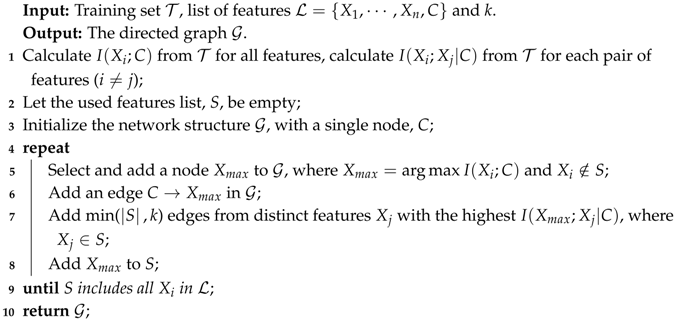

Figure 1 shows two examples, i.e., K$_1$DB (KDB with $k = 1$) and K$_2$DB (KDB with $k = 2$). Suppose that

, then the feature order is

. If

,

in K

DB chooses

as its only parent and

in K

DB chooses

as its parents from candidates

.

There are two basic kinds of dependencies in restricted BNCs: (1) direct dependence between feature $X_i$ and $C$, which can be quantified by mutual information (MI) $I(X_i; C)$, and (2) conditional dependence between $X_i$ and $X_j$ given $C$, which can be measured by conditional mutual information (CMI) $I(X_i; X_j \mid C)$. Many researchers have exploited methods such as filter and wrapper [8,9,10,11,12,13] to select direct dependencies by removing redundant features. The filter approach operates independently of any learning algorithm: it ranks the features by some criterion and omits all features that do not achieve a sufficient score [14,15,16]. The wrapper approach evaluates each candidate feature subset with the learning algorithm itself and may produce better results. For example, Backwards Sequential Elimination (BSE) [17] uses a simple heuristic wrapper approach that seeks a subset of the available features that minimizes 0–1 loss on the training set. Forward Sequential Selection (FSS) [18] uses the reverse search direction to BSE. Although the filter and wrapper approaches have proved beneficial in domains with highly correlated features, the learning procedure ends only when there is no further accuracy improvement; thus, they are expensive to run and can break down with very large numbers of features [8,19,20]. Suppose that we need to select

m from

n features for classification, BSE or FSS will construct

or

candidate BNCs to judge whether there exist non-significant features or direct dependencies. It is even more difficult for BSE or FSS to select the conditional dependencies. For example, the network topology of KDB consists of $\sum_{i=1}^{n} \min(i-1, k) = nk - \frac{k(k+1)}{2}$ conditional dependencies (for $n \geq k$) [21]. If BSE or FSS evaluates them one by one to identify the relatively non-significant ones, the computational overhead becomes prohibitive, and few approaches have been proposed to address this issue.
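To get a feel for how many conditional dependencies a wrapper would have to examine one by one, the short sketch below counts the feature-to-feature arcs in a KDB structure. It assumes the standard KDB rule that the $i$-th feature in the order receives $\min(i-1, k)$ parents; the function names are illustrative and do not come from the paper.

```python
def kdb_dependency_count(n_features: int, k: int) -> int:
    """Count feature-to-feature arcs when the i-th ordered feature
    (1-based) takes min(i - 1, k) parents."""
    return sum(min(i - 1, k) for i in range(1, n_features + 1))


def kdb_dependency_count_closed_form(n_features: int, k: int) -> int:
    """Closed form n*k - k*(k+1)/2, valid when n_features >= k."""
    return n_features * k - k * (k + 1) // 2
```

For $k = 2$, a dataset with 15 features yields 27 arcs and one with 42 features yields 81. The count itself grows only linearly in $n$, but the number of subsets of arcs that a one-by-one search could visit grows far faster.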

How to efficiently identify non-significant direct dependencies and how to efficiently identify non-significant conditional dependencies are two key issues in learning a BNC. Strictly speaking, exact direct or conditional independence rarely arises in practice, because MI and CMI values are non-negative and, when estimated from data, are seldom exactly zero. However, weak dependencies, if introduced into the network topology, will result in overfitting and classification bias. For KDB, every feature is made conditionally dependent on up to $k$ parent features, even if the conditional dependencies are very weak. Discarding these redundant features or weak conditional dependencies can help increase structure reliability and avoid overfitting.

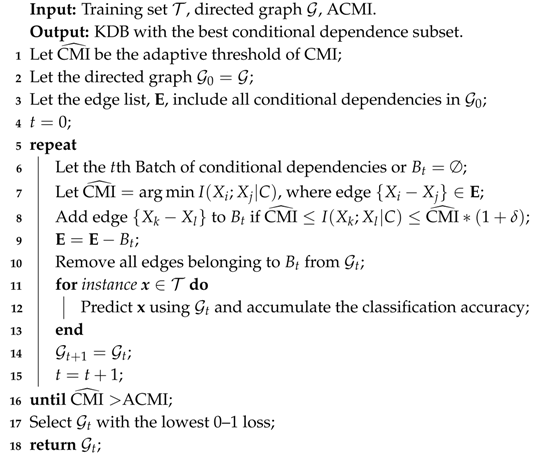

Figure 2 presents the distributions of MI and CMI values for K$_2$DB (KDB with $k = 2$) on dataset Connect-4, which has 67,557 instances (or examples), 42 features and three classes. As shown in Figure 2a, there are only minor differences among some MI values; thus, the significance of the corresponding direct dependencies is almost the same and they can be treated as a batch. As Figure 2b shows, the same applies to CMI and the corresponding conditional dependencies.

The filter approaches are computationally efficient, while the wrapper approaches may produce better results. The proposed algorithm combines the characteristics of the filter and wrapper approaches to exploit their complementary strengths. In this paper, we propose to group the direct (or conditional) dependencies into different batches using adaptive thresholds. We assume that there exists no significant difference between the MI (or CMI) values in the same batch. Then, the basic idea of filter and wrapper is applied to select a whole batch, rather than a single dependence, in each iteration. This learning strategy can achieve much higher efficiency than BSE (or FSS) while retaining competitive classification performance and, above all, it provides a feasible solution for selecting conditional dependencies, for which the number of candidate subsets grows exponentially with the number of features.

We investigate two extensions to KDB, MI-based feature selection and CMI-based dependence selection, both based on a novel adaptive thresholding method. The final BNC, Adaptive KDB (AKDB), evaluates the subsets of features and conditional dependencies using leave-one-out cross validation (LOOCV). In the remaining sections, we show that applying feature selection and dependence selection techniques to KDB can alleviate the potential redundancy problem. We present extensive experimental results, which indicate that AKDB significantly outperforms several other state-of-the-art BNCs in terms of 0–1 loss, root mean squared error (RMSE), bias and variance.

2. Restricted Bayesian Network Classifiers

For convenience, all acronyms used in this work, except for the algorithm names, are listed in Table 1. The structure of a BNC can be described as a directed acyclic graph [22]. Nodes in the structure represent the class variable $C$ or features, and an edge $X_i \rightarrow X_j$ denotes a probabilistic dependency between these two features, where $X_i$ is one of the immediate parent nodes of $X_j$. In a restricted BNC $B$, the class variable $C$ is required to be the common parent of all features and does not have any parents, so the individual probability of $C$ is $P(c)$. We use $P(x_i \mid \pi_i)$ to denote the individual probability of feature $X_i$, where $\pi_i$ denotes the set of values of $X_i$'s parents. The joint probability distribution can be calculated as the product of $P(x_i \mid \pi_i)$ over all features and $P(c)$, that is:

$$P(c, \mathbf{x}) = P(c) \prod_{i=1}^{n} P(x_i \mid \pi_i).$$

Unfortunately, inference in an unrestricted BNC has been proved to be an NP-hard problem [23,24], and learning a restricted or pre-fixed BNC is one approach to deal with the intractable complexity. For example, NB [25,26] is the simplest classifier among restricted BNCs; it assumes that each feature is conditionally independent of the others given the class variable $C$.

Since real-world datasets usually do not satisfy this independence assumption, classification performance may deteriorate. KDB relaxes the independence assumption of NB by constructing classifiers that allow each feature $X_i$ within the BNC to have at most $k$ parent features. KDB first sets the feature order by comparing MI values, and then uses CMI values as weights to measure the conditional relationship between features given $C$ and to select at most $k$ parent features for each feature. MI and CMI are defined as follows:

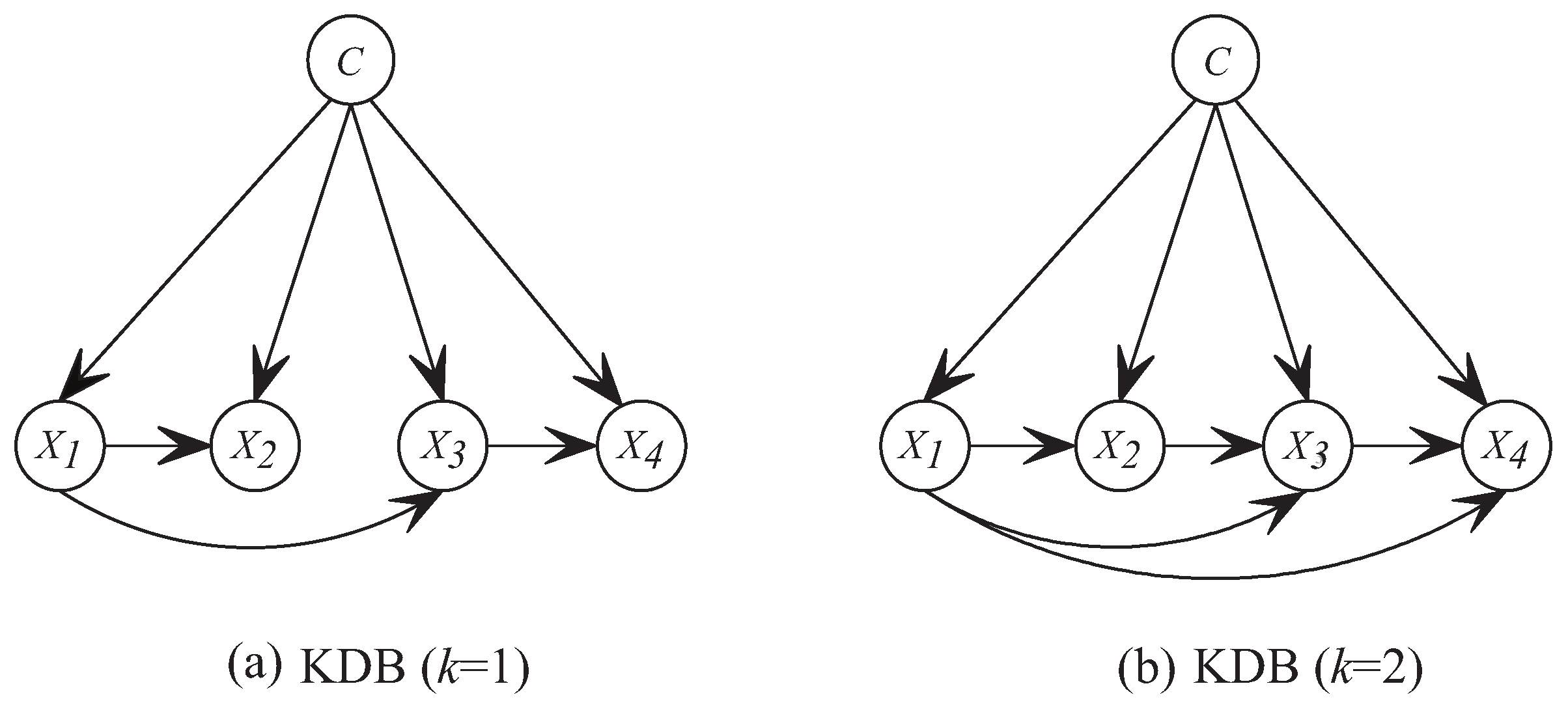

For KDB, $I(X_i; C)$ measures the direct dependence between $X_i$ and $C$, and $I(X_i; X_j \mid C)$ measures the conditional dependence between $X_i$ and $X_j$ given $C$. For a given training set with $n$ features and the parameter $k$, KDB first calculates MI and CMI. Suppose that the feature order obtained by comparing MI is $\{X_1, \ldots, X_n\}$; then $X_i$ chooses the $\min(i-1, k)$ features with the highest CMI values from the first $i-1$ candidates. The structure learning procedure of KDB is depicted in Algorithm 1.
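As a rough illustration of the two quantities, the sketch below estimates MI and CMI for discrete features from plain empirical frequencies. It assumes the standard definitions $I(X;C) = \sum P(x,c)\log\frac{P(x,c)}{P(x)P(c)}$ and $I(X;Y \mid C) = \sum P(x,y,c)\log\frac{P(x,y \mid c)}{P(x \mid c)P(y \mid c)}$, uses base-2 logarithms, and applies no smoothing; these choices and the function names are assumptions made for this example.

```python
from collections import Counter
from math import log2


def mutual_information(xs, cs):
    """Empirical I(X; C) in bits for two equal-length discrete sequences."""
    n = len(xs)
    n_xc = Counter(zip(xs, cs))
    n_x = Counter(xs)
    n_c = Counter(cs)
    # P(x,c) * log[P(x,c) / (P(x) P(c))], written in terms of raw counts.
    return sum((cnt / n) * log2(cnt * n / (n_x[x] * n_c[c]))
               for (x, c), cnt in n_xc.items())


def conditional_mutual_information(xs, ys, cs):
    """Empirical I(X; Y | C) in bits for three equal-length sequences."""
    n = len(xs)
    n_xyc = Counter(zip(xs, ys, cs))
    n_xc = Counter(zip(xs, cs))
    n_yc = Counter(zip(ys, cs))
    n_c = Counter(cs)
    # P(x,y,c) * log[P(x,y|c) / (P(x|c) P(y|c))], in terms of raw counts.
    return sum((cnt / n) * log2(cnt * n_c[c] / (n_xc[(x, c)] * n_yc[(y, c)]))
               for (x, y, c), cnt in n_xyc.items())
```

Both estimates are non-negative by construction, and on finite samples they are rarely exactly zero even for independent variables, which is why a threshold is needed in practice.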

There have been some refinements that may improve KDB's performance. Rodríguez and Lozano [27] proposed to extend KDB to a multi-dimensional classifier, which learns a population of classifiers (nondominated solutions) by a multi-objective optimization technique; the objective functions for the multi-objective approach are the multi-dimensional $k$-fold cross-validation estimations of the errors. Louzada [28] proposed to generate multiple KDB networks via a naive bagging procedure, obtaining the predicted values from the adjusted models and then combining them into a single predictive classification.

| Algorithm 1: Structure learning procedure of KDB: LearnStructure(, , k) |

![Entropy 21 00665 i001 Entropy 21 00665 i001]() |
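The structure learning step described in this section can be sketched as follows. This is a minimal illustration under stated assumptions, not a transcription of Algorithm 1: MI and CMI values are taken as precomputed inputs, the class variable is treated as an implicit parent of every feature, and the names `learn_kdb_structure`, `mi` and `cmi` are hypothetical.

```python
def learn_kdb_structure(mi, cmi, k):
    """Return (feature order, parent lists) for a KDB-style structure.

    mi  : dict mapping feature name -> I(X; C)
    cmi : dict mapping frozenset({a, b}) -> I(a; b | C)
    k   : maximum number of feature parents per feature
    The class variable is an implicit parent of every feature.
    """
    # Rank features by decreasing mutual information with the class.
    order = sorted(mi, key=mi.get, reverse=True)
    parents = {}
    for position, feature in enumerate(order):
        # Only features earlier in the order are candidate parents.
        candidates = order[:position]
        ranked = sorted(candidates,
                        key=lambda p: cmi[frozenset((feature, p))],
                        reverse=True)
        # Keep the min(position, k) candidates with the highest CMI.
        parents[feature] = ranked[:k]
    return order, parents
```

With three features ranked `a`, `b`, `c` and $k = 1$, feature `a` gets no feature parent, `b` gets `a`, and `c` gets whichever of `a` and `b` has the larger CMI with it.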

3. Adaptive KDB

MI and CMI are non-negative in Equation (3). $I(X_i; C) = 0$ (or $I(X_i; X_j \mid C) = 0$) if $X_i$ and $C$ are independent (or $X_i$ and $X_j$ are conditionally independent given $C$). If $X_i$ and $C$ are regarded as independent, the edge connecting them will be removed. In practice, the estimated MI is compared to a small threshold in order to distinguish pairs of dependent features from pairs of independent features [29,30,31,32]. In the following discussion, we mainly discuss how to choose the threshold of MI. The test for conditional independence using CMI is similar.

To refine the network structure, AKDB uses an adaptive threshold to filter out non-significant dependencies. If the threshold is high, too many dependencies will be identified as non-significant and removed, and the resulting sparse network may underfit the training data. In contrast, if the threshold is low, few dependencies will be identified as non-significant and the resulting dense network may overfit the training data. The thresholds thus control AKDB's bias-variance trade-off. If appropriate thresholds were known in advance, the lowest error could be achieved, but the choice involves a complex interplay between structure complexity and classification performance. Unfortunately, the appropriate thresholds may differ across training datasets, and there are no formal methods to preselect them.

To guarantee satisfactory performance while avoiding exhaustive experimentation, consider the feature order selected by KDB based on MI comparison. If feature $X_i$ is assumed to be independent of $C$ because its MI value falls below the threshold, it will be at the end of the order and the edge connecting $C$ and $X_i$ will be removed. Furthermore, $X_i$ may be dependent on other features, whereas no feature depends on it. That is, $X_i$ will be irrelevant to classification, directly or indirectly. The problem of choosing the threshold of MI thus turns into the problem of choosing a feature subset. Many feature selection algorithms are based on forward selection or backward elimination strategies [18,33]. They start with either an empty set of features or the full set of features, and then only one feature is added to or removed from the BNC in each iteration. Feature selection is a complex task, since the search space for $n$ features has size $2^n$. Thus, it is impractical to search the whole space exhaustively unless $n$ is small. Our proposed algorithm, AKDB, extends KDB to adaptively select a threshold of MI, and the threshold can help remove more than one feature at each step.

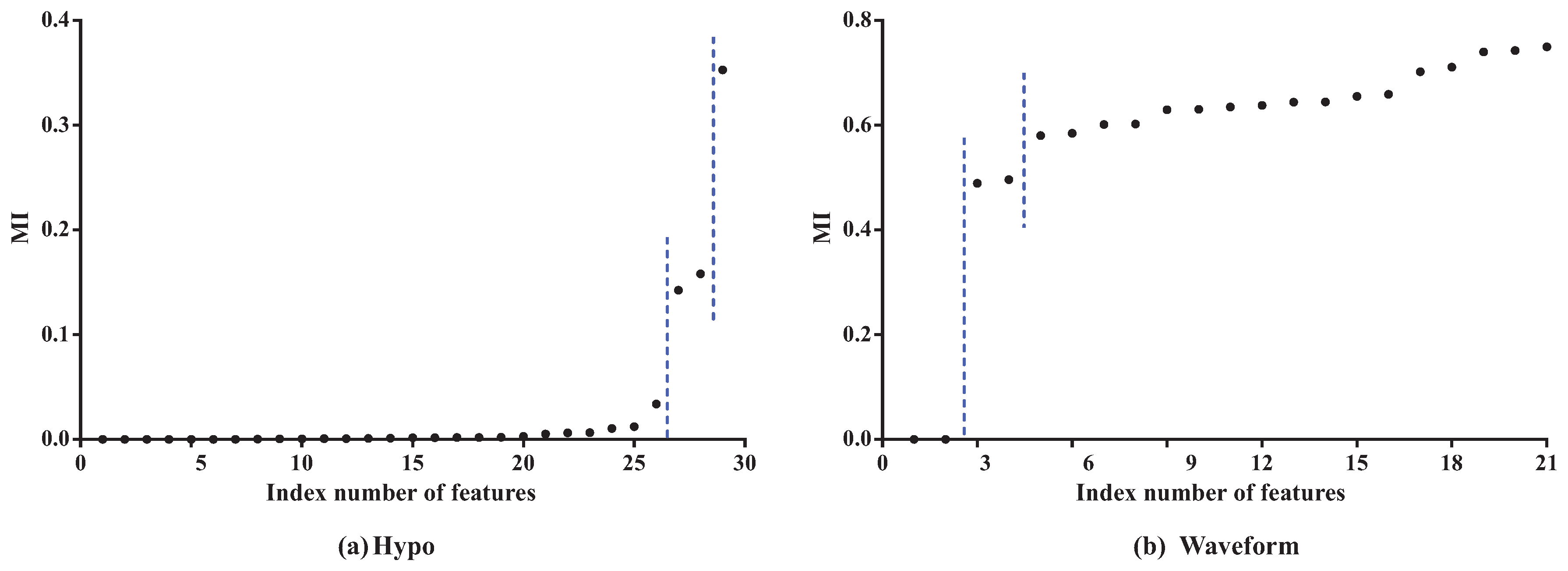

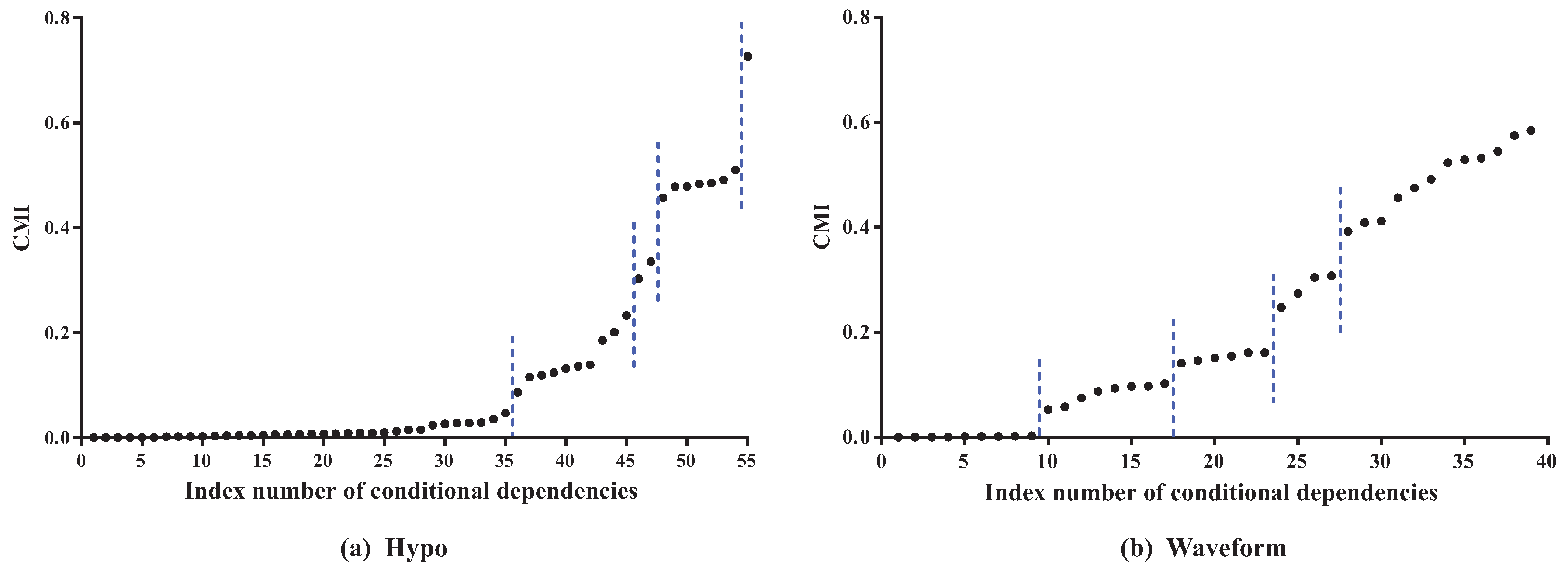

To clarify the basic idea, we take datasets Hypo and Waveform as a case study. Dataset Hypo has 3772 instances, 29 features and four classes. Dataset Waveform has 100,000 instances, 21 features and three classes. The corresponding MI values (see details in Table A1 and Table A2 in Appendix A) and CMI values (see details in Table A3 and Table A4 in Appendix A) for K$_2$DB are presented in Figure 3 and Figure 4, respectively.

As Figure 3 shows, the features can be divided into different parts according to the distribution of MI values. On dataset Hypo, the differences among the MI values of the first 26 features are not obvious, so these features can be grouped into one part. The 27th and 28th features can be grouped into another part, and the 29th feature forms the last part. On dataset Waveform, the features can also be divided into three parts. The distribution of CMI values is similar. As Figure 4 shows, the CMI values on datasets Hypo and Waveform are both divided into five groups. The differences among MI values within the same part should be non-significant and, if the MI values are small, the corresponding features can be identified as redundant for classification and removed from the BNC. The test for redundant conditional dependencies is similar. From Figure 3 and Figure 4, we can see that the thresholds for identifying redundancy differ greatly across datasets. Thus, the threshold that maximizes a performance measure should be adapted to each dataset.

and

are introduced as the adaptive thresholds of redundant features and redundant conditional dependencies, respectively. AMI and ACMI respectively denote the average MI and the average CMI, which are defined as follows, and are introduced in this paper as the benchmark thresholds to distinguish between strong and weak dependencies:

where

is the size of

,

and

denotes the cardinality of feature set

and feature subset

F. To guarantee satisfactory performance and overcome exhaustive experimentation, we require that AMI >

and ACMI >

hold.

AKDB applies the greedy-search strategy to iteratively identify redundant and near-redundant features. For feature selection, we take advantage of the feature order that is determined by comparing MI. For simplicity, we adaptively provide the threshold value of MI that cuts off an entire region at the end of the order. Let

be a user-specified parameter,

(see detail in

Section 4.1). We suppose that the difference between features

and

is non-significant if

. Correspondingly, we regard the feature

as near-redundant if

is redundant and the difference between features

and

is non-significant. Given the feature order, AKDB firstly selects the feature, e.g.,

, at the end of the order and identifies it as a redundant feature. Then, we identify the near-redundant features. Finally, LOOCV is introduced to evaluate the classification performance after removing redundant and near-redundant features as it can provide the out-of-sample error with an unbiased low-variance estimation. In addition, the 0–1 loss is used as a loss function since it is an effective measure to evaluate the quality of a classifier’s classification performance.

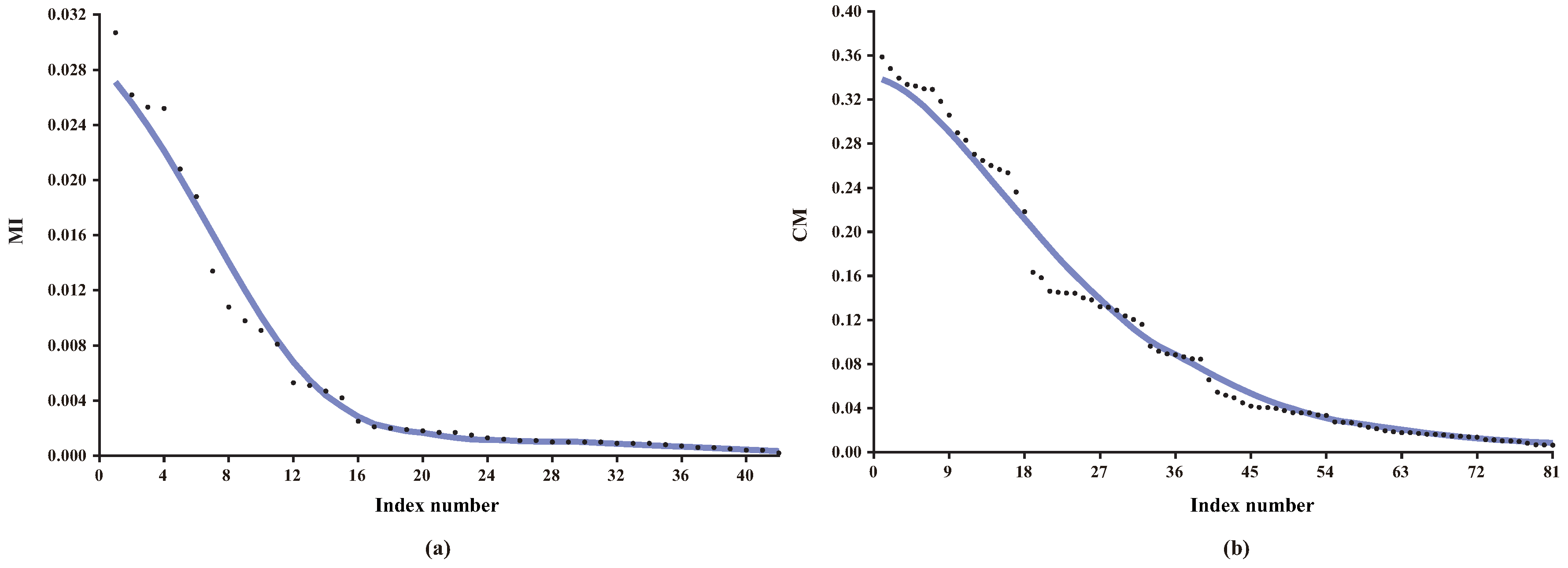

Finally, the feature subset with the lowest 0–1 loss is selected. In case of a draw, preference is given to the smallest number of features. If the MI values are distributed densely, all redundant and near-redundant features can be identified in only a few iterations. After that, the greedy-search strategy is applied to identify redundant and near-redundant conditional dependencies. In this paper, we propose to extend KDB with two information-threshold based techniques, feature selection (FS) and dependence selection (DS), to identify redundant features and redundant conditional dependencies, respectively. Both techniques are based on backward elimination that begins with the full set of features or conditional dependencies.

The learning procedure of FS is shown in Algorithm 2. By applying BSE, FS aims to seeks a subset of the available features that minimizes 0–1 loss on the training set. FS starts from the full set of features and corresponding MI values have been grouped into several batches. There should exist significant differences between the MI values in different batches. Suppose that, for successive batches and , for any and for any . In this paper, FS requires that, for batches and , the criterion holds, or for batch the criterion holds. BSE operates by iteratively removing successive batches. Then, the threshold of MI, or , will change from to if the removal can help reduce the 0–1 loss. The features in the batch or corresponding direct dependencies will be removed from the network structure and the classification performance will be evaluated iteratively using LOOCV. This procedure will terminate if there is no 0–1 loss improvement or > AMI.
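The batch-wise backward elimination described above might be sketched as follows. Several parts are assumptions made only for illustration: the grouping rule (neighbouring scores $a \geq b$ share a batch when $a - b \leq \delta a$) is a stand-in for the paper's criterion, `loss_fn` is a hypothetical callback standing for the LOOCV 0–1 loss of a model built on the kept items, and `ceiling` plays the role of AMI (or ACMI). The same routine applies to features scored by MI or to conditional dependencies scored by CMI.

```python
from typing import Callable, Dict, List, Tuple


def group_into_batches(scores: Dict[str, float], delta: float) -> List[List[str]]:
    """Group items, sorted by decreasing score, into batches of similar scores.

    ASSUMPTION: neighbouring scores a >= b fall in the same batch when
    a - b <= delta * a. This relative-gap rule is a stand-in, not the
    paper's criterion.
    """
    ordered = sorted(scores, key=scores.get, reverse=True)
    batches: List[List[str]] = []
    for name in ordered:
        if batches:
            previous = scores[batches[-1][-1]]
            if previous - scores[name] <= delta * previous:
                batches[-1].append(name)
                continue
        batches.append([name])
    return batches


def backward_batch_elimination(
    scores: Dict[str, float],
    delta: float,
    ceiling: float,
    loss_fn: Callable[[List[str]], float],
) -> Tuple[List[str], float]:
    """Drop the weakest batch repeatedly while the loss does not get worse.

    A batch containing a score above `ceiling` is never removed.
    """
    batches = group_into_batches(scores, delta)
    kept = [name for batch in batches for name in batch]
    best_loss = loss_fn(kept)
    while len(batches) > 1:
        weakest = batches[-1]
        if max(scores[name] for name in weakest) > ceiling:
            break
        candidate = [name for name in kept if name not in weakest]
        loss = loss_fn(candidate)
        if loss > best_loss:  # ties favour the smaller subset
            break
        best_loss, kept = loss, candidate
        batches.pop()
    return kept, best_loss
```

Because a whole batch is evaluated per iteration, the number of calls to `loss_fn` is bounded by the number of batches rather than by the number of features or dependencies, which is where the efficiency gain over one-at-a-time elimination comes from.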

When the learning procedure of FS terminates, DS is applied to identify non-significant conditional dependencies. Its learning procedure is similar, except that CMI values rather than MI values are grouped into several batches, and batches of CMI values are removed iteratively to improve 0–1 loss. The learning procedure of DS is shown in Algorithm 3.

The complete AKDB algorithm, which includes the FS and DS techniques, is described in Algorithm 4. Both FS and DS first apply the filter approach to rank features or conditional dependencies by the MI or CMI criterion, and then use the wrapper approach to evaluate each candidate feature subset or dependence subset in order to obtain a lower 0–1 loss.

| Algorithm 2: FeatureSelection(, BN, , AMI) |

![Entropy 21 00665 i002 Entropy 21 00665 i002]() |

| Algorithm 3: DependenceSelection(, , ACMI) |

![Entropy 21 00665 i003 Entropy 21 00665 i003]() |

| Algorithm 4: AKDB |

Input: Training set with features and k.

Output: AKDB model.

1 Calculate from for each feature and AMI;

2 Calculate from for every pair of features and ACMI;

3 Let be a list which includes all in decreasing order of ;

4 Initialize the network structure = LearnStructure(); // Algorithm 1

5 = FeatureSelection(, AMI); // Algorithm 2

6 = DependenceSelection( ACMI); // Algorithm 3

7 return ;

|

4. Experiments

We conduct the experiments on 30 benchmark datasets from the UCI (University of California, Irvine) machine learning repository [34]. The detailed characteristics of these datasets are described in Table 2, which includes the numbers of instances, features and classes. The datasets are divided into two categories: small datasets, with at most 3000 instances, and large datasets, with more than 3000 instances. Numeric features, if they exist in a dataset, are discretized based on Minimum Description Length (MDL) [35]. Missing values are considered as a distinct value and the

m-estimation with

[

36] is employed to smooth the probability estimates.
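For readers unfamiliar with $m$-estimation, the sketch below shows the usual form $(n_v + m\,p) / (n + m)$ for a single discrete variable. The uniform prior $p = 1/|\text{domain}|$ and the default `m=1.0` are assumptions made for this example; the value of $m$ used in the experiments is left as a parameter here.

```python
from collections import Counter


def m_estimate(values, domain, m=1.0):
    """Smoothed probability estimates for one discrete variable.

    values : observed values (any hashable type)
    domain : all values the variable can take
    m      : equivalent sample size of the prior (assumed default)
    A uniform prior 1/len(domain) is assumed.
    """
    counts = Counter(values)
    n = len(values)
    prior = 1.0 / len(domain)
    return {v: (counts[v] + m * prior) / (n + m) for v in domain}
```

Values that never occur in the sample still receive a small non-zero probability, which keeps products of conditional probabilities from collapsing to zero.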

The following algorithms are compared:

NB, standard Naive Bayes.

TAN, tree-augmented naive Bayes.

NB-FSS, selective Naive Bayes classifier with forward sequential selection.

K$_1$DB, standard $k$-dependence Bayesian classifier with $k = 1$.

K$_2$DB, standard $k$-dependence Bayesian classifier with $k = 2$.

AKDB, KDB with feature selection and conditional dependence selection based on adaptive thresholding.

The classification accuracy of the algorithms is compared in terms of 0–1 loss and RMSE, and the results are presented in Table A5 and Table A6, respectively. The bias and variance results are provided in Table A7 and Table A8, respectively, because the bias-variance decomposition can provide valuable insights into the components of the error of learned algorithms [37,38]. Note that only the 13 large datasets are selected for the bias-variance comparison, for reasons of statistical significance.

4.1. Selection of the Value of Parameter for AKDB

Removing redundant features or conditional dependencies from BNC may positively affect its classification performance if the threshold value

is selected appropriately. However, there is no prior work that can achieve this goal. We perform an empirical study to select an appropriate

. The 0–1 loss results for all datasets with different

values are presented in

Table 3. We can see that AKDB achieves the lowest 0–1 loss results more often when

. Although on some datasets AKDB with

may perform relatively poorer, the difference between the 0–1 loss when

and the lowest 0–1 loss is not significant (less than

). For example, on dataset

Splice-C4.5, AKDB achieves the lowest 0–1 loss (0.0468) when

, and when

the 0–1 loss is 0.0469. From the experimental results, we argue that

is appropriate to help identify the threshold efficiently.

4.2. Effects of Feature Selection and Conditional Dependence Selection on KDB

FS and DS are the two information-threshold based techniques used in the proposed algorithm AKDB. Using these techniques causes a portion of the features and conditional dependencies to be removed. To show that each technique is effective on its own, we present two versions of KDB as follows:

KDB-FS, KDB with only feature selection,

KDB-DS, KDB with only conditional dependence selection.

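In order to evaluate the difference between two classifiers, we define the relative ratio as follows:

$$R_M(A \mid B) = \left(1 - \frac{M_A}{M_B}\right) \times 100\%,$$

where the parameter $M$ stands for the measure being compared, and $M_A$ and $M_B$ are its values for classifiers $A$ and $B$. The value of $R_M(A \mid B)$ represents the difference in percentage between the two classifiers $A$ and $B$ with respect to $M$.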
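A small sketch of the relative ratio follows. It assumes the form $(1 - M_A / M_B) \times 100\%$, which agrees with the percentages quoted in this section (for example, keeping 6 of 29 features corresponds to 79.31%); the function name is illustrative.

```python
def relative_ratio(m_a: float, m_b: float) -> float:
    """Percentage by which measure M of classifier A falls below that of B.

    Assumed form: (1 - M_A / M_B) * 100. Positive values mean A has the
    smaller value (fewer features, lower loss, and so on).
    """
    if m_b == 0:
        raise ValueError("reference measure M_B must be non-zero")
    return (1.0 - m_a / m_b) * 100.0
```

For a loss measure, a positive ratio indicates an improvement of A over B, whereas for a count such as the number of features it indicates how much of the structure was removed.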

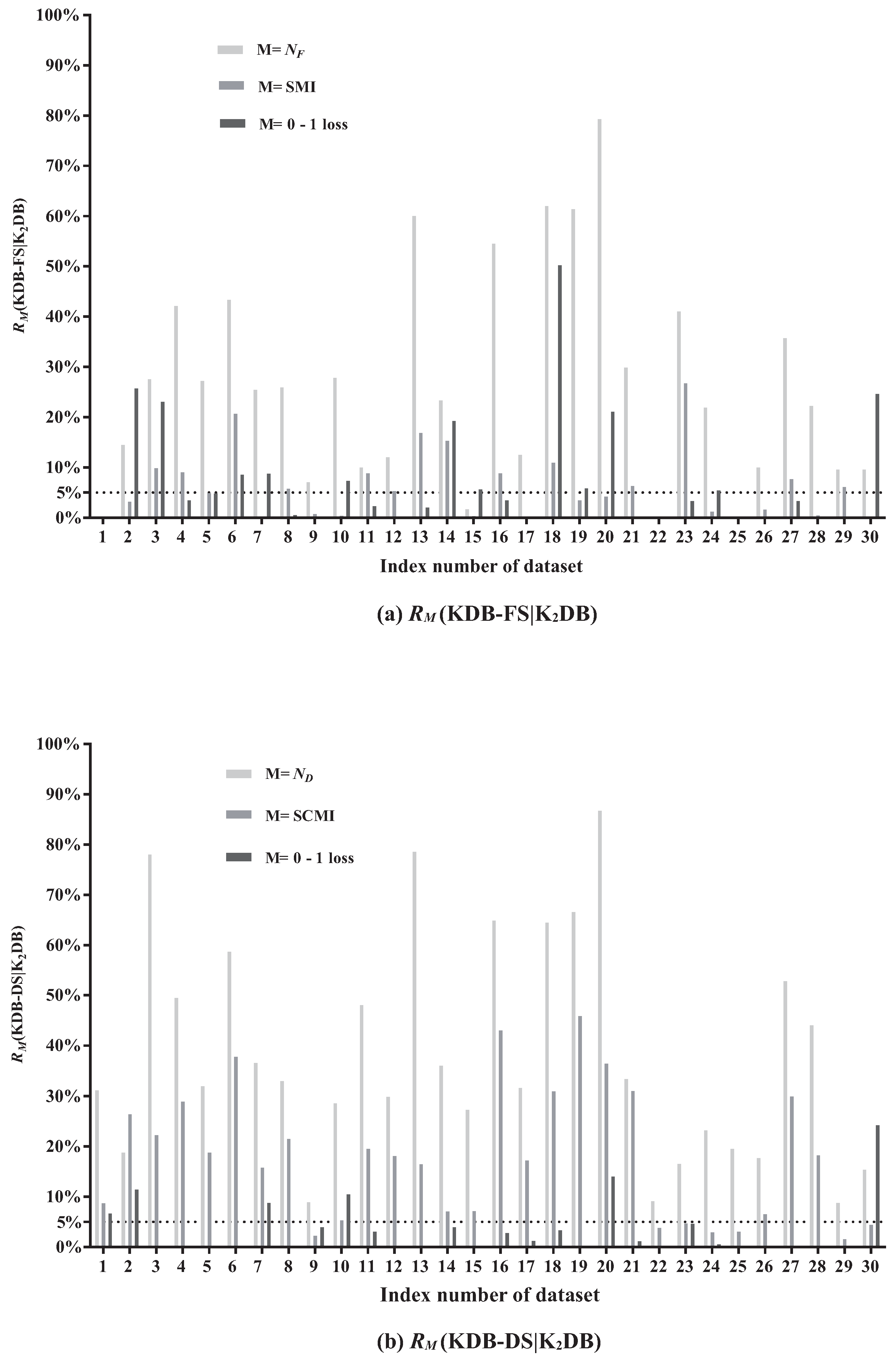

In this paper,

and

are respectively used to denote the number of features and the number of conditional dependencies in BNC. SMI and SCMI are used to indicate the sum of MI and CMI in BNC, respectively. The results of

(KDB-FS|K

DB) and

(KDB-DS|K

DB) are shown in

Figure 5a,b, respectively.

Figure 5a presents relative ratios between KDB-FS and K

DB in terms of

, SMI and 0–1 loss. The effectiveness of FS can be demonstrated by comparing the SMI values before and after removing redundant features. From

Figure 5a, FS removes features on 27 out of 30 datasets. The larger the value of

(KDB-FS|K

DB), the more features are identified as redundant and removed. We can see that the values of

(KDB-FS|K

DB) on five datasets are greater than 50%. For example, on dataset

Hypo (No. 20),

(KDB-FS|K

DB) = 79.31%, indicating that 79.31% of features are identified as redundant and removed. The AMI value on dataset

Hypo with 29 features is 0.0251 and only three features have MI values greater than the AMI value. In addition, 23 of these 29 features have MI values lower than 0.007 and they are iteratively removed from KDB according to the greedy-search strategy. Thus, the significant difference in MI values contributes to this high value of

(KDB-FS|K

DB). Furthermore, removing features based on the FS technique does not result in strong direct dependencies being removed. For example, the value of

(KDB-FS|K

DB) on datasets

Hypo is 4.14%, although 79.31% of features are removed. On dataset

Waveform (No. 30), the value of

(KDB-FS|K

DB) is close to 0% after removing 9.52% of features. These facts suggest that those removed features in KDB show weak direct dependencies. In addition, removing weak direct dependencies may help improve the classification performance. The values of

(KDB-FS|K

DB) on datasets

Hypo and

Waveform are 21.05% and 24.61%, respectively. That is, the classification performance is improved after removing the weak direct dependencies. The significant improvement in 0–1 loss (the value of

(•) > 5%) on 12 datasets demonstrates that the FS technique has a positive influence on classification performance.

The redundancy of conditional dependencies may also exist in KDB.

Figure 5b presents the relative ratios between KDB-DS and K

DB in terms of

, SCMI and 0–1 loss. The comparison of the SCMI values before and after removing redundant conditional dependencies can demonstrate the effectiveness of DS. When DS is applied to KDB, the selection of conditional dependencies occurs on all 30 datasets. The value of

(KDB-DS|K

DB) ranges from 8.77% to 86.72%. The larger the value of

(KDB-DS|K

DB), the more conditional dependencies are identified as redundant and removed. For example, the value of

(KDB-DS|K

DB) is 78.51% on dataset

Credit-a. It indicates that on average only 5.8 of 27 conditional dependencies are retained. Furthermore, 24 of all 27 CMI values are lower than the ACMI value (0.1592), and even the minimum CMI value is 0.0189. The difference in CMI values on dataset

Credit-a is obvious; by applying the DS technique with the greedy-search strategy, weak conditional dependencies are iteratively removed. The value of

(KDB-DS|K

DB) ranges from 1.54% to 45.83%. The high value of

(KDB-DS|K

DB) does not indicate that the strong conditional dependencies are removed. On dataset

Hypo,

(KDB-DS|K

DB) = 36.40%. The factor that contributes to this high value is that the SCMI value of these 48 removed conditional dependencies reaches 2.3355, but the CMI value of each removed conditional dependence is lower than the ACMI value. When it comes to 0–1 loss, the value of

(KDB-DS|K

DB) on dataset

Hypo is 14.04%. It indicates that deleting those weak conditional dependencies may help improve classification accuracy. KDB-DS achieves almost the same classification accuracy as K

DB with a simplified network structure on 14 datasets and achieves 0–1 loss improvement on 16 datasets. These results indicate that the DS technique is effective and can help reduce the structure complexity of KDB.

Both FS and DS techniques combine the characteristics of filter and wrapper approaches. The redundant features or conditional dependencies are filtered out and then we use classification accuracy to evaluate the feature subsets or the retained conditional dependencies, respectively. On the other hand, removing redundant features and conditional dependencies can reduce the parameters that are needed for probability estimates and may improve the classification accuracy. From the above discussion, we can see that both FS and DS techniques are efficient and can help improve the classification performance.

4.3. Comparison of AKDB vs. NB, NB-FSS, TAN, K$_1$DB and K$_2$DB

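In this section, we compare the related algorithms in terms of 0–1 loss, RMSE and bias-variance decomposition. RMSE [2] is computed as:

$$\mathrm{RMSE} = \sqrt{\frac{1}{t} \sum_{i=1}^{t} \left(1 - \hat{P}(c_i \mid \mathbf{x}_i)\right)^2},$$

where $t$ is the number of training instances in the training set, $c_i$ is the true class label for the instance $\mathbf{x}_i$, and $\hat{P}(c_i \mid \mathbf{x}_i)$ is the estimated posterior probability of the true class given $\mathbf{x}_i$.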
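The RMSE described here depends only on the posterior probability that the classifier assigns to the true class of each instance. The sketch below assumes those probabilities have already been collected into a list; the function name is illustrative.

```python
from math import sqrt


def rmse(true_class_probs):
    """Root mean squared error from the estimated posterior probabilities
    of the true class, one probability per instance."""
    t = len(true_class_probs)
    if t == 0:
        raise ValueError("at least one instance is required")
    return sqrt(sum((1.0 - p) ** 2 for p in true_class_probs) / t)
```

Unlike 0–1 loss, this measure rewards confident correct predictions: a classifier that assigns probability 0.9 to the true class scores better than one that assigns 0.6, even though both classify the instance correctly.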

The win/draw/loss (W/D/L) records of 0–1 loss, RMSE and bias-variance decomposition are presented in Table 4, Table 5 and Table 6, respectively. Each cell $[i, j]$ of every table presents the W/D/L record of the comparison between the algorithm in row $i$ and the algorithm in column $j$, so we can observe from cell $[i, j]$ which of the two performs better across all datasets. For 0–1 loss, for example, a win in cell $[i, j]$ denotes that the algorithm in row $i$ obtains a lower 0–1 loss than the algorithm in column $j$, a loss denotes that the algorithm in column $j$ obtains a lower 0–1 loss than the algorithm in row $i$, and a draw denotes that the two algorithms perform comparably. We regard a difference as significant if the $p$-value of a one-tailed binomial sign test is less than 0.05 [39,40].
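The significance check on a W/D/L record can be illustrated with a short sketch of a one-tailed binomial sign test. It assumes that draws are discarded and that wins and losses are equally likely under the null hypothesis; how draws were handled in the experiments is not stated here, so this is an assumption, and the function name is illustrative.

```python
from math import comb


def sign_test_p_value(wins: int, losses: int) -> float:
    """One-tailed p-value: probability of at least `wins` successes in
    wins + losses fair coin flips (draws are ignored)."""
    n = wins + losses
    return sum(comb(n, i) for i in range(wins, n + 1)) / 2 ** n
```

For instance, a record with 15 wins and 1 loss gives a $p$-value of about 0.0003, well below 0.05, whereas 4 wins and 1 loss gives 0.1875, which is not significant at that level.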

From Table 4, we can see that NB-FSS performs better than NB in terms of 0–1 loss, which indicates that FSS is beneficial for NB. Surprisingly, K$_2$DB does not have an obvious advantage when compared to 1-dependence classifiers, and it even performs worse than TAN. However, when it comes to large datasets, as Table 7 shows, K$_2$DB performs better than both TAN and K$_1$DB. We can see that AKDB significantly outperforms all other algorithms. Most importantly, when compared to K$_2$DB, AKDB has a 0–1 loss improvement with 15 wins and only one loss, which indicates that the two proposed information-threshold based techniques are effective. This advantage is even greater on small datasets. From Table A5, AKDB never loses on small datasets and it obtains a significantly lower 0–1 loss on 11 out of 17 small datasets. On dataset Lymphography, the error is substantially reduced from 0.2365 to 0.1554. Compared to K$_2$DB on large datasets, AKDB achieves a W/D/L record of 4/8/1. Although the improvement is not significant, AKDB only loses on dataset Spambase. Based on these facts, we argue that AKDB is a more effective algorithm in terms of 0–1 loss.

What is revealed in

Table 5 is similar to that in

Table 4. NB and NB-FSS perform worse, which demonstrates the limitations of the independence assumption in NB. TAN and K

DB get better performance than NB and NB-FSS. In addition, AKDB still achieves lower RMSE significantly more often than the other five algorithms. On average, 72.4% of the features and 59.6% of conditional dependencies are selected to build the network structure of AKDB, although in some cases the improvement in terms of RMSE is not significant. Considering that AKDB has significantly lower 0–1 loss and RMSE in comparison to other algorithms, we argue that the FS technique in tandem with the DS technique used in the proposed algorithm is powerful to improve classification accuracy.

The W/D/L records of bias-variance decomposition are presented in

Table 6. We may observe that NB and NB-FSS achieve higher bias and lower variance significantly more often than the other algorithms because their structures are definite without considering the true data distribution. TAN, K

DB and K

DB are all low-bias and high-variance learners because they are derived from higher-dimensional probability estimates. Thus, these classifiers are more sensitive to the changes in the training data. AKDB performs best in terms of bias. When compared to K

DB and K

DB, AKDB obtains lower bias more often than them, as jointly applying both FS and DS to KDB can simplify the network structure. Furthermore, we can observe that AKDB shows an advantage over K

DB in variance. The average of variance of K

DB and AKDB are 0.045 and 0.025 on 13 large datasets, respectively. Based on these facts, we argue that the proposed AKDB is more stable for classification.

4.4. Tests of Significant Differences

Friedman proposed the Friedman test [41] for comparisons of multiple algorithms over multiple datasets. It first calculates the ranks of the algorithms for each dataset separately, and then compares the average ranks of the algorithms over the datasets. The best-performing algorithm gets the rank of 1, the second best the rank of 2, and so on. The null hypothesis is that there is no significant difference in terms of average ranks. The Friedman test is a non-parametric measure, which can be computed as follows:

$$\chi_F^2 = \frac{12N}{t(t+1)} \left[\sum_{j=1}^{t} R_j^2 - \frac{t(t+1)^2}{4}\right],$$

where $N$ and $t$ respectively denote the number of datasets and the number of algorithms, and $R_j$ is the average rank of the $j$-th algorithm. With the 30 datasets and 6 ($t = 6$) algorithms, the critical value of $\chi_\alpha^2$ for $\alpha = 0.05$ with ($t - 1$) degrees of freedom is 11.07. The Friedman statistics $\chi_F^2$ of the experimental results in Table A5 and Table A6 are 36.56 and 22.90, respectively, both of which are larger than the critical value of 11.07. Hence, we reject the null hypothesis in both cases.
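The Friedman statistic can be computed directly from the average ranks, as in the sketch below. It assumes the usual form $\chi_F^2 = \frac{12N}{t(t+1)}\left[\sum_j R_j^2 - \frac{t(t+1)^2}{4}\right]$; the function name is illustrative.

```python
def friedman_statistic(avg_ranks, n_datasets):
    """Friedman chi-square statistic from the average rank of each
    algorithm over n_datasets datasets."""
    t = len(avg_ranks)
    sum_sq = sum(r * r for r in avg_ranks)
    return 12.0 * n_datasets / (t * (t + 1)) * (sum_sq - t * (t + 1) ** 2 / 4.0)
```

When every algorithm has the same average rank the statistic is zero, and it grows as the ranks spread apart. Feeding in average ranks that have been rounded to two decimals, such as those quoted in the text, will give a value close to but not exactly equal to a statistic computed from the unrounded ranks.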

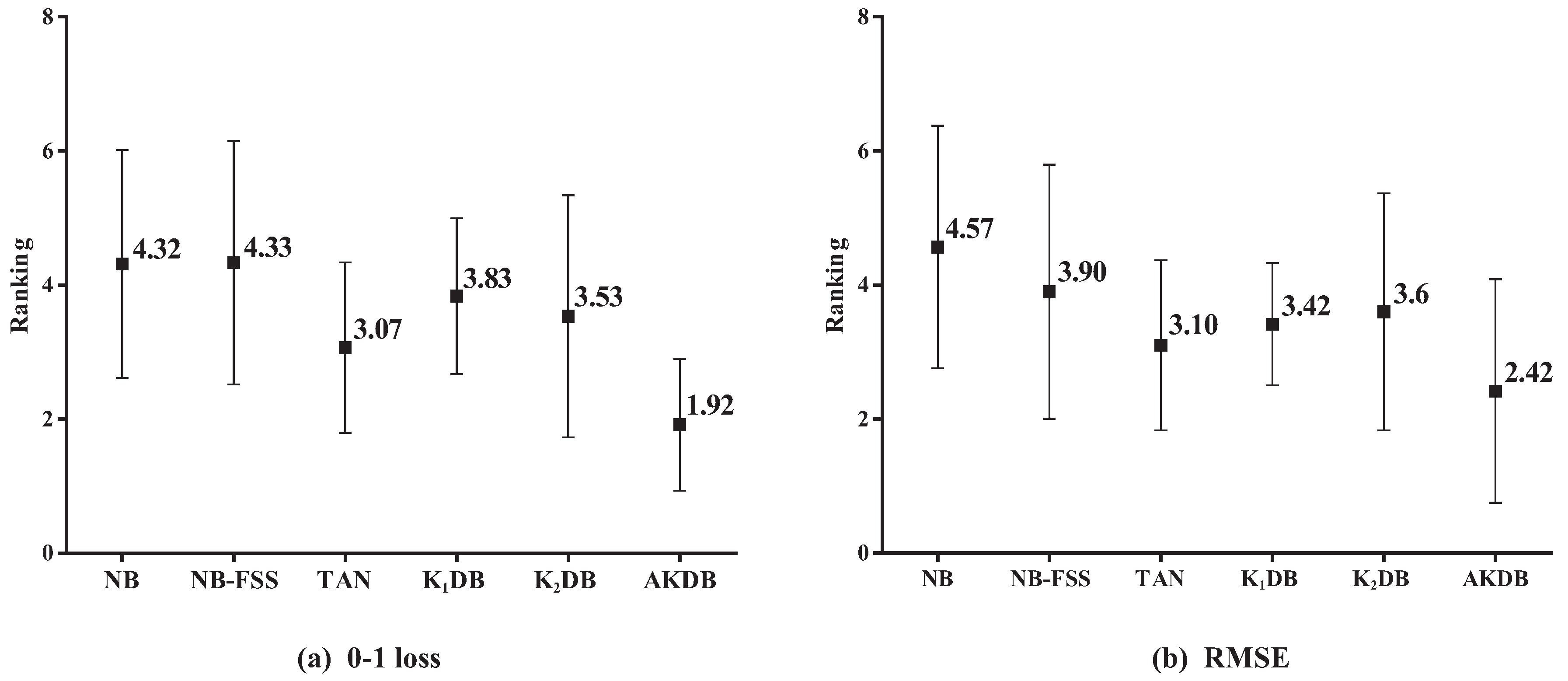

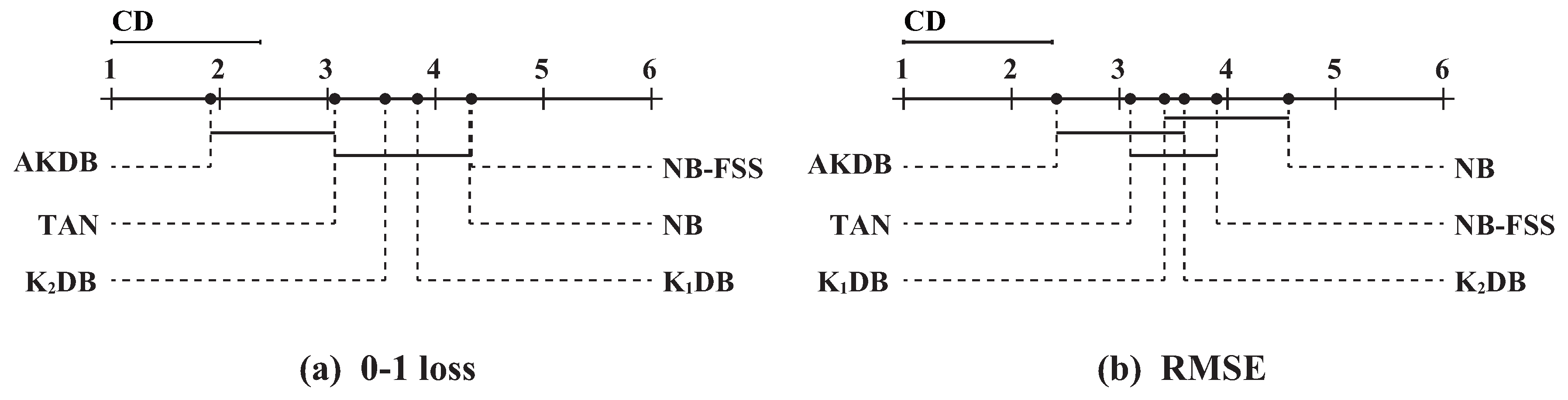

Figure 6 presents the results of average ranking in terms of 0–1 loss and RMSE for six algorithms. The average ranks of different algorithms based on 0–1 loss on all datasets are, respectively, {NB(4.32), NB-FSS(4.33), K

DB(3.07), TAN(3.83), K

DB(3.53), and AKDB(1.92)}. That is, the ranking of AKDB is higher than that of other algorithms, followed by TAN, K

DB, K

DB, NB, and NB-FSS. When assessing performance using RMSE, AKDB still obtains the advantage of ranking with the lowest average rank, i.e., 2.42.

In order to determine which algorithms differ significantly from the others, we further employ the Nemenyi test [42]. The comparisons of the six algorithms against each other with the Nemenyi test on 0–1 loss and RMSE are shown in Figure 7. The critical difference (CD) is also presented in the figure and is calculated as follows:

$$CD = q_\alpha \sqrt{\frac{t(t+1)}{6N}},$$

where the critical value $q_\alpha$ for $\alpha = 0.05$ and $t = 6$ is 2.85. With the 30 ($N = 30$) datasets and six algorithms, $CD = 2.85 \times \sqrt{\frac{6 \times (6+1)}{6 \times 30}} = 1.377$. On the top dotted line, we plot the algorithms based on their average ranks, which are indicated on the top solid line. On a line, a lower rank is placed further to the left, and an algorithm on the left side has better performance. The algorithms are connected by a line if their differences are not significant.
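The critical difference quoted above can be reproduced with a few lines of code. The sketch assumes the usual Nemenyi form $CD = q_\alpha \sqrt{t(t+1)/(6N)}$ and takes the critical value $q_\alpha$ as an input rather than looking it up; the function names are illustrative.

```python
from math import sqrt


def critical_difference(q_alpha: float, n_algorithms: int, n_datasets: int) -> float:
    """Nemenyi critical difference for average ranks."""
    t = n_algorithms
    return q_alpha * sqrt(t * (t + 1) / (6.0 * n_datasets))


def significantly_different(rank_a: float, rank_b: float, cd: float) -> bool:
    """Two algorithms differ significantly when their average ranks
    are at least one critical difference apart."""
    return abs(rank_a - rank_b) >= cd
```

With $q_\alpha = 2.85$, six algorithms and 30 datasets, `critical_difference` returns roughly 1.377, so two algorithms must be separated by about 1.4 rank positions on average before the difference counts as significant.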

As shown in Figure 7a, these algorithms are clearly divided into two groups in terms of 0–1 loss. One group includes AKDB and TAN, and the other algorithms are in another group. AKDB ranks first, although it does not have a significant advantage when compared to TAN. AKDB enjoys a significant 0–1 loss advantage relative to K$_1$DB, K$_2$DB, NB and NB-FSS, supporting the effectiveness of the proposed information-threshold based techniques in our algorithm. As shown in Figure 7b, when RMSE is compared, AKDB still achieves a lower mean rank than the other algorithms, although the differences between AKDB, K$_1$DB and K$_2$DB are not significant.