1. Introduction

Human gestures are non-verbal corporal actions that are used to transmit information and to express feelings. These human expressions can be done by different parts of the body; the hand is the most versatile interaction tool, and is effective fpr general use. Gestures have been classified into two categories: static and dynamic [

1]. The static gestures refer to a particular pose of the hand without movement, represented by only one image and the dynamic gestures refer to the movements of the hand, represented by several images. The use of the hand gesture has become a research area in Computer Vision (CV) that plays a very important role in the field of Human–Computer Interaction (HCI). The goal of HCI is to improve the interaction between users and computers in such a way that computers are more receptive to the needs of users so that it allows humans to interact with the computer in a natural way without any physical contact with external devices such as mouse, keyboard, touch screen, joystick, etc.

According to Haria [

2], the gesture recognition system consists of four main stages: data acquisition, segmentation and pre-processing, feature extraction, and classification.Data Acquisition is responsible for collecting the input data which are the images of the hand captured by RGB cameras, data gloves, Time-Of-Flight camera (ToF) and sensors such as Kinect. Subsequently, Segmentation prepares the image to extract the features in the next stage. Its process consists of dividing the input image into separated regions. The most commonly used technique to determine the regions of interest is the detection of skin color [

3], which is used to get the skin pixels in the image. Skin color segmentation can be applied on any color space HSV, YCbCr, YUV, CIELab, etc. Part of the segmentation is Pre-processing that is responsible for image processing subject to different pre-processing techniques such as normalization, noise removal, edge detection, smoothening, and morphological operations followed by different segmentation techniques for separating the foreground from the background. After modeling, the representation of a gesture is based on the appropriate metrics with the Feature Extraction. These features can be the location of the hand, palm, and fingertips; angles of the joints and fingers; fingertip markings; number of stretched fingers; orientation; speed; histograms; any emotional expression; or body movement [

4]. The extracted features are an input to a computational model, whose objective is to correctly classify the given gesture or can be stored in the system at the training stage as a model [

5]. The final stage is Classification where the command/meaning of the gesture must be clearly identified by the system. A classifier allows associating each input gesture according to similarity with other test gestures (database). The input of this stage is a sequence of data (unobservable) with the parameters of a model that were acquired in the training stage. Some methods that allow classifying the gestures are: Euclidean Distance [

6], Support Vector Machine (SVM) [

7,

8], Clustering [

9], Neural Network [

10], Fuzzy Systems [

11], Hidden Markov Model (HMM) [

12,

13], Non-Parametric (Nearest neighbor, Minimum Distance to Centroid) [

14,

15], and Principle Components Analysis (PCA) [

16,

17]. PCA is a dimensionality reduction technique that can be used to solve body recognition problems. This technique is also known as Hotelling, projection of eigenvalues and eigenvectors, and Karhunen–Loève theorem. The dimensionality reduction helps in the following way: it avoids voluminous calculations and is robust to the noise in the images. PCA transforms the original image data into a subspace set of principal components, of which the first principal component captures the greatest amount of variance among the images, while the second principal component provides the vector of the directions orthogonal to the first principal component and so on. This article focuses on the recognition of manual gestures with a simple application such as painting, using capturing images with a PC camera as data acquisition; application of two spaces colors HSV and CIELab as segmentation; and erosion and dilation as pre-processing. Subsequently, PCA is applied in feature extraction and classification.

2. Related Work

It should be noted that all gesture recognition systems consist of several stages such as those mentioned in this work: data acquisition, segmentation and pre-processing, feature extraction and classification. According to the criterion of the authors, each stage uses a technique or method according to the application of the recognition system. For example, there are sign language applications using gesture recognition known as Sign Language Recognition (SLR). Kumar [

18] presented a framework to recognize manual signs and finger spellings using Leap motion sensor. This sensor includes the associated Application Programming Interface (API) that allows the capture of the 3D position of the fingertips. Subsequently, the raw data are pre-processed with a zero-mean (z-score) based normalization by computing the standard deviation and mean, and the features extracted are the fingertip positions and three angular direction. Then, these data are classified using SVM to differentiate between manual and finger spelling gestures, and two BLSTM-NN classifiers for the recognition of manual signs and finger-spelling gestures using sequence classification and sequence-transcription based approaches. The database of this proposal is 2240 gestures with the participation of 10 subjects. As a result, the system has an accuracy of 100% by using the SVM classifier but in general terms has an accuracy of 63.57% for both types of gesture classes in real time. Another study on SLR was reported by Kumar [

19], who developed a SLR system using a depth-sensor device (Kinect) that, when capturing 3D points of body skeleton, recognizes gestures independently to the signer’s position or rotation with respect to the sensor. Its process is as follows: The signer’s skeleton information is captured through Kinect and the data are processed through affine transformation. This transformation is used to cancel out the effect of signer’s rotation and position while performing the gestures. Then, the features (angular features, velocity and curvature features) from the 3D segmented hands are extracted. Furthermore, gesture recognition is carried out with the HMM classifier in three modes: single-handed (64 Gaussians components and 3 HMM states), double-handed and combined setup (128 Gaussians components, 4 HMM and 5 HMM states). The latter (combined setup) presents an accuracy of 83.77% on occluded gestures. Its database consists of 2700 gestures performed by 10 different signers. As noted in the description of the previous work, a gesture recognition system has various applications according to each project. Each research proposal applies various classifiers according to the best accuracy results; the features extracted depend on the data acquisition sensor and the input variables of the classifier; and the database is customized for the training of the proposed algorithm.

Much work on gesture recognition has focused on detecting the hand as well as its features such as position and size of the hand. Thus, many algorithms have been proposed to identify hand gestures with PCA. PCA is used to reduce the dimension of the data and helps the process of classification the features obtained [

20]. Dardas [

21] presented a real time system that includes the detection and tracking of the hand with non-uniform backgrounds using a skin segmentation and an algorithm of the hand contour position that implies a subtraction of the face and a base of recognition of gestures of the hand with PCA. Prasad [

22] used the PCA to determine the characteristics of all frames in the video sequence and then these are stored in the database. Then, the gestures are classified using an artificial neural network. Sawant [

23] proposed a sign language recognition system using the PCA algorithm to compare the features obtained from HSV color space segmentation. After comparing the features of the sign captured with the test database, the minimal Euclidean distance is calculated for the recognition of the sign. On the other hand, Zaki [

24] presented sign language recognition that uses PCA as a dimensionality reduction technique and represented the features of the image to provide the orientation of the hand. In [

25], PCA characterizes the function of the fingers in a vision-based Chinese SLR system. Aleotti [

26] analyzed the use of PCA for automatic recognition of dynamic gestures of the human arm and the imitation of the robots. In [

27], PCA is applied for the recognition of hand gestures in order to represent the alphabet. Furthermore, Mandeep [

28] focused on a skin color model database and a defined threshold, which they compared with a PCA analysis.

Segmentation is a crucial step in a system because it provides color information for an image. This project proposes a segmentation technique with the use of two HSV and CIELab color spaces. Some studies employ these color spaces but with different applications; for example, Cai [

29] presented a system for automatic transfer function design based on visibility distribution and projective method color mapping. These transfer functions play a key role in representing the volume of medical data. The techniques commonly used in the transfer function design are clustering and segmentation, which divide the volume into a set of clusters or segments. The algorithm uses visibility distribution for the design of a transfer function. For the color transfer function, normalized-cut segmentation algorithm on the IGM (Intensity Gradient Magnitude) histogram is used to divide the histogram into several regions. Then, the CIELab color space is applied to map the center of each region in order to assign a unique color to each region by its projected coordinate. Then, the resulting color transfer function is applied to represent the volume of data and generate the visualization result. Another study with a different application to the previous one but making use of color space was reported by Nguyen [

30]. This article allows the segmentation of regenerated muscle fibers in mice based on superpixels. Superpixels have numerous applications in computer vision including image segmentation. A method called SLIC-MMED (simple linear iterative clustering on multi-channel microscopy with edge detection), which segments muscle fibers in multi-channel microscopy, is proposed. The green channel is processed to extract the nuclei, while the remaining channels (cyan, yellow and red) are used to generate superpixels according to SLIC-MMED algorithm. This work compares the performance of the proposed algorithm with the original SLIC algorithm, the latter operating on color images in the CIELab color space using the components (

l,

a,

b). However, in SLIC-MMED algorithm, each image channel is used as a component of the feature vector. In the results, the SLIC-MMED algorithm can correctly segment muscle fibers of different sizes with bright and dark regions. The previous works represent that the coloration is widely applied in different fields because the segmentation allows distinguishing different structures for a better compression of the data, and thus to be able to perform a manipulation of data according to the objective.

3. Materials and Methods

This section describes the procedure of the proposal of this work.

3.1. Data Acquisition

The purpose of this stage is to acquire a sequence of images (video) that subsequently is processed in the next stage. The sequence of images is obtained using a 1.3 megapixel camera from the PC (ASUS Notebook UX32A (ASUSTeK Computer Inc., Taipei, Taiwan)) with different backgrounds and with a variation of lighting conditions.

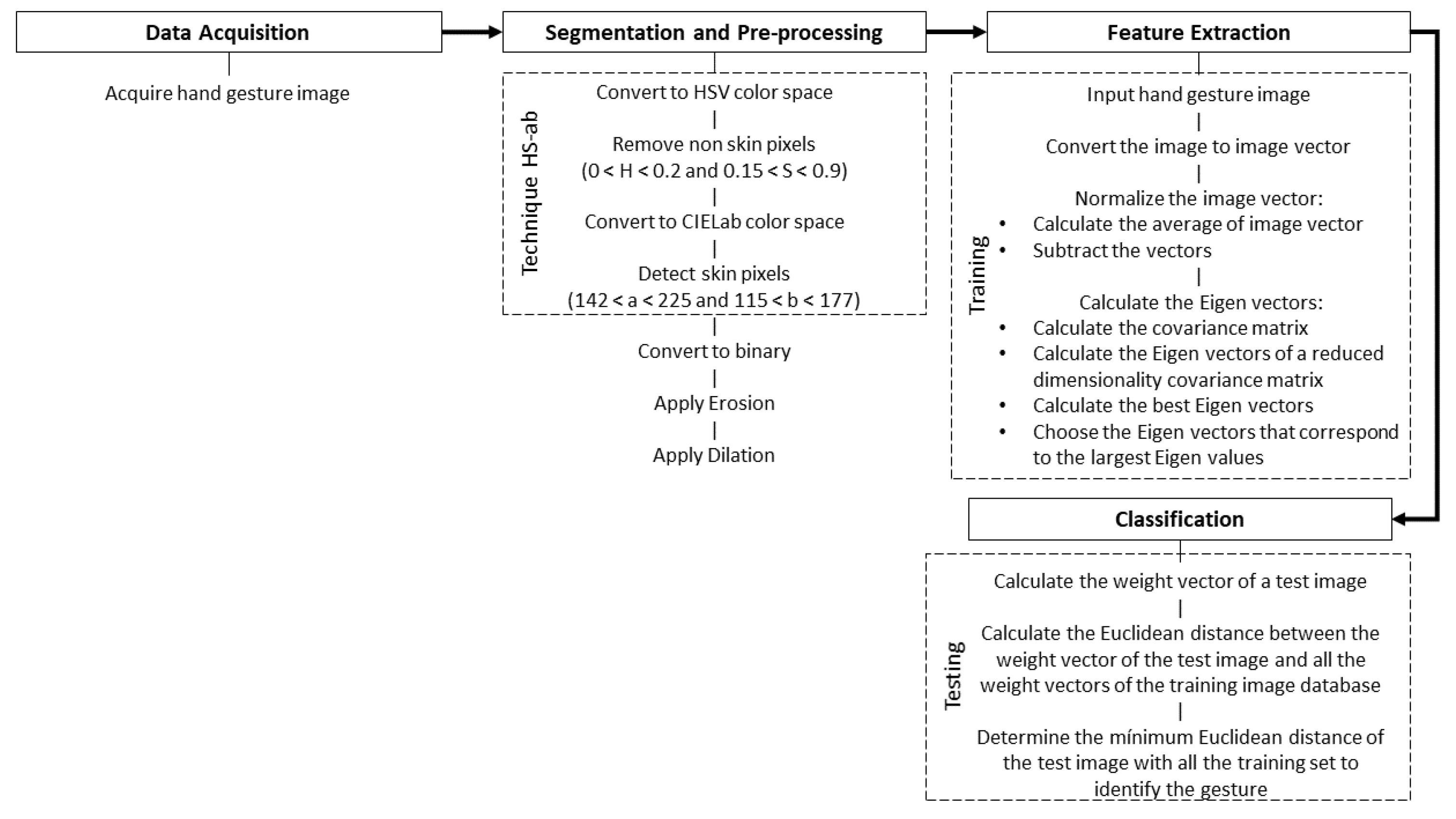

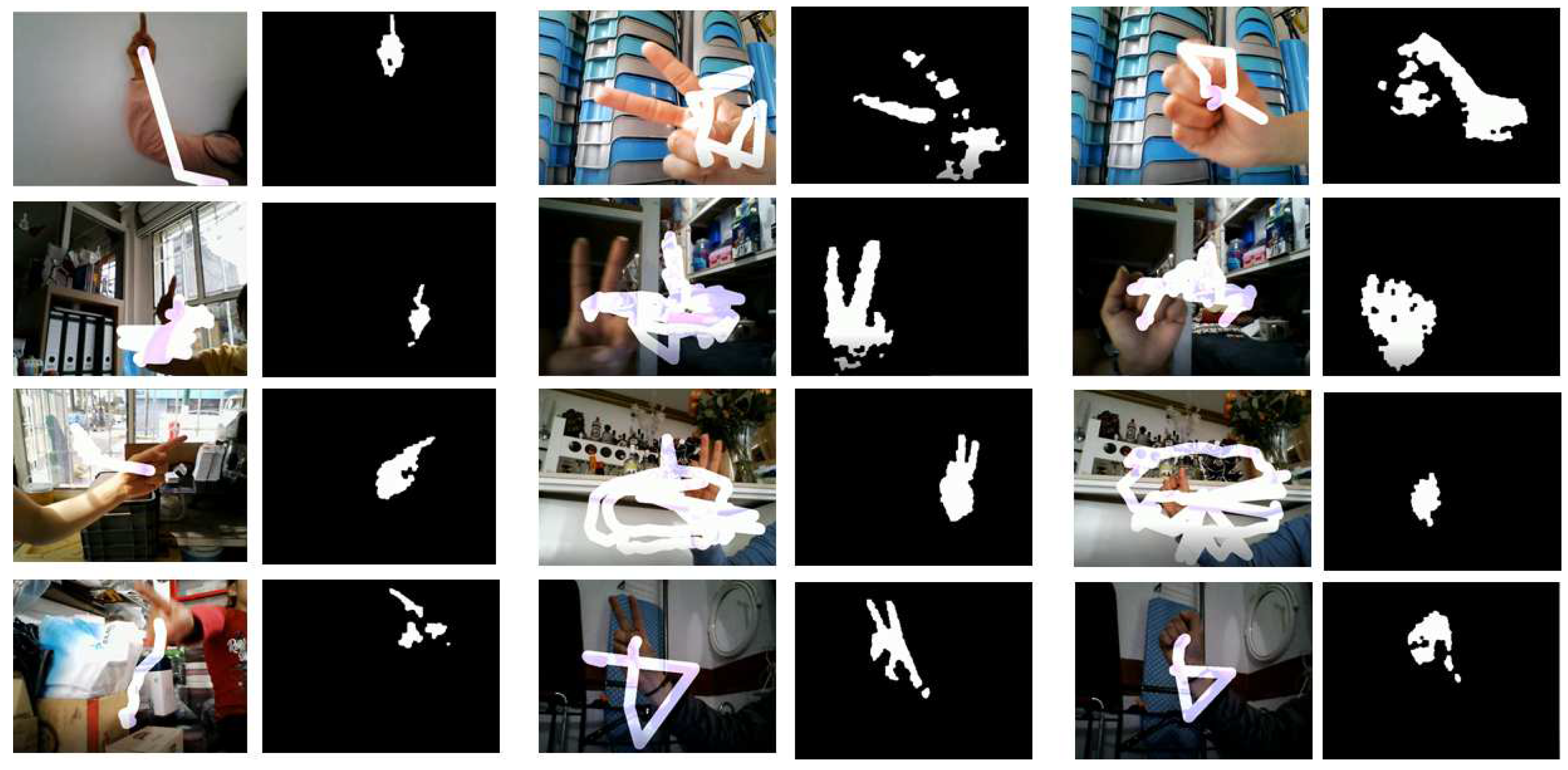

3.2. Segmentation and Pre-Processing

In this stage, the image is prepared so that, later, in the following stage, it is possible to extract the characteristics of the image. Preparation consists of dividing the input image into regions, so that the region of the hand gesture in the image is set aside. The most commonly used technique to determine regions of interest in the image (in this case, hand gestures) is through color detection. A good segmentation will allow detecting the skin color in the image. This work uses a proposed segmentation technique called HS-ab, which consists of transforming the input image into the HSV color space to identify non-skin color pixels with thresholds 0 < H < 0.2 and 0.15 < S < 0.9 in such a way that the pixels in the image with these thresholds are excluded. Subsequently, this same image is converted to the CIELab color space to detect the skin color pixels through the thresholds 142 < a < 225 and 115 < b < 177, so that, finally, the image presents only the skin areas. It should be noted that part of the segmentation process is to build a decision rule that differentiates between the pixels of an image that correspond to skin color and non-skin color. This can be done by a non-parametric modeling such as the histogram-based threshold [

31]. This modeling of histogram-based threshold uses an approximate estimate to define the range of a color space corresponding to the desired color that appears in the images. Then, to obtain the thresholds of the chrominance components of the HSV and CIELab color spaces, the images acquired in the SFA Image Database [

32] are used. For the analysis of the HSV color space, 40 images are used that do not represent any type of skin color. For the CIELab color space, 40 images of different skin color tones are applied. In the case of non-skin images, each image is transformed to the HSV color space by obtaining the histograms of the H and S channels. Each histogram estimates the minimum and maximum values for component H and component S. After having all the histogram values of the 40 images, the minimum and maximum average value of H and S are obtained. In this way, the thresholds for non-skin color pixels H and S are achieved. On the other hand, in the case of skin images, it is the same procedure described above except that the images are transformed to the CIELab color space resulting in the minimum and maximum average values of components a and b.

Later, the image is converted to binary so that morphological operations can be applied. The two basic morphological operations are erosion and dilation, which are used to eliminate the noise in the images and expand and reduce the structure of the objects for their detection. Erosion has the effect of thinning the area of the image while dilation has the effect of widening the region of interest (skin regions) of the image. The PCA has two stages: training and testing. In the training stage, the Eigen space is established using hand gesture training images and, at the same time, these images are mapped in the Eigen space. At the testing stage, when the image is approved (that is, it is mapped to Eigen space), it is classified using a distance classifier. The training stage is then presented in

Section 3.3 and the testing stage in

Section 3.4.

3.3. Feature Extraction

Step 1: Create a set of hand gesture training images and get the database. The database contains training images of dimensions : , , …, .

Step 2: Represent each image in a vector where with dimension .

Steps 3 and 4 allow normalizing the vectors of the images of gestures of the hand. This normalization eliminates common characteristics of the images of gestures, in such a way that every image vector represents to the only characteristic.

Step 3: Calculate the average of the vector of the image:

Step 4: Get the difference image by subtracting the vector from the average image of each vector from the gesture training image:

Thus, is the normalization of the vectors of the gesture images, is a vector of the gesture image and is a vector of the average image.

The following steps are for calculating the Eigen vectors but first the covariance matrix C must be calculated.

Step 5: Calculate the covariance matrix

C with dimensions

:

where

is a column of matrix with each gesture normalized with dimensions

where

is the number of rows and

M is the number of columns.

The dimensions of

are very large, therefore it generates

Eigen vectors of big size; the dimensions are as big as the gesture’s own image. Therefore, there is a need to reduce the dimensionality with a reduced dimensionality covariance matrix. This can be done by calculating the covariance matrix in the following way:

Step 6: Calculate the Eigen vectors of with dimensions , which has the same Eigen vectors and Eigen values of the covariance matrix . Then, to calculate the , it has to be that the total number of rows in the covariance matrix gives the total number of Eigen vectors. That is, the total number of Eigen vectors .

Step 7: Calculate the best

M Eigen vectors of

with the following equation:

where

is the upper Eigen vector in dimensional space while

is the lower Eigen vector in dimensional space.

Step 8: Take only the Eigen vectors corresponding to the largest Eigen values .

To make the representation of the database of the training images using the Eigen vectors is when each gesture is projected in the space. It is for this reason that the projection of the gesture is calculated through the weight of each training image; therefore, the weight is calculated as follows:

where

.

As the weight vector is represented as:

each image in the training database is represented by the weight vector in the following way:

Thus, for each training image, the weight vectors are calculated and stored.

3.4. Classification

Step 1: Then, the test stage is performed. For this, a test image is required (image with any gesture of the hand)

from which its weight

is calculated through the multiplication of Eigen vector

with the image difference; this is described in the following equation:

In the same way, the test image is represented by the weight vector and is calculated as:

Step 2: Calculate the Euclidean distance between the weight vector of the test image

and the weight vectors of the training image database

.

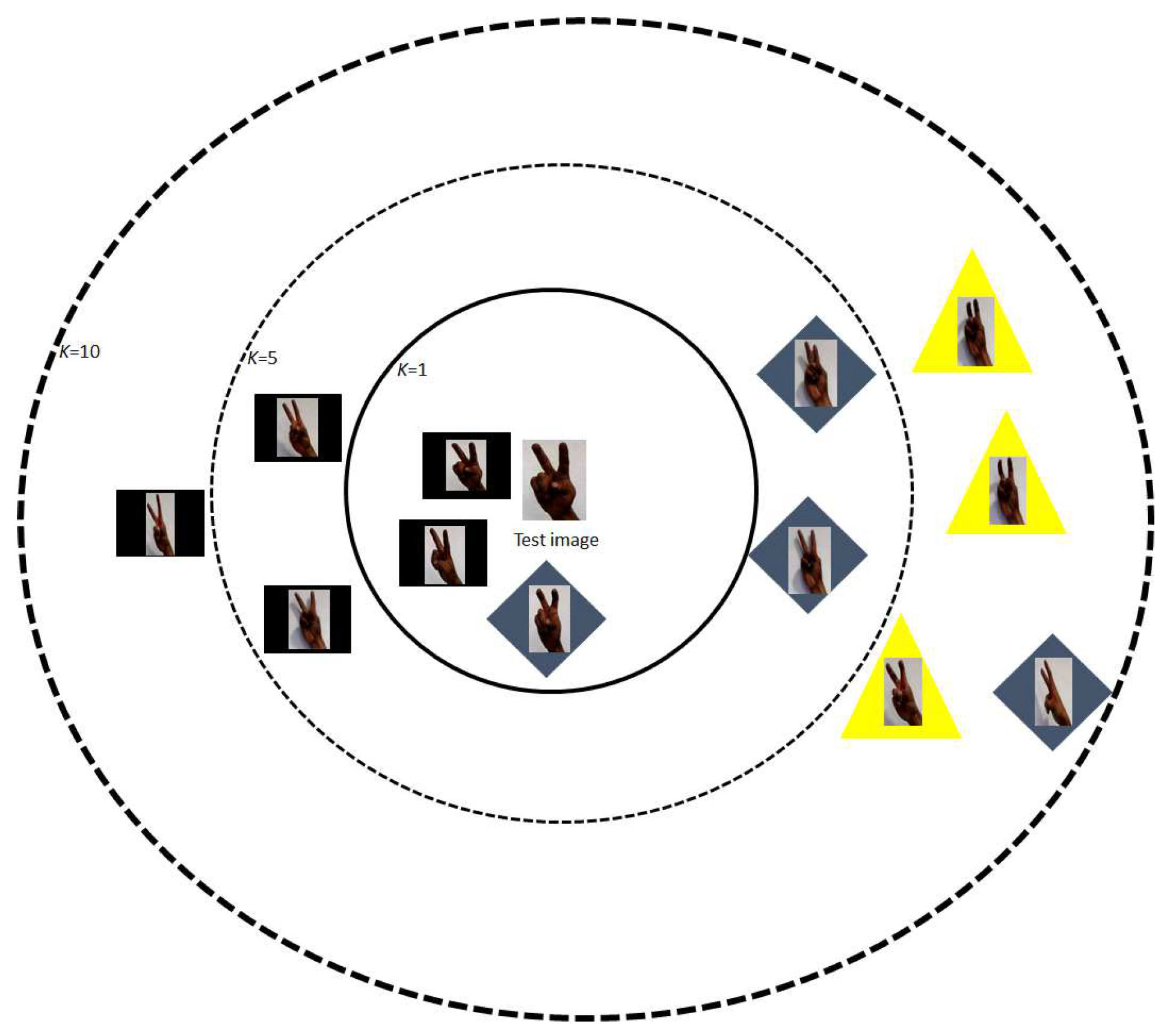

Finally, a type of gesture is determined by presenting a minimum Euclidean distance with respect to the test gesture (which is the test image). Thus, to classify the input image (test image) with the correct match of the training database the k-nearest neighbor algorithm (also named K-Nearest Neighbors (KNN) Classifier) is used. Therefore, KNN algorithm is used in this project to classify a test image based on the similarity measure of a set of training image database. KNN algorithm calculates the distance between each unknown instance (test image) and all known instances (training image), and the k-nearest neighbor in known instance sets are selected. The nearest neighbors can be determined by calculating based on distinct distance metrics, such as Euclidean distance [

33]. This distance metric is used in this work where the classification is performed by finding the nearest Euclidean distance of the transformed weights of the test image, i.e., a neighbor is deemed nearest if it has the smallest distance, in the Euclidean sense. The choice of the number of neighbors (

k) is discretionary and up to the choice of the users. On this occasion,

k = 1 is chosen, because the algorithm classifies the class of the nearest neighbor. Typically, the test image is classified according to the labels of its k-nearest neighbors by a majority of votes. Then, if

k = 1, the object (test image) is classified as the class of the object (some training image) closest to it and also presents greater precision in having good results. In this work,

k > 1 is not chosen because with more

k points the algorithm takes longer to process and receive a response, while a quick response is desired because the gesture detection is in real time. In addition, the training image closest to the image test is searched, thus more

k points are not considered, only the nearest one that appears in

k = 1.

Figure 1 shows the operation of the k-nearest neighbor algorithm.

The steps described above, namely data acquisition, segmentation and pre-processing, feature extraction and classification, are described in

Figure 2, where the hand gesture recognition processing is observed.

4. Results

The PCA algorithm requires two databases, the training database and the testing database. In the first database, each gesture of the training database is represented as a column vector, that is, the values of each column that forms a matrix represent the pixels of the gesture image. Subsequently, the algorithm calculates the average of this matrix to normalize the gesture vectors. Then, the Eigen vectors of a covariance matrix is obtained. Finally, each gesture is projected to the corresponding gesture space when the Eigen vector matrix is multiplied by each of the gesture vectors. At the testing database, a gesture of a subject (which is a test gesture) in the same way is represented as a column vector that is subsequently normalized with respect to the average gesture. Then, the algorithm projects the test gesture into the gesture space using the Eigen vector matrix. Next, the Euclidean distance is calculated between this projection and each of the known projections of the database in the training stage, so that the algorithm selects the minimum value of these comparisons. This indicates that the algorithm recognizes a gesture from the training database with respect to the test gesture. It should be noted that at this stage the testing database is used.

Training and testing data are sets of manual gesture images. The training database is for the PCA algorithm training, while the testing database is for testing the flexibility and adaptability of the proposed gesture recognition algorithm. Another difference of both databases is that the images in each database are different, i.e., the set of gestures in the training database belong to some test subjects while the gestural images of the testing database are acquired from other test subjects who are different from those who participated for the training database. Therefore, there are no images from the training and testing database belonging to the same person as each image in both databases belongs to different people.

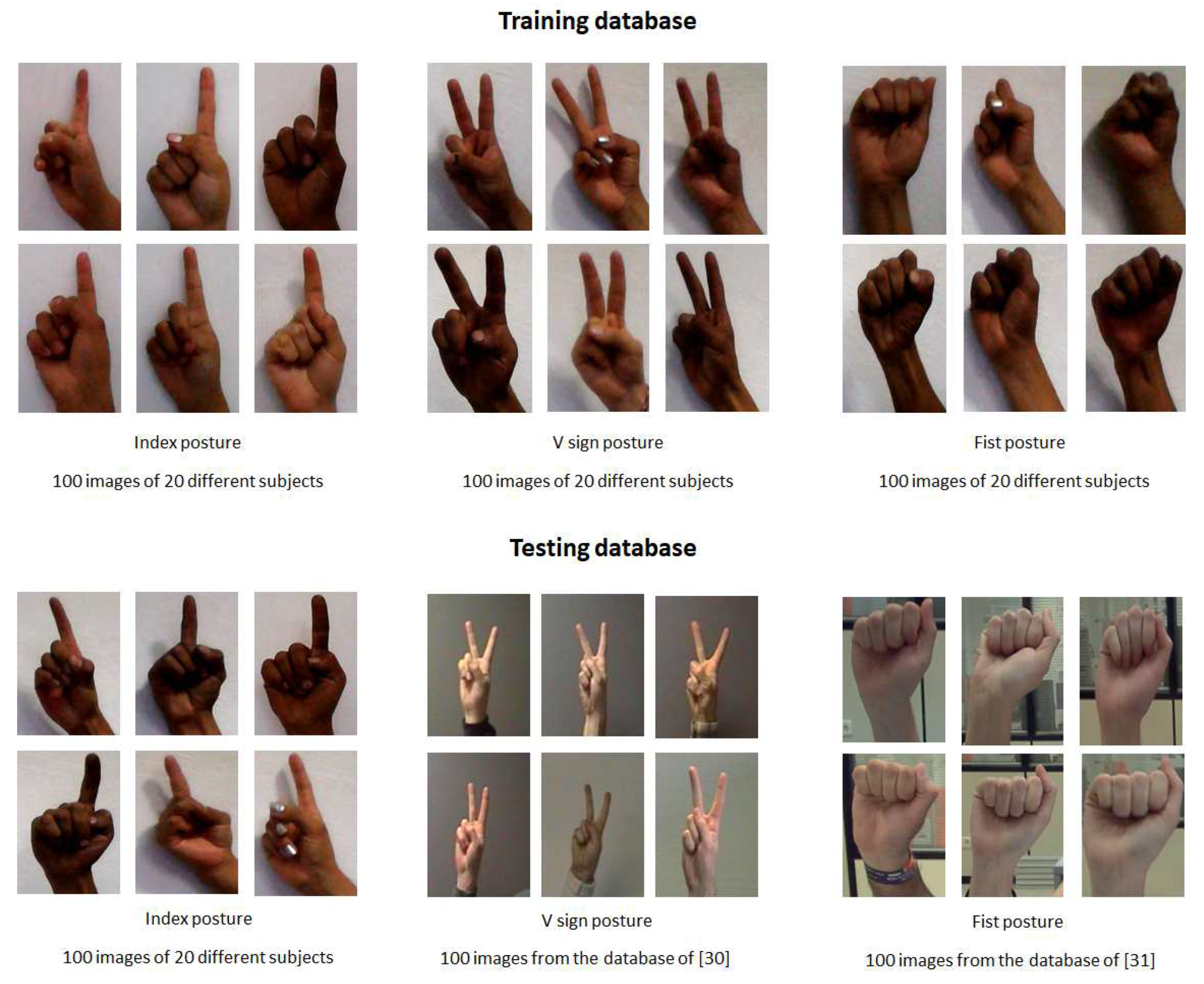

Training database: This database contains images of gestures performed by 20 people (there are 10 female subjects and 10 male subjects) and each person develops five gestures. Therefore, there are a total of 100 images of manual gestures for each hand posture. The images are captured using a PC camera. The database consists of three hand postures: index, V sign and fist under a uniform background (see

Figure 3). These last two gestures are attached to experience the testing database of other studies [

34,

35] with the algorithm of this work. Marcel [

34] used a neural network model to recognize six hand postures where an analysis of face location and body anthropometry is used through space discretization. On the other hand, Maqueda [

35] presented a human computer interface to control an application by executing manual gestures using a binary Support Vector Machine classifier and Local Binary Patterns as feature vectors.

Testing database: The database of the V sign and fist hand posture was obtained from [

34,

35] with different lighting conditions. The database of Marcel [

34] contains thousands of various images with both uniform and complex backgrounds; in this case, the images with a uniform background were used. In [

35], the database contains 30 video sequences performed by six different individuals. The video sequence was recorded on a non-uniform background and each video sequence was spatially and temporally segmented, so the training samples of each gesture are cropped video sequences, whose frames are regions of image that contain only the poses of the hand. In each database, 100 images of manual gestures were acquired. Furthermore, for the index hand posture database, the same procedure described above of the training database was performed, i.e., there are 100 images with a uniform background of 20 different people. Some images of the testing database are presented in

Figure 3.

It should be clarified that the images of the three hand postures, namely index, V sign and fist, that belong to the training database and the images of the index posture of the testing database were captured on a PC camera by 20 different subjects. However, testing the operation of the system proposed in this work involves 20 people who are different from the subjects who participated in the capture of the gestural images for the database.

Figure 4 shows some results of the application of the recognition system of the three gestures (index, sign V and fist) in the hand with a paint simulation. This hand gesture recognition algorithm was implemented on the Microsoft Visual Studio 2017 Express platform with the SDK version 3.0 library.

On the other hand, in

Table 1, the efficiency of the gesture recognition system is shown considering the equations for the calculation of recall (Equation (

12)), precision (Equation (

13)) and prevalence (Equation (

14)). The system was tested by 20 different subjects in real time for the detection of index, V sign and fist gestures. The results presented in

Table 1 show that Maqueda [

35] presented a lower percentage than Marcel [

34], which could be because the images in the training database have non-uniform backgrounds compared to Marcel [

34].

The average execution time (this is the maximum time to recognize a gesture) of the proposed hand gesture recognition system for each gesture capture in real time is 2.6 ms. This test 3 we performed on a computer with an Intel Core 1.80 GHz processor, 4 GB RAM, a 1.3 MP camera and a resolution of 1280 × 720 pixels.

Considering the results of recall, precision and prevalence of this work, a comparison was made with other methods. Some methods use the PCA algorithm for analysis in the database applied in a gesture recognition system. Kumar [

36] presented a system that recognizes specific hand gestures to transmit information in a device control. For this, the PCA is used for manual gesture recognition and the Sheffield KInect Gesture (SKIG) dataset are extracted to create a training set. The training set consists of 10 categories of hand gestures: circle (clockwise), triangle (anti-clockwise), up–down, right–left, wave “Z”, cross, come here, turn around and pat. These gestures are performed with three hand postures, namely fist, index and flat, under three different backgrounds, namely wooden board, plain paper and paper with characters.

Table 2 shows the results of recall, precision and prevalence of 10 gesture tests in the system. Mandeep [

28] focused on a hand gesture recognition system based on skin color modeling in the YCbCr color space. Then, the image is divided into foreground and background through a defined threshold. Finally, a template based matching technique is developed using PCA. The datasets are in two forms: controlled and uncontrolled. The first has images with a similar background taken from several people. The second presents images with different lighting conditions. The results obtained using both databases to test the system are shown in

Table 2.

As shown in

Table 2, Mandeep [

28], Kumar [

36] presented very good results because their training and test databases are very similar (see

Figure 5b). In addition, the backgrounds of the images are very uniform and controlled under ideal conditions (see

Figure 5c), which allows the database to be more efficient and therefore provide maximum precision. It is for this reason that the percentages of this work tend to be low in the majority of cases: the images in the training and testing databases were acquired by different people (see

Figure 5d).

As shown in

Figure 5, Kumar [

36] only compared identical images and did not test with other images, which is why they offered a precision of 100%. Mandeep [

28] contained images with good conditions considering the background and lighting, thus they also offered a precision of 100% but, when testing with images with low illumination, the precision reduced to 96.97%. however, they ded not present results with different gestures and under different conditions as carried out in this project. This project was tested with different images of gestures of various people in real time and with non-uniform backgrounds under different lighting conditions.

Finally, some tests are presented of the system for experimental purposes. First, a comparison of equal images was made in the training and testing databases. Only for this test, some images of the index gesture of the training and testing databases belonged to the same person. This does not mean that the databases of this system have images of the same person, and this test was only done to know the operation of the Euclidean distance with the same, similar and different images. It was observed that, when a test gesture that already exists in the training database is entered, the projection distance (i.e., the Euclidean distance) tends to be almost or completely zero; therefore, there is an encounter of the same gesture. However, when a different test gesture from the images in the training database is shown, the Euclidean distances presented may be far or close to zero. Thus, when comparing all Euclidean distances, the smallest projection distance is chosen because it corresponds to the test gesture. The above is presented in

Figure 6.

Figure 6a shows that the test image is the same as the training image so it presents a Euclidean distance of 0.0 × 10

10.

Figure 6b shows the test gesture is similar to the training gesture but is not the same, with a Euclidean distance of 0.6 × 10

10.

Figure 6c shows a different test gesture to the training gesture with a projection distance of 1.3 × 10

10.

The second test was to apply the training database of this work in an algorithm from another study. Dakkata [

37] presented a prototype system to recognize the gesture of deaf people based on ASL (American Sign Language) in order to be able to communicate with people. The algorithm consists of the following steps: skin detection, image segmentation, image filtering, and cross-correlation method. This project uses a PC camera to capture the gestures of the person and, after the image processing, the system applies the gray algorithm for illumination compensation in that picture. The database of Dakkata [

37] contains 160 images with some types of gestures that represent the ASL with 10 images for each gesture. The training database of the proposed project presents three hand postures, which also belong to ASL. Then, 100 images of each posture (index, V sign and fist) were implemented in the algorithm database of Dakkata [

37]. It should be noted that the algorithm [

37] was implemented in MATLAB 2019. The results are shown in

Figure 7.