1. Introduction

The advent of Industry 4.0 [1] has increased the need for decentralized industrial processes within value chains. Processes and their associated resources, including machines, materials, human expertise, knowledge, and software, are increasingly distributed across multiple locations and organizations [2]. Such distribution enables more effective utilization of resources and better control of production capacities [3] (pp. 9–10). Globally distributed collaboration is becoming common throughout the entire Product Lifecycle (PLC) [4], driven by factors such as limited raw materials, capacity constraints, and the need for efficiency [3] (pp. 9–10). Digitization and connectivity are essential for seamless production planning [3] (pp. 9–10). In addition to physical resources, knowledge plays a central role: implicit knowledge in the minds of individuals can be externalized into explicit, machine-readable formats, enabling automated processing across systems [5] (pp. 8–9). Software resources must adapt to support distributed operations, ensuring that processes, data, and knowledge are always accessible across locations [6]. Consequently, efficient and flexible process planning requires the integration of tangible and intangible resources into a coordinated, digitally connected system. These foundations (decentralization, digitization, and connectivity) remain critical, even as Industry 5.0 introduces human-centric, sustainable, and resilient production approaches [7]. While this work is positioned in the context of Industry 4.0, the proposed service-oriented and knowledge-based architecture also provides a foundation for the human-centric and adaptive production scenarios discussed in Industry 5.0. This paper addresses these challenges by proposing a framework for knowledge-driven, distributed production planning.

1.1. Motivation

Knowledge-based production planning (KPP) [8] was initially developed as a prototypical proof of concept to enable semantic production planning for smart products [8]. It is part of the research activities of the Chair of Multimedia and Internet Applications at the University of Hagen, conducted in collaboration with its affiliated Research Institute for Telecommunications and Cooperation e.V. (FTK). KPP builds on a series of preceding research projects, including the EU-funded SHAMAN project [9], which focused on the long-term archiving of knowledge resources that were not bound to specific processes. To address this missing process binding, knowledge-based and process-oriented innovation management (WPIM) [9] introduced process-oriented knowledge management, connecting archived knowledge to the processes in which it was generated. CAPP-4-SMEs [9] extended collaborative and adaptive production process planning to distributed environments. While CAPP-4-SMEs did not consider the process-oriented representation of knowledge resources in production, KPP integrates these concepts to provide semantic, knowledge-driven process planning for Industry 4.0 environments [10].

The original KPP-System was implemented using a Monolithic (Software) Architecture (MSA) [11], which allowed incremental growth of functionalities. However, this architecture faces limitations in distributed Industry 4.0 environments: certain functionalities cannot be deployed independently, scalability is limited, and integration with other systems is cumbersome [11]. To overcome these challenges, KPP must evolve toward a service-oriented architecture (SOA) [11], enabling modular deployment, cloud integration, and interoperability across digital ecosystems. The transition to a cloud-based, service-oriented architecture offers significant advantages, including increased agility, faster development and deployment cycles, improved scalability of functionalities, and the ability to develop solutions using different technologies [12].

Compliance with standards such as the Arrowhead Framework (AF) [13] and Industry 4.0 reference models (e.g., RAMI 4.0 [14]) must be considered to ensure compatibility and seamless integration within broader digital ecosystems. Incorporating adaptive ontology-based development (AOD) [15] allows KPP to dynamically expand its capabilities, supporting new processes without manual intervention. This transformation lays the foundation for adaptive, knowledge-driven production planning that is scalable, flexible, and fully compatible with smart manufacturing environments. Based on this context, the following section presents the problem statements and derives the corresponding research questions.

1.2. Problem Statements and Research Questions

This section provides a cohesive foundation for transforming the KPP-System into a distributed, standards-compliant, and adaptive solution for Industry 4.0. It first identifies the key problem statements (PS), highlighting the main challenges and limitations of the current system. Based on these PS, specific research questions (RQ) are derived to clearly define the scope of investigation.

With respect to the first problem statement, a major limitation of the current KPP-System is its monolithic software architecture, which restricts the distribution of functionalities across processes, resources, and knowledge (PS1). The first research question therefore investigates how the system can be transformed into a service-oriented architecture that enables distributable functionalities (RQ1). Concerning the second problem statement, the system currently provides only limited interoperability with other Industry 4.0 solutions, as it does not comply with the specifications of the Arrowhead Framework (PS2). This leads to the second research question of how Arrowhead-compliant capabilities can be achieved to ensure seamless integration with external systems (RQ2). In relation to the third problem statement, the KPP-System lacks standardized data exchange across distributed resources (PS3). Because it does not yet support RAMI 4.0 concepts such as Asset Administration Shells or eCl@ss classifications, uniform and semantically meaningful data exchange along the value chain remains limited. The third research question therefore examines how these standards can be incorporated to enable consistent and interoperable communication (RQ3). As for the fourth problem statement, the system is unable to adapt dynamically to evolving or newly introduced processes (PS4). Both the KPP-System and its underlying ontology require manual adjustments when requirements change. Thus, the fourth research question explores how ontology-based mechanisms can support automatic and flexible adaptation to changing processes and resources (RQ4). The following subsection builds on these research questions by presenting the research methodology and deriving the corresponding research goals.

1.3. Research Methodology and Research Goals

Regarding the methodology, this research follows the design-oriented approach of Nunamaker et al. [16], which comprises four phases: observation (OB), theory building (TB), system development (SD), and experimentation (E). These phases guide the analysis of the current KPP-System, the development of concepts, the implementation of modular solutions, and the evaluation of their effectiveness. Based on this methodology, specific research goals are derived to structure the subsequent activities of theory building, system development, and experimentation, as outlined below.

For RQ1, which addresses the distribution of the KPP-System and its underlying software architecture, the study begins by identifying architectural constraints and analyzing distributed architectures and service structures (OB1). Based on these insights, system requirements are derived and a conceptual service design for selected KPP-Functions is developed (TB1). These functions are subsequently implemented as modular service components (SD1), and the distributed setup is evaluated by comparing its performance and functionality with the existing KPP-System (E1).

RQ2, which focuses on extending the KPP-System to ensure compliance with the Arrowhead Framework, starts with an examination of the framework’s structure, interfaces, and interoperability requirements (OB2). From this foundation, an architecture is designed that merges service-oriented principles with Arrowhead specifications (TB2). The required components are then realized within the KPP environment (SD2), followed by an evaluation of the resulting architecture’s interoperability and alignment with Arrowhead standards (E2).

For RQ3, which investigates the integration of Industry 4.0 standards such as RAMI 4.0 and eCl@ss, relevant communication standards and semantic interface concepts are analyzed (OB3). Based on this analysis, a semantic wrapper is designed to enable standardized and meaningful data exchange (TB3). This wrapper is integrated into the mediator-based architecture (SD3), and the system is evaluated regarding standard compliance and the effectiveness of semantic communication across distributed resources (E3).

Finally, RQ4, which aims to enable dynamic adaptation to new value-chain processes through ontology-based mechanisms, begins with an exploration of meta-ontologies and adaptive system approaches (OB4). From these findings, a dedicated KPP-Service-Ontology is developed to support flexible adaptation (TB4). Adaptive KPP-Services leveraging this ontology are then implemented (SD4), and the system’s capability to dynamically integrate newly introduced processes is examined (E4). The following subsection defines the research scope of this paper, outlines its main contributions, and provides an overview of the paper structure.

1.4. Research Scope, Contribution, and Paper Outline

While the research questions cover the full scope of the research plan, this paper focuses on the initial architectural concept of the KPP-System and the first step toward its transformation into a distributed architecture. The methodological contribution of this work lies not in the development of a new planning algorithm, but in the systematic transformation of an existing knowledge-based production planning system into a service-oriented, Arrowhead-compliant architecture, including ontology-driven adaptability. The presented implementation and evaluation therefore focus on architectural feasibility and service-based integration rather than on algorithmic optimization. Specifically, the paper addresses OB1, OB2, and OB4, which provide the analytical groundwork for understanding the distribution of functionalities within the KPP-System, the status of the Arrowhead Framework, and approaches for ontology-based system adaptivity. These observations form the basis for the subsequent theory building (TB) activities. Following this analytical foundation, the paper develops an initial conceptual model of a distributed KPP-System architecture. This TB phase derives design principles, defines key service interactions, and outlines a mediator-based structuring of system components with a strong emphasis on service orientation. Although the broader system development phase includes multiple transformation steps, this paper implements only a selected part of SD1, namely the practical realization of a core service component. This implementation serves as a proof of concept, demonstrating the feasibility of the proposed mediator-based, service-oriented architecture. The evaluation section then examines this prototype as an initial step toward the full transformation of the KPP-System, establishing a foundation for future standard integrations and ontology-driven adaptive mechanisms. Finally, the paper concludes with a discussion of the results and a summary of the contributions.

2. Current State of the Art

This section presents the state of the art relevant to this study. The focus is on distributable production planning services, while the Arrowhead Framework and adaptive ontology-based development are introduced as context for interoperability and future dynamic expansion. To establish the analytical foundation of this work, the section presents the key findings associated with the observation goals OB1, OB2, and OB4. These cover (i) distributed architectures and service structures, (ii) the Arrowhead Framework and its interoperability mechanisms, and (iii) meta-ontologies together with adaptive, ontology-driven approaches. Finally, the section outlines the remaining challenges (RC) identified within each of these observation areas, which subsequently guide theory building and system development activities.

2.1. Distributed and Service-Oriented Architecture (SOA)

The KPP-System currently relies on a mediator architecture (MA) [8], which integrates distributed knowledge resources via wrappers. MA enables the integration of data from various sources into a unified view, allowing users to access information from a single entry point while hiding the complexity of heterogeneous sources. The wrappers transform the content of different sources into a common format, supporting semantic data queries in the KPP-System [8]. The KPP-System currently follows an MSA, meaning that all components are interconnected and interdependent. It comprises a frontend that provides administration interfaces, a backend that supplies data queries to the frontend through interfaces, and a data layer with a Neo4j database and SPARQL for semantic data queries [8].
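The mediator and wrapper roles described above can be illustrated with a minimal Python sketch. It is not the KPP implementation: the `Resource` record, the two wrapper classes, and their source formats are hypothetical. The sketch only shows the pattern itself, where each wrapper maps a heterogeneous source onto one common format and the mediator offers a single entry point that hides those differences.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator, List, Optional, Protocol


@dataclass(frozen=True)
class Resource:
    """Hypothetical common format that every wrapper maps its records onto."""
    source: str
    name: str
    kind: str


class Wrapper(Protocol):
    def fetch(self) -> Iterable[Resource]: ...


class CsvWrapper:
    """Wraps a hypothetical semicolon-separated text source ('name;kind' per line)."""

    def __init__(self, text: str) -> None:
        self.text = text

    def fetch(self) -> Iterator[Resource]:
        for line in self.text.strip().splitlines():
            name, kind = (part.strip() for part in line.split(";"))
            yield Resource("csv", name, kind)


class DictWrapper:
    """Wraps a hypothetical record store whose field names differ from the common format."""

    def __init__(self, records: List[dict]) -> None:
        self.records = records

    def fetch(self) -> Iterator[Resource]:
        for rec in self.records:
            yield Resource("dict", rec["title"], rec["type"])


class Mediator:
    """Single entry point: queries all wrappers and returns one unified view."""

    def __init__(self, wrappers: Iterable[Wrapper]) -> None:
        self.wrappers = list(wrappers)

    def query(self, kind: Optional[str] = None) -> List[Resource]:
        return [
            res
            for wrapper in self.wrappers
            for res in wrapper.fetch()
            if kind is None or res.kind == kind
        ]


if __name__ == "__main__":
    mediator = Mediator([
        CsvWrapper("milling;machine\nweld-guide;knowledge"),
        DictWrapper([{"title": "steel", "type": "material"}]),
    ])
    print([r.name for r in mediator.query(kind="machine")])  # ['milling']
```

Adding a new source in this pattern only requires a new wrapper; the mediator and its callers stay unchanged.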

An SOA addresses these limitations by enabling independent, modular deployment of functions along business processes [11]. In 1996, Gartner analyst Roy Schulte published an initial definition of service-oriented architectures [17], describing SOA as a form of layered computing designed to enable the reuse and sharing of logic and data across different applications and usage scenarios. The emphasis lies on services that are distributed across and shared by different applications. SOA primarily involves the use of different services along a company’s business processes [11]. Services are loosely coupled, self-contained, and communicate through standardized interfaces such as REST [18], allowing dynamic adaptation, efficient real-time processing, and scalable integration across distributed environments. Recent systematic literature reviews confirm that the migration from monolithic software toward service-based architectures has become a major research focus, driven by scalability, maintainability, and distributed deployment requirements [11,12]. These reviews further highlight that service-based architectures are increasingly adopted in industrial domains to support continuous deployment, cloud-native execution, and integration with digital twins and semantic middleware. Software systems with a distributed, service-oriented architecture are better suited to dynamic industrial requirements [12]. Industry 4.0 necessitates the ability to flexibly adapt systems to changing conditions, to ensure the scalability of services, to seamlessly integrate different technologies, and to enable efficient processing of real-time data. Transforming KPP into an SOA enables independent, modular deployment of functions, supporting dynamic adaptation and improved real-time processing [11].

Regarding the remaining challenges, transforming the KPP-System into a service-oriented architecture offers clear benefits in terms of modularity, flexibility, and scalability, but several issues still need to be addressed. First, the precise definition of service boundaries remains an open issue (RC1). Second, ensuring the maintainability of individual components is crucial (RC2). Third, efficient integration with existing system layers is necessary to support a fully functional system (RC3). Addressing these challenges is essential for designing a conceptual architecture that supports independent, reusable services while meeting the dynamic requirements of Industry 4.0 environments.

2.2. Fundamentals of the Arrowhead Framework

To enable cross-service communication and interoperability in distributed industrial systems, the Arrowhead Framework (AF) provides a standardized, service-oriented architecture [13]. The EU project Arrowhead addresses this need and focuses on the automation of industrial machines with efficient energy consumption and flexibility [13]. The Arrowhead Project refers to a three-year research initiative funded by the Key Digital Technologies Joint Undertaking (KDT JU) and coordinated by Luleå University of Technology in Sweden [13]. AF relies on the concept of a local cloud (LC) [13], a spatially connected, private group of systems, with communication between LCs facilitated via gateways. Key AF components include the Service Registry (SVR) for publishing and discovering services, the Orchestration System (OS) for coordinating interactions, and the Authorization System (AS) for access control [13]. AF systems must provide standardized interface descriptions for content, functions, and attributes, enabling consistent integration across distributed environments. To deploy an LC within the AF, these three core systems are required [13] (pp. 56–57). While the Arrowhead Framework was originally introduced by Delsing [13], more recent studies have embedded it within broader Industry 4.0 and emerging Industry 5.0 architectures, highlighting its role in interoperability, service orchestration, and human-centric production systems [19,20]. Recent versions of the Arrowhead Framework (such as release 5.0) show its ongoing progress toward stronger security features, improved orchestration, and better semantic support, confirming its continued importance for industrial IoT applications and service-oriented architectures [21]. In addition, recent studies assess the Arrowhead Framework’s suitability as middleware for enabling service orchestration and system integration in distributed autonomous scenarios, demonstrating its potential beyond traditional manufacturing environments [22].
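The publish/discover role of the Service Registry within a single local cloud can be sketched as an in-memory Python class. This is a hypothetical illustration of the concept only: it does not use the Arrowhead Framework's actual API, and the `ServiceEntry` field names, service definitions, and addresses are assumptions chosen for readability.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ServiceEntry:
    """Hypothetical registry record; field names are illustrative, not Arrowhead's schema."""
    service_definition: str
    provider_system: str
    address: str
    port: int


class ServiceRegistry:
    """In-memory stand-in for the Service Registry of one local cloud."""

    def __init__(self, local_cloud: str) -> None:
        self.local_cloud = local_cloud
        self._entries: dict[str, list[ServiceEntry]] = {}

    def register(self, entry: ServiceEntry) -> None:
        """Publish a service; registering the same entry twice is a no-op."""
        providers = self._entries.setdefault(entry.service_definition, [])
        if entry not in providers:
            providers.append(entry)

    def unregister(self, service_definition: str, provider_system: str) -> None:
        self._entries[service_definition] = [
            e for e in self._entries.get(service_definition, [])
            if e.provider_system != provider_system
        ]

    def query(self, service_definition: str) -> list[ServiceEntry]:
        """Discover providers. Only providers inside this local cloud are visible;
        reaching other local clouds would go through a gateway (not modeled)."""
        return list(self._entries.get(service_definition, []))


if __name__ == "__main__":
    registry = ServiceRegistry("kpp-local-cloud")
    registry.register(ServiceEntry("spp-planning", "spp-service", "10.0.0.5", 8443))
    print([e.provider_system for e in registry.query("spp-planning")])  # ['spp-service']
```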

Regarding the remaining challenges of Arrowhead Framework compliance, ensuring that the KPP-System adheres to the framework introduces several design considerations. The KPP-System can leverage AF to support Smart Production Planning for smart products, utilizing SOA principles and LCs to achieve loose coupling, dynamic reconfiguration, and flexible service deployment. By combining semantic planning data with AF-compliant services, KPP can enhance interoperability and scalability in distributed Industry 4.0 environments [8]. First, deployment within a local cloud must be properly addressed (RC4). Second, the integration of the three core Arrowhead components presents a significant challenge (RC5). Tackling these challenges is critical for developing a distributed architecture that is fully aligned with the framework and capable of supporting seamless Industry 4.0 interactions.

2.3. Fundamentals of Adaptive Ontology-Based Development and Meta-Ontologies

The continuous evolution of processes requires that the KPP-System’s functionalities be regularly extended and adapted. Currently, the system is not aware of its own functions, and modifications must be implemented manually within the monolithic architecture while ensuring backward compatibility. Any change in functionality requires a parallel adaptation of both the KPP-Ontology and the KPP-System. In the SOA-based KPP-System, such adaptations should occur dynamically. The objective is to enable automatic generation of distributed KPP-Services through ontology-driven mechanisms. A Meta-KPP-Ontology will serve as the foundation for future adaptive ontology-based development (AOD), supporting flexible, knowledge-driven configuration and extension of KPP functionalities. This approach aims to allow the system to adapt automatically and provide new functions solely by updating the ontology, without manual code changes.
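The idea of providing new functions solely by updating the ontology can be sketched in Python, assuming a drastically simplified meta-ontology represented as a plain dictionary. The structure of `META_ONTOLOGY`, the service names, and the generic service body are all hypothetical; a real Meta-KPP-Ontology would be an RDF/OWL model, and generated services would carry real planning logic rather than echoing their inputs.

```python
from __future__ import annotations

from typing import Any, Callable

# Hypothetical stand-in for a Meta-KPP-Ontology: each entry declares a service,
# its required inputs, and the mediator stage it belongs to.
META_ONTOLOGY: dict[str, dict[str, Any]] = {
    "PlanOrder": {"inputs": ["order_id"], "stage": "SPP"},
    "ControlExecution": {"inputs": ["order_id", "machine"], "stage": "ECPP"},
}


def make_service(name: str, spec: dict[str, Any]) -> Callable[..., dict]:
    """Build a generic service function purely from its ontology description."""
    required = tuple(spec["inputs"])
    stage = spec["stage"]

    def service(**kwargs: Any) -> dict:
        missing = [field for field in required if field not in kwargs]
        if missing:
            raise ValueError(f"{name}: missing inputs {missing}")
        return {"service": name, "stage": stage,
                "inputs": {field: kwargs[field] for field in required}}

    service.__name__ = name
    return service


def generate_services(ontology: dict[str, dict[str, Any]]) -> dict[str, Callable[..., dict]]:
    """Regenerate the full service catalogue from the current ontology state."""
    return {name: make_service(name, spec) for name, spec in ontology.items()}


if __name__ == "__main__":
    # Extending the ontology (not the code) yields a new service on regeneration.
    extended = {**META_ONTOLOGY,
                "RefineOperation": {"inputs": ["order_id", "sensor_feed"], "stage": "OPP"}}
    services = generate_services(extended)
    print(sorted(services))  # ['ControlExecution', 'PlanOrder', 'RefineOperation']
```

The design choice illustrated here is that the code knows only how to interpret service descriptions, so the catalogue of available services is determined entirely by the ontology content.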

Self-adapting systems are used in a wide variety of areas [23]. In software development, the focus is on developing systems that can adapt dynamically to changing conditions or requirements [23]. Ontology-based adaptation has been recognized as a key principle in self-adaptive software systems [15].

Such systems adjust dynamically to new conditions by interpreting semantic structures and modifying program behavior accordingly. Ontologies offer formal, machine-readable models of domain knowledge and are increasingly adopted as a key basis for achieving semantic interoperability in smart manufacturing systems. Recent work emphasizes their growing importance through semantic web technologies and digital twin standards, supporting interoperable and human-centric production environments as envisioned in Industry 4.0 and emerging Industry 5.0 paradigms [7,20,24]. Semantic standards such as the Asset Administration Shell are increasingly combined with ontology-based digital twin concepts to enable interoperable Industry 4.0 production systems [24]. In ontology-driven development, changes to ontology attributes or structures directly trigger updates in corresponding functions and system components [15]. Ontologies can be organized hierarchically into three levels: meta-ontologies (domain-independent conceptual structures), mid-level ontologies (application or user-level abstractions), and domain ontologies (specific domain knowledge) [25]. This layered approach provides a theoretical foundation for realizing adaptive, ontology-driven extensions in the distributed KPP-System, enabling flexible interpretation of domain knowledge and dynamic service integration in future iterations. Recent research explores ontology-based approaches for semantically describing and discovering RESTful services, such as ontology-based APIs (OBAs), in order to improve interoperability and automated service interaction [26]. Similar research proposes semantic models for RESTful services based on OWL and RDF to enable standardized, machine-interpretable service descriptions and automated discovery mechanisms [27]. Such approaches provide an important foundation for semantic interoperability in service-oriented systems. Recent work on ontology-driven adaptive systems further demonstrates how semantic models can be used to dynamically modify system behavior in response to changing conditions [28]. These approaches confirm the feasibility of using ontologies not only for knowledge representation but also as active drivers of system adaptation. In particular, ontology-based reasoning is increasingly applied to influence service composition, configuration, and execution behavior in distributed and service-oriented systems. Building on these concepts, the proposed approach applies ontology-based mechanisms within a service-oriented production planning context, as detailed in the subsequent architectural and implementation sections.
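The three-level ontology hierarchy and the principle that ontology changes trigger updates can be combined in a small Python data structure. This is a toy sketch under stated assumptions: concepts are plain strings, each non-meta concept specializes exactly one concept one level above, and the concept names and the listener mechanism are hypothetical; a real system would use an ontology language such as OWL with a reasoner.

```python
from __future__ import annotations

from typing import Callable, Optional

LEVELS = ("meta", "mid", "domain")


class LayeredOntology:
    """Toy three-level ontology: every non-meta concept specializes a concept
    exactly one level above it, and listeners are notified on every change."""

    def __init__(self) -> None:
        self._concepts: dict[str, tuple[str, Optional[str]]] = {}
        self._listeners: list[Callable[[str, str], None]] = []

    def subscribe(self, listener: Callable[[str, str], None]) -> None:
        self._listeners.append(listener)

    def add_concept(self, name: str, level: str, parent: Optional[str] = None) -> None:
        idx = LEVELS.index(level)  # ValueError for an unknown level
        if idx == 0:
            if parent is not None:
                raise ValueError(f"{name}: meta concepts have no parent")
        else:
            expected = LEVELS[idx - 1]
            if parent not in self._concepts or self._concepts[parent][0] != expected:
                raise ValueError(f"{name}: parent must be a {expected} concept")
        self._concepts[name] = (level, parent)
        for listener in self._listeners:
            listener(name, level)  # e.g. regenerate the services affected by the change

    def lineage(self, name: str) -> list[str]:
        """Return the chain from a concept up to its meta-level root."""
        chain: list[str] = []
        current: Optional[str] = name
        while current is not None:
            chain.append(current)
            current = self._concepts[current][1]
        return chain


if __name__ == "__main__":
    onto = LayeredOntology()
    events: list[tuple[str, str]] = []
    onto.subscribe(lambda name, level: events.append((level, name)))
    onto.add_concept("Resource", "meta")
    onto.add_concept("ProductionResource", "mid", parent="Resource")
    onto.add_concept("MillingMachine", "domain", parent="ProductionResource")
    print(onto.lineage("MillingMachine"))  # ['MillingMachine', 'ProductionResource', 'Resource']
    print(len(events))  # 3
```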

Regarding the remaining challenges of the adaptive ontology-based implementation, incorporating ontology-driven adaptation mechanisms enables the KPP-System to evolve dynamically and integrate new functionalities automatically. However, some challenges remain: first, designing a flexible and extensible ontology structure (RC6); second, ensuring that structural and functional extensions occur exclusively through controlled ontology evolution (RC7); and third, implementing adaptive services that reliably respond to changing requirements (RC8). Addressing these challenges lays the groundwork for a system capable of adapting to evolving processes without manual intervention.

2.4. Initial Summary of Discussion and Remaining Challenges

The previous sections have outlined the theoretical foundations of distributed and service-oriented architectures, the Arrowhead Framework, and adaptive ontology-based development. Building on these insights, several key challenges for the KPP-System can be identified, which serve as a basis for the subsequent conceptual modeling and implementation. Transforming the monolithic system into a distributed, service-oriented architecture requires ensuring modularity, flexibility, and scalability, while clearly defining service boundaries, keeping components reusable and maintainable, and integrating them efficiently with existing system layers (RC1–RC3). Compliance with the Arrowhead Framework introduces further requirements, including deployment within a local cloud and integration of the core Arrowhead components to enable seamless interoperability with external systems (RC4–RC5). In addition, supporting dynamic, ontology-driven adaptation mechanisms is essential to allow new functionalities to emerge automatically and evolve without manual intervention (RC6–RC8). These challenges not only summarize the current limitations of the KPP-System but also highlight the areas where the research questions guide the investigation and inform the design choices. They provide a structured framework for evaluating architectural alternatives and aligning the system’s development with Industry 4.0 requirements.

The challenges outlined above correspond to the analytical findings of the observation goals and provide the foundation for the subsequent theory building. In the modeling phase, RC1–RC3 are addressed within the design of the distributed, service-oriented architecture, RC4–RC5 are handled in the Arrowhead-compliant integration layer, and RC6–RC8 are addressed through the development of the adaptive, ontology-driven mechanisms. The next chapter turns to the research goals of the theory-building phase and presents the results and insights that inform the conceptual architecture and implementation of the KPP-System.

3. Conceptual Modeling of Service-Oriented Knowledge-Based Process Planning (SO-KPP)

The overall research plan models the system architecture using the User-Centered System Design (UCSD) methodology [29] to ensure system usability and alignment with stakeholder requirements. Within this paper, UCSD is applied conceptually to the system architecture: the user context and domain requirements inform the decomposition of services and their orchestration, rather than detailed UI design. As a result, detailed modeling of individual use cases and user interactions is not part of the scope here but will be addressed in later stages of the overall work.

The conceptual architecture presented in this chapter is the outcome of a structured UCSD-based process. First, the use context of the KPP-System is defined to capture stakeholder groups, operational environments, and overarching domain requirements. Based on this, personas representing key user roles are developed, from which concrete use cases are formulated that identify the functional requirements and interactions expected from the system. These use cases are further operationalized through a set of modeling artifacts: use context diagrams, use-case diagrams, information models, component models, UI sketches, and activity or sequence diagrams. Although the detailed modeling and full elaboration of these artifacts are part of the broader dissertation work, this paper presents the resulting architectural design derived from them. The included sequence diagrams illustrate the interactions between core components and highlight how the service-oriented design supports the required functionality. In this way, the conceptual architecture in this chapter is explicitly grounded in the UCSD process, ensuring that service decomposition, component responsibilities, and interaction flows are directly aligned with real user needs and system requirements.

3.1. Service-Oriented Knowledge-Based Process Planning (SO-KPP)

The aim of the overall thesis is to transform the existing monolithic KPP-System into a distributed, service-oriented architecture. This transformation enables compliance with the Arrowhead Framework, RAMI 4.0, and eCl@ss, ensuring interoperability with Industry 4.0 ecosystems. To clearly distinguish the legacy system from the transformed version, the term Service-Oriented Knowledge-Based Process Planning (SO-KPP) is introduced. The conceptual modeling of the SO-KPP-System directly addresses the previously identified research challenges: distributability (RC1–RC3), Arrowhead-compliant integration (RC4–RC5), and adaptive ontology-based extensibility (RC6–RC8). Its objective is to reshape the legacy KPP-System into a distributed, interoperable, and extensible architecture aligned with current industrial requirements. This transformation is required to overcome the scalability and integration limitations of the existing mediator-based architecture, which currently restricts distribution and dynamic extensibility. Unlike the monolithic KPP-System, the SO-KPP architecture enables modular service deployment, ontology-driven adaptation, and compliance with Industry 4.0 standards, establishing a foundation for autonomous and interoperable process planning in future digital ecosystems. The next step is the conceptual modeling of the SO-KPP architecture, which, with respect to the theory-building goals, focuses on the distributed decomposition of KPP functionality (addressing RC1–RC3), the integration of Arrowhead-compliant service orchestration (addressing RC4–RC5), and the design of an adaptive meta-service ontology to support dynamic evolution (addressing RC6–RC8).

Figure 1 presents the conceptual architecture of the proposed SO-KPP-System. The architecture illustrates the target system design and implicitly addresses the limitations of the former monolithic KPP-System, such as limited deployability, interoperability, and adaptability. The model is organized into four layers representing the logical distribution of functions and services. The overall architecture is based on the UCSD analysis conducted in the broader dissertation work, from which the system functions and their allocation to architectural components were derived. The blue-highlighted components are addressed through implementation and subsequent evaluation in the implementation and evaluation chapter of this paper. They represent the initial implementation step toward a service-oriented KPP-System and form the basis for the mediator-based service interaction described in the following sections. The detailed development of these highlighted components, including their underlying models and design rationale, was carried out in [30]. The following subsections outline the four architectural layers and explain the role of the highlighted components in enabling the foundational service-oriented transformation of the KPP-System.

3.2. Transformation from Monolithic to Service-Oriented Architecture

The existing KPP-System is a monolithic software architecture (MSA) built around a mediator that integrates distributed knowledge resources through wrappers. While this design provides centralized coordination and semantic mediation, it lacks distributability: every modification requires redeployment of the full system. To overcome this limitation, SO-KPP decomposes the MSA into modular, autonomous services communicating via RESTful interfaces. Each KPP-function is encapsulated as an independent KPP-Service, exposing its functionality through a REST API. This transformation enables loose coupling, isolated deployment, and scalability (addressing RC1–RC3). The mediator remains the central semantic hub but is now split into three dedicated mediator services corresponding to its former layers: Supervisory Planning Process (SPP), Execution Control Planning Process (ECPP), and Operational Planning Process (OPP). This modularization supports reusability, maintainability, and efficient knowledge exchange across distributed domains. The right-hand side of the architecture shows an example of the future ontologies that SO-KPP should consider. The separate ontologies are provided in a distributable form. Initially, the existing KPP-Ontology is to be adopted and supplemented by standards such as the eCl@ss-Ontology. The SO-KPP-Ontology represents the meta-ontology discussed in Section 2.3. In the future, it will contain the definitions of the services for adaptive implementation (addressing RC6–RC8).
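Encapsulating a single KPP-function as an independently deployable service with a REST interface can be sketched with Python's standard-library HTTP server. This is a minimal sketch, not the SO-KPP implementation: `query_library_function`, the `/queries/<name>` route, and the JSON response shape are hypothetical, and a production service would use a proper web framework, TLS, and registration with the manager layer.

```python
import json
import threading
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer
from urllib.request import urlopen


def query_library_function(name: str) -> dict:
    """Hypothetical stand-in for a legacy KPP-function (e.g. a query-library lookup)."""
    return {"query": name, "status": "ok"}


class KPPServiceHandler(BaseHTTPRequestHandler):
    """Exposes the wrapped function under GET /queries/<name> as JSON."""

    def do_GET(self) -> None:
        prefix = "/queries/"
        if not self.path.startswith(prefix):
            self.send_error(404)
            return
        body = json.dumps(query_library_function(self.path[len(prefix):])).encode()
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args) -> None:
        pass  # keep the sketch quiet


def start_service(port: int = 0) -> ThreadingHTTPServer:
    """Start the service in a background thread; port 0 lets the OS pick a free port."""
    server = ThreadingHTTPServer(("127.0.0.1", port), KPPServiceHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


if __name__ == "__main__":
    srv = start_service()
    host, port = srv.server_address[:2]
    with urlopen(f"http://{host}:{port}/queries/orders") as resp:
        print(json.loads(resp.read()))  # {'query': 'orders', 'status': 'ok'}
    srv.shutdown()
    srv.server_close()
```

Because the function is reachable only through its HTTP interface, it can be redeployed or scaled without touching other services, which is the loose coupling the transformation aims for.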

In terms of the mediation process, a sequence diagram (Figure 2) illustrates the transformation of the mediator into three dedicated services. It shows the flow of a planning request through the three mediator layers. The diagram is based on the UCSD model and demonstrates how a user-initiated planning request is processed step by step [30]. The SPP-Service retrieves orders and master data, enriches them semantically via the ontology database, and creates an MFB. The ECPP-Service extends the planning with execution control, integrates prior MFBs, and creates an EFB. The OPP-Service completes the operational planning, refines the plan with sensor and production data, and creates a final OFB. This staged approach highlights the modular and distributable nature of the Mediator-Services while maintaining semantic consistency across the planning process. Each legacy function is encapsulated as an independent service with a clearly defined REST interface [31]. In addition to the mediator, the KPP-System provides a process editor and a query library that were previously embedded in the monolith. As part of the distribution, these are also to be provided as individual services, and they represent the core services.
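The staged flow from MFB to EFB to OFB can be sketched in plain Java as three functions. Each function consumes the previous block and sets the status marker used later in the evaluation (SPP_DONE, ECPP_DONE, OPP_DONE). This is a minimal sketch under simplifying assumptions. The record FunctionBlock, its fields, and the string "facts" are hypothetical stand-ins for the RDF-based blocks. In SO-KPP, each stage runs as a separate REST service rather than as a local method call.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical, simplified function block; in SO-KPP the semantic content is an RDF model.
record FunctionBlock(String kind, long orderId, String status, List<String> facts) {}

final class StagedMediation {
    // SPP stage: creates the Meta Function Block from order input (simplified).
    static FunctionBlock spp(long orderId, List<String> inputFacts) {
        return new FunctionBlock("MFB", orderId, "SPP_DONE", List.copyOf(inputFacts));
    }

    // ECPP stage: derives an Execution Function Block from a completed MFB.
    static FunctionBlock ecpp(FunctionBlock mfb, List<String> executionFacts) {
        if (!"SPP_DONE".equals(mfb.status())) {
            throw new IllegalStateException("ECPP expects a completed MFB");
        }
        List<String> facts = new ArrayList<>(mfb.facts());
        facts.addAll(executionFacts);
        return new FunctionBlock("EFB", mfb.orderId(), "ECPP_DONE", facts);
    }

    // OPP stage: derives the Operational Function Block from a completed EFB.
    static FunctionBlock opp(FunctionBlock efb, List<String> operationalFacts) {
        if (!"ECPP_DONE".equals(efb.status())) {
            throw new IllegalStateException("OPP expects a completed EFB");
        }
        List<String> facts = new ArrayList<>(efb.facts());
        facts.addAll(operationalFacts);
        return new FunctionBlock("OFB", efb.orderId(), "OPP_DONE", facts);
    }
}
```

Each stage rejects input that has not completed the preceding stage. This reflects the sequential dependency between the three mediator layers.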

3.3. Integration of the Arrowhead Framework

Concerning the integration of the Arrowhead Framework, the SO-KPP manager layer incorporates the Arrowhead Core Systems to ensure local cloud (LC) compliance and to orchestrate service execution. The Service Registry provides dynamic service registration and discovery. The Orchestration System coordinates distributed service execution according to process requirements. The Authorization System governs access rights and secures communication between services (addressing RC4–RC5). These components are crucial for integrating the other SO-KPP-Services, which must register with the manager layer and rely on it for authorization and orchestration. Together, they enable the registration, discovery, orchestration, and secure operation of distributed services within a local cloud. This allows the SO-KPP-System to be integrated into an LC instance while supporting modular, secure, and compliant service execution.
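A small in-memory model can illustrate the register, authorize, and orchestrate cycle described above. It is explicitly not the Arrowhead Core Systems API. The classes LocalCloudRegistry and ServiceEntry, the service definition names, and the access-rule representation are all hypothetical. The model only shows the interplay: providers register, consumers are granted access, and orchestration returns only providers the consumer is authorized to use.

```java
import java.util.*;

// Hypothetical registry entry; NOT the Arrowhead Service Registry data model.
record ServiceEntry(String serviceDefinition, String providerSystem, String address, int port) {}

// In-memory stand-in combining registry, access rules, and orchestration (illustrative only).
final class LocalCloudRegistry {
    private final Map<String, List<ServiceEntry>> services = new HashMap<>();
    // consumer system -> service definitions it may use
    private final Map<String, Set<String>> accessRules = new HashMap<>();

    void register(ServiceEntry entry) {
        services.computeIfAbsent(entry.serviceDefinition(), k -> new ArrayList<>()).add(entry);
    }

    void unregister(String serviceDefinition, String providerSystem) {
        List<ServiceEntry> entries = services.get(serviceDefinition);
        if (entries != null) {
            entries.removeIf(e -> e.providerSystem().equals(providerSystem));
        }
    }

    void grant(String consumerSystem, String serviceDefinition) {
        accessRules.computeIfAbsent(consumerSystem, k -> new HashSet<>()).add(serviceDefinition);
    }

    // Orchestration: return matching providers only if the consumer is authorized.
    List<ServiceEntry> orchestrate(String consumerSystem, String serviceDefinition) {
        boolean allowed = accessRules.getOrDefault(consumerSystem, Set.of()).contains(serviceDefinition);
        if (!allowed) {
            return List.of();
        }
        return List.copyOf(services.getOrDefault(serviceDefinition, List.of()));
    }
}
```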

3.4. Ontology-Driven Adaptivity (AOD)

Regarding the management and adaptive functionality of the SO-KPP-System, the manager layer incorporates a Service Manager (SM) in addition to the Arrowhead Core Systems. The SM manages the services, stores information about their structure, and integrates modifications from the ontology into the services. It has access to the ontologies, primarily the Meta-Ontology used for AOD. The Ontology Manager (OM) performs and manages changes to the ontology and notifies the SM of any updates. The OM is also intended to become the single access point to the ontologies, so that in the future only the OM will allow the ontology to be queried. Access to the ontology can thus be managed at a central location, competing accesses can be avoided, and RC6 can be addressed at this single point. The manager functions therefore play a pivotal role in dynamic service generation for adaptive implementation.

The transition toward adaptive, self-configuring process planning is realized through the SO-KPP Meta-Ontology, which describes both service structures and their semantic relations. It provides the foundation for dynamic service generation. Changes to the ontology (e.g., new process attributes or resource classes) are propagated to the SM, which instantiates corresponding service endpoints. This mechanism is intended to enable continuous system evolution without manual code modification. The Meta-Ontology interacts with the domain-specific KPP-Ontology and integrates standardized taxonomies such as eCl@ss and RAMI 4.0. This ensures semantic consistency and prepares the system for future cross-domain adaptability. The approach directly addresses the remaining challenges of ontology-based dynamism and of adaptation exclusively through ontology changes. Once the adaptive implementation mechanism has been realized, further manual code alterations should no longer be necessary.
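The notification path from the OM to the SM can be sketched with the observer pattern. All names here are hypothetical: OntologyManagerSketch, ServiceManagerSketch, OntologyChange, and the endpoint path convention /services/.... The sketch only shows that ontology writes go through a single manager, which notifies subscribers. The subscribers then derive new service endpoints.

```java
import java.util.*;

// Hypothetical change event emitted by the Ontology Manager (OM).
record OntologyChange(String kind, String conceptName) {}

// Listener role taken by the Service Manager (SM) in this sketch.
interface OntologyChangeListener {
    void onChange(OntologyChange change);
}

final class OntologyManagerSketch {
    private final Set<String> concepts = new TreeSet<>();
    private final List<OntologyChangeListener> listeners = new ArrayList<>();

    void subscribe(OntologyChangeListener listener) { listeners.add(listener); }

    // Single write path: store the concept, then notify listeners only if it is new.
    void addConcept(String conceptName) {
        if (concepts.add(conceptName)) {
            OntologyChange change = new OntologyChange("ADDED", conceptName);
            listeners.forEach(l -> l.onChange(change));
        }
    }

    // Read access is also routed through the OM.
    boolean contains(String conceptName) { return concepts.contains(conceptName); }
}

final class ServiceManagerSketch implements OntologyChangeListener {
    // concept name -> hypothetical endpoint path
    private final Map<String, String> endpoints = new TreeMap<>();

    @Override
    public void onChange(OntologyChange change) {
        if ("ADDED".equals(change.kind())) {
            String path = "/services/" + change.conceptName().toLowerCase(Locale.ROOT);
            endpoints.put(change.conceptName(), path);
        }
    }

    Map<String, String> endpoints() { return Collections.unmodifiableMap(endpoints); }
}
```

A real SM would also register the new endpoint with the Service Registry and provide the corresponding service logic. Both steps are outside this sketch.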

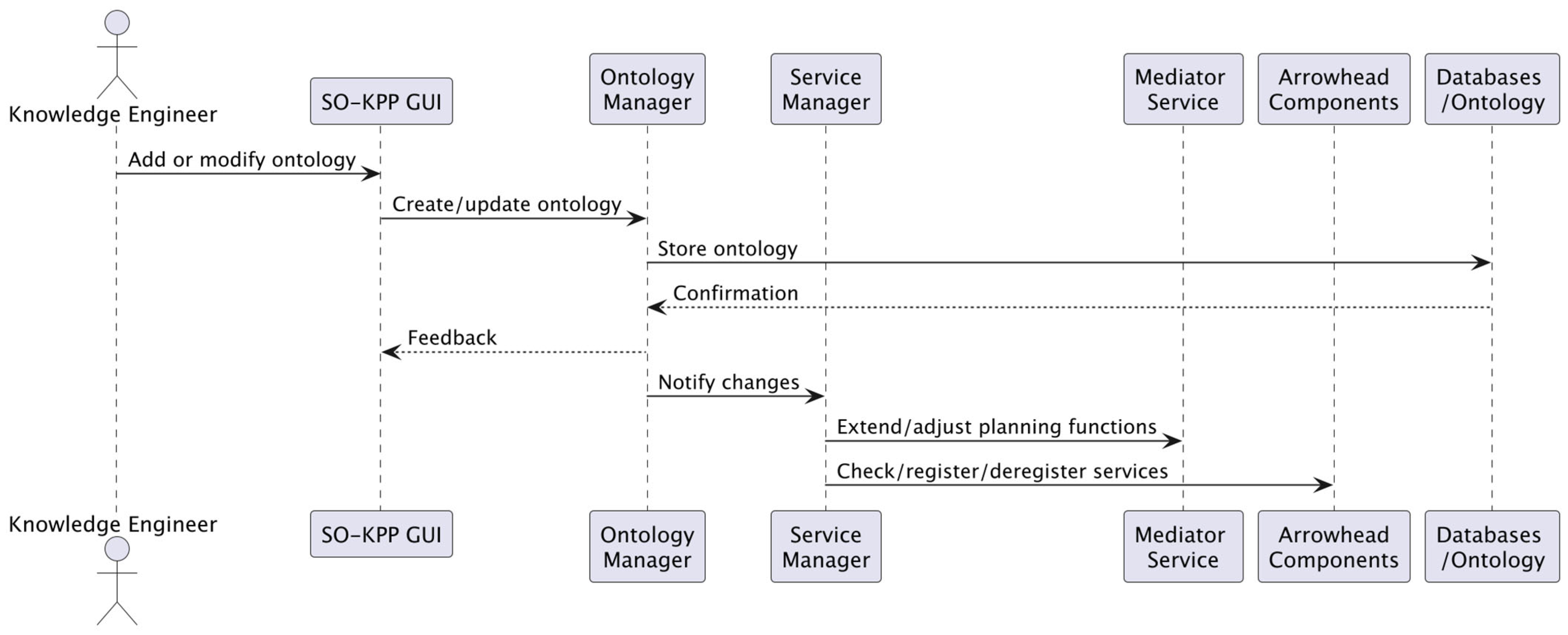

With respect to the adaptive, ontology-driven implementation in SO-KPP, Figure 3, adapted from the UCSD methodology, illustrates the sequence of operations and highlights the dynamic, adaptive mechanism. The Knowledge Engineer (KE) interacts with the GUI/API to create or modify ontologies via the Ontology-Service, which handles their storage and standardization in the database. Changes are then communicated to the Service Manager (SM). The SM updates the functional services with extended or modified functions and interacts with the Arrowhead components to register, deregister, or validate the services. The newly available planning functions can then be used. This sequence highlights the central role of the SM and OM in dynamic, ontology-based adaptation, enabling flexible and automated extension of system capabilities without manual code changes.

3.5. Example Scenario: Planning a Production Cell

Regarding the demonstration of SO-KPP functionality, a simplified use case illustrates the planning of a production cell.

Figure 4 presents a sequence diagram depicting the flow of events in this scenario. The process begins with a planner initiating a request via the web interface to generate a process plan for a specific product type. This request is forwarded to the Orchestration System (OS), which queries the Service Registry (SR) to identify the relevant KPP-Services, such as material selection, resource allocation, and tool matching. The OS then composes a workflow from the available services while ensuring access control through the Authorization System (AS). Each service retrieves semantic information from the distributed ontologies via the Ontology Manager (OM). If the product or process type is not yet represented, the Meta-Ontology dynamically extends the ontology and triggers the Service Manager (SM) to instantiate a new specialized service. Finally, the resulting process plan is aggregated and returned to the user interface as a structured, standardized description compliant with AAS/eCl@ss standards.
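The orchestration step of this scenario can be sketched as a loop over the required capabilities. Several details are assumptions for illustration: the capability names, the per-capability authorization set, and the "-service" naming of newly instantiated services. In the described architecture, these responsibilities lie with the OS, SR, AS, and SM, which the single class PlanningWorkflowSketch merely stands in for.

```java
import java.util.*;

// Hypothetical sketch: resolve each capability via a registry lookup, check
// authorization, and instantiate a service when a capability is missing.
final class PlanningWorkflowSketch {
    private final Map<String, String> registry;        // capability -> service name (SR role)
    private final Set<String> authorizedCapabilities;  // what the planner may use (AS role)

    PlanningWorkflowSketch(Map<String, String> registry, Set<String> authorizedCapabilities) {
        this.registry = new HashMap<>(registry);
        this.authorizedCapabilities = Set.copyOf(authorizedCapabilities);
    }

    // Stand-in for the SM instantiating a specialized service and registering it.
    private String instantiateService(String capability) {
        String serviceName = capability + "-service";
        registry.put(capability, serviceName);
        return serviceName;
    }

    // OS role: compose an ordered workflow of service names.
    List<String> compose(List<String> requiredCapabilities) {
        List<String> workflow = new ArrayList<>();
        for (String capability : requiredCapabilities) {
            if (!authorizedCapabilities.contains(capability)) {
                throw new SecurityException("Not authorized: " + capability);
            }
            String service = registry.get(capability);
            if (service == null) {
                service = instantiateService(capability);
            }
            workflow.add(service);
        }
        return workflow;
    }
}
```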

This scenario in Figure 4 illustrates how distributed, semantically interoperable, and self-extensible process planning is intended to work. It exemplifies how service orchestration combined with ontology-driven adaptivity can enable dynamic planning capabilities within Industry 4.0 ecosystems, supporting automated extension and adaptation of the system without manual code intervention.

3.6. Summary of Conceptual Modeling of SO-KPP

Concerning the conceptual modeling of SO-KPP, the existing KPP-Functions have been distributed to independent services as part of the modeling effort, fulfilling the research objective of distributivity and addressing the remaining challenges of clearly defined functionality, maintainability, and reusability (RC1–RC3). The services are designed with RESTful interfaces to ensure reliable interoperability, while the challenges of flexibility and scalability remain open and are expected to be resolved during implementation and technology selection.

The Mediator Architecture serves as the central and primary transformation target, laying the foundation for the SO-KPP-System. Its correct operation is essential, as it constitutes the hub for data integration and semantic processing, enabling downstream services, ontology-driven adaptations, and Arrowhead-based components to function effectively. The three-layer Mediator-Services (SPP, ECPP, and OPP) have been modularized from the original monolithic mediator, forming the core of the distributed SO-KPP architecture and addressing RC1–RC3.

Regarding Arrowhead integration, the Authorization, Orchestration, and Service Registry systems were considered during modeling, addressing RC4–RC5. For adaptive, ontology-driven functionality, the planned Service- and Ontology-Managers provide centralized ontology access and form the foundation for dynamic service generation, addressing RC6–RC8. This is intended to ensure that changes to the ontology propagate automatically to the services, enabling dynamic extension without manual code modification.

It should be emphasized that the conceptual model presented in this paper represents a blueprint for future implementation rather than a fully realized deployment. The focus is intentionally placed on the mediator-based architecture, as it forms the foundation for the complete SO-KPP-System. By implementing and evaluating these core Mediator-Services, this study provides an initial, verifiable step toward the SO-KPP vision.

In terms of the transition from theory to implementation, the results of the conceptual modeling now inform the implementation objectives, with a clear focus on the mediator architecture. The next step is the practical implementation of the mediator layer, which serves as the basis for subsequent extensions, including dynamic service generation, adaptive ontology-based functionality, and full Arrowhead integration.

4. Implementation of Distributed Mediator-Based Core Service

With respect to the practical implementation of the SO-KPP-System, the subsequent phases build upon the conceptual design by focusing on the mediator-based core, which forms the foundation for all other services and extensions. The implementation described in this chapter builds upon the results presented in a previous Bachelor’s thesis [30], which introduced the foundational concepts of a distributed mediator architecture for service-oriented, knowledge-based process support in Industry 4.0. Without a functional mediator, the distribution of KPP-functions, ontology-driven adaptations, and Arrowhead integration cannot operate effectively. The first practical step is to transform the existing three-stage mediator architecture (SPP, ECPP, and OPP) and the process editor into independent, containerized services. Each fundamental KPP-function is analyzed, separated, and encapsulated as a service with a REST interface, enabling modular deployment, scalability, and reusability.

Regarding service flexibility and scalability, the technologies used for implementation and operation should be suited to service-oriented development. The prototype was therefore implemented in Java using the Quarkus Framework [31] to benefit from its libraries for service-oriented development [30]. The implemented architecture strictly follows the microservice principle: the three mediators, SPP, ECPP, and OPP, have been realized as independent services, each running as an isolated application with its own data and logic. Each service encapsulates one layer of the planning logic. The services communicate with each other exclusively via REST interfaces using structured JSON payloads and consistent HTTP status codes. This design replaces the previous monolithic prototype and resolves earlier issues regarding modularity and coupling. The common deployment of all three services enables a holistic evaluation of performance, fault tolerance, and scalability.
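REST/JSON communication between two mediator services can be illustrated with the JDK's java.net.http client. The base URI, the path /ecpp/run, and the JSON field mfbId are hypothetical and are not the services' actual interface. The sketch only shows a JSON POST from one mediator to another, with non-2xx status codes mapped to an error.

```java
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.time.Duration;

// Sketch of a mediator-to-mediator REST call; endpoint and payload are assumptions.
final class MediatorRestClient {
    private final HttpClient client = HttpClient.newBuilder()
            .connectTimeout(Duration.ofSeconds(5))
            .build();
    private final URI baseUri;

    MediatorRestClient(URI baseUri) { this.baseUri = baseUri; }

    // Builds the JSON POST request; separate so it can be inspected without a network call.
    HttpRequest buildForwardRequest(long mfbId) {
        String json = "{\"mfbId\": " + mfbId + "}";
        return HttpRequest.newBuilder(baseUri.resolve("/ecpp/run"))
                .timeout(Duration.ofSeconds(30))
                .header("Content-Type", "application/json")
                .header("Accept", "application/json")
                .POST(HttpRequest.BodyPublishers.ofString(json))
                .build();
    }

    // Sends the request and treats any non-2xx status code as a failure.
    String forward(long mfbId) throws Exception {
        HttpResponse<String> response =
                client.send(buildForwardRequest(mfbId), HttpResponse.BodyHandlers.ofString());
        if (response.statusCode() / 100 != 2) {
            throw new IllegalStateException("ECPP call failed with HTTP " + response.statusCode());
        }
        return response.body();
    }
}
```

In a Quarkus deployment, a typed REST client would usually replace the hand-built request. The JDK client is used here only to keep the sketch self-contained.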

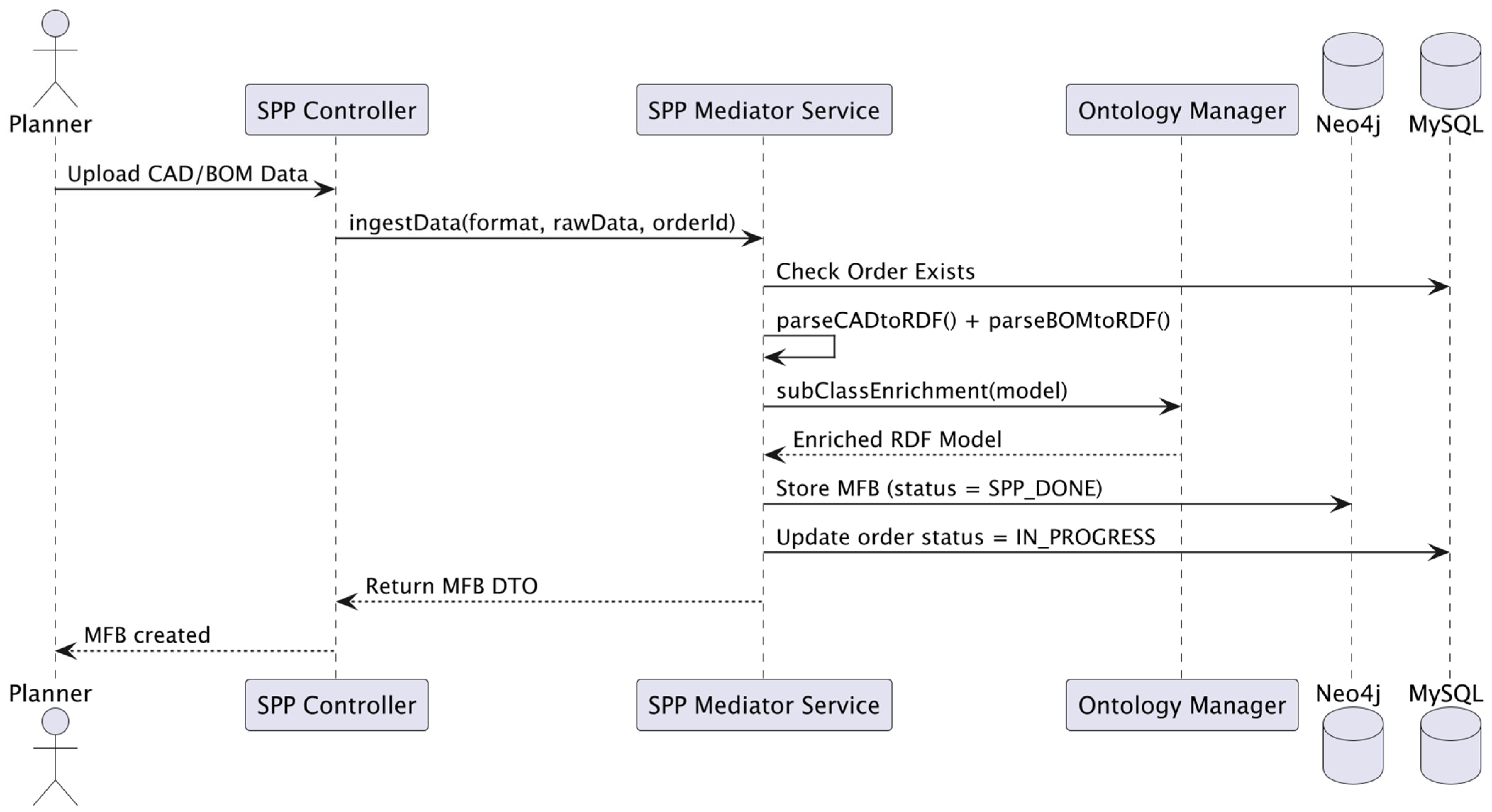

4.1. Implementation of SPP-Mediator-Service

Each mediator implements a defined transformation within the KPP chain, translating function blocks and enriching them with semantic information. The process starts with the SPP-Mediator. It aggregates all relevant input data, including CAD files, product features, Bills of Materials (BOM), and planning specifications. From these inputs, it generates the Meta Function Block (MFB), a semantically enriched representation of the manufacturing order.

The process is initiated through the REST endpoint /spp/ingestSingleData, which accepts raw input data in various formats. The SPP validates the request, fetches the related order metadata, and invokes the core logic in the ingestData() method.

Figure 5 shows the complete data flow within the SPP-Mediator, from input validation to RDF enrichment and persistence. The user sends a CAD file to the controller class, which accepts the request and forwards it to the Mediator-Service. The ingestData() method contains the enrichment logic, which it executes in collaboration with the Ontology-Manager and which enables the SPP-Service to generate MFBs.
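The validation in front of ingestData() might look like the following sketch. Several elements are assumptions: the accepted formats (STEP, IGES, STL), the status codes returned, and the OrderLookup interface. The text only states that the request is validated and that the order is checked before MFB creation.

```java
import java.util.Locale;
import java.util.Set;

// Hypothetical request validation mapping outcomes to HTTP status codes.
final class IngestRequestValidator {
    // Assumed set of accepted CAD formats (STEP appears in the evaluation; the others are illustrative).
    private static final Set<String> SUPPORTED_FORMATS = Set.of("STEP", "IGES", "STL");

    record Result(int httpStatus, String message) {}

    // Abstraction over the order check performed against the relational database.
    interface OrderLookup { boolean orderExists(long orderId); }

    static Result validate(String format, String rawData, Long orderId, OrderLookup orders) {
        if (format == null || !SUPPORTED_FORMATS.contains(format.toUpperCase(Locale.ROOT))) {
            return new Result(400, "Unsupported or missing format");
        }
        if (rawData == null || rawData.isBlank()) {
            return new Result(400, "Empty payload");
        }
        if (orderId == null || !orders.orderExists(orderId)) {
            return new Result(404, "Order not found");
        }
        return new Result(200, "Valid; proceed with MFB creation");
    }
}
```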

Listing 1 illustrates the stepwise semantic enrichment pipeline implemented in the method ingestData(). The process integrates syntactic parsing and semantic reasoning. The service first validates incoming requests and persists an initial MFB instance in Neo4j. Next, CAD and BOM data are parsed into RDF models and merged into a unified semantic model. This model is then semantically enriched using ontology reasoning (subClassEnrichment) to classify entities, infer relationships, and embed domain semantics. Finally, the enriched MFB is marked with the status SPP_DONE, and the corresponding production order is set to IN_PROGRESS. The resulting semantic model forms the completed MFB. It is persisted and ready for subsequent planning stages and adaptive service integration in the SO-KPP architecture (addressing RC1–RC3).

| Listing 1. Central SPP mediator method implementing MFB creation, semantic enrichment, and persistence. |

@Transactional
public MFBEntity ingestData(String format, String rawData, Long orderId, String name) {
    // Abort early if the referenced production order is unknown
    if (!mysqlRepository.orderExists(orderId)) {
        Log.warnf("Order %d does not exist, aborting", orderId);
        return null;
    }
    Log.infof("SPPMediatorService: ingestData => format=%s, orderId=%d", format, orderId);

    // (1) Create and persist the initial MFB
    MFBEntity mfbEntity = createAndPersistInitialMFB(format, rawData, orderId, "Created via ingestData", name);

    Model baseModel = parseCADtoRDF(format, rawData);      // (2) CAD → RDF
    Model initialModel = buildInitialMfbModel(mfbEntity);  // (2) Core MFB structure
    Model bomModel = parseBOMtoRDF(orderId);               // (3) BOM → RDF
    // Merge only models that were actually produced (avoids a NullPointerException)
    if (baseModel != null && bomModel != null) baseModel.add(bomModel);
    if (baseModel != null && initialModel != null) baseModel.add(initialModel);

    // (4) Ontology enrichment
    Model enriched = ontologyManager.subClassEnrichment(baseModel);
    mfbEntity.setSemanticConstraints(serializeModel(enriched));

    // (5) Finalize and persist the enriched MFB
    mfbEntity.setStatus("SPP_DONE");
    neo4jRepository.update(mfbEntity);
    setProductionOrderStatus(orderId, "IN_PROGRESS");
    return mfbEntity;
}
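The enrichment step can be approximated by the RDFS subclass rule: if an entity has type C and C is (transitively) a subclass of D, the entity also has type D. The sketch below applies this rule to plain string triples. It is an assumed approximation of what subClassEnrichment may do, since the method's internals are not shown. A production implementation would typically rely on an RDF library's reasoner instead. The class names Triple and SubClassEnricher are hypothetical.

```java
import java.util.*;

// Plain string triple (hypothetical stand-in for an RDF statement).
record Triple(String s, String p, String o) {}

// Applies the RDFS subclass rule: (x rdf:type C) + C subClassOf* D => (x rdf:type D).
final class SubClassEnricher {
    static final String TYPE = "rdf:type";
    static final String SUBCLASS = "rdfs:subClassOf";

    static Set<Triple> enrich(Set<Triple> input) {
        // Direct superclass map built from rdfs:subClassOf triples.
        Map<String, Set<String>> supers = new HashMap<>();
        for (Triple t : input) {
            if (SUBCLASS.equals(t.p())) {
                supers.computeIfAbsent(t.s(), k -> new HashSet<>()).add(t.o());
            }
        }
        Set<Triple> result = new LinkedHashSet<>(input);
        for (Triple t : input) {
            if (!TYPE.equals(t.p())) continue;
            // Breadth-first walk up the class hierarchy; 'seen' guards against cycles.
            Deque<String> queue = new ArrayDeque<>(List.of(t.o()));
            Set<String> seen = new HashSet<>();
            while (!queue.isEmpty()) {
                String cls = queue.poll();
                if (!seen.add(cls)) continue;
                result.add(new Triple(t.s(), TYPE, cls));
                queue.addAll(supers.getOrDefault(cls, Set.of()));
            }
        }
        return result;
    }
}
```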

4.2. Implementation of ECPP-Mediator-Service

The ECPP-Mediator takes the MFB and transforms it into an executable Execution Function Block. It also performs semantic reasoning and rule-based transformations that integrate the MFB into a contextualized planning model. This service represents the intermediate reasoning layer, bridging high-level manufacturing plans with execution parameters.

Figure 6 highlights the sequence of semantic enrichment in the ECPP-Mediator, showing the integration of ontology reasoning and rule-based adaptation before the final persistence step. It illustrates how the ECPP-Mediator extends an existing Meta Function Block by integrating machine-specific data and applying rule-based semantic enrichment to derive an Execution Function Block. The ECPP-Mediator first generates an initial EFB based on the previously created MFB. The existing wrappers are then used to compile the heterogeneous data, and the Ontology-Manager is used to further enrich it. Finally, the EFB is returned.

Listing 2 demonstrates how the ECPP-Mediator merges multiple RDF models (from production and machine data) and applies semantic enrichment followed by rule-based reasoning.

| Listing 2. Core ECPP-Method for Execution Function Block (EFB) creation. |

public EFBEntity runECPPMediator(MFBDto mfb, OFBDto ofb, boolean needToReturnMFB) {
    if (mfb == null) throw new IllegalArgumentException("MFB cannot be null");
    // Optionally feed results of a previous OFB back into the MFB
    if (ofb != null) updateMFBwithOFB(mfb, ofb);

    // Create and persist the basic EFB derived from the MFB
    EFBEntity efbEntity = (EFBEntity) neo4jRepository.create(createBasicEFB(mfb));

    // Merge the initial EFB model with production and machine data (via wrappers)
    Model efbModel = buildInitialEfbModel(efbEntity, mfb);
    Model prodModel = productionDataWrapper.fetchAsRdfModel(mfb.getOrderId());
    if (prodModel != null) efbModel.add(prodModel);
    Model usedMachines = machineDataWrapper.mergeMachineDataIntoEFBModel(efbModel);
    if (usedMachines != null) efbModel.add(usedMachines);

    // Ontology-based enrichment followed by rule-based reasoning
    Model enriched = ontologyManager.subClassEnrichment(efbModel);
    miniRuleEngine.applyRulesMFB(enriched);

    // Finalize and persist the EFB
    efbEntity.semanticConstraints = serializeModel(enriched);
    efbEntity.status = "ECPP_DONE";
    neo4jRepository.update(efbEntity);
    return efbEntity;
}
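Rule-based reasoning of the kind performed by miniRuleEngine can be sketched as forward chaining, in which rules are applied repeatedly until no new facts are derived. The engine below is a hypothetical minimal version, not the paper's implementation. Two parts are assumptions: the Fact record and the representation of a rule as a function over the current fact set.

```java
import java.util.*;
import java.util.function.Function;

// Hypothetical fact representation (subject, predicate, object).
record Fact(String subject, String predicate, String object) {}

// Minimal forward-chaining engine: apply all rules until a fixpoint is reached.
final class MiniForwardChainer {
    private final List<Function<Set<Fact>, Set<Fact>>> rules = new ArrayList<>();

    void addRule(Function<Set<Fact>, Set<Fact>> rule) { rules.add(rule); }

    Set<Fact> run(Set<Fact> initial) {
        Set<Fact> facts = new LinkedHashSet<>(initial);
        boolean changed = true;
        while (changed) {
            changed = false;
            for (Function<Set<Fact>, Set<Fact>> rule : rules) {
                // Pass an immutable snapshot so a rule never iterates over a set being modified.
                Set<Fact> derived = rule.apply(Set.copyOf(facts));
                if (facts.addAll(derived)) {
                    changed = true;
                }
            }
        }
        return facts;
    }
}
```

A rule could, for instance, derive a required machine type from a material annotation on a component. Any such rule is illustrative only. Termination is guaranteed as long as rules derive facts from a finite vocabulary.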

4.3. Implementation of OPP-Mediator-Service

The OPP-Mediator forms the final stage, transforming the EFB into an Operational Function Block (OFB) and connecting it to real machine execution data. It validates machine availability, enriches operational data, and monitors execution feedback. For this purpose, process control on the production machines is started and continuously monitored. During operation, status messages and machine data, such as tool wear or deviations from setpoints, are recorded. These status data flow back into the EFB, allowing process parameters to be adjusted in real time. If an optional MFB is available, additional metadata or resource information is integrated into the EFB before execution to enable optimized process control. Once all execution steps are completed, a new OFB is generated containing detailed information about the actual machine runs. This OFB is stored in a knowledge database and is available for future optimization processes or as feedback for the ECPP-Mediator.

Figure 7 illustrates how the OPP-Mediator orchestrates execution control through semantic linkage between machine entities and operational function blocks: the OPP-Mediator takes up the previous EFB and generates the OFB. Because direct access to machine data was not possible, this phase was set up with mock machine services that provide aligned data for testing purposes. Listing 3 depicts the OPP-Mediator logic, including machine verification, execution start, and OFB persistence. This method operationalizes the semantic model created by the ECPP-Mediator.

Realizing the OPP-Service, however, poses challenges. Further production-oriented development would require a more machine-oriented scenario, either a direct connection to a real plant or the integration of a digital twin (DT) [32]. Only then can the specific machine logic, which fundamentally shapes the OPP-Service, be utilized in real-world operation. In this context, a DT is a virtual representation of a real system that captures all relevant data, states, and behaviors [32]. It allows machine processes to be simulated and monitored even in the absence of a physical installation [32]. Supplying the OPP planning layer with real-time machine data requires integration with physical equipment or highly realistic simulations. Coupling the OPP-Service with a DT would enable consideration of actual machine states and responses, significantly enhancing the validity and practical relevance of production planning. Implementing this requires the development of interface modules for Industry 4.0 platforms and controllers. A deeper investigation should therefore explore how the mediator services can be integrated into such a connected environment without compromising the loose coupling and interchangeability of the services.
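Interchangeability between the current mock machine services and a future digital twin can be preserved by letting the mediator depend only on an interface. The following are all hypothetical: the interface MachineDataSource, its methods, the normalized tool-wear scale, and the class OppAvailabilityCheck. A DT adapter would implement the same interface, so OPP logic such as the availability check below would not need to change.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

// Hypothetical port through which the OPP-Service obtains machine state.
interface MachineDataSource {
    String status(long machineId);   // e.g. "ONLINE", "RUNNING", "MAINTENANCE"
    double toolWear(long machineId); // assumed normalized scale 0.0 .. 1.0
}

// Mock implementation, comparable in role to the mock machine services used for testing.
final class MockMachineDataSource implements MachineDataSource {
    private final Map<Long, String> statuses = new ConcurrentHashMap<>();
    private final Map<Long, Double> wear = new ConcurrentHashMap<>();

    void set(long machineId, String status, double toolWear) {
        statuses.put(machineId, status);
        wear.put(machineId, toolWear);
    }

    // Unknown machines are reported as OFFLINE with no wear.
    @Override public String status(long machineId) { return statuses.getOrDefault(machineId, "OFFLINE"); }
    @Override public double toolWear(long machineId) { return wear.getOrDefault(machineId, 0.0); }
}

// Mediator-side logic depends only on the interface, not on mock or DT specifics.
final class OppAvailabilityCheck {
    static boolean canExecute(MachineDataSource source, long machineId) {
        return "ONLINE".equalsIgnoreCase(source.status(machineId));
    }
}
```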

| Listing 3. Core OPP-Method for Operational Function Block (OFB) creation. |

public OFBEntity runOPPMediator(EFBDto efb, MFBDto optionalMfb) {
    if (efb == null) {
        Log.error("OPPMediatorService: Cannot run OPP with a null EFB.");
        return null;
    }
    // 1) Retrieve or create the machine record
    Long machineId = parseMachineIdInline(efb.getSemanticConstraints());
    OPPMachineMonitoringEntity machine = getOrCreateMachineEntity(machineId);
    Model ofbModel = null;
    Model oldRdf = null;
    Model newRdf = null;
    try {
        // 2) Create and validate the basic OFB and its initial RDF model
        //    (integration of optionalMfb is not shown in this excerpt)
        OFBEntity existingOfb = createBasicOFB(efb);
        validateOFB(existingOfb);
        ofbModel = buildInitialOfbModel(existingOfb, efb);
        existingOfb.setSemanticConstraints(serializeModel(ofbModel));
        Log.infof("runOPPMediator: Creating new OFB for EFB %d => OFB ID=%d",
                efb.getId(), existingOfb.getId());
        // 3) Machine status handling
        if (!"ONLINE".equalsIgnoreCase(machine.status)) {
            Log.warnf("Machine ID=%d is offline; skipping CNC execution.", machineId);
            existingOfb.setStatus("MACHINE_OFFLINE");
        } else {
            machine.status = "RUNNING";
            oppMySQLRepository.update(machine);
            cncWrapper.startExecution(efb);
            existingOfb.setStatus("OPP_DONE");
            runOPPCheck(existingOfb);
            // 4) Persist the OFB in Neo4j
            existingOfb = (OFBEntity) oppNeo4jRepository.create(existingOfb);
            // 5) Update the machine to either ONLINE or MAINTENANCE
            updateFinalMachineStatus(existingOfb, machine);
            // 6) Notify ECPP that the EFB has been used
            notifyECPPofStatusChangeEFB(efb.getId(), "USED");
        }
        return existingOfb;
    } finally {
        // Release RDF models regardless of the outcome
        closeModelSafely(ofbModel, oldRdf, newRdf);
    }
}
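The machine-status handling in Listing 3 can be viewed as a small state machine: an offline machine yields MACHINE_OFFLINE, while an online machine moves to RUNNING and afterwards to ONLINE or MAINTENANCE. Two parts of this sketch are assumptions. The first is the transition table. The second is the tool-wear threshold that triggers MAINTENANCE, because the listing delegates this decision to updateFinalMachineStatus without showing its logic. The names MachineState, OppRunOutcome, and OppMachineStateMachine are hypothetical.

```java
import java.util.EnumSet;
import java.util.Map;
import java.util.Set;

enum MachineState { ONLINE, OFFLINE, RUNNING, MAINTENANCE }

// Result of one OPP run: OFB status plus the machine's final state.
record OppRunOutcome(String ofbStatus, MachineState finalState) {}

final class OppMachineStateMachine {
    static final double WEAR_LIMIT = 0.8; // hypothetical threshold for MAINTENANCE

    // Allowed transitions (assumed; not specified in the paper).
    private static final Map<MachineState, Set<MachineState>> ALLOWED = Map.of(
            MachineState.ONLINE, EnumSet.of(MachineState.RUNNING, MachineState.OFFLINE, MachineState.MAINTENANCE),
            MachineState.RUNNING, EnumSet.of(MachineState.ONLINE, MachineState.MAINTENANCE),
            MachineState.MAINTENANCE, EnumSet.of(MachineState.ONLINE),
            MachineState.OFFLINE, EnumSet.of(MachineState.ONLINE));

    static MachineState transition(MachineState from, MachineState to) {
        if (!ALLOWED.get(from).contains(to)) {
            throw new IllegalStateException(from + " -> " + to + " not allowed");
        }
        return to;
    }

    static OppRunOutcome run(MachineState current, double wearAfterRun) {
        if (current != MachineState.ONLINE) {
            // Corresponds to the branch in Listing 3 that skips CNC execution.
            return new OppRunOutcome("MACHINE_OFFLINE", current);
        }
        MachineState state = transition(current, MachineState.RUNNING);
        // CNC execution would take place here.
        MachineState next = wearAfterRun >= WEAR_LIMIT ? MachineState.MAINTENANCE : MachineState.ONLINE;
        return new OppRunOutcome("OPP_DONE", transition(state, next));
    }
}
```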

Each service runs as a containerized Quarkus application, communicates strictly via REST interfaces, and uses JSON as the data exchange format. This made it possible, for the first time, to realize a prototype deployment covering all planning stages, with components automatically packaged into Docker images and deployed within a Kubernetes cluster. By consistently applying OpenAPI/Swagger definitions and enforcing REST/JSON conventions, documentation and standardization have been significantly improved, as all interfaces are now uniformly described and maintainable. Each mediator contributes a defined layer of semantic refinement—from raw data to knowledge-based execution—enabling transparent interoperability and traceable decision chains.

Building on this operational setup, the next step focuses on the formal evaluation of the developed mediator services. The evaluation assesses functional correctness, semantic enrichment, database persistence, and performance, directly addressing the evaluation objectives and verifying that the SO-KPP architecture achieves the research challenges (RC1–RC8) identified during conceptual modeling.

5. Discussion and Evaluation

With respect to the evaluation of the mediator-based core, the focus is on the three mediator services, SPP, ECPP, and OPP, which constitute the core of the SO-KPP-System. These services were implemented as independent, containerized microservices, each responsible for a distinct stage of production planning. The evaluation demonstrates functional correctness, semantic enrichment, database persistence, and performance, showing the viability of the mediator-based service architecture. The broader research challenges were considered during design and implementation. The evaluation also draws on insights from a previous Bachelor's thesis [30], which introduced the foundational concepts of the distributed mediator architecture, particularly regarding modularity, scalability, and semantic adaptation.

5.1. Functional Scope and Validation

Regarding functional validation, the implemented mediator services fully reproduce and extend the functionality of the previous monolithic KPP-System. Previously, the generation of Meta Function Blocks (MFBs), Execution Function Blocks (EFBs), and Operational Function Blocks (OFBs) was only theoretical or semi-automated.

Table 1 provides an overview of the enhanced functionalities offered by the SO-KPP-Mediator services compared to the legacy KPP-System [30].

In terms of the functionality of the mediator services, the SPP-Service ingests CAD files and Bill-of-Materials (BOM) data to generate semantic Meta Function Blocks (MFBs). It validates inputs, verifies order existence, persists the MFB in Neo4j, and performs semantic enrichment, linking MFBs to production and machine data. This ensures that subsequent stages operate on semantically consistent and enriched data.

The ECPP-Service transforms MFBs into Execution Function Blocks (EFBs), optionally merging feedback from previous operational blocks and applying rule-based transformations. Semantic relationships are preserved, providing a consistent basis for execution-level planning.

The OPP-Service finalizes operational planning by integrating dynamic machine feedback and generating Operational Function Blocks (OFBs). Even though some machine interactions are simulated, the service demonstrates how ontology-derived rules can influence operational decisions, such as blocking or releasing functions under critical conditions.

Table 1 highlights that the SO-KPP-Mediator services allow automated, semantically enriched planning that was previously not feasible. The function blocks now exist as actual objects in the system, fully connected to production and machine data. The following section complements this functional validation with a concrete execution-based evaluation of the mediator services using an end-to-end planning example.

5.2. Execution-Based Evaluation of the Mediator-Based SO-KPP-Services

The evaluation of the mediator-based SO-KPP-Services follows an execution-oriented design-science approach. Rather than focusing on quantitative performance levels, the objective is to demonstrate that the proposed service-oriented and ontology-driven architecture is executable, semantically consistent, and functionally viable in practice. The evaluation is therefore based on four criteria: (i) functional correctness of the service pipeline, i.e., whether the SPP-, ECPP-, and OPP-Services correctly generate and transform Meta, Execution and Operational Function Blocks (MFB → EFB → OFB); (ii) semantic consistency, i.e., whether ontology-based typing and semantic relations are correctly applied and preserved across planning stages; (iii) persistence and data integrity, i.e., whether all generated function blocks and their relationships are correctly stored and retrievable in the distributed data infrastructure; and (iv) operational feasibility of the microservice-based design, i.e., whether the services can be executed independently and orchestrated to complete a full planning workflow.

These criteria are evaluated using an end-to-end planning run for an existing production order stored in the KPP database, whose product data are derived from a STEP input file. An excerpt of the order representation is shown below:

{
  "orderId": 789094,
  "productName": "AUTO_wooden_figure",
  "status": "OPEN",
  "productType": "Wooden Figure",
  "isActive": true
}

The order with ID 789094 was processed by the SPP-, ECPP-, and OPP-Services, resulting in a complete planning chain from a Meta Function Block (MFB) to an Execution Function Block (EFB) and an Operational Function Block (OFB). The SPP-Service generated the MFB with ID 1768405610490, which was then consumed by the ECPP-Service to create the corresponding EFB with ID 1768405868660. The OPP-Service finalized the operational planning and updated the order state accordingly.

An excerpt of the MFB returned by the SPP-Service for this order is shown below. The namespace IRIs are illustrative RDF identifiers and are not intended as web links.

{
  "id": 1764273853272,
  "orderId": 789094,
  "name": "MFB_AUTO_wooden_figure_1764273853272",
  "description": "Aggregated MFB from multiple inputs | subClassEnrichment done, triple count=29",
  "format": "STEP",
  "status": "SPP_DONE",
  "semanticConstraints": [
    "@prefix kpp: <http://example.org/kpp#> .",
    "@prefix mat: <http://example.org/material#> .",
    "@prefix rdf: <http://www.w3.org/1999/02/22-rdf-syntax-ns#> .",
    "",
    "# --- Materials ---",
    "mat:OAK rdf:type kpp:Oak .",
    "mat:BEECH rdf:type kpp:Beech .",
    "",
    "# --- Component model ---",
    "kpp:Component_WOODEN_FIGURE",
    " kpp:partName \"WOODEN_FIGURE\" ;",
    " kpp:hasMaterial mat:OAK , mat:BEECH .",
    "",
    "# --- Product / Order mapping ---",
    "kpp:Product_789094",
    " rdf:type kpp:ManufacturingFeatureBlock ;",
    " kpp:productName \"AUTO_wooden_figure\" ;",
    " kpp:orderId \"789094\" ;",
    " kpp:bomId \"3003\" ;",
    " kpp:hasComponent kpp:Component_WOODEN_FIGURE ;",
    " kpp:hasMaterial mat:OAK ;",
    " kpp:alternativeMaterial mat:BEECH ;",
    " kpp:materialCount \"2\" .",
    "",
    "# ... (STEP entity typing and further triples omitted for brevity)"
  ]
}

Unit tests and service-level invocations confirmed that the Mediator-Services correctly handle normal and exceptional cases for this execution, including null inputs, missing orders, and malformed data. For order 789094, all three services produced valid function blocks without runtime errors, and the state transitions SPP_DONE, ECPP_DONE, and OPP_DONE were correctly reached.

The ECPP-Service transformed this MFB into an Execution Function Block. An excerpt of the generated EFB is shown below. The namespace IRIs are illustrative RDF identifiers and are not intended as web links.

{
  "id": 1764273899372,
  "orderId": 789094,
  "name": "EFB_for_MFB_AUTO_wooden_figure_1764273853272",
  "description": "Derived from [MFB_AUTO_wooden_figure_1764273853272]",
  "format": "STEP",
  "status": "ECPP_DONE",
  "semanticConstraints": [
    "@prefix ecpp: <http://example.org/ecpp#> .",
    "@prefix kpp: <http://kpp.fernuni-hagen.de/ontology#> .",
    "@prefix mfb: <http://example.org/spp#mfb_> .",
    "@prefix prod: <http://example.org/production#> .",
    "@prefix mat: <http://example.org/material#> .",
    "@prefix rdf: <http://www.w3.org/1999/02/22-rdf-syntax-ns#> .",
    "",
    "# --- Materials ---",
    "mat:OAK rdf:type <http://example.org/kpp#Oak> .",
    "mat:BEECH rdf:type <http://example.org/kpp#Beech> .",
    "",
    "# --- Production order ---",
    "prod:order_789094",
    " rdf:type kpp:ProductionOrder ;",
    " <itemName> \"AUTO_wooden_figure\" ;",
    " <orderId> \"789094\" ;",
    " <status> \"IN_PROGRESS\" .",
    "",
    "# --- Base MFB link ---",
    "mfb:1764273853272 rdf:type kpp:MetaFunctionBlock .",
    "",
    "# --- Component description ---",
    "<http://example.org/kpp#Component_WOODEN_FIGURE>",
    " <http://example.org/kpp#partName> \"WOODEN_FIGURE\" ;",
    " <http://example.org/kpp#hasMaterial> mat:BEECH , mat:OAK .",
    "",
    "# --- Derived Execution Function Block (EFB) ---",
    "ecpp:efb_1764273899372",
    " rdf:type kpp:Functionblock , kpp:ExecutionFunctionBlock ;",
    " ecpp:hasBaseMFB mfb:1764273853272 .",
    "",
    "# --- Original MFB summary (kept minimal) ---",
    "<http://example.org/kpp#Product_789094>",
    " rdf:type <http://example.org/kpp#ManufacturingFeatureBlock> ;",
    " <http://example.org/kpp#productName> \"AUTO_wooden_figure\" ;",
    " <http://example.org/kpp#orderId> \"789094\" ;",
    " <http://example.org/kpp#bomId> \"3003\" ;",
    " <http://example.org/kpp#hasComponent> <http://example.org/kpp#Component_WOODEN_FIGURE> ;",
    " <http://example.org/kpp#hasMaterial> mat:OAK ;",
    " <http://example.org/kpp#alternativeMaterial> mat:BEECH ;",
    " <http://example.org/kpp#materialCount> \"2\" .",
    "",
    "# ... (STEP entity typing omitted for readability)"
  ],
  "rawData": "<placeholder: derived from MFB STEP payload; omitted for thesis/report readability>"
}

Database verification was performed after the execution of this planning run. The Neo4j graph database contained the generated MFB, EFB, and OFB entities with the expected identifiers and relationships, while MySQL reflected the corresponding production order state (IN_PROGRESS) and workflow tracking information. The identifiers of the function blocks were consistent across both databases, confirming persistence and data integrity.
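The database verification can be expressed as a simple consistency check over the stored blocks. Several elements are assumptions modeled on the ecpp:hasBaseMFB relation: the StoredBlock record, its fields, and the rule that a derived block must reference an existing base block of the same order. The check operates on in-memory data and does not query Neo4j or MySQL.

```java
import java.util.*;

// Hypothetical view of a stored function block; baseId is null for an MFB.
record StoredBlock(long id, String type, long orderId, Long baseId) {}

final class PersistenceConsistencyCheck {
    // Returns a list of problems; an empty list means the stored state is consistent.
    static List<String> check(Collection<StoredBlock> graphBlocks, Set<Long> relationalOrderIds) {
        Map<Long, StoredBlock> byId = new HashMap<>();
        for (StoredBlock b : graphBlocks) byId.put(b.id(), b);
        List<String> problems = new ArrayList<>();
        for (StoredBlock b : graphBlocks) {
            // Every block must belong to an order known to the relational store.
            if (!relationalOrderIds.contains(b.orderId())) {
                problems.add(b.type() + " " + b.id() + ": unknown order " + b.orderId());
            }
            // Derived blocks must reference an existing base block of the same order.
            if (b.baseId() != null) {
                StoredBlock base = byId.get(b.baseId());
                if (base == null) {
                    problems.add(b.type() + " " + b.id() + ": missing base block " + b.baseId());
                } else if (base.orderId() != b.orderId()) {
                    problems.add(b.type() + " " + b.id() + ": base block belongs to another order");
                }
            }
        }
        return problems;
    }
}
```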

Semantic inspection of the RDF models stored in Neo4j further demonstrated that ontology-based enrichment was applied correctly. The RDF model of the generated MFB 1764273853272 explicitly types the block as kpp:MetaFunctionBlock and links it to the product, material, and order entities derived from the STEP and BOM input. After transformation by the ECPP-Service, EFB 1764273899372 is typed as kpp:ExecutionFunctionBlock and explicitly linked to its originating MFB via the RDF relation ecpp:hasBaseMFB, while both blocks are associated with the same production order node in the Neo4j property graph. These RDF triples confirm that semantic identity and process context are preserved across planning stages.
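The provenance check on the stored triples can be illustrated with a small, stdlib-only sketch. It assumes the triples have already been extracted from the stored model and writes them as (subject, predicate, object) tuples of prefixed names copied from the exported Turtle listing. Turtle parsing and prefix expansion, which an RDF library would handle in practice, are deliberately left out, and the helpers `objects` and `base_mfb_of` are hypothetical.

```python
# Stdlib-only sketch: checking that an EFB keeps its typed link to its base MFB.
# Triples are hand-copied (subject, predicate, object) tuples of prefixed names;
# Turtle parsing and prefix expansion are deliberately omitted.

TRIPLES = {
    ("mfb:1764273853272", "rdf:type", "kpp:MetaFunctionBlock"),
    ("ecpp:efb_1764273899372", "rdf:type", "kpp:Functionblock"),
    ("ecpp:efb_1764273899372", "rdf:type", "kpp:ExecutionFunctionBlock"),
    ("ecpp:efb_1764273899372", "ecpp:hasBaseMFB", "mfb:1764273853272"),
}


def objects(triples, subject, predicate):
    """Return every object o such that (subject, predicate, o) is asserted."""
    return {o for s, p, o in triples if s == subject and p == predicate}


def base_mfb_of(triples, efb):
    """Return the base MFB of an EFB if typing and link are consistent, else None."""
    if "kpp:ExecutionFunctionBlock" not in objects(triples, efb, "rdf:type"):
        return None
    bases = objects(triples, efb, "ecpp:hasBaseMFB")
    if len(bases) != 1:
        return None  # missing or ambiguous provenance link
    (mfb,) = bases
    if "kpp:MetaFunctionBlock" not in objects(triples, mfb, "rdf:type"):
        return None
    return mfb


print(base_mfb_of(TRIPLES, "ecpp:efb_1764273899372"))  # mfb:1764273853272
```

The check deliberately requires exactly one base MFB, so an EFB with a missing or duplicated hasBaseMFB link is reported as inconsistent rather than silently resolved.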

A graph-based view of this execution, shown in Figure 8, illustrates the MFB, the EFB, and their ontology-based relations as stored in Neo4j for order 789094. The graph confirms that the Mediator-Services produce a consistent, queryable, and semantically enriched planning state that downstream services and external systems can access.

Taken together, this execution-based validation demonstrates that all four evaluation criteria are fulfilled. The successful generation and transformation of MFB 1764273853272 into EFB 1764273899372, and subsequently into an OFB, confirms functional correctness (RC1–RC3). The RDF-based ontology typing and relations confirm semantic consistency across planning stages (RC6–RC8). The presence of all entities and links in Neo4j and MySQL confirms persistence, reproducibility, and auditability. Finally, the successful orchestration of the SPP-, ECPP-, and OPP-Services for a complete order execution demonstrates operational feasibility and modular extensibility of the microservice-based architecture (RC1–RC5).

This shows that the mediator-based SO-KPP implementation now realizes in practice what was previously only conceptual in the legacy KPP-System, providing an executable, semantically grounded, and persistently stored planning pipeline that forms a solid basis for further Arrowhead integration and ontology-driven extensions.

6. Conclusions and Future Work

In light of the distributed design requirements of Industry 4.0, the KPP-System must adopt a service-oriented architecture that supports distributed industrial processes, components, knowledge resources, and software resources within a virtual enterprise. For a unified architecture in the digital ecosystem of Industry 4.0, compatibility with the Arrowhead Framework (AF) is essential. Transitioning from a monolithic software architecture (MSA) to a service-oriented architecture (SOA) within the AF aligns with Industry 4.0 developments, enabling smart planning services that increase production flexibility through reconfiguration, late binding, and loose coupling. Semantic descriptions of planning data, including production-relevant information, remain inherently associated with the product.

Building on this research foundation, the paper first presents the problem areas, research questions, and associated objectives, followed by a review of SOA, the Arrowhead Framework, and adaptive ontology-based development (AOD) with meta-ontologies. On this basis, a first conceptual architecture was modeled, comprising core KPP functions as independent services with REST interfaces, the integration of Arrowhead components (Service Registry, Orchestrator, and Authentication), and an initial design of the Service- and Ontology-Manager for adaptive, ontology-based extension (addressing RC1–RC8).

Regarding the practical implementation, the mediator-based core services (SPP, ECPP, and OPP) are now operational. The SPP-Service ingests CAD data and generates semantically enriched Manufacturing Function Blocks (MFBs), the ECPP-Service transforms MFBs into Execution Function Blocks (EFBs) while preserving ontology-based enrichment, and the OPP-Service integrates operational data to produce OFBs. This implementation verifies that the functional blocks, previously only theoretical in the legacy system, can now be automatically generated, semantically enriched, persisted, and linked across services, demonstrating the feasibility of the distributed design.
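The stage-to-stage chaining described above can be pictured with a toy, single-process sketch. Everything in it is an assumption for illustration: the `Block` class, the `spp`/`ecpp`/`opp` functions, the annotation keys, and the integer identifiers are hypothetical and do not reflect the REST interfaces or persistence of the actual services. It only shows the property the text relies on, namely that each derived block keeps the order identifier and a link to its predecessor, so the lineage OFB → EFB → MFB can be traced.

```python
# Toy sketch of the MFB -> EFB -> OFB chain. Block, spp(), ecpp(), opp() and
# all annotation keys are hypothetical; in SO-KPP each stage is a separate
# containerized REST service with its own persistence.
import itertools
from dataclasses import dataclass, field
from typing import Optional

_next_id = itertools.count(1)  # hypothetical id source for the sketch


@dataclass
class Block:
    kind: str                       # "MFB", "EFB" or "OFB"
    block_id: int
    order_id: str
    base: Optional["Block"] = None  # provenance link to the predecessor block
    annotations: dict = field(default_factory=dict)


def spp(order_id: str, cad_features: list) -> Block:
    """SPP stage: CAD-derived features become an annotated MFB."""
    return Block("MFB", next(_next_id), order_id, annotations={"features": list(cad_features)})


def ecpp(mfb: Block, machines: list) -> Block:
    """ECPP stage: derive an EFB, keeping the MFB annotations and adding machine context."""
    if mfb.kind != "MFB":
        raise ValueError("ECPP expects an MFB")
    return Block("EFB", next(_next_id), mfb.order_id, base=mfb,
                 annotations={**mfb.annotations, "machines": list(machines)})


def opp(efb: Block, operational: dict) -> Block:
    """OPP stage: add operational data to produce an OFB."""
    if efb.kind != "EFB":
        raise ValueError("OPP expects an EFB")
    return Block("OFB", next(_next_id), efb.order_id, base=efb,
                 annotations={**efb.annotations, "operational": dict(operational)})


def lineage(block: Optional[Block]) -> list:
    """Follow the base links back to the originating MFB."""
    kinds = []
    while block is not None:
        kinds.append(block.kind)
        block = block.base
    return kinds


ofb = opp(ecpp(spp("789094", ["WOODEN_FIGURE"]), ["machine_A"]), {"shift": 1})
print(lineage(ofb))  # ['OFB', 'EFB', 'MFB']
```

Each stage copies the previous annotations instead of mutating them, which mirrors the idea that enrichment accumulates across stages while earlier blocks remain unchanged.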

The prototype implementation confirms four points. First, core KPP functions can be transformed into microservices with clear REST interfaces and containerized deployment. Second, semantic enrichment is fully integrated at each planning stage, combining production and machine data. Third, persistence in Neo4j and MySQL ensures a consistent representation and tracking of orders and planning data. Fourth, unit tests validate functional correctness, covering single and multiple data ingestion, transformation, and operational execution.

Considering overall feasibility, these results indicate that the conceptual architecture is viable, and the mediator-based service approach provides a solid foundation for future steps. Remaining research objectives—Arrowhead integration, standardized interfaces (RAMI 4.0, eCl@ss), and a fully adaptive ontology-based system—will be addressed in subsequent work. In essence, this paper presents the first operational building block, bridging the gap between conceptual modeling and practical implementation, and demonstrating that the envisioned distributed, semantically aware, and service-oriented KPP-System is feasible.

Looking ahead to future implementation steps, the next phase of SO-KPP development will extend the distribution of the remaining core services, including the process editor, transforming them into independent, containerized services with well-defined REST interfaces. Subsequently, the integration of Arrowhead services will be completed, enabling dynamic registration, deregistration, and orchestration of all distributed services within the local cloud environment (addressing RC4–RC5). In parallel, the mediator will be enhanced with a standardized wrapper to support the conversion of planning data into standardized representations, such as Asset Administration Shells (AAS) and eCl@ss classifications, ensuring compliance with Industry 4.0 standards (RC3, RC7). Finally, the entire service generation and deployment process will be driven by the SO-KPP-Ontology, enabling fully adaptive, automated creation of new services and planning functions in response to evolving production requirements (RC6–RC8). These steps collectively aim to realize the full vision of a distributed, semantically aware, and self-adaptive SO-KPP-System, bridging conceptual modeling, practical implementation, and standard-compliant interoperability in Industry 4.0 environments.
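The late binding that the planned Arrowhead integration is meant to provide can be illustrated with a purely local stand-in. This sketch is not the Arrowhead API: `LocalRegistry`, its methods, the service definition string `ecpp-transform`, the addresses, and the first-match selection rule are hypothetical, whereas the actual Service Registry and Orchestrator are separate core systems with their own interfaces and security mechanisms. It only shows why consumers resolve a provider at request time instead of hard-coding its address: once a provider deregisters, it is no longer returned, and no consumer code has to change.

```python
# Local stand-in for service registration, deregistration and orchestration.
# NOT the Arrowhead Framework API: class, method names and the first-match
# rule are hypothetical and only illustrate late binding.
from dataclasses import dataclass


@dataclass(frozen=True)
class ServiceEntry:
    definition: str  # hypothetical service definition, e.g. "ecpp-transform"
    provider: str    # name of the providing system
    address: str     # host:port of its REST endpoint


class LocalRegistry:
    def __init__(self) -> None:
        self._entries = {}  # definition -> list of ServiceEntry

    def register(self, entry: ServiceEntry) -> None:
        self._entries.setdefault(entry.definition, []).append(entry)

    def deregister(self, definition: str, provider: str) -> None:
        self._entries[definition] = [
            e for e in self._entries.get(definition, []) if e.provider != provider
        ]

    def orchestrate(self, definition: str) -> ServiceEntry:
        """Resolve a provider at request time; the first registered match wins."""
        candidates = self._entries.get(definition, [])
        if not candidates:
            raise LookupError(f"no provider registered for {definition!r}")
        return candidates[0]


registry = LocalRegistry()
registry.register(ServiceEntry("ecpp-transform", "ecpp-1", "localhost:8081"))
registry.register(ServiceEntry("ecpp-transform", "ecpp-2", "localhost:8082"))
registry.deregister("ecpp-transform", "ecpp-1")
print(registry.orchestrate("ecpp-transform").provider)  # ecpp-2
```

A real orchestrator would additionally apply authorization and selection policies; the first-match rule here is only a placeholder for that decision.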