1. Introduction

The growing demand for latency-sensitive, high-availability applications has spurred the adoption of distributed computing paradigms, such as Edge Computing and IoT, to meet these stringent requirements. Within this context, Mobile Edge Computing (MEC) has gained particular relevance when integrated with Unmanned Aerial Vehicles (UAVs), which are deployed as mobile edge nodes to extend computational capabilities to remote or infrastructure-limited regions. These decentralized architectures bring processing closer to data sources, reducing latency and bandwidth consumption while enhancing scalability. However, they also face critical challenges regarding energy consumption, especially when operating in battery-powered or energy-constrained environments. This study aims to systematically analyze energy-aware scheduling and task offloading strategies in edge computing, addressing how such techniques are evaluated, which architectures they target, and how AI/ML supports energy optimization.

In response to these challenges, green computing strategies—particularly energy-efficient scheduling and task offloading—have emerged as key approaches for improving sustainability in edge environments. Rather than solely focusing on performance, these strategies aim to achieve a balance between computational efficiency and energy conservation. Green scheduling emphasizes the optimal allocation and prioritization of tasks to minimize energy usage, while task offloading leverages more powerful servers or cloud resources to process workloads that would otherwise drain local device resources. When combined, these approaches can significantly reduce energy costs while maintaining service quality.

In this review, the term green refers primarily to techniques aimed at reducing energy consumption and improving energy efficiency, whereas sustainable encompasses broader aspects, including long-term environmental impact, carbon footprint reduction, and the integration of renewable energy sources. Although these terms are sometimes used interchangeably in the literature, this distinction is adopted to ensure conceptual clarity.

Recent research trends indicate that artificial intelligence (AI) and machine learning (ML) techniques are increasingly integrated into these strategies, enabling context-aware, adaptive, and autonomous decision-making in dynamic environments. Such integration allows systems to account for real-time variations in workload, network conditions, and energy availability, making energy-aware computing more effective.

This paper presents a systematic literature review (SLR) examining the consolidation phase of AI-driven energy-aware scheduling and task offloading strategies in edge computing, covering studies published between 2018 and 2026. This period marks the maturation of reinforcement learning approaches, multi-tier architectures, and green energy integration within MEC and UAV-enabled systems, establishing methodological foundations that continue to shape current research.

The review systematically maps and critically analyzes the principal energy-aware scheduling and offloading strategies adopted in distributed environments. The analysis is structured around three main dimensions: (i) identifying and categorizing the evaluation metrics most frequently employed in the literature; (ii) assessing the role and effectiveness of AI and ML techniques in enabling energy optimization; and (iii) synthesizing open challenges, research gaps, and emerging trends in the field.

The primary contribution of this work lies in its comparative methodological perspective, which highlights inconsistencies, underexplored areas, and opportunities for advancing the state of the art. By consolidating evidence across heterogeneous architectures, this review provides actionable insights and research guidelines for both academics and practitioners seeking to design energy-efficient solutions in complex distributed environments, including edge computing, IoT ecosystems, and vehicular networks.

Unlike existing surveys such as Cong et al. [1] and Xia et al. [2], which primarily focus on hierarchical optimization and UAV-enabled edge computing, respectively, this review provides a unified and systematic analysis that jointly considers scheduling, task offloading, sustainability-oriented metrics, and the explicit role of AI/ML across multiple edge-based architectures. This integrated perspective enables a more comprehensive understanding of how energy efficiency strategies evolve across heterogeneous systems.

Distinctive Contribution of This Review

Unlike existing surveys that address energy efficiency in edge computing from isolated perspectives, this work provides a unified and systematic analysis of green scheduling and task offloading strategies jointly, explicitly incorporating sustainability-oriented metrics and the role of AI/ML techniques across multiple architectural contexts. In contrast to Cong et al. [1], which primarily focuses on hierarchical energy optimization mechanisms, and Xia et al. [2], which emphasizes UAV-enabled edge computing from a resource management perspective, this review integrates scheduling, offloading, sustainability metrics, and intelligent decision-making into a single analytical framework. This unified treatment enables a cross-architectural comparison and a clearer understanding of how AI/ML techniques influence energy-aware decisions in heterogeneous edge environments.

2. Methodology

The methodology of this SLR follows the framework proposed by [3], covering research questions (RQ), search protocol, study selection, and data extraction. The Parsifal tool [4] was employed to systematize the review process.

2.1. Research Objective

The objective of this study is to identify, through an SLR, the main green scheduling and task offloading strategies aimed at energy optimization in edge computing environments. In addition, the study seeks to analyze how different architectures influence these decisions, map the most commonly used metrics for assessing energy efficiency, and understand the role of artificial intelligence (AI) and machine learning (ML) techniques in these approaches.

2.2. Research Questions (RQ)

In this SLR, four RQ were defined to guide the data extraction and analysis, as well as to identify gaps and trends in the field of energy efficiency in edge computing. The RQ are as follows:

RQ1: What green scheduling and task offloading strategies are most commonly used for energy optimization in edge computing environments, and how are these approaches evaluated?

RQ2: How do different architectures (such as UAVs, IoT, MEC, fog-cloud) influence energy-aware scheduling decisions?

RQ3: What metrics are most commonly used to evaluate energy efficiency in task scheduling and offloading techniques?

RQ4: What is the role of machine learning and artificial intelligence algorithms in offloading and energy-aware scheduling strategies?

RQ1 aims to map the main green scheduling and task offloading strategies adopted in the literature, identifying how these approaches are evaluated and what trends stand out in the context of energy optimization in edge computing. RQ2 seeks to analyze how different architectures, such as UAVs, IoT, MEC, and fog-cloud, impact energy-aware scheduling decisions, considering the specific challenges of each scenario. RQ3 focuses on identifying the most recurring metrics used to evaluate the energy efficiency of the proposed techniques, allowing a more precise comparison between different studies. Finally, RQ4 aims to understand the role played by machine learning and artificial intelligence algorithms in offloading and energy-aware scheduling strategies, highlighting how these technologies have contributed to making solutions more adaptive and efficient.

2.3. Search Protocol

A systematic search was performed across four major digital libraries: IEEE Xplore, Scopus, Web of Science, and ACM Digital Library. The search strategy was designed to identify studies addressing both energy-aware scheduling strategies and task offloading techniques in distributed computing environments.

The search string was constructed by combining three groups of terms: terms related to energy-aware scheduling (such as “green scheduling”, “energy-efficient scheduling”, “energy-aware scheduling”, and “green energy scheduling”), offloading techniques (including “task offloading”, “computation offloading”, and “code offloading”), and computing contexts (such as “edge computing”, “cloud computing”, “mobile devices”, “mobile cloud”, “cloudlets”, and “edge devices”). Additionally, to ensure a focus on sustainability and energy efficiency aspects, terms such as “energy”, “sustainability”, “energy harvesting”, “renewable energy”, “solar”, “hybrid energy”, and “sustainable computing” were included. Boolean operators were used to combine these expressions, aiming to cover the different terminologies adopted in the scientific literature. The complete search string is presented in Table 1.
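The grouping logic described above can be sketched as follows. This is an illustrative reconstruction, not the exact string reported in Table 1: it assumes that terms within each group are combined with OR and that the groups are combined with AND, and the helper names (`or_group`, `build_query`) are hypothetical. Database-specific field tags for title, abstract, and keywords would still have to be added for each library.

```python
# Illustrative reconstruction of a grouped Boolean query.
# Assumption: OR inside each group, AND between groups; the actual string
# used in the review is the one reported in Table 1.

SCHEDULING = ["green scheduling", "energy-efficient scheduling",
              "energy-aware scheduling", "green energy scheduling"]
OFFLOADING = ["task offloading", "computation offloading", "code offloading"]
CONTEXT = ["edge computing", "cloud computing", "mobile devices",
           "mobile cloud", "cloudlets", "edge devices"]
ENERGY = ["energy", "sustainability", "energy harvesting", "renewable energy",
          "solar", "hybrid energy", "sustainable computing"]


def or_group(terms):
    """Quote every phrase and join the group with OR inside parentheses."""
    return "(" + " OR ".join(f'"{t}"' for t in terms) + ")"


def build_query(groups):
    """Combine the OR-groups with AND (assumed combination rule)."""
    return " AND ".join(or_group(g) for g in groups)


query = build_query([SCHEDULING, OFFLOADING, CONTEXT, ENERGY])
print(query)
```

Keeping each vocabulary in its own list makes it easy to add synonyms to one concept without touching the overall AND/OR structure.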

The search was conducted in the title, abstract, and keywords fields of each database to maximize coverage while maintaining alignment with the research objectives. Only studies published in English were considered. The selected time window (2018–2026) was defined to capture the period in which AI-driven energy-aware scheduling and offloading mechanisms became consolidated in edge computing literature, particularly with the integration of deep reinforcement learning and multi-tier MEC architectures.

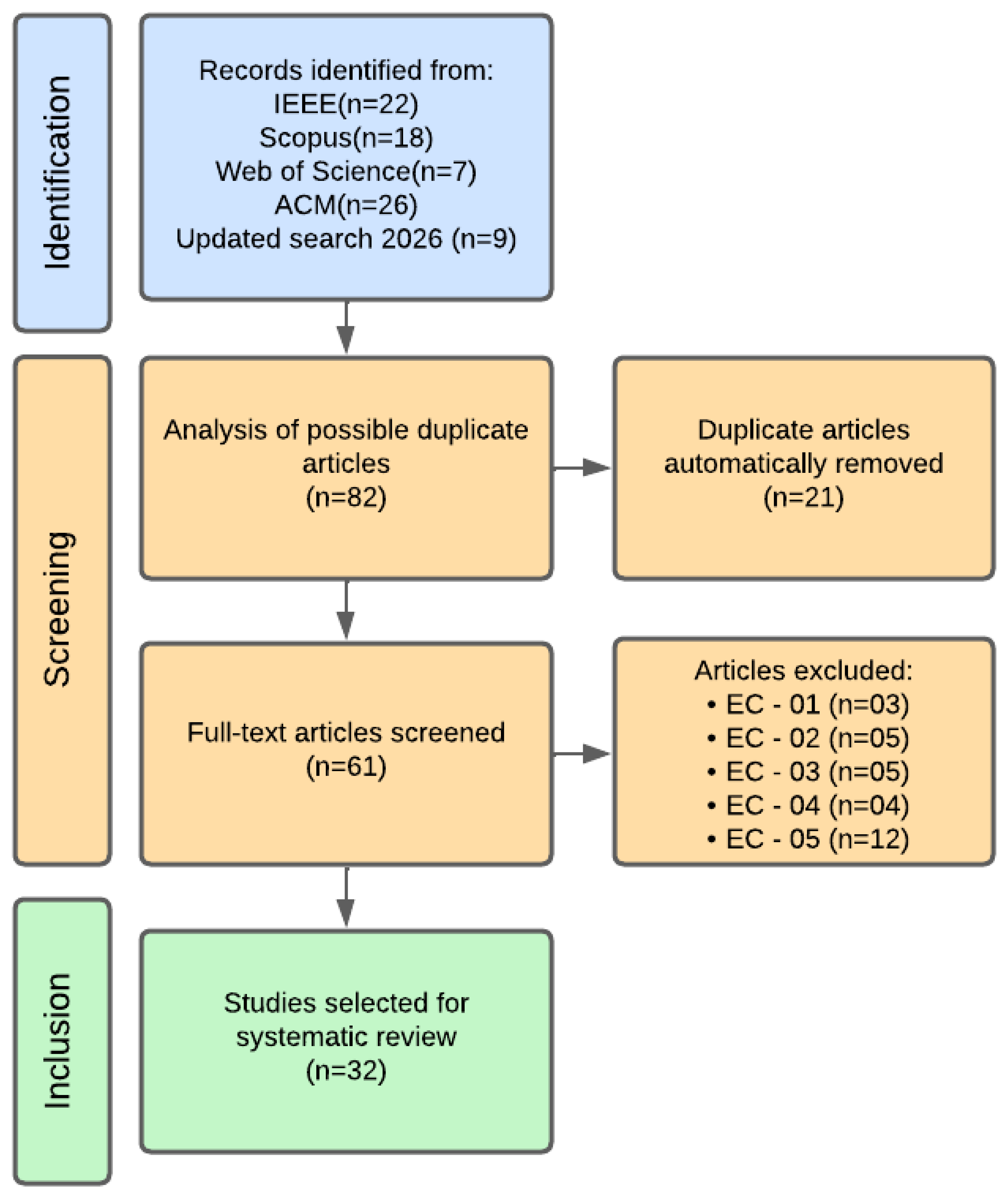

The initial search retrieved 22 records from IEEE Xplore, 18 from Scopus, 7 from Web of Science, and 26 from ACM Digital Library, totaling 73 studies. An updated search extending the time window to 2026 identified 9 additional records, resulting in 82 studies overall. After removing duplicate entries identified through the Parsifal tool, the remaining articles were subjected to the predefined inclusion (Table 2) and exclusion criteria (Table 3). Each study underwent a rapid screening based on title, abstract, and keywords, and was excluded if any exclusion criterion was met. This filtering process resulted in a final corpus of 32 selected studies for detailed analysis, comprising 30 primary research articles and 2 contextual survey papers. Among the selected primary studies, 14 correspond to conference publications, while the remaining 16 were published in peer-reviewed journals.

2.4. Study Selection

After applying the defined search string to the databases, 26 articles were found in ACM, 22 in IEEE, 18 in Scopus, and 7 in Web of Science, totaling 73 articles.

Some of the retrieved articles were irrelevant or unrelated to the objective of the systematic review. For this reason, inclusion and exclusion criteria were defined at this stage to refine the results and retain the most relevant articles. The inclusion and exclusion criteria are presented in Table 2 and Table 3, respectively.

After the initial search, a total of 73 records were identified across the selected digital libraries (IEEE Xplore, Scopus, Web of Science, and ACM Digital Library). An updated search extending the time window to 2026 retrieved 9 additional records, resulting in 82 identified studies. Following the automatic removal of 21 duplicate entries, 61 studies remained for full-text screening. During the eligibility assessment, 29 articles were excluded based on the predefined exclusion criteria (EC-01 to EC-05). As a result, 32 studies were included for detailed analysis, of which 30 correspond to primary investigations and 2 to secondary survey studies. Among the selected primary studies, 14 were published in conference proceedings, while the remaining 16 were journal publications. The full list of selected articles is available in Table 4.

Figure 1 presents the complete selection process following the PRISMA guidelines.
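As a quick consistency check, the record counts reported in this section can be reproduced with simple bookkeeping. All numbers below come from the text; the function `prisma_flow` is a hypothetical helper and not part of Parsifal.

```python
# Bookkeeping check of the selection counts reported in Section 2.
identified = {"IEEE Xplore": 22, "Scopus": 18, "Web of Science": 7,
              "ACM Digital Library": 26}
updated_search = 9   # extra records from extending the window to 2026
duplicates = 21      # duplicate entries removed with Parsifal
excluded = 29        # articles excluded under EC-01 to EC-05
surveys = 2          # secondary studies retained for context only


def prisma_flow(identified, updated, duplicates, excluded, surveys):
    """Propagate record counts through the PRISMA stages."""
    initial = sum(identified.values())
    total = initial + updated
    screened = total - duplicates
    included = screened - excluded
    return {"initial": initial, "total": total, "screened": screened,
            "included": included, "primary": included - surveys}


flow = prisma_flow(identified, updated_search, duplicates, excluded, surveys)
print(flow)
# {'initial': 73, 'total': 82, 'screened': 61, 'included': 32, 'primary': 30}
```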

2.5. Classification of Primary and Secondary Studies

Among the 32 selected studies, 30 correspond to primary empirical or analytical investigations, while 2 are secondary studies (survey papers) [1, 2].

Although EC-05 defines the exclusion of secondary studies from the primary synthesis, these two high-impact surveys were retained exclusively to provide contextual background and enable comparative positioning of the present review.

Importantly, secondary studies were not included in: (i) the quantitative synthesis, (ii) the extraction of numerical performance gains, (iii) the correlation analysis, or (iv) the methodological frequency analysis.

All reported percentages, performance improvements, and statistical analyses were derived exclusively from primary studies.

2.6. Data Extraction and Analysis

After selecting the 32 articles included in the review, a systematic data extraction was performed with the aim of answering the previously defined RQ. For each study, the following information was collected:

Description of the main approach or technique;

Evaluation method of the proposed strategies;

Type of architecture or computational environment adopted in the study (e.g., UAVs, IoT, MEC, fog-cloud);

Metrics used to evaluate energy efficiency and performance;

Presence of machine learning or artificial intelligence techniques, including the type and name of the technique;

Role or function of these AI techniques in the system (e.g., decision-making, load prediction, optimization).
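A minimal sketch of how such an extraction form could be represented in code, assuming a Python-based workflow (the review itself used Parsifal). The field names are hypothetical and mirror the items listed above; the `is_primary` flag encodes the rule that survey papers are excluded from quantitative aggregation. The example records are dummy placeholders, not data from the reviewed studies.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ExtractionRecord:
    """One row of a hypothetical data-extraction form (fields follow Section 2.6)."""
    study_id: int
    approach: str                        # main approach or technique
    evaluation_method: str               # e.g. "simulation", "analytical", "testbed"
    architecture: str                    # e.g. "UAV", "IoT", "MEC", "fog-cloud"
    metrics: List[str] = field(default_factory=list)
    ai_technique: Optional[str] = None   # name of the ML/AI technique, if any
    ai_role: Optional[str] = None        # e.g. "decision-making", "load prediction"
    is_primary: bool = True              # surveys are kept out of the synthesis

    @property
    def uses_ai(self) -> bool:
        return self.ai_technique is not None


def primary_only(records):
    """Keep only primary studies, mirroring the aggregation rule."""
    return [r for r in records if r.is_primary]


def metric_frequency(records):
    """Count in how many primary studies each metric appears (sorted by name)."""
    counts = {}
    for r in primary_only(records):
        for m in set(r.metrics):
            counts[m] = counts.get(m, 0) + 1
    return dict(sorted(counts.items()))


# Dummy records, for illustration only.
records = [
    ExtractionRecord(1, "DQN-based offloading", "simulation", "MEC",
                     ["energy", "latency"], "DQN", "decision-making"),
    ExtractionRecord(2, "survey", "literature review", "UAV", ["energy"],
                     is_primary=False),
]
print(metric_frequency(records))  # {'energy': 1, 'latency': 1}
```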

The extracted information was organized and analyzed to identify recurring patterns, significant evidence, and relevant gaps in the literature.

It is important to note that only primary studies were considered for quantitative synthesis and metric aggregation. Secondary studies were analyzed exclusively for contextualization and comparative discussion.

3. Results

3.1. Comparative Methodological Analysis of Selected Articles

The analysis of the 32 selected articles revealed distinct methodological patterns, with notable convergences and divergences in the approaches adopted. The studies were critically analyzed with respect to the four RQ, focusing on the methods employed, evaluation strategies, and consistency with the stated objectives. This analysis makes it possible to identify dominant trends, methodological gaps, and opportunities for future research in the field of energy-aware scheduling and offloading in distributed systems. For clarity, two of the selected studies correspond to survey papers and were analyzed separately from primary empirical and analytical works, being excluded from quantitative aggregation.

Regarding research methodologies, a predominance of simulation-based approaches was observed, present in 24 of the 32 articles analyzed. These studies predominantly used tools such as NS-3, CloudSim, and MATLAB to model edge computing environments, with varied configurations of workload, device mobility, and energy constraints. A notable convergence in this group was the use of standardized evaluation metrics, such as total energy consumption, execution latency, and task completion rate. However, significant discrepancies emerged in the definition of test scenarios, and some studies relied on overly simplified configurations that compromise the external validity of their findings.

Five articles followed theoretical-mathematical approaches, employing convex optimization models, game theory, and Markov Decision Processes (MDPs) to derive performance bounds and convergence properties. These works demonstrated rigor but often failed to show applicability in real-world scenarios. A critical divergence in this group was the absence of unified benchmarks for comparing solutions.

The remaining three studies adopted empirical methodologies, implementing and evaluating their solutions in real environments or specialized testbeds. These studies stood out by providing concrete evidence of effectiveness but were limited by the reduced scale of experiments and the difficulty of reproducibility. A positive convergence was the careful statistical validation of results, present in all three.

Regarding alignment with the RQ, it was identified that simulation-based approaches were predominant (appearing in 20 of the 26 primary studies) in answering RQ1 (green scheduling strategies) and RQ3 (evaluation metrics), while theoretical methods focused on RQ4 (the role of AI/ML). Meanwhile, the empirical studies provided the most relevant contributions to RQ2 (architecture influence), with detailed analyses of specific scenarios such as UAVs and vehicular networks.

A concerning divergence was the lack of methodological transparency in some articles, which did not adequately describe simulation parameters or criteria for selecting case studies.

Table 5 and Table 6 summarize the comparative analysis from complementary perspectives.

Table 5 presents the methodological characterization of the selected studies, highlighting for each article its main methodological approach, architectural focus, AI/ML techniques, alignment with the RQ, and identified limitations. This table illustrates the predominance of simulation-based studies over empirical investigations and the uneven distribution of research efforts across the different research questions.

Table 6, in turn, systematizes the energy-related strategies and the quantitative results explicitly reported in the primary studies. By separating methodological characterization from quantitative reporting, the analysis improves clarity and avoids conflating study design aspects with performance outcomes.

Together, these tables reveal important opportunities for future work, particularly in the development of hybrid methodologies that combine theoretical rigor with large-scale experimental validation.

The quantitative values presented in Table 6 correspond exclusively to performance improvements explicitly reported by the respective primary studies under their specific experimental configurations. No cross-study aggregation, averaging, or statistical meta-analysis was performed in this review.

Reported percentages and performance gains reflect comparisons against baseline methods defined within each original study, and therefore should not be interpreted as directly comparable across heterogeneous experimental setups.

Across the analyzed works, recurring energy-related procedures include: (i) joint task offloading and resource allocation mechanisms; (ii) reinforcement learning-based dynamic adaptation strategies; (iii) mobility-aware prediction models such as LSTM; (iv) multi-objective optimization balancing latency and energy consumption; and (v) green energy integration and pricing mechanisms.

When explicitly quantified in the primary studies, reported energy efficiency improvements typically range between 15% and 40%, while latency reductions in vehicular and UAV-enabled MEC scenarios frequently range from approximately 20% to 30%, depending on workload characteristics, baseline comparison models, and architectural complexity.
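To make the latency-energy trade-off in procedure (iv) concrete, the following sketch uses a simplified cost model that is common in the MEC literature: local computing energy of the form `kappa * f_local**2 * cycles` and transmission energy of the form `p_tx * data_bits / rate`. It is not taken from any specific reviewed study, and all parameter values are hypothetical. The weighted sum adds seconds and joules directly for brevity; actual formulations usually normalize both terms first.

```python
from dataclasses import dataclass


@dataclass
class Task:
    cycles: float      # CPU cycles required
    data_bits: float   # input data uploaded if the task is offloaded


def local_cost(task, f_local, kappa):
    """Latency (s) and device energy (J) when the task runs locally."""
    latency = task.cycles / f_local
    energy = kappa * f_local ** 2 * task.cycles
    return latency, energy


def offload_cost(task, rate, p_tx, f_edge):
    """Latency (s) and device energy (J) when the task is offloaded."""
    upload = task.data_bits / rate
    latency = upload + task.cycles / f_edge
    energy = p_tx * upload   # the device only pays for transmission
    return latency, energy


def decide(task, w_energy=0.5, f_local=1e9, kappa=1e-27, rate=10e6,
           p_tx=0.5, f_edge=10e9):
    """Return 'local' or 'offload', whichever minimises the weighted cost."""
    w_latency = 1.0 - w_energy
    lt, le = local_cost(task, f_local, kappa)
    ot, oe = offload_cost(task, rate, p_tx, f_edge)
    local = w_latency * lt + w_energy * le
    remote = w_latency * ot + w_energy * oe
    return "local" if local <= remote else "offload"


print(decide(Task(cycles=1e9, data_bits=1e6)))   # offload (compute-heavy, small input)
print(decide(Task(cycles=1e7, data_bits=5e7)))   # local (light compute, large input)
```

Changing `w_energy` shifts the decision boundary, which is exactly the knob that multi-objective formulations tune.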

3.2. Green Scheduling and Task Offloading Strategies

Our analysis reveals that contemporary green scheduling and task offloading strategies in edge computing span diverse architectures, including UAV-enabled MEC and fog-cloud collaborations, each presenting unique energy optimization challenges. Recent 2026 studies reinforce the consolidation of DRL-based adaptive policies. For instance, the Adaptive Customized DQN (2026) introduces architecture-aware policy tuning mechanisms [31], while the 6G Edge DRL framework integrates DQN with PSO [34] to improve energy–latency trade-offs in ultra-dense scenarios.

A clear trend is the use of machine learning and reinforcement learning for offloading and energy-aware scheduling decisions. Algorithms based on Deep Reinforcement Learning, DQN, and Q-learning variants are employed to learn adaptive policies in dynamic and heterogeneous environments, resulting in gains in energy efficiency and robustness against load variations and mobility [7, 9, 18, 30, 31, 32, 33, 34].
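The following minimal tabular Q-learning sketch illustrates how an adaptive binary offloading policy can be learned from interaction. The environment is hypothetical: the state is a binary channel condition that evolves independently of the action, and the reward is a negative energy cost that makes offloading cheap only when the channel is good. DQN-style methods replace the table `q` with a neural network approximator.

```python
import random

ACTIONS = ("local", "offload")


def reward(channel_good, action):
    """Hypothetical negative energy cost: offloading is cheap only on a good channel."""
    if action == "local":
        return -1.0
    return -0.2 if channel_good else -2.0


def train_q(steps=5000, alpha=0.1, gamma=0.9, epsilon=0.1, seed=0):
    """Epsilon-greedy tabular Q-learning over the two channel states."""
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in (False, True) for a in ACTIONS}
    state = rng.random() < 0.5
    for _ in range(steps):
        if rng.random() < epsilon:
            action = rng.choice(ACTIONS)                         # explore
        else:
            action = max(ACTIONS, key=lambda a: q[(state, a)])   # exploit
        r = reward(state, action)
        next_state = rng.random() < 0.5   # i.i.d. channel, independent of the action
        best_next = max(q[(next_state, a)] for a in ACTIONS)
        # Standard Q-learning temporal-difference update.
        q[(state, action)] += alpha * (r + gamma * best_next - q[(state, action)])
        state = next_state
    return q


def greedy_policy(q):
    """Best action per state under the learned Q-table."""
    return {s: max(ACTIONS, key=lambda a: q[(s, a)]) for s in (False, True)}


print(greedy_policy(train_q()))
```

With these rewards the learned policy computes locally on a bad channel and offloads on a good one; in realistic settings the state would also include battery level, queue length, or mobility information.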

In the field of heuristics and bio-inspired methods, approaches inspired by natural behaviors, such as the Bay Weaver bird, and metaheuristics such as Differential Evolution, NSGA-III, and Ant Colony Optimization stand out, seeking efficient solutions for resource allocation and offloading in energy-constrained scenarios [17, 22, 25, 27].
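As an example of the metaheuristic family, the sketch below applies a basic Differential Evolution scheme (DE/rand/1/bin) to choose partial offloading ratios for a few tasks. The per-task cost model (local and offloaded parts executed in parallel, latency taken as the maximum of the two, weighted with device energy) and all numbers are illustrative assumptions, not the formulation of any reviewed study.

```python
import random

# Hypothetical model parameters (Hz, J/cycle coefficient, bit/s, W, Hz, weight).
F_LOCAL, KAPPA, RATE, P_TX, F_EDGE, W_LAT = 1e9, 1e-27, 10e6, 0.5, 10e9, 0.5


def task_cost(x, cycles, bits):
    """Weighted cost when a fraction x of the task is offloaded (parts run in parallel)."""
    t_local = (1 - x) * cycles / F_LOCAL
    e_local = KAPPA * F_LOCAL ** 2 * (1 - x) * cycles
    upload = x * bits / RATE
    t_off = upload + x * cycles / F_EDGE
    e_off = P_TX * upload
    return W_LAT * max(t_local, t_off) + (1 - W_LAT) * (e_local + e_off)


def total_cost(ratios, tasks):
    return sum(task_cost(x, c, b) for x, (c, b) in zip(ratios, tasks))


def de_minimize(f, dim, pop_size=20, F=0.6, CR=0.9, generations=200, seed=1):
    """DE/rand/1/bin over the unit hypercube [0, 1]^dim."""
    rng = random.Random(seed)
    pop = [[rng.random() for _ in range(dim)] for _ in range(pop_size)]
    fit = [f(x) for x in pop]
    for _ in range(generations):
        for i in range(pop_size):
            a, b, c = rng.sample([j for j in range(pop_size) if j != i], 3)
            j_rand = rng.randrange(dim)   # guarantees at least one mutated gene
            trial = []
            for j in range(dim):
                if rng.random() < CR or j == j_rand:
                    v = pop[a][j] + F * (pop[b][j] - pop[c][j])
                    trial.append(min(1.0, max(0.0, v)))   # clip to [0, 1]
                else:
                    trial.append(pop[i][j])
            ft = f(trial)
            if ft <= fit[i]:                 # greedy one-to-one selection
                pop[i], fit[i] = trial, ft
    best = min(range(pop_size), key=fit.__getitem__)
    return pop[best], fit[best]


tasks = [(1e9, 1e6), (2e8, 2e7), (5e8, 5e6)]   # (cycles, bits), illustrative
best_x, best_cost = de_minimize(lambda r: total_cost(r, tasks), dim=len(tasks))
print([round(x, 2) for x in best_x], round(best_cost, 3))
```

With these values the best plan should beat both all-local and all-offload baselines, mostly offloading the first task, keeping the second local, and splitting the third.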

Collaboration among multiple layers and the use of renewable energy are central strategies to increase system flexibility and sustainability. Solutions that integrate different processing levels and energy sources, prioritize green energy use, and optimize load balancing and latency have proven effective [12, 13, 24, 28].
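A minimal sketch of the "green energy first" principle, assuming a single edge node with a solar source, a battery, and a grid connection, all expressed in arbitrary energy units per time slot. Renewable supply is used first, surplus charges the battery, and only the residual demand is drawn from the grid; the returned `wasted` value corresponds to the renewable energy waste metric used in several studies. This greedy rule is an illustration, not the scheduling policy of any cited work.

```python
def schedule_energy(demand, solar, battery_capacity, battery_init=0.0):
    """Serve each slot from solar first, then battery, then grid; store surplus solar."""
    battery = battery_init
    grid_total = wasted = 0.0
    plan = []
    for d, s in zip(demand, solar):
        from_solar = min(d, s)
        surplus = s - from_solar
        remaining = d - from_solar
        from_battery = min(remaining, battery)
        battery -= from_battery
        from_grid = remaining - from_battery
        charge = min(surplus, battery_capacity - battery)   # store what fits
        battery += charge
        wasted += surplus - charge                          # renewable energy lost
        grid_total += from_grid
        plan.append((from_solar, from_battery, from_grid))
    return plan, grid_total, wasted


demand = [3, 3, 3, 3]
solar = [0, 5, 6, 0]
_, grid, wasted = schedule_energy(demand, solar, battery_capacity=4)
print(grid, wasted)                                   # 3.0 1.0
print(schedule_energy(demand, solar, 0)[1])           # 6.0 without storage
```

The comparison shows why storage and renewable-aware timing appear together in multi-tier designs: the same solar profile halves grid usage once surplus can be shifted to later slots.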

In the context of UAVs and mobile computing, hybrid approaches combining UAVs and base stations to provide edge computing services in areas with limited infrastructure, as well as competitive energy allocation models and cooperative DNN task offloading, contribute to optimizing energy consumption and reducing latency [14, 16, 19, 21, 33].

Multi-objective optimization is addressed by strategies integrating scheduling, VM migration, offloading, and green energy transfer, aiming to minimize non-renewable energy use, optimize energy and execution time, and balance multiple performance criteria [8, 15, 17, 23, 32, 34].

Furthermore, frameworks combining offloading, user association, and base station shutdown in ultra-dense networks (UDN) have demonstrated significant reductions in energy consumption and in the number of active stations [5].
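The base station shutdown idea can be illustrated with a simple greedy rule: switch off the least-loaded BSs as long as the remaining active ones have enough aggregate capacity to absorb the total load. This is a hypothetical simplification (no coverage, interference, or per-user association constraints, and identical capacities), not the algorithm of [5]; the power values are arbitrary.

```python
def greedy_sleep(loads, capacity, p_active, p_sleep):
    """Put lightly loaded BSs to sleep while the rest can absorb the total load.

    Simplifying assumptions: any user can be re-associated with any active BS,
    and all BSs share the same capacity.
    """
    active = sorted(range(len(loads)), key=lambda i: loads[i])   # least loaded first
    total = sum(loads)
    asleep = []
    for bs in list(active):
        if len(active) == 1:
            break
        remaining = [b for b in active if b != bs]
        if total <= capacity * len(remaining):   # aggregate capacity check only
            active = remaining
            asleep.append(bs)
    power = p_active * len(active) + p_sleep * len(asleep)
    return sorted(active), sorted(asleep), power


print(greedy_sleep([10, 50, 5, 30], capacity=60, p_active=100, p_sleep=10))
# ([1, 3], [0, 2], 220)
```

Here two of four stations sleep and total power drops from 400 to 220 units; practical schemes must additionally check that every user remains within coverage of an active station.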

The evaluation of these approaches is predominantly performed through simulations, using metrics such as total energy consumption, latency, throughput, offloading success rate, energy efficiency, and renewable energy waste. Some studies stand out for practical experiments in real environments, such as [24].

In summary, the most widely used strategies for energy optimization in edge computing involve: (i) machine learning and reinforcement learning for adaptive offloading and scheduling decisions [7, 9, 18, 30]; (ii) heuristics and bio-inspired methods for efficient resource allocation [17, 22, 25, 26, 27]; (iii) multi-layer collaboration and renewable energy use [12, 13, 24, 28]; (iv) multi-objective optimization considering energy, latency, cost, and QoS [8, 15, 17, 23]; and (v) specific solutions for UAVs and mobile edge [14, 16, 19, 21].

These approaches have proven effective in reducing energy consumption, improving reliability, and increasing throughput, but there is still room for advances in sustainability, practical validation, and integration of multiple objectives in real and dynamic environments.

3.3. Architectures and Energy Scaling

The analysis of the selected studies highlights that the underlying architecture plays a fundamental role in energy-aware scheduling decisions in edge computing environments. The studied architectures include UAV-enabled MEC, heterogeneous IoT, collaborative fog-cloud, UDN, renewable-powered serverless edge, and hybrid cloud-edge systems. This diversity imposes unique constraints, specific opportunities, and distinct strategies for energy optimization.

The presence of UAVs as mobile edge servers is one of the most notable trends. These systems require algorithms capable of handling mobility, energy constraints, and dynamic coverage. Works such as [14, 16, 18, 21, 26, 33] show how UAVs need to make joint decisions on trajectory, resource allocation, and energy replenishment to ensure QoS with minimal consumption. For example, Chen et al. [18] propose a model with multiple learners (Triple Learner) to manage UAV trajectory, energy, and applications in a coordinated manner. Similarly, Dai et al. [16] and Yuan et al. [21] demonstrate that the mobile and energy-constrained nature of UAVs imposes unique challenges on scheduling strategies, which do not directly apply to fixed architectures.

Another important group consists of heterogeneous IoT architectures in UDN or industrial environments. In these scenarios, IoT devices are numerous, energy-constrained, and often subject to load spikes and partial mobility. Articles such as [19, 22, 26, 27, 32] show that the density and heterogeneity of IoT devices require intelligent decisions on user association, base station (BS) hibernation, and partial offloading to edge/fog/cloud. For example, Manivannan et al. [22] demonstrate that combined strategies of association and offloading, along with intelligent BS shutdown, can drastically reduce energy consumption in UDNs.

The collaborative fog-cloud and multi-tier architecture emerges as an alternative for scenarios demanding low response time and high energy flexibility. Works such as [8, 12, 13, 28, 31, 34] highlight the importance of layered architectures, in which tasks are dynamically distributed among fog, edge, and cloud. Ma et al.’s GreenEdge [13] integrates green energy through energy harvesting in a multi-tier structure to minimize cloud dependency and reduce energy costs, while Cui et al. [12] show how cloud-edge collaboration can be enhanced through deep learning.

Serverless edge systems powered by renewable energy also appear as an innovation to improve sustainability and reduce failures. The faasHouse [24] exemplifies how the intermittency and volatility of solar energy on serverless edge nodes directly affect scheduling and require new heuristics to maintain reliability.

In all these scenarios, the architecture imposes constraints such as heterogeneity, mobility, energy intermittency, and latency limitations, which require adaptive scheduling algorithms. In the case of MEC enabled by UAVs, it is necessary to simultaneously consider mobility and energy replenishment. In UDNs and heterogeneous IoT, the density and variability of loads require selective shutdown and load balancing. In collaborative fog-cloud or renewable serverless architectures, energy variability and multiple layers make real-time allocation optimization essential.

In summary, studies show that architectures profoundly impact energy-sensitive scheduling strategies: (i) mobile architectures with UAVs impose the need for coordinated trajectory and energy decisions [14, 16, 18, 21, 26]; (ii) heterogeneous and dense environments require adaptive scheduling with selective shutdown of nodes [22, 26, 27]; (iii) collaborative fog-cloud and multi-tier architectures favor multi-objective optimization and the use of green energy [8, 12, 13, 28]; and (iv) renewable serverless environments require energy-aware scheduling focused on availability and reliability [24].

These findings highlight that there is no one-size-fits-all approach for all architectures, and that solutions need to be designed taking into account the specific characteristics of the system—mobility, density, heterogeneity, or sustainability—to achieve true energy efficiency.

3.4. Performance Metrics

As shown in Table 7, the most recurrent metric is total energy consumption, reported in 25 articles. This metric directly measures the impact of scheduling and offloading decisions on the energy consumed by devices, edge servers, or UAVs.

Latency or execution time appears in 19 studies, where it is mainly used to verify that energy reductions do not compromise performance. This reflects the concern with QoS while pursuing energy efficiency.

Throughput, often measured as the number of tasks completed per unit of time or the success rate of offloading, appears in 8 articles as a complementary metric.

Energy efficiency itself, often expressed as a ratio between performance and energy (such as “utility/energy”), is reported in 8 articles, serving as a composite metric to capture the sustainable performance of the systems. Local processing energy, which refers to the consumption associated with executing tasks on the devices themselves, appears in 5 articles and is used to assess the direct computational impact at the network edge.

Other complementary metrics identified include data transmission energy, renewable energy utilization, bandwidth, system stability, carbon footprint, volume of data transmitted, and communication costs. Reported energy savings ranged from approximately 25% to 40% when AI-based approaches were compared to heuristic baselines, according to individual primary studies.
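Because reported gains depend on how the metric is defined, it helps to make the arithmetic explicit. The sketch below (hypothetical helper names, illustrative numbers) shows that a 25% reduction in energy for the same workload corresponds to a 33% increase in a tasks-per-joule efficiency ratio, which is one reason why percentages from different studies should not be compared directly.

```python
def energy_efficiency(completed_tasks, energy_joules):
    """Tasks completed per joule: one common form of a 'utility/energy' ratio."""
    return completed_tasks / energy_joules


def relative_reduction(baseline, proposed):
    """Percentage reduction of a cost metric (energy, latency) versus a baseline."""
    return 100.0 * (baseline - proposed) / baseline


tasks_done = 1000                        # same workload in both runs (illustrative)
baseline_j, proposed_j = 200.0, 150.0    # illustrative energy totals

reduction = relative_reduction(baseline_j, proposed_j)
gain = 100.0 * (energy_efficiency(tasks_done, proposed_j)
                / energy_efficiency(tasks_done, baseline_j) - 1.0)
print(f"energy reduction: {reduction:.1f}%")   # energy reduction: 25.0%
print(f"efficiency gain:  {gain:.1f}%")        # efficiency gain:  33.3%
```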

3.5. Machine Learning (ML) and Artificial Intelligence (AI) Algorithms

Edge computing and cloud environments face critical challenges in energy efficiency and performance due to the explosive growth of mobile applications and IoT devices. Machine Learning (ML) and Artificial Intelligence (AI) techniques stand out for their ability to enable autonomous and adaptive decision-making, particularly in dynamic scenarios characterized by limited computational and energy resources.

Energy-aware offloading and scheduling paradigms are commonly formulated as complex combinatorial optimization problems, many of which are NP-hard. ML and AI approaches, especially reinforcement learning (RL) and deep neural networks, provide mathematical frameworks that transform traditionally static optimization formulations into adaptive learning-based processes capable of responding to environmental dynamics.
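The combinatorial growth behind these formulations is easy to see in a brute-force baseline: with N tasks and a binary local/offload choice per task, there are 2^N candidate plans. The sketch below, using hypothetical per-task costs and an edge capacity limit, enumerates all of them. It illustrates only the exponential size of the search space, not NP-hardness itself, and this particular toy instance could also be solved greedily.

```python
from itertools import product


def brute_force_offloading(local_cost, offload_cost, edge_slots):
    """Exhaustively search all 2**N binary offloading vectors.

    local_cost[i] / offload_cost[i] are hypothetical per-task costs;
    edge_slots is the maximum number of tasks the edge server accepts.
    """
    n = len(local_cost)
    best, best_plan, evaluated = float("inf"), None, 0
    for plan in product((0, 1), repeat=n):   # 1 = offload task i
        evaluated += 1
        if sum(plan) > edge_slots:
            continue                         # violates edge capacity
        cost = sum(o if p else l for p, l, o in zip(plan, local_cost, offload_cost))
        if cost < best:
            best, best_plan = cost, plan
    return best_plan, best, evaluated


print(brute_force_offloading([5, 3, 8, 2], [1, 4, 2, 1], edge_slots=2))
# ((1, 0, 1, 0), 8, 16)
```

Doubling the number of tasks to 8 already requires 256 evaluations and 30 tasks over a billion, which is why learning-based and heuristic policies are attractive at runtime.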

Contemporary computing architectures increasingly integrate terminal devices, edge nodes (e.g., UAVs and RSUs), and cloud infrastructures, forming multi-tier computational ecosystems. In GreenEdge (Joint Green Energy Scheduling and Dynamic Task Offloading in Multi-Tier Edge Computing Systems) [13], the authors report energy efficiency gains in the range of 35–40% achieved through AI-driven vertical coordination policies across multiple layers of the architecture. These values correspond to the experimental results obtained in that study under simulated multi-tier edge scenarios.

Approaches such as Deep Q-Networks (DQN) and Self-Imitation Learning (SIL) enable context-aware offloading decisions by adapting policies to specific environmental conditions. In vehicular edge computing (VEC) scenarios, the SA3FMtR framework (Accelerating DNN Inference with Reliability Guarantee in Vehicular Edge Computing) reports latency reductions of approximately 30% while maintaining reliability levels above 95%, outperforming conventional baselines evaluated in the same experimental setting.

Recent works such as [31] introduce customized DQN architectures with architecture-aware state representations, improving convergence stability and energy savings in MEC environments. Multi-agent coordination has also gained relevance, as demonstrated in [32], where priority-gated MAPPO enables joint optimization of energy and latency under dynamic IIoT workloads. In UAV-assisted MEC, dependency-aware DRL frameworks such as [33] integrate DAG-based task modeling with battery-constrained energy optimization. Hybrid optimization models combining DRL and metaheuristics (e.g., DQN + PSO) further enhance global convergence and scalability in ultra-dense 6G edge scenarios [34].

Algorithms such as Deep Deterministic Policy Gradient (DDPG) dynamically adjust the trade-off between energy consumption and performance, promoting real-time efficiency. In the mUB-MEC system (Towards Energy-Efficient Scheduling of UAV and Base Station Hybrid Enabled MEC), the authors combine LSTM-based mobility prediction with Fuzzy C-Means clustering and report reductions of approximately 25% in total energy consumption compared to non-predictive scheduling strategies.

Models based on Markov Decision Processes (MDPs) and convex optimization techniques enable the joint optimization of latency, energy consumption, and operational costs. The DELTa algorithm (Dynamic Energy-and-Latency-aware Task Scheduling for Fog–Cloud Paradigm) reports improvements of around 20% in Quality of Service (QoS) and 15% in energy efficiency relative to heuristic baselines evaluated in fog–cloud environments. Similarly, in SDN-based IIoT scenarios, the study Joint Computation Offloading and Resource Allocation in Green MEC-Assisted Software-Defined Island Internet of Things reports that integrating Q-learning with centralized control mechanisms enables efficient resource allocation under strict energy constraints, sustaining operational efficiency in scenarios with up to 1000 connected devices.
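A minimal value-iteration sketch for a hypothetical battery-aware offloading MDP illustrates how such formulations yield a state-dependent policy. States are battery levels, one energy unit is harvested per slot, and each action has an assumed energy cost and reward; none of these values come from DELTa or the other cited works.

```python
# Hypothetical battery-aware offloading MDP (all numbers are illustrative).
MAX_B = 3        # battery levels 0..3
HARVEST = 1      # energy units harvested per slot
ACTIONS = {      # action: (energy units needed, immediate reward)
    "local": (2, 1.0),
    "offload": (1, 0.6),   # cheaper in energy, penalised for added latency
    "drop": (0, -1.0),     # task discarded
}


def feasible(b):
    """Actions whose energy requirement fits the current battery level."""
    return [a for a, (cost, _) in ACTIONS.items() if cost <= b]


def step(b, a):
    """Deterministic transition: spend energy, harvest, cap at MAX_B."""
    cost, r = ACTIONS[a]
    return min(MAX_B, b - cost + HARVEST), r


def value_iteration(gamma=0.9, tol=1e-9):
    v = {b: 0.0 for b in range(MAX_B + 1)}
    while True:
        # Bellman optimality backup over feasible actions.
        new_v = {b: max(r + gamma * v[nb]
                        for nb, r in (step(b, a) for a in feasible(b)))
                 for b in v}
        delta = max(abs(new_v[b] - v[b]) for b in v)
        v = new_v
        if delta < tol:
            break
    policy = {b: max(feasible(b),
                     key=lambda a: step(b, a)[1] + gamma * v[step(b, a)[0]])
              for b in v}
    return v, policy


values, policy = value_iteration()
print(policy)   # {0: 'drop', 1: 'offload', 2: 'local', 3: 'local'}
```

With these numbers, offloading acts as an energy-saving fallback at low battery, while local execution is preferred once enough energy is stored.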

Finally, solutions such as PDCO (Parallel Deep Learning-driven Cooperative Offloading) integrate renewable energy awareness through predictive LSTM models and report an approximately 30% reduction in reliance on conventional energy sources. In addition, the study on sustainable offloading reports that reinforcement learning-based policies can extend device lifetime by up to 40% within its own experimental setup.

All numerical percentages referring to the frequency of methods, architectures, metrics, or AI adoption were computed directly from the set of 26 primary studies included in the quantitative synthesis.

In contrast, reported performance improvements (e.g., 25–40% energy reduction or 30% latency decrease) correspond exclusively to values explicitly stated in individual primary studies under their respective experimental configurations. No cross-study averaging or statistical meta-analysis was performed.
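As an illustration of the frequency computation described above, and not of the review's actual extraction data, the sketch below derives study-level counts and percentages from a hypothetical coding table in which each primary study is represented as a set of labels. The function name and labels are assumptions. Because a study can carry several labels (e.g., both an energy and a latency metric), percentages across labels need not sum to 100%; values are rounded to one decimal place.

```python
from collections import Counter


def study_frequencies(studies: list[set[str]]) -> dict[str, tuple[int, float]]:
    """Count how many studies carry each label and express it as a percentage."""
    if not studies:
        raise ValueError("at least one coded study is required")
    n = len(studies)
    # Each study is a set, so a label is counted at most once per study
    counts = Counter(label for study in studies for label in study)
    return {label: (k, round(100 * k / n, 1)) for label, k in sorted(counts.items())}
```

For instance, if 12 of 26 hypothetical studies were coded as DRL-based, the function reports `(12, 46.2)` for that label.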

4. Discussion

The systematic analysis of the 32 selected articles revealed notable patterns in energy optimization strategies, evaluation metrics, and the application of AI/ML techniques in distributed systems. The results were organized according to the four research questions (RQs), allowing a stratified view of the state of the art.

Regarding RQ1, on green scheduling and task offloading strategies, among the 30 primary studies included in the quantitative synthesis, 26 (86.7%) adopted approaches based on artificial intelligence, with reinforcement learning algorithms (DRL, DQN) being the most common family, used in 12 studies. Individual primary studies reported superior performance for these techniques in dynamic environments, with energy reductions typically ranging between 25% and 40% relative to their respective heuristic baselines. However, reporting practices diverged considerably: among the 20 simulation-based primary studies, only 7 (35%) provided complete details on neural network architectures or training policies.

RQ2: How do different architectures (such as UAVs, IoT, MEC, fog-cloud) influence energy-aware scheduling decisions?

The analysis of RQ2, which investigates the influence of different architectures, revealed that among the eight primary studies specifically addressing UAV-MEC architectures, seven (87.5%) required hybrid approaches combining trajectory planning with resource allocation. Fog-cloud environments, on the other hand, showed greater methodological maturity, with standardized load-balancing solutions present in four of the five examined studies. A relevant finding was a positive correlation between architectural complexity and the adoption of AI techniques (Pearson r = 0.72, p < 0.05). This coefficient was computed by mapping each primary study to two variables, (i) architectural complexity, quantified by the number of interacting layers and mobile entities (e.g., UAVs, IoT devices, edge servers), and (ii) the presence of AI-based decision mechanisms, and then correlating them across the set of primary studies addressing architectural aspects.
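Because variable (ii) is binary (AI-based mechanism present or absent), the Pearson coefficient computed this way is equivalent to the point-biserial correlation. The sketch below shows the computation on invented complexity scores and AI flags; the data are hypothetical and do not reproduce the review's coding. It also returns the usual test statistic t = r·sqrt((n − 2)/(1 − r²)); obtaining the p-value additionally requires the Student t distribution with n − 2 degrees of freedom (for example, via SciPy's `scipy.stats.pearsonr`), which is outside the standard library and is omitted here.

```python
from math import sqrt


def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation; with a 0/1 variable this equals the point-biserial r."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("x and y must have the same length, at least 2")
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def t_statistic(r: float, n: int) -> float:
    """t statistic for H0: rho = 0, with n - 2 degrees of freedom."""
    return r * sqrt((n - 2) / (1 - r ** 2))


# Hypothetical per-study data: complexity score and AI-based mechanism flag
complexity = [1, 2, 2, 3, 3, 4]
uses_ai = [0, 0, 1, 1, 1, 1]
r = pearson(complexity, uses_ai)
t = t_statistic(r, len(complexity))
```

For these invented values r ≈ 0.74; with only six studies, such a coefficient says little without its p-value, which is why the review reports both.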

Regarding evaluation metrics, total energy consumption was found to be the predominant metric (present in 21 articles), followed by latency (16) and throughput (8). It is worth noting that only 5 out of the 26 primary studies (19.2%) explicitly included sustainability-oriented metrics such as carbon footprint or renewable energy utilization.

Concerning the role of AI/ML, deep reinforcement learning algorithms were applied mainly to (1) offloading decisions (68% of the cases), (2) mobility prediction (22%), and (3) resource allocation (10%). However, 12 of the 26 primary studies (46.2%) did not explicitly discuss the intrinsic energy cost of training and operating AI models.

5. Challenges and Future Directions

The conducted review highlights significant gaps in the current literature and points to directions for future research. One of the main challenges identified is the practical validation of the proposed solutions: only 11% of the analyzed studies used testbeds or real-world implementations, such as urban vehicular networks or edge computing platforms. Therefore, future investigations should prioritize experiments in real scenarios to ensure the robustness and applicability of scheduling and offloading strategies.

Another critical aspect is the standardization of metrics for evaluating energy efficiency. Currently, there is substantial heterogeneity in the indicators used, and few studies consider sustainability dimensions such as carbon footprint, renewable energy use, or the indirect costs associated with AI/ML techniques. It is recommended to adopt consolidated frameworks, such as GreenAI, to enable fair comparisons and quantify environmental impacts.

From a methodological perspective, there is an opportunity to develop hybrid approaches that combine traditional analytical techniques, such as convex optimization, with adaptive AI/ML. Such approaches could generalize better across architectures (e.g., from UAV-MEC to IIoT) while remaining computationally efficient, relying on lightweight algorithms capable of running on resource-constrained devices.

Security and resilience issues also remain underexplored: only two studies analyzed vulnerabilities in AI-based offloading policies. Future research should investigate mechanisms for protection against adversarial attacks and failures in autonomous decision-making.

In addition, only 18% of the studies explicitly analyzed the integration with renewable energy sources. There is room to explore predictive models of solar or wind energy availability in edge servers and hybrid management strategies that balance conventional and green sources for greater sustainability.

Finally, several practical recommendations emerge from this analysis: regulatory bodies should develop standards and policies to promote sustainable edge computing and encourage the use of renewable energy; the industry should adopt holistic evaluation frameworks that consider both performance and environmental impacts of AI-based solutions; and the scientific community should invest in creating open repositories with standardized datasets and parameters to enable reproducibility of studies.

In summary, although this review has confirmed the potential of advanced techniques to improve energy efficiency in distributed systems, there remains a need for more balanced approaches that combine methodological rigor, sustainability, and practical applicability. Future advances should focus on translating theoretical knowledge into concrete solutions capable of addressing the energy challenges posed by ubiquitous computing on a global scale.

6. Threats to Validity

As with any Systematic Literature Review, this study is subject to potential threats to validity, which are discussed according to four commonly adopted categories: construct validity, internal validity, external validity, and reliability.

6.1. Construct Validity

Construct validity threats relate to how well the selected concepts, metrics, and classifications represent the phenomena under investigation. In this review, threats may arise from the interpretation of terms such as green, sustainable, and energy-efficient, which are sometimes used inconsistently across the literature. To mitigate this issue, the study focused on objective indicators—such as energy consumption, latency, and energy efficiency metrics—rather than relying solely on authors’ terminology. Additionally, the classification of strategies and AI/ML techniques was based on explicit descriptions provided in the primary studies.

6.2. Internal Validity

Internal validity concerns potential biases in the study selection and data extraction processes. Although a systematic protocol was followed, including predefined research questions, inclusion and exclusion criteria, and a structured screening process supported by the Parsifal tool, there remains a risk of subjective bias during study selection and interpretation. To reduce this risk, clear eligibility criteria were applied consistently, and data extraction was guided by predefined forms aligned with the research questions.

6.3. External Validity

External validity refers to the generalizability of the findings beyond the analyzed studies. The results of this review are inherently limited to the set of selected articles and the contexts they address, such as edge computing, IoT, UAV-assisted MEC, and fog–cloud architectures. While the review identifies general trends and patterns, the applicability of specific conclusions to other distributed systems or emerging architectures should be considered with caution.

6.4. Reliability

Reliability threats concern the reproducibility of the review process and results. Although the search strings, databases, inclusion/exclusion criteria, and analysis procedures were defined and documented, some degree of interpretation was necessary when categorizing strategies, architectures, and evaluation metrics. To enhance reliability, the review process was systematically documented, and the classification decisions were made based on explicit information reported in the primary studies. Nevertheless, alternative interpretations or classifications may be possible.

7. Conclusions

The main objective of this work was to systematically review the literature on green scheduling and task offloading strategies aimed at optimizing energy consumption in distributed edge computing environments. By analyzing 32 selected articles, the study provided a broad perspective on the current state of the art, revealing key trends, methodological patterns, and persistent gaps in the field.

The results indicate that the most effective strategies for energy efficiency often combine adaptive machine learning techniques, bio-inspired heuristics, multi-layer collaborative approaches, and the integration of renewable energy sources. Additionally, the analysis shows that different architectural contexts—such as UAV-enabled MEC, heterogeneous IoT, collaborative fog-cloud, and green-energy serverless edge—present unique challenges and opportunities, requiring tailored solutions to improve efficiency while maintaining quality of service.

Another notable finding concerns the diversity of evaluation metrics, with total energy consumption and latency being the most frequently adopted. This reflects the ongoing effort to balance performance with energy savings. Furthermore, artificial intelligence and machine learning algorithms play a central role in enabling more dynamic, intelligent, and autonomous scheduling and offloading mechanisms.

Despite recent progress, further research is needed in dynamic and heterogeneous real-world scenarios. Approaches that jointly address multiple objectives, such as performance, sustainability, and resilience, remain underexplored and represent promising avenues for future work. Future research should prioritize (i) large-scale experimental validation using real-world edge testbeds, (ii) standardized energy and sustainability benchmarks, and (iii) lightweight AI/ML models explicitly designed for deployment on resource-constrained edge devices.