Journalists’ Perceptions of Artificial Intelligence and Disinformation Risks

Abstract

1. Introduction

2. Materials and Methods

3. Results

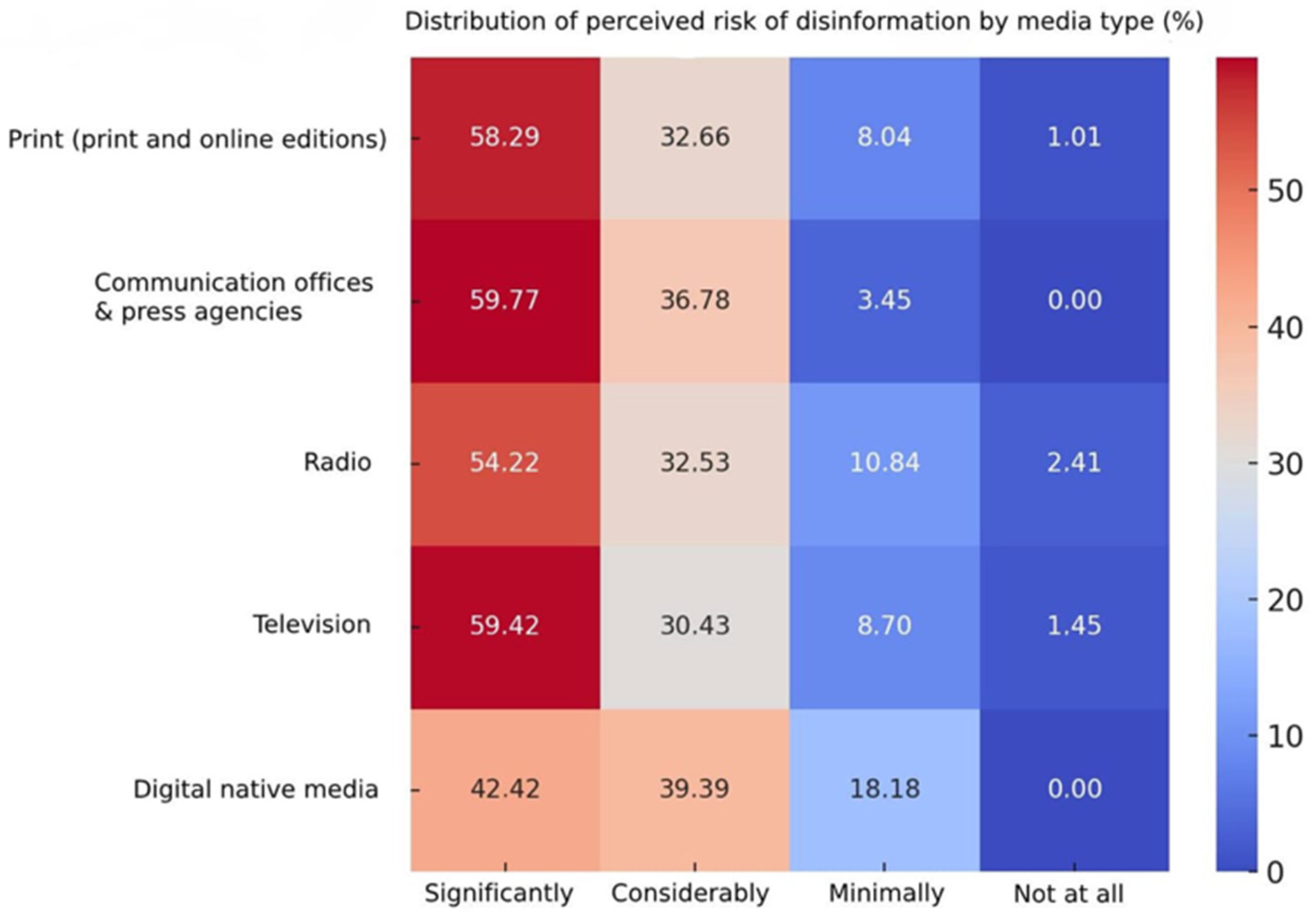

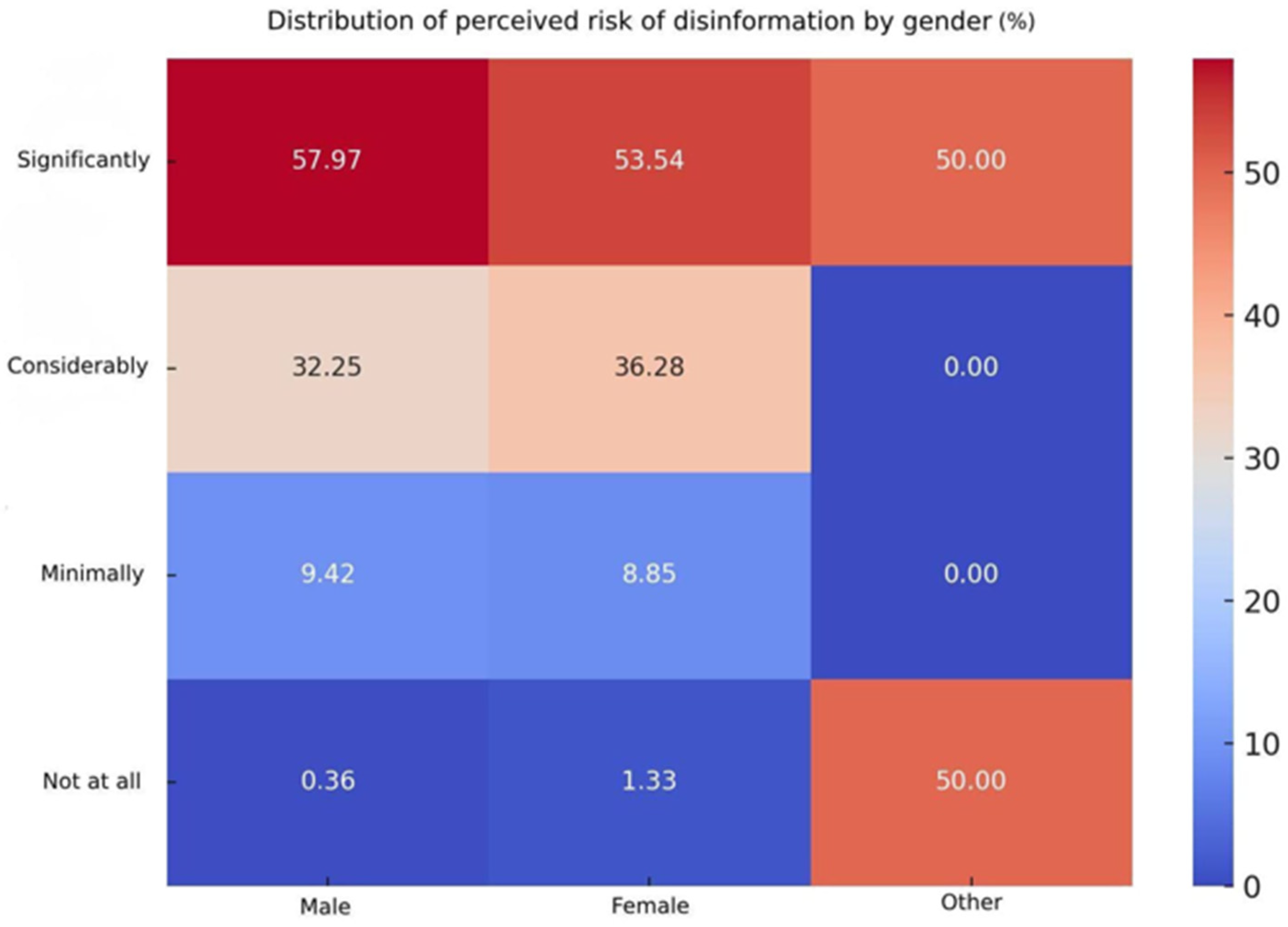

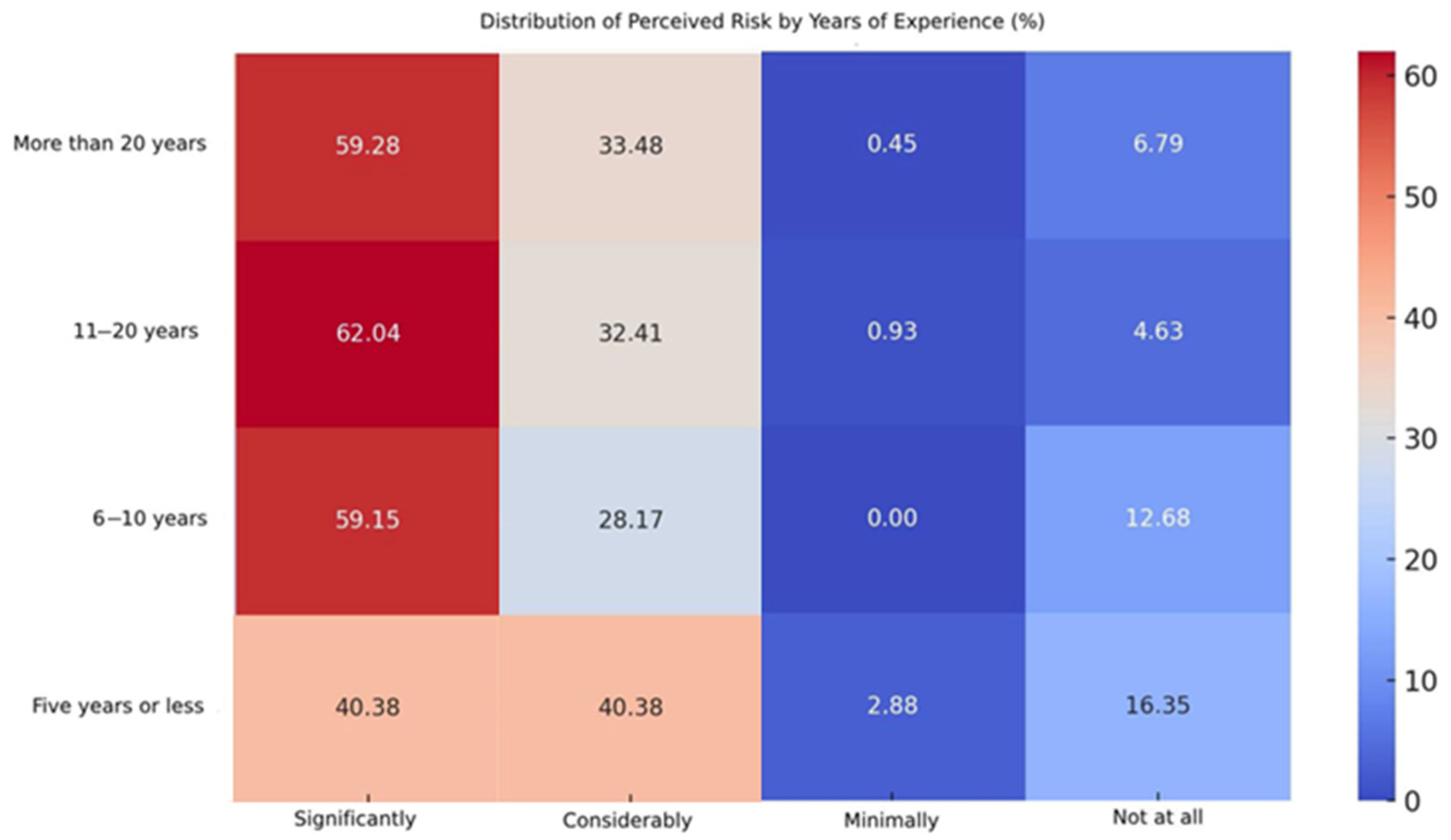

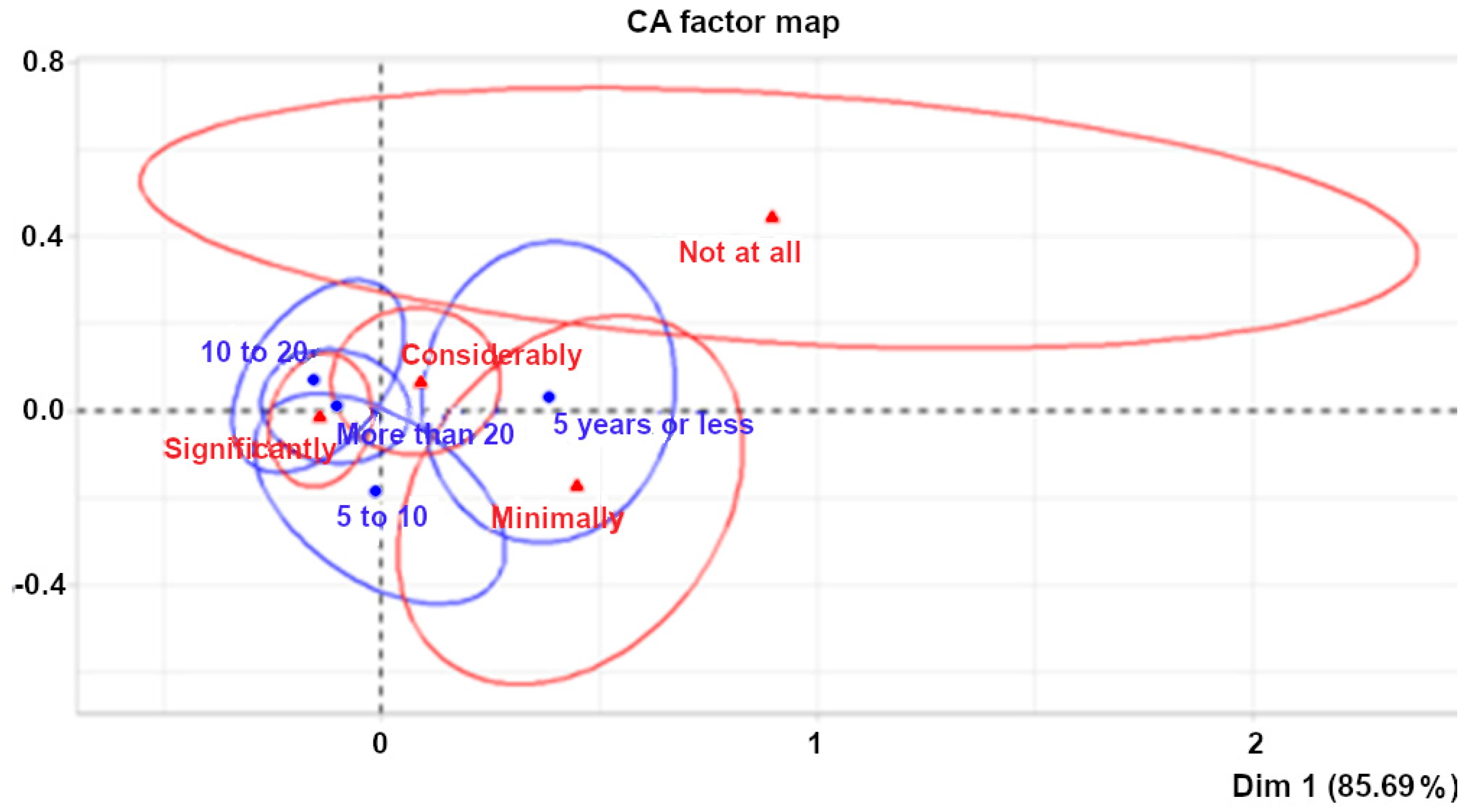

3.1. Journalists’ Perceptions of Disinformation Associated with AI

3.2. Perceived Risks of AI Use Among Basque Journalists

4. Discussion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

| Risk Category | Count | Percentage |
|---|---|---|
| Difficulty identifying false content and deepfakes | 382 | 37.90% |
| Obtaining inaccurate or erroneous content/data | 336 | 33.33% |
| Being a victim of criminal uses (scams and skimming) | 117 | 11.61% |
| Biases due to data origin (gender, social class, etc.) | 109 | 10.81% |
| None other | 64 | 6.35% |
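
The percentages in the table are consistent with dividing each count by the total number of mentions (1008). The following is a minimal Python sketch of that arithmetic, assuming the denominator is simply the sum of the listed counts (the source does not state it explicitly). The names `risk_counts` and `shares` are illustrative and do not come from the study.

```python
# Counts copied from the risk-category table.
risk_counts = {
    "Difficulty identifying false content and deepfakes": 382,
    "Obtaining inaccurate or erroneous content/data": 336,
    "Being a victim of criminal uses (scams and skimming)": 117,
    "Biases due to data origin (gender, social class, etc.)": 109,
    "None other": 64,
}


def shares(counts):
    """Return each count as a percentage of the total, rounded to two decimals."""
    total = sum(counts.values())  # assumed denominator: all mentions combined
    return {label: round(100 * n / total, 2) for label, n in counts.items()}


risk_shares = shares(risk_counts)
for label, pct in risk_shares.items():
    print(f"{label}: {pct:.2f}%")
```

Under this assumption the recomputed shares agree with the reported percentages to two decimal places.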

| Risk Pair | Count | Percentage |
|---|---|---|
| Difficulty identifying false content and deepfakes paired with obtaining inaccurate or erroneous content/data | 253 | 50.50% |
| Difficulty identifying false content and deepfakes paired with being a victim of criminal uses (scams, skimming) | 69 | 13.77% |
| Obtaining inaccurate or erroneous content/data paired with biases due to data origin (gender, social class, etc.) | 58 | 11.58% |
| Difficulty identifying false content and deepfakes paired with biases due to data origin (gender, social class, etc.) | 40 | 7.99% |
| Obtaining inaccurate or erroneous content/data paired with being a victim of criminal uses (scams, skimming) | 17 | 3.39% |
| Difficulty identifying false content and deepfakes paired with none other | 20 | 3.99% |
| Being a victim of criminal uses (scams, skimming) paired with none other | 25 | 4.99% |
| Obtaining inaccurate or erroneous content/data paired with none other | 8 | 1.60% |
| Biases due to data origin (gender, social class, etc.) paired with none other | 5 | 1.00% |
| Biases due to data origin (gender, social class, etc.) paired with being a victim of criminal uses (scams, skimming) | 6 | 1.20% |
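
The pair counts can be related back to the single-risk counts by adding up, for each category, every pair in which it appears. The sketch below illustrates that aggregation in Python, assuming each listed pair contributes one mention to each of its two categories. The short labels and the helper `mentions_per_category` are illustrative and do not come from the study.

```python
from collections import Counter

# Pair counts copied from the risk-pair table; the short labels are
# illustrative stand-ins for the table's full category names.
pair_counts = {
    ("deepfakes", "inaccurate"): 253,
    ("deepfakes", "criminal"): 69,
    ("inaccurate", "biases"): 58,
    ("deepfakes", "biases"): 40,
    ("inaccurate", "criminal"): 17,
    ("deepfakes", "none"): 20,
    ("criminal", "none"): 25,
    ("inaccurate", "none"): 8,
    ("biases", "none"): 5,
    ("biases", "criminal"): 6,
}


def mentions_per_category(pairs):
    """Sum, for every category, the counts of all pairs that include it."""
    totals = Counter()
    for (first, second), n in pairs.items():
        totals[first] += n
        totals[second] += n
    return dict(totals)


category_totals = mentions_per_category(pair_counts)
print(category_totals)
```

Under this assumption the four substantive categories sum to 382, 336, 117 and 109 mentions, matching the single-risk table. The "none other" pairs sum to 58, whereas the single-risk table lists 64; the source does not explain the difference.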

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Peña-Alonso, U.; Peña-Fernández, S.; Meso-Ayerdi, K. Journalists’ Perceptions of Artificial Intelligence and Disinformation Risks. Journal. Media 2025, 6, 133. https://doi.org/10.3390/journalmedia6030133
