Deep Representation Learning for Cluster-Level Time Series Forecasting

Abstract

1. Introduction

2. Background

2.1. A Brief Review of Common Time Series Forecasting Techniques

- Statistical models, which explicitly describe time series patterns using domain knowledge.

- Deep-learning-based models, which learn temporal dynamics in a purely data-driven way, without an explicit model formulation.
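To make the first family concrete, the sketch below fits an autoregressive AR(p) model, one of the simplest explicit statistical formulations, by ordinary least squares and then forecasts recursively. It is an illustration only, assuming a univariate series held in a NumPy array; `fit_ar` and `forecast_ar` are hypothetical helper names, not code from this paper.

```python
import numpy as np


def fit_ar(series: np.ndarray, p: int) -> np.ndarray:
    """Fit an AR(p) model with intercept by ordinary least squares.

    Returns [c, phi_1, ..., phi_p] for y[t] = c + sum_k phi_k * y[t-k] + e[t].
    """
    series = np.asarray(series, dtype=float)
    # Each design-matrix row is [1, y[t-1], ..., y[t-p]] for the target y[t].
    X = np.array([np.r_[1.0, series[t - p:t][::-1]] for t in range(p, len(series))])
    y = series[p:]
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef


def forecast_ar(series: np.ndarray, coef: np.ndarray, steps: int) -> np.ndarray:
    """Recursive multi-step forecast: each prediction is fed back as a lag."""
    p = len(coef) - 1
    history = list(np.asarray(series, dtype=float))
    preds = []
    for _ in range(steps):
        lags = np.array(history[-p:][::-1])  # most recent value first
        nxt = float(coef[0] + coef[1:] @ lags)
        preds.append(nxt)
        history.append(nxt)
    return np.array(preds)


# Usage on a noise-free AR(1) series y[t] = 0.5 + 0.8 * y[t-1].
y = [1.0]
for _ in range(30):
    y.append(0.5 + 0.8 * y[-1])
coef = fit_ar(np.array(y), p=1)  # approximately [0.5, 0.8]
print(forecast_ar(np.array(y), coef, steps=3))
```

A deep-learning model in the second family would instead learn the mapping from past values to future values from data, without the fixed linear form assumed here.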

2.2. A Brief Review of Time Series Clustering Techniques
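Time series clustering often replaces the Euclidean distance with dynamic time warping (DTW), which aligns series that are locally shifted or stretched in time. DBA K-Means, one of the clustering techniques compared in the results table, averages series under DTW (DBA stands for DTW Barycenter Averaging). The sketch below implements the textbook dynamic-programming recurrence; the function name `dtw_distance`, the squared-difference local cost, and the absence of a warping window are assumptions made for illustration, not details taken from the paper.

```python
import numpy as np


def dtw_distance(a, b) -> float:
    """Dynamic time warping distance between two 1-D series.

    Uses a squared-difference local cost and no warping-window constraint,
    and returns the square root of the minimal accumulated cost.
    """
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    n, m = len(a), len(b)
    # acc[i, j] = cheapest cost of aligning a[:i] with b[:j].
    acc = np.full((n + 1, m + 1), np.inf)
    acc[0, 0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            cost = (a[i - 1] - b[j - 1]) ** 2
            # Extend the cheapest of a vertical, horizontal, or diagonal step.
            acc[i, j] = cost + min(acc[i - 1, j], acc[i, j - 1], acc[i - 1, j - 1])
    return float(np.sqrt(acc[n, m]))


# A time-stretched copy of a ramp has zero DTW distance, although the two
# series have different lengths and could not be compared point by point.
print(dtw_distance([0, 1, 2, 3], [0, 0, 1, 2, 3]))  # prints 0.0
```

Because the diagonal path is always admissible, DTW between two equal-length series is never larger than their Euclidean distance under this cost.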

3. Methodology

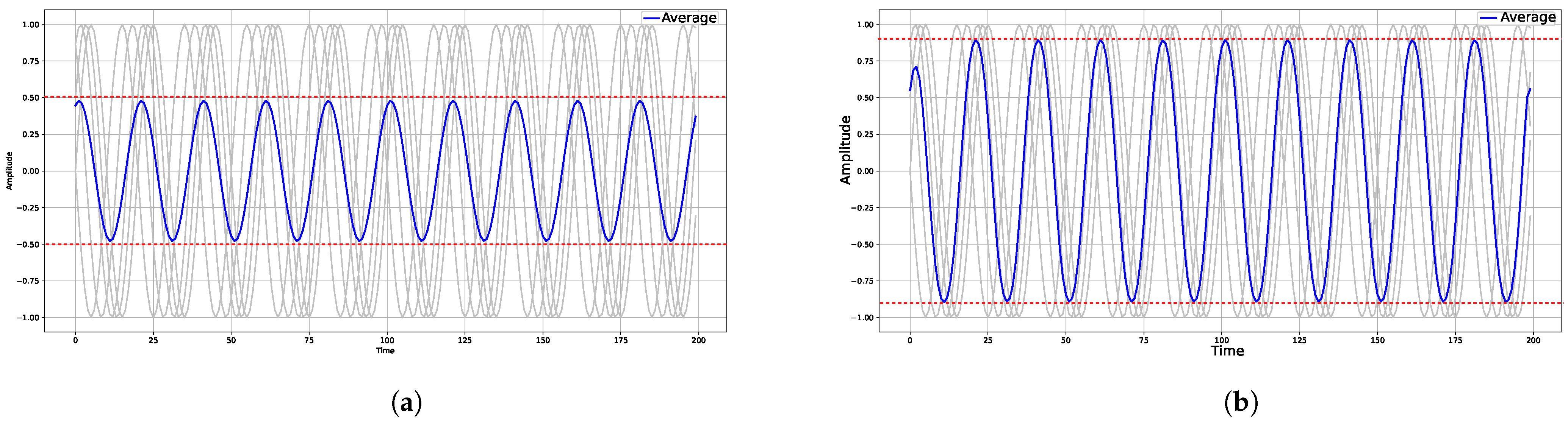

3.1. Proposed Network Architecture

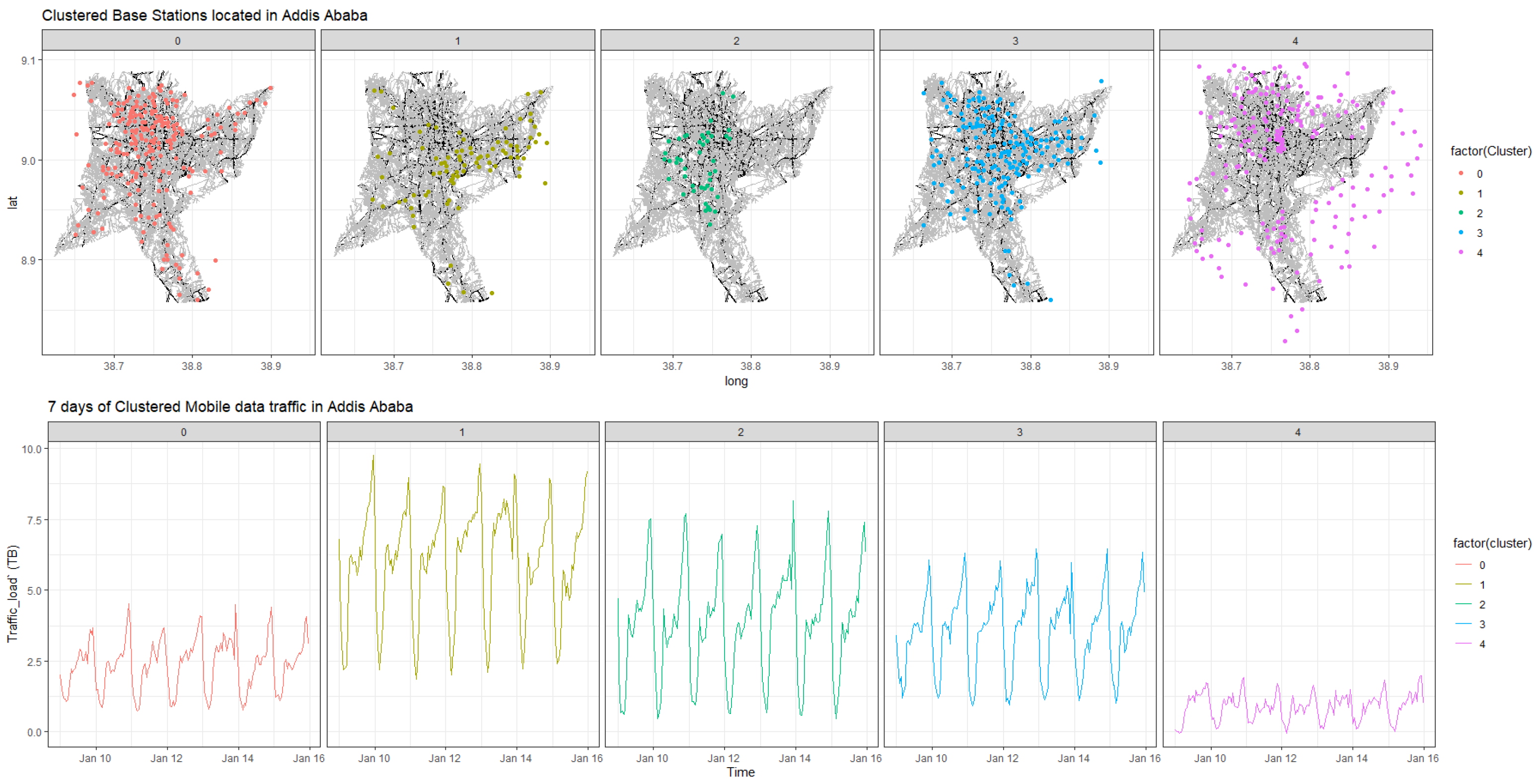

3.2. Datasets

3.3. Experimental Setup

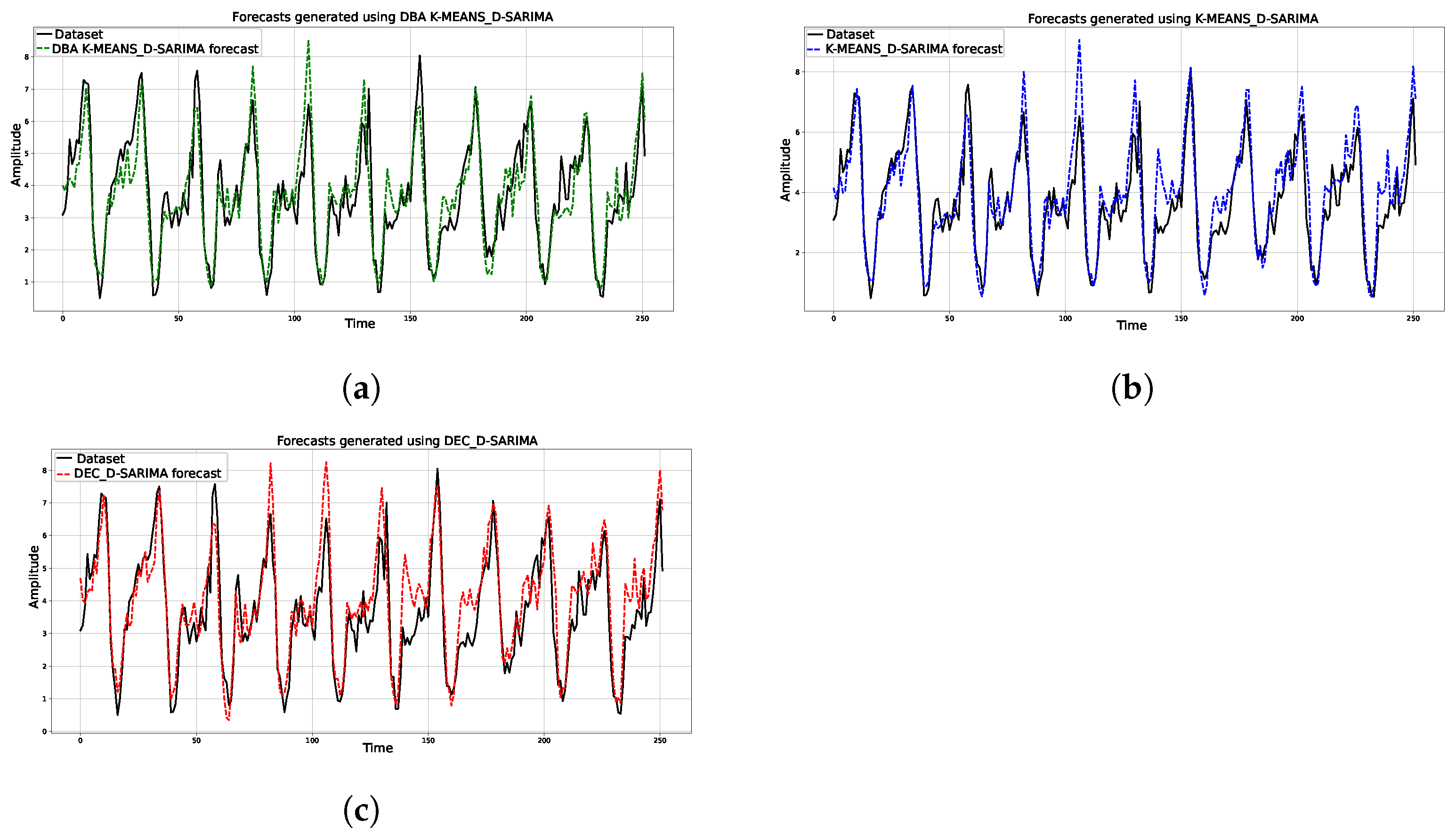

4. Experimental Results and Discussion

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

| Technique | Metric | Cluster 0 | Cluster 1 | Cluster 2 | Cluster 3 | Cluster 4 | Average |
|---|---|---|---|---|---|---|---|
| DEC_CS | RMSE | 1.104 | 1.050 | 0.409 | 1.524 | 0.863 | 0.990 |
| | MAE | 0.816 | 0.778 | 0.305 | 1.145 | 0.671 | 0.743 |
| DEC_Clust_BS | RMSE | 1.417 | 1.205 | 0.426 | 1.867 | 0.992 | 1.181 |
| | MAE | 1.092 | 0.932 | 0.308 | 1.158 | 0.758 | 0.909 |
| DBA K-Means_CS | RMSE | 0.714 | 2.129 | 1.079 | 0.416 | 1.468 | 1.161 |
| | MAE | 0.524 | 1.715 | 0.789 | 0.318 | 1.156 | 0.901 |
| DBA_K-Means_Clust_BS | RMSE | 0.868 | 2.012 | 1.205 | 0.330 | 1.652 | 1.214 |
| | MAE | 0.664 | 0.565 | 0.919 | 0.239 | 1.288 | 0.935 |
| K-Means_CS | RMSE | 1.131 | 0.938 | 0.389 | 1.774 | 1.906 | 1.228 |
| | MAE | 0.859 | 0.739 | 0.293 | 1.452 | 1.522 | 0.973 |
| K-Means_Clust_BS | RMSE | 1.263 | 0.961 | 0.388 | 1.664 | 1.993 | 1.254 |
| | MAE | 0.968 | 0.735 | 0.282 | 1.289 | 1.562 | 0.967 |
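The table reports root mean squared error (RMSE) and mean absolute error (MAE) for each cluster. As a hedged sketch, assuming the standard definitions of both metrics (the evaluation code is not given here), the snippet below computes them with NumPy and checks that the Average entry of the DEC_CS RMSE row equals an unweighted mean over the five clusters; that averaging rule is our reading of the table, not something the text states. The helper names `rmse` and `mae` are illustrative.

```python
import numpy as np


def rmse(y_true, y_pred) -> float:
    """Root mean squared error."""
    err = np.asarray(y_true, dtype=float) - np.asarray(y_pred, dtype=float)
    return float(np.sqrt(np.mean(err ** 2)))


def mae(y_true, y_pred) -> float:
    """Mean absolute error."""
    err = np.asarray(y_true, dtype=float) - np.asarray(y_pred, dtype=float)
    return float(np.mean(np.abs(err)))


# Per-cluster RMSE values of the DEC_CS row, copied from the table above.
dec_cs_rmse = [1.104, 1.050, 0.409, 1.524, 0.863]
# Unweighted mean over the five clusters, rounded like the table entries.
print(round(float(np.mean(dec_cs_rmse)), 3))
```

RMSE squares each error before averaging, so it weights large misses more heavily than MAE; this is why every RMSE entry in the table is at least as large as the MAE entry for the same cluster.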


© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Debella, T.T.; Shawel, B.S.; Devanne, M.; Weber, J.; Woldegebreal, D.H.; Pollin, S.; Forestier, G. Deep Representation Learning for Cluster-Level Time Series Forecasting. Eng. Proc. 2022, 18, 22. https://doi.org/10.3390/engproc2022018022