1. Introduction

Tennis is one of the most physically and psychologically demanding individual sports. Fluctuating intensity, momentum shifts, and decisive points such as break points impose considerable stress on athletes. Quantifying and classifying match stress is therefore critical for training design, injury prevention, and psychological preparation. Traditional sports analytics has relied on regression models and handcrafted indicators. Although effective in limited settings, such methods struggle to capture nonlinear dependencies among features such as serve efficiency, rally length, and break point dynamics. Deep learning models, including long short-term memory (LSTM) networks, bidirectional LSTM (BiLSTM), CNN-LSTM models that combine convolutional and recurrent layers, and attention-based LSTM, offer stronger predictive capability but often suffer from limited interpretability, which restricts their practical adoption in coaching applications.

To address this limitation, we employ TabNet, a deep tabular learning architecture that uses sequential attention for feature selection and is therefore intrinsically interpretable. Feature attributions are further analyzed with SHapley Additive exPlanations (SHAP), which allows us to verify that the models rely on meaningful match statistics rather than spurious correlations. We constructed a binary classification framework that distinguishes high-stress from low-stress Association of Tennis Professionals (ATP) matches, where high stress is defined by long match duration or frequent break points. A comparative evaluation of TabNet, LSTM, BiLSTM, Attention-LSTM, and CNN-LSTM shows that TabNet achieves the highest accuracy (98%), while the recurrent models remain competitive (>93%). SHAP analysis identifies break point dynamics and match duration as the decisive factors, with serve and return features providing secondary contributions.

Using the developed framework, we performed interpretable tennis match stress classification. The study benchmarks TabNet against sequential deep learning models, allowing a clear comparison of performance across architectures. SHAP-based analysis then identifies the match statistics that contribute most to stress classification, improving transparency and interpretability. Finally, the work demonstrates a practical application of interpretable deep learning in sports analytics, showing how such models can deliver both accurate predictions and meaningful insights into player performance and match dynamics.

2. Related Works

Sports analytics has long examined performance indicators such as rally length, service efficiency, and break points to assess competitive outcomes and player fatigue. Lisi et al. [1] analyzed the statistical distribution of rally lengths in professional tennis, finding that extended rallies increase exertion and the likelihood of performance decline. Amatori et al. [2] investigated recreational players and reported measurable reductions in sprint and jump performance after match play, underscoring the physiological toll of sustained competition. With the advent of deep learning, researchers have modeled sequential dependencies in match dynamics. Jiang and Yuan [3] applied LSTM networks to forecast tennis match trends, while Lerner et al. [4] developed DeepTennis, an LSTM-based tool for predicting outcomes during play. Zhang [5] extended this line of work to racket sports by combining convolutional layers with LSTM units, enabling local technical actions to be modeled alongside temporal context. In parallel, methods for interpreting model predictions have advanced. Lundberg and Lee [6] introduced SHAP to provide consistent feature attributions, and Arık and Pfister [7] proposed TabNet, which was later extended by Si et al. [8] to better capture salient features in tabular data. Collectively, these studies demonstrate how deep learning and explainable AI are reshaping modern sports analytics.

3. Proposed Method

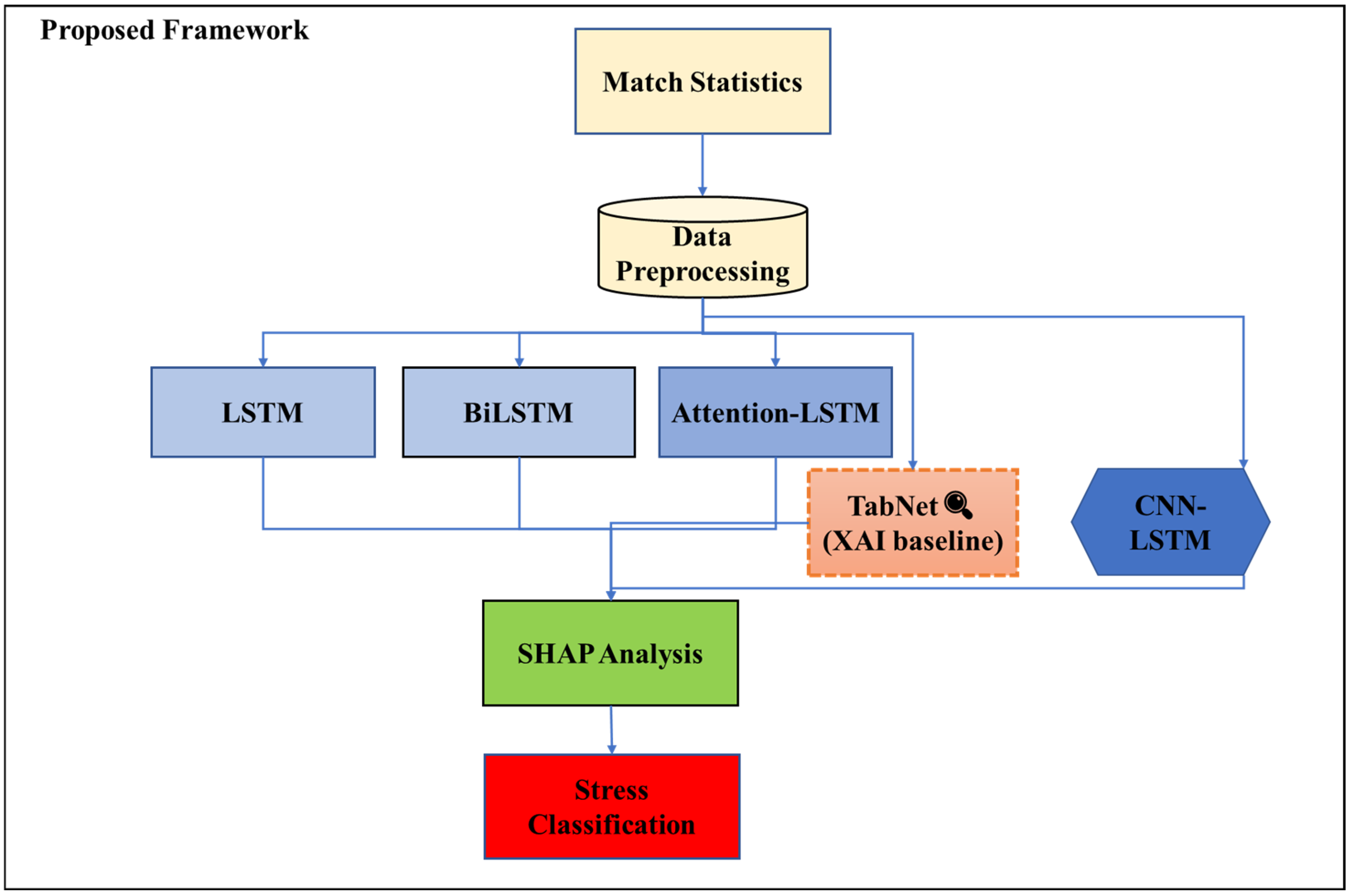

We developed a deep learning framework to classify high-stress tennis matches and identify decisive performance determinants. Five architectures are employed, namely TabNet, LSTM, BiLSTM, CNN-LSTM, and Attention-LSTM, each addressing different aspects of feature dependencies, temporal patterns, and interpretability (Figure 1).

3.1. Data Preprocessing

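The dataset was obtained from the ATP match records of the 2000 season. Each sample includes statistical and contextual variables such as match duration, break points faced and saved, aces, first-serve percentage, double faults, and player background information (age, rank, surface, and round). High-stress matches are defined as follows:

$$
y_i =
\begin{cases}
1 \ (\text{HighStress}), & \text{if } \mathrm{minutes}_i > \operatorname{median}(\mathrm{minutes}) \ \text{or} \ \mathrm{w\_bpFaced}_i > 5,\\
0 \ (\text{LowStress}), & \text{otherwise,}
\end{cases}
$$

where $\mathrm{minutes}_i$ is the duration of match $i$ and $\mathrm{w\_bpFaced}_i$ is the number of break points faced by its winner.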
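The labeling rule stated in Section 4.1 (duration above the median, or more than five break points faced by the winner) can be sketched as follows. This is a minimal illustration, not the authors' code: it assumes each match is a dict keyed by the tennis_atp column names `minutes` and `w_bpFaced`, that rows with missing statistics have already been dropped, and that the median is taken over all matches being labeled. `label_stress` is a hypothetical helper.

```python
from statistics import median


def label_stress(matches, bp_threshold=5):
    """Return 1 (HighStress) or 0 (LowStress) for each match.

    Assumption: `matches` is a list of dicts with tennis_atp-style keys
    "minutes" and "w_bpFaced"; the median is computed over these matches.
    """
    med = median(m["minutes"] for m in matches)
    return [
        1 if (m["minutes"] > med or m["w_bpFaced"] > bp_threshold) else 0
        for m in matches
    ]


if __name__ == "__main__":
    # Hypothetical toy matches (median duration = 112.5 minutes).
    toy = [
        {"minutes": 80, "w_bpFaced": 2},
        {"minutes": 95, "w_bpFaced": 7},
        {"minutes": 130, "w_bpFaced": 3},
        {"minutes": 150, "w_bpFaced": 9},
    ]
    print(label_stress(toy))  # [0, 1, 1, 1]
```

Note that the two conditions are joined by "or", so a short match can still be labeled HighStress when the winner faced many break points, as in the second toy match.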

To mitigate feature-scale bias, all numeric variables were standardized using Z-score normalization [9]:

$$
z = \frac{x - \mu}{\sigma},
$$

where $\mu$ and $\sigma$ represent the mean and standard deviation of the training set, respectively, ensuring stable model convergence.
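A minimal sketch of fitting the normalization on the training split and reusing its statistics for the test split, which avoids leaking test information into training. The helpers `fit_zscore` and `apply_zscore` are hypothetical; the sketch assumes rows are lists of numeric features and uses the population standard deviation (as scikit-learn's `StandardScaler` does), falling back to 1 for constant columns. The paper does not state which variant was used.

```python
from statistics import mean, pstdev


def fit_zscore(train_rows):
    """Estimate per-column mean and standard deviation from training rows only."""
    columns = list(zip(*train_rows))
    mus = [mean(col) for col in columns]
    # Population std; constant columns fall back to 1.0 to avoid division by zero.
    sigmas = [pstdev(col) or 1.0 for col in columns]
    return mus, sigmas


def apply_zscore(rows, mus, sigmas):
    """Standardize rows as z = (x - mu) / sigma using training-set statistics."""
    return [[(x - m) / s for x, m, s in zip(row, mus, sigmas)] for row in rows]


if __name__ == "__main__":
    train = [[1.0, 10.0], [3.0, 30.0]]  # hypothetical [feature_a, feature_b] rows
    test = [[2.0, 40.0]]
    mus, sigmas = fit_zscore(train)
    print(apply_zscore(test, mus, sigmas))  # [[0.0, 2.0]]
```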

3.2. Model Architecture

TabNet employs sparse attentive feature masks at each decision step for feature selection, enabling both predictive accuracy and interpretability [7,8]. LSTM captures long-term dependencies through gated memory cells, and BiLSTM processes the input in both directions to exploit contextual information. CNN-LSTM combines convolutional filters for local feature extraction with LSTM for temporal modeling, while Attention-LSTM selectively emphasizes critical features via adaptive weighting [10]:

$$
\alpha_t = \frac{\exp(e_t)}{\sum_{k=1}^{T} \exp(e_k)}, \qquad c = \sum_{t=1}^{T} \alpha_t h_t,
$$

where $\alpha_t$ denotes the attention weight assigned to hidden state $h_t$, $e_t$ its alignment score, $T$ the sequence length, and $c$ the context vector.
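The two weighting schemes described for TabNet and Attention-LSTM differ in one important way, which the following sketch illustrates. TabNet computes each decision step's feature mask by applying sparsemax to prior-scaled outputs of its attentive transformer [7], so some features receive exactly zero weight; Attention-LSTM instead applies softmax over time steps, which keeps every weight strictly positive. The sketch implements only these two normalizations in plain Python. The mask logits, hidden states, and the dot-product scoring with a `query` vector are hypothetical simplifications, not the trained models.

```python
import math


def sparsemax(z):
    """Euclidean projection of scores z onto the probability simplex (sparsemax).

    Unlike softmax, sparsemax can assign exactly zero weight to low-scoring
    entries, which is what makes TabNet-style feature masks sparse.
    """
    zs = sorted(z, reverse=True)
    cumsum = 0.0
    k_support, cumsum_support = 1, zs[0]
    for k, zk in enumerate(zs, start=1):
        cumsum += zk
        # The k-th largest score stays in the support while 1 + k * z_(k) > sum of the top k.
        if 1 + k * zk > cumsum:
            k_support, cumsum_support = k, cumsum
    tau = (cumsum_support - 1) / k_support  # threshold subtracted from every score
    return [max(zi - tau, 0.0) for zi in z]


def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_pool(hidden_states, query):
    """Softmax attention pooling: alpha = softmax(e), c = sum_t alpha_t * h_t.

    Assumption: alignment scores use a simple dot product e_t = query . h_t,
    not the additive score of Bahdanau et al.
    """
    scores = [sum(q * h for q, h in zip(query, h_t)) for h_t in hidden_states]
    alphas = softmax(scores)
    dim = len(hidden_states[0])
    context = [sum(a * h_t[d] for a, h_t in zip(alphas, hidden_states)) for d in range(dim)]
    return alphas, context


if __name__ == "__main__":
    # Hypothetical mask logits for five standardized match features.
    features = [1.2, -0.4, 0.9, 0.1, -1.0]
    mask = sparsemax([2.0, 0.3, 1.5, 0.1, -0.5])
    print("mask:", mask)  # several entries are exactly 0
    print("masked:", [m * x for m, x in zip(mask, features)])

    # Hypothetical 2-D hidden states from three LSTM time steps.
    states = [[0.1, 0.5], [0.9, -0.2], [0.4, 0.4]]
    alphas, c = attention_pool(states, query=[1.0, 0.0])
    print("alphas:", alphas, "context:", c)
```

Because sparsemax zeroes out features entirely, TabNet's masks can be read directly as feature selections, whereas softmax attention only reweights inputs.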

3.3. Training Strategy

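All models are trained with the binary cross-entropy loss [9]:

$$
\mathcal{L} = -\frac{1}{N}\sum_{i=1}^{N}\left[y_i \log \hat{y}_i + (1 - y_i)\log\left(1 - \hat{y}_i\right)\right],
$$

where $y_i \in \{0, 1\}$ is the stress label of sample $i$, $\hat{y}_i$ its predicted HighStress probability, and $N$ the number of samples.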

The Adam optimizer is used, and training is stopped early once 5 consecutive epochs pass without improvement.
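The optimization loop can be sketched with a plain-Python Adam update and a patience-based stopping rule. The paper does not report Adam hyperparameters or the monitored quantity, so the defaults used here (learning rate 1e-3, beta1 = 0.9, beta2 = 0.999, eps = 1e-8, the values proposed by Kingma and Ba) and the use of validation loss are assumptions. `init_adam_state`, `adam_step`, and `early_stopping_epoch` are hypothetical helpers, not part of any framework.

```python
import math


def init_adam_state(n_params):
    return {"t": 0, "m": [0.0] * n_params, "v": [0.0] * n_params}


def adam_step(params, grads, state, lr=1e-3, beta1=0.9, beta2=0.999, eps=1e-8):
    """Apply one Adam update in place to a flat list of parameters."""
    state["t"] += 1
    t = state["t"]
    for i, g in enumerate(grads):
        state["m"][i] = beta1 * state["m"][i] + (1 - beta1) * g      # first moment
        state["v"][i] = beta2 * state["v"][i] + (1 - beta2) * g * g  # second moment
        m_hat = state["m"][i] / (1 - beta1 ** t)  # bias-corrected estimates
        v_hat = state["v"][i] / (1 - beta2 ** t)
        params[i] -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return params


def early_stopping_epoch(val_losses, patience=5, min_delta=0.0):
    """Return (stop_epoch, best_epoch), 0-based, for a sequence of validation losses.

    Training stops once `patience` consecutive epochs fail to improve on the
    best loss by more than `min_delta`.
    """
    best, best_epoch, wait = math.inf, 0, 0
    for epoch, loss in enumerate(val_losses):
        if loss < best - min_delta:
            best, best_epoch, wait = loss, epoch, 0
        else:
            wait += 1
            if wait >= patience:
                return epoch, best_epoch
    return len(val_losses) - 1, best_epoch


if __name__ == "__main__":
    # Minimize f(theta) = theta^2 (gradient 2 * theta) as a stand-in objective.
    params, state = [1.0], init_adam_state(1)
    for _ in range(3):
        adam_step(params, [2.0 * params[0]], state, lr=0.1)
    print("theta after 3 steps:", params[0])

    # Hypothetical validation-loss curve: best at epoch 2, stop at epoch 7.
    print(early_stopping_epoch([0.60, 0.50, 0.45, 0.46, 0.47, 0.45, 0.48, 0.49, 0.44]))
```

Because Adam normalizes each step by the running gradient scale, its first update moves a parameter by roughly the learning rate regardless of gradient magnitude. The example curve also shows a trade-off of patience-based stopping: the improvement at the final epoch is never reached.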

4. Results and Discussions

4.1. Experimental Setup

The framework was evaluated on the ATP 2000 dataset. High-stress matches were defined as those whose duration exceeded the median or whose winner faced more than five break points. Features were standardized using Z-score normalization, and the data were split into stratified training (80%) and testing (20%) sets.
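A stratified 80/20 split can be sketched by splitting each class separately, so that the HighStress/LowStress ratio is preserved in both sets. This is a simplified illustration, not the authors' pipeline and not scikit-learn's `train_test_split` allocation algorithm; `stratified_split` and the fixed seed are hypothetical.

```python
import random


def stratified_split(labels, test_frac=0.2, seed=42):
    """Split sample indices into train/test sets, preserving class proportions.

    Each class is shuffled independently and round(test_frac * class size)
    of its samples go to the test set.
    """
    rng = random.Random(seed)  # hypothetical fixed seed for reproducibility
    by_class = {}
    for idx, y in enumerate(labels):
        by_class.setdefault(y, []).append(idx)
    train_idx, test_idx = [], []
    for idxs in by_class.values():
        rng.shuffle(idxs)
        n_test = round(len(idxs) * test_frac)
        test_idx.extend(idxs[:n_test])
        train_idx.extend(idxs[n_test:])
    return sorted(train_idx), sorted(test_idx)


if __name__ == "__main__":
    labels = [0] * 50 + [1] * 30  # hypothetical LowStress (0) / HighStress (1) labels
    train_idx, test_idx = stratified_split(labels)
    print(len(train_idx), len(test_idx))  # 64 16
```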

4.2. TabNet with SHAP Interpretability

TabNet achieved strong predictive performance across all evaluation metrics.

Figure 2 shows the feature importance ranking from TabNet’s built-in attribution, where break points faced (w_bpFaced, l_bpFaced), first-serve consistency (l_1stIn), and match duration emerged as the most influential indicators.

Figure 3 shows the precision–recall (PR) curve, with an average precision (AP) of 0.988. The confusion matrix in Figure 4 shows that both HighStress and LowStress samples had few classification errors.

Figure 5 shows the receiver operating characteristic (ROC) curve, with an area under the curve (AUC) of 0.995. The dashed diagonal line in the ROC plot corresponds to a random classifier (AUC = 0.5) and serves as a reference.
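For readers who want to reproduce metrics of this kind, AP and ROC AUC can be computed directly from predicted HighStress probabilities. The sketch follows the step-wise definition AP = sum over thresholds of (R_n - R_(n-1)) * P_n documented for scikit-learn's `average_precision_score`, and computes AUC as the probability that a randomly chosen HighStress match is scored above a randomly chosen LowStress match, with ties counted as one half. The helper names and toy inputs are hypothetical and are not the paper's predictions.

```python
def average_precision(y_true, scores):
    """AP = sum_n (R_n - R_{n-1}) * P_n over distinct score thresholds (descending)."""
    pairs = sorted(zip(scores, y_true), key=lambda p: -p[0])
    n_pos = sum(y_true)
    tp = fp = 0
    ap, prev_recall = 0.0, 0.0
    i = 0
    while i < len(pairs):
        thr = pairs[i][0]
        # Consume all samples tied at this threshold together.
        while i < len(pairs) and pairs[i][0] == thr:
            if pairs[i][1] == 1:
                tp += 1
            else:
                fp += 1
            i += 1
        recall = tp / n_pos
        precision = tp / (tp + fp)
        ap += (recall - prev_recall) * precision
        prev_recall = recall
    return ap


def roc_auc(y_true, scores):
    """AUC as P(score of a random positive > score of a random negative); ties count 1/2."""
    pos = [s for s, y in zip(scores, y_true) if y == 1]
    neg = [s for s, y in zip(scores, y_true) if y == 0]
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


if __name__ == "__main__":
    y = [0, 0, 1, 1]            # hypothetical labels (1 = HighStress)
    s = [0.1, 0.4, 0.35, 0.8]   # hypothetical predicted probabilities
    print(average_precision(y, s), roc_auc(y, s))
    print(roc_auc(y, [0.5] * 4))  # constant scores: 0.5
```

A constant-score classifier ties every positive-negative pair and therefore scores exactly 0.5, which is why the diagonal serves as the random baseline.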

Finally, the SHAP-based interpretability analysis (Figure 6) provides a fine-grained perspective, showing that minutes played, break points saved, and break points faced exert the strongest positive contributions toward HighStress predictions. Additional factors, such as serve games and double faults, also play secondary roles. This combination of high accuracy and transparent interpretation underscores TabNet's suitability as an explainable AI (XAI) baseline for stress-aware tennis analytics.
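SHAP attributes a prediction to features via Shapley values: each feature's weighted average marginal contribution over all coalitions of the other features. The exact computation sketched here enumerates every coalition, so it is feasible only for a handful of features; the SHAP library instead relies on approximations such as KernelSHAP. The three-feature toy model, its coefficients, the input `X`, and the convention of replacing absent features with a zero baseline (the training mean after Z-scoring) are all hypothetical and do not represent the paper's TabNet model.

```python
from itertools import combinations
from math import factorial

# Hypothetical standardized input: [minutes, w_bpFaced, double_faults].
X = [1.5, 1.0, 0.2]
BASELINE = [0.0, 0.0, 0.0]  # assumed reference point: training mean after Z-scoring


def toy_model(z):
    """Hypothetical model with one interaction between duration and break points."""
    return 0.6 * z[0] + 0.4 * z[1] + 0.1 * z[2] + 0.2 * z[0] * z[1]


def v(coalition):
    """Value of a coalition: features outside it are replaced by the baseline."""
    z = [X[j] if j in coalition else BASELINE[j] for j in range(len(X))]
    return toy_model(z)


def shapley_values(value_fn, n_features):
    """Exact Shapley values by enumerating all coalitions (O(2^n); toy sizes only)."""
    phi = [0.0] * n_features
    for i in range(n_features):
        others = [j for j in range(n_features) if j != i]
        for size in range(len(others) + 1):
            weight = factorial(size) * factorial(n_features - size - 1) / factorial(n_features)
            for coalition in combinations(others, size):
                s = frozenset(coalition)
                phi[i] += weight * (value_fn(s | {i}) - value_fn(s))
    return phi


if __name__ == "__main__":
    phi = shapley_values(v, 3)
    print("attributions:", phi)
    print("sum:", sum(phi), "f(x) - f(baseline):", v(frozenset({0, 1, 2})) - v(frozenset()))
```

The attributions sum to the difference between the prediction and the baseline prediction (the efficiency property), and the interaction term 0.2 * z0 * z1 is split equally between the two features involved.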

4.3. Deep Learning Baselines

We benchmarked TabNet against sequential deep learning models, including LSTM, BiLSTM, CNN-LSTM, and Attention-LSTM. Performance metrics are summarized in Table 1. All recurrent models achieved accuracy above 93%, with CNN-LSTM performing the best among them. TabNet outperformed all baselines with 98% accuracy.

To evaluate class-specific performance, precision, recall, and F1-score were measured for both the LowStress and HighStress categories (Table 2). TabNet achieves balanced predictions, while CNN-LSTM shows weaker recall for LowStress matches.
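Per-class metrics follow from one-vs-rest counts for each label. The sketch uses a hypothetical helper, `per_class_report`, applied to made-up predictions (not the values in Table 2) that are constructed so that LowStress recall is low, mirroring the kind of imbalance reported for CNN-LSTM.

```python
def per_class_report(y_true, y_pred, classes=(0, 1), names=("LowStress", "HighStress")):
    """Precision, recall, and F1 for each class, treating it as the positive class."""
    report = {}
    for c, name in zip(classes, names):
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == c and p == c)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t != c and p == c)
        fn = sum(1 for t, p in zip(y_true, y_pred) if t == c and p != c)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        report[name] = {"precision": precision, "recall": recall, "f1": f1}
    return report


if __name__ == "__main__":
    # Hypothetical predictions: two LowStress matches misclassified as HighStress.
    y_true = [0, 0, 0, 0, 1, 1, 1, 1]
    y_pred = [0, 0, 1, 1, 1, 1, 1, 1]
    for name, metrics in per_class_report(y_true, y_pred).items():
        print(name, metrics)
```

In this toy case, LowStress precision stays perfect while its recall halves, and the misclassified LowStress matches show up as reduced HighStress precision.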

5. Conclusions

We developed a framework for stress classification in professional tennis using ATP statistics. TabNet achieved the highest accuracy (0.98) with strong interpretability, while BiLSTM and Attention-LSTM also provided competitive sequential modeling performance (0.96). The results highlight the value of combining interpretable and temporal models to deliver both predictive accuracy and actionable insights. Future work will validate the framework on multi-season datasets, incorporate physiological and biomechanical features, and develop real-time applications for stress-aware performance support.

Author Contributions

Conceptualization, W.-H.H. and M.-H.T.; methodology, W.-H.H.; software, W.-H.H.; validation, W.-H.H.; formal analysis, W.-H.H.; investigation, W.-H.H.; data curation, W.-H.H.; writing—original draft preparation, H.-C.H.; writing—review and editing, M.-H.T.; supervision, M.-H.T. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

The data analyzed in this study are publicly available from the tennis_atp repository on GitHub (https://github.com/JeffSackmann/tennis_atp, accessed on 26 June 2025), which contains ATP match records and related statistics.

Conflicts of Interest

The authors declare no conflict of interest.

References

1. Lisi, F.; Grigoletto, M.; Briglia, M.G. On the distribution of rally length in professional tennis matches. J. Sports Anal. 2024, 10, 105–121.

2. Amatori, S.; Gobbi, E.; Moriondo, G.; Gervasi, M.; Sisti, D.; Rocchi, M.B.L.; Perroni, F. Effects of a Tennis Match on Perceived Fatigue, Jump and Sprint Performances on Recreational Players. Open Sports Sci. J. 2020, 13, 54–59.

3. Jiang, Y.; Yuan, C. Tennis Match Trend Prediction Based on LSTM. Highlights Sci. Eng. Technol. 2024, 107, 268–275.

4. Lerner, S.; Desai, S.; Patel, K. DeepTennis: Mid-Match Tennis Predictions; CS230 Project Report; Stanford University: Stanford, CA, USA, 2019.

5. Zhang, X. Analysis of athletes’ technical action based on deep learning. Mol. Cell. Biomech. 2024, 21, 490.

6. Lundberg, S.M.; Lee, S.-I. A Unified Approach to Interpreting Model Predictions. arXiv 2017, arXiv:1705.07874.

7. Arık, S.Ö.; Pfister, T. TabNet: Attentive interpretable tabular learning. In Proceedings of the Thirty-Fifth AAAI Conference on Artificial Intelligence (AAAI), Virtual Conference, 2–9 February 2021; pp. 6679–6687.

8. Si, J.Y.H.; Cheng, W.Y.; Cooper, M.; Krishnan, R.G. InterpreTabNet: Distilling Predictive Signals from Tabular Data by Salient Feature Interpretation. In Proceedings of the 41st International Conference on Machine Learning (ICML), Vienna, Austria, 21–27 July 2024.

9. Goodfellow, I.; Bengio, Y.; Courville, A. Deep Learning; MIT Press: Cambridge, MA, USA, 2016.

10. Bahdanau, D.; Cho, K.; Bengio, Y. Neural Machine Translation by Jointly Learning to Align and Translate. arXiv 2014, arXiv:1409.0473.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.