A Nested U-Network with Temporal Convolution for Monaural Speech Enhancement in Laser Hearing

Abstract

1. Introduction

- We propose a nested U-network with gated temporal convolution for monaural speech enhancement.

- We designed an LDV-based remote speech acquisition system and used it in a real-world environment to obtain enhanced speech. Experiments with various target objects and different corpora demonstrate the effectiveness and generalization ability of the proposed method.

2. Method

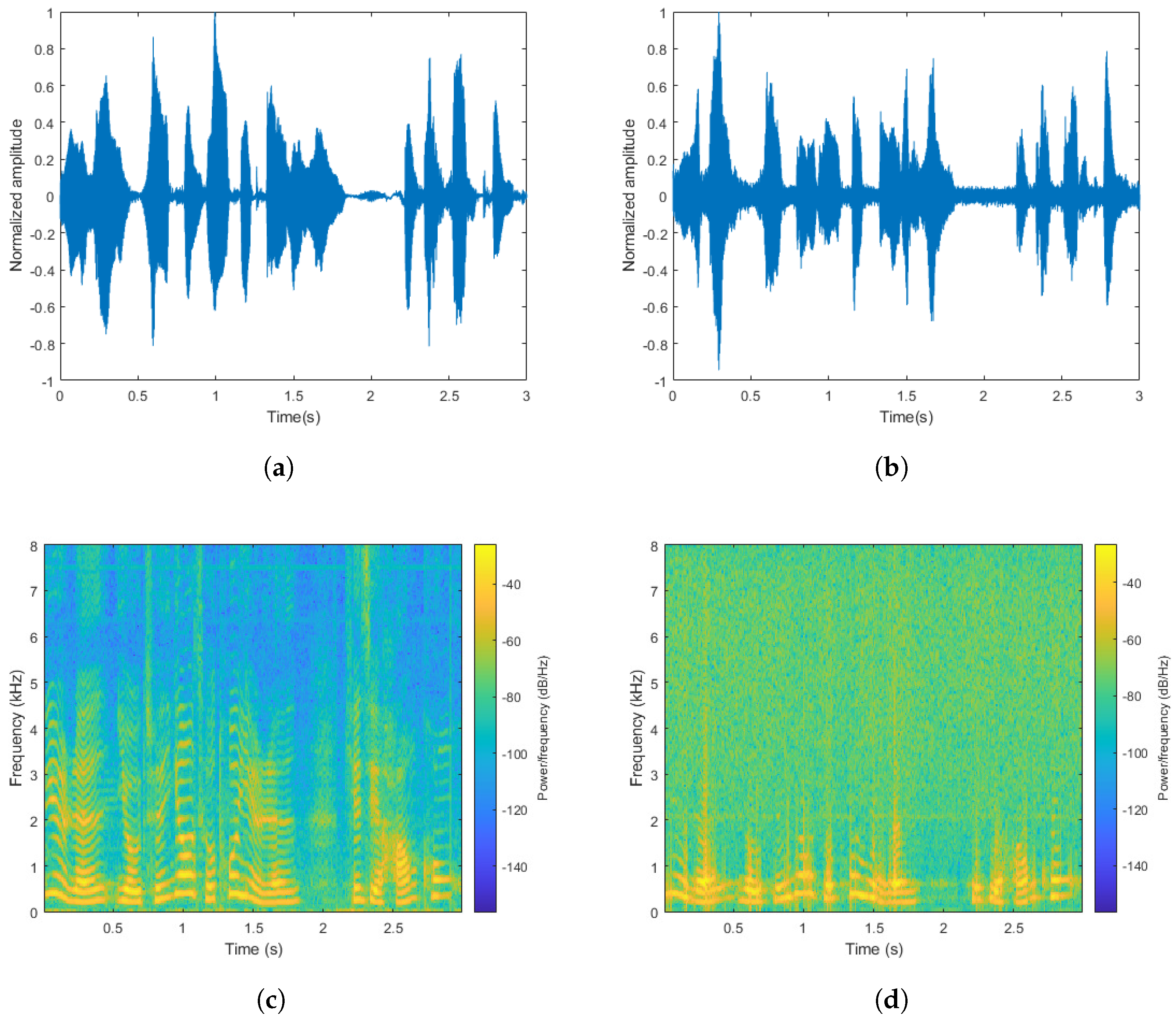

2.1. Analysis of Signals Captured by LDV

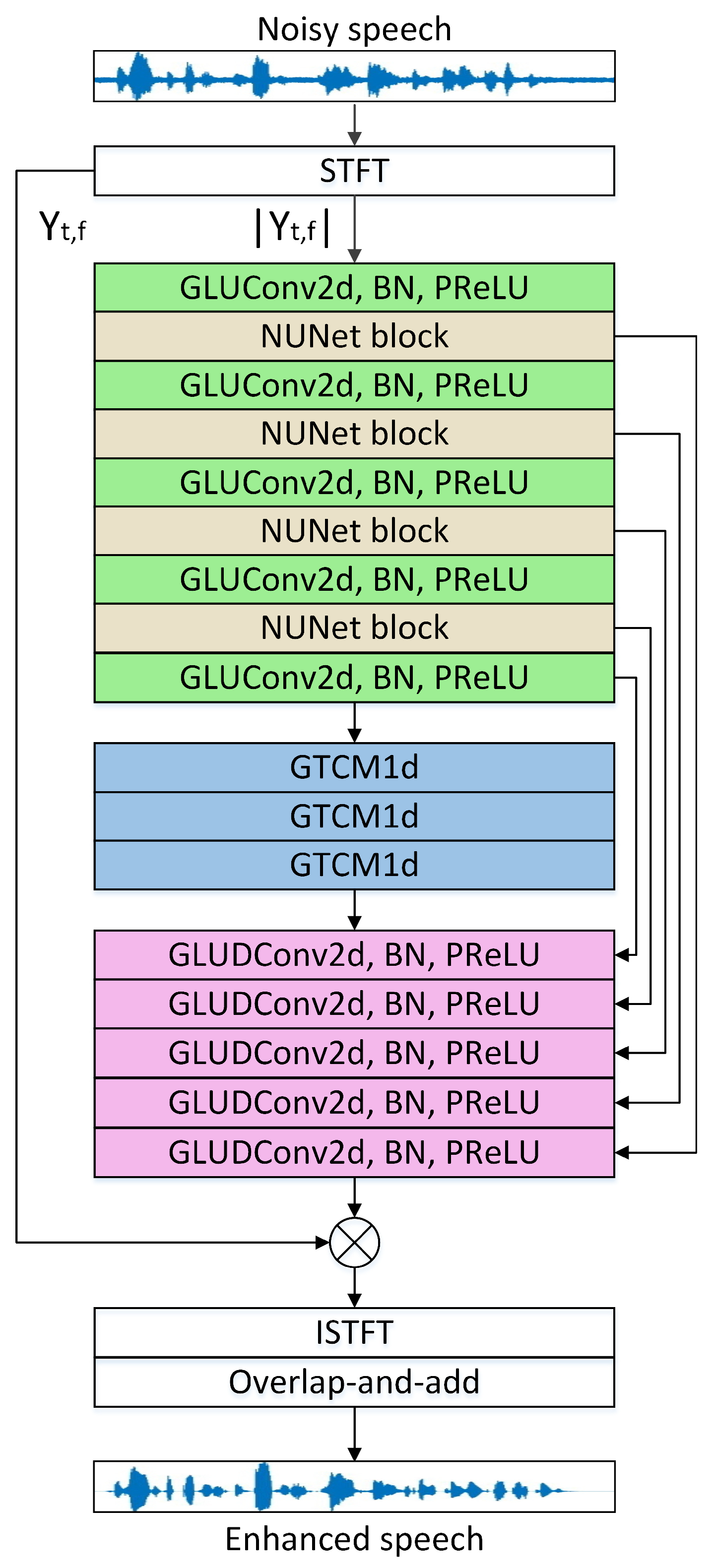

2.2. Network Architecture

2.3. Nested U-Net Block

2.4. Gated Temporal Convolution Module
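
As a rough illustration of what a gated temporal convolution computes, the sketch below applies two dilated 1-D convolutions to the same input and uses one of them, passed through a sigmoid, to gate the other (a gated linear unit). The causal padding, kernel size, channel counts, dilation and the function names are assumptions made for this example; the layer configuration of the actual module is not reproduced here.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def causal_dilated_conv1d(x, w, dilation):
    """x: (C_in, T), w: (C_out, C_in, K). Returns (C_out, T).

    Left-pads the input so that output frame t only depends on
    input frames t, t - dilation, ..., t - (K - 1) * dilation.
    """
    c_out, c_in, k = w.shape
    t = x.shape[1]
    pad = (k - 1) * dilation
    xp = np.pad(x, ((0, 0), (pad, 0)))
    y = np.zeros((c_out, t))
    for j in range(k):
        # Kernel tap j looks back (k - 1 - j) * dilation frames.
        seg = xp[:, j * dilation : j * dilation + t]
        y += w[:, :, j] @ seg
    return y

def gated_temporal_conv(x, w_main, w_gate, dilation):
    """GLU-style gating: main branch scaled by a sigmoid gate branch."""
    a = causal_dilated_conv1d(x, w_main, dilation)
    b = causal_dilated_conv1d(x, w_gate, dilation)
    return a * sigmoid(b)

# Hypothetical sizes: 4 input channels, 8 output channels, 16 frames.
rng = np.random.default_rng(0)
x = rng.standard_normal((4, 16))
w_main = rng.standard_normal((8, 4, 3))
w_gate = rng.standard_normal((8, 4, 3))
y = gated_temporal_conv(x, w_main, w_gate, dilation=2)
print(y.shape)  # (8, 16)
```

Stacking such layers with growing dilation enlarges the temporal receptive field without increasing the kernel size, which is the usual motivation for dilated temporal convolutions.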

3. Evaluation

3.1. Datasets

3.2. Experimental Setup and Baselines

3.3. Metrics
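
Of the three metrics reported in the results tables (LSD, STOI, PESQ), LSD is simple enough to sketch directly. The code below follows one common definition: the per-frame root-mean-square difference between the log power spectra of the reference and the estimate, averaged over frames. The frame length, hop size, Hann window and the `eps` floor are assumptions for illustration, and the exact variant used for the reported numbers may differ.

```python
import numpy as np

def power_spectrogram(signal, frame_len=512, hop=256):
    """Hann-windowed short-time power spectrum, shape (frames, bins).

    Assumes len(signal) >= frame_len.
    """
    window = np.hanning(frame_len)
    n_frames = 1 + (len(signal) - frame_len) // hop
    frames = np.stack(
        [signal[i * hop : i * hop + frame_len] * window for i in range(n_frames)]
    )
    return np.abs(np.fft.rfft(frames, axis=1)) ** 2

def log_spectral_distance(reference, estimate, eps=1e-10):
    """Mean over frames of the RMS log-power difference in dB (lower is better)."""
    p_ref = power_spectrogram(reference)
    p_est = power_spectrogram(estimate)
    diff = 10.0 * np.log10(p_ref + eps) - 10.0 * np.log10(p_est + eps)
    return float(np.mean(np.sqrt(np.mean(diff ** 2, axis=1))))

# Synthetic check: halving the amplitude shifts every bin by 20*log10(2) dB.
rng = np.random.default_rng(0)
clean = rng.standard_normal(4096)
lsd_same = log_spectral_distance(clean, clean)
lsd_half = log_spectral_distance(clean, 0.5 * clean)
```

With this definition, identical signals give an LSD of zero, and a pure gain change shows up as a constant offset in dB.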

4. Results

4.1. Ablation Study

4.2. Comparison with Baselines

4.3. Comparison with Various Targets

5. Limitations

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest


| Layer Name | Input Size | Hyperparameters | Output Size |
|---|---|---|---|
| GLUConv2d_1 | | | |
| NUNet2d_1 | | | |
| GLUConv2d_2 | | | |
| NUNet2d_2 | | | |
| GLUConv2d_3 | | | |
| NUNet2d_3 | | | |
| GLUConv2d_4 | | | |
| NUNet2d_4 | | | |
| GLUConv2d_5 | | | |
| reshape_1 | - | | |
| GTCM1d_1 | | | |
| GTCM1d_2 | | | |
| GTCM1d_3 | | | |
| reshape_2 | - | | |
| GLUDConv2d_5 | | | |
| GLUDConv2d_4 | | | |
| GLUDConv2d_3 | | | |
| GLUDConv2d_2 | | | |
| GLUDConv2d_1 | | | |

| Layer Name | Input Size | Hyperparameters | Output Size |
|---|---|---|---|
| Conv2d_1 | | | |
| Conv2d_2 | | | |
| Conv2d_3 | | | |
| Conv2d_4 | | | |
| DConv2d_4 | | | |
| DConv2d_3 | | | |
| DConv2d_2 | | | |
| DConv2d_1 | | | |

| Layer Name | Input Size | Hyperparameters | Output Size |
|---|---|---|---|
| GLUConv1d_1 | | | |
| D-Conv1d_1 | | | |
| GLUConv1d_2 | | | |
| GLUConv1d_3 | | | |
| D-Conv1d_2 | | | |
| GLUConv1d_4 | | | |
| GLUConv1d_5 | | | |
| D-Conv1d_3 | | | |
| GLUConv1d_6 | | | |
| GLUConv1d_7 | | | |
| D-Conv1d_4 | | | |
| GLUConv1d_8 | | | |

| Model | LSD ↓ | STOI ↑ | PESQ ↑ |
|---|---|---|---|
| Mixture | 8.658 | 0.697 | 1.509 |
| TCNUNet | 4.642 | 0.822 | 2.268 |
| w/o GLU | 4.945 | 0.819 | 2.211 |
| w/o GTCM | 5.092 | 0.809 | 2.161 |
| w/o NUNet | 4.730 | 0.818 | 2.247 |
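
To make the ablation rows easier to compare, the following sketch computes each variant's difference from the full TCNUNet model, using only the values in the table above. The dictionary layout and variable names are illustrative choices, not part of the original study.

```python
# Scores copied from the ablation table (LSD: lower is better;
# STOI and PESQ: higher is better).
ablation = {
    "TCNUNet":   {"LSD": 4.642, "STOI": 0.822, "PESQ": 2.268},
    "w/o GLU":   {"LSD": 4.945, "STOI": 0.819, "PESQ": 2.211},
    "w/o GTCM":  {"LSD": 5.092, "STOI": 0.809, "PESQ": 2.161},
    "w/o NUNet": {"LSD": 4.730, "STOI": 0.818, "PESQ": 2.247},
}

full = ablation["TCNUNet"]

# Difference of each ablated variant relative to the full model.
deltas = {
    name: {metric: round(scores[metric] - full[metric], 3) for metric in scores}
    for name, scores in ablation.items()
    if name != "TCNUNet"
}

for name, d in deltas.items():
    print(f"{name:10s} dLSD={d['LSD']:+.3f} dSTOI={d['STOI']:+.3f} dPESQ={d['PESQ']:+.3f}")

# Variant whose removal raises LSD the most.
worst_lsd = max(deltas, key=lambda n: deltas[n]["LSD"])
```

Read this way, removing the GTCM gives the largest change on all three metrics (LSD +0.450, STOI -0.013, PESQ -0.107), while removing the NUNet blocks gives the smallest change in LSD and PESQ.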

| Model | Validation LSD ↓ | Validation STOI ↑ | Validation PESQ ↑ | Test LSD ↓ | Test STOI ↑ | Test PESQ ↑ |
|---|---|---|---|---|---|---|
| Mixture | 8.566 ± 0.13 | 0.697 ± 0.01 | 1.521 ± 0.02 | 8.658 ± 0.12 | 0.697 ± 0.01 | 1.509 ± 0.03 |
| MMSELSA | 8.995 ± 0.09 | 0.692 ± 0.01 | 1.716 ± 0.04 | 8.959 ± 0.10 | 0.693 ± 0.01 | 1.689 ± 0.05 |
| OM-LSA | 11.258 ± 0.14 | 0.683 ± 0.01 | 1.722 ± 0.04 | 11.137 ± 0.16 | 0.685 ± 0.01 | 1.691 ± 0.05 |
| Wiener | 8.748 ± 0.06 | 0.696 ± 0.01 | 1.626 ± 0.03 | 8.751 ± 0.09 | 0.695 ± 0.01 | 1.606 ± 0.04 |
| DCCRN | 5.915 ± 0.05 | 0.767 ± 0.01 | 1.730 ± 0.02 | 5.887 ± 0.06 | 0.766 ± 0.01 | 1.721 ± 0.02 |
| MAB-CED | 4.804 ± 0.07 | 0.775 ± 0.01 | 1.538 ± 0.02 | 4.833 ± 0.06 | 0.773 ± 0.01 | 1.535 ± 0.02 |
| GaGNet | 4.710 ± 0.06 | 0.815 ± 0.01 | 2.104 ± 0.04 | 4.744 ± 0.06 | 0.815 ± 0.01 | 2.123 ± 0.04 |
| GCRN | 4.751 ± 0.06 | 0.770 ± 0.01 | 1.514 ± 0.01 | 4.791 ± 0.06 | 0.768 ± 0.01 | 1.514 ± 0.01 |
| DARCN | 6.826 ± 0.06 | 0.747 ± 0.01 | 1.816 ± 0.03 | 6.862 ± 0.07 | 0.750 ± 0.01 | 1.819 ± 0.04 |
| NSE-CATNet | 4.936 ± 0.06 | 0.815 ± 0.01 | 2.179 ± 0.04 | 4.986 ± 0.06 | 0.815 ± 0.01 | 2.199 ± 0.04 |
| TCNUNet | 4.598 ± 0.06 | 0.822 ± 0.01 | 2.255 ± 0.05 | 4.642 ± 0.06 | 0.822 ± 0.01 | 2.268 ± 0.05 |

| Target Object | Mixture LSD ↓ | Mixture STOI ↑ | Mixture PESQ ↑ | Enhanced LSD ↓ | Enhanced STOI ↑ | Enhanced PESQ ↑ |
|---|---|---|---|---|---|---|
| Iron box | 8.990 ± 0.12 | 0.547 ± 0.01 | 1.149 ± 0.01 | 4.821 ± 0.05 | 0.752 ± 0.01 | 1.705 ± 0.02 |
| Printing paper | 8.931 ± 0.14 | 0.633 ± 0.01 | 1.326 ± 0.02 | 5.053 ± 0.10 | 0.762 ± 0.01 | 2.038 ± 0.05 |
| PVC sheet | 9.064 ± 0.13 | 0.680 ± 0.01 | 1.374 ± 0.02 | 4.937 ± 0.08 | 0.786 ± 0.01 | 1.995 ± 0.04 |

| Target Object | Mixture LSD ↓ | Mixture STOI ↑ | Mixture PESQ ↑ | Enhanced LSD ↓ | Enhanced STOI ↑ | Enhanced PESQ ↑ |
|---|---|---|---|---|---|---|
| Iron box | 8.954 ± 0.13 | 0.550 ± 0.01 | 1.150 ± 0.01 | 4.853 ± 0.06 | 0.755 ± 0.01 | 1.733 ± 0.02 |
| Printing paper | 9.286 ± 0.13 | 0.628 ± 0.01 | 1.328 ± 0.02 | 5.070 ± 0.09 | 0.759 ± 0.01 | 2.051 ± 0.05 |
| PVC sheet | 9.076 ± 0.15 | 0.680 ± 0.01 | 1.388 ± 0.01 | 4.931 ± 0.10 | 0.784 ± 0.01 | 2.010 ± 0.04 |

| Target Object | Mixture LSD ↓ | Mixture STOI ↑ | Mixture PESQ ↑ | Enhanced LSD ↓ | Enhanced STOI ↑ | Enhanced PESQ ↑ |
|---|---|---|---|---|---|---|
| Cardboard box | 7.665 ± 0.07 | 0.716 ± 0.01 | 1.741 ± 0.05 | 5.932 ± 0.18 | 0.780 ± 0.01 | 1.992 ± 0.06 |
| Iron box | 8.074 ± 0.09 | 0.560 ± 0.01 | 1.457 ± 0.02 | 7.035 ± 0.09 | 0.607 ± 0.02 | 1.500 ± 0.03 |
| Printing paper | 9.090 ± 0.12 | 0.625 ± 0.01 | 1.518 ± 0.02 | 6.531 ± 0.10 | 0.713 ± 0.01 | 1.870 ± 0.04 |
| PVC sheet | 7.690 ± 0.10 | 0.687 ± 0.01 | 1.599 ± 0.03 | 6.091 ± 0.08 | 0.728 ± 0.01 | 1.804 ± 0.04 |


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Zhou, B.; Tang, J.; Guo, F. A Nested U-Network with Temporal Convolution for Monaural Speech Enhancement in Laser Hearing. Modelling 2026, 7, 32. https://doi.org/10.3390/modelling7010032
