1. Introduction

Coal is a black sedimentary rock composed predominantly of carbon, with varying proportions of other elements such as hydrogen, oxygen, nitrogen, and sulfur. It is classified as a fossil fuel. Coal forms from plant material that accumulated in wet, low-oxygen environments under sedimentary conditions favorable to its preservation. Over millions of years, successive layers of organic matter (plant debris, peat, and decaying vegetation) build up in these settings and undergo physical and chemical transformations driven by pressure, temperature, and time, ultimately producing coal as a solid fuel [1, 2].

During coal formation, plant material accumulates in wetland or swamp environments where oxygen availability is limited. The lack of oxygen slows the decomposition process, allowing organic matter to accumulate. As layers of organic material deepen, the weight of overlying sediments and water exerts increasing pressure on the lower layers. This pressure, combined with geothermal heat, initiates the transformation of the organic material. Compaction and heat induce physical and chemical changes in the plant matter, gradually converting it into peat. With continued burial and geological processes, peat undergoes further transformation. The expulsion of water, carbon dioxide, and other volatile components results in an increased concentration of carbon, ultimately leading to coal formation. As coalification progresses, peat is transformed into various coal ranks, ranging from lignite to anthracite. These coal ranks differ in carbon content, energy content, and physicochemical properties, which determine their suitability for energy applications [3, 4].

Based on the degree of carbonization and the nature of the plant precursor, coal can be classified into four distinct types, as illustrated in Figure 1.

Peat: This represents the earliest stage of coal formation. It is brown, soft, dull, lightweight, and contains clearly visible plant remnants.

Lignite: This is a type of coal formed by the compression of peat. It is a crumbly material that still retains some identifiable plant structures. It is typically brown to black in color and exhibits a texture similar to that of the original wood.

Bituminous coal: This is a type of coal composed of organic sedimentary rock with a carbon content of approximately 80–90%. It is stratified, dull to greasy in appearance, hard and brittle, and black in color.

Anthracite: This is a fully carbonized coal that is black, hard, highly compact, and characterized by a bright, pearlescent luster.

Current global challenges encompass energy security, environmental protection, and sustainable development. Prolonged reliance on fossil fuels, particularly coal, has contributed to the greenhouse effect, global climate change, and resource depletion. Consequently, researchers worldwide are actively exploring alternative fuel utilization strategies that are both environmentally sustainable and efficient within the energy supply chain [3, 4].

Coal contributes significantly to global energy production. The increasing demand for energy in thermal and power applications has driven the widespread use of this solid fossil fuel, and it is projected that coal consumption will nearly double by 2030 [5]. Coal is the most abundant fossil fuel worldwide and serves as a chemical repository of solar energy. It consists of over 50% organic matter (primarily carbon), including inherent moisture [6].

Ultimate analysis quantifies the mass fractions of carbon (C), hydrogen (H), oxygen (O), sulfur (S), and nitrogen (N) in coal, which are essential for assessing its combustion characteristics and heating value.

Figure 2 illustrates the steps involved in ultimate coal analysis:

Coal sampling: The coal sample is prepared by drying, grinding, and sieving to obtain uniformly small particles.

Laboratory test: The coal sample is combusted to convert its components into the corresponding oxides.

Detection: The combustion products are analyzed to determine the sample’s elemental composition.

Data analysis: The analysis results are used to assess the elemental composition and predict potential applications.

The calorific value, or heating value (HV), of a solid fuel determines its energy yield. Therefore, accurately determining the HV of coal is essential for various purposes, including classification, evaluation of energy potential, assessment of productive use, and precise valuation in the commodity market [7]. Furthermore, knowledge of HV is critical for the proper design and operation of coal-based systems [8]. Consequently, it is desirable to develop and implement methods that allow rapid and accurate determination of coal’s gross calorific value, also called the higher heating value (HHV), offering substantial cost savings compared to traditional laboratory experiments. In this context, several previous studies have developed mathematical and empirical relationships to predict coal HHV based on the key elements obtained from ultimate analysis [9, 10, 11, 12, 13, 14]. While traditional empirical correlations for predicting coal HHV are based on linear assumptions, machine learning (ML) techniques provide a data-driven approach capable of capturing complex, nonlinear relationships. These models not only help reduce the costs associated with conducting standard experimental tests, but they also have the potential to achieve greater accuracy than the empirical correlations mentioned above.
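To make the idea of an empirical correlation concrete, the sketch below evaluates a commonly quoted Dulong-type formula that estimates HHV (in MJ/kg) from the mass percentages of C, H, O, and S. The coefficients and the sample composition are assumptions chosen for illustration; they are not necessarily those of the correlations cited above.

```python
def dulong_hhv(c, h, o, s):
    """Estimate HHV in MJ/kg from mass percentages of C, H, O, and S.

    Uses a commonly quoted Dulong-type correlation. The coefficients are an
    illustrative assumption, not values taken from this study.
    """
    return 0.3383 * c + 1.443 * (h - o / 8.0) + 0.0942 * s


# Hypothetical coal composition in mass percent (not a sample from the dataset).
sample = {"c": 75.0, "h": 5.0, "o": 10.0, "s": 1.0}
estimated_hhv = dulong_hhv(**sample)
print(f"Estimated HHV: {estimated_hhv:.2f} MJ/kg")
```

The formula is linear in each element, which is the kind of assumption that data-driven models are able to relax.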

Previous research on coal HHV prediction using statistical and machine learning approaches, as documented in earlier studies, includes decision tree regression [15], adaptive neuro-fuzzy inference systems [16], artificial neural networks (ANNs), and genetic algorithms (GAs) [17]. Additionally, a regression approach that integrates elements of proximate analysis with Gaussian process regression has been reported [18]. However, the potential of support vector machines (SVMs) to predict HHV across different coal types, deposits, and locations has not yet been investigated.

To the best of the authors’ knowledge, the methodology employed in this study addresses a task that has not previously been undertaken. It combines the support vector machine (SVM) technique [19, 20, 21, 22, 23, 24, 25] with the Differential Evolution (DE) optimizer [26, 27, 28, 29, 30, 31, 32, 33] to predict the gross calorific value (HHV) of coal from samples collected across various deposits and locations. For comparative purposes, Ridge, Lasso, and Elastic-Net regressions [34, 35, 36, 37, 38, 39, 40, 41] were also applied to the dataset, with coal HHV as the target variable. The SVM approach [19, 20, 21, 22, 23, 24, 25], a supervised learning method renowned for its robustness and capacity to handle nonlinearities, is particularly well-suited for regression problems.
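The role of the DE optimizer can be sketched with a minimal DE/rand/1/bin loop in plain Python. Everything here is an assumption made for illustration: the function names, the control settings (`f`, `cr`, population size), and in particular `toy_validation_error`, which is a hypothetical stand-in for the cross-validated error of an SVM as a function of two log-scaled hyperparameters. It is not the implementation used in this study.

```python
import random


def differential_evolution(objective, bounds, pop_size=20, generations=200,
                           f=0.8, cr=0.9, seed=0):
    """Minimal DE/rand/1/bin minimizer over box bounds (illustrative sketch)."""
    rng = random.Random(seed)
    dim = len(bounds)
    pop = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(pop_size)]
    scores = [objective(ind) for ind in pop]
    for _ in range(generations):
        for i in range(pop_size):
            # Pick three distinct individuals, all different from i.
            a, b, c = rng.sample([j for j in range(pop_size) if j != i], 3)
            j_rand = rng.randrange(dim)  # guarantees at least one mutated gene
            trial = []
            for j in range(dim):
                if rng.random() < cr or j == j_rand:
                    value = pop[a][j] + f * (pop[b][j] - pop[c][j])
                    lo, hi = bounds[j]
                    value = min(max(value, lo), hi)  # clip to the search box
                else:
                    value = pop[i][j]
                trial.append(value)
            trial_score = objective(trial)
            # Greedy selection: keep the trial only if it is not worse.
            if trial_score <= scores[i]:
                pop[i], scores[i] = trial, trial_score
    best = min(range(pop_size), key=scores.__getitem__)
    return pop[best], scores[best]


def toy_validation_error(params):
    """Hypothetical stand-in for validation error over (log10 C, log10 gamma).

    The minimum is placed at (2, -1) purely for illustration.
    """
    log_c, log_gamma = params
    return (log_c - 2.0) ** 2 + 0.5 * (log_gamma + 1.0) ** 2


best_params, best_score = differential_evolution(
    toy_validation_error, bounds=[(-2.0, 4.0), (-4.0, 2.0)])
print(best_params, best_score)
```

In a real workflow the objective would train and validate the regression model for each candidate hyperparameter vector, which is far more expensive than this toy surrogate.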

In several domains, including hydro-climatic parameters [42], solar radiation [43], and photovoltaic energy [44], SVMs have demonstrated considerable efficacy. Several factors support the utility of the proposed SVM approach [19, 20, 21, 22, 23, 24, 25]: (1) because the loss function is data-driven, the majority of the dataset (referred to as training data) is utilized to construct the SVM-based model; (2) to enhance memory efficiency and accuracy in high-dimensional spaces, the SVM model relies on support vectors, which constitute a subset of the training points employed by the decision function; (3) the SVM technique incorporates the kernel trick, facilitating regression of nonlinear data and enabling the resolution of complex problems; and (4) when hyperparameters are properly tuned, the SVM technique is robust to outliers and achieves high predictive accuracy due to its reduced sensitivity to anomalous data points.
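Points (2) and (3) can be illustrated with a small sketch of the standard ingredients of support vector regression: an RBF kernel, a prediction that sums kernel evaluations over the support vectors only, and the ε-insensitive loss that ignores small residuals. The function names, support vectors, coefficients, and parameter values below are hypothetical and are not taken from the fitted model.

```python
import math


def rbf_kernel(x, z, gamma):
    """RBF kernel: exp(-gamma * squared Euclidean distance between x and z)."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, z))
    return math.exp(-gamma * sq_dist)


def svr_predict(x, support_vectors, dual_coefs, bias, gamma):
    """SVR decision function: weighted kernel sum over support vectors plus bias."""
    return sum(coef * rbf_kernel(sv, x, gamma)
               for sv, coef in zip(support_vectors, dual_coefs)) + bias


def epsilon_insensitive_loss(y_true, y_pred, epsilon):
    """Zero inside the epsilon tube, linear in the excess residual outside it."""
    return max(0.0, abs(y_true - y_pred) - epsilon)


# Hypothetical one-dimensional example (values invented for illustration).
support_vectors = [[0.0], [1.0]]
dual_coefs = [1.0, -1.0]
prediction = svr_predict([0.0], support_vectors, dual_coefs, bias=2.0, gamma=1.0)
print(prediction)
print(epsilon_insensitive_loss(30.0, 30.3, epsilon=0.5))  # residual inside the tube
print(epsilon_insensitive_loss(30.0, 31.0, epsilon=0.5))  # residual outside the tube
```

Because the loss grows only linearly outside the tube, a single anomalous sample pulls the fit less than it would under a squared-error loss.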

Based on the chemical elements determined from the comprehensive analysis of coal samples from diverse sources and locations, the primary objective of the present study is to evaluate the predictive performance of various machine learning approaches for coal HHV. These approaches include DE-optimized SVM models as well as DE-optimized Ridge, Lasso, and Elastic-Net regressions. The study examines the influence of five input elements (C, O, H, S, and N) to accurately predict coal HHV as the target variable.

This study is organized as follows. First, the instruments and methodologies employed in this investigation are described. Next, the results are presented and discussed. Finally, the main conclusions are summarized.

3. Results and Discussion

The best hyperparameters of the SVM-based technique for predicting coal HHV, as determined by the DE optimizer, are shown in Table 3.

For comparison purposes, Ridge (RR), Lasso (LR), and Elastic-Net (ENR) regression models were also fitted. The regularization parameter in these models determines their accuracy [39, 40, 41]. The optimal values are presented in Table 4.
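For reference, the three penalized least-squares problems can be written in a common textbook form. The symbols $\lambda$, $\lambda_1$, $\lambda_2$, and $\beta$ are notation assumed here for illustration; the parameterization behind Table 4 may differ.

```latex
\begin{aligned}
\text{Ridge:}\quad & \min_{\beta}\ \lVert y - X\beta \rVert_2^2 + \lambda \lVert \beta \rVert_2^2 \\
\text{Lasso:}\quad & \min_{\beta}\ \lVert y - X\beta \rVert_2^2 + \lambda \lVert \beta \rVert_1 \\
\text{Elastic-Net:}\quad & \min_{\beta}\ \lVert y - X\beta \rVert_2^2 + \lambda_1 \lVert \beta \rVert_1 + \lambda_2 \lVert \beta \rVert_2^2
\end{aligned}
```

Larger penalty weights shrink the coefficients more strongly, trading a small increase in bias for lower variance.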

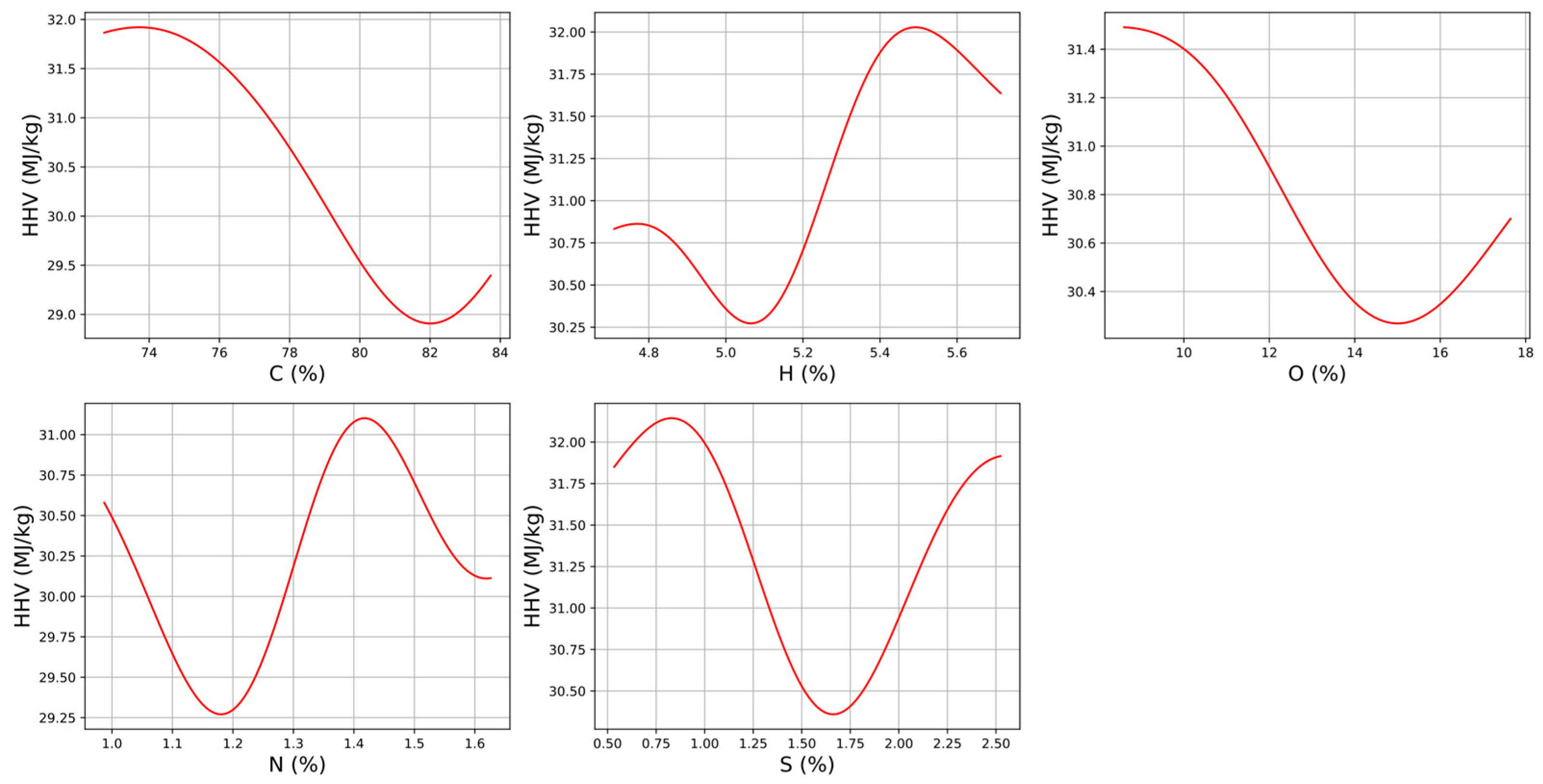

The first-order terms of the DE/SVM method employing an RBF kernel are presented in Figure 5. This figure facilitates understanding of the relationships among the multiple input variables used in this approach. For example, HHV is plotted on the Y-axis against C on the X-axis, while the remaining four input variables are held constant (see the first graph in Figure 5). Similarly, with all other input variables kept constant, the second and third graphs in Figure 5 depict HHV on the Y-axis as a function of H and O on the X-axis, respectively. Finally, the fourth and fifth graphs illustrate HHV on the Y-axis as a function of N and S on the X-axis, respectively.
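The construction of such a first-order curve can be sketched as follows: one input is swept over a range while the others stay fixed at a baseline. The helper `first_order_curve`, the baseline composition, and `stand_in_model` (a Dulong-type formula used here in place of the fitted DE/SVM predictor) are all hypothetical.

```python
def first_order_curve(model, baseline, feature, values):
    """Evaluate the model while varying one feature and holding the rest fixed."""
    curve = []
    for value in values:
        point = dict(baseline)  # copy so the baseline itself is not modified
        point[feature] = value
        curve.append((value, model(point)))
    return curve


def stand_in_model(x):
    """Hypothetical stand-in for the fitted predictor (Dulong-type, MJ/kg)."""
    return 0.3383 * x["C"] + 1.443 * (x["H"] - x["O"] / 8.0) + 0.0942 * x["S"]


# Hypothetical baseline composition in mass percent.
baseline = {"C": 75.0, "H": 5.0, "O": 10.0, "N": 1.5, "S": 1.0}
curve_c = first_order_curve(stand_in_model, baseline, "C", [60.0, 70.0, 80.0, 90.0])
for carbon, hhv in curve_c:
    print(f"C = {carbon:.0f}%  ->  HHV = {hhv:.2f} MJ/kg")
```

A second-order surface follows the same pattern with two features swept over a grid instead of one.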

Similarly, the second-order terms of the DE/SVM method employing an RBF kernel are presented in Figure 6. In this case, with all other variables held constant, the first graph in Figure 6 depicts HHV on the Z-axis as a function of H on the Y-axis and C on the X-axis. The remaining graphs in Figure 6 follow a similar pattern, illustrating HHV on the Z-axis as a function of C and O, C and S, H and S, and O and S on the X- and Y-axes, respectively, while the other variables remain unchanged.

A selection of the most widely used empirical formulas for estimating coal HHV reported in the literature is presented in Table 5. These formulas are also based on the mass percentage of the components from the ultimate analysis.

The coefficients of determination and the correlation coefficients for the empirical correlations of coal gross calorific value (output variable) [9, 10, 11, 12, 13, 14], as well as for the DE/SVM approach with different kernel types and for RR, LR, and ENR, are presented in Table 6. All were evaluated using the testing data.

Based on these statistical analyses, the SVM approach with an RBF kernel is the most effective model for estimating the gross calorific value (HHV) as a dependent variable across various coal types. This model achieved a coefficient of determination (R²) of 0.9575 and a correlation coefficient (r) of 0.9861 for coal HHV prediction. The close agreement between the experimentally measured data and the SVM predictions demonstrates the model’s consistent goodness-of-fit.
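The two statistics reported above measure different things, which the following sketch makes explicit using their standard definitions. The measured and predicted values are invented for illustration and are unrelated to the dataset in this study.

```python
import math


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def pearson_r(y_true, y_pred):
    """Pearson correlation coefficient between measured and predicted values."""
    mean_t = sum(y_true) / len(y_true)
    mean_p = sum(y_pred) / len(y_pred)
    cov = sum((t - mean_t) * (p - mean_p) for t, p in zip(y_true, y_pred))
    var_t = sum((t - mean_t) ** 2 for t in y_true)
    var_p = sum((p - mean_p) ** 2 for p in y_pred)
    return cov / math.sqrt(var_t * var_p)


# Hypothetical values in MJ/kg: every prediction is 2 units too high.
measured = [20.0, 25.0, 30.0, 35.0]
predicted = [22.0, 27.0, 32.0, 37.0]
print(r_squared(measured, predicted), pearson_r(measured, predicted))
```

In this example the predictions are perfectly correlated with the measurements, yet R² is below 1 because the constant offset still counts as error. This is why R² need not equal the square of r.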

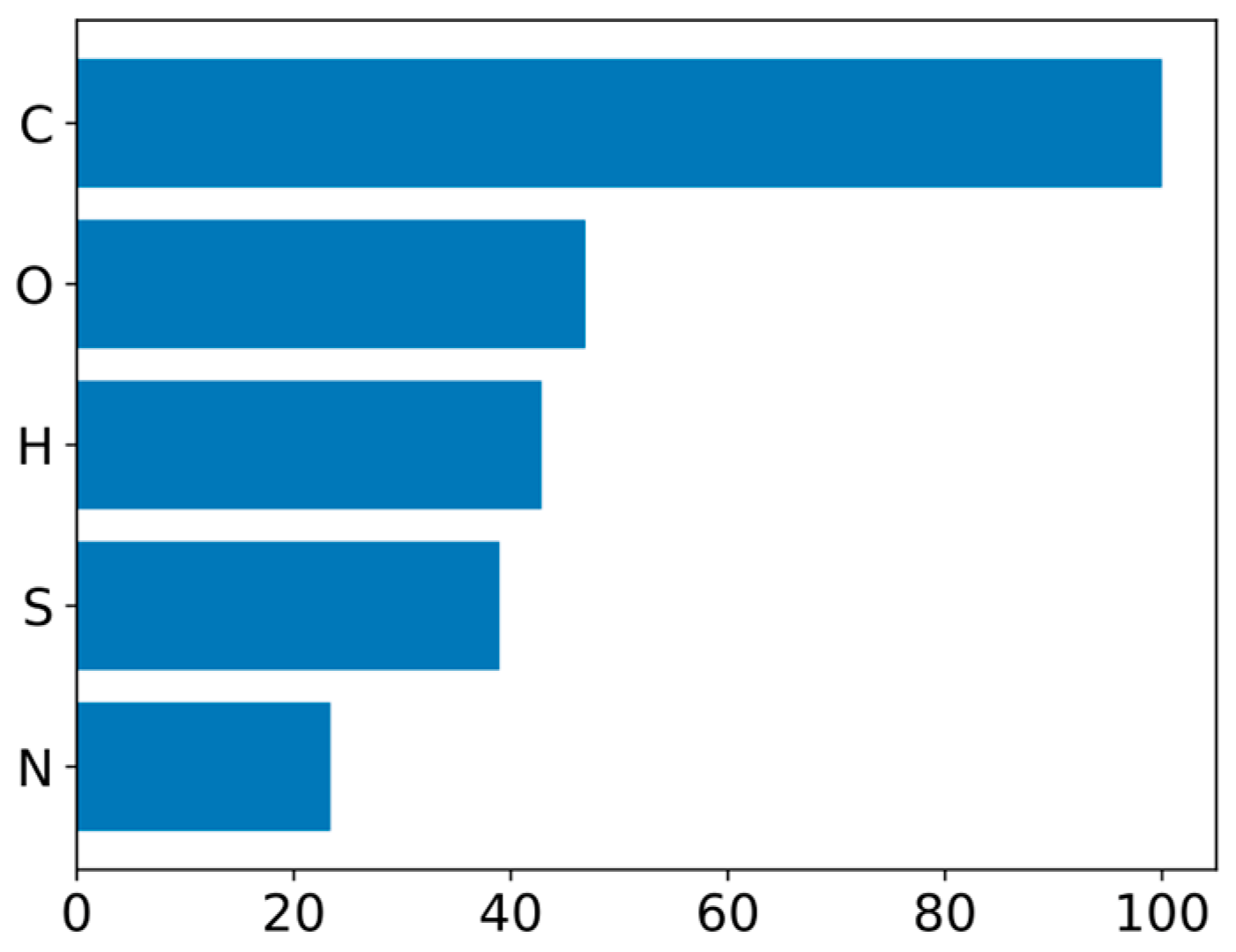

Table 7 and Figure 7 present an additional outcome of these analyses: the significance ranking of the five input variables in predicting coal gross calorific value in this comprehensive study. Variable importance was determined from the weights of a linear-kernel SVR model, where the magnitude of each weight reflects the relative contribution of the corresponding feature to the prediction. Although these weights are not directly interpretable as coefficients in linear regression due to the effects of regularization and the ε-insensitive loss function, their normalized values provide a relative ranking of feature importance.
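The normalization step described here can be sketched as follows, under the assumption that importance is taken as each weight's absolute value divided by the sum of absolute values. The weights below are invented for illustration; they do not reproduce Table 7 and imply nothing about the actual ranking.

```python
def normalized_importance(weights):
    """Scale absolute weights so that the importances sum to 1."""
    total = sum(abs(w) for w in weights.values())
    return {name: abs(w) / total for name, w in weights.items()}


# Hypothetical linear-kernel SVR weights (made up, not from this study).
weights = {"C": 1.2, "H": 0.4, "O": -0.3, "N": 0.06, "S": 0.04}
importance = normalized_importance(weights)
ranking = sorted(importance, key=importance.get, reverse=True)
for name in ranking:
    print(f"{name}: {importance[name]:.3f}")
```

Taking absolute values means a feature with a strongly negative weight ranks as high as one with an equally strong positive weight. The weights are also only comparable across features if the inputs were scaled consistently before fitting.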

According to the SVM model, C is the most significant factor in predicting HHV, followed by N, S, H, and O. Dulong previously emphasized the importance of C and H for HHV using models that linked these variables in solid fuels [45]. In this context, the literature suggests that theoretical models for solid fuel HHV may exist and that these models are likely to have a strong relationship with C, as well as significant influences from H and O [57].

Generally, C, O, and H are the primary parameters used to determine the potential HHV of a fuel, while S and N are relevant mainly due to their impact on the environmental aspects of fuel combustion. In this context, carbon, as the fundamental element in all carbonaceous fuels, plays a critical role. Meanwhile, the influence of nitrogen is relatively minor, as it is primarily associated with living biomass, where it is required for cellular synthesis.

According to the ranking order of variables (Table 7 and Figure 7), C is the primary component in the proposed model, as it directly contributes to the energy released during combustion and serves as the most significant indicator of coalification grade. Carbon is the predominant element in coal, typically constituting the largest proportion of its elemental composition. O is the second most important parameter in the model; it is also present in coal and influences both the heating value and the reactivity of coal during combustion. O is commonly bonded with carbon or hydrogen to form various functional groups, such as carboxyl and hydroxyl groups in coal [58].

H is the third most important element in the resulting model, as it contributes to coal combustibility. In contrast, S and N have a lower impact on the modeled heating value, likely due to their relatively low abundance in coal. Furthermore, during combustion, sulfur and nitrogen can be oxidized to form sulfur dioxide and nitrogen oxides, respectively, which are air pollutants. It is noteworthy that, for the development of energy applications, it is essential to characterize solid fuels both in terms of their heating value and their potential pollutant emissions [59, 60].

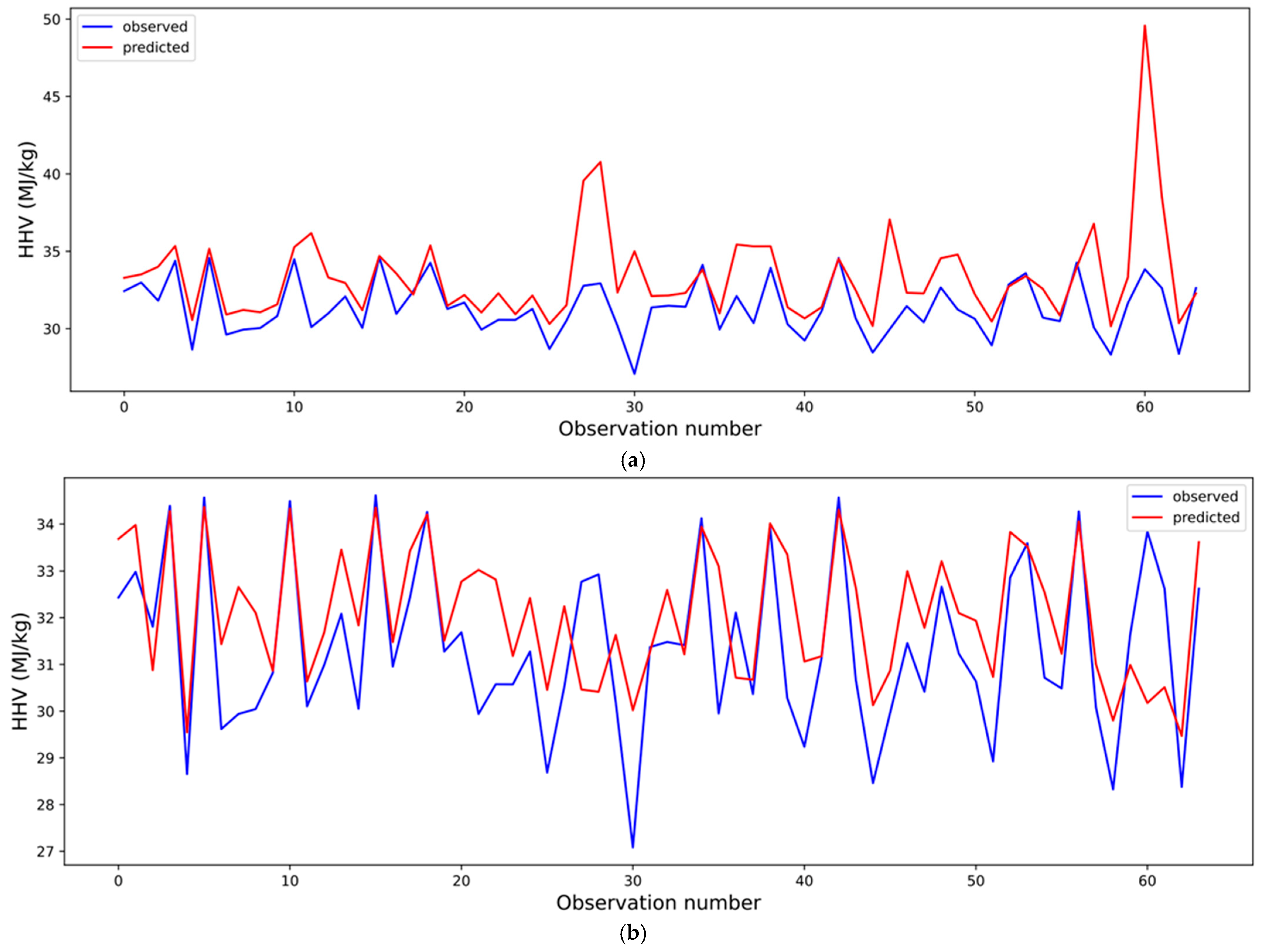

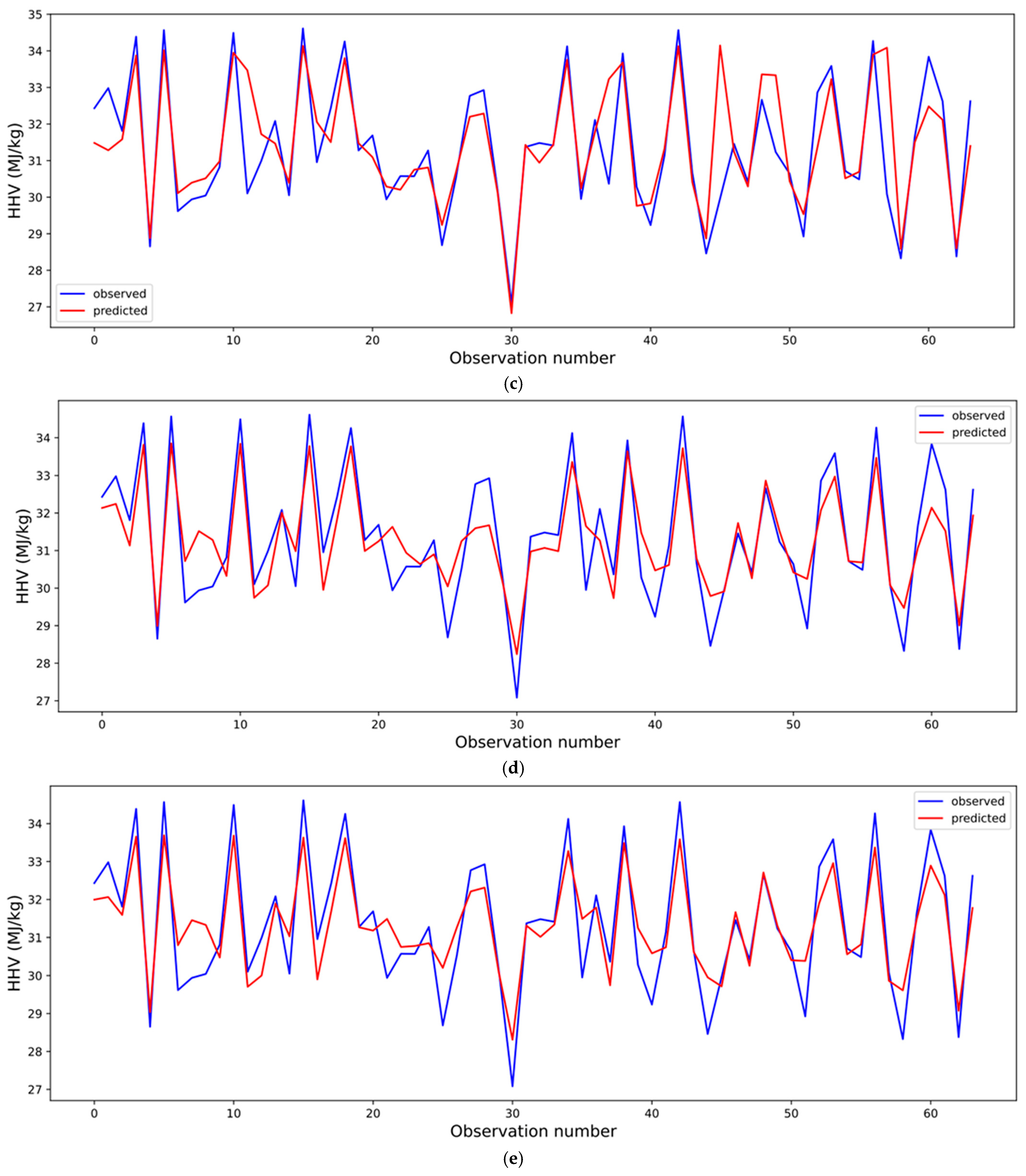

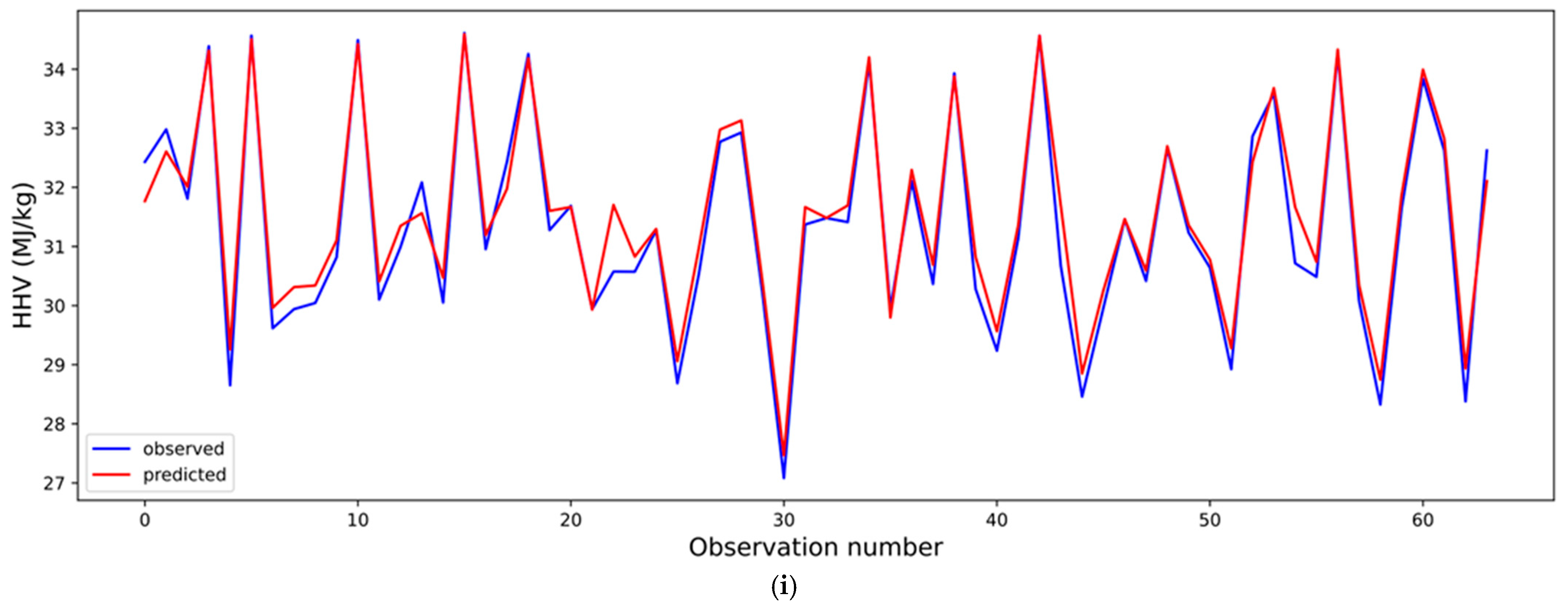

The predicted and experimental values of coal HHV are compared in Figure 8 using the following models: the DE/SVM model with a quadratic kernel (Figure 8a), the most accurate empirical correlation E6 (Figure 8b), the DE/SVM model with a cubic kernel (Figure 8c), RR (Figure 8d), the DE/SVM model with a sigmoid kernel (Figure 8e), the DE/SVM model with a linear kernel (Figure 8f), the ENR model (Figure 8g), the LR model (Figure 8h), and the DE/SVM model with an RBF kernel (Figure 8i). These comparisons highlight the importance of the SVM approach for achieving the most effective solution to the regression problem. The results clearly indicate that the DE/SVM model with an RBF kernel provides the best fit while meeting the critical statistical goodness-of-fit criterion (R²).

In summary, the DE/SVM model with an RBF kernel achieved the highest predictive accuracy, significantly outperforming the empirical correlations. This performance underscores the model’s capability to capture nonlinear relationships between coal’s elemental composition (C, H, O, N, and S) and HHV.