1. Introduction

Wheat is one of the most essential staple crops worldwide, serving as a primary food source and an important biomass energy resource [1]. In the processes of cultivation, harvesting, and storage, the failure to identify and classify unsound wheat grains may severely affect grain quality and yield, posing threats to global food security and energy supply. Therefore, the ability to classify such grains accurately and efficiently is of critical importance.

Early unsound wheat grain classification relied mainly on visual inspection by experts, which was labor-intensive and susceptible to human error and fatigue. With the advancement of computer vision, traditional feature engineering methods became dominant. These methods manually extracted explicit features (e.g., color, texture) and fed them into classical machine learning classifiers. Although they achieved a degree of automation, these approaches generalize poorly because they depend on handcrafted features.

The emergence of deep learning, particularly Convolutional Neural Networks (CNNs) and Vision Transformers (ViTs), brought significant improvements by enabling automatic hierarchical feature extraction directly from raw images. However, these unimodal methods typically require large-scale, high-quality labeled image datasets. This requirement limits their performance in domains like agriculture, where labeled data is often scarce. Moreover, unimodal models are constrained to visual information and cannot leverage cross-modal cues, limiting the model’s ability to capture semantic information from images.

Recently, pre-trained Vision-Language Models (VLMs) have shown impressive capabilities in few-shot learning. Notably, CLIP (Contrastive Language-Image Pre-training) jointly learns from image–text pairs to build strong cross-modal representations, which significantly improves generalization across domains [2]. However, its potential remains underexplored in unsound wheat grain classification, which is a fine-grained agricultural task.

The challenges above highlight the need for a vision-language framework that can not only generalize from limited labeled data, but also leverage semantic cues from language to enhance fine-grained classification. To address these challenges, we propose the UWGC (Unsound Wheat Grain Classification) framework, a novel few-shot classification framework based on a VLM. UWGC consists of two core modules designed to address both vision adaptation and text enhancement:

Fine-tuning module: We adopt APE as our baseline fine-tuning method. This approach enables the pre-trained CLIP model to adapt to the unsound wheat grain classification task using only a small number of image samples;

Text Prompt Enhancement module: To address APE’s limited consideration of the text side, we enhance the prompts using ATPrompt. Furthermore, we leverage the multimodal model Qwen2.5-VL to generate more accurate attribute descriptions and text prompts.

The integration of these two modules follows a multimodal-completeness principle: we systematically remove “modality blind spots” by letting each module compensate for what the other omits. The major contributions of this work are summarized as follows:

We propose two novel vision-language frameworks for unsound wheat grain classification, namely UWGC-F (Training-free) and UWGC-T (Training-required). To the best of our knowledge, this is the first work to apply VLMs to the few-shot classification of unsound wheat grains;

We innovatively integrate APE and ATPrompt to jointly enhance few-shot learning from both the image and text perspectives, and further employ the multimodal model Qwen2.5-VL for attribute extraction and prompt generation. This dual-perspective design significantly improves the framework’s semantic alignment and fine-grained discrimination capabilities;

We conduct extensive experiments on a large-scale wheat grain dataset. The results show that UWGC consistently outperforms existing methods on the few-shot classification task of unsound wheat grains.

The remaining sections of this paper are organized as follows:

Section 2 reviews prior studies on unsound wheat grain classification and recent advances in VLMs.

Section 3 describes the two modules of the proposed UWGC framework in detail.

Section 4 reports extensive experiments on the GrainSpace dataset, including backbone comparison, performance evaluation and ablation studies.

Section 5 discusses the framework’s advantages, limitations, and potential applications.

Section 6 concludes the paper and discusses future research directions.

2. Related Work

With the advancement of computer vision, the field of unsound wheat grain classification has evolved from traditional handcrafted feature engineering to modern deep learning approaches. Although vision-based models such as CNNs and ViTs have greatly improved classification accuracy, they still struggle under few-shot settings due to their reliance on large-scale annotated datasets. Moreover, these unimodal models are limited to visual cues and cannot incorporate external knowledge from other modalities, such as language.

The emergence of pre-trained VLMs offers a promising alternative by enabling joint modeling of visual and textual information. VLMs have demonstrated strong cross-modal generalization and impressive performance in few-shot learning tasks of many downstream domains. However, their effectiveness in downstream applications heavily depends on the quality of fine-tuning frameworks.

2.1. Development of Unsound Wheat Grain Classification

Before widespread adoption of deep learning, the classification of unsound wheat grains primarily relied on handcrafted feature extraction combined with traditional machine learning models. These approaches required researchers to manually extract features such as color, texture, and morphology, followed by classification using algorithms. For instance, several studies utilized hyperspectral imaging and applied Principal Component Analysis (PCA) and Successive Projections Algorithm (SPA) to select informative spectral bands. They then used PLS or SVM for defect detection [3]. Others employed near-infrared hyperspectral data and combined Competitive Adaptive Reweighted Sampling (CARS) with SPA for feature selection, subsequently applying Linear Discriminant Analysis (LDA) or SVM to distinguish sound kernels from slightly sprouted ones [4]. Additionally, PCA-based strategies and band ratio analyses were used to construct rule-based classification frameworks targeting specific grain defects such as black embryos, moldy kernels, and broken grains [5]. In 2D visible light imagery, morphological, textural, and color-based features combined with classifiers like SVMDA, PLSDA, and PCA-ANN also achieved satisfactory performance [6]. Overall, these approaches performed reasonably well but remained highly dependent on manual feature engineering and lacked generalization capability for complex visual patterns.

The introduction of AlexNet in 2012 marked the beginning of deep learning’s use in the vision domain [7]. CNNs enabled automatic learning of hierarchical visual features, significantly improving classification performance while eliminating the reliance on handcrafted descriptors. CNN-based models captured both low-level and high-level cues, offering robust predictions in visually challenging scenarios. More recently, ViTs, based on self-attention mechanisms, have shown promising results in capturing global relationships within images and have been applied to unsound wheat grain classification [8]. Several works proposed dual-channel fusion models (DCFFM) that combine 1D spectral and 2D hyperspectral features using CNNs and attention mechanisms to enhance accuracy [9]. Others utilized YOLOv8 for segmentation and detection, followed by ViT for classification, and introduced data augmentation strategies to mitigate sample sparsity [10]. Additionally, some efforts designed low-cost acquisition platforms paired with lightweight ResNet variants to meet practical requirements of low-latency inference [11].

Despite these advancements, all of these models still depend heavily on large-scale, well-annotated datasets, which are costly and time-consuming to acquire in agricultural domains. None have been tested under true few-shot conditions. Furthermore, unimodal visual models lack access to external semantic priors, limiting their ability to reason over complex scenes or adapt to unseen distributions. Therefore, developing a multimodal framework for few-shot classification of unsound wheat grains is crucial.

2.2. Vision-Language Model for Downstream Tasks

In recent years, VLMs have demonstrated significant potential across a wide range of downstream vision tasks. Among them, CLIP stands out as a milestone in multimodal representation learning [2]. By leveraging large-scale image–text pairs, CLIP learns to project both image and text inputs into a shared embedding space, using contrastive learning to align semantically matched pairs. This enables CLIP to effectively understand vision content guided by language descriptions, and empowers strong generalization in zero-shot and few-shot tasks.
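As a rough illustration of the contrastive alignment described above, the following minimal sketch computes a symmetric image–text contrastive loss on toy embeddings. It is not CLIP's actual training code: the function names, the toy vectors, and the temperature value of 0.07 are assumptions chosen for illustration only.

```python
import math


def l2_normalize(vec):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def cross_entropy(logits, target):
    """Numerically stable cross-entropy of one logit row against a target index."""
    peak = max(logits)
    log_sum = peak + math.log(sum(math.exp(z - peak) for z in logits))
    return log_sum - logits[target]


def contrastive_loss(image_feats, text_feats, temperature=0.07):
    """Symmetric contrastive loss: the i-th image should match the i-th text."""
    images = [l2_normalize(v) for v in image_feats]
    texts = [l2_normalize(v) for v in text_feats]
    n = len(images)
    # Pairwise cosine similarities, sharpened by the (assumed) temperature.
    sim = [[sum(a * b for a, b in zip(img, txt)) / temperature for txt in texts]
           for img in images]
    # Image-to-text direction: each row is classified over all texts.
    loss_i2t = sum(cross_entropy(sim[k], k) for k in range(n)) / n
    # Text-to-image direction: each column is classified over all images.
    loss_t2i = sum(cross_entropy([sim[r][k] for r in range(n)], k)
                   for k in range(n)) / n
    return 0.5 * (loss_i2t + loss_t2i)
```

In this sketch, correctly paired embeddings yield a loss close to zero, while mismatched pairs are penalized heavily, which is the behavior that pulls matched image–text pairs together in the shared space.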

Thanks to its cross-modal alignment capabilities and pretraining on massive-scale data, CLIP has been widely adapted to many domain-specific zero-shot and few-shot tasks. Representative applications include:

Security & Surveillance: The CLIFS framework integrates GPT-4-generated textual prompts with visual embeddings, achieving significant improvements in few-shot threat detection at airport security checkpoints [12];

Construction Site Object Recognition: In construction scenarios, researchers proposed a similarity cache mechanism based on ImageNet embeddings to enable few-shot recognition of temporary objects using CLIP-based prototypes [13];

Medical Imaging: MediCLIP employs a self-supervised strategy involving synthetic anomaly generation to fine-tune CLIP for medical image anomaly detection, yielding excellent performance under low data regimes [14];

Biodiversity & Geolocation-Aided Recognition: For species classification, geographic features were fused with visual embeddings to enhance the discriminative power of CLIP in few-shot species recognition tasks [15];

Image Understanding & Scene Adaptation: In monocular depth estimation, learnable prompts and dynamic depth binning were used to adapt CLIP for precise scene-level understanding under limited supervision [16];

Agriculture: AgriCLIP constructs an agriculture–livestock image–text corpus (~600 k pairs) and demonstrates that aligning CLIP to this domain substantially improves fine-grained recognition on datasets such as PlantDoc and other low-accuracy CLIP regimes, highlighting the value of domain-adaptive multimodal pretraining for agrifood tasks [17].

Given the demonstrated flexibility and transferability of CLIP in a variety of real-world domains, and considering that unsound wheat grain classification also faces challenges such as data scarcity and the need for fine-grained semantic differentiation, we are motivated to develop a CLIP-based framework specifically tailored for few-shot classification in this agricultural context. This framework offers a promising alternative addressing the limitations of conventional vision-only approaches.

2.3. APE: Few-Shot Adaptation of Vision-Language Model

In fine-grained domains such as agriculture, medical imaging, and remote sensing, directly applying pre-trained VLMs like CLIP often fails to deliver the expected performance. This is because in downstream domains like agriculture or medical imaging, capturing subtle inter-class differences requires domain-specific prior knowledge—knowledge that is absent from the pretraining data. As a result, recent efforts have increasingly focused on developing efficient few-shot adaptation strategies for VLMs.

One promising approach is APE [18], which enables lightweight adaptation of frozen CLIP models by introducing trainable residuals. In particular, this method offers two variants (APE and APE-T) for different needs. Overall, APE achieves great performance while avoiding the high computational cost of full fine-tuning, making it well-suited for the few-shot unsound wheat grain classification task.

2.4. ATPrompt: Textual-Based Prompt Learning Method

APE-T fine-tunes only the vision side and leaves the text prompt in its default form (“A photo of a [CLASS]”). However, recent studies [19,20,21] show the importance of the text side. In particular, the authors of ATPrompt [22] noted that using such a simple prompt template can hurt accuracy. On APE-T’s text side, the prompt is basically just the class name attached to a fixed phrase, which limits the model’s fine-tuning effectiveness. The main issue is that during training the model only aligns images with known class names, so it struggles to handle unseen classes.

For example, when distinguishing between normal wheat grains and unsound wheat grains attacked by pests, we tend to describe them as “wheat grains that are intact and uniformly colored” versus “wheat grains with holes or notches”, rather than simply “normal wheat grains” and “pest-damaged wheat grains”. Intuitively, when facing an unfamiliar object, humans naturally characterize it by mentioning its distinctive attributes (such as color or shape) instead of only relying on its name.

Specifically, ATPrompt first uses Large Language Models (LLMs) to generate an attribute pool based on the downstream categories, aiming to extract a set of universal attributes. A differentiable selector over the attribute pool then ranks the candidates and returns the descriptor combination that best supports attribute-aware prompts. The selected combination is then assembled into the texts that condition fine-tuning, enabling the learned prompts to encode multi-attribute cues instead of only a class name.

The emergence of ATPrompt inspired us. APE has the drawback of overlooking the language modality. The idea behind ATPrompt offers us valuable inspiration: to address the shortcomings of APE in the text side, we introduce an automatic stage of attribute extraction, attribute search, and prompt generation before APE fine-tuning. This enhances the expressive power of text descriptions.

Still, ATPrompt suggests users provide only class names to a unimodal LLM and use multi-turn dialogues to guide the model in generating multiple candidate attribute words. This raises our concern that, due to their lack of direct access to visual information, LLMs tend to focus on label tokens rather than visually grounded attributes. As a result, this label-centric bias leads to vague or semantically misaligned attributes and prompts, which is particularly detrimental in fine-grained tasks. To mitigate this issue, some recent works employ multimodal models like Qwen-VL to generate prompts directly from representative images, enhancing few-shot performance [23]. Inspired by this, we also explore using multimodal models for prompt generation.

3. Method

Based on the insights from related works, we propose a CLIP-based framework called UWGC, aiming to address the few-shot classification problem of unsound wheat grains. Specifically, the framework leverages the powerful cross-modal pretraining knowledge of CLIP to achieve accurate classification of unsound wheat grains even with only a limited number of training samples. To accommodate different practical needs, we propose two versions: UWGC-F and UWGC-T.

The overall architecture consists of two key modules working in coordination.

Our framework is designed so that neither the vision nor the language modality is neglected. If one modality provides less information, we introduce a small complementary component from the other modality to fill that gap. We begin with a baseline few-shot learning method (APE or APE-T) that focuses on the vision side, and then we add attribute-based prompts (derived from images) to reinforce the text side. These enriched text prompts help stabilize and improve the few-shot adaptation of APE/APE-T. We also replace the original ATPrompt’s language model with the multimodal model Qwen2.5-VL, which uses both visual and textual information to generate more informative attributes. This step-by-step complementary design is the core of our UWGC framework. By following this principle, our method greatly improves few-shot classification performance by leveraging the synergy of both image and text cues.

Specifically, the Fine-tuning module introduces APE or its training-required variant APE-T to efficiently fine-tune the CLIP model, enabling effective integration of visual features and class knowledge. The Text Prompt Enhancement module enriches the prompts by incorporating additional attributes, and it replaces the text-only LLM with the multimodal model Qwen2.5-VL for attribute extraction. The synergy between the two modules, leveraging cross-modal semantics, forms the foundation of our UWGC framework.

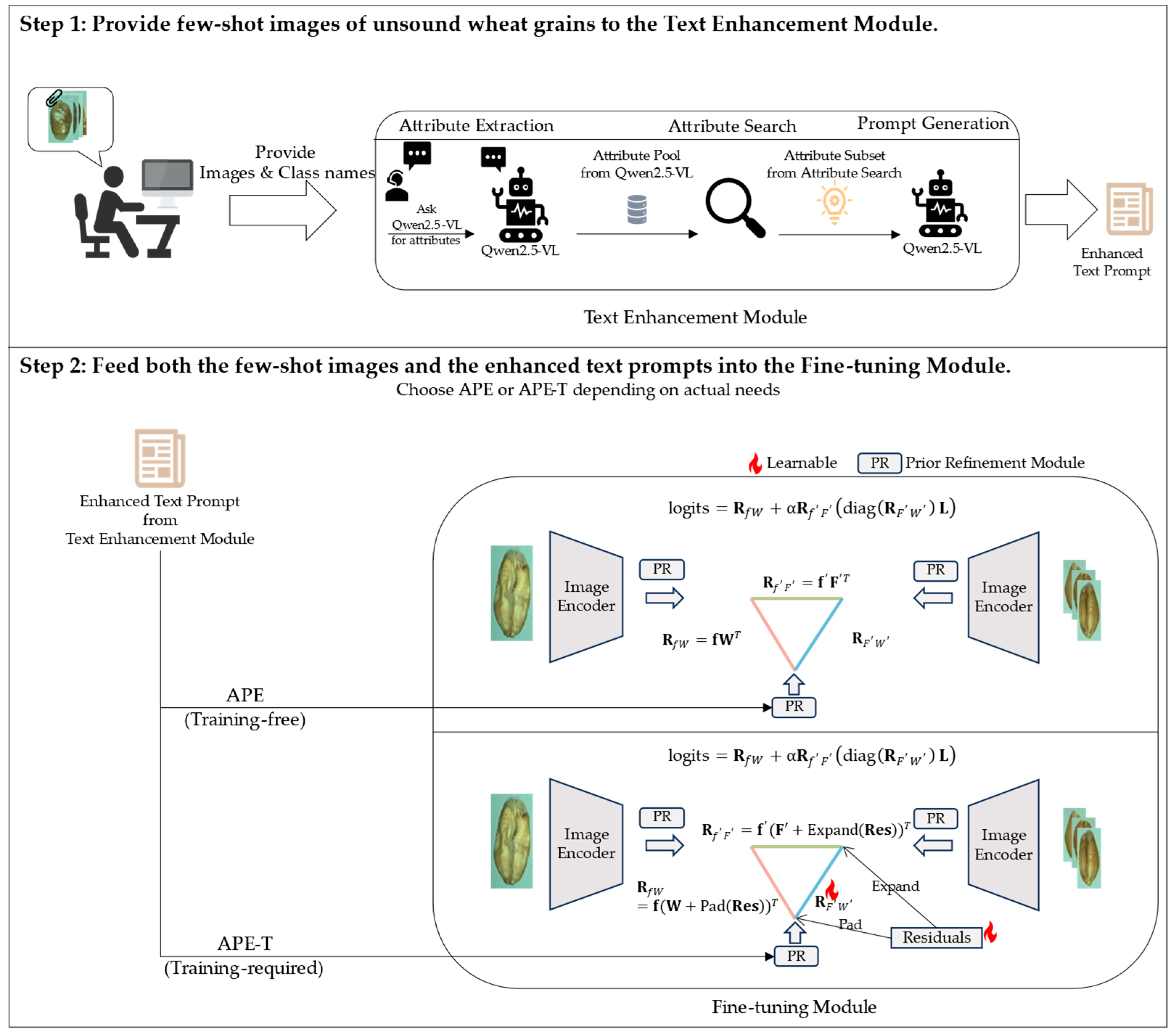

There are two steps in the UWGC workflow:

First, we activate the Text Prompt Enhancement module. To be more specific, we provide representative images together with their class names to Qwen2.5-VL to extract visually grounded attributes and build an attribute pool for each class. We then run an attribute search step to automatically select a small, high-signal subset. Using the selected attributes, Qwen2.5-VL generates enhanced text prompts;

Next, we activate the Fine-tuning module with few-shot images, and choose either APE (training-free; UWGC-F) or APE-T (lightweight training; UWGC-T). The chosen variant adapts CLIP to the task of unsound wheat grain classification.

The overall workflow of the UWGC framework is shown in Figure 1.

We adopt a consistent notation style throughout this paper: scalars are italic, and vectors and matrices are bold. Operators follow their standard definitions. The notation is summarized in Table 1.

3.1. Fine-Tuning Module

Our UWGC framework allows for flexible selection between APE and APE-T as the fine-tuning strategy based on application needs: APE is used when deployment without additional training is preferred, while APE-T is chosen when a small amount of training is acceptable in exchange for higher accuracy. The version using APE is referred to as UWGC-F, and the one using APE-T is referred to as UWGC-T. In practical applications, users can balance training overhead and accuracy improvement to select the most suitable framework.

3.1.1. UWGC-F

In the few-shot setting, we only have $K$ labeled images per class (a total of $CK$ images across $C$ classes), and the goal is to make effective use of these limited samples without significantly altering CLIP’s pretrained parameters. To this end, we introduce the APE method for parameter-efficient fine-tuning of CLIP. APE leverages the prior knowledge of CLIP by modeling the relationship among the test image, support set, and class text, effectively making use of available information even under incomplete data conditions.

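Specifically, APE first performs channel-wise feature selection and refinement on CLIP-extracted features. For $C$ classes with $K$ shots each, the test image feature $\mathbf{f} \in \mathbb{R}^{1 \times D}$, the text feature matrix of all classes $\mathbf{W} \in \mathbb{R}^{C \times D}$, and the features of all training samples $\mathbf{F} \in \mathbb{R}^{CK \times D}$ are fed into the prior refinement (PR) module of APE, which selects the $Q$ most informative channels from dimension $D$, resulting in reduced-dimension features $\mathbf{f}' \in \mathbb{R}^{1 \times Q}$, $\mathbf{W}' \in \mathbb{R}^{C \times Q}$, and $\mathbf{F}' \in \mathbb{R}^{CK \times Q}$.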
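The text above states only that the prior refinement module keeps the most informative channels. As a hedged illustration, the sketch below assumes one plausible criterion: a channel is preferred when its values are dissimilar across classes and have high variance across classes. The function names, the weighting `lam`, and the use of class text features as the scoring input are all assumptions for illustration, not the module's confirmed implementation.

```python
def select_channels(class_feats, q, lam=0.7):
    """Return the indices of the q channels with the lowest criterion
    lam * similarity - (1 - lam) * variance (an assumed scoring rule).

    class_feats: list of C feature rows (C >= 2), each of length D.
    """
    num_classes = len(class_feats)
    dim = len(class_feats[0])
    scores = []
    for k in range(dim):
        column = [row[k] for row in class_feats]
        mean = sum(column) / num_classes
        variance = sum((x - mean) ** 2 for x in column) / num_classes
        # Mean product of this channel over all ordered pairs of distinct classes.
        total = sum(column)
        pair_sum = total * total - sum(x * x for x in column)
        similarity = pair_sum / (num_classes * (num_classes - 1))
        scores.append(lam * similarity - (1 - lam) * variance)
    best = sorted(range(dim), key=lambda k: scores[k])[:q]
    return sorted(best)


def refine(features, channels):
    """Keep only the selected channels of every feature row."""
    return [[row[k] for k in channels] for row in features]
```

With the selected indices, the same `refine` call would be applied to the test feature, the class text features, and the training features so that all three live in the same reduced space.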

Next, three pairwise relations are defined:

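Image–Text Relation $\mathbf{R}_{fW}$: This represents the alignment between the test image and each class’s text prompt, computed from the test image feature $\mathbf{f}$ and the class text features $\mathbf{W}$ as:

$$\mathbf{R}_{fW} = \mathbf{f}\,\mathbf{W}^{\top} \tag{1}$$

which essentially corresponds to the class logits predicted by CLIP in the zero-shot setting;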

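Image–Image Relation $\mathbf{R}_{f'F'}$: This represents the similarity between the test and training images in the support set. It is computed in the reduced feature space, from the refined test feature $\mathbf{f}'$ and the refined training features $\mathbf{F}'$, using an exponential weighting with a scaling factor:

$$\mathbf{R}_{f'F'} = \exp\left(-\beta\left(1 - \mathbf{f}'\,\mathbf{F}'^{\top}\right)\right) \tag{2}$$

which represents the image–image similarity and is smoothed by the scalar $\beta$, a hyperparameter;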

Text–Image Relation $\mathbf{R}_{F'W'}$: This evaluates the confidence of CLIP’s zero-shot prediction on the training samples in the support set. For the refined training features $\mathbf{F}'$ and the refined text features $\mathbf{W}'$, define their cosine-similarity logits as $\mathbf{F}'\mathbf{W}'^{\top}$, which serve as CLIP’s zero-shot scores for the few-shot samples. We then evaluate the downstream classification performance of CLIP through the KL-divergence, $D_{KL}(\cdot \mid \cdot)$, between the predicted class distribution and the one-hot labels $\mathbf{L}$. Let $\gamma$ be a smoothing factor. Then the weight for each training sample is defined as:

$$\mathbf{R}_{F'W'} = \exp\left(-\gamma\, D_{KL}\left(\mathbf{F}'\mathbf{W}'^{\top} \mid \mathbf{L}\right)\right) \tag{3}$$

which can be interpreted as CLIP’s prior confidence score on each sample: samples with smaller divergence (i.e., more accurate zero-shot predictions) receive higher weights, while those with larger divergence receive lower weights.

Finally, the three types of relations are integrated to form the final classification decision. Specifically, let $\mathrm{diag}(\mathbf{R}_{F'W'})$ denote a diagonal matrix constructed from the vector $\mathbf{R}_{F'W'}$, which is then used to reweight the label indicator matrix $\mathbf{L}$ of the support set. The combined logits for each test image are given by:

$$\mathrm{logits} = \mathbf{R}_{fW} + \alpha\, \mathbf{R}_{f'F'}\left(\mathrm{diag}\left(\mathbf{R}_{F'W'}\right)\mathbf{L}\right) \tag{4}$$

where $\alpha$ is a balancing hyperparameter and $\mathrm{diag}(\cdot)$ denotes diagonalization. In this equation, the first term $\mathbf{R}_{fW}$ represents the zero-shot prediction of CLIP and contains its pre-trained prior knowledge. The second term denotes the few-shot prediction from the cache model, which is based on the refined feature channels and the reweighting by $\mathbf{R}_{F'W'}$.

By adaptively combining prior knowledge from pretraining with information from the few training samples, APE effectively enhances CLIP without extensive parameter updates. This significantly improves classification performance in few-shot scenarios.
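The combination of the three relations described above can be sketched in plain Python as follows. This is a minimal illustration under stated assumptions rather than the reference APE implementation: all features are assumed to be L2-normalized, the per-sample weight is assumed to decrease with the KL-divergence (matching the statement that samples with smaller divergence receive higher weights), and the function name and default hyperparameter values are hypothetical.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def softmax(scores):
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def training_free_logits(f, W, f_ref, F_ref, W_ref, labels,
                         alpha=1.0, beta=5.0, gamma=1.0):
    """Combine zero-shot logits with a reweighted cache prediction.

    f, W:          full test feature and class text features.
    f_ref, F_ref:  refined test feature and refined training features.
    W_ref:         refined class text features.
    labels:        class index of each training sample.
    """
    num_classes = len(W)
    # Image-text relation: CLIP's zero-shot logits.
    r_fw = [dot(f, w) for w in W]
    # Image-image relation: similarity to each cached training sample.
    r_ff = [math.exp(-beta * (1.0 - dot(f_ref, s))) for s in F_ref]
    # Text-image relation: per-sample weight from the KL-divergence between the
    # one-hot label and CLIP's predicted distribution, which reduces to
    # -log(probability of the true class). The negative sign is an assumption.
    weights = []
    for sample, label in zip(F_ref, labels):
        probs = softmax([dot(sample, w) for w in W_ref])
        kl = -math.log(probs[label])
        weights.append(math.exp(-gamma * kl))
    # Cache prediction: weighted similarities accumulated per class,
    # equivalent to multiplying by the reweighted one-hot label matrix.
    cache = [0.0] * num_classes
    for sim, weight, label in zip(r_ff, weights, labels):
        cache[label] += sim * weight
    return [z + alpha * c for z, c in zip(r_fw, cache)]
```

Because no parameter is trained, the only tuning in this sketch is the choice of the three hyperparameters, which would typically be selected on a validation set.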

3.1.2. UWGC-T

Depending on practical needs, we may also choose the trainable version APE-T to achieve higher accuracy.

APE-T keeps the refined cache and the trilateral relations of APE while training only a few extra parameters. It freezes the cache features and the CLIP encoders, and introduces a learnable category-wise residual $\mathbf{Res} \in \mathbb{R}^{C \times Q}$ that is added element-wise to both $\mathbf{W}$ (padded to $D$ channels via $\mathrm{Pad}(\cdot)$) and the refined training features $\mathbf{F}'$ (broadcast to all $CK$ samples via $\mathrm{Expand}(\cdot)$).

In addition, the cache scores $\mathbf{R}_{F'W'}$, which in APE were computed by a KL-divergence–based rule, are reparametrized as learnable weights so that the model can autonomously reweight individual training samples.

The Image–Text Relation is updated as:

$$\mathbf{R}_{fW} = \mathbf{f}\left(\mathbf{W} + \mathrm{Pad}(\mathbf{Res})\right)^{\top} \tag{5}$$

$\mathrm{Pad}(\cdot)$ pads the residual to $D$ channels for the text branch; $\mathrm{Expand}(\cdot)$ broadcasts it to $\mathbb{R}^{CK \times Q}$ for the cache branch.

The Image–Image Relation is updated as:

$$\mathbf{R}_{f'F'} = \exp\left(-\beta\left(1 - \mathbf{f}'\left(\mathbf{F}' + \mathrm{Expand}(\mathbf{Res})\right)^{\top}\right)\right) \tag{6}$$

The final logits keep the same form as Equation (4) with the above substitutions. During training, UWGC-T learns only a few residual parameters while keeping the backbone frozen. UWGC-T achieves higher accuracy with limited training data, trading additional training overhead for performance gains.
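To make the padding and broadcasting of the category-wise residual concrete, here is a small sketch of the two shape operations. It assumes that padding fills the unselected channels with zeros and that broadcasting copies each class's residual row to every training sample of that class; the function names are hypothetical, and the residual values would be learned by gradient descent in practice rather than set by hand.

```python
def pad_residual(residual, channels, dim):
    """Place each class's residual values at the selected channel indices of a
    zero vector of length dim, so it can be added to the full text features."""
    padded = [[0.0] * dim for _ in residual]
    for out_row, res_row in zip(padded, residual):
        for index, value in zip(channels, res_row):
            out_row[index] = value
    return padded


def expand_residual(residual, labels):
    """Give every training sample the residual row of its own class, so it can
    be added to the refined training features."""
    return [list(residual[label]) for label in labels]


def add_rows(a, b):
    """Element-wise sum of two matrices stored as lists of rows."""
    return [[x + y for x, y in zip(row_a, row_b)] for row_a, row_b in zip(a, b)]
```

For example, with two classes, two selected channels out of four, and three training samples, `pad_residual` returns a 2 x 4 matrix for the text branch and `expand_residual` returns a 3 x 2 matrix for the cache branch.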

3.2. Text Prompt Enhancement Module

Although APE/APE-T can effectively fine-tune the model with a small number of samples, the default text-side input in the above methods remains a simple template sentence (e.g., “A photo of a [CLASS]”) whose only information is the class name. Such overly concise prompts are insufficient to capture the fine-grained differences among unsound wheat grains and may become a bottleneck limiting further model improvement. To address this, we draw inspiration from the ATPrompt pipeline, which enhances text prompts using attributes, and propose a text prompt enhancement module that optimizes textual representations in a training-free manner. This provides CLIP with a better textual starting point and enables the model to leverage additional semantic information when computing image–text similarity, thereby improving classification performance under few-shot settings.

This module can be regarded as a form of prior optimization for CLIP’s zero-shot capabilities: by improving textual descriptions, we aim to fully unlock CLIP’s inherent potential without modifying its backbone parameters or loss design. This approach is simple and efficient, involving improvements only at the text input level, and serves as a complement to the APE/APE-T fine-tuning strategies, together forming the complete UWGC framework. The module consists of three steps: attribute extraction, attribute search, and prompt generation.

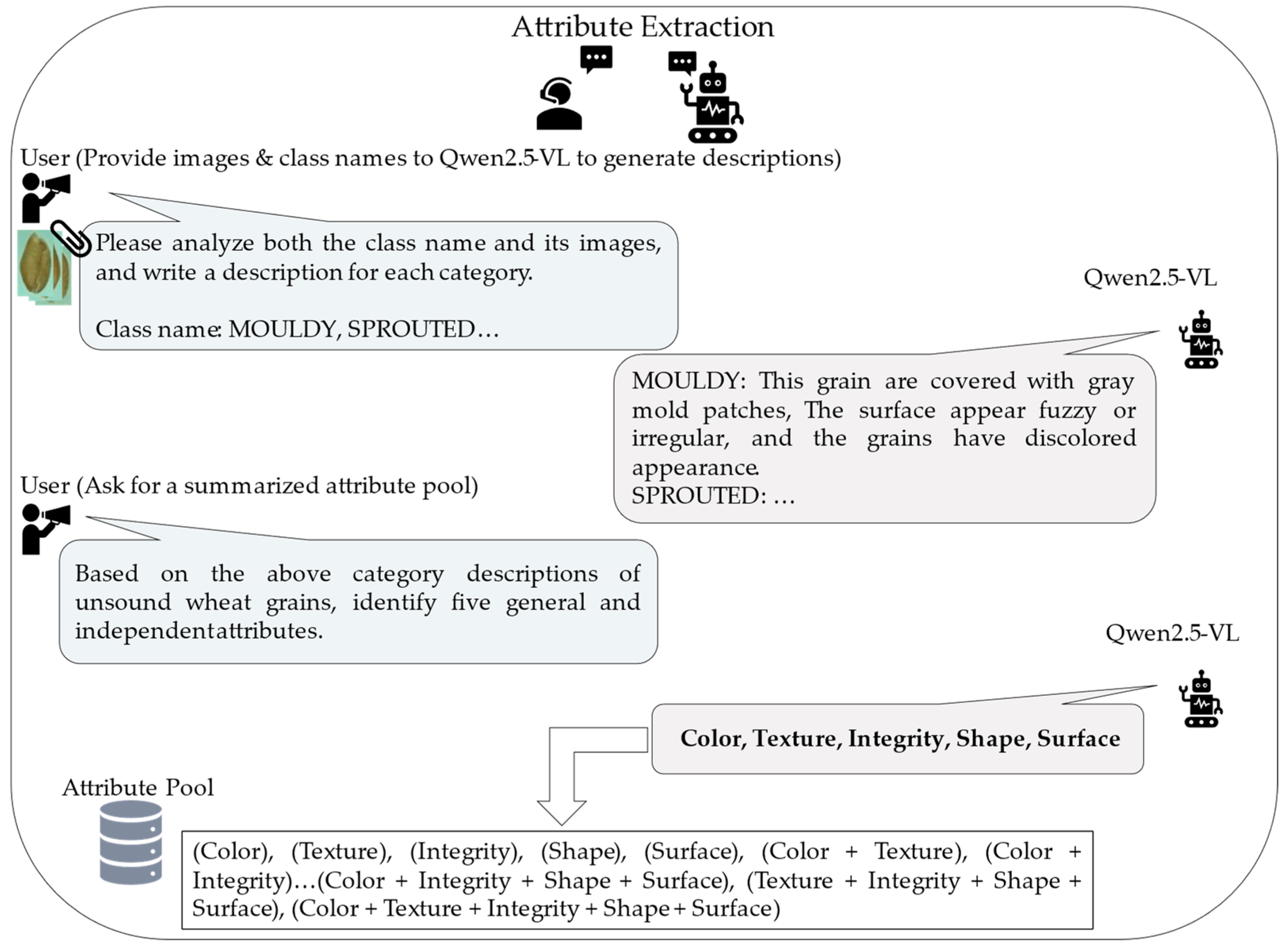

3.2.1. Attribute Extraction

The pipeline designed in ATPrompt adopts a strategy where class name information is provided to an LLM, and multiple rounds of guidance are used to generate attribute words. In the task of unsound wheat grain classification, we are concerned that generating attributes solely based on class labels may have limitations. The attributes produced by the model might rely too heavily on class priors without fully capturing the visual differences present in specific wheat grain images.

Inspired by related work [23], we adopt an attribute extraction strategy based on the multimodal model Qwen2.5-VL. Compared to single-modal generation, Qwen2.5-VL generates prompts directly based on the image content, and thus its outputs align more closely with the model’s own visual understanding [24]. This alignment significantly improves the semantic consistency between image and text, which is crucial for downstream classification. Furthermore, Qwen2.5-VL is open-source and free to use, which offers a cost-effective alternative to proprietary models. The process of Attribute Extraction is shown in Figure 2.

Specifically, for each category of unsound wheat grains, we input three representative sample images along with the corresponding class name into Qwen2.5-VL, prompting it in a dialogue-based manner to generate mutually independent unary attribute words (e.g., shape, color, material, size) that describe the characteristics of the given class. By incorporating visual information, the generated attributes better reflect the actual differences in images, something that LLMs relying solely on text cannot achieve. Since the model “sees” real images from each category while generating the attributes, the resulting attributes highlight the most distinguishing features among the categories, such as color (e.g., “black”, “dark yellow”), shape (e.g., “shriveled”, “underdeveloped”), and surface condition (e.g., “spotted”, “cracked”). These attribute words supply the detailed information that class names alone lack.

3.2.2. Attribute Search

In ATPrompt, a differentiable attribute search method is proposed to identify the most representative attribute combination from the attribute pool produced by the LLM. We incorporate this search method into our UWGC framework to determine the most appropriate attribute combinations for the unsound wheat grain classification task. The process of Attribute Search is shown in Figure 3.

Specifically, for the

attribute words obtained during the attribute extraction phase, we construct their power set, resulting in

attribute subsets, trying to find representative attributes

. Instead of a discrete search space, we learn continuous weights over all attribute subsets by using softmax:

where

represents the weight for attribute

,

represents the function of the CLIP model.

Equivalently, now the task is to jointly optimize the attribute weight

of per candidate across the pool and soft prompt tokens

, a “hat” denotes the current estimate obtained by solving the corresponding subproblem, symbols without hats are the decision variables being optimized in that step. The training loss

of training set

is minimized for obtaining the soft prompt tokens, while the validation loss

of validation set

serves the optimization process. Both losses use cross-entropy,

, between predictions and labels.

and

can be formulated as follows:

We adopt a two-step iterative scheme—optimize one set of variables while keeping the other fixed, then switch and repeat:

By introducing universal attributes, the prompt is expanded from a purely class-level form into an “attribute + class” hybrid form, resulting in more generalizable representations that allow the model to achieve more accurate alignment when recognizing unseen categories—compared to relying on class names alone.
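The idea of relaxing a discrete choice among attribute subsets into continuous softmax weights can be sketched as follows. This is only an illustration of the search space and the weighting step: the raw weights are hard-coded here, whereas in the actual search they would be learned jointly with the soft prompt tokens. The exclusion of the empty subset, the function names, and the example attributes are assumptions.

```python
import itertools
import math


def attribute_subsets(attributes):
    """Enumerate every non-empty subset of the attribute pool.

    The empty subset is skipped (an assumption), since a prompt without
    attributes reduces to the plain class-name prompt.
    """
    subsets = []
    for size in range(1, len(attributes) + 1):
        subsets.extend(itertools.combinations(attributes, size))
    return subsets


def softmax(scores):
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def select_subset(attributes, raw_weights):
    """Turn one raw weight per candidate subset into a probability and
    return the highest-weighted subset together with all probabilities."""
    subsets = attribute_subsets(attributes)
    probs = softmax(raw_weights)
    best = max(range(len(subsets)), key=lambda i: probs[i])
    return subsets[best], probs
```

Because the weights are continuous, they can be updated by gradient descent during the search, and the final combination is read off by taking the subset with the largest weight.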

Still, it should be noted that attribute search imposes relatively high computational demands. This limitation was also acknowledged in the ATPrompt work, where alternative solutions were proposed. Inspired by their approach, we likewise include this as an optional component to enhance the flexibility and user-friendliness of our framework. Specifically, when computational resources are constrained, we recommend bypassing the attribute search and then directly asking the language model to summarize two general attributes as the combination for target categories (two is the number found to be most suitable in ATPrompt experiments). This approach to obtaining attribute combinations for later stages is more lightweight, though it comes at some performance cost.

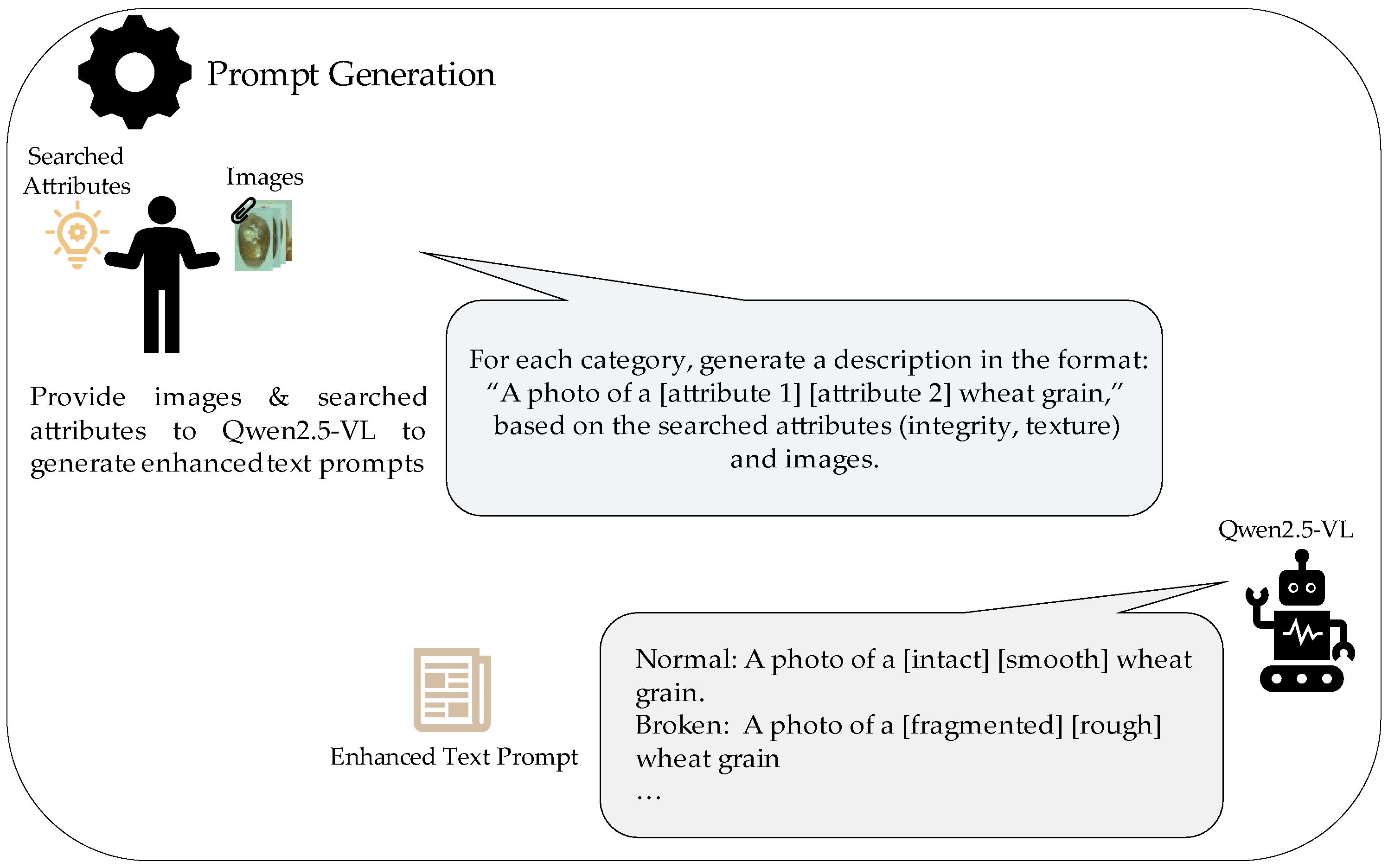

3.2.3. Prompt Generation

After obtaining the optimal attribute combination, we provide the attribute set, the original prompt template, and class-specific example images to Qwen2.5-VL. The multimodal model then generates an enhanced text prompt for each class based on the visual attributes. These enhanced prompts are fed into CLIP’s text encoder as the new textual features for each class. Compared to the original plain prompts (which contained only the class name), the attribute-enriched prompts carry richer semantic information, allowing the model to leverage more detailed cues during fine-tuning and thereby improving classification performance in the few-shot setting.

To clarify how the text prompt evolves, we decompose it into two stages and illustrate each with the wheat-grain classes Normal and Broken. We assume the attribute search identifies integrity and texture:

Baseline prompt:

We begin with a simple template that includes only the class name: “a photo of a [CLASS] wheat grain.” For example: Normal—“a photo of a [normal] wheat grain”; Broken—“a photo of a [broken] wheat grain”.

This baseline prompt provides only implicit visual information through the class name itself, which may limit the effectiveness of the subsequent fine-tuning because the model must infer all distinctions purely from the class name;

Attribute-based prompt:

Next, we incorporate searched attributes into the original prompt template: “a photo of a [integrity] [texture] wheat grain”. We supply this template along with representative images to Qwen2.5-VL, which will then generate concrete descriptors to fill in the attribute slots. For example, Qwen2.5-VL might produce Normal—“a photo of an [intact], [smooth] wheat grain”; Broken—“a photo of a [fragmented], [rough] wheat grain”.

Such attribute-based prompts offer far richer semantic context to the model, which is highly beneficial for the few-shot fine-tuning stage.

The prompt-generation process is also shown in Figure 4.
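The two prompt stages described above can be illustrated with a short sketch. The descriptor lists are hard-coded stand-ins for what Qwen2.5-VL might return, and the helper names are hypothetical; the sketch only shows how the baseline template and the attribute-based template differ.

```python
def baseline_prompt(class_name):
    """Baseline stage: the template carries only the class name."""
    return f"a photo of a {class_name} wheat grain"


def attribute_prompt(descriptors):
    """Attribute-based stage: the attribute slots are filled with concrete
    descriptors, one per searched attribute (e.g., integrity, texture)."""
    phrase = ", ".join(descriptors)
    # Pick the article that matches the first descriptor.
    article = "an" if phrase[0].lower() in "aeiou" else "a"
    return f"a photo of {article} {phrase} wheat grain"


# Hypothetical descriptors standing in for the multimodal model's output.
example_descriptors = {
    "normal": ["intact", "smooth"],
    "broken": ["fragmented", "rough"],
}
enhanced_prompts = {name: attribute_prompt(words)
                    for name, words in example_descriptors.items()}
```

The resulting strings would then be passed to CLIP's text encoder in place of the baseline prompts.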

4. Experiments

To evaluate the UWGC framework, we conducted a comprehensive set of experiments.

We compared different CLIP-based backbone models (VLM encoders) within the UWGC framework;

We tested UWGC against traditional vision-only models and other few-shot learning methods under various few-shot settings;

We performed ablation studies to measure the impact of our two key enhancements (text prompt enhancement using ATPrompt, and attribute extraction and prompt generation using Qwen2.5-VL).

Together, these experiments validate the effectiveness and robustness of UWGC and demonstrate the contribution of each component in our framework.

4.1. Dataset

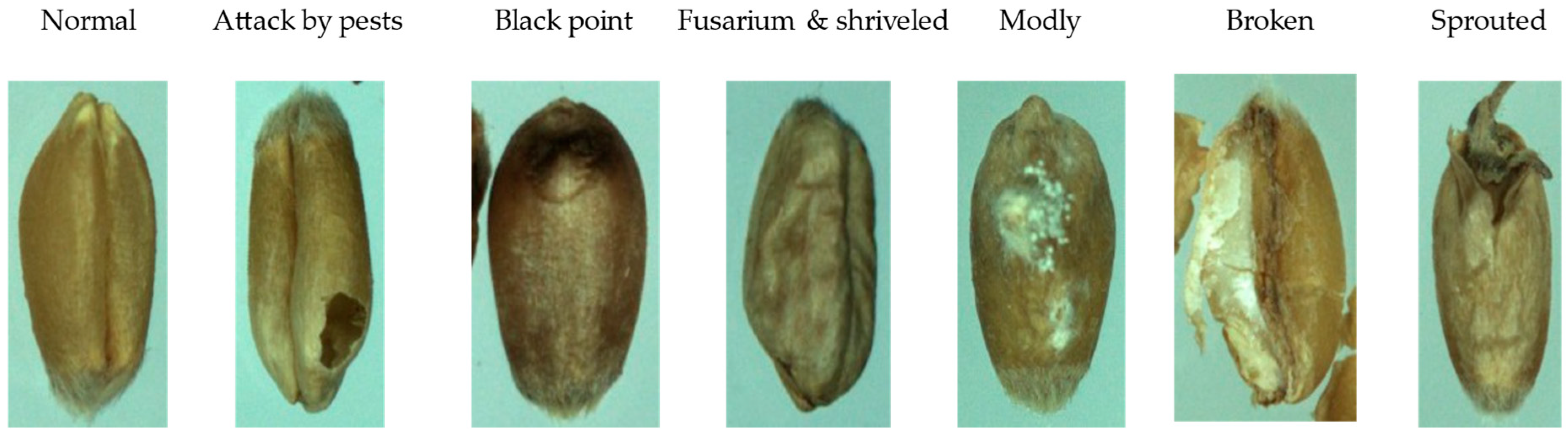

The dataset used in this study is the GrainSpace dataset [25], the first publicly available large-scale visual dataset specifically designed for grain appearance inspection. Constructed over four years by the University of New South Wales in collaboration with multiple industrial partners, GrainSpace covers three major cereal types (wheat, corn, and rice) with a total of approximately 5.25 million images, among which over 4 million are wheat samples. The original grain specimens were collected from more than 30 regions across five countries, ensuring high geographic diversity and representativeness.

Compared to prior datasets, which are typically limited in size, proprietary in access, or constrained to specific imaging devices, GrainSpace exhibits several notable advantages. First, wheat grains are categorized into seven classes, including normal grains (NOR) and six types of unsound grains: attacked by pests (AP), broken (BN), black point (BP), fusarium & shriveled (FS), moldy (MY), and sprouted (SD), with all labeled samples manually sorted and annotated at the individual kernel level. Each sub-image in Figure 5 corresponds to one category. Second, the dataset includes image subsets captured using diverse imaging devices, naturally introducing domain variations, making it particularly suitable for studies in few-shot learning, domain adaptation, and out-of-distribution recognition. Finally, the image acquisition process adheres strictly to the ISO 24333 grain sampling standard, employing both mechanical and semi-automated imaging setups to closely simulate real-world inspection environments.

Figure 5 presents representative images for each of the seven wheat grain categories: one normal grain (NOR) and six types of unsound grains (AP, BN, BP, FS, MY, SD).

In summary, GrainSpace offers a well-structured, diverse, and practically relevant platform for research. It not only supports our focus on unsound wheat grain classification under few-shot settings but also facilitates broader investigations into prompt learning, cross-domain adaptation, and multimodal representation learning for intelligent agricultural inspection.

4.2. Implementation Details

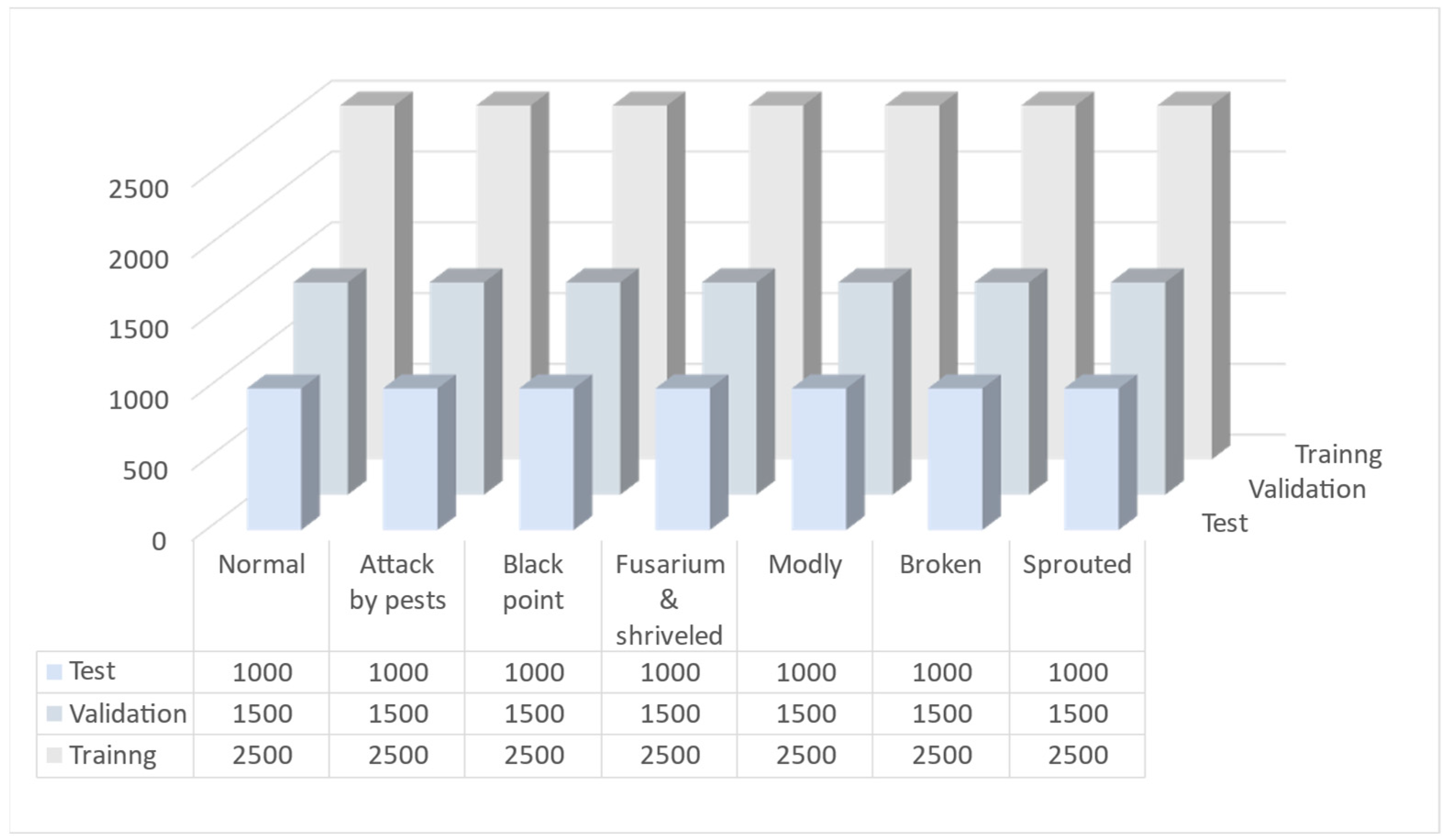

We randomly select 5000 images from each class in the GrainSpace dataset, resulting in a balanced dataset of 35,000 wheat grain images. These are then split into training, validation, and test sets with a ratio of 5:3:2. The validation set is used for model tuning and hyperparameter selection, while the test set is reserved for final performance evaluation.

Figure 6 illustrates the distribution of the selected dataset, showing an equal number of wheat grain images per class and their split into training, validation, and test sets for all seven categories.

For the few-shot classification experiments, we randomly sample K training samples per class from the training set. Model training is performed on the sampled subset, and evaluation is conducted on the full validation and test sets to simulate performance under limited supervision.
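The data preparation described above can be sketched as follows. This is an illustrative outline only: it assumes the 5:3:2 split is applied within each class (which keeps all three sets balanced), uses placeholder image identifiers instead of real GrainSpace files, and the function names and random seed are hypothetical.

```python
import random

CLASSES = ["NOR", "AP", "BN", "BP", "FS", "MY", "SD"]


def split_class(items, ratios=(5, 3, 2), rng=None):
    """Shuffle one class and split it into train/val/test by the given ratio."""
    if rng is None:
        rng = random.Random(0)
    items = list(items)
    rng.shuffle(items)
    total = sum(ratios)
    n_train = len(items) * ratios[0] // total
    n_val = len(items) * ratios[1] // total
    return items[:n_train], items[n_train:n_train + n_val], items[n_train + n_val:]


def sample_k_shot(train_by_class, k, rng=None):
    """Draw k training images per class without replacement."""
    if rng is None:
        rng = random.Random(0)
    return {c: rng.sample(imgs, k) for c, imgs in train_by_class.items()}


rng = random.Random(42)  # assumed seed, for reproducibility of the sketch

# Placeholder IDs stand in for the 5000 images randomly selected per class.
pool = {c: [f"{c}_{i:04d}" for i in range(5000)] for c in CLASSES}

splits = {c: split_class(imgs, rng=rng) for c, imgs in pool.items()}
train = {c: s[0] for c, s in splits.items()}

# Example: a 16-shot training subset; validation and test sets stay complete.
few_shot = sample_k_shot(train, k=16, rng=rng)

print({c: tuple(len(part) for part in s) for c, s in splits.items()})
```

With 5000 images per class, a per-class 5:3:2 split yields 2500 training, 1500 validation, and 1000 test images for each category.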

We adopt ViT-B/16 as the visual encoder for CLIP and initialize both the visual and text encoders with pretrained weights. During training, we freeze both encoders.

Image preprocessing follows the standard CLIP pipeline, including random cropping, resizing, and horizontal flipping. We use the AdamW optimizer with an initial learning rate of 0.001 and a cosine annealing learning rate scheduler, a batch size of 64, and 30 training epochs. Parameters for the PR module follow the optimal settings reported in the APE baseline.
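For reference, the learning-rate curve implied by these settings can be sketched in closed form. The snippet assumes the scheduler is stepped once per epoch and decays to a minimum learning rate of zero; neither detail is stated in the text, and the function name is illustrative.

```python
import math


def cosine_annealing_lr(epoch, base_lr=1e-3, total_epochs=30, min_lr=0.0):
    """Closed-form cosine annealing: base_lr at epoch 0, min_lr at total_epochs."""
    cos_term = 1.0 + math.cos(math.pi * epoch / total_epochs)
    return min_lr + 0.5 * (base_lr - min_lr) * cos_term


# Learning rate at the start of each epoch, plus the final value after epoch 30.
schedule = [cosine_annealing_lr(e) for e in range(31)]

for e in (0, 10, 15, 20, 30):
    print(f"epoch {e:2d}: lr = {schedule[e]:.6f}")
```

Under these assumptions the learning rate falls from 0.001 to half that value at the midpoint (epoch 15) and approaches zero at the end of training.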

In all experiments, we compute the class logits using Equation (4), which combines the image–text term from Equation (1), the image–image term from Equation (2), and the text–image term from Equation (3). For APE-T, we replace the image–text term in Equation (1) with Equation (5) and the image–image term in Equation (2) with Equation (6), while retaining the overall logit formulation of Equation (4). All hyperparameters associated with these equations—e.g., the smoothing scalars in Equations (2) and (3) and the balancing coefficient in Equation (4)—are tuned once on the validation set and kept fixed across all comparisons to ensure fairness. In addition, the attribute combination is selected on the validation set via an attribute search based on Equations (7)–(11) and is held fixed for all experiments to ensure a fair comparison.

All experiments are implemented in PyTorch 2.7.0 and conducted on an RTX 5080 GPU.

4.3. Model Backbones Comparison

In this section, we evaluate two major categories of CLIP image encoders, ViTs and CNNs, to determine the most suitable backbone type for our UWGC framework under few-shot learning conditions. To ensure fairness, we select two representative models with comparable parameter sizes and FLOPs: ViT-B/16 for the ViT-based category, and ConvNeXt-B for the CNN-based category. Detailed model statistics are listed in Table 2.

As shown in Table 2, the two selected backbone models (ViT-B/16 and ConvNeXt-B) are closely matched in terms of input image size (224 × 224), number of parameters (around 150 million each), and computational cost (approximately 37–41 billion FLOPs). This parity ensures a fair comparison of backbone architectures without conflating model capacity differences. We integrate each model into UWGC-F and UWGC-T variants, training and evaluating under the same conditions. The classification results are reported in Table 3.

Overall, ViT-based backbones significantly outperform their CNN counterparts under comparable parameter settings, indicating a superior capacity for few-shot vision-language modeling. As the number of training samples increases, the ViT can better leverage these samples for generalization, whereas the performance improvement of the CNN is relatively limited. The difference between ViT and ConvNeXt is minimal in the 1-shot case, likely because with only one example per class, neither model can leverage its full capacity, so both achieve only modest accuracy. By the 16-shot setting, ViT-B/16 achieves about 88% (in UWGC-T) vs. 82% for ConvNeXt-B, clearly demonstrating ViT’s superior few-shot learning capability when more samples are available.

This observation is consistent with prior findings that ViTs tend to be more effective image encoders for CLIP [2]. Based on these findings, we recommend using ViT-based image encoders within the UWGC framework whenever hardware conditions permit, as they consistently yield stronger performance.

4.4. Performance Evaluation

To comprehensively evaluate the effectiveness of our proposed UWGC framework in few-shot classification of unsound wheat grains, we compare it against several widely used baselines. For clarity, both UWGC-F and UWGC-T belong to the Baseline++ family (cosine-classifier transfer). Accordingly, our comparisons include representative Baseline++ methods (e.g., CoOp/CoCoOp, Tip-Adapter, APE) alongside strong unimodal baselines, while excluding episodic meta-learning methods, which are orthogonal to our design. For the single-modal setting, we ensure fairness by selecting models whose parameter counts are at least comparable to, if not larger than, the ViT-B/16 backbone used in our method. We select ConvNeXt-Large (198 M parameters) [26] and FocalNet-Large (197.1 M parameters) [27] as high-performing CNN representatives and ResNet-26 + ViT-Small/32 (170 M parameters) [8] as a hybrid ViT representative. Their sizes exceed that of ViT-B/16 by 32.34%, 31.73% and 15.91%, respectively, thereby avoiding any parameter-level bias in favor of vision-language architectures. In addition, we include four prominent fine-tuning methods for VLMs: CoOp [28], Tip-Adapter [29], CoCoOp [30], and our baseline method APE [18].

All models are trained and evaluated under consistent settings, using the same training, validation, and test sets. We evaluate each method under few-shot settings with K samples per class. The classification accuracies are reported in Table 4 (with a corresponding accuracy plot shown in Figure 7).

The results indicate that unimodal visual models such as ConvNeXt-Large and FocalNet-Large achieve only around 17% accuracy in the 1-shot scenario, barely above random guessing (≈14.3% for 7 classes). Even at 16 shots, their accuracies reach only around 55–57%, far below those of multimodal methods (which mostly exceed 70%). Even the weakest vision-language method in the training-free group (Tip-Adapter) more than doubles the 1-shot accuracy of the best CNN (about 35% vs. 17%). At 16 shots, most multimodal methods achieve between 70% and 87% accuracy, whereas the CNN-based models plateau at or below 57%. This stark contrast underscores how difficult it is for traditional vision-only models to learn effective representations from limited data, and how important cross-modal information is for few-shot learning.

Notably, our proposed methods UWGC-F and UWGC-T achieve the top accuracy in every few-shot scenario. For example, in the 16-shot case, UWGC-T reaches 88.14%, which is 1.37 percentage points higher than the next best method (CoCoOp, 86.77%). Across all tested shot counts, compared to the APE and APE-T baselines, UWGC-F and UWGC-T improve accuracy by averages of 1.22% and 2.48%, respectively. These consistent gains indicate that the introduced prompt enhancement module provides clear benefits over using APE or APE-T alone.

In summary, these results reveal that mainstream single-modal approaches (even very large CNNs or ViTs) struggle in few-shot grain classification, underscoring the necessity of incorporating multimodal knowledge. By contrast, the UWGC framework excels under data-scarce conditions, demonstrating a promising direction for tackling the long-standing challenge of limited annotations in agricultural vision tasks.

4.5. Ablation Studies

To validate the effectiveness of the two key innovations introduced by our UWGC framework on top of the APE baseline, we conducted a series of ablation studies. Each experiment isolates or replaces a specific modification to assess its individual contribution to overall classification performance. Through these controlled studies, we aim to uncover causal relationships between components and offer insights for future improvements.

4.5.1. Text Prompt Enhancement Based on ATPrompt

This ablation study investigates how integrating the ATPrompt-based text prompt enhancement influences UWGC’s performance compared to the baseline APE alone. To isolate the impact of ATPrompt itself, we follow the original ATPrompt design and use the unimodal LLM Qwen2.5 to generate attributes and prompts. The results are reported in Table 5.

As shown in Table 5, incorporating the ATPrompt-based prompt enhancement consistently improves accuracy over the baseline fine-tuning methods (APE and APE-T) across all evaluated shot counts. When data is extremely limited (e.g., in the 1-shot setting), ATPrompt-based prompts provide limited benefit to pure APE, possibly because a single sample fails to capture the semantic meaning of attributes. As the number of shots increases, the contribution of attribute-based prompts becomes more evident. For instance, at 8-shot, APE + ATPrompt achieves approximately 0.96% higher accuracy than APE, and at 16-shot it leads by ~1.1%. Similarly, adding ATPrompt to APE-T yields substantial gains (e.g., 2.71% at 8-shot, reaching 80.76% vs. 78.05%). Notably, the performance boost from ATPrompt is more pronounced for the trainable variant (APE-T), which gains up to approximately 2–3% (e.g., 87.94% vs. 86.48% at 16-shot), indicating that when the model is trainable, it can better utilize the richer textual information. These results confirm that APE’s limitation on the text side (using only basic class-name prompts) can hinder fine-tuning effectiveness. By introducing ATPrompt’s attribute extraction and search mechanisms, the textual input becomes more informative, which in turn guides the model to better distinguish fine-grained classes. This is why the combined APE + ATPrompt approach outperforms APE alone, especially once a few examples are available to reveal useful attributes.

4.5.2. Attribute Search and Prompt Generation Based on Qwen2.5-VL

To validate the effectiveness of replacing the original unimodal-LLM-based attribute extraction and text prompt generation in ATPrompt with a multimodal model, we conducted an ablation study by replacing Qwen2.5-VL with the unimodal Qwen2.5 in both UWGC-F and UWGC-T. This comparison allows us to assess the impact of our proposed modification and verify its positive contribution to the overall framework.

Table 6 shows that replacing the unimodal prompt generator (Qwen2.5) with the multimodal Qwen2.5-VL leads to higher accuracies in both UWGC-F and UWGC-T across all few-shot settings. The performance gains from using Qwen2.5-VL are evident but moderate: on average around 0.5% to 1.0% for UWGC-F, and a similar range for UWGC-T. For instance, UWGC-T (multimodal) reaches 81.75% at 8-shot compared to 80.76% with the unimodal generator, and 88.14% vs. 87.94% at 16-shot. However, the advantage of the multimodal prompt diminishes as the shot count grows (the gap is only about 0.2% at 16-shot for UWGC-T), likely because with more training examples, the model learns sufficient visual features, so the added value of extra textual context diminishes.
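To make the two ablation steps easier to compare, the short sketch below recomputes the stepwise gains for the trainable variant from the accuracies quoted in Sections 4.5.1 and 4.5.2. Only the 8-shot and 16-shot values stated in the text are used; the dictionary layout and function name are illustrative, and the gains are absolute differences in percentage points.

```python
# Accuracies (%) quoted in the text for the trainable variant.
accuracy = {
    8: {
        "APE-T": 78.05,
        "APE-T + ATPrompt (Qwen2.5)": 80.76,
        "UWGC-T (Qwen2.5-VL)": 81.75,
    },
    16: {
        "APE-T": 86.48,
        "APE-T + ATPrompt (Qwen2.5)": 87.94,
        "UWGC-T (Qwen2.5-VL)": 88.14,
    },
}


def stepwise_gains(row):
    """Absolute gain (percentage points) contributed by each successive component."""
    values = list(row.values())
    return [round(b - a, 2) for a, b in zip(values, values[1:])]


gains = {shots: stepwise_gains(row) for shots, row in accuracy.items()}

for shots, (atprompt_gain, multimodal_gain) in gains.items():
    print(f"{shots}-shot: ATPrompt +{atprompt_gain:.2f}, Qwen2.5-VL +{multimodal_gain:.2f}")
```

In both settings the ATPrompt step accounts for the larger share of the improvement, while the multimodal generator adds a smaller increment that shrinks from 8-shot to 16-shot.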

The consistent, albeit modest, outperformance of Qwen2.5-VL underscores the value of incorporating visual context into prompt generation. The unimodal Qwen2.5 model relies only on class names, without ‘seeing’ the grain images. This limitation can lead to vague or misaligned attribute suggestions. In contrast, Qwen2.5-VL processes the actual image and thus produces attributes more pertinent to each grain’s appearance, resulting in attributes and prompts that better align with the visual content. This alignment likely makes the subsequent image classification more effective, explaining the performance gap in favor of the multimodal approach.

Thus, this ablation confirms that leveraging a multimodal model for attribute extraction and prompt generation is an effective design choice in UWGC, further validating our framework’s emphasis on cross-modal synergy.

5. Discussion

This study proposes UWGC, a novel vision-language framework tailored for few-shot unsound wheat grain classification. By integrating an APE-based fine-tuning module with a text prompt enhancement module, UWGC effectively leverages cross-modal prior knowledge to tackle fine-grained agricultural image recognition under data-scarce conditions. We developed two variants, UWGC-F (training-free, using APE) and UWGC-T (training-required, using APE-T), to accommodate different deployment scenarios. This dual-module design (combining efficient visual fine-tuning with enriched textual prompts) is, to the best of our knowledge, the first of its kind applied to unsound grain classification, filling a significant gap in the application of pre-trained VLMs to this problem domain. UWGC’s two modules communicate solely via prompt text embeddings. The Text Enhancement module generates improved prompts, while the Fine-Tuning module handles adaptation. Because the modules are coupled only at the data level, either one can be modified or replaced independently, keeping UWGC highly modular and lightweight.

Extensive experiments verify the effectiveness and robustness of the UWGC framework. Under stringent few-shot settings (ranging from 1-shot to 16-shot), UWGC consistently achieves the highest accuracy compared to all baseline methods. These results highlight the value of harnessing cross-modal, pre-trained knowledge in data-scarce scenarios. Notably, even when compared to much larger single-modal models (such as ConvNeXt-L or FocalNet-L with substantially more parameters), UWGC, built on the CLIP backbone, demonstrates superior generalization ability. This underscores that transferring knowledge from image–text pre-training yields greater benefits than simply scaling up unimodal models on this task. Furthermore, UWGC outperforms other state-of-the-art fine-tuning approaches in every few-shot case. This superior performance stems from our dual-optimization strategy. By coupling APE (vision side) with ATPrompt-based prompt enrichment (text side), UWGC absorbs the limited labeled information far more effectively, thereby significantly improving fine-grained classification performance under severe data constraints. These findings are significant for smart agriculture, as they demonstrate a practical solution to the longstanding challenge of insufficient labeled data, namely leveraging multimodal knowledge to compensate for data scarcity. Our results suggest that VLMs can unlock new levels of accuracy in agricultural inspection tasks that were previously unattainable with conventional methods.

Compared to traditional vision-only methods, our UWGC framework provides richer semantic representations by capitalizing on the alignment between images and textual attributes. This is evidenced by the stark performance gap we observed between UWGC and the purely visual models (e.g., the CNN and ViT baselines) in few-shot trials. Unlike those unimodal models, which struggle to discern fine-grained differences with limited data, UWGC benefits from semantic context and prior knowledge. This results in far more discriminative features. In addition, compared to prior vision-language fine-tuning methods that focus on only one modality, UWGC’s dual-focus approach yields clear advantages. For instance, prompt-learning schemes like CoOp and CoCoOp adjust only the text embeddings (learning soft prompt vectors) and still depend on simple class-name prompts without visual context. On the other hand, adapter-based methods like Tip-Adapter and the original APE primarily enhance the visual side, leaving the text guidance underexploited. In contrast, UWGC combines the strengths of both. It preserves CLIP’s powerful visual recognition prior through APE/APE-T, while concurrently enhancing the descriptive richness of text input via attribute-based prompting. Because we improve both modalities in tandem, UWGC is able to outperform methods that treat only one side of the problem (as consistently borne out by our results across all shot counts). It is worth noting that our approach aligns with emerging research trends that employ large multimodal models to improve prompt quality [23]. By incorporating image-informed attributes from Qwen2.5-VL into CLIP’s prompts, we further improved classification accuracy, illustrating one practical way to fuse generative multimodal models with discriminative VLMs for enhanced performance.

Still, UWGC has some limitations that warrant discussion. Identifying these issues provides direction for future research:

Multimodal model overhead: Incorporating the multimodal model Qwen2.5-VL for attribute extraction introduces additional computational overhead. Running a large multimodal model is resource-intensive, which could slow down the inference process and may not be feasible in real-time or resource-constrained environments (e.g., embedded systems on farming equipment). While the multimodal prompts improve accuracy, this comes at the cost of extra processing time. This trade-off between performance and efficiency needs to be considered for practical deployments, and it suggests a need for optimizing or distilling the prompt generation process in future work;

Scope of evaluation: To date, we have only evaluated UWGC on wheat grain data (GrainSpace). It is uncertain how well the framework would perform on other grain types or agricultural products (for example, unsound corn or rice kernels). Moreover, our experiments assumed a closed-set scenario where all classes were known in advance. We have not yet tested UWGC in open-set conditions where new classes might emerge over time. Adapting the framework to handle a growing set of classes—or entirely new classes in a zero-shot setting—is a promising direction for future work. Successfully doing so would demonstrate UWGC’s versatility, though it might require additional mechanisms such as novelty detection or continual learning.

In summary, acknowledging these limitations provides a balanced view of UWGC. While the framework substantially advances the state of few-shot grain classification, addressing the above issues in future work will be important. By doing so, UWGC can be made more general, efficient, and robust. Overall, discussing both the advantages and limitations gives readers a nuanced understanding of where our contributions shine and where further work is needed. This balanced perspective is essential in rigorous academic reporting.

6. Conclusions

In this paper, we addressed the challenge of few-shot unsound wheat grain classification by introducing an innovative vision-language framework, UWGC. Our approach combines the strengths of pre-trained visual and textual models. It consists of a fine-tuning module (APE or APE-T) for efficient adaptation, and an attribute-driven prompt enhancement module (integrating ATPrompt with the multimodal model Qwen2.5-VL) to enrich the text input. Through experiments on a large-scale public grain dataset, we demonstrated that UWGC significantly outperforms traditional vision-only methods as well as existing vision-language fine-tuning techniques across all tested few-shot scenarios. UWGC-F achieved 72.93% accuracy and UWGC-T reached 88.14% accuracy. Relative to the APE and APE-T baselines, UWGC-F and UWGC-T improved accuracy by averages of 1.22% and 2.48%, respectively, validating the effectiveness of coupling visual and textual information for this task. These results also confirm that incorporating cross-modal knowledge is a powerful strategy in data-scarce agricultural settings. Looking ahead, there are several promising directions to extend and improve this work:

The UWGC framework could be applied to other crops or food quality inspection tasks to evaluate its generality beyond wheat grains (for example, identifying unsound corn kernels or detecting defects in fruits). Such experiments would strengthen confidence that our vision-language strategy works broadly and hence increase its practical impact.

While our use of a multimodal model for prompt generation proved effective in boosting accuracy, it also added noticeable computational latency. Future research could explore more efficient or distilled multimodal models, or alternative prompt optimization techniques, to reduce inference time and make the system more feasible for real-time use on edge devices.

Another important avenue is to adapt UWGC (and similar vision-language models) to even more challenging scenarios such as open-set recognition or continuously expanding class pools. In real-world grain storage or processing, new types of imperfections might appear over time; hence, enabling the model to handle novel or evolving classes with minimal human intervention, perhaps by leveraging zero-shot capacities or continual learning, would be invaluable.

Addressing these issues would further extend UWGC’s applicability and impact in real-world settings. We anticipate that as multimodal AI advances, the core ideas presented here will be further developed. Such developments could play a significant role in the broader evolution of smart agriculture and food quality assurance.