Unlocking the Potential of the Prompt Engineering Paradigm in Software Engineering: A Systematic Literature Review

Abstract

1. Introduction

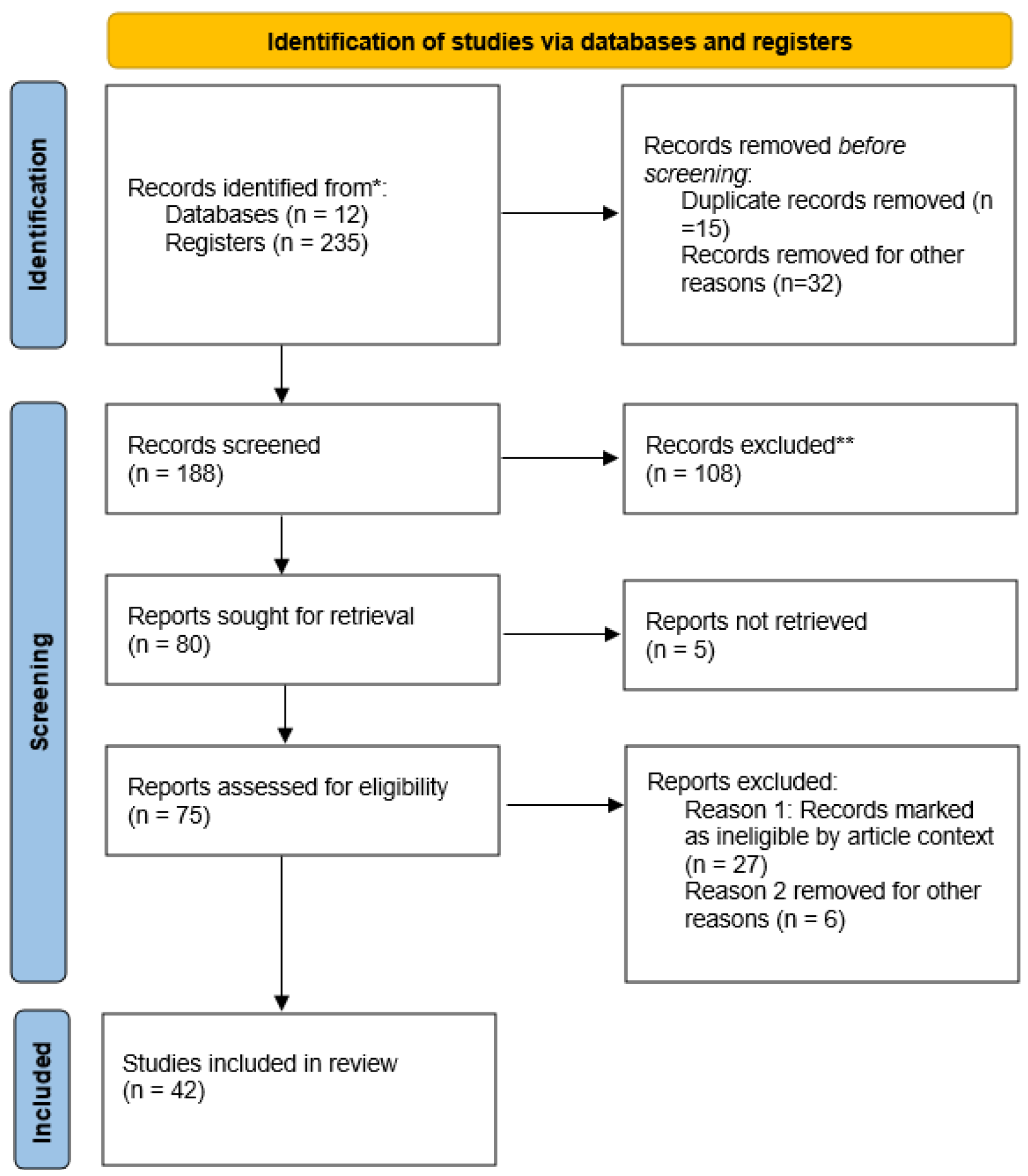

2. Materials and Methods

3. Results

3.1. Key Challenge Identified

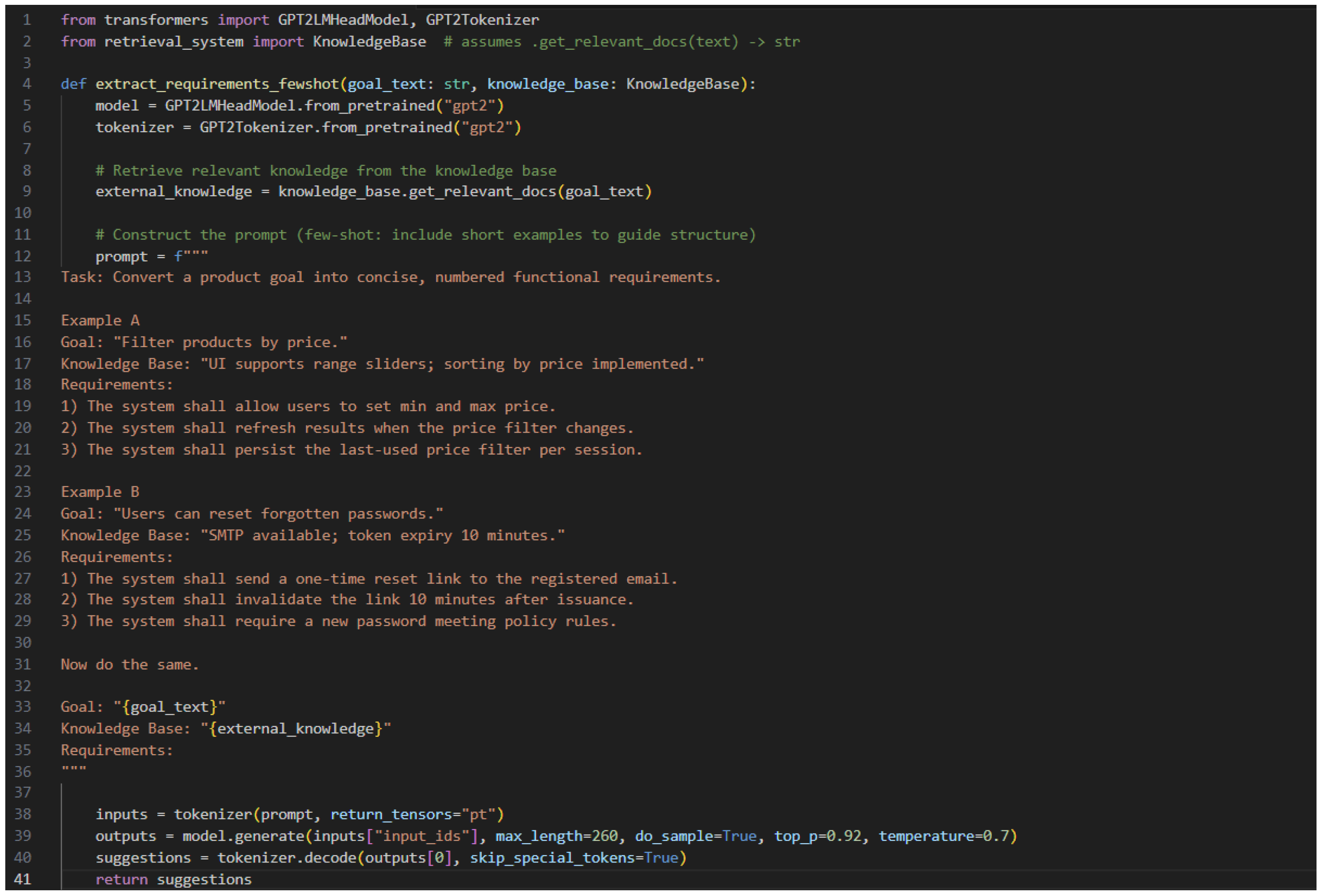

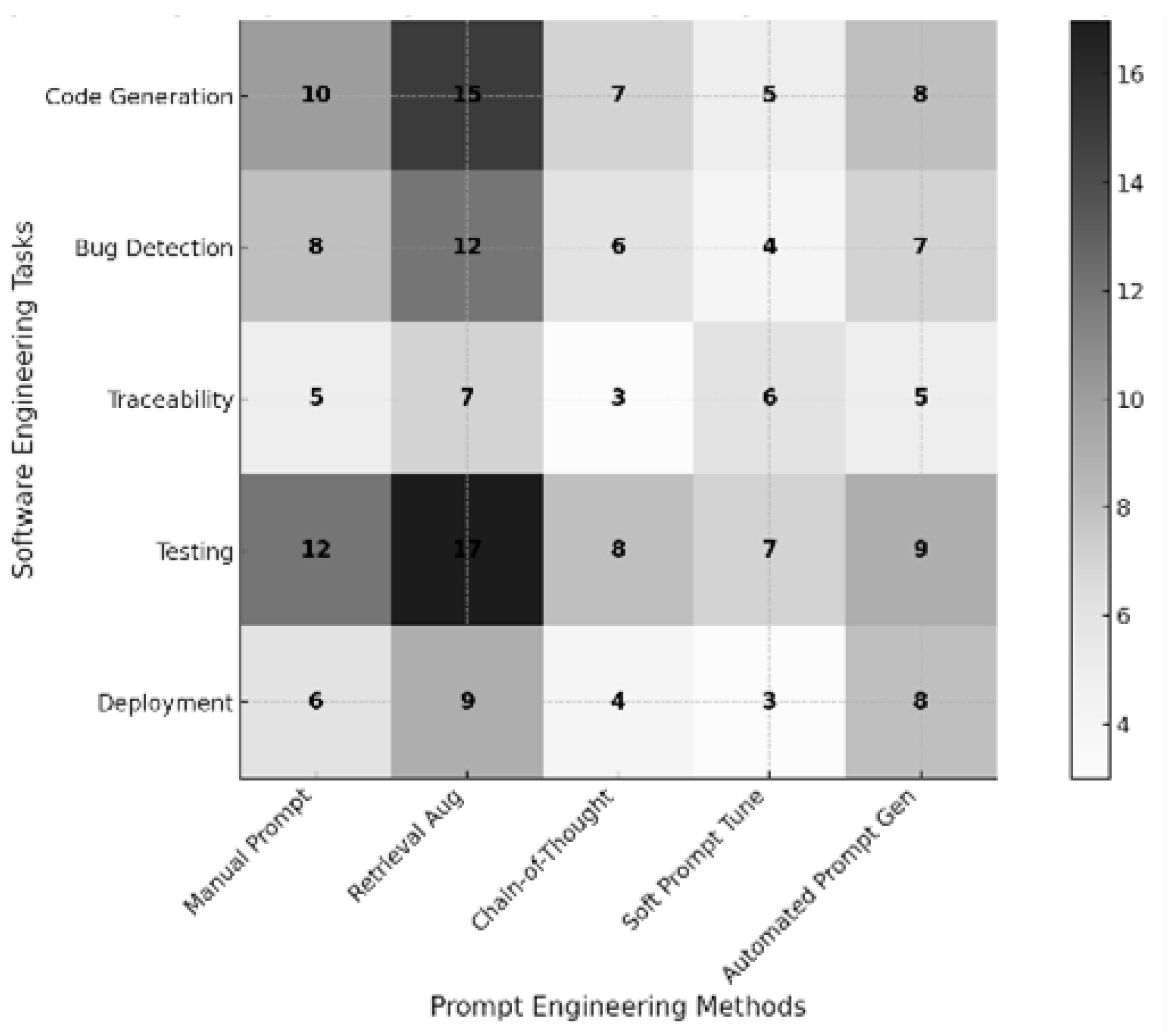

3.2. Prompt Engineering Methods

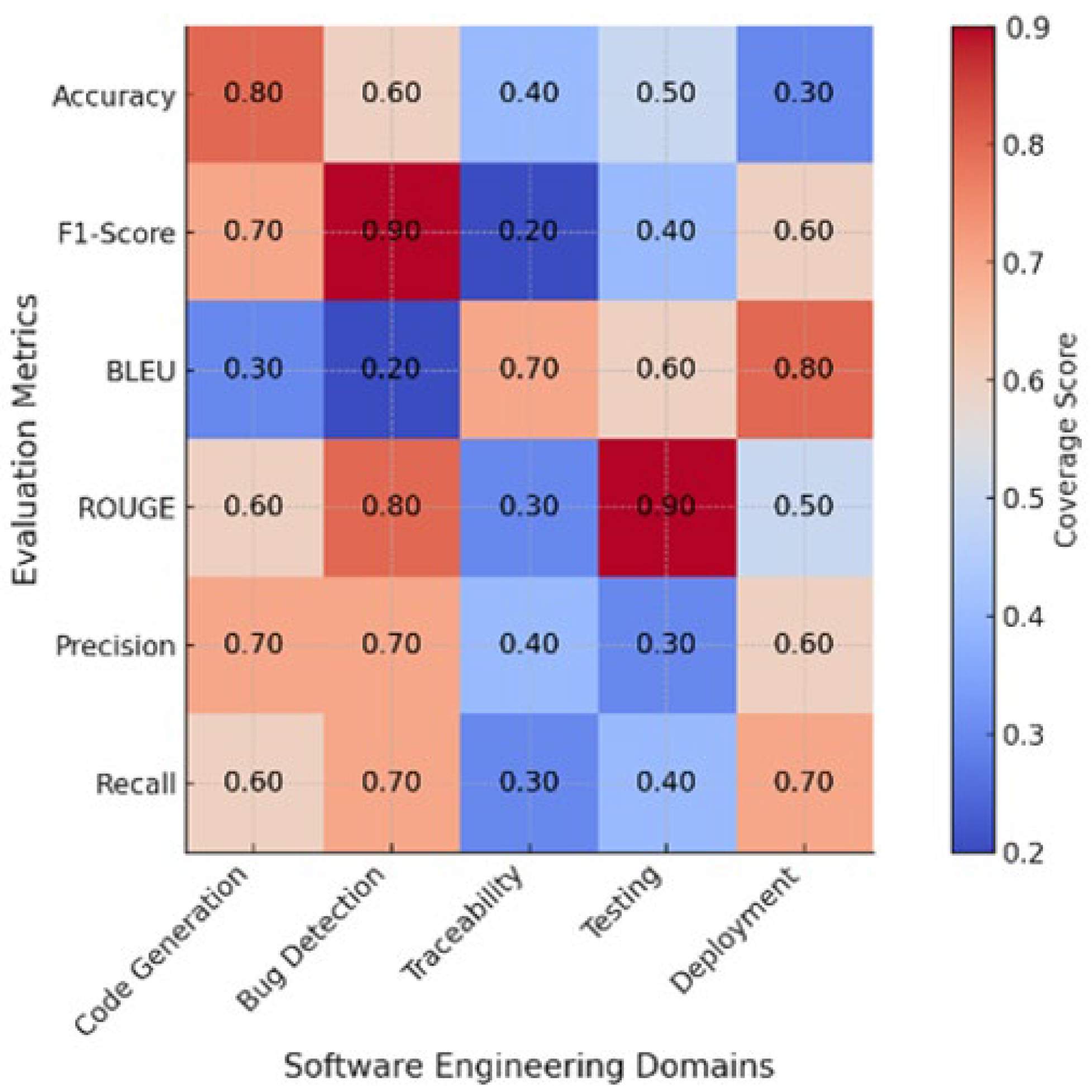

3.3. Evaluation Metrics

3.4. Summary of Findings

4. Discussion

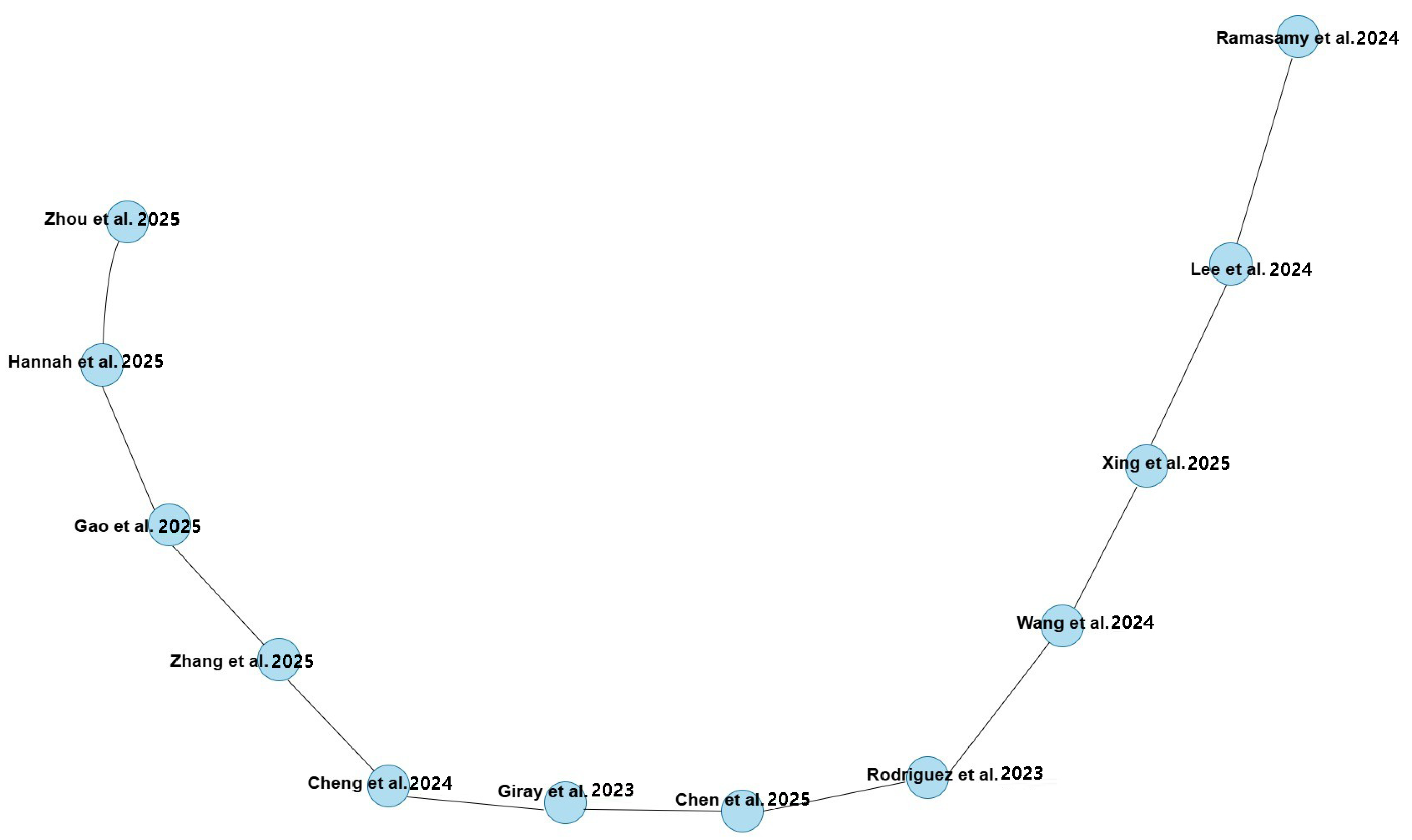

4.1. Author Collaboration and Influential Publications

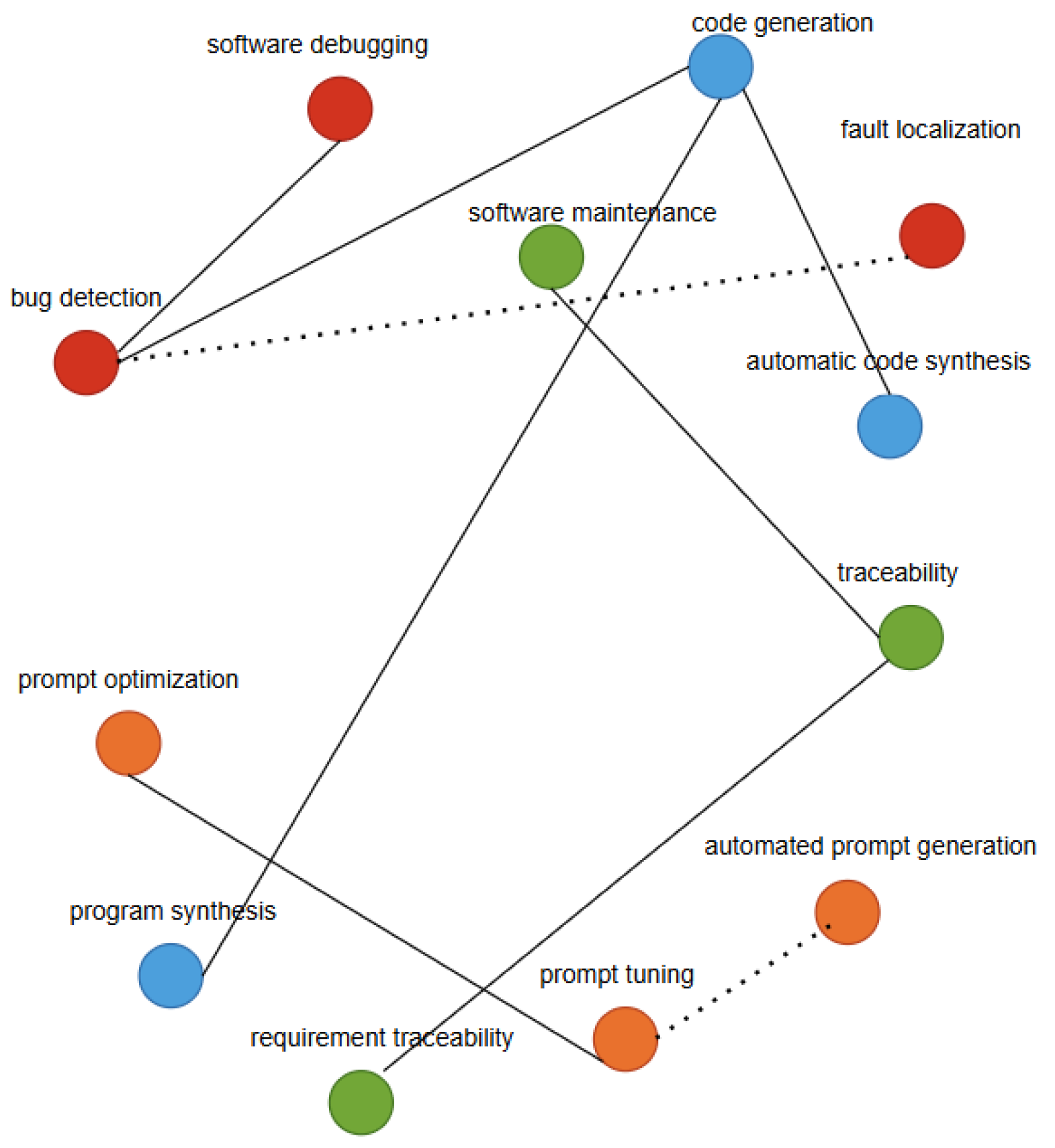

4.2. Thematic Clusters and Research Focus

4.3. Key Challenges and Severity Analysis

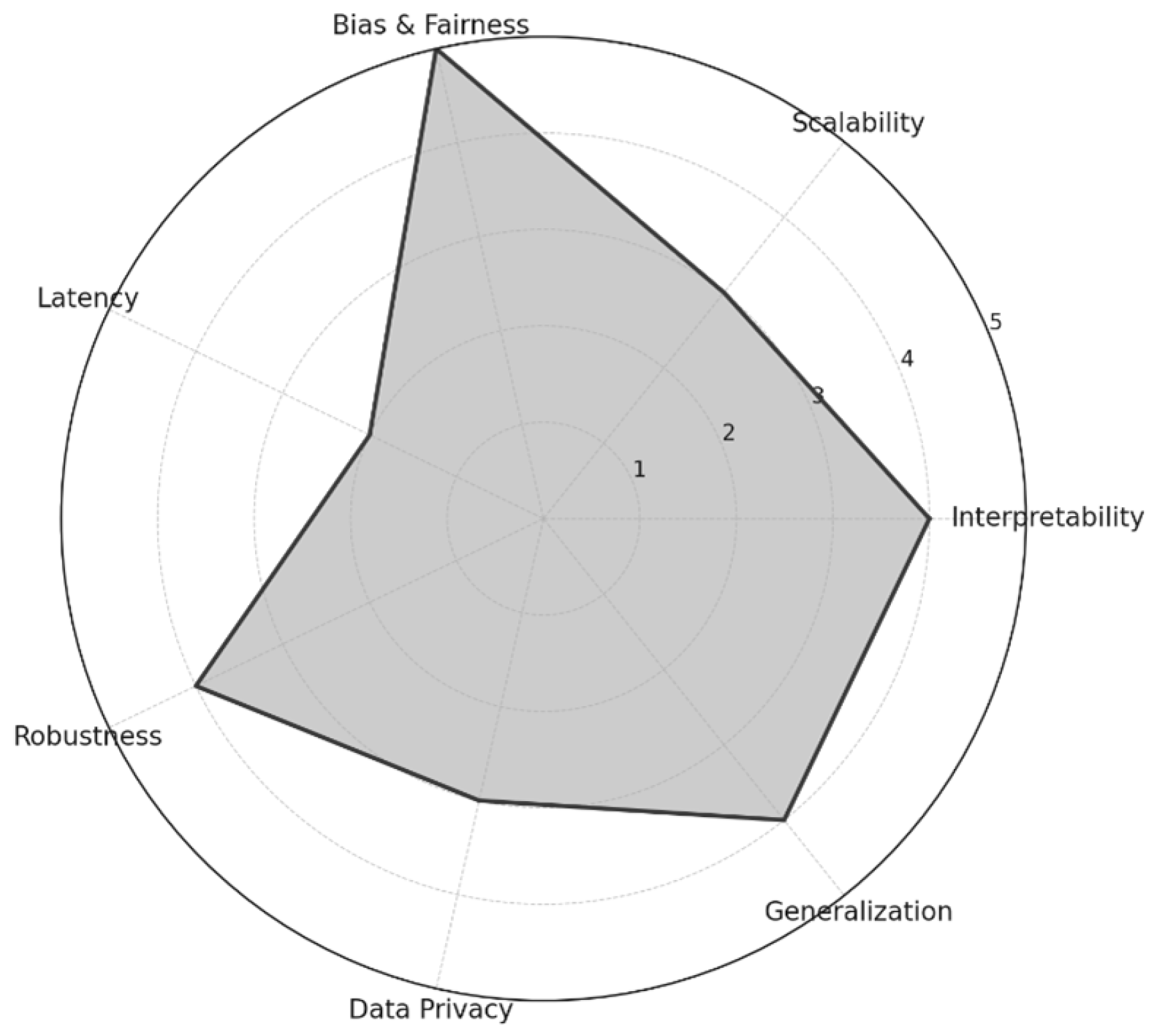

4.4. Severity of Key Challenges in Prompt Engineering

4.5. Performance Comparison Between Prompt Engineering and Fine-Tuning

4.6. Evaluation Metrics Overview

4.7. Gap Analysis and Research Needs

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| AI | Artificial Intelligence |
| SE | Software Engineering |
| PE | Prompt Engineering |
| LLMs | Large Language Models |
| NLP | Natural Language Processing |
| PIQ | Perceived Information Quality |
| CoT | Chain of Thought |
| ML | Machine Learning |
| PRISMA | Preferred Reporting Items for Systematic Reviews and Meta-Analyses |

References

- Mu, Z.; Lin, S.; Guo, S.; Yu, S.; Gao, D. Prompt enhanced neural machine translation with POS tags. Neurocomputing 2025, 639, 130283. [Google Scholar] [CrossRef]

- Zhang, T.; Ma, L.; Cheng, S.; Liu, Y.; Li, N.; Wang, H. Automatic prompt design via particle swarm optimization driven LLM for efficient medical information extraction. Swarm Evol. Comput. 2025, 95, 101922. [Google Scholar] [CrossRef]

- Kim, J.; Chen, M.L.; Rezaei, S.J.; Hernandez-Boussard, T.; Chen, J.H.; Rodriguez, F.; Han, S.S.; Lal, R.A.; Kim, S.H.; Dosiou, C.; et al. Artificial intelligence tools in supporting healthcare professionals for tailored patient care. npj Digit. Med. 2025, 8, 210. [Google Scholar] [CrossRef] [PubMed]

- Wang, Y.; Zhou, L.; Zhang, W.; Zhang, F.; Wang, Y. A soft prompt learning method for medical text classification with simulated human cognitive capabilities. Artif. Intell. Rev. 2025, 58, 118. [Google Scholar] [CrossRef]

- Zhang, X.; Talukdar, N.; Vemulapalli, S.; Ahn, S.; Wang, J.; Meng, H.; Murtaza, S.M.B.; Leshchiner, D.; Dave, A.A.; Joseph, D.F.; et al. Comparison of Prompt Engineering and Fine-Tuning Strategies in Large Language Models in the Classification of Clinical Notes. Neurocomputing 2024, 2024, 478–487. [Google Scholar]

- Huang, Y.; Wang, W.; Zhou, J.; Zhang, L.; Lin, J.; Liu, H.; Hu, X.; Zhou, Z.; Dong, W. Integrative modeling enables ChatGPT to achieve average level of human counselors performance in mental health Q&A. Inf. Process. Manag. 2025, 62, 104152. [Google Scholar] [CrossRef]

- Ramasamy, V.; Ramamoorthy, S.; Walia, G.S.; Kulpinski, E.; Antreassian, A. Enhancing User Story Generation in Agile Software Development Through Open AI and Prompt Engineering. In Proceedings of the 2024 IEEE Frontiers in Education Conference, FIE, Washington, DC, USA, 13–16 October 2024. [Google Scholar] [CrossRef]

- Lee, U.; Jung, H.; Jeon, Y.; Sohn, Y.; Hwang, W.; Moon, J.; Kim, H. Few-shot is enough: Exploring ChatGPT prompt engineering method for automatic question generation in english education. Educ. Inf. Technol. 2024, 29, 11483–11515. [Google Scholar] [CrossRef]

- Xing, Z.; Liu, Y.; Cheng, Z.; Huang, Q.; Zhao, D.; Sun, D.; Liu, C. When Prompt Engineering Meets Software Engineering: CNL-P as Natural and Robust ‘APIs’ for Human-AI Interaction. In Proceedings of the Thirteenth International Conference on Learning Representations, Singapore, 24 April 2025; pp. 1–28. Available online: https://ugaiforge.ai (accessed on 24 August 2025).

- Lo, L.S. The CLEAR path: A framework for enhancing information literacy through prompt engineering. J. Acad. Librariansh. 2023, 49, 102720. [Google Scholar] [CrossRef]

- Thanasi-Boçe, M.; Hoxha, J. From ideas to ventures: Building entrepreneurship knowledge with LLM, prompt engineering, and conversational agents. Educ. Inf. Technol. 2024, 29, 24309–24365. [Google Scholar] [CrossRef]

- Thapa, S.; Shiwakoti, S.; Shah, S.B.; Adhikari, S.; Veeramani, H.; Nasim, M.; Naseem, U. Large language models (LLM) in computational social science: Prospects, current state, and challenges. Soc. Netw. Anal. Min. 2025, 15, 1–30. [Google Scholar] [CrossRef]

- Lin, Q.K.; Hsu, C.; Chang, T.S. Enhancing Finite State Machine Design Automation with Large Language Models and Prompt Engineering Techniques. In Proceedings of the 2024 IEEE 20th Asia Pacific Conference on Circuits and Systems and IEEE Asia Pacific Conference on Postgraduate Research in Microelectronics Electronics, Taipei, Taiwan, 7–9 November 2024; pp. 475–478. [Google Scholar] [CrossRef]

- Park, J.; Choo, S. Generative AI Prompt Engineering for Educators: Practical Strategies. J. Spec. Educ. Technol. 2024, 40, 411–417. [Google Scholar] [CrossRef]

- Zaghir, J.; Naguib, M.; Bjelogrlic, M.; Névéol, A.; Tannier, X.; Lovis, C. Prompt Engineering Paradigms for Medical Applications: Scoping Review. J. Med. Internet Res. 2024, 26, e60501. [Google Scholar] [CrossRef] [PubMed]

- Rodrigues, L.; Xavier, C.; Costa, N.; Batista, H.; Silva, L.F.B.; Chaleghi de Melo, W.; Gasevic, D.; Ferreira Mello, R. LLMs Performance in Answering Educational Questions in Brazilian Portuguese: A Preliminary Analysis on LLMs Potential to Support Diverse Educational Needs. In Proceedings of the 15th International Conference on Learning Analytics and Knowledge, LAK 2025, New York, NY, USA, 3–7 March 2025; pp. 865–871. [Google Scholar] [CrossRef]

- Cheng, Q.; Chen, L.; Hu, Z.; Tang, J.; Xu, Q.; Ning, B. A novel prompting method for few-shot NER via LLMs. Nat. Lang. Process. J. 2024, 8, 100099. [Google Scholar] [CrossRef]

- Zhang, H.; Deng, H.; Ou, J.; Feng, C. Mitigating spatial hallucination in large language models for path planning via prompt engineering. Sci. Rep. 2025, 15, 8881. [Google Scholar] [CrossRef]

- Hannah, G.; Sousa, R.T.; Dasoulas, I.; d’Amato, C. On the legal implications of Large Language Model answers: A prompt engineering approach and a view beyond by exploiting Knowledge Graphs. J. Web Semant. 2025, 84, 100843. [Google Scholar] [CrossRef]

- Chen, D.; Wang, J. A Prompt Example Construction Method Based on Clustering and Semantic Similarity. Systems 2024, 12, 410. [Google Scholar] [CrossRef]

- Chaubey, H.K.; Tripathi, G.; Ranjan, R.; Gopalaiyengar, S.K. Comparative Analysis of RAG, Fine-Tuning, and Prompt Engineering in Chatbot Development. In Proceedings of the 2024 International Conference on Future Technologies for Smart Society (ICFTSS), Kuala Lumpur, Malaysia, 7–8 August 2024; pp. 169–172. [Google Scholar] [CrossRef]

- Page, M.J.; McKenzie, J.E.; Bossuyt, P.M.; Boutron, I.; Hoffmann, T.C.; Mulrow, C.D.; Shamseer, L.; Tetzlaff, J.M.; Akl, E.A.; Brennan, S.E. The PRISMA 2020 statement: An updated guideline for reporting systematic reviews. J. Clin. Epidemiol. 2021, 134, 178–189. [Google Scholar] [CrossRef]

- Ayad, S.; Alsayoud, F. Prompt engineering techniques for semantic enhancement in business process models. Bus. Process Manag. J. 2024, 30, 2611–2641. [Google Scholar] [CrossRef]

- Ma, X.; Wang, J. WIP: Active Learning Through Prompt Engineering and Agentic AI Simulation-A Pilot Project in Computer Networks Education. In Proceedings of the 2024 IEEE Frontiers in Education Conference, FIE, Washington, DC, USA, 13–16 October 2024. [Google Scholar] [CrossRef]

- Rodriguez, A.D.; Dearstyne, K.R.; Cleland-Huang, J. Prompts Matter: Insights and Strategies for Prompt Engineering in Automated Software Traceability. In Proceedings of the 31st IEEE International Requirements Engineering Conference Workshops, REW 2023, Hannover, Germany, 4–5 September 2023; pp. 455–464. [Google Scholar] [CrossRef]

- Chen, Q.; Hu, Y.; Peng, X.; Xie, Q.; Jin, Q.; Gilson, A.; Dinger, M.B.; Ai, X.; Lai, P.-T.; Wang, Z.; et al. Benchmarking large language models for biomedical natural language processing applications and recommendations. Nat. Commun. 2025, 16, 3280. [Google Scholar] [CrossRef]

- Ke, Y.H.; Jin, L.; Elangovan, K.; Abdullah, H.R.; Liu, N.; Sia, A.T.H.; Soh, C.R.; Tung, J.Y.M.; Ong, J.C.L.; Kuo, C.-F.; et al. Retrieval augmented generation for 10 large language models and its generalizability in assessing medical fitness. npj Digit. Med. 2025, 8, 187. [Google Scholar] [CrossRef]

- Wang, L.; Chen, X.; Deng, X.; Wen, H.; You, M.; Liu, W.; Li, Q.; Li, J. Prompt engineering in consistency and reliability with the evidence-based guideline for LLMs. npj Digit. Med. 2024, 7, 14. [Google Scholar] [CrossRef] [PubMed]

- Giray, L. Prompt Engineering with ChatGPT: A Guide for Academic Writers. Ann. Biomed. Eng. 2023, 21, 2629–2633. [Google Scholar] [CrossRef] [PubMed]

- Liu, H.; Yin, H.; Luo, Z.; Wang, X. Integrating chemistry knowledge in large language models via prompt engineering. Synth Syst. Biotechnol. 2025, 10, 23–38. [Google Scholar] [CrossRef]

- Zhu, K.; Wang, J.; Zhou, J.; Wang, Z.; Chen, H.; Wang, Y.; Yang, L.; Ye, W.; Zhang, Y.; Gong, N.; et al. PromptBench: Towards Evaluating the Robustness of Large Language Models on Adversarial Prompts. In Proceedings of the 1st ACM Workshop on Large AI Systems and Models with Privacy and Safety Analysis, Salt Lake City, UT, USA, 19 November 2024; pp. 57–68. [Google Scholar] [CrossRef]

- Jiang, G.; Ma, Z.; Zhang, L.; Chen, J. Prompt engineering to inform large language model in automated building energy modeling. Energy 2025, 316, 134548. [Google Scholar] [CrossRef]

- Perrone, G.; Romano, S.P. Prompt Engineering as Code (PEaC): An approach for building modular, reusable, and portable prompts. In Proceedings of the 2024 2nd International Conference on Foundation and Large Language Models, FLLM, Dubai, United Arab Emirates, 26–29 November 2024; pp. 289–294. [Google Scholar] [CrossRef]

- Chen, B.; Zhang, Z.; Langrené, N.; Zhu, S. Unleashing the potential of prompt engineering in Large Language Models: A comprehensive review. Patterns 2025, 6, 101260. [Google Scholar] [CrossRef]

- Jung, H.; Oh, J.; Stephenson, K.A.J.; Joe, A.W.; Mammo, Z.N. Prompt engineering with ChatGPT3.5 and GPT4 to improve patient education on retinal diseases. Can. J. Ophthalmol. 2024, 60, e375–e381. [Google Scholar] [CrossRef]

- Park, D.; An, G.T.; Kamyod, C.; Kim, C.G. A Study on Performance Improvement of Prompt Engineering for Generative AI with a Large Language Model. J. Web Eng. 2023, 22, 1187–1206. [Google Scholar] [CrossRef]

- Korzynski, P.; Mazurek, G.; Krzypkowska, P.; Kurasinski, A. Artificial intelligence prompt engineering as a new digital competence: Analysis of generative AI technologies such as ChatGPT. Entrep. Bus. Econ. Rev. 2023, 11, 25–37. [Google Scholar] [CrossRef]

- Trad, F.; Chehab, A. Prompt Engineering or Fine-Tuning? A Case Study on Phishing Detection with Large Language Models. Mach. Learn. Knowl. Extr. 2024, 6, 367–384. [Google Scholar] [CrossRef]

- Lee, D.; Palmer, E. Prompt engineering in higher education: A systematic review to help inform curricula. Int. J. Educ. Technol. High. Educ. 2025, 22, 7. [Google Scholar] [CrossRef]

- Ahmed, A.; Hou, M.; Xi, R.; Zeng, X.; Shah, S.A. Prompt-Eng: Healthcare Prompt Engineering Revolutionizing Healthcare Applications with Precision Prompts. In Proceedings of the WWW 2024 Companion Proceedings of the ACM Web Conference, Singapore, 13–17 May 2024; pp. 1329–1337. [Google Scholar] [CrossRef]

- Heston, T.; Khun, C. Prompt Engineering in Medical Education. Int. Med. Educ. 2023, 2, 198–205. [Google Scholar] [CrossRef]

- Azimi, I.; Qi, M.; Wang, L.; Rahmani, A.M.; Li, Y. Evaluation of LLMs accuracy and consistency in the registered dietitian exam through prompt engineering and knowledge retrieval. Sci. Rep. 2025, 15, 1506. [Google Scholar] [CrossRef]

- Chen, E. Enhancing Teaching Quality Through LLM: An Experimental Study on Prompt Engineering. In Proceedings of the 2025 14th International Conference on Educational and Information Technology, ICEIT, Guangzhou, China, 14–16 March 2025; pp. 1–7. [Google Scholar] [CrossRef]

- Kasauli, R.; Liebel, G.; Knauss, E.; Gopakumar, S.; Kanagwa, B. Requirements Engineering Challenges in Large-Scale Agile System Development. In Proceedings of the 2017 IEEE 25th International Requirements Engineering Conference, RE, Lisbon, Portugal, 4–8 September 2017; pp. 352–361. [Google Scholar] [CrossRef]

- Amna, A.R.; Poels, G. Systematic Literature Mapping of User Story Research. IEEE Access 2022, 10, 51723–51746. [Google Scholar] [CrossRef]

- Reiff, J.; Schlegel, D. Hybrid project management—A systematic literature review. Int. J. Inf. Syst. Proj. Manag. 2022, 10, 45–63. [Google Scholar] [CrossRef]

- Santos, T.; Santos, E.; Sousa, M.; Oliveira, M. The Mediating Effect of Motivation between Internal Communication and Job Satisfaction. Adm. Sci. 2024, 14, 69. [Google Scholar] [CrossRef]

- Nurzynska, K.; Strzelecki, M.; Piórkowski, A.; Obuchowicz, R. AI in Medical Imaging and Image Processing. J. Clin. Med. 2025, 14, 4153. [Google Scholar] [CrossRef]

- Al-Emran, M.; Al-Qaysi, N.; Al-Sharafi, M.A.; Khoshkam, M.; Foroughi, B.; Ghobakhloo, M. Role of perceived threats and knowledge management in shaping generative AI use in education and its impact on social sustainability. Int. J. Manag. Educ. 2025, 23, 101105. [Google Scholar] [CrossRef]

- Alzubaidi, K. The Role of Generative AI in Higher Education: Institutional Guidelines, Generational Gaps, and the Grok 4 Challenge. Arab. World Engl. J. 2025, 1–4. [Google Scholar] [CrossRef]

| Criteria | Inclusion | Exclusion | Justification |
|---|---|---|---|
| Publication Type | Peer-reviewed journal articles | Preprints, conference papers, book chapters | Ensures rigor and peer validation |
| Language | English | Non-English publications | Accessibility and consistency |
| Publication Period | 2020–2025 | Before 2020 | Relevance to recent prompt engineering and software engineering advances |
| Topic Focus | Prompt engineering within software engineering | Prompt engineering in unrelated domains | Maintains domain specificity |
| Source Database | Indexed in Scopus, ACM, IEEE, Emerald, Sage | Unindexed or predatory sources | Quality assurance |
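The criteria in the table above can be read as a screening rule applied to each candidate record. The following is a minimal sketch of that idea, not the authors' actual screening tooling. The `Record` fields, the publication-type labels, and the `topic_is_pe_in_se` flag (assumed to be set by a human screener) are all hypothetical names introduced for illustration.

```python
from dataclasses import dataclass

# Hypothetical record layout; the field names are illustrative assumptions.
@dataclass
class Record:
    title: str
    pub_type: str            # e.g. "journal", "conference", "preprint", "book_chapter"
    language: str
    year: int
    database: str
    topic_is_pe_in_se: bool  # assumed to be judged by a human screener

# Databases named in the inclusion criteria table.
INDEXED_DATABASES = {"Scopus", "ACM", "IEEE", "Emerald", "Sage"}


def exclusion_reasons(record: Record) -> list[str]:
    """Return the criteria a record fails; an empty list means it is included."""
    reasons = []
    if record.pub_type != "journal":
        reasons.append("publication type")
    if record.language != "English":
        reasons.append("language")
    if not 2020 <= record.year <= 2025:
        reasons.append("publication period")
    if not record.topic_is_pe_in_se:
        reasons.append("topic focus")
    if record.database not in INDEXED_DATABASES:
        reasons.append("source database")
    return reasons


def screen(records: list[Record]) -> list[Record]:
    """Keep only the records that pass every inclusion criterion."""
    return [r for r in records if not exclusion_reasons(r)]
```

Returning the list of failed criteria, rather than a bare yes/no, makes it straightforward to report exclusion counts per criterion, as a PRISMA flow diagram typically requires.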

| Variable | Description | Purpose |
|---|---|---|
| Bibliographic Details | Title, Authors, Year | Source Identification |
| Prompt Engineering Type | - | Categorize Prompt Engineering Method |
| Software Engineering Task | - | Map Prompt Engineering to Software Engineering Task |
| Dataset Characteristics | Size, Domain Specificity, Source | Understand Context and Generalizability |
| Evaluation Metrics | - | Measure Performance and Validity |
| Key Findings | Main Outcomes, Challenges, Novelty | Synthesize Insights |

| References | Method/Theme | Domain/Task | Evaluation Metrics | Key Findings |
|---|---|---|---|---|
| [23] | Few-Shot Prompting | Automatic Question Generation | Validity, Reliability | Few-shot prompting improves AQG quality by 25% and validity by 15%. |
| [12] | Manual Prompt Crafting | Computer Network Comparison | Engagement, Comprehension | Prompt engineering boosts active learning and engagement by 30%. |
| [2] | Manual and Soft Prompt Tuning | Medical Clinical Guidelines | Consistency, Accuracy | Prompt style impacts LLM reliability by 18% and accuracy by 20% in medicine. |
| [24] | Prompt Engineering as Code (PEaC) | Bug Detection and Repair | BLEU, Precision | Modular prompts improve prompt management and reuse, with 15% better BLEU scores. |
| [17] | PE + RAG | Entrepreneurship Education | Quality, Human Evaluations | PE + RAG enhances entrepreneurial learning interaction by 20%. |
| [25] | Manual and Zero-Shot Prompting | Higher Education | Human Evaluations | PE supports tailored GPT-3 for medical accuracy and empathy with 25% improvement. |
| [1] | Chain of Thought (CoT), CoT-SC, RAP | Nutrition Expert Chatbots | Accuracy, Consistency | CoT-SC and RAP improve LLM accuracy by 22% and consistency by 20% in nutrition. |
| [4] | Multiple PE Techniques | Healthcare Patient Assessment | Usefulness Evaluation | PE techniques improve healthcare fitness assessments by 18%. |
| [5] | Retrieval-Augmented Generation (RAG) | Medical Fitness Assessment | Accuracy, Consistency | RAG-based PE improves performance in fitness domain by 25%. |
| [7] | Integrative Modeling with PE | Mental Health Q&A | Human Evaluation | RAG improves accuracy of mental health Q&A by 20%. |
| [6] | Automated Prompt Generation with POS | Neural Machine Translation | BLEU, Accuracy | Prompt engineering improves translation accuracy by 15% in NMT. |
| [8] | Manual Prompt Design and Tuning | Medical NLP | Qualitative and Quantitative | PE most commonly used in medical NLP tasks, with 30% improved accuracy. |
| [9] | Particle Swarm Optimization for Prompt Design | Medical Information Extraction | Human Evaluations | PE improves extraction efficiency in medical tasks by 18%. |
| [26] | Manual and Zero-Shot Prompting | Educational AI Systems | Human Evaluations | LLMs with PE boost educational AI system performance by 25%. |
| [27] | Benchmarking LLMs with PE | Biomedical NLP | Precision, Recall | LLMs with PE achieve top performance in biomedical NLP tasks with 20% improvement. |
| [11] | Query Transformation for PE | General Language Generation | Accuracy, Consistency | PE improves LLM output in general language tasks by 18%. |
| [28] | Multistep Reasoning, Q-table Integration | Path Planning | Success Rate, Optimal Rate | Novel PE reduces hallucinations, improving path planning by 22%. |
| [29] | LLM-Based PE Methods | Automated Software Traceability | Human Evaluation | PE extends LLM utility in analyzing social data, improving results by 20%. |
| [30] | Prompt Refinement and Multi-Strategy PE | User Story Generation in Agile SE | Human Evaluation | Different prompt strategies improve traceability and prediction by 25%. |
| [31] | To-do-Oriented Prompting (TOP) Refinement | Finite State Machine (FSM) Design | Success Rate | TOP patch boosts FSM design success rates by 18%. |
| [3] | OpenAI-Driven Prompt | Healthcare Patient Assessment | Literature Synthesis | LLM prompts aid in comprehensive and innovative user story creation with 22% increase in quality. |
| [32] | Zero-Shot, Few-Shot, CoT PE | Business Process Modeling | Semantic Quality Metrics | PE enhances semantic completeness in BPMs by 15%. |
| [33] | Systematic Prompt Engineering | Medical Education | Accuracy, Human Evaluation | PE improves interactive medical learning with GLMs by 20%. |


| Method | Adaptability | Scalability | Computational Overhead | Domain Suitability | Reference |
|---|---|---|---|---|---|
| Manual Prompt Crafting | High: flexible, human interpretable | Low: labor-intensive, not scalable | Low: no additional training needed | General purpose, prototyping, education | [1,18,24,25] |
| Retrieval-Augmented Generation (RAG) | Medium: requires knowledge bases | Medium: depends on retrieval infrastructure | High: involves retrieval + generation | Traceability, bug detection, knowledge-intensive tasks | [9,12,23,26] |
| Chain-of-Thought (CoT) Prompting | Medium: enhances reasoning for complex tasks | Medium: prompt length can increase | Medium: requires multistep processing | Complex reasoning tasks, code generation, bug localization | [4,10,11] |
| Soft Prompt Tuning | Low: fixed embedding prompts | Medium: fewer parameters than full fine-tuning | Medium: requires parameter optimization | Documentation, medical text classification, domain-specific tuning | [29,30,31] |
| Automated Prompt Generation | Low: limited human interpretability | High: scalable across datasets | High: model-based generation and optimization | Large-scale, domain-general, automated PE pipelines | [14,16,32] |
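To make the difference between the manually crafted prompting styles in the table above concrete, the sketch below assembles zero-shot, few-shot, and chain-of-thought prompts from the same task, and adds a simple majority vote of the kind used in self-consistency variants of CoT. It is an illustrative sketch only: the `Input:`/`Output:` template, the trigger phrase, and the function names are assumptions, not templates taken from the reviewed studies, and no real LLM API is called.

```python
from collections import Counter
from typing import Optional


def build_prompt(
    task: str,
    examples: Optional[list[tuple[str, str]]] = None,
    chain_of_thought: bool = False,
) -> str:
    """Assemble a prompt string.

    - No examples        -> zero-shot prompt
    - With examples      -> few-shot prompt (worked input/output pairs first)
    - chain_of_thought   -> appends a reasoning trigger phrase (assumed wording)
    """
    parts = [f"Input: {q}\nOutput: {a}" for q, a in (examples or [])]
    parts.append(f"Input: {task}\nOutput:")
    prompt = "\n\n".join(parts)
    if chain_of_thought:
        prompt += " Let's think step by step."
    return prompt


def majority_vote(answers: list[str]) -> str:
    """Pick the most frequent answer among several sampled completions.

    Answers are normalized (trimmed, lower-cased) before counting.
    """
    if not answers:
        raise ValueError("at least one answer is required")
    normalized = [a.strip().lower() for a in answers]
    return Counter(normalized).most_common(1)[0][0]


if __name__ == "__main__":
    shots = [("def add(a, b): return a - b", "bug: uses '-' instead of '+'")]
    print(build_prompt("def inc(x): return x + 2", shots, chain_of_thought=True))
```

The trade-off noted in the table is visible here: each few-shot example and each reasoning trigger lengthens the prompt, and majority voting multiplies the number of model calls.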

| Software Engineering Section | SE Task | Manual Prompt Crafting | RAG | CoT Prompting | Soft Prompt Tuning | Automated Prompt Generation |
|---|---|---|---|---|---|---|
| Requirements | User Story Generation [7] | ✓ | ✓ | | | |
| | Requirement Traceability [42] | ✓ | ✓ | ✓ | | |
| Design | Program Synthesis [13] | ✓ | ✓ | ✓ | ✓ | |
| | Architecture Modelling [9,19] | ✓ | | | | |
| Implementation | Code Generation [20,21,34,37] | ✓ | ✓ | ✓ | ✓ | ✓ |
| | Bug Detection [2,14] | ✓ | ✓ | ✓ | | |
| | Automated Prompt Generation [25] | ✓ | | | | |
| Testing | Test Case Generation [35] | ✓ | ✓ | | | |
| | Fault Localization [37] | ✓ | ✓ | | | |
| Deployment | CI/CD Prompt Integration [14] | ✓ | ✓ | | | |
| Maintenance | Software Traceability [40] | ✓ | ✓ | ✓ | | |
| | Document Generation [8,27] | ✓ | ✓ | ✓ | | |

| Evaluation Metric | Type | Software Engineering Task | Description | References |
|---|---|---|---|---|
| BLEU | Automated | Code generation | Measures n-gram overlap with reference text | [23] |
| ROUGE | Automated | Documentation generation | Recall-oriented measure of n-gram overlap with reference summaries | [12] |
| Perplexity | Automated | General LLM evaluation | Measures model uncertainty or confidence | [2] |
| Human Evaluation | Manual | Bug detection, traceability, education | Assesses semantic correctness, usability, engagement, etc. | [24] |
| Precision | Automated | Traceability, bug detection | Measures correctness: the fraction of predicted items that are correct (completeness is captured by recall) | [17] |
| F1 Score | Automated | Phishing detection, classification | Harmonic mean of precision and recall | [39] |
| Usefulness | Manual | Healthcare, documentation generation | Measures correctness, usability, and overall utility | [32] |
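As a worked illustration of the automated metrics in the table above, the sketch below computes precision, recall, and F1 over sets of predicted and relevant items (for example, trace links), plus the clipped n-gram precision that BLEU is built from. It is a simplified sketch under stated assumptions: full BLEU also combines several n-gram orders and applies a brevity penalty, which are omitted here, and the function names are illustrative.

```python
from collections import Counter


def precision_recall_f1(predicted: set, relevant: set) -> tuple[float, float, float]:
    """Precision, recall, and their harmonic mean (F1) for two item sets."""
    true_positives = len(predicted & relevant)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(relevant) if relevant else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


def ngram_precision(candidate: list[str], reference: list[str], n: int = 1) -> float:
    """Clipped n-gram precision: each candidate n-gram is credited at most
    as many times as it occurs in the reference (no brevity penalty)."""
    cand = Counter(tuple(candidate[i:i + n]) for i in range(len(candidate) - n + 1))
    ref = Counter(tuple(reference[i:i + n]) for i in range(len(reference) - n + 1))
    total = sum(cand.values())
    if total == 0:
        return 0.0
    overlap = sum(min(count, ref[gram]) for gram, count in cand.items())
    return overlap / total


if __name__ == "__main__":
    p, r, f1 = precision_recall_f1({"a", "b", "c", "d"}, {"a", "b", "e"})
    print(f"precision={p:.2f} recall={r:.2f} f1={f1:.2f}")
    print(ngram_precision("the cat the cat".split(), "the cat sat".split(), n=1))
```

In the first call, two of the four predictions are correct (precision 0.5) and two of the three relevant items are found (recall about 0.67), giving an F1 of 4/7. This is why F1 is preferred when neither over-prediction nor omission should dominate the score.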

| Challenge | Description | Mitigation Strategies | References |
|---|---|---|---|
| Prompt Brittleness | Sensitivity of outputs to minor changes in prompt phrasing, causing inconsistent results | Automated prompt optimization, multistep reasoning, soft prompt tuning | [11] |
| Hallucination | LLMs generating inaccurate or fabricated information | Retrieval-augmented prompt refinement, grounding with external knowledge | [5,6,7] |
| Scalability | Difficulty in scaling manual prompt engineering for large datasets or tasks | Automated prompt generation, modular prompt engineering (PEaC) | [12,24] |
| Domain Adaptation | Limited transferability of prompt techniques across different SE domains | Domain-specific tuning; hybrid, manual, and automated approaches | [1,23] |
| Evaluation Inconsistency | Lack of standardized, domain-specific evaluation metrics complicates cross-study comparisons | Development of SE-specific evaluation frameworks combining human and automated evaluations | [6,8] |
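Prompt brittleness, as described in the table above, can be quantified by sending paraphrases of the same request to a model and measuring how often the outputs agree. The sketch below shows one simple way to do this. It is an assumption-laden illustration rather than a metric defined by the reviewed studies: `consistency_score` is a hypothetical name, and `toy_model` is a deterministic keyword-based stand-in for a real LLM call.

```python
from collections import Counter
from typing import Callable


def consistency_score(model: Callable[[str], str], prompt_variants: list[str]) -> float:
    """Fraction of prompt variants whose (normalized) output equals the most
    common output. 1.0 means the model is insensitive to the rephrasings."""
    if not prompt_variants:
        raise ValueError("at least one prompt variant is required")
    outputs = [model(p).strip().lower() for p in prompt_variants]
    most_common_count = Counter(outputs).most_common(1)[0][1]
    return most_common_count / len(outputs)


def toy_model(prompt: str) -> str:
    """Stand-in for an LLM: reacts only to specific keywords, so it is
    deliberately brittle to rephrasing."""
    text = prompt.lower()
    return "bug" if "bug" in text or "defect" in text else "no bug"


if __name__ == "__main__":
    variants = [
        "Does this code contain a bug?",
        "Is there a defect in this code?",
        "Is anything wrong with this code?",
        "Find the bug in this code.",
    ]
    print(consistency_score(toy_model, variants))
```

With the toy model, three of the four phrasings yield the same answer, so the score is 0.75. Exact string matching is a coarse notion of agreement; for free-text outputs a semantic similarity measure would be needed instead.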

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Syahputri, I.W.; Budiardjo, E.K.; Putra, P.O.H. Unlocking the Potential of the Prompt Engineering Paradigm in Software Engineering: A Systematic Literature Review. AI 2025, 6, 206. https://doi.org/10.3390/ai6090206
