Blind Source Separation in Polyphonic Music Recordings Using Deep Neural Networks Trained via Policy Gradients

Abstract

:1. Introduction

Related Work

2. Data Model

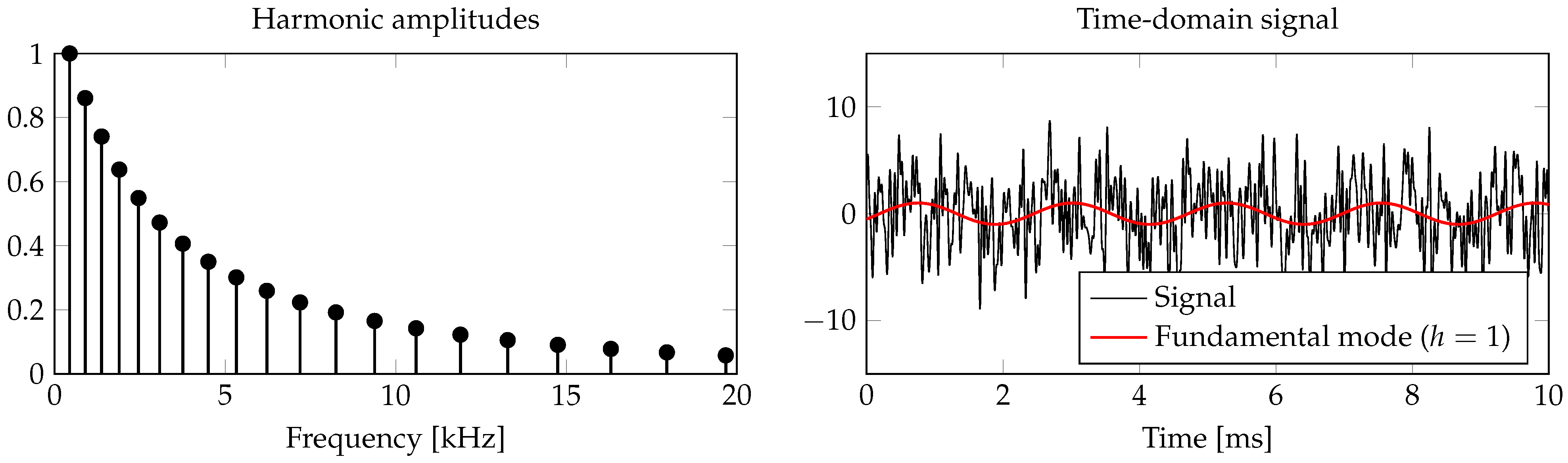

2.1. Tone Model

2.2. Time-Frequency Representation

2.3. Dictionary Representation

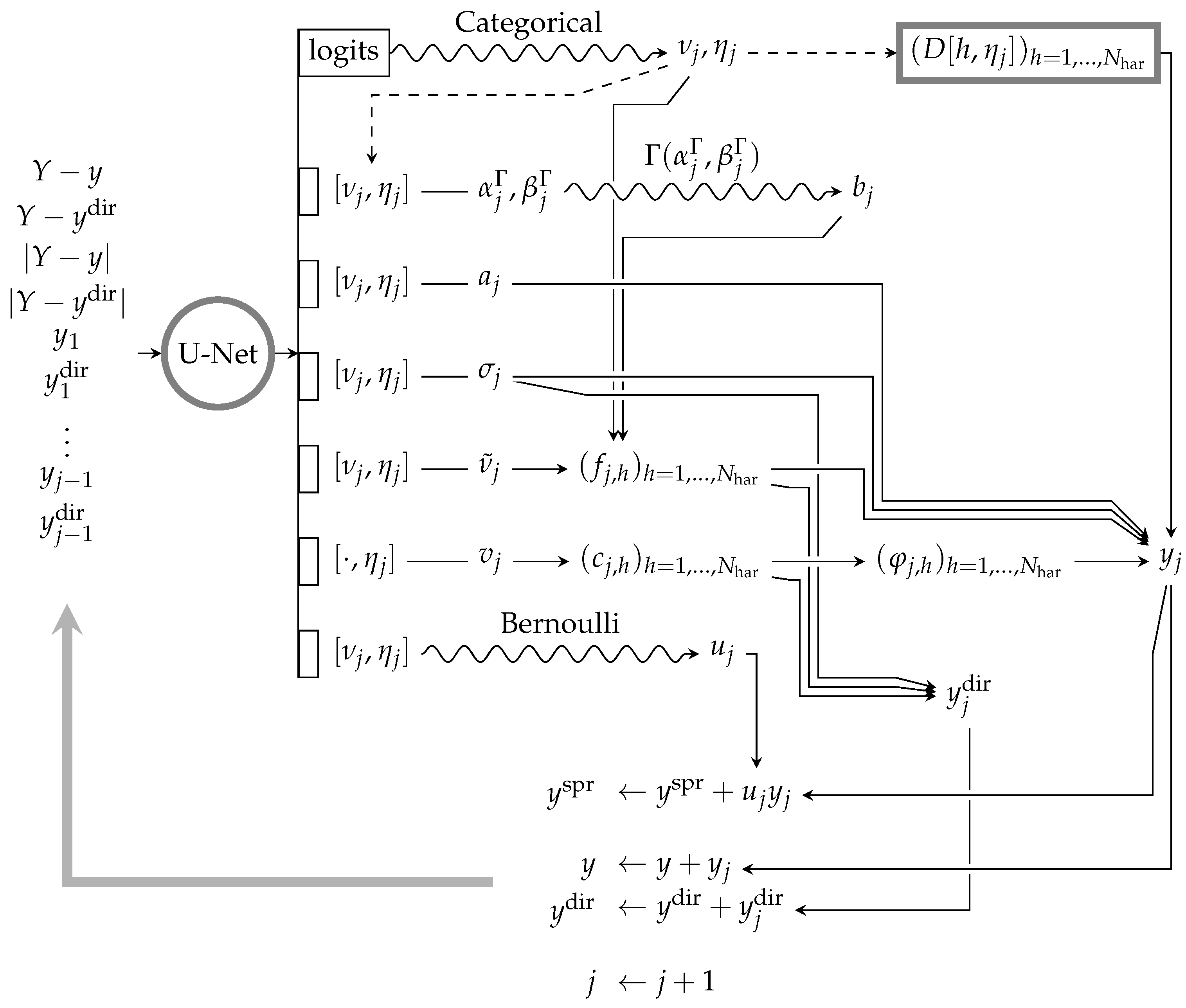

3. Learned Separation

3.1. Distance Function

3.2. Model Fitting

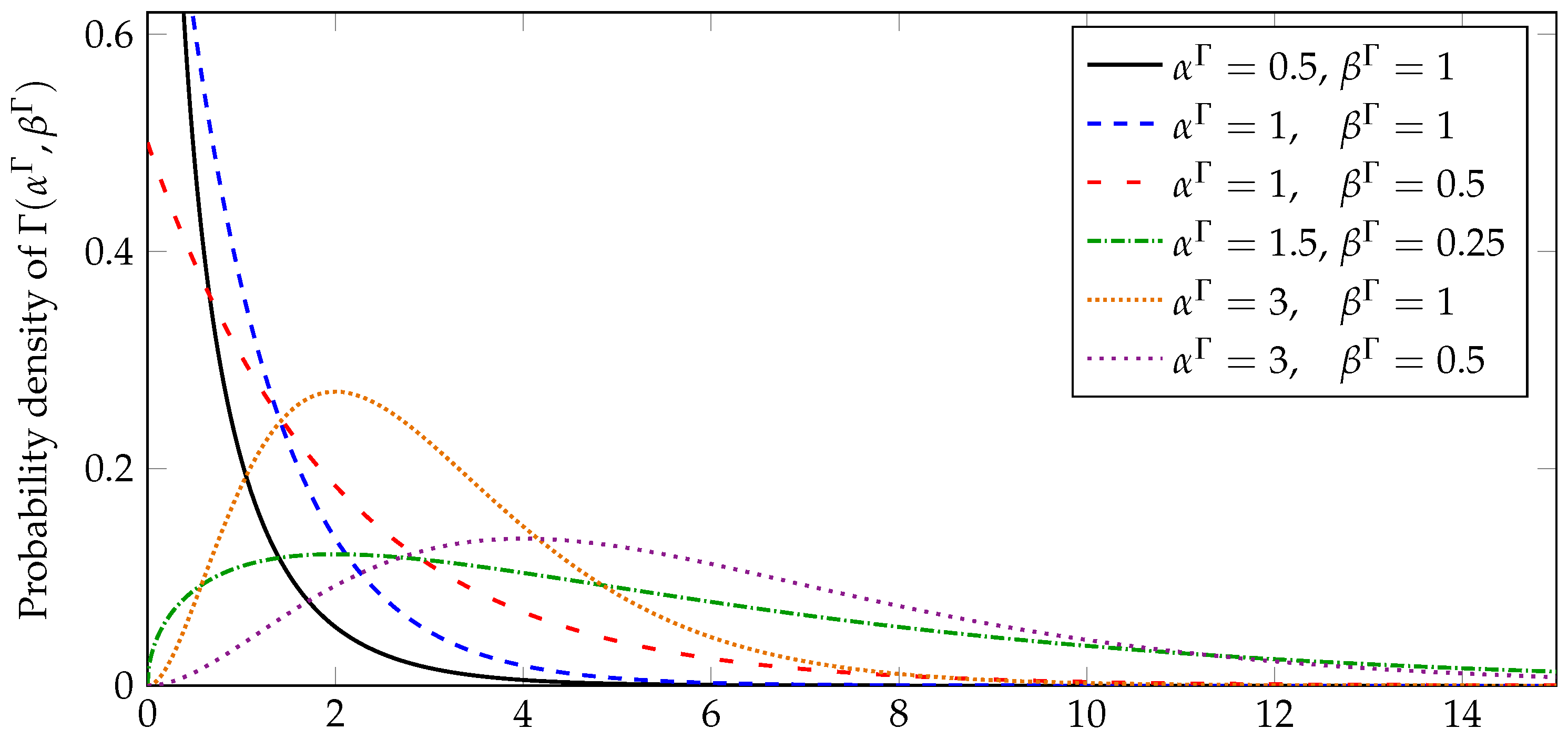

3.2.1. Parameter Representation

3.2.2. Policy Gradients

3.3. Phase Prediction

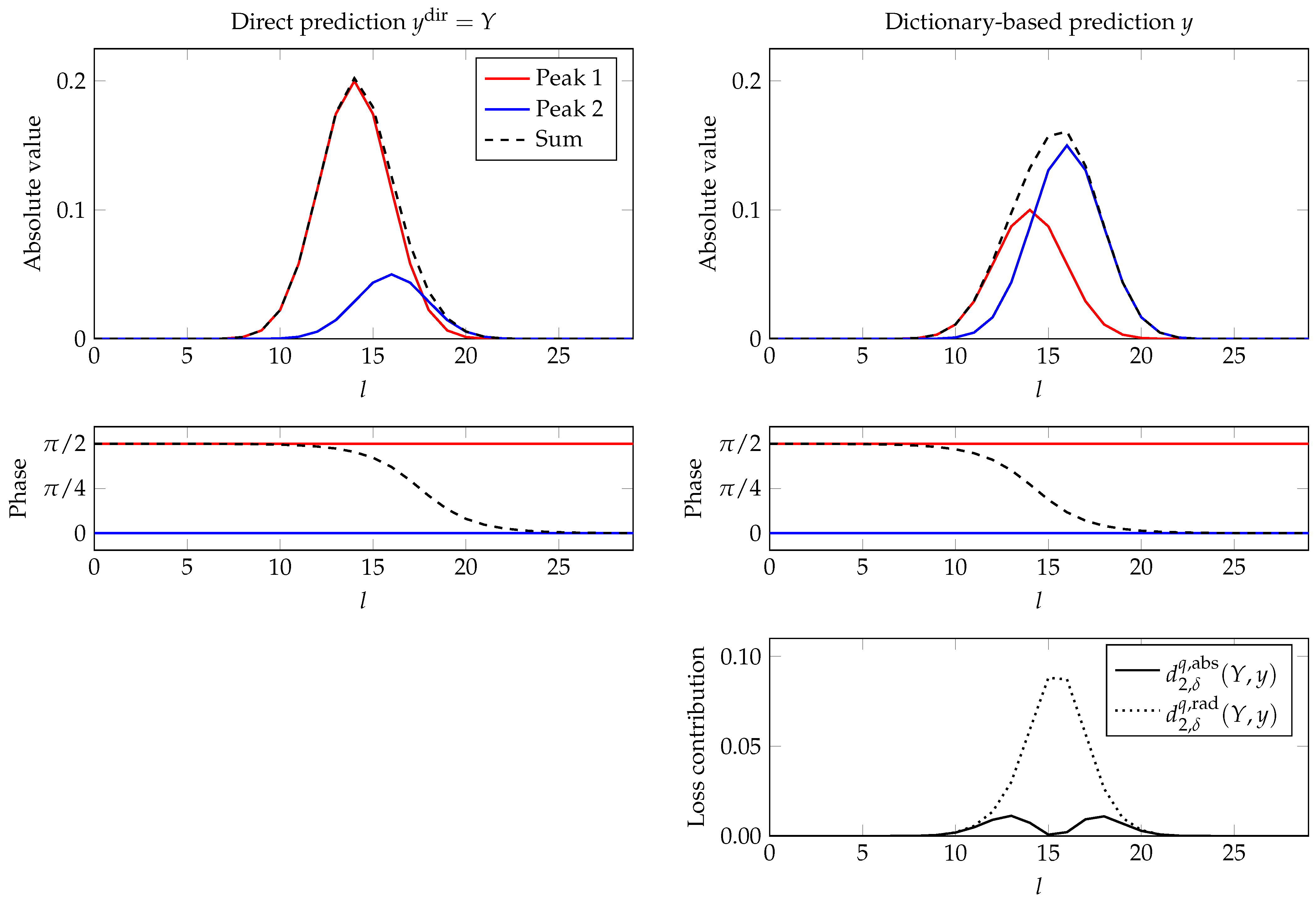

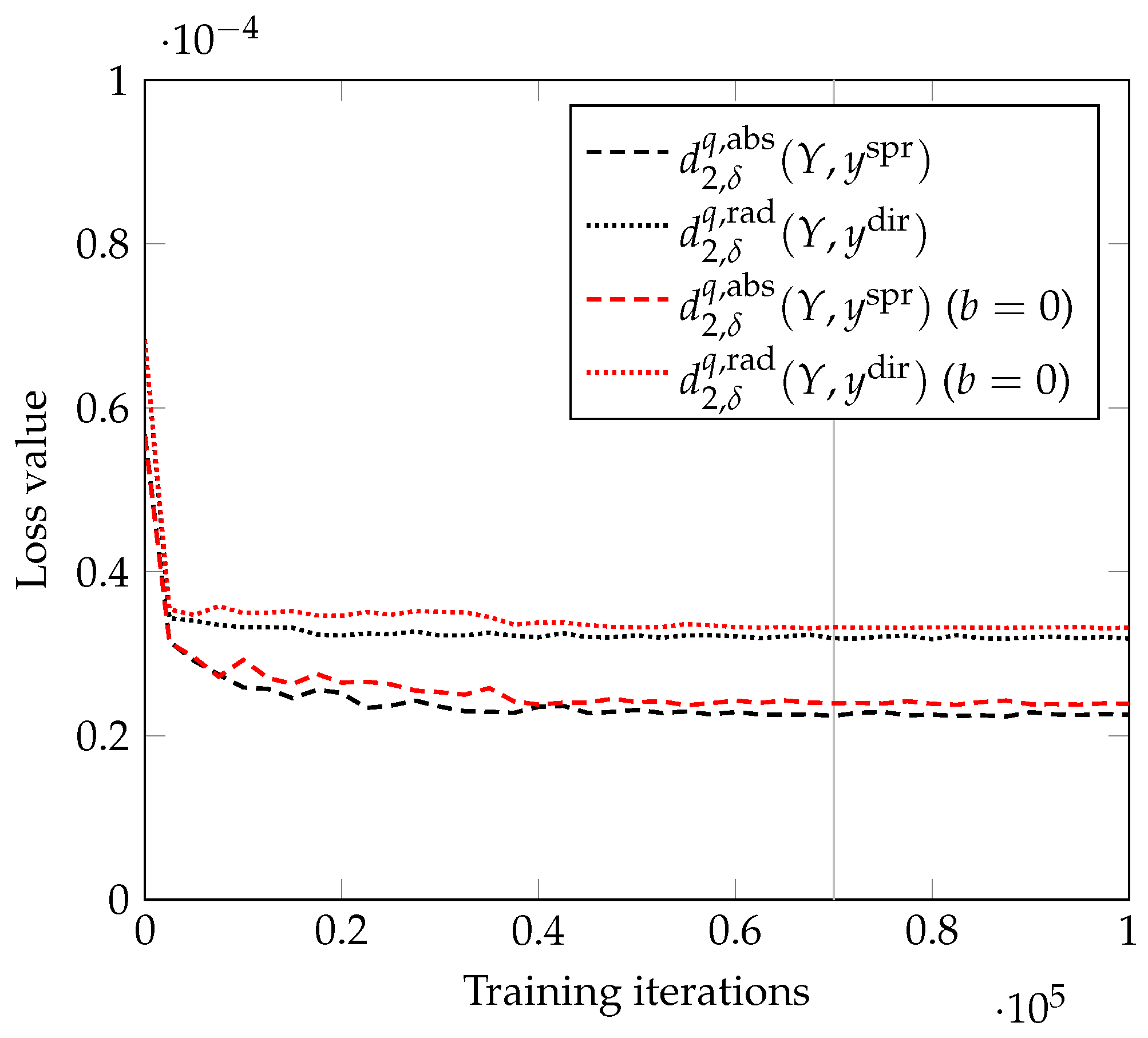

3.4. Complex Objectives

- It takes a number of training iterations for to give a useful value. In the meantime, the training of the other parameters can go in a bad direction.

- If the discrepancy between y and is high and there is a lot of overlap between the peaks (typically from different tones), the optimal phase values for y and may be significantly different. An example for this is displayed in Figure 3: The two peaks (red and blue) each have different phases, but by design, those are identical between the predictions. However, since the dictionary prediction is less flexible, its amplitude magnitudes of the harmonics often do not accurately match the input spectrum Y, which shifts the phase in the overlapping region. Thus, attempting to minimize both and would lead to a conflict regarding the choice of common phase values.

3.5. Sampling for Gradient Estimation

3.6. Network Architecture

3.7. Training

| Algorithm 1 Training scheme for the network and the dictionary, based on AdaMax [38]. Upper bound regularization of D and batch summation (see Section 3.6 and Section 3.7) are not explicitly stated. |

| Input:Z, , D Parameters:, , , , , fordo choose Y out of Output: |

3.8. Resynthesis

4. Experimental Results and Discussion

- The algorithm from a previous publication of some of the authors [20] assumes an identical tone model, but instead of a trained neural network, it uses a hand-crafted sparse pursuit algorithm for identification, and it operates on a specially computed log-frequency spectrogram. While the data model can represent inharmonicity, it is not fully incorporated into the pursuit algorithm. Moreover, information is lost in the creation of the spectrogram. Since the algorithm operates completely in the real domain, it does not consider phase information, which can lead to problems in the presence of beats. The conceptual advantage of the method is that it only requires rather few hyperparameters and their choice is not critical.

- The algorithm by Duan et al. [18] detects and clusters peaks in a linear-frequency STFT spectrogram via a probabilistic model. Its main advantage over other methods is that it can extract instrumental music out of a mixture with signals that cannot be represented. However, this comes at the cost of having to tune the parameters for the clustering algorithm specifically for every sample.

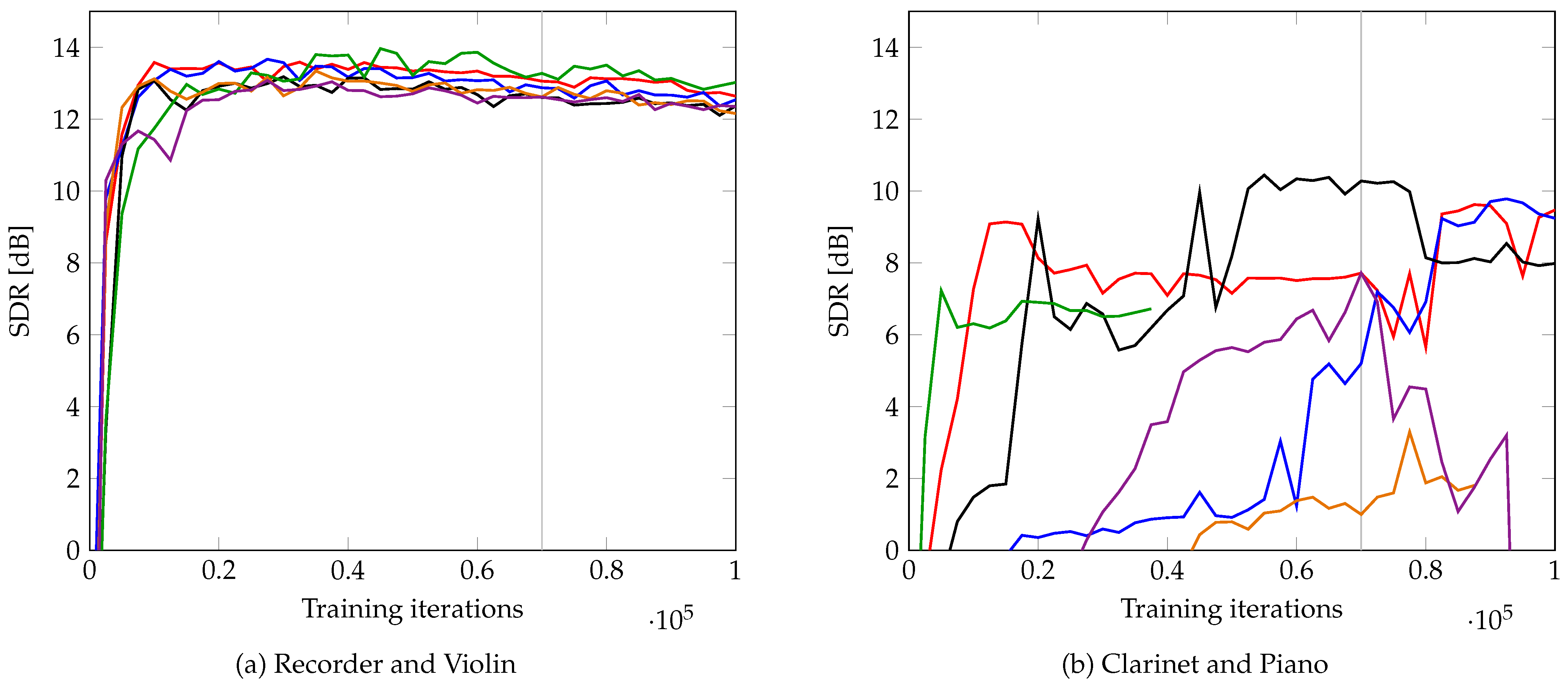

4.1. Mozart’s Duo for Two Instruments

4.2. URMP

Oracle Dictionary

4.3. Duan et al.

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| DIP | Deep image prior |

| GAN | Generative adversarial network |

| MCTS | Monte Carlo tree search |

| NMF | Non-negative matrix factorization |

| PLCA | Probabilistic latent component analysis |

| REINFORCE | |

| SAR | Signal-to-artifacts ratio |

| SDR | Signal-to-distortion ratio |

| SIR | Signal-to-interference ratio |

| STFT | Short-time Fourier transform |

| URMP | University of Rochester Multi-Modal Music Performance Dataset |

References

- Ronneberger, O.; Fischer, P.; Brox, T. U-Net: Convolutional Networks for Biomedical Image Segmentation. In Proceedings of the Medical Image Computing and Computer-Assisted Intervention–MICCAI 2015, Munich, Germany, 5–9 October 2015; Navab, N., Hornegger, J., Wells, W.M., Frangi, A.F., Eds.; Springer: Berlin/Heidelberg, Germany, 2015; pp. 234–241. [Google Scholar] [CrossRef] [Green Version]

- Sutton, R.S.; Barto, A.G. Reinforcement Learning, 2nd ed.; MIT Press: Cambridge, MA, USA, 2018. [Google Scholar]

- Vincent, E.; Virtanen, T.; Gannot, S. (Eds.) Audio Source Separation and Speech Enhancement; Wiley: Chichester, UK, 2018. [Google Scholar]

- Makino, S. (Ed.) Audio Source Separation; Springer: Cham, Switzerland, 2018. [Google Scholar]

- Chien, J.T. Source Separation and Machine Learning; Academic Press: London, UK, 2018. [Google Scholar]

- Smaragdis, P.; Brown, J.C. Non-negative matrix factorization for polyphonic music transcription. Applications of Signal Processing to Audio and Acoustics. In Proceedings of the 2003 IEEE Workshop on Applications of Signal Processing to Audio and Acoustics, New Paltz, NY, USA, 19–22 October 2003; pp. 177–180. [Google Scholar] [CrossRef] [Green Version]

- Wang, B.; Plumbley, M.D. Musical audio stream separation by non-negative matrix factorization. In Proceedings of the Digital Music Research Network (DMRN) Summer Conference, Glasgow, UK, 23–24 July 2005. [Google Scholar]

- Fitzgerald, D.; Cranitch, M.; Coyle, E. Shifted non-negative matrix factorisation for sound source separation. In Proceedings of the IEEE/SP 13th Workshop on Statistical Signal Processing, Bordeaux, France, 17–20 July 2005; pp. 1132–1137. [Google Scholar] [CrossRef] [Green Version]

- Jaiswal, R.; Fitzgerald, D.; Barry, D.; Coyle, E.; Rickard, S. Clustering NMF basis functions using shifted NMF for monaural sound source separation. In Proceedings of the 2011 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Prague, Czech Republic, 22–27 May 2011; pp. 245–248. [Google Scholar] [CrossRef] [Green Version]

- Fitzgerald, D.; Jaiswal, R.; Coyle, E.; Rickard, S. Shifted NMF using an efficient constant-Q transform for monaural sound source separation. In Proceedings of the 22nd IET Irish Signals and Systems Conference, Dublin, Ireland, 23–24 June 2011. [Google Scholar]

- Jaiswal, R.; Fitzgerald, D.; Coyle, E.; Rickard, S. Towards shifted NMF for improved monaural separation. In Proceedings of the 24th IET Irish Signals and Systems Conference, Letterkenny, Ireland, 20–21 June 2013. [Google Scholar] [CrossRef]

- Smaragdis, P.; Raj, B.; Shashanka, M. A probabilistic latent variable model for acoustic modeling. In Proceedings of the Neural Information Processing Systems Workshop on Advances in Models for Acoustic Processing, Whistler, BC, Canada, 9 December 2006. [Google Scholar]

- Smaragdis, P.; Raj, B.; Shashanka, M. Supervised and semi-supervised separation of sounds from single-channel mixtures. In Proceedings of the International Conference on Independent Component Analysis and Signal Separation, London, UK, 9–12 September 2007; pp. 414–421. [Google Scholar] [CrossRef]

- Smaragdis, P.; Raj, B.; Shashanka, M. Sparse and shift-invariant feature extraction from non-negative data. In Proceedings of the 2008 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Las Vegas, NV, USA, 31 March–4 April 2008; pp. 2069–2072. [Google Scholar] [CrossRef]

- Fuentes, B.; Badeau, R.; Richard, G. Adaptive harmonic time-frequency decomposition of audio using shift-invariant PLCA. In Proceedings of the 2011 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Prague, Czech Republic, 22–27 May 2011; pp. 401–404. [Google Scholar] [CrossRef]

- Fuentes, B.; Badeau, R.; Richard, G. Harmonic adaptive latent component analysis of audio and application to music transcription. IEEE Trans. Audio Speech Lang. Process. 2013, 21, 1854–1866. [Google Scholar] [CrossRef]

- Neuwirth, E. Musical Temperaments; Springer: Berlin/Heidelberg, Germany, 1997. [Google Scholar]

- Duan, Z.; Zhang, Y.; Zhang, C.; Shi, Z. Unsupervised single-channel music source separation by average harmonic structure modeling. IEEE Trans. Audio Speech Lang. Process. 2008, 16, 766–778. [Google Scholar] [CrossRef]

- Hennequin, R.; Badeau, R.; David, B. Time-dependent parametric and harmonic templates in non-negative matrix factorization. In Proceedings of the 13th International Conference on Digital Audio Effects (DAFx), Graz, Austria, 6–10 September 2010. [Google Scholar]

- Schulze, S.; King, E.J. Sparse pursuit and dictionary learning for blind source separation in polyphonic music recordings. EURASIP J. Audio Speech Music Process. 2021, 2021. [Google Scholar] [CrossRef]

- Stöter, F.R.; Uhlich, S.; Liutkus, A.; Mitsufuji, Y. Open-Unmix–A Reference Implementation for Music Source Separation. J. Open Source Softw. 2019, 4. [Google Scholar] [CrossRef] [Green Version]

- Défossez, A.; Usunier, N.; Bottou, L.; Bach, F. Music Source Separation in the Waveform Domain. arXiv 2019, arXiv:1911.13254. [Google Scholar]

- Li, T.; Chen, J.; Hou, H.; Li, M. Sams-Net: A sliced attention-based neural network for music source separation. In Proceedings of the 12th International Symposium on Chinese Spoken Language Processing (ISCSLP), Hong Kong, China, 24–27 January 2021; pp. 1–5. [Google Scholar] [CrossRef]

- Nachmani, E.; Adi, Y.; Wolf, L. Voice separation with an unknown number of multiple speakers. In Proceedings of the International Conference on Machine Learning (ICML), Vienna, Austria, 12–18 July 2020; Volume 119, pp. 7164–7175. [Google Scholar]

- Takahashi, N.; Mitsufuji, Y. D3Net: Densely connected multidilated DenseNet for music source separation. arXiv 2021, arXiv:2010.01733. [Google Scholar]

- Ulyanov, D.; Vedaldi, A.; Lempitsky, V. Deep image prior. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake City, UT, USA, 18–22 June 2018; pp. 9446–9454. [Google Scholar]

- Gandelsman, Y.; Shocher, A.; Irani, M. “Double-DIP”: Unsupervised Image Decomposition via Coupled Deep-Image-Priors. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, USA, 16–20 June 2019; pp. 11026–11035. [Google Scholar]

- Tian, Y.; Xu, C.; Li, D. Deep audio prior. arXiv 2019, arXiv:1912.10292. [Google Scholar]

- Narayanaswamy, V.; Thiagarajan, J.J.; Anirudh, R.; Spanias, A. Unsupervised Audio Source Separation Using Generative Priors. In Proceedings of the Interspeech 2020, Shanghai, China, 25–29 October 2020; pp. 2657–2661. [Google Scholar] [CrossRef]

- Williams, R.J. Simple statistical gradient-following algorithms for connectionist reinforcement learning. Mach. Learn. 1992, 8, 229–256. [Google Scholar] [CrossRef] [Green Version]

- Silver, D.; Schrittwieser, J.; Simonyan, K.; Antonoglou, I.; Huang, A.; Guez, A.; Hubert, T.; Baker, L.; Lai, M.; Bolton, A.; et al. Mastering the game of Go without human knowledge. Nature 2017, 550, 354–359. [Google Scholar] [CrossRef] [PubMed]

- Silver, D.; Hubert, T.; Schrittwieser, J.; Antonoglou, I.; Lai, M.; Guez, A.; Lanctot, M.; Sifre, L.; Kumaran, D.; Graepel, T.; et al. A general reinforcement learning algorithm that masters chess, shogi, and Go through self-play. Science 2018, 362, 1140–1144. [Google Scholar] [CrossRef] [PubMed] [Green Version]

- Schrittwieser, J.; Antonoglou, I.; Hubert, T.; Simonyan, K.; Sifre, L.; Schmitt, S.; Guez, A.; Lockhart, E.; Hassabis, D.; Graepel, T.; et al. Mastering atari, Go, chess and shogi by planning with a learned model. Nature 2020, 588, 604–609. [Google Scholar] [CrossRef]

- Fletcher, N.H.; Rossing, T.D. The Physics of Musical Instruments, 2nd ed.; Springer: New York, NY, USA, 1998. [Google Scholar]

- Gröchenig, K. Foundations of Time-Frequency Analysis; Birkhäuser: Boston, MA, USA, 2001. [Google Scholar]

- Févotte, C.; Idier, J. Algorithms for nonnegative matrix factorization with the β-divergence. Neural Comput. 2011, 23, 2421–2456. [Google Scholar] [CrossRef]

- Liu, R.; Lehman, J.; Molino, P.; Petroski Such, F.; Frank, E.; Sergeev, A.; Yosinski, J. An intriguing failing of convolutional neural networks and the CoordConv solution. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), Montréal, QC, Canada, 3–8 December 2018; Bengio, S., Wallach, H., Larochelle, H., Grauman, K., Cesa-Bianchi, N., Garnett, R., Eds.; Curran Associates, Inc.: Red Hook, NY, USA, 2018; Volume 31. [Google Scholar]

- Kingma, D.P.; Ba, J. Adam: A method for stochastic optimization. arXiv 2014, arXiv:1412.6980. [Google Scholar]

- Dörfler, M. Gabor Analysis for a Class of Signals Called Music. Ph.D. Thesis, University of Vienna, Vienna, Austria, 2002. [Google Scholar]

- Vincent, E.; Gribonval, R.; Févotte, C. Performance measurement in blind audio source separation. IEEE Trans. Audio Speech Lang. Process. 2006, 14, 1462–1469. [Google Scholar] [CrossRef] [Green Version]

- Févotte, C.; Gribonval, R.; Vincent, E. BSS_EVAL Toolbox User Guide–Revision 2.0; Technical Report 1706; IRISA: Rennes, France, 2005. [Google Scholar]

- Li, B.; Liu, X.; Dinesh, K.; Duan, Z.; Sharma, G. Creating a multitrack classical music performance dataset for multimodal music analysis: Challenges, insights, and applications. IEEE Trans. Multimed. 2018, 21, 522–535. [Google Scholar] [CrossRef]

| Method | Instrument | SDR | SIR | SAR |

|---|---|---|---|---|

| Ours | Recorder | 13.1 | 34.8 | 13.2 |

| Violin | 13.4 | 34.2 | 13.5 | |

| Clarinet | 12.4 | 28.0 | 12.6 | |

| Piano | 8.1 | 42.2 | 8.1 | |

| [20] | Recorder | 15.1 | 32.4 | 15.2 |

| Violin | 11.9 | 23.8 | 12.2 | |

| Clarinet | 04.1 | 24.3 | 04.1 | |

| Piano | 02.1 | 09.3 | 03.5 | |

| [18] | Recorder | 10.6 | 21.4 | 11.0 |

| Violin | 05.8 | 18.4 | 06.1 | |

| Clarinet | 06.7 | 21.3 | 06.9 | |

| Piano | 05.5 | 16.4 | 05.9 |

| Method | Instrument | SDR | SIR | SAR |

|---|---|---|---|---|

| Ours | Flute | −4.7 | 17.5 | −4.6 |

| Clarinet | 5.0 | 10.1 | 7.0 | |

| Trumpet | 7.7 | 19.9 | 8.0 | |

| Violin | 9.7 | 30.7 | 9.7 | |

| Trumpet | 8.4 | 30.3 | 8.4 | |

| Saxophone | 13.0 | 24.9 | 13.3 | |

| Oboe | 2.9 | 6.9 | 5.9 | |

| Cello | −0.6 | 19.2 | −0.5 | |

| [20] | Flute | 2.4 | 9.5 | 3.9 |

| Clarinet | 6.2 | 25.3 | 6.3 | |

| Trumpet | 5.3 | 16.6 | 5.7 | |

| Violin | 7.7 | 25.1 | 7.8 | |

| Trumpet | −2.4 | 1.1 | 2.7 | |

| Saxophone | 0.1 | 22.5 | 0.2 | |

| Oboe | 6.3 | 17.0 | 6.8 | |

| Cello | 4.2 | 17.1 | 4.5 | |

| [18] | Flute | 3.4 | 19.6 | 3.6 |

| Clarinet | 2.1 | 5.9 | 5.4 | |

| Trumpet | — | — | — | |

| Violin | — | — | — | |

| Trumpet | 1.2 | 9.4 | 2.3 | |

| Saxophone | 6.9 | 17.2 | 7.4 | |

| Oboe | −0.8 | 13.1 | −0.4 | |

| Cello | 03.4 | 06.4 | 7.3 |

| Fix | Pred. | Instrument | SDR | SIR | SAR |

|---|---|---|---|---|---|

| Yes | Dir. | Flute | 1.2 | 9.4 | 2.4 |

| Clarinet | 5.7 | 25.7 | 5.8 | ||

| Oboe | 5.3 | 11.2 | 6.8 | ||

| Cello | 3.0 | 30.3 | 3.0 | ||

| Dict. | Flute | −0.5 | 1.0 | 0.3 | |

| Clarinet | 1.8 | 30.2 | 1.8 | ||

| Oboe | 0.5 | 9.6 | 1.6 | ||

| Cello | −1.4 | 25.4 | −1.4 | ||

| No | Dir. | Flute | −0.4 | 21.6 | −0.3 |

| Clarinet | 7.0 | 13.2 | 8.4 | ||

| Oboe | 3.7 | 8.0 | 6.3 | ||

| Cello | 0.4 | 26.1 | 0.5 | ||

| Dict. | Flute | −5.1 | 24.6 | −5.1 | |

| Clarinet | 2.2 | 17.3 | 2.4 | ||

| Oboe | −1.8 | 4.6 | 0.6 | ||

| Cello | −2.8 | 23.7 | −2.8 |

| Method | Instrument | SDR | SIR | SAR |

|---|---|---|---|---|

| Ours | Oboe (a.) | 9.6 | 47.2 | 9.6 |

| Euphonium (a.) | 8.7 | 33.7 | 8.7 | |

| Piccolo (s.) | 17.2 | 36.5 | 17.2 | |

| Organ (s.) | 14.3 | 50.3 | 14.3 | |

| Piccolo (s.) | 6.8 | 22.1 | 6.9 | |

| Organ (s.) | 7.3 | 19.2 | 7.7 | |

| Oboe (s.) | 8.3 | 46.3 | 8.3 | |

| [20] | Oboe (a.) | 18.6 | 33.6 | 18.8 |

| Euphonium (a.) | 14.7 | 31.5 | 14.7 | |

| Piccolo (s.) | 11.2 | 25.9 | 11.3 | |

| Organ (s.) | 10.1 | 20.7 | 10.5 | |

| Piccolo (s.) | 4.2 | 24.8 | 4.3 | |

| Organ (s.) | 6.0 | 20.0 | 6.3 | |

| Oboe (s.) | 5.3 | 12.4 | 6.4 | |

| [18] | Oboe (a.) | 8.7 | 25.8 | 8.8 |

| Euphonium (a.) | 4.6 | 14.5 | 5.3 | |

| Piccolo (s.) | 14.2 | 27.9 | 14.4 | |

| Organ (s.) | 11.8 | 25.1 | 12.1 | |

| Piccolo (s.) | 6.5 | 20.0 | 6.7 | |

| Organ (s.) | 6.6 | 17.3 | 7.1 | |

| Oboe (s.) | 9.0 | 21.9 | 9.2 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations. |

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Schulze, S.; Leuschner, J.; King, E.J. Blind Source Separation in Polyphonic Music Recordings Using Deep Neural Networks Trained via Policy Gradients. Signals 2021, 2, 637-661. https://doi.org/10.3390/signals2040039

Schulze S, Leuschner J, King EJ. Blind Source Separation in Polyphonic Music Recordings Using Deep Neural Networks Trained via Policy Gradients. Signals. 2021; 2(4):637-661. https://doi.org/10.3390/signals2040039

Chicago/Turabian StyleSchulze, Sören, Johannes Leuschner, and Emily J. King. 2021. "Blind Source Separation in Polyphonic Music Recordings Using Deep Neural Networks Trained via Policy Gradients" Signals 2, no. 4: 637-661. https://doi.org/10.3390/signals2040039

APA StyleSchulze, S., Leuschner, J., & King, E. J. (2021). Blind Source Separation in Polyphonic Music Recordings Using Deep Neural Networks Trained via Policy Gradients. Signals, 2(4), 637-661. https://doi.org/10.3390/signals2040039