Optimizing Carbon Capture Efficiency: Knowledge Extraction from Process Simulations of Post-Combustion Amine Scrubbing

Abstract

1. Introduction

2. Materials and Methods

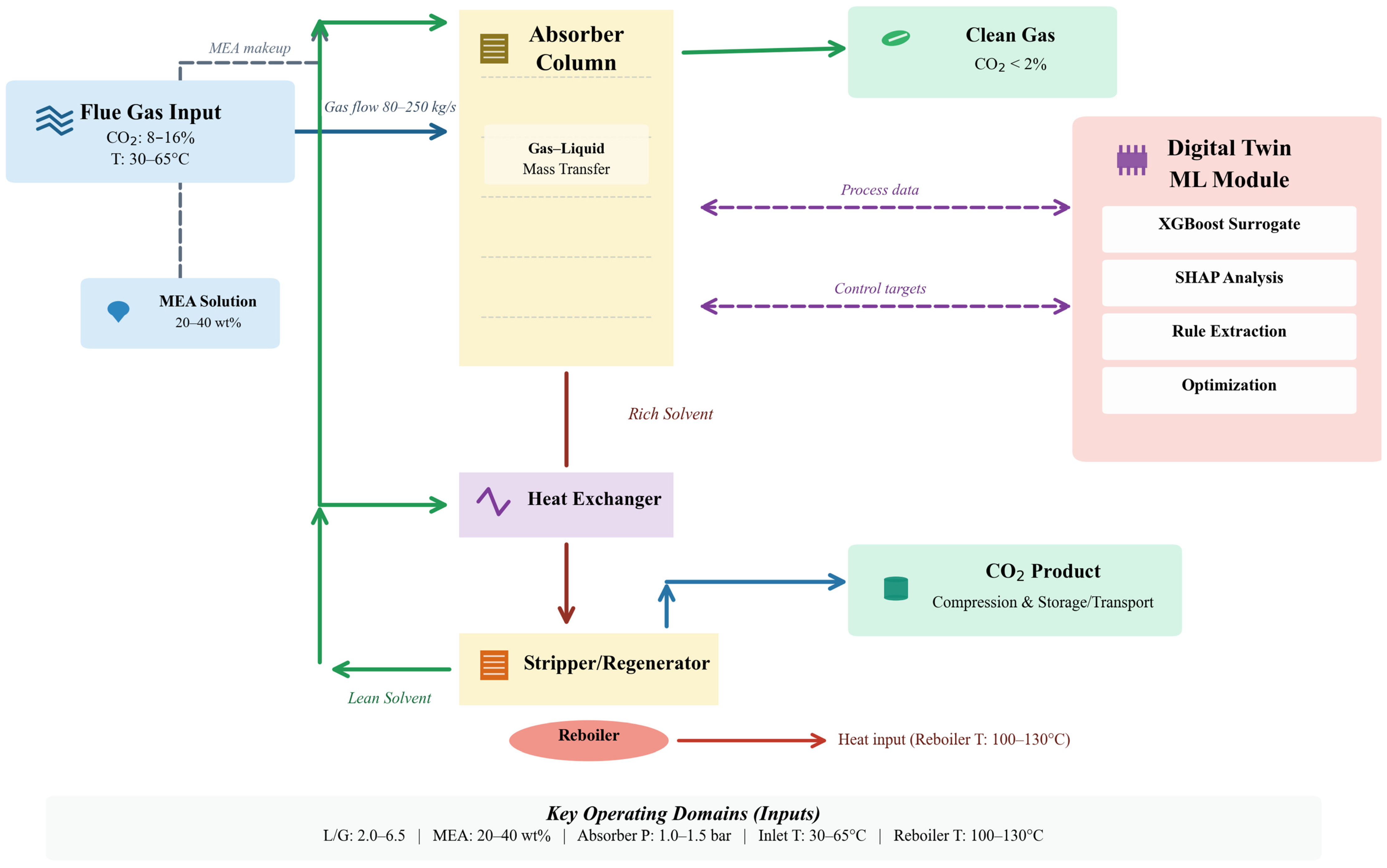

2.1. Study Design and Analytical Framework

2.2. Simulation Framework and Training Data Generation

2.2.1. Simulation Architecture

2.2.2. Parametric Framework and Sampling Methodology

2.2.3. Latin Hypercube Sampling and Database Construction

2.3. Machine Learning Model Development and Architecture Selection

2.3.1. Data Preprocessing and Feature Engineering

2.3.2. Training and Validation Dataset Partitioning

2.3.3. XGBoost Architecture and Hyperparameter Optimization

2.3.4. Alternative Model Architectures and Comparative Evaluation

2.4. Model Interpretability Through SHAP Analysis

2.4.1. Shapley Value Theory and TreeExplainer Algorithm

2.4.2. Global Feature Importance Quantification

2.4.3. SHAP Dependence Plots and Interaction Effects

2.5. Sensitivity Analysis and Uncertainty Quantification

2.5.1. Local Sensitivity Analysis Methodology

2.5.2. Bootstrap Uncertainty Quantification

2.5.3. Multi-Level Sensitivity Evaluation

2.6. Multi-Objective Optimization Framework

2.6.1. Pareto Optimization Problem Formulation

2.6.2. NSGA-II Evolutionary Algorithm Implementation

2.6.3. Pareto Frontier Post-Processing and Decision Support

2.7. External Validation Protocol and Benchmark Dataset

3. Results

3.1. Machine Learning Model Performance and Comparative Evaluation

3.2. Model Interpretability Through SHAP Feature Importance Analysis

3.3. Parametric Response Analysis and Optimal Operating Regions

3.4. External Validation Against CASTOR Pilot Data

4. Discussion

5. Conclusions

Supplementary Materials

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| AI | Artificial Intelligence |
| ANN | Artificial Neural Network |
| CCS | Carbon Capture and Storage |
| CI | Confidence Interval |
| CO2 | Carbon Dioxide |
| CPU | Central Processing Unit |
| CSV | Comma-Separated Values |
| DT | Digital Twin |
| GBM | Gradient Boosting Machine |
| GPU | Graphics Processing Unit |
| L/G | Liquid-to-Gas Ratio |
| LHS | Latin Hypercube Sampling |
| MAE | Mean Absolute Error |
| MAPD | Mean Absolute Percentage Deviation |
| MEA | Monoethanolamine |
| ML | Machine Learning |
| MPC | Model Predictive Control |
| NSGA-II | Non-dominated Sorting Genetic Algorithm II |
| NRTL | Non-Random Two-Liquid (activity coefficient model) |
| RBF | Radial Basis Function |
| RMSE | Root Mean Square Error |
| RMSPD | Root Mean Square Percentage Deviation |
| R2 | Coefficient of Determination |
| SRD | Specific Regeneration Duty |
| SVR | Support Vector Regression |
| VLE | Vapor–Liquid Equilibrium |
| XGBoost | Extreme Gradient Boosting |
| SHAP | SHapley Additive exPlanations |


| Parameter | Minimum | Maximum | Mean | Std. Dev. | Distribution |
|---|---|---|---|---|---|
| Gas flow rate (kg/s) | 80.0 | 250.0 | 150.0 | 42.5 | Normal |
| CO2 concentration (%) | 10.0 | 20.0 | 14.8 | 2.85 | Uniform |
| Inlet temperature (°C) | 30.0 | 65.0 | 45.2 | 9.12 | Normal |
| Liquid-to-gas ratio | 2.0 | 6.5 | 4.25 | 1.18 | Uniform |
| MEA concentration (wt%) | 20.0 | 40.0 | 30.5 | 5.42 | Normal |
| Reboiler temperature (°C) | 100.0 | 130.0 | 118.3 | 7.83 | Normal |
| Lean CO2 loading (mol/mol) | 0.20 | 0.35 | 0.268 | 0.042 | Uniform |
| Absorber pressure (bar) | 1.0 | 1.5 | 1.13 | 0.15 | Normal |
| Packing height (m) | 10.0 | 20.0 | 15.2 | 2.84 | Uniform |
| Solvent circulation rate (m3/h) | 100.0 | 400.0 | 245.0 | 78.5 | Normal |
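
The ranges in the table above can be turned into a space-filling design with Latin Hypercube Sampling (Section 2.2.3). The following is a minimal sketch, not the study's implementation: it uses only the Python standard library, samples every parameter uniformly between its tabulated minimum and maximum (the Normal distributions listed for some parameters are deliberately ignored here), and the names `BOUNDS` and `latin_hypercube` are hypothetical.

```python
import random

# Bounds copied from the parameter table: name -> (minimum, maximum).
# Key names are illustrative, not taken from the article.
BOUNDS = {
    "gas_flow_kg_s": (80.0, 250.0),
    "co2_conc_pct": (10.0, 20.0),
    "inlet_temp_c": (30.0, 65.0),
    "lg_ratio": (2.0, 6.5),
    "mea_conc_wt_pct": (20.0, 40.0),
    "reboiler_temp_c": (100.0, 130.0),
    "lean_loading_mol_mol": (0.20, 0.35),
    "absorber_pressure_bar": (1.0, 1.5),
    "packing_height_m": (10.0, 20.0),
    "solvent_rate_m3_h": (100.0, 400.0),
}


def latin_hypercube(bounds, n_samples, seed=0):
    """Return n_samples dicts; each parameter hits every stratum exactly once."""
    rng = random.Random(seed)
    columns = {}
    for name, (lo, hi) in bounds.items():
        # Draw one point inside each of n_samples equal-width strata of [0, 1).
        unit = [(i + rng.random()) / n_samples for i in range(n_samples)]
        # Shuffle so strata are paired at random across parameters.
        rng.shuffle(unit)
        # Assumption: uniform scaling to [lo, hi] for every parameter.
        columns[name] = [lo + u * (hi - lo) for u in unit]
    return [{name: columns[name][i] for name in bounds} for i in range(n_samples)]


samples = latin_hypercube(BOUNDS, n_samples=5)
for row in samples:
    print({k: round(v, 3) for k, v in row.items()})
```

Each returned dictionary represents one candidate simulation case. Reproducing the tabulated means and standard deviations would additionally require mapping the unit-interval samples through the stated distributions, which this sketch does not attempt.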

| Campaign ID | Varied Parameter | Range in Pilot Study | Controlled/Approximately Constant Parameters | Notes |
|---|---|---|---|---|
| V1 | CO2 partial pressure | 35–135 mbar | Flue-gas flow 30–110 kg/h, MEA = 30 wt%, solvent flow fixed, desorber heat input fixed | Quantifies how increasing gas phase driving force affects captured CO2 amount and rich/lean solvent loadings. |
| V2 | CO2 removal rate | 40–88% | mbar, flue-gas and solvent flows fixed, packing height fixed | Systematically varies reboiler duty between ≈3.5 and 6 GJ/t CO2 to identify the onset of sharply increasing energy demand. |
| V3 | Flue-gas flow rate (F-factor) | 55–100 kg/h | fixed, liquid-to-gas ratio held constant, fixed | Probes sensitivity of regeneration energy to gas-side fluid-dynamic load and associated changes in mass transfer regime. |
| V4 | Solvent flow rate | 100–350 kg/h | fixed, fixed | Identifies an optimal circulation rate near 200 kg/h that minimizes regeneration energy and separates contributions from desorption enthalpy, stripping steam, solvent preheating, and reflux heating. |

| Model | R2 | RMSE (%) | MAE (%) | Training Time (s) | Prediction Time (ms) | Throughput (pred/s) | Memory (MB) | Rank |
|---|---|---|---|---|---|---|---|---|
| Neural Network | 0.9729 | 1.43 | 1.06 | 45.3 | 2.8 | 357 | 45 | 1 |
| XGBoost | 0.9701 | 1.50 | 1.05 | 12.4 | 1.5 | 667 | 38 | 2 |
| Gradient Boosting | 0.9702 | 1.50 | 1.05 | 15.6 | 2.1 | 476 | 52 | 3 |
| Random Forest | 0.9615 | 1.70 | 1.16 | 8.7 | 4.2 | 238 | 180 | 4 |
| Support Vector Regression | 0.9487 | 1.96 | 1.52 | 18.9 | 3.1 | 323 | 68 | 5 |
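
The throughput column in the table above appears to be the reciprocal of the per-prediction latency (1000 ms divided by the prediction time in ms). That relationship is inferred from the numbers and is not stated in the table. The short check below recomputes it under that assumption; the variable names are illustrative.

```python
# Prediction time (ms) and reported throughput (pred/s), copied from the table.
LATENCY_MS = {
    "Neural Network": 2.8,
    "XGBoost": 1.5,
    "Gradient Boosting": 2.1,
    "Random Forest": 4.2,
    "Support Vector Regression": 3.1,
}
REPORTED_THROUGHPUT = {
    "Neural Network": 357,
    "XGBoost": 667,
    "Gradient Boosting": 476,
    "Random Forest": 238,
    "Support Vector Regression": 323,
}


def derived_throughput(latency_ms):
    """Predictions per second, assuming strictly sequential single predictions."""
    return round(1000.0 / latency_ms)


for model, ms in LATENCY_MS.items():
    derived = derived_throughput(ms)
    match = "matches" if derived == REPORTED_THROUGHPUT[model] else "differs from"
    print(f"{model}: {derived} pred/s ({match} the reported value)")
```

If the assumption holds, the throughput figures describe one-at-a-time inference and would understate what batched prediction could achieve.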

| Rank | Feature | Importance (%) | Cumulative (%) | Physical Interpretation |
|---|---|---|---|---|
| 1 | Liquid-to-gas ratio | 46.6 | 46.6 | Primary mass transfer driver |
| 2 | Inlet temperature | 28.5 | 75.1 | Thermodynamic limitation boundary |
| 3 | MEA concentration | 9.9 | 85.0 | Solvent absorption capacity |
| 4 | Reboiler temperature | 4.8 | 89.8 | Regeneration energy requirement |
| 5 | Lean CO2 loading | 4.3 | 94.1 | Chemical equilibrium driving force |
| 6 | CO2 concentration | 3.8 | 97.9 | Reaction kinetics influence |
| 7 | Absorber pressure | 1.9 | 99.8 | Phase equilibrium effect |
| 8 | Gas flow rate | 0.3 | 100.1 * | Contact time modulator |
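
The cumulative column above is a running sum of the individual importance shares. The sketch below recomputes it with exact decimal arithmetic and shows that the listed one-decimal values do add up to 100.1; the asterisk presumably flags this rounding overshoot, although the footnote itself is not part of this text. The helper names are hypothetical.

```python
from decimal import Decimal

# (feature, importance %) pairs copied from the table; strings keep the
# one-decimal values exact when converted to Decimal.
IMPORTANCE = [
    ("Liquid-to-gas ratio", "46.6"),
    ("Inlet temperature", "28.5"),
    ("MEA concentration", "9.9"),
    ("Reboiler temperature", "4.8"),
    ("Lean CO2 loading", "4.3"),
    ("CO2 concentration", "3.8"),
    ("Absorber pressure", "1.9"),
    ("Gas flow rate", "0.3"),
]


def cumulative(importances):
    """Return (feature, running total) pairs in the given rank order."""
    total = Decimal("0")
    out = []
    for name, pct in importances:
        total += Decimal(pct)
        out.append((name, total))
    return out


def features_needed(importances, threshold_pct):
    """Smallest number of top-ranked features whose shares reach the threshold."""
    limit = Decimal(str(threshold_pct))
    for rank, (_, running) in enumerate(cumulative(importances), start=1):
        if running >= limit:
            return rank
    return None


for name, running in cumulative(IMPORTANCE):
    print(f"{name}: {running}%")
print("Features needed for 90%:", features_needed(IMPORTANCE, 90))
```

With these figures the top four features reach 89.8%, so a 90% coverage criterion requires five.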

| Target Efficiency (%) | L/G Ratio (Optimal) | Inlet Temp. (°C) | MEA Conc. (wt%) | Reboiler Temp. (°C) | Expected SRD (MJ/kg CO2) | Relative Cost Index |
|---|---|---|---|---|---|---|
| ≥85 | 3.2 | ≤50 | 25–35 | 110 | 3.1 | 1.00 |
| ≥90 | 4.2 | ≤45 | 28–35 | 115 | 3.4 | 1.15 |
| ≥95 | 5.5 | ≤40 | 30–38 | 120 | 3.9 | 1.42 |
| ≥98 | 6.3 | 38–43 | 32–40 | 125 | 4.3 | 1.68 |
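
The efficiency and SRD columns above illustrate the trade-off behind the Pareto formulation of Section 2.6. The sketch below is an illustration only, not the NSGA-II procedure: it treats each target-efficiency threshold as the achieved efficiency (an assumption), applies the standard dominance test for maximizing efficiency while minimizing SRD, and computes the marginal SRD per additional efficiency point. The function names are hypothetical.

```python
# (capture efficiency %, expected SRD in MJ/kg CO2), copied from the table.
# Assumption: the ">=" target is used as if it were the achieved efficiency.
OPERATING_POINTS = [(85, 3.1), (90, 3.4), (95, 3.9), (98, 4.3)]


def dominates(a, b):
    """True if a is at least as good as b in both objectives and better in one."""
    no_worse = a[0] >= b[0] and a[1] <= b[1]
    strictly_better = a[0] > b[0] or a[1] < b[1]
    return no_worse and strictly_better


def pareto_front(points):
    """Keep the points that no other point dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


def marginal_srd(points):
    """Extra SRD per additional efficiency point between consecutive rows."""
    return [
        (srd_b - srd_a) / (eff_b - eff_a)
        for (eff_a, srd_a), (eff_b, srd_b) in zip(points, points[1:])
    ]


print("Non-dominated points:", pareto_front(OPERATING_POINTS))
print("Marginal SRD (MJ/kg CO2 per % point):",
      [round(m, 3) for m in marginal_srd(OPERATING_POINTS)])
```

All four tabulated rows are mutually non-dominated, and the marginal energy cost rises with the efficiency target, which is consistent with the increasing relative cost index in the last column.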

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Rabbi, M.F. Optimizing Carbon Capture Efficiency: Knowledge Extraction from Process Simulations of Post-Combustion Amine Scrubbing. Mach. Learn. Knowl. Extr. 2026, 8, 87. https://doi.org/10.3390/make8040087
