Adaptive Learning with Gaussian Process Regression: A Comprehensive Review of Methods and Applications

Abstract

1. Introduction

1.1. Reviews on Gaussian Processes

1.2. Reviews on Adaptive Learning

1.3. Scope of This Review

- Advanced GP methods: Categorizing them into non-stationary GPs, heteroscedastic GPs for input-dependent noise estimation, scalable GP approximations, local GPs, multi-task GPs, dynamic GPs, and different training methods.

- Learning strategies in adaptive learning (ADL) with GPs: Categorizing ADL strategies, including Bayesian optimization (BO) and active learning (ACL), and distinguishing between single-point and batch-query methods.

- Applications of ADL with GPs: Categorizing practical use cases, including hyperparameter tuning, material and product design, and efficient modeling for costly simulations and experiments.

- Software libraries for GP-based ADL: Surveying widely used Python and R packages for GPs, ACL, and BO, summarizing their capabilities (batching, multi-output, heteroscedastic, and non-stationary modeling), and listing their key references.

2. Gaussian Processes and Advanced Methods

2.1. Gaussian Process Regression

2.2. Advanced Methods of Gaussian Processes

2.2.1. Anisotropic Gaussian Processes

2.2.2. Non-Stationary Gaussian Processes

2.2.3. Sparse Gaussian Processes

2.2.4. Dynamic Gaussian Processes

2.2.5. Multi-Output Gaussian Processes

2.2.6. Local Gaussian Processes

2.2.7. Vecchia Approximation and Fast Inference

2.2.8. Further Advanced Methods

2.2.9. Overview of Advanced Gaussian Process Models

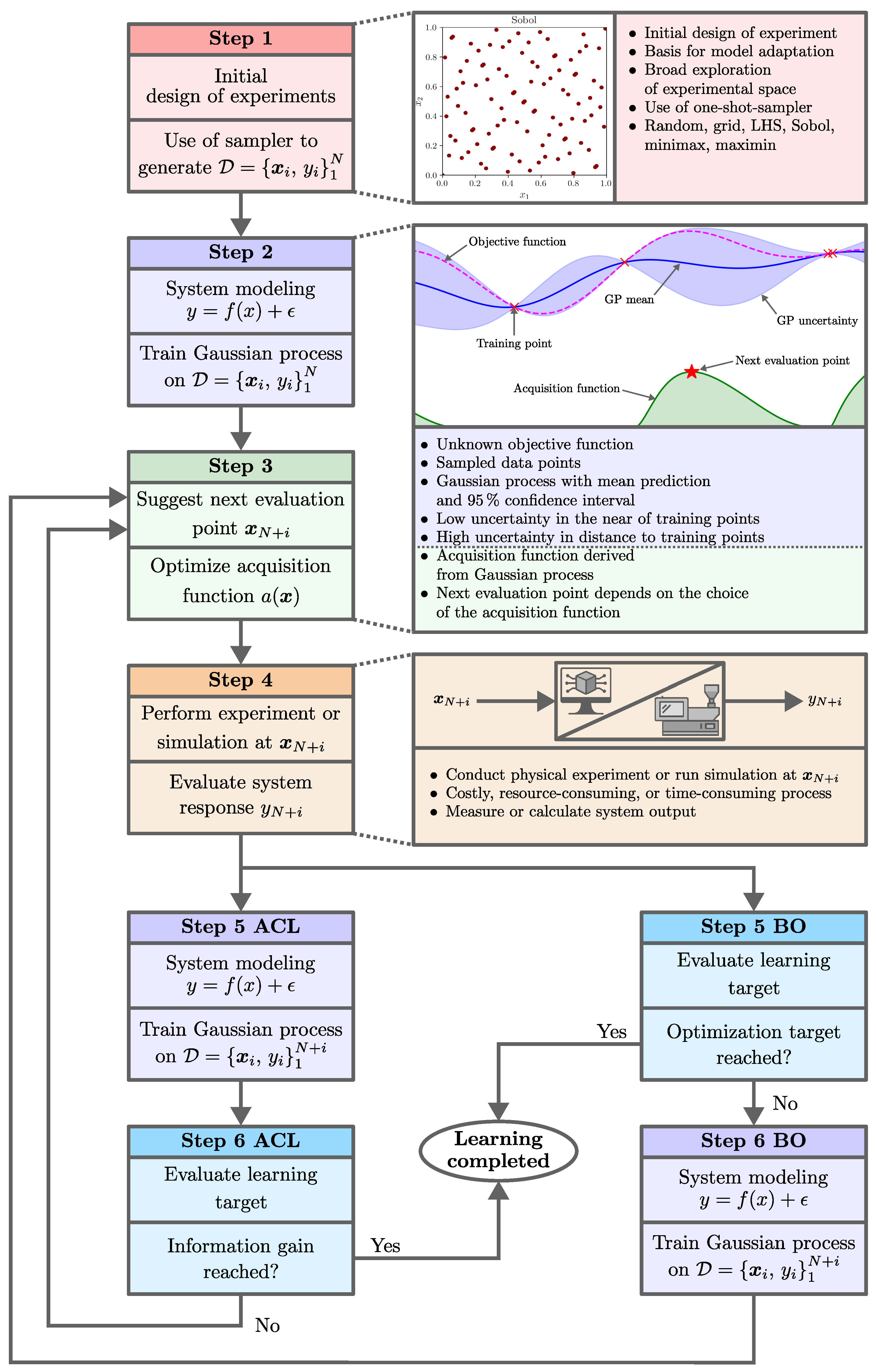

3. Adaptive Learning

3.1. Fundamentals and Definitions

3.2. Initial Design Strategies

3.3. Active Learning

3.3.1. Active Learning MacKay

3.3.2. Fisher Information

3.3.3. Bayesian Active Learning by Disagreement

3.3.4. Active Learning Cohn

3.3.5. Bayesian Query-by-Committee

3.3.6. Query by Mixture of Gaussian Processes

3.3.7. Residual Active Learning

3.3.8. Euclidean Distance-Based Diversity

3.3.9. Integrated Mean Squared Prediction Error

3.3.10. Sensitivity-Based Active Learning

3.4. Bayesian Optimization

3.4.1. Acquisition Functions

Expected Improvement

Probability of Improvement

Upper/Lower Confidence Bound

Thompson Sampling

Knowledge Gradient

(Predictive) Entropy Search

3.4.2. Batch Bayesian Optimization

3.4.3. Multi-Goal Bayesian Optimization

3.5. Overview of Adaptive Learning Methods

4. Applications of Adaptive Learning with Gaussian Processes

4.1. Methodology of the Literature Search for Application Studies

4.2. Fields of Application

4.2.1. Aerospace

4.2.2. Chemical Engineering

4.2.3. Dynamic Systems

4.2.4. Geoscience and Environmental Monitoring

4.2.5. Manufacturing

4.2.6. Materials Science

4.2.7. Robotics

4.2.8. Structural Reliability

4.3. Methodologies

4.3.1. Method-Oriented Studies

4.3.2. Multi-Output Usage

4.3.3. Initial Designs

4.3.4. Acquisition Function Usage over Domains

4.4. Gaps and Guidance for Practical Application

4.5. Overview of GP-Based ADL Applications

| Application | Goal | Data source | ADL type | Initial design | Acquisition function | GP model | References |

|---|---|---|---|---|---|---|---|

| Aerospace | Wing shape design of a UAV | Sim. | BO | LHS | EI | Comb. global/local GPs | [115] |

| Aerospace | Surrogate modeling of shape control of composite fuselage | Sim. | ACL | Maximin LHS | Var., FI | Multiple GPs | [15] |

| Aerospace | Optimization of non-stationary aerospace problems | Fcn., Sim. | BO | LHS | EI | Deep GP | [116] |

| Gaps | Simulation-driven; validation in real experimental loops not reported | ||||||

| Chemical | Optimization of coating properties | Exp. | Batch BO | LHS, full factorial | EI | DGCN, non-stationary | [105] |

| Chemical | Optimization of chemical reactions and material consumption | Exp. | BO | LHS | EI | Multi-output GP | [117] |

| Chemical | Optimization of coating properties | Exp. | Batch BO | Sobol | UCB | GP | [88] |

| Chemical | Optimization of catalyst properties for higher alcohol synthesis | Exp. | Batch BO | - | EI, EHVI, ALM | GP | [118] |

| Chemical | Surrogate modeling of pharmaceuticals | Exp. | Batch ACL | - | ALM | GP | [147] |

| Chemical | Acceleration of automated discovery of drug molecules | Sim., data sets | Batch BO | Random | EI | Multi-output GP | [84] |

| Gaps | Advanced non-stationary models are rarely used in experimental use-cases, heteroscedastic likelihoods and outlier-robust noise models are not used | ||||||

| Dynamic systems | Surrogate modeling of dynamic systems | Sim. | ACL | - | ALM | GP | [120] |

| Dynamic systems | Optimization of MPC parameters | Sim. | Batch BO | Zero initialization | EI | GP with heteroscedastic noise | [119] |

| Dynamic systems | Optimization of control parameters | Exp. | BO | Grid | EI | GP | [121] |

| Gaps | Closed-loop safe exploration, online updating with stability guarantees, consistent physics constraints in both model and acquisition | ||||||

| Environment monitoring | Optimization of measurement locations to minimize model uncertainty | Sim. | BO | Random | EI | GP | [85] |

| Environment monitoring | Surrogate modeling of plant growth | Sim., Exp. | ACL | LHS | RSAL, EBD | GP | [102] |

| Geoscience | Surrogate modeling of essential climate variables | data set | ACL | Random | Div., Var. | GP | [122] |

| Gaps | Studies rely on simulations and data sets, operational deployment aspects are not addressed | ||||||

| Manufacturing | Constrained optimization of process parameters for turning | Sim., Exp. | BO | Random, Grid | Constrained EI | GP | [126] |

| Manufacturing | Surrogate modeling of surface shapes | data set | ACL | Random | IMSE | Multi-task GP | [71] |

| Manufacturing | Learn countersink depths | Exp. | ACL | Process-driven | ALM | GP | [143] |

| Manufacturing | Optimization of welding parameters | Exp. | BO | Sobol | EI | GP | [123] |

| Manufacturing | Optimization of laser power profile | Sim. | BO | LHS | UCB | GP | [124] |

| Manufacturing | Optimization of manufacturing parameters | Fcn., Sim. | BO | LHS | EI | Deep GP | [128] |

| Manufacturing | Optimization of parameters for laser power control | Exp. | BO | Predefined | LCB | GP | [127] |

| Manufacturing | Constraint learning for manufacturing process design | Fcn., Exp. | Batch ACL, BO | LHS | EI, PI, TS, UCB | GP | [129] |

| Manufacturing | Optimization of shape accuracy in 3D print | Exp. | Batch BO | Sobol | EI | Multi-output GP | [125] |

| Gaps | Systematic modeling of input-dependent noise is missing, non-stationary cross-covariances for coupled quality metrics are completely absent, and modeling that injects safe process windows directly into the acquisition is not routine | ||||||

| Materials science | Optimization of parameters for material design | Sim. | BO | - | - | GP | [130] |

| Materials science | Surrogate modeling of corrosion-resistant alloy design | Fcn., Sim. | ACL | LHS | Partitioned ALC | Partitioned GP | [131] |

| Materials science | Optimization of the insulation coating process for alloy sheets | Exp. | BO | - | EI | GP | [145] |

| Materials science | Surrogate modeling for identifying fissile material | Fcn., Sim. | ACL | LHS | CSQ, IP-SUR | Multi-output GP | [142] |

| Gaps | Non-stationary cross-covariances for multi-output ADL and broader use in real experimental studies remain limited | ||||||

| Robotics | Verification of complex safety specifications | Sim. | BO | Random | LCB | GP | [148] |

| Gaps | Simulation-driven; validation in real experimental loops not reported | ||||||

| Structural reliability analysis | Surrogate modeling of structural reliability analysis | Sim. | ACL | LHS | U-, EFF-, H-fcn. | Multi-output GP | [134] |

| Structural reliability analysis | Surrogate modeling of structural reliability of wind-excited systems | Sim. | BO | LHS | Failure criterion | GP with heteroscedastic noise | [136] |

| Structural reliability analysis | Surrogate modeling of structural reliability analysis of airplane parts design | Fcn., Sim. | ACL | Sobol | Weighted Error and Uncertainty | GP with heteroscedastic noise | [135] |

| Gaps | Batch ADL underused, simulation-driven, validation in real experimental loops not reported | ||||||

| Methods/General | Surrogate modeling | Sim. | ACL | LHS | ALM | Bayesian Treed GP | [42] |

| Methods/General | Surrogate modeling | Sim. | ACL | LHS | MSPE | Local GPs | [72] |

| Methods/General | Surrogate modeling with avoiding critical regions | Fcn., Sim. | ACL | Safe sampling points | ALM | GP | [144] |

| Methods/General | Surrogate modeling | Fcn., Sim. | ACL | Maximin LHS | ALM | Warped Multiple Index GP, non-stationary | [12] |

| Methods/General | Optimization of computational complexity in multi-objective BO | Sim. | Batch BO | Random | EI, EHVI | Multi-output GP | [149] |

| Methods/General | Surrogate modeling of structural reliability analysis | Sim. | ACL | LHS | Conditional likelihood | GP | [150] |

| Methods/General | Surrogate modeling | Fcn. | ACL | Maximin LHS | B-QBC, QB-MGP | Bayesian GP | [11] |

| Methods/General | Acceleration of BO | Fcn., Sim. | Batch BO | Sobol | EI | Vecchia GP approximation | [78] |

| Methods/General | Surrogate modeling with reduction in computational complexity | Fcn. | ACL | LHS | Comb. of FI and ALC | GP | [151] |

| Methods/General | Surrogate modeling | Fcn., Sim. | ACL | LHS | ALC | Deep GP | [45] |

| Methods/General | Optimization of BO with function networks | Fcn., data sets | BO | - | KG | Function Network GP | [141] |

| Methods/General | Surrogate modeling | Fcn., Sim. | ACL | Random | ALM | HHK-GP | [13] |

| Methods/General | Extension of BO for specific target subsets | data sets | BO | Random | SwitchBAX, InfoBAX, MeanBAX | GP | [152] |

| Methods/General | Reduction in dimension with Sobol indices | Fcn., Exp. | ACL | LHS, Random | MUSIC | GP | [14] |

| Methods/General | Surrogate modeling of structural reliability analysis | Sim. | Batch ACL | LHS | qAK | GP | [146] |

| Methods/General | Surrogate modeling | Sim., Exp. | ACL | LHS | MSPE, IMSPE, ALC, ALM | Jump GP | [139] |

| Methods/General | Surrogate modeling with Sobol indices | Fcn. | ACL | Random | SBAL | GP | [103] |

| Methods/General | Surrogate modeling | Fcn., Sim. | ACL | LHS | FI | PCEGP | [54] |

| Gaps | Transfer to industrial ADL loops is missing, scalable ALC and FI approximations are needed for large candidate sets, non-stationary cross-covariances in multi-output models are rarely used in practical studies | ||||||

| Methods/Manufacturing | Optimization of power of free-electron laser | Fcn., data set | BO | Random | EI | Sparse online GPs | [57] |

| Methods/Manufacturing | Optimization of BO for complex function networks | Fcn., Sim. | BO | Random | EI | GP network | [140] |

| Methods/Materials science | Surrogate modeling of shape errors | data set | Batch ACL | Random | Diversity ALM | GP | [153] |

| Methods/Robotics | Surrogate modeling and safe exploration of different outputs | data set | ACL | Random | ALM | Multi-output GP | [133] |

| Methods/Robotics | Constrained and safe optimization | Exp. | BO | Predefined | SafeOpt | GP | [83] |

| Methods/Robotics | Robust optimization | Fcn., Sim. | BO | LHS | Robust EI | GP | [132] |

| Methods/Robotics | Surrogate modeling for robotic information gathering | Sim., Exp. | ACL | Pilot path | ALM | AKGP | [53] |

| Methods/Structural reliability | Surrogate modeling of structural reliability | Sim. | ACL | LHS | Distance-based | GP | [137] |

| Methods/Structural reliability | Surrogate modeling for structural reliability analysis | Sim. | Batch ACL | LHS | K-means prob. max. | GP | [138] |

| Gaps | Constraint-aware and safe acquisitions are reported but are not default choices in routine experimentation, and the industrial deployment of advanced non-stationary GP models remains limited; validation in real experimental loops is rare. | ||||||
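
As a hedged illustration of a combination that recurs in the table above (LHS initial design, a GP surrogate, and EI as the acquisition function), the following sketch runs a single-point BO loop in plain NumPy. It is not taken from any of the cited studies: the Forrester test function, the fixed RBF length scale of 0.15, the unit prior variance, the candidate grid, and all function names are assumptions chosen for brevity. A practical study would estimate the hyperparameters and would typically rely on one of the libraries listed in Section 5.

```python
import math
import numpy as np


def latin_hypercube(n, dim, rng):
    """Draw n points in [0, 1]^dim with one point per stratum in every dimension."""
    u = (rng.random((n, dim)) + np.arange(n)[:, None]) / n
    for d in range(dim):
        u[:, d] = rng.permutation(u[:, d])  # decouple the strata across dimensions
    return u


def rbf(a, b, length_scale=0.15):
    """Squared-exponential kernel with unit prior variance (assumed, not fitted)."""
    sq = ((a[:, None, :] - b[None, :, :]) ** 2).sum(-1)
    return np.exp(-0.5 * sq / length_scale**2)


def gp_posterior(x_train, y_train, x_test, jitter=1e-6):
    """Posterior mean and standard deviation of a zero-mean GP."""
    k = rbf(x_train, x_train) + jitter * np.eye(len(x_train))
    chol = np.linalg.cholesky(k)
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y_train))
    k_star = rbf(x_train, x_test)
    mean = k_star.T @ alpha
    v = np.linalg.solve(chol, k_star)
    var = np.clip(1.0 - (v**2).sum(0), 1e-12, None)  # prior variance is 1
    return mean, np.sqrt(var)


def expected_improvement(mean, std, best):
    """EI for minimization: E[max(best - f, 0)] under the GP posterior."""
    z = (best - mean) / std
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return (best - mean) * cdf + std * pdf


def forrester(x):
    """Toy 1D objective standing in for an expensive simulation or experiment."""
    return (6.0 * x - 2.0) ** 2 * np.sin(12.0 * x - 4.0)


rng = np.random.default_rng(0)
x_init = latin_hypercube(5, 1, rng)          # LHS initial design
x_obs = x_init.copy()
y_obs = forrester(x_obs[:, 0])
candidates = np.linspace(0.0, 1.0, 501)[:, None]

for _ in range(15):                          # sequential, single-point queries
    y_scaled = (y_obs - y_obs.mean()) / y_obs.std()
    mean, std = gp_posterior(x_obs, y_scaled, candidates)
    ei = expected_improvement(mean, std, y_scaled.min())
    x_next = candidates[np.argmax(ei)]       # candidate with the largest EI
    x_obs = np.vstack([x_obs, x_next])
    y_obs = np.append(y_obs, forrester(x_next[0]))

best_x = x_obs[np.argmin(y_obs), 0]
print(f"best x = {best_x:.3f}, best y = {y_obs.min():.3f}")
```

Swapping `expected_improvement` for a confidence-bound rule, or selecting several candidates per iteration, would give the UCB/LCB and batch variants that also appear in the table; those variants are not shown here.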

5. Software Libraries

5.1. Library Landscape

5.1.1. Python Stacks for GP Modeling

5.1.2. Optimization and Design Frameworks

5.1.3. Advanced Models

5.1.4. R Ecosystem for GP, ACL, and BO

5.2. Gaps and Guidance

5.2.1. Observed Gaps and Maintenance Notes

5.2.2. Guidance for Selection and Typical Roles

| Library | Language/Framework | Characteristic strength | References |

|---|---|---|---|

| GPyTorch | Python/PyTorch | Scalable GP regression with fast linear algebra, smooth integration with BoTorch for BO and Pyro for full Bayesian modeling | [154] |

| HiGP | Python/Python with C++ backend | Scalable GP regression with hierarchical kernel representations, AFN-preconditioned iterative solvers, and analytically derived gradients | [156] |

| GPflow | Python/TensorFlow | Modular variational GP platform for research and applications | [157] |

| GPflux | Python/TensorFlow on GPflow | Deep GP constructions with variational building blocks interoperable with GPflow | [158] |

| GPy | Python/NumPy and SciPy | Classical toolbox with many kernels and an approachable API for education and baselines | |

| scikit-learn GaussianProcessRegressor | Python/NumPy and SciPy | Standard GP baselines integrated in scikit-learn pipelines, ARD options and common kernels such as RBF and Matérn | [159] |

| Pyro | Python/PyTorch | Probabilistic programming with GP priors and modern MCMC or variational inference for full Bayesian modeling | [155] |

| PyMC | Python/PyMC | Fully Bayesian modeling with GP modules and practical approximations such as HSGP | [163] |

| MOGPTK | Python/PyTorch | Multi-output GP toolkit with training utilities and diagnostics | [161] |

| hetGPy | Python/NumPy and SciPy | Lightweight prototyping for heteroscedastic GP regression in Python, Python-side counterpart to hetGP in R | [162] |

| SMT Surrogate Modeling Toolbox | Python/NumPy and SciPy | Engineering-oriented surrogates with Kriging and GP, plus design of experiments utilities | [160] |

| HHK-GP | Python/GPflow | Non-stationary hyperplane kernel with ACL reference implementation | [13] |

| Gaps | Multi-output ACL with non-stationary covariance structures is not used | ||

| BoTorch | Python/PyTorch | Modular BO with Monte Carlo acquisitions for research and production workflows | [111] |

| Ax Adaptive Experimentation Platform | Python/PyTorch stack | Orchestration for online and offline experimentation built on BoTorch | [164] |

| Trieste | Python/TensorFlow | BO and ACL on top of GPflow and GPflux with constraint and multi-objective support | [165] |

| Emukit | Python | Unified interface for experiment design, ACL, BO, and Bayesian quadrature | [166] |

| scikit-optimize | Python/sklearn ecosystem | Sequential model-based optimization with GP surrogates | |

| Optuna | Python | General-purpose hyperparameter optimization, complements GP-based BO stacks as a flexible HPO framework | [167] |

| modAL | Python/sklearn | Simple ACL API that works directly with sklearn estimators, including GP regressors | [168] |

| BatchBALD | Python/PyTorch | Information-theoretic batch acquisitions for data-efficient labeling and ACL | [9] |

| Dragonfly | Python | Robust and scalable BO including multi-fidelity and high-dimensional settings | [169] |

| RoBO Robust Bayesian Optimization | Python/sklearn | Research framework for robust BO baselines and benchmarks | |

| Gaps | Safe and feasible acquisitions are often available, but scalable batch ACL utilities require custom integration | ||

| tgp | R | Non-stationary treed GP modeling with ACL | [170] |

| laGP | R | Local approximate GPs for large data | [171] |

| hetGP | R | Heteroscedastic GP regression with sequential design criteria such as IMSPE, established R counterpart to hetGPy in Python | [172] |

| DiceKriging and DiceOptim | R | Kriging and BO with EGO and qEGO criteria widely used in engineering design | [173] |

| GPareto | R | Multi-objective BO with GP surrogates and Pareto analysis | [174] |

| deepgp | R | Bayesian deep GP modeling with examples for sequential design | [45] |

| ParBayesianOptimization | R | Parallel BO wrappers often used with GP-based surrogates | |

| rBayesianOptimization | R | Lightweight BO interface for applied workflows based on GP surrogates | |

| Gaps | Heteroscedastic regression and sequential design are more standardized in R, multi-output ACL with non-stationary covariance structures is not used | ||
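
To make the typical roles in the table more concrete, the following is a minimal pool-based ACL sketch built on scikit-learn's `GaussianProcessRegressor`. It uses an anisotropic (ARD) Matérn kernel and queries the pool point with the largest predictive standard deviation, in the spirit of ALM. The `simulator` function, the pool size, the random initial design, and the query budget are hypothetical placeholders, not settings from any cited study, and the sketch assumes noise-free observations.

```python
import numpy as np
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import ConstantKernel, Matern


def simulator(x):
    """Hypothetical stand-in for an expensive simulation with two inputs."""
    return np.sin(5.0 * x[:, 0]) + 0.2 * x[:, 1]


rng = np.random.default_rng(1)
pool = rng.random((400, 2))                              # unlabeled candidate pool
labeled = list(rng.choice(len(pool), size=8, replace=False))  # random initial design

# One length scale per input dimension gives an anisotropic (ARD) kernel.
kernel = ConstantKernel(1.0) * Matern(length_scale=[1.0, 1.0], nu=2.5)

for _ in range(12):
    gpr = GaussianProcessRegressor(kernel=kernel, alpha=1e-6, normalize_y=True)
    gpr.fit(pool[labeled], simulator(pool[labeled]))     # refit hyperparameters
    _, std = gpr.predict(pool, return_std=True)
    std[labeled] = -np.inf                               # never re-query a labeled point
    labeled.append(int(np.argmax(std)))                  # variance-based query

# Fitted per-dimension length scales of the Matérn factor.
length_scales = gpr.kernel_.k2.length_scale
print("queried points:", len(labeled), "length scales:", length_scales)
```

The same loop structure carries over to the dedicated frameworks listed above, which add batching, constraints, and other acquisition criteria on top of the surrogate.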

6. Summary and Outlook

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. Di Fiore, F.; Nardelli, M.; Mainini, L. Active Learning and Bayesian Optimization: A Unified Perspective to Learn with a Goal. Arch. Comput. Methods Eng. 2024, 31, 2985–3013.

2. Greenhill, S.; Rana, S.; Gupta, S.; Vellanki, P.; Venkatesh, S. Bayesian Optimization for Adaptive Experimental Design: A Review. IEEE Access 2020, 8, 13937–13948.

3. Gramacy, R.B. Surrogates: Gaussian Process Modeling, Design and Optimization for the Applied Sciences; Chapman & Hall/CRC: Boca Raton, FL, USA, 2020.

4. Shahriari, B.; Swersky, K.; Wang, Z.; Adams, R.P.; de Freitas, N. Taking the Human Out of the Loop: A Review of Bayesian Optimization. Proc. IEEE 2016, 104, 148–175.

5. Daulton, S.; Eriksson, D.; Balandat, M.; Bakshy, E. Multi-objective Bayesian optimization over high-dimensional search spaces. In Proceedings of the Thirty-Eighth Conference on Uncertainty in Artificial Intelligence, Eindhoven, The Netherlands, 1–5 August 2022; Proceedings of Machine Learning Research; PMLR: Cambridge, MA, USA, 2022; Volume 180, pp. 507–517.

6. Wang, K.; Dowling, A.W. Bayesian Optimization for Chemical Products and Functional Materials. Curr. Opin. Chem. Eng. 2022, 36, 100728.

7. Turner, R.; Eriksson, D.; McCourt, M.; Kiili, J.; Laaksonen, E.; Xu, Z.; Guyon, I. Bayesian Optimization is Superior to Random Search for Machine Learning Hyperparameter Tuning: Analysis of the Black-Box Optimization Challenge 2020. In Proceedings of the NeurIPS 2020 Competition and Demonstration Track; Proceedings of Machine Learning Research; PMLR: Cambridge, MA, USA, 2021; Volume 133, pp. 3–26.

8. Settles, B. Active Learning; Springer International Publishing: Cham, Switzerland, 2012.

9. Kirsch, A.; van Amersfoort, J.; Gal, Y. BatchBALD: Efficient and Diverse Batch Acquisition for Deep Bayesian Active Learning. In Proceedings of the Advances in Neural Information Processing Systems; Curran Associates, Inc.: Red Hook, NY, USA, 2019; Volume 32, pp. 7026–7037.

10. Rasmussen, C.; Williams, C. Gaussian Processes for Machine Learning; The MIT Press: London, UK, 2006.

11. Riis, C.; Antunes, F.; Hüttel, F.; Lima Azevedo, C.; Pereira, F. Bayesian Active Learning with Fully Bayesian Gaussian Processes. In Proceedings of the Advances in Neural Information Processing Systems; Curran Associates, Inc.: Red Hook, NY, USA, 2022; Volume 35, pp. 12141–12153.

12. Marmin, S.; Ginsbourger, D.; Baccou, J.; Liandrat, J. Warped Gaussian Processes and Derivative-Based Sequential Designs for Functions with Heterogeneous Variations. SIAM/ASA J. Uncertain. Quantif. 2018, 6, 991–1018.

13. Bitzer, M.; Meister, M.; Zimmer, C. Hierarchical-Hyperplane Kernels for Actively Learning Gaussian Process Models of Nonstationary Systems. In Proceedings of the 26th International Conference on Artificial Intelligence and Statistics, Valencia, Spain, 25–27 April 2023; Proceedings of Machine Learning Research; PMLR: Cambridge, MA, USA, 2023; Volume 206, pp. 7897–7912.

14. Chauhan, M.S.; Ojeda-Tuz, M.; Catarelli, R.A.; Gurley, K.R.; Tsapetis, D.; Shields, M.D. On Active Learning for Gaussian Process-based Global Sensitivity Analysis. Reliab. Eng. Syst. Saf. 2024, 245, 109945.

15. Yue, X.; Wen, Y.; Hunt, J.H.; Shi, J. Active Learning for Gaussian Process Considering Uncertainties with Application to Shape Control of Composite Fuselage. IEEE Trans. Autom. Sci. Eng. 2021, 18, 36–46.

16. Booth, A.S.; Cooper, A.; Gramacy, R.B. Nonstationary Gaussian Process Surrogates. arXiv 2023, arXiv:2305.19242.

17. Jakkala, K. Deep Gaussian Processes: A Survey. arXiv 2021, arXiv:2106.12135.

18. Li, P.; Chen, S. A review on Gaussian Process Latent Variable Models. CAAI Trans. Intell. Technol. 2016, 1, 366–376.

19. Liu, H.; Ong, Y.S.; Shen, X.; Cai, J. When Gaussian Process Meets Big Data: A Review of Scalable GPs. IEEE Trans. Neural Netw. Learn. Syst. 2020, 31, 4405–4423.

20. Lyu, C.; Liu, X.; Mihaylova, L. Review of Recent Advances in Gaussian Process Regression Methods. In Advances in Computational Intelligence Systems; Advances in Intelligent Systems and Computing; Springer Nature: Cham, Switzerland, 2024; Volume 1454, pp. 226–237.

21. Marrel, A.; Iooss, B. Probabilistic Surrogate Modeling by Gaussian Process: A Review on Recent Insights in Estimation and Validation. Reliab. Eng. Syst. Saf. 2024, 247, 110094.

22. Swiler, L.P.; Gulian, M.; Frankel, A.L.; Safta, C.; Jakeman, J.D. A Survey of Constrained Gaussian Process Regression: Approaches and Implementation Challenges. J. Mach. Learn. Model. Comput. 2020, 1, 119–156.

23. Scampicchio, A.; Arcari, E.; Lahr, A.; Zeilinger, M.N. Gaussian Processes for Dynamics Learning in Model Predictive Control. Annu. Rev. Control 2025, 60, 101034.

24. Binois, M.; Wycoff, N. A Survey on High-dimensional Gaussian Process Modeling with Application to Bayesian Optimization. ACM Trans. Evol. Learn. Optim. 2022, 2, 1–26.

25. Kumar, P.; Gupta, A. Active Learning Query Strategies for Classification, Regression, and Clustering: A Survey. J. Comput. Sci. Technol. 2020, 35, 913–945.

26. Malu, M.; Dasarathy, G.; Spanias, A. Bayesian Optimization in High-Dimensional Spaces: A Brief Survey. In Proceedings of the 2021 12th International Conference on Information, Intelligence, Systems & Applications (IISA), Chania, Crete, Greece, 12–14 July 2021; pp. 1–8.

27. Kochan, D.; Yang, X. Gaussian Process Regression with Soft Equality Constraints. Mathematics 2025, 13, 353.

28. Polke, D.; Kösters, T.; Ahle, E.; Söffker, D. Polynomial Chaos Expanded Gaussian Process. Mach. Learn. Knowl. Extr. (MAKE) 2026, 8, 78.

29. Kocijan, J. Modelling and Control of Dynamic Systems Using Gaussian Process Models; Advances in Industrial Control; Springer: Cham, Switzerland, 2016.

30. Moreno-Muñoz, P.; Artés, A.; Álvarez, M. Heterogeneous Multi-output Gaussian Process Prediction. In Proceedings of the Advances in Neural Information Processing Systems, Montréal, Canada, 3–8 December 2018; Curran Associates, Inc.: Red Hook, NY, USA, 2018; Volume 31, pp. 6711–6720.

31. Sauer, A.; Cooper, A.; Gramacy, R.B. Vecchia-Approximated Deep Gaussian Processes for Computer Experiments. J. Comput. Graph. Stat. 2023, 32, 824–837.

32. Li, J.; Pan, L.; Suvarna, M.; Wang, X. Machine Learning aided Supercritical Water Gasification for H2-rich Syngas Production with Process Optimization and Catalyst Screening. Chem. Eng. J. 2021, 426, 131285.

33. Kiran, B.R.; Sobh, I.; Talpaert, V.; Mannion, P.; Sallab, A.; Yogamani, S.; Pérez, P. Deep Reinforcement Learning for Autonomous Driving: A Survey. IEEE Trans. Intell. Transp. Syst. 2022, 23, 4909–4926.

34. Somu, N.; Raman, G.; Ramamritham, K. A Deep Learning Framework for Building Energy Consumption Forecast. Renew. Sustain. Energy Rev. 2021, 137, 110591.

35. Keogh, E.; Mueen, A. Curse of Dimensionality. In Encyclopedia of Machine Learning; Springer: Boston, MA, USA, 2011; pp. 257–258.

36. Neal, R.M. Bayesian Learning for Neural Networks; Lecture Notes in Statistics; Springer: New York, NY, USA, 1996; Volume 118.

37. Paananen, T.; Piironen, J.; Andersen, M.R.; Vehtari, A. Variable Selection for Gaussian Processes via Sensitivity Analysis of the Posterior Predictive Distribution. In Proceedings of the 22nd International Conference on Artificial Intelligence and Statistics, Naha, Okinawa, Japan, 16–18 April 2019; Proceedings of Machine Learning Research; PMLR: Cambridge, MA, USA, 2019; Volume 89, pp. 1743–1752.

38. Varunram, T.N.; Shivaprasad, M.B.; Aishwarya, K.H.; Balraj, A.; Savish, S.V.; Ullas, S. Analysis of Different Dimensionality Reduction Techniques and Machine Learning Algorithms for an Intrusion Detection System. In Proceedings of the 2021 IEEE 6th International Conference on Computing, Communication and Automation (ICCCA), Arad, Romania, 17–19 December 2021; pp. 237–242.

39. Paciorek, C.; Schervish, M. Nonstationary Covariance Functions for Gaussian Process Regression. In Proceedings of the Advances in Neural Information Processing Systems; MIT Press: Cambridge, MA, USA, 2003; Volume 16, pp. 273–280.

40. Plagemann, C.; Kersting, K.; Burgard, W. Nonstationary Gaussian Process Regression Using Point Estimates of Local Smoothness. In Proceedings of the Machine Learning and Knowledge Discovery in Databases; Springer: Berlin/Heidelberg, Germany, 2008; pp. 204–219.

41. Gramacy, R.B.; Lee, H.K.H. Bayesian Treed Gaussian Process Models with an Application to Computer Modeling. J. Am. Stat. Assoc. 2008, 103, 1119–1130.

42. Gramacy, R.B.; Lee, H.K.H.; MacReady, W. Parameter Space Exploration with Gaussian Process Trees. In Proceedings of the Twenty-First International Conference on Machine Learning, Banff, Alberta, Canada, 4–8 July 2004; Omnipress: Madison, WI, USA, 2004; pp. 353–360.

43. Wilson, A.G.; Knowles, D.A.; Ghahramani, Z. Gaussian Process Regression Networks. In Proceedings of the 29th International Conference on Machine Learning, Madison, WI, USA, 26 June–1 July 2012; pp. 1139–1146.

44. Damianou, A.; Lawrence, N.D. Deep Gaussian Processes. In Proceedings of the Sixteenth International Conference on Artificial Intelligence and Statistics, Scottsdale, AZ, USA, 29 April–1 May 2013; Proceedings of Machine Learning Research; PMLR: Cambridge, MA, USA, 2013; Volume 31, pp. 207–215.

45. Sauer, A.; Gramacy, R.B.; Higdon, D. Active Learning for Deep Gaussian Process Surrogates. Technometrics 2023, 65, 4–18.

46. Wilson, A.G.; Hu, Z.; Salakhutdinov, R.; Xing, E.P. Deep Kernel Learning. In Proceedings of the 19th International Conference on Artificial Intelligence and Statistics, Cadiz, Spain, 9–11 May 2016; Proceedings of Machine Learning Research; PMLR: Cambridge, MA, USA, 2016; Volume 51, pp. 370–378.

47. Heinonen, M.; Mannerström, H.; Rousu, J.; Kaski, S.; Lähdesmäki, H. Non-Stationary Gaussian Process Regression with Hamiltonian Monte Carlo. In Proceedings of the 19th International Conference on Artificial Intelligence and Statistics, Cadiz, Spain, 9–11 May 2016; Proceedings of Machine Learning Research; PMLR: Cambridge, MA, USA, 2016; Volume 51, pp. 732–740.

48. Remes, S.; Heinonen, M.; Kaski, S. Non-Stationary Spectral Kernels. In Proceedings of the Advances in Neural Information Processing Systems, Long Beach, CA, USA, 4–9 December 2017; Curran Associates, Inc.: Red Hook, NY, USA, 2017; Volume 30, pp. 4642–4651.

49. Cremanns, K.; Roos, D. Deep Gaussian Covariance Network. arXiv 2017, arXiv:1710.06202.

50. Liang, Y.; Li, S.; Yan, C.; Li, M.; Jiang, C. Explaining the Black-box Model: A Survey of Local Interpretation Methods for Deep Neural Networks. Neurocomputing 2021, 419, 168–182.

51. Park, C. Jump Gaussian Process Model for Estimating Piecewise Continuous Regression Functions. J. Mach. Learn. Res. 2022, 23, 1–37.

52. Xu, Y.; Park, C. Deep Jump Gaussian Processes for Surrogate Modeling of High-Dimensional Piecewise Continuous Functions. arXiv 2026, arXiv:2510.21974.

53. Chen, W.; Khardon, R.; Liu, L. Adaptive Robotic Information Gathering via Non-stationary Gaussian Processes. Int. J. Robot. Res. 2024, 43, 405–436.

54. Polke, D.; Ahle, E.; Söffker, D. Bayesian Active Learning with Polynomial Chaos Expanded Gaussian Process. In Proceedings of the 2026 IEEE 3rd International Conference on Artificial Intelligence, Computer, Data Sciences and Applications (ACDSA), Boracay Island, Philippines, 5–7 February 2026; pp. 1–14.

55. Hensman, J.; Matthews, A.G.d.G.; Filippone, M.; Ghahramani, Z. MCMC for Variationally Sparse Gaussian Processes. In Proceedings of the 29th International Conference on Neural Information Processing Systems, Montreal, QC, Canada, 7–12 December 2015; Volume 1, pp. 1648–1656.

56. Bauer, M.; van der Wilk, M.; Rasmussen, C.E. Understanding probabilistic sparse Gaussian process approximations. In Proceedings of the 30th International Conference on Neural Information Processing Systems, Barcelona, Spain; Curran Associates, Inc.: Red Hook, NY, USA, 2016; pp. 1533–1541.

57. McIntire, M.; Ratner, D.; Ermon, S. Sparse Gaussian processes for Bayesian optimization. In Proceedings of the Thirty-Second Conference on Uncertainty in Artificial Intelligence, Jersey City, New Jersey, USA; AUAI Press: Arlington, VA, USA, 2016; pp. 517–526.

58. Almosallam, I.A.; Jarvis, M.J.; Roberts, S.J. GPz: Non-stationary Sparse Gaussian Processes for Heteroscedastic Uncertainty Estimation in Photometric Redshifts. Mon. Not. R. Astron. Soc. 2016, 462, 726–739.

59. Cheng, L.F.; Dumitrascu, B.; Darnell, G.; Chivers, C.; Draugelis, M.; Li, K.; Engelhardt, B.E. Sparse Multi-output Gaussian Processes for Online Medical Time Series Prediction. BMC Med. Inform. Decis. Mak. 2020, 20, 152.

60. Luo, H.; Nattino, G.; Pratola, M.T. Sparse Additive Gaussian Process Regression. J. Mach. Learn. Res. 2022, 23, 1–34.

61. Hajibabaei, A.; Myung, C.W.; Kim, K.S. Sparse Gaussian Process Potentials: Application to Lithium Diffusivity in Superionic Conducting Solid Electrolytes. Phys. Rev. B 2021, 103, 214102.

62. Yang, K.; Lu, J.; Wan, W.; Zhang, G.; Hou, L. Transfer Learning Based on Sparse Gaussian Process for Regression. Inf. Sci. 2022, 605, 286–300.

63. Hewing, L.; Kabzan, J.; Zeilinger, M.N. Cautious Model Predictive Control using Gaussian Process Regression. IEEE Trans. Control Syst. Technol. 2020, 28, 2736–2743.

64. Diepers, F.; Polke, D.; Ahle, E.; Söffker, D. Comparison of Different Gaussian Process Models and Applications in Model Predictive Control. In Proceedings of the 2023 23rd International Conference on Control, Automation and Systems (ICCAS), Yeosu, Republic of Korea, 17–20 October 2023; pp. 54–59.

65. Diepers, F.; Ahle, E.; Söffker, D. Investigation of the Influence of Training Data and Methods on the Control Performance of MPC Utilizing Gaussian Processes. In Systems Theory in Data and Optimization; Lecture Notes in Control and Information Sciences—Proceedings; Springer Nature: Cham, Switzerland, 2025; pp. 87–103.

66. Chakraborty, S.; Adhikari, S.; Ganguli, R. The Role of Surrogate Models in the Development of Digital Twins of Dynamic Systems. Appl. Math. Model. 2021, 90, 662–681.

67. Bilionis, I.; Zabaras, N. Multi-output Local Gaussian Process Regression: Applications to Uncertainty Quantification. J. Comput. Phys. 2012, 231, 5718–5746.

68. Alaa, A.M.; van der Schaar, M. Bayesian Inference of Individualized Treatment Effects using Multi-task Gaussian Processes. In Proceedings of the Advances in Neural Information Processing Systems, Long Beach, CA, USA, 4–9 December 2017; Guyon, I., Von Luxburg, U., Bengio, S., Wallach, H., Fergus, R., Vishwanathan, S., Garnett, R., Eds.; Curran Associates, Inc.: Red Hook, NY, USA, 2017; Volume 30, pp. 3427–3435.

69. Parra, G.; Tobar, F. Spectral Mixture Kernels for Multi-Output Gaussian Processes. In Proceedings of the Advances in Neural Information Processing Systems, Long Beach, CA, USA, 4–9 December 2017; Curran Associates, Inc.: Red Hook, NY, USA, 2017; Volume 30, pp. 6681–6690.

70. Liu, H.; Cai, J.; Ong, Y.S. Remarks on Multi-output Gaussian Process Regression. Knowl.-Based Syst. 2018, 144, 102–121.

71. Mehta, M.; Shao, C. Adaptive Sampling Design for Multi-task Learning of Gaussian Processes in Manufacturing. J. Manuf. Syst. 2021, 61, 326–337.

72. Gramacy, R.B.; Apley, D.W. Local Gaussian Process Approximation for Large Computer Experiments. J. Comput. Graph. Stat. 2015, 24, 561–578.

73. Park, C.; Huang, J.Z. Efficient Computation of Gaussian Process Regression for Large Spatial Data Sets by Patching Local Gaussian Processes. J. Mach. Learn. Res. 2016, 17, 1–29.

74. Fuhg, J.N.; Marino, M.; Bouklas, N. Local Approximate Gaussian Process Regression for Data-driven Constitutive Models: Development and Comparison with Neural Networks. Comput. Methods Appl. Mech. Eng. 2022, 388, 114217.

75. Liu, C.; Duan, Z.; Zhang, B.; Zhao, Y.; Yuan, Z.; Zhang, Y.; Wu, Y.; Jiang, Y.; Tai, H. Local Gaussian Process Regression with Small Sample Data for Temperature and Humidity Compensation of Polyaniline-cerium Dioxide NH3 Sensor. Sens. Actuators B Chem. 2023, 378, 133113.

76. Katzfuss, M.; Guinness, J.; Gong, W.; Zilber, D. Vecchia Approximations of Gaussian-Process Predictions. J. Agric. Biol. Environ. Stat. 2020, 25, 383–414.

- Katzfuss, M.; Guinness, J. A General Framework for Vecchia Approximations of Gaussian Processes. Stat. Sci. 2021, 36, 124–141. [Google Scholar] [CrossRef]

- Jimenez, F.; Katzfuss, M. Scalable Bayesian Optimization using Vecchia Approximations of Gaussian Processes. In Proceedings of the 26th International Conference on Artificial Intelligence and Statistics, Valencia, Spain, 25–27 April 2023; Proceedings of Machine Learning Research; PMLR: Cambridge, MA, USA, 2023; Volume 206, pp. 1492–1512. [Google Scholar]

- Pleiss, G.; Gardner, J.; Weinberger, K.; Wilson, A.G. Constant-Time Predictive Distributions for Gaussian Processes. In Proceedings of the 35th International Conference on Machine Learning, Stockholm, Sweden, 10–15 July 2018; Proceedings of Machine Learning Research; PMLR: Cambridge, MA, USA, 2018; Volume 80, pp. 4114–4123. [Google Scholar]

- Schöbi, R.; Sudret, B.; Wiart, J. Polynomial-Chaos-based Kriging. Int. J. Uncertain. Quantif. 2015, 5, 171–193. [Google Scholar] [CrossRef]

- Sigrist, F. Gaussian Process Boosting. J. Mach. Learn. Res. 2022, 23, 1–46. [Google Scholar]

- Victoria, A.H.; Maragatham, G. Automatic Tuning of Hyperparameters Using Bayesian Optimization. Evol. Syst. 2021, 12, 217–223.

- Berkenkamp, F.; Krause, A.; Schoellig, A.P. Bayesian Optimization with Safety Constraints: Safe and Automatic Parameter Tuning in Robotics. Mach. Learn. 2023, 112, 3713–3747.

- McDonald, M.A.; Koscher, B.A.; Canty, R.B.; Zhang, J.; Ning, A.; Jensen, K.F. Bayesian Optimization over Multiple Experimental Fidelities Accelerates Automated Discovery of Drug Molecules. ACS Cent. Sci. 2025, 11, 346–356.

- Peralta, F.; Reina, D.G.; Toral, S.; Arzamendia, M.; Gregor, D. A Bayesian Optimization Approach for Multi-Function Estimation for Environmental Monitoring Using an Autonomous Surface Vehicle: Ypacarai Lake Case Study. Electronics 2021, 10, 963.

- Golestan, S.; Ardakanian, O.; Boulanger, P. Grey-Box Bayesian Optimization for Sensor Placement in Assisted Living Environments. In Proceedings of the AAAI Conference on Artificial Intelligence, Vancouver, BC, Canada, 20–27 February 2024; Volume 38, pp. 22049–22057.

- Deneault, J.R.; Chang, J.; Myung, J.; Hooper, D.; Armstrong, A.; Pitt, M.; Maruyama, B. Toward Autonomous Additive Manufacturing: Bayesian Optimization on a 3D Printer. MRS Bull. 2021, 46, 566–575.

- Polke, D.; Surjana, A.; Diepers, F.; Ahle, E.; Söffker, D. Development of a Modular Automation Framework for Data-Driven Modeling and Optimization of Coating Formulations. In Proceedings of the 2023 IEEE 28th International Conference on Emerging Technologies and Factory Automation (ETFA), Sinaia, Romania, 12–15 September 2023; pp. 1–8.

- Lookman, T.; Balachandran, P.V.; Xue, D.; Yuan, R. Active Learning in Materials Science with Emphasis on Adaptive Sampling Using Uncertainties for Targeted Design. npj Comput. Mater. 2019, 5, 21.

- Pronzato, L.; Müller, W.G. Design of Computer Experiments: Space Filling and Beyond. Stat. Comput. 2012, 22, 681–701.

- Renardy, M.; Joslyn, L.R.; Millar, J.A.; Kirschner, D.E. To Sobol or not to Sobol? The effects of sampling schemes in systems biology applications. Math. Biosci. 2021, 337, 108593.

- McKay, M.D.; Beckman, R.J.; Conover, W.J. A Comparison of Three Methods for Selecting Values of Input Variables in the Analysis of Output from a Computer Code. Technometrics 1979, 21, 239–245.

- Lin, C.D.; Tang, B. Latin Hypercubes and Space-filling Designs. In Handbook of Design and Analysis of Experiments; Dean, A., Morris, M., Stufken, J., Bingham, D., Eds.; Chapman & Hall/CRC Press: Boca Raton, FL, USA, 2015; pp. 593–625.

- Keller, A.; Van Keirsbilck, M. Artificial Neural Networks Generated by Low Discrepancy Sequences. In Monte Carlo and Quasi-Monte Carlo Methods; Springer Proceedings in Mathematics & Statistics; Springer International Publishing: Cham, Switzerland, 2022; Volume 387, pp. 291–311.

- Johnson, M.; Moore, L.; Ylvisaker, D. Minimax and Maximin Distance Designs. J. Stat. Plan. Inference 1990, 26, 131–148.

- Zhang, B.; Cole, A.D.; Gramacy, R.B. Distance-Distributed Design for Gaussian Process Surrogates. Technometrics 2021, 63, 40–52.

- Azriel, D. Optimal Minimax Random Designs for Weighted Least Squares Estimators. Biometrika 2022, 110, 273–280.

- Jankovic, A.; Chaudhary, G.; Goia, F. Designing the Design of Experiments (DOE)—An Investigation on the Influence of Different Factorial Designs on the Characterization of Complex Systems. Energy Build. 2021, 250, 111298.

- MacKay, D.J.C. Information-Based Objective Functions for Active Data Selection. Neural Comput. 1992, 4, 590–604.

- Cohn, D.; Ghahramani, Z.; Jordan, M. Active Learning with Statistical Models. In Proceedings of the Advances in Neural Information Processing Systems; MIT Press: Cambridge, MA, USA, 1994; Volume 7.

- Seo, S.; Wallat, M.; Graepel, T.; Obermayer, K. Gaussian Process Regression: Active Data Selection and Test Point Rejection. In Proceedings of the IEEE-INNS-ENNS International Joint Conference on Neural Networks. IJCNN 2000. Neural Computing: New Challenges and Perspectives for the New Millennium, Como, Italy, 24–27 July 2000; pp. 241–246.

- Sahoo, R.N.; Gakhar, S.; Rejith, R.G.; Verrelst, J.; Ranjan, R.; Kondraju, T.; Meena, M.C.; Mukherjee, J.; Daas, A.; Kumar, S.; et al. Optimizing the Retrieval of Wheat Crop Traits from UAV-Borne Hyperspectral Image with Radiative Transfer Modelling Using Gaussian Process Regression. Remote Sens. 2023, 15, 5496.

- Wulf, B.; Polke, D.; Ahle, E. Active Learning for Gaussian Processes Based on Global Sensitivity Analysis. In Proceedings of the 2025 IEEE 4th International Conference on Computing and Machine Intelligence (ICMI), Mount Pleasant, MI, USA, 5–6 April 2025; pp. 1–7.

- Sobol, I. Global Sensitivity Indices for Nonlinear Mathematical Models and Their Monte Carlo Estimates. Math. Comput. Simul. 2001, 55, 271–280.

- Schmitz, C.; Cremanns, K.; Bissadi, G. Application of Machine Learning Algorithms for Use in Material Chemistry. In Computational and Data-Driven Chemistry Using Artificial Intelligence; Elsevier: Amsterdam, The Netherlands, 2022; pp. 161–192.

- Wu, J.; Chen, X.Y.; Zhang, H.; Xiong, L.D.; Lei, H.; Deng, S.H. Hyperparameter Optimization for Machine Learning Models Based on Bayesian Optimization. J. Electron. Sci. Technol. 2019, 17, 26–40.

- Nguyen, V. Bayesian Optimization for Accelerating Hyper-Parameter Tuning. In Proceedings of the 2019 IEEE Second International Conference on Artificial Intelligence and Knowledge Engineering (AIKE), Sardinia, Italy, 3–5 June 2019; pp. 302–305.

- Ozaki, Y.; Tanigaki, Y.; Watanabe, S.; Onishi, M. Multiobjective Tree-structured Parzen Estimator for Computationally Expensive Optimization Problems. In Proceedings of the 2020 Genetic and Evolutionary Computation Conference, Cancún, Mexico, 8–12 July 2020; pp. 533–541.

- Liu, D.C.; Nocedal, J. On the Limited Memory BFGS Method for Large Scale Optimization. Math. Program. 1989, 45, 503–528.

- Dozat, T. Incorporating Nesterov Momentum into Adam. In Proceedings of the 4th International Conference on Learning Representations, Workshop Track, San Juan, Puerto Rico, 2–4 May 2016; pp. 1–4.

- Balandat, M.; Karrer, B.; Jiang, D.; Daulton, S.; Letham, B.; Wilson, A.G.; Bakshy, E. BoTorch: A Framework for Efficient Monte-Carlo Bayesian Optimization. In Proceedings of the 34th International Conference on Neural Information Processing Systems, Vancouver, BC, Canada; Curran Associates, Inc.: Red Hook, NY, USA, 2020; pp. 21524–21538.

- Page, M.J.; McKenzie, J.E.; Bossuyt, P.M.; Boutron, I.; Hoffmann, T.C.; Mulrow, C.D.; Shamseer, L.; Tetzlaff, J.M.; Akl, E.A.; Brennan, S.E.; et al. The PRISMA 2020 Statement: An Updated Guideline for Reporting Systematic Reviews. BMJ 2021, 372, n71.

- Pranckutė, R. Web of Science (WoS) and Scopus: The Titans of Bibliographic Information in Today’s Academic World. Publications 2021, 9, 12.

- Mongeon, P.; Paul-Hus, A. The Journal Coverage of Web of Science and Scopus: A Comparative Analysis. Scientometrics 2016, 106, 213–228.

- Martinez-Cantin, R. Bayesian Optimization with Adaptive Kernels for Robot Control. In Proceedings of the 2017 IEEE International Conference on Robotics and Automation (ICRA), Singapore, 29 May–3 June 2017; pp. 3350–3356.

- Hebbal, A.; Brevault, L.; Balesdent, M.; Talbi, E.G.; Melab, N. Bayesian Optimization Using Deep Gaussian Processes with Applications to Aerospace System Design. Optim. Eng. 2021, 22, 321–361.

- Taylor, C.J.; Felton, K.C.; Wigh, D.; Jeraal, M.I.; Grainger, R.; Chessari, G.; Johnson, C.N.; Lapkin, A.A. Accelerated Chemical Reaction Optimization Using Multi-Task Learning. ACS Cent. Sci. 2023, 9, 957–968.

- Suvarna, M.; Zou, T.; Chong, S.H.; Ge, Y.; Martín, A.J.; Pérez-Ramírez, J. Active Learning Streamlines Development of High Performance Catalysts for Higher Alcohol Synthesis. Nat. Commun. 2024, 15, 5844.

- Hoang, K.T.; Boersma, S.; Mesbah, A.; Imsland, L. Heteroscedastic Bayesian Optimisation for Active Power Control of Wind Farms. IFAC-PapersOnLine 2023, 56, 7650–7655.

- Buisson-Fenet, M.; Solowjow, F.; Trimpe, S. Actively Learning Gaussian Process Dynamics. In Proceedings of the 2nd Conference on Learning for Dynamics and Control, Berkeley, CA, USA, 10–11 June 2020; Proceedings of Machine Learning Research; PMLR: Cambridge, MA, USA, 2020; Volume 120, pp. 5–15.

- Gafurov, A.N.; Lee, S.; Ali, U.; Irfan, M.; Kim, I.; Lee, T.M. AI-driven Digital Twin for Autonomous Web Tension Control in Roll-to-Roll Manufacturing System. Sci. Rep. 2025, 15, 24096.

- Brown, L.A.; Fernandes, R.; Verrelst, J.; Morris, H.; Djamai, N.; Reyes-Muñoz, P.; D.Kovács, D.; Meier, C. GROUNDED EO: Data-driven Sentinel-2 LAI and FAPAR Retrieval Using Gaussian Processes Trained with Extensive Fiducial Reference Measurements. Remote Sens. Environ. 2025, 326, 114797.

- Haas, M.; Onuseit, V.; Powell, J.; Zaiß, F.; Wahl, J.; Menold, T.; Hagenlocher, C.; Michalowski, A. Improving the Weld Seam Quality in Laser Welding Processes by Means of Bayesian Optimization. Procedia CIRP 2024, 124, 772–775.

- Karkaria, V.; Goeckner, A.; Zha, R.; Chen, J.; Zhang, J.; Zhu, Q.; Cao, J.; Gao, R.X.; Chen, W. Towards a Digital Twin Framework in Additive Manufacturing: Machine Learning and Bayesian Optimization for Time Series Process Optimization. J. Manuf. Syst. 2024, 75, 322–332.

- Johnson, J.E.; Jamil, I.R.; Pan, L.; Lin, G.; Xu, X. Bayesian Optimization with Gaussian-process-based Active Machine Learning for Improvement of Geometric Accuracy in Projection Multi-photon 3D Printing. Light Sci. Appl. 2025, 14, 56.

- Maier, M.; Zwicker, R.; Akbari, M.; Rupenyan, A.; Wegener, K. Bayesian Optimization for Autonomous Process Set-up in Turning. CIRP J. Manuf. Sci. Technol. 2019, 26, 81–87.

- Kavas, B.; Balta, E.C.; Tucker, M.R.; Krishnadas, R.; Rupenyan, A.; Lygeros, J.; Bambach, M. In-situ Controller Autotuning by Bayesian Optimization for Closed-loop Feedback Control of Laser Powder Bed Fusion Process. Addit. Manuf. 2025, 99, 104641.

- Gnanasambandam, R.; Shen, B.; Law, A.C.C.; Dou, C.; Kong, Z. Deep Gaussian Process for Enhanced Bayesian Optimization and Its Application in Additive Manufacturing. IISE Trans. 2025, 57, 423–436.

- Li, G.; Wang, Y.; Kar, S.; Jin, X. Bayesian Optimization with Active Constraint Learning for Advanced Manufacturing Process Design. IISE Trans. 2026, 58, 257–271.

- Khatamsaz, D.; Neuberger, R.; Roy, A.M.; Zadeh, S.H.; Otis, R.; Arróyave, R. A Physics Informed Bayesian Optimization Approach for Material Design: Application to NiTi Shape Memory Alloys. npj Comput. Mater. 2023, 9, 221.

- Lee, C.; Wang, K.; Wu, J.; Cai, W.; Yue, X. Partitioned Active Learning for Heterogeneous Systems. J. Comput. Inf. Sci. Eng. 2023, 23, 041009.

- Christianson, R.B.; Gramacy, R.B. Robust Expected Improvement for Bayesian Optimization. IISE Trans. 2024, 56, 1294–1306.

- Li, C.Y.; Rakitsch, B.; Zimmer, C. Safe Active Learning for Multi-Output Gaussian Processes. In Proceedings of the 25th International Conference on Artificial Intelligence and Statistics (AISTATS), Virtual, 28–30 March 2022; Proceedings of Machine Learning Research; PMLR: Cambridge, MA, USA, 2022; Volume 151, pp. 4512–4551.

- Qian, H.M.; Wei, J.; Huang, H.Z.; Dong, Q.; Li, Y.F. Kriging-based Reliability Analysis for a Multi-output Structural System with Multiple Response Gaussian Process. Qual. Reliab. Eng. Int. 2023, 39, 1622–1638.

- Yu, Y.; Ma, D.; Yang, M.; Yang, X.; Guan, H. Surrogate Modeling with Non-stationary-noise Based Gaussian Process Regression and K-Fold ANN for Systems Featuring Uneven Sensitivity Distribution. Aerosp. Sci. Technol. 2024, 150, 109157.

- Kim, J.; Yi, S.R.; Song, J. Estimation of First-passage Probability under Stochastic Wind Excitations by Active-learning-based Heteroscedastic Gaussian Process. Struct. Saf. 2023, 100, 102268.

- Wang, Y.; Pan, H.; Shi, Y.; Wang, R.; Wang, P. A new active-learning estimation method for the failure probability of structural reliability based on Kriging model and simple penalty function. Comput. Methods Appl. Mech. Eng. 2023, 410, 116035.

- Chun, J. Active Learning-Based Kriging Model with Noise Responses and Its Application to Reliability Analysis of Structures. Appl. Sci. 2024, 14, 882.

- Park, C.; Waelder, R.; Kang, B.; Maruyama, B.; Hong, S.; Gramacy, R.B. Active Learning of Piecewise Gaussian Process Surrogates. Technometrics 2026, 68, 186–201.

- Astudillo, R.; Frazier, P. Bayesian Optimization of Function Networks. In Proceedings of the Advances in Neural Information Processing Systems, Virtual, 6–14 December 2021; Curran Associates, Inc.: Red Hook, NY, USA, 2021; Volume 34, pp. 14463–14475.

- Buathong, P.; Wan, J.; Astudillo, R.; Daulton, S.; Balandat, M.; Frazier, P.I. Bayesian Optimization of Function Networks with Partial Evaluations. In Proceedings of the 41st International Conference on Machine Learning, Vienna, Austria, 21–27 July 2024; Proceedings of Machine Learning Research; PMLR: Cambridge, MA, USA, 2024; Volume 235, pp. 4752–4784.

- Lartaud, P.; Humbert, P.; Garnier, J. Solving Bayesian Inverse Problems Using Gaussian Process Regression with Goal-oriented Active Learning. Technometrics 2026, 68, 172–185.

- Leco, M.; McLeay, T.; Kadirkamanathan, V. A Two-step Machining and Active Learning Approach for Right-first-time Robotic Countersinking through In-process Error Compensation and Prediction of Depth of Cuts. Robot. Comput.-Integr. Manuf. 2022, 77, 102345.

- Schreiter, J.; Nguyen-Tuong, D.; Eberts, M.; Bischoff, B.; Markert, H.; Toussaint, M. Safe Exploration for Active Learning with Gaussian Processes. In Machine Learning and Knowledge Discovery in Databases; Springer International Publishing: Cham, Switzerland, 2015; Volume 9286, pp. 133–149.

- Park, S.M.; Lee, T.; Lee, J.H.; Kang, J.S.; Kwon, M.S. Gaussian Process Regression-based Bayesian Optimization of the Insulation-coating Process for Fe–Si Alloy sheets. J. Mater. Res. Technol. 2023, 22, 3294–3301.

- Prentzas, I.; Fragiadakis, M. Quantified Active Learning Kriging Model for Structural Reliability Analysis. Probabilistic Eng. Mech. 2024, 78, 103699.

- Patel, R.A.; Kesharwani, S.S.; Ibrahim, F. Active Learning and Gaussian Processes for the Development of Dissolution Models: An AI-based Data-efficient Approach. J. Control. Release 2025, 379, 316–326.

- Ghosh, S.; Berkenkamp, F.; Ranade, G.; Qadeer, S.; Kapoor, A. Verifying Controllers Against Adversarial Examples with Bayesian Optimization. In Proceedings of the 2018 IEEE International Conference on Robotics and Automation (ICRA), Brisbane, Australia, 21–25 May 2018; pp. 7306–7313.

- Maddox, W.J.; Balandat, M.; Wilson, A.G.; Bakshy, E. Bayesian Optimization with High-Dimensional Outputs. In Proceedings of the Advances in Neural Information Processing Systems, Virtual, 6–14 December 2021; Curran Associates, Inc.: Red Hook, NY, USA, 2021; Volume 34, pp. 19274–19287.

- Lu, M.; Li, H.; Hong, L. An Adaptive Kriging Reliability Analysis Method Based on Novel Condition Likelihood Function. J. Mech. Sci. Technol. 2022, 36, 3911–3922.

- Kontoudis, G.P.; Otte, M. Adaptive Exploration-Exploitation Active Learning of Gaussian Processes. In Proceedings of the 2023 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Detroit, MI, USA, 1–5 October 2023; pp. 9448–9455.

- Chitturi, S.R.; Ramdas, A.; Wu, Y.; Rohr, B.; Ermon, S.; Dionne, J.; Da Jornada, F.H.; Dunne, M.; Tassone, C.; Neiswanger, W.; et al. Targeted Materials Discovery Using Bayesian Algorithm Execution. npj Comput. Mater. 2024, 10, 156.

- Denkena, B.; Wichmann, M.; Rokicki, M.; Stürenburg, L. Active Learning for the Prediction of Shape Errors in Milling. Procedia CIRP 2024, 126, 324–329.

- Gardner, J.; Pleiss, G.; Weinberger, K.Q.; Bindel, D.; Wilson, A.G. GPyTorch: Blackbox Matrix-Matrix Gaussian Process Inference with GPU Acceleration. Adv. Neural Inf. Process. Syst. 2018, 31, 7576–7586.

- Bingham, E.; Chen, J.P.; Jankowiak, M.; Obermeyer, F.; Pradhan, N.; Karaletsos, T.; Singh, R.; Szerlip, P.; Horsfall, P.; Goodman, N.D. Pyro: Deep Universal Probabilistic Programming. J. Mach. Learn. Res. 2019, 20, 973–978.

- Huang, H.; Xu, T.; Xi, Y.; Chow, E. HiGP: A high-performance Python package for Gaussian Processes. J. Open Source Softw. 2026, 11, 8621.

- Matthews, A.G.d.G.; van der Wilk, M.; Nickson, T.; Fujii, K.; Boukouvalas, A.; Leon-Villagrá, P.; Ghahramani, Z.; Hensman, J. GPflow: A Gaussian Process Library using TensorFlow. J. Mach. Learn. Res. 2017, 18, 1–6.

- Dutordoir, V.; Salimbeni, H.; Hambro, E.; McLeod, J.; Leibfried, F.; Artemev, A.; van der Wilk, M.; Hensman, J.; Deisenroth, M.P.; John, S.T. GPflux: A Library for Deep Gaussian Processes. arXiv 2021, arXiv:2104.05674.

- Pedregosa, F.; Varoquaux, G.; Gramfort, A.; Michel, V.; Thirion, B.; Grisel, O.; Blondel, M.; Müller, A.; Nothman, J.; Louppe, G.; et al. Scikit-learn: Machine Learning in Python. J. Mach. Learn. Res. 2011, 12, 2825–2830.

- Saves, P.; Lafage, R.; Bartoli, N.; Diouane, Y.; Bussemaker, J.; Lefebvre, T.; Hwang, J.T.; Morlier, J.; Martins, J.R. SMT 2.0: A Surrogate Modeling Toolbox with a Focus on Hierarchical and Mixed Variables Gaussian Processes. Adv. Eng. Softw. 2024, 188, 103571.

- de Wolff, T.; Cuevas, A.; Tobar, F. MOGPTK: The Multi-output Gaussian Process Toolkit. Neurocomputing 2021, 424, 49–53.

- O’Gara, D.; Binois, M.; Garnett, R.; Hammond, R.A. hetGPy: Heteroskedastic Gaussian Process Modeling in Python. J. Open Source Softw. 2025, 10, 7518.

- Salvatier, J.; Wiecki, T.V.; Fonnesbeck, C. Probabilistic Programming in Python Using PyMC3. PeerJ Comput. Sci. 2016, 2, e55.

- Olson, M.; Santorella, E.; Tiao, L.C.; Cakmak, S.; Eriksson, D.; Garrard, M.; Daulton, S.; Balandat, M.; Bakshy, E.; Kashtelyan, E.; et al. Ax: A Platform for Adaptive Experimentation. In Proceedings of the Fourth International Conference on Automated Machine Learning, New York City, NY, USA, 8–11 September 2025; Proceedings of Machine Learning Research; PMLR: Cambridge, MA, USA, 2025; Volume 293.

- Moss, H.; Picheny, V.; Stojic, H.; Ober, S.W.; Artemev, A.; Paleyes, A.; Vakili, S.; Markou, S.; Qing, J.; Loka, N.R.B.S.; et al. Trieste: Efficiently Exploring The Depths of Black-box Functions with TensorFlow. In Proceedings of the NeurIPS 2024 Workshop on Bayesian Decision-making and Uncertainty, Vancouver, BC, Canada, 14 December 2024.

- Paleyes, A.; Mahsereci, M.; Lawrence, N. Emukit: A Python Toolkit for Decision Making under Uncertainty. In Proceedings of the 22nd Python in Science Conference, SciPy, Austin, TX, USA, 10–16 July 2023; pp. 68–75.

- Akiba, T.; Sano, S.; Yanase, T.; Ohta, T.; Koyama, M. Optuna: A Next-generation Hyperparameter Optimization Framework. In Proceedings of the 25th ACM SIGKDD International Conference on Knowledge Discovery & Data Mining, Anchorage, AK, USA, 4–8 August 2019; pp. 2623–2631.

- Danka, T.; Horvath, P. modAL: A Modular Active Learning Framework for Python. arXiv 2018, arXiv:1805.00979.

- Kandasamy, K.; Vysyaraju, K.R.; Neiswanger, W.; Paria, B.; Collins, C.R.; Schneider, J.; Poczos, B.; Xing, E.P. Tuning Hyperparameters without Grad Students: Scalable and Robust Bayesian Optimisation with Dragonfly. J. Mach. Learn. Res. 2020, 21, 1–27.

- Gramacy, R.B. tgp: An R Package for Bayesian Nonstationary, Semiparametric Nonlinear Regression and Design by Treed Gaussian Process Models. J. Stat. Softw. 2007, 19, 1–46.

- Gramacy, R.B. laGP: Large-Scale Spatial Modeling via Local Approximate Gaussian Processes in R. J. Stat. Softw. 2016, 72, 1–46.

- Binois, M.; Gramacy, R.B. hetGP: Heteroskedastic Gaussian Process Modeling and Sequential Design in R. J. Stat. Softw. 2021, 98, 1–44.

- Roustant, O.; Ginsbourger, D.; Deville, Y. DiceKriging, DiceOptim: Two R Packages for the Analysis of Computer Experiments by Kriging-Based Metamodeling and Optimization. J. Stat. Softw. 2012, 51, 1–55.

- Binois, M.; Picheny, V. GPareto: An R Package for Gaussian-Process-Based Multi-Objective Optimization and Analysis. J. Stat. Softw. 2019, 89, 1–30.

| Category | Method | Characteristics | Limitations | References |
|---|---|---|---|---|
| Standard | GP with ARD | Anisotropic input weighting, implicit feature selection | Stationary kernel | [10] |
| Non-Stationary | Non-stationary kernel | Location-dependent correlations | Computational cost in high dimensions | [39,40] |
| Non-Stationary | TGP | Bayesian tree partitions, local experts | Tree growth complexity | [41] |
| Non-Stationary | GPRN | Non-stationary correlations and noise, multi-output | Complex inference, tuning | [43] |
| Non-Stationary | Deep GP | Hierarchical layers, compositional learning | Nested inference, scalability | [44,45] |
| Non-Stationary | Deep kernel learning | Neural input transformation | DNN structure selection, low interpretability | [46] |
| Non-Stationary | Non-stationary and heteroscedastic GP | Non-stationary correlations and noise | Computational cost with HMC | [47] |
| Non-Stationary | Non-stationary spectral kernel | Input-dependent spectral density, non-stationary and non-monotonic covariances | Model complexity, initialization sensitivity | [48] |
| Non-Stationary | DGCN | DNN hyperparameter estimation | Low interpretability, DNN structure selection | [49] |
| Non-Stationary | JGP | Local partitioning, handles discontinuities | Partition learning overhead | [51] |
| Non-Stationary | HHK-GP | Hyperplane-based local experts | Complex joint optimization | [13] |
| Non-Stationary | Attentive Kernel GP | Input-dependent attention mixes fixed-scale base kernels, masks cross-region correlations | Model complexity, choice of primitive scales and network tuning | [53] |
| Non-Stationary | PCEGP | PCE hyperparameter estimation, interpretable | PCE basis selection, scalability in high dimensions | [28,54] |
| Non-Stationary | DJGP | Region-specific locally linear projection layers for high-dimensional piecewise continuous modeling | Increased model complexity and inference effort | [52] |
| Sparse | FITC/VFE | Approximation schemes, flexible | Bias, sensitive tuning | [56] |
| Sparse | GPz | Sparse model and heteroscedastic noise | Domain-specific tuning | [58] |
| Sparse | VSGP | Variational inference, scalable posterior sampling | Inducing point selection critical | [55] |
| Sparse | Online sparse BO | Adaptive inducing point updates | Sensitivity to initialization | [57] |
| Sparse | MedGP | Multi-output, temporal dynamics | Limited to time series | [59] |
| Sparse | Sparse Additive GP | Hierarchical, additive modeling | Partitioning choices | [60] |
| Sparse | Transfer sparse GP | Transfer learning, inducing point selection algorithm | Limited to homogeneous domains | [62] |
| Dynamic | State-space GP | Dynamic system modeling, latent states | One GP for each state needed | [29,64,65] |
| Dynamic | NARX-GP | Non-linear autoregression, feedback | High model complexity | [29,64] |
| Dynamic | Cautious GP-MPC | Uncertainty-aware MPC, sparse GP | Limited to one application | [63] |
| Dynamic | Digital twin GP | Real-time emulation, adaptive control | Sensitive to data quality | [66] |
| Multi-Output | Treed MOGP | Adaptive partitions, local surrogates | Hyperparameter optimization of tree | [67] |
| Multi-Output | Multi-task GP | Individualized measures of confidence, causal inference as a multi-task learning problem | Limited to medical use case | [68] |
| Multi-Output | Spectral kernel MOGP | Parametric family of complex-valued cross-spectral densities | Spectral kernel tuning required | [69] |
| Multi-Output | Hetero MOGP | Output-specific likelihoods | Scalability limits | [30] |
| Multi-Output | Sparse MOGP | Multi-output, temporal and cross-variable structure | Output alignment required | [59] |
| Multi-Output | MOGP with adaptive sampling | Task allocation, data efficiency | Assumes task similarity | [71] |
| Local | TGP | Bayesian tree partitions, local experts | Tree growth complexity | [41] |
| Local | Local GP approx. | Region-wise fitting, parallelizable | Needs coordination across regions | [72] |
| Local | Patching GPs | Spatial patches, smooth boundaries | Edge inconsistency | [73] |
| Local | laGPR | Integration in non-linear finite element setting | Scalability with data set size | [74] |
| Local | HHK-GP | Learned hyperplane partitions | Joint optimization | [13] |
| Vecchia Approx. | Vecchia GP | Linear-time, spatial factorization | Global consistency loss | [76,77] |
| Vecchia Approx. | BO with Vecchia | Mini-batch, scalable BO | Approximation artifacts | [78] |
| Fast Inference | LOVE approx. | Fast variance estimation | Variance approximation error | [79] |
| Further Methods | PCE-Kriging | Global trend modeling with PCE, local variability captured by GP | High model complexity | [80] |
| Further Methods | GPBoost | Gradient boosting, mixed effects | Optimization of tree and boosting needed | [81] |
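
The "GP with ARD" row of the table above can be illustrated with a minimal sketch of a squared-exponential kernel that has one lengthscale per input dimension. The function name `ard_se_kernel` and the lengthscale values are hypothetical and chosen only for illustration; in practice the lengthscales would be learned from data, typically by maximizing the marginal likelihood.

```python
import math


def ard_se_kernel(x, y, lengthscales, signal_var=1.0):
    """Squared-exponential kernel with one lengthscale per input dimension (ARD)."""
    # Each coordinate difference is scaled by its own lengthscale before squaring.
    sq_dist = sum(((a - b) / l) ** 2 for a, b, l in zip(x, y, lengthscales))
    return signal_var * math.exp(-0.5 * sq_dist)


# Hypothetical lengthscales: dimension 0 is relevant, dimension 1 is nearly ignored.
lengthscales = [0.5, 100.0]
x = [0.0, 0.0]

# A unit step along the relevant dimension decorrelates the two points strongly.
k_relevant = ard_se_kernel(x, [1.0, 0.0], lengthscales)
# The same step along the dimension with a very large lengthscale barely matters.
k_irrelevant = ard_se_kernel(x, [0.0, 1.0], lengthscales)

# Stationarity: shifting both inputs by the same offset leaves the kernel unchanged.
k_shifted = ard_se_kernel([3.0, 3.0], [4.0, 3.0], lengthscales)

print(k_relevant, k_irrelevant, k_shifted)
```

A very large lengthscale effectively switches a dimension off, which is the implicit feature selection noted in the table. Because the kernel depends only on the difference between the two inputs, it remains stationary, which is the limitation listed for this row.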

| Category | Method | Characteristics | Limitations | References |

|---|---|---|---|---|

| Initial Design | Random Sampling | Simple, flexible, baseline | Clustering, poor space-filling | [90,91] |

| Initial Design | Latin hypercube sampling (LHS) | Stratified, well-distributed projections | Axis-aligned bias, lacks optimality guarantees | [92,93] |

| Initial Design | Sobol sequence | Low-discrepancy, quasi-random, excellent space-filling | Axis bias for small n, deterministic | [91,94] |

| Initial Design | Maximin distance | Maximizes minimum distance, uniform dispersion | Computationally expensive optimization | [95,96] |

| Initial Design | Minimax distance | Guarantees uniform global coverage | Expensive in high dimensions | [97] |

| Initial Design | Grid/full factorial design | Exhaustive coverage of factor combinations | Exponential growth with dimension | [90,98] |

| Active Learning | Active Learning MacKay (ALM) | Maximizes information gain, theoretical grounding | Sensitive to noise, boundary bias | [3,99] |

| Active Learning | Fisher Information (FI) | Targets hyperparameter-sensitive regions | Focuses on gradient regions, neglects flat areas | [72] |

| Active Learning | Bayesian Active Learning by Disagreement (BALD) | Explores poorly understood regions | Requires posterior sampling, sensitive to surrogate quality | [9] |

| Active Learning | Active Learning Cohn (ALC) | Minimizes global predictive variance | High computational cost (global integral) | [100,101] |

| Active Learning | Bayesian Query-by-Commitee (B-QBC) | Posterior-based model disagreement | GP predictive uncertainty not considered | [11] |

| Active Learning | Query by Mixture of Gaussian Processes (QB-MGP) | Mixture of GP models, combines disagreement and variance | No explicit balance of epistemic vs. aleatoric uncertainty | [11] |

| Active Learning | Residual Active Learning (RSAL) | Ranks candidates by residual error, focuses mispredictions | Requires reference labels, sensitive to noise | e.g., [102] |

| Active Learning | Euclidean Distance-Based Diversity (EBD) | Adds farthest points in feature space, improves coverage | Ignores model uncertainty, boundary bias | e.g., [102] |

| Active Learning | IMSE | Minimizes integrated posterior variance, global coverage | High computational cost (domain integral) | [3] |

| Active Learning | SBAL | Targets sensitive and uncertain dimensions, fast convergence | Requires sensitivity analysis | [104] |

| Bayesian Optimization | Expected Improvement (EI) | Balances exploration/exploitation | Tends to over-exploit if not tuned | [4] |

| Bayesian Optimization | Probability of Improvement (PI) | Simple, efficient | Exploitative, sensitive to its trade-off parameter | [4] |

| Bayesian Optimization | Upper/Lower Confidence Bound (UCB, LCB) | Theoretically founded, parameter-controlled trade-off | Choice of the trade-off parameter is critical | [4] |

| Bayesian Optimization | Thompson Sampling (TS) | Posterior sampling, balances exploration/exploitation | Needs many samples for multi-modal functions | [2] |

| Bayesian Optimization | Knowledge Gradient (KG) | Explicit value of information, anticipates model improvement | Computationally intensive | [2] |

| Bayesian Optimization | Entropy Search (ES)/PES | Reduces uncertainty on global optimum location | High computational cost, entropy estimation required | [2] |

| Bayesian Optimization | Batch BO (qEI, qUCB, BatchBALD) | Enables parallel experiments, batch diversity | Interaction effects in batch optimization | [2,9,111] |

| Bayesian Optimization | Multi-Objective BO (MOBO) | Pareto optimization, multiple objectives | Scalability with objectives, complex trade-offs | [2] |
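
The three closed-form acquisition functions listed above (EI, PI, and UCB) can be illustrated with a minimal sketch. It assumes a maximization problem and that a GP surrogate has already supplied the posterior mean `mu` and standard deviation `sigma` at a set of candidate points. The function names, the improvement margin `xi`, and the exploration weight `kappa` are illustrative choices rather than notation taken from this review, and other conventions (for example, minimization or a differently scaled confidence parameter) are equally common.

```python
import numpy as np
from scipy.stats import norm


def expected_improvement(mu, sigma, f_best, xi=0.0):
    """EI for maximization under a Gaussian posterior N(mu, sigma^2).

    `xi` is an assumed improvement margin; larger values favor exploration.
    """
    mu = np.asarray(mu, dtype=float)
    sigma = np.asarray(sigma, dtype=float)
    improvement = mu - f_best - xi
    # Avoid division by zero where the posterior is deterministic.
    safe_sigma = np.where(sigma > 0, sigma, 1.0)
    z = improvement / safe_sigma
    ei = improvement * norm.cdf(z) + sigma * norm.pdf(z)
    # With zero predictive std the improvement is known exactly.
    return np.where(sigma > 0, ei, np.maximum(improvement, 0.0))


def probability_of_improvement(mu, sigma, f_best, xi=0.0):
    """Probability that a candidate exceeds f_best + xi."""
    mu = np.asarray(mu, dtype=float)
    sigma = np.asarray(sigma, dtype=float)
    improvement = mu - f_best - xi
    safe_sigma = np.where(sigma > 0, sigma, 1.0)
    pi = norm.cdf(improvement / safe_sigma)
    return np.where(sigma > 0, pi, (improvement > 0).astype(float))


def upper_confidence_bound(mu, sigma, kappa=2.0):
    """UCB for maximization; `kappa` is an assumed exploration weight."""
    return np.asarray(mu, dtype=float) + kappa * np.asarray(sigma, dtype=float)


# Hypothetical posterior at three candidate points.
mu = np.array([0.0, 0.5, 1.0])
sigma = np.array([1.0, 0.5, 0.0])
f_best = 0.8  # best objective value observed so far (assumed)

ei = expected_improvement(mu, sigma, f_best)
pi = probability_of_improvement(mu, sigma, f_best)
ucb = upper_confidence_bound(mu, sigma)
next_index = int(np.argmax(ei))  # candidate selected by EI
```

In this sketch, raising `xi` or `kappa` shifts the selection toward uncertain candidates, which reflects the parameter sensitivity noted for PI and UCB in the table.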

| Database | Link | Query | Total | Relevant | Included | Excluded |

|---|---|---|---|---|---|---|

| Scopus | https://www.scopus.com/search/form.uri?display=advanced (accessed on 23 March 2026) | TITLE-ABS-KEY((("gaussian process*" or kriging) and ("active learning" or "adaptive learning" or "adaptive sampling" or "sequential design" or "bayesian optimi*" or "efficient global optimi*" or "safe exploration" or "information gathering")) and PUBYEAR > 2003 and PUBYEAR < 2026) | 3333 | 30 | 30 | 3303 |

| Web of Science | https://www.webofscience.com/wos/woscc/advanced-search (accessed on 23 March 2026) | TS=((("gaussian process*" or kriging) and ("active learning" or "adaptive learning" or "adaptive sampling" or "sequential design" or "bayesian optimi*" or "efficient global optimi*" or "safe exploration" or "information gathering"))) and PY=(2004-2025) | 2422 | 24 | 0 | 2422 |

| Fused result | 30 | | | | | |

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Polke, D.; Ahle, E.; Söffker, D. Adaptive Learning with Gaussian Process Regression: A Comprehensive Review of Methods and Applications. Mach. Learn. Knowl. Extr. 2026, 8, 101. https://doi.org/10.3390/make8040101
