DeepHits: A Multimodal CNN Approach to Hit Song Prediction

Abstract

1. Introduction

- Multimodal Integration: We integrate heterogeneous data sources—low-level and high-level audio features, song lyrics, and metadata (e.g., artist popularity)—into a single deep learning model for hit prediction.

- Spectrogram-Based CNN: Convolutional neural networks (CNNs) have already been applied in hit song science (HSS) [6,12], but mostly in audio-only pipelines that do not integrate other modalities. We apply 2D CNNs to the log-Mel spectrograms of the audio signal to capture fine-grained temporal and frequency patterns that manually engineered features tend to miss, and we combine the resulting audio embeddings with lyrical and metadata inputs to obtain a richer, multimodal representation of each track. Several recent studies combine audio, lyrics, and metadata [9,10,11], but they do not apply advanced 2D CNN architectures to spectrograms. Our contribution is therefore to integrate spectrogram-based 2D CNNs with textual and contextual features in a single predictive framework.

| Study | Method Type | Modalities | Dataset | Results | Benchmark |

|---|---|---|---|---|---|

| Dhanaraj and Logan (2005) [4] | SVM and boosting classifiers | Audio (MFCCs) and lyrics (topics) | Hit vs. non-hit songs (Billboard charts) | Some predictive power, but limited by simple features (accuracy modestly above chance) | - |

| Pachet and Roy (2008) [2] | Feature-based classification | Audio (proprietary descriptors) | 32,000 songs (hits vs. others) | Inconclusive results; no robust hit prediction achieved (HSP “not yet a science”) | - |

| Yang et al. (2017) [6] | Deep CNN on spectrograms | Audio only | Western and Chinese pop hits (streaming play-count data) | Deep model outperformed shallow models in popularity prediction (higher accuracy than MLP/SVM) | - |

| Oramas et al. (2017) [8] | Deep multimodal pipeline: text-FFN + audio-CNN => late-fusion MLP | Audio (CQT) and artist biography text | MSD-A (328,000 tracks/24,000 artists, Million Song Dataset + metadata) | Cold-start song recommendation: MAP@500 = 0.0036 | - |

| Delbouys et al. (2018) [13] | CNN (audio) and LSTM (lyrics); tested early fusion vs. late fusion | Audio and lyrics | 18,644 songs (Deezer Mood Detection Dataset) | Arousal: R² 0.235 (audio deep > classical); valence: mid-fusion R² 0.219 > unimodal (best fusion) | - |

| Zangerle et al. (2019) [9] | Wide and deep neural network (dense features and deep audio net) | Audio (MFCCs, high-level features) and metadata (release year) | Million Song Dataset + Billboard Hot 100 labels (11,000 songs) | Acc 75%; fusion > low- or high-level alone | ✓ |

| Martín-Gutiérrez et al. (2020) [11] | Deep autoencoder and fully connected DNN | Audio and lyrics and metadata | SpotGenTrack Popularity Dataset, 101,939 tracks scraped from Spotify + Genius | ~83% accuracy in three-class popularity classification (outperforms prior models by integrating all modalities) | ✓ |

| Vavaroutsos and Vikatos (2023) [10] | Deep multimodal net and triplet loss (metric learning); CNN handles low-level audio | Audio, lyrics, and metadata (year) | Hit Song Prediction Dataset + Genius lyrics (11,634 songs, balanced) | ~80% accuracy for hit vs. non-hit prediction—about 8% higher than previous audio-only baseline (demonstrates lyric and temporal features boost performance) | ✓ |

| Choi et al. (2017) [7] | Convolutional Recurrent Neural Network (CRNN) | Audio (mel-spectrogram) | Million Song Dataset (MSD), tagwise AUC evaluation | AUC 0.8950 (best CRNN model) | - |

2. Materials and Methods

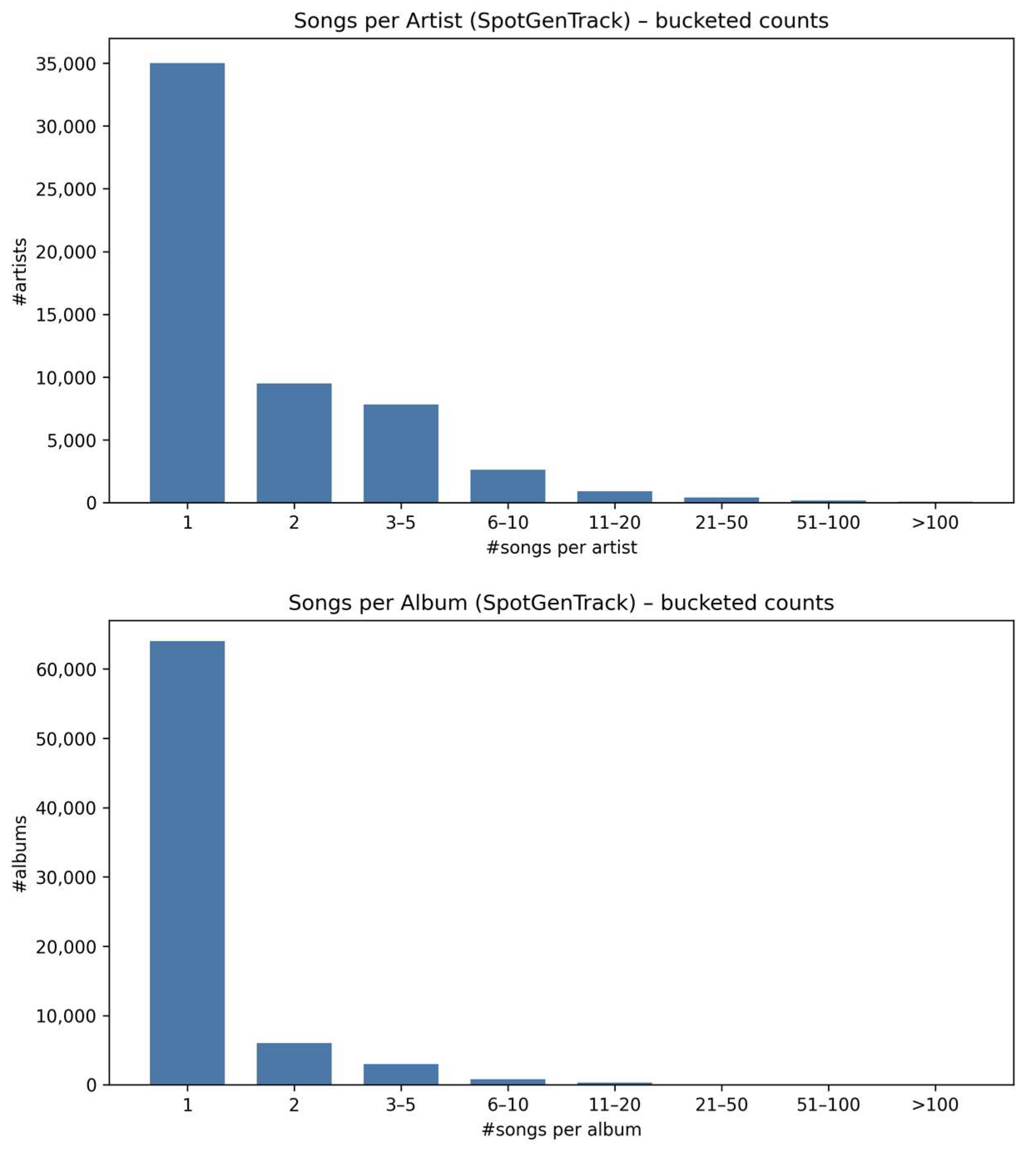

2.1. Dataset

- High-Level Audio Descriptors (Spotify Audio Features)

- Low-Level Audio (Log-Mel Spectrograms)

- High-level audio descriptors derived with the Essentia library (Spotify-style)

- Missing-descriptor sensitivity (speechiness, liveness)

- Lyric Embeddings

- Metadata Features

- Data Preprocessing

2.2. Implementation

2.2.1. Model Architecture

- Low-Level Audio Features (log-Mel spectrograms)

- Lyric Embeddings (Multilingual BERT)

- High-Level Numeric Features and Metadata

- Feature Fusion and Output Layer

2.2.2. Training Configuration

2.2.3. Evaluation Metrics and Analysis

- Regression Models

- Classification Models

- Regularization and overfitting control

3. Results

3.1. Regression vs. Classification

- Data split and stratification

- Model selection

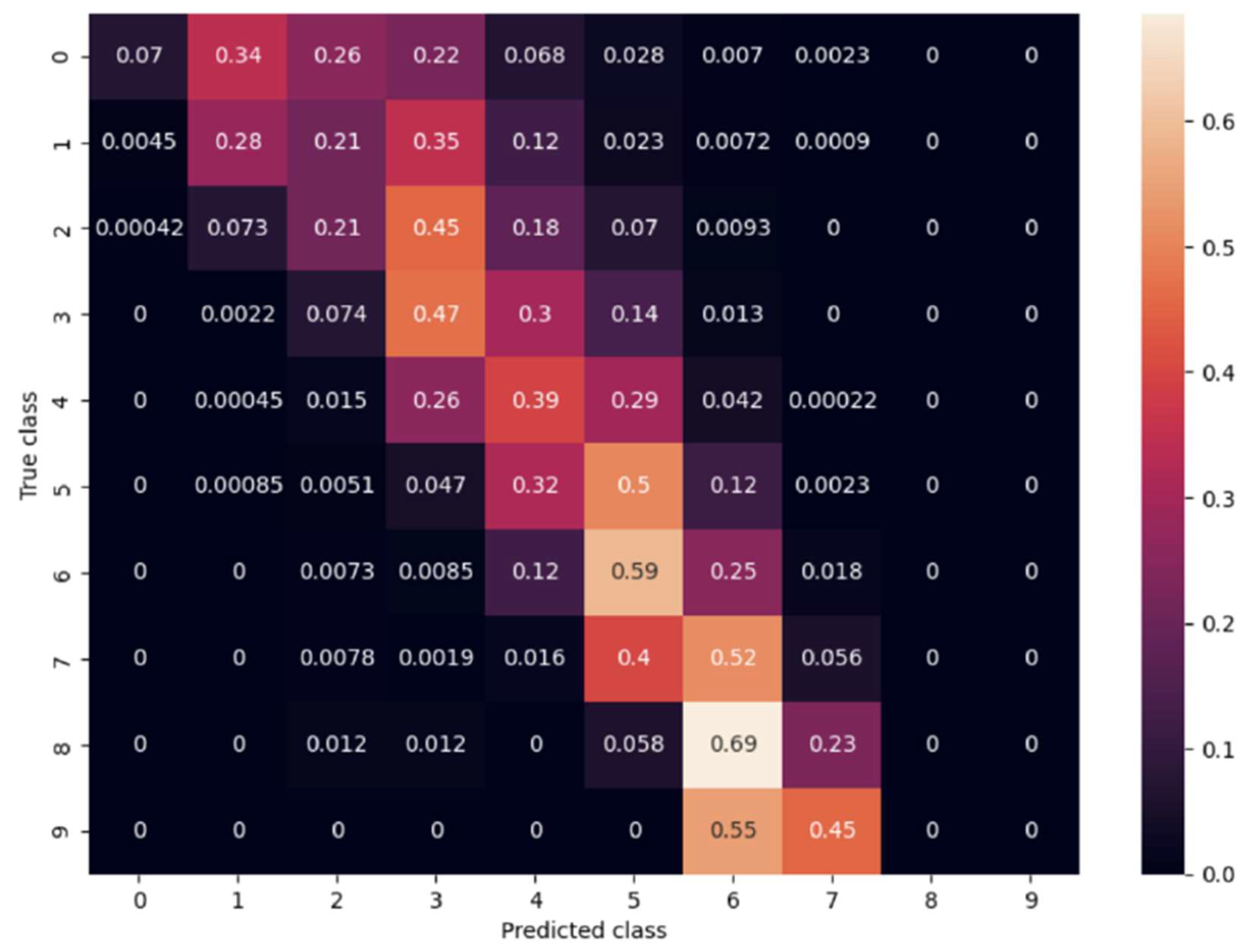

3.2. Number of Classes for Classification

3.3. Empirical Findings

3.3.1. Performance Comparison of Regression and Classification Models

- Capacity-controlled shallow baselines

| Feature Set | Macro-F1 (%) | Macro-Recall (%) | Macro-Precision (%) | Accuracy (%) | Within ±1 tier (%) | Tier MAE (↓) | ROC-AUC (Macro, %) | PR-AUC (Macro, %) |

|---|---|---|---|---|---|---|---|---|

| Audio-only | 8.99 | 11.96 | 13.53 | 24.48 | 64.45 | 1.265 | 63.60 | 13.00 |

| Metadata-only | 20.92 | 20.50 | 34.00 | 34.22 | 80.74 | 0.912 | 80.60 | 24.80 |

| Early fusion (audio+meta) | 22.96 | 22.07 | 33.20 | 34.47 | 80.79 | 0.901 | 81.10 | 25.20 |

| Late fusion (weighted class-score vectors) | 22.21 | 21.47 | 34.52 | 34.23 | 80.73 | 0.913 | 80.70 | 24.90 |

| Early fusion (class_weight = balanced) | 20.13 | 30.03 | 17.79 | 23.82 | 63.03 | 1.318 | 79.40 | 19.00 |
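
The column headings of the table above can be made concrete with a small sketch. It assumes the usual definitions: macro scores are unweighted means of per-class precision, recall, and F1; "Within ±1 tier" is the share of predictions at most one tier away from the true tier; and "Tier MAE" is the mean absolute tier difference. The function names `macro_metrics` and `ordinal_metrics` are illustrative, and scoring an undefined precision or recall as 0 is an assumption rather than something the paper states.

```python
def macro_metrics(y_true, y_pred, n_classes):
    """Unweighted mean of per-class precision, recall, and F1."""
    precisions, recalls, f1s = [], [], []
    pairs = list(zip(y_true, y_pred))
    for c in range(n_classes):
        tp = sum(1 for t, p in pairs if t == c and p == c)
        fp = sum(1 for t, p in pairs if t != c and p == c)
        fn = sum(1 for t, p in pairs if t == c and p != c)
        # Assumption: undefined ratios (empty denominator) are scored as 0.
        prec = tp / (tp + fp) if tp + fp else 0.0
        rec = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * prec * rec / (prec + rec) if prec + rec else 0.0
        precisions.append(prec)
        recalls.append(rec)
        f1s.append(f1)
    return {
        "macro_precision": sum(precisions) / n_classes,
        "macro_recall": sum(recalls) / n_classes,
        "macro_f1": sum(f1s) / n_classes,
    }


def ordinal_metrics(y_true, y_pred):
    """Accuracy, within-one-tier rate, and mean absolute tier error."""
    errors = [abs(t - p) for t, p in zip(y_true, y_pred)]
    n = len(errors)
    return {
        "accuracy": sum(1 for e in errors if e == 0) / n,
        "within_1": sum(1 for e in errors if e <= 1) / n,
        "tier_mae": sum(errors) / n,
    }


if __name__ == "__main__":
    truth = [0, 1, 2, 2]
    preds = [0, 2, 2, 1]
    print(macro_metrics(truth, preds, n_classes=3))
    print(ordinal_metrics(truth, preds))
```

Under these definitions macro-F1 is averaged per class, so it need not equal the harmonic mean of the macro-precision and macro-recall columns.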

- Imbalance-mitigation control

- Three-Class Regression Model

- Ten-Class Regression Model

- Three-Class Classification Model

- Ten-Class Classification Model

- Benchmarking against simple baselines

- Model-to-model variance

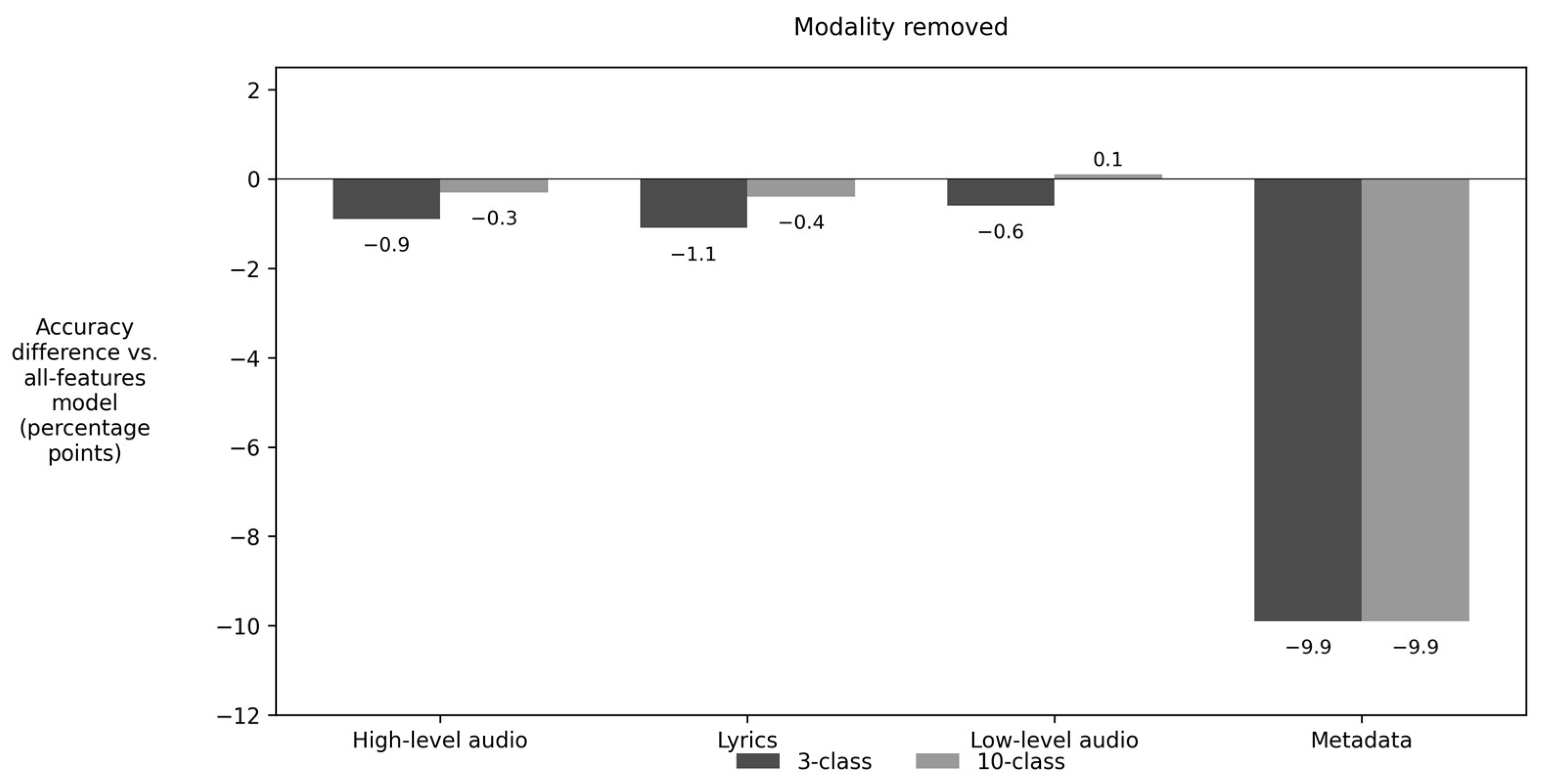

3.3.2. Ablation Study

4. Discussion

5. Conclusions

5.1. Summary

5.2. Limitations

5.3. Future Work

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Appendix A

| Number | Layer (Branch) | Kernel/Units | Stride | Padding | Output Shape * | Number of Parameters |

|---|---|---|---|---|---|---|

| Low-level-Audio-CNN (Mel-Spec 1 × 128 × 1292) | ||||||

| 1 | Conv2d 1→8 | 5 × 5 | 2 | 2 | 8 × 64 × 646 | 208 |

| 2 | BN + ReLU | – | – | – | 8 × 64 × 646 | 16 |

| Residual Block 1 | ||||||

| 3 | Conv2d 8→16 | 3 × 3 | 2 | 1 | 16 × 32 × 323 | 1152 |

| 4 | BN + ReLU | – | – | – | 16 × 32 × 323 | 32 |

| 5 | Conv2d 16→16 | 3 × 3 | 1 | 1 | 16 × 32 × 323 | 2304 |

| 6 | BN | – | – | – | 16 × 32 × 323 | 32 |

| 7 | Shortcut 1 × 1 Conv 8→16 | 1 × 1 | 2 | 0 | 16 × 32 × 323 | 128 |

| 8 | Shortcut BN | – | – | – | 16 × 32 × 323 | 32 |

| Residual Block 2 | ||||||

| 9 | Conv2d 16→32 | 3 × 3 | 2 | 1 | 32 × 16 × 162 | 4608 |

| 10 | BN + ReLU | – | – | – | 32 × 16 × 162 | 64 |

| 11 | Conv2d 32→32 | 3 × 3 | 1 | 1 | 32 × 16 × 162 | 9216 |

| 12 | BN | – | – | – | 32 × 16 × 162 | 64 |

| 13 | Shortcut 1 × 1 Conv 16→32 | 1 × 1 | 2 | 0 | 32 × 16 × 162 | 512 |

| 14 | Shortcut BN | – | – | – | 32 × 16 × 162 | 64 |

| Residual Block 3 | ||||||

| 15 | Conv2d 32→64 | 3 × 3 | 2 | 1 | 64 × 8 × 81 | 18,432 |

| 16 | BN + ReLU | – | – | – | 64 × 8 × 81 | 128 |

| 17 | Conv2d 64→64 | 3 × 3 | 1 | 1 | 64 × 8 × 81 | 36,864 |

| 18 | BN | – | – | – | 64 × 8 × 81 | 128 |

| 19 | Shortcut 1 × 1 Conv 32→64 | 1 × 1 | 2 | 0 | 64 × 8 × 81 | 2048 |

| 20 | Shortcut BN | – | – | – | 64 × 8 × 81 | 128 |

| 21 | AdaptiveAvgPool2d | – | – | – | 64 × 1 × 1 | – |

| 22 | FC 64→128 | – | – | – | 128 | 8320 |

| 23 | ReLU | – | – | – | 128 | – |

| 24 | FC 128→64 | – | – | – | 64 | 8256 |

| High-level + Metadata Branch (26-D) | ||||||

| 25 | FC 26→64 | – | – | – | 64 | 1728 |

| 26 | ReLU | – | – | – | 64 | – |

| 27 | FC 64→64 | – | – | – | 64 | 4160 |

| Lyrics-Branch (BERT CLS 768) | ||||||

| 28 | FC 768→256 | – | – | – | 256 | 196,864 |

| 29 | ReLU | – | – | – | 256 | – |

| 30 | FC 256→256 | – | – | – | 256 | 65,792 |

| Fusion and Prediction Head (64 + 64 + 256 = 384) | ||||||

| 31 | Dropout (p = 0.5) | – | – | – | 384 | – |

| 32 | FC 384→256 | – | – | – | 256 | 98,560 |

| 33 | FC 256→64 | – | – | – | 64 | 16,448 |

| 34 | Output layer (3-Class) | 64→3 | – | – | 3 | 195 |
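
The output shapes and parameter counts listed above can be cross-checked with the standard convolution arithmetic. The following is a minimal sketch, not the authors' code: the helper names are illustrative, and the split between convolutions with and without bias is inferred from the listed counts (208 for the stem matches a biased 5 × 5 convolution, while the residual-block counts match bias-free convolutions).

```python
def conv_out(size, kernel, stride, padding):
    """Output length of one spatial dimension after a convolution."""
    return (size + 2 * padding - kernel) // stride + 1


def conv_params(c_in, c_out, kernel, bias):
    """Weights (plus optional bias) of a square 2D convolution."""
    return c_in * c_out * kernel * kernel + (c_out if bias else 0)


def linear_params(n_in, n_out):
    """Weights plus bias of a fully connected layer."""
    return n_in * n_out + n_out


def trace_audio_branch(height=128, width=1292):
    """Feature-map shapes after the stem and each residual block."""
    h = conv_out(height, 5, 2, 2)
    w = conv_out(width, 5, 2, 2)
    shapes = [(8, h, w)]  # stem: Conv2d 1->8, 5x5, stride 2, padding 2
    for channels in (16, 32, 64):
        # First conv of each residual block: 3x3, stride 2, padding 1.
        h = conv_out(h, 3, 2, 1)
        w = conv_out(w, 3, 2, 1)
        shapes.append((channels, h, w))
    return shapes


if __name__ == "__main__":
    print(trace_audio_branch())
    print(conv_params(1, 8, 5, bias=True))     # stem convolution
    print(conv_params(8, 16, 3, bias=False))   # first residual convolution
    print(linear_params(64 + 64 + 256, 256))   # first fusion layer
```

The 1 × 1 shortcut convolutions (stride 2, padding 0) halve the feature maps in the same way, which is what allows the residual addition at every block.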

| Model | Macro-Precision (%) | Macro-Recall (%) | Macro-F1-Score (%) | Accuracy (%) |

|---|---|---|---|---|

| Without high-level audio descriptors (Spotify features) | 74.05 | 48.21 | 52.58 | 82.51 |

| Without lyric embeddings (BERT) | 75.25 | 47.79 | 50.79 | 82.26 |

| Without low-level audio (Mel-spectrogram CNN) | 77.37 | 47.51 | 50.83 | 82.54 |

| Without metadata (artist popularity, release year) | 50.09 | 35.17 | 33.06 | 79.07 |

| All features | 74.79 | 47.86 | 52.20 | 82.63 |

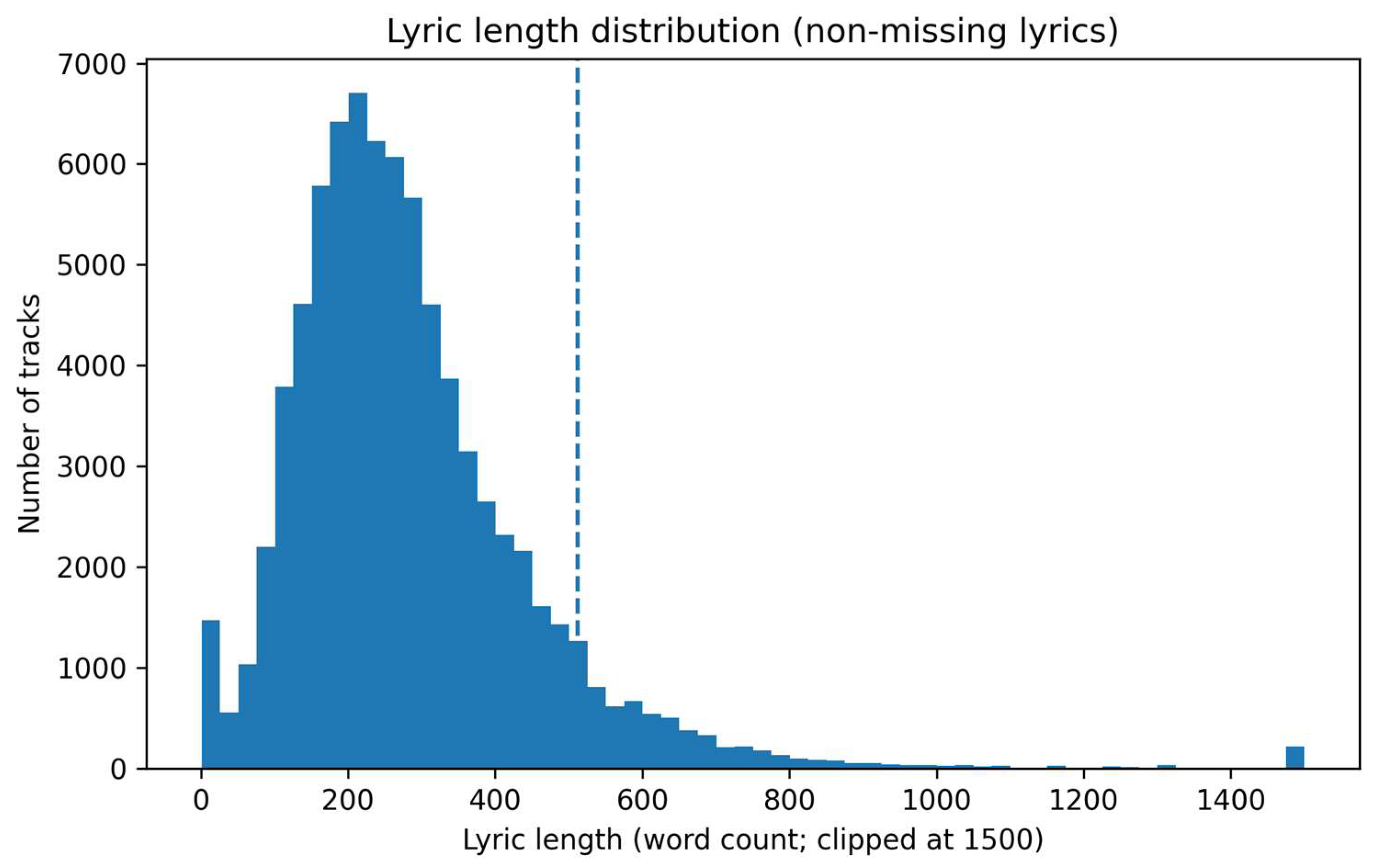

| Statistic | Value |

|---|---|

| Tracks after filtering (speechiness ≤ 0.66 and 30 s ≤ duration ≤ 7 min) | 92,517 |

| Tracks with missing lyrics (placeholder) | 13,620 |

| Missing lyrics (%) | 14.68 |

| Median lyric length (words; non-missing) | 254 |

| 95th percentile lyric length (words; non-missing) | 583 |

| 99th percentile lyric length (words; non-missing) | 841 |

| Lyrics exceeding 512 words (%) (word-count proxy; non-missing) | 7.81 |

| Lyrics exceeding 512 words (%) in the top two tiers (8–9; word-count proxy; non-missing) | 19.83 |
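
Statistics of this kind can be computed along the following lines. This is a sketch under assumptions: missing lyrics are represented here as `None` or empty strings (the paper only says a placeholder is used), words are split on whitespace, and `lyric_length_stats` is an illustrative name. Whitespace words are only a proxy for the model's input length, because BERT operates on WordPiece sub-tokens and one word can expand into several of them.

```python
import statistics


def lyric_length_stats(lyrics, limit=512):
    """Word-count statistics over non-missing lyrics."""
    # Assumption: None or blank strings mark tracks without lyrics.
    counts = [len(text.split()) for text in lyrics if text and text.strip()]
    n_missing = len(lyrics) - len(counts)
    if not counts:
        return {"n_non_missing": 0, "n_missing": n_missing,
                "median_words": None, "pct_over_limit": None}
    over = sum(1 for n in counts if n > limit)
    return {
        "n_non_missing": len(counts),
        "n_missing": n_missing,
        "median_words": statistics.median(counts),
        "pct_over_limit": 100.0 * over / len(counts),
    }


if __name__ == "__main__":
    toy = ["a b c", "", None, "w " * 600, "x y"]
    print(lyric_length_stats(toy))
```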

| Model | Macro-F1 (%) | Macro-Recall (%) | Macro-Precision (%) | Accuracy (%) | Within ±1 Tier (%) | Tier MAE (↓) |

|---|---|---|---|---|---|---|

| Audio-only | 7.30 ± 0.21 | 10.67 ± 0.32 | 9.17 ± 0.25 | 24.11 ± 0.36 | 63.45 ± 0.67 | 1.351 ± 0.009 |

| Metadata-only | 22.21 ± 0.72 | 21.10 ± 0.69 | 34.45 ± 0.56 | 34.16 ± 0.31 | 79.74 ± 0.40 | 0.927 ± 0.006 |

| Early fusion (audio+meta) | 23.46 ± 0.44 | 21.83 ± 0.38 | 30.68 ± 0.37 | 35.03 ± 0.32 | 82.33 ± 0.42 | 0.864 ± 0.007 |

| Late fusion (w_meta = 0.9) | 22.30 ± 0.65 | 20.95 ± 0.63 | 32.50 ± 0.50 | 35.12 ± 0.37 | 80.05 ± 0.47 | 0.917 ± 0.007 |
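
The late-fusion row can be illustrated with a short sketch. It assumes that the fused score is a convex combination of the two per-class score vectors, with the audio weight set to 1 − w_meta; the paper states only that class-score vectors are weighted and that w_meta = 0.9, so the exact rule and the function names are assumptions.

```python
def late_fusion(p_audio, p_meta, w_meta=0.9):
    """Weighted average of two class-score vectors of equal length."""
    if len(p_audio) != len(p_meta):
        raise ValueError("score vectors must have the same number of classes")
    w_audio = 1.0 - w_meta  # assumption: the two weights sum to one
    return [w_audio * a + w_meta * m for a, m in zip(p_audio, p_meta)]


def predict(scores):
    """Index of the highest fused score."""
    return max(range(len(scores)), key=scores.__getitem__)


if __name__ == "__main__":
    audio_scores = [0.7, 0.2, 0.1]  # toy output of an audio-only model
    meta_scores = [0.2, 0.5, 0.3]   # toy output of a metadata-only model
    fused = late_fusion(audio_scores, meta_scores)
    print(fused, predict(fused))
```

With a weight of 0.9 on the metadata model, the fused prediction follows the metadata scores unless the audio model disagrees strongly, which is consistent with the late-fusion row staying close to the metadata-only row.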

| Step | Filter | Removed n | Removed % | Remaining n |

|---|---|---|---|---|

| Raw dataset | - | 0 | 0.00 | 101,939 |

| (1) Speech filter | speechiness > 0.66 | 5362 | 5.26 | 96,577 |

| (2) Long-track filter | duration_ms > 420,000 (7 min) | 3792 | 3.72 | 92,785 |

| (3) Short-track filter | duration_ms < 30,000 (30 s) | 268 | 0.26 | 92,517 |

| Genre | Count | Share of Tracks (%) |

|---|---|---|

| Radio play | 1218 | 22.7 |

| Guidance | 270 | 5.0 |

| Children’s audio stories | 161 | 3.0 |

| Drama | 159 | 3.0 |

| Poetry | 138 | 2.6 |

| Comedy | 126 | 2.3 |

| Comic | 104 | 1.9 |

| Reading | 100 | 1.9 |

| Children’s music | 91 | 1.7 |

| German poetry | 72 | 1.3 |

| Speechiness Threshold | Removed by Speech n | Removed by Speech % | Final Dataset | Final Dataset % | Rap Tracks Removed by Speech n | Rap Share Within Speech-Removed % |

|---|---|---|---|---|---|---|

| 0.6 | 5539 | 5.43 | 92,340 | 90.58 | 68 | 1.23 |

| 0.66 | 5362 | 5.26 | 92,517 | 90.76 | 52 | 0.97 |

| 0.7 | 5245 | 5.15 | 92,634 | 90.87 | 43 | 0.82 |

| Task | Removed Descriptors | Δ Accuracy (pp) | Δ Macro-F1 (pp) |

|---|---|---|---|

| 3-class | w/o speechiness | −0.05 | −0.06 |

| 3-class | w/o liveness | −0.06 | −0.04 |

| 3-class | w/o speechiness & liveness | −0.05 | −0.04 |

| 10-class | w/o speechiness | 0.01 | −1.09 |

| 10-class | w/o liveness | 0.04 | 0.02 |

| 10-class | w/o speechiness & liveness | −0.05 | −1.20 |

| Model | Accuracy (%) | Macro-Precision (%) | Macro-Recall (%) | Macro-F1 (%) | ROC-AUC (OVR, Macro, %) | PR-AUC (Macro, %) |

|---|---|---|---|---|---|---|

| Metadata-only | 58.80 | 50.11 | 38.14 | 40.73 | 83.08 | 45.84 |

| Audio-only | 43.48 | 27.43 | 21.27 | 16.63 | 60.74 | 23.70 |

| Early fusion (audio+meta) | 58.69 | 49.00 | 37.71 | 40.10 | 83.45 | 46.20 |

| Late fusion (weighted class-score vectors) | 57.32 | 51.81 | 31.19 | 30.56 | 82.61 | 45.97 |

References

- Seufitelli, D.B.; Oliveira, G.P.; Silva, M.O.; Scofield, C.; Moro, M.M. Hit song science: A comprehensive survey and research directions. J. New Music Res. 2023, 52, 41–72.

- Pachet, F.; Roy, P. Hit Song Science Is Not Yet a Science. In Proceedings of the 9th International Conference on Music Information Retrieval (ISMIR 2008), Philadelphia, PA, USA, 14–18 September 2008; pp. 355–360.

- Bayley, J. IFPI Global Music Report: Global Recorded Music Revenues Grew 10.2% in 2023—IFPI. IFPI Report 2024. Available online: https://www.ifpi.org/ifpi-global-music-report-global-recorded-music-revenues-grew-10-2-in-2023 (accessed on 25 March 2025).

- Dhanaraj, R.; Logan, B. Automatic prediction of hit songs. In Proceedings of the 6th International Conference on Music Information Retrieval (ISMIR), London, UK, 11–15 September 2005; pp. 488–491.

- Zhao, M.; Harvey, M.; Cameron, D.; Hopfgartner, F.; Gillet, V.J. An analysis of classification approaches for hit song prediction using engineered metadata features with lyrics and audio features. In Proceedings of the International Conference on Information (iConference 2023), Barcelona, Spain, 13–29 March 2023; pp. 303–311.

- Yang, L.-C.; Chou, S.-Y.; Liu, J.-Y.; Yang, Y.-H.; Chen, Y.-A. Revisiting the problem of audio-based hit song prediction using convolutional neural networks. In Proceedings of the 2017 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), New Orleans, LA, USA, 5–9 March 2017; pp. 621–625.

- Choi, K.; Fazekas, G.; Sandler, M.; Cho, K. Convolutional Recurrent Neural Networks for Music Classification. In Proceedings of the 2017 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), New Orleans, LA, USA, 5–9 March 2017; pp. 2392–2396.

- Oramas, S.; Nieto, O.; Sordo, M.; Serra, X. A deep multimodal approach for cold-start music recommendation. In Proceedings of the 2nd Workshop on Deep Learning for Recommender Systems (DLRS 2017), Como, Italy, 27 August 2017; pp. 32–37.

- Zangerle, E.; Vötter, M.; Huber, R.; Yang, Y.-H. Hit Song Prediction: Leveraging Low- and High-Level Audio Features. In Proceedings of the 20th International Society for Music Information Retrieval Conference (ISMIR 2019), Delft, The Netherlands, 4–8 November 2019; pp. 319–326.

- Vavaroutsos, P.; Vikatos, P. HSP-TL: A Deep Metric Learning Model with Triplet Loss for Hit Song Prediction. In Proceedings of the European Signal Processing Conference (EUSIPCO), Helsinki, Finland, 4–8 September 2023; pp. 146–150.

- Martín-Gutiérrez, D.; Hernández Peñaloza, G.; Belmonte-Hernández, A.; Álvarez García, F. A multimodal end-to-end deep learning architecture for music popularity prediction. IEEE Access 2020, 8, 39361–39374.

- Yu, L.-C.; Yang, Y.-H.; Hung, Y.-N.; Chen, Y.-A. Hit song prediction for pop music by siamese CNN with ranking loss. arXiv 2017, arXiv:1710.10814.

- Delbouys, R.; Hennequin, R.; Piccoli, F.; Royo-Letelier, J.; Moussallam, M. Music mood detection based on audio and lyrics with deep neural net. arXiv 2018, arXiv:1809.07276.

- Martín-Gutiérrez, D.; Hernández Peñaloza, G.; Belmonte-Hernández, A.; Álvarez García, F. SpotGenTrack Popularity Dataset. Available online: https://data.mendeley.com/datasets/4m2x4zngny/1 (accessed on 25 June 2025).

- Alonso-Jiménez, P.; Bogdanov, D.; Pons, J.; Serra, X. Tensorflow audio models in Essentia. In Proceedings of the 2020 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Barcelona, Spain, 4–8 May 2020.

- Devlin, J.; Chang, M.-W.; Lee, K.; Toutanova, K. BERT: Pre-training of deep bidirectional transformers for language understanding. arXiv 2018, arXiv:1810.04805.

- Yang, D.; Lee, W.-S. Music emotion identification from lyrics. In Proceedings of the 11th IEEE International Symposium on Multimedia (ISM 2009), San Diego, CA, USA, 14–16 December 2009; pp. 624–629.

- McVicar, M.; Di Giorgi, B.; Dundar, B.; Mauch, M. Lyric document embeddings for music tagging. arXiv 2021, arXiv:2112.11436.

- Akalp, H.; Cigdem, E.F.; Yilmaz, S.; Bolucu, N.; Can, B. Language representation models for music genre classification using lyrics. In Proceedings of the 2021 International Symposium on Electrical, Electronics and Information Engineering (ISEEIE 2021), Seoul, Republic of Korea, 19–21 February 2021; pp. 408–414.

- Spotify for Developers. Get Track’s Audio Features (Web API Reference). Available online: https://developer.spotify.com/documentation/web-api (accessed on 9 February 2026).

- Oramas, S.; Barbieri, F.; Nieto, O.; Serra, X. Multimodal deep learning for music genre classification. Trans. Int. Soc. Music Inf. Retr. 2018, 1, 4–21.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Delving deep into rectifiers: Surpassing human-level performance on ImageNet classification. In Proceedings of the 2015 IEEE International Conference on Computer Vision (ICCV), Santiago, Chile, 11–18 December 2015; pp. 1026–1034.

- Smith, L.N.; Topin, N. Super-convergence: Very fast training of neural networks using large learning rates. In Proceedings of the SPIE Artificial Intelligence and Machine Learning for Multi-Domain Operations Applications, Baltimore, MD, USA, 15–17 April 2019; Volume 11006, pp. 369–386.

- Vujović, Ž. Classification model evaluation metrics. Int. J. Adv. Comput. Sci. Appl. 2021, 12, 599–606.

- Prinz, K.; Flexer, A.; Widmer, G. The Impact of Label Noise on a Music Tagger. arXiv 2020, arXiv:2008.06273.

- Reisz, N.; Servedio, V.D.; Thurner, S. Quantifying the impact of homophily and influencer networks on song popularity prediction. Sci. Rep. 2024, 14, 8929.

- Gourévitch, B. Billboard 200: The lessons of musical success in the US. Music Sci. 2023, 6, 1–13.

- Interiano, M.; Kazemi, K.; Wang, L.; Yang, J.; Yu, Z.; Komarova, N.L. Musical trends and predictability of success in contemporary songs in and out of the top charts. R. Soc. Open Sci. 2018, 5, 171274.

- Sinclair, N.C.; Ursell, J.; South, A.; Rendell, L. From Beethoven to Beyoncé: Do changing aesthetic cultures amount to “cumulative cultural evolution”? Front. Psychol. 2022, 12, 663397.

- Tsiara, E.; Tjortjis, C. Using Twitter to predict chart position for songs. In Artificial Intelligence Applications and Innovations, IFIP AIAI 2020; Springer: Cham, Switzerland, 2020; Volume 583, pp. 62–72.

- Merritt, S.H.; Gaffuri, K.; Zak, P.J. Accurately predicting hit songs using neurophysiology and machine learning. Front. Artif. Intell. 2023, 6, 1154663.

| Modality | Raw Representation | Number of Features per Track |

|---|---|---|

| Low-level audio (log-Mel spectrograms) | 30 s preview → log-Mel spectrogram (22,050 Hz, 1024-pt FFT, 128 Mel bands, hop = 512) | 128 bins × 1292 frames = 165,376 coefficients |

| Lyrics | Full lyrics → multilingual BERT, CLS embedding | 768-dimensional CLS vector |

| High-level audio descriptors (Spotify audio features) | Spotify descriptors (danceability, energy, valence, etc.) | 12 descriptors |

| Metadata | Artist popularity, release year | 2 features |
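
The frame count in the first row follows from the sampling rate, preview length, and hop size. The sketch below reproduces that arithmetic; it assumes centered framing, where the number of frames is 1 + ⌊samples / hop⌋ (the convention librosa uses by default), which the paper does not state explicitly. The function name `n_frames` is illustrative.

```python
def n_frames(duration_s, sample_rate=22050, hop=512):
    """Number of STFT frames for a clip, assuming centered framing."""
    n_samples = int(round(duration_s * sample_rate))
    return 1 + n_samples // hop


if __name__ == "__main__":
    frames = n_frames(30.0)           # 30 s preview at 22,050 Hz
    n_mels = 128
    print(frames, n_mels * frames)    # frames and coefficients per track
```

For a 30 s preview this gives 661,500 samples, 1292 frames, and 128 × 1292 = 165,376 log-Mel coefficients, matching the table.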

| Audio Features | Description |

|---|---|

| Acousticness | Confidence measure (0.0–1.0) of whether the track is acoustic; 1.0 indicates high confidence. |

| Danceability | How suitable a track is for dancing (0.0–1.0). |

| Duration | Duration of the track in milliseconds. |

| Energy | Perceptual measure of intensity and activity (0.0–1.0). |

| Instrumentalness | Likelihood that the track contains no vocals (0.0–1.0). |

| Key | The key the track is in. Integers map to pitches using standard Pitch Class notation. If no key was detected, the value is −1. Range from −1 to 11. |

| Liveness | Detects the presence of an audience (0.0–1.0); values above 0.8 suggest live recordings. |

| Loudness | Overall loudness in decibels (dB), typically between −60 and 0 dB. |

| Mode | Modality: 1 = major, 0 = minor. |

| Speechiness | Presence of spoken words; higher values indicate more speech-like content (e.g., talk shows, audiobooks). |

| Tempo | Overall estimated tempo in beats per minute (BPM). |

| Valence | Musical positiveness conveyed (0.0–1.0); higher values sound more positive. |

| Filtering Step | Criterion | Removed (N) | Removed (%) | Remaining (N) |

|---|---|---|---|---|

| Raw dataset | - | - | - | 101,939 |

| Speech-dominant removal | speechiness > 0.66 | 5362 | 5.26 | 96,577 |

| Very long track removal | duration > 7 min | 3792 | 3.72 | 92,785 |

| Short track removal | duration < 30 s | 268 | 0.26 | 92,517 |
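
The cascade above can be expressed as a sequence of removal rules applied in order. The following sketch is illustrative rather than the authors' preprocessing code: it assumes each track is a dictionary with `speechiness` and `duration_ms` fields, and it reports removal percentages relative to the raw dataset size, which is how the percentages in the table are computed (for example, 3792 / 101,939 ≈ 3.72%).

```python
def apply_filters(tracks):
    """Apply the three removal rules in order and report each step."""
    steps = [
        ("speech-dominant", lambda t: t["speechiness"] > 0.66),
        ("very long", lambda t: t["duration_ms"] > 420_000),   # > 7 min
        ("short", lambda t: t["duration_ms"] < 30_000),        # < 30 s
    ]
    raw_n = len(tracks)
    remaining = list(tracks)
    report = []
    for name, should_remove in steps:
        kept = [t for t in remaining if not should_remove(t)]
        removed = len(remaining) - len(kept)
        # Percentages are relative to the raw dataset, not the previous step.
        pct = round(100.0 * removed / raw_n, 2) if raw_n else 0.0
        report.append((name, removed, pct, len(kept)))
        remaining = kept
    return remaining, report


if __name__ == "__main__":
    toy = [
        {"speechiness": 0.90, "duration_ms": 200_000},
        {"speechiness": 0.10, "duration_ms": 500_000},
        {"speechiness": 0.10, "duration_ms": 10_000},
        {"speechiness": 0.10, "duration_ms": 200_000},
    ]
    kept, report = apply_filters(toy)
    for row in report:
        print(row)
```

Because the rules run sequentially, a track that is both speech-dominant and very long is counted only under the first rule that removes it.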

| Model | Number of Classes | Macro-Precision (%) | Macro-Recall (%) | Macro-F1-Score (%) | Accuracy (%) |
|---|---|---|---|---|---|
| Regression | 3-Class | 74.14 | 45.09 | 48.30 | 81.55 |
| Regression | 10-Class | 29.27 | 16.62 | 16.30 | 35.27 |
| Classification | 3-Class | 74.79 | 47.86 | 52.20 | 82.63 |
| Classification | 10-Class | 33.90 | 21.84 | 23.15 | 37.00 |

| Model | Macro-Precision (%) | Macro-Recall (%) | Macro-F1-Score (%) | Accuracy (%) |

|---|---|---|---|---|

| Without high-level audio descriptors (Spotify features) | 42.95 | 22.52 | 23.80 | 36.70 |

| Without lyric embeddings (BERT) | 37.50 | 23.94 | 25.98 | 36.56 |

| Without low-level audio (Mel-spectrogram CNN) | 35.64 | 23.03 | 24.90 | 37.08 |

| Without metadata (artist popularity, release year) | 27.11 | 14.21 | 13.65 | 27.11 |

| All features | 33.90 | 21.84 | 23.15 | 37.00 |

| Study (Year) | Modalities 1 | Dataset (Size) | Task | Number of Classes | Accuracy (%) | Macro-F1 (%) |

|---|---|---|---|---|---|---|

| Zangerle et al. (2019) [9] | A hi+lo, M | MSD + Billboard (11,000) | Hit vs. non-hit | 2 | 75.0 | - |

| Martín-Gutiérrez et al. (2020) [11] | A,L,M | SpotGenTrack (101,939) | Popularity | 3 | 83.0 | - |

| Vavaroutsos and Vikatos (2023) [10] | A,L,M | HSP + Genius (11,600) | Hit vs. non-hit | 2 | 80.0 | - |

| Our study (2025) | A (Mel),L,M | SpotGenTrack (92,517) | Popularity | 3/10 | 82.63/37.00 | 52.20/23.15 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Nofer, M.; Nimani, V.; Hinz, O. DeepHits: A Multimodal CNN Approach to Hit Song Prediction. Mach. Learn. Knowl. Extr. 2026, 8, 58. https://doi.org/10.3390/make8030058
