1. Introduction

Developing autonomous mobile robots that can navigate and make decisions has traditionally required substantial expertise in control theory, state machines, perception, and real-time software [1]. Even relatively simple tasks, such as following traffic signs, often entail extensive integration work: designing vision pipelines, tuning parameters, and performing iterative testing in simulation and hardware. These requirements create a high barrier to entry and slow down prototyping and deployment.

Large Language Models (LLMs) offer a complementary mechanism for specifying and adapting behavior. Rather than encoding intent exclusively through hand-crafted logic, developers can express task goals in natural language and leverage the model to infer context-dependent suggestions [2]. Prior work has used LLMs for high-level planning, task decomposition, and manipulation [3]. However, many existing approaches rely on cloud inference, specialized hardware, or model fine-tuning, which reduces portability and is often incompatible with embedded mobile platforms operating under strict power and latency budgets.

Mobile robot navigation imposes a non-negotiable constraint: control must meet millisecond-scale deadlines to remain safe under sensing uncertainty and dynamic environments [4]. While Vision Language Action (VLA) models [5] demonstrate end-to-end coupling between perception and action, they typically require substantial computation and can be difficult to interpret and constrain.

Directly integrating an LLM into the low-level control loop is therefore problematic due to high inference latency and stochastic outputs [6]; missed deadlines and malformed outputs can compromise safety and robustness. A practical alternative is a modular integration paradigm that separates perception, language-based reasoning, and deterministic control, preserving real-time guarantees while leveraging language-driven guidance only where it demonstrably adds value.

In this work, we treat language as a source of high-level guidance that complements rather than replaces a deterministic controller. We introduce a modular framework that augments a conventional Finite-State Machine (FSM) with asynchronous LLM suggestions, which are accepted only when timely and safe. Concretely, the FSM maintains a strict 50 Hz control loop with a <5 ms fallback path. In parallel, an off-the-shelf LLM (without fine-tuning) proposes structured adjustments to the velocity command via prompt templates. The model is instructed to return a lightweight JavaScript Object Notation (JSON) object (e.g., {"linear_x": 0.05, "angular_z": -0.10}), which serves as the exchange format for knowledge extraction. A validator enforces JSON well-formedness, deadline compliance, and explicit kinematic bounds before merging an accepted suggestion with the baseline command. This design preserves the FSM’s deterministic safety guarantees while allowing the LLM to provide context-aware refinements during approach phases.

We evaluate the framework in a 5 × 5 m Maze Arena, both in Gazebo (a robotics simulation environment) and on a physical TurtleBot3 Burger (a differential-drive mobile robot platform). Across the trials run for each condition, the LLM-augmented controller reduces the final position error from 0.246 m to 0.159 m and improves trajectory efficiency from 0.821 to 0.901, without affecting control-loop latency. Approximately 80% of LLM outputs pass validation and are applied. Finally, an ablation against a smoothing baseline indicates that the observed gains are not explained by filtering alone, but arise from anticipatory, context-dependent adjustments.

The main contributions of this work are as follows:

Plug-and-play knowledge extraction: A modular framework that augments existing Robot Operating System 2 (ROS 2) navigation with off-the-shelf LLM guidance, without model fine-tuning or additional data collection.

Latency-aware edge architecture: A validation layer and deterministic fallback mechanism that preserves a stable 50 Hz control loop while accommodating asynchronous LLM inference on embedded hardware (Jetson Orin).

Verified performance gains: In both simulation and real-world experiments, the augmented controller improves positioning accuracy (0.246 → 0.159 m) and trajectory efficiency (0.821 → 0.901) relative to the baseline.

Edge ML efficiency analysis: An ablation study shows that a distilled Small Language Model (SLM) (DistilGPT-2 (a distilled GPT-2 language model)) extracts sufficient navigational context to outperform a classical smoothing baseline, supporting the necessity of the LLM component.

Accessibility focus: The framework reduces developer effort by enabling behavioral tuning through prompt modifications rather than extensive code changes.

Overall, the paper provides a practical and interpretable pathway for integrating LLM guidance into embedded robot navigation. The remainder of the paper is structured as follows:

Section 2 reviews related work,

Section 3 presents the system architecture,

Section 4 details the implementation,

Section 5 describes the experimental setup,

Section 6 examines the results, including those of the ablation study,

Section 7 discusses limitations, and

Section 8 concludes the paper.

2. Related Work

This section reviews prior work relevant to integrating language models into robotic navigation. We discuss conventional engineering approaches (state machines, perception stacks, and Robot Operating System (ROS) tooling), LLMs and multimodal policies for decision-making, navigation-oriented language and vision–language methods, and the safety and real-time constraints that dominate embedded deployment in ROS 2. We also highlight a frequently under-reported dimension, developer accessibility, encompassing implementation effort, debugging overhead, and prompt-iteration cost. The discussion emphasizes trade-offs among training requirements, deployment modality (cloud vs. on-device), control-loop guarantees, and the practicality of knowledge-extraction mechanisms for embedded robotics.

2.1. Conventional Robot Programming Challenges

Mobile robot navigation has traditionally been implemented using explicit state machines, classical controllers (e.g., PID), and hand-engineered perception pipelines [7]. Building such systems requires expertise in control, computer vision, and real-time software engineering, and even relatively simple behaviors often involve substantial integration and tuning effort. Middleware frameworks such as ROS [8] improve modularity but introduce their own learning curve, including message interfaces, launch systems, and debugging tools. As a result, robotics codebases can become difficult to maintain and extend, especially when perception and control components must be co-tuned across simulation and hardware [9].

2.2. LLMs and Multimodal Models in Robotics

A growing body of work leverages LLMs and vision–language models for high-level reasoning, planning, and manipulation. CLIPort [10] combines CLIP-based representations with Transporter-style networks for language-conditioned tabletop manipulation, while SayCan [11] grounds LLM outputs in skill primitives and value estimates to execute long-horizon instructions. The RT family (RT-1 [12], RT-2 [5], RT-X [13]) illustrates policy generalization through large-scale data collection and training. PaLM-E [14] further integrates multimodal perception with language-based reasoning. More recently, VLA models [15,16] report strong generalization across heterogeneous robot data. Despite their capabilities, these systems often require substantial computation and curated datasets, which can limit portability and make on-device deployment challenging.

2.3. Language-Grounded Navigation

Vision Language Models (VLMs) have also been applied to navigation [17]. LM-Nav [18] composes pretrained vision and language modules to execute outdoor navigation tasks. ViNT [19] trains a generalist navigation transformer across diverse trajectories and reports transfer across environments. VLMaps [20] and LERF [21] incorporate language into 3D scene representations for flexible goal specification. More direct LLM-based navigation has been explored by NavGPT [22], which uses large proprietary models for zero-shot reasoning, and through long-horizon instruction-following on legged robots [23]. While promising, many of these approaches face practical limitations for embedded control, including high inference latency, sensitivity to prompt design, and limited real-time safety guarantees.

Several studies pursue “plug-and-play” integration to reduce training requirements. DriveMLM, for example, applies LLMs to autonomous driving and zero-shot robotic control [24]. However, prompt design can become a bottleneck: reasoning-style prompting (e.g., chain-of-thought) can affect performance substantially [25], yet discovering effective prompts often requires iterative experimentation and domain knowledge [26]. This motivates methods that constrain outputs, reduce prompt fragility, and provide robust validation mechanisms.

2.4. Deployment Challenges: Safety, Real-Time Constraints, and Resource Limitations

Safety and determinism are central challenges when deploying LLM-driven components on physical robots [27]. Prior analyses report that LLM/VLM-based policies can degrade under perturbations and distribution shift [28].

Fundamental deployment barriers. Beyond average inference latency, several factors hinder direct deployment of recent LLM/VLM/VLA methods on embedded mobile robots. First, token-level decoding is often variable under common sampling strategies, which complicates reproducibility, regression testing, and certification-oriented workflows when the model is placed in a closed-loop system. Second, hallucinations and structured-output violations (e.g., malformed fields, missing keys, or numerically inconsistent values) can produce unusable or unsafe actions unless strict output constraints and runtime validation are enforced [27,28]. Third, memory and computation requirements can exceed embedded budgets or induce thermal throttling, especially when language inference must co-execute with perception workloads on shared accelerators. Finally, systems-level effects in ROS 2 (message queuing, executor scheduling, clock alignment, and jitter under heterogeneous load) can amplify variability and complicate timing guarantees [29,30,31].

Real-time constraints further complicate integration: LLM inference latencies can reach hundreds of milliseconds, which conflicts with millisecond-scale control deadlines [32,33]. Existing reports on LLM–ROS 2 integration [34] remain fragmented and often provide limited guidance on how to combine slow, stochastic reasoning with fast, deterministic control in a principled and reproducible manner. These constraints motivate architectures in which language-based reasoning is treated as opportunistic guidance, gated by deterministic mechanisms (schema checks, bounded actions, freshness policies, and a fast fallback path), rather than embedded directly into the low-level loop.

Small language models in robotics. Despite potential advantages for resource-constrained platforms, small language models (sub-billion to a few billion parameters) remain comparatively understudied in robotics. Community benchmarks and flagship demonstrations prioritize cloud-scale models and long-horizon reasoning, while embedded-centric metrics (peak memory usage, energy per query, latency percentile distributions under concurrent load) are infrequently reported. Tooling for constrained decoding and structured output validation has matured primarily in large-model ecosystems, whereas lightweight on-device deployments often rely on generic generation without strong guarantees. Additionally, evaluation protocols that combine real-time control requirements with language-model reliability dimensions (format correctness, action boundedness, temporal freshness) remain unstandardized. These gaps motivate systematic investigation of small models under embedded constraints with explicit safety mechanisms. In this work, we specifically study whether a lightweight generative model can provide bounded, structured refinements under explicit validation, rather than acting as a standalone policy.

2.5. Developer-Centered Perspectives

Most robotics studies prioritize robot-level metrics (e.g., success rate, trajectory error, or completion time), whereas developer-centered metrics are rarely reported. Measures such as implementation time, debugging effort, and the number of prompt iterations are uncommon in empirical evaluations [35]. Surveys and perspective articles highlight this gap and call for methods that reduce programming complexity and support practical adoption [36,37]. From this viewpoint, a method may improve navigation accuracy yet remain impractical if it requires specialized training pipelines, extensive dataset collection, or large-scale computation resources. Accordingly, we treat prompt editing as the primary tuning interface and report developer-centered indicators alongside robot-level outcomes in our evaluation.

In contrast to prior studies, our work targets the interface between semantic guidance and real-time control. We propose a modular, plug-and-play framework that integrates LLM-based knowledge extraction into ROS 2 navigation without fine-tuning while preserving a deterministic 50 Hz control loop via a sub-5 ms fallback path. By employing a lightweight model (DistilGPT-2) on-device, we avoid cloud dependencies and mitigate latency bottlenecks. Finally, by reporting both robot-level outcomes and developer-centered indicators, we complement performance-driven evaluations with an accessibility-oriented perspective.

To clarify these differences, Table 1 summarizes representative approaches by domain, training requirements, control considerations, and accessibility-related aspects.

3. System Architecture

This section presents the overall architecture of our proposal, which integrates LLM-based knowledge extraction into mobile robot navigation while preserving the safety guarantees of a conventional deterministic controller. The architecture follows a modular, neuro-symbolic paradigm, layering logic-based control with data-driven reasoning. This layered approach supports integration with ROS 2 and real-world execution on edge devices. Here we provide a high-level overview; all technical components and timing mechanisms are explained in detail in Section 4.

3.1. Overall Framework

The framework transforms classical robot navigation into a modular, language-augmented pipeline. Instead of replacing deterministic controllers with end-to-end neural policies, the LLM acts as an asynchronous reasoning layer on top of a reliable Finite-State Machine (FSM). This hybrid design ensures that the robot always maintains stable, high-frequency control (symbolic layer) while gaining adaptive, context-aware suggestions from the LLM (neural layer).

As shown in Figure 1, the framework is organized into three main layers:

3.2. Perception Layer

The Perception Layer captures the environmental context through a vision-based detector. A lightweight Convolutional Neural Network (CNN) model processes camera input to identify relevant traffic signs in real time. The detector provides three key outputs: class, estimated distance, and lateral offset. This design is modular, allowing the framework to incorporate different sensors or perception modules without requiring changes to the reasoning or control logic.

3.3. Control Layer

The Control Layer is centered on a deterministic FSM. Each state encodes a specific navigation behavior, and transitions are triggered strictly by perception events or safety timers. This provides a robust rule-based structure that guarantees the robot can operate safely at 50 Hz, even if the upper reasoning layer experiences latency or failure.

3.4. Knowledge-Extraction Layer

The Knowledge-Extraction Layer (formerly Enhancement Layer) introduces LLM-based reasoning as a plug-and-play module. Structured prompts summarize the current FSM state, sign detection, and baseline command to form a semantic context. The LLM processes this context to extract actionable velocity refinements. Crucially, this layer operates asynchronously: the high-latency LLM inference never blocks the high-frequency FSM loop. Suggestions are validated and fused only when available; otherwise, the system seamlessly defaults to the baseline control.

3.5. Prompt Engineering Strategy

The prompt is dynamically adapted to the robot’s state to maximize relevance. The template includes the current FSM phase, the detected sign class, spatial metrics (distance, offset), and the baseline velocity command. The LLM is instructed to act as a “velocity optimizer,” outputting only valid velocity pairs within a strict JSON schema. This strategy simplifies parsing and mitigates the risk of hallucinated commands. Prompts are requested only during the APPROACH phase, where fine-grained velocity tuning provides the largest efficiency benefits.

4. Implementation Details

This section describes the technical implementation of our framework, with emphasis on deploying knowledge-extraction components on embedded hardware. We detail the hardware constraints, the asynchronous software architecture, and the ROS 2 integration used to validate the system both in Gazebo simulation and on a physical TurtleBot3 platform.

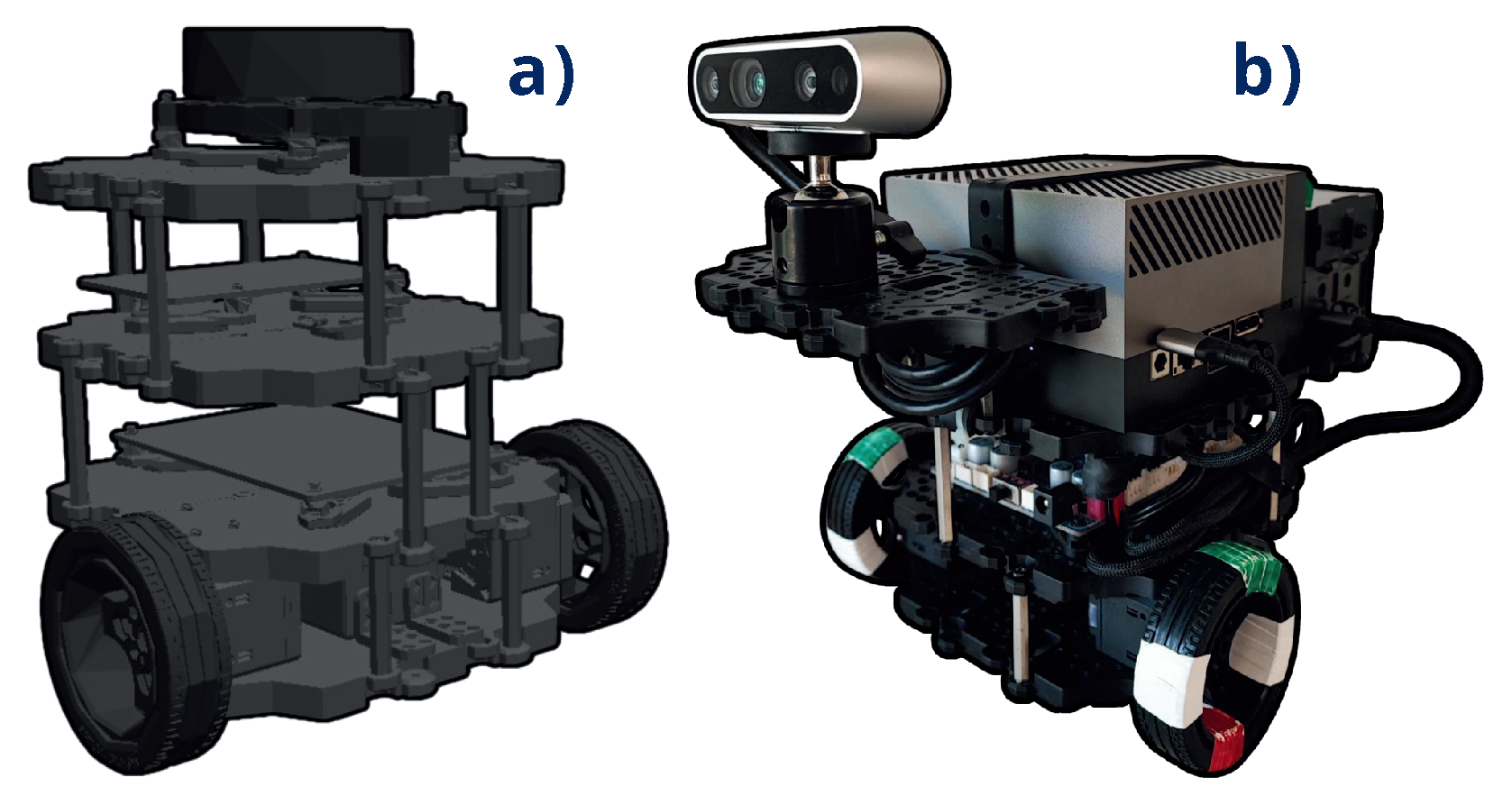

4.1. Hardware Setup

The robotic platform is a TurtleBot3 Burger equipped with an Intel RealSense D435i Red–Green–Blue plus Depth (RGB-D) camera. The complete stack runs on an NVIDIA Jetson AGX Orin (an embedded AI computing platform) (64 GB RAM, Ampere GPU), enabling on-device execution of both the You Only Look Once (YOLO)-based perception module (vision) and the DistilGPT-2 inference module (language). Running locally avoids cloud dependencies, reduces network-induced delays, and supports privacy-preserving deployment.

4.2. Software Framework

The system is implemented in ROS 2 Humble and decomposed into modular nodes that separate deterministic control from stochastic language-based suggestions.

4.2.1. Perception Node

The perception node captures synchronized RGB-D streams from the Intel RealSense D435i (Figure 2). RGB frames are processed by a YOLOv8-based detector trained on the three traffic signs used in the Maze Arena (left arrow, right arrow, stop). The trained model is exported to Open Neural Network Exchange (ONNX) and deployed as an independent ROS 2 node. It publishes structured outputs: (i) /sign_class (detected label, std_msgs/msg/String); (ii) /sign_distance (estimated range; see Section 3); (iii) /sign_offset (normalized lateral displacement w.r.t. the image center); (iv) /sign_detection/annotated_image (overlay image, sensor_msgs/msg/Image).

4.2.2. FSM Node (Deterministic Controller)

The FSM (Figure 3) publishes commands at a fixed 50 Hz (20 ms period). The LLM runs asynchronously and produces a new suggestion whenever inference completes (average ≈186 ms in our setup). Crucially, suggestions are not required to arrive within the next 20 ms tick. Instead, when a valid suggestion arrives, it is applied at the next available FSM tick and then held constant (sample-and-hold) until either (i) a newer valid suggestion becomes available, or (ii) a time-to-live (TTL) expires. This policy ensures that stale suggestions are never applied while preserving deterministic 50 Hz actuation. The FSM enforces speed limits, collision-aware slowdown, and validated state transitions.

Five navigation states are defined: SEARCH, APPROACH, ACT_LEFT, ACT_RIGHT, and ACT_STOP. During APPROACH, the FSM publishes a compact JSON summary on /fsm_state containing {stamp, phase, class, distance, offset, base_v, base_w, deadline_ms}. The LLM module consumes this summary only in APPROACH; in all other states, the baseline command is applied without language intervention.
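As an illustration of the state summary described above, the following minimal sketch serializes the listed fields into a JSON string. The function name `build_state_summary`, the `Phase` enum, and the choice of seconds and meters as units are assumptions made for this example; only the field names and the five state names come from the text.

```python
import json
from enum import Enum
from typing import Optional


class Phase(Enum):
    """The five navigation states named in the text."""
    SEARCH = "SEARCH"
    APPROACH = "APPROACH"
    ACT_LEFT = "ACT_LEFT"
    ACT_RIGHT = "ACT_RIGHT"
    ACT_STOP = "ACT_STOP"


def build_state_summary(stamp: float, phase: Phase, sign_class: str,
                        distance: float, offset: float,
                        base_v: float, base_w: float,
                        deadline_ms: float) -> Optional[str]:
    """Return the JSON summary for APPROACH, or None in any other phase.

    Hypothetical helper: the summary is only produced in APPROACH because
    that is the only phase in which the LLM module consumes it.
    """
    if phase is not Phase.APPROACH:
        return None
    return json.dumps({
        "stamp": stamp,            # assumed: seconds on the FSM clock
        "phase": phase.value,
        "class": sign_class,
        "distance": distance,      # assumed: meters
        "offset": offset,          # normalized lateral offset
        "base_v": base_v,
        "base_w": base_w,
        "deadline_ms": deadline_ms,
    })
```

In a ROS 2 node, the returned string would typically be wrapped in a `std_msgs/msg/String` message before publication on /fsm_state.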

At each control tick, the FSM computes a baseline velocity pair (reported as base_v and base_w in the /fsm_state summary) that is derived from distance and alignment errors and saturated within bounds. The inputs are d, the estimated range to the target sign; the desired stopping distance; and the alignment error (e.g., normalized horizontal offset of the sign centroid). The saturation operator clamps each velocity component to its admissible interval. Finally, first-order rate limiters are applied to the linear and angular velocities to reduce abrupt changes. The baseline command is always available as a deterministic fallback and provides the reference used when validating LLM suggestions during APPROACH.
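One plausible reading of the baseline computation is sketched below, assuming a proportional law on the distance and alignment errors followed by saturation and a per-tick rate limit. The proportional form, the sign convention on the angular term, the gains `k_v` and `k_w`, the velocity limits, and the step sizes are all illustrative assumptions and are not values reported in this paper.

```python
def sat(x: float, lo: float, hi: float) -> float:
    """Saturation operator: clamp x to the interval [lo, hi]."""
    return max(lo, min(hi, x))


def rate_limit(target: float, previous: float, max_step: float) -> float:
    """Move from previous toward target by at most max_step per tick."""
    return previous + sat(target - previous, -max_step, max_step)


def baseline_command(d: float, d_stop: float, e: float,
                     prev_v: float, prev_w: float,
                     k_v: float = 0.5, k_w: float = 1.0,
                     v_max: float = 0.22, w_max: float = 1.0,
                     dv_max: float = 0.01, dw_max: float = 0.05):
    """Return a (v, w) baseline pair for one control tick.

    Assumed form: linear speed proportional to the remaining distance
    (d - d_stop), angular speed proportional to the alignment error e,
    both saturated and then rate-limited. All defaults are placeholders.
    """
    v_raw = sat(k_v * (d - d_stop), 0.0, v_max)
    w_raw = sat(-k_w * e, -w_max, w_max)
    return (rate_limit(v_raw, prev_v, dv_max),
            rate_limit(w_raw, prev_w, dw_max))
```

Starting from rest, a call such as `baseline_command(1.0, 0.3, 0.2, 0.0, 0.0)` saturates the raw linear speed at `v_max` and then admits only one `dv_max` step, which is the behavior the rate limiter is meant to provide near state transitions.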

4.2.3. LLM Node (Knowledge-Extraction/Refinement Layer)

The LLM node runs asynchronously in a separate ROS 2 process so that inference never blocks the 50 Hz control loop (Figure 4).

It subscribes to /fsm_state and outputs candidate velocity refinements as a strict JSON object with fields linear_x and angular_z on /llm_suggestion (std_msgs/msg/String). The actuation topic /cmd_vel is published only by the FSM after validation and fusion, keeping the safety-critical path deterministic and auditable.

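Interfaces. The LLM node subscribes to /fsm_state (the JSON state summary published during APPROACH) and publishes /llm_suggestion (std_msgs/msg/String); it never publishes to /cmd_vel.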

Dual-rate timing and freshness (sample-and-hold with TTL). Because language-model inference is slower than the 50 Hz control loop, the FSM never waits for the LLM. The LLM produces suggestions asynchronously; once a suggestion is available, it can be applied at subsequent control ticks under a sample-and-hold policy until a newer suggestion arrives or a time-to-live (TTL) expires. Each suggestion carries the originating state timestamp stamp. A suggestion is considered fresh if its age (the current time minus stamp) does not exceed the TTL; otherwise it is discarded as stale. The TTL is selected from on-device latency profiling (and should be re-tuned when porting to different platforms or workloads). Figure 5 illustrates the resulting dual-rate behavior.
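The sample-and-hold policy with a TTL can be sketched as a small holder object that the FSM queries once per tick. The class and method names below are hypothetical, and the TTL value used in the usage note is a placeholder rather than the value obtained from the latency profiling mentioned above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Suggestion:
    stamp: float       # timestamp of the state the suggestion was computed from
    linear_x: float
    angular_z: float


class SuggestionHold:
    """Hold the newest suggestion until it is replaced or exceeds the TTL."""

    def __init__(self, ttl_s: float):
        self.ttl_s = ttl_s
        self._held: Optional[Suggestion] = None

    def offer(self, suggestion: Suggestion) -> None:
        """Store a suggestion unless a newer one is already held."""
        if self._held is None or suggestion.stamp > self._held.stamp:
            self._held = suggestion

    def current(self, now: float) -> Optional[Suggestion]:
        """Return the held suggestion if still fresh, else drop it."""
        if self._held is not None and now - self._held.stamp > self.ttl_s:
            self._held = None  # stale: the caller falls back to the baseline
        return self._held
```

With, say, `SuggestionHold(ttl_s=0.4)`, the FSM would call `current(now)` at every 20 ms tick and use the baseline command whenever the result is `None`, so the control loop never blocks on inference.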

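Validation. Before fusion, each suggestion is checked for JSON well-formedness (a parsable object with numeric linear_x and angular_z fields), freshness with respect to the deadline and TTL, and compliance with the explicit kinematic bounds.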

Suggestions are requested only in the APPROACH state, where velocity refinement is most relevant.
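The checks this paper names for a suggestion (well-formed JSON, freshness, and kinematic bounds) could be implemented along the following lines. The function name, the reason labels, the check order, and the assumption that the linear velocity must be non-negative are choices made for this sketch, not details confirmed by the text.

```python
import json
import math


def validate_suggestion(raw, now, stamp, ttl_s, v_max, w_max):
    """Return (ok, reason, command) for one raw LLM output string.

    reason is one of "ok", "parse", "schema", "stale", "kinematic";
    command is a (linear_x, angular_z) tuple when ok, otherwise None.
    """
    try:
        obj = json.loads(raw)
    except (TypeError, ValueError):   # JSONDecodeError subclasses ValueError
        return False, "parse", None
    if not isinstance(obj, dict):
        return False, "schema", None
    values = []
    for key in ("linear_x", "angular_z"):
        val = obj.get(key)
        # bool is a subclass of int in Python, so exclude it explicitly
        if isinstance(val, bool) or not isinstance(val, (int, float)):
            return False, "schema", None
        if not math.isfinite(val):
            return False, "schema", None
        values.append(float(val))
    if now - stamp > ttl_s:
        return False, "stale", None
    v, w = values
    if not (0.0 <= v <= v_max) or abs(w) > w_max:   # assumed: no reversing
        return False, "kinematic", None
    return True, "ok", (v, w)
```

Returning a reason label alongside the verdict makes it straightforward to log why each output was rejected, which is the kind of breakdown needed for suggestion-level acceptance statistics.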

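Fusion and fallback. Let the suggested linear and angular velocities be the values mapped from the JSON fields linear_x and angular_z. Validated suggestions are projected onto the admissible set of velocity commands and merged with the baseline command as a weighted combination (Equation (5)), using a fixed fusion weight in our experiments. If validation fails or no fresh suggestion is available, the FSM applies the baseline command immediately (deterministic fallback), preserving strict 50 Hz actuation.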

This separation prevents actuation from depending on non-deterministic node scheduling and ensures that all executed commands are attributable to a single deterministic controller.
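A minimal sketch of fusion with deterministic fallback follows, assuming the merge is a convex combination in which `alpha` weights the suggestion and `1 - alpha` weights the baseline. Both the convex form and the direction of the weight are assumptions for illustration, and the value 0.5 used in the usage note is a placeholder.

```python
def clamp(x, lo, hi):
    """Project a scalar onto the interval [lo, hi]."""
    return max(lo, min(hi, x))


def fuse(base, suggestion, alpha, v_max, w_max):
    """Merge a validated suggestion with the baseline command.

    base and suggestion are (v, w) tuples; suggestion may be None, in
    which case the baseline is returned unchanged (fallback path).
    """
    if suggestion is None:
        return base
    # Project the suggestion onto the admissible set before merging.
    v_s = clamp(suggestion[0], 0.0, v_max)
    w_s = clamp(suggestion[1], -w_max, w_max)
    v = (1.0 - alpha) * base[0] + alpha * v_s
    w = (1.0 - alpha) * base[1] + alpha * w_s
    return (v, w)
```

For example, `fuse((0.1, 0.0), (0.05, -0.1), 0.5, 0.22, 1.0)` lands halfway between the two commands, while `fuse((0.1, 0.0), None, 0.5, 0.22, 1.0)` returns the baseline untouched. Because the fallback is a plain early return, its cost is negligible relative to the 20 ms tick.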

4.3. LLM Configuration

We prioritize inference speed and memory footprint over raw reasoning capability. Based on the protocol in Table 2, we select DistilGPT-2 (82 M parameters) as an edge-oriented candidate that can produce structured suggestions while remaining feasible on the Jetson Orin. Larger models (e.g., LLaMA-2 7B) exceed practical memory/latency budgets for our on-device setting, whereas smaller models (e.g., TinyGPT-2) often fail to reliably produce parsable structured outputs. In our configuration, DistilGPT-2 generates one suggestion approximately every 200 ms (about 5 Hz) under our decoding settings.

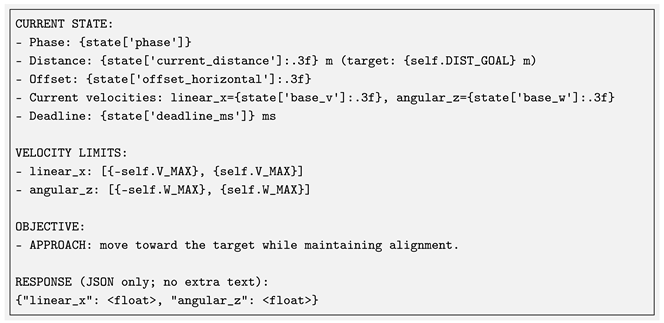

Prompt Engineering

To enable robust parsing and validation, we enforce a strict JSON output format. The prompt encodes the robot state and kinematic limits and instructs the model to return only a JSON object with numeric fields linear_x and angular_z. The template is filled at runtime with the current FSM phase, the detected sign class, the spatial metrics (distance, offset), and the baseline velocity command (Section 3.5).

This design provides two benefits. First, it constrains outputs to a machine-parsable schema compatible with automatic validation and fusion. Second, it supports prompt-level behavioral tuning: developers can refine the APPROACH strategy (e.g., “move slower within 0.5 m of the target”) by editing natural-language instructions rather than modifying the FSM control logic.
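To make the prompt-level tuning idea concrete, the sketch below fills a template from the state summary and appends an optional natural-language rule. The wording of `PROMPT_TEMPLATE` is hypothetical and is not the authors' template; only the encoded fields, the "velocity optimizer" role, and the required JSON keys follow the description in the text.

```python
PROMPT_TEMPLATE = (
    "You are a velocity optimizer for a mobile robot.\n"
    "State: phase={phase}, sign={sign_class}, "
    "distance={distance:.2f} m, offset={offset:+.2f}.\n"
    "Baseline command: linear_x={base_v:.3f}, angular_z={base_w:.3f}.\n"
    "Limits: 0 <= linear_x <= {v_max:.2f}, |angular_z| <= {w_max:.2f}.\n"
    "{extra_rule}"
    'Return only a JSON object: {{"linear_x": <number>, "angular_z": <number>}}'
)


def build_prompt(state: dict, v_max: float, w_max: float,
                 extra_rule: str = "") -> str:
    """Fill the (hypothetical) template from an /fsm_state-style dict.

    extra_rule is an optional developer-supplied instruction, which is
    the knob that prompt-level behavioral tuning would adjust.
    """
    rule = extra_rule.strip()
    return PROMPT_TEMPLATE.format(
        phase=state["phase"],
        sign_class=state["class"],
        distance=state["distance"],
        offset=state["offset"],
        base_v=state["base_v"],
        base_w=state["base_w"],
        v_max=v_max,
        w_max=w_max,
        extra_rule=(rule + "\n") if rule else "",
    )
```

Changing the approach behavior then amounts to passing a different `extra_rule` string, such as "Move slower within 0.5 m of the target.", without touching the FSM code.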

5. Experimental Setup

This section describes the experimental methodology used to evaluate the proposed framework in terms of navigation performance, real-time behavior, and developer-oriented usability aspects. The protocol is designed to be reproducible in both simulation and real-world settings and includes an ablation study to isolate the contribution of LLM-based semantic guidance from pure signal smoothing.

5.1. Test Environments

Experiments were conducted in two environments to assess sim-to-real consistency:

Simulation (Gazebo): A TurtleBot3 Burger model was equipped with a simulated Intel RealSense D435i. Simulation enables controlled and repeatable trials under identical initial conditions.

Real robot (TurtleBot3 Burger): The same ROS 2 stack was deployed without code changes on a physical TurtleBot3 Burger with a RealSense D435i and an NVIDIA Jetson AGX Orin. This setting captures real-world effects such as sensor noise, illumination changes, wheel slip, and ground friction.

Figure 6 shows the simulation and physical setups.

5.2. Navigation Scenario

A 5 × 5 m Maze Arena (Figure 7) was used in both Gazebo and the physical setup. The layout and placement of traffic signs (left, right, stop) were replicated as closely as possible across domains to ensure comparability. Each trial starts from a fixed initial pose and ends when the robot reaches the action threshold and completes the corresponding maneuver (or when a timeout criterion is triggered; see Section 4).

In both simulation and real-robot experiments, each sign has a fixed, pre-measured pose in the arena coordinate frame. In the simulation, the target point is obtained directly from the Gazebo world model. In the real setup, sign poses are measured once in the arena frame, and the robot pose is estimated in the same frame via the localization stack used in our TurtleBot3 deployment (logged at 50 Hz). This ensures that the final position error compares positions expressed in a consistent coordinate frame across domains.

5.3. Experimental Conditions (Ablation Design)

To assess whether improvements arise from semantic guidance rather than smoothing alone, we compare three controllers:

1. FSM-only (Baseline): The deterministic Finite-State Machine (FSM) without language-based refinement.

2. FSM + smoothing (Control): A purely signal-processing baseline that applies an Exponential Moving Average (EMA) to the FSM command to mimic smoothing effects without LLM input. Specifically, we filter the baseline command with a first-order EMA whose parameter is set to match the fusion weight used in our proposed controller. This yields a fair ablation: both methods apply comparable smoothing strength, but only the proposed method receives semantic guidance from the language model. In general, a smaller EMA parameter reduces smoothing and approaches the raw FSM behavior, while a larger one increases smoothing but may introduce lag and degrade responsiveness near transitions.

3. FSM + LLM (Proposed): The full framework in which the FSM produces the baseline command and the asynchronous LLM suggestions are validated and fused as described in Equation (5).
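The smoothing-only control condition can be illustrated with a short EMA filter over the velocity pair. The convention below, in which `beta` weights the previous output so that a larger `beta` means stronger smoothing and `beta = 0` reproduces the raw FSM command, is an assumption chosen to match the qualitative description of the EMA parameter; the class name and any parameter values are illustrative.

```python
class EmaFilter:
    """First-order exponential moving average over (v, w) commands."""

    def __init__(self, beta: float, initial=(0.0, 0.0)):
        if not 0.0 <= beta < 1.0:
            raise ValueError("beta must lie in [0, 1)")
        self.beta = beta
        self.state = initial

    def update(self, cmd):
        """Blend the previous output with the new raw command."""
        v = self.beta * self.state[0] + (1.0 - self.beta) * cmd[0]
        w = self.beta * self.state[1] + (1.0 - self.beta) * cmd[1]
        self.state = (v, w)
        return self.state
```

Feeding a constant command into `EmaFilter(0.5)` yields outputs that approach the command geometrically, which shows the lag that heavier smoothing introduces near transitions.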

An end-to-end “LLM-only” controller was not included because it cannot meet the real-time and safety requirements of the platform under our on-device inference constraints (in particular, inference latency and the lack of deterministic fallback).

For each environment (simulation and real robot), every condition was run for the same number of trials. During each trial, all relevant topics were logged at 50 Hz.

5.4. Evaluation Metrics

We evaluate the following Key Performance Indicators (KPIs). All metrics are computed per trial and then aggregated across trials.

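Positioning accuracy. The final position error is defined as

$$e_{\mathrm{pos}} = \lVert \mathbf{p}_{\mathrm{final}} - \mathbf{p}_{\mathrm{target}} \rVert_2,$$

where $\mathbf{p}_{\mathrm{final}}$ is the robot position at the stop event and $\mathbf{p}_{\mathrm{target}}$ is the desired stop location.

Trajectory efficiency. The trajectory efficiency is

$$\eta = \frac{L_{\mathrm{opt}}}{L_{\mathrm{traj}}}, \tag{8}$$

where $L_{\mathrm{traj}}$ is the traveled path length (arc-length) obtained from the logged robot pose at 50 Hz. $L_{\mathrm{opt}}$ is computed offline from the known Maze Arena layout as the shortest collision-free path length between the start pose and the target sign pose (using the same map constraints for both simulation and real trials).

Control-loop latency. Let $t_k^{\mathrm{start}}$ denote the start time of the control tick $k$ and $t_k^{\mathrm{pub}}$ the time at which the final velocity command is published. The control-loop latency is

$$\ell_k^{\mathrm{ctrl}} = t_k^{\mathrm{pub}} - t_k^{\mathrm{start}}, \tag{9}$$

and we report summary statistics of $\ell_k^{\mathrm{ctrl}}$ over all ticks in a trial.

Language-model inference latency. Let $t_i^{\mathrm{stamp}}$ be the timestamp embedded in the input state for suggestion $i$, and $t_i^{\mathrm{ready}}$ the time at which the suggestion becomes available after generation and parsing. The inference latency is

$$\ell_i^{\mathrm{LLM}} = t_i^{\mathrm{ready}} - t_i^{\mathrm{stamp}}. \tag{10}$$

Integration rates. We report the suggestion-level acceptance rate,

$$R_{\mathrm{acc}} = \frac{N_{\mathrm{accepted}}}{N_{\mathrm{suggestions}}},$$

and the tick-level utilization rate (the fraction of control ticks in which a validated suggestion is actually applied),

$$R_{\mathrm{util}} = \frac{N_{\mathrm{ticks,applied}}}{N_{\mathrm{ticks}}}.$$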

For data collection and reproducibility, during each trial, we recorded:

FSM states, transitions, detections, and baseline velocity outputs;

LLM prompts, raw outputs, validation outcomes, and fused commands;

Final executed velocities and timestamps for latency analysis.

Post-processing scripts compute trial-level metrics and export the results to CSV. To support reproducibility, all datasets and scripts are available on request.
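A post-processing step for the metrics in this subsection might look like the sketch below. It is not the authors' script: the function names are hypothetical, poses are assumed to be planar (x, y) tuples in the arena frame, and efficiency is taken as the ratio of the shortest collision-free path length to the traveled length, consistent with values below 1 indicating detours.

```python
import math


def final_position_error(p_final, p_target):
    """Euclidean distance between the final position and the target point."""
    return math.hypot(p_final[0] - p_target[0], p_final[1] - p_target[1])


def path_length(poses):
    """Arc length of a logged trajectory given as a list of (x, y) samples."""
    return sum(math.hypot(x1 - x0, y1 - y0)
               for (x0, y0), (x1, y1) in zip(poses, poses[1:]))


def trajectory_efficiency(poses, optimal_length):
    """Shortest-path length divided by traveled length (0.0 if no motion)."""
    traveled = path_length(poses)
    return optimal_length / traveled if traveled > 0 else 0.0


def acceptance_rate(outcomes):
    """Fraction of suggestion outcomes labeled "ok" (suggestion level)."""
    return sum(1 for o in outcomes if o == "ok") / len(outcomes) if outcomes else 0.0


def utilization_rate(applied_flags):
    """Fraction of control ticks on which a validated suggestion was applied."""
    return sum(bool(f) for f in applied_flags) / len(applied_flags) if applied_flags else 0.0
```

Keeping the acceptance rate (per suggestion) and the utilization rate (per tick) as separate functions mirrors the distinction drawn above: one accepted suggestion can be applied on several consecutive ticks under sample-and-hold.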

6. Results and Discussion

This section reports the outcomes of the Maze Arena evaluation conducted in both Gazebo and on the physical TurtleBot3. We compare the deterministic baseline FSM against the proposed framework (FSM+LLM). Unless otherwise stated, results are reported as a pooled summary across the simulation and real-robot trials of each condition to increase statistical power for inferential testing.

6.1. Final Positioning Accuracy

We report the terminal positioning error as defined in Equation (13) (Euclidean distance between the final robot pose at the stopping event and the trial-specific target point). Figure 8 summarizes this error at the stopping event. For each trial, we record the robot’s final planar position at the instant the FSM transitions into the terminal action state (e.g., ACT_STOP) and compare it to the target point defined by the Maze Arena layout (fixed sign pose; see Section 5).

Aggregated over pooled trials per condition, the FSM baseline yields a final position error of 0.246 m, whereas FSM+LLM reduces the error to 0.159 m, corresponding to an approximate 35% reduction.
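The stated reduction of roughly 35% can be checked directly from the two error values reported in the Introduction (0.246 m for the baseline and 0.159 m for FSM+LLM):

```latex
\[
\frac{0.246 - 0.159}{0.246} \;=\; \frac{0.087}{0.246} \;\approx\; 0.354
\quad\Longrightarrow\quad \text{a relative reduction of about } 35\%.
\]
```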

These results indicate that validated LLM suggestions improve approach-phase velocity modulation, yielding more consistent terminal alignment and stopping behavior. Reduced terminal error is particularly valuable for repeatable interaction with landmarks and for downstream tasks that require reliable spatial alignment.

6.2. Trajectory Efficiency

We report trajectory efficiency as defined in Equation (8), and Figure 9 compares it across conditions. As described in Section 5.2, sign poses and robot poses are expressed in the same arena coordinate frame in both simulation and the real setup, so the metric is computed in a consistent coordinate frame across domains.

Pooled across trials, the FSM-only baseline achieves a trajectory efficiency of 0.821, while FSM+LLM reaches 0.901. This improvement is consistent with fewer detours and reduced oscillations during approach and alignment phases. Higher efficiency generally implies shorter travel time and lower energy consumption, which are relevant for embedded deployments.
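As a rough reading of these numbers, and assuming efficiency is the ratio of the shortest path length to the traveled length with the same shortest path in both conditions, the change from 0.821 to 0.901 corresponds to the following relative gain and path-length ratio. The path-length figure is an approximation, since it applies a ratio of pooled values rather than per-trial ratios.

```latex
\[
\frac{0.901 - 0.821}{0.821} \;=\; \frac{0.080}{0.821} \;\approx\; 0.097
\quad (\text{about } 9.7\% \text{ higher efficiency}),
\]
\[
\frac{L_{\text{FSM+LLM}}}{L_{\text{FSM}}} \;\approx\; \frac{0.821}{0.901} \;\approx\; 0.911
\quad (\text{about } 9\% \text{ shorter traveled path}).
\]
```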

The fusion mechanism in Equation (5) introduces a smoothing effect because the FSM updates at 50 Hz, while LLM suggestions arrive at a lower rate (approximately every 200 ms). Consequently, the most recent suggestion is held constant across multiple FSM ticks, which can reduce abrupt command changes. However, the ablation in Section 6.4 indicates that smoothing alone does not account for the full improvement: the LLM-guided controller outperforms an EMA-based smoothing control that applies comparable low-pass behavior without language-derived context.

6.3. Real-Time Behavior and LLM Integration

As defined in Equations (9) and (10), we report the 50 Hz control-loop latency and the LLM inference latency.

Figure 10 reports the control-loop command publication latency for the deterministic 50 Hz loop. Because suggestions are applied asynchronously under a sample-and-hold policy, the LLM inference latency (approximately 186 ms) does not affect the 50 Hz control-loop timing. The FSM continues to publish at every 20 ms tick; LLM outputs, when they arrive, update the held suggestion for subsequent ticks as long as they remain within the TTL.

The additional computation for parsing, validation, and fusion introduces only a small overhead relative to the 20 ms FSM tick budget (approximately 0.2 ms in our measurements) and does not change the deterministic scheduling of the baseline controller. To contextualize where delays arise, we distinguish (i) perception-side latency (camera capture and detector inference), (ii) ROS 2 transport and executor jitter (queuing/scheduling), (iii) LLM inference time, and (iv) validation/fusion overhead. In our measurements, the reported control-loop latency isolates the deterministic actuation path (tick-to-publication), whereas the LLM inference latency captures the end-to-end time from stamped context to a ready-to-consume suggestion.
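The sample-and-hold policy can be sketched as follows. This is a simplified, framework-free illustration rather than the deployed ROS 2 node: the class and field names, the TTL value, and the convex blend with weight `alpha` are assumptions standing in for the fusion rule of Equation (5).

```python
from dataclasses import dataclass
from typing import Optional

TICK_S = 0.02  # 50 Hz control period, as stated in the text


@dataclass
class Suggestion:
    v: float      # suggested linear velocity (m/s)
    stamp: float  # time (s) of the context the suggestion is based on


class SampleAndHoldFuser:
    """Holds the latest validated suggestion and blends it into each tick."""

    def __init__(self, alpha: float, ttl_s: float):
        self.alpha = alpha    # hypothetical fusion weight
        self.ttl_s = ttl_s    # hypothetical freshness TTL (s)
        self._held: Optional[Suggestion] = None

    def on_suggestion(self, suggestion: Suggestion) -> None:
        """Called asynchronously when a validated LLM output arrives."""
        self._held = suggestion

    def tick(self, v_fsm: float, now: float) -> float:
        """Called every control period; never waits on the LLM."""
        held = self._held
        if held is None or now - held.stamp > self.ttl_s:
            return v_fsm  # deterministic fallback to the FSM command
        return (1.0 - self.alpha) * v_fsm + self.alpha * held.v


fuser = SampleAndHoldFuser(alpha=0.5, ttl_s=0.3)
fuser.on_suggestion(Suggestion(v=0.10, stamp=0.0))
# The same held suggestion is reused for every tick until it expires.
commands = [fuser.tick(v_fsm=0.20, now=k * TICK_S) for k in range(20)]
```

Because `tick` only reads the held value, the cost per tick is constant and independent of how long the language model takes to respond, which is the property the latency measurements above rely on.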

6.3.1. Acceptance and Rejection of LLM Suggestions

Figure 11 summarizes the fate of LLM outputs. Here we report suggestion-level statistics (one output per LLM inference) rather than per-tick control-cycle fractions. On average, 81% of LLM outputs pass validation and are fused into the control stream; the remaining outputs are rejected due to missed freshness/deadline constraints (12%), kinematic violations (5%), or schema/parse failures (2%).

While Figure 11 reports the frequency of validation outcomes, the categories would differ substantially in potential safety impact if the validator were absent. Late/stale suggestions (12%) primarily pose a performance risk: applying a command based on outdated perception can induce overshoot or oscillatory corrections, especially near the terminal stopping region. Kinematic violations (5%) are potentially safety-critical because unbounded linear or angular velocity commands may exceed platform limits and cause collisions or loss of stability. Schema/parse failures (2%) are fail-safe in our implementation because malformed outputs cannot be mapped into an actuation command and therefore deterministically trigger fallback. Finally, we observed occasional semantic inconsistencies (rare, and counted among the kinematic/stale rejections when detected), such as suggesting acceleration while distance decreases; these are not necessarily kinematically unsafe but can degrade approach smoothness.

Table 3 summarizes severity, risk without validation, and the mitigation enforced by the proposed architecture.
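The three rejection categories can be illustrated with a small validator. This is a sketch under stated assumptions, not the authors' implementation: the JSON field names (`v`, `w`), the limits `V_MAX` and `W_MAX`, the TTL, and the order of the checks are all hypothetical choices.

```python
import json
import math
from enum import Enum


class Outcome(Enum):
    ACCEPTED = "accepted"
    STALE = "stale"
    KINEMATIC = "kinematic_violation"
    MALFORMED = "schema_or_parse_failure"


V_MAX = 0.22  # illustrative linear velocity limit (m/s)
W_MAX = 2.84  # illustrative angular velocity limit (rad/s)
TTL_S = 0.5   # illustrative freshness TTL (s)


def _as_finite_float(x):
    """Return x as a finite float, or None if it is not a usable number."""
    if isinstance(x, bool) or not isinstance(x, (int, float)):
        return None
    try:
        value = float(x)
    except OverflowError:
        return None
    return value if math.isfinite(value) else None


def validate(raw: str, context_stamp: float, now: float):
    """Classify one raw LLM output; returns (Outcome, (v, w) or None)."""
    try:
        msg = json.loads(raw)
    except json.JSONDecodeError:
        return Outcome.MALFORMED, None
    # Strict schema: exactly the two expected keys, both finite numbers.
    if not isinstance(msg, dict) or set(msg) != {"v", "w"}:
        return Outcome.MALFORMED, None
    v, w = _as_finite_float(msg["v"]), _as_finite_float(msg["w"])
    if v is None or w is None:
        return Outcome.MALFORMED, None
    if now - context_stamp > TTL_S:
        return Outcome.STALE, None
    if not (0.0 <= v <= V_MAX) or abs(w) > W_MAX:
        return Outcome.KINEMATIC, None
    return Outcome.ACCEPTED, (v, w)
```

Every non-accepted outcome returns `None` for the command, so the caller has nothing to fuse and falls back to the FSM command, mirroring the fail-safe behavior described above.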

6.3.2. Velocity Profiles

Figure 12 compares the baseline linear velocity, raw LLM outputs, and fused velocities over a representative trajectory. The FSM baseline exhibits a staircase pattern driven by three discrete velocity setpoints, whereas raw LLM outputs show higher variance and occasional infeasible values. Fusion reduces dispersion while incorporating context-dependent corrections.

Table 4 summarizes descriptive statistics for the velocity commands. While accepted LLM outputs have a higher spread than the baseline, the fused command reduces variance and remains bounded by the admissible limits.

Figure 13 and Figure 14 illustrate representative trajectory overlays. The fused trajectories show smoother re-centering near junctions and smaller terminal deviation relative to the baseline, consistent with the quantitative improvements in final position error and trajectory efficiency.

Overall, these findings reinforce that the LLM component is not suitable as a standalone controller due to occasional infeasible outputs, but it can provide beneficial refinements when combined with strict validation and deterministic fallback.

6.4. Ablation Study: Semantic Guidance vs. Signal Smoothing

To test whether improvements are explained solely by low-pass smoothing, we compare FSM+LLM against a “blind” smoothing control (FSM+EMA) that applies Equation (6) to the baseline command, without any LLM input.

Table 5 summarizes the pooled results. The smoothing control reduces positioning error relative to the raw FSM (0.246 m), consistent with damping oscillations. However, FSM+LLM achieves substantially better performance (0.159 m error and a trajectory efficiency of 0.901), indicating that language-conditioned, context-dependent refinements contribute beyond smoothing alone.
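To make the smoothing-only control concrete, the sketch below applies a standard first-order exponential moving average to a staircase command sequence. The recursion is the textbook EMA and is assumed, not confirmed, to match Equation (6); the parameter name `beta` and the sample setpoints are hypothetical.

```python
def ema_filter(commands, beta, initial=None):
    """First-order EMA: y[k] = (1 - beta) * y[k-1] + beta * x[k].

    `beta` in (0, 1] controls how quickly the output tracks the input.
    If `initial` is None, the filter starts from the first command.
    """
    if not commands:
        return []
    y = commands[0] if initial is None else initial
    smoothed = []
    for x in commands:
        y = (1.0 - beta) * y + beta * x
        smoothed.append(y)
    return smoothed


# Hypothetical staircase of FSM velocity setpoints (m/s) over successive ticks.
staircase = [0.20] * 5 + [0.10] * 5 + [0.0] * 5
smoothed = ema_filter(staircase, beta=0.3)
```

The filter sees only the FSM command itself, so it can soften the steps of the staircase but cannot anticipate when a step should occur. That is the distinction the ablation is designed to isolate.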

6.5. Statistical Analysis of Outcomes

Table 6 reports the pooled outcomes with 95% confidence intervals (CIs). Continuous measures are reported as mean ± SD and 95% t-CIs. For inferential testing, we compare conditions using Welch’s two-sample t-tests (robust to unequal variances) and report Hedges’ g with small-sample correction.

The results support the descriptive trends. The final position error is reduced by approximately 0.087 m on average, and the trajectory efficiency increases substantially, both reflecting large practical effects. Control-loop latency does not differ significantly between conditions, indicating that LLM integration does not compromise deterministic responsiveness.

For Welch’s t-tests, we report the test statistic using the difference (defined as Baseline minus LLM-augmented), so negative t indicates higher values under the LLM-augmented condition.
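For readers who want to reproduce this style of analysis, the sketch below computes Welch's t statistic with Welch-Satterthwaite degrees of freedom and Hedges' g using the usual approximate small-sample correction. It relies only on the Python standard library; the sample values are hypothetical and are not the study's data.

```python
import math
from statistics import mean, variance


def welch_t(a, b):
    """Welch's t statistic and degrees of freedom for mean(a) - mean(b)."""
    na, nb = len(a), len(b)
    sa, sb = variance(a) / na, variance(b) / nb  # squared standard errors
    t = (mean(a) - mean(b)) / math.sqrt(sa + sb)
    df = (sa + sb) ** 2 / (sa ** 2 / (na - 1) + sb ** 2 / (nb - 1))
    return t, df


def hedges_g(a, b):
    """Standardized mean difference with small-sample bias correction."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    d = (mean(a) - mean(b)) / math.sqrt(pooled_var)
    correction = 1.0 - 3.0 / (4.0 * (na + nb) - 9.0)
    return correction * d


# Hypothetical efficiency samples: baseline first, LLM-augmented second.
baseline = [0.80, 0.82, 0.83, 0.81, 0.84]
augmented = [0.89, 0.91, 0.90, 0.92, 0.88]
t_stat, dof = welch_t(baseline, augmented)
g = hedges_g(baseline, augmented)
```

With the baseline passed first, a negative `t_stat` indicates higher values under the LLM-augmented condition, matching the sign convention stated above.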

7. Limitations and Generalizability

This study validates the proposed architecture in a controlled maze scenario with three traffic-sign classes and mostly static conditions. While the deterministic FSM baseline and fallback preserve safety by construction (the control loop never blocks on language inference, and all suggestions are bounded and validated), several limitations affect generalizability and performance.

Scenario complexity. The current setup does not include moving obstacles or dense dynamic interactions. In highly dynamic environments, performance gains may diminish when the environment changes faster than the language-model update rate.

Perception uncertainty. The framework assumes reasonably reliable sign detection and range estimation. Under incomplete or contradictory sensor data (false positives/negatives, intermittent detections, or biased depth), the validator preserves feasibility, but semantic refinements may become less consistent. Coupling the approach with uncertainty-aware gating and explicit confidence fields is a necessary extension.

Action space and semantic scalability. Scaling beyond a small sign vocabulary requires an explicit command ontology and state-conditioned prompt templates to preserve auditability as the semantic space grows. Stronger structured interfaces (e.g., schema-constrained decoding or tool-like actions) can further reduce format violations.

Platform dependence. The selection of freshness TTL and overall feasibility depend on measured on-device latency distributions and concurrent workloads (e.g., perception). Porting to different hardware requires re-profiling and re-tuning of timing and bounds.

8. Conclusions and Future Work

This paper presents a plug-and-play architecture that augments a deterministic Finite-State Machine with asynchronous language-model suggestions, subject to strict validation and freshness constraints. The safety-critical controller maintains a 50 Hz loop and never blocks on language inference; instead, candidate suggestions are accepted only when they satisfy a strict schema, remain fresh, and respect explicit kinematic bounds, and are then fused with the baseline command.

Across Gazebo simulation and real-TurtleBot3 experiments, the proposed controller improved navigation outcomes without increasing control-loop latency. The final position error decreased from 0.246 m to 0.159 m and the trajectory efficiency increased from 0.821 to 0.901. Approximately 80% of generated suggestions passed validation and were integrated, while late, malformed, or out-of-bounds outputs were safely rejected and replaced by the deterministic fallback. The ablation against a smoothing-only baseline further indicates that improvements are not explained solely by low-pass filtering, but by opportunistic semantic modulation during approach phases.

The method’s main practical implication is an interpretable integration pattern for edge robotics: language-based guidance can be incorporated without compromising deterministic actuation, and behavior tuning can be performed at the prompt level rather than by rewriting control code. At the same time, broader generalization depends on scenario complexity, perception reliability, and platform-dependent timing constraints, as discussed in Section 7.

Future work will extend evaluation to more diverse and dynamic environments (moving obstacles, occlusions, lighting changes), expand semantic command sets through an explicit action ontology, and study sensitivity to fusion weight, prompt paraphrasing, and freshness policies. Additionally, we will explore constrained decoding and lightweight instruction-tuned models to further improve structured-output reliability under embedded constraints.

To isolate the effect of the proposed asynchronous architecture (validation, fusion, and deterministic fallback), we kept the prompt template fixed and therefore do not claim robustness to prompt paraphrasing. Minor rewordings may change the quality of generated suggestions and, consequently, schema validity, validator acceptance rates, and downstream navigation metrics. Importantly, safety does not rely on prompt wording: all suggestions are gated by strict JSON parsing, kinematic admissibility checks, and temporal freshness/TTL constraints, so prompt changes may affect performance but cannot bypass the safety envelope or fallback behavior. A systematic prompt-robustness evaluation is left for future work. It will generate semantically equivalent paraphrases of the prompt (minor syntactic rewordings preserving the same control intent) and report variability across paraphrases in (i) schema-valid rate, (ii) validator acceptance/rejection breakdown, and (iii) navigation outcomes (e.g., final position error and trajectory efficiency) with confidence intervals.