1. Introduction

A core challenge in exploratory data analysis is producing two-dimensional views that are both locally faithful and globally interpretable. Popular methods include Principal Component Analysis (PCA) [1], Uniform Manifold Approximation and Projection (UMAP) [2], and t-distributed Stochastic Neighbor Embedding (t-SNE) [3]. Among these, t-SNE is widely adopted for preserving local neighborhoods, which makes clusters visually salient. However, optimizing the Kullback–Leibler (KL) divergence in the standard objective has known side effects: it tends to under-represent global relationships (inter-cluster layout, long-range structure), is sensitive to a few large $p_{ij}$ values, and its asymmetry makes it more punitive for missing close neighbors than for misplacing distant ones. These behaviors can limit interpretability when cluster arrangement and global trends matter.

Understanding the tension between local and global fidelity is critical in many practical applications. For example, in single-cell biology, similar cell types may form distinct clusters that should still reflect developmental trajectories; meanwhile, in image retrieval and language modeling, globally meaningful structure supports semantic grouping and hierarchy discovery. Existing methods have sought to balance this trade-off: f-divergence variants of t-SNE re-weight neighborhood probabilities to adjust local sensitivity, while global structure-aware algorithms such as UMAP introduce additional terms that encourage distant clusters to retain relative positioning. Despite these efforts, there remains no simple formulation that simultaneously enforces local ranking consistency and global geometric alignment within the original t-SNE optimization framework.

To address this gap, this work introduces two enhanced formulations that extend the t-SNE framework: (1) Max-Flipped KL Divergence and (2) KL–Wasserstein Loss. Each method is designed to mitigate specific shortcomings of the original t-SNE formulation while improving the separation and interpretability of resulting visualizations.

First, Max-Flipped KL Divergence augments the traditional KL divergence with an additional term that focuses on discrepancies in similarity rankings. By explicitly penalizing violations in expected similarity orderings, this extension improves the model’s sensitivity to subtle structural variations and enhances separation between distinct groups.

Second, KL–Wasserstein Loss introduces a hybrid objective that combines the KL divergence with the classic Wasserstein distance. This integration leverages the Wasserstein metric’s ability to capture smooth, geometry-aware transport between distributions, thus reinforcing the separation between clusters while preserving meaningful local arrangements.

In contrast to prior t-SNE extensions based on alternative f-divergences such as Jensen–Shannon, reverse KL, and other symmetric formulations [4, 5, 6, 7, 8], as well as OT-regularized or transport-based embedding methods [9, 10, 11, 12, 13, 14], the proposed Max-Flipped KL and KL–Wasserstein objectives differ in two fundamental aspects. Max-Flipped KL introduces a similarity-ranking inversion mechanism that directly penalizes deviations from the local maximum probability, a behavior not captured by symmetric or alternative f-divergence objectives [7, 8]. KL–Wasserstein incorporates a direct Wasserstein alignment term in the embedding space, unlike sliced-OT or entropy-regularized OT variants that operate in the data space or rely on smoothed surrogate distances [9, 11, 12]. This distinction allows our formulation to jointly address local ranking fidelity and global geometric consistency.

Recent work in representation learning has shown that preserving semantic relationships in complex data is essential for obtaining globally coherent embeddings. For example, robust feature representation methods for human parsing in complex scenes [15] emphasize maintaining consistent global structure, which is conceptually aligned with our objective of enhancing global structure consistency through the Wasserstein term. In addition, robustness to geometric transformations has been studied in other domains, such as light-field image watermarking. Techniques designed to withstand multidimensional geometric attacks [16] emphasize the importance of preserving structural consistency under complex transformations. Although the application domain differs, this broader theme is conceptually aligned with our motivation for incorporating the Wasserstein distance to strengthen the geometric structure preservation capability of the embedding.

Together, these considerations motivate the need for embedding objectives that more faithfully preserve both local and global relationships, a goal that the proposed Max-Flipped KL and KL–Wasserstein formulations are specifically designed to address.

2. t-Distributed Stochastic Neighbour Embedding (t-SNE)

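t-SNE is a widely adopted method for visualizing high-dimensional data. It relies on the KL divergence to align probability distributions in the original high-dimensional space with those in the low-dimensional embedding. The primary goal of t-SNE is to minimize the divergence between two distributions:

- (1) The high-dimensional probability distribution $P = \{p_{ij}\}$, which models the similarity between points $x_i$ and $x_j$ using a Gaussian kernel:

$$p_{j|i} = \frac{\exp\left(-\|x_i - x_j\|^2 / 2\sigma_i^2\right)}{\sum_{k \neq i} \exp\left(-\|x_i - x_k\|^2 / 2\sigma_i^2\right)}, \tag{1}$$

where $\sigma_i$ is a local bandwidth parameter. The final symmetric joint probability, for $n$ data points, is given by:

$$p_{ij} = \frac{p_{j|i} + p_{i|j}}{2n}. \tag{2}$$

- (2) The low-dimensional probability distribution $Q = \{q_{ij}\}$, modeled with a Student’s t-distribution to account for distant similarities:

$$q_{ij} = \frac{\left(1 + \|y_i - y_j\|^2\right)^{-1}}{\sum_{k \neq l} \left(1 + \|y_k - y_l\|^2\right)^{-1}}. \tag{3}$$

The optimization objective in t-SNE is to minimize the KL divergence between $P$ and $Q$:

$$KL(P \,\|\, Q) = \sum_{i \neq j} p_{ij} \log \frac{p_{ij}}{q_{ij}}. \tag{4}$$

This ensures that the low-dimensional representation preserves the pairwise similarities of the original space as accurately as possible. The gradient of the KL divergence with respect to the low-dimensional embeddings is:

$$\frac{\partial KL}{\partial y_i} = 4 \sum_{j} (p_{ij} - q_{ij})\,(y_i - y_j)\left(1 + \|y_i - y_j\|^2\right)^{-1}. \tag{5}$$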

This gradient is used to iteratively update the embeddings via gradient descent, ensuring the local structure is well preserved.
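The similarity distributions, the KL objective, and its gradient described above can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions, not the implementation used in this work: the function names are hypothetical, the bandwidths are passed in directly rather than found by a perplexity search, and early exaggeration and momentum are omitted.

```python
import numpy as np

def conditional_p(X, sigma):
    """Row-stochastic p_{j|i} from a Gaussian kernel, one bandwidth per point."""
    d2 = np.sum((X[:, None, :] - X[None, :, :]) ** 2, axis=-1)
    logits = -d2 / (2.0 * sigma[:, None] ** 2)
    np.fill_diagonal(logits, -np.inf)             # exclude k = i from the sum
    logits -= logits.max(axis=1, keepdims=True)   # numerical stability
    P = np.exp(logits)
    return P / P.sum(axis=1, keepdims=True)

def joint_p(X, sigma):
    """Symmetrized joint probabilities p_ij = (p_{j|i} + p_{i|j}) / (2n)."""
    P_cond = conditional_p(X, sigma)
    return (P_cond + P_cond.T) / (2.0 * X.shape[0])

def joint_q(Y):
    """Student-t joint probabilities q_ij and the unnormalized kernel w_ij."""
    d2 = np.sum((Y[:, None, :] - Y[None, :, :]) ** 2, axis=-1)
    W = 1.0 / (1.0 + d2)
    np.fill_diagonal(W, 0.0)
    return W / W.sum(), W

def kl_divergence(P, Q):
    """KL(P || Q) over the entries where p_ij > 0."""
    mask = P > 0
    return float(np.sum(P[mask] * np.log(P[mask] / Q[mask])))

def kl_gradient(P, Y):
    """Gradient 4 * sum_j (p_ij - q_ij) w_ij (y_i - y_j) for every point i."""
    Q, W = joint_q(Y)
    coeff = (P - Q) * W
    return 4.0 * (coeff.sum(axis=1, keepdims=True) * Y - coeff @ Y)
```

A plain gradient-descent step would then be `Y -= learning_rate * kl_gradient(P, Y)`, where `learning_rate` is a hypothetical step size chosen by the user.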

Although t-SNE is highly effective for clustering and visualizing high-dimensional data, its dependence on the KL divergence introduces several well-documented drawbacks. First, because the KL objective primarily focuses on minimizing local reconstruction errors, it tends to emphasize neighborhood-level relationships while neglecting large-scale structures such as hierarchies, inter-cluster layouts, and global trends [17, 18, 19]. As a result, clusters that are meaningfully related in the original space may appear arbitrarily separated or distorted in two-dimensional projections, reducing interpretability in tasks that depend on global context. Second, the asymmetric nature of the KL divergence makes it more sensitive to underestimations in $q_{ij}$ than to overestimations, causing t-SNE to penalize missing close neighbors far more severely than misplacing distant ones. This imbalance strengthens local compactness but further weakens the preservation of global geometry. Addressing these limitations motivates our proposed extensions, which explicitly target both local ranking consistency and global geometric alignment.
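The asymmetry can be seen numerically from a single term $p \log(p/q)$ of the KL sum. The probabilities below are illustrative values chosen for this sketch, not results from the experiments.

```python
import math

def kl_term(p, q):
    """Contribution p * log(p / q) of a single pair to KL(P || Q)."""
    return p * math.log(p / q)

# Missed neighbor: similar in the data (large p) but far apart in the embedding (small q).
missed_neighbor = kl_term(p=0.4, q=0.04)

# False neighbor: dissimilar in the data (small p) but close in the embedding (large q).
false_neighbor = kl_term(p=0.04, q=0.4)

print(f"missed neighbor term: {missed_neighbor:.3f}")
print(f"false neighbor term:  {false_neighbor:.3f}")
```

With these values the missed neighbor contributes about 0.92 to the objective, while the false neighbor contributes about -0.09, a tenfold difference in magnitude. Individual terms can be negative even though the full sum is non-negative.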

3. Wasserstein Distance

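The p-Wasserstein distance ($W_p$) quantifies the minimum “effort” required to transport probability mass from one distribution to another over a metric space $(\mathcal{X}, d)$. It unifies continuous (density-based) and discrete (empirical) settings under the same optimal transport (OT) framework [20].

Given probability measures P and Q on $\mathcal{X}$, the p-Wasserstein distance is

$$W_p(P, Q) = \left( \inf_{\gamma \in \Gamma(P, Q)} \int_{\mathcal{X} \times \mathcal{X}} d(x, y)^p \, d\gamma(x, y) \right)^{1/p}, \tag{6}$$

where $\Gamma(P, Q)$ is the set of couplings (transport plans) with the correct marginals:

$$\Gamma(P, Q) = \left\{ \gamma \in \mathcal{P}(\mathcal{X} \times \mathcal{X}) : \gamma(A \times \mathcal{X}) = P(A),\ \gamma(\mathcal{X} \times B) = Q(B) \ \text{for all Borel } A, B \right\}. \tag{7}$$

When P is absolutely continuous with respect to the Lebesgue measure, the Kantorovich problem admits a map solution in many cases. The Monge formulation searches for a measurable transport map $T : \mathcal{X} \to \mathcal{X}$ pushing P to Q (denoted $T_{\#}P = Q$; i.e., $Q(A) = P(T^{-1}(A))$ for all Borel A):

$$W_p^p(P, Q) = \inf_{T :\, T_{\#}P = Q} \int_{\mathcal{X}} d(x, T(x))^p \, dP(x). \tag{8}$$

For $p = 2$ on $\mathbb{R}^d$, [21] shows that the optimal map exists and is the gradient of a convex potential (under mild conditions), linking (8) and (6).

In one dimension, the optimal map is monotone. Let $F_P$ and $F_Q$ be the CDFs of P and Q, and $F_P^{-1}$, $F_Q^{-1}$ their quantile functions. Then

$$W_p^p(P, Q) = \int_0^1 \left| F_P^{-1}(u) - F_Q^{-1}(u) \right|^p \, du. \tag{9}$$

Equivalently, $T = F_Q^{-1} \circ F_P$ yields the Monge map and recovers (9) via (8).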

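For discrete/empirical measures, let $P = \sum_{i=1}^{n} a_i \delta_{x_i}$ and $Q = \sum_{j=1}^{m} b_j \delta_{y_j}$ with $a_i, b_j \ge 0$, $\sum_i a_i = \sum_j b_j = 1$. Writing $C_{ij} = d(x_i, y_j)^p$, the OT problem becomes a finite linear program:

$$W_p^p(P, Q) = \min_{\pi \in \mathbb{R}_{\ge 0}^{n \times m}} \sum_{i,j} \pi_{ij} C_{ij} \quad \text{s.t.} \quad \sum_{j} \pi_{ij} = a_i, \quad \sum_{i} \pi_{ij} = b_j. \tag{10}$$

This discrete formulation is the Kantorovich linear program [22]. When $n = m$ and $a_i = b_j = 1/n$, (10) searches over doubly-stochastic $n\pi$. In one dimension with uniform weights, sorting the supports gives a closed form:

$$W_p^p(P, Q) = \frac{1}{n} \sum_{i=1}^{n} \left| x_{(i)} - y_{(i)} \right|^p, \tag{11}$$

where $x_{(1)} \le \dots \le x_{(n)}$ and $y_{(1)} \le \dots \le y_{(n)}$.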
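The sorted closed form for one-dimensional samples with uniform weights can be checked against a brute-force search over assignments. The sketch below is illustrative only; both function names are hypothetical, equal sample sizes are assumed, and the brute-force variant is feasible only for very small $n$.

```python
from itertools import permutations

def wasserstein_1d(xs, ys, p=1):
    """W_p between two uniform empirical measures on the line, via sorting."""
    if len(xs) != len(ys):
        raise ValueError("this sketch assumes equal sample sizes")
    n = len(xs)
    cost = sum(abs(a - b) ** p for a, b in zip(sorted(xs), sorted(ys))) / n
    return cost ** (1.0 / p)

def wasserstein_bruteforce(xs, ys, p=1):
    """Same quantity by enumerating every one-to-one assignment (tiny n only)."""
    n = len(xs)
    best = min(
        sum(abs(xs[i] - ys[j]) ** p for i, j in enumerate(perm))
        for perm in permutations(range(n))
    )
    return (best / n) ** (1.0 / p)

xs = [0.0, 2.0, 5.0]
ys = [1.0, 6.0, 3.0]
print(wasserstein_1d(xs, ys, p=1))  # sorted pairs (0,1), (2,3), (5,6) each differ by 1
```

Enumerating permutations suffices here because, with equal uniform weights, the linear program attains its optimum at a permutation matrix.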

For general $d$, (10) has $nm$ variables and is typically solved by specialized LP/OT solvers. Exact network-simplex methods scale superlinearly (often near-cubic in n in practice), while entropic regularization leads to fast Sinkhorn matrix scaling with $O(nm)$ work per iteration. In one dimension, computing $W_p$ reduces to sorting (cost $O(n \log n)$) and then linear time; if samples are pre-sorted, the evaluation is $O(n)$.
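To make the per-iteration cost of Sinkhorn scaling concrete, the following sketch alternates the two matrix-vector products that dominate each iteration. It is a minimal illustration, not a solver used in this work: the function name and the parameters `reg` and `n_iter` are assumptions, and the result approximates the entropically regularized problem rather than the exact linear program.

```python
import numpy as np

def sinkhorn_plan(a, b, C, reg=0.5, n_iter=200):
    """Approximate transport plan between weights a (n,) and b (m,) for cost C (n, m)."""
    K = np.exp(-C / reg)            # Gibbs kernel; small reg may underflow
    u = np.ones_like(a)
    for _ in range(n_iter):
        v = b / (K.T @ u)           # match column marginals: one O(nm) product
        u = a / (K @ v)             # match row marginals: one O(nm) product
    return u[:, None] * K * v[None, :]

rng = np.random.default_rng(0)
x = rng.normal(size=(5, 2))
y = rng.normal(size=(4, 2))
C = np.linalg.norm(x[:, None, :] - y[None, :, :], axis=-1)
C = C / C.max()                     # rescale costs so exp(-C / reg) stays well-conditioned
a = np.full(5, 1 / 5)
b = np.full(4, 1 / 4)
plan = sinkhorn_plan(a, b, C)
approx_cost = float(np.sum(plan * C))
```

Smaller values of `reg` bring `approx_cost` closer to the unregularized optimum but need more iterations and, in practice, a log-domain formulation for numerical stability.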

Equations (6)–(10) provide a unified framework for both continuous measures (via maps or plans) and discrete empirical distributions (via finite couplings), while the one-dimensional case (9)–(11) admits closed-form solutions [23].

4. Proposed Methods

This section introduces two methods that directly target the well-known limitations of t-SNE. Standard t-SNE’s asymmetric KL objective overemphasizes missed neighbors, tolerates false ones, and thus causes crowding and unreliable global geometry. We address this with Max-Flipped KL, which enforces bidirectional fidelity and reduces false neighbors, and with KL–Wasserstein, which preserves global structure via a transport-aware term. Together, these objectives lower MSE, stabilize embeddings, and yield clearer, more interpretable separations with more trustworthy inter-cluster distances for downstream analysis.

4.1. Max-Flipped KL Divergence

The proposed Max-Flipped KL divergence builds on the original KL loss by introducing an additional term that focuses on differences in similarity rankings. By capturing deviations from the highest similarity values in both the high-dimensional and low-dimensional spaces, it ensures that not just the raw probabilities, but also their relative importance, are preserved. This enhancement enables a more balanced embedding that represents relationships between pairs of neighbors rather than focusing on a single dominant relation.

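The Max-Flipped KL divergence is defined as the standard KL divergence, provided in Equation (4), plus a weighted flipped term computed from the deviations of $p_{ij}$ and $q_{ij}$ from the largest probabilities in their respective distributions. Both sets of deviations are subsequently normalized so that they sum to one for each i.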

This transformation effectively “flips” the focus of the divergence from comparing probabilities directly to measuring their deviations from the maximum probabilities within their respective distributions. Instead of emphasizing the values of $p_{ij}$ or $q_{ij}$ themselves, the flipped term prioritizes the distances of $p_{ij}$ and $q_{ij}$ from their most probable counterparts, the largest $p$ and $q$ values in each distribution.

The gradient of the Max-Flipped KL divergence with respect to the low-dimensional embeddings $y_i$ is the sum of the gradients of the two components. The gradient of the KL term is given in Equation (5). Using the Student-t kernel $\left(1 + \|y_i - y_j\|^2\right)^{-1}$ and the standard t-SNE force decomposition, the contribution of the flipped term takes the same force form with the flipped probabilities substituted for $p_{ij}$ and $q_{ij}$. Combining (16) with (17) and the standard KL term yields the final expression.

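To avoid undefined terms when a zero entry in one flipped distribution meets a positive entry in the other, we (i) apply the same $\varepsilon$-floor to both flipped distributions, and (ii) compute the flipped term on the union of active indices per row (equivalently, after flooring-and-renormalization all entries are strictly positive). With these two steps, the flipped term is well-defined.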
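The flooring-and-renormalization step can be sketched as below. The flip itself is written here as "row maximum minus entry", which is only one plausible reading of the description above and should be treated as an assumption; the function names, the default floor `eps`, and the absence of any special handling for diagonal entries are likewise choices made for this illustration, not the authors' code.

```python
import numpy as np

def flip_rows(P, eps=1e-12):
    """ASSUMPTION: flipped value = distance of each entry from its row maximum."""
    flipped = P.max(axis=1, keepdims=True) - P      # zero at the row maximum
    flipped = np.maximum(flipped, eps)              # same floor for every entry
    return flipped / flipped.sum(axis=1, keepdims=True)

def flipped_kl(P, Q, eps=1e-12):
    """Sum of row-wise KL divergences between the flipped, renormalized rows."""
    Pf, Qf = flip_rows(P, eps), flip_rows(Q, eps)
    return float(np.sum(Pf * np.log(Pf / Qf)))

rng = np.random.default_rng(0)
P = rng.random((5, 5))
P /= P.sum(axis=1, keepdims=True)
Q = rng.random((5, 5))
Q /= Q.sum(axis=1, keepdims=True)
print(flipped_kl(P, Q))
```

Because every floored entry is strictly positive, the logarithm and the ratio are always defined, including at the position of the row maximum where the unfloored flipped value is exactly zero.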

4.2. KL–Wasserstein Loss

The proposed KL–Wasserstein objective augments the standard t-SNE loss with a transport-based regularization term. Formally, the combined loss is the sum of the KL term and a weighted Wasserstein term, where the KL term is the conventional similarity-based divergence (Equation (4)). The Wasserstein component captures global geometric discrepancies between the original data $X$ and the embedded points $Y$. In our formulation, we evaluate the first Wasserstein distance $W_1$ between the empirical measures of $X$ and $Y$, computed using the wasserstein_distance_nd routine from scipy.stats. This computation is applied on mini-batches and incurs only moderate cost.

Because wasserstein_distance_nd provides only the scalar distance, we estimate its gradient with respect to the embedding Y using a stochastic finite-difference approximation inspired by SPSA. Small random perturbations are applied to Y, and the resulting changes in $W_1$ are used to approximate its gradient with respect to Y.

For reference, an analytic subgradient can be written for an explicit optimal assignment $\pi$, either exactly or in a smoothed form that avoids division by zero while converging to the true subgradient as the smoothing parameter tends to zero. In practice, because recomputing $\pi$ at each iteration is computationally expensive, our implementation relies on the finite-difference estimator instead of the analytic form.
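An SPSA-style finite-difference estimator of the kind described above can be sketched as follows. The function name, the perturbation size `c`, the number of random directions `n_samples`, and the quadratic test loss are assumptions made for illustration; the estimator treats the loss as a black box, so any scalar-valued callable of the embedding could be passed in.

```python
import numpy as np

def spsa_gradient(loss, Y, c=1e-3, n_samples=8, rng=None):
    """Average of two-sided SPSA estimates of d loss / d Y."""
    rng = np.random.default_rng() if rng is None else rng
    grad = np.zeros_like(Y, dtype=float)
    for _ in range(n_samples):
        delta = rng.choice([-1.0, 1.0], size=Y.shape)     # Rademacher perturbation
        diff = loss(Y + c * delta) - loss(Y - c * delta)  # two loss evaluations
        grad += diff / (2.0 * c) * delta                  # 1 / delta equals delta for +-1 entries
    return grad / n_samples

def quadratic(Z):
    """Stand-in loss with known gradient 2 * Z, used only to check the estimator."""
    return float(np.sum(Z ** 2))

Y = np.array([[1.0, -2.0], [0.5, 3.0]])
estimate = spsa_gradient(quadratic, Y, n_samples=20000, rng=np.random.default_rng(0))
```

Each sample costs two loss evaluations regardless of the size of `Y`, which is what makes the approach attractive when the loss is only available as a scalar routine. The estimate is noisy for small `n_samples`, so in an optimizer it would typically be combined with a modest step size.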

The KL and Wasserstein terms contribute complementary geometric information to the embedding. The KL divergence induces short-range attractive forces that preserve local neighborhood relations, becoming increasingly influential as clusters form. In contrast, the Wasserstein component provides global structural guidance by encouraging each embedded point $y_i$ to align with its high-dimensional counterpart.

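To illustrate their interaction, consider the gradient of the combined objective, which is the sum of the KL gradient and the weighted Wasserstein (sub)gradient. The KL gradient takes the well-known t-SNE form,

$$\frac{\partial KL}{\partial y_i} = 4 \sum_{j} (p_{ij} - q_{ij})\,(y_i - y_j)\left(1 + \|y_i - y_j\|^2\right)^{-1},$$

which strengthens local attraction whenever $p_{ij} > q_{ij}$.

In contrast, away from assignment changes, a valid subgradient of the Wasserstein term pulls each embedded point toward its high-dimensional origin. This long-range signal counteracts crowding and improves the global layout.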

Although the two forces may point in different directions at times, their effects are complementary. The Wasserstein component typically dominates in the early iterations, promoting a globally coherent structure, while the KL term refines local neighborhoods as optimization progresses. Consequently, the combined objective achieves both improved global organization and enhanced local fidelity compared with standard t-SNE.

5. Experiments

This section evaluates the performance of the proposed methods, Max-Flipped KL and KL–Wasserstein, across a variety of datasets. We organize the experiments as follows: (i) we first evaluate the impact of the weighting parameters of the two losses on the quality of the embeddings; (ii) we then examine the evolution of the loss function across optimization iterations; (iii) next, we compare the clustering and structure preservation performance of Max-Flipped KL and KL–Wasserstein against the KL baseline using quantitative metrics; and (iv) finally, we provide qualitative assessments through 2D visualizations of the resulting embeddings. All experiments were conducted on a MacBook Pro (13-inch, M1, 2020) running macOS Sequoia 15.1 with an Apple M1 chip and 8 GB of RAM. These hardware specifications are provided to contextualize the computational performance of the proposed methods.

5.1. Datasets

We conducted experiments on a diverse collection of benchmark datasets, including Pendigits [24], MNIST [25], Fashion-MNIST [26], COIL-20 [27], and Olivetti Faces [28]. These datasets vary in terms of structure, modality, and complexity, allowing us to evaluate the effectiveness of our proposed methods under different distributional settings.

MNIST contains 70,000 handwritten digit images (28 × 28 pixels, grayscale), evenly distributed across 10 classes. It is widely used for evaluating clustering quality in embedding spaces.

Pendigits comprises 1797 instances of pen-based digit trajectories (8 × 8 grayscale images) representing digits 0 to 9, each assigned to one of 10 classes.

Fashion-MNIST includes 70,000 grayscale images (28 × 28 pixels) of clothing items from 10 fashion categories. Compared to MNIST, this dataset presents more subtle visual variations between classes.

Olivetti Faces consists of 400 grayscale facial images (64 × 64 pixels) of 40 individuals, with 10 different facial expressions or lighting conditions per subject. This introduces intra-class variation and is suitable for testing embedding robustness.

COIL-20 contains 1440 grayscale images of 20 objects taken from 72 different viewpoints (5-degree increments), introducing continuous rotational changes. Successful embeddings must preserve the geometric progression of these views.

In contrast, synthetic datasets such as Swiss Roll and Three Gaussians provide controlled environments for evaluating structure preservation. Real-world datasets present more complex distributions. MNIST, Pendigits, and Fashion-MNIST, composed of grayscale images with well-separated categories, serve as benchmarks for cluster fidelity. Olivetti Faces challenges embeddings with variation in lighting and expression, while COIL-20 emphasizes the need for preserving smooth geometric transformations.

5.2. Evaluation Metrics

We assess the performance of the K-means algorithm across various embedding spaces using both external and internal validation metrics. The external metrics include the F1-score [29, 30] and Normalized Mutual Information (NMI) [31], both of which yield scores from 0 to 1, where higher values indicate better alignment with ground truth labels. For internal validation, we use the Davies–Bouldin Index (DBI) [32], which quantifies cluster compactness and separation. Lower DBI values indicate better clustering performance.
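As an illustration of the internal metric, the Davies–Bouldin Index can be computed directly from an embedding and a set of cluster labels. The sketch below follows the standard definition (mean distance to centroid as the scatter, Euclidean distance between centroids as the separation); the function name and the toy data are assumptions for this example and are not taken from the experiments.

```python
import numpy as np

def davies_bouldin(X, labels):
    """Mean over clusters of the worst (scatter_i + scatter_j) / separation_ij ratio."""
    labels = np.asarray(labels)
    ids = np.unique(labels)
    centroids = np.array([X[labels == k].mean(axis=0) for k in ids])
    # scatter_k: average distance of the points in cluster k to their centroid
    scatter = np.array([
        np.linalg.norm(X[labels == k] - c, axis=1).mean()
        for k, c in zip(ids, centroids)
    ])
    sep = np.linalg.norm(centroids[:, None, :] - centroids[None, :, :], axis=-1)
    np.fill_diagonal(sep, np.inf)                 # exclude j == i from the maximum
    ratios = (scatter[:, None] + scatter[None, :]) / sep
    return float(ratios.max(axis=1).mean())

rng = np.random.default_rng(0)
blob = rng.normal(size=(50, 2))
labels = np.array([0] * 50 + [1] * 50)
far = np.vstack([blob, blob + np.array([10.0, 0.0])])    # well-separated clusters
near = np.vstack([blob, blob + np.array([2.0, 0.0])])    # overlapping clusters
dbi_far = davies_bouldin(far, labels)
dbi_near = davies_bouldin(near, labels)
```

Moving the second cluster five times closer leaves the scatter unchanged and shrinks the separation by a factor of five, so `dbi_near` is five times `dbi_far`. This also shows that the index depends only on geometry, never on ground-truth labels.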

5.3. Parameter Sensitivity

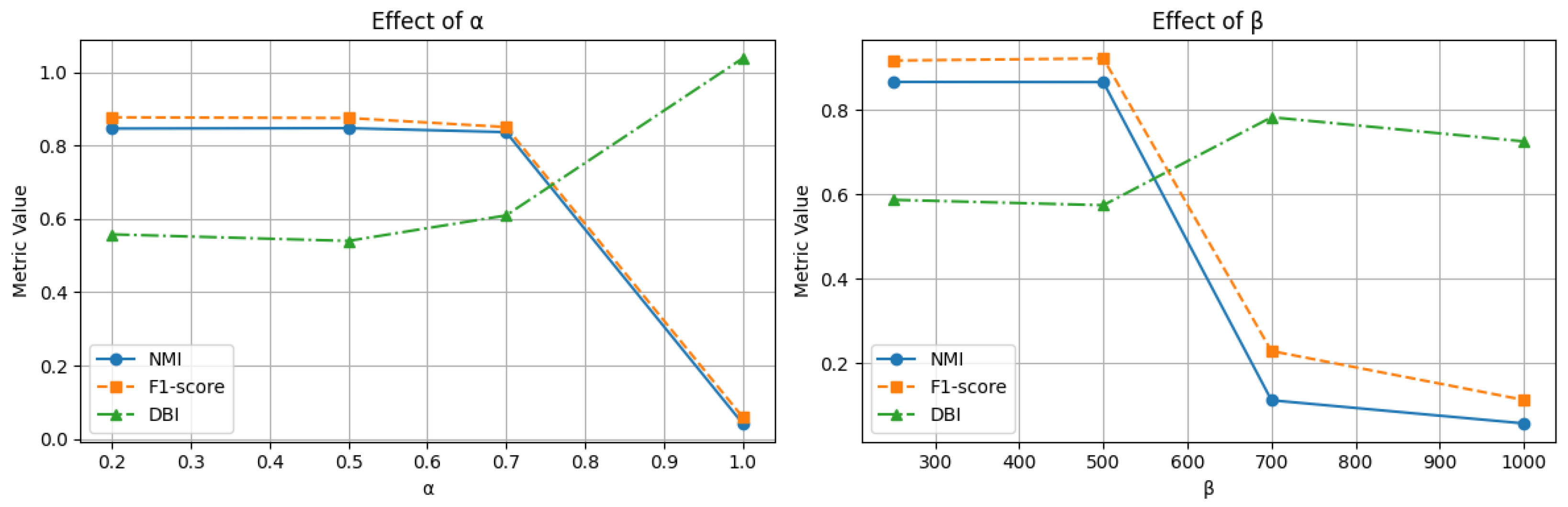

To analyze the influence of parameter weighting in our proposed loss functions, we conducted a sensitivity analysis of the flipped-term weight (for Max-Flipped KL) and the Wasserstein-term weight (for KL–Wasserstein) on two datasets: Pendigits and COIL-20. We evaluated both quantitative results using NMI, F1-score, and DBI, as well as qualitative 2D visualizations of the embeddings.

The full sensitivity sweep of both weights was performed only on Pendigits and COIL-20. These two datasets were selected because they represent complementary geometric regimes: discrete, well-separated clusters and smooth manifold structure. These regimes are known to generalize the behavior of neighbor-embedding objectives on high-dimensional image datasets such as MNIST and Fashion-MNIST. In practice, we found that applying the same parameter ranges (– and –500) to the remaining datasets produced stable embeddings without degradation, suggesting that these values are reasonably robust across datasets.

5.3.1. Effect on NMI and F1-Score

Figure 1 and Figure 2 illustrate how NMI and F1-score change as the two weights vary. For the Pendigits dataset, NMI initially increases slightly as the flipped-term weight moves from 0.2 to 0.5, then drops sharply as the weight grows further. This suggests that a moderate influence from the flipped term enhances class separability, while excessive emphasis leads to embedding degradation.

A similar trend is observed with the Wasserstein-term weight: NMI and F1 peak at –500, before degrading at larger values. These results indicate that both loss functions require careful parameter tuning to balance structural preservation and noise amplification. In the COIL-20 dataset, the best NMI and F1 are likewise reached at moderate values of both weights, and performance drops at higher values, indicating that over-regularization may suppress the dataset’s inherent geometric continuity.

5.3.2. Effect on DBI and Interpretation

Interestingly, the DBI values for COIL-20 remain nearly constant across parameter changes, even when NMI and F1 degrade significantly (see Figure 2). This suggests that while the semantic alignment with class labels is affected, the geometric structure of the clusters remains stable. This follows directly from how the DBI is defined: it evaluates clustering quality based on intra-cluster compactness and inter-cluster separation, without relying on ground truth labels.

In contrast, for Pendigits (Figure 1), DBI trends align closely with NMI and F1. Since Pendigits consists of discrete digit classes, degradation in the embedding affects both structural and label-based clustering quality.

These differences highlight that DBI is more suitable for assessing structure-aware embeddings, particularly in datasets like COIL-20, where the class boundaries follow a smooth, continuous transformation (e.g., object rotation). The model may preserve structural integrity (low DBI) even as class labels become less distinguishable (low NMI/F1), especially under high values of either weight.

5.3.3. Effect on Embedding Structure

These trends are reflected in the visualizations in Figure 3 and Figure 4. In Pendigits (Figure 3), increasing the flipped-term weight initially improves cluster tightness, but at larger values the layout collapses or degenerates into scattered blobs. Similar degradation is observed for large values of the Wasserstein-term weight.

For COIL-20 (Figure 4), low values of both weights produce visually clean and well-separated loops corresponding to object rotations. At higher values, the embeddings become tangled, yet the overall shape and spacing between clusters are partially preserved, explaining why DBI remains low despite declining NMI and F1.

5.3.4. Summary

Moderate parameter values (–, –500) consistently yield the best trade-off between local fidelity and global structure, in terms of both external (NMI, F1) and internal (DBI) metrics. The near-constant DBI observed in COIL-20 underscores that structural cluster quality can remain high even when class-label agreement is weakened, an important insight for applications involving manifold or rotational structures. These findings reinforce the value of DBI as a robust structure-preservation metric and highlight the importance of understanding dataset-specific geometry when tuning model parameters.

5.4. Convergence Analysis

The Kernel Density Estimation (KDE) plots in Figure 5 show slightly higher loss values for the proposed losses. These increases are expected because the objectives include additional structural terms, and a higher numerical loss does not imply degraded embedding quality. Instead, the added terms in Max-Flipped KL and KL–Wasserstein help guide the optimization toward embeddings that are more structurally meaningful. This is supported by the generally lower DBI scores and stronger clustering metrics (e.g., NMI and F1-score) achieved by our methods across most datasets.

The KDE plots, based on 50 independent runs per method and dataset, reveal that both Max-Flipped KL and KL–Wasserstein exhibit tighter and more symmetric loss distributions compared to the standard KL divergence. This reflects greater optimization stability and reduced sensitivity to random initialization, suggesting that the proposed methods converge more consistently to robust minima.

To statistically verify these observations, we employed two non-parametric tests, the Kolmogorov–Smirnov (KS) test and the Mann–Whitney U test, because they make minimal distributional assumptions, are robust to outliers and skew, and provide complementary sensitivity: KS detects any distributional change (location, scale, or shape), while Mann–Whitney focuses on whether values in one group tend to exceed those in the other [33, 34]. The results, reported in Table 1, show that for all dataset comparisons, the p-values are below the significance threshold of 0.05. This confirms that the differences in loss distributions between KL and our proposed losses are statistically significant.
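The two test statistics can be computed in a few lines, which makes their complementary sensitivity easier to see. The sketch below computes only the statistics, under hypothetical function names; p-values such as those in Table 1 would in practice come from a statistics library (for example `scipy.stats.ks_2samp` and `scipy.stats.mannwhitneyu`), and the sample values are invented for illustration.

```python
import numpy as np

def ks_statistic(a, b):
    """Two-sample KS statistic: largest gap between the two empirical CDFs."""
    a, b = np.sort(a), np.sort(b)
    grid = np.concatenate([a, b])
    cdf_a = np.searchsorted(a, grid, side="right") / a.size
    cdf_b = np.searchsorted(b, grid, side="right") / b.size
    return float(np.max(np.abs(cdf_a - cdf_b)))

def mann_whitney_u(a, b):
    """U statistic of sample a: pairs (x in a, y in b) with x > y, ties counted as 1/2."""
    a, b = np.asarray(a), np.asarray(b)
    greater = (a[:, None] > b[None, :]).sum()
    ties = (a[:, None] == b[None, :]).sum()
    return float(greater + 0.5 * ties)

baseline_losses = [1.0, 1.2, 1.1, 1.3]      # invented values for illustration
proposed_losses = [1.6, 1.5, 1.7, 1.8]      # invented values for illustration
ks = ks_statistic(baseline_losses, proposed_losses)
u = mann_whitney_u(proposed_losses, baseline_losses)
```

For these fully separated samples the KS statistic is 1 and U equals the number of pairs (16), the most extreme values either statistic can take.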

These findings further strengthen our conclusion that Max-Flipped KL and KL–Wasserstein not only improve the quality of low-dimensional embeddings in terms of structure preservation and cluster separation, but also provide more stable and reproducible optimization behavior.

5.5. Computational Complexity Analysis

To evaluate the practical feasibility of the proposed loss functions, we measured the total execution time required to optimize the embeddings on four benchmark datasets. Table 2 summarizes the runtime (in seconds) of standard t-SNE with KL divergence and the modified versions using Max-Flipped KL and KL–Wasserstein.

As expected, both Max-Flipped KL and KL–Wasserstein introduce additional computational overhead compared to the baseline. This increase stems from the extra components in the objective function: the ranking-deviation term in Max-Flipped KL and the Wasserstein term in KL–Wasserstein. However, this added cost is relatively modest.

Importantly, the improvements in embedding quality, reflected in superior clustering scores, lower DBI values, and increased visual interpretability, justify the modest increase in runtime. The structure-aware regularization terms provide better guidance during optimization, resulting in embeddings that are both more informative and more stable, without incurring prohibitive computational costs.

5.6. Comprehensive Evaluation of Clustering, Structure, and Reconstruction

This section evaluates the proposed divergence measures, Max-Flipped KL and KL–Wasserstein, against the standard KL divergence in terms of clustering quality, structural preservation, and reconstruction accuracy. We apply the methods across six benchmark datasets with varying characteristics, including synthetic and real-world data.

5.6.1. Clustering Metrics

As shown in Table 3, both proposed losses outperform the standard KL divergence in terms of F1-score and NMI across most datasets. On simpler datasets such as 3-Gaussians, all methods perform comparably. However, on more challenging datasets like Pendigits, MNIST, and COIL-20, Max-Flipped KL and KL–Wasserstein achieve clear improvements. For instance, on Pendigits, the NMI improves from 0.833 to 0.872 and the F1-score from 0.852 to 0.908. These enhancements demonstrate better alignment between the embedded space and the underlying class structure.

5.6.2. Internal Clustering Quality

The DBI is used to assess cluster compactness and separation. As shown in Table 3, both Max-Flipped KL and KL–Wasserstein generally yield lower DBI scores than the KL baseline, indicating tighter and more distinct clusters. For instance, on the COIL-20 dataset, the DBI decreases from 0.637 (KL baseline) to 0.600, reflecting improved structural grouping. However, the slightly lower DBI of the baseline on Fashion-MNIST can be attributed to that dataset’s simple, compact cluster structure: its local neighborhoods are already well defined, favoring methods that prioritize local cohesion. In contrast, our proposed methods emphasize global geometric alignment, so a small trade-off in intra-cluster compactness is expected.

5.6.3. Visual Inspection of Embeddings

Figure 6 provides qualitative comparisons of the 2D embeddings produced by each method. Embeddings generated using KL–Wasserstein show more distinct and well-separated clusters, especially in datasets with complex manifolds like Swiss Roll and COIL-20. The consistency and continuity in structure indicate that KL–Wasserstein effectively captures long-range relationships in the data. Meanwhile, Max-Flipped KL produces embeddings with sharper cluster boundaries and reduced within-cluster variance, showing improvements over the standard KL divergence in terms of local structure.

5.6.4. Out-of-Sample and Reconstruction Quality

To address the out-of-sample limitation inherent in t-SNE and its variants, a Multi-Layer Perceptron (MLP) regressor was employed to approximate the mapping from high-dimensional input data to their corresponding low-dimensional embeddings. Separate models were trained using embeddings generated by t-SNE with the KL, Max-Flipped KL, and KL–Wasserstein loss functions. Once trained, the MLP enables fast and efficient projection of new, unseen data points into the embedding space without rerunning the full optimization procedure, making the method more scalable and practical for dynamic or streaming scenarios.

The quality of these learned mappings was evaluated using the MSE between the predicted embeddings and the original embeddings produced by each method. As shown in Table 4, both Max-Flipped KL and KL–Wasserstein achieve lower MSE than the standard KL-based t-SNE (the best-performing variant and its MSE are reported in Table 4). This indicates stronger generalization capabilities and more faithful preservation of the underlying data structure. The standard asymmetric KL primarily penalizes missed neighbors and can tolerate false neighbors, leading to crowding and global distortions, whereas Max-Flipped KL penalizes discrepancies in both directions, reducing spurious attractions and better aligning local and global relationships. Additionally, KL–Wasserstein augments the objective with a Wasserstein term that encourages globally coherent layouts, further lowering reconstruction error.

To further assess the preservation of structural and semantic features, a reconstruction experiment was conducted using the Olivetti Faces dataset. Figure 7 shows that the embeddings derived from Max-Flipped KL and KL–Wasserstein allow for the recovery of faces with clearer contours, sharper expressions, and reduced artifacts. In contrast, reconstructions from KL-based embeddings suffer from noticeable blurring and loss of detail. These qualitative and quantitative results support the advantage of the proposed loss functions in preserving essential information for both embedding quality and out-of-sample extension.

6. Advantages, Limitations, and Applications

6.1. Advantages

The proposed loss functions, Max-Flipped KL and KL–Wasserstein, offer several advantages over the KL divergence in the context of dimensionality reduction and data visualization:

Improved Similarity Ranking: The introduction of Max-Flipped KL enhances the model’s ability to preserve similarity rankings, making the embeddings more consistent with the underlying class structure.

Better Reconstruction Quality: Both loss functions reduce reconstruction error (as reflected in lower MSE values), and the visual results demonstrate sharper and more accurate data recovery.

Robustness Across Datasets: Empirical results show consistent performance gains on a variety of datasets, including structured (e.g., Swiss Roll) and real-world datasets (e.g., MNIST, Olivetti Faces).

6.2. Limitations

Despite the demonstrated benefits, the proposed approaches also have certain limitations:

Increased Computational Complexity: Integrating Wasserstein distance or ranking-based terms into the loss functions introduces additional computational overhead relative to standard t-SNE. Specifically, Max-Flipped KL adds pairwise comparisons with an additional ranking step that marginally increases runtime (about 10%), while KL–Wasserstein incorporates a batch-wise Wasserstein distance term whose complexity depends on m, the number of random directions, and b, the batch size.

Hyperparameter Sensitivity: Parameters such as the exaggeration factor or weighting coefficients require tuning, which may limit generalizability without cross-validation.

6.3. Applications

The proposed loss functions and enhancements to t-SNE are well-suited for many applications:

Bioinformatics and Genomics: For interpreting gene expression data or cell populations where subtle structures (e.g., differentiation trajectories) matter.

Facial Recognition and Image Retrieval: Improved clustering and reconstruction quality make these methods effective for feature extraction and identity preservation.

Out-of-Sample Generalization: One of the key limitations of traditional t-SNE lies in its inability to naturally handle out-of-sample data. Since the algorithm lacks an explicit mapping function, incorporating new data points requires re-running the full embedding process, which is computationally expensive and unsuitable for dynamic or streaming environments.

7. Discussion

This work set out to address two well-known limitations of t-SNE’s KL-based objective, namely its emphasis on local neighborhoods at the expense of global structure and its asymmetric sensitivity, by introducing the Max-Flipped KL divergence and the KL–Wasserstein loss. Across synthetic and real-world datasets, our experiments show that both objectives improve cluster separability and structural clarity relative to standard KL, with KL–Wasserstein most consistently enhancing long-range organization and Max-Flipped KL sharpening local neighborhoods. These trends are reflected quantitatively (higher NMI/F1 and lower or comparable DBI) and qualitatively in the 2D embeddings. Notably, modest increases in aggregate loss values for the proposed objectives do not translate to degraded embeddings; instead, they arise from additional structure-aware terms that guide the optimizer toward configurations that better respect the data geometry.

Our findings align with and extend prior observations that alternative divergences or geometry-aware criteria can remedy KL’s biases in neighbor embedding. By explicitly penalizing rank deviations (Max-Flipped KL) and by incorporating transport geometry (KL–Wasserstein), the proposed losses reconcile local fidelity with broader organization. In particular, KL–Wasserstein leverages Wasserstein’s smooth, geometry-aware transport to better capture manifold continuity (e.g., COIL-20 rotations), while Max-Flipped KL reduces within-cluster variance by enforcing consistency among the highest-similarity relationships. The resulting embeddings exhibit clearer global layouts without sacrificing the hallmark local detail that makes t-SNE appealing.

A practical contribution of this study is guidance on hyperparameter regimes. Sensitivity analyses indicate that moderate values, – for the flipped-term weight and –500 for the Wasserstein-term weight, strike a robust balance between local ranking fidelity and global structure, with performance typically degrading if either term dominates the objective. Interestingly, COIL-20’s DBI remains relatively stable even when NMI/F1 decline at high values of either weight, suggesting that structure-only metrics can under- or over-estimate semantic separability when manifold continuity drives class organization. This divergence between internal and external indices highlights the importance of reporting both metric families and of visually inspecting embeddings when the data have strong geometric factors.

From an optimization perspective, both Max-Flipped KL and KL–Wasserstein demonstrate tighter, more symmetric loss distributions over repeated runs and statistically significant differences relative to the KL baseline (KS and Mann–Whitney U tests, $p < 0.05$ across datasets), indicating improved robustness to initialization. While the added terms introduce a modest runtime overhead, the computational cost remains practical on commodity hardware and is offset by gains in stability and interpretability. Moreover, out-of-sample mapping via a simple MLP shows lower reconstruction MSE, suggesting that the learned embeddings expose structure that is easier to approximate with smooth predictors, a property valuable for streaming or incremental use cases.

Limitations remain; for example, the methods introduce additional hyperparameters and, for KL–Wasserstein, require approximations to make transport terms tractable at scale. Although our experiments cover diverse datasets, broader evaluations (e.g., high-throughput single-cell data or large-vocabulary text embeddings) could further validate generality. In addition, while we observed that higher composite losses need not imply worse visualization quality, a deeper theoretical characterization of the proposed objectives’ minima and their relationship to cluster topology would be valuable.

For future work, several avenues follow naturally: (i) combine Max-Flipped KL and KL–Wasserstein into a single objective to jointly enforce ranking and transport geometry, potentially with adaptive weighting during training; (ii) explore scalable transport surrogates (e.g., sliced or linearized optimal transport) tailored to neighbor-embedding kernels to further reduce overhead; (iii) integrate label information in a semi-supervised variant to explicitly balance semantic and geometric structure; (iv) develop principled, data-dependent schedules for the two weights based on online diagnostics (e.g., changes in DBI vs. NMI), reducing manual tuning; and (v) extend the out-of-sample mapping with uncertainty estimation to flag projections likely to deviate from the manifold learned by the embedding.