Towards Adaptive Adverse Weather Removal via Semantic and Low-Level Visual Perceptual Priors

Abstract

1. Introduction

- We propose a VLM-guided prior extraction pipeline that explicitly produces (i) weather-type perception, (ii) compact semantic tags, and (iii) global/local restoration instructions, which are encoded as semantic and perceptual priors.

- We develop AWR-VIP, a unified adverse weather removal framework that performs restoration by conditioning a UNet-based backbone on the extracted global and local priors, enabling weather-adaptive and content-aware enhancement.

- We design two complementary prior injection mechanisms: the global prior modulates the affine parameters of layer normalization for image-level adaptation, while the local prior guides a cross-attention module to refine key semantic regions.

- Extensive experiments on combined hazy/rainy/snowy benchmarks demonstrate that AWR-VIP achieves state-of-the-art performance. Moreover, the extracted priors are plug-and-play and can be integrated into existing restoration backbones to further improve their performance.

2. Related Work

2.1. Adverse Weather Deep Learning

2.2. Language-Driven Image Restoration

3. AWR-VIP

3.1. Motivation

3.2. Semantic and Low-Level Prior Extraction Pipeline

3.2.1. VLM Configuration

Algorithm 1: Primary Tag Selection

Require: Low-quality image, pre-trained DAPE, and the vocabulary.
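One plausible primary-tag selection rule can be sketched as follows. The sketch assumes that DAPE returns one confidence score per vocabulary entry. Tags that clear a confidence threshold are kept, and only the few most confident survive, which matches the goal of producing compact semantic tags. The function name `select_primary_tags`, the `threshold` and `max_tags` parameters, and the thresholding rule itself are hypothetical. They are not taken from Algorithm 1.

```python
from typing import List, Sequence


def select_primary_tags(
    probs: Sequence[float],
    vocabulary: Sequence[str],
    threshold: float = 0.5,  # hypothetical confidence cut-off
    max_tags: int = 5,       # hypothetical cap that keeps the tag set compact
) -> List[str]:
    """Hypothetical primary-tag selection over per-tag DAPE confidences."""
    if len(probs) != len(vocabulary):
        raise ValueError("probs and vocabulary must have the same length")
    # Keep only tags whose confidence clears the threshold.
    candidates = [(p, tag) for p, tag in zip(probs, vocabulary) if p >= threshold]
    # Most confident first; the sort is stable, so ties keep vocabulary order.
    candidates.sort(key=lambda pt: pt[0], reverse=True)
    return [tag for _, tag in candidates[:max_tags]]
```

For example, `select_primary_tags([0.9, 0.2, 0.7, 0.6], ["car", "sky", "road", "tree"], max_tags=2)` returns `["car", "road"]`.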

3.2.2. Accurate and Fine-Grained Weather Perceiving

3.2.3. Primary Tag Selection

3.2.4. Flexible Semantic Elements and Controllable Inference

Algorithm 2: VLM-guided Prior Extraction Pipeline

Require: Low-quality image, frozen VLM (LLaVA-1.5), pre-trained DAPE, CLIP text encoder, and primary tag selection (Algorithm 1).
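The data flow of Algorithm 2 can be outlined as below, under several assumptions. The frozen VLM, DAPE, the tag-selection step, and the CLIP text encoder are passed in as plain callables. The prompts are invented placeholders. The split into a global prior (weather type plus global instruction) and a local prior (primary tags plus local instruction) is inferred from the contributions list and is not confirmed by the paper. All function, class, and field names are hypothetical.

```python
from dataclasses import dataclass
from typing import Any, Callable, List, Sequence

WEATHER_TYPES = ("hazy", "rainy", "snowy")


@dataclass
class WeatherPriors:
    weather_type: str
    primary_tags: List[str]
    global_prior: Any  # text-encoder output, e.g. an embedding
    local_prior: Any


def extract_priors(
    image: Any,
    query_vlm: Callable[[Any, str], str],           # stand-in for a frozen VLM such as LLaVA-1.5
    dape_scores: Callable[[Any], Sequence[float]],  # stand-in for DAPE: one score per vocabulary entry
    vocabulary: Sequence[str],
    select_tags: Callable[[Sequence[float], Sequence[str]], List[str]],  # Algorithm 1
    encode_text: Callable[[str], Any],              # stand-in for the CLIP text encoder
) -> WeatherPriors:
    # (i) Weather-type perception: ask a constrained question and map the answer to a known label.
    answer = query_vlm(image, "Is this image hazy, rainy, or snowy? Answer with one word.")
    answer = answer.strip().lower()
    weather = next((w for w in WEATHER_TYPES if w in answer), "unknown")

    # (ii) Compact semantic tags: score the vocabulary with DAPE, then keep the primary tags.
    tags = select_tags(dape_scores(image), vocabulary)
    tag_text = ", ".join(tags)

    # (iii) Global and local restoration instructions, conditioned on (i) and (ii).
    global_instr = query_vlm(image, f"The image is {weather}. How should the whole image be restored?")
    local_instr = query_vlm(
        image, f"The image is {weather} and shows {tag_text}. How should these regions be restored?"
    )

    # Encode into priors (assumed split: global = weather + global instruction,
    # local = primary tags + local instruction).
    return WeatherPriors(
        weather_type=weather,
        primary_tags=tags,
        global_prior=encode_text(f"{weather}. {global_instr}"),
        local_prior=encode_text(f"{tag_text}. {local_instr}"),
    )
```

The components are injected as callables so the orchestration can be checked with lightweight stubs before the real VLM and encoders are wired in.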

3.3. Priors Guided Adverse Weather Removal Network

3.3.1. Overview

3.3.2. Layer Norm (LN) Modulated by Global Prior
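The contributions state that the global prior modulates the affine parameters of layer normalization. A minimal NumPy sketch of that idea predicts a per-channel scale and shift from a global prior vector and applies them after a parameter-free normalization. The class name `GlobalPriorLN`, the two linear projections, the identity-centred scale (`1 + ...`), and the prior dimension are assumptions made for illustration. This is not the authors' implementation.

```python
import numpy as np


def layer_norm(x: np.ndarray, eps: float = 1e-6) -> np.ndarray:
    """Normalize over the channel (last) axis without any affine parameters."""
    mu = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mu) / np.sqrt(var + eps)


class GlobalPriorLN:
    """Hypothetical LN whose scale and shift are predicted from a global prior vector."""

    def __init__(self, prior_dim: int, channels: int, rng: np.random.Generator):
        # Two small linear maps from the prior embedding to per-channel gamma and beta.
        self.w_gamma = rng.normal(0.0, 0.02, size=(prior_dim, channels))
        self.b_gamma = np.zeros(channels)
        self.w_beta = rng.normal(0.0, 0.02, size=(prior_dim, channels))
        self.b_beta = np.zeros(channels)

    def __call__(self, x: np.ndarray, prior: np.ndarray) -> np.ndarray:
        # x: (..., C) image tokens; prior: (prior_dim,) global prior embedding.
        gamma = 1.0 + prior @ self.w_gamma + self.b_gamma  # scale centred at identity
        beta = prior @ self.w_beta + self.b_beta
        return layer_norm(x) * gamma + beta


rng = np.random.default_rng(0)
ln = GlobalPriorLN(prior_dim=8, channels=4, rng=rng)
tokens = rng.normal(size=(16, 4))  # e.g. a 4x4 feature map flattened to 16 tokens
out = ln(tokens, rng.normal(size=8))
print(out.shape)  # (16, 4)
```

The predicted scale is centred at 1, so a zero prior leaves the normalized features unchanged. Adaptive-normalization layers often make this choice so that conditioning starts near the identity mapping.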

3.3.3. Instruction-Guided Cross-Attention (IGCA)
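The local prior guides a cross-attention module to refine key semantic regions. This path can be sketched in NumPy under two assumptions. First, image features act as queries. Second, the tokenized local prior (for example, text-encoder token embeddings of the local instruction) supplies keys and values, and a residual connection adds the attended result back to the features. The single-head design, the random projection initialisation, and every name below are illustrative assumptions. The actual IGCA design may differ.

```python
import numpy as np


def softmax(z: np.ndarray, axis: int = -1) -> np.ndarray:
    z = z - z.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)


class InstructionCrossAttention:
    """Hypothetical single-head cross-attention: image tokens attend to local-prior tokens."""

    def __init__(self, channels: int, text_dim: int, rng: np.random.Generator):
        scale = 1.0 / np.sqrt(channels)
        self.w_q = rng.normal(0.0, scale, size=(channels, channels))
        self.w_k = rng.normal(0.0, scale, size=(text_dim, channels))
        self.w_v = rng.normal(0.0, scale, size=(text_dim, channels))
        self.w_o = rng.normal(0.0, scale, size=(channels, channels))

    def __call__(self, feats: np.ndarray, prior_tokens: np.ndarray):
        # feats: (N, C) flattened image features; prior_tokens: (T, D) local-prior tokens.
        q = feats @ self.w_q         # (N, C)
        k = prior_tokens @ self.w_k  # (T, C)
        v = prior_tokens @ self.w_v  # (T, C)
        attn = softmax(q @ k.T / np.sqrt(q.shape[-1]), axis=-1)  # (N, T), rows sum to 1
        refined = feats + (attn @ v) @ self.w_o  # residual refinement of the features
        return refined, attn


rng = np.random.default_rng(0)
igca = InstructionCrossAttention(channels=16, text_dim=32, rng=rng)
refined, attn = igca(rng.normal(size=(64, 16)), rng.normal(size=(7, 32)))
print(refined.shape, attn.shape)  # (64, 16) (64, 7)
```

Each image location receives a weighted mix of prior tokens. Regions whose features align with a tag or local instruction therefore receive the strongest guidance, while the residual path preserves the original features elsewhere.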

4. Experiments

4.1. Experiment Settings

4.2. Performances and Comparisons

4.2.1. Quantitative Results

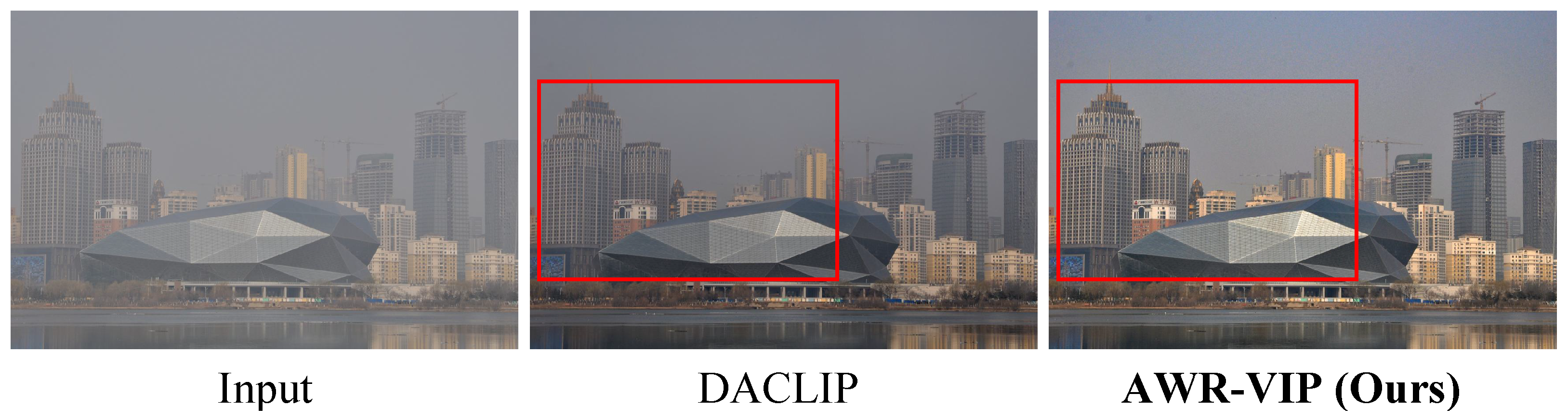

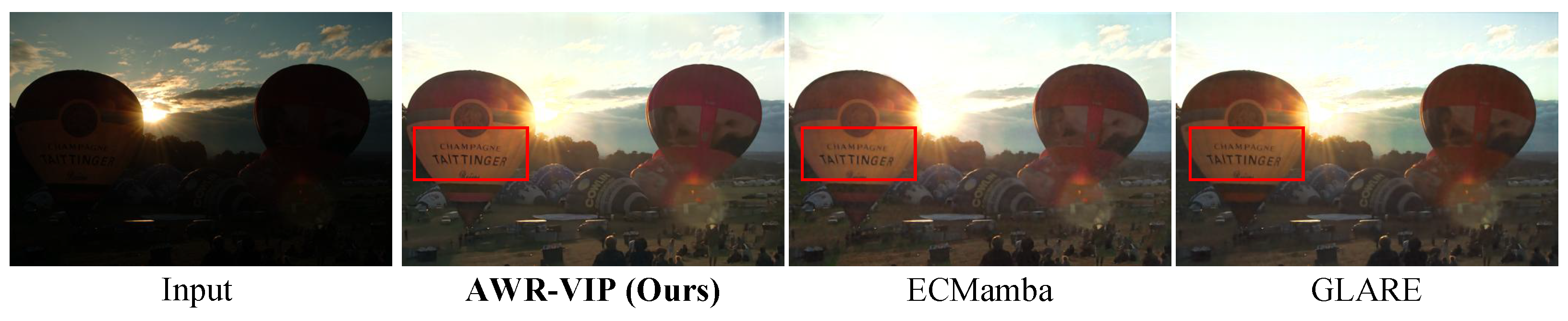

4.2.2. Qualitative Results

4.3. Ablation Study

4.4. Computational Efficiency

4.5. Evaluations on Real-World Data and Generalization to More Restoration Tasks

4.5.1. Visual Comparisons with DACLIP on Real Hazy Images

4.5.2. Generalization to Broader Restoration Tasks

4.5.3. Performance on Extreme Weather Conditions

4.6. Incorporating Conventional Low-Level Priors: Blur Scalar Guidance

4.6.1. Blur Scalar Estimation

4.6.2. Integration into AWR-VIP

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| CNN | Convolutional Neural Network |
| DDPM | Denoising Diffusion Probabilistic Models |
| AWR | Adverse Weather Restoration |
| OOD | Out-of-Distribution |
| SOTA | State-of-the-Art |
| VLM | Vision-Language Model |
| LN | Layer Norm |
| IGCA | Instruction-Guided Cross-Attention |
| SCA | Simplified Cross Attention |
| PSNR | Peak Signal-to-Noise Ratio |
| SSIM | Structural Similarity Index Measure |

References

- Zang, S.; Ding, M.; Smith, D.; Tyler, P.; Rakotoarivelo, T.; Kaafar, M.A. The impact of adverse weather conditions on autonomous vehicles: How rain, snow, fog, and hail affect the performance of a self-driving car. IEEE Veh. Technol. Mag. 2019, 14, 103–111.

- Li, R.; Cheong, L.-F.; Tan, R.T. Heavy rain image restoration: Integrating physics model and conditional adversarial learning. In 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: Piscataway, NJ, USA, 2019; pp. 1633–1642.

- Zhang, R.; Yu, J.; Chen, J.; Li, G.; Lin, L.; Wang, D. A Prior Guided Wavelet-Spatial Dual Attention Transformer Framework for Heavy Rain Image Restoration. IEEE Trans. Multimed. 2024, 26, 7043–7057.

- Chen, W.T.; Fang, H.Y.; Hsieh, C.L.; Tsai, C.C.; Chen, I.; Ding, J.J.; Kuo, S.Y. All snow removed: Single image desnowing algorithm using hierarchical dual-tree complex wavelet representation and contradict channel loss. In 2021 IEEE/CVF International Conference on Computer Vision (ICCV); IEEE: Piscataway, NJ, USA, 2021; pp. 4196–4205.

- Zhang, K.; Li, R.; Yu, Y.; Luo, W.; Li, C. Deep dense multi-scale network for snow removal using semantic and depth priors. IEEE Trans. Image Process. 2021, 30, 7419–7431.

- Li, B.; Peng, X.; Wang, Z.; Xu, J.; Feng, D. AOD-Net: All-in-one dehazing network. In 2017 IEEE International Conference on Computer Vision (ICCV); IEEE: Piscataway, NJ, USA, 2017; pp. 4770–4778.

- Cai, B.; Xu, X.; Jia, K.; Qing, C.; Tao, D. DehazeNet: An end-to-end system for single image haze removal. IEEE Trans. Image Process. 2016, 25, 5187–5198.

- Li, B.; Gou, Y.; Liu, J.Z.; Zhu, H.; Zhou, J.T.; Peng, X. Zero-shot image dehazing. IEEE Trans. Image Process. 2020, 29, 8457–8466.

- Song, Y.; He, Z.; Qian, H.; Du, X. Vision transformers for single image dehazing. IEEE Trans. Image Process. 2023, 32, 1927–1941.

- Krizhevsky, A.; Sutskever, I.; Hinton, G.E. ImageNet classification with deep convolutional neural networks. In Advances in Neural Information Processing Systems 25 (NIPS 2012); Curran Associates, Inc.: Red Hook, NY, USA, 2012; Volume 25, pp. 1097–1105.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. In Advances in Neural Information Processing Systems 30 (NIPS 2017); Curran Associates, Inc.: Red Hook, NY, USA, 2017; Volume 30, pp. 5998–6008.

- Ho, J.; Jain, A.; Abbeel, P. Denoising diffusion probabilistic models. In Advances in Neural Information Processing Systems 33 (NeurIPS 2020); Curran Associates, Inc.: Red Hook, NY, USA, 2020; Volume 33, pp. 6840–6851.

- Fu, X.; Huang, J.; Ding, X.; Liao, Y.; Paisley, J. Clearing the skies: A deep network architecture for single-image rain removal. IEEE Trans. Image Process. 2017, 26, 2944–2956.

- Zhou, H.; Dong, W.; Chen, J. LITA-GS: Illumination-Agnostic Novel View Synthesis via Reference-Free 3D Gaussian Splatting and Physical Priors. In 2025 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: Piscataway, NJ, USA, 2025; pp. 21580–21589.

- Li, R.; Tan, R.T.; Cheong, L.-F. All in one bad weather removal using architectural search. In 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: Piscataway, NJ, USA, 2020; pp. 3175–3185.

- Zhu, Y.; Wang, T.; Fu, X.; Yang, X.; Guo, X.; Dai, J.; Qiao, Y.; Hu, X. Learning weather-general and weather-specific features for image restoration under multiple adverse weather conditions. In 2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: Piscataway, NJ, USA, 2023; pp. 21747–21758.

- Valanarasu, J.M.J.; Yasarla, R.; Patel, V.M. TransWeather: Transformer-based restoration of images degraded by adverse weather conditions. In 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: Piscataway, NJ, USA, 2022; pp. 2353–2363.

- Ye, T.; Chen, S.; Bai, J.; Shi, J.; Xue, C.; Jiang, J.; Yin, J.; Chen, E.; Liu, Y. Adverse weather removal with codebook priors. In 2023 IEEE/CVF International Conference on Computer Vision (ICCV); IEEE: Piscataway, NJ, USA, 2023; pp. 12653–12664.

- Yang, H.; Pan, L.; Yang, Y.; Liang, W. Language-driven All-in-one Adverse Weather Removal. In 2024 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: Piscataway, NJ, USA, 2024; pp. 24902–24912.

- Potlapalli, V.; Zamir, S.W.; Khan, S.; Khan, F.S. PromptIR: Prompting for All-in-One Blind Image Restoration. In Advances in Neural Information Processing Systems 36 (NeurIPS 2023); Curran Associates, Inc.: Red Hook, NY, USA, 2023; Volume 36, pp. 71275–71293.

- Xu, Y.; Gao, N.; Zhong, Y.; Chao, F.; Ji, R. Unified-Width Adaptive Dynamic Network for All-In-One Image Restoration. arXiv 2024, arXiv:2401.13221.

- Hu, J.; Jin, L.; Yao, Z.; Lu, Y. Universal Image Restoration Pre-training via Degradation Classification. arXiv 2025, arXiv:2501.15510.

- Jiang, Y.; Zhang, Z.; Xue, T.; Gu, J. AutoDir: Automatic all-in-one image restoration with latent diffusion. In Computer Vision–ECCV 2024; Springer: Cham, Switzerland, 2024; pp. 340–359.

- Luo, Z.; Gustafsson, F.K.; Zhao, Z.; Sjölund, J.; Schön, T.B. Controlling vision-language models for universal image restoration. arXiv 2023, arXiv:2310.01018.

- Zeng, H.; Wang, X.; Chen, Y.; Su, J.; Liu, J. Vision-Language Gradient Descent-driven All-in-One Deep Unfolding Networks. In 2025 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: Piscataway, NJ, USA, 2025; pp. 7524–7533.

- Dong, W.; Zhou, H.; Wang, R.; Liu, X.; Zhai, G.; Chen, J. DehazeDCT: Towards Effective Non-Homogeneous Dehazing via Deformable Convolutional Transformer. In 2024 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW); IEEE: Piscataway, NJ, USA, 2024; pp. 6405–6414.

- Zhou, H.; Dong, W.; Liu, Y.; Chen, J. Breaking Through the Haze: An Advanced Non-Homogeneous Dehazing Method Based on Fast Fourier Convolution and ConvNeXt. In 2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW); IEEE: Piscataway, NJ, USA, 2023; pp. 1895–1904.

- Ancuti, C.O.; Ancuti, C.; Vasluianu, F.-A.; Timofte, R.; Zhou, H.; Dong, W.; Liu, Y.; Chen, J.; Liu, H.; Li, L.; et al. NTIRE 2023 HR Nonhomogeneous Dehazing Challenge Report. In 2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW); IEEE: Piscataway, NJ, USA, 2023; pp. 1808–1825.

- Ancuti, C.O.; Ancuti, C.; Vasluianu, F.-A.; Timofte, R.; Liu, Y.; Wang, X.; Zhu, Y.; Shi, G.; Lu, X.; Fu, X.; et al. NTIRE 2024 Dense and Non-Homogeneous Dehazing Challenge Report. In 2024 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW); IEEE: Piscataway, NJ, USA, 2024; pp. 6453–6468.

- Li, Z.; Lei, Y.; Ma, C.; Zhang, J.; Shan, H. Prompt-In-Prompt Learning for Universal Image Restoration. arXiv 2023, arXiv:2312.05038.

- Kong, X.; Dong, C.; Zhang, L. Towards Effective Multiple-in-One Image Restoration: A Sequential and Prompt Learning Strategy. arXiv 2024, arXiv:2401.03379.

- Chen, Y.-W.; Pei, S.-C. Always Clear Days: Degradation Type and Severity Aware All-In-One Adverse Weather Removal. IEEE Access 2025, 13, 7650–7662.

- Guo, Y.; Gao, Y.; Lu, Y.; Zhu, H.; Liu, R.W.; He, S. OneRestore: A Universal Restoration Framework for Composite Degradation. In Computer Vision–ECCV 2024; Springer: Cham, Switzerland, 2024; pp. 255–272.

- Rajagopalan, S.; Patel, V.M. AWRaCLe: All-Weather Image Restoration Using Visual In-Context Learning. In Proceedings of the AAAI Conference on Artificial Intelligence, Philadelphia, PA, USA, 25 February–4 March 2025; Volume 39, pp. 6675–6683.

- Bai, Y.; Wang, C.; Xie, S.; Dong, C.; Yuan, C.; Wang, Z. TextIR: A Simple Framework for Text-Based Editable Image Restoration. arXiv 2023, arXiv:2302.14736.

- Radford, A.; Kim, J.W.; Hallacy, C.; Ramesh, A.; Goh, G.; Agarwal, S.; Sastry, G.; Askell, A.; Mishkin, P.; Clark, J.; et al. Learning Transferable Visual Models From Natural Language Supervision. In Proceedings of the 38th International Conference on Machine Learning (ICML); PMLR: Cambridge, MA, USA, 2021; Volume 139, pp. 8748–8763.

- Brooks, T.; Holynski, A.; Efros, A.A. InstructPix2Pix: Learning to Follow Image Editing Instructions. In 2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: Piscataway, NJ, USA, 2023; pp. 18392–18402.

- Conde, M.V.; Geigle, G.; Timofte, R. InstructIR: High-Quality Image Restoration Following Human Instructions. In Computer Vision–ECCV 2024; Springer: Cham, Switzerland, 2024; pp. 1–21.

- Wu, H.; Zhu, H.; Zhang, Z.; Zhang, E.; Chen, C.; Liao, L.; Li, C.; Wang, A.; Sun, W.; Yan, Q.; et al. Towards Open-Ended Visual Quality Comparison. In Computer Vision–ECCV 2024; Springer: Cham, Switzerland, 2024; pp. 360–377.

- Liu, S.; Ma, J.; Sun, L.; Kong, X.; Zhang, L. InstructRestore: Region-Customized Image Restoration with Human Instructions. arXiv 2025, arXiv:2503.24357.

- Wu, H.; Zhang, Z.; Zhang, E.; Chen, C.; Liao, L.; Wang, A.; Xu, K.; Li, C.; Hou, J.; Zhai, G.; et al. Q-Instruct: Improving Low-level Visual Abilities for Multi-modality Foundation Models. In 2024 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: Piscataway, NJ, USA, 2024; pp. 25490–25500.

- Zhou, H.; Dong, W.; Liu, X.; Zhang, Y.; Zhai, G.; Chen, J. Low-light Image Enhancement via Generative Perceptual Priors. In Proceedings of the AAAI Conference on Artificial Intelligence (AAAI), Philadelphia, PA, USA, 25 February–4 March 2025; pp. 10752–10760.

- Dong, W.; Zhou, H.; Lin, J.; Chen, J. Zero-Reference Joint Low-Light Enhancement and Deblurring via Visual Autoregressive Modeling with VLM-Derived Modulation. arXiv 2025, arXiv:2511.18591.

- Liu, H.; Li, C.; Wu, Q.; Lee, Y.J. Visual Instruction Tuning. In Advances in Neural Information Processing Systems 36 (NeurIPS 2023); Curran Associates, Inc.: Red Hook, NY, USA, 2023; Volume 36, pp. 34892–34916.

- Wu, R.; Yang, T.; Sun, L.; Zhang, Z.; Li, S.; Zhang, L. SeeSR: Towards Semantics-Aware Real-World Image Super-Resolution. In 2024 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: Piscataway, NJ, USA, 2024; pp. 25456–25467.

- Chen, L.; Chu, X.; Zhang, X.; Sun, J. Simple Baselines for Image Restoration. In Computer Vision–ECCV 2022; Springer: Cham, Switzerland, 2022; pp. 17–33.

- Li, B.; Ren, W.; Fu, D.; Tao, D.; Feng, D.; Zeng, W.; Wang, Z. Benchmarking Single-Image Dehazing and Beyond. IEEE Trans. Image Process. 2018, 28, 492–505.

- Yang, W.; Tan, R.T.; Feng, J.; Liu, J.; Guo, Z.; Yan, S. Deep Joint Rain Detection and Removal From a Single Image. In 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: Piscataway, NJ, USA, 2017; pp. 1357–1366.

- Liu, Y.-F.; Jaw, D.-W.; Huang, S.-C.; Hwang, J.-N. DesnowNet: Context-Aware Deep Network for Snow Removal. IEEE Trans. Image Process. 2018, 27, 3064–3073.

- Zhao, S.; Zhang, L.; Huang, S.; Shen, Y.; Zhao, S. Dehazing Evaluation: Real-World Benchmark Datasets, Criteria, and Baselines. IEEE Trans. Image Process. 2020, 29, 6947–6962.

- Zamir, S.W.; Arora, A.; Khan, S.; Hayat, M.; Khan, F.S.; Yang, M.-H. Restormer: Efficient Transformer for High-Resolution Image Restoration. In 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR); IEEE: Piscataway, NJ, USA, 2022; pp. 5728–5739.

- Sun, S.; Ren, W.; Gao, X.; Wang, R.; Cao, X. Restoring Images in Adverse Weather Conditions via Histogram Transformer. In Computer Vision–ECCV 2024; Springer: Cham, Switzerland, 2024; pp. 111–129.

- Wu, J.; Yang, Z.; Wang, Z.; Jin, Z. Beyond Degradation Conditions: All-in-One Image Restoration via HOG Transformers. arXiv 2025, arXiv:2504.09377.

- Wei, C.; Wang, W.; Yang, W.; Liu, J. Deep Retinex Decomposition for Low-Light Enhancement. arXiv 2018, arXiv:1808.04560.

- Dong, W.; Zhou, H.; Zhang, Y.; Liu, X.; Chen, J. ECMamba: Consolidating Selective State Space Model with Retinex Guidance for Efficient Multiple Exposure Correction. In Advances in Neural Information Processing Systems (NeurIPS); Curran Associates, Inc.: Red Hook, NY, USA, 2024; pp. 53438–53457.

- Zhou, H.; Dong, W.; Liu, X.; Liu, S.; Min, X.; Zhai, G.; Chen, J. Glare: Low Light Image Enhancement via Generative Latent Feature Based Codebook Retrieval. In Computer Vision–ECCV 2024; Springer: Cham, Switzerland, 2024; pp. 36–54.

- Ancuti, C.O.; Ancuti, C.; Timofte, R. NH-HAZE: An Image Dehazing Benchmark with Non-Homogeneous Hazy and Haze-Free Images. In 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW); IEEE: Piscataway, NJ, USA, 2020; pp. 444–445.

- Lee, C.; Lee, C.; Kim, C. Contrast Enhancement Based on Layered Difference Representation of 2D Histograms. IEEE Trans. Image Process. 2013, 22, 5372–5384.

| Methods | Hazy | Rainy | Snowy | Average |
|---|---|---|---|---|
| VLMs w/o <Definition> | 99.0% | 77.5% | 73.5% | 88.1% |
| DACLIP (requires training) | 100.0 ± 0.0% | 97.5 ± 1.3% | 98.7 ± 1.5% | 99.3 ± 0.5% |
| Our pipeline | 99.2% | 82.0% | 88.7% | 93.8% |

| Methods | Hazy | Rainy | Snowy | Average |
|---|---|---|---|---|
| Pre-trained DACLIP | 100.0% | 100.0% | 30.8% | 80.9% |
| Our pipeline | 100.0% | 100.0% | 84.6% | 95.7% |

| Methods | Hazy PSNR (dB)↑ | Hazy SSIM↑ | Rainy PSNR (dB)↑ | Rainy SSIM↑ | Snowy PSNR (dB)↑ | Snowy SSIM↑ | Average PSNR (dB)↑ | Average SSIM↑ |
|---|---|---|---|---|---|---|---|---|
| Restormer | 24.38 | 0.911 | 22.45 | 0.749 | 24.29 | 0.806 | 24.14 | 0.858 |
| Restormer * | 26.10 | 0.929 | 24.36 | 0.810 | 26.36 | 0.838 | 25.99 | 0.885 |
| WGWS-Net | 25.24 | 0.920 | 25.44 | 0.806 | 26.28 | 0.833 | 25.61 | 0.878 |
| WGWS-Net * | 26.58 | 0.943 | 26.85 | 0.833 | 27.15 | 0.875 | 26.80 | 0.908 |
| TransWeather | 28.87 | 0.945 | 24.30 | 0.815 | 26.95 | 0.877 | 27.72 | 0.908 |
| TransWeather * | 29.47 | 0.951 | 26.17 | 0.830 | 27.53 | 0.889 | 28.46 | 0.917 |
| NAFNet | 29.28 | 0.948 | 26.03 | 0.849 | 28.19 | 0.895 | 28.56 | 0.920 |
| NAFNet * | 29.97 | 0.954 | 27.52 | 0.861 | 28.37 | 0.891 | 29.16 | 0.923 |
| HOGFormer | 26.23 | 0.945 | 25.90 | 0.823 | 26.26 | 0.879 | 26.20 | 0.909 |
| HOGFormer * | 27.16 | 0.954 | 26.84 | 0.837 | 27.21 | 0.888 | 27.14 | 0.919 |
| HistoFormer | 28.88 | 0.953 | 27.26 | 0.855 | 27.39 | 0.887 | 28.20 | 0.920 |
| HistoFormer * | 29.95 | 0.965 | 27.87 | 0.867 | 28.06 | 0.892 | 29.09 | 0.930 |
| DACLIP | 29.12 | 0.937 | 26.80 | 0.850 | 27.03 | 0.870 | 28.16 | 0.905 |
| DACLIP † | 29.70 | 0.954 | 27.43 | 0.877 | 27.59 | 0.890 | 28.74 | 0.924 |
| AWR-VIP (Ours) | 30.55 ± 0.05 | 0.957 ± 0.002 | 28.15 ± 0.06 | 0.883 ± 0.003 | 28.22 ± 0.05 | 0.895 ± 0.005 | 29.51 ± 0.05 | 0.928 ± 0.003 |
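PSNR is conventionally defined as $10 \log_{10}(\mathrm{MAX}^2 / \mathrm{MSE})$ in decibels, where MAX is the peak pixel value and MSE is the mean squared error against the ground truth. A minimal NumPy sketch of that definition follows. This section does not state whether the reported numbers are computed on RGB or on the luminance channel, or whether borders are cropped, so the sketch makes neither choice and simply compares the arrays it is given.

```python
import numpy as np


def psnr(restored, reference, max_val: float = 1.0) -> float:
    """PSNR in dB: 10 * log10(max_val**2 / MSE)."""
    restored = np.asarray(restored, dtype=np.float64)
    reference = np.asarray(reference, dtype=np.float64)
    mse = np.mean((restored - reference) ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(max_val ** 2 / mse))
```

As a worked example, an image in [0, 1] that is off by a uniform 0.1 everywhere has MSE = 0.01 and therefore scores 20 dB. Each additional dB in the table corresponds to roughly a 21% reduction in MSE.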

| Configurations | PSNR (dB) | SSIM |
|---|---|---|
| Full AWR-VIP | 29.51 | 0.928 |
| w/o <Local Instruction> | 28.62 | 0.914 |
| w/o <Global Instruction> | 29.16 | 0.919 |
| w/o <Weather Type> | 28.85 | 0.916 |
| Baseline (w/o global and local priors) | 28.20 | 0.902 |

| Methods | HistoFormer | DACLIP | Baseline * | AWR-VIP (Baseline + VLM) |
|---|---|---|---|---|
| Params (M) | 16.6 | 174 | 67.8 | 18.2 + 467 |
| FLOPs (G) | 366 | 474 | 291 | 80.5 + 950 |
| Runtime (s) | 0.80 | 18.4 | 0.32 | 0.08 + 0.23 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Dong, W.; Zhou, H.; Ji, T.; Chen, J. Towards Adaptive Adverse Weather Removal via Semantic and Low-Level Visual Perceptual Priors. Mach. Learn. Knowl. Extr. 2026, 8, 45. https://doi.org/10.3390/make8020045
