3.2.1. Tracking and Localization Accuracy Experiment

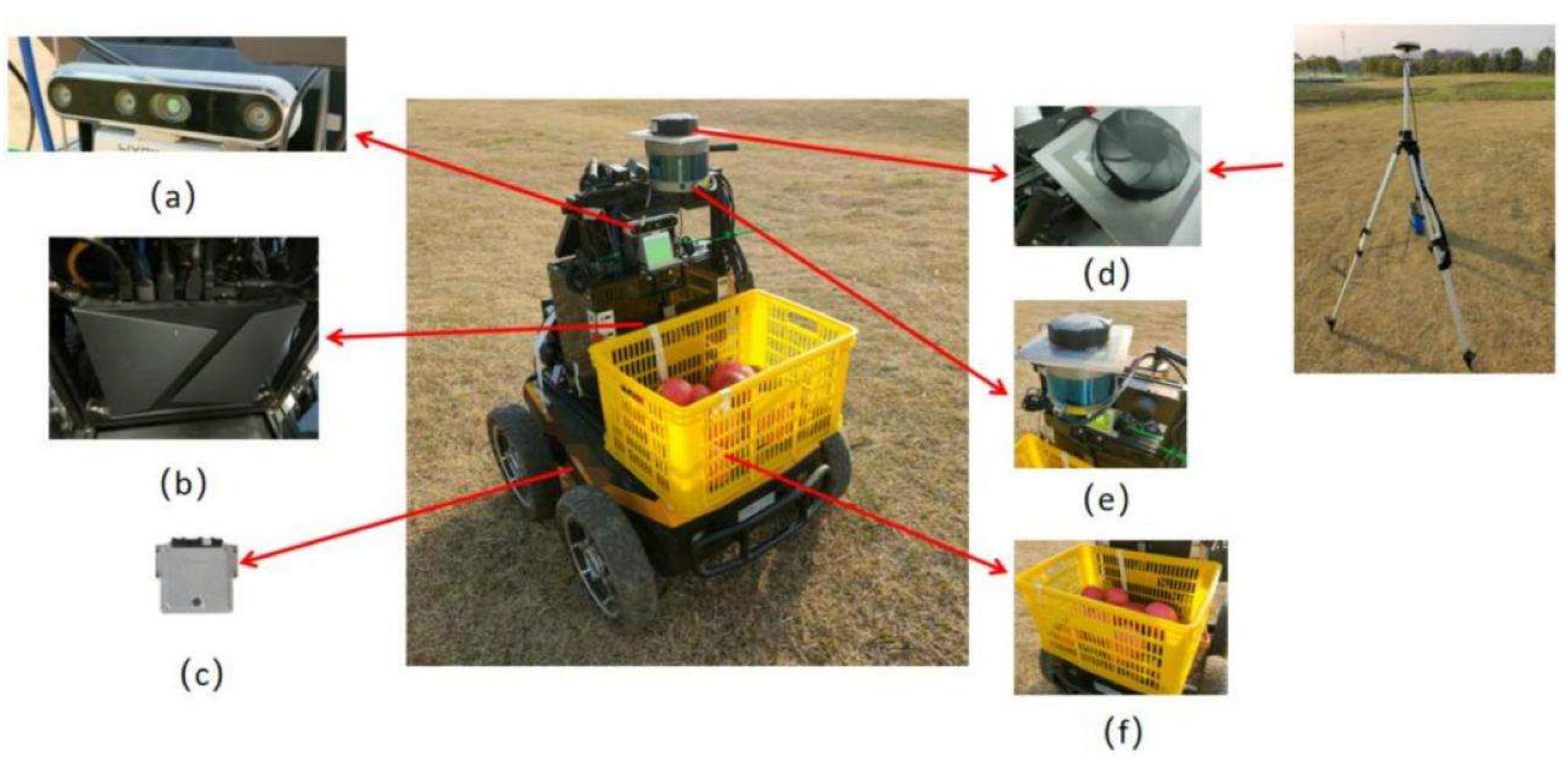

To facilitate the evaluation of target localization accuracy, a LiDAR sensor is additionally mounted on the robot. The LiDAR-measured target position serves as the ground truth, while the position obtained by the proposed target localization method serves as the estimated value. Target localization experiments are conducted along the main orchard pathways, between rows of fruit trees, and in grassy areas, as illustrated in Figure 16a–c, where red points indicate the positions obtained by the proposed method and green points indicate the actual target positions. Euclidean distance is used as the deviation metric, as defined in Equation (4):

$$e = \lVert \hat{\mathbf{p}} - \mathbf{p} \rVert_2 \qquad (4)$$

where $\hat{\mathbf{p}}$ denotes the position obtained by the proposed method and $\mathbf{p}$ denotes the actual target position.

To quantitatively evaluate the localization performance, statistical analysis was conducted based on the localization errors recorded across all tracking trials. Specifically, the mean error, maximum error, standard deviation, and 95th percentile of the localization error were computed. Across the three tracking trials, the mean localization error is 0.071 m, while the maximum deviation reaches 0.217 m. The standard deviation of the localization error is 0.051 m, indicating limited dispersion of the errors around the mean. Furthermore, 95% of the localization errors are below 0.172 m, demonstrating that the proposed system maintains stable localization accuracy under most operating conditions.
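The four summary statistics above can be reproduced from paired position logs. The following is a minimal sketch, assuming positions are stored as coordinate tuples in metres; the function names, the sample standard deviation, and the linear-interpolation percentile are illustrative choices, not details taken from the experiment.

```python
import math
import statistics


def percentile(sorted_values, q):
    """Linear-interpolation percentile (q in [0, 100]) of a pre-sorted list."""
    if not sorted_values:
        raise ValueError("no values")
    rank = (len(sorted_values) - 1) * q / 100.0
    lo, hi = math.floor(rank), math.ceil(rank)
    frac = rank - lo
    return sorted_values[lo] * (1.0 - frac) + sorted_values[hi] * frac


def summarize_errors(estimated_points, actual_points):
    """Euclidean error per sample, then mean, max, sample std, and 95th percentile."""
    errors = sorted(
        math.dist(est, act) for est, act in zip(estimated_points, actual_points)
    )
    return {
        "mean": statistics.fmean(errors),
        "max": errors[-1],
        "std": statistics.stdev(errors),  # requires at least two samples
        "p95": percentile(errors, 95),
    }


# Hypothetical positions (metres), for illustration only.
estimated = [(1.00, 2.00), (2.05, 3.00), (3.00, 4.10)]
actual = [(1.00, 2.00), (2.00, 3.00), (3.00, 4.00)]
stats = summarize_errors(estimated, actual)
```

The percentile convention matters for small samples; a different interpolation rule would shift the 95th-percentile value slightly.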

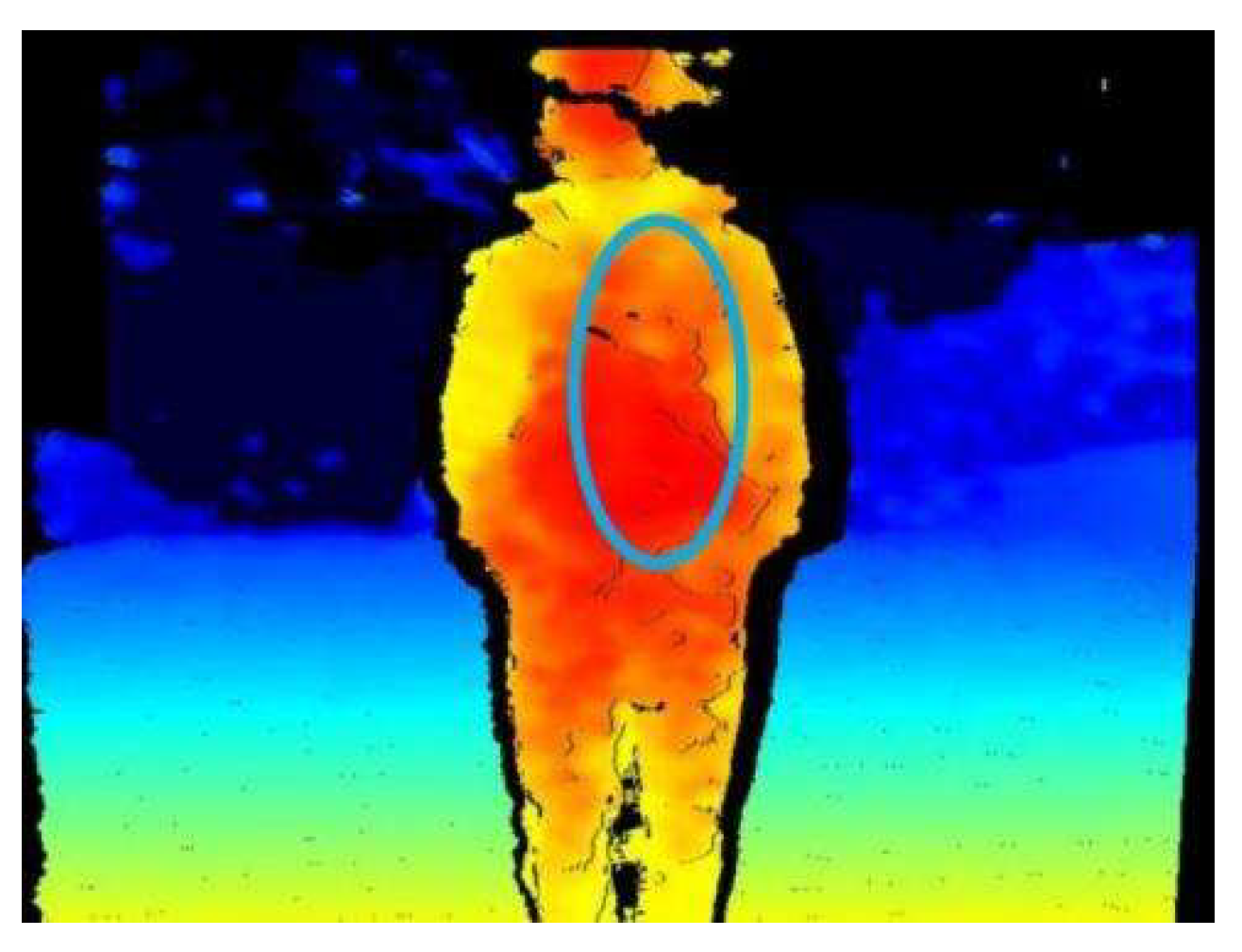

Because depth is extracted through an elliptical mask, no failures caused by missing depth values are observed. The main sources of deviation are errors in the ground-truth measurement process, RealSense depth measurement errors, errors in computing human coordinates, and inaccuracies in depth extraction caused by imperfect bounding-box predictions of the DIMP algorithm. Although a certain deviation exists between the measured and estimated target positions, its impact on overall system performance is negligible. Therefore, the proposed target tracking and localization method satisfies the localization accuracy requirements of the following task.
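One way an elliptical mask can make depth extraction robust to missing values is sketched below. This is an assumption-laden illustration, not the method's actual implementation: the ellipse is taken as inscribed in the tracker's bounding box, invalid pixels are assumed to be encoded as 0, and the median is used as the aggregate. The name `ellipse_depth` is hypothetical.

```python
import statistics


def ellipse_depth(depth, box, invalid=0.0):
    """Median of valid depth values inside the ellipse inscribed in `box`.

    depth: 2D list of rows, values in metres.
    box:   (x_min, y_min, x_max, y_max) in pixels, max bounds exclusive.
    Returns None if the ellipse contains no valid depth value.
    """
    x0, y0, x1, y1 = box
    cx, cy = (x0 + x1 - 1) / 2.0, (y0 + y1 - 1) / 2.0  # ellipse centre
    ax, ay = (x1 - x0) / 2.0, (y1 - y0) / 2.0          # semi-axes
    samples = []
    for y in range(max(y0, 0), min(y1, len(depth))):
        row = depth[y]
        for x in range(max(x0, 0), min(x1, len(row))):
            inside = ((x - cx) / ax) ** 2 + ((y - cy) / ay) ** 2 <= 1.0
            if inside and row[x] != invalid:
                samples.append(row[x])
    return statistics.median(samples) if samples else None


# Hypothetical 5x5 depth patch: person at 2.0 m, background corners at 9.0 m,
# and one missing reading (0.0) at the centre.
patch = [[2.0] * 5 for _ in range(5)]
for r, c in [(0, 0), (0, 4), (4, 0), (4, 4)]:
    patch[r][c] = 9.0
patch[2][2] = 0.0
person_depth = ellipse_depth(patch, (0, 0, 5, 5))
```

Because the box corners fall outside the ellipse, background pixels there do not enter the estimate, and a few missing readings inside the ellipse do not cause a failure as long as any valid pixel remains.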

3.2.2. Similar-Target Disambiguation via Person Re-Identification

To evaluate the effectiveness and applicability of the proposed similar-target discrimination method based on human re-identification (ReID) in orchard following tasks, experiments are conducted at three levels: model performance, target discrimination in static scenarios, and system performance in dynamic tracking scenarios. First, the improved HOReID model is quantitatively evaluated on standard pedestrian ReID datasets to verify its feature discrimination capability under occlusion. Second, in real orchard static scenarios, target discrimination experiments are performed under varying numbers of personnel and environmental conditions to assess the reliability of the ReID method in practical applications. Finally, in dynamic tracking scenarios that include multiple typical interference factors, the proposed target discrimination strategy is comprehensively tested, with particular emphasis on maintaining identity consistency during target switching, short-term disappearance, and occlusion. This layer-by-layer experimental design provides a comprehensive evaluation of the method’s practicality and robustness in complex orchard environments.

Experiment I: Experimental Setup and Performance Evaluation of the Improved HOReID Model

To validate the effectiveness of the improved HOReID model, training is conducted on the Occluded-Duke [39] and DukeMTMC [40] datasets. Data augmentation techniques including random horizontal flipping, random erasing, and random cropping are applied during training. To adapt to varying illumination conditions in orchards, random brightness adjustment is also introduced. The model is trained for 120 epochs. Performance on the Occluded-Duke and DukeMTMC test sets is compared in Table 2.

As shown in Table 2, the EfficientNet-HOReID model achieves a 3.5% improvement in Rank-1 and a 4.4% improvement in mAP on the Occluded-Duke dataset. On the DukeMTMC dataset, Rank-1 and mAP improve by 1.2% and 0.8%, respectively, demonstrating the effectiveness of the model modifications.
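For readers unfamiliar with the two metrics, the sketch below shows how Rank-1 and mAP are commonly computed from a query-to-gallery distance matrix. It is a simplified illustration: the same-camera filtering used in standard ReID evaluation protocols is omitted, and the function name and toy data are hypothetical.

```python
def rank1_and_map(dist, query_ids, gallery_ids):
    """Rank-1 accuracy and mean average precision.

    dist[i][j] is the distance between query i and gallery item j
    (smaller means more similar). Queries with no matching gallery
    identity are skipped.
    """
    rank1_hits, ap_sum, valid = 0, 0.0, 0
    for i, qid in enumerate(query_ids):
        order = sorted(range(len(gallery_ids)), key=lambda j: dist[i][j])
        matches = [gallery_ids[j] == qid for j in order]
        if not any(matches):
            continue
        valid += 1
        rank1_hits += int(matches[0])  # top-ranked item has the right identity
        hits, precisions = 0, []
        for rank, is_match in enumerate(matches, start=1):
            if is_match:
                hits += 1
                precisions.append(hits / rank)  # precision at each correct hit
        ap_sum += sum(precisions) / len(precisions)
    if valid == 0:
        raise ValueError("no query has a match in the gallery")
    return rank1_hits / valid, ap_sum / valid


# Toy example: two queries, three gallery images.
toy_dist = [[0.1, 0.5, 0.3],
            [0.2, 0.4, 0.9]]
rank1, mean_ap = rank1_and_map(toy_dist, [1, 2], [1, 2, 1])
```

In the toy data, the first query retrieves both of its matches first (AP = 1.0), while the second finds its only match at rank 2 (AP = 0.5), giving Rank-1 = 0.5 and mAP = 0.75.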

Experiment II: Reliability Analysis of Similar-Target Discrimination in Static Multi-Person Orchard Scenarios

To verify the proposed ReID-based target discrimination method in orchard environments, experiments are conducted in static scenarios with varying numbers of personnel and environmental conditions, comprising a total of nine test groups. Before each experiment, images of each participant are collected from four different angles, and the extracted features are stored in a database. For each group, 20 ReID trials are conducted, with the candidate target randomly selected from the participants present. A trial is considered successful if the candidate correctly matches the corresponding ID in the database. The results are summarized in Table 3.
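The matching step in each trial can be pictured as a nearest-identity lookup over the stored multi-view features. The sketch below is illustrative only: cosine similarity, the best-view score, and the 0.5 acceptance threshold are assumptions, and the short vectors stand in for real ReID embeddings.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def match_identity(candidate, database, threshold=0.5):
    """Return (person_id, score) for the best-matching identity.

    database maps person_id -> list of feature vectors (e.g. one per view).
    Returns (None, score) if the best score is below the assumed threshold.
    """
    best_id, best_score = None, -1.0
    for person_id, views in database.items():
        score = max(
            (cosine_similarity(candidate, view) for view in views), default=-1.0
        )
        if score > best_score:
            best_id, best_score = person_id, score
    if best_score < threshold:
        return None, best_score
    return best_id, best_score


# Hypothetical 2-D features; real embeddings are much higher-dimensional.
feature_db = {"A": [[1.0, 0.0], [0.9, 0.1]], "B": [[0.0, 1.0]]}
matched_id, matched_score = match_identity([1.0, 0.05], feature_db)
```

A trial would then count as successful when `matched_id` equals the candidate's true ID.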

Table 3 shows that the proposed ReID method achieves an average matching success rate of 92.8%. The success rate is lower in the between-tree-row scenario. The main causes of mismatches are the limited performance of the ReID network itself, weak feature extraction under low illumination, and interference from dappled lighting in tree-row areas. Mismatches are infrequent, and orchard operations typically take place during daytime under non-extreme lighting conditions. Therefore, the proposed ReID method meets the requirements for personnel re-identification in orchard tasks. The variability in success rates across groups can be explained by the binomial nature of the 20 ReID trials per group, with slightly higher variability for larger group sizes and low-illumination conditions.
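As a rough check on the binomial argument, the standard error of a success rate estimated from $n = 20$ independent trials can be worked out directly. Here the reported average rate $p = 0.928$ is used as a stand-in for a group's true rate, which is an assumption made purely for illustration:

$$
\sigma_{\hat{p}} = \sqrt{\frac{p(1-p)}{n}} = \sqrt{\frac{0.928 \times 0.072}{20}} \approx 0.058
$$

One standard error is therefore about 5.8 percentage points, which is comparable to the effect of a single trial (each trial contributes 5 percentage points when $n = 20$). A difference of one trial between two groups is thus within ordinary sampling noise.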

Experiment III: Impact of Similar-Target Interference on Following Identity Consistency in Dynamic Tracking Scenarios

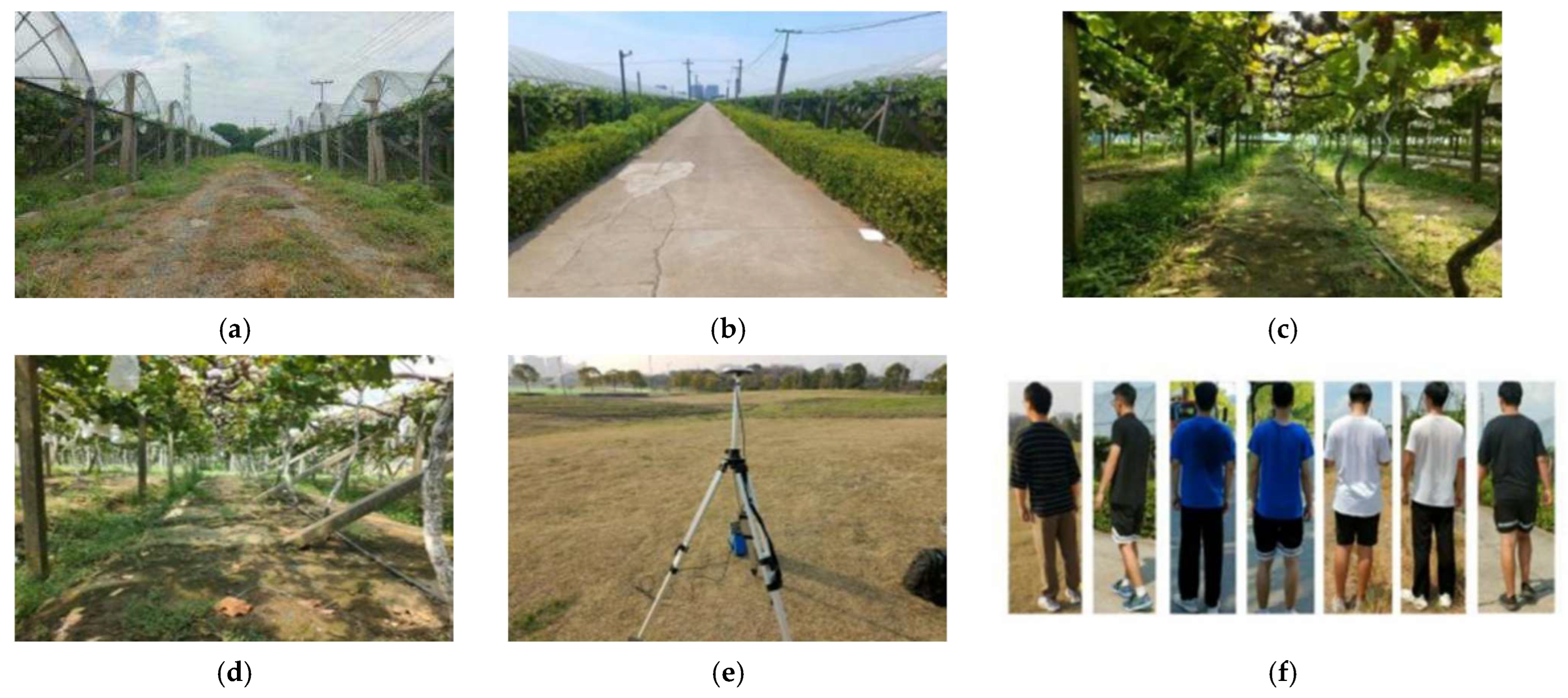

To assess the effectiveness of the ReID-based target discrimination method in dynamic tracking, offline video sequences are collected from multiple scenarios with various interference factors, as shown in Figure 17. Four typical interferences are introduced: multiple people present simultaneously (seven participants in total, with one target and six distractors; four-angle images of each participant are collected in advance to construct the ReID feature database), target pose variations, short-term disappearance and reappearance, and target switching due to occlusion. The test data are collected in the Bacheng vineyard and surrounding areas of Kunshan, Suzhou, Jiangsu Province, covering main orchard pathways, rows of fruit trees, indoor aggregation areas, and open grassy areas. The robot follows the target under human operation, with the above interferences intentionally introduced.

The proposed method is compared with the original DIMP, SORT [45], and DeepSORT [46] algorithms, as shown in Table 4. For quantitative evaluation, frame-by-frame results are classified into four states: correct tracking (CT), indicating correct following of the target; correct loss (CL), indicating the target leaves the field of view and the system correctly identifies it as lost; wrong tracking (WT), indicating tracking of an incorrect target; and wrong loss (WL), indicating the target remains visible but is not tracked.
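The four-state labelling and the CT + CL rate can be expressed compactly. The sketch below assumes each frame is annotated with whether the target is visible and which identity (if any) the tracker reports; the field layout and function names are hypothetical.

```python
from collections import Counter


def classify_frame(target_visible, tracked_id, target_id):
    """Label one frame as CT, CL, WT, or WL.

    tracked_id is None when the system reports the target as lost.
    """
    if tracked_id is None:
        return "WL" if target_visible else "CL"
    if target_visible and tracked_id == target_id:
        return "CT"
    return "WT"  # following someone who is not the target


def decision_rates(frames, target_id):
    """Per-state frame fractions plus the CT + CL correct-decision rate."""
    if not frames:
        raise ValueError("no frames")
    counts = Counter(
        classify_frame(visible, tracked, target_id) for visible, tracked in frames
    )
    total = len(frames)
    rates = {state: counts[state] / total for state in ("CT", "CL", "WT", "WL")}
    rates["CT+CL"] = rates["CT"] + rates["CL"]
    return rates


# Hypothetical annotations: (target_visible, tracked_id) per frame.
demo_frames = [(True, "t"), (True, "t"), (False, None), (True, "d"), (True, None)]
demo_rates = decision_rates(demo_frames, target_id="t")
```

In this toy sequence the labels are CT, CT, CL, WT, WL, so the correct-decision rate is 3/5.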

Although the proposed method does not achieve the highest CT score in every scenario, its CT + CL (system correct decision rate) is significantly higher than that of DIMP, SORT, and DeepSORT across the five test scenarios. A Wilcoxon signed-rank test indicates that the proposed method improves CT + CL over the baseline trackers. This reflects a system-level design choice: in complex orchard environments, the priority is to ensure reliable and safe following decisions rather than to maximize frame-level continuous tracking accuracy.

In orchard operations, frequent occlusions, high visual similarity, drastic pose changes, and rapid illumination variations are common. Under such conditions, WT poses a higher risk to the system than CL. Therefore, the proposed ReID-based following framework adopts a conservative discrimination strategy when target identity is uncertain, favoring correct loss detection over the risk of following a potentially incorrect target. This strategy effectively suppresses wrong following, which explains the relatively limited CT but significantly reduced WT.

Furthermore, the ReID mechanism is not applied to every frame. Instead, it is triggered only during critical events, such as suspected target switching, target disappearance, or reappearance, for identity verification and correction. Its main effect is to enhance identity consistency and robustness during long-term following rather than to improve short-term frame-level tracking accuracy. This characteristic allows the system to avoid template contamination and the accumulation of identity errors under multi-person interference and prolonged operation.

Overall, the experimental results indicate that, although the proposed method involves some trade-offs in CT, it achieves superior overall decision correctness and following stability in complex, multi-interference orchard dynamic scenarios, demonstrating higher practical value for real-world human–robot collaborative following tasks.

3.2.3. Follow Control and Decision-Making Evaluation

To validate the proposed robot following control method and its decision-making strategy, following control experiments are designed to cover a variety of typical operating conditions. The robot’s linear velocity is preset and is adjusted in real time according to the target’s depth information. Based on practical orchard operation requirements, the following process is divided into five decision states:

Emergency stop: triggered when an obstacle is detected within 1 m in front of the robot.

Stop motion: executed when the distance to the target is less than 1 m.

In-place rotation: when the target distance is 1–1.5 m, the robot performs rotation in place by driving the two wheels in opposite directions at equal speed to maintain alignment.

Re-identification-triggered decision: when a suspected target switch is detected, the human ReID module is invoked to verify identity and suppress incorrect following.

Normal following: when the target distance exceeds 1.5 m and no abnormal conditions occur, the robot follows the target using the Pure Pursuit algorithm.
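The five decision states above can be sketched as a single selection function. The distance thresholds come from the list; the priority order among the checks, the treatment of a lost target as a stop, and all names are assumptions made for illustration.

```python
from enum import Enum


class FollowState(Enum):
    EMERGENCY_STOP = "emergency_stop"
    STOP = "stop"
    ROTATE_IN_PLACE = "rotate_in_place"
    REID_CHECK = "reid_check"
    FOLLOW = "follow"


def select_state(obstacle_distance, target_distance, suspected_switch):
    """Pick a decision state from distances in metres.

    obstacle_distance: nearest obstacle ahead, or None if none is detected.
    target_distance:   distance to the target, or None if the target is lost.
    The ordering of the checks below is an assumed priority, safety first.
    """
    if obstacle_distance is not None and obstacle_distance <= 1.0:
        return FollowState.EMERGENCY_STOP
    if target_distance is None:
        return FollowState.STOP  # assumed: wait in place while the target is lost
    if suspected_switch:
        return FollowState.REID_CHECK
    if target_distance < 1.0:
        return FollowState.STOP
    if target_distance <= 1.5:
        return FollowState.ROTATE_IN_PLACE
    return FollowState.FOLLOW


example_state = select_state(obstacle_distance=None, target_distance=2.0,
                             suspected_switch=False)
```

Placing the obstacle check first means an emergency stop always overrides identity verification and following, which matches the safety-oriented intent of the list.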

The safety thresholds and distance-based decision boundaries follow the control strategy defined in Section 2.3.2, where their kinematic and empirical rationale is discussed.
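To make the normal-following and in-place-rotation states concrete, the sketch below shows the textbook Pure Pursuit steering law and the differential-drive wheel-speed mapping. It assumes the target position in the robot frame is used directly as the look-ahead point and uses a hypothetical track width; the controller in Section 2.3.2 may differ in both respects.

```python
def pure_pursuit_command(target_x, target_y, linear_speed):
    """Return (v, omega) steering toward a point in the robot frame.

    target_x is forward and target_y is to the left, both in metres.
    Curvature follows the standard Pure Pursuit relation 2 * y / L^2,
    where L is the distance to the look-ahead point.
    """
    lookahead_sq = target_x ** 2 + target_y ** 2
    if lookahead_sq == 0.0:
        return 0.0, 0.0
    curvature = 2.0 * target_y / lookahead_sq
    return linear_speed, linear_speed * curvature


def wheel_speeds(v, omega, track_width):
    """Left and right wheel speeds (m/s) of a differential-drive base."""
    half_track = track_width / 2.0
    return v - omega * half_track, v + omega * half_track


# Normal following: target 1 m ahead and 1 m to the left, at 1 m/s.
v_cmd, omega_cmd = pure_pursuit_command(1.0, 1.0, linear_speed=1.0)

# In-place rotation: zero linear speed gives equal and opposite wheel speeds.
left_speed, right_speed = wheel_speeds(0.0, 1.0, track_width=0.5)
```

With zero linear velocity the two wheel speeds come out equal in magnitude and opposite in sign, which is the in-place rotation described in the decision states.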

To evaluate the robot’s ability to track the target trajectory under the normal following state, an RTK-GPS module is installed on top of the robot, and a base station is deployed to record the motion trajectories of both the target and the robot. As shown in Figure 18, trajectories are recorded under three different following scenarios.

Analysis of the recorded trajectories shows that the robot can closely follow the target during straight walking, turning, and free walking, demonstrating high accuracy and real-time performance in following motion. However, small deviations are observed at some slight turns. Possible causes of these deviations include: (1) the target’s lateral speed during turns is relatively high, which delays the robot’s motion control adjustment; (2) slippage of the differential-drive robot chassis during turning, which causes trajectory offset.
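If the recorded trajectories are available as planar coordinates, the deviations mentioned above could be quantified as the distance from each robot position to the nearest point on the target's path. This metric is not reported in the experiment; the sketch below is one hypothetical way to compute it, with made-up coordinates.

```python
import math


def point_to_segment(p, a, b):
    """Shortest distance from point p to the segment a-b (2-D tuples, metres)."""
    ax, ay = a
    bx, by = b
    px, py = p
    dx, dy = bx - ax, by - ay
    seg_sq = dx * dx + dy * dy
    if seg_sq == 0.0:
        return math.dist(p, a)
    # Projection parameter of p onto the segment, clamped to its end points.
    t = max(0.0, min(1.0, ((px - ax) * dx + (py - ay) * dy) / seg_sq))
    return math.dist(p, (ax + t * dx, ay + t * dy))


def path_deviations(robot_path, target_path):
    """Distance from each robot point to the target path treated as a polyline."""
    if len(target_path) < 2:
        raise ValueError("target path needs at least two points")
    segments = list(zip(target_path, target_path[1:]))
    return [
        min(point_to_segment(p, a, b) for a, b in segments) for p in robot_path
    ]


# Hypothetical straight-walking example.
target_track = [(0.0, 0.0), (10.0, 0.0)]
robot_track = [(1.0, 0.1), (5.0, -0.2), (12.0, 0.0)]
deviations = path_deviations(robot_track, target_track)
```

Because the robot follows at a distance behind the target, comparing against the whole target path rather than time-matched positions avoids counting the following gap itself as error.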

3.2.4. Long-Term Field Experiments in Orchard Environments

To evaluate the long-term following performance of the robot in real operational environments, a field following experiment lasting 25 min and 6 s is conducted in a vineyard and surrounding areas in Bacheng, Kunshan, Suzhou, Jiangsu Province, China. During the experiment, the robot follows three different participants at walking speeds of approximately 1.5 m/s, 1.0 m/s, and 0.6 m/s, maintaining a human–robot distance of ≤6 m for effective perception by the RealSense camera. Six additional participants act as distractors, and one is the designated target. Before the experiment, four-view images of all participants are collected and pedestrian re-identification features are extracted to construct the matching database.

Figure 19a–e illustrates the field experiment scenarios. When the robot loses the target, it stops and waits until the correct target reappears before resuming motion (Figure 20).

The results of target tracking and localization are summarized in Table 5, with the LiDAR-measured target positions treated as ground truth to compute the average localization error. The average localization error is reported together with its standard deviation to reflect stability over time.

During the experiment, obstacles appear in front of the robot eight times, and the corresponding obstacle perception results are presented in Table 6.

In addition, five instances require triggering the re-identification module, with the target recognition results shown in Table 7.

Throughout the following process, the system successfully handles sudden obstacle intrusion, temporary target disappearance and reappearance, and interference from visually similar individuals. Statistical results indicate that, when the target is within the field of view, the system achieves a correct tracking rate exceeding 94% under all speed conditions, with a maximum of 98.5%, and an average localization error below 0.08 m. Based on the long-term field experiment data, more than 95% of the localization errors are estimated to remain below 0.12 m, demonstrating stable localization performance. Obstacle perception achieves a 100% success rate across all speeds, with an average per-frame processing time of approximately 20 ms. For critical events requiring re-identification, the average target recognition success rate reaches 93.3%. These results demonstrate that the proposed following system provides stable long-term tracking performance and high safety in complex orchard environments, meeting the practical requirements of orchard transportation operations.