A Hierarchical Reinforcement Learning Method for Intelligent Decision-Making in Joint Operations of Sea–Air Unmanned Systems

Abstract

1. Introduction

2. Related Work

3. Hierarchical Intelligent Decision-Making Scheme for Joint Operations of Sea–Air Unmanned Systems

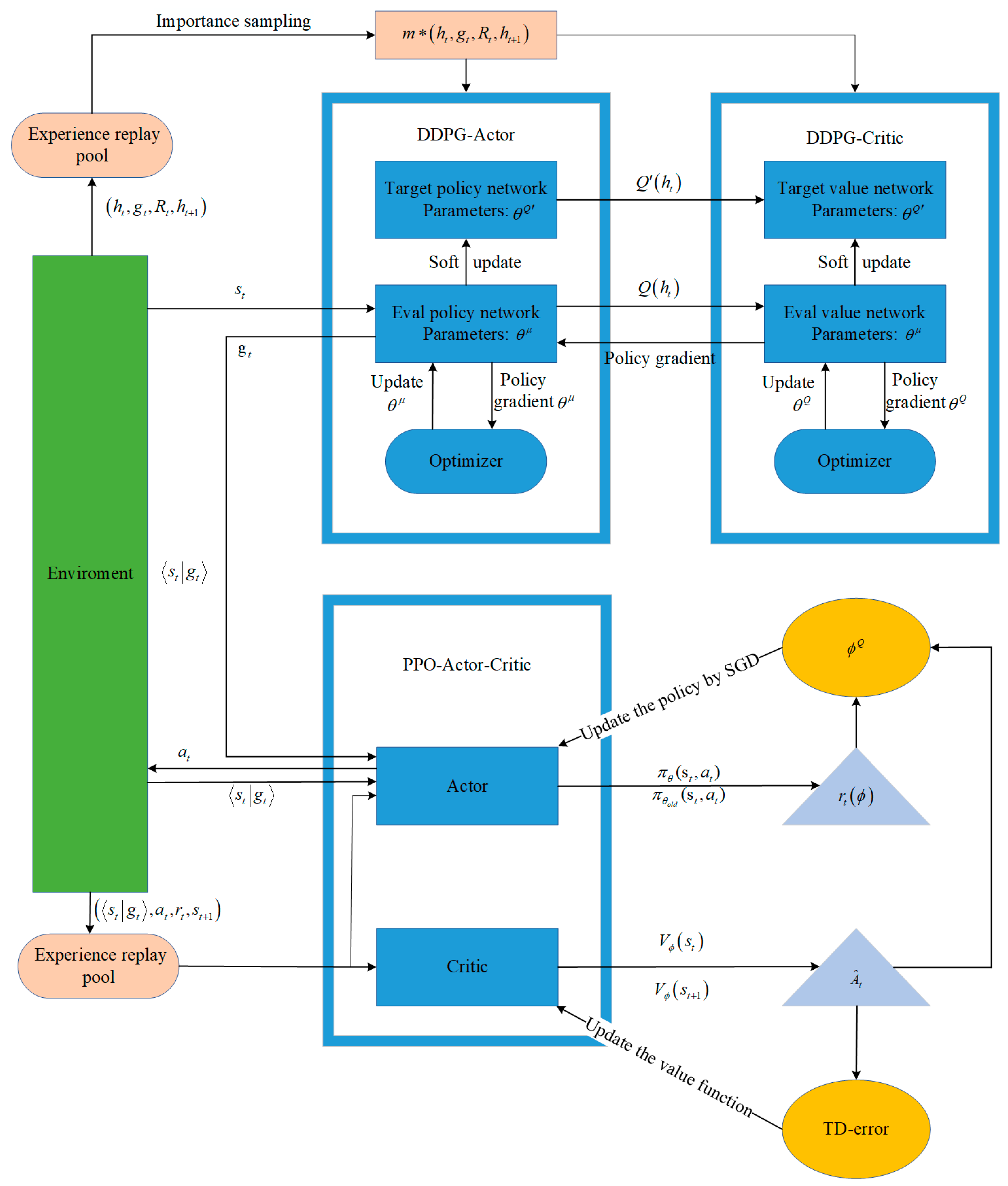

3.1. Subgoal-Based HRL Intelligent Decision-Making Framework for Joint Operations of Sea–Air Unmanned Systems

3.2. A Solution for Sparse Rewards Through the Integration of Intrinsic and Extrinsic Incentives

3.3. Hierarchical Reinforcement Learning Algorithm with Two-Level Screening of Latent Subgoals

Algorithm 1. Hierarchical Reinforcement Learning Algorithm with Two-Level Screening of Latent Subgoals (HTS).

- Randomly initialize the weights of the senior-level Critic network and Actor network.
- Initialize the senior-level target Critic and target Actor networks.
- Initialize the senior-level replay buffer RB as a SumTree, set the priority of all m leaf nodes of the SumTree to 1, and set the maximum capacity of RB to N.
- Initialize the junior-level value function parameters and policy function parameters.
- For episode i = 1, ..., M, where M is the number of episodes during which the agent interacts with the environment (the environment interaction commences):
  - Initialize a stochastic process for action exploration.
  - Receive the initial observation state.
  - For t = 1, ..., T, where T is the number of high-level interaction steps (the senior-level agent begins interacting with the environment):
    - Select a subgoal based on the current senior-level policy and exploration noise.
    - For step = 1, ..., TL, where TL is the maximum number of low-level interaction steps per high-level interaction, as designed by the algorithm (the junior-level agent begins interacting with the environment and training):
      - The junior-level agent interacts with the environment using its current policy for TL steps, or until the environment reaches the terminal state.
      - Compute the intrinsic reward and the reward-to-go.
      - Compute the advantage estimates based on the current value function.
      - Update the policy by taking K steps of minibatch SGD (Stochastic Gradient Descent).
      - Update the value function by regression on the mean-squared error.
    - End For (the junior-level interaction concludes and control returns to the senior level).
    - Compute the sum R of the external rewards obtained by the junior-level agent over its TL interactions with the environment.
    - Store the transition in the senior-level replay buffer RB.
    - If the size of the senior-level RB reaches the batch size BS (enough senior-level transitions have been collected to start training the senior-level policy):
      - Sample transitions from RB, each drawn with a probability determined by its priority.
      - Compute the importance-sampling weight of each sample.
      - Calculate the current Q-value for each sample.
      - Update the senior-level Critic by minimizing the loss.
      - Update the senior-level Actor policy using the sampled policy gradient.
      - Update the senior-level target networks.
      - Recompute and update the priority of each sample.
    - End If (the current training phase for the senior-level policy concludes).
  - End For (training for the current episode is complete; proceed to the next episode).
- End For (the data and policy are saved at the end of this training phase).
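The senior-level replay steps of Algorithm 1 (SumTree storage, priority-based sampling, importance-sampling weights, priority refresh) can be illustrated with a minimal sketch. It is not the authors' implementation: the names `SumTree`, `sample`, and `refresh_priority`, the exponents `alpha` and `beta`, and the weight formula `(size * P(j)) ** (-beta)` are assumptions taken from the standard prioritized experience replay formulation. One further assumption is that empty leaves hold priority 0 and each newly stored transition enters with priority 1, so that unfilled slots are never drawn.

```python
import random


class SumTree:
    """Array-backed binary tree; each internal node stores the sum of its children."""

    def __init__(self, capacity):
        self.capacity = capacity
        # Indices 0 .. capacity-2 are internal nodes, capacity-1 .. 2*capacity-2 are leaves.
        self.tree = [0.0] * (2 * capacity - 1)
        self.data = [None] * capacity
        self.write = 0  # next leaf slot to overwrite (ring buffer)
        self.size = 0   # number of stored transitions

    def total(self):
        return self.tree[0]

    def update(self, leaf, priority):
        """Set one leaf's priority and propagate the change up to the root."""
        idx = leaf + self.capacity - 1
        delta = priority - self.tree[idx]
        while True:
            self.tree[idx] += delta
            if idx == 0:
                break
            idx = (idx - 1) // 2

    def add(self, transition, priority=1.0):
        """Store a transition; new entries start at priority 1 (assumption, see lead-in)."""
        self.data[self.write] = transition
        self.update(self.write, priority)
        self.write = (self.write + 1) % self.capacity
        self.size = min(self.size + 1, self.capacity)

    def get(self, value):
        """Return the leaf whose cumulative-priority interval contains `value`."""
        idx = 0
        while idx < self.capacity - 1:  # descend until a leaf is reached
            left = 2 * idx + 1
            if value < self.tree[left]:
                idx = left
            else:
                value -= self.tree[left]
                idx = left + 1
        return idx - (self.capacity - 1)


def sample(tree, batch_size, beta=0.4):
    """Stratified priority sampling with normalized importance-sampling weights."""
    segment = tree.total() / batch_size
    leaves, weights = [], []
    for k in range(batch_size):
        value = random.uniform(segment * k, segment * (k + 1))
        # Guard against floating-point drift landing on an unfilled slot.
        leaf = min(tree.get(value), tree.size - 1)
        prob = tree.tree[leaf + tree.capacity - 1] / tree.total()
        weights.append((tree.size * prob) ** (-beta))
        leaves.append(leaf)
    max_w = max(weights)
    weights = [w / max_w for w in weights]
    return leaves, [tree.data[i] for i in leaves], weights


def refresh_priority(tree, leaf, td_error, alpha=0.6, eps=1e-6):
    """Recompute a sample's priority from its absolute TD error (hypothetical helper)."""
    tree.update(leaf, (abs(td_error) + eps) ** alpha)


if __name__ == "__main__":
    random.seed(0)
    rb = SumTree(capacity=8)
    for i in range(8):
        rb.add(("state", "subgoal", float(i), "next_state"))
    leaves, batch, weights = sample(rb, batch_size=4)
    print(leaves, weights)
```

The tree keeps both sampling and priority updates at O(log N) per transition, which is the usual reason for choosing it over a flat list of priorities. With all priorities equal, every importance-sampling weight normalizes to 1; weights only diverge once `refresh_priority` has been applied.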

3.3.1. Prominent Contribution Detection

3.3.2. Repeat Penalty

3.3.3. Potential Calculation Function

4. Experiment

4.1. Ablation Experiment Study

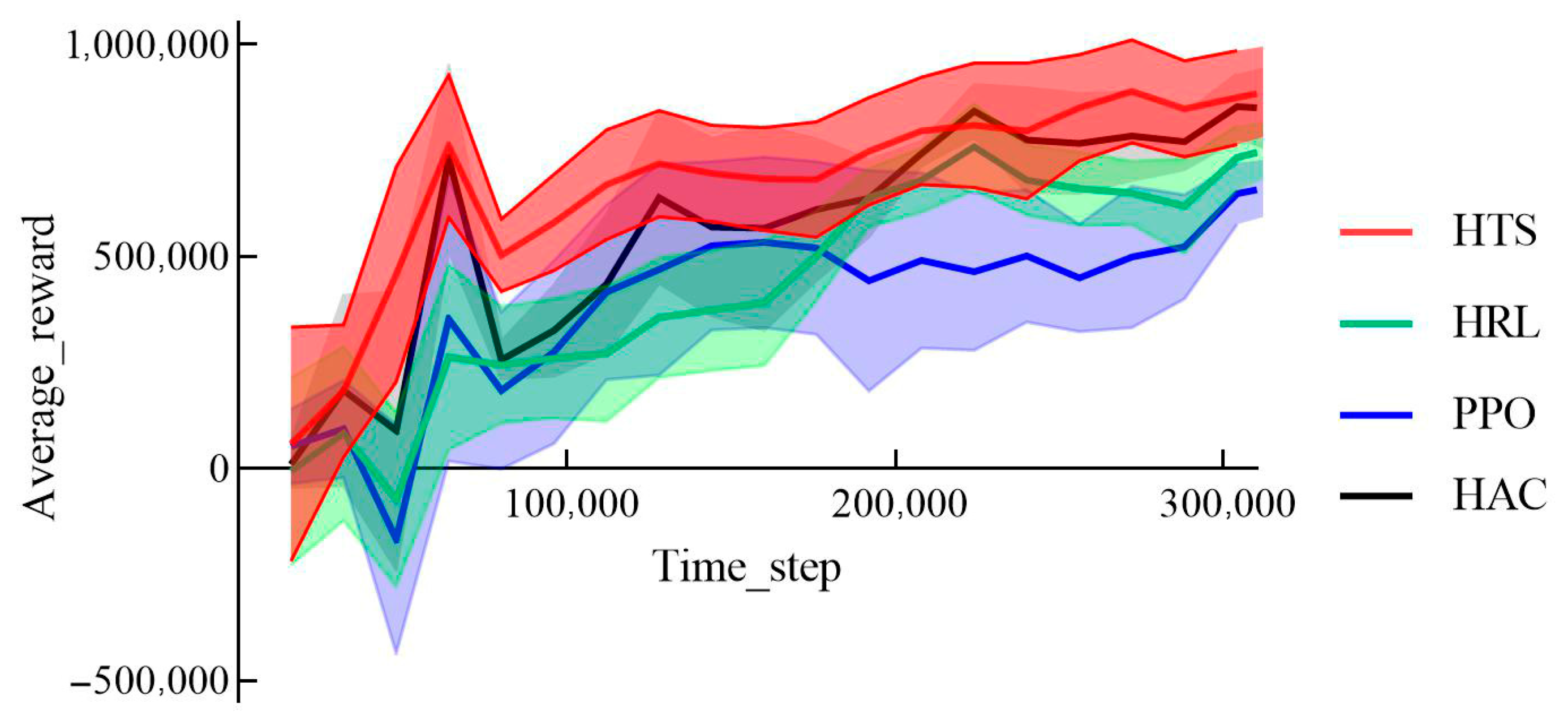

4.1.1. Average Reward

4.1.2. Average Win Rates

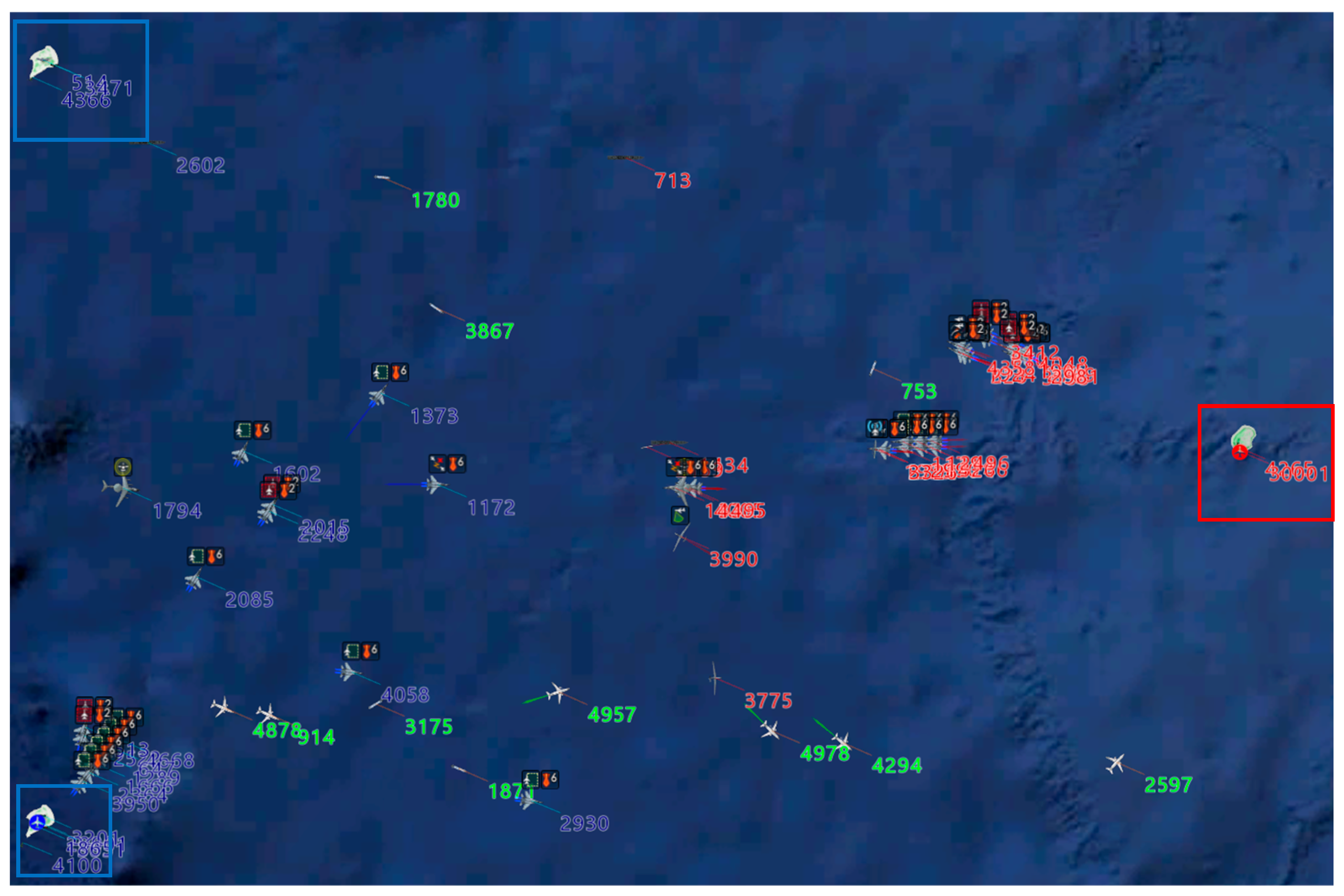

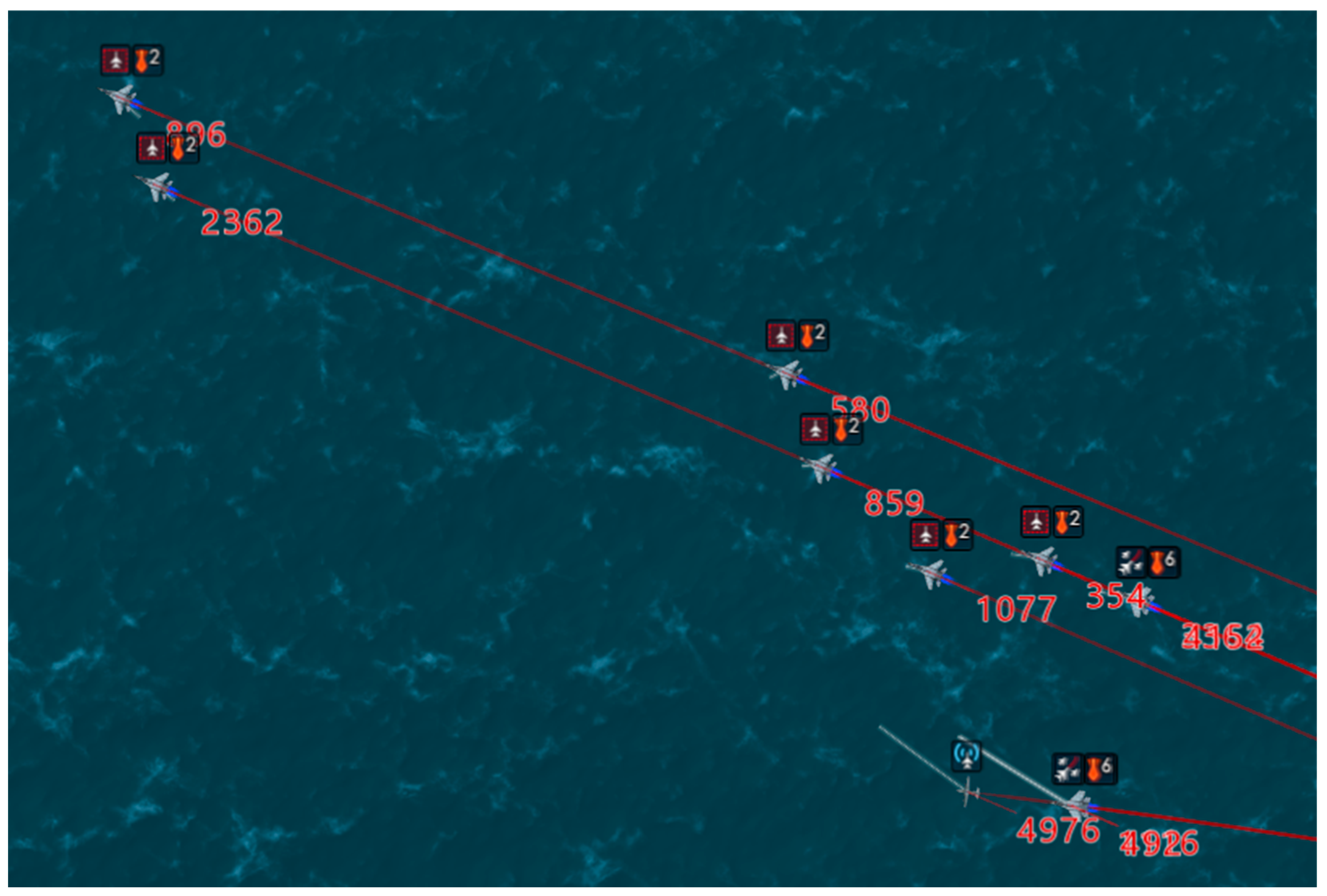

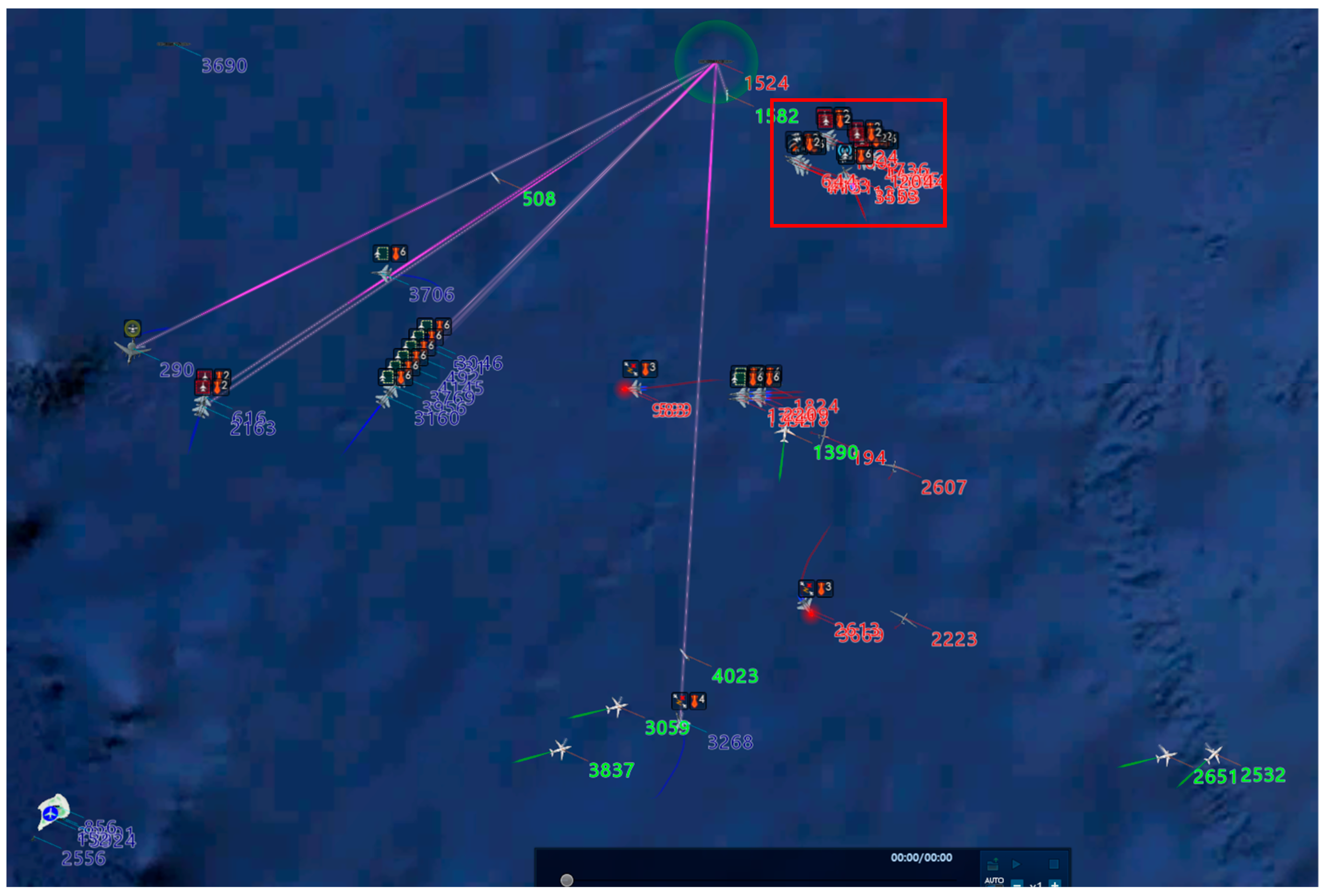

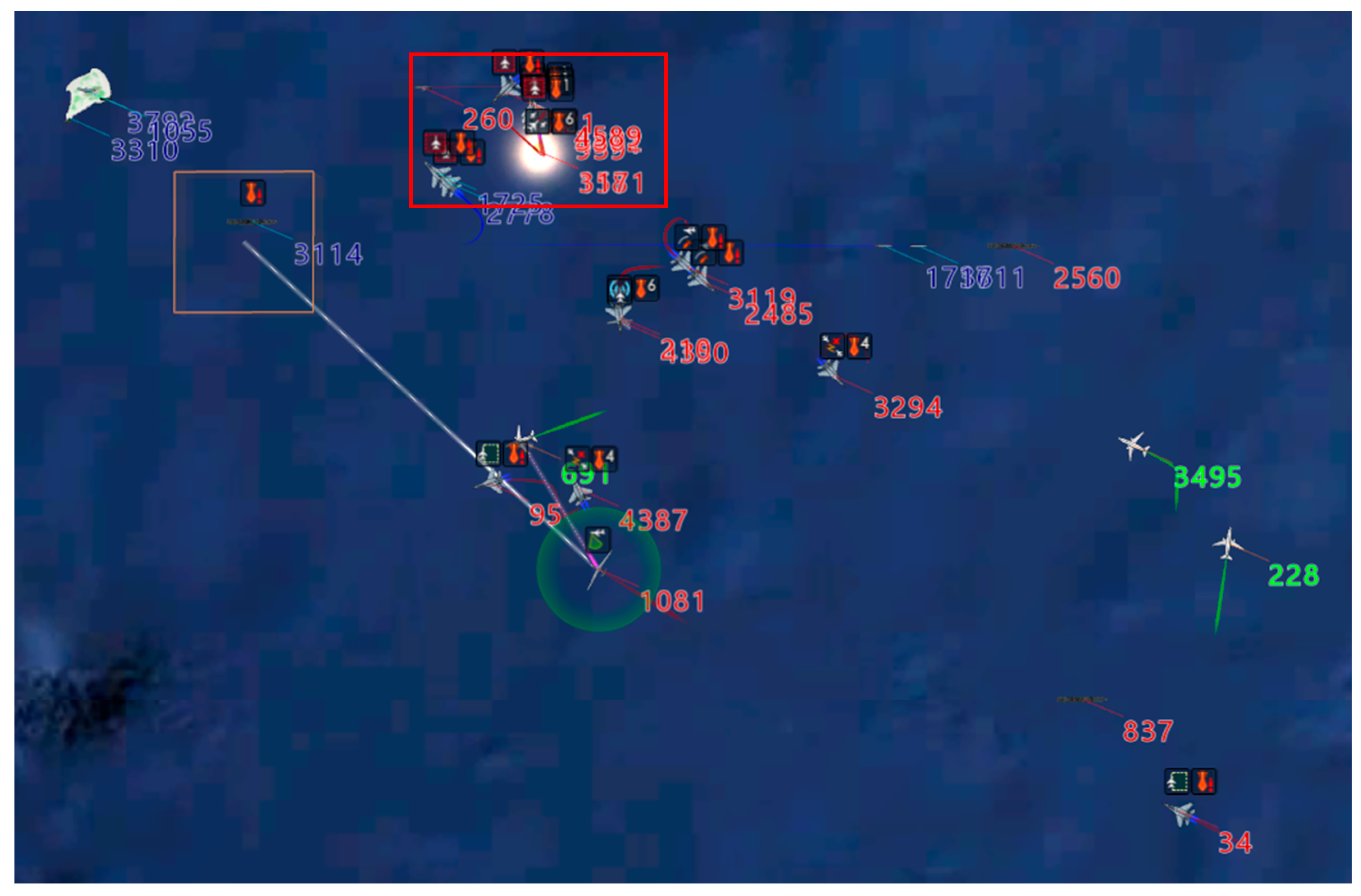

4.2. Behavioral Research

5. Conclusions

Author Contributions

Funding

Data Availability Statement

DURC Statement

Conflicts of Interest

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Li, C.; Dong, W.; He, L.; Cai, M.; Li, Y. A Hierarchical Reinforcement Learning Method for Intelligent Decision-Making in Joint Operations of Sea–Air Unmanned Systems. Drones 2025, 9, 596. https://doi.org/10.3390/drones9090596
