1. Introduction

As core nodes in future maritime systems for civilian and scientific applications, autonomous unmanned surface vehicle (USV) swarms rely heavily on the deep fusion of multi-source heterogeneous sensor information for situational awareness and collaborative decision-making [

1]. A typical USV platform integrates a suite of sensors, including electro-optical systems (EOSs), navigation radar, search radar, LiDAR, Doppler logs, and crucially, the Strapdown Inertial Navigation System (SINS). Collectively, these sensors form a robust perception network. Through multi-sensor fusion, they provide the swarm with comprehensive situational awareness, precise self-localization, and environmental perception capabilities, forming the foundation for autonomous navigation, collision avoidance, target recognition, and tracking [

2].

To achieve cross-platform collaborative perception, a fundamental prerequisite is the precise knowledge of the spatiotemporal relationships among all sensors within the swarm. Specifically, this entails determining the spatial mounting parameters (i.e., extrinsic parameters, including lever arms and boresight angles) between sensors and the SINS, as well as the temporal synchronization across various sensors. Failure to adequately calibrate these parameters can lead to severe fusion errors. For instance, an uncompensated millisecond-level time offset can translate into decimeter-level spatial projection errors on a high-speed maneuvering hull; similarly, minute angular mounting errors can result in significant localization deviations when observing distant targets. Such errors directly undermine the consistency of the swarm’s shared situational picture and may even lead to mission failure [

3]. Although factory calibration is mandatory, at-sea operations require rapid, online calibration due to structural deformation, sensor replacement, and modular payloads. This ensures that USV swarms can quickly complete calibration and error compensation prior to deployment [

4].

While multi-sensor calibration has been extensively studied over the past decades, most research has focused on offline, target-based methods. For example, the well-known “Zhang’s method” proposed by Zhang et al. [

5] utilized a 2D checkerboard to calibrate camera intrinsics via planar homography. Dhall et al. [

6] proposed a 3D–3D point matching method that extracts the corners or centers of calibration boards from both images and point clouds, establishing 3D-3D correspondences and solving for rigid transformation using ICP or SVD to calibrate LiDAR and cameras. Similarly, Frey et al. [

7] developed a LiDAR–IMU calibration method using point cloud feature extraction, and Liu et al. [

8] proposed a camera–IMU calibration method based on visual odometry. However, these methods rely heavily on specific calibration targets, limiting their applicability to laboratory environments or pre-factory settings.

To enhance the applicability of calibration algorithms, numerous targetless online calibration methods have been proposed. Ishikawa et al. [

9] introduced a LiDAR–camera calibration method based on motion consistency. Persic et al. [

10] proposed an online multi-sensor calibration method based on detecting and tracking moving objects. Chen et al. [

11] presented a general multi-sensor calibration framework. While effective, these approaches are primarily designed for land-based environments, exploiting rich environmental features for calibration. They are ill-suited for maritime environments where observable static features are scarce. Furthermore, most existing methods focus on single-platform calibration. At the swarm level, ensuring that sensor data from all USVs are unified into a global coordinate system while maintaining consistent online calibration accuracy remains an unaddressed research gap.

To address these challenges, this paper proposes a unified, targetless, and swarm-oriented calibration framework. Our core idea is to eliminate the dependence on specific calibration targets and absolute positioning information. Instead, we fully exploit the rich constraints inherent in the USV’s own motion and the relative observations between swarm members to achieve multi-sensor calibration.

Unlike existing swarm-level approaches [

12] that focused primarily on localization while assuming pre-calibrated and fixed sensor extrinsics, this work introduces a unified framework that treats extrinsic parameters as state variables to be optimized online. Furthermore, we address the critical issue of observability degradation in open-sea environments—a gap often overlooked in the current cooperative calibration literature. The main contributions of this paper are as follows:

- (1)

A unified multi-sensor calibration framework for maritime environments: We propose a joint optimization framework tailored for maritime conditions capable of synchronously estimating sensor extrinsics, and relative poses of the swarm, enabling rapid multi-sensor calibration at sea.

- (2)

A swarm cooperative calibration mechanism: We introduce a swarm calibration paradigm based on relative motion constraints. Even in the absence of absolute GPS information, a robust calibration constraint network is constructed through mutual observations among USVs, achieving globally consistent calibration.

The remainder of this paper is organized as follows:

Section 2 reviews related work;

Section 3 introduces system modeling and problem formulation;

Section 4 details the proposed swarm calibration algorithm;

Section 5 presents the experimental setup and analysis of results; and

Section 6 concludes the paper and outlines future research directions.

2. Related Work

2.1. Target-Based Sensor Calibration

Early calibration methodologies predominantly relied on artificial targets with well-defined geometric features to estimate intrinsic and extrinsic parameters. Planar patterns, such as checkerboards, laid the foundation for high-precision photogrammetry-based calibration [

5,

13,

14]. These approaches were subsequently extended to multi-camera and camera–inertial measurement unit (IMU) systems by integrating temporal alignment strategies [

13] and non-linear distortion modeling [

14,

15].

In the domain of LiDAR–camera calibration, physical targets with distinct geometric structures or reflective properties have been widely employed. For instance, planar boards with retroreflective surfaces [

16] and checkerboards [

17] were used to align 3D point clouds with 2D images by optimizing geometric correspondences. Similarly, 3D–3D point cloud matching methods [

6] and line-feature-based registration [

18] have proven effective in structured environments. Regarding joint IMU–vision calibration, optical motion capture systems [

19] often serve as ground truth providers, while robust estimators [

8] have been developed to handle scenarios with incomplete inertial information.

Although target-based methods offer high precision and repeatability, their dependence on visible targets and controlled environments severely limits scalability in large-scale or open-field applications. As autonomous systems evolve toward outdoor operations, the requirement for meticulous setup and manual intervention becomes impractical, driving the shift toward targetless calibration techniques.

2.2. Targetless Sensor Calibration

To overcome the limitations of artificial targets, targetless calibration methods leverage natural scene features or sensor motion constraints. Motion-based approaches for camera–IMU systems exploit kinematic constraints between inertial and visual measurements, using closed-form solutions [

20], probabilistic optimization [

21], or visual odometry [

22] to refine calibration parameters online. Visual-only methods often rely on vanishing points extracted from structural scenes [

23,

24].

In LiDAR–camera systems, mutual information-based methods [

16,

17] calibrate sensors by maximizing the correlation between environmental intensity and depth, eliminating the need for specific targets. Probabilistic registration frameworks based on point-to-edge and point-to-plane constraints [

25] further improve robustness in unstructured environments. Additionally, edge alignment strategies [

16] and Gaussian Mixture Models [

26] have been introduced to enhance feature matching accuracy.

Compared to target-based approaches, targetless calibration offers greater flexibility and autonomy, making it particularly suitable for field-deployed systems. However, these methods often face observability and degradation issues: sufficient excitation signals and environmental textures are required to make all parameters identifiable. Moreover, drift and bias in inertial sensors can propagate into calibration results.

The emergence of factor graph optimization frameworks, such as

GTSAM [

27], has enabled unified modeling of calibration and state estimation. Seminal works in visual–inertial odometry (VIO), such as

VINS-Mono [

28], further demonstrated the efficacy of tightly coupled optimization for real-time state estimation. Our work extends these foundational concepts to the domain of multi-USV cooperative calibration.

2.3. Joint Spatiotemporal Calibration

Accurate sensor fusion relies not only on spatial alignment but also on precise temporal synchronization. Asynchronous sensor data can introduce significant errors, especially during high-dynamic maneuvers. Joint spatiotemporal calibration methods have been developed to estimate both spatial extrinsics and temporal offsets simultaneously.

Early works explicitly modeled time delays in visual–inertial systems [

29] or utilized motion-based constraints for multi-modal sensor arrays [

30]. The Kalibr framework [

13] formulated calibration as a maximum likelihood estimation problem, unifying the optimization of intrinsics, extrinsics, and time offsets. This concept was extended to multi-camera–IMU systems [

31] and continuous-time trajectory estimation [

32], enabling the handling of rolling shutter effects and high-frequency inertial data. Recent B-spline-based approaches [

11] further generalized this framework to targetless scenarios.

While effective, batch optimization methods like Kalibr require sufficient motion excitation and are sensitive to initialization. This motivates the need for robust adaptive calibration strategies capable of maintaining accuracy in dynamic or harsh environments.

2.4. Online Dynamic Calibration

Real-world systems, particularly USV swarms operating in complex maritime conditions, are subject to mechanical vibrations and thermal expansion, which degrade calibration accuracy over time. Online dynamic calibration frameworks address this by continuously refining parameters during operation.

Keyframe-based visual–inertial SLAM methods [

31] treat extrinsics as state variables to be optimized online. Similarly, tightly coupled estimators [

33] collaboratively estimate pose, landmarks, and calibration parameters, ensuring observability-aware updates to prevent overfitting. Recent research has expanded these capabilities to targetless LiDAR–camera calibration [

34], dual-quaternion-based motion estimation [

35], and moving object tracking [

10]. Additionally, non-holonomic constraints [

36] and unified motion-based frameworks [

37] have been proposed for mobile robots. Hybrid approaches combining analytical models with deep learning [

38] are also emerging to adaptively compensate for minor misalignments.

Despite these advancements, existing frameworks often face trade-offs between accuracy, computational cost, and robustness in partially observable or dynamic environments. Moreover, few studies have investigated enhancing system calibration robustness through cooperative calibration across multiple platforms.

These gaps motivate this paper to propose a cooperative calibration framework for USV swarms, aiming to provide consistent calibration for diverse sensor configurations while maintaining robustness against disturbances in complex maritime environments.

3. System Modeling and Problem Formulation

This section presents the mathematical notation and coordinate frame definitions used throughout this paper, followed by detailed descriptions of the corresponding sensor models and the swarm cooperative observation model.

3.1. Notation and Coordinate Frames

We consider a swarm consisting of

(

) unmanned surface vehicles (USVs). Each USV is equipped with three typical sensors: a SINS/GNSS integrated navigation system, a navigation radar, and an electro-optical system (EOS). We denote the world frame, the body frame, the EOS frame, and the radar frame of the

-th USV as

,

,

, and

, respectively. We represent the 6-degree-of-freedom (DoF) rigid transformation from frame

to frame

using a Euclidean matrix

, defined as

where

represents the rotation part, and

represents the translation part.

The state of the

-th USV at time

is defined as

where

and

denote the position and orientation (represented by a quaternion) in the world frame,

is the velocity, and

are the biases of the accelerometer and gyroscope, respectively.

Let the extrinsic parameters of the EOS and the navigation radar for the -th USV be , , respectively. The objective is to estimate the extrinsic parameters for all USVs, where is the number of USVs.

3.2. Unified Factor Graph Optimization Model

We formulate the multi-sensor extrinsic calibration problem for the USV swarm as a unified nonlinear least-squares optimization problem. For a swarm of USVs, we define the full system state vector and solve for the optimal state by minimizing the weighted sum of squared residuals.

Based on Bayesian inference, given all measurements

, the maximum a posteriori (MAP) estimate of the system state

corresponds to the global minimum of the following objective function:

where

,

, and

represent the IMU pre-integration residual, the adaptive GNSS observation residual, and the cooperative mutual observation and calibration residual, respectively.

denotes the prior factor.

is the squared Mahalanobis distance, with

being the covariance matrix of the corresponding measurement. To mitigate the influence of outliers, we employ the

Huber robust kernel function . In our implementation, the threshold

is set to 1.5 for radar observations and 2.0 for GNSS observations to balance robustness and convergence speed.

3.2.1. IMU Pre-Integration Residual ()

The IMU serves as the reference backbone, constraining the relative motion between adjacent time steps

and

. We adopt the pre-integration theory on manifolds to avoid repeated integration. The residual is defined as

where

and

are the position and velocity of the

-th USV at time

in the world frame.

(associated with quaternion

) is the rotation matrix from the body frame to the world frame.

is the time interval between keyframes

and

.

is the gravity vector in the world frame.

are the pre-integrated relative position, velocity, and rotation, which depend only on IMU measurements and are independent of the state.

extracts the vector part of a quaternion.

3.2.2. Adaptive GNSS Observation Residual ()

To address the issue of GNSS susceptibility to interference in complex environments, we design a dynamic covariance model based on confidence.

The corresponding distance weights (information matrix) are

where

is the position measurement output by the GNSS receiver, and

is a signal quality indicator.

is an adaptive weighting function: when the signal is normal,

; when strong interference is detected (

drops below a threshold),

. In this case, the contribution of this term to the objective function approaches zero, making the USV localization rely entirely on IMU integration and inter-USV mutual observations.

3.2.3. Cooperative Mutual Observation and Calibration Residual ()

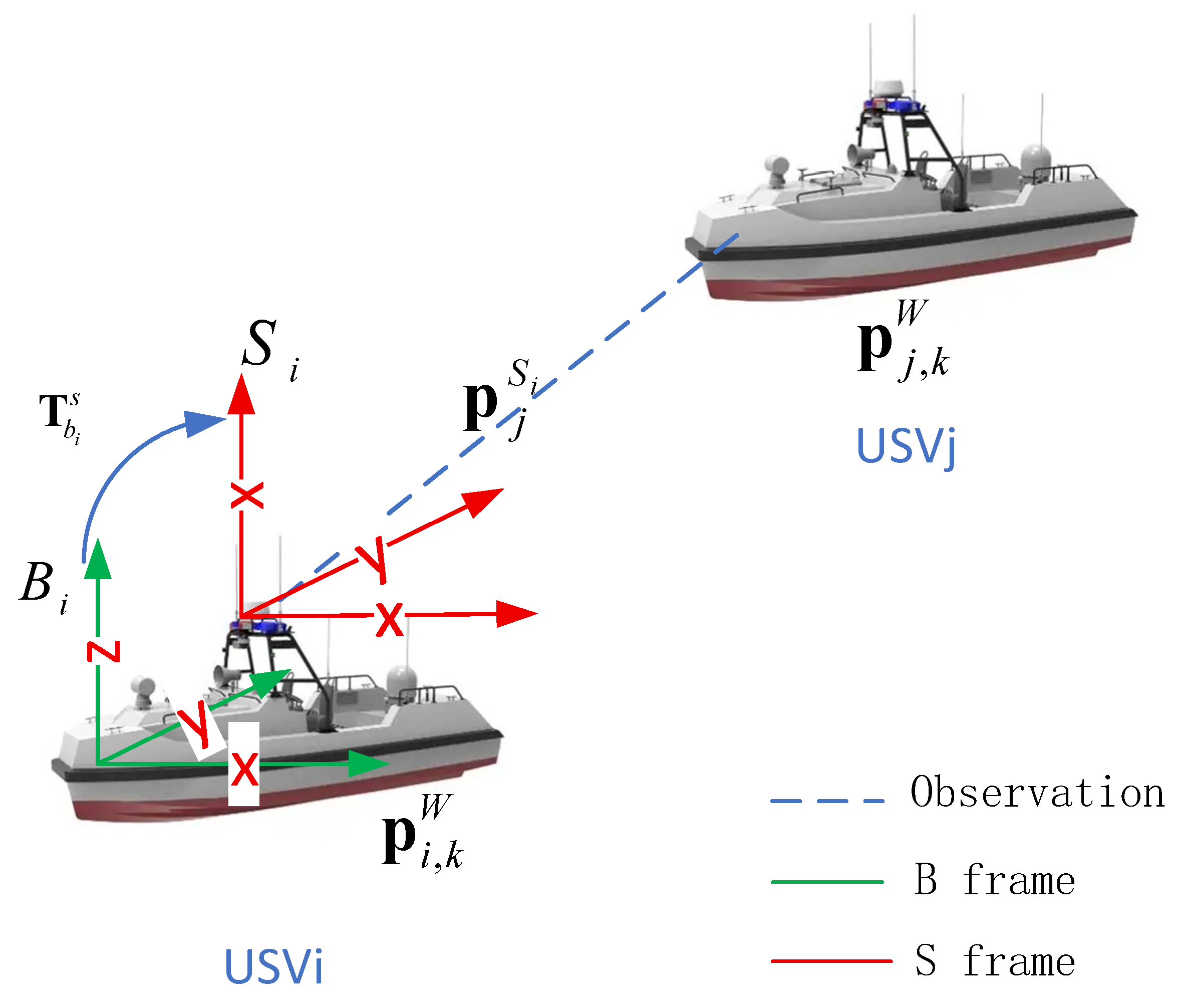

By observing the positions of other USVs, we simultaneously constrain the ego-motion and solve for sensor extrinsics. As shown in

Figure 1, at time

, the observer USV

detects the target USV

using sensor

(radar

or EOS

). The theoretical coordinate of target USV

in the sensor frame

(

) of observer

is

. By transforming the world position

of target USV

to the sensor frame

of observer

, we have

where

is the extrinsic parameter to be estimated, representing the rotation matrix and translation vector of sensor

relative to the body center

. Depending on the sensor type, we define the specific observation function

.

The radar measures range

and azimuth

:

where

are the actual radar measurements, and

are the coordinate components of the target in the radar frame.

Since the EOS integrates a laser rangefinder, it provides high-precision spherical coordinate measurements: range

, azimuth

, and elevation

. The observation function

is

The EOS residual term is

where

are the actual EOS angular measurements, and

are the target coordinates in the EOS frame.

3.2.4. Prior and Marginalization Factors

The last term in Equation (3) represents the prior factor. Since the system adopts a sliding-window-based real-time optimization strategy, to preserve historical state information that slides out of the window and prevent information loss, we utilize the Schur complement technique to marginalize constraints from old states onto the oldest frame in the current window. Additionally, this term includes the initial measurement priors for sensor extrinsics and absolute pose priors used to eliminate the system’s gauge freedom when GNSS is completely unavailable.

3.3. Linearization and Jacobian Derivation

To solve the optimization problem using the Levenberg–Marquardt (LM) or Gauss–Newton algorithm, we need the derivative of the residual

with respect to the extrinsic parameter

. Using the Lie group perturbation model, let the left perturbation of the extrinsic be

. Applying the chain rule:

where

is the Jacobian of the point coordinate with respect to the extrinsic perturbation. For both radar and EOS, the derivative of a point coordinate transformed by the extrinsic is

where

denotes the skew-symmetric matrix operator.

The term is the Jacobian of the observation function, which differs for EOS and navigation radar.

3.3.1. Navigation Radar Observation Jacobian

Although radar provides 2D observations, the extrinsic rotation introduces coupling in the

-axis component. Thus, derivatives with respect to

are required:

The final radar Jacobian is

Although the radar measurement model is 2D (range and azimuth), the Jacobian calculation must account for the full 3D extrinsic rotation. This is because the USV’s roll and pitch motions cause the world-frame -coordinate of the target to couple into the radar’s plane. The chain rule term (Equation (12)) is a matrix that correctly captures these 3D rotational effects, ensuring the optimization can adjust the yaw, pitch, and roll extrinsics based on the 2D projected residuals.

3.3.2. EOS Observation Jacobian

The EOS provides full 3D observations. Let

and

.

The final EOS Jacobian is

4. Algorithm Implementation

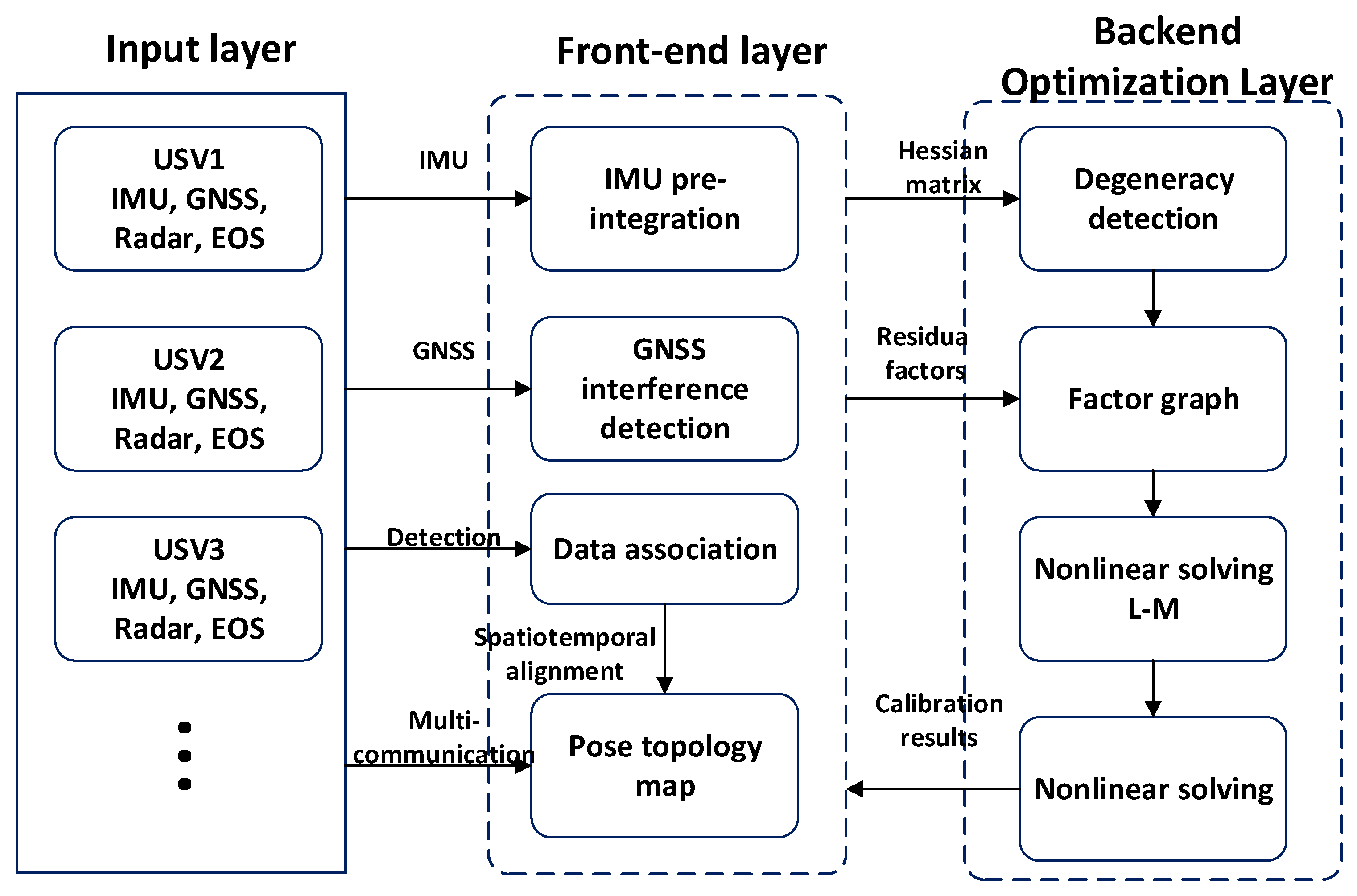

This section details the complete workflow of the proposed cooperative online calibration algorithm. The algorithm adopts a sliding-window-based tightly coupled graph optimization architecture, as illustrated in

Figure 2.

The architecture consists of three layers. The input layer receives raw sensor data (GNSS, IMU, radar, EOS) from USVs. The front-end processing layer handles calibration parameter initialization, IMU pre-integration, GNSS interference monitoring (generating confidence factors), and data association based on radar/EOS detections and swarm information, constructing a rigid pose graph of the swarm. The back-end optimization layer builds the factor graph based on residual factors within the swarm network, performs real-time detection of environmental degradation, and executes calibration parameter optimization using the Levenberg–Marquardt (LM) algorithm when conditions are met.

4.1. Initialization and Pre-Processing

4.1.1. Dynamic Initialization

As a highly nonlinear system, the continuous-time tightly coupled state estimator requires reasonable initial guesses for relevant parameters to pursue a global optimum and achieve better convergence in the final batch optimization. To this end, we implement a rigorous, dynamic multi-stage initialization procedure to sequentially initialize multi-sensor calibration parameters.

In the initial alignment phase, the SINS must complete self-alignment under static or low-dynamic conditions. The core principle leverages the projection of the gravity vector and the Earth’s rotation rate in the navigation frame. The initial roll, pitch, and heading angles are estimated via analytical methods or Kalman filtering. Specifically, static base alignment typically uses a second-order leveling algorithm to minimize the horizontal projection of accelerometer outputs. Simultaneously, the azimuth observation equation is constructed using the Earth’s rotation rate component (where is the local latitude) measured by the gyroscope. By continuously sampling for 10 to 15 min and performing least-squares fitting, horizontal attitude angles with an accuracy better than and an initial heading angle of approximately to can be obtained. This process provides a unified reference frame for subsequent sensor calibration, directly influencing the convergence speed and final accuracy of installation error estimation. After alignment, the attitude matrix , position, and velocity outputs from the INS serve as ground truth references, while estimated gyro and accelerometer biases are recorded for dynamic error modeling.

The coarse calibration between navigation radar and INS relies on range-azimuth observation sequences of other swarm members. Initial installation errors are solved by constructing geometric constraint equations. Specifically, the USV performs at least two straight-line segments with different headings or one uniform circular motion, ensuring the radar stably tracks targets at varying azimuths and ranges. The target range and azimuth are obtained in the radar polar coordinate system. Using the USV pose provided by the INS, the target position is transformed to the world frame and then back-projected to the radar frame, establishing the observation equation , where is the polar conversion function. A coarse least-squares optimization based on SVD decomposition ignores high-order nonlinear terms to rapidly solve for initial position deviation and attitude error . Under 5 to 10 different observation configurations, this method achieves a coarse calibration accuracy of approximately m in position and in angle, providing a sufficiently close starting point for refinement. Outliers with observation noise exceeding are rejected, and targets covering 30% to 80% of the radar range are selected to enhance the condition number of the equation system.

EOS–INS coarse calibration utilizes the correspondence between pixel coordinates of cooperative targets extracted from EOS images and target position information. Camera extrinsics are solved by constructing perspective projection constraints. This process requires the USV to measure the target multiple times at different azimuths and elevations, ensuring target image points are distributed across the four quadrants and the center of the image sensor. Using INS attitude and position data, 3D target coordinates are transformed to the EOS frame. Iterative optimization minimizes the reprojection error , where is the perspective projection function and is the calibrated intrinsic matrix. Considering the EOS typically has higher angular resolution but lower ranging accuracy, the coarse calibration phase simplifies the model to optimize only the attitude installation angle while fixing the position deviation. Using three or more non-collinear observation points and introducing Rodrigues’ formula for rotation, the method quickly converges to a coarse estimate with an angular error of approximately . Care must be taken to avoid targets being too close (causing field-of-view loss or blur) and to use image pyramids for accelerated feature matching, maintaining an effective observation frequency of at least 10 Hz for data continuity.

4.1.2. IMU Pre-Integration

IMU pre-integration plays an indispensable role in spatiotemporal constraints and state propagation within the multi-sensor calibration system. By pre-processing high-frequency raw inertial measurements into relative motion increments independent of the absolute state, it significantly reduces the computational load in nonlinear optimization iterations and solves time synchronization and alignment issues between sensors of different frequencies. Crucially, it constructs a tightly coupled mathematical framework, allowing the system to use sparse radar and EOS observations to correct IMU biases and maintain high-precision attitude estimation when GNSS is disturbed. This provides a stable and rigorous 6-DoF motion reference for extrinsic calibration, avoiding observation correlation reuse issues caused by directly using integrated navigation filter outputs and establishing global consistency for extrinsic solving. We adopt the manifold pre-integration theory proposed by Forster et al. [

39]. IMU measurements are pre-integrated between two adjacent keyframes

and

, converting them into relative motion constraints independent of the absolute state at frame

. The accelerometer measurement

and gyroscope measurement

are modeled as

where

is the rotation matrix from the world frame to the body frame,

is the gravity vector,

are the random walk biases of the accelerometer and gyroscope, and

is Gaussian white noise. Using median integration, all IMU measurements between

and

are integrated to define the pre-integrated relative displacement

, relative velocity

, and relative rotation

. The core advantage is that these quantities depend only on IMU measurements and are decoupled from the world state

:

Since IMU biases

are continuously adjusted during optimization, to avoid reintegration after bias changes, a first-order Taylor expansion is used for linear correction:

where

represents the Jacobians of pre-integrated quantities with respect to biases, computed iteratively during pre-integration.

Finally, the pre-integrated quantities serve as observations to construct the residual term

connecting state nodes

and

:

This residual corresponds to

in the objective function in

Section 3, and its covariance matrix

is propagated from raw measurement noise via the noise propagation equation.

4.1.3. GNSS Interference Detection and Adaptive Weighting

To prevent trajectory drift caused by erroneous constraints when GNSS is disturbed, we automatically cut off unreliable constraints. Indicators are extracted from GNSS NMEA sentences: horizontal dilution of precision (HDOP) and mean carrier-to-noise density (

). We calculate a confidence factor

using the following sigmoid-based decay function:

The parameters for the sigmoid decay function are set to . We set the HDOP threshold , the slope factor , and the reference carrier-to-noise ratio . If the calculated weight , the GNSS factor is considered invalid and excluded from the graph.

The weight matrix is constructed as

When interference occurs (HDOP rises or SNR drops), , making the contribution of the GNSS frame to the graph optimization negligible.

4.1.4. Cooperative Data Association

This step determines which specific USV in the swarm corresponds to “Target A” observed by radar or EOS. Based on the multi-robot SLAM data association framework proposed by Alberto Viseras et al. [

40], we utilize the predicted poses

of each USV in the current sliding window to project USV

onto the sensor plane of USV

, obtaining the predicted observation

. The Mahalanobis distance test is performed:

Using Nearest Neighbor matching, the USV with the minimum distance among all candidates satisfying (set to 5.99 for 95% confidence) is selected as the associated target. If no candidate exists, the observation is treated as environmental clutter and discarded.

4.2. Factor Graph Construction

The schematic of the constructed factor graph is shown in

Figure 3. The leftmost part represents the marginalization prior, containing historical information prior to the sliding window. The graph consists of two types of nodes:

Variable nodes (circles): Small circles represent pose nodes , denoting the navigation state (position, velocity, attitude, bias) of USV at time . Large circles represent the sensor extrinsics to be estimated.

Factor nodes (squares): Blue squares are IMU factors used for dead reckoning, connecting pose nodes of adjacent times. Greysquares are adaptive GNSS factors, which dynamically weight connections to pose nodes based on interference detection results, represents the weight coefficient. Orange squares are observation factors, connecting the observer’s pose, the target USV’s pose, and the observer’s extrinsics. These factors establish spatial constraints between USVs and simultaneously connect to the static extrinsic calibration nodes, enabling synchronous estimation of sensor installation errors and swarm poses.

4.3. Nonlinear Optimization and Robustness Enhancement

After constructing the factor graph, the system state estimation problem is transformed into a nonlinear least-squares problem. This section details the iterative solution process based on the Levenberg–Marquardt (LM) algorithm, focusing on the online degradation detection mechanism for unobservable extrinsics and the sliding window marginalization strategy to ensure real-time performance.

4.3.1. System Linearization and Normal Equation Construction

The goal is to find the optimal state increment

that minimizes the total residual function

. Due to the nonlinearity of the observation function

, we perform a first-order Taylor expansion of the error term at the current linearization point

:

where

is the Jacobian matrix. We construct the Normal Equation:

where

is the approximate Hessian matrix,

is the gradient vector,

is the damping factor of the LM algorithm, and

is the first derivative of the robust kernel function used to mitigate the impact of outliers on the gradient.

4.3.2. Degradation Detection and Subspace Constraint

Under specific operating conditions such as uniform straight-line motion, sensor extrinsics (especially the rotation component) may become unobservable (degenerate). Forced updates in such cases would lead to parameter drift in the null space.

To analyze extrinsic observability, we use the Schur complement technique to marginalize the pose variables

, retaining only the information matrix for extrinsic variables

. We partition

as

where subscript

corresponds to poses and

to extrinsics. The reduced Hessian matrix

after marginalizing poses is

Performing eigenvalue decomposition on

:

We set a degradation threshold ( in our experiments).

Degradation criterion: If the minimum eigenvalue , it indicates that the extrinsic parameters lack constraints in the direction of the corresponding eigenvector .

Update strategy: Parameters are updated only in directions with sufficient information. We construct a projection matrix , where contains only eigenvectors corresponding to . The corrected increment is . This strategy ensures numerical stability of calibration under weak texture or weak maneuvering conditions.

4.3.3. Sliding Window Marginalization

To limit the dimensionality of the state vector over time and ensure computational complexity, the system adopts a sliding window strategy. When a new frame is added to the window, the oldest frame must be removed. Unlike simply discarding it, marginalization converts and its associated observations into a Prior Factor, preserving the constraint of historical information on the remaining states.

Let the states to be removed be

and the retained states be

. The linearized error equation is

Using the Schur complement, we construct the prior information matrix

and residual vector

for

:

It is well-known that marginalization induces fill-in, creating dense connectivity among all variables in that were connected to . This can degrade the sparsity of the Hessian matrix. To mitigate this, we employ two strategies:

Strict window management: We strictly limit the marginalization scope to the oldest keyframe and its direct neighbors, preventing the dense prior from coupling the entire window.

Solver efficiency: We implement the optimization using the Ceres Solver with sparse Cholesky factorization (e.g., CHOLMOD). This solver efficiently exploits the block-sparse structure of the SLAM problem, ensuring that the computational cost remains bounded even with the dense prior block introduced by marginalization.

This prior term is added as a factor to the objective function in the next optimization cycle.

4.4. Algorithm Workflow

The algorithm flow proposed in this paper is shown in Algorithm 1.

| Algorithm 1. Proposed algorithm |

Input: Sensor streams Z (IMU, GNSS, Radar, EOS)

Output: Swarm State X, Extrinsics T_calib, Time Offset tau |

| 1: Initialize T_calib using coarse alignment (Section 4.1). |

| 2: Loop (Incoming Data): |

| 3: //Front-end |

| 4: Perform IMU Pre-integration between keyframes. |

| 5: Calculate GNSS weights lambda based on HDOP/SNR (Equation (11)). |

| 6: Perform Data Association for Radar/EOS measurements (Equation (23)). |

| 7: //Back-end |

| 8: Construct Factor Graph G with new nodes and factors. |

| 9: Calculate Hessian H and FIM. |

| 10: //Degeneracy Check |

| 11: if min(eig(H_calib)) < Threshold then |

| 12: Lock degenerate directions in T_calib (don’t update). |

| 13: else |

| 14: Unlock T_calib for full optimization. |

| 15: end if |

| 16: //Optimization |

| 17: Update X, T_calib using Levenberg-Marquardt. |

| 18: //Marginalization |

| 19: if Window Size > N_max then |

| 20: Marginalize oldest frame via Schur Complement (Equation (30)). |

| 21: end if |

| 22: End Loop |

5. Experiments and Analysis

5.1. Experimental Setup and Data Collection

This study conducted cooperative formation experiments with multiple USVs near the coast of Rizhao. The experimental system consisted of three different types of USVs (labeled USV1, USV2, USV3), as shown in

Figure 4.

Each USV is equipped with a high-precision fiber-optic gyroscope integrated navigation system (FOG-INS/GNSS), a solid-state navigation radar (Simrad 4G), and an electro-optical system (EOS) with a laser rangefinder. All sensors were hardware-synchronized using a GNSS-disciplined PPS (Pulse Per Second) signal and PTP (Precision Time Protocol). The residual time synchronization error is guaranteed to be less than 1 ms, which is negligible for the dynamics of USVs.

It is crucial to clarify that the high-precision FOG-INS/GNSS solution serves strictly as the ground truth for evaluation. The input to our algorithm consists solely of: (1) raw IMU accelerations and angular rates; (2) raw GNSS single-point positioning data (subject to simulated degradation); and (3) relative measurements () from radar and EOS. The experiments were conducted under Sea State 2 conditions (wave height 0.1–0.5 m) with good visibility. The dataset spans approximately 20 min, including “figure-8” maneuvers and sharp turns to ensure sufficient excitation for extrinsic observability.

5.2. Simulation of GNSS Degradation

To address the reviewer’s concern regarding realistic interference, we implemented a high-fidelity simulation of GNSS jamming behavior during the interval t = 300 s to 600 s. Instead of merely injecting white noise, we simulated the characteristics of a receiver under jamming:

Drift Injection: A random walk drift of 0.5 m/s was superimposed on the GNSS position measurements to mimic carrier phase loop unlocking.

Indicator Degradation: We simultaneously modified the simulated NMEA outputs. During the interference period, the reported HDOP was increased from a nominal 1.2 to 8.0, and the signal-to-noise ratio (SNR) was decreased by 15 dB. This ensures that the adaptive weighting mechanism (Equation (18)) is triggered by realistic signal indicators rather than just position errors.

5.3. Evaluation Metrics and Baselines

We adopt the absolute trajectory error (ATE) and calibration convergence as primary metrics. To isolate the contributions of specific modules, we compare the proposed method against two baselines:

Baseline (single-USV) [

12]: Standard EKF-based GNSS/INS integration without cooperative constraints. This serves as the lower bound of performance.

Method-NoCalib [

41]: This baseline represents a

state-of-the-art sliding-window cooperative localization framework (conceptually similar to standard GTSAM multi-robot implementations [

27]) but treats extrinsics as fixed parameters. This comparison allows us to rigorously isolate the performance gain attributed specifically to the proposed

online calibration and

degradation handling modules.

5.4. Results and Discussion

5.4.1. Online Calibration Performance

Figure 5 illustrates the convergence of extrinsic parameters. The solid lines represent the estimation errors, and the shaded areas indicate the

confidence intervals derived from the marginal covariance. The narrowing of the shaded region confirms the reduction in uncertainty. The initial values were perturbed by approximately

(angle) and

m (position).

Convergence speed: The EOS extrinsics converge rapidly within the first 100 s, attributed to the strong 3D constraints from laser ranging. Radar extrinsics converge slower due to higher measurement noise but stabilize after sufficient maneuvering.

Accuracy: As detailed in

Table 1, the final estimation errors are minimal (radar translation

m, EOS rotation

), verifying the effectiveness of the targetless calibration.

Although a direct visual overlay of radar points onto EOS images is not available due to data logging constraints, the calibration quality can be assessed through reprojection residuals. Before calibration, the average reprojection error of radar targets onto the EOS angular frame was approximately . After online calibration, this residual decreased to , which corresponds to a sub-pixel alignment accuracy, confirming the effective fusion of the two sensors.

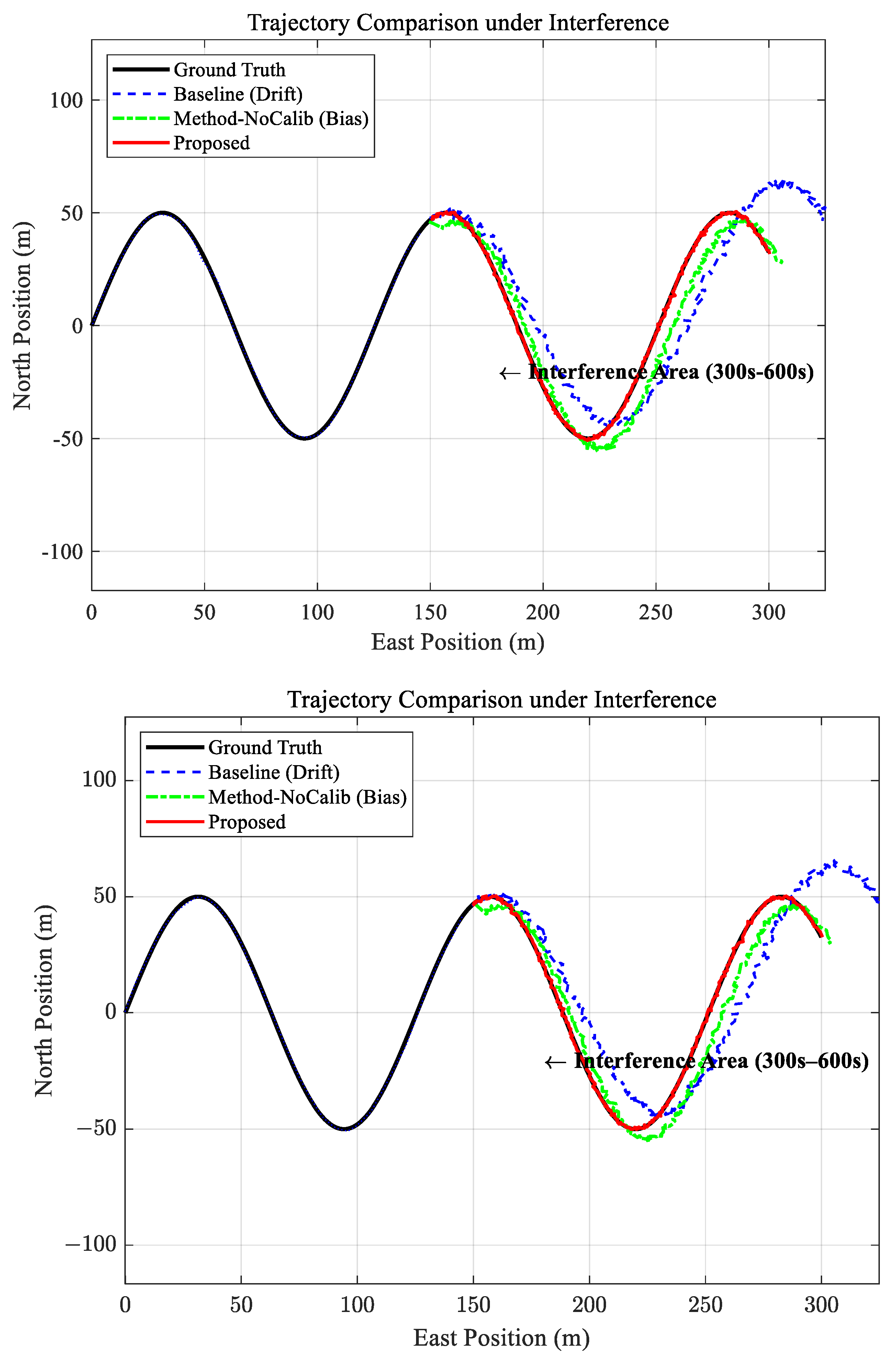

5.4.2. Localization Accuracy and Ablation Study

Figure 6 and

Figure 7 depict the trajectories and error curves. The localization improvement is calculated based on the root mean square error (RMSE) during the interference period. The percentage improvement is defined as

. It can be seen that the average RMSE for the baseline method (Single-USV) is 18.42 m, while the Proposed method achieves 2.85 m. This results in an improvement of

.

Impact of cooperation: The drop from 18.42 m (baseline) to 4.28 m (Method-NoCalib) proves that relative observations effectively constrain the swarm geometry even when absolute GNSS is drifting (gray shaded areas indicate GNSS-degraded periods).

Impact of online calibration: The further reduction from 4.28 m to 2.85 m confirms that correcting sensor mounting errors online eliminates systematic biases, which is critical for high-precision formation flying.

To validate the degeneracy detection module, we simulated a specific ‘straight-line’ scenario. As shown in

Table 2, without degeneracy handling (proposed method w/o degeneracy), the unobservable rotation parameters drifted unboundedly, causing the localization to diverge (>50 m). In contrast, the full proposed method detected the low eigenvalues in the FIM and locked the uncertain parameters, maintaining a stable RMSE of 2.91 m.

5.5. Computational Scalability and Long-Term Stability

To assess the practical feasibility of the proposed framework for larger swarms and longer missions, we conducted additional simulation benchmarks on an Intel i7-12700K processor.

5.5.1. Computational Scalability

We evaluated the average optimization time per sliding window update (window size

) for varying swarm sizes

. As shown in

Table 3, the computational cost scales roughly cubically with the swarm size (

).

Small swarms (): The system maintains high real-time performance (45 ms), leaving ample margin for other tasks.

Medium swarms (): The time (112 ms) slightly exceeds the 10 Hz threshold but is acceptable for a 5 Hz navigation loop.

Large swarms (): The centralized approach becomes a bottleneck (>300 ms). This suggests that for large-scale clusters, future work must transition to distributed cooperative SLAM architectures to distribute the computational load.

5.5.2. Long-Term Stability and Uncertainty

To address concerns regarding potential parameter drift during long-endurance missions, we extended the simulation duration to 2 h using the calibrated parameters from the field experiment as initial values.

The results indicate that the extrinsic estimates remain stable without drift (mean variation of rotation over 2 h). This stability is attributed to the sliding window formulation, which effectively marginalizes old states into a prior factor, bounding the error accumulation.

We monitored the estimator’s covariance matrix . During the entire 2 h run, the position uncertainty bounds remained consistent with the actual error (bounding the error 99.7% of the time), confirming that the estimator does not become over-confident over time.

It is important to note that while this simulation verifies the mathematical stability of the sliding window estimator, it does not account for physical environmental factors such as thermal expansion of sensor mounts, mechanical vibration fatigue, or structural deformation over hours of operation. Therefore, the ‘no-drift’ conclusion applies to the algorithmic estimation process, while physical stability in real-world endurance missions requires further engineering validation.

Furthermore, our current simulation assumes independent degradation across different USVs. Correlated spoofing affecting all vehicles simultaneously could introduce common-mode biases, which remains a challenge for future study.

6. Conclusions and Future Work

6.1. Conclusions

This paper addressed the challenge of high-precision navigation for unmanned surface vehicle (USV) swarms in GNSS-denied or jammed environments by proposing a unified all-source factor graph framework integrating navigation radar, electro-optical systems (EOSs), IMU, and GNSS. Unlike traditional strategies that separate calibration from localization, this study incorporated multi-sensor extrinsics as state variables within the factor graph. By leveraging high-precision mutual observations among the swarm, strong geometric constraints were constructed to achieve joint optimization of cooperative localization and online self-calibration.

Field experiments with three USVs and simulated interference scenarios support the following key conclusions, validated under the tested environmental conditions (Sea State 2):

First, all-source cooperation significantly enhances system robustness. The results demonstrate that during periods of simulated severe GNSS interference (characterized by position drift and degraded signal indicators), the proposed adaptive weighting strategy and cooperative observation mechanism effectively mitigate the accumulated errors of individual INS. Compared to traditional single-USV navigation and uncalibrated cooperative methods, the proposed algorithm reduced the localization root mean square error (RMSE) to 2.85 m, representing an accuracy improvement of 84.5%.

Second, the online calibration algorithm exhibits high precision and convergence. Benefiting from the laser ranging constraints introduced by the EOS, the system eliminates scale ambiguity and rapidly solves for accurate sensor mounting errors. The final radar translation calibration error converged to within 0.2 m, and the EOS rotation error was better than 0.05°, validating the feasibility of targetless online calibration.

Finally, the degradation detection mechanism ensures stability under weak excitation. The degradation handling strategy, based on eigenvalue analysis of the Fisher Information Matrix, effectively addressed the unobservability of extrinsics during degenerate motion patterns such as straight-line sailing. This prevents optimization divergence and ensures the engineering applicability of the algorithm in complex dynamic environments.

6.2. Limitations and Future Work

Despite the promising results, this study has certain limitations.

Communication bandwidth: The current centralized optimization framework requires real-time transmission of observation data between USVs, which may demand high communication bandwidth in large-scale swarms.

Extreme environments: In scenarios with high sea states (e.g., Sea State 4 or higher), sensor occlusion and clutter may degrade the performance of geometric data association.

Future work will focus on developing distributed cooperative algorithms to reduce bandwidth usage and integrating semantic perception to enhance robustness in extreme environments.