Frequency Point Game Environment for UAVs via Expert Knowledge and Large Language Model

Highlights

- We propose UAV-FPG, a novel reinforcement learning-based game environment that simulates dynamic signal interference and anti-interference confrontations between UAVs.

- Within UAV-FPG, the LLM-based opponent planner offers a practical, gradient-free way to generate diverse, feedback-conditioned trajectories. It often earns higher opponent rewards than fixed-path baselines, which strengthens simulator-side stress tests of ally anti-jamming policies; this does not imply real-world superiority.

- The UAV-FPG environment serves as a high-fidelity platform for systematically developing and validating anti-jamming decision-making strategies in complex electromagnetic scenarios.

- Our simulation results suggest that LLM-driven opponents can act as a stronger and more adaptive adversary within UAV-FPG, providing a practical, gradient-free way to generate diverse trajectories in high-dimensional decision spaces.

Abstract

1. Introduction

- (1) We present UAV-FPG, an executable two-player Markov-game environment that couples (i) 3D UAV kinematics and geometry-dependent path loss with (ii) an explicit frequency-point jamming/anti-jamming loop, where link quality is evaluated via SINR/capacity and used to define step-wise rewards.

- (2) We build an anti-jamming expert knowledge base and integrate it as a fixed guidance module that maps detected jamming types/frequency bands to safe frequency candidates, providing structured prior support for the ally UAV’s frequency selection during hopping/spreading decisions.

- (3) We develop an episode-level LLM-based opponent planner with feedback-conditioned prompting and feasibility constraints to generate adaptive adversarial trajectories, and we benchmark it against fixed geometric trajectories and non-LLM baselines to quantify its effectiveness for stress-testing ally-side policies in UAV-FPG.
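To make the link-quality evaluation in contribution (1) concrete, the following is a minimal sketch of how SINR and Shannon capacity could be computed from UAV geometry. It is not the paper's implementation: the free-space path-loss model, the ally-to-base-station link, the function names, and the treatment of the noise figure as a per-hertz density are all assumptions made for illustration. The default powers and noise level are taken from the environment parameter table.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # m/s


def dbm_to_mw(p_dbm: float) -> float:
    """Convert a power level from dBm to milliwatts."""
    return 10.0 ** (p_dbm / 10.0)


def fspl_db(distance_m: float, freq_hz: float) -> float:
    """Free-space path loss in dB (assumed propagation model)."""
    d = max(distance_m, 1.0)  # avoid log of zero at very short range
    return 20.0 * math.log10(4.0 * math.pi * d * freq_hz / SPEED_OF_LIGHT)


def sinr_and_capacity(ally_pos, bs_pos, opp_pos, freq_hz, bandwidth_hz,
                      p_bs_dbm=45.0, p_opp_dbm=20.0, n0_dbm_hz=-170.0,
                      jammed=True):
    """Return (linear SINR, capacity in bit/s) at the ally UAV.

    `jammed` is a hypothetical flag: True when the opponent's jamming
    overlaps the ally's current frequency point.
    """
    d_signal = math.dist(ally_pos, bs_pos)
    d_jammer = math.dist(ally_pos, opp_pos)
    signal_mw = dbm_to_mw(p_bs_dbm - fspl_db(d_signal, freq_hz))
    jam_mw = dbm_to_mw(p_opp_dbm - fspl_db(d_jammer, freq_hz)) if jammed else 0.0
    noise_mw = dbm_to_mw(n0_dbm_hz) * bandwidth_hz
    sinr = signal_mw / (jam_mw + noise_mw)
    capacity = bandwidth_hz * math.log2(1.0 + sinr)
    return sinr, capacity
```

A step-wise reward could then be derived from the returned capacity, for example by comparing the SINR against a threshold; the exact reward shaping used in UAV-FPG is not reproduced here.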

2. Related Work

2.1. Multi-Agent Game Theory

2.2. Incorporation of Expert Knowledge Bases

2.3. Path Planning with Large Language Models

3. Environment Model

4. Methods

4.1. Frequency Point Game in Wireless Communications

Algorithm 1: UAV-FPG (Environment Step)

4.2. Optimized Frequency Selection with Expert Knowledge
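As a minimal sketch of the fixed guidance module described in the contributions (a detected jamming type and frequency band mapped to safe frequency candidates), the lookup below filters a list of frequency points. The rule format, the guard-margin values, and all names are hypothetical; the paper's actual knowledge base is not reproduced here, and representing comb jamming by a single band is a simplification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class JammingReport:
    """Detected jamming, as assumed for this sketch."""
    jamming_type: str        # e.g. "single_tone", "narrowband", "broadband", "comb"
    jammed_band_mhz: tuple   # (low, high) edges of the detected band in MHz


# Hypothetical expert rules: guard margin (MHz) to keep around the jammed band.
GUARD_MARGIN_MHZ = {
    "single_tone": 1.0,
    "narrowband": 5.0,
    "comb": 2.0,
    "broadband": 20.0,
}


def safe_candidates(report, frequency_points_mhz):
    """Return the frequency points lying outside the jammed band plus margin."""
    # Unknown jamming types fall back to the most conservative margin.
    margin = GUARD_MARGIN_MHZ.get(report.jamming_type,
                                  max(GUARD_MARGIN_MHZ.values()))
    low, high = report.jammed_band_mhz
    return [f for f in frequency_points_mhz
            if f < low - margin or f > high + margin]
```

For example, with points at 2400, 2410, 2420, 2430, and 2440 MHz and narrowband jamming detected on 2408 to 2412 MHz, only the 2410 MHz point is excluded. Because the rules are a fixed table, the module needs no training and can constrain the learned policy's frequency choice directly.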

4.3. Toward a Stronger Simulator Adversary: Path Inference and Planning with LLMs

- (1) Historical Positions and Rewards: To help the model assess how the opponent UAV performed in past environment states, each historical position is explicitly paired with its corresponding reward. The expected format is: “Positions and Rewards: [x_i, y_i, z_i]: r_opponent”

- (2) Current Position Information: The real-time state of the opponent UAV is passed to the model so that it can generate reasonable paths from its current position to intercept the ally UAV. The expected format is: “Current Position: [x, y, z]”

- (3) Output Format: A formatted example defines the structure and sequence-length constraints of the generated path, ensuring that it is usable and structurally consistent. The expected format is: “Next directions: [[x_1, y_1, z_1], [x_2, y_2, z_2], …, [x_n, y_n, z_n]]”
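The three prompt components above can be sketched as a prompt builder plus a parser that enforces feasibility on the model's reply. This is an illustrative sketch only: the function names, the per-step distance limit `max_step`, the box-shaped `bounds`, and the clipping strategy are assumptions, and no specific LLM API is called.

```python
import ast
import math
import re


def build_prompt(history, current_pos, n_waypoints):
    """Assemble the three prompt components as plain text.

    `history` is a list of ((x, y, z), opponent_reward) pairs.
    """
    lines = ["Positions and Rewards:"]
    for pos, reward in history:
        lines.append(f"{list(pos)}: {reward:.2f}")
    lines.append(f"Current Position: {list(current_pos)}")
    lines.append(
        f"Reply with exactly {n_waypoints} waypoints in the form "
        "Next directions: [[x1, y1, z1], ..., [xn, yn, zn]]"
    )
    return "\n".join(lines)


def parse_waypoints(reply, current_pos, n_waypoints, max_step, bounds):
    """Extract waypoints from the reply and make them feasible.

    Returns None when the reply is malformed, so the caller can fall back
    to a default trajectory. `bounds` is ((x_lo, x_hi), (y_lo, y_hi), (z_lo, z_hi)).
    """
    match = re.search(r"Next directions:\s*(\[\[.*\]\])", reply, re.DOTALL)
    if match is None:
        return None
    try:
        raw = ast.literal_eval(match.group(1))
        points = [tuple(float(v) for v in p) for p in raw]
    except (ValueError, SyntaxError, TypeError):
        return None
    if len(points) != n_waypoints or any(len(p) != 3 for p in points):
        return None

    path = []
    prev = tuple(float(v) for v in current_pos)
    for target in points:
        dist = math.dist(prev, target)
        if dist > max_step:
            # Shorten the move so it respects the assumed per-step limit.
            scale = max_step / dist
            target = tuple(a + (b - a) * scale for a, b in zip(prev, target))
        # Keep the waypoint inside the assumed flight volume.
        target = tuple(min(max(v, lo), hi) for v, (lo, hi) in zip(target, bounds))
        path.append(target)
        prev = target
    return path
```

Parsing with `ast.literal_eval` rather than `eval` keeps the handling of untrusted model output safe, and rejecting malformed replies outright keeps the environment step deterministic when the model does not follow the format.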

5. Experiments

5.1. Environment Setting

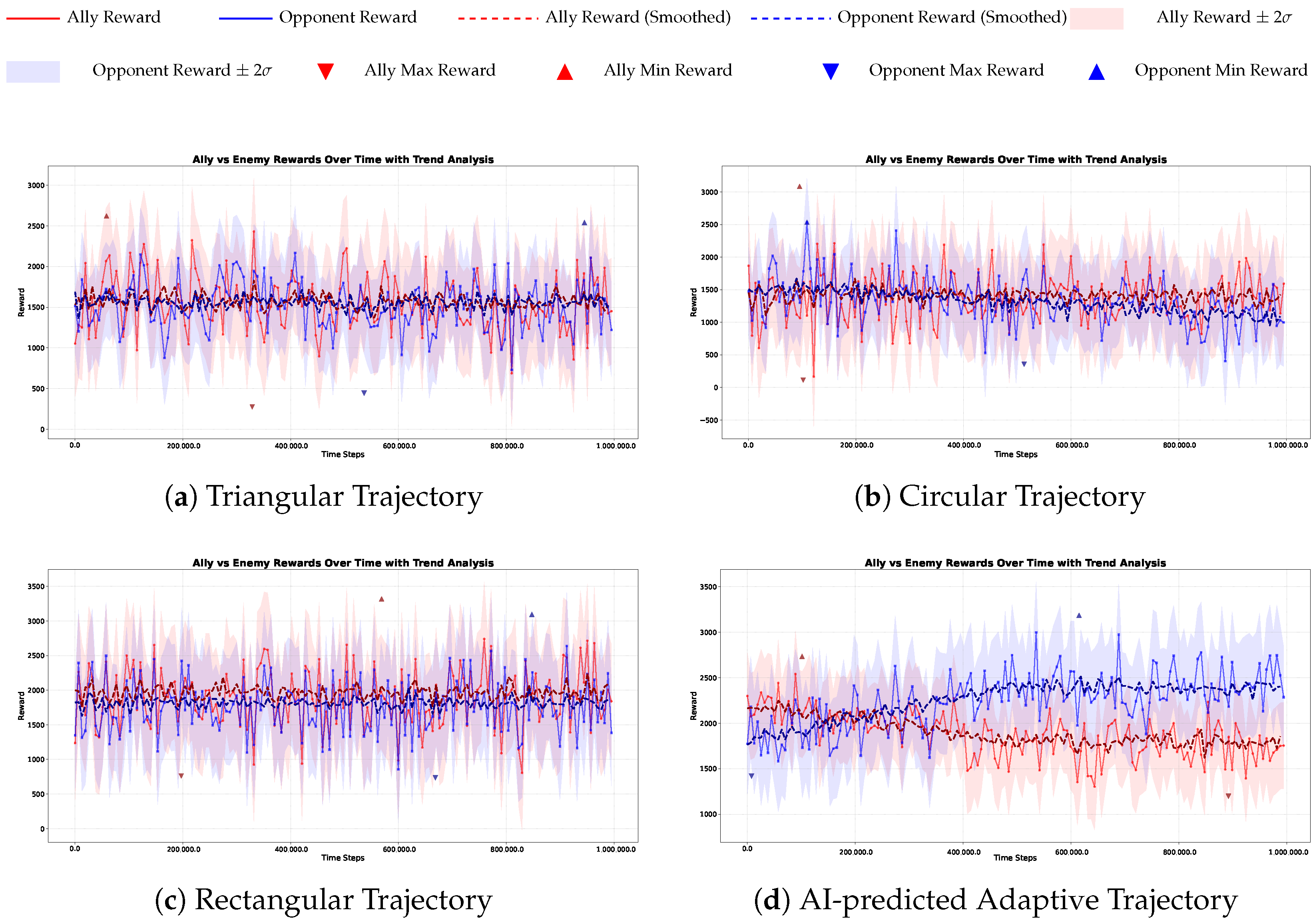

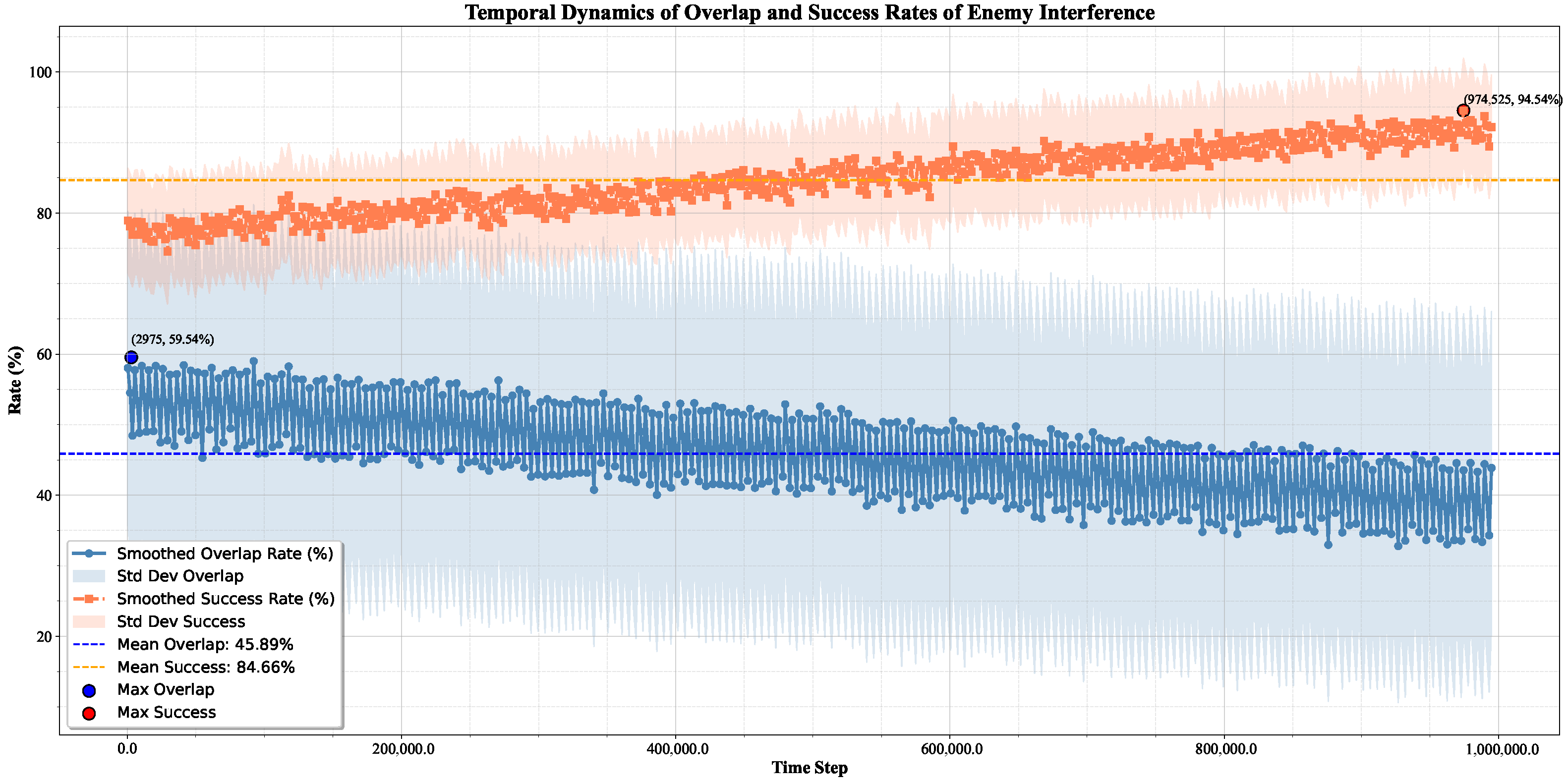

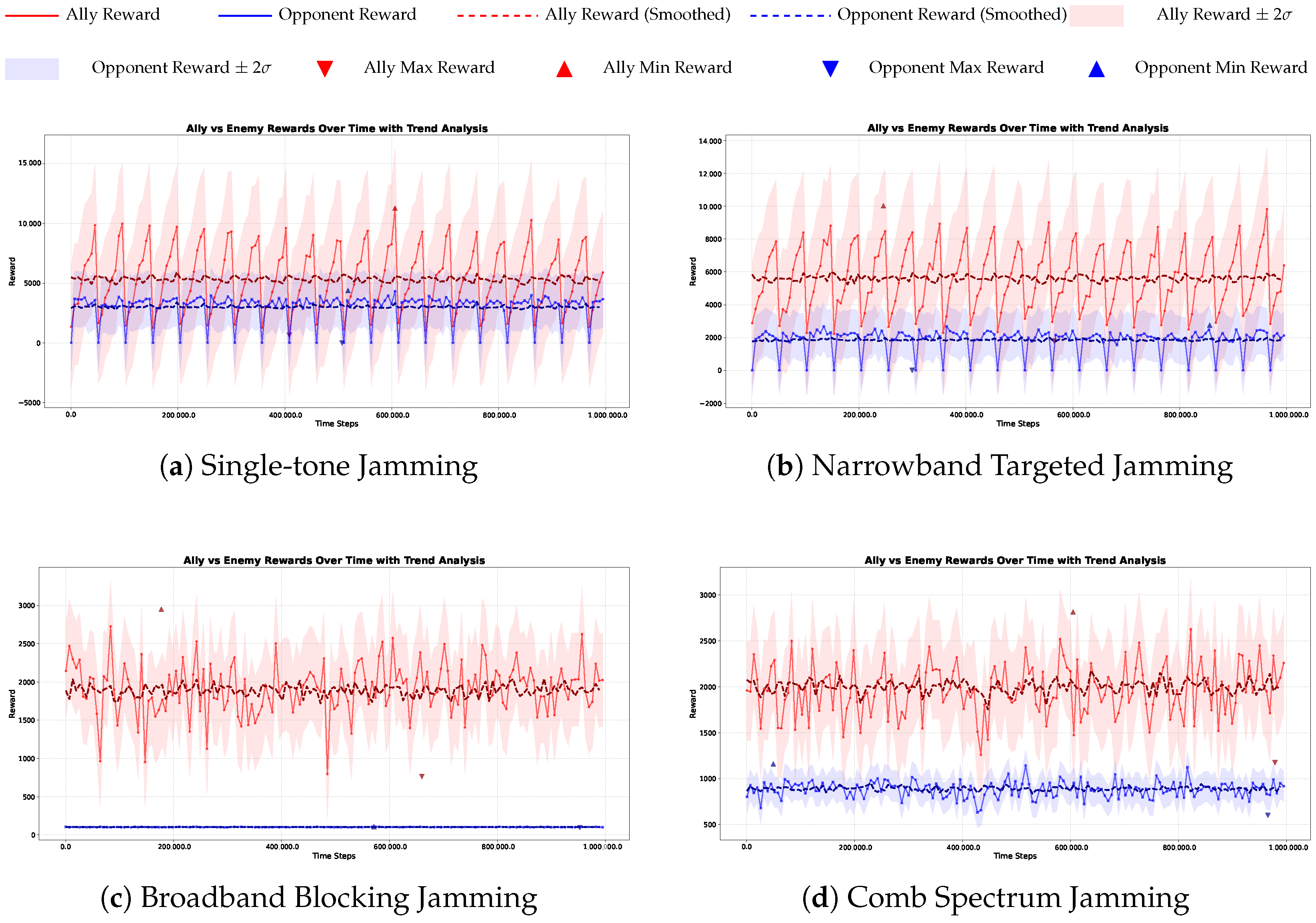

5.2. Opponent Gameplay Performance in UAV-FPG Environment

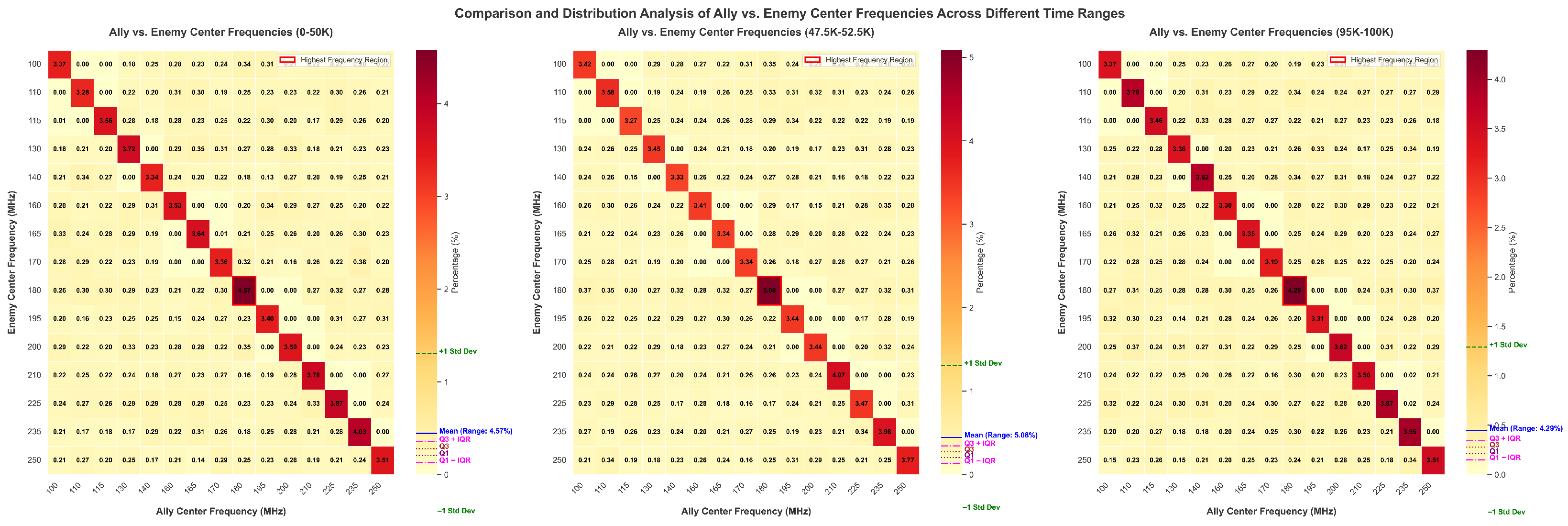

5.3. Analysis of Frequency Selection in Opponent Scenarios

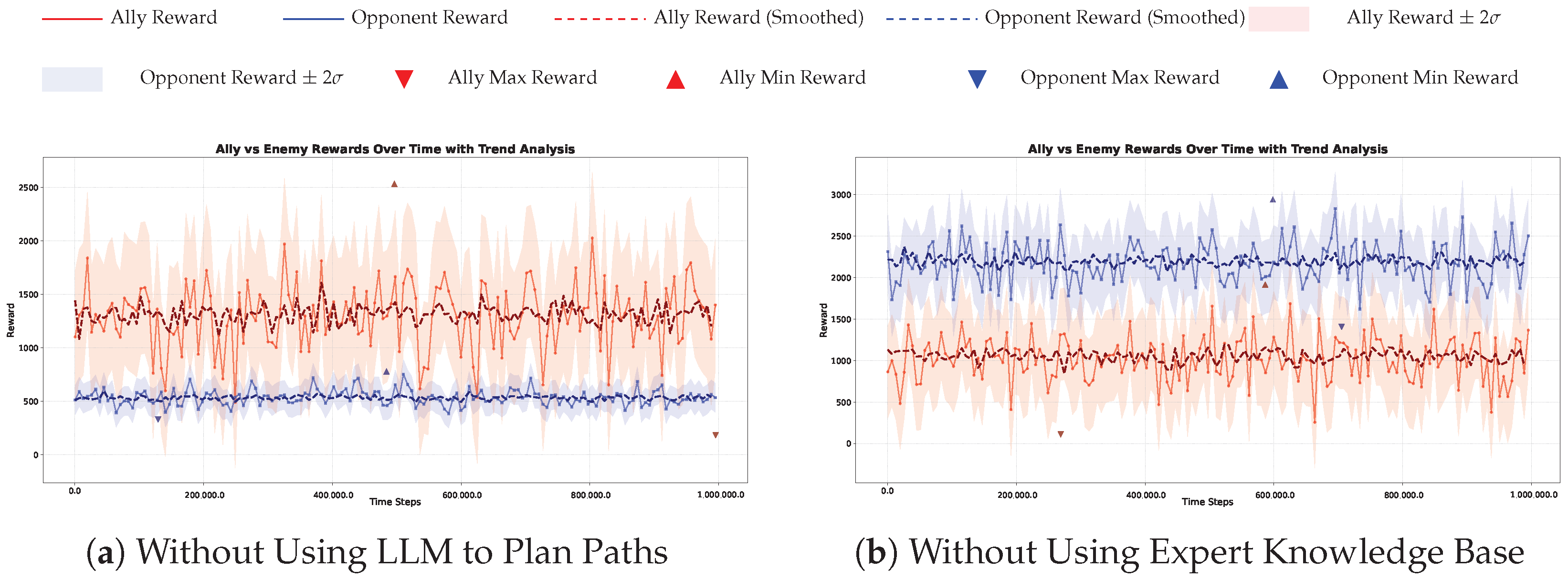

5.4. Ablation Study

6. Conclusions

7. Limitation and Future Work

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Fan, B.; Li, Y.; Zhang, R.; Fu, Q. Review on the technological development and application of UAV systems. Chin. J. Electron. 2020, 29, 199–207.

- Radoglou-Grammatikis, P.; Sarigiannidis, P.; Lagkas, T.; Moscholios, I. A compilation of UAV applications for precision agriculture. Comput. Netw. 2020, 172, 107148.

- Rana, K.; Praharaj, S.; Nanda, T. Unmanned aerial vehicles (UAVs): An emerging technology for logistics. Int. J. Bus. Manag. Invent. 2016, 5, 86–92.

- Wang, Q.; Li, W.; Yu, Z.; Abbasi, Q.; Imran, M.; Ansari, S.; Sambo, Y.; Wu, L.; Li, Q.; Zhu, T. An overview of emergency communication networks. Remote Sens. 2023, 15, 1595.

- Alsamhi, S.H.; Afghah, F.; Sahal, R.; Hawbani, A.; Al-qaness, M.A.; Lee, B.; Guizani, M. Green internet of things using UAVs in B5G networks: A review of applications and strategies. Ad Hoc Netw. 2021, 117, 102505.

- Gallacher, D. Drone applications for environmental management in urban spaces: A review. Int. J. Sustain. Land Use Urban Plan. 2016, 3, 1–14.

- Shi, L.; Marcano, N.J.H.; Jacobsen, R.H. A review on communication protocols for autonomous unmanned aerial vehicles for inspection application. Microprocess. Microsyst. 2021, 86, 104340.

- de Curtò, J.; de Zarzà, I.; Cano, J.C.; Calafate, C.T. Enhancing Communication Security in Drones Using QRNG in Frequency Hopping Spread Spectrum. Future Internet 2024, 16, 412.

- Wang, R.; Wang, S.; Zhang, W. Joint power and hopping rate adaption against follower jammer based on deep reinforcement learning. Trans. Emerg. Telecommun. Technol. 2023, 34, e4700.

- Rao, N.; Xu, H.; Qi, Z.; Wang, D. Fast adaptive jamming resource allocation against frequency-hopping spread spectrum in wireless sensor networks via meta deep reinforcement learning. IEEE Trans. Aerosp. Electron. Syst. 2024, 60, 7676–7693.

- Khan, M.T. A modified convolutional neural network with rectangular filters for frequency-hopping spread spectrum signals. Appl. Soft Comput. 2024, 150, 111036.

- Shakhatreh, H.; Sawalmeh, A.; Hayajneh, K.F.; Abdel-Razeq, S.; Al-Fuqaha, A. A Systematic Review of Interference Mitigation Techniques in Current and Future UAV-Assisted Wireless Networks. IEEE Open J. Commun. Soc. 2024, 5, 2815–2846.

- Pärlin, K.; Riihonen, T.; Turunen, M. Sweep jamming mitigation using adaptive filtering for detecting frequency agile systems. In Proceedings of the Military Communications and Information Systems, Budva, Montenegro, 14–15 May 2019; IEEE: Piscataway, NJ, USA, 2019; pp. 1–6.

- Liu, T.; Huang, J.; Guo, J.; Shan, Y. Survey on anti-jamming technology of uav communication. In Proceedings of the International Conference on 5G for Future Wireless Networks, Shanghai, China, 7–8 October 2022; Springer: Berlin, Germany, 2022; pp. 111–121.

- Wang, R.; Wang, S.; Zhang, W. Cooperative Multi-UAV Dynamic Anti-Jamming Scheme with Deep Reinforcement Learning; IEEE: Piscataway, NJ, USA, 2022; pp. 590–595.

- Li, Z.; Lu, Y.; Li, X.; Wang, Z.; Qiao, W.; Liu, Y. UAV networks against multiple maneuvering smart jamming with knowledge-based reinforcement learning. IEEE Internet Things J. 2021, 8, 12289–12310.

- Yao, F.; Jia, L. A Collaborative Multi-Agent Reinforcement Learning Anti-Jamming Algorithm in Wireless Networks. IEEE Wirel. Commun. Lett. 2019, 8, 1024–1027.

- Chen, Y.; Wang, Y.; Zhao, K.; Liang, H.; Liu, P.; Yang, Y. GPDS: A multi-agent deep reinforcement learning game for anti-jamming secure computing in MEC network. Expert Syst. Appl. 2022, 210, 118394.

- Liu, D.; Wang, J.; Xu, Y.; Ruan, L.; Zhang, Y. A coalition-based communication framework for intelligent flying ad-hoc networks. arXiv 2018, arXiv:1812.00896.

- Shah, S.; Dey, D.; Lovett, C.; Kapoor, A. AirSim: High-Fidelity Visual and Physical Simulation for Autonomous Vehicles. In Field and Service Robotics Springer Proceedings in Advanced Robotics; Hutter, M., Siegwart, R., Eds.; Springer: Cham, Switzerland, 2018; Volume 5, pp. 621–635.

- Furrer, F.; Burri, M.; Achtelik, M.; Siegwart, R. RotorS—A Modular Gazebo MAV Simulator Framework. In Robot Operating System (ROS): The Complete Reference (Volume 1); Studies in Computational Intelligence; Koubaa, A., Ed.; Springer: Cham, Switzerland, 2016; Volume 625, pp. 595–625.

- Song, Y.; Naji, S.; Kaufmann, E.; Loquercio, A.; Scaramuzza, D. Flightmare: A Flexible Quadrotor Simulator. In Proceedings of the 2020 Conference on Robot Learning; Kober, J., Ramos, F., Tomlin, C., Eds.; PMLR: Cambridge, MA, USA, 2021; Volume 155, pp. 1147–1157.

- Panerati, J.; Zheng, H.; Zhou, S.; Xu, J.; Prorok, A.; Schoellig, A.P. Learning to Fly—A Gym Environment with PyBullet Physics for Reinforcement Learning of Multi-agent Quadcopter Control. arXiv 2021, arXiv:2103.02142.

- Liu, X.; Xu, Y.; Jia, L.; Wu, Q.; Anpalagan, A. Anti-jamming Communications Using Spectrum Waterfall: A Deep Reinforcement Learning Approach. arXiv 2017, arXiv:1710.04830.

- Nowé, A.; Vrancx, P.; De Hauwere, Y.M. Game theory and multi-agent reinforcement learning. Reinf. Learn.-State Art 2012, 15, 441–470.

- Yang, Y.; Wang, J. An overview of multi-agent reinforcement learning from game theoretical perspective. arXiv 2020, arXiv:2011.00583.

- Zhang, K.; Yang, Z.; Başar, T. Multi-agent reinforcement learning: A selective overview of theories and algorithms. In Handbook of Reinforcement Learning and Control; Springer: Berlin, Germany, 2021; pp. 321–384.

- Busoniu, L.; Babuska, R.; De Schutter, B. Multi-agent reinforcement learning: A survey. In Proceedings of the Control, Automation, Robotics and Vision, Singapore, 5–8 December 2006; IEEE: Piscataway, NJ, USA, 2006; pp. 1–6.

- Yang, J.; Wang, H.; Zhao, Q.; Shi, Z.; Song, Z.; Fang, M. Efficient Reinforcement Learning via Decoupling Exploration and Utilization. In Proceedings of the International Conference on Intelligent Computing, Zakopane, Poland, 7–11 June 2024; Springer: Berlin, Germany, 2024; pp. 396–406.

- Lowe, R.; Wu, Y.I.; Tamar, A.; Harb, J.; Pieter Abbeel, O.; Mordatch, I. Multi-agent actor-critic for mixed cooperative-competitive environments. Adv. Neural Inf. Process. Syst. 2017, 30, 6379–6390.

- Silver, D.; Huang, A.; Maddison, C.J.; Guez, A.; Sifre, L.; Van Den Driessche, G.; Schrittwieser, J.; Antonoglou, I.; Panneershelvam, V.; Lanctot, M.; et al. Mastering the game of Go with deep neural networks and tree search. Nature 2016, 529, 484–489.

- Sunehag, P.; Lever, G.; Gruslys, A.; Czarnecki, W.M.; Zambaldi, V.; Jaderberg, M.; Lanctot, M.; Sonnerat, N.; Leibo, J.Z.; Tuyls, K.; et al. Value-decomposition networks for cooperative multi-agent learning. arXiv 2017, arXiv:1706.05296.

- Rashid, T.; Samvelyan, M.; De Witt, C.S.; Farquhar, G.; Foerster, J.; Whiteson, S. Monotonic value function factorisation for deep multi-agent reinforcement learning. J. Mach. Learn. Res. 2020, 21, 1–51.

- Zhao, N.; Ye, Z.; Pei, Y.; Liang, Y.C.; Niyato, D. Multi-agent deep reinforcement learning for task offloading in UAV-assisted mobile edge computing. IEEE Trans. Wirel. Commun. 2022, 21, 6949–6960.

- Cui, J.; Liu, Y.; Nallanathan, A. Multi-agent reinforcement learning-based resource allocation for UAV networks. IEEE Trans. Wirel. Commun. 2019, 19, 729–743.

- Ganzfried, S. Fictitious play outperforms counterfactual regret minimization. arXiv 2020, arXiv:2001.11165.

- Brown, N.; Lerer, A.; Gross, S.; Sandholm, T. Deep counterfactual regret minimization. In Proceedings of the International Conference on Machine Learning, Long Beach, CA, USA, 9–15 June 2019; PMLR: Cambridge, MA, USA, 2019; pp. 793–802.

- Gipiškis, R.; Joaquin, A.S.; Chin, Z.S.; Regenfuß, A.; Gil, A.; Holtman, K. Risk Sources and Risk Management Measures in Support of Standards for General-Purpose AI Systems. arXiv 2024, arXiv:2410.23472.

- Kulkarni, A.; Shivananda, A.; Manure, A. Decision Intelligence Overview. In Introduction to Prescriptive AI: A Primer for Decision Intelligence Solutioning with Python; Springer: Berlin, Germany, 2023; pp. 1–25.

- Zhu, Q.; Başar, T. Decision and Game Theory for Security; Springer: Berlin, Germany, 2013.

- Yu, A.; Kolotylo, I.; Hashim, H.A.; Eltoukhy, A.E. Electronic Warfare Cyberattacks, Countermeasures and Modern Defensive Strategies of UAV Avionics: A Survey. IEEE Access 2025, 13, 68660–68681.

- Wu, Q.; Wang, H.; Li, X.; Zhang, B.; Peng, J. Reinforcement learning-based anti-jamming in networked UAV radar systems. Appl. Sci. 2019, 9, 5173.

- Zhang, Z.; Zhou, Y.; Zhang, Y.; Qian, B. Strong electromagnetic interference and protection in uavs. Electronics 2024, 13, 393.

- Zhou, B.; Yang, G.; Shi, Z.; Ma, S. Natural language processing for smart healthcare. IEEE Rev. Biomed. Eng. 2022, 17, 4–18.

- Pee, L.G.; Pan, S.L.; Cui, L. Artificial intelligence in healthcare robots: A social informatics study of knowledge embodiment. J. Assoc. Inf. Sci. Technol. 2019, 70, 351–369.

- Wang, Y.H.; Lin, G.Y. Exploring AI-healthcare innovation: Natural language processing-based patents analysis for technology-driven roadmapping. Kybernetes 2023, 52, 1173–1189.

- Gyrard, A.; Tabeau, K.; Fiorini, L.; Kung, A.; Senges, E.; De Mul, M.; Giuliani, F.; Lefebvre, D.; Hoshino, I. Knowledge engineering framework for IoT robotics applied to smart healthcare and emotional well-being. Int. J. Soc. Robot. 2023, 15, 445–472.

- Piat, G.X. Incorporating Expert Knowledge in Deep Neural Networks for Domain Adaptation in Natural Language Processing. Ph.D. Thesis, Université Paris-Saclay, Paris, France, 2023.

- Chanda, A.K.; Bai, T.; Yang, Z.; Vucetic, S. Improving medical term embeddings using UMLS Metathesaurus. BMC Med. Inform. Decis. Mak. 2022, 22, 114.

- McCray, A.T.; Aronson, A.R.; Browne, A.C.; Rindflesch, T.C.; Razi, A.; Srinivasan, S. UMLS knowledge for biomedical language processing. Bull. Med. Libr. Assoc. 1993, 81, 184.

- El Ghosh, M. Automation of Legal Reasoning and Decision Based on Ontologies. Ph.D. Thesis, Normandie Université, Caen, France, 2018.

- Karwowski, J.; Szynkiewicz, W.; Niewiadomska-Szynkiewicz, E. Bridging Requirements, Planning, and Evaluation: A Review of Social Robot Navigation. Sensors 2024, 24, 2794.

- Xiao, X.; Liu, B.; Warnell, G.; Stone, P. Motion planning and control for mobile robot navigation using machine learning: A survey. Auton. Robot. 2022, 46, 569–597.

- Sun, X.; Zhang, Y.; Chen, J. RTPO: A domain knowledge base for robot task planning. Electronics 2019, 8, 1105.

- Pan, H.; Huang, S.; Yang, J.; Mi, J.; Li, K.; You, X.; Tang, X.; Liang, P.; Yang, J.; Liu, Y.; et al. Recent Advances in Robot Navigation via Large Language Models: A Review. Res. Gate 2024, preprint.

- Yao, F.; Yue, Y.; Liu, Y.; Sun, X.; Fu, K. AeroVerse: UAV-Agent Benchmark Suite for Simulating, Pre-training, Finetuning, and Evaluating Aerospace Embodied World Models. arXiv 2024, arXiv:2408.15511.

- Andreoni, M.; Lunardi, W.T.; Lawton, G.; Thakkar, S. Enhancing autonomous system security and resilience with generative AI: A comprehensive survey. IEEE Access 2024, 12, 109470–109493.

- Liu, Y.; Yao, F.; Yue, Y.; Xu, G.; Sun, X.; Fu, K. NavAgent: Multi-scale Urban Street View Fusion For UAV Embodied Vision-and-Language Navigation. arXiv 2024, arXiv:2411.08579.

- Chen, Z.; Xu, L.; Zheng, H.; Chen, L.; Tolba, A.; Zhao, L.; Yu, K.; Feng, H. Evolution and Prospects of Foundation Models: From Large Language Models to Large Multimodal Models. Comput. Mater. Contin. 2024, 80, 1753–1808.

- Kang, J.; Liao, J.; Gao, R.; Wen, J.; Huang, H.; Zhang, M.; Yi, C.; Zhang, T.; Niyato, D.; Zheng, Z. Efficient and Trustworthy Block Propagation for Blockchain-enabled Mobile Embodied AI Networks: A Graph Resfusion Approach. arXiv 2025, arXiv:2502.09624.

- Zheng, Z.; Bewley, T.R.; Kuester, F. Point cloud-based target-oriented 3D path planning for UAVs. In Proceedings of the International Conference on Unmanned Aircraft Systems; IEEE: Piscataway, NJ, USA, 2020; pp. 790–798.

- Jin, Y.; Yue, M.; Li, W.; Shangguan, J. An improved target-oriented path planning algorithm for wheeled mobile robots. J. Mech. Eng. Sci. 2022, 236, 11081–11093.

- Zhao, X.; Cai, W.; Tang, L.; Wang, T. ImagineNav: Prompting Vision-Language Models as Embodied Navigator through Scene Imagination. arXiv 2024, arXiv:2410.09874.

- Zhao, R.; Yuan, Q.; Li, J.; Fan, Y.; Li, Y.; Gao, F. DriveLLaVA: Human-Level Behavior Decisions via Vision Language Model. Sensors 2024, 24, 4113.

- Zhang, Y.F.; Wen, Q.; Fu, C.; Wang, X.; Zhang, Z.; Wang, L.; Jin, R. Beyond LLaVA-HD: Diving into High-Resolution Large Multimodal Models. arXiv 2024, arXiv:2406.08487.

- Reed, S.; Zolna, K.; Parisotto, E.; Colmenarejo, S.G.; Novikov, A.; Barth-Maron, G.; Gimenez, M.; Sulsky, Y.; Kay, J.; Springenberg, J.T.; et al. A generalist agent. arXiv 2022, arXiv:2205.06175.

- Chen, X. One Step Towards Autonomous AI Agent: Reasoning, Alignment and Planning. Ph.D. Thesis, University of California, Los Angeles, CA, USA, 2024.

- Jeong, H.; Lee, H.; Kim, C.; Shin, S. A Survey of Robot Intelligence with Large Language Models. Appl. Sci. 2024, 14, 8868.

- Poisel, R.A. Introduction to Communication Electronic Warfare Systems; Artech House, Inc.: Norwood, MA, USA, 2008.

- Zhang, C.; Huang, G.; Liu, L.; Huang, S.; Yang, Y.; Wan, X.; Ge, S.; Tao, D. WebUAV-3M: A benchmark for unveiling the power of million-scale deep UAV tracking. IEEE Trans. Pattern Anal. Mach. Intell. 2022, 45, 9186–9205.

- Haarnoja, T.; Zhou, A.; Abbeel, P.; Levine, S. Soft Actor-Critic: Off-Policy Maximum Entropy Deep Reinforcement Learning with a Stochastic Actor. In Proceedings of the 35th International Conference on Machine Learning, Stockholm, Sweden, 10–15 July 2018; PMLR: Cambridge, MA, USA, 2018; Volume 80, pp. 1861–1870.

- Yu, C.; Velu, A.; Vinitsky, E.; Gao, J.; Wang, Y.; Bayen, A.; Wu, Y. The Surprising Effectiveness of PPO in Cooperative, Multi-Agent Games. arXiv 2021, arXiv:2103.01955.

- Coulter, R.C. Implementation of the Pure Pursuit Path Tracking Algorithm; Technical Report CMU-RI-TR-92-01; Carnegie Mellon University, The Robotics Institute: Pittsburgh, PA, USA, 1992.

- Mayne, D.Q.; Rawlings, J.B.; Rao, C.V.; Scokaert, P.O.M. Constrained model predictive control: Stability and optimality. Automatica 2000, 36, 789–814.

| Work/Platform | Category | 3D Mobility | Spectrum Confrontation | KB | LLM |
|---|---|---|---|---|---|
| Anti-jamming learning games | | | | | |
| Spectrum Waterfall DRL [24] | RL (single agent) | No | Partial | No | No |
| CMAA (Markov game) [17] | MARL (wireless channel selection) | No | Yes | No | No |
| GPDS [18] | MARL game (MEC security computing) | No | Yes | No | No |
| UAV simulation platforms | | | | | |
| AirSim [20] | High-fidelity UAV simulation | Yes | No | No | No |
| RotorS [21] | Gazebo-based MAV simulation | Yes | No | No | No |
| Flightmare [22] | Fast RL-oriented quadrotor simulator | Yes | No | No | No |
| UAV-FPG (ours) | UAV spectrum confrontation environment | Yes | Yes | Yes | Yes |

| Jamming Type | k |
|---|---|
| Single-tone Jamming | 1.5 |
| Narrowband Targeted Jamming | 1.2 |
| Broadband Blocking Jamming | 0.4 |
| Comb Spectrum Jamming | 0.8 |

| Parameter | Value |
|---|---|
| Discount factor | 0.99 |
| Total time, T | |
| Batch size | 32 |
| Learning rate | 0.001 |
| Buffer capacity, C | |
| State dimension | 15 |
| Action dimension | 9 |
| Max action value | 5 |
| Loss function | MSE |
| Actor/Critic network (hidden layers, activation) | 2 × 256, ReLU |
| Exploration noise (Gaussian) | 0.1 |

| Parameter | Value |
|---|---|
| Ally bandwidth (non-spread spectrum) | 5 MHz |
| Ally bandwidth (spread spectrum) | 2400 MHz |
| Noise spectral density | −170 dBm/Hz |
| Opponent check interval | 5 s |
| UAV speed range | 10 m/s |
| Base station power | 45 dBm |
| Opponent power | 20 dBm |
| SNR threshold, M | 8 |
| Proximity threshold (Equation (6)) | 30 m |
| Number of random seeds | 5 |
| Curve smoothing window, w | 200 training steps |
| LLM call frequency | Once per episode boundary |

| Model | Ally Average Reward | Opponent Average Reward |
|---|---|---|
| UAV-FPG (Triangular) | 1965.01 | 1812.17 |
| MASAC [71] | 1413.57 | 1316.85 |
| MAPPO [72] | 1572.73 | 1559.42 |
| UAV-FPG (LLM path planning) | 2006.22 | 2257.48 |
| Greedy Intercept (Pure Pursuit) [73] | 3425.19 | 1899.98 |
| MPC (Short-horizon Optimization) [74] | 2252.49 | 1849.97 |
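To read the table above quantitatively, the short sketch below computes the relative change in opponent average reward between the LLM path planner and each other row. The helper names are hypothetical and the numbers are copied from the table; for instance, the LLM planner's opponent reward is roughly 24.6% higher than that of the triangular fixed path.

```python
# (ally average reward, opponent average reward), copied from the table above.
RESULTS = {
    "UAV-FPG (Triangular)": (1965.01, 1812.17),
    "MASAC": (1413.57, 1316.85),
    "MAPPO": (1572.73, 1559.42),
    "UAV-FPG (LLM path planning)": (2006.22, 2257.48),
    "Greedy Intercept (Pure Pursuit)": (3425.19, 1899.98),
    "MPC (Short-horizon Optimization)": (2252.49, 1849.97),
}

LLM_ROW = "UAV-FPG (LLM path planning)"


def relative_change_pct(value, baseline):
    """Percentage change of `value` relative to `baseline`."""
    return 100.0 * (value - baseline) / baseline


def opponent_gain_over(baseline_name, method_name=LLM_ROW):
    """Relative opponent-reward gain of `method_name` over `baseline_name`."""
    method_opp = RESULTS[method_name][1]
    baseline_opp = RESULTS[baseline_name][1]
    return relative_change_pct(method_opp, baseline_opp)


if __name__ == "__main__":
    for name in RESULTS:
        if name != LLM_ROW:
            print(f"{name}: {opponent_gain_over(name):+.2f}%")
```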

| Method | Final-10% Opp. Reward | AUC (Opp. Reward) | Late-Stage Overlap (%) |
|---|---|---|---|
| UAV-FPG (Triangle) | | | |
| UAV-FPG (Circle) | | | |
| UAV-FPG (Rectangle) | | | |
| UAV-FPG (LLM planner) | | | |

| Model | Ally Avg Reward | Opponent Avg Reward |
|---|---|---|
| UAV-FPG (ours) | 2006.22 | 2257.48 |
| No defense (fixed freq, no spread) | 952.37 | 3158.64 |
| Random FHSS (pseudo-random hopping) | 1486.53 | 2687.21 |
| Adaptive FH (blacklist-based) | 1753.82 | 2421.35 |
| Bandit-UCB (15 arms) | 1967.45 | 2198.73 |
| DQN-based anti-jamming | 2002.68 | 2039.56 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Yang, J.; Zhang, H.; Ji, F.; Wang, Y.; Wang, M.; Luo, Y.; Ding, W. Frequency Point Game Environment for UAVs via Expert Knowledge and Large Language Model. Drones 2026, 10, 147. https://doi.org/10.3390/drones10020147
