1. Introduction

In the control system domain, one of the most influential methods is adaptive (or approximate) dynamic programming (ADP), sometimes called neural dynamic programming (see [1,2]), whose goal is learning optimal control under system model uncertainty [3,4,5,6,7,8,9,10,11]. Several mainstream ADP schemes have evolved, such as dual heuristic programming (DHP), heuristic dynamic programming (HDP), and global DHP (GDHP), to cope with learning in either model-free or model-based mode; see, e.g., [12,13,14,15,16]. The model-free setting leads to the family of action-dependent HDP (ADHDP) methods, prominently known as Q-learning in the reinforcement learning (RL) domain, which is more closely aligned with artificial intelligence.

In ADP, the rationale is that the Hamilton–Jacobi–Bellman (HJB) equation is hard or impossible to solve analytically for general nonlinear systems. ADP therefore uses a so-called actor–critic architecture that employs (a) an actor that embodies the controller (sometimes called the policy) and (b) a critic that approximates the state value function “V” or the more general state–action value function “Q”. The critic’s role is to evaluate the actor’s performance, and the families of Policy Iteration (PoIt) and Value Iteration (VI) algorithms were developed to solve the HJB equation forward in time. For general unknown nonlinear systems, the actor and the critic are represented by neural network (NN) function approximators. A third algorithm, Policy Gradient (PG), can also solve the HJB equation, but it commonly operates backward in time in an iterative fashion and is less efficient than PoIt or VI. Both PoIt and VI come in several implementation flavors: on- or off-policy, on- or off-line, adaptive or not, and incremental or using experience replay based on buffers of transition samples. These transition samples model the underlying system’s state transition, ideally captured by a (non)linear state-space representation, which in ADP is a deterministic expression of the more general Markov Decision Process (MDP).

Two factors are essential to the underlying system representation whose control is sought via ADP methods. The first and most important is the system state (sometimes called the observation), which is unmeasurable for unknown system dynamics. Observability theory, deeply rooted in classic control, points to the solution: virtual, equivalent state-space representations in which the hidden true state is aliased by a sequence of input–output data samples that are readily measured from the system. This approach preserves the system input and output and changes only the internal state representation, which makes the equivalent (or virtual) model fully observable and thus alleviates the partial observability of the environment. The second factor is the system input, which serves a two-fold objective: control action and action–state space exploration. Action–state exploration is a general requirement for learning convergence of ADP methods and is even more critical with nonlinear systems, whereas with linear systems, exploration of a narrow action–state region can generalize the learned optimal control solution well, towards global certificates.

The ADP methods naturally lend themselves to systems with modeling uncertainty and incomplete state measurements. Unmanned aerial vehicle (UAV) systems fall under this category due to their nonlinearity and high complexity and dimensionality. UAV control using ADP or RL methods has been tackled before either in linear or nonlinear settings; however, the specifics of uncertain models, real-time online learning capability, incomplete state measurement and stability preservation along the learning process have not been suitably approached before. In addition, the model reference control approach for UAVs, or for any kind of systems, is an essential instrument to synthesize the control performance specifications at a requirements level. It is therefore of interest to integrate such a feature in the aforementioned setting.

Early results on 6DoF quadrotor UAV control with ADP were formulated in [17]. The learning takes place for a nonlinear model and uses NNs for the actor–critic. Although adaptive, the formulation lacks the model reference setting as well as strong convergence and stability results. The work in [18] studied the attitude and longitudinal motion of a quadrotor UAV based on a linearized model, in an adaptive control setting but without an actor–critic. Instead, classical LQR is combined with model reference adaptive control for a partially known model with uncertainty, and stability is assessed. The work’s innovation also includes faults on the control input actuation; however, all states are measured and the model-free design is not fully approached under partial observability. Stronger experimental evidence validated the approach. In another work, ref. [19] contributes a computationally efficient method to store a Q-function generalization, a continuous action selection based on local Q-function approximation, and a combination of model identification and online learning for inner-loop control of a UAV system. A model reduction is performed to facilitate learning and overcome the curse of dimensionality. A reference tracking problem formulation was proposed; however, the model reference and the convergence and stability analysis were not included. In [20], a novel RL method for a thrust-vectoring controlled quadcopter was proposed, based on Proximal Policy Optimization (PPO). The reward was crafted to steer the flying robot towards its specified waypoint starting from its current location. The nonlinear dynamics complexity was used in the actor–critic; nevertheless, model reference tracking, partial observability, and convergence and stability analysis were not research topics.

In [21], a quadratic programming neural dynamic controller is applied to UAVs for tracking control purposes. A complex numerical model was employed with a more conventional model-based design under uncertainty and validated in a thorough case study consisting of many flight modes. The observability issues and a completely model-free design were not tackled. A deep RL (DRL) adaptive controller for an aerial robot was proposed in [22] and shown to be superior to traditional PID controllers; however, simulated environments do not pose the challenges associated with real-time interaction control. The authors of [23] reported an improved tabular Q-learning for robust attitude control of a fixed-wing UAV affected by atmospheric disturbances, sensor noise, actuator faults, and other model uncertainty. Although the learning is adaptive, convergence and stability were not explicitly analyzed. In [24], a DRL intelligent nonlinear controller for an experimental gliding UAV was validated using a modified DDPG algorithm. A sophisticated reward design combined with many simulation episodes leads to an optimal solution deemed superior to LQR and PPO. Although applied to a nonlinear problem model, the results lack convergence and stability analysis. A fully integrated RL approach combined with adaptive control was proposed in [25] for uncertain system control with a reference model, in a real-time setting with stability analysis and an actuator fault. However, full state measurement was assumed even when the results were validated on a real-time UAV, and partial environment observability was not tackled. The authors of [26] proposed a robust, optimal, safe, and stability-certified control validated for UAV reference trajectory tracking under parametric uncertainty, using PPO RL. The real-time mechanisms are not analyzed, and partial observability is not approached in this work. Ref. [27] proposed an optimal gain self-tuning approach for altitude, attitude, and longitudinal motion controllers of a 6DoF nonlinear drone, using a DRL mechanism combined with a custom reward. Theoretical stability is certified; however, the real-time interaction mechanism and partial state observation are not considered either.

One of the greater challenges with online real-time ADP and RL control concerns the interleaving of the update equations, data collection, and control execution at a fixed sampling period. In fact, this has been the major bottleneck against a more widespread adoption of ADP methods. Even in the linear system case, the actor and critic updates may require working with many high-dimensional transition samples to improve numerical conditioning, which makes the learning steps impossible to complete within the system’s sampling period. This forces the actor and critic updates to be performed in asynchronous mode [28], using specialized software mechanisms. The issue becomes even more critical with generalized NN-based actor–critic approaches when real-time interaction is required [29,30,31,32,33]. The problem size grows with the state–action size, which itself grows when using a virtual state representation composed of present and past input–output (IO) system samples. The issue of complete observability of the environment under real-time control, namely how to build equivalent fully observable virtual states, has not been tackled systematically [34]. Another issue concerns the preservation of system stability along the adaptive updates, which is necessary for safety assurance [35]. Addressing these two challenges may help with the adoption of ADP and RL methods in real-world systems, and this idea drives the current contribution. Finally, in a model reference control context, the trajectory feasibility must account for the underlying dynamics, which certainly requires some qualitative system insight and consideration of several classic control rules.

The contributions in this work are enumerated as follows:

- A theory for learning optimal control dealing with uncertain model dynamics and actuation faults, in a model reference tracking control approach, with equivalent virtual state-space representation where the virtual state is encoded as a moving window of input–output system samples.

- The proposed reference model is different from those existing in the literature, as it originates from easily interpretable performance indicators like rise time and overshoot, which are widely adopted in the community.

- Learning convergence of the proposed online off-policy asynchronous real-time model reference tracking control (OOART-MRTC) algorithm, with stability certification alongside learning, when starting from initially stabilizing control.

- Validation of the OOART-MRTC on attitude control for a quadrotor UAV serves as a use case study, to show that a complex double-integrator with coupled channels transforms to a large virtual state-space representation consisting of tens of variables. Even under such dimensionality curse, the learning can still be effective, fast, convergent and stabilizing in online asynchronous mode.

Notation used: $\mathbb{R}^m$ is the set of real-valued m-dimensional vectors, which are column vectors by default. An upper-right “$\top$” indicates matrix transpose. The norm operator ‖.‖ is implicitly Euclidean over a vector, or the induced norm over a matrix. $I_n$ is the identity matrix of size $n$.

The paper is organized as follows. Section 2 presents the general controlled system dynamics converted to a virtual state-space representation, the tracked reference model, the reference model driving input, and the resulting extended state-space system. Once set up, the model reference tracking optimal control problem is formed and the parameterized ADP-based solution is derived under the OOART-MRTC algorithm. The learning convergence analysis is also performed in this section. Section 3 is concerned with a complex case study: reference model tracking attitude control for a multivariable quadrotor UAV with an actuator fault and an uncertain model. A critical discussion and interpretation of the results are offered in Section 4. Section 5 concludes the findings.

2. Materials and Methods

2.1. The Controlled Uncertain Dynamic System

The original system’s state-space transition model with output equation is defined for the linear-time-invariant case as

where

is the state within its domain

,

is control input within its domain

,

is the controlled output within its domain

. Subscript

indexes the discrete time sample herein. Herein, the matrices

are of appropriate dimensions. They are assumed generally unknown and they are being referred to as the

true matrices. Their values could be expressed under additive or multiplicative uncertain formulation. The matrix

could be expressed as

, where

models an actuation fault present in the control input

whenever the value differs from

, and

represents the deviation of the input matrix from its nominal value

. This fault type described by multiplicative matrix

is a form of partial effectiveness loss and not a complete malfunction. Similar arguments are possible for representing uncertainty about the remaining state matrices

, using their nominal counterparts. The described form (1) is more general, as it is able to capture time delays in the variables

as well. This is provable by augmenting (1) with additional state components and it is a specific feature of discrete-time system representation.

Assumption 1. The system described by (1) is both controllable and observable.

Following Assumption 1, an equivalent representation of (1), called a virtual system, is, in its state-space form

where

is called a virtual state vector. The innovation behind

is that it consists of the input/output (IO) present and past values, i.e.,

with appropriate size of

, where

is the output order,

is the input order. Most notably, (1) and (2) share the same inputs and outputs. This means that a suitable controller for system (2) in fact uses the IO data from the system (1) and elaborates the control for (2), which in fact is equivalent to controlling the original system (1). From the IO behavior perspective, systems (1) and (2) are alike [

34,

36]. What is more appealing about (2) is that

is a linear transform on

, which is captured by the next Theorem.

Theorem 1. For an observable and controllable system (1), there exists a transformation matrix which enables .

In the light of Theorem 1 previously introduced, it is well-known that for the integer size (or equivalently for orders greater than some minimal thresholds), more IO samples captured in do not bring more information about the unique state value . The value of indirectly set by the values trades off the high dimensionality of with the inter-correlation between IO samples, i.e., it balances the exploration–exploitation factor. In practice, we start with corresponding to a minimal order known from historical analysis about the system (1) and increment it in unit steps, until there is no more gain in control performance.
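The moving-window construction of the virtual state can be sketched in a few lines. This is a minimal illustration under stated assumptions: the class name `IOWindow`, the newest-first ordering, the outputs-then-inputs stacking, and the zero pre-fill of the buffers are all hypothetical choices, not details confirmed by the text.

```python
from collections import deque


class IOWindow:
    """Sliding window over measured input-output samples (hypothetical helper)."""

    def __init__(self, n_y, n_u, y_dim, u_dim):
        # Pre-fill with zeros so a full-size virtual state exists from step 0.
        self.y_buf = deque([[0.0] * y_dim for _ in range(n_y)], maxlen=n_y)
        self.u_buf = deque([[0.0] * u_dim for _ in range(n_u)], maxlen=n_u)

    def push_output(self, y):
        # Newest sample goes first; the oldest one drops out automatically.
        self.y_buf.appendleft(list(y))

    def push_input(self, u):
        self.u_buf.appendleft(list(u))

    def virtual_state(self):
        # Flatten the stored outputs, then the stored inputs, into one vector.
        flat_y = [v for y in self.y_buf for v in y]
        flat_u = [v for u in self.u_buf for v in u]
        return flat_y + flat_u


win = IOWindow(n_y=2, n_u=2, y_dim=1, u_dim=1)
for k in range(3):
    win.push_output([float(k)])      # toy output y_k = k
    win.push_input([10.0 * k])       # toy input  u_k = 10 k
state = win.virtual_state()
print(state)
```

The length of the virtual state is `n_y * y_dim + n_u * u_dim`, which is why increasing the window orders quickly inflates the dimension of the learning problem.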

2.2. The Reference Model to Be Tracked in Output and Matched in Dynamics

To force a desired control behavior for system (1) through its equivalent description (2), the most natural approach stemming from classic control is the reference model. This is, in fact, a supervised machine learning approach where one models the controller such that the closed loop matches a reference model’s dynamics [

37]. For the single input–single output case, a reference model is commonly described by the linear transfer function

parameterized in the natural frequency

, the damping ratio

, and the time delay

with Laplace argument

.

(

s) is the Laplace-domain reference model output, with

being the Laplace image of the reference input time signal. Generally, it is more intuitive to describe control performance in continuous-time and only afterwards discretize the transfer function to match the time-based description with systems (1) and (2) pertaining to the discrete-time representation. The parameter

is tightly related to the rise time while

determines the overshoot. Such relationships are found in families of characteristics or from tables. For a second order reference model,

produces percent overshoot of

[%], while the rise time is

for

.
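The link between the damping ratio and the overshoot can be checked numerically. The sketch below uses the standard textbook overshoot relation for a delay-free, underdamped second-order model, which is an assumption about the intended form. The function names and the test values (natural frequency 2 rad/s, damping ratio 0.7) are hypothetical.

```python
import math


def percent_overshoot(zeta):
    """Textbook percent overshoot of a delay-free underdamped second-order model."""
    if not 0.0 < zeta < 1.0:
        raise ValueError("formula holds for 0 < zeta < 1")
    return 100.0 * math.exp(-math.pi * zeta / math.sqrt(1.0 - zeta ** 2))


def simulated_overshoot(omega_n, zeta, dt=1e-4, t_end=10.0):
    """Unit-step response of omega_n^2 / (s^2 + 2 zeta omega_n s + omega_n^2),
    integrated with semi-implicit Euler; returns the peak overshoot in percent."""
    y, v, peak = 0.0, 0.0, 0.0
    for _ in range(int(t_end / dt)):
        a = omega_n ** 2 * (1.0 - y) - 2.0 * zeta * omega_n * v
        v += a * dt          # update velocity first
        y += v * dt          # then position, using the new velocity
        peak = max(peak, y)
    return 100.0 * (peak - 1.0)


analytic = percent_overshoot(0.7)
numeric = simulated_overshoot(omega_n=2.0, zeta=0.7)
print(round(analytic, 2), round(numeric, 2))
```

The overshoot depends on the damping ratio only, while the natural frequency rescales time and therefore sets the rise time.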

In multivariable control, a transfer matrix reference model is commonly employed, which in most cases is also diagonal, in order to indirectly enforce control channel decoupling to the maximum extent possible, which greatly improves disturbance rejection resilience and debugging in the control performance. In model reference control, if we use a linear reference model for a nonlinear plant, a nonlinear controller is necessary if we want the closed loop to match the linear response of the reference model. A plain linear controller cannot achieve such a requirement. With a nonlinear controller, we aim for good closed-loop linearization. Such a behavior is very predictable, both in scale and in response, over wide operating ranges. This is a very desirable control systems feature. This kind of generalizability emerges from the linear systems superposition principle. A side note about the reference model: systems like (1) or (2) may also possess a non-minimum phase character, which is a kind of dynamics which should not be compensated for via the controller dynamics; hence, it must be accepted and forced as an explicit component in .

Eventually,

needs to be discretized and, for online ADP and RL control, it also needs to be transformed to a suitable state-space model. The final reference model state space would be described as

with

the reference model’s state of appropriate and known size,

being the reference inputs and reference model outputs, respectively, pinpointing that they must live in the same space as the original system’s outputs. The tuple (

) is one state-space realization out of infinitely many possible. This reference model is more flexible than most found in the ADP and RL literature, since it stems from performance indicators that are familiar to most control system designers. The reference model must be controllable and observable, which is stated formally next.

Assumption 2. The reference model (3) is both controllable and observable.

2.3. The Driving Reference Input Model

A linear reference input model is next employed with the dynamics

where

is

and compatible with the number of controlled outputs. The reference input

drives both the closed-loop control system (to be designed for (1), based on (2), as shown in the next subsections) and the reference model.

2.4. The Extended State-Space System

To arrive at a final state-space model that complies with the ADP and RL formulation, we define

. Then the augmented system is

For

the size of the extended state vector results from the sizes of its independent components, while

are appropriate matrices extracting the original system’s outputs and the reference model outputs from

, respectively. This augmented system (5), used next for learning optimal control, is fully observable and controllable by construction. The reference input

from (4), although being a state within

in (5) and not a traditional control input, is a user-set variable whose dynamics are selected beforehand. This

drives the reference model, which is selected to be fully observable and controllable; otherwise, its output is not to be tracked. The virtual state space

in (2) is fully observable since its state is built from IO measured data. Its controllability follows, as it has the same input and output as (1), which is assumed controllable. For this to happen, sufficient past IO samples must fill the shift buffers from

.
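A minimal sketch of how the extended system could be assembled, assuming the extended state stacks the virtual state, the reference model state, and the reference input in that order. The matrix names `A_s`, `B_s`, `A_m`, `B_m`, `G` and all toy numbers are hypothetical and are not taken from the source.

```python
import numpy as np


def build_extended_system(A_s, B_s, A_m, B_m, G):
    """Stack virtual system, reference model and reference input generator.

    Assumed (hypothetical) ordering of the extended state: [s; x_m; r], with
      s_{k+1}   = A_s s_k + B_s u_k      (virtual system)
      x_m_{k+1} = A_m x_m_k + B_m r_k    (reference model)
      r_{k+1}   = G r_k                  (reference input generator)
    """
    n_s, n_m, n_r = A_s.shape[0], A_m.shape[0], G.shape[0]
    m = B_s.shape[1]
    A_e = np.block([
        [A_s, np.zeros((n_s, n_m)), np.zeros((n_s, n_r))],
        [np.zeros((n_m, n_s)), A_m, B_m],
        [np.zeros((n_r, n_s)), np.zeros((n_r, n_m)), G],
    ])
    # Only the virtual system is driven by the control input u.
    B_e = np.vstack([B_s, np.zeros((n_m, m)), np.zeros((n_r, m))])
    return A_e, B_e


# Toy dimensions chosen only for illustration.
A_s = np.array([[0.9, 0.1], [0.0, 0.8]])
B_s = np.array([[0.0], [1.0]])
A_m = np.array([[0.5]])
B_m = np.array([[0.5]])
G = np.array([[1.0]])                      # piecewise-constant reference
A_e, B_e = build_extended_system(A_s, B_s, A_m, B_m, G)
x_next = A_e @ np.array([1.0, 2.0, 3.0, 4.0]) + B_e @ np.array([5.0])
print(A_e.shape, B_e.shape, x_next)
```

The zero blocks make the structure explicit: the control input never reaches the reference model or the reference generator, which evolve autonomously.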

2.5. The Model Reference Tracking Cost in the Optimal ADP/RL Control

To induce a control learning behavior, the undiscounted infinite-horizon cost-to-go is

where

captures the normed tracking error between the controlled system output and the reference model output, across all channels, and also penalizes the control effort. Generally, in the model reference tracking problem, no control penalty is used; hence, it is a little different from the classical LQR penalty. To solve the model reference tracking as an optimal control, we proceed as follows:

Define the controller-dependent cost to make sure that, starting from every state , the subsequent state transitions to are performed under the impact of the controller in its most general form. This controller form will be linear in the case of linear system, i.e., .

To solve for the optimal control

, the ADP (RL) methods generally require the system model to be accurately known. To solve for the optimal control independently of the system model, introduce the extended cost

. This is the well-known Q-function, and it obeys the Bellman equation as

Smartly parameterize the Q-function and the controller using polynomials, or neural networks in the most general case.

Simultaneously learn the optimal Q-function and the optimal controller in an off-policy style, using a dataset of transition samples collected from the system under any controller, most often an existing stabilizing one that is suboptimal and is employed for good exploration. This dataset is called the Experience Replay Buffer (ERB).

For a linear system like (5), the classical LQR theory proves that the cost of operation under a linear controller is , quadratic in the state, under the controller with some symmetric positive definite matrix, and the optimal controller linear in the state, i.e., .

Proposition 1. For the model reference tracking problem with the cost (6), the penalty complies with an LQR penalty formulation, having state and action penalty matrices , as in , where are semipositive definite and positive definite, respectively.

Proof of Proposition 1. It follows immediately since , where . □
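The argument of Proposition 1 can be verified numerically: a squared tracking error between two linear read-outs of the extended state is a quadratic form with a Gram matrix as state penalty. In this sketch the names `C_y`, `C_m`, `R` and the random toy dimensions are assumptions made for illustration only.

```python
import numpy as np

rng = np.random.default_rng(0)
n_e, p, m = 5, 2, 3                       # extended-state, output, input sizes (toy)
C_y = rng.standard_normal((p, n_e))       # hypothetical: extracts system outputs
C_m = rng.standard_normal((p, n_e))       # hypothetical: extracts reference outputs
R = 0.1 * np.eye(m)                       # hypothetical control-effort weight

E = C_y - C_m                             # tracking error equals E @ x_e
Q = E.T @ E                               # Gram matrix: symmetric, positive semidefinite

x_e = rng.standard_normal(n_e)
u = rng.standard_normal(m)
penalty_direct = np.sum((C_y @ x_e - C_m @ x_e) ** 2) + u @ R @ u
penalty_lqr = x_e @ Q @ x_e + u @ R @ u
print(penalty_direct, penalty_lqr)
```

Because `Q` has rank at most equal to the number of outputs, it is only positive semidefinite, which matches the wording of the proposition.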

Subsequently, it is straightforward to show that the extended Q-function cost is also quadratic in the argument , i.e., , with some symmetric positive definite matrix, while the optimal control still remains linear in the state. The most important utility of the Q-function is that the control improvement is found directly from the condition , and it is analytically solvable with the mentioned parameterization.

A linear state feedback controller of the form

with gain matrix

partitioned as

renders the augmented closed-loop as

The Q-function is linearly parameterized by retaining the unique up-to-second-degree polynomials resulting from the argument products. Let

be expressed for

as a

t-dimensional vector as

where

lumps all the parameters together and

is a basis function vector called nonlinear embedding.

Remark 1. It follows immediately from (9) that the Q-function takes the form with being a positive definite symmetric matrix, to be proven later.

Let the transition samples database in the ERB be

. For the previous iteration controller

, with known previous iteration Q-function parameter

we use a number

of transition samples from the ERB, to write the following VI update in the Q-function parameter space

which can be expressed as an overdetermined system

and be solved accordingly as

. In Value Iteration, we use parameterized notation

for the i-th iteration Q-function. In the right hand side of the VI update,

.

Remark 2. The major advantage of (10) is that it is model-free and independent of system matrices .

Remark 3. Equation (10) is a policy estimate update and not a policy evaluation. If was used in the right-hand side, the available database helps evaluating the Q-function of any arbitrary policy that fits into the expression of .

Remark 4. To solve VI policy estimate update (10) using , the matrix needs to be invertible, requiring to be full column rank. This is achieved by sufficiently exploratory , commonly obtained by noise injection, to decorrelate the tuples from each other. Accordingly, this decorrelates the expansions , which are the columns for .
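The least-squares VI update and the role of exploration can be illustrated on a toy linear system. This is a sketch under stated assumptions, not the authors' implementation: the function names, the toy plant, the unit penalty weights, and the initialization from the stage cost (instead of the policy evaluation of an initial stabilizing controller described in the text) are all hypothetical simplifications. The plant matrices are used only to generate data.

```python
import numpy as np


def quad_basis(z):
    """Unique monomials z_i z_j (i <= j) of the stacked vector z = [x; u]."""
    i, j = np.triu_indices(len(z))
    return z[i] * z[j]


def theta_to_H(theta, n):
    """Symmetric H such that z^T H z == theta^T quad_basis(z)."""
    H = np.zeros((n, n))
    i, j = np.triu_indices(n)
    H[i, j] = theta
    return (H + H.T) / 2.0


def greedy_gain(theta, n_x, n_u):
    """Gain K of u = K x that minimizes the quadratic Q-function over u."""
    H = theta_to_H(theta, n_x + n_u)
    return -np.linalg.solve(H[n_x:, n_x:], H[n_x:, :n_x])


def vi_update(theta, batch, n_x, n_u):
    """One least-squares VI step from transitions (x, u, penalty, x_next)."""
    K = greedy_gain(theta, n_x, n_u)
    Phi = np.array([quad_basis(np.concatenate([x, u])) for x, u, _, _ in batch])
    y = np.array([c + theta @ quad_basis(np.concatenate([xn, K @ xn]))
                  for _, _, c, xn in batch])
    # lstsq solves the overdetermined system; Phi needs full column rank.
    return np.linalg.lstsq(Phi, y, rcond=None)[0]


rng = np.random.default_rng(1)
A = np.array([[1.0, 0.1], [0.0, 1.0]])    # toy plant, used only to generate data
B = np.array([[0.005], [0.1]])
n_x, n_u = 2, 1
batch = []
for _ in range(100):                      # random, well-decorrelated transitions
    x, u = rng.standard_normal(n_x), rng.standard_normal(n_u)
    batch.append((x, u, x @ x + u @ u, A @ x + B @ u))

i, j = np.triu_indices(n_x + n_u)
theta = (i == j).astype(float)            # simplified start: Q_0 equals the stage cost
for _ in range(300):
    theta = vi_update(theta, batch, n_x, n_u)
K_learned = greedy_gain(theta, n_x, n_u)
print(K_learned)
```

With noise-free data and a full-column-rank regressor matrix, each least-squares step reproduces the exact VI step, so the learned gain can be compared against a model-based Riccati recursion as a sanity check.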

With

found from (10), we proceed with updating the controller and finding

from the condition

. Let this controller be

where

is some analytically derived expression of

resulting from the solution

. It follows that the stationary point

is to be linear in the state. The analytical derivation is generally possible only for well-posed parameterizations such as the quadratic one used herein.

Example 1. Suppose that and . Then the nonlinear embedding vector is and . The solution to is , which confirms the linear dependence in the state, of the form .
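A scalar instance of the controller improvement step can be written out directly. The ordering of the embedding (state squared, state times input, input squared) and the numeric parameter values are assumptions for illustration, since the concrete values of the example are not recoverable here.

```python
def q_value(theta, x, u):
    """Quadratic Q-function theta1*x^2 + theta2*x*u + theta3*u^2 (assumed ordering)."""
    theta1, theta2, theta3 = theta
    return theta1 * x * x + theta2 * x * u + theta3 * u * u


def greedy_gain_scalar(theta):
    """Gain K of u = K x obtained from dQ/du = theta2*x + 2*theta3*u = 0."""
    _, theta2, theta3 = theta
    if theta3 <= 0.0:
        raise ValueError("theta3 must be positive for a minimum in u")
    return -theta2 / (2.0 * theta3)


theta = (2.0, 1.0, 0.5)          # hypothetical Q-function parameters
K = greedy_gain_scalar(theta)
x = 3.0
u_star = K * x
print(K, q_value(theta, x, u_star))
```

The stationary input is proportional to the state for any state value, which is the linear dependence the example points out.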

Next, we propose the online off-policy asynchronous real-time algorithm for the model reference tracking control (OOART-MRTC) problem.

2.6. The Summarized Algorithm—OOART-MRTC

The online off-policy asynchronous real-time algorithm used for model reference trajectory tracking is given below in Algorithm 1:

Algorithm 1. The OOART-MRTC algorithm

1. Input parameters: that characterizes , the minibatch size , orders .

2. Reset the environment , , and fill the IO buffers with appropriate data.

3. With the initially stabilizing controller in closed loop, collect transitions to fill in the ERB, using exploratory noise for good state-action coverage.

4. Set update iteration index .

5. repeat (in the main thread, this is the step function with time-critical operations)

6. read . Push the newest to the outputs buffer.

7. form the current sample sliding window virtual state from the IO buffers.

8. construct the augmented state .

9. calculate .

10. send to the system, where is exploratory noise.

11. compute the penalty at the current timestep.

12. push the transition sample in the ERB.

13. push the latest to the inputs buffer.

14. set .

15. update the reference input based on the generative model .

16. update the reference model state according to .

17. make .

18. sleep until the current timestep has elapsed.

19. until experiment is stopped.

20.

21. repeat (in a separate thread where the learning iterative updates take place)

22. sample the ERB with random transitions.

23. fill the matrices in (10) using the transition samples.

24. if ,

25. find for , using the policy evaluation variant of (10) with on both sides.

26. else

27. find based on using Equation (10).

28. find from using Equation (11).

29. increment the iteration index .

30. until convergence or main thread is stopped

Specifics of the OOART-MRTC algorithm are discussed next. The main computing thread first performs the time-critical operations (lines 6–10 in the OOART-MRTC algorithm), the most important being output measurement, augmented state construction by moving a window over the IO data samples, control action computation, and sending the action to the environment, either in exploration mode (with noise) or without it. For real-time operation and interaction between the controller and the environment, resilient communication backbones are to be adopted, either low-level, like socket-based, or high-level, like Robot Operating System (ROS)-based [38]. Some less critical but still important management tasks (lines 11–17 in the OOART-MRTC algorithm) follow: the transition samples are collected in this thread and pushed to the ERB, and the reference input generative model and the reference model state-space are both advanced by one timestep. The remaining time left from the sampling period is slept until the next interaction and control cycle begins.

In the parallel executing thread, the learning updates take place by sampling the ERB randomly for transitions and updating the critic and actor equations. Depending on the number of transitions, the time taken for these tasks may often exceed the sampling period; hence, the asynchronous update is imperative. Dedicated software mechanisms (like mutexes) are required for preventing race conditions on reading and writing variables; see [37]. The locked variables are (1) the actor weights, for reading, in order to make atomic inference on the actor in the control thread without these weights being updated from the actor–critic learning thread at the same time; and (2) the ERB, for sampling in the actor–critic thread, to prevent a race condition when it is updated in the main control thread.
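The two-thread arrangement with its two locks can be sketched with the Python standard library. Everything here is a hypothetical stand-in: the scalar toy plant, the scalar gain in place of actor weights, the 1 ms sampling period, and the placeholder `toy_update` that replaces the actual critic and actor updates. Only the locking pattern is the point.

```python
import random
import threading
import time

erb_lock = threading.Lock()        # guards the experience replay buffer
gain_lock = threading.Lock()       # guards the actor weights
erb = []                           # transitions (x, u, penalty, x_next)
actor = {"K": -0.5}                # toy scalar gain standing in for actor weights
stats = {"updates": 0}
stop = threading.Event()


def toy_update(batch, k):
    """Placeholder for the critic/actor update; returns the gain unchanged."""
    time.sleep(0.003)              # deliberately slower than the sampling period
    return k


def control_loop(n_steps, t_s=0.001):
    """Time-critical thread: act, log the transition, sleep out the period."""
    x = 1.0
    for _ in range(n_steps):
        t0 = time.perf_counter()
        with gain_lock:                        # atomic read of the actor weights
            k = actor["K"]
        u = k * x + random.gauss(0.0, 0.1)     # exploratory noise
        x_next = 0.9 * x + 0.1 * u             # toy plant, unknown to the learner
        with erb_lock:
            erb.append((x, u, x * x + u * u, x_next))
        x = x_next
        time.sleep(max(0.0, t_s - (time.perf_counter() - t0)))
    stop.set()


def learner_loop(batch_size=8):
    """Background thread: sample the ERB, update, publish the new gain."""
    while not stop.is_set():
        with erb_lock:
            batch = random.sample(erb, batch_size) if len(erb) >= batch_size else None
        if batch is None:
            time.sleep(0.0005)
            continue
        with gain_lock:
            k = actor["K"]
        new_k = toy_update(batch, k)           # slow work happens outside any lock
        with gain_lock:
            actor["K"] = new_k
        stats["updates"] += 1


ctrl = threading.Thread(target=control_loop, args=(50,))
learn = threading.Thread(target=learner_loop)
learn.start()
ctrl.start()
ctrl.join()
learn.join()
print(len(erb), stats["updates"])
```

Keeping the slow update outside the locked regions is the design choice that lets the control thread meet its period even when one learning step takes several sampling periods.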

2.7. Learning Convergence Analysis for the OOART-MRTC

We formalize the convergence analysis by reparametrizing the VI updates and by setting some assumptions.

Assumption 3. There exists an initial admissible controller with cost-to-go with positive definite symmetric. Let define a transition sample tuple . Then the corresponding Q-function is .

Define the unparameterized VI policy estimate update, which starts with

and uses the greedy updates, as

Remark 5. The value is a true policy evaluation for hence strongly related to , while the subsequent will not represent true policy evaluations for the subsequent controllers ; hence, these updates will not be tightly related to costs-to-go, but only loose approximations. Moreover, the existence of defining the value function of using a stabilizing is guaranteed.

Theorem 2. The sequence is monotonically decreasing as in and . Moreover, preserves its quadratic form over the updates.

Proof of Theorem 2. follows from . Based on , we have . Moreover, is quadratic, with and . □

To find

from

based on (12) written as

, we compute

from

and arrive at

Then

with

since

.

It follows that

since

and also that

is quadratic in its argument by writing

, with

being positive definite symmetric.

It is also valid that

since

is greedy w.r.t.

against any other controller, including

.

To continue this rationale by induction, suppose that

holds for

. Then

must hold because

. This means that

, and the VI update

must also hold, since

. The previous expression also takes the form

, proving that

is itself quadratic at any iteration

.

Assume again by induction that

and let

where in the first equation above we used the greediness of

w.r.t.

against any other controller, including

. By subtracting the second equation from the first, above, we obtain

because

which results in

The above reasoning proves that . □

Theorem 3. Every resulting as a byproduct of the VI update is stabilizing with every iteration.

Proof of Theorem 3. Recall that

is admissible and stabilizing. Notice that

and also recall that

Compute

and since

(proven by previous Theorem 2), it follows that

which is a discrete-time Lyapunov equation for the closed-loop system matrix

, where

. This implies that

is stabilizing. □

By induction, suppose

holds and that by the optimal VI update

we have

Then, since

, it must follow that

which is a Lyapunov equation in discrete time for the closed-loop system matrix

. Its fulfilment implies that

is stabilizing, which concludes the proof. □

Corollary 1. The OOART-MRTC VI-like updates converge to the optimal Q-function and to the optimal control.

Proof of Corollary 1. Using Theorem 2 and Theorem 3 results, we have

being monotonically decreasing and bounded above by

and below by 0, with

stabilizing at every iteration. Then the sequence

must converge to a finite number, when

, making

. Consequently,

, where

defines the fixed point of the VI updates for which it is true that

□
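The monotonicity and stability claims can be checked numerically on a toy system. The sketch below is model-based and iterates on the value matrix rather than on the Q-function parameters, so it is only a sanity check of the claims and not the model-free algorithm itself. The plant, the unit weights, and the initial gain are hypothetical.

```python
import numpy as np


def dlyap(A_c, Q_c):
    """Solve P = A_c^T P A_c + Q_c by vectorization (small systems only)."""
    n = A_c.shape[0]
    M = np.eye(n * n) - np.kron(A_c.T, A_c.T)
    return np.linalg.solve(M, Q_c.reshape(-1)).reshape(n, n)


A = np.array([[1.0, 0.1], [0.0, 1.0]])    # toy double integrator
B = np.array([[0.005], [0.1]])
Q, R = np.eye(2), np.array([[1.0]])
K = np.array([[-1.0, -2.0]])              # hypothetical stabilizing gain, u = K x
rho_0 = max(abs(np.linalg.eigvals(A + B @ K)))

P = dlyap(A + B @ K, Q + K.T @ R @ K)     # cost matrix of the initial controller
monotone, stable = True, True
for _ in range(200):
    K = -np.linalg.solve(R + B.T @ P @ B, B.T @ P @ A)    # greedy gain w.r.t. P
    A_c = A + B @ K
    P_next = Q + K.T @ R @ K + A_c.T @ P @ A_c            # VI update
    monotone = monotone and bool(np.linalg.eigvalsh(P - P_next).min() > -1e-9)
    stable = stable and bool(max(abs(np.linalg.eigvals(A_c))) < 1.0)
    P = P_next
print(rho_0, monotone, stable)
```

Starting from the cost of a stabilizing controller is what makes the sequence non-increasing; a positive definite state weight is used here so that the Lyapunov inequality implies strict stability.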

Remark 6. The convergence analysis uses the system matrices only to uncover the problem structure; however, the system knowledge is not really needed in the OOART-MRTC VI-like updates.

3. Results: A Reference Model Tracking Attitude Control Case Study for a Multivariable Quadrotor UAV with an Actuator Fault and an Uncertain Model

A linearized quadrotor UAV attitude control model is

where

are the roll, pitch, and yaw attitude angle orientations of the body-fixed frame relative to the inertial frame,

are the inertial moments of the quadrotor,

is the rotor arm length relative to the mass center. Input variables

are the

normalized collective propeller forces. Here,

with the nominal value

is the actuator health matrix, which models faults such as a loss of actuation capacity and has positive diagonal elements within the interval

. The model is linearized around the hover position and neglects the inner propeller dynamics [

18]. Under this assumption, the attitude-angle dynamics behave like coupled double integrators, one for each of the three controlled outputs. With a sampling period of

[s], the discretized model with zero-order hold becomes compliant with description (1), with appropriate size matrices

(note that the matrices

in (31) are the continuous-time (CT) counterparts). The model is a four-input–three-output one with

.
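To make the role of the actuator health matrix and of the zero-order-hold discretization concrete, the sketch below builds a double-integrator attitude model and discretizes it with `scipy.signal.cont2discrete`. All numeric values (`l`, `Jx`, `Jy`, `Jz`, `Ts`), the input mixing matrix `mix`, and the fault level are hypothetical placeholders and are not taken from model (31).

```python
import numpy as np
from scipy.signal import cont2discrete

# Hypothetical parameters (not the paper's values).
l, Jx, Jy, Jz = 0.2, 0.01, 0.01, 0.02
Ts = 0.01

# State = [phi, dphi, theta, dtheta, psi, dpsi]: three double integrators.
A_ct = np.kron(np.eye(3), np.array([[0.0, 1.0], [0.0, 0.0]]))

# Hypothetical mixing from four normalized propeller forces
# to the three angular accelerations.
mix = np.array([
    [0.0, l / Jx, 0.0, -l / Jx],              # roll
    [l / Jy, 0.0, -l / Jy, 0.0],              # pitch
    [1.0 / Jz, -1.0 / Jz, 1.0 / Jz, -1.0 / Jz],  # yaw
])
B_nom = np.kron(np.eye(3), np.array([[0.0], [1.0]])) @ mix  # shape (6, 4)

C = np.kron(np.eye(3), np.array([[1.0, 0.0]]))  # outputs: the three angles
D = np.zeros((3, 4))


def discretize(health):
    """ZOH discretization with the health matrix applied on the input side."""
    B_ct = B_nom @ np.diag(health)
    Ad, Bd, _, _, _ = cont2discrete((A_ct, B_ct, C, D), Ts, method="zoh")
    return Ad, Bd


Ad, Bd_nom = discretize([1.0, 1.0, 1.0, 1.0])      # healthy actuators
_, Bd_fault = discretize([1.0, 1.0, 1.0, 0.6])     # assumed 40% loss on input 4
print(Ad[:2, :2])                                  # one double-integrator block
print(Bd_fault[:, 3] / Bd_nom[:, 3].clip(min=1e-12))
```

In this sketch the fault only rescales the column of the input matrix associated with the degraded propeller, while the state transition matrix keeps its double-integrator blocks.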

3.1. Initial Stabilizing Controller Derivation

For the current case study, the considered nominal system parametric values are

[m]

[kg⋅m²]

[kg⋅m²]

similar to [

25]. With these nominal values, the nominal CT matrices

are used to discretize the state space model (31) and obtain the nominal discrete-time matrices

. No uncertainty is considered about the output equation matrices

. The resulting nominal state-space matrices are used only to find an initial stabilizing controller; no performance is sought beyond certified stability.

For the sixth-order nominal quadrotor model, six closed-loop poles equally spaced in the interval are allocated; let them be . Their discrete counterparts are . For the nominal system matrices , we solve for by placing the poles of at the desired locations . For , let the observability matrix from Theorem 1 be , the controllability matrix be , the matrix , and the transform matrix be . It follows that . Note that pole placement for MIMO systems requires controllability, so that control authority can be distributed properly over the inputs. Arbitrary pole locations may pose numerical challenges, both because infinitely many state-feedback gains place the same poles and because very close poles can be mistaken for a pole of higher multiplicity. If pole placement is not numerically reliable, the stabilization design should fall back to LQR.
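The pole mapping and the fallback logic described above can be sketched as follows. This is an illustration under stated assumptions, not the paper's design: it works on a physical double-integrator state rather than on the virtual state-space, the sampling period `Ts`, the pole interval, the mixing matrix `mix`, and the LQR penalties are hypothetical, and the poles are placed in an assumed three-dimensional angular-acceleration space and then distributed over the four inputs with a pseudoinverse (because four inputs produce only three independent torques).

```python
import numpy as np
from scipy.linalg import solve_discrete_are
from scipy.signal import place_poles

Ts = 0.05  # hypothetical sampling period [s]

# ZOH model of three double integrators driven by three angular accelerations.
Ad = np.kron(np.eye(3), np.array([[1.0, Ts], [0.0, 1.0]]))
Bt = np.kron(np.eye(3), np.array([[0.5 * Ts**2], [Ts]]))  # shape (6, 3)

# Hypothetical mixing from four propeller inputs to angular accelerations.
mix = np.array([[0.0, 20.0, 0.0, -20.0],
                [20.0, 0.0, -20.0, 0.0],
                [50.0, -50.0, 50.0, -50.0]])
Bd = Bt @ mix  # shape (6, 4), rank 3

# Six equally spaced CT poles (hypothetical interval), mapped by z = exp(s*Ts).
s_poles = np.linspace(-6.0, -1.0, 6)
z_poles = np.exp(s_poles * Ts)


def stabilizing_gain(A, B, poles, tol=1e-6):
    """Gain for v = -K x: try pole placement first, fall back to LQR."""
    try:
        K = place_poles(A, B, poles).gain_matrix
        eigs = np.sort_complex(np.linalg.eigvals(A - B @ K))
        if np.allclose(eigs, np.sort_complex(poles), atol=tol):
            return K, "pole placement"
    except ValueError:
        pass  # placement rejected by SciPy; use the fallback below
    Q, R = np.eye(A.shape[0]), np.eye(B.shape[1])  # assumed penalties
    P = solve_discrete_are(A, B, Q, R)
    return np.linalg.solve(R + B.T @ P @ B, B.T @ P @ A), "LQR"


Kt, method = stabilizing_gain(Ad, Bt, z_poles)
K0 = np.linalg.pinv(mix) @ Kt  # distribute over the four inputs, shape (4, 6)
rho = np.max(np.abs(np.linalg.eigvals(Ad - Bd @ K0)))
print(method, "| closed-loop spectral radius:", rho)
```

Because `mix` has full row rank, `mix @ pinv(mix)` is the identity, so the four-input closed loop has the same poles as the three-input design.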

Next, we find a state-feedback gain in the equivalent transformed virtual state-space, using the transform matrix . Let this gain be , which makes the virtual closed-loop matrix a stable one.

From the output equation of the augmented system model (8), i.e.,

, we express the steady-state conditions

To find the that assures unit gain from , we set and solve for . Then, for , and is found.

The initial controller is used to stabilize the extended nominal state-space system (5), in order to collect the transition samples and fill the ERB. Note that the model reference dynamics part and the reference input model part from (5) are already stable; hence, the sole concern is to stabilize the virtual state-space dynamics (2).

3.2. The Data Collection Phase with the Uncertain Dynamics and with Actuator Fault

A total of 2500 transition samples is collected to fill the ERB by interacting with the quadrotor system under the initial stabilizing controller in an exploratory setting. A fault in the actuator is simulated by making and with the inertial parameters increased by 10% above their nominal values. Hence, . The true state transition matrix is the discrete-time counterpart of the system (31) with . The interaction is thus performed with a different system than the one used for the stabilizing design.

The reference input is reset at

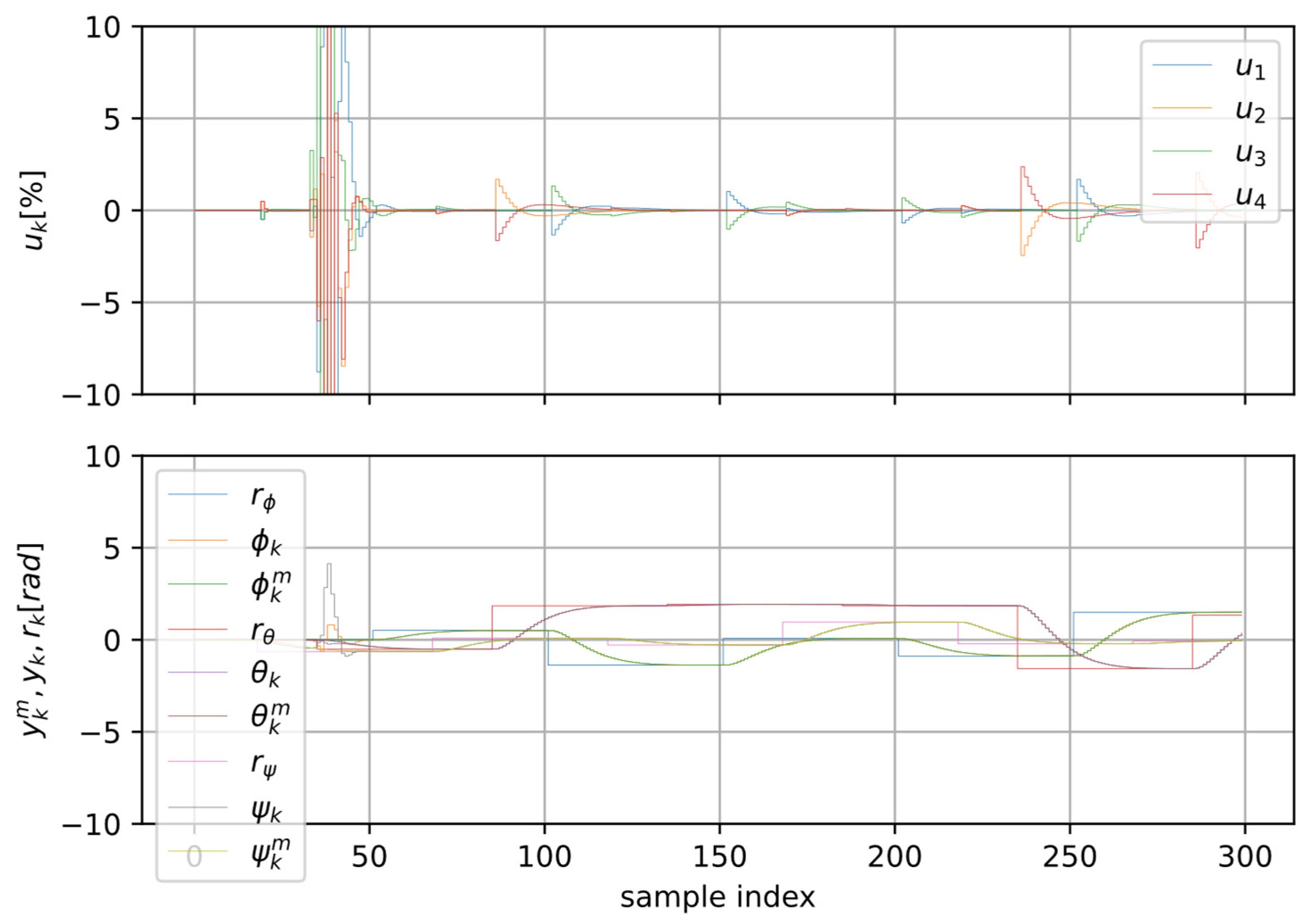

0 on all three channels corresponding to roll, pitch, and yaw. Zero-mean normally distributed noise with variance 0.9 is added to the four control input channels at each sampling step. Every 50 samples, each reference switches to a uniformly distributed random amplitude within [−1, 1]; however, we decorrelate the switching times of the reference inputs so that each channel is excited independently and acts as a disturbance on the other control channels. This further improves exploration. The collection phase results are displayed in

Figure 1.
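The excitation described above can be sketched as follows. The switching period (50 samples), the amplitude range [−1, 1], the noise variance (0.9), and the sample count (2500) come from the text; the particular way of staggering the switching times (a fixed per-channel offset), the assumption that references start at zero, and all function and variable names are illustrative choices, not the paper's implementation.

```python
import numpy as np


def make_references(n_steps, n_channels=3, period=50, rng=None):
    """Piecewise-constant references in [-1, 1] with staggered switching times."""
    rng = np.random.default_rng() if rng is None else rng
    refs = np.zeros((n_steps, n_channels))  # assumed to start at zero
    for c in range(n_channels):
        # Assumed staggering: channel c first switches at this offset.
        offset = (c + 1) * period // n_channels
        for k in range(offset, n_steps, period):
            refs[k:k + period, c] = rng.uniform(-1.0, 1.0)
    return refs


rng = np.random.default_rng(0)
n_steps, n_inputs = 2500, 4
refs = make_references(n_steps, rng=rng)

# Exploration noise added to each control input: zero mean, variance 0.9.
noise = rng.normal(0.0, np.sqrt(0.9), size=(n_steps, n_inputs))

# Transition k goes from step k to step k+1; mark the transitions during
# which no reference channel changes value.
keep = np.all(refs[1:] == refs[:-1], axis=1)
print("transitions without a reference switch:", int(keep.sum()), "of", keep.size)
```

The `keep` mask identifies transitions over which all references stay constant, which is the kind of subset a constant-reference generative model can explain.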

The reference inputs on each control channel also drive the reference model outputs. To impose a stable, desirable control performance, we employ an overdamped reference model of the form

with

[rad/s]

about each control channel (i.e., for each controlled angle

), which is discretized for the same

[s]. Here,

corresponds to percent overshoot of

[%] = 0.15[%], while the rise time is

[s] for

. The discretized reference model will be of the form (3), where

More precisely, the continuous-time transfer matrix is of the form

and then discretized accordingly at

[s]. Using the results of Theorem 1, the final and complete virtual state-space representation constructed for

is of the form (5), with

, including

,

.
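As a worked illustration of the reference model step, the sketch below discretizes a standard second-order transfer function with zero-order hold and evaluates the textbook overshoot formula. The damping ratio `zeta = 0.9`, the natural frequency `omega_n`, and the sampling period `Ts` are assumptions introduced for this example; `zeta = 0.9` is chosen only because the standard formula then gives an overshoot close to the 0.15% quoted in the text.

```python
import numpy as np
from scipy.signal import cont2discrete

zeta = 0.9     # assumed damping ratio
omega_n = 2.0  # assumed natural frequency [rad/s]
Ts = 0.01      # assumed sampling period [s]

# Percent overshoot of a second-order system (formula valid for zeta < 1).
overshoot_pct = 100.0 * np.exp(-np.pi * zeta / np.sqrt(1.0 - zeta**2))

# Continuous-time model: omega_n^2 / (s^2 + 2*zeta*omega_n*s + omega_n^2).
num = [omega_n**2]
den = [1.0, 2.0 * zeta * omega_n, omega_n**2]

# Zero-order-hold discretization of the single-channel reference model.
num_d, den_d, _ = cont2discrete((num, den), Ts, method="zoh")
num_d = np.ravel(num_d)

dc_gain = num_d.sum() / den_d.sum()  # transfer function evaluated at z = 1
poles_d = np.roots(den_d)
print(f"overshoot: {overshoot_pct:.3f} %, DC gain: {dc_gain:.6f}")
print("discrete poles:", poles_d)
```

A diagonal transfer matrix with one such channel per controlled angle would then encode the decoupled tracking behavior that the reference model prescribes.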

Remark 7. The transition samples pertaining to the reference model can be generated offline (a posteriori) after the transition sample collection from the true quadrotor system, or they can be generated online in real-time along with the system trajectories. In our work, we choose to compute them online in real-time. Their offline generation is possible since the reference input trajectory is stored.

3.3. The Learning Stage

The learning process starts from the initial state-feedback gain that was used for exploration and with being initialized with random uniform values in [0, 1]. The penalty function is with being diagonal and positive definite.

At each iteration of the OOART-MRTC algorithm, in a parallel processing thread, we extract a minibatch of 1200 transitions and solve for

using Equation (10). Then

is found based on Equation (11). This process is repeated until satisfactory convergence, i.e., until no more changes are observed in either

or

. Solving such a large overdetermined system may exceed the time available within a single sampling period. Thus, to make the computation feasible online in real time, the learning updates are moved to a separate thread, following the soft real-time software implementation principles described in [

37]. Importantly, when extracting transition samples from the ERB, we filter out those samples which correspond to switching reference input values on any control channel. The reason is that the piecewise constant reference inputs comply with a generative model like

only when the references are constant, not when they switch.
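The batch least-squares critic fit and the greedy actor update can be sketched on a small example. This is a minimal sketch, assuming a plain LQR regulation problem with a quadratic Q-function over the joint state-input vector; Equations (10) and (11) are not reproduced here, and the function names, the toy system, and the noise-free data are illustrative assumptions. The first critic fit evaluates the initial gain in PoIt-like style (unknown weights on both sides), and the subsequent fits are VI-like.

```python
import numpy as np
from scipy.linalg import solve_discrete_are


def quad_features(Z):
    """Quadratic basis such that features(z) @ w equals z' H z for symmetric H."""
    i, j = np.triu_indices(Z.shape[1])
    return Z[:, i] * Z[:, j] * np.where(i == j, 1.0, 2.0)


def unpack(w, n):
    """Rebuild the symmetric kernel H from its upper-triangular weights."""
    H = np.zeros((n, n))
    H[np.triu_indices(n)] = w
    return H + np.triu(H, 1).T


def greedy_gain(H, nx):
    """Actor improvement: u = -K x minimizes z' H z over u."""
    return np.linalg.solve(H[nx:, nx:], H[nx:, :nx])


def critic_update(X, U, Xn, cost, K, H=None):
    """Least-squares critic fit on a batch of transitions.

    H=None gives a PoIt-like evaluation of K; otherwise a VI-like step
    whose regression target uses the previous kernel H.
    """
    Phi = quad_features(np.hstack([X, U]))
    Zn = np.hstack([Xn, -Xn @ K.T])
    if H is None:
        lhs, rhs = Phi - quad_features(Zn), cost
    else:
        lhs, rhs = Phi, cost + np.einsum("ni,ij,nj->n", Zn, H, Zn)
    w, *_ = np.linalg.lstsq(lhs, rhs, rcond=None)
    return unpack(w, X.shape[1] + U.shape[1])


# Toy data from a hypothetical double integrator; the learner sees only samples.
rng = np.random.default_rng(0)
A = np.array([[1.0, 0.1], [0.0, 1.0]])
B = np.array([[0.005], [0.1]])
Q, R = np.eye(2), np.array([[1.0]])
X = rng.normal(size=(200, 2))
U = rng.normal(size=(200, 1))
Xn = X @ A.T + U @ B.T
cost = np.einsum("ni,ij,nj->n", X, Q, X) + np.einsum("ni,ij,nj->n", U, R, U)

K = np.array([[1.0, 1.5]])            # hypothetical initial stabilizing gain
H = critic_update(X, U, Xn, cost, K)  # PoIt-like first update
for _ in range(500):
    K = greedy_gain(H, nx=2)
    H = critic_update(X, U, Xn, cost, K, H)  # VI-like updates
K = greedy_gain(H, nx=2)

# Model-based LQR gain, used only to check the learned gain.
P = solve_discrete_are(A, B, Q, R)
K_star = np.linalg.solve(R + B.T @ P @ B, B.T @ P @ A)
print("gain error:", np.linalg.norm(K - K_star))
```

With this upper-triangular basis, a joint vector of dimension 30 yields 30·31/2 = 465 weights, which is consistent with the critic size reported in this section, although the exact basis used by the authors is an assumption here.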

In

Figure 2, we observe the learning results after 300 timesteps corresponding to about 150 actor–critic iterative updates that take place asynchronously. The model reference tracking is very accurate across each channel. Decoupling of all three control channels is ensured, which fulfils the desired control objective for this very high-order quadrotor UAV system, for which each of the three control channels behaves as a double integrator.

The actor weights

and critic weights

throughout the learning iteration steps are visible in

Figure 3. Both values of

and the values of

stabilize after only a few iterations. Accurate model reference tracking is achieved long before the actor and critic weights stabilize, as is visible in the samples from

Figure 2. The actor has

parameters/weights and the critic has 465 parameters/weights.

The validated OOART-MRTC learning method relies strongly on the assumption that the underlying system dynamics are linear. This assumption is what enables the compact parameterization of the actor (controller) and the critic (extended Q-function). For nonlinear dynamics, neural networks are required [

39,

40,

41,

42]; however, such validation has already been performed for low-order systems [

37]. Employing the virtual state representation leads to a very high-dimensional equivalent linear system which, although completely observable, comes with several exploration challenges. Efficient exploration requires the input and output sequences that form the augmented state vector to be decorrelated, which is achievable only with a sufficiently noisy excitation. While good coverage of the state-action domain may not be easily attained in this case, the extrapolation capability of the linear parameterization makes the optimality hold, at least in theory, at any scale around the equilibrium, even if the state transitions are collected in a smaller confined region, provided that the excitation is persistent. Hence, the parameterization compensates for the poorer exploration in this case.

3.4. Ablation Study 1: The Actor–Critic Update Time Relative to Environment Timestep

To monitor the actor–critic update time (ACUT) duration relative to the real-time sampling period of the environment (

[s] herein), the average ACUT duration is measured as a function of the batch size that is used to update the actor and critic weights. These batch sizes are

. The

win-precise-time (v. 1.4.2) and

time libraries are used under Windows® and Python 3.10 for soft real-time environment stepping mechanisms and for function execution timing; the results are visible in

Figure 4. Vectorized, batch-wise implementation with the

NumPy library (v. 1.26.4) is used for code speed improvement when building the large-scale matrices and when solving the linear equation systems with the

numpy.linalg.lstsq function.
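A timing measurement of this kind can be sketched as below. The sketch times only the `numpy.linalg.lstsq` call, uses the standard-library `time.perf_counter` instead of the win-precise-time package, and fills the regression matrix with random data; the batch sizes other than 1536 and the function name are hypothetical, and the column count of 465 is borrowed from the critic size quoted earlier.

```python
import time

import numpy as np


def mean_lstsq_time(batch_size, n_weights=465, repeats=3, rng=None):
    """Average wall-clock time of one least-squares solve for a given batch size."""
    rng = np.random.default_rng(0) if rng is None else rng
    Phi = rng.normal(size=(batch_size, n_weights))  # stand-in regression matrix
    y = rng.normal(size=batch_size)                 # stand-in targets
    elapsed = []
    for _ in range(repeats):
        t0 = time.perf_counter()
        np.linalg.lstsq(Phi, y, rcond=None)
        elapsed.append(time.perf_counter() - t0)
    return float(np.mean(elapsed))


batch_sizes = (512, 1024, 1536)  # hypothetical sweep
timings = {n: mean_lstsq_time(n) for n in batch_sizes}
for n, t in timings.items():
    print(f"batch {n:5d}: {1e3 * t:.2f} ms per solve")
```

Absolute numbers depend on the machine and the linear algebra backend, so only the trend with batch size is meaningful in such a measurement.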

Across the tested batch sizes , we observed no stabilization issues and no significant bursts in the control actions or in the tracking error during the initial learning phase, even though the ACUT increased from to about . This indicates that the learning is robust and bumpless.

3.5. Ablation Study 2: Using VI-like Instead of PoIt-like Style in the First Critic Weight Update

In the OOART-MRTC algorithm, in the asynchronous actor–critic update thread, the critic weights

are found for

using Equation (10) with

used on both sides. This corresponds to a PoIt-like critic update in the first step, which is necessary in order to evaluate the state-action Q-function of the initial stabilizing controller. The effects of this strategy shown in

Figure 2 and

Figure 3 reveal the strong stabilization of the learning, despite an increased actor–critic update time duration.

To assess the importance of performing this first critic update in PoIt-like style, we replace it with a VI-like update, where the critic

is initialized as a random vector drawn from a uniform distribution of unit magnitude. All subsequent critic updates are performed in VI-like style, which is known to converge eventually. However, an adverse bursting effect is observed, which is expected to degrade control stability, especially as the actor–critic update time increases. The learning behavior is shown in

Figure 5 and

Figure 6.

3.6. Ablation Study 3: Severe Actuator Loss and Performance Tracking Measurement

In the OOART-MRTC algorithm, we exacerbate the actuator loss, making

. This means that the control action corresponding to one rotor is zero, as the propeller is stuck. The actuation is therefore asymmetric and saturated on the faulty propeller. We repeat the OOART-MRTC learning with the maximal batch size of 1536, after data are collected with the severely affected actuator. The initial controller, designed from the nominal model, delivers the tracking performance in

Figure 7, with measured RMSE averaged on three rollouts being

.

After learning takes place, the tracking is visible in

Figure 8, with measured RMSE averaged on three rollouts being

, which is two orders of magnitude smaller than with the initial stabilizing controller used for transition sample collection. The asymmetry of the control actions is clearly visible as they compensate for the loss of one actuator out of four. The fourth control action

“learns” to become zero since it has no effect (see its nonzero action in

Figure 7; however, its effect is also null there). Importantly, the result may not be feasible in the real world, since the altitude may not be maintainable under such a severe loss. Herein, the control configuration of the linearized operation near steady state permits such a result.
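The tracking metric quoted above can be illustrated with a short helper. This is a sketch under an assumed convention: the RMSE of each rollout is computed over all time steps and all three channels pooled together, and the per-rollout values are then averaged. The source does not state the exact pooling, and the synthetic rollouts below are made up purely to exercise the functions.

```python
import numpy as np


def rollout_rmse(y, y_ref):
    """RMSE between outputs and reference-model outputs, pooled over steps and channels."""
    err = np.asarray(y, dtype=float) - np.asarray(y_ref, dtype=float)
    return float(np.sqrt(np.mean(err**2)))


def mean_rmse(rollouts):
    """Average the per-rollout RMSE over a list of (y, y_ref) pairs."""
    return float(np.mean([rollout_rmse(y, y_ref) for y, y_ref in rollouts]))


# Synthetic example: three rollouts with constant tracking offsets.
y_ref = np.zeros((100, 3))
rollouts = [(y_ref + offset, y_ref) for offset in (0.1, 0.2, 0.3)]
avg = mean_rmse(rollouts)
print(f"RMSE averaged over {len(rollouts)} rollouts: {avg:.3f}")
```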

4. Critical Discussions and Result Extensions

Regarding the transferability of the results towards other UAV types, the proposed framework is sufficiently general to accommodate other control configurations, since the same control requirements arise across platforms. It is especially favorable towards the easily interpretable model reference tracking, and it can be extended to other performance requirements. Other potential control configurations include fixed-wing UAVs; unmanned ground vehicles (UGVs) like wheeled, tracked, and legged/quadruped robots; unmanned surface vehicles (USVs); and unmanned underwater vehicles (UUVs).

Simulation experiments in this work were based on scenarios that favor real-world replication: they are performed near the hovering point under prior stabilizing controllers, with step-like signals injected as attitude setpoint excitations. The resulting quadrotor UAV attitude changes generate the input–output data samples used for learning in the virtual embedded state-space representation. A dedicated flying space is required to allow for horizontal, constant-altitude motions. However, this requirement can be relaxed by collecting data during near-real operation missions with injected attitude disturbances. These conditions are reproducible with commercial autopilots (e.g., ArduPilot, PX4) by hooking, in software, into the attitude controller setpoint module in order to inject synthetic, disturbance-like signals. Then, by reading the attitude angles from filtered gyroscopes via Extended Kalman Filter (EKF) attitude estimators, together with the controller output signal, the learning data is logged to a database from which transitions can be sampled asynchronously for learning. This learning takes place in a different thread; hence, it should not affect the real-time constraints of the fast attitude control loops. This aspect was validated under soft real-time conditions in the case study. The control architecture commonly used in commercial autopilots, i.e., a P-type attitude controller with inner cascaded PID-type rate controllers, can be replaced by the proposed state-feedback controller, which is, in fact, an equivalent mathematical representation.

There are, nevertheless, a number of limitations and corner cases that require further investigation. First, the linearity assumption holds only to a limited extent in practice. In the concrete case of UAVs, aggressive maneuvers requiring drastic attitude changes will exacerbate the nonlinearity, leading to control robustness loss and potential instability issues. Secondly, there are other potential issues that could violate assumptions like observability, for example, sensor bias, packet losses, and delayed measurements. For the attitude control, delayed measurements are a lesser problem if the delay is fixed, as the model (2) can be further augmented with additional states; a time-varying delay, however, is problematic. Packet loss is not a severe issue, at least in the attitude controller case, since the attitude controller belongs to the critical low-level communication infrastructure and is carefully designed in practice. Moreover, its real-time constraints are not affected when the learning takes place asynchronously. In real autopilots, the sensor bias is estimated and compensated using an EKF, by fusing many sensors like optical flow, IMU, the altimeter, LiDAR, and the camera. Therefore, the attitude angles are relatively unbiased, but noisy.

Another potential limitation lies in the fault and uncertainty modeling used here, which does not cover all corner cases of time-varying, asymmetric, saturating, or stuck actuators. The multiplicative uncertainty partially accounts for asymmetric and time-varying cases. Actuator jamming is captured by zeroed entries in . Covering the remaining cases is a direction for further research.