1. Introduction

After sudden disasters such as earthquakes, floods, and building collapses, ground cellular networks are often paralyzed by physical damage or power outages, leaving “communication islands”. In such situations, rapidly deploying multiple unmanned aerial vehicles (UAVs) to form a temporary, self-organizing aerial communication network is one of the most promising solutions [1, 2, 3, 4].

UAVs offer flexible deployment and high maneuverability, making them well suited to scenarios with sparse and unevenly distributed users [5]. The main challenge of UAV-assisted communication is limited onboard energy, which constrains both flight endurance and signal transmission power. It is therefore crucial to allocate communication resources effectively among multiple UAVs [6, 7]. Designing such allocation strategies is essential for providing sustainable and reliable services under strict energy constraints. However, in highly dynamic scenarios involving multiple UAVs and mobile users, resource allocation is a complex sequential decision-making problem. Traditional methods based on static optimization are poorly suited to this task, which motivates learning-based adaptive strategies.

1.1. Related Works

Resource allocation in UAV-assisted communication has been studied extensively. Existing work can be broadly divided into traditional optimization methods and learning-based methods. We first review traditional optimization techniques and then trace the evolution and application of reinforcement learning paradigms in this domain.

1.1.1. Traditional Optimization Methods

Early research mainly relied on traditional optimization techniques to solve the resource allocation problem in multi-UAV communication networks. These works typically formulate resource allocation as an explicit mathematical program and use methods such as convex optimization and successive convex approximation (SCA) to optimize UAV trajectories and transmission power under idealized channel models [4, 8]. Other methods use block coordinate descent (BCD) to decouple complex variables [8], or apply game theory to model interactions between multiple UAVs and users [9].

Although traditional optimization methods can theoretically achieve optimal or near-optimal solutions under static conditions with perfect information, they face significant limitations in practical multi-UAV communication scenarios serving multiple users as follows:

Computational complexity and real-time decision-making: UAV trajectory and resource allocation problems are typically non-convex, so traditional methods rely on complex mathematical designs (e.g., iterative SCA or BCD) that are computationally intensive. This makes real-time decisions impractical in highly dynamic environments where UAVs and users are constantly moving [10].

Prior information dependency and model robustness: The performance of traditional optimization methods relies heavily on accurate, real-time system state information (e.g., precise channel models, user locations, interference levels) [4]. In highly dynamic UAV communication networks, however, such information is difficult to obtain in real time, which leaves traditional optimization methods vulnerable to environmental uncertainty and degrades communication performance.

Scalability: As the number of UAVs and users increases, the dimensionality of optimization problems grows rapidly, leading to combinatorial explosion and making traditional methods unsuitable for large-scale dynamic scenarios.

Local optimality of heuristics: Simpler heuristic approaches, such as greedy algorithms [11], while computationally less demanding, often suffer from inherent sub-optimality and local convergence, failing to achieve desirable global communication performance across the network.

These issues expose the limitations of traditional optimization methods in highly dynamic UAV communication systems and highlight the importance of feedback-based learning algorithms.

1.1.2. Single-Agent Reinforcement Learning Approaches

To overcome the limitations of traditional optimization methods, researchers have applied reinforcement learning (RL) to communication resource allocation. Unlike traditional optimization methods, which rely on fixed computational procedures, RL learns a strategy through direct interaction with the environment. This makes it well suited to complex sequential decision-making problems in multi-UAV communication scenarios.

To simplify the problem, early deep reinforcement learning (DRL) research typically adopted a single-agent framework, in which the agent represents either one UAV or a centralized controller. For example, some studies focus on trajectory design using algorithms such as the deep Q-network (DQN) [12, 13], while assuming fixed power control or user association schemes [14]. However, this decoupled treatment ignores the strong coupling between these variables. Recognizing the inherent relationship between UAV position, transmission power, and communication performance, the mainstream trend has shifted towards joint optimization of continuous variables, especially the joint design of UAV trajectories and transmission power. Solutions based on algorithms such as the deep deterministic policy gradient (DDPG), soft actor-critic (SAC), and proximal policy optimization (PPO) [15, 16, 17] have been shown to significantly outperform single-variable optimization. These studies support the consensus that joint optimization is key to improving the efficiency of UAV communication.

However, in highly dynamic scenarios where multiple UAVs provide communication services to multiple users, the single-agent DRL framework, even when applied to each UAV as an independent learner (IL), e.g., independent PPO (IPPO) [18, 19], inherently faces severe challenges:

High-dimensional action space: In scenarios where multiple UAVs provide communication services to multiple users, the dimension of the action space increases sharply with the number of UAVs and users, making it difficult for single-agent methods to effectively explore and learn optimal strategies.

Non-stationarity and convergence difficulties: When multiple independent agents run in a shared environment, the dynamics of the environment become non-stationary from the perspective of each agent due to the constantly changing strategies of other agents. This non-stationarity leads to convergence difficulties, often causing algorithms to fall into local optima or requiring excessively long training time, severely limiting the upper limit of communication performance.

Lack of global coordination: When multiple fully independent agents operate in a shared environment, the absence of coordination leads to harmful mutual interference and limits global communication performance. Examples include the following:

- Resource contention and interference: Uncoordinated power allocation and trajectory planning can cause severe inter-UAV interference, degrading overall network capacity and user throughput.

- Collision avoidance failures: Without explicit coordination, UAVs may collide, leading to mission failure and communication service disruption.

- Suboptimal coverage and fairness: Individual UAVs optimizing solely for their local objectives may neglect underserved areas or users, compromising network-wide coverage and fairness in communication quality.

- Credit assignment problem: When negative feedback (e.g., a collision or network congestion) occurs, an independent learner cannot easily determine whether the cause is an error in its own strategy or the influence of other agents’ strategies, resulting in low learning efficiency.

These limitations motivate the transition from single-agent DRL to multi-agent DRL, which can explicitly handle coordination and cooperation in complex multi-UAV communication environments.

1.1.3. Multi-Agent Reinforcement Learning Approaches

Recognizing the inherent need for coordination in multi-UAV systems, research has shifted from single-agent DRL to multi-agent reinforcement learning (MARL). While simple MARL implementations, such as the ILs mentioned above, still struggle with non-stationarity and credit assignment, the centralized training with decentralized execution (CTDE) framework [20] has emerged as the de facto standard. Within CTDE, a centralized critic leverages global information during training to guide the learning of individual, decentralized actors. This architecture is adopted by algorithms ranging from the pioneering multi-agent deep deterministic policy gradient (MADDPG) [21] to the high-performing multi-agent PPO (MAPPO) [22]. Owing to its collaborative performance and training stability, MAPPO is widely regarded as a state-of-the-art (SOTA) method [23, 24], especially in complex multi-UAV communication service scenarios. These CTDE-based MARL algorithms directly address the dimension explosion of single-agent methods and the lack of global coordination in IL methods, providing the following benefits:

Enhanced communication network performance: Through global information sharing and centralized evaluation, the system can collaboratively optimize the trajectories and resource allocation of multiple UAVs, effectively reduce interference, and improve network capacity and overall user throughput.

Robust collision avoidance: Centralized training enables agents to learn collaborative strategies to avoid collisions, ensuring the safe and stable operation of the multi-UAV system.

Improved resource management and fairness: Coordinated methods help to allocate resources more fairly and optimize coverage, thus improving the overall quality of communication services and user satisfaction.

Meanwhile, in seeking to improve MARL performance on resource allocation tasks, some researchers have examined the structural characteristics of the task itself and introduced the concept of “task coupling structure” [25], defined as the extent to which the optimal behavior of an agent depends on the behavior of other agents. For example, the classic QMIX algorithm [26] is specifically designed for tasks with strictly monotonic cooperative structures. These studies emphasize the need to design algorithms that match the coupling characteristics of the specific problem.

When multiple UAVs provide communication services, the task is essentially “loosely coupled”. Although UAVs must cooperate to avoid mutual interference and ensure continuous system operation, they are largely autonomous in serving the users within their respective service areas. Mainstream CTDE-based MARL algorithms, such as standard MAPPO, may therefore be limited by their “over-centralized” or “over-coupled” design in the following ways:

Suppression of individualized performance: The single centralized critic of standard MAPPO is designed for strongly coupled tasks and evaluates global returns. While promoting overall cooperation, this mechanism may inadvertently dilute the unique contributions of individual agents and inhibit the exploration of individualized, locally optimal behaviors. In loosely coupled communication tasks, each UAV may have a different user group and different local optimization objectives, so a single global critic may prevent it from reaching its maximum individual communication performance.

Ambiguous credit assignment in loosely coupled settings: When global rewards (or penalties) are received in a loosely coupled environment, a single centralized critic may find it difficult to assign credit or responsibility accurately to individual agents. This ambiguity may slow learning or lead to suboptimal strategies, especially when the influence of each agent is largely local, which in turn affects the overall efficiency and fairness of communication services.

Therefore, designing a MARL algorithm that both incorporates the required communication fairness objectives and is tailored to the loosely coupled task characteristics of complex multi-UAV communication remains an important and challenging research direction that current methods have not fully addressed.

1.2. Motivation

In MARL, advanced CTDE algorithms are crucial for coordinating multiple UAVs. Although pioneering methods such as MADDPG [21] effectively extend DDPG to multi-agent systems and can handle continuous control tasks such as trajectory and power optimization, their dependence on deterministic policies and their inherent stability challenges limit their applicability to our problem. MAPPO [22, 23, 24] offers significant advantages for the joint optimization of continuous variables, such as UAV trajectory and power allocation, in highly dynamic and safety-critical multi-UAV communication networks. MAPPO builds on the stable and robust PPO algorithm and uses a stochastic policy, which inherently promotes better exploration in complex, high-dimensional continuous action spaces. This feature is crucial for discovering diverse and energy-efficient strategies in dynamic UAV environments and for learning robustly under uncertainty. In addition, MAPPO’s improved stability and ease of tuning make it a more reliable choice for learning policies that ensure safe operation (such as collision avoidance) and continuous communication services under strict energy constraints. MAPPO has therefore become the preferred benchmark for complex multi-UAV communication tasks that demand both high communication performance and system robustness.

However, current research still has two limitations. First, most studies focus on maximizing the total rate as the optimization objective [13, 22, 23], often neglecting fairness among users. Although methods such as maximizing the minimum user performance [8] or using Jain’s fairness index [27] do consider fairness, they tend either to ignore overall system performance or to fall easily into local optima in complex optimization environments. Proportional fairness (PF) is a classic criterion that balances performance and fairness, yet its application within this advanced MARL framework remains largely unexplored.

Second, although joint optimization is the ideal way to improve overall system performance, directly applying the PF objective function within a fully joint MARL optimization framework is computationally infeasible. If hierarchical optimization is adopted to avoid this problem, the resulting loosely coupled task structure poses new challenges to the MARL algorithm itself. Simple methods such as IPPO are plagued by non-stationarity and cannot capture subtle but crucial inter-agent coupling, such as collision avoidance. This is because sparse global negative feedback signals, such as collision penalties, are often attributed by each independent learner to the randomness of its own strategy or to environmental noise, making it difficult to form effective collaborative avoidance strategies. Conversely, mainstream CTDE algorithms designed for strongly coupled scenarios, such as MAPPO, may suppress the exploration of individually optimal strategies in loosely coupled scenarios because of their overly centralized value estimation, resulting in poor overall system performance.

Therefore, designing a MARL algorithm that both incorporates fairness objectives and is tailored to the loosely coupled task characteristics produced by hierarchical optimization has become an important research direction.

1.3. Contribution

To address the aforementioned challenges, this paper proposes a hierarchical framework centered on a novel MARL algorithm, termed MAPPO with decoupled critics (MAPPO-DC). In the upper layer, an efficient user-to-UAV assignment is performed using a lightweight clustering algorithm. In the lower layer, our proposed MAPPO-DC algorithm enables each agent to learn tailored policies for trajectory and power control. Specifically, by redesigning the critic’s update rule, MAPPO-DC allows each agent to distinguish shared global value from its local, task-specific advantages, achieving a sophisticated balance between global coordination and individualized exploration. Furthermore, a composite reward function, congruent with the MAPPO-DC architecture, is devised based on reward construction principles to guide the learning process toward the proportional fairness objective.

The main contributions of this paper are as follows:

We design an efficient hierarchical framework to mitigate the combinatorial explosion caused by an overly large joint optimization space.

Based on a detailed analysis of the scene’s task coupling, we propose the MAPPO-DC algorithm tailored for the loosely coupled task scenario inherent to the hierarchical framework. A composite reward is also designed for this architecture to ensure both inter-agent coordination and individual agent performance in loosely coupled settings.

The simulation results demonstrate that our proposed MAPPO-DC algorithm outperforms IPPO, MAPPO, and other baseline algorithms. In addition, we analyze the intrinsic behavioral mechanisms of different algorithms under energy constraints.

Theoretically, MAPPO-DC is positioned as a CTDE-based algorithm with a multi-critic architecture. Relative to IPPO, it mitigates environmental non-stationarity by incorporating global states into the critic. Relative to standard MAPPO with a single centralized critic, MAPPO-DC decouples the value estimation to prevent the ambiguity of credit assignment caused by global reward averaging in loosely coupled tasks. Furthermore, unlike value-decomposition methods (e.g., VDN, QMIX) that rely on the monotonicity assumption of the global value function, MAPPO-DC requires no such constraints. Consequently, it is particularly well-suited for the loosely coupled communication scenarios presented in this work, which require equal emphasis on inter-agent coordination (e.g., safety constraints) and individual agent performance (e.g., customized power and trajectory control).

2. System Model

We consider a scenario, as illustrated in Figure 1, where a fleet of N energy-constrained UAVs provides wireless communication services to K users distributed over a two-dimensional plane. The set of UAVs is denoted by

, and the set of users is denoted by

. We use the index

for UAVs and

for users. At any given time step t, the position of the ith UAV is represented by its 3D Cartesian coordinates

. Similarly, the position of the kth user is

. The users are randomly distributed within the operational area [4, 5, 8]. As shown in Figure 1, multiple UAVs fly within this area to provide communication services to users. The specific parameters of the scenario, adjusted based on [28, 29], are detailed in Table A1.

2.1. Transmission Model

The communication link adopts a probabilistic line-of-sight (LoS) model [4], in which the channel gain is not deterministic but is taken as the expected value of the channel gains under LoS and non-LoS (NLoS) conditions. The probability of LoS depends on the elevation angle between the UAV and the user.

The 3D distance between UAV i and user k is given by

The horizontal distance between UAV i and user k is calculated as

The elevation angle between UAV i and user k is calculated as

The LoS probability is given by

where a and b are empirical environment-dependent parameters, set to 9.61 and 0.16, respectively [28].

Building upon free-space path loss and differentiating between LoS and NLoS conditions, the path losses for LoS and NLoS links are, respectively,

and

To calculate the average channel gain, we first convert the path losses to linear scale,

Consequently, the average channel gain (in linear scale) is obtained by converting the average path loss,

where

and

are the excess path losses for LoS and NLoS links, respectively, and

is the channel power gain at a reference distance of 1 m [29].
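
The channel model described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: it assumes the commonly used sigmoid form of the LoS probability (with the elevation angle in degrees), writes free-space loss through the reference gain at 1 m, and averages the LoS and NLoS gains in linear scale. The default values of `beta0`, `eta_los_db`, and `eta_nlos_db` are placeholders, not values taken from Table A1.

```python
import math

def los_probability(elevation_deg, a=9.61, b=0.16):
    """LoS probability as a sigmoid of the elevation angle in degrees (assumed form)."""
    return 1.0 / (1.0 + a * math.exp(-b * (elevation_deg - a)))

def average_channel_gain(uav_pos, user_pos, beta0=1e-4,
                         eta_los_db=1.0, eta_nlos_db=20.0):
    """Expected linear channel gain between a UAV and a user.

    beta0, eta_los_db and eta_nlos_db are illustrative placeholders.
    """
    dx, dy, dz = (uav_pos[j] - user_pos[j] for j in range(3))
    horizontal = math.hypot(dx, dy)
    distance = math.sqrt(dx * dx + dy * dy + dz * dz)
    elevation_deg = math.degrees(math.atan2(abs(dz), horizontal))
    p_los = los_probability(elevation_deg)
    # Free-space loss in dB, expressed via the reference gain at 1 m.
    free_space_db = -10.0 * math.log10(beta0) + 20.0 * math.log10(distance)
    gain_los = 10.0 ** (-(free_space_db + eta_los_db) / 10.0)
    gain_nlos = 10.0 ** (-(free_space_db + eta_nlos_db) / 10.0)
    # Average the two link states in linear scale, weighted by the LoS probability.
    return p_los * gain_los + (1.0 - p_los) * gain_nlos
```

Under these assumptions, a user directly below the UAV sees an almost certain LoS link, while a user far from the UAV's ground projection has both a longer path and a lower LoS probability.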

We employ an orthogonal frequency division multiple access (OFDMA) scheme, allocating independent frequency bands to each UAV to avoid co-channel interference among them. Let

be the set of users served by UAV

i. According to the Shannon–Hartley theorem, for a user

, the received signal-to-interference-plus-noise ratio (SINR) can be simplified as the signal-to-noise ratio (SNR) and expressed as

where

is the transmit power allocated by UAV i to user k at time t,

is the channel gain between them,

is the noise power spectral density, and

is the bandwidth allocated to the user. For analytical convenience, we assume an equal bandwidth allocation scheme, where each served user

receives

. This assumption simplifies the analysis and keeps the focus on the key variables [18, 20]: it ensures fairness among users at the bandwidth level while concentrating the study on trajectory and power.

The achievable instantaneous throughput for user k at time t is
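
As a rough illustration of the SNR and throughput expressions, the sketch below assumes that the noise power is the noise power spectral density multiplied by the user's bandwidth, and that each UAV divides its band equally among the users it serves. The function and parameter names are hypothetical.

```python
import math

def equal_bandwidth(uav_bandwidth_hz, num_served):
    """Equal split of a UAV's band among its served users (assumed scheme)."""
    return uav_bandwidth_hz / num_served

def user_throughput(power_w, gain, bandwidth_hz, noise_psd_w_per_hz):
    """Shannon throughput in bit/s for an interference-free link."""
    snr = power_w * gain / (noise_psd_w_per_hz * bandwidth_hz)
    return bandwidth_hz * math.log2(1.0 + snr)
```

Because the UAVs use orthogonal bands, no interference term appears in the denominator; only thermal noise over the user's own bandwidth limits the rate.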

2.2. Energy Consumption Model

The energy consumption of a UAV is primarily generated by its propulsion system, which includes both hovering and motion components. Based on the quadrotor energy consumption model in [30] and incorporating common simplifications of rotary-wing aircraft aerodynamics from [31], factors such as climb, descent, and blade profile drag are neglected to focus the research on the long-term joint optimization strategy for trajectory and power. Thus, the total power consumption

for UAV i at time t can be approximated [4] as

where

is the communication power. The propulsion power

is calculated based on the UAV’s velocity vector,

where

is the hovering power,

is the velocity vector of UAV i, and

is the motion-related power coefficient [4]. The remaining energy of UAV i at the next time step can be calculated by subtracting the consumed energy from the current remaining energy,

where

is the remaining energy of UAV i, and

is the duration of a time step.
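
The energy bookkeeping can be sketched as below. The exact propulsion expression is not reproduced here, so the sketch assumes one simple form consistent with the description: hovering power plus a motion term proportional to the squared speed. All numeric defaults are illustrative placeholders.

```python
def propulsion_power(velocity, hover_power_w, motion_coeff):
    """Hovering power plus a speed-dependent term (assumed quadratic in speed)."""
    speed_sq = sum(v * v for v in velocity)
    return hover_power_w + motion_coeff * speed_sq

def next_energy(remaining_j, velocity, comm_power_w,
                hover_power_w=100.0, motion_coeff=0.5, dt_s=1.0):
    """Remaining energy after one time step, floored at zero."""
    total_power = propulsion_power(velocity, hover_power_w, motion_coeff) + comm_power_w
    return max(remaining_j - total_power * dt_s, 0.0)
```

For example, with these placeholder values a UAV flying at 5 m/s while transmitting 5 W draws 117.5 W, so one 1 s step reduces a 1000 J budget to 882.5 J.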

2.3. Problem Formulation

This study aims to maximize the system’s PF throughput by jointly optimizing the UAVs’ trajectories

and power allocation strategies

within a finite flight period and under energy constraints. This objective is achieved by maximizing the log-sum of the users’ long-term average throughputs, as formally expressed in the optimization problem (P1),

where

is the long-term discounted cumulative throughput for user k, and

is the instantaneous throughput defined in (10). Constraints C1 to C6 correspond to the UAV maximum velocity, flight altitude, collision avoidance, communication power, battery endurance, and user serving load, respectively.
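
To make the PF objective concrete, the sketch below computes a discounted cumulative throughput per user and then the sum of logarithms over users. The discount factor `gamma` and the small constant `eps` are assumptions introduced for illustration.

```python
import math

def discounted_throughput(per_step_throughput, gamma=0.99):
    """Discounted cumulative throughput of one user over an episode."""
    return sum((gamma ** t) * r for t, r in enumerate(per_step_throughput))

def pf_objective(per_user_series, gamma=0.99, eps=1e-9):
    """Sum over users of the log of their discounted cumulative throughput."""
    return sum(math.log(discounted_throughput(series, gamma) + eps)
               for series in per_user_series)

# Two schedules with the same total throughput: the balanced one scores higher.
balanced = [[5.0, 5.0], [5.0, 5.0]]
skewed = [[9.9, 9.9], [0.1, 0.1]]
```

The logarithm penalizes starving any single user far more than it rewards boosting an already well-served one, which is why `pf_objective(balanced)` exceeds `pf_objective(skewed)` even though both schedules deliver the same total throughput.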

Problem (P1) is a mixed-integer non-convex optimization problem, characterized by highly coupled variables, high-dimensional state-action spaces, and the highly dynamic nature of UAV communication environments. Because the problem is NP-hard and each agent observes the environment only partially, traditional optimization methods cannot obtain optimal solutions in real time. Therefore, we model this problem as a multi-agent partially observable Markov decision process (POMDP). We then employ a MARL approach, specifically our proposed MAPPO-DC framework, to learn an approximately optimal policy that maximizes the long-term cumulative reward, with a reward function carefully designed to align with the objective of (P1).

3. Method

Although an end-to-end MARL method with a fully joint action space is theoretically superior, the combinatorial explosion of the fully joint action space at our scene scale (N UAVs and K users) makes existing algorithms computationally infeasible. More importantly, in most communication scenarios, user clustering decisions are made on a much longer time scale than trajectory and power control decisions. This natural separation of time scales provides a sound basis for adopting a hierarchical optimization framework. The concept of decomposition has also been validated in traditional optimization. For example, reference [8] employs the BCD method to solve a similar problem, decomposing the complex joint optimization task into more tractable subproblems that are solved alternately. This inspires our approach and suggests that problem decomposition, in traditional or learning-based methods, is a critical path toward finding feasible and efficient solutions.

Therefore, we adopt a hierarchical architecture. At the upper level, a user clustering algorithm tailored to our scenario combines nearest-neighbor assignment with intelligent user offloading. At the lower level, our proposed MAPPO-DC algorithm jointly optimizes UAV trajectory planning and power allocation. The hierarchical framework decouples user association from trajectory control, which inevitably sacrifices some global optimality. Specifically, heuristic clustering based on nearest distance may lead to suboptimal load balancing in boundary cases where a user could be better served by a slightly more distant but less congested UAV (to maximize SINR via power headroom). However, finding the globally optimal assignment requires solving a combinatorial optimization problem (), which is prohibitive for real-time control. Our proposed intelligent user offloading mechanism mitigates this impact by reassigning users from overloaded UAVs to neighboring ones, ensuring that the physical capacity constraints are met. While not globally optimal in the mathematical sense, this approach provides a robust Pareto improvement over static assignments, balancing computational latency with system throughput.

The time complexity of our proposed heuristic clustering algorithm is , and in the current scenario (30 users, 5 UAVs), it requires only microsecond-level computation. In contrast, finding the theoretical global optimal solution for user allocation is a combinatorial optimization problem with a complexity of . As the number of UAVs and users increases, the computational cost of the heuristic grows only linearly, whereas the cost of finding the global optimum grows exponentially. Our hierarchical architecture therefore obtains a high-quality suboptimal solution at very low time cost, leaving a sufficient computational time window for real-time control by the lower-level MARL policy. This preserves the real-time capability of the system as it scales up.
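
The gap between the two approaches can be illustrated with simple counting for the stated scenario. The sketch assumes that exhaustive search lets each user choose any UAV (ignoring the per-UAV capacity limit) and that the nearest-neighbor phase costs one distance evaluation per UAV-user pair; these are illustrative simplifications rather than the paper's complexity expressions.

```python
num_uavs, num_users = 5, 30

# Every user may be attached to any UAV: num_uavs ** num_users candidate maps.
exhaustive_assignments = num_uavs ** num_users

# Nearest-neighbor assignment: one distance per (UAV, user) pair.
distance_evaluations = num_uavs * num_users

print(f"{exhaustive_assignments:.2e} candidate assignments")
print(f"{distance_evaluations} distance evaluations")
```

Under these assumptions, exhaustive search faces roughly 9.3 × 10^20 candidate assignments, while the nearest-neighbor pass needs only 150 distance evaluations.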

The meanings of the symbols are listed in Table 1.

3.1. User Clustering

As mentioned above, incorporating discrete user association decisions into the MARL action space leads to a combinatorial explosion. Furthermore, the decision frequency for user association is typically lower than that for trajectory and power control. Therefore, we employ an efficient heuristic-based clustering algorithm to periodically handle the user association problem, thereby constraining the action space of the lower-layer MARL task to continuous control variables. The procedure for hierarchical control, including periodic clustering, is detailed in Algorithm 1.

| Algorithm 1 User Clustering via Nearest-Neighbor Assignment and Intelligent Offloading |

1: Input: UAV set , user set , max users per UAV , UAV positions , user positions , total time steps T, clustering period

2: Initialize: UAV positions , user positions , initial user-UAV association map

3: for time step to do

4: if then // Periodically update clusters

5:

6: else

7: // Maintain previous association

8: end if

9: Execute lower-layer MAPPO-DC policy based on to determine UAV velocities and powers

10: Update UAV positions:

11: end for

12: Function

13: Output: Final user-UAV association map

Phase 1: Nearest-Neighbor Assignment

14: Initialize: clusters for all

15: for each user do

16: Find the nearest UAV:

17: Assign user k to UAV

18: end for

Phase 2: Intelligent Offloading

19: Initialize: Overloaded UAV set

20: while do

21: Select an overloaded UAV

22: Identify the user to offload:

23: Define candidate receiver UAVs:

24: if then

25: Find the best receiver UAV:

26: Update clusters:

27: else

28: Break // No available UAV to receive the user

29: end if

30: Update overloaded set:

31: end while

Safety truncation for rare unsolvable cases

32: for each UAV i with do

33: Sort users in by distance to in ascending order

34: Keep the nearest users: first users in sorted list

35: end for

36: return Final clusters

|
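
The clustering function in Algorithm 1 can be sketched in Python as follows. The selection rules are assumptions made for illustration: the user offloaded from an overloaded UAV is taken to be the one farthest from it, and the receiver is the nearest UAV with spare capacity. Distances are computed in the horizontal plane, and the names `cluster_users`, `uav_xy`, and `user_xy` are hypothetical.

```python
import math

def cluster_users(uav_xy, user_xy, max_users):
    """Return {uav_index: [user_index, ...]} with at most max_users per UAV."""
    def dist(i, k):
        return math.dist(uav_xy[i], user_xy[k])

    uavs = range(len(uav_xy))
    clusters = {i: [] for i in uavs}

    # Phase 1: attach every user to its nearest UAV.
    for k in range(len(user_xy)):
        nearest = min(uavs, key=lambda i: dist(i, k))
        clusters[nearest].append(k)

    # Phase 2: move users away from overloaded UAVs one at a time.
    while True:
        overloaded = [i for i in uavs if len(clusters[i]) > max_users]
        if not overloaded:
            break
        src = overloaded[0]
        # Assumed rule: offload the user farthest from the overloaded UAV.
        user = max(clusters[src], key=lambda k: dist(src, k))
        candidates = [j for j in uavs if len(clusters[j]) < max_users]
        if not candidates:
            break  # no UAV can accept another user
        # Assumed rule: the receiver is the closest UAV with spare capacity.
        dst = min(candidates, key=lambda j: dist(j, user))
        clusters[src].remove(user)
        clusters[dst].append(user)

    # Safety truncation: keep only the nearest max_users users per UAV.
    for i in uavs:
        clusters[i] = sorted(clusters[i], key=lambda k: dist(i, k))[:max_users]
    return clusters
```

Each offloading step moves one user to a UAV that is strictly below capacity, so the receiver can never become overloaded and the loop terminates after at most as many iterations as there are excess users.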

3.2. State Space

The MAPPO-DC algorithm adheres to the CTDE paradigm, which requires aggregating the local observations of all agents to form a global state. The state space is partitioned into two components: the local observation space and the global state space.

The self-centric local observation for agent i, denoted as

, comprises

It includes the agent’s absolute position , its relative positions to other UAVs , the relative positions to its served users , the historical average throughputs of these users , and its own remaining energy , where . To maintain a fixed input dimension, information related to the served users is padded to the maximum users a UAV can serve ().
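
A fixed-length local observation with padding might be assembled as in the sketch below. The ordering of the fields, the use of zero padding, and the 3D user coordinates are assumptions for illustration; the function name and arguments are hypothetical.

```python
def build_local_observation(i, uav_pos, user_pos, served, avg_throughput,
                            energy, max_users):
    """Flat observation vector for agent i with zero-padded user slots."""
    own = uav_pos[i]
    obs = list(own)  # absolute position of the agent

    # Relative positions of all other UAVs.
    for j, pos in enumerate(uav_pos):
        if j != i:
            obs += [pos[d] - own[d] for d in range(3)]

    # Relative positions and historical throughputs of served users.
    rel, hist = [], []
    for k in served[:max_users]:
        rel += [user_pos[k][d] - own[d] for d in range(3)]
        hist.append(avg_throughput[k])

    # Pad the user-related fields up to max_users slots.
    pad = max_users - len(hist)
    obs += rel + [0.0] * (3 * pad)
    obs += hist + [0.0] * pad

    obs.append(energy[i])  # own remaining energy
    return obs
```

With this layout the vector length is 3 + 3(N − 1) + 4·max_users + 1 regardless of how many users the UAV currently serves, which is what allows a fixed-size actor input.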

The global state

is formed by concatenating the local observations of all agents, thereby containing complete information about all dynamic elements in the system. It is constructed as

It consists of the set of all UAV positions , the set of all user positions , the historical average throughputs of all users , and the remaining energy of all UAVs .

3.3. Action Space

At each time step t, the action of agent i, denoted as

, is composed of two continuous vectors: a trajectory control action

and a power allocation action

.

3.3.1. Trajectory Planning

For the trajectory planning action

, the agent’s action aims to determine its velocity or displacement for the next time step. Specifically, the actor network outputs a mean value

for a displacement vector

. The actual displacement increment is sampled from a normal distribution centered at this mean,

where

is a learnable or fixed standard deviation. The UAV’s next position is updated as

. The actual velocity of UAV i at time t is then

, which effectively defines the desired velocity for the current time step.
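
The trajectory action can be sketched as sampling a Gaussian displacement around the actor's mean and converting it to a position update and a velocity. Rescaling the step so that the speed stays within a maximum value is an assumption added here to respect the velocity constraint; the paper may enforce that constraint differently.

```python
import math
import random

def sample_displacement(mean, std, max_speed, dt, rng=random):
    """Sample a displacement around `mean` and cap its length at max_speed * dt."""
    step = [rng.gauss(m, std) for m in mean]
    norm = math.sqrt(sum(s * s for s in step))
    limit = max_speed * dt
    if norm > limit:
        # Assumed handling of the speed limit: rescale, keeping the direction.
        step = [s * limit / norm for s in step]
    return step

def apply_displacement(position, step, dt):
    """Return the next position and the velocity implied by the displacement."""
    new_position = [p + s for p, s in zip(position, step)]
    velocity = [s / dt for s in step]
    return new_position, velocity
```

Sampling from a distribution rather than using the mean directly is what gives the stochastic policy its exploration; at evaluation time the mean itself can be used as the displacement.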

3.3.2. Power Allocation

For the power allocation action

, the agent’s action aims to decide how to distribute its total communication power

among its served users. We implement this through a Softmax-based mechanism. Specifically, the actor network outputs a vector of raw logit values,

. For a user

, the allocated power

is obtained by applying a Softmax function to the corresponding logit values and scaling by the total available power,

The elements of the -dimensional vector are the unnormalized raw logit values. These values are transformed into power allocation ratios via the Softmax function, thereby enabling continuous power control for the served users.
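
A minimal sketch of the Softmax-based power split is given below. It assumes that logits belonging to padded (unserved) user slots are simply ignored, and it subtracts the largest logit before exponentiation for numerical stability; both choices are illustrative.

```python
import math

def allocate_power(logits, num_served, total_power_w):
    """Split total_power_w among the first num_served users via Softmax."""
    active = logits[:num_served]  # assumed: padded slots are masked out
    if not active:
        return []
    peak = max(active)
    weights = [math.exp(z - peak) for z in active]
    normalizer = sum(weights)
    return [total_power_w * w / normalizer for w in weights]
```

Because the Softmax outputs are positive and sum to one, the allocated powers are non-negative and always add up to the total communication power, so the power budget is satisfied by construction.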

3.4. Reward

To maximize both overall system coordination and individual agent performance, we adapt the reward structure for MAPPO-DC by classifying each reward component based on its contribution to either coordination or individual performance. Specifically, the collision penalty, PF reward, and user satisfaction reward, which contribute to agent coordination, are categorized as shared rewards. Conversely, the position guidance reward, motion smoothness penalty, boundary penalty, and crash penalty, which relate to an agent’s individual behavior, are categorized as individual rewards. Since the individual rewards differ for each agent, the total reward for each agent is also distinct: it is the sum of the reward common to all agents and the reward unique to agent i, where each sub-reward is scaled by its weighting coefficient w.
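The shared/individual split can be illustrated with a short sketch. The dictionary keys and the helper name `total_reward` are hypothetical, and the weights used in the test are placeholders rather than the values listed in Table A2.

```python
def total_reward(shared, individual, weights):
    """Weighted sum of shared and agent-specific sub-rewards for one agent.

    shared / individual map sub-reward names to their raw values;
    weights maps the same names to weighting coefficients (assumed layout).
    """
    r_shared = sum(weights[name] * value for name, value in shared.items())
    r_individual = sum(weights[name] * value for name, value in individual.items())
    return r_shared + r_individual
```

Each agent calls this with the same `shared` dictionary but its own `individual` dictionary, which is what makes the per-agent totals differ.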

To align with the primary objective in (P1), we design the main component of the reward function based on the instantaneous sum-log-rate, which serves as a dense signal to promote proportional fairness. The PF reward at time t is defined as the sum, over all users k, of the logarithm of the user’s instantaneous throughput plus a small positive constant that ensures numerical stability of the logarithm.

To encourage the fulfillment of a basic Quality of Service (QoS), we introduce an additional reward for exceeding a throughput threshold. It is built from an indicator function, which equals 1 when a user’s throughput satisfies the threshold condition and 0 otherwise.
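The two communication rewards can be sketched as follows. The function names, the default `eps`, the use of `>=` for the threshold test, and the unnormalized count are assumptions made for illustration; the paper's exact expressions may differ in these details.

```python
import math


def pf_reward(throughputs, eps=1e-6):
    """Sum-log-rate over users; eps keeps log() finite at zero throughput."""
    return sum(math.log(r + eps) for r in throughputs)


def satisfaction_reward(throughputs, threshold):
    """Number of users whose throughput meets the QoS threshold."""
    # The indicator is 1 when the condition holds and 0 otherwise.
    return sum(1 for r in throughputs if r >= threshold)
```

Because the logarithm is concave, raising a starved user's rate increases `pf_reward` more than adding the same rate to a well-served user, which is what pushes the policy towards proportional fairness.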

To guide exploration within a vast action space and under strict energy constraints, we introduce a position guidance reward through reward shaping. This reward penalizes the distance between UAV i and the geometric center of the user cluster it serves, thus constraining the exploration space, helping the model avoid poor local optima, and accelerating convergence.

The geometric center of the user cluster, that is, the 2D coordinate vector of the target cluster center for UAV i at time t, is calculated as the sum of the horizontal-plane (2D) position vectors of all users assigned to UAV i, divided by the number of users in that cluster.
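The centroid and the resulting guidance signal can be sketched as below. The function names are hypothetical, and using the plain negative Euclidean distance (without additional scaling) is an assumption; only the centroid definition is taken directly from the text.

```python
import math


def cluster_center(user_positions):
    """Mean of the users' horizontal (x, y) coordinates."""
    n = len(user_positions)
    sum_x = sum(p[0] for p in user_positions)
    sum_y = sum(p[1] for p in user_positions)
    return (sum_x / n, sum_y / n)


def position_guidance_reward(uav_position, user_positions):
    """Negative horizontal distance from the UAV to its cluster center."""
    cx, cy = cluster_center(user_positions)
    # Only the 2D projection of the UAV position is compared to the center.
    return -math.hypot(uav_position[0] - cx, uav_position[1] - cy)
```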

To ensure that the UAVs generate physically feasible and energy-efficient smooth trajectories, we penalize abrupt changes in their actions.

A collision penalty, given by a large negative constant, is incurred when the distance between any two UAVs is less than the safety distance.

A boundary penalty, given by a negative constant, is applied when a UAV reaches the boundary of the allowed 3D flight region. Unlike the collision and energy depletion penalties, the boundary penalty is smaller in magnitude. This distinction is made because users are randomly distributed within the scenario; if a UAV’s cluster center is near the edge, the policy should be allowed to explore the boundary without incurring an excessive penalty, which would otherwise overly constrain the search space.

An energy depletion penalty, given by a large negative constant, is applied when the UAV’s remaining energy drops below zero.
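The three event-triggered penalties can be sketched as simple threshold checks. Everything below is an illustrative assumption: the function names, the axis-aligned box used for the flight region, and the numeric constants in the usage test are not taken from the paper.

```python
import math


def collision_penalty(positions, d_safe, c_col):
    """Return c_col if any two UAVs are closer than d_safe, else 0."""
    for i in range(len(positions)):
        for j in range(i + 1, len(positions)):
            if math.dist(positions[i], positions[j]) < d_safe:
                return c_col
    return 0.0


def boundary_penalty(position, lower, upper, c_bound):
    """Return c_bound if the UAV reaches or leaves the box [lower, upper]."""
    for x, lo, hi in zip(position, lower, upper):
        if x <= lo or x >= hi:
            return c_bound
    return 0.0


def crash_penalty(remaining_energy, c_crash):
    """Return c_crash once the remaining energy drops below zero."""
    return c_crash if remaining_energy < 0 else 0.0
```

Keeping `c_bound` smaller in magnitude than `c_col` and `c_crash` mirrors the text's point that brushing the boundary should be discouraged but not treated like a safety failure.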

The rationale behind this composite reward shaping is threefold:

Guidance: The dense position guidance reward constrains the exploration space, guiding agents towards valid topological formations early in training.

Constraints: The motion smoothness penalty acts as a soft constraint to enforce physical feasibility, while the collision and crash penalties serve as hard safety constraints (sparse penalties) to prune unsafe state-action regions.

Optimization: Finally, the PF reward and the user satisfaction reward drive the policy towards the core objective of fair communication performance.

This decomposition ensures that agents learn how to fly safely before optimizing how to communicate efficiently, and the weights of each reward are shown in

Table A2.

3.5. MAPPO-DC Algorithm

The MAPPO-DC algorithm proposed in this paper is built upon the MAPPO architecture and likewise adheres to the CTDE paradigm. Its workflow is detailed in Algorithm 2.

| Algorithm 2 Multi-Agent Proximal Policy Optimization with Decoupled critics (MAPPO-DC) |
| --- |
| 1: Initialize: For each agent: |
| 2: actor network with parameters |
| 3: critic network with parameters |
| 4: Target critic network with parameters |
| 5: Initialize: Replay buffer |
| 6: for episode = 1, 2, ... do |
| 7: Reset environment and get initial global state and local observations |
| // Decentralized Execution & Data Collection |
| 8: for t = 1, 2, ..., T do |
| 9: for each agent in parallel do |
| 10: Select action |
| 11: end for |
| 12: Execute joint action in the environment |
| 13: Receive next global state, next local observations, and individual rewards |
| 14: Store transition in buffer |
| 15: end for |
| // Centralized Training with Decoupled critics |
| 16: for training step = 1, 2, ... do |
| 17: Sample a batch of trajectories from the buffer |
| 18: for each agent in parallel do |
| 19: // critic Update |
| 20: Get global states from the batch |
| 21: Calculate TD-target: |
| 22: Update critic by minimizing the loss: |
| 23: // actor Update |
| 24: Get local observations from the batch |
| 25: Compute advantage estimates using GAE with critic |
| 26: Update actor by maximizing the PPO-Clip objective: |
| 27: |
| 28: end for |
| 29: end for |
| // Update Target Networks |
| 30: for each agent do |
| 31: |
| 32: end for |
| 33: end for |

The values of the network parameters in Algorithm 2 are shown in

Table A2. The network architectures for the actor and critic are depicted in

Figure 2 and

Figure 3, respectively.
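Steps 25 and 26 of Algorithm 2 rely on generalized advantage estimation (GAE) and the PPO-Clip surrogate. The following pure-Python sketch shows both computations for a single agent and a single trajectory. The function names and the default values of `gamma`, `lam`, and `clip_eps` are illustrative assumptions and are not the settings from Table A2; a practical implementation would operate on PyTorch tensors.

```python
def gae(rewards, values, gamma=0.99, lam=0.95):
    """Generalized advantage estimates for one trajectory.

    values must hold len(rewards) + 1 entries; the last one is the
    bootstrap value of the state reached after the final reward.
    """
    advantages = [0.0] * len(rewards)
    running = 0.0
    for t in reversed(range(len(rewards))):
        # One-step TD error at time t.
        delta = rewards[t] + gamma * values[t + 1] - values[t]
        # Exponentially weighted sum of current and future TD errors.
        running = delta + gamma * lam * running
        advantages[t] = running
    return advantages


def ppo_clip_term(ratio, advantage, clip_eps=0.2):
    """Per-sample PPO-Clip surrogate (to be maximized)."""
    clipped = min(max(ratio, 1.0 - clip_eps), 1.0 + clip_eps)
    return min(ratio * advantage, clipped * advantage)
```

In MAPPO-DC, `values` for agent i would come from that agent's own critic evaluated on the global state, so each actor is updated with advantages computed from its dedicated value function.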

The actor network employs a shared backbone followed by two independent heads for trajectory and power control; the backbone weights are shared by both heads. A Tanh activation function is used on the trajectory output to constrain the velocity, while a Softmax layer normalizes the logits for power allocation. In the input stage, the remaining energy, being a scalar, is expanded to an 8-dimensional vector to balance its feature scale relative to other input variables.

Specifically, the global state space is dominated by high-dimensional vectors representing UAV and user positions. Directly concatenating the scalar energy state would cause a dimension imbalance problem [15], where the critical energy constraints are prone to be submerged by high-dimensional location features during gradient backpropagation. To address this, we employ a dimension spread technique (also known as feature embedding) to map the scalar energy to an 8-dimensional vector. We chose this dimension based on the following two considerations:

Feature Scale Alignment: This value brings the energy feature representation to a comparable order of magnitude as the user state vectors (e.g., historical throughput), preventing any single feature group from dominating the network’s initial learning phase. This setting is consistent with the findings in [15], where such an expansion was shown to be essential for the agent to successfully learn energy-aware navigation tasks.

Convergence Stability: By increasing the dimensionality of the energy input, we effectively increase the number of synaptic weights connecting the energy state to the first hidden layer. This amplifies the gradient signal related to energy consumption, thereby avoiding convergence to local optima where agents maximize throughput but fail to satisfy flight endurance constraints.
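One simple way to realize such a dimension expansion is to normalize the scalar energy and repeat it. The sketch below takes this route purely for illustration: the tiling scheme, the normalization by `max_energy`, and both function names are assumptions, since the paper does not specify how the 8-dimensional vector is produced (a learned embedding layer would be another option).

```python
def expand_energy(energy, max_energy, dim=8):
    """Spread a scalar energy level over `dim` identical input features."""
    # Normalizing keeps the feature on a scale similar to other inputs.
    level = energy / max_energy
    return [level] * dim


def build_network_input(other_features, energy, max_energy, dim=8):
    """Concatenate the remaining features with the expanded energy."""
    return list(other_features) + expand_energy(energy, max_energy, dim)
```

With tiling, the energy value reaches the first hidden layer through `dim` weights per hidden unit instead of one, which matches the gradient-amplification argument made above.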

In contrast to the single critic in standard MAPPO, our MAPPO-DC algorithm, inspired by the structure of IPPO, assigns each agent a dedicated critic network with its own parameters. Figure 3 illustrates the architecture of one such critic. Unlike IPPO, each critic in MAPPO-DC receives the global state as input. Although all critics share the same global-state input, they learn personalized value functions through their unique interactions with their respective paired actors.

Specifically, each critic solves a regression problem. Its objective is to find the set of parameters that minimizes the expected prediction error for future cumulative rewards. The true value of the discounted return is only known at the end of an episode, so in practice we use the TD-target as a bootstrapped estimate of it (biased in general, but with lower variance than the full return). The gradient update for each critic therefore follows the gradient of this TD error, with the expectation taken over the state-action stream generated by the joint policy of the entire team.
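The critic regression can be sketched for one agent as follows. The squared-error loss, the default `gamma`, the `done` flag, and the function names are assumptions for illustration; Algorithm 2 indicates that the next-state value comes from a target critic, which is represented here simply as a number passed in by the caller.

```python
def td_target(reward_i, next_value, gamma=0.99, done=False):
    """Bootstrapped target: agent i's reward plus discounted next value."""
    # No bootstrapping past the end of an episode.
    return reward_i + (0.0 if done else gamma * next_value)


def critic_loss(predictions, targets):
    """Mean squared error between critic outputs and TD targets."""
    n = len(predictions)
    return sum((p - y) ** 2 for p, y in zip(predictions, targets)) / n
```

Because `reward_i` already contains agent i's individual reward terms, each critic regresses towards a different target even though all critics see the same global state.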

Although the input is the global state, the critic’s objective is not simply to learn the unconditional value of the state. Instead, it implicitly learns a conditional value function: given the state, what is the team’s expected future return assuming that agent i follows its current policy? This implicit conditioning is achieved through the statistical averaging effect of stochastic gradient descent.

We decompose the joint action into agent i’s own action and the joint action of all other agents. Both the reward and the state transition are functions of these two components. From the perspective of agent i’s critic, we obtain the following:

Agent i’s own action: This is generated by its paired actor i, and its behavior pattern is tightly coupled with the critic’s learning process through the advantage function.

Joint action of the other agents: This is generated by all other actors. Since the output of agent i’s critic does not directly influence the updates of other agents, their behavior can be treated as part of the environmental stochasticity from the perspective of this critic’s optimization process, introducing high-variance noise into its value estimates.

According to the law of total expectation, we can decompose the expected gradient update,

The update for agent i’s critic is driven by the advantage signal. From its point of view, the actions of other agents introduce high-variance noise into the global state observation, as these actions lack a direct causal link to agent i’s policy update. By performing stochastic gradient descent over a large number of samples, the critic learns to average out this noise and focus on the stable correlation between the behavior of its paired actor and the resulting reward.

This statistical decoupling enables each critic to function as a specialized value estimator. It effectively learns a projection from the global state to a subspace most relevant to its actor’s role, enabling precise and personalized credit assignment even within a centralized training framework. In contrast, standard MAPPO employs a single, centralized critic that regresses towards a global return shared by all agents. While this mechanism promotes cooperation, in loosely coupled tasks, it dilutes agent i’s local reward signal within the global average, leading to credit assignment ambiguity for individual contributions and potentially stifling the exploration of individually optimal behaviors. MAPPO-DC addresses this issue by equipping each agent with its own dedicated critic.

4. Simulation Results

To ensure fairness in all hyperparameter adjustments, baseline comparisons, and ablation studies, all experiments were conducted using the same random seed. In addition, a dedicated scenario library was established before training, consisting of a training scenario set and a testing scenario set. In the training set, only the initial positions of the UAVs and the users are fixed. In the test set, both the initial positions and the user trajectories are fixed. This design lets the training process explore as wide a range of strategies as possible, while ensuring fairness and reproducibility in the testing phase. The ratio of the training scenario set to the testing scenario set is 5:1.

In addition, in all MARL algorithms, the learning rates of the critic and actor networks decay linearly. To continuously monitor the performance trend of the model, the current model is evaluated on the entire testing scenario set every 100 training cycles. The average performance over all test scenarios is recorded as the performance metric of the current model. To ensure that the test results do not affect the training dynamics, the testing process is completely independent of the training process.
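A linear learning-rate decay of this kind can be written as a one-line interpolation. The sketch below assumes decay towards a final rate of zero over the full training budget; the function name, the final rate, and any initial rate passed in are hypothetical, as the paper does not state them in this section.

```python
def linear_decay_lr(initial_lr, episode, total_episodes, final_lr=0.0):
    """Linearly interpolate from initial_lr to final_lr over training."""
    # Clamp progress to [0, 1] so late calls do not overshoot final_lr.
    progress = min(max(episode / total_episodes, 0.0), 1.0)
    return initial_lr + progress * (final_lr - initial_lr)
```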

To ensure a fair comparison, all experiments were implemented using Python 3.8 and PyTorch 1.12 on a platform with an Intel i9-13900K CPU and a GeForce RTX 4080 GPU. All reinforcement learning algorithms were trained for a fixed budget of 100,000 episodes. For the MARL algorithms (IPPO, MAPPO, MAPPO-DC, MASAC, MADDPG), we maintained identical backbone network architectures (e.g., three hidden layers with 64 units each) and common hyperparameters, differing only in algorithm-specific parameters (e.g., entropy coefficients for MASAC). Additionally, non-learning baselines (greedy, APF, convex) were implemented using standard configurations from the literature to serve as rigorous benchmarks.

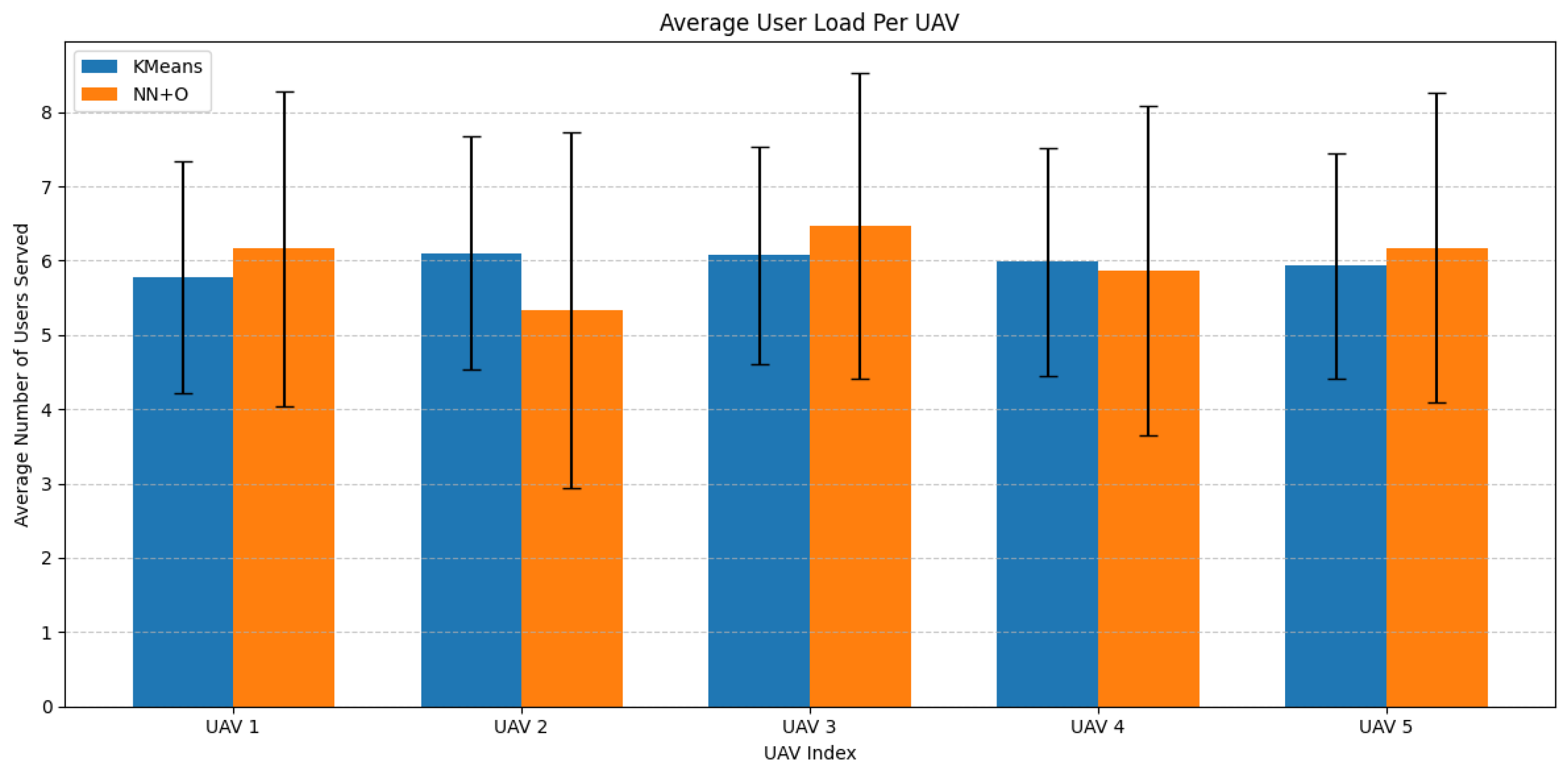

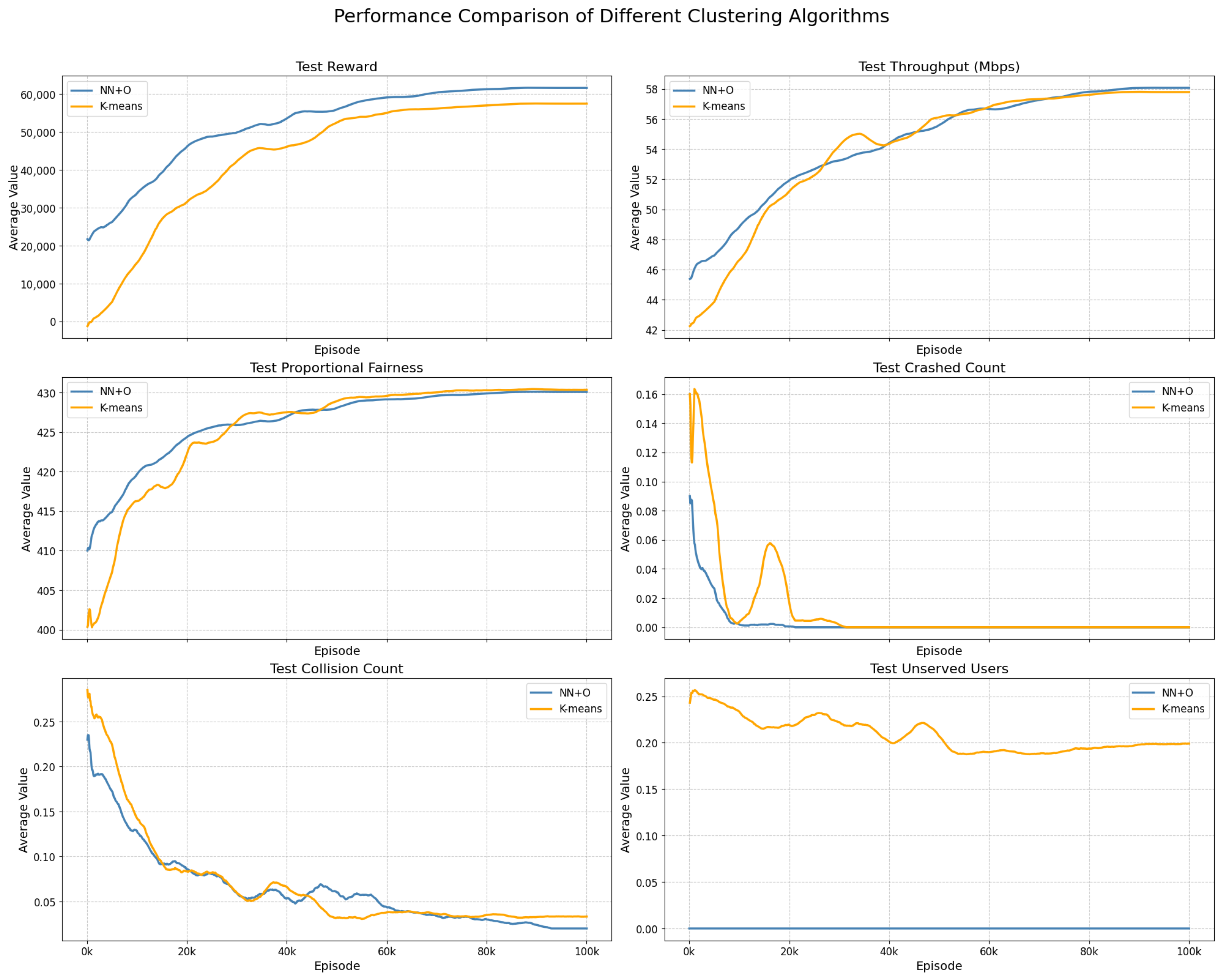

4.1. Comparative Study of Clustering Algorithms

We compared our proposed clustering method with the widely used K-means algorithm. Although K-means assigns each cluster a small number of users with little variation across clusters [

32], its objective function (minimizing intra-cluster variance) is purely geometric and independent of communication constraints. This may result in users being assigned to geometrically optimal but physically distant UAVs, leading to service interruptions. Our method aims to avoid this critical issue by prioritizing geographical proximity.

Figure 4 illustrates that K-means achieves a more balanced user load distribution.

Figure 5 demonstrates that in terms of individual user performance, the Nearest Neighbor Assignment + Smart Offloading scheme exhibits superior performance for each user, as users consistently experience optimal or suboptimal channel conditions.

Figure 6 shows that in terms of overall system performance, the two schemes are comparable. However, in terms of the presence of unserved users, the Nearest Neighbor Assignment + Smart Offloading scheme ensures that all users are served across all episodes and all time steps, provided that the number of crashed UAVs is less than two.

We trained the MAPPO-DC architecture combining nearest neighbor assignment and smart offloading under 5 different random seeds, and recorded the number of truncation operations mentioned in Algorithm 1 as well as the mean and variance of the other key indicators. The results are shown in

Table 2. Over the entire training run (100,000 episodes), there were at most 5 truncation operations, all of which occurred before the stable crash-zeroing episode, indicating that truncation is very rare. In addition, the variance of TP and PF across random seeds is very small, while the variance of the crash-zeroing episode is relatively large. The small variance of TP and PF arises because the performance limit that the model can reach is relatively high; in other words, the best solution the model finds in the current scenario is relatively fixed. The crash-zeroing policy, by contrast, is discovered in the early stage of training, when the model is still exploring randomly, so its timing varies more.

In summary, prioritizing the scenario requirements, we find that ensuring all users are served is preferred over strictly balancing the UAV load. Therefore, we select the Nearest Neighbor Assignment + Smart Offloading scheme as the final user clustering solution.

4.2. Clustering Frequency Experiment

The scenario studied involves mobile ground users that show high dynamism. Consequently, the clustering algorithm must dynamically update and iterate to ensure an effective follow-up service by the UAVs. This experiment seeks to determine the optimal dynamic clustering frequency.

In this experiment, the optimal clustering frequency is jointly determined by two parameters: the final test performance (average of the last 1000 episodes) and the episode count for stable crash zeroing (the episode after which the crash count consistently remains zero).
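The second criterion can be computed directly from the per-episode crash counts. The sketch below assumes that "stable crash zeroing" means the first episode of the final uninterrupted run of zero-crash episodes, reported with 1-based indexing; both the interpretation and the function name are assumptions.

```python
def stable_crash_zeroing_episode(crash_counts):
    """First (1-based) episode from which every crash count is zero.

    Returns None if the run never settles at zero crashes.
    """
    last_nonzero = -1
    for idx, count in enumerate(crash_counts):
        if count != 0:
            last_nonzero = idx
    if last_nonzero == len(crash_counts) - 1:
        # Either the log is empty or the final episode still has a crash.
        return None
    return last_nonzero + 2
```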

From the throughput (TP) and PF curves in

Figure 7 and the numerical results in

Table 3, we observe that a clustering frequency (CF) of 10 performs relatively poorly early in training compared to other frequencies. However, during the convergence stage, CF = 10 yields the highest TP and PF. In contrast, the Reward curve in

Figure 7 and the Pareto frontier scatter plot in

Figure 8 indicate that CF = 10 requires the longest time for convergence and the slowest stable crash zeroing among the four tested frequencies. The lower clustering frequency (less frequent user cluster reassignment) stabilizes the user groups faced by the agents during training, allowing them to learn superior long-term strategies. However, since the environment changes more slowly, it takes longer to accumulate experience and fully explore the strategy space, resulting in slower convergence.

While CF = 10 exhibits the slowest convergence speed compared to higher frequencies, it ultimately achieves the highest converged TP and PF scores. This presents a trade-off between training efficiency and final inference performance. From a practical deployment perspective, model training is a one-time offline cost, whereas the converged policy’s performance dictates the system’s long-term utility during online execution. Since CF = 10 provides a more stable interaction window that allows agents to learn superior long-term strategies—albeit requiring more episodes to generalize across varying topologies—we prioritize the final performance gains over training speed. Therefore, we select a clustering frequency of 10 as the final parameter setting.

4.3. Comparison with Non-Learning Algorithms

We selected the greedy algorithm [

11,

33] as the primary non-learning baseline for comparison. This algorithm is a typical method that maximizes the instantaneous reward currently available. This comparison is intended to demonstrate the advantage of learning-based algorithms over non-learning ones under energy-constrained conditions.

Since the Greedy algorithm is a non-learning method, it does not undergo a learning and convergence process. Therefore, we compare its average performance across 200 test scenarios. For MAPPO-DC, we load the converged model and evaluate its performance on the same 200 test scenarios. The “average crashed” metric here refers to the average number of crashed UAVs in the test scenarios.

It can be seen from

Table 4 that our algorithm demonstrates a 5.826% improvement in TP, a 16.7 point increase in PF, and a 22.72% improvement in the Jain’s fairness index compared to the Greedy algorithm. Furthermore, as depicted in the performance curves from our experiments, the average crash count for MAPPO-DC decreases from an initial value of 0.26 to a stable convergence at 0 after approximately 20,000 training epochs. In contrast, the Greedy algorithm, which focuses on maximizing instantaneous rewards (where the position reward has the highest weight), leads to frequent and drastic maneuvers that rapidly deplete energy, ultimately failing to sustain continuous service. Consequently, its crash count remains at a persistently high level.
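For reference, Jain’s fairness index of n user throughputs is the squared sum divided by n times the sum of squares; it equals 1 for a perfectly even allocation and 1/n when a single user receives everything. A minimal sketch follows; the function name and the convention of returning 0 for an all-zero input are assumptions.

```python
def jain_index(throughputs):
    """Jain's fairness index: (sum x)^2 / (n * sum x^2)."""
    n = len(throughputs)
    total = sum(throughputs)
    sum_sq = sum(x * x for x in throughputs)
    if n == 0 or sum_sq == 0:
        # Undefined for empty or all-zero input; 0.0 is used as a convention.
        return 0.0
    return total * total / (n * sum_sq)
```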

In conclusion, this experiment validates the need to use learning-based algorithms to effectively manage energy consumption in energy-constrained scenarios.

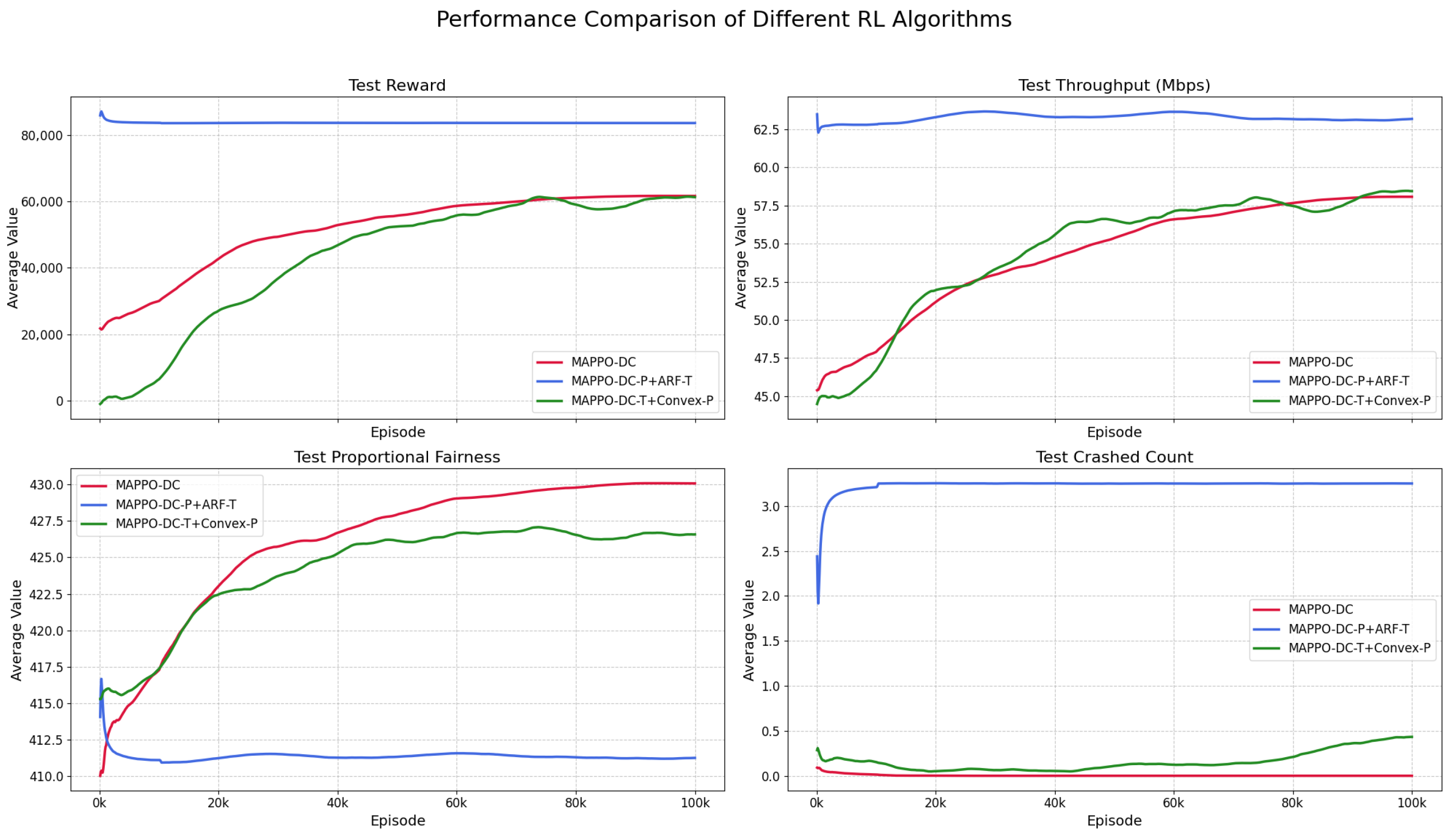

4.4. Comparison with Decoupled Optimization Methods

In the decoupled optimization experiment, we compare our method with two common approaches from existing research: trajectory optimization based on the artificial potential field (APF) method [

34,

35] and power optimization using a convex optimizer [

8]. We denote the scheme combining MAPPO-DC for power optimization and APF for trajectory optimization as MAPPO-DC-P+APF-T. The scheme combining MAPPO-DC for trajectory optimization and convex optimization for power control is denoted as MAPPO-DC-T+Convex-P.

Figure 9 reveals that the advantage of joint optimization with MAPPO-DC over decoupled methods is substantial, primarily because the joint optimization scheme enables global observation and effective credit assignment. The results show that the MAPPO-DC joint optimization scheme outperforms the decoupled schemes in both PF and crash avoidance.

In the MAPPO-DC-P+APF-T scheme, the UAV trajectories are controlled by the non-learning APF algorithm. Since the APF method cannot adjust the trajectories based on the UAVs’ remaining energy, the crash count fails to decrease. Although it achieves a high TP, its PF remains consistently low.

In the MAPPO-DC-T+Convex-P scheme, although MAPPO-DC can reduce the crash count to a low level by controlling flight energy consumption—which constitutes a major part of the total energy—it lacks control over communication power. While communication energy consumption is relatively small, the lack of direct control over this component prevents the critic from accurately assessing the risks associated with total energy expenditure, leading to a credit assignment failure. As shown in

Figure 9, around the 19 k training epoch mark, the crash curve for the MAPPO-DC-T+Convex-P scheme begins to rise instead of fall. This occurs because the policy, after 19 k epochs, starts to push the energy limits in pursuit of higher PF, causing a temporary resurgence in crashes until the final policy stabilizes.

In summary, this experiment demonstrates that in energy-constrained scenarios, a learning algorithm must have control over the entire energy consumption profile to effectively mitigate the risk of UAV crashes and ensure continuous service to all users within the specified mission time.

4.5. Comparison with Other MARL Algorithms

The MAPPO-DC algorithm we propose is designed by integrating the multi-critic structure of IPPO [

19,

20] into the MAPPO framework. This design addresses the problems that arise in loosely coupled tasks by balancing coordination among agents against the performance of each individual agent. Therefore, in this experiment, we mainly compared MAPPO-DC with its base algorithms, MAPPO and IPPO. In addition, we also compared against the MASAC and MADDPG algorithms. Although we conducted extensive parameter tuning experiments, the MADDPG algorithm experienced gradient explosion after about 5500 episodes of training. Therefore, we did not add the training curve of MADDPG to

Figure 10.

From the training time of each algorithm in

Table 5, it can be seen that, compared to the on-policy IPPO, MAPPO, and MAPPO-DC algorithms, the off-policy MASAC and MADDPG algorithms require longer training time because they maintain a larger replay buffer during training, and MASAC requires additional computation time because it includes a double-Q network to mitigate overestimation bias. In addition, because gradient explosion occurred at about 5.5 k episodes of MADDPG training, we estimated its training time for 100 k episodes from the time taken for the first 5.5 k episodes.

As shown in

Figure 9 and

Figure 10, both the MAPPO-DC-T+Convex-P scheme in

Section 4.4 and the IPPO experiment in this section suffer from credit assignment failure, which introduces high-variance noise into the decision-making process. Specifically, for agent

i in IPPO, its critic only receives observations from its corresponding actor. Consequently, when the actions of other agents result in negative feedback for agent

i, it can neither identify the source of this negative feedback nor devise a solution, a phenomenon known as credit assignment failure. In the MAPPO-DC-T+Convex-P architecture, MAPPO-DC treats the uncontrollable communication power as noise from environmental non-stationarity. Similarly, in the IPPO architecture, each agent

i treats all other agents

as noise from environmental non-stationarity. After about 21,000 training epochs, IPPO’s crash curve also begins to rise, which, while increasing TP, leads to a decrease in PF. At this stage, IPPO treats crashes as a sacrificial parameter in exchange for higher TP.

MADDPG failed to converge due to severe overestimation bias and the limitations of deterministic policies in stochastic environments, leading to gradient explosion early in training. Meanwhile, MASAC suffered from initial overfitting followed by a collapse into sub-optimal local safety policies. The failure of MADDPG and the instability of MASAC highlight a critical theoretical insight: in highly dynamic scenarios with random user mobility, off-policy methods (MASAC, MADDPG) significantly underperform on-policy methods (IPPO, MAPPO, MAPPO-DC). This is primarily because the historical experiences stored in the replay buffer become obsolete rapidly due to environmental non-stationarity (distributional shift), causing the critic to learn from inaccurate state distributions. In contrast, on-policy methods, which optimize based on fresh samples from the current policy, demonstrate superior adaptability. Consequently, MADDPG is excluded from

Figure 10, and a detailed analysis of both algorithms is provided in

Appendix A.

In scenarios where strong agent coordination is not required, MAPPO suffers from credit assignment ambiguity. That is, when agents

i and

j execute their respective actions and the critic receives positive feedback, it cannot precisely determine whether the positive feedback originated from agent

i or agent

j. As seen in the comparison between the MAPPO-DC and MAPPO curves in

Figure 10, their reward, TP, collision, and out-of-bounds curves are nearly identical. However, the crash curve for MAPPO-DC decreases and converges to zero much faster than that of MAPPO. This indicates that the decoupled critics provide a clearer and more direct penalty signal for unsafe actions, enabling the agents to learn safe operational boundaries more quickly. The crash and PF curves further reveal that by rapidly pruning the vast unsafe regions of the state-action space, MAPPO-DC allows agents to focus their exploration on promising areas for performance optimization. This leads to the initial faster improvement in PF seen in

Figure 10. Furthermore, MAPPO-DC achieves a higher final PF than MAPPO, confirming that the decoupled critic design successfully achieves our objective of maximizing individual agent performance while maintaining necessary coordination.

To quantitatively evaluate the efficacy of credit assignment mechanisms, we introduce the Variance of Advantage Estimates as a metric. In MARL, high advantage variance typically indicates ambiguous credit assignment, where an agent’s value estimation is severely perturbed by the non-stationary behaviors of others (noise). Conversely, a lower variance implies that the critic has successfully isolated the agent’s specific contribution from the environmental noise, leading to more stable and precise policy updates.
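The metric can be computed from logged advantage estimates with the standard library alone. In the sketch below, the use of the population variance per batch and the summary as mean and standard deviation across batches are assumptions about how the metric is aggregated; the function names are hypothetical.

```python
from statistics import mean, pstdev, pvariance


def advantage_variance(advantages):
    """Population variance of one batch of advantage estimates."""
    return pvariance(advantages)


def summarize_advantage_variance(batches):
    """Mean and standard deviation of the per-batch advantage variance."""
    variances = [advantage_variance(batch) for batch in batches]
    return mean(variances), pstdev(variances)
```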

As can be seen from

Figure 11, the mean and standard deviation of the advantage variance of MAPPO-DC are the lowest, which confirms that the decoupled critic architecture can effectively isolate the specific contributions of each agent in loosely coupled scenarios, reducing the noise introduced by the dynamic behavior of other agents. The advantage variance of MAPPO is higher than that of MAPPO-DC, indicating that a centralized critic introduces coupling noise when dealing with non-stationarity; that is, the value estimation of an agent is sensitive to the action fluctuations of other agents. IPPO exhibits relatively low advantage variance, but its error bars show significant dispersion. This indicates that although its agents often adopt strategies that ignore interactions (resulting in low variance in steady states), they exhibit severe instability when inevitable conflicts occur, as evidenced by the wide error bars. MASAC exhibits the highest advantage variance because it is a maximum entropy algorithm designed to encourage exploration; compared to trust region-based methods such as PPO, its policy randomness and the off-policy nature of its value estimation introduce higher variance in advantage computation.

In conclusion, the simulation results validate our core hypothesis: the decoupled critic mechanism in MAPPO-DC effectively addresses both the credit assignment failure caused by high-variance noise in IPPO and the credit assignment ambiguity of the centralized critic in MAPPO, which suppresses individual performance. The MAPPO-DC algorithm achieves a more efficient and safer learning process without affecting the necessary coordination of multi-agent tasks. In addition, we compared the experimental results of MASAC and MADDPG and found that the off-policy algorithms (MASAC, MADDPG) performed worse than the on-policy algorithms (MAPPO-DC, MAPPO, IPPO) in highly dynamic random environments.

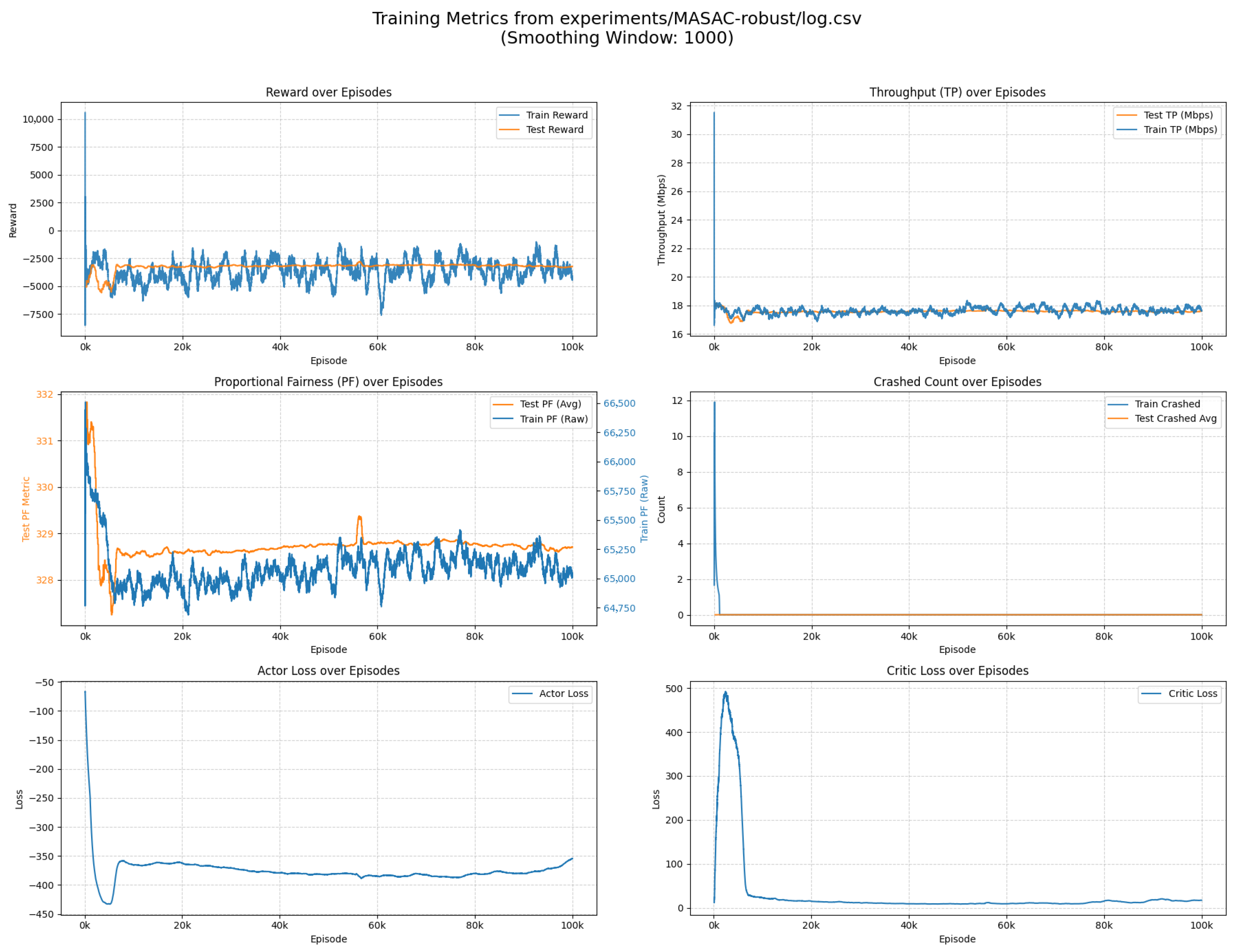

4.6. Model Robustness Experiment

To validate the robustness of the proposed algorithm under severe uncertainty and explore its applicability boundaries, we conducted additional experiments with enhanced stochasticity. Note that MADDPG is excluded from this analysis as it failed to converge even in the baseline scenario (

Section 4.5); evaluating it in this more complex environment would yield no additional analytical value. We introduced the following three major environmental stressors:

Co-channel Interference: Switching from OFDMA to a full frequency reuse scheme, where all UAVs share the entire spectrum, thereby introducing strong inter-UAV interference.

Heterogeneous Bandwidth: Replacing the equal bandwidth division with an inverse-distance-weighted allocation scheme (an edge-user protection strategy) to simulate uneven resource distribution.

Energy Consumption Noise: Adding Gaussian perturbations to the energy consumption model to mimic unmodeled external disturbances (e.g., wind gusts).
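Two of these stressors are easy to illustrate in isolation. The sketch below computes a user's SINR when every other UAV transmits on the same band, and perturbs a nominal energy consumption with zero-mean Gaussian noise. The function names, the use of linear-scale received powers, and the clipping of the perturbed energy at zero are assumptions and are not taken from the paper's simulation code.

```python
import random


def sinr_full_reuse(rx_powers, serving_idx, noise_power):
    """SINR at a user when all UAVs share the whole spectrum.

    rx_powers[j] is the power received from UAV j (linear scale).
    """
    signal = rx_powers[serving_idx]
    # Every non-serving UAV contributes co-channel interference.
    interference = sum(rx_powers) - signal
    return signal / (interference + noise_power)


def noisy_energy(nominal_energy, sigma, rng=random):
    """Nominal per-step energy use plus zero-mean Gaussian disturbance."""
    # Clipping at zero (an assumption) keeps consumption non-negative.
    return max(0.0, nominal_energy + rng.gauss(0.0, sigma))
```

With a single UAV the interference term vanishes and the expression reduces to the SNR, which is the orthogonal-access situation the baseline scenario approximates.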

As illustrated in

Figure 12, despite the increased environmental uncertainty, both MAPPO and MAPPO-DC successfully converged, demonstrating inherent robustness. The converged system throughput is lower compared to the interference-free scenario (

Section 4.5). From the user’s perspective, although full frequency reuse increases the available bandwidth per user, the SINR degrades significantly due to severe co-channel interference. This is particularly detrimental to users at the cluster edges, who suffer from high interference power from neighboring UAVs, leading to a net reduction in TP and PF.

A critical observation from the crash curves (comparing

Figure 10 and

Figure 12) is that with energy noise, the number of episodes required for crash zeroing increased by approximately 15 k. This indicates the model needs more time to learn a “safety margin”—effectively reserving extra battery to buffer against

noise fluctuations. Comparing this to the MAPPO-DC-T+Convex-P scheme (

Section 4.4) reveals a key insight: In this experiment, the noise follows a statistical distribution, which the critic network can approximate, allowing the actor to learn a robust margin. In contrast, the convex optimizer in

Section 4.4 behaves as a non-stationary or adversarial “black box”—slight position changes cause drastic, non-linear jumps in power consumption. This structural unpredictability is harder to adapt to than statistical noise, explaining why the hybrid convex scheme failed to eliminate crashes completely, whereas the end-to-end MAPPO-DC succeeded here.

The trends for MASAC and IPPO remain consistent with

Section 4.5. MASAC exhibits instability (overfitting then local optima), and IPPO sacrifices safety (high crashes) for short-term reward gains.

In this strongly coupled scenario, MAPPO and MAPPO-DC achieved similar converged performance (MAPPO-DC: TP ≈ 23.80 Mbps, PF ≈ 357.61; MAPPO: TP ≈ 23.98 Mbps, PF ≈ 356.34). This similarity arises because both algorithms utilize global states for value estimation, enabling global coordination. However, MAPPO-DC achieved crash zeroing significantly faster than MAPPO. This confirms that even under strong coupling, the decoupled critic architecture provides clearer credit assignment for individual safety constraints, accelerating the learning of safe flight policies.

Figure 13 presents the Advantage Variance in the strongly coupled scenario. Compared to the loosely coupled scenario (

Figure 11), the advantage variance for all algorithms increased. This confirms that co-channel interference elevates the coupling strength, making the system performance more dependent on complex multi-agent coordination rather than individual actions, thereby blurring the credit assignment. IPPO exhibits the lowest variance. However, this does not indicate superior credit assignment. Instead, it reflects a trivial solution: to mitigate interference penalties without coordination capabilities, IPPO agents learned a “spatial repulsion” strategy—staying far apart to minimize interaction. This artificially reduces the variance of interaction terms. Consistent with previous results, MASAC shows the highest variance, confirming its poor robustness against uncertainty.

Unlike the loosely coupled case, MAPPO-DC shows slightly higher variance than MAPPO here. This reveals the following trade-off of decoupling:

Smoothing Effect of Centralization: MAPPO’s centralized critic predicts a unified team reward, effectively averaging out individual fluctuations caused by intense interference, resulting in a statistically smoother estimate.

Challenge of Decoupling: MAPPO-DC attempts to isolate individual contributions from this highly entangled signal. In strong coupling, this “forced decoupling” is computationally challenging and more sensitive to interference noise, leading to slightly higher variance.

This explains why MAPPO-DC’s TP is marginally lower (due to variance-induced gradient noise), while its safety learning remains superior (as safety constraints remain largely local).
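The smoothing effect described above can be illustrated with a purely statistical toy example, which is not a reproduction of the paper's experiments. The sketch assumes each agent's learning signal is independent zero-mean Gaussian noise and compares the average per-agent variance with the variance of the team-averaged signal. All names and parameter values are hypothetical.

```python
import random
from statistics import pvariance


def simulate_signals(num_agents=4, num_steps=5000, noise_std=1.0, seed=0):
    """Draw independent noisy per-agent signals and their team average."""
    rng = random.Random(seed)
    per_agent = [
        [rng.gauss(0.0, noise_std) for _ in range(num_steps)]
        for _ in range(num_agents)
    ]
    # A centralized critic that targets a unified team signal effectively
    # sees the mean over agents at every step.
    team = [
        sum(agent[t] for agent in per_agent) / num_agents
        for t in range(num_steps)
    ]
    return per_agent, team


def compare_variances(per_agent, team):
    """Return (mean per-agent variance, variance of the team average)."""
    individual = sum(pvariance(agent) for agent in per_agent) / len(per_agent)
    return individual, pvariance(team)


if __name__ == "__main__":
    individual_var, team_var = compare_variances(*simulate_signals())
    print(f"per-agent: {individual_var:.3f}, team average: {team_var:.3f}")
```

For N independent signals of equal variance, averaging reduces the variance by roughly a factor of N. When fluctuations are correlated across agents, as under strong interference, the reduction is smaller, so the toy model overstates the gap that would be seen in practice.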

Comparing the loosely coupled (Section 4.5) and strongly coupled (Section 4.6) scenarios allows us to delineate the following clear operational boundaries:

MAPPO (Centralized Critic): Optimal for Interference-Limited Scenarios where maximizing system capacity via complex coordination is the sole priority. If the mission tolerates slower safety convergence to squeeze out the absolute maximum throughput, standard MAPPO is preferable.

MAPPO-DC (Decoupled Critic): Dominates in Safety-Critical and Rapid-Response Missions. It offers comprehensive advantages in loosely coupled tasks and remains highly competitive in strongly coupled settings. Its ability to swiftly learn collision-avoidance policies (fast Crash Zeroing) makes it the robust choice for real-world deployments, offering the best trade-off between optimality, safety, and training efficiency.

5. Conclusions

This paper addresses the challenge of energy-constrained and fairness-aware resource allocation within multi-UAV emergency communication networks. We proposed a hierarchical framework centered around MAPPO-DC, a novel MARL algorithm specifically designed for loosely coupled task structures, which arise from decoupling user association from trajectory and power control. The framework employs a lightweight clustering algorithm for efficient user assignment, while MAPPO-DC enables UAV agents to learn customized control policies. The algorithm successfully maximizes the system’s PF throughput while ensuring the necessary coordination for the multi-agent task, providing a resource allocation strategy that balances both efficiency and fairness for energy-limited emergency communication networks.

The simulation experiments support the following conclusions. First, the joint optimization of trajectory and power control is essential for reliable operation. When the two are decoupled, the uncontrolled subsystem acts as an irreducible “black box,” leading to credit assignment failures and persistent crashes. Second, our proposed MAPPO-DC algorithm significantly outperforms standard benchmarks such as IPPO and MAPPO. It avoids the non-stationarity pitfalls of independent learning (IPPO) while demonstrating superior sample efficiency and performance compared to the overly centralized value learning of standard MAPPO. This validates the effectiveness of specialized credit assignment for loosely coupled tasks. Finally, our robustness analysis across varying coupling levels reveals a clear operational boundary: MAPPO-DC demonstrates superior efficacy and safety convergence in loosely coupled environments, while retaining high adaptability and competitive performance even when subjected to strong co-channel interference. This supports MAPPO-DC as a robust, safety-aware solution suitable for diverse dynamic communication missions.

Future work can extend this research in several directions. The energy consumption model could be refined to incorporate more complex factors such as wind and acceleration. Additionally, the inclusion of Vehicle-to-Vehicle (V2V) communication could be explored as a means to introduce more explicit coordination, further enhancing cooperative efficiency.