UAV Small Target Detection Method Based on Frequency-Enhanced Multi-Scale Fusion Backbone

Highlights

- The RT-DETR backbone is redesigned using a frequency-enhanced multi-scale feature fusion module and a grouped multi-kernel interaction module.

- Shape-NWD is integrated into the loss computation to make the objective shape-aware.

- An end-to-end object detection model tailored for UAV aerial-view scenarios is proposed.

- Compared with RT-DETR, our method improves both AP and AP50 while largely preserving real-time performance.

Abstract

1. Introduction

- An enhanced end-to-end real-time target detector derived from RT-DETR is proposed, which outperforms the baseline RT-DETR in small target detection;

- A modified ResNet-based backbone is proposed to enable multi-scale feature fusion, mitigating the loss of small target detail in the downsampling stage;

- The shape and scale of bounding boxes are introduced into the IoU loss computation, since these factors also influence regression performance.
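To illustrate why overlap-based losses are fragile for small targets, the following minimal sketch compares plain IoU with the Normalized Wasserstein Distance (NWD) for the same 2-pixel localization error on a small and a large box. It is not the Shape-NWD loss used in this work, whose exact form is not reproduced here; the helper names `iou` and `nwd`, the example boxes, and the normalizing constant `c = 12.8` are assumptions chosen for illustration only.

```python
import math

def iou(a, b):
    """Intersection over union of two boxes given as (cx, cy, w, h)."""
    ax1, ax2 = a[0] - a[2] / 2, a[0] + a[2] / 2
    ay1, ay2 = a[1] - a[3] / 2, a[1] + a[3] / 2
    bx1, bx2 = b[0] - b[2] / 2, b[0] + b[2] / 2
    by1, by2 = b[1] - b[3] / 2, b[1] + b[3] / 2
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = a[2] * a[3] + b[2] * b[3] - inter
    return inter / union

def nwd(a, b, c=12.8):
    """NWD between two boxes modeled as 2D Gaussians.

    c is a dataset-dependent normalizer; 12.8 is an assumed value.
    """
    w2 = math.sqrt(
        (a[0] - b[0]) ** 2
        + (a[1] - b[1]) ** 2
        + ((a[2] - b[2]) / 2) ** 2
        + ((a[3] - b[3]) / 2) ** 2
    )
    return math.exp(-w2 / c)

# Same 2-pixel horizontal offset applied to a 6x6 and a 60x60 box.
small_gt, small_pred = (50.0, 50.0, 6.0, 6.0), (52.0, 50.0, 6.0, 6.0)
large_gt, large_pred = (50.0, 50.0, 60.0, 60.0), (52.0, 50.0, 60.0, 60.0)

iou_small, iou_large = iou(small_gt, small_pred), iou(large_gt, large_pred)
nwd_small, nwd_large = nwd(small_gt, small_pred), nwd(large_gt, large_pred)

print(f"IoU small={iou_small:.3f} large={iou_large:.3f}")
print(f"NWD small={nwd_small:.3f} large={nwd_large:.3f}")
```

In this toy case the 2-pixel error halves the IoU of the 6×6 box (0.5) while the 60×60 box stays above 0.93, whereas NWD assigns both pairs the same similarity. This scale sensitivity is the kind of behavior that motivates making the regression objective aware of box shape and scale.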

2. Materials and Methods

2.1. Frequency-Enhanced Multi-Scale Feature Fusion Module

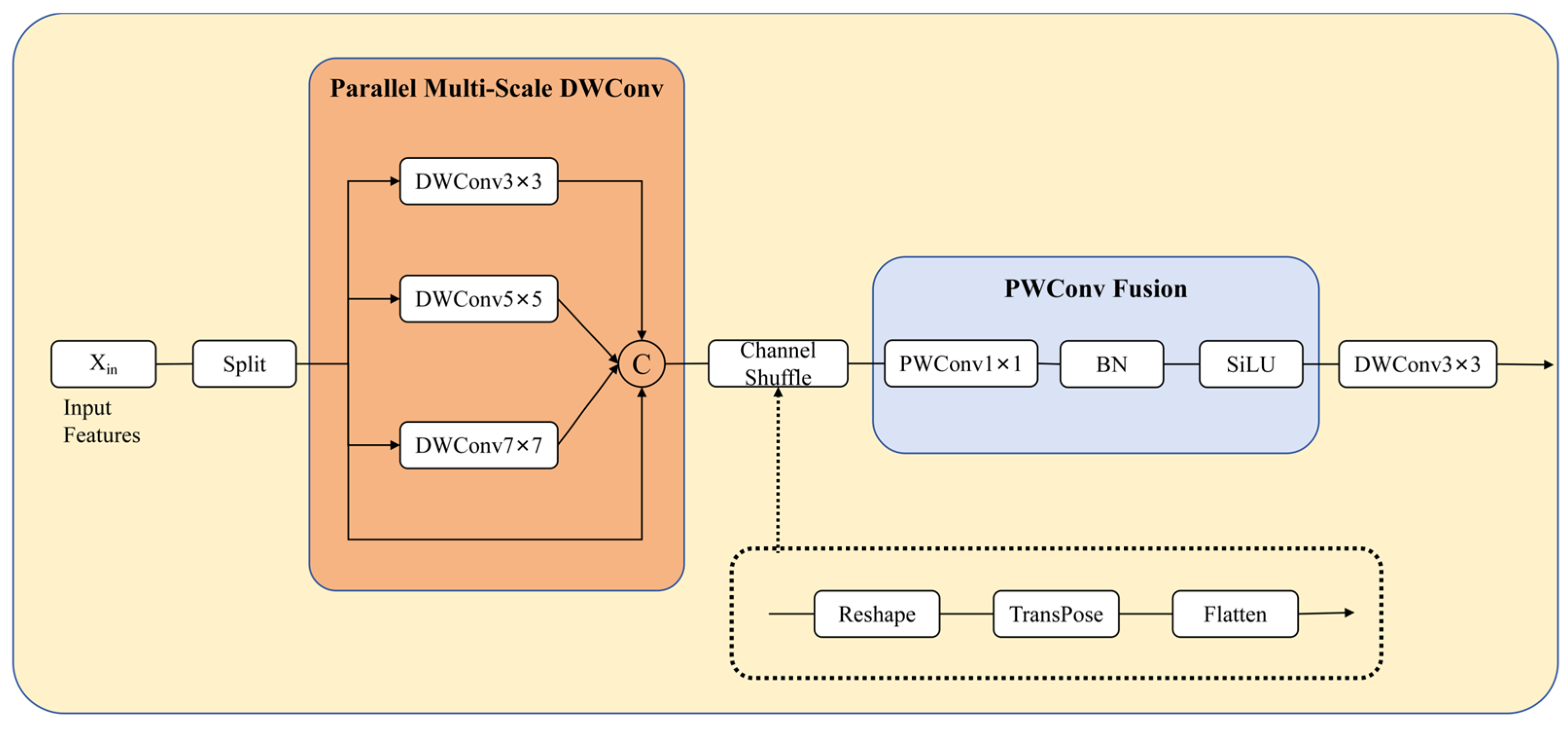

2.2. Grouped Multi-Kernel Interaction Module

2.3. Loss Function

3. Results

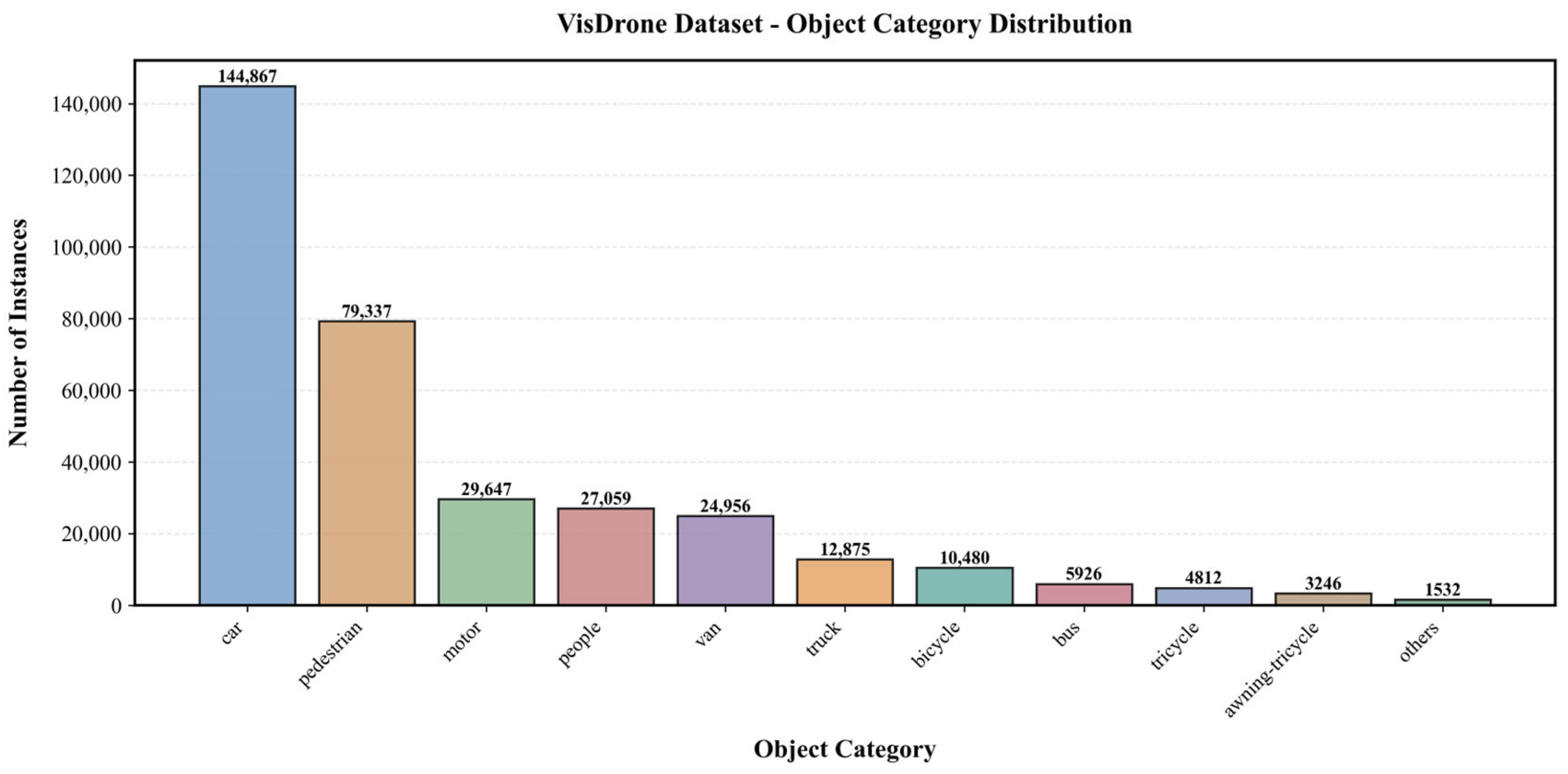

3.1. Datasets

3.2. Comparisons with Other Object Detection Networks

3.3. Ablation Experiment

4. Discussion

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| FFFE | Frequency-Enhanced Multi-Scale Feature Fusion Module |
| GMKI | Grouped Multi-Kernel Interaction Module |
| UAV | Unmanned Aerial Vehicle |
| GFLOPs | Giga Floating-Point Operations |

References

- Mittal, P.; Singh, R.; Sharma, A. Deep learning-based object detection in low-altitude UAV datasets: A survey. Image Vis. Comput. 2020, 104, 104046.

- Bochkovskiy, A.; Wang, C.Y.; Liao, H.Y.M. Yolov4: Optimal speed and accuracy of object detection. arXiv 2020, arXiv:2004.10934.

- Liu, S.; Qi, L.; Qin, H.; Shi, J.; Jia, J. Path aggregation network for instance segmentation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–22 June 2018.

- Wang, G.; Chen, Y.; An, P.; Hong, H.; Hu, J.; Huang, T. UAV-YOLOv8: A small-object-detection model based on improved YOLOv8 for UAV aerial photography scenarios. Sensors 2023, 23, 7190.

- Lou, H.; Duan, X.; Guo, J.; Liu, H.; Gu, J.; Bi, L.; Chen, H. DC-YOLOv8: Small-size object detection algorithm based on camera sensor. Electronics 2023, 12, 2323.

- Tong, K.; Wu, Y.; Zhou, F. Recent advances in small object detection based on deep learning: A review. Image Vis. Comput. 2020, 97, 103910.

- Zou, Z.; Chen, K.; Shi, Z.; Guo, Y.; Ye, J. Object detection in 20 years: A survey. Proc. IEEE 2023, 111, 257–276.

- Fadan, A.M.; Abro, G.E.M.; Khan, A.M. A UAV-Based Framework for Dynamic Traffic Surveillance in Dhahran, Saudi Arabia. In Proceedings of the 2025 IEEE 15th International Conference on Control System, Computing and Engineering (ICCSCE), Penang, Malaysia, 22–23 August 2025; IEEE: Piscataway, NJ, USA, 2025; pp. 114–118.

- Alqahtani, S.K.; Abro, G.E.M. Autonomous drone-based border surveillance using real-time object detection with Yolo. In Proceedings of the 2025 IEEE 15th Symposium on Computer Applications & Industrial Electronics (ISCAIE), Penang, Malaysia, 24–25 May 2025; IEEE: Piscataway, NJ, USA, 2025; pp. 564–569.

- Munir, A.; Siddiqui, A.J.; Hossain, M.S.; El-Maleh, A. YOLO-RAW: Advancing UAV Detection with Robustness to Adverse Weather Conditions. IEEE Trans. Intell. Transp. Syst. 2025, 26, 7857–7873.

- Munir, A.; Siddiqui, A.J.; Anwar, S.; El-Maleh, A.; Khan, A.H.; Rehman, A. Impact of adverse weather and image distortions on vision-based UAV detection: A performance evaluation of deep learning models. Drones 2024, 8, 638.

- Abro, G.E.M.; Horain, P.; Damurie, J. Image reconstruction & calibration strategies for Fourier ptychographic microscopy—A brief review. In Proceedings of the 2022 IEEE 5th International Symposium in Robotics and Manufacturing Automation (ROMA), Malacca, Malaysia, 6–8 August 2022; IEEE: Piscataway, NJ, USA, 2022; pp. 1–6.

- Zhu, X.; Su, W.; Lu, L.; Li, B.; Wang, X.; Dai, J. Deformable detr: Deformable transformers for end-to-end object detection. arXiv 2020, arXiv:2010.04159.

- Li, F.; Zhang, H.; Liu, S.; Guo, J.; Ni, L.M.; Zhang, L. Dn-detr: Accelerate detr training by introducing query denoising. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, New Orleans, LA, USA, 19–24 June 2022.

- Zhang, H.; Li, F.; Liu, S.; Zhang, L.; Su, H.; Zhu, J.; Ni, L.M.; Shum, H.Y. Dino: Detr with improved denoising anchor boxes for end-to-end object detection. arXiv 2022, arXiv:2203.03605.

- Carion, N.; Massa, F.; Synnaeve, G.; Usunier, N.; Kirillov, A.; Zagoruyko, S. End-to-end object detection with transformers. In European Conference on Computer Vision; Springer International Publishing: Cham, Switzerland, 2020; pp. 213–229.

- Zhao, Y.; Lv, W.; Xu, S.; Wei, J.; Wang, G.; Dang, Q.; Liu, Y.; Chen, J. Detrs beat yolos on real-time object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, WA, USA, 16–22 June 2024.

- Dai, X.; Chen, Y.; Yang, J.; Zhang, P.; Yuan, L.; Zhang, L. Dynamic detr: End-to-end object detection with dynamic attention. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Montreal, QC, Canada, 11–17 October 2021.

- Dai, Z.; Cai, B.; Lin, Y.; Chen, J. Up-detr: Unsupervised pre-training for object detection with transformers. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Nashville, TN, USA, 19–25 June 2021.

- Yao, Z.; Ai, J.; Li, B.; Zhang, C. Efficient detr: Improving end-to-end object detector with dense prior. arXiv 2021, arXiv:2104.01318.

- Wang, Y.; Zhang, X.; Yang, T.; Sun, J. Anchor detr: Query design for transformer-based detector. In Proceedings of the AAAI Conference on Artificial Intelligence, Virtual, 22 February–1 March 2022.

- Sohan, M.; Ram, T.S.; Reddy, C.V.R. A review on yolov8 and its advancements. In International Conference on Data Intelligence and Cognitive Informatics; Springer: Singapore, 2024; pp. 529–545.

- Wang, C.Y.; Yeh, I.H.; Mark Liao, H.Y. Yolov9: Learning what you want to learn using programmable gradient information. In European Conference on Computer Vision; Springer Nature: Cham, Switzerland, 2024; pp. 1–21.

- Khanam, R.; Hussain, M. Yolov11: An overview of the key architectural enhancements. arXiv 2024, arXiv:2410.17725.

- Liu, S.; Li, F.; Zhang, H.; Yang, X.; Qi, X.; Su, H.; Zhu, J.; Zhang, L. Dab-detr: Dynamic anchor boxes are better queries for detr. arXiv 2022, arXiv:2201.12329.

- Zeng, N.; Wu, P.; Wang, Z.; Li, H.; Liu, W.; Liu, X. A small-sized object detection oriented multi-scale feature fusion approach with application to defect detection. IEEE Trans. Instrum. Meas. 2022, 71, 3507014.

- Zheng, Z.; Wang, P.; Liu, W.; Li, J.; Ye, R.; Ren, D. Distance-IoU loss: Faster and better learning for bounding box regression. In Proceedings of the AAAI Conference on Artificial Intelligence, New York, NY, USA, 7–12 February 2020.

- Peng, Y.; Li, H.; Wu, P.; Zhang, Y.; Sun, X.; Wu, F. D-FINE: Redefine regression task in DETRs as fine-grained distribution refinement. arXiv 2024, arXiv:2410.13842.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 26 June–1 July 2016.

- Yu, J.; Jiang, Y.; Wang, Z.; Cao, Z.; Huang, T. Unitbox: An advanced object detection network. In Proceedings of the 24th ACM International Conference on Multimedia (MM’16), Amsterdam, The Netherlands, 15–19 October 2016.

- Zhang, H.; Xu, C.; Zhang, S. Inner-IoU: More effective intersection over union loss with auxiliary bounding box. arXiv 2023, arXiv:2311.02877.

- Zhang, H.; Zhang, S. Shape-iou: More accurate metric considering bounding box shape and scale. arXiv 2023, arXiv:2312.17663.

- Lin, T.Y.; Dollár, P.; Girshick, R.; He, K.; Hariharan, B.; Belongie, S. Feature pyramid networks for object detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017.

- Gao, S.H.; Cheng, M.M.; Zhao, K.; Zhang, X.Y.; Yang, M.H.; Torr, P. Res2net: A new multi-scale backbone architecture. In IEEE Transactions on Pattern Analysis and Machine Intelligence; IEEE Computer Society: Los Alamitos, CA, USA, 2019; Volume 43, pp. 652–662.

- Qiao, S.; Chen, L.C.; Yuille, A. Detectors: Detecting objects with recursive feature pyramid and switchable atrous convolution. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Nashville, TN, USA, 20–25 June 2021; pp. 10213–10224.

- Pang, J.; Chen, K.; Shi, J.; Feng, H.; Ouyang, W.; Lin, D. Libra r-cnn: Towards balanced learning for object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 16–20 June 2019.

- Zhao, Q.; Sheng, T.; Wang, Y.; Tang, Z.; Chen, Y.; Cai, L.; Ling, H. M2det: A single-shot object detector based on multi-level feature pyramid network. In Proceedings of the AAAI Conference on Artificial Intelligence, Honolulu, HI, USA, 27 January–1 February 2019.

- Ghiasi, G.; Lin, T.Y.; Le, Q.V. Nas-fpn: Learning scalable feature pyramid architecture for object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 16–20 June 2019.

- Tan, M.; Pang, R.; Le, Q.V. Efficientdet: Scalable and efficient object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Virtual, 13–19 June 2020.

- Simonyan, K.; Zisserman, A. Very deep convolutional networks for large-scale image recognition. arXiv 2014, arXiv:1409.1556.

- Ozge Unel, F.; Ozkalayci, B.O.; Cigla, C. The power of tiling for small object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops, Long Beach, CA, USA, 16–17 June 2019.

- Szegedy, C.; Liu, W.; Jia, Y.; Sermanet, P.; Reed, S.; Anguelov, D.; Erhan, D.; Vanhoucke, V.; Rabinovich, A. Going deeper with convolutions. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Boston, MA, USA, 7–12 June 2015.

- Targ, S.; Almeida, D.; Lyman, K. Resnet in resnet: Generalizing residual architectures. arXiv 2016, arXiv:1603.08029.

- Wu, Z.; Shen, C.; Van Den Hengel, A. Wider or deeper: Revisiting the resnet model for visual recognition. Pattern Recog. 2019, 90, 119–133.

- Woo, S.; Park, J.; Lee, J.Y.; Kweon, I.S. Cbam: Convolutional block attention module. In Proceedings of the European Conference on Computer Vision (ECCV), Munich, Germany, 8–14 September 2018.

- Hu, J.; Shen, L.; Sun, G. Squeeze-and-excitation networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–22 June 2018.

- Qin, Z.; Zhang, P.; Wu, F.; Li, X. Fcanet: Frequency channel attention networks. In Proceedings of the IEEE/CVF International Conference on Computer Vision, Montreal, QC, Canada, 11–17 October 2021.

- Rao, Y.; Zhao, W.; Zhu, Z.; Lu, J.; Zhou, J. Global filter networks for image classification. Adv. Neural Inf. Process. Syst. 2021, 34, 980–993.

- Xu, K.; Qin, M.; Sun, F.; Wang, Y.; Chen, Y.-K.; Ren, F. Learning in the frequency domain. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Virtual, 13–19 June 2020.

- Tang, S.; Zhang, S.; Fang, Y. HIC-YOLOv5: Improved YOLOv5 for small object detection. In Proceedings of the 2024 IEEE International Conference on Robotics and Automation (ICRA), Yokohama, Japan, 13–17 May 2024; IEEE: Piscataway, NJ, USA, 2024; pp. 6614–6619.

| Representative Approaches | Key Advantages | Key Limitations |
|---|---|---|
| YOLOv8 [22], YOLOv9 [23], YOLOv11 [24] | High inference speed; mature ecosystem. | Dependence on NMS creates deployment bottlenecks; performance drops significantly for dense tiny objects due to heuristic anchor matching. |
| DETR, Deformable DETR | Eliminates NMS; captures global context. | Slow convergence speed; extremely high computational complexity. |
| RT-DETR | Real-time speed with end-to-end architecture. | Feature degradation during deep downsampling causes loss of fine-grained details for small targets. |

| Model | Params (M) | GFLOPs | FPS | AP | AP50 |
|---|---|---|---|---|---|
| Real-time Object Detectors | | | | | |
| YOLOv8-M | 25.9 | 78.9 | 156 | 24.6 | 40.7 |
| YOLOv8-L | 43.7 | 165.2 | 79 | 26.1 | 42.7 |
| YOLOv9-S | 7.2 | 26.7 | 160 | 22.7 | 38.3 |
| YOLOv9-M | 20.1 | 76.8 | 110 | 25.2 | 42.0 |
| YOLOv10-M | 15.4 | 59.1 | 254 | 24.5 | 40.5 |
| YOLOv10-L | 24.4 | 120.3 | 163 | 26.3 | 43.1 |
| YOLOv11-S | 9.4 | 21.3 | 265 | 23.0 | 38.7 |
| YOLOv11-M | 20.0 | 67.7 | 162 | 25.9 | 43.1 |
| Object Detectors for UAV Imagery | | | | | |
| HIC-YOLOv5 [50] | 9.4 | 31.7 | 82 | 26.0 | 44.3 |
| End-to-end Object Detectors | | | | | |
| DETR | 60 | 187 | 28 | 24.1 | 40.1 |
| Deformable DETR | 40 | 173 | 19 | 27.1 | 42.2 |
| RT-DETR-R18 | 20 | 60 | 183 | 26.7 | 44.6 |
| RT-DETR-R50 | 42 | 136 | 89 | 28.4 | 47.0 |
| Our Model | | | | | |
| Model-R18 | 20.8 | 79 | 126 | 27.5 | 45.9 |
| Model-R50 | 42.9 | 172 | 69 | 29.4 | 47.9 |

| Model | Params (M) | GFLOPs | FPS | AP_S | AP_M | AP | AP50 |
|---|---|---|---|---|---|---|---|
| YOLOv11-S | 9.4 | 21.3 | 255 | 27.3 | 48.7 | 27.8 | 63.0 |
| HIC-YOLOv5 | 9.4 | 31.2 | 80 | 30.8 | 20.9 | 30.5 | 65.1 |
| RT-DETR-R18 | 20.0 | 57.3 | 184 | 35.8 | 64.8 | 36.3 | 72.6 |
| RT-DETR-R50 | 42.0 | 129.9 | 69 | 37.0 | 62.3 | 37.4 | 73.5 |
| Model-R18 | 20.8 | 79 | 127 | 36.2 | 64.2 | 36.9 | 73.1 |
| Model-R50 | 42.9 | 172 | 71 | 37.3 | 61.1 | 37.5 | 74.2 |

| Category | Representative Models | Fusion Strategy | AP | AP50 |
|---|---|---|---|---|
| General Real-time Detectors | YOLOv8 | FPN + PANet | 24.6/26.1 (+2.9/3.3) | 40.7/42.7 (+4.8/7.2) |
| General Real-time Detectors | YOLOv9 | FPN + PANet | 22.7/25.2 (+4.8/4.2) | 38.3/42.0 (+7.6/5.9) |
| General Real-time Detectors | YOLOv10 | FPN + PANet | 24.5/26.3 (+3.0/3.1) | 40.5/43.1 (+5.4/4.8) |
| General Real-time Detectors | YOLOv11 | FPN + PANet | 23.0/25.9 (+4.5/3.5) | 38.7/43.1 (+7.2/4.8) |
| UAV-Specialized Detectors | HIC-YOLOv5 [50] | CBAM/SE/Reweighting | 26.0 (+1.5) | 44.3 (+1.6) |
| End-to-end Object Detectors | RT-DETR | AIFI + CCFF | 26.7/28.4 (+0.8/1.0) | 44.6/47.0 (+1.3/0.9) |
| Ours | Model-R18/R50 | FFFE + GMKI | 27.5/29.4 | 45.9/47.9 |

| Baseline | SN | FFFE | GMKI | AP | AP50 |
|---|---|---|---|---|---|
| √ | | | | 26.7 | 44.6 |
| √ | √ | | | 26.8 | 44.8 |
| √ | √ | √ | | 27.0 | 45.4 |
| √ | √ | √ | √ | 27.5 | 45.9 |
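The incremental contribution of each component can be read off the ablation table by differencing consecutive rows. The short sketch below does this arithmetic on the reported AP and AP50 values; the step labels and the helper name `incremental_gains` are illustrative, and SN is taken here to denote the Shape-NWD loss term, which is an assumption based on the Highlights.

```python
# AP / AP50 values copied from the ablation table; labels are shorthand
# for the cumulative configuration of each row (SN assumed = Shape-NWD).
steps = [
    ("Baseline", 26.7, 44.6),
    ("+ SN", 26.8, 44.8),
    ("+ SN + FFFE", 27.0, 45.4),
    ("+ SN + FFFE + GMKI", 27.5, 45.9),
]

def incremental_gains(rows):
    """Return (label, delta_AP, delta_AP50) for each row vs. the previous one."""
    gains = []
    for (_, ap_prev, ap50_prev), (label, ap, ap50) in zip(rows, rows[1:]):
        gains.append((label, round(ap - ap_prev, 1), round(ap50 - ap50_prev, 1)))
    return gains

gains = incremental_gains(steps)
for label, d_ap, d_ap50 in gains:
    print(f"{label}: AP {d_ap:+.1f}, AP50 {d_ap50:+.1f}")

# Total gain of the full model over the baseline.
total_ap = round(steps[-1][1] - steps[0][1], 1)
total_ap50 = round(steps[-1][2] - steps[0][2], 1)
print(f"Total: AP {total_ap:+.1f}, AP50 {total_ap50:+.1f}")
```

Read this way, the full configuration gains 0.8 AP and 1.3 AP50 over the baseline, with the GMKI step accounting for the largest single AP increment (0.5).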

| Categories | Recall | AP50 | Categories | Recall | AP50 |
|---|---|---|---|---|---|
| pedestrian | 48.1 | 53.4 | truck | 34.5 | 36.4 |
| people | 43.4 | 45.1 | tricycle | 32.3 | 32.4 |
| bicycle | 19.9 | 18.2 | awning-tricycle | 18.8 | 18.3 |
| car | 84.1 | 85.9 | bus | 60.2 | 62.5 |
| van | 46.4 | 50.5 | motor | 56.6 | 56.8 |

| Model | GFLOPs | FPS |
|---|---|---|
| RT-DETR-R18 | 60 | 183 |
| RT-DETR-R50 | 136 | 89 |
| Model-R18 | 79 | 126 |
| Model-R50 | 172 | 69 |
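To put the compute and speed figures in relative terms, the sketch below computes the percentage change in GFLOPs and FPS of each proposed variant against its RT-DETR counterpart. The numbers are taken from the table above; the dictionary layout and the helper name `rel_change` are assumptions made for illustration.

```python
# (GFLOPs, FPS) pairs copied from the complexity table above.
cost = {
    "R18": {"baseline": (60, 183), "ours": (79, 126)},
    "R50": {"baseline": (136, 89), "ours": (172, 69)},
}

def rel_change(new, old):
    """Percentage change of `new` relative to `old`."""
    return 100.0 * (new - old) / old

summary = {}
for backbone, entry in cost.items():
    base_gflops, base_fps = entry["baseline"]
    ours_gflops, ours_fps = entry["ours"]
    summary[backbone] = (
        rel_change(ours_gflops, base_gflops),
        rel_change(ours_fps, base_fps),
    )

for backbone, (d_gflops, d_fps) in summary.items():
    print(f"{backbone}: GFLOPs {d_gflops:+.1f}%, FPS {d_fps:+.1f}%")
```

On these figures, the R18 variant uses about 31.7% more GFLOPs and runs about 31.1% slower, while the R50 variant uses about 26.5% more GFLOPs and runs about 22.5% slower, which quantifies the accuracy-speed trade-off behind the reported AP gains.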

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Zhong, Y.; Zhao, D.; Han, Y.; Wang, Z. UAV Small Target Detection Method Based on Frequency-Enhanced Multi-Scale Fusion Backbone. Drones 2026, 10, 106. https://doi.org/10.3390/drones10020106