1. Introduction

Nonlinear problems are pervasive in scientific and engineering computations; they encompass classical nonlinear finite element problems [1] and nonlinear programming problems in economics [2], as well as fundamental problems in physics, chemistry, and fluid mechanics [3,4,5]. Consequently, solving nonlinear systems of the form

$$F(x) = 0$$

has emerged as a pivotal task in scientific computing. However, owing to the intricacy inherent in nonlinear systems, it is often difficult to obtain analytical solutions directly, which makes numerical methods the key to solving such problems.

Iterative methods are the most frequently employed numerical approach for solving nonlinear systems. Newton’s method is the most classical of these and has the following form [6]:

$$x^{(k+1)} = x^{(k)} - [F'(x^{(k)})]^{-1} F(x^{(k)}), \quad k = 0, 1, 2, \ldots,$$

where $F'(x^{(k)})$ is the Jacobian matrix of the function $F$ evaluated at the iterate of step k, and $[F'(x^{(k)})]^{-1}$ is the inverse of $F'(x^{(k)})$. Newton’s method can be used to find approximate solutions of the nonlinear system $F(x) = 0$ in both real and complex fields. When the initial value $x^{(0)}$ is sufficiently close to a root of the function $F$, Newton’s method exhibits a convergence order of at least 2. However, Newton’s method is a single-point iterative approach. To circumvent the sluggish convergence associated with single-point iterative methods when tackling complex nonlinear problems, researchers have shifted their focus toward multi-point iterative methods, which are characterized by enhanced computational efficiency and higher convergence orders.
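The Newton iteration described above can be sketched in a few lines. The following is a minimal illustration only, assuming a small hypothetical test system (x² + y² = 4, xy = 1) and a hand-written Gaussian elimination helper `solve_linear`; neither appears in the paper. Each step solves the linear system J(x) s = -F(x) rather than forming the inverse Jacobian explicitly.

```python
def solve_linear(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):  # back substitution
        s = sum(M[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (M[i][n] - s) / M[i][i]
    return x


def newton_system(F, J, x0, tol=1e-12, max_iter=50):
    """Newton's method: x <- x + s, where J(x) s = -F(x)."""
    x = list(x0)
    for k in range(1, max_iter + 1):
        step = solve_linear(J(x), [-v for v in F(x)])
        x = [xi + si for xi, si in zip(x, step)]
        if max(abs(s) for s in step) < tol:
            return x, k
    return x, max_iter


# Hypothetical test system (an assumption, not from the paper).
def F(v):
    x, y = v
    return [x * x + y * y - 4.0, x * y - 1.0]


def J(v):
    x, y = v
    return [[2.0 * x, 2.0 * y], [y, x]]


root, iters = newton_system(F, J, [2.0, 0.5])
print(root, iters)
```

Solving the linear system at each step is the usual design choice, since it is cheaper and numerically safer than computing the inverse Jacobian.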

The concept of multi-point iterative methods was first introduced by Traub in 1964 [7]. Since then, numerous scholars have dedicated their efforts to formulating iterative methods of varying orders and conducting convergence analyses. Cordero et al. introduced a class of optimal fourth-order iterative methods with weight functions and conducted a dynamic analysis of one of the iterative methods [8]. Argyros et al. presented the following sixth-order iterative method [9]:

Recognizing that the format of Iterative Method (1) is complex, this paper introduces a Newton-type iterative method with two free parameters. The format of the proposed method is as follows:

where

and

. When

and

, Iterative Method (

2) can reach the sixth order. This will be proved in Theorem 1.

To provide a more intuitive demonstration of the convergence of the proposed iterative method, fractal graphs were generated for various nonlinear functions. This approach has been employed in several studies. For instance, leveraging fractal theory, Sabban scrutinized the stability of the proposed iterative method through dynamic plane visualization [10]. Additionally, Wang et al. explored the parameters that ensured the stability of the iterative method by studying its fractal graph with varying parameters [11]. Wang et al. utilized dynamic plane plots of the conformable vector obtained with Traub’s method [12].

The contributions of this paper are summarized as follows: (1) A sixth-order Newton-type iterative method (30) for solving nonlinear systems is proposed, accompanied by a proof of its convergence. (2) The local convergence of the proposed sixth-order iterative method (30) in Banach space is discussed, covering scenarios in which the equations of a nonlinear system may lack higher-order derivatives. (3) The advantages and applicability of the new iterative method (30) are illustrated by comparing its convergence behavior and average number of iterations with those of other iterative methods of the same order, using fractal graphs and numerical experiments.

The rest of this paper is arranged as follows. In Section 2, we outline the preparations for analyzing the convergence of Iterative Method (30). In Section 3, the conditions that need to be satisfied for the convergence order of Iterative Method (1) to reach six are given, and the local convergence of Iterative Method (30) is established in Banach space by using the $\omega$-continuity condition on the first-order Fréchet derivative. The proposed analysis avoids requiring higher derivatives of the function and thereby extends the applicability of Iterative Method (30). In addition, we give a bound on the distance between the initial point and the exact solution that ensures the convergence of the iterative sequence and the uniqueness of the solution. In Section 4, we plot the fractals of Iterative Method (30) for nonlinear polynomials and compare its average number of iterations with that of other iterative methods of the same order. In Section 5, we employ Iterative Method (30) to solve nonlinear systems and the matrix sign function, and we apply the analysis of Section 3 to practical chemical problems to demonstrate its validity. Finally, we provide a concise summary and highlight our future research directions.

2. Preparation for Convergence Analysis

The convergence of the iterative method is proved in Theorem 1. The proof (see Section 3) relies on higher-order derivatives of the function, which restricts its applicability: if these derivatives do not exist, the convergence guarantee of Theorem 1 no longer applies. Additionally, in establishing the convergence of iterative sequences, it is customary to assume that the initial point

is sufficiently proximate to the exact solution

. However, exactly how close the initial point must be is not specified. To address this, numerous scholars have studied local convergence [13,14,15]. Similar to the “problem” functions in this literature, a function defined on a given domain is specified as follows:

The third derivative of this function is unbounded on its domain. Consequently, the convergence analysis of Theorem 1 cannot be applied to Iterative Method (2) for this equation. To address this limitation, a local convergence analysis was conducted that avoids reliance on higher derivatives, which broadens the applicability of Iterative Method (2).
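To make the obstruction concrete, the sketch below evaluates the third derivative of a motivating function that is commonly used in the local convergence literature. This function is an assumption, not necessarily the one intended in this paper: f(x) = x³ ln(x²) + x⁵ - x⁴ for x ≠ 0 and f(0) = 0, whose third derivative is f'''(x) = 6 ln(x²) + 60x² - 24x + 22. The values diverge to minus infinity as x approaches 0.

```python
import math


def f(x):
    """Assumed motivating function; f(0) is defined as 0."""
    return 0.0 if x == 0 else x**3 * math.log(x * x) + x**5 - x**4


def f3(x):
    """Third derivative of f for x != 0: 6 ln(x^2) + 60 x^2 - 24 x + 22."""
    return 6.0 * math.log(x * x) + 60.0 * x * x - 24.0 * x + 22.0


# Sample points approaching 0 from the right.
samples = [10.0 ** (-p) for p in (1, 3, 6, 12)]
values = [f3(x) for x in samples]

for x, v in zip(samples, values):
    print(f"x = {x:.0e}   f'''(x) = {v:.3f}")
```

The logarithmic term dominates near 0, so no bound on the third derivative holds on any interval containing the origin, even though f and its first derivative are well behaved there.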

We will discuss the local convergence of the proposed iterative method in Banach spaces. Let

be a continuously Fréchet differentiable operator, where

and

are both Banach spaces,

is an open set on

, and

is convex. First, we constructed the space in which the conditions exist. For any point

and a given distance

, let us say

where

is a bounded and linear operator. Before giving the local convergence theorem, we need to assume that the following non-decreasing continuous functions exist:

- On the interval , the function exists and satisfies condition .
- Let exist, where is the least positive solution satisfying .
- On the interval , the function exists and satisfies condition .
- On the interval , the function exists and satisfies condition .

Given the existence of these three functions, for the sake of simplifying the expression in the proof process, the following functions were constructed:

We then defined the following functions on interval

:

It is easy to show that

, and that, as

s approaches

,

approaches

. We know that

, the smallest zero of

, exists, and that

. This point can be proved by applying the mean value theorem. Let us say

, then for any

, we have

Under these assumptions, the local convergence proof of the proposed iterative method is presented in Theorem 2.
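As an illustration of how such a radius is obtained in practice, the sketch below computes the smallest positive zero of a function of the form g(s) - 1 by bisection. Everything specific here is an assumption made for the example: the majorant functions are taken to be linear, w0(s) = L0·s and w(s) = L·s with hypothetical constants L0 and L, and g1 is the bound that usually arises for a Newton-type first substep in analyses of this kind. The functions actually used for Iterative Method (30) are not reproduced here.

```python
def smallest_zero(h, lo, hi, tol=1e-14):
    """Bisection for an increasing function h with h(lo) < 0 < h(hi)."""
    assert h(lo) < 0.0 < h(hi)
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if h(mid) < 0.0:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


L0, L = 1.0, 2.0  # hypothetical Lipschitz-type constants (assumption)


def w0(s):
    return L0 * s


rho = 1.0 / L0  # least positive solution of w0(s) = 1


def g1(s):
    # For linear w, the integral of w((1 - t) s) over t in [0, 1] equals L s / 2.
    return (0.5 * L * s) / (1.0 - w0(s))


# g1 increases from 0 to infinity on (0, rho), so g1(s) = 1 has one zero there.
r1 = smallest_zero(lambda s: g1(s) - 1.0, 0.0, rho * (1.0 - 1e-9))
closed_form = 2.0 / (2.0 * L0 + L)  # known radius for this linear case
print(r1, closed_form)
```

In the linear case the zero has the closed form 2/(2·L0 + L), which gives a quick check on the numerical value; for general majorant functions only the numerical route is available.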

3. Analysis of Convergence

In this section, we determine the conditions that the two free parameters in Iterative Method (2) must satisfy so that its convergence order attains six.

Theorem 1. Consider function , which is a sufficiently Fréchet differentiable function in a neighborhood D of and . Suppose that the Jacobian is continuous and non-singular in , and that and . Then, when the initial estimate is close enough to , the iterative sequence generated by (1) converges to , and the error equation is as follows:

where and .

Proof. In Iterative Method (2), the first-order divided difference operator appears. We can consider it a mapping

, where

By expanding

at

x by a Taylor series, we obtain
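For orientation, the divided difference and its expansion are usually written as follows. This is a hedged sketch assuming the standard Genocchi–Hermite integral form; the notation $[x+h, x; F]$ and the increment $h$ are assumptions and may differ from the symbols used in the paper.

```latex
[x + h, x; F] = \int_0^1 F'(x + t h)\, dt
             = F'(x) + \frac{1}{2} F''(x)\, h + \frac{1}{6} F'''(x)\, h^2 + O(h^3).
```

The second equality follows by expanding $F'(x + th)$ in powers of $t h$ and integrating term by term over $t \in [0, 1]$.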

Let

be the root of the nonlinear system

. If

is expanded by a Taylor series at

, then

where

and

.

By differentiating Equation (

14), we can obtain

Considering

is invertible, we can let

. Then, we have

According to , we can determine as follows:

- •

- •

- •

;

- •
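The expansions used at this stage of such proofs typically take the following form. This is a sketch under assumed notation rather than a transcription of the paper's equations: $x^*$ denotes the root, $e_k = x^{(k)} - x^*$ the error, and $C_j = \frac{1}{j!}[F'(x^*)]^{-1} F^{(j)}(x^*)$.

```latex
F(x^{(k)}) = F'(x^*)\left[e_k + C_2 e_k^2 + C_3 e_k^3 + C_4 e_k^4 + O(e_k^5)\right],

F'(x^{(k)}) = F'(x^*)\left[I + 2 C_2 e_k + 3 C_3 e_k^2 + 4 C_4 e_k^3 + O(e_k^4)\right],

[F'(x^{(k)})]^{-1} = \left[X_1 + X_2 e_k + X_3 e_k^2 + X_4 e_k^3 + O(e_k^4)\right][F'(x^*)]^{-1},

X_1 = I, \quad X_2 = -2 C_2, \quad X_3 = 4 C_2^2 - 3 C_3, \quad
X_4 = -8 C_2^3 + 6 C_2 C_3 + 6 C_3 C_2 - 4 C_4.
```

The coefficients $X_j$ are obtained by requiring that the product of the last two bracketed series equals the identity and matching powers of $e_k$; the order of the factors matters because the $C_j$ do not commute in general.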

From the first step in Iterative Method (

1), the following equation is established:

and

Therefore, by combining Expression (

18) and Expression (

21), we can obtain

By

,

, we then have

Using the result of Equation (

18), we have

Next, let us consider the concrete form of

t. By Equations (

18) and (

23), we have

As such, we also have

and

Combined with Equation (

18), the following formula is established:

,

and

.

If we choose

and

, then we have

□

This indicates that the iterative method in the following format achieves a sixth-order convergence.

Subsequently, we will conduct an analysis of its convergence and delve into the approximate problem of its locally unique solution.

Theorem 2. Consider the Fréchet differentiable operator on a Banach space. Let be the root of , and let . Suppose the following conditions apply to :

Based on the above five conditions, , the iterative sequence can be generated by Iterative Method (30). In addition, there is . As n approaches infinity, the distance between and approaches 0; that is, is a convergent sequence. In addition, for , the following formulas are also true:

Finally, if there exists an satisfying , then the root on satisfying is unique.

Proof. Let

, then use Equation (

32) to obtain

Through the simple transformation of the above equation, we can directly obtain

When

in Iterative Method (

30), it is obtained by the first step in (

30) as follows:

Its norm can be obtained as follows:

Combined with the result of Equation (

37), the following can be obtained:

Further, we can obtain the following:

Through the second step of Iteration Method (

30), we can know

Similar to Equation (

40), is the following:

and

For the third step in Iterative Method (

30), the following can be obtained:

and

This proves the case . By applying mathematical induction, we can prove that , so . We can also prove that, as n approaches infinity, the distance between and approaches 0, so is a convergent sequence.

Suppose that there is a point

and

that satisfies

, then we can construct the function

. Through Equation (

32) and

, we can see that the following formula is true:

That means that . As such, from , we can obtain . Thus, uniqueness is obtained. □

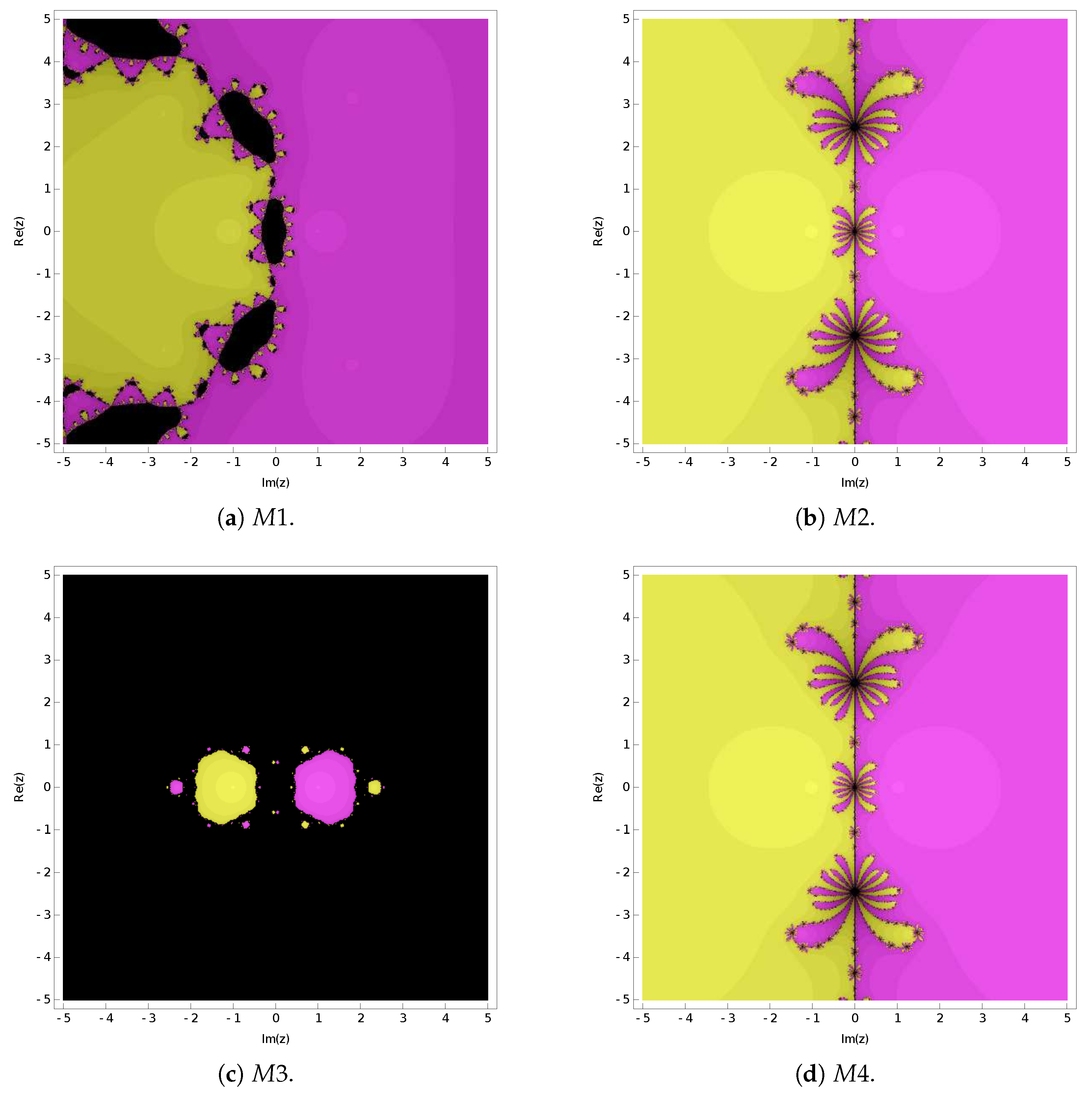

4. Fractals of Attractive Basins

In this section, we generate fractal plots for Iterative Method (30) under various nonlinear functions to visually illustrate its convergence. Additionally, we depict the fractal graphs of three sixth-order iterative methods for solving nonlinear equations. The average numbers of iterations over five runs were calculated; the results are presented in Figure 1 and Figure 2, as well as Table 1. The different colors in Figure 1 and Figure 2 denote the basins of attraction of the different roots. The maximum number of iterations for each starting point was set to 25. If the number of iterations exceeded 25 or the iteration sequence failed to converge, the point is shown in black.
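The procedure behind such plots can be sketched as follows. This is an illustration only: it uses the classical Newton iteration on the hypothetical polynomial z³ - 1 as a stand-in, not Iterative Method (30) or the test functions of this paper. The grid size, the tolerance `TOL`, and the function names are assumptions; the iteration cap of 25 follows the text. Each starting point is labeled with the index of the root it reaches, or -1 (black) if it does not converge within the cap.

```python
import cmath

ROOTS = [cmath.exp(2j * cmath.pi * k / 3) for k in range(3)]  # roots of z^3 - 1
MAX_ITER = 25  # iteration cap, as in the text
TOL = 1e-6     # assumed convergence tolerance


def classify(z):
    """Return (root index or -1, iterations used) for Newton on z^3 - 1."""
    for k in range(1, MAX_ITER + 1):
        dz = 3 * z * z
        if abs(dz) < 1e-30:  # derivative vanishes: treat as non-convergent
            return -1, k
        z = z - (z**3 - 1) / dz
        for i, r in enumerate(ROOTS):
            if abs(z - r) < TOL:
                return i, k
    return -1, MAX_ITER


def basin_grid(n=40, half_width=2.0):
    """Classify an n-by-n grid of starting points in the complex plane."""
    step = 2.0 * half_width / (n - 1)
    return [[classify(complex(-half_width + c * step, half_width - r * step))
             for c in range(n)] for r in range(n)]


grid = basin_grid()
counts = [k for row in grid for (_, k) in row]
avg_iters = sum(counts) / len(counts)

# Crude text rendering: one character per basin, '#' for non-convergence.
for row in grid[::4]:
    print("".join("012#"[idx] for idx, _ in row))
print("average iterations:", round(avg_iters, 2))
```

Replacing the update inside `classify` with another iterative scheme yields the corresponding basin picture, and `avg_iters` gives the kind of average iteration count that is compared between methods.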

Let us consider the following sixth-order iterative methods: Iterative Method

, which was proposed by Wang [

16]; and

, which was proposed by Behl et al. [

17]. As for Iterative Method (

30), we will label it as

. Specifically,

and

take the following forms, respectively:

Upon examining

Figure 1 and

Figure 2, it is evident that the convergence of Iterative Methods

and

surpasses that of Iterative Methods

and

. The data presented in

Table 1 further support this observation, with Iterative Method

demonstrating the lowest average number of iterations over the five runs.

Building on this conclusion, the subsequent section will involve numerical experiments to compare the performance of Iterative Method

(

52) and Iterative Method

(

30).

5. Numerical Experiments and Practical Applications

In this section, we utilize Iterative Method (30) to solve nonlinear systems and the matrix sign function. We then compare its performance with that of Iterative Method (52) to highlight the advantages of Iterative Method (30). To demonstrate the advantages of Iterative Method (30) in terms of computational accuracy, we also compare it with the following three sixth-order iterative methods:

(

54) [

18],

(

55) [

19], and

(

56) [

20]. Method

is as follows:

Method

is as follows:

Method

is as follows:

Additionally, we will apply Iterative Method (

30) to address practical chemistry problems, thus showcasing its applicability.

5.1. Solving Nonlinear Systems

We address the following three nonlinear systems, where k denotes the number of iterations and the working precision was set to 2048; the experimental results are presented in Table 2, Table 3 and Table 4:

During the iteration, we chose the initial value to be . The solution to the system is . The stop criterion is .

During the iteration, we chose the initial value to be . The solution to the system is . The stop criterion is .

Here, m is the number of equations. During the iteration, we chose the initial value to be . When , the solution to the system is . The stop criterion is .
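Experiments of this kind rely on extended-precision arithmetic, a stopping criterion on successive iterates, and an estimate of the observed convergence order. The sketch below shows one way to set this up with Python's standard `decimal` module. It is an illustration under stated assumptions: the scalar equation x² - 2 = 0, Newton's method, the precision of 200 digits, and the tolerance are all chosen for the example and are not the paper's test systems or settings.

```python
from decimal import Decimal, getcontext

getcontext().prec = 200  # illustrative working precision (assumption)


def newton_scalar(f, df, x0, tol, max_iter=50):
    """Newton's method; stops when |x_{k+1} - x_k| < tol."""
    xs = [x0]
    for _ in range(max_iter):
        xs.append(xs[-1] - f(xs[-1]) / df(xs[-1]))
        if abs(xs[-1] - xs[-2]) < tol:
            break
    return xs


def acoc(xs):
    """Approximate computational order of convergence from the last
    three differences d_k = |x_{k+1} - x_k|."""
    d = [abs(b - a) for a, b in zip(xs, xs[1:])]
    d = [v for v in d if v != 0]
    return float((d[-1] / d[-2]).ln() / (d[-2] / d[-3]).ln())


xs = newton_scalar(lambda x: x * x - 2, lambda x: 2 * x,
                   Decimal("1.5"), Decimal("1e-80"))
order = acoc(xs)
print(len(xs) - 1, "iterations, estimated order:", round(order, 3))
```

High precision matters here because a method of order six reaches errors far below double precision after two or three steps, so the order cannot be observed reliably in ordinary floating point.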

The experimental results from

Table 2,

Table 3 and

Table 4 show that the convergence accuracy of Iterative Method

is inferior to the other four iterative methods. Therefore, we will contrast Iterative Methods

and

in the next section by solving a nonlinear matrix sign function.

5.2. Solving the Matrix Sign Function

In this section, we apply Iterative Methods and to the nonlinear matrix sign function , where I represents the identity matrix. When solving this function, the corresponding iterative formats for and are

- •

,

- •

,

- •

,

- •

The experimental results are displayed in

Table 5, where

n represents the number of iterations,

t represents the CPU running time, and

indicates no convergence. The termination criterion was set to

.

According to the experimental results in

Table 5, it can be seen that Iterative Method

is superior to

and

in solving the nonlinear matrix sign function.
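For readers unfamiliar with this problem, the sketch below shows the classical Newton iteration for the matrix sign function, X_{k+1} = (X_k + X_k^{-1})/2 with X_0 = A, which arises from applying Newton's method to X² = I. It is a baseline illustration only, not one of the sixth-order schemes compared in Table 5. The 2×2 test matrix, the helper `inv2`, and the tolerance are assumptions made for the example.

```python
def inv2(M):
    """Inverse of a 2x2 matrix given as nested lists."""
    (a, b), (c, d) = M
    det = a * d - b * c
    return [[d / det, -b / det], [-c / det, a / det]]


def newton_sign(A, tol=1e-12, max_iter=100):
    """Classical Newton iteration X <- (X + X^{-1}) / 2 started at A."""
    X = [row[:] for row in A]
    for n in range(1, max_iter + 1):
        Xi = inv2(X)
        X_new = [[0.5 * (X[i][j] + Xi[i][j]) for j in range(2)]
                 for i in range(2)]
        diff = max(abs(X_new[i][j] - X[i][j])
                   for i in range(2) for j in range(2))
        X = X_new
        if diff < tol:
            return X, n
    return X, max_iter


# Hypothetical test matrix with eigenvalues 2 and -3 (assumption).
A = [[2.0, 1.0], [0.0, -3.0]]
S, n_iter = newton_sign(A)
print(S, n_iter)
```

The limit S satisfies S² = I and commutes with A; for this test matrix it is the upper triangular matrix with diagonal entries 1 and -1 and off-diagonal entry 0.4.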

5.3. Practical Applications

Equations of state for gases are among the most important problems encountered in practical chemistry. In this context, we apply Theorem 2 from Section 3 to such a problem. To begin with, let us consider the following van der Waals equation:

$$\left(P + \frac{a n^2}{V^2}\right)(V - n b) = n R T,$$

where a = 4.17 atm·L²/mol² and b = 0.0371 L/mol. The volume V of the container is then sought for a pressure of 945.36 kPa (9.33 atm) and a temperature of 300.2 K with 2 mol of nitrogen. Finally, by substituting the data into (60), we obtain

In the context of a practical problem, the solution to this nonlinear equation can only be found on

. If we further qualify

, then

, where

is the result of preserving 6 significant digits for the exact solution. As such, by using Theorem 2, we find

According to Theorem 2, we can finally obtain

As such, .
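The root of this problem can be cross-checked numerically. The sketch below multiplies the van der Waals equation by V² to obtain a cubic in V and solves it with Newton's method, starting from the ideal-gas volume nRT/P. The data a, b, P, T, and n are taken from the text; the gas constant R = 0.082057 L·atm/(mol·K) is an assumed value, so the coefficients may differ slightly from the rounded ones in the paper's cubic.

```python
# Data from the text; R is an assumed value of the gas constant.
a, b = 4.17, 0.0371          # atm·L^2/mol^2, L/mol
P, T, n = 9.33, 300.2, 2.0   # atm, K, mol
R = 0.082057                 # L·atm/(mol·K), assumption

# (P + a n^2 / V^2)(V - n b) = n R T, multiplied by V^2:
# P V^3 - (n b P + n R T) V^2 + a n^2 V - a n^3 b = 0
c3 = P
c2 = -(n * b * P + n * R * T)
c1 = a * n * n
c0 = -a * n**3 * b


def p(V):
    return ((c3 * V + c2) * V + c1) * V + c0


def dp(V):
    return (3.0 * c3 * V + 2.0 * c2) * V + c1


V = n * R * T / P  # ideal-gas volume as the initial guess
for _ in range(50):
    step = p(V) / dp(V)
    V -= step
    if abs(step) < 1e-13:
        break

print("V =", V, "L")
```

Starting from the ideal-gas volume keeps the initial guess close to the physically meaningful root, which is the situation that a local convergence result such as Theorem 2 addresses.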

In the chemical production process that converts a nitrogen–hydrogen feed into ammonia, a pressure of 250 atm and a temperature of 500 degrees Celsius lead to the following equation:

In a practical context, by limiting the range of solutions to

, we know that the root of Equation (

61) is

. Then, we have

According to Theorem 2, we can finally obtain

As such, .

6. Conclusions and Discussion

In this paper, a class of iterative methods for solving nonlinear systems (1) was presented. Theorem 1 established that, when

and

, Iterative Method (1) attains sixth-order convergence, which yields Iterative Method (30).

The proof of Theorem 1 requires the existence of higher-order derivatives of the function, which not all functions possess. Therefore, we discussed the local convergence of Iterative Method (30) in Section 3. By using the $\omega$-continuity condition on the first-order Fréchet derivative in Banach space, we established conditions for the local convergence of Iterative Method (30), thus avoiding assumptions on the higher-order derivatives of the function.

Finally, by drawing the attractive basin of Iterative Method (

30)—as well as by comparing it with the average number of iterations of five cycles for the known sixth-order iterative methods

,

, and

for solving nonlinear systems—it was shown that the new Iterative Method

(

30) is superior to the other three iterative methods in terms of convergence and average number of iterations. In the experiment where nonlinear systems were solved, it was also shown that the convergence accuracy of Iterative Method (

30) is better than that of Iterative Method

. Furthermore, when employing Iterative Methods

and

to simultaneously solve the nonlinear matrix symbolic function, it is evident that

exhibits broader applicability. Through leveraging the local convergence established in Theorem 2 for Iterative Method

, we addressed practical chemical problems. These experiments support the validity of the proposed iterative method.

Building upon the foundation laid in this paper, our future focus will be on proposing diverse forms of iterative methods with higher convergence orders. This will involve analyzing their local and semi-local convergence and employing fractal theory to study their stability.