On Entropy of Some Fractal Structures

Abstract

1. Introduction

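As a minimal sketch of the construction this paper studies, the code below builds a generalized Sierpiński graph from a base graph. It assumes the usual definition, which is not reproduced in this section: the vertices are words of length t over the vertex set of the base graph G, and two words u and v are adjacent if there is a position i such that they agree before i, the letters u_i and v_i are adjacent in G, and every later letter of u equals v_i while every later letter of v equals u_i. The function name `generalized_sierpinski` and its parameters are hypothetical and chosen only for illustration.

```python
from itertools import product


def generalized_sierpinski(base_vertices, base_edges, t):
    """Return the edge set of the generalized Sierpinski graph S(G, t).

    Assumed definition: vertices are length-t words over V(G); words u, v
    are adjacent iff for some position i they share the same prefix,
    {u_i, v_i} is an edge of G, and the remaining letters of u all equal
    v_i while the remaining letters of v all equal u_i.
    """
    edges = set()
    for i in range(t):  # i is the first position where u and v differ
        for prefix in product(base_vertices, repeat=i):
            for a, b in base_edges:
                u = prefix + (a,) + (b,) * (t - i - 1)
                v = prefix + (b,) + (a,) * (t - i - 1)
                # Store each undirected edge once, in sorted order.
                edges.add(tuple(sorted((u, v))))
    return edges


# Base graphs used as examples: the triangle K3 and the cycle C4.
k3_edges = [(0, 1), (1, 2), (0, 2)]
c4_edges = [(0, 1), (1, 2), (2, 3), (3, 0)]

s_k3_2 = generalized_sierpinski([0, 1, 2], k3_edges, 2)
s_c4_2 = generalized_sierpinski([0, 1, 2, 3], c4_edges, 2)
print(len(s_k3_2), len(s_c4_2))
```

Under this definition each base edge contributes one edge for every choice of prefix, so a base graph with n vertices and m edges yields m(n^t − 1)/(n − 1) edges in total.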

1.1. General Entropy of Graphs
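The following sketch illustrates how a degree-based edge-weighted entropy can be evaluated numerically. It assumes the commonly used form ENT(G) = log(Σ ψ(e)) − (1/Σ ψ(e)) Σ ψ(e) log ψ(e), taken over all edges e = uv, with ψ(uv) = d_u + d_v for the first Zagreb entropy, ψ(uv) = d_u d_v for the second Zagreb entropy, and ψ(uv) = d_u² + d_v² for the forgotten entropy. These formulas and the natural logarithm are assumptions here, since the definitions are not reproduced in this section. All function names are hypothetical.

```python
import math
from collections import Counter


def edge_weighted_entropy(edges, weight):
    """Entropy of a graph under a degree-based edge weight (assumed form).

    edges  : iterable of (u, v) pairs describing an undirected simple graph
    weight : function (d_u, d_v) -> positive number
    """
    edges = list(edges)
    degree = Counter()
    for u, v in edges:
        degree[u] += 1
        degree[v] += 1
    weights = [weight(degree[u], degree[v]) for u, v in edges]
    total = sum(weights)
    # log(total) - (1/total) * sum(w * log(w)), natural logarithm assumed
    return math.log(total) - sum(w * math.log(w) for w in weights) / total


def first_zagreb(du, dv):
    return du + dv


def second_zagreb(du, dv):
    return du * dv


def forgotten(du, dv):
    return du ** 2 + dv ** 2


# Example: the path on four vertices, whose edge weights are not all equal.
path_edges = [(0, 1), (1, 2), (2, 3)]
for name, psi in [("first Zagreb", first_zagreb),
                  ("second Zagreb", second_zagreb),
                  ("forgotten", forgotten)]:
    print(name, edge_weighted_entropy(path_edges, psi))
```

When every edge receives the same weight, as in a cycle or a star, this expression reduces to the logarithm of the number of edges, which gives a quick sanity check for any closed-form result.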

1.1.1. The First Zagreb Entropy

1.1.2. The Second Zagreb Entropy

1.1.3. The Forgotten Entropy

2. Results and Discussion

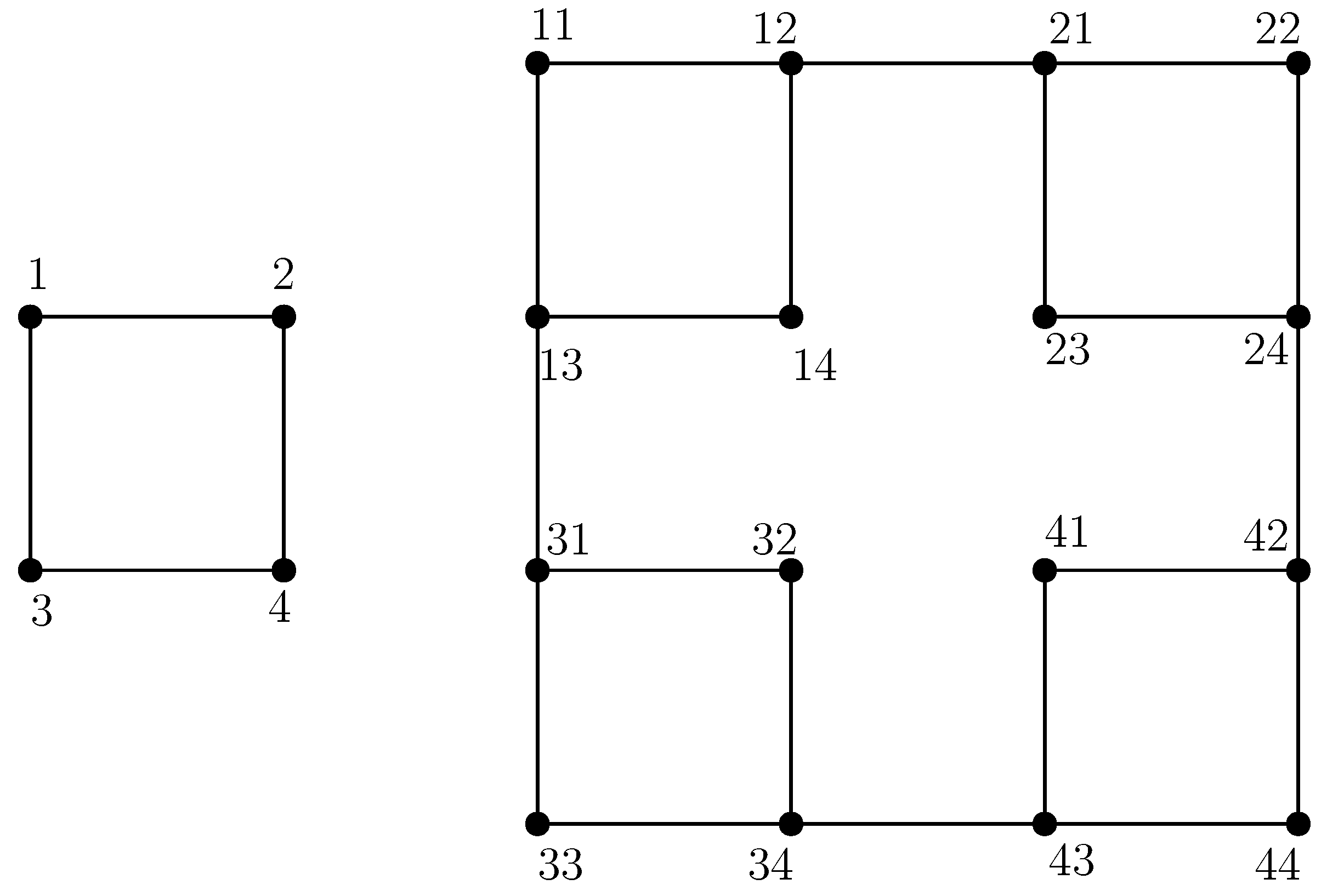

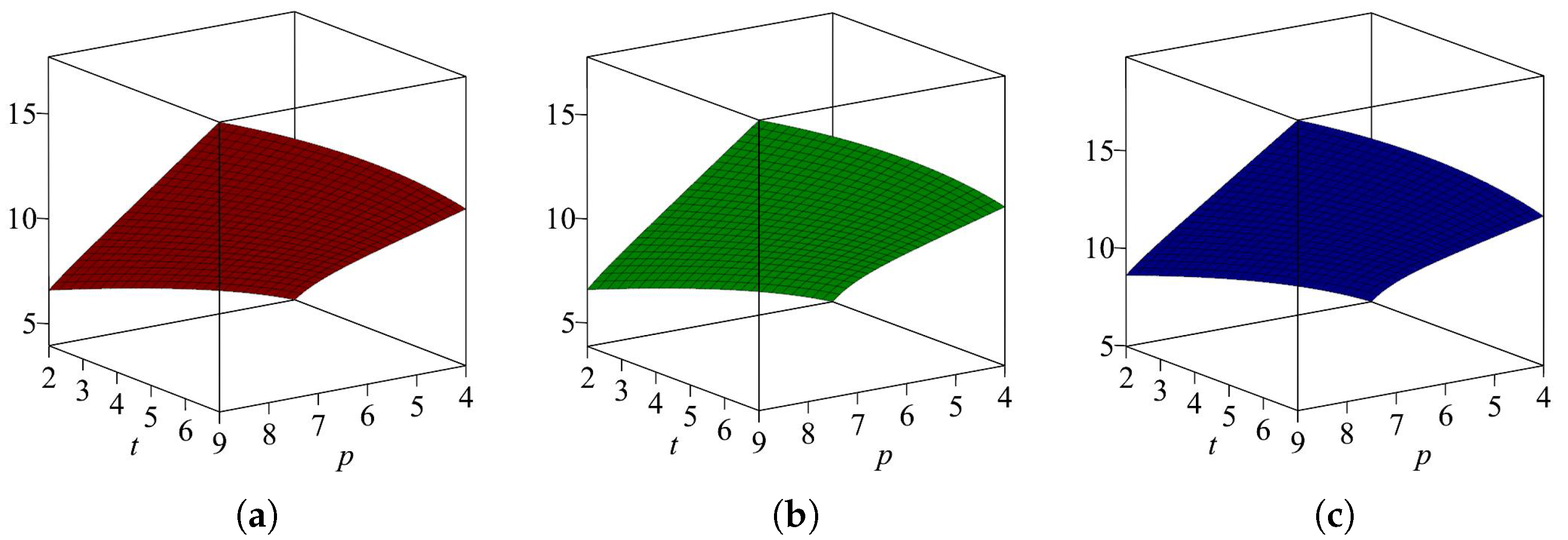

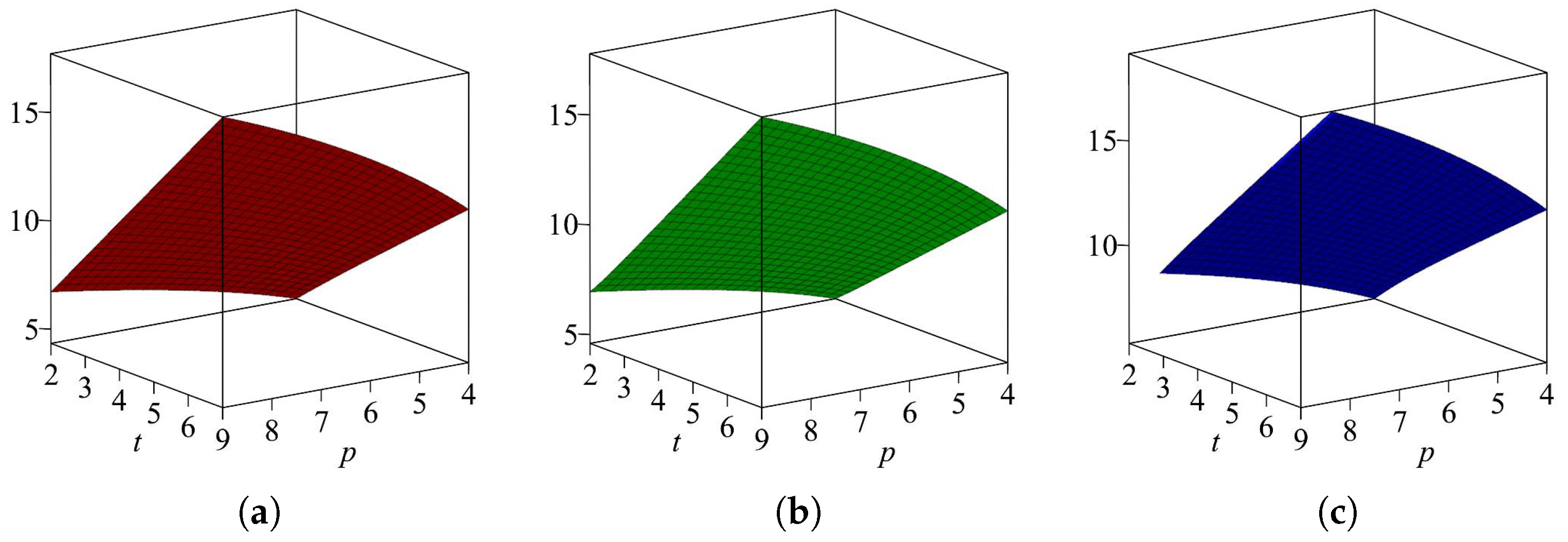

2.1. Results for the Sierpiński Graph When Cycle Is a Base Graph

2.1.1. First Zagreb Entropy

2.1.2. Second Zagreb Entropy

2.1.3. Forgotten Entropy

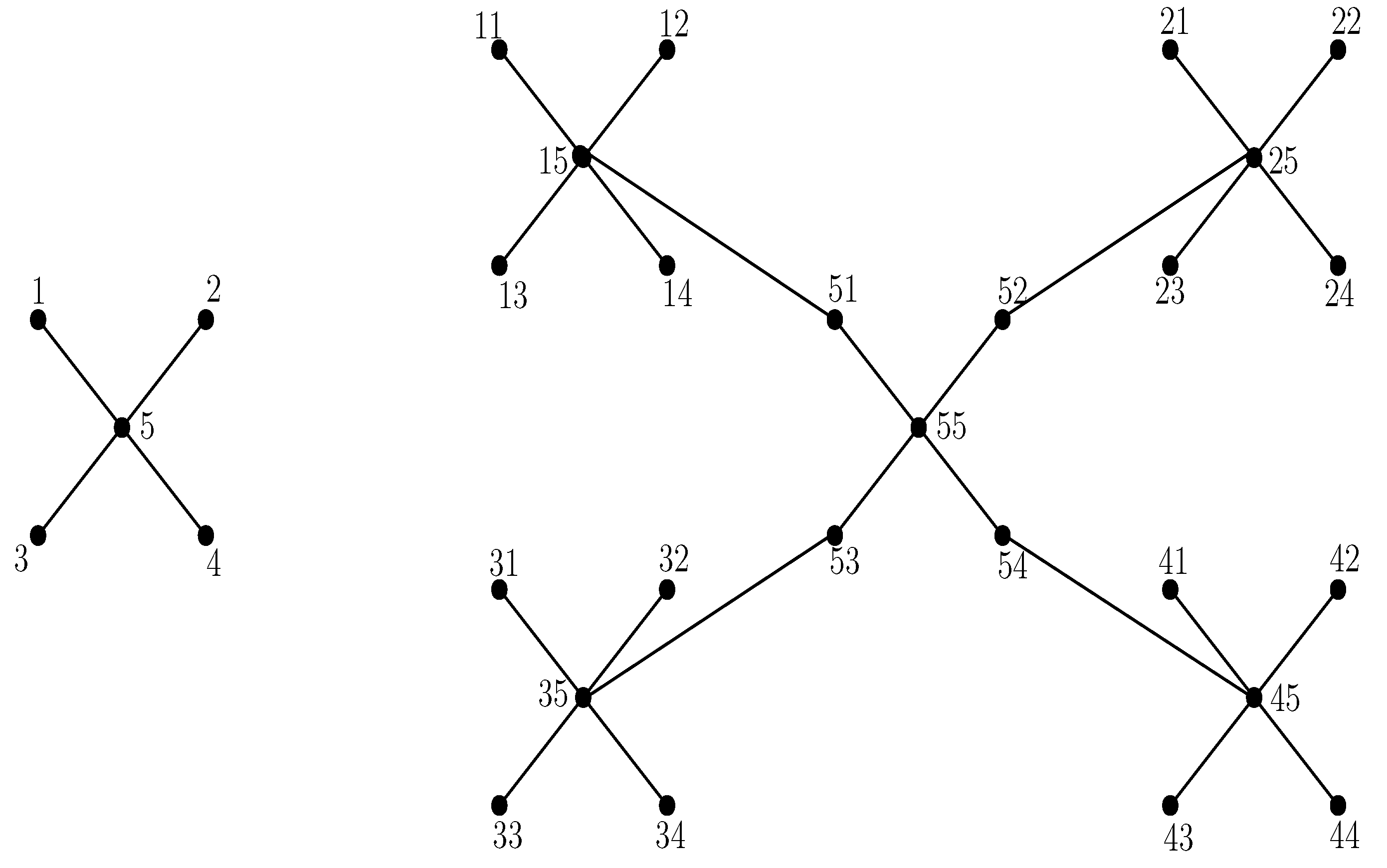

2.2. Results for the Sierpiński Graph When Star Is a Base Graph

2.2.1. First Zagreb Entropy

2.2.2. Second Zagreb Entropy

2.2.3. Forgotten Entropy

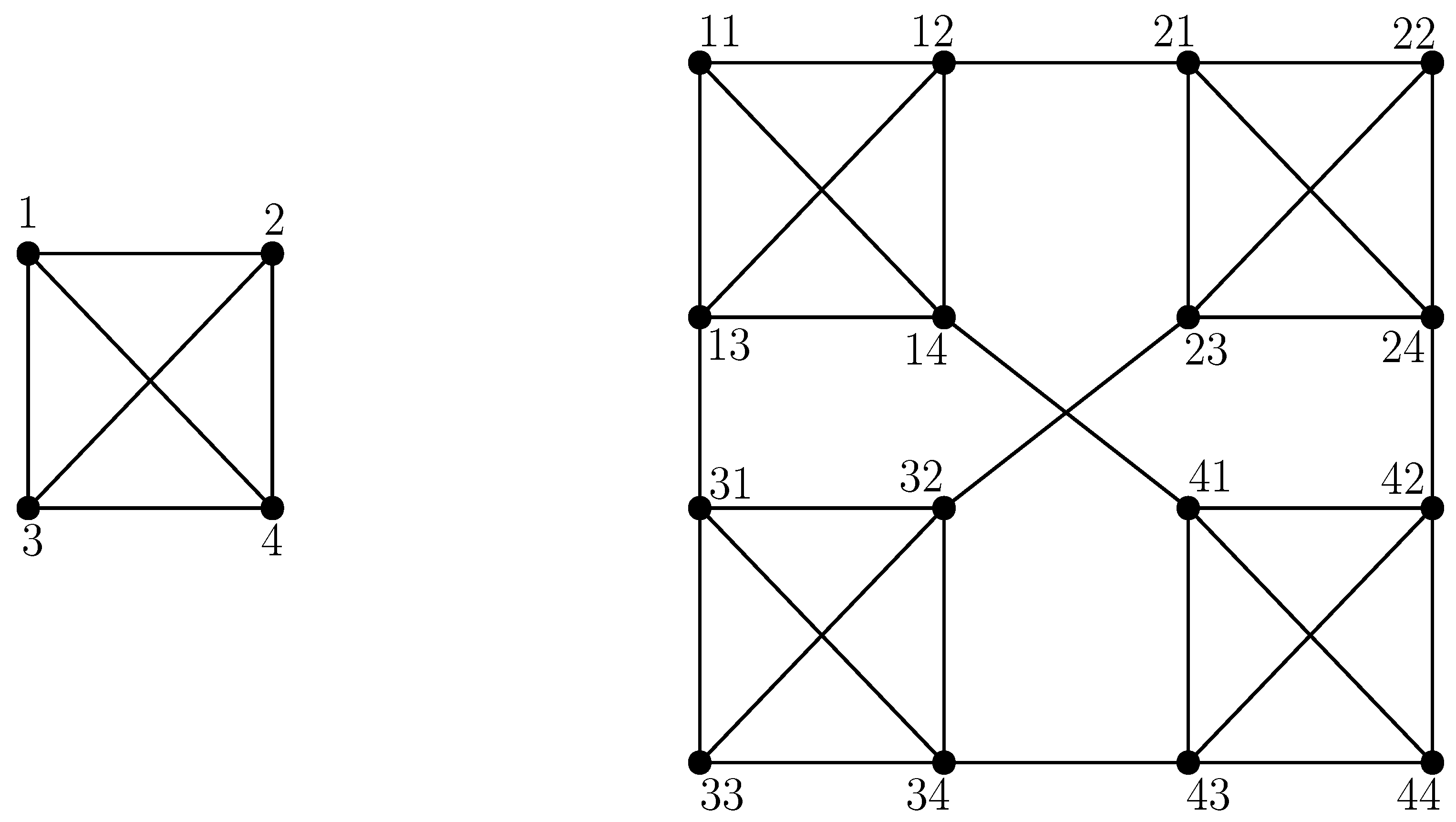

2.3. Results for the Sierpiński Graph When Complete Graph Is a Base Graph

2.3.1. First Zagreb Entropy

2.3.2. Second Zagreb Entropy

2.3.3. Forgotten Entropy

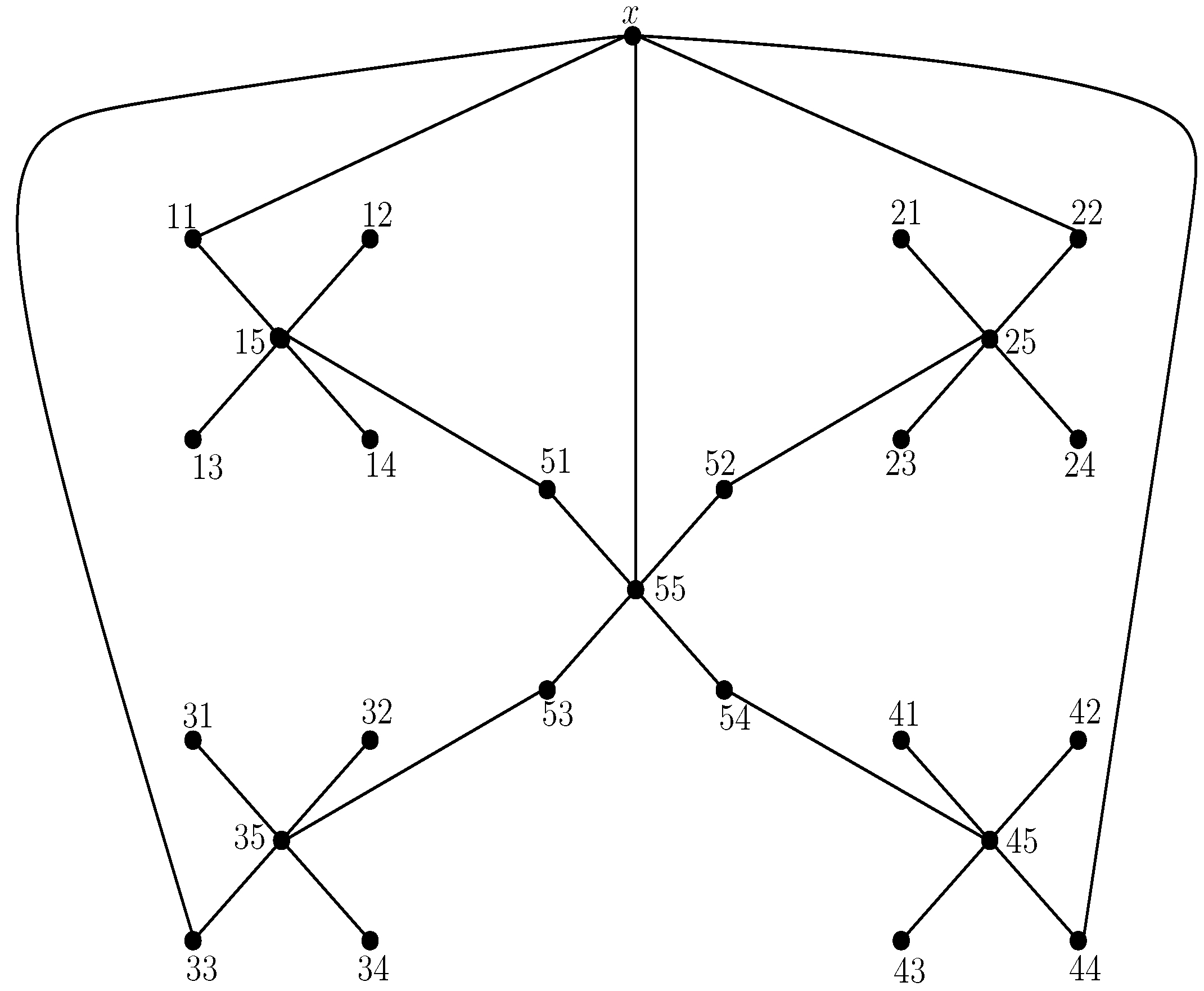

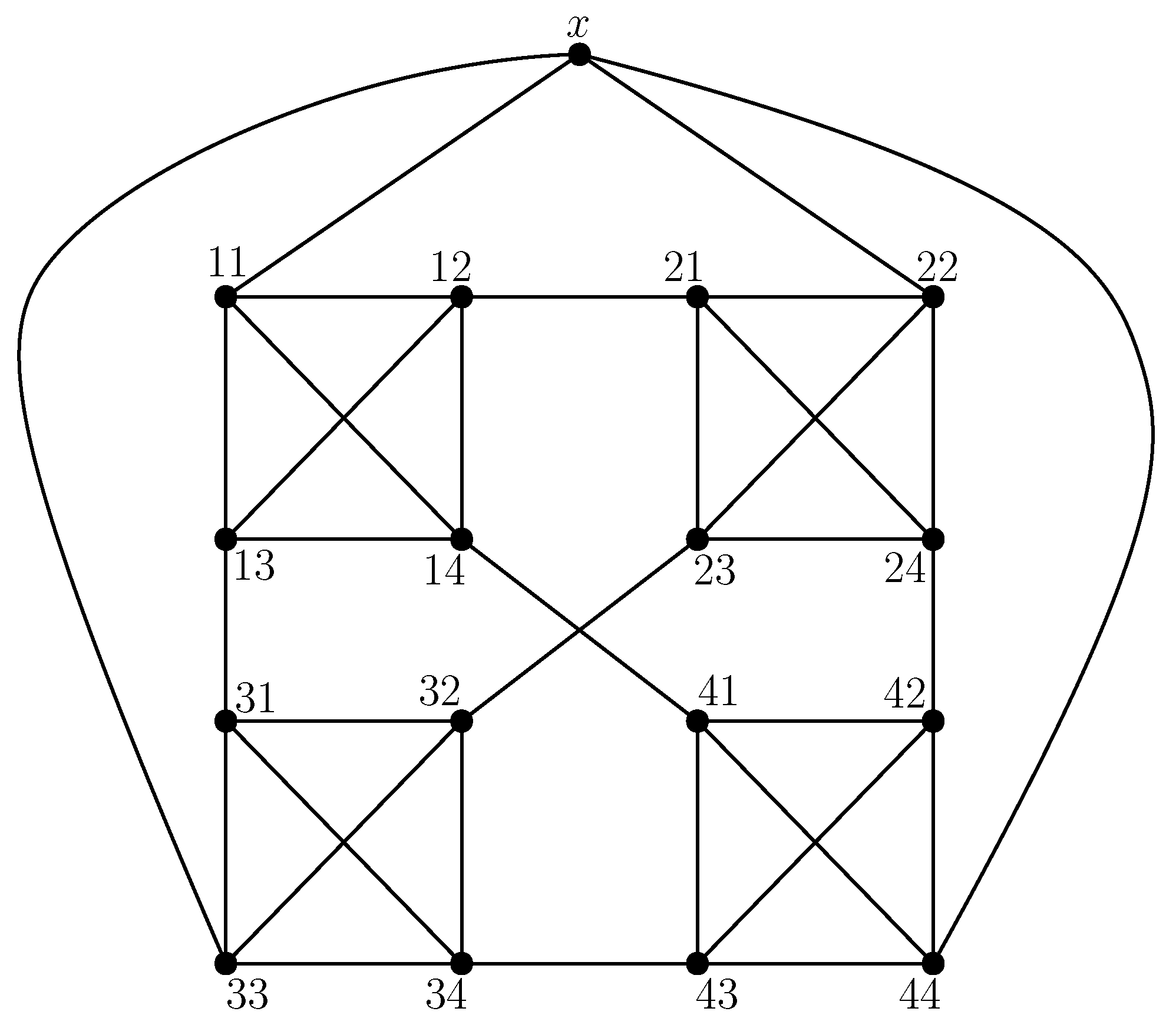

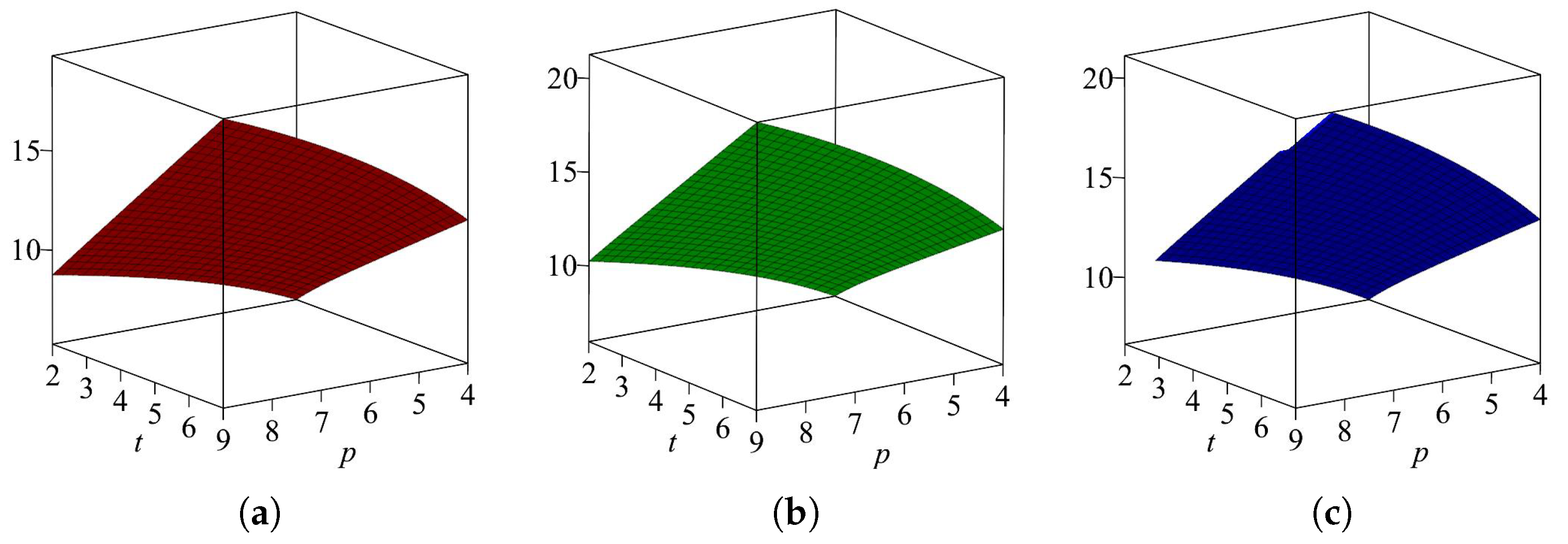

2.4. Results for Extended Sierpiński Graphs

2.4.1. Results for the Extended Sierpiński Graph When Cycle Is a Base Graph

2.4.2. First Zagreb Entropy

2.4.3. Second Zagreb Entropy

2.4.4. Forgotten Entropy

2.5. Results for the Extended Sierpiński Graph When Star Is a Base Graph

2.5.1. First Zagreb Entropy

2.5.2. Second Zagreb Entropy

2.5.3. Forgotten Entropy

2.6. Results for the Extended Sierpiński Graph When Complete Graph Is a Base Graph

2.6.1. First Zagreb Entropy

2.6.2. Second Zagreb Entropy

2.6.3. Forgotten Entropy

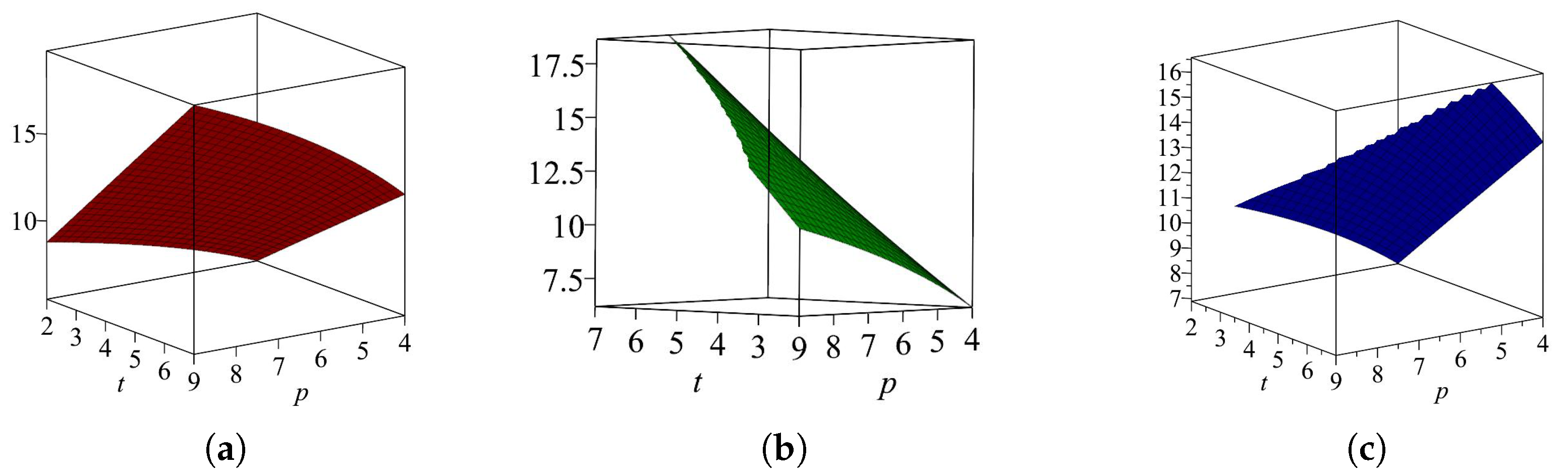

3. Comparison of Entropies for Sierpiński and Extended Sierpiński Graphs

4. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest



Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Ghazwani, H.; Nadeem, M.F.; Ishfaq, F.; Koam, A.N.A. On Entropy of Some Fractal Structures. Fractal Fract. 2023, 7, 378. https://doi.org/10.3390/fractalfract7050378
