Controller-Free Hand Tracking for Grab-and-Place Tasks in Immersive Virtual Reality: Design Elements and Their Empirical Study

Abstract

1. Introduction

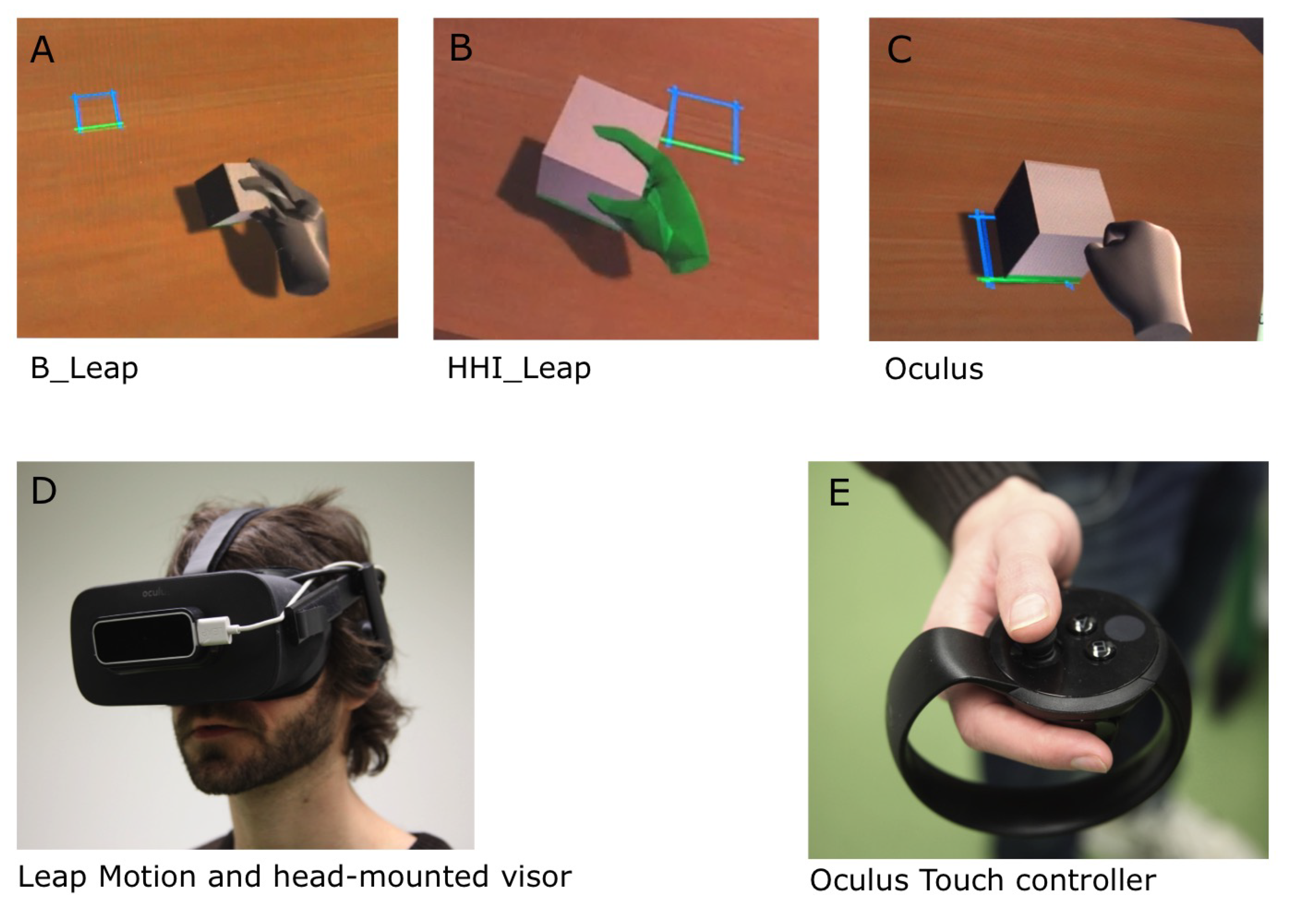

1.1. Controller-Free Object Interaction

1.2. Controller-Free Interaction with the Leap Motion

1.3. Design Challenges to Hand Tracking

1.4. Task and Prototype

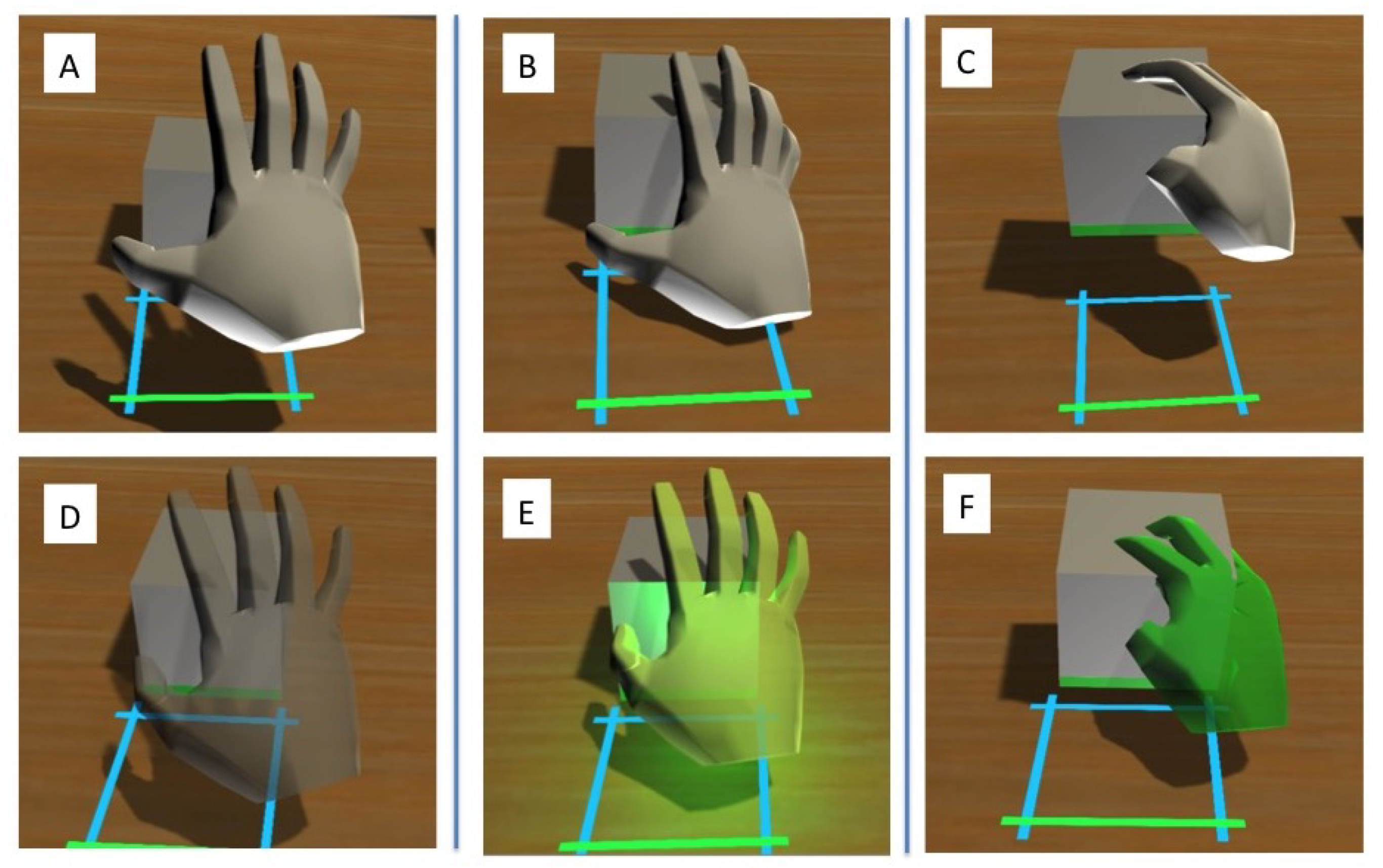

- Color: Smart object coloring (a green “spotlight” emitted from the virtual hand; see Figure 1) to indicate when an object is within grabbing distance. Color indicators on the virtual hand, the virtual object or both have been shown to improve time on task, accuracy of placement and subjective user experience [5,15,16].

- Grab restriction: The user can only grab the object after first making an open-hand gesture within grabbing distance of the object, in order to prevent accidental grabs.

- Transparency: Semi-transparent hand representation as long as no object is grabbed, to allow the user to see the object even if it is occluded by the hand (Figure 1).

- Grabbing area: The grabbing area is extended so that near misses (following an open hand gesture, see above) are still able to grab the object.

- Velocity restriction: If the hand is moving above a certain velocity, grabbing cannot take place, in order to prevent uncontrolled grabs and accidental drops.

- Trajectory ensurance: Once the object is released from the hand, rogue finger placement cannot alter the trajectory of the falling object.

- Acoustic support: Audio feedback occurs when an object is grabbed and when an object touches the table surface after release (pre-installed sounds available in the Unity library).
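The grab restriction, extended grabbing area and velocity restriction listed above can be read as a small gating rule that is evaluated on every tracking frame. The following is a minimal sketch of that idea, not the prototype's actual implementation: the class name `GrabGate`, the threshold values and the rule that leaving the grabbing area disarms the gate are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class GrabGate:
    """Decides whether a closing hand may pick up the object (illustrative only)."""

    grab_radius_m: float = 0.10   # assumed size of the extended grabbing area
    max_speed_m_s: float = 0.50   # assumed hand-speed limit for grabbing
    armed: bool = False           # True once an open hand was seen in range

    def update(self, distance_m: float, speed_m_s: float,
               hand_open: bool, hand_closed: bool) -> bool:
        """Process one tracking frame; return True if a grab starts on this frame."""
        if distance_m > self.grab_radius_m:
            # Assumption: leaving the grabbing area disarms the gate.
            self.armed = False
            return False
        if hand_open:
            # Grab restriction: an open hand within range arms the gate.
            self.armed = True
            return False
        too_fast = speed_m_s > self.max_speed_m_s  # velocity restriction
        if hand_closed and self.armed and not too_fast:
            self.armed = False
            return True
        return False
```

In this sketch a closing hand that was never opened near the object, or that moves faster than the assumed limit, does not start a grab.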

1.5. Present Study and Hypotheses

Hypotheses

- Performance measures: The HHI_Leap shows lower accuracy (greater distance from target), higher times (total time, grab time and release time) and more errors (accidental drops) than the Oculus controller.

- Subjective measures: The HHI_Leap is rated higher than the Oculus controller for naturalness and intuitiveness. For all other subjective measures (other individual ratings, SUS, overall preference), we did not have hypotheses (exploratory analyses).

- Performance measures: The HHI_Leap shows higher accuracy (smaller distance from target), lower times (total time, grab time and release time) and fewer errors (accidental drops) than the B_Leap.

- Subjective measures: The HHI_Leap is rated higher than the B_Leap on all subjective measures (individual rating questions, SUS, overall preference).

2. Methods

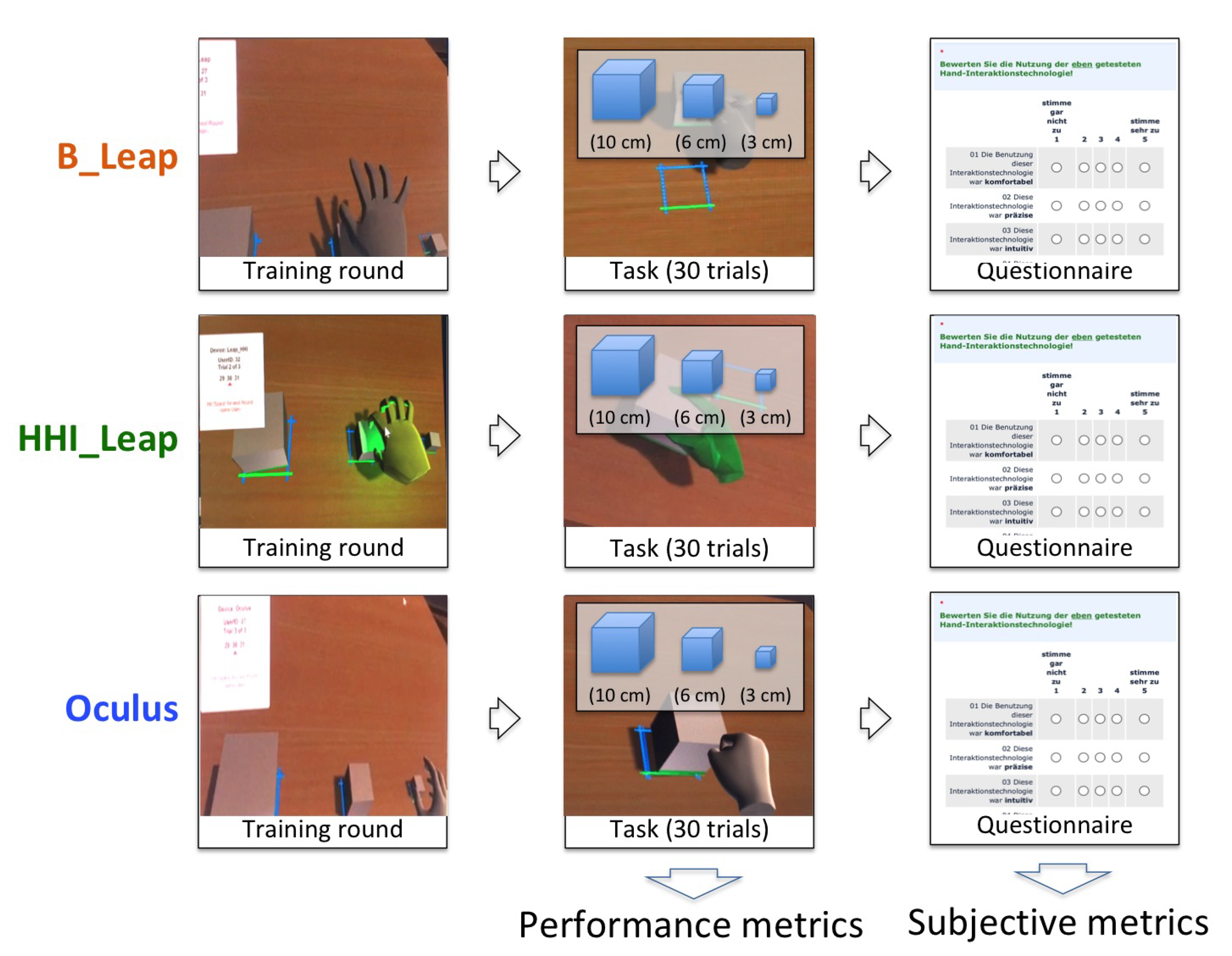

2.1. Study Design

2.2. Sample

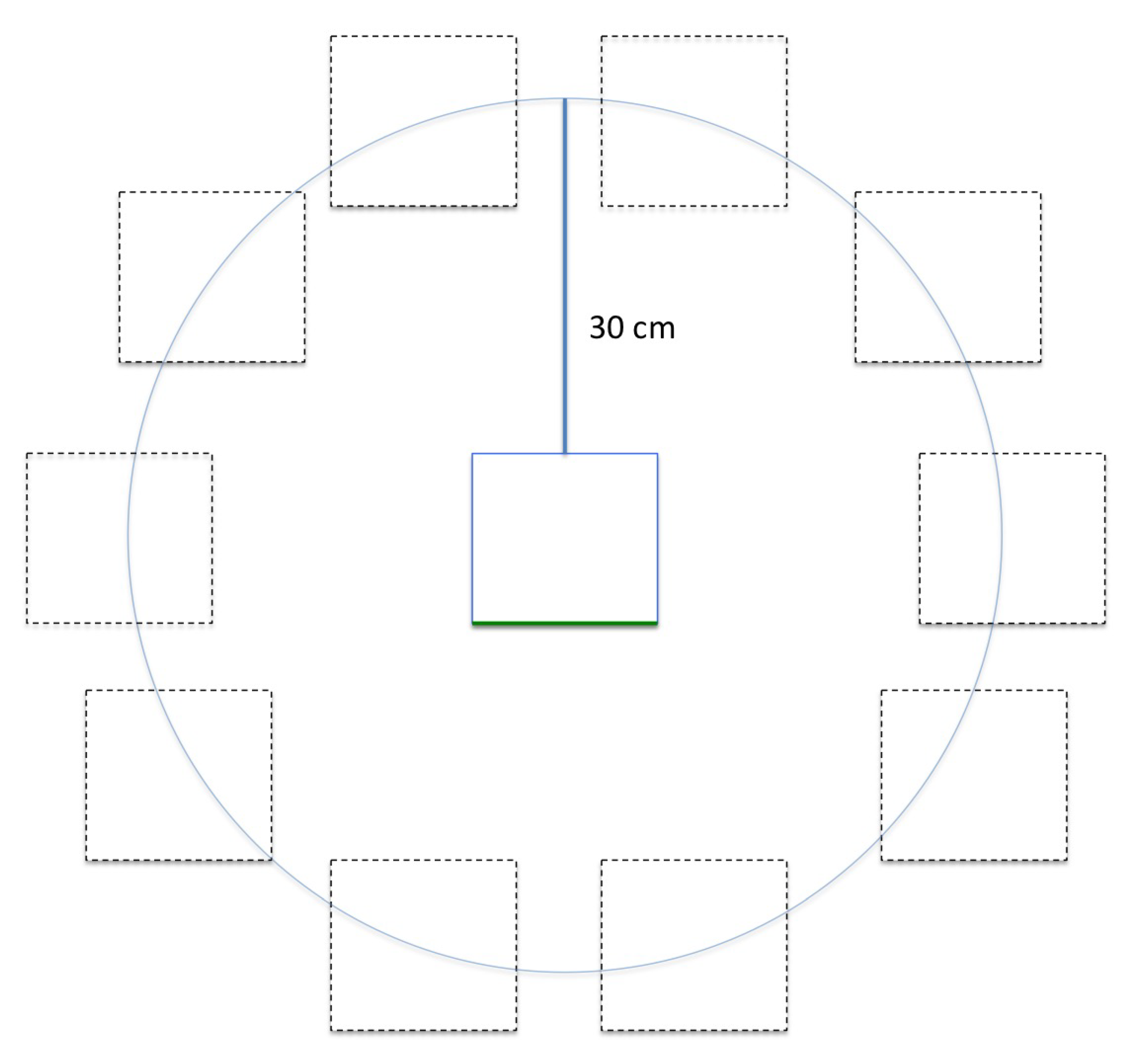

2.3. Task

2.4. Measures

2.4.1. Performance Measures

- Accuracy: Euclidean distance, in meters, from the 2D center of the bottom face of the cube to the center of the target square.

- Total time per trial: Time from cube spawn (appearance on the table) until the time the cube made contact with the table after having been picked up; equal to the sum of the following two time measures (grab and release time).

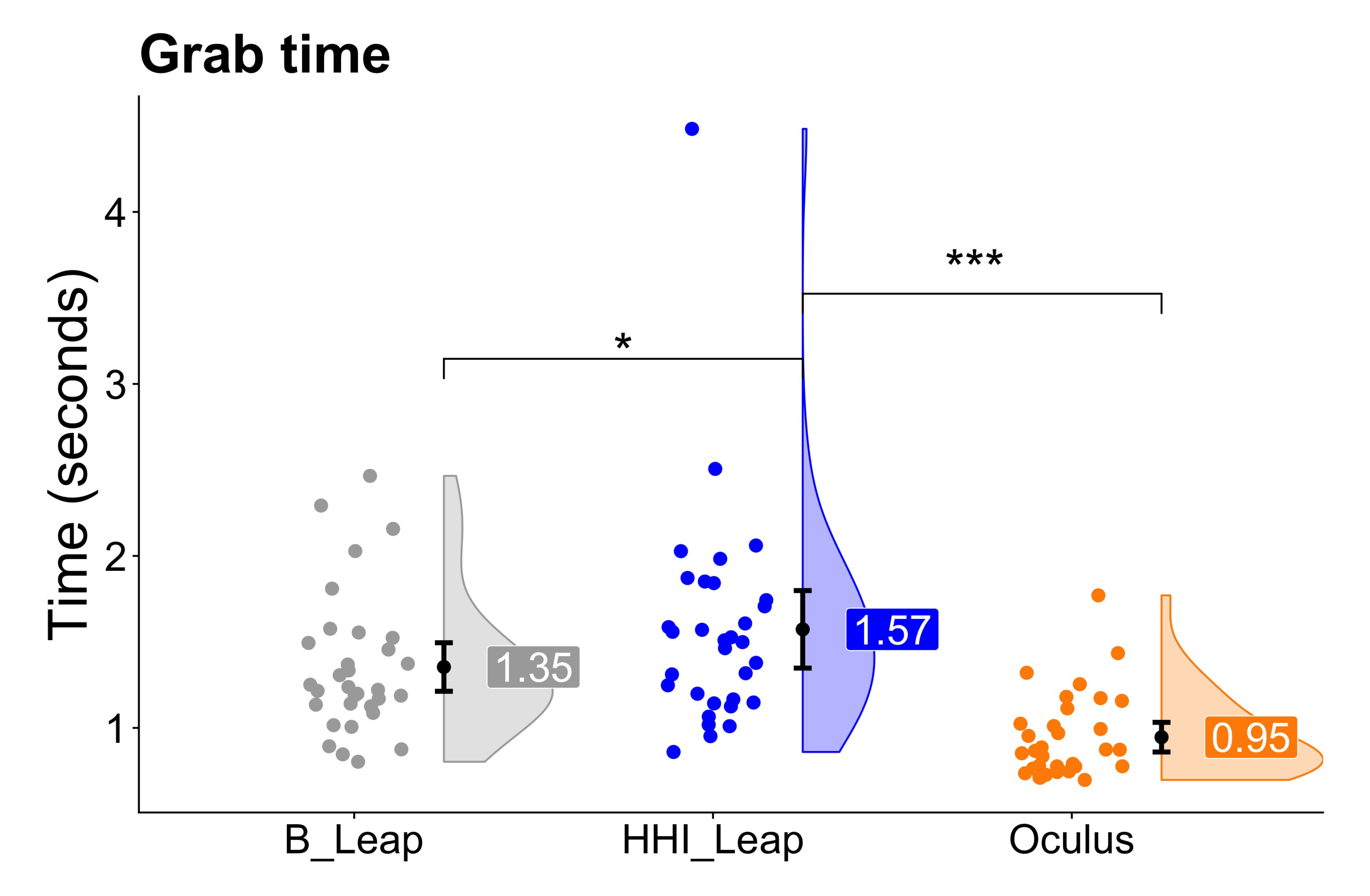

- Grab time (time to grab): Time from when the cube appeared on the table to the time it was grabbed.

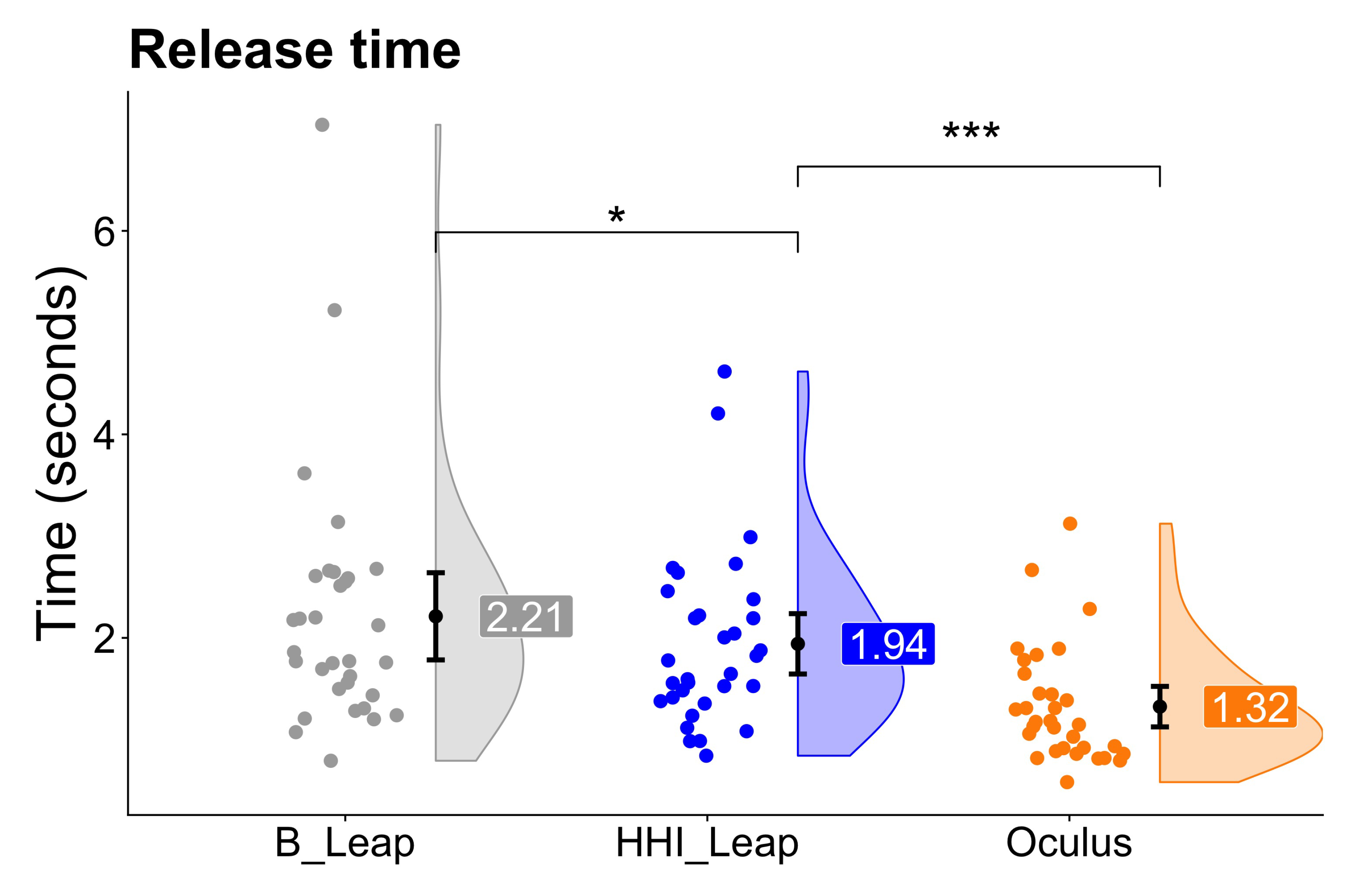

- Release time (time from grab to placement): Time from when the cube was grabbed to the time the cube made contact with the table after being released.

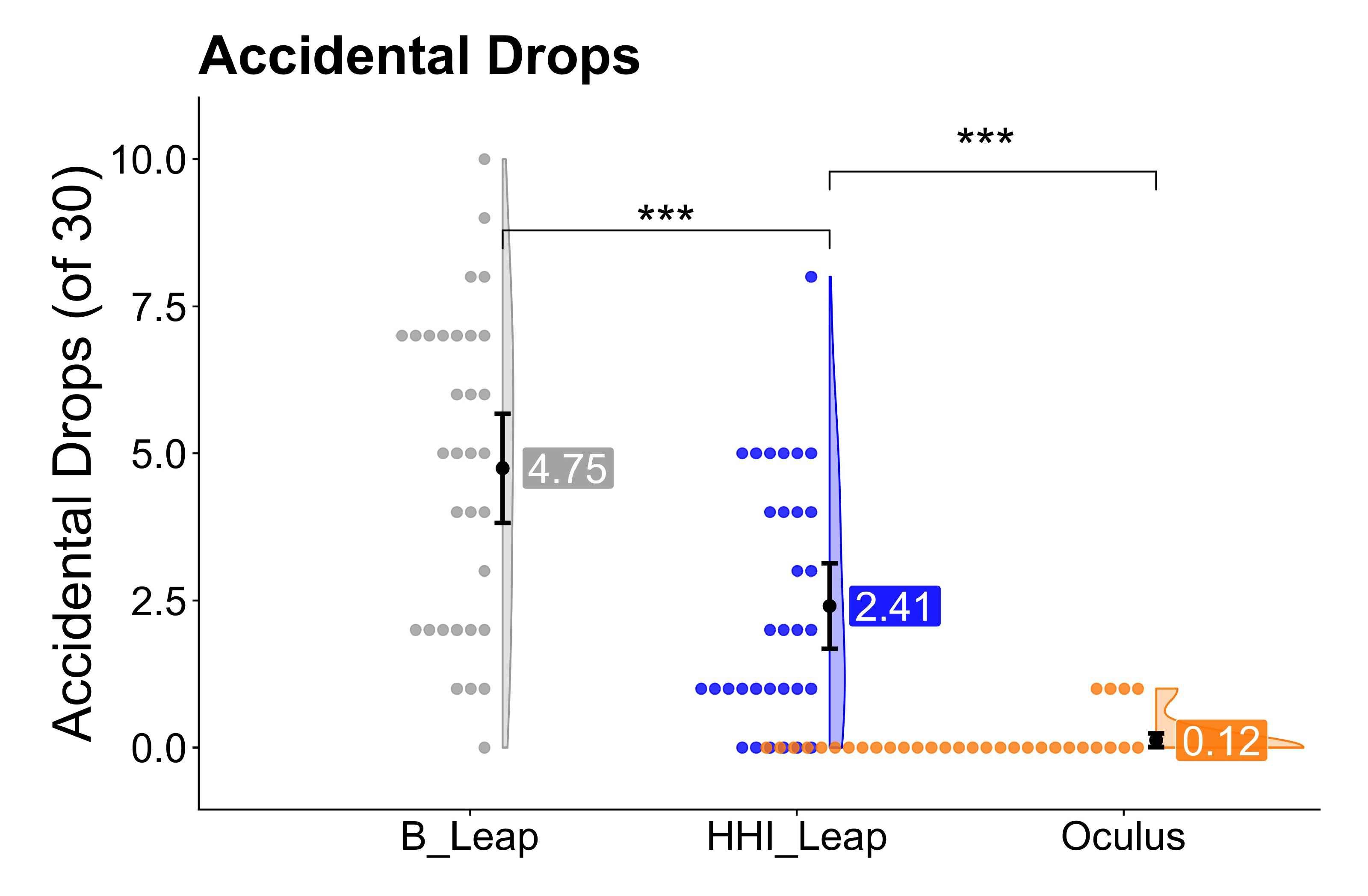

- Accidental drops: Prematurely terminated trials due to mistakenly dropping the cube (for details see below); used both for cleaning the data and as an additional outcome measure to quantify interface performance.
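The accuracy and time measures defined above follow directly from logged positions and timestamps. A minimal sketch, assuming each trial logs the cube and target centers as (x, y) coordinates in meters in the table plane and three timestamps in seconds; the function and parameter names are hypothetical.

```python
import math


def placement_error_m(cube_xy, target_xy):
    """Euclidean distance (m) in the table plane between cube and target centers."""
    return math.dist(cube_xy, target_xy)


def trial_times(t_spawn, t_grab, t_contact):
    """Return (grab_time, release_time, total_time) in seconds.

    t_spawn:   cube appears on the table
    t_grab:    cube is grabbed
    t_contact: cube touches the table after release
    """
    grab_time = t_grab - t_spawn
    release_time = t_contact - t_grab
    total_time = grab_time + release_time  # equals t_contact - t_spawn
    return grab_time, release_time, total_time
```

For example, `trial_times(10.0, 11.5, 13.5)` gives a grab time of 1.5 s, a release time of 2.0 s and a total time of 3.5 s.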

2.4.2. Subjective Experience Measures

- System Usability Scale (SUS): A “quick and dirty” [21] questionnaire to assess the usability (primarily “ease of use”) of any product (e.g., websites, cell phones, kitchen appliances). It contains 10 items with 5-point Likert-scale response options from 1 (strongly disagree) to 5 (strongly agree). Responses are transformed by a scoring rubric, resulting in a score out of 100.

- Single subjective questions: 8 questions assessing user experience (e.g., comfort, ease of gripping, likelihood to recommend to friends) with Likert-scale response options ranging from 1 (strongly disagree) to 5 (strongly agree).

- Agency: The feeling of control over and connectedness to (a part of) one’s own body or a representation thereof [22] was measured with the question “I felt like I controlled the virtual representation of the hand as if it was part of my own body” [16]. Response options ranged from 1 (strongly disagree) to 7 (strongly agree).

- Overall preference: After the participants completed all 3 interfaces, they answered the question “Of the three interfaces you used, which did you like best?” It was left up to the participants to define “best” for themselves. This was not meant to be a single definitive data point to gauge overall subjective preference, but one measure among others (including overall satisfaction and the SUS).
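The SUS scoring rubric mentioned above can be sketched as follows, assuming the standard scoring described by Brooke [21]: odd-numbered items contribute (response − 1), even-numbered items contribute (5 − response), and the sum is multiplied by 2.5. The function name is hypothetical.

```python
def sus_score(responses):
    """Score ten SUS responses (1-5, in questionnaire order) on a 0-100 scale."""
    if len(responses) != 10 or any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("expected ten responses between 1 and 5")
    total = 0
    for item_number, response in enumerate(responses, start=1):
        if item_number % 2 == 1:
            total += response - 1   # odd items are positively worded
        else:
            total += 5 - response   # even items are negatively worded
    return total * 2.5
```

A participant who answers 3 (neutral) on every item receives a score of 50.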

2.5. Data Cleaning/Pre-Processing

- the experimenter noted that the participant accidentally dropped the cube before getting a chance to place it on the target,

- release time below 0.5 s and accuracy above 10 cm,

- accuracy above 20 cm.
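The three criteria above can be read as a per-trial filter. A minimal sketch, assuming that any single criterion is sufficient to exclude a trial and that accuracy is stored in meters as in the performance measures; the function and parameter names are hypothetical.

```python
def exclude_trial(noted_drop, release_time_s, accuracy_m):
    """Return True if a trial meets any of the three exclusion criteria."""
    if noted_drop:
        # The experimenter noted an accidental drop.
        return True
    if release_time_s < 0.5 and accuracy_m > 0.10:
        # Very short release time combined with a miss of more than 10 cm.
        return True
    # A miss of more than 20 cm, regardless of timing.
    return accuracy_m > 0.20
```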

2.6. Analysis

3. Results

3.1. Performance Measures

3.2. Subjective Measures

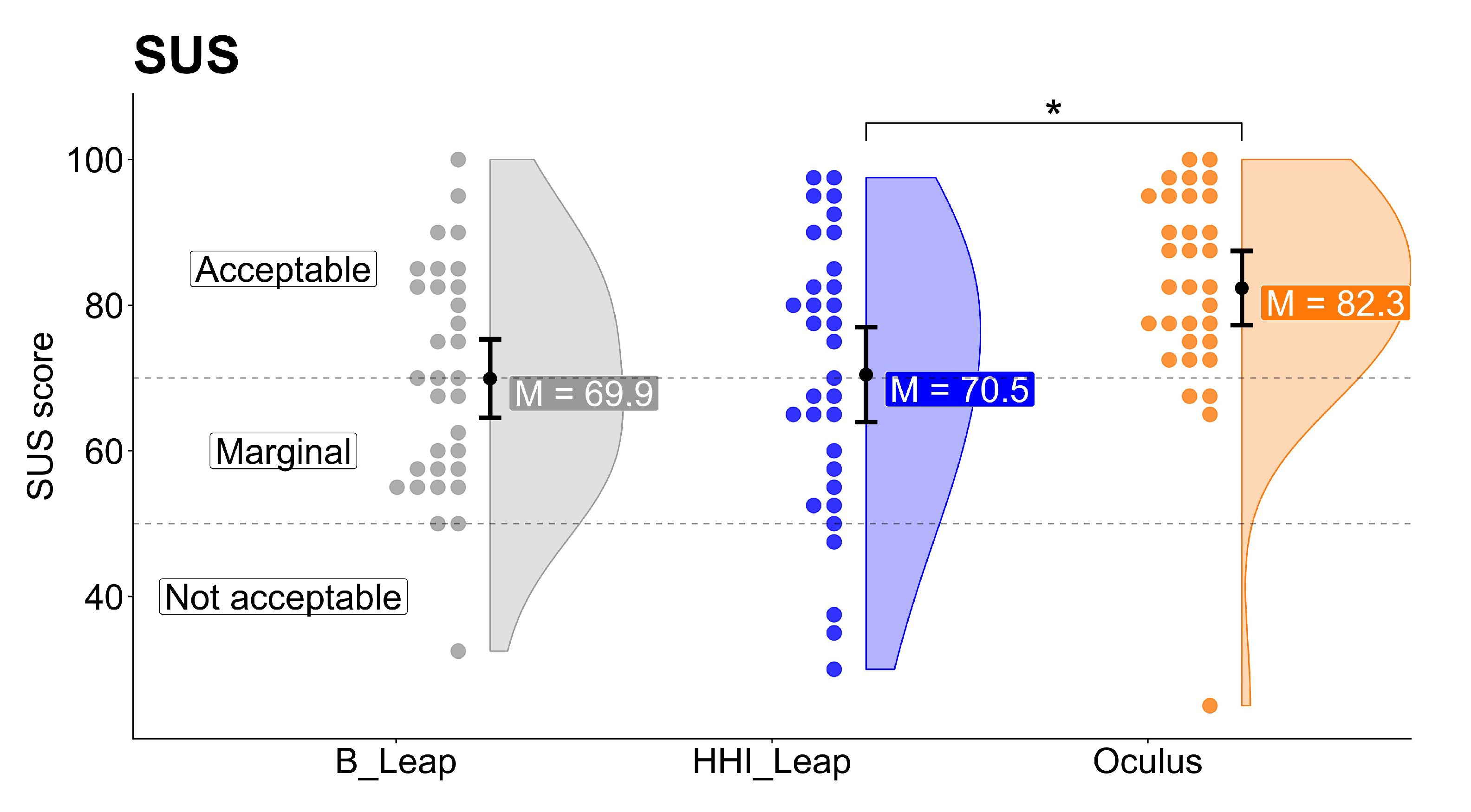

3.2.1. System Usability Scale (SUS)

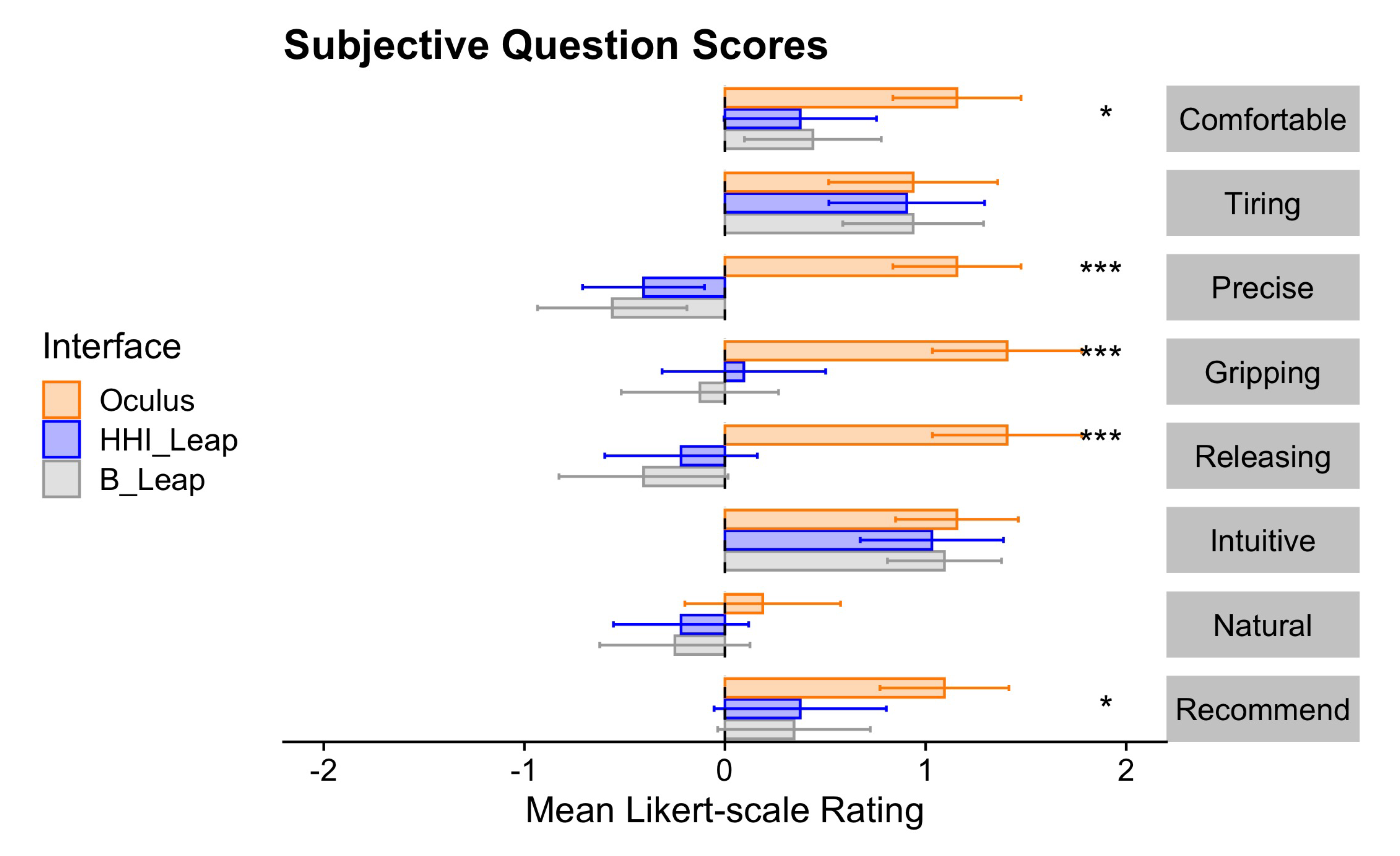

3.2.2. 5-Point Likert Scale Questionnaire Items

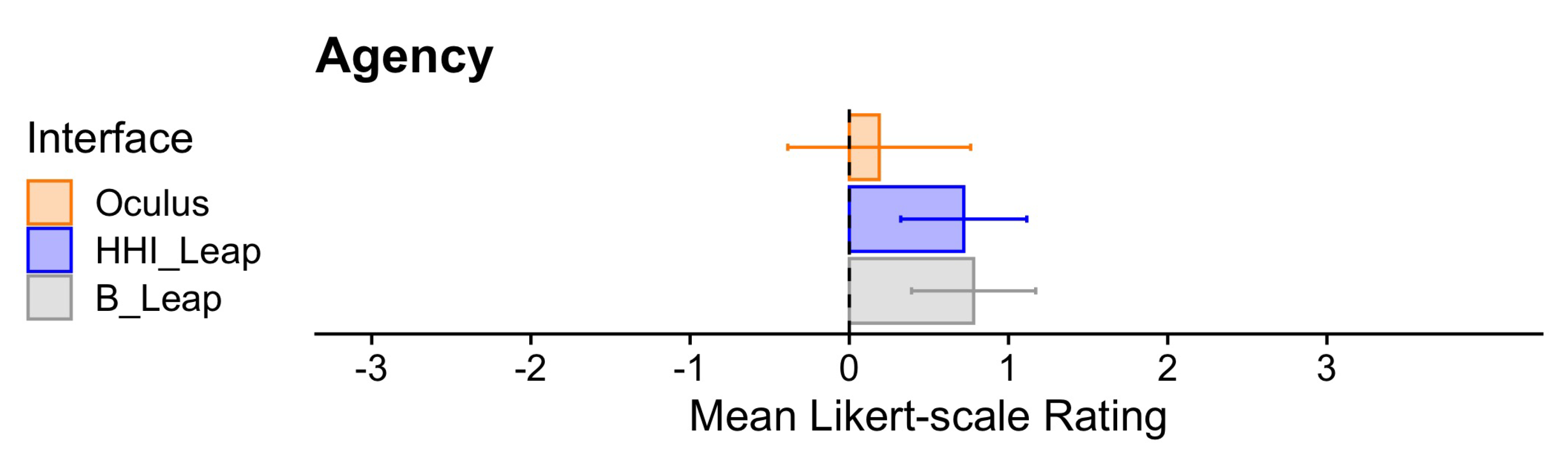

3.2.3. Agency

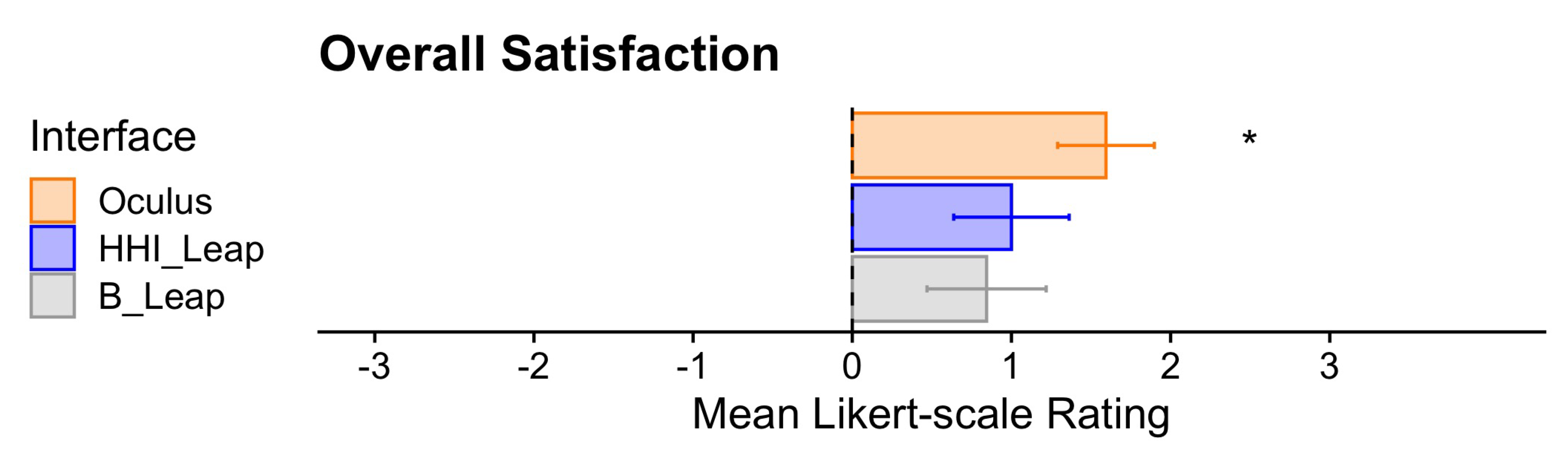

3.2.4. Overall Satisfaction

3.2.5. Overall Preference

4. Discussion

4.1. Comparison of Hand Tracking to the Traditional Controller

4.2. Comparison of Our Hand Tracking Prototype (HHI_Leap) to the Basic Leap API (B_Leap)

4.3. Limitations and Recommendations for Future Research

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Meaning |
|---|---|
| AR | Augmented reality |
| HMD | Head mounted display |
| MDPI | Multidisciplinary Digital Publishing Institute |
| MR | Mixed reality |
| VR | Virtual reality |

Appendix A. Questionnaire Items

Appendix A.1. English

Appendix A.1.1. 5-Point Likert Questions

- Using this interface was comfortable

- This interface was precise

- This interface was intuitive

- This interface was tiring for the hand (reverse scored)

- The gripping of objects gave me a lot of trouble (reverse scored)

- The releasing of objects gave me a lot of trouble (reverse scored)

- The gripping and releasing of objects was very natural

- I would recommend this interface to friends
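Three of the items above are marked as reverse scored. A minimal sketch of that step, assuming the usual convention that a response x on a 1-to-5 scale becomes 6 − x so that higher values are always more favorable; the short item keys are hypothetical labels.

```python
# Hypothetical short keys for the three items marked "(reverse scored)".
REVERSED_ITEMS = {"tiring", "gripping", "releasing"}


def score_items(raw, scale_max=5):
    """Return responses with reverse-scored items flipped (x -> scale_max + 1 - x)."""
    return {
        name: (scale_max + 1 - value) if name in REVERSED_ITEMS else value
        for name, value in raw.items()
    }
```

For example, a response of 2 to "This interface was tiring for the hand" is scored as 4.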

Appendix A.1.2. 7-Point Likert Scale Questions

- Agency: I felt like I controlled the virtual representation of the hand as if it was part of my own body.

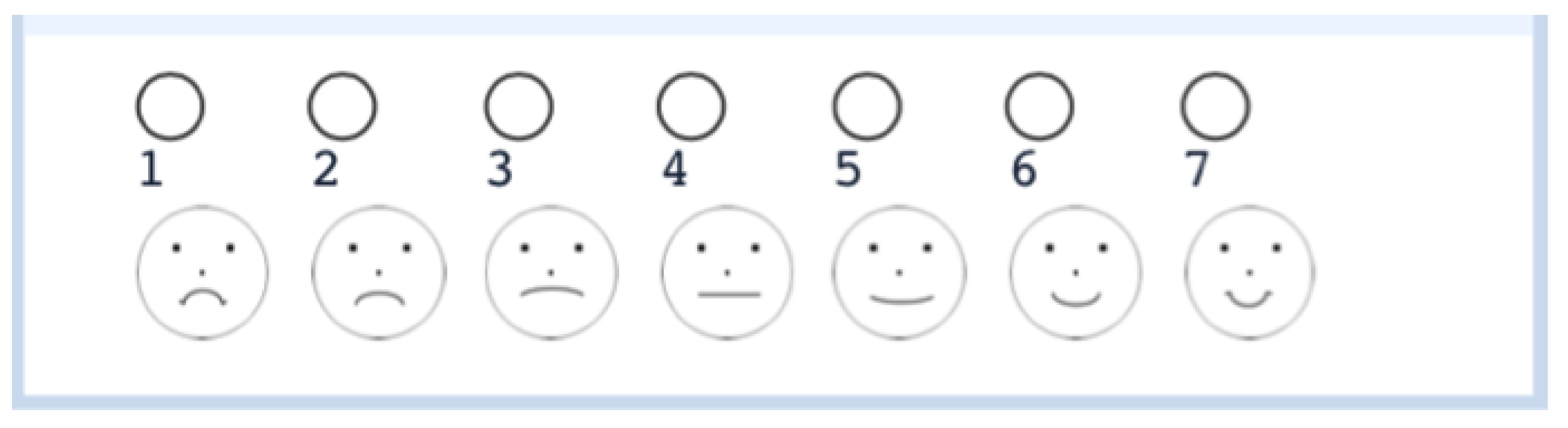

- Overall satisfaction: Which of the following faces best represents your overall satisfaction with using these interfaces? (Figure A1).

Appendix A.2. German

Appendix A.2.1. 5-Point Likert Questions

- Die Benutzung dieser Interaktionstechnologie war komfortabel

- Diese Interaktionstechnologie war präzise

- Die Interaktionstechnologie war intuitiv

- Diese Interaktionstechnologie war für die Hand ermüdend

- Das Greifen der Objekte bereitete mir große Mühe

- Das Loslassen der Objekte bereitete mir große Mühe

- Das Greifen und Loslassen der Objekte war sehr natürlich

- Ich würde diese Interaktionstechnologie Freunde empfehlen

Appendix A.2.2. 7-Point Likert Scale Questions

- Agency: Ich hatte das Gefühl, die virtuelle Darstellung der Hand so zu steuern, als ob sie Teil meines eigenen Körpers wäre.

- Overall satisfaction: Welches Gesicht entspricht am ehesten Ihre Gesamtzufriedenheit mit der Nutzung dieser Interaktionstechnologie? (Figure A1).

References

1. Kim, M.; Jeon, C.; Kim, J. A Study on Immersion and Presence of a Portable Hand Haptic System for Immersive Virtual Reality. Sensors 2017, 17, 1141.

2. Slater, M. Grand Challenges in Virtual Environments. Front. Robot. AI 2014, 1, 1–4.

3. Rizzo, A.; Koenig, S. Is clinical virtual reality ready for primetime? Neuropsychology 2017, 31, 877–899.

4. Kim, H.; Choi, Y. Performance comparison of user interface devices for controlling mining software in virtual reality environments. Appl. Sci. (Switzerland) 2019, 9, 2584.

5. Geiger, A.; Bewersdorf, I.; Brandenburg, E.; Stark, R. Visual feedback for grasping in virtual reality environments for an interface to instruct digital human models. In Advances in Intelligent Systems and Computing; Springer: Berlin/Heidelberg, Germany, 2018; Volume 607, pp. 228–239.

6. Park, J.L.; Dudchenko, P.A.; Donaldson, D.I. Navigation in real-world environments: New opportunities afforded by advances in mobile brain imaging. Front. Hum. Neurosci. 2018, 12, 1–12.

7. Hofmann, S.; Klotzsche, F.; Mariola, A.; Nikulin, V.; Villringer, A.; Gaebler, M. Decoding Subjective Emotional Arousal during a Naturalistic VR Experience from EEG Using LSTMs. In Proceedings of the 2018 IEEE International Conference on Artificial Intelligence and Virtual Reality (AIVR), Taichung, Taiwan, 10–12 December 2018; pp. 128–131.

8. Tromp, J.; Peeters, D.; Meyer, A.S.; Hagoort, P. The combined use of virtual reality and EEG to study language processing in naturalistic environments. Behav. Res. Methods 2018, 50, 862–869.

9. Parsons, T.D. Virtual Reality for Enhanced Ecological Validity and Experimental Control in the Clinical, Affective and Social Neurosciences. Front. Hum. Neurosci. 2015, 9, 1–9.

10. Belger, J.; Krohn, S.; Finke, C.; Tromp, J.; Klotzsche, F.; Villringer, A.; Gaebler, M.; Chojecki, P.; Quinque, E.; Thöne-Otto, A. Immersive Virtual Reality for the Assessment and Training of Spatial Memory: Feasibility in Neurological Patients. In Proceedings of the 2019 International Conference on Virtual Rehabilitation (ICVR), Tel Aviv, Israel, 21–24 July 2019; pp. 21–24.

11. Massetti, T.; da Silva, T.D.; Crocetta, T.B.; Guarnieri, R.; de Freitas, B.L.; Bianchi Lopes, P. The Clinical Utility of Virtual Reality in Neurorehabilitation: A Systematic Review. J. Cent. Nerv. Syst. Dis. 2018, 10.

12. Pedroli, E.; Greci, L.; Colombo, D.; Serino, S.; Cipresso, P.; Arlati, S.; Mondellini, M.; Boilini, L.; Giussani, V.; Goulene, K.; et al. Characteristics, Usability, and Users Experience of a System Combining Cognitive and Physical Therapy in a Virtual Environment: Positive Bike. Sensors 2018, 18, 2343.

13. McMahan, R.P.; Lai, C.; Pal, S.K. Interaction Fidelity: The Uncanny Valley of Virtual Reality Interactions. In Virtual, Augmented and Mixed Reality; Lackey, S., Shumaker, R., Eds.; Springer International Publishing: Cham, Switzerland, 2016; pp. 59–70.

14. Weichert, F.; Bachmann, D.; Rudak, B.; Fisseler, D. Analysis of the accuracy and robustness of the leap motion controller. Sensors 2013, 13, 6380–6393.

15. Vosinakis, S.; Koutsabasis, P. Evaluation of visual feedback techniques for virtual grasping with bare hands using Leap Motion and Oculus Rift. Virtual Real. 2018, 22, 47–62.

16. Argelaguet, F.; Hoyet, L.; Trico, M.; Lécuyer, A. The role of interaction in virtual embodiment: Effects of the virtual hand representation. In Proceedings of the 2016 IEEE Virtual Reality (VR), Greenville, SC, USA, 19–23 March 2016.

17. Wozniak, P.; Vauderwange, O.; Mandal, A.; Javahiraly, N.; Curticapean, D. Possible applications of the LEAP motion controller for more interactive simulated experiments in augmented or virtual reality. Opt. Educ. Outreach IV 2016, 9946, 2016.

18. Zhang, Z. Microsoft kinect sensor and its effect. IEEE Multimed. 2012, 19, 4–10.

19. Benda, B.; Esmaeili, S.; Ragan, E.D. Determining Detection Thresholds for Fixed Positional Offsets for Virtual Hand Remapping in Virtual Reality. Available online: https://www.cise.ufl.edu/~eragan/papers/Benda_ISMAR2020.pdf (accessed on 29 November 2020).

20. Norman, D. The Design of Everyday Things; Basic Books: New York, NY, USA, 2013.

21. Brooke, J. SUS: A quick and dirty usability scale. In Usability Evaluation in Industry; Jordan, P.T.B.M.I., Weerdmeester, B.A., Eds.; Taylor and Francis: London, UK, 1996; pp. 189–194.

22. Caspar, E.A.; Cleeremans, A.; Haggard, P. The relationship between human agency and embodiment. Conscious. Cogn. 2015, 33, 226–236.

23. Kunin, T. The Construction of a New Type of Attitude Measure. Pers. Psychol. 1955, 8, 65–77.

24. Holm, S. A simple sequentially rejective multiple test procedure. Scand. J. Stat. 1979, 6, 65–70.

25. Norman, G. Likert scales, levels of measurement and the “laws” of statistics. Adv. Health Sci. Educ. 2010, 15, 625–632.

26. Carifio, J.; Perla, R. Resolving the 50-Year Debate around Using and Misusing Likert Scales. Med. Educ. 2008, 42, 1150–1152.

27. Meek, G.; Ozgur, C.; Dunning, K. Comparison of the t vs. Wilcoxon Signed-Rank test for likert scale data and small samples. J. Appl. Stat. Methods 2007, 6, 91–106.

28. Bangor, A.; Kortum, P.; Miller, J. Determining What Individual SUS Scores Mean: Adding an Adjective Rating Scale. J. Usability Stud. 2009, 4, 114–123.

29. Spurlock, J.; Ravasz, J. Hand Tracking: Designing a New Input Modality. Presented at the Oculus Connect Conference in San Jose, CA, USA; Available online: https://www.youtube.com/watch?v=or5M01Pcy5U (accessed on 13 January 2020).

30. Myers, C.M.; Furqan, A.; Zhu, J. The impact of user characteristics and preferences on performance with an unfamiliar voice user interface. In Proceedings of the Conference on Human Factors in Computing Systems-Proceedings (Paper 47), Glasgow, Scotland, UK, 4–9 May 2019; pp. 1–9.

31. Krugwasser, R.; Harel, E.V.; Salomon, R. The boundaries of the self: The sense of agency across different sensorimotor aspects. J. Vis. 2019, 19, 1–11.

32. Haggard, P.; Tsakiris, M. The experience of agency: Feelings, judgments, and responsibility. Curr. Dir. Psychol. Sci. 2009, 18, 242–246.

33. Mathur, M.B.; Reichling, D.B. Navigating a social world with robot partners: A quantitative cartography of the Uncanny Valley. Cognition 2016, 146, 22–32.

34. Mori, M. The uncanny valley. IEEE Robot. Autom. 2012, 19, 98–100.

35. Zajonc, R. Mere Exposure: A Gateway to the Subliminal. Curr. Dir. Psychol. Sci. 2001, 10, 224–228.

36. Corry, M.D. Mental models and hypermedia user interface design. In AACE Educational Technology Review; Spring: Berlin/Heidelberg, Germany, 1998; pp. 20–24.

| Measure | df_n | df_d | F | p |
|---|---|---|---|---|
| Performance Measures | | | | |
| Accuracy | 2 | 62 | | < 0.001 |
| Total Time | 2 | 62 | | < 0.001 |
| Grab Time | 2 | 62 | | < 0.001 |
| Release Time | 2 | 62 | | < 0.001 |
| Accidental Drops | 2 | 62 | | < 0.001 |
| Subjective Measures | | | | |
| SUS | 2 | 62 | | 0.002 |
| Comfortable | 2 | 62 | | < 0.001 |
| Precise | 2 | 62 | | < 0.001 |
| Intuitive | 2 | 62 | | 0.821 |
| Tiring | 2 | 62 | | 0.989 |
| Gripping | 2 | 62 | | < 0.001 |
| Releasing | 2 | 62 | | < 0.001 |
| Natural | 2 | 62 | | 0.209 |
| Recommend | 2 | 62 | | 0.003 |
| Agency | 2 | 62 | | 0.21 |
| Satisfaction | 2 | 62 | | 0.005 |
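As a consistency check on the degrees of freedom in the table above (a reading of the reported values, not a statement taken from the text): assuming a one-way repeated-measures ANOVA over the k = 3 interfaces with no sphericity correction applied to the degrees of freedom, the reported values imply n = 32 participants.

```latex
\begin{align*}
df_n &= k - 1 = 3 - 1 = 2, \\
df_d &= (k - 1)(n - 1) = 2\,(n - 1) = 62 \;\Rightarrow\; n = 32 .
\end{align*}
```

This agrees with the df = 31 = n − 1 reported for the paired comparisons between interfaces.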

| Measure | HHI_Leap M | HHI_Leap SD | Oculus M | Oculus SD | t | df | p (Holm) |
|---|---|---|---|---|---|---|---|
| Performance Measures | | | | | | | |
| Accuracy (m) | 0.0158 | 0.0081 | 0.0071 | 0.0038 | 7.1268 | 31 | < 0.001 |
| Total Time (s) | 3.5145 | 1.3465 | 2.2705 | 0.753 | 7.8842 | 31 | < 0.001 |
| Grab Time (s) | 1.573 | 0.6522 | 0.9462 | 0.2479 | 6.9393 | 31 | < 0.001 |
| Release Time (s) | 1.9416 | 0.8561 | 1.3243 | 0.5745 | 6.7013 | 31 | < 0.001 |
| Accidental Drops (#) | 2.4062 | 2.0924 | 0.125 | 0.336 | 6.2427 | 31 | < 0.001 |
| Subjective Measures | | | | | | | |
| SUS | 70.4688 | 18.8418 | 82.3438 | 14.7416 | −2.6887 | 31 | 0.022 |
| Comfortable | 3.375 | 1.1 | 4.156 | 0.92 | −3.0886 | 31 | 0.008 |
| Precise | 2.594 | 0.875 | 4.156 | 0.92 | −6.4689 | 31 | < 0.001 |
| Gripping | 3.094 | 1.174 | 4.406 | 1.073 | −5.2133 | 31 | < 0.001 |
| Releasing | 2.781 | 1.099 | 4.406 | 1.073 | −6.1393 | 31 | < 0.001 |
| Recommend | 3.375 | 1.238 | 4.094 | 0.928 | −2.6592 | 31 | 0.025 |
| Satisfaction | 5 | 1.047 | 5.594 | 0.875 | −2.4615 | 31 | 0.039 |

| Measure | HHI_Leap M | HHI_Leap SD | B_Leap M | B_Leap SD | t | df | p (Holm) |
|---|---|---|---|---|---|---|---|
| Performance Measures | | | | | | | |
| Accuracy (m) | 0.0158 | 0.0081 | 0.0154 | 0.0052 | 0.2934 | 31 | 0.771 |
| Total Time (s) | 3.5145 | 1.3465 | 3.5661 | 1.5687 | −0.2926 | 31 | 0.772 |
| Grab Time (s) | 1.573 | 0.6522 | 1.3543 | 0.4097 | 2.2529 | 31 | 0.032 |
| Release Time (s) | 1.9416 | 0.8561 | 2.2118 | 1.2365 | −2.3171 | 31 | 0.027 |
| Accidental Drops (#) | 2.4062 | 2.0924 | 4.75 | 2.6761 | −3.8627 | 31 | < 0.001 |
| Subjective Measures | | | | | | | |
| SUS | 70.4688 | 18.8418 | 69.9219 | 18.8418 | −0.1871 | 31 | 0.853 |
| Comfortable | 3.375 | 1.1 | 3.438 | 0.982 | −0.3117 | 31 | 0.757 |
| Precise | 2.594 | 0.875 | 2.438 | 1.076 | 0.5958 | 31 | 0.556 |
| Gripping | 3.094 | 1.174 | 2.875 | 1.129 | 0.9088 | 31 | 0.37 |
| Releasing | 2.781 | 1.099 | 2.594 | 1.214 | 0.641 | 31 | 0.526 |
| Recommend | 3.375 | 1.238 | 3.344 | 1.096 | 0.1664 | 31 | 0.869 |
| Satisfaction | 5 | 1.047 | 4.844 | 1.081 | 0.776 | 31 | 0.444 |
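The pairwise tables report Holm-adjusted p values [24]. Which tests were grouped into one correction family is not stated here, so the following is only a generic sketch of the Holm step-down adjustment; the function name and the example inputs are hypothetical and are not values from this study.

```python
def holm_adjust(p_values):
    """Return Holm-adjusted p values in the same order as the input."""
    m = len(p_values)
    # Indices of the p values from smallest to largest.
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, idx in enumerate(order):
        # The smallest p is multiplied by m, the next by m - 1, and so on.
        candidate = min(1.0, (m - rank) * p_values[idx])
        # Enforce monotonicity: an adjusted p never drops below an earlier one.
        running_max = max(running_max, candidate)
        adjusted[idx] = running_max
    return adjusted
```

With three raw p values of 0.01, 0.04 and 0.03, the adjusted values are approximately 0.03, 0.06 and 0.06.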

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Masurovsky, A.; Chojecki, P.; Runde, D.; Lafci, M.; Przewozny, D.; Gaebler, M. Controller-Free Hand Tracking for Grab-and-Place Tasks in Immersive Virtual Reality: Design Elements and Their Empirical Study. Multimodal Technol. Interact. 2020, 4, 91. https://doi.org/10.3390/mti4040091
