Guidance in Cinematic Virtual Reality-Taxonomy, Research Status and Challenges

Abstract

:1. Introduction

2. Terms and Insights from Various Research Fields

2.1. Attention Theory

2.2. Basics about Physiology of the Eyes

3. Guiding Methods in Literature

3.1. Diegetic Methods

3.2. Salience Modulation Technique (SMT)

3.3. Blurring

3.4. Stylistic Rendering

3.5. Subtle Gaze Direction (SGD) with Eye Tracking

3.6. Subtle Gaze Direction (SGD) with High Frequent Flickers

3.7. Off-screen Indicators (Halo, Edge)

3.8. Forced Rotation of the user (SwiVRChair)

3.9. Forced Rotation of the VR world

3.10. Forced Rotation via Cutting

3.11. Haptic Cues

4. Taxonomy

4.1. Diegetic and Non-Diegetic

4.2. Visual, Auditive and Haptic

4.3. On- and Off-screen

4.4. World- and Screen-referenced

4.5. Direct and Indirect Cues

4.6. Subtle and Overt

4.7. Forced by System, Forced by Reflex and Voluntary

4.8. Usage of the Taxonomy for CVR Guiding Methods from Literature

5. Methods for CVR Adapted from Guiding in Traditional Movies and Images (2D)

5.1. Diegetic Methods Diegetic, Visual/Auditive, on-Screen/off-Screen, World-Referenced, Subtle, Voluntary

- Diegetic auditive cues: Sounds motivate the user to search for the source of the sound and therefore to change the viewing direction [22].

5.2. Image Modulation non-Diegetic, Visual, on-Screen, world-Referenced, Subtle/overt, Voluntary

5.3. Overlays non-Diegetic, Visual, on-Screen/off-Screen, World/Screen-Referenced, Overt, Voluntary

5.4. Subtle Gaze Direction non-Diegetic, Visual, on-Screen/off-Screen, World-Referenced, Subtle, Voluntary

- A method developed for images works in a static environment. The remaining part of the picture does not change. This is not the case for videos.

- A method developed for clear test environments sometimes might not work for complex images or videos with a lot of objects competing for attention.

- A method developed for a monitor has to be extended for the case where the target object is not in the FoV.

- A method using flickering must take into account the frame rate of the movie and the HMD.

6. Methods for CVR adapted from VR and AR (3D)

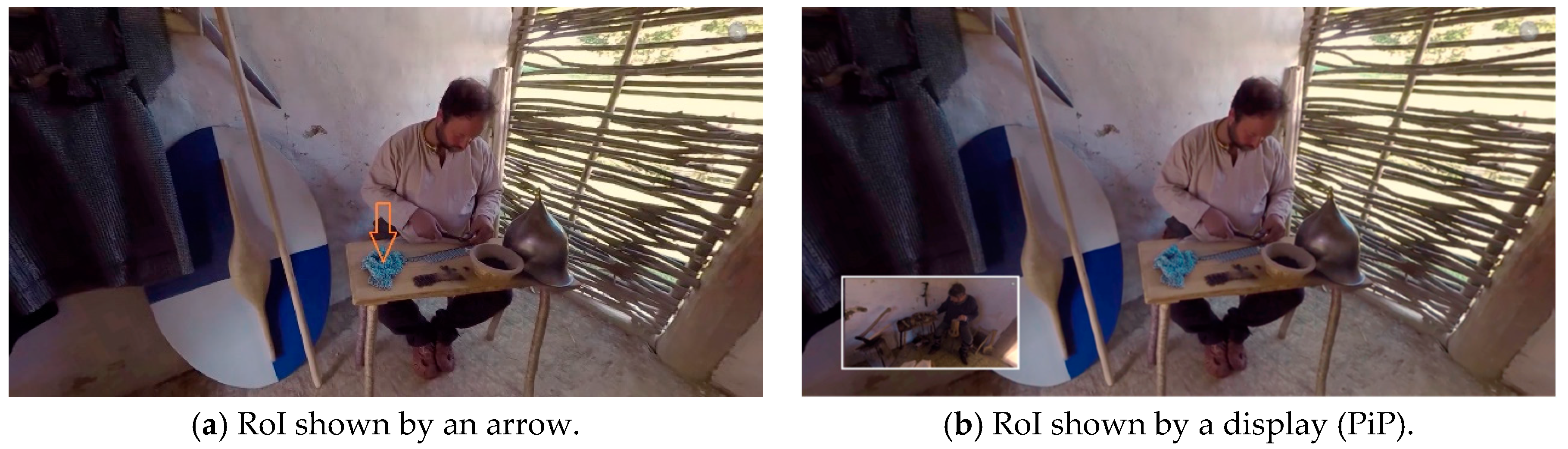

6.1. Arrows and Similar Signs non-Diegetic, Visual, on-Screen/off-Screen, World-Referenced/Screen-Referenced, Overt, Voluntary

6.2. Stylistic Rendering non-Diegetic, Visual, on-Screen, World-Referenced, subtle/Overt, Voluntary

6.3. Picture-in-Picture Displays non-Diegetic, Visual, off-Screen, Screen-Referenced, Overt, Voluntary

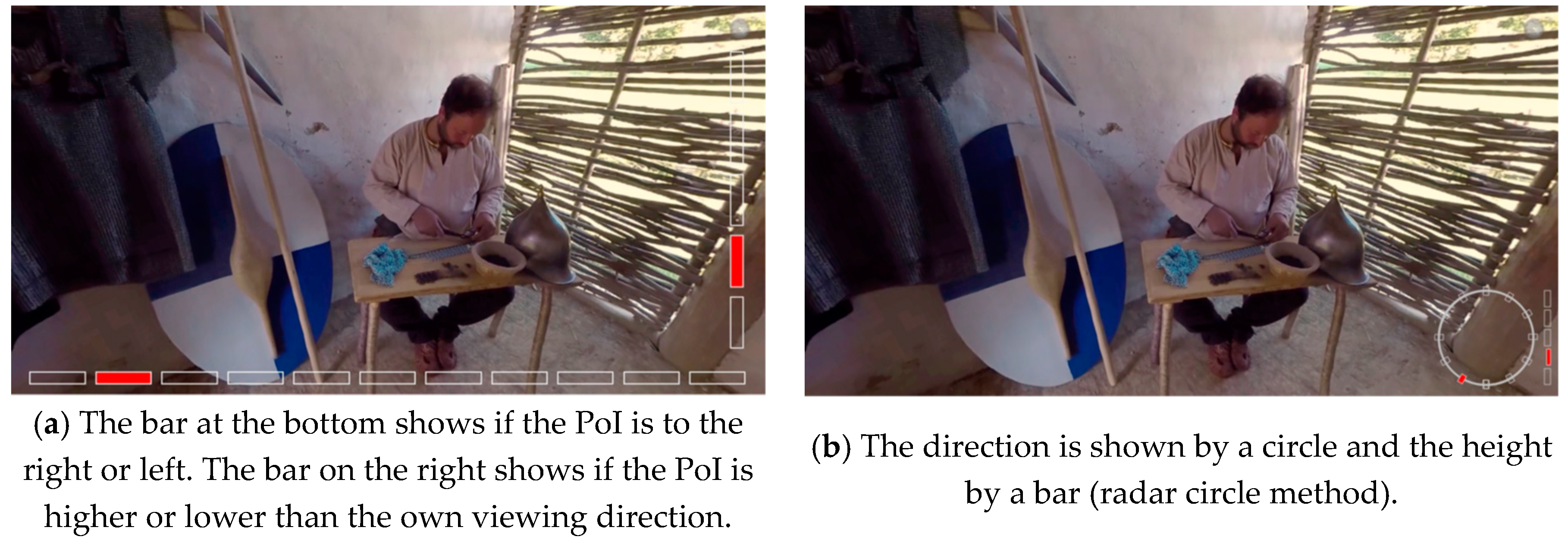

6.4. Radar non-Diegetic, Visual, off-Screen, Screen-Referenced, Overt, Voluntary

7. Practical Considerations when Applying the Taxonomy

- How fast should the method work (tempo)?

- Is presence more important than the effectiveness of the method (effectiveness)?

- Is it important that the viewer remembers the target (recall rate)?

- Are there more than one RoI simultaneously (number of RoIs)?

- Is there a problem if indicators (arrows, halo) cover movie content (covering)?

- How complex is the content (visual or auditive clutter)?

- Should the guiding method be part of the CVR movie (experience)?

8. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- De Abreu, A.; Ozcinar, C.; Smolic, A. Look around you: Saliency maps for omnidirectional images in VR applications. In Proceedings of the 2017 Ninth International Conference on Quality of Multimedia Experience (QoMEX), Erfurt, Germany, 31 May–2 June 2017. [Google Scholar]

- Petry, B.; Huber, J. Towards effective interaction with omnidirectional videos using immersive virtual reality headsets. In Proceedings of the 6th Augmented Human International Conference on—AH ’15, Singapore, 9–11 March 2015; ACM Press: New York, NY, USA, 2015; pp. 217–218. [Google Scholar]

- 5 Lessons Learned While Making Lost | Oculus. Available online: https://www.oculus.com/story-studio/blog/5-lessons-learned-while-making-lost/ (accessed on 10 December 2018).

- Tse, A.; Jennett, C.; Moore, J.; Watson, Z.; Rigby, J.; Cox, A.L. Was I There? In Proceedings of the 2017 CHI Conference Extended Abstracts on Human Factors in Computing Systems—CHI EA ’17, Denver, CO, USA, 6–11 May 2017; ACM Press: New York, NY, USA, 2017; pp. 2967–2974. [Google Scholar]

- MacQuarrie, A.; Steed, A. Cinematic virtual reality: Evaluating the effect of display type on the viewing experience for panoramic video. In Proceedings of the 2017 IEEE Virtual Reality (VR), Los Angeles, CA, USA, 18–22 March 2017; pp. 45–54. [Google Scholar]

- Vosmeer, M.; Schouten, B. Interactive cinema: Engagement and interaction. In Proceedings of the International Conference on Interactive Digital Storytelling, Singapore, 3–6 November 2014; pp. 140–147. [Google Scholar]

- Rothe, S.; Tran, K.; Hußmann, H. Dynamic Subtitles in Cinematic Virtual Reality. In Proceedings of the 2018 ACM International Conference on Interactive Experiences for TV and Online Video—TVX ’18; ACM Press: New York, NY, USA, 2018; pp. 209–214. [Google Scholar]

- Liu, D.; Bhagat, K.; Gao, Y.; Chang, T.-W.; Huang, R. The Potentials and Trends of VR in Education: A Bibliometric Analysis on Top Research Studies in the last Two decades. In Augmented and Mixed Realities in Education; Springer: Singapore, 2017; pp. 105–113. [Google Scholar]

- Howard, S.; Serpanchy, K.; Lewin, K. Virtual reality content for higher education curriculum. In Proceedings of the VALA, Melbourne, Australia, 13–15 February 2018. [Google Scholar]

- Stojšić, I.; Ivkov-Džigurski, A.; Maričić, O. Virtual Reality as a Learning Tool: How and Where to Start with Immersive Teaching. In Didactics of Smart Pedagogy; Springer International Publishing: Cham, Switzerland, 2019; pp. 353–369. [Google Scholar]

- Merchant, Z.; Goetz, E.T.; Cifuentes, L.; Keeney-Kennicutt, W.; Davis, T.J. Effectiveness of virtual reality-based instruction on students’ learning outcomes in K-12 and higher education: A meta-analysis. Comput. Educ. 2014, 70, 29–40. [Google Scholar] [CrossRef]

- Bailey, R.; McNamara, A.; Costello, A.; Sridharan, S.; Grimm, C. Impact of subtle gaze direction on short-term spatial information recall. Proc. Symp. Eye Track. Res. Appl. 2012, 67–74. [Google Scholar] [CrossRef]

- Rothe, S.; Althammer, F.; Khamis, M. GazeRecall: Using Gaze Direction to Increase Recall of Details in Cinematic Virtual Reality. In Proceedings of the 11th International Conference on Mobile and Ubiquitous Multimedia (MUM’18), Cairo, Egypt, 25–28 November 2018; ACM: Kairo, Egypt, 2018. [Google Scholar]

- Rothe, S.; Montagud, M.; Mai, C.; Buschek, D.; Hußmann, H. Social Viewing in Cinematic Virtual Reality: Challenges and Opportunities. In Interactive Storytelling. ICIDS 2018; Lecture Notes in Computer Science; Springer: Cham, Switzerland, 2018; Volume 11318, Presented at the 5 December 2018. [Google Scholar]

- Subramanian, R.; Shankar, D.; Sebe, N.; Melcher, D. Emotion modulates eye movement patterns and subsequent memory for the gist and details of movie scenes. J. Vis. 2014, 14, 31. [Google Scholar] [CrossRef] [PubMed]

- Dorr, M.; Vig, E.; Barth, E. Eye movement prediction and variability on natural video data sets. Vis. Cogn. 2012, 20, 495–514. [Google Scholar] [CrossRef]

- Smith, T.J. Watching You Watch Movies: Using Eye Tracking to Inform Cognitive Film Theory. In Psychocinematics; Oxford University Press: New York, NY, USA, 2013; pp. 165–192. [Google Scholar]

- Brown, A.; Sheikh, A.; Evans, M.; Watson, Z. Directing attention in 360-degree video. In Proceedings of the IBC 2016 Conference; Institution of Engineering and Technology: Amsterdam, The Netherlands, 2016. [Google Scholar]

- Danieau, F.; Guillo, A.; Dore, R. Attention guidance for immersive video content in head-mounted displays. In Proceedings of the IEEE Virtual Reality, Los Angeles, CA, USA, 18–22 March 2017. [Google Scholar]

- Lin, Y.-C.; Chang, Y.-J.; Hu, H.-N.; Cheng, H.-T.; Huang, C.-W.; Sun, M. Tell Me Where to Look: Investigating Ways for Assisting Focus in 360° Video. In Proceedings of the 2017 CHI Conference on Human Factors in Computing Systems—CHI ’17; ACM Press: New York, NY, USA, 2017; pp. 2535–2545. [Google Scholar]

- Nielsen, L.T.; Møller, M.B.; Hartmeyer, S.D.; Ljung, T.C.M.; Nilsson, N.C.; Nordahl, R.; Serafin, S. Missing the point: An exploration of how to guide users’ attention during cinematic virtual reality. In Proceedings of the 22nd ACM Conference on Virtual Reality Software and Technology—VRST ’16; ACM Press: New York, NY, USA, 2016; pp. 229–232. [Google Scholar]

- Rothe, S.; Hußmann, H. Guiding the Viewer in Cinematic Virtual Reality by Diegetic Cues. In Proceedings of the International Conference on Augmented Reality, Virtual Reality and Computer Graphics; Springer: Cham, Switzerland, 2018; pp. 101–117. [Google Scholar]

- Frintrop, S.; Rome, E.; Christensen, H.I. Computational visual attention systems and their cognitive foundations. ACM Trans. Appl. Percept. 2010, 7, 1–39. [Google Scholar] [CrossRef]

- Borji, A.; Itti, L. State-of-the-art in visual attention modeling. IEEE Trans. Pattern Anal. Mach. Intell. 2013, 35, 185–207. [Google Scholar] [CrossRef] [PubMed]

- Smith, T. Edit blindness: The relationship between attention and global change blindness in dynamic scenes. J. Eye Mov. Res. 2008, 2, 1–17. [Google Scholar]

- Posner, M.I. Orienting of attention. Q. J. Exp. Psychol. 1980, 32, 3–25. [Google Scholar] [CrossRef] [PubMed]

- Ward, L. Scholarpedia: Attention. Scholarpedia 2008, 3, 1538. [Google Scholar] [CrossRef]

- Yarbus, A.L. Eye Movements and Vision; Springer: Boston, MA, USA, 1967. [Google Scholar]

- Treisman, A.M.; Gelade, G. A feature-integration theory of attention. Cogn. Psychol. 1980, 12, 97–136. [Google Scholar] [CrossRef]

- Healey, C.G.; Booth, K.S.; Enns, J.T. High-speed visual estimation using preattentive processing. ACM Trans. Comput. Interact. 1996, 3, 107–135. [Google Scholar] [CrossRef]

- Wolfe, J.M.; Horowitz, T.S. Five factors that guide attention in visual search. Nat. Hum. Behav. 2017, 1, 0058. [Google Scholar] [CrossRef]

- Wolfe, J.M.; Horowitz, T.S. What attributes guide the deployment of visual attention and how do they do it? Nat. Rev. Neurosci. 2004, 5, 495–501. [Google Scholar] [CrossRef]

- Tyler, C.W.; Hamer, R.D. Eccentricity and the Ferry–Porter law. J. Opt. Soc. Am. A 1993, 10, 2084. [Google Scholar] [CrossRef]

- Rovamo, J.; Raninen, A. Critical flicker frequency as a function of stimulus area and luminance at various eccentricities in human cone vision: A revision of granit-harper and ferry-porter laws. Vis. Res. 1988, 28, 785–790. [Google Scholar] [CrossRef]

- Grimes, J.D. Effects of Patterning on Flicker Frequency. In Proceedings of the Human Factors Society Annual Meeting; SAGE Publications: Los Angeles, CA, USA, 1983; pp. 46–50. [Google Scholar]

- Waldin, N.; Waldner, M.; Viola, I. Flicker Observer Effect: Guiding Attention Through High Frequency Flicker in Images. Comput. Graph. Forum 2017, 36, 467–476. [Google Scholar] [CrossRef]

- Gugenheimer, J.; Wolf, D.; Haas, G.; Krebs, S.; Rukzio, E. SwiVRChair: A Motorized Swivel Chair to Nudge Users’ Orientation for 360 Degree Storytelling in Virtual Reality. In Proceedings of the 2016 CHI Conference on Human Factors in Computing Systems—CHI ’16; ACM Press: New York, NY, USA, 2016; pp. 1996–2000. [Google Scholar]

- Chang, H.-Y.; Tseng, W.-J.; Tsai, C.-E.; Chen, H.-Y.; Peiris, R.L.; Chan, L. FacePush: Introducing Normal Force on Face with Head-Mounted Displays. In Proceedings of the 31st Annual ACM Symposium on User Interface Software and Technology—UIST ’18; ACM Press: New York, NY, USA, 2018; pp. 927–935. [Google Scholar]

- Sassatelli, L.; Pinna-Déry, A.-M.; Winckler, M.; Dambra, S.; Samela, G.; Pighetti, R.; Aparicio-Pardo, R. Snap-changes: A Dynamic Editing Strategy for Directing Viewer’s Attention in Streaming Virtual Reality Videos. In Proceedings of the 2018 International Conference on Advanced Visual Interfaces—AVI ’18; ACM Press: New York, NY, USA, 2018; pp. 1–5. [Google Scholar]

- Gruenefeld, U.; Stratmann, T.C.; El Ali, A.; Boll, S.; Heuten, W. RadialLight. In Proceedings of the 20th International Conference on Human-Computer Interaction with Mobile Devices and Services—MobileHCI ’18, Barcelona, Spain, 3–6 September 2018; ACM Press: New York, NY, USA, 2018; pp. 1–6. [Google Scholar]

- Lin, Y.-T.; Liao, Y.-C.; Teng, S.-Y.; Chung, Y.-J.; Chan, L.; Chen, B.-Y. Outside-In: Visualizing Out-of-Sight Regions-of-Interest in a 360 Video Using Spatial Picture-in-Picture Previews. In Proceedings of the 30th Annual ACM Symposium on User Interface Software and Technology—UIST ’17; ACM Press: New York, NY, USA, 2017; pp. 255–265. [Google Scholar]

- Cole, F.; DeCarlo, D.; Finkelstein, A.; Kin, K.; Morley, K.; Santella, A. Directing gaze in 3D models with stylized focus. Proc. 17th Eurographics Conf. Render. Tech. 2006, 377–387. [Google Scholar] [CrossRef]

- Tanaka, R.; Narumi, T.; Tanikawa, T.; Hirose, M. Attracting User’s Attention in Spherical Image by Angular Shift of Virtual Camera Direction. In Proceedings of the 3rd ACM Symposium on Spatial User Interaction—SUI ’15; ACM Press: New York, NY, USA, 2015; pp. 61–64. [Google Scholar]

- Mendez, E.; Feiner, S.; Schmalstieg, D. Focus and Context in Mixed Reality by Modulating First Order Salient Features. In Proceedings of the International Symposium on Smart GraphicsL; Springer: Berlin/Heidelberg, Germany, 2010; pp. 232–243. [Google Scholar]

- Veas, E.E.; Mendez, E.; Feiner, S.K.; Schmalstieg, D. Directing attention and influencing memory with visual saliency modulation. In Proceedings of the 2011 Annual Conference on Human Factors in Computing Systems—CHI ’11; ACM Press: New York, NY, USA, 2011; p. 1471. [Google Scholar]

- Hoffmann, R.; Baudisch, P.; Weld, D.S. Evaluating visual cues for window switching on large screens. In Proceeding of the Twenty-Sixth Annual CHI Conference on Human Factors in Computing Systems—CHI ’08; ACM Press: New York, NY, USA, 2008; p. 929. [Google Scholar]

- Renner, P.; Pfeiffer, T. Attention Guiding Using Augmented Reality in Complex Environments. In Proceeding of the 2018 IEEE Conference on Virtual Reality and 3D User Interfaces (VR); IEEE: Reutlingen, Germany, 2018; pp. 771–772. [Google Scholar]

- Perea, P.; Morand, D.; Nigay, L. [POSTER] Halo3D: A Technique for Visualizing Off-Screen Points of Interest in Mobile Augmented Reality. In Proceeding of the 2017 IEEE International Symposium on Mixed and Augmented Reality (ISMAR-Adjunct), Nantes, France, 9–13 October 2017; pp. 170–175. [Google Scholar]

- Gruenefeld, U.; El Ali, A.; Boll, S.; Heuten, W. Beyond Halo and Wedge. In Proceedings of the 20th International Conference on Human-Computer Interaction with Mobile Devices and Services—MobileHCI ’18; ACM Press: New York, NY, USA, 2018; pp. 1–11. [Google Scholar]

- Gruenefeld, U.; Ennenga, D.; El Ali, A.; Heuten, W.; Boll, S. EyeSee360. In Proceedings of the 5th Symposium on Spatial User Interaction—SUI ’17; ACM Press: New York, NY, USA, 2017; pp. 109–118. [Google Scholar]

- Bork, F.; Schnelzer, C.; Eck, U.; Navab, N. Towards Efficient Visual Guidance in Limited Field-of-View Head-Mounted Displays. IEEE Trans. Vis. Comput. Graph. 2018, 24, 2983–2992. [Google Scholar] [CrossRef] [PubMed]

- Siu, T.; Herskovic, V. SidebARs: Improving awareness of off-screen elements in mobile augmented reality. In Proceedings of the 2013 Chilean Conference on Human—Computer Interaction—ChileCHI ’13; ACM Press: New York, NY, USA, 2013; pp. 36–41. [Google Scholar]

- Renner, P.; Pfeiffer, T. Attention guiding techniques using peripheral vision and eye tracking for feedback in augmented-reality-based assistance systems. In Proceedings of the 2017 IEEE Symposium on 3D User Interfaces (3DUI); IEEE: Los Angeles, CA, USA, 2017; pp. 186–194. [Google Scholar]

- Burigat, S.; Chittaro, L.; Gabrielli, S. Visualizing locations of off-screen objects on mobile devices. In Proceedings of the 8th Conference on Human-Computer Interaction with Mobile Devices and Services—MobileHCI ’06; ACM Press: New York, NY, USA, 2006; p. 239. [Google Scholar]

- Henze, N.; Boll, S. Evaluation of an off-screen visualization for magic lens and dynamic peephole interfaces. In Proceedings of the 12th International Conference on Human Computer Interaction with Mobile Devices and Services—MobileHCI ’10; ACM Press: New York, NY, USA, 2010; p. 191. [Google Scholar]

- Schinke, T.; Henze, N.; Boll, S. Visualization of off-screen objects in mobile augmented reality. In Proceedings of the 12th International Conference on Human Computer Interaction with Mobile Devices and Services—MobileHCI ’10; ACM Press: New York, NY, USA, 2010; p. 313. [Google Scholar]

- Koskinen, E.; Rakkolainen, I.; Raisamo, R. Direct retinal signals for virtual environments. In Proceedings of the 23rd ACM Symposium on Virtual Reality Software and Technology—VRST ’17; ACM Press: New York, NY, USA, 2017; pp. 1–2. [Google Scholar]

- Zellweger, P.T.; Mackinlay, J.D.; Good, L.; Stefik, M.; Baudisch, P. City lights. In Proceedings of the CHI ’03 Extended Abstracts on Human Factors in Computing Systems—CHI ’03; ACM Press: New York, NY, USA, 2003; p. 838. [Google Scholar]

- Bailey, R.; McNamara, A.; Sudarsanam, N.; Grimm, C. Subtle gaze direction. ACM Trans. Graph. 2009, 28, 1–14. [Google Scholar] [CrossRef]

- McNamara, A.; Bailey, R.; Grimm, C. Improving search task performance using subtle gaze direction. In Proceedings of the 5th Symposium on Applied Perception in Graphics and Visualization—APGV ’08; ACM Press: New York, NY, USA, 2008; p. 51. [Google Scholar]

- Grogorick, S.; Stengel, M.; Eisemann, E.; Magnor, M. Subtle gaze guidance for immersive environments. In Proceedings of the ACM Symposium on Applied Perception—SAP ’17; ACM Press: New York, NY, USA, 2017; pp. 1–7. [Google Scholar]

- McNamara, A.; Booth, T.; Sridharan, S.; Caffey, S.; Grimm, C.; Bailey, R. Directing gaze in narrative art. In Proceedings of the ACM Symposium on Applied Perception—SAP ’12; ACM Press: New York, NY, USA, 2012; p. 63. [Google Scholar]

- Lu, W.; Duh, H.B.-L.; Feiner, S.; Zhao, Q. Attributes of Subtle Cues for Facilitating Visual Search in Augmented Reality. IEEE Trans. Vis. Comput. Graph. 2014, 20, 404–412. [Google Scholar] [CrossRef] [PubMed]

- Smith, W.S.; Tadmor, Y. Nonblurred regions show priority for gaze direction over spatial blur. Q. J. Exp. Psychol. 2013, 66, 927–945. [Google Scholar] [CrossRef] [PubMed]

- Hata, H.; Koike, H.; Sato, Y. Visual Guidance with Unnoticed Blur Effect. In Proceedings of the International Working Conference on Advanced Visual Interfaces—AVI ’16; ACM Press: New York, NY, USA, 2016; pp. 28–35. [Google Scholar]

- Hagiwara, A.; Sugimoto, A.; Kawamoto, K. Saliency-based image editing for guiding visual attention. In Proceedings of the 1st International Workshop on Pervasive Eye Tracking & Mobile Eye-Based Interaction—PETMEI ’11; ACM Press: New York, NY, USA, 2011; p. 43. [Google Scholar]

- Kosek, M.; Koniaris, B.; Sinclair, D.; Markova, D.; Rothnie, F.; Smoot, L.; Mitchell, K. IRIDiuM+: Deep Media Storytelling with Non-linear Light Field Video. In Proceedings of the ACM SIGGRAPH 2017 VR Village on—SIGGRAPH ’17; ACM Press: New York, NY, USA, 2017; pp. 1–2. [Google Scholar]

- Kaul, O.B.; Rohs, M. HapticHead: A Spherical Vibrotactile Grid around the Head for 3D Guidance in Virtual and Augmented Reality. In Proceedings of the 2017 CHI Conference on Human Factors in Computing Systems—CHI ’17; ACM Press: New York, NY, USA, 2017; pp. 3729–3740. [Google Scholar]

- Rantala, J.; Kangas, J.; Raisamo, R. Directional cueing of gaze with a vibrotactile headband. In Proceedings of the 8th Augmented Human International Conference on—AH ’17; ACM Press: New York, NY, USA, 2017; pp. 1–7. [Google Scholar]

- Stratmann, T.C.; Löcken, A.; Gruenefeld, U.; Heuten, W.; Boll, S. Exploring Vibrotactile and Peripheral Cues for Spatial Attention Guidance. In Proceedings of the 7th ACM International Symposium on Pervasive Displays—PerDis ’18; ACM Press: New York, NY, USA, 2018; pp. 1–8. [Google Scholar]

- Knierim, P.; Kosch, T.; Schwind, V.; Funk, M.; Kiss, F.; Schneegass, S.; Henze, N. Tactile Drones - Providing Immersive Tactile Feedback in Virtual Reality through Quadcopters. In Proceedings of the 2017 CHI Conference Extended Abstracts on Human Factors in Computing Systems—CHI EA ’17; ACM Press: New York, NY, USA, 2017; pp. 433–436. [Google Scholar]

- Sridharan, S.; Pieszala, J.; Bailey, R. Depth-based subtle gaze guidance in virtual reality environments. In Proceedings of the ACM SIGGRAPH Symposium on Applied Perception—SAP ’15; ACM Press: New York, NY, USA, 2015; p. 132. [Google Scholar]

- Kim, Y.; Varshney, A. Saliency-guided Enhancement for Volume Visualization. IEEE Trans. Vis. Comput. Graph. 2006, 12, 925–932. [Google Scholar] [CrossRef]

- Jarodzka, H.; van Gog, T.; Dorr, M.; Scheiter, K.; Gerjets, P. Learning to see: Guiding students’ attention via a Model’s eye movements fosters learning. Learn. Instr. 2013, 25, 62–70. [Google Scholar] [CrossRef]

- Lintu, A.; Carbonell, N. Gaze Guidance through Peripheral Stimuli. 2009. Available online: https://hal.inria.fr/inria-00421151/ (accessed on 31 January 2019).

- Khan, A.; Matejka, J.; Fitzmaurice, G.; Kurtenbach, G. Spotlight. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems—CHI ’05; ACM Press: New York, NY, USA, 2005; p. 791. [Google Scholar]

- Barth, E.; Dorr, M.; Böhme, M.; Gegenfurtner, K.; Martinetz, T. Guiding the mind’s eye: Improving communication and vision by external control of the scanpath. In Human Vision and Electronic Imaging XI; International Society for Optics and Photonics: Washington, DC, USA, 2006; Volume 6057, p. 60570D. [Google Scholar]

- Dorr, M.; Dorr, M.; Vig, E.; Gegenfurtner, K.R.; Martinetz, T.; Barth, E. Eye movement modelling and gaze guidance. In Proceedings of the Fourth International Workshop on Human-Computer Conversation, Bellagio, Italy, 6–7 October 2008. [Google Scholar]

- Sato, Y.; Sugano, Y.; Sugimoto, A.; Kuno, Y.; Koike, H. Sensing and Controlling Human Gaze in Daily Living Space for Human-Harmonized Information Environments. In Human-Harmonized Information Technology; Springer: Tokyo, Japan, 2016; Volume 1, pp. 199–237. [Google Scholar]

- Vig, E.; Dorr, M.; Barth, E. Learned saliency transformations for gaze guidance. In Human Vision and Electronic Imaging XVI; International Society for Optics and Photonics: Bellingham, WA, USA, 2011; p. 78650W. [Google Scholar]

- Biocca, F.; Owen, C.; Tang, A.; Bohil, C. Attention Issues in Spatial Information Systems: Directing Mobile Users’ Visual Attention Using Augmented Reality. J. Manag. Inf. Syst. 2007, 23, 163–184. [Google Scholar] [CrossRef]

- Sukan, M.; Elvezio, C.; Oda, O.; Feiner, S.; Tversky, B. ParaFrustum. In Proceedings of the 27th Annual ACM Symposium on User Interface Software and Technology—UIST ’14; ACM Press: New York, NY, USA, 2014; pp. 331–340. [Google Scholar]

- Kosara, R.; Miksch, S.; Hauser, H. Focus+context taken literally. IEEE Comput. Graph. Appl. 2002, 22, 22–29. [Google Scholar] [CrossRef]

- Mateescu, V.A.; Bajić, I.V. Attention Retargeting by Color Manipulation in Images. In Proceedings of the 1st International Workshop on Perception Inspired Video Processing—PIVP ’14; ACM Press: New York, NY, USA, 2014; pp. 15–20. [Google Scholar]

- Delamare, W.; Han, T.; Irani, P. Designing a gaze gesture guiding system. In Procedings of the 19th International Conference on Human-Computer Interaction with Mobile Devices and Services—MobileHCI ’17; ACM Press: New York, NY, USA, 2017; pp. 1–13. [Google Scholar]

- Pausch, R.; Snoddy, J.; Taylor, R.; Watson, S.; Haseltine, E. Disney’s Aladdin. In Proceedings of the 23rd Annual Conference on Computer Graphics and Interactive Techniques—SIGGRAPH ’96; ACM Press: New York, NY, USA, 1996; pp. 193–203. [Google Scholar]

- Souriau, E. La structure de l’univers filmique et le vocabulaire de la filmologie. | Interdisciplinary Center for Narratology. Rev. Int. de Filmol. 1951, 7–8, 231–240. [Google Scholar]

- Silva, A.; Raimundo, G.; Paiva, A. Tell me that bit again… bringing interactivity to a virtual storyteller. In Proceedings of the International Conference on Virtual Storytelling, Toulouse, France, 20–21 November 2003; pp. 146–154. [Google Scholar]

- Brown, C.; Bhutra, G.; Suhail, M.; Xu, Q.; Ragan, E.D. Coordinating attention and cooperation in multi-user virtual reality narratives. In Proceedings of the 2017 IEEE Virtual Reality (VR), Los Angeles, CA, USA, 18–22 March 2017; pp. 377–378. [Google Scholar]

- Niebur, E. Saliency map. Scholarpedia 2007, 2, 2675. [Google Scholar] [CrossRef]

- Itti, L.; Koch, C.; Niebur, E. A model of saliency-based visual attention for rapid scene analysis. IEEE Trans. Pattern Anal. Mach. Intell. 1998, 20, 1254–1259. [Google Scholar] [CrossRef]

- Baudisch, P.; DeCarlo, D.; Duchowski, A.T.; Geisler, W.S. Focusing on the essential. Commun. ACM 2003, 46, 60. [Google Scholar] [CrossRef]

- Duchowski, A.T.; Cournia, N.; Murphy, H. Gaze-Contingent Displays: A Review. CyberPsychol. Behav. 2004, 7, 621–634. [Google Scholar] [CrossRef] [PubMed]

- Sitzmann, V.; Serrano, A.; Pavel, A.; Agrawala, M.; Gutierrez, D.; Masia, B.; Wetzstein, G. Saliency in VR: How Do People Explore Virtual Environments? IEEE Trans. Vis. Comput. Graph. 2018, 24, 1633–1642. [Google Scholar] [CrossRef] [PubMed]

- Baudisch, P.; Rosenholtz, R. Halo: A technique for visualizing offscreen location. In Proceedings of the Conference on Human Factors in Computing Systems CHI’03, Ft. Lauderdale, FL, USA, 5–10 April 1993. [Google Scholar]

- Gustafson, S.G.; Irani, P.P. Comparing visualizations for tracking off-screen moving targets. In Proceedings of the CHI’07 Extended Abstracts on Human Factors in Computing Systems, San Jose, CA, USA, 28 April–3 May 2007; pp. 2399–2404. [Google Scholar]

- Gustafson, S.; Baudisch, P.; Gutwin, C.; Irani, P. Wedge: Clutter-free visualization of off-screen locations. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, Florence, Italy, 5–10 April 2008; pp. 787–796. [Google Scholar]

- Gruenefeld, U.; El Ali, A.; Heuten, W.; Boll, S. Visualizing out-of-view objects in head-mounted augmented reality. In Proceedings of the 19th International Conference on Human-Computer Interaction with Mobile Devices and Services—MobileHCI ’17; ACM Press: New York, NY, USA, 2017; pp. 1–7. [Google Scholar]

- Kolasinski, E.M. Simulator Sickness in Virtual Environments. Available online: https://apps.dtic.mil/docs/citations/ADA295861 (accessed on 17 March 2019).

- Davis, S.; Nesbitt, K.; Nalivaiko, E. A Systematic Review of Cybersickness. In Proceedings of the 2014 Conference on Interactive Entertainment—IE2014; ACM Press: New York, NY, USA, 2014; pp. 1–9. [Google Scholar]

- Pavel, A.; Hartmann, B.; Agrawala, M. Shot Orientation Controls for Interactive Cinematography with 360 Video. In Proceedings of the 30th Annual ACM Symposium on User Interface Software and Technology—UIST ‘17, Québec City, QC, Canada, 22–25 October 2017; ACM Press: New York, NY, USA, 2017; pp. 289–297. [Google Scholar]

- Crossing the Line. Available online: https://www.mediacollege.com/video/editing/transition/reverse-cut.html (accessed on 10 December 2018).

- Knierim, P.; Kosch, T.; Achberger, A.; Funk, M. Flyables: Exploring 3D Interaction Spaces for Levitating Tangibles. In Proceedings of the Twelfth International Conference on Tangible, Embedded, and Embodied Interaction—TEI ’18; ACM Press: New York, NY, USA, 2018; pp. 329–336. [Google Scholar]

- Hoppe, M.; Knierim, P.; Kosch, T.; Funk, M.; Futami, L.; Schneegass, S.; Henze, N.; Schmidt, A.; Machulla, T. VRHapticDrones: Providing Haptics in Virtual Reality through Quadcopters. In Proceedings of the 17th International Conference on Mobile and Ubiquitous Multimedia—MUM 2018; ACM Press: New York, NY, USA, 2018; pp. 7–18. [Google Scholar]

- Nilsson, N.C.; Serafin, S.; Nordahl, R. Walking in Place Through Virtual Worlds. In Human-Computer Interaction. Interaction Platforms and Techniques. HCI 2016; Lecture Notes in Computer Science; Springer: Cham, Switzerland, 2016; Volume 9732. [Google Scholar]

- Suma, E.A.; Bruder, G.; Steinicke, F.; Krum, D.M.; Bolas, M. A taxonomy for deploying redirection techniques in immersive virtual environments. In Proceedings of the 2012 IEEE Virtual Reality (VR); IEEE: Costa Mesa, CA, USA, 2012; pp. 43–46. [Google Scholar]

- Bordwell, D.; Thompson, K. Film Art: An Introduction; McGraw-Hill: New York, NY, USA, 2013. [Google Scholar]

- Shah, P.; Miyake, A. The Cambridge Handbook of Visuospatial Thinking; Cambridge University Press: Cambridge, UK, 2005. [Google Scholar]

- Yeh, M.; Wickens, C.D.; Seagull, F.J. Target Cuing in Visual Search: The Effects of Conformality and Display Location on the Allocation of Visual Attention. Hum. Factors J. Hum. Factors Ergon. Soc. 1999, 41, 524–542. [Google Scholar] [CrossRef] [PubMed]

- Renner, P.; Pfeiffer, T. Evaluation of Attention Guiding Techniques for Augmented Reality-based Assistance in Picking and Assembly Tasks. In Proceedings of the 22nd International Conference on Intelligent User Interfaces Companion—IUI ’17 Companion; ACM Press: New York, NY, USA, 2017; pp. 89–92. [Google Scholar]

- Wright, R.D. Visual Attention; Oxford University Press: New York, NY, USA, 1998. [Google Scholar]

- Carrasco, M. Visual attention: The past 25 years. Vis. Res. 2011, 51, 1484–1525. [Google Scholar] [CrossRef] [PubMed]

- Itti, L.; Koch, C. Computational modelling of visual attention. Nat. Rev. Neurosci. 2001, 2, 194–203. [Google Scholar] [CrossRef] [PubMed]

- Biocca, F.; Tang, A.; Owen, C.; Xiao, F. Attention Funnel: Omnidirectional 3D Cursor for Mobile Augmented Reality Platforms. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems—CHI ’06; ACM Press: New York, NY, USA, 2006; p. 1115. [Google Scholar]

| process | bottom-up | top-down |

| attention | exogenous | endogenous |

| impulse | stimulus-driven | goal-driven |

| automatism | automatically/reflexive | voluntary |

| attentiveness | pre-attentive | attentive |

| cognition | memory-free | memory-bound |

| awareness | subtle | overt |

| cues | direct cues | indirect cues (symbolic) |

| Project/Literature | Environment | Display | Method | Name |

|---|---|---|---|---|

| (Rothe and Hußmann, 2018)[22] | CVR | HMD | diegetic | |

| (Brown et al., 2016)[18] | CVR | diegetic | ||

| (Nielsen et al., 2016)[21] | CVR | HMD | diegetic | |

| forced | ||||

| (Y.-C. Lin et al., 2017)[20] | CVR | HMD | forced | Autopilot |

| sign | Arrow | |||

| (Gugenheimer et al., 2016)[37] | CVR | HMD | forced | SwiVRChair |

| (Danieau et al., 2017)[19] | CVR | HMD | effects | Desaturation Fading |

| (Chang et al., 2018)[38] | CVR | HMD | haptic | FacePush |

| (Sassatelli et al., 2018)[39] | CVR | HMD | forced | Snap-Changes |

| (Gruenefeld et al., 2018b)[40] | CVR | HMD, LEDs | off - screen | RadialLight |

| (Y.-T. Lin et al., 2017)[41] | omnidirectional video | mobile device | off-screen | Outside-In |

| (Cole et al., 2006)[42] | 3D models | monitor, ET | modulation | stylized rendering |

| (Tanaka et al., 2015)[43] | omnidirectional images | mobile device | angular shift | |

| (Mendez et al., 2010)[44] | images | monitor | SMT | |

| (Veas et al., 2011)[45] | video | monitor | SMT | SMT |

| (Hoffmann et al., 2008)[46] | desktop windows | large screen | on-screen | frame, beam, splash |

| (Renner and Pfeiffer, 2018)[47] | AR | HoloLens | arrow flicker | SWave. 3D-path |

| (Perea et al., 2017)[48] | AR | mobile device | off-screen | Halo3D |

| (Gruenefeld et al., 2018a)[49] | AR, VR | HMD | off -screen | HaloVR WedgeVR |

| (Gruenefeld et al., 2017b)[50] | AR | HMD | off -screen | EyeSee360 |

| (Bork et al., 2018)[51] | AR | HMD | off - screen | Mirror Ball sidebARs u.a. |

| (Siu and Herskovic, 2013)[52] | AR | mobile device | off - screen | SidebARs |

| (Renner and Pfeiffer, 2017a)[53] | AR | HMD | screen-referenced word-referenced | sWave, arrow, flicker |

| (Burigat et al., 2006)[54] | maps | mobile device | off - screen | Halo, arrows |

| (Henze and Boll, 2010)[55] | AR | mobile device | off -screen | Magic Lens Peephole |

| (Schinke et al., 2010)[56] | AR | mobile device | off -screen | Mini-map 3d arrows |

| (Koskinen et al., 2017)[57] | VR | HMD, LEDs | off - screen | LEDs |

| (Zellweger et al., 2003)[58] | desktop windows | monitor | off -screen | CityLights |

| (Bailey et al., 2012)[12] | images | monitor | subtle | SGD |

| (Bailey et al., 2009)[59] | images | monitor | subtle | SGD |

| (McNamara et al., 2008a)[60] | images | monitor, ET | subtle | SGD |

| (Grogorick et al., 2017)[61] | VR | HMD, ET | subtle | SGD |

| (McNamara et al., 2012)[62] | images | monitor, ET | subtle | Art |

| (Weiquan Lu et al., 2014)[63] | AR, video | monitor | subtle | subtle cues, visual clutter |

| (Waldin et al., 2017)[36] | images | High-frequent monitor | subtle | SGD |

| (Smith and Tadmor, 2013)[64] | images | monitor | blur | |

| (Hata et al., 2016)[65] | images | monitor | subtle, blur | |

| (Hagiwara et al., 2011)[66] | images | monitor | saliency editing | |

| (Kosek et al., 2017)[67] | lightfield video | HMD | visual, auditive, haptic | IRIDiuM+ |

| (Kaul and Rohs, 2017)[68] | VR/AR | HMD | haptic | HapticHead |

| (Rantala et al., 2017)[69] | image | monitor | haptic | Headband |

| (Stratmann et al., 2018)[70] | cyber-physical systems | monitors | haptic | Vibrotactile Peripheral |

| (Knierim et al., 2017)[71] | VR | HMD | haptic | Tactile Drones |

| (Sridharan et al., 2015)[72] | VR | HMD, ET | subtle modulation | |

| (Kim and Varshney, 2006)[73] | image | monitor | saliency adjust | |

| (Jarodzka et al., 2013)[74] | video | monitor | blur | EMME |

| (Lintu and Carbonell, 2009)[75] | images | monitor | blur | |

| (Khan et al., 2005)[76] | images | large display | highlighting | spotlight |

| (Barth et al., 2006)[77] | video | GCD, ET | saliency adjust | |

| (Dorr et al., 2008)[78] | video | GCD, ET | saliency adjust | |

| (Sato et al., 2016)[79] | video | monitor | subtle | saliency |

| diegetic | robot gaze | |||

| (Vig et al., 2011)[80] | video | GCD | saliency adjust | |

| (Biocca et al., 2007)[81] | AR | AR-HMD | non-diegetic | funnel |

| (Sukan et al., 2014)[82] | AR | AR-HMD | non-diegetic | ParaFrustum |

| (Kosara et al., 2002)[83] | text, images | monitor, ET | blur, depth-of-field | |

| (Mateescu and Bajić, 2014)[84] | images | monitor | color manipulation | |

| (Delamare et al., 2017)[85] | AR | HMD | gaze gestures |

| Dimension/Property | Option 1 | Option 2 | Option 3 |

|---|---|---|---|

| Diegesis | diegetic | non-diegetic | |

| Senses | visual | auditive | haptic |

| Target | on-screen | off-screen | |

| Reference | world-referenced | screen-referenced | |

| Directness | direct | indirect | |

| Awareness | subtle | overt | |

| Freedom | forced by system | forced by reflex | voluntary |

| Diegetic | Non-Diegetic | |

|---|---|---|

| + | high presence and enjoyment [21,22] | easily usable and noticeable |

| - | depends on the story, not usable for all cases [18,22] | can disrupt the VR experience [21] |

| -> | suitable for visual experiences and wide story structures | suitable for important information |

| Visual | Auditive | Haptic | |

|---|---|---|---|

| + | can be easily integrated | works also for off-screen RoIs | novel experience |

| - | not always visible, depending on the viewing direction | difficult to distinguish between diegetic and non-diegetic | difficult to realize needs additional devices |

| -> | suitable for visual experiences and wide story structures | suitable for changing the viewing direction | suitable for public application |

| World-Referenced | Screen-Referenced | |

|---|---|---|

| + | integrated in VR world, higher presence | always visible |

| - | not always visible | disrupt the VR experience |

| -> | suitable for experiences where presence is important | suitable for off-screen guiding and important information |

| Direct Cues | Indirect Cues | |

|---|---|---|

| + | fast | sustainable |

| - | transient, not always visible | must be interpreted |

| -> | suitable for on-screen guiding | suitable for recallable RoIs |

| Subtle | Overt | |

|---|---|---|

| + | no disruption [59] | easily noticeable [106] can increase recall rates |

| - | not always effective [13] | can be disrupting |

| -> | suitable for wide story structures | suitable for learning task [12,13] |

| Forced by System | Forced by Reflex | Voluntary | |

|---|---|---|---|

| + | RoI always shown | fast, can be integrated in the story | remains the freedom of viewing direction |

| - | can disrupt the VR experience [21] | not always usable | RoIs can be missed |

| -> | suitable for important and fast direction changes | suitable for visual experiences and fast reactions | suitable for visual experiences and wide story structures (world narratives) |

| Literature | Diegesis | Senses | Target | Reference | Directness | Awareness | Freedom | Name |

|---|---|---|---|---|---|---|---|---|

| (Rothe and Hußmann, 2018)[22] | diegetic | visual | on-scr. off-scr. | world | dir. | subtle | voluntary | |

| audio | off-scr. | world | dir. | subtle | voluntary | |||

| (Brown et al., 2016)[18] | diegetic | visual | on-scr. | world | dir. indir. | subtle | voluntary | |

| (S. Rothe et al., 2018)[13] | non-dieg. | visual | on-scr. off-scr. | world | dir. | subtle overt | voluntary | SGD |

| (Nielsen et al., 2016)[21] | diegetic | visual | off-scr. | world | dir. | subtle | voluntary | |

| non-dieg. | / | off-scr. | screen | dir. | overt | forced sys | ||

| (Y.-C. Lin et al., 2017)[20] | non-dieg. | / | off-scr. | screen | dir. | overt | forced sys | Autopilot |

| non-dieg. | visual | off-scr. | world | indir. | overt | voluntary | Arrow | |

| (Gugenheimer et al., 2016)[37] | non-dieg. | / | off-scr. | world | dir. | overt | forced sys | SwiVRChair |

| (Danieau et al., 2017)[19] | non-dieg. | visual | on-scr. off-scr. | world | dir. | overt | voluntary | Fading, Desaturation |

| (Chang et al., 2018)[38] | non-dieg. | haptic | off-scr. | screen | indir. | overt | voluntary | FacePush |

| (Sassatelli et al., 2018)[39] | non-dieg. | / | off-scr. | screen | dir. | overt | forced sys | Snap-Changes |

| (Gruenefeld et al., 2018b)[40] | non-dieg. | visual | off-scr. | screen | indir | overt | voluntary | RadialLight |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Rothe, S.; Buschek, D.; Hußmann, H. Guidance in Cinematic Virtual Reality-Taxonomy, Research Status and Challenges. Multimodal Technol. Interact. 2019, 3, 19. https://doi.org/10.3390/mti3010019

Rothe S, Buschek D, Hußmann H. Guidance in Cinematic Virtual Reality-Taxonomy, Research Status and Challenges. Multimodal Technologies and Interaction. 2019; 3(1):19. https://doi.org/10.3390/mti3010019

Chicago/Turabian StyleRothe, Sylvia, Daniel Buschek, and Heinrich Hußmann. 2019. "Guidance in Cinematic Virtual Reality-Taxonomy, Research Status and Challenges" Multimodal Technologies and Interaction 3, no. 1: 19. https://doi.org/10.3390/mti3010019

APA StyleRothe, S., Buschek, D., & Hußmann, H. (2019). Guidance in Cinematic Virtual Reality-Taxonomy, Research Status and Challenges. Multimodal Technologies and Interaction, 3(1), 19. https://doi.org/10.3390/mti3010019