Contextually-Based Social Attention Diverges across Covert and Overt Measures

Abstract

1. Introduction

2. Experiment 1

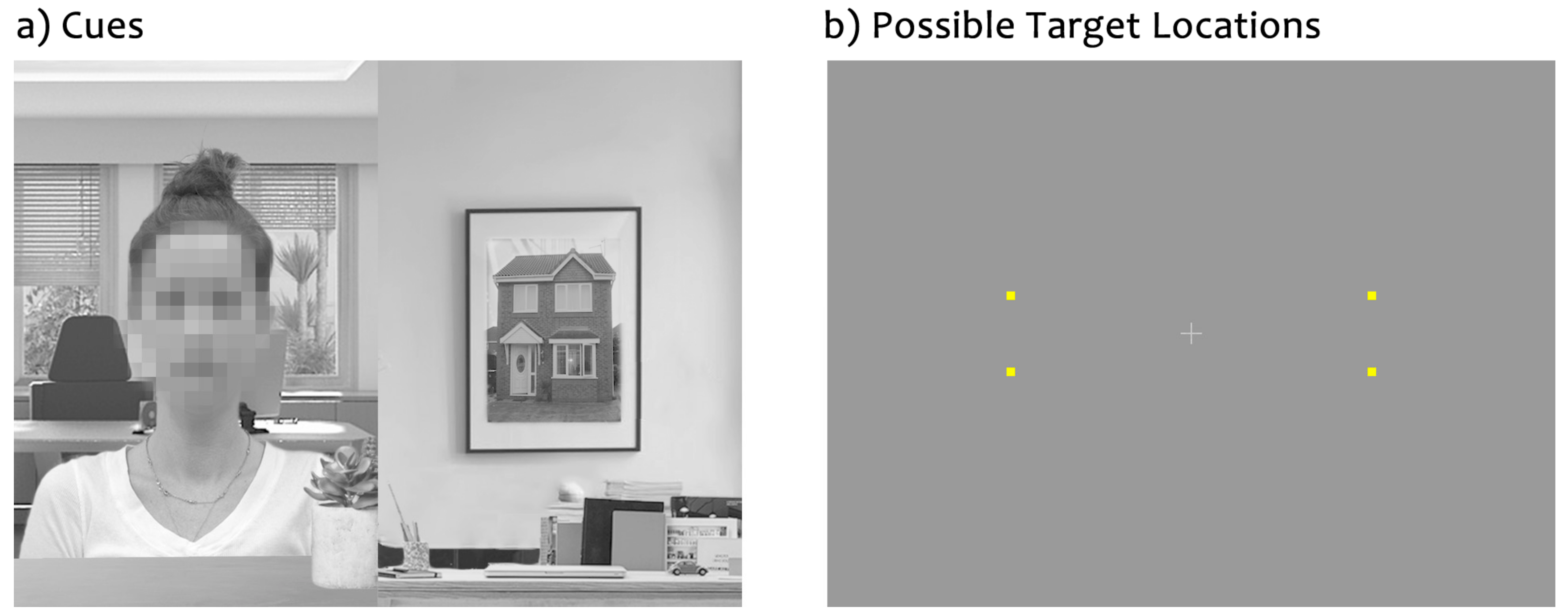

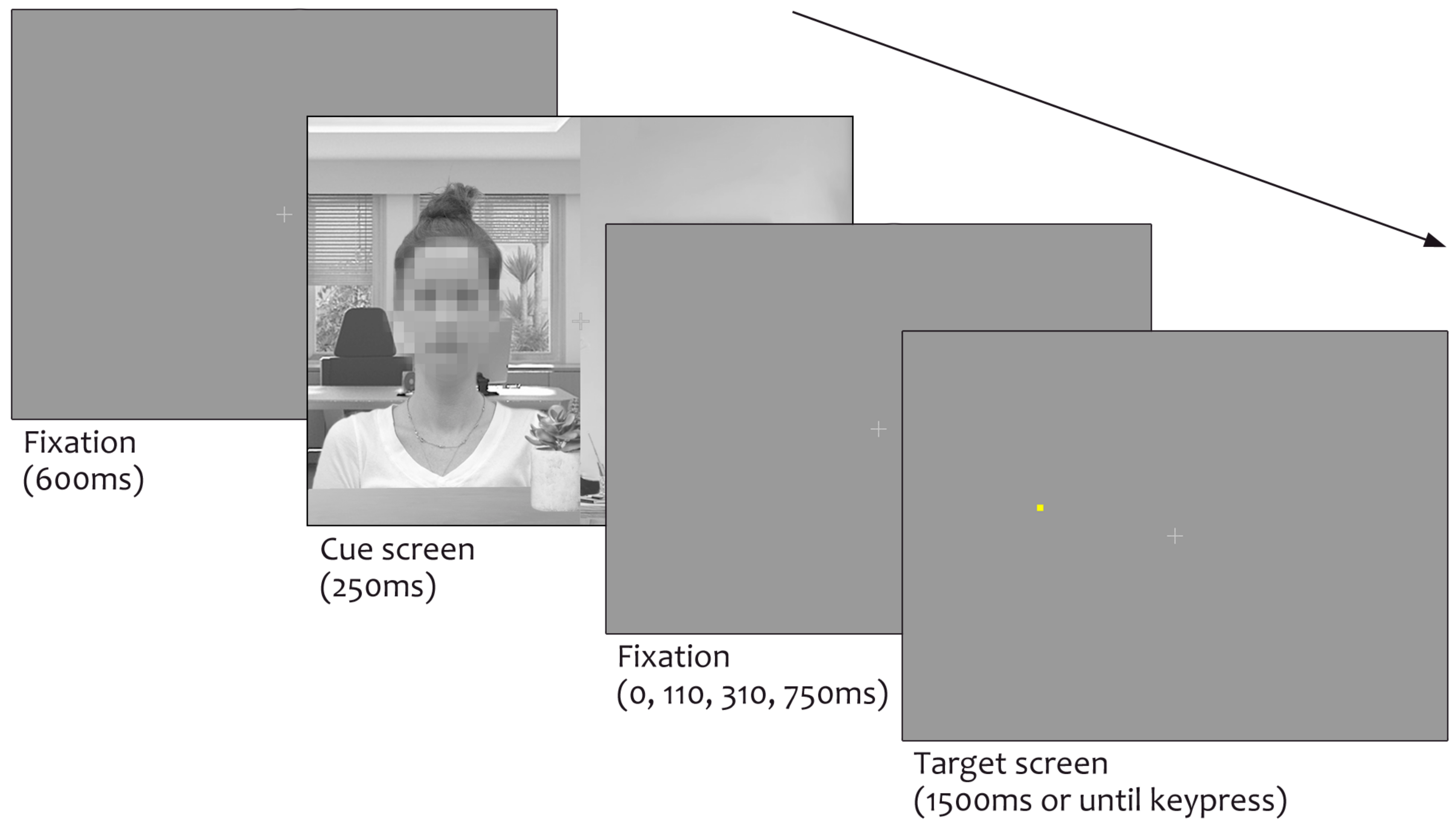

Materials and Methods

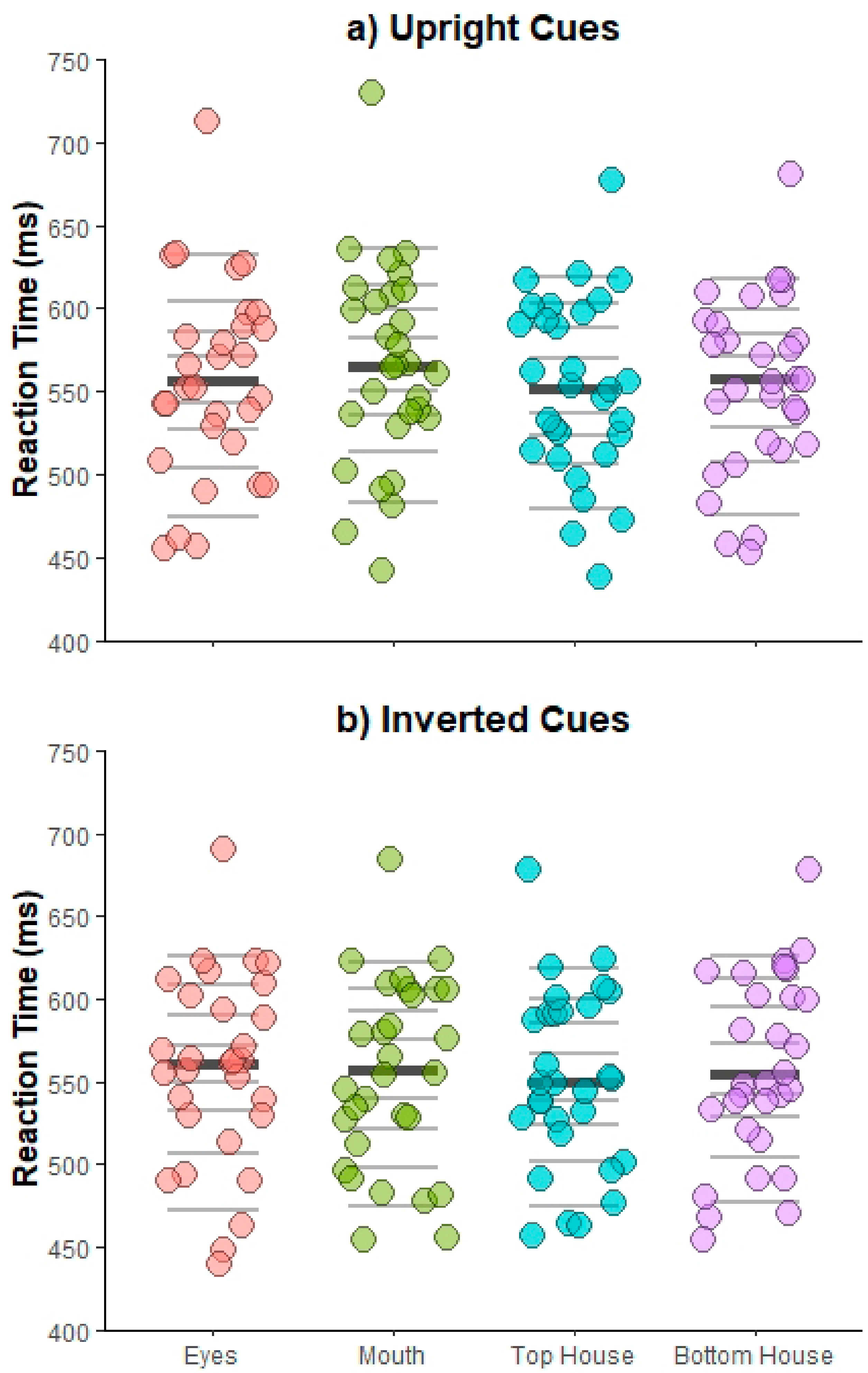

3. Results

4. Discussion

5. Experiment 2

Materials and Methods

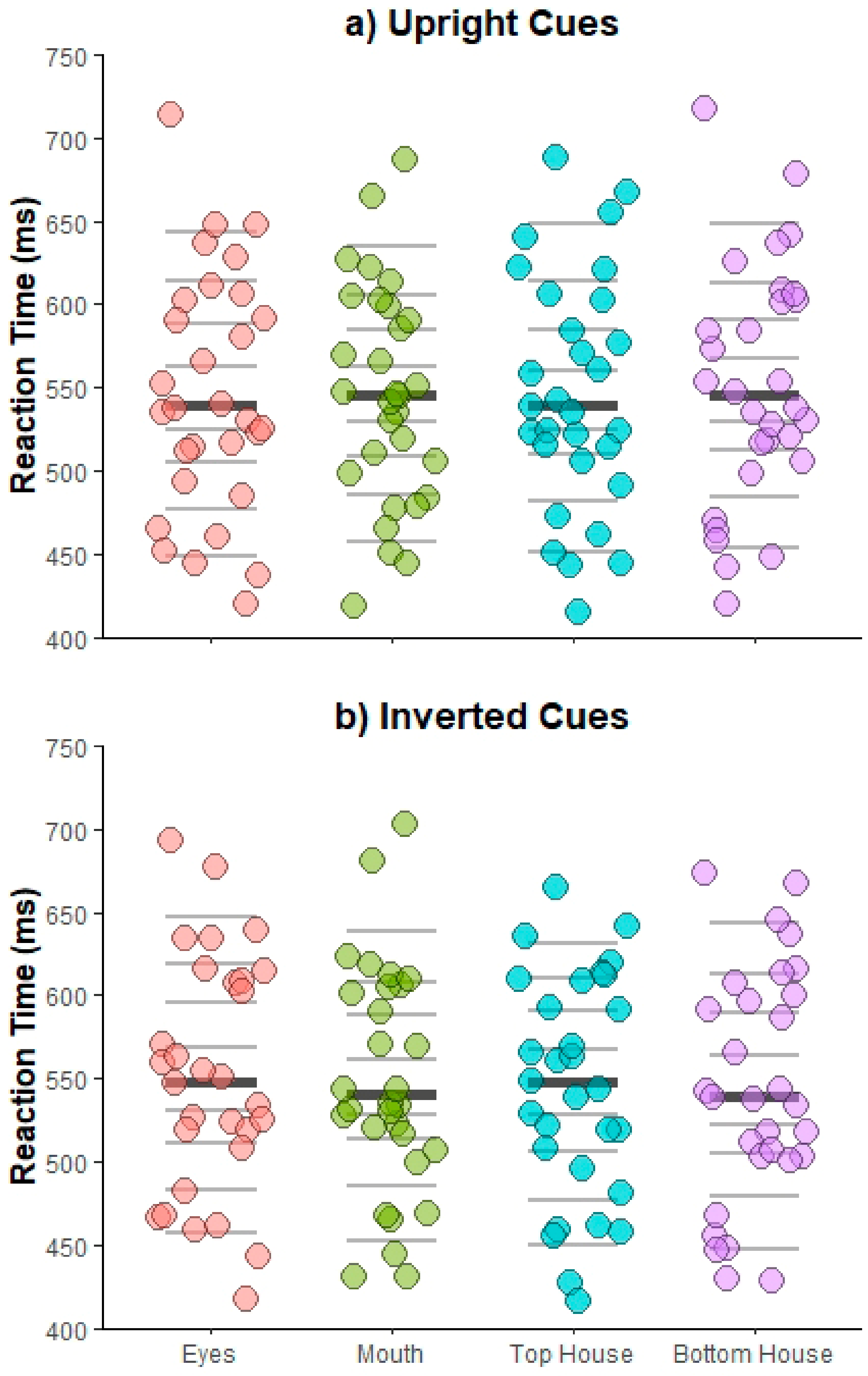

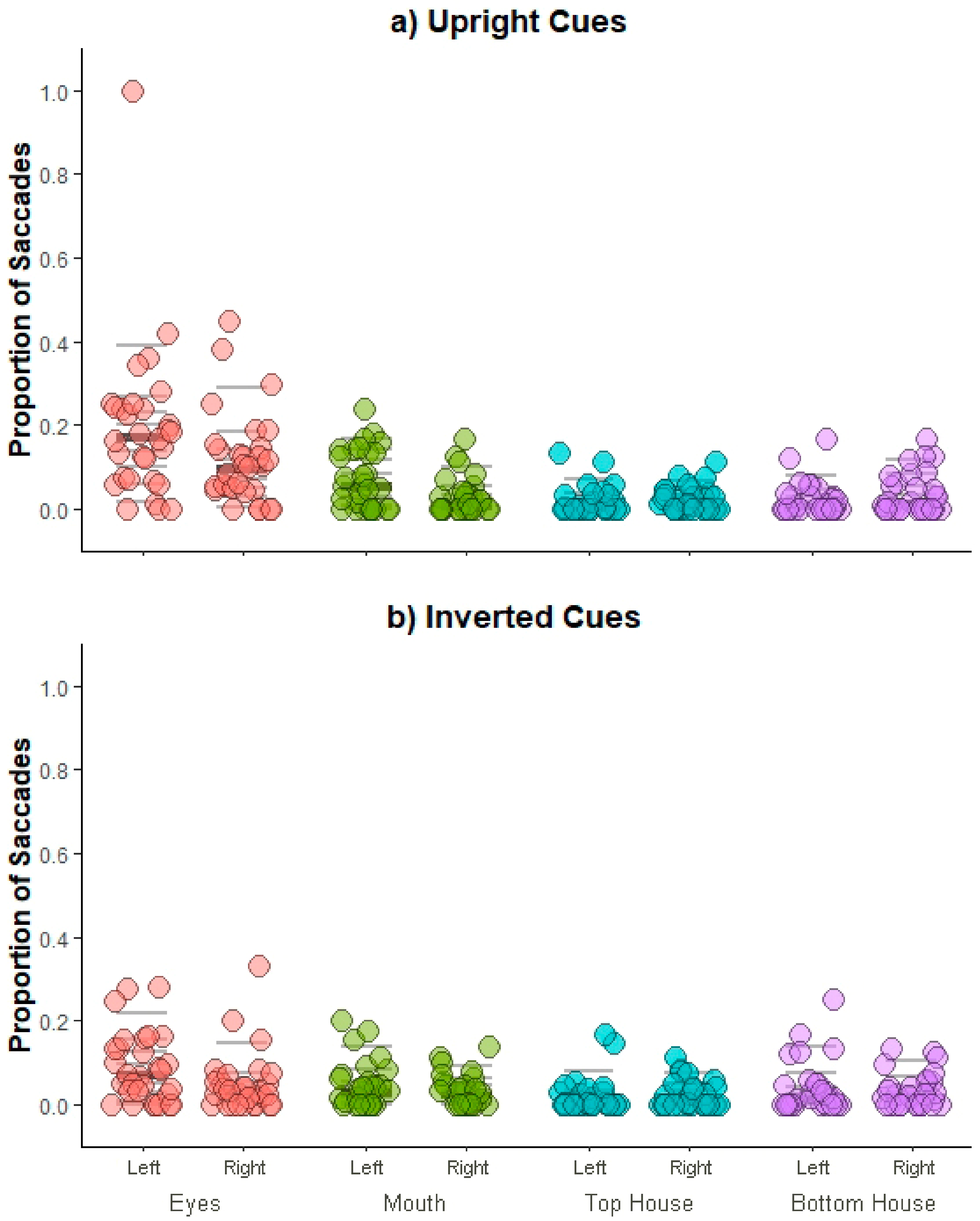

6. Results

7. Discussion

8. General Discussion

Author Contributions

Acknowledgments

Conflicts of Interest

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Pereira, E.J.; Birmingham, E.; Ristic, J. Contextually-Based Social Attention Diverges across Covert and Overt Measures. Vision 2019, 3, 29. https://doi.org/10.3390/vision3020029
