Gaze and Arrows: The Effect of Element Orientation on Apparent Motion is Modulated by Attention

Abstract

1. Introduction

1.1. The Correspondence Problem

1.2. The Role of Attention in Motion Perception

1.3. Stimuli that Trigger Reflexive Attention Shifts and Their Possible Role in Apparent Motion Perception

1.4. Rationale for Experiment 1: Using Stimuli that Elicit Automatic Orienting of Attention as Moving Stimuli in an Ambiguous Motion Paradigm

2. Experiment 1

2.1. Methods

2.1.1. Participants and Apparatus

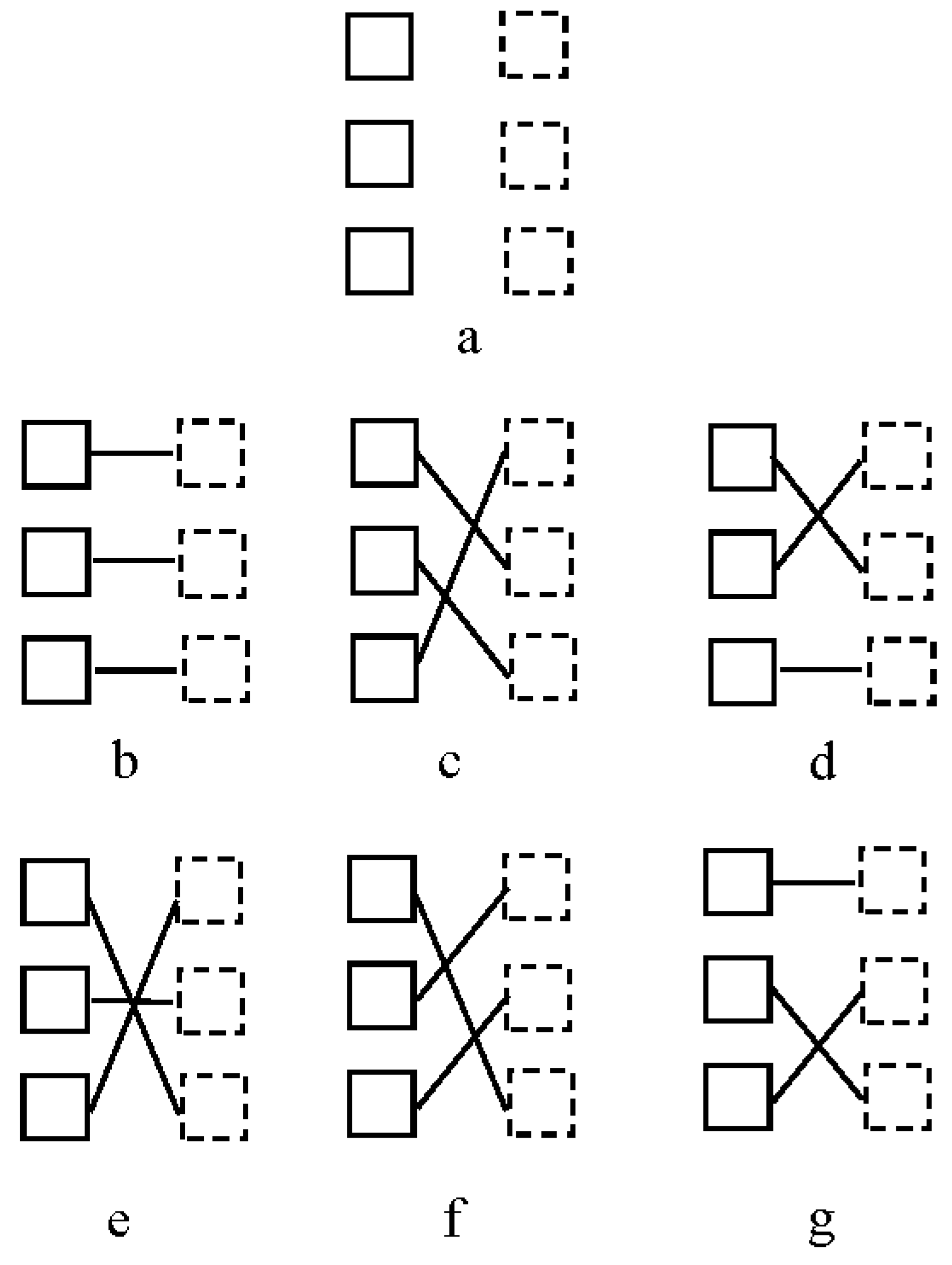

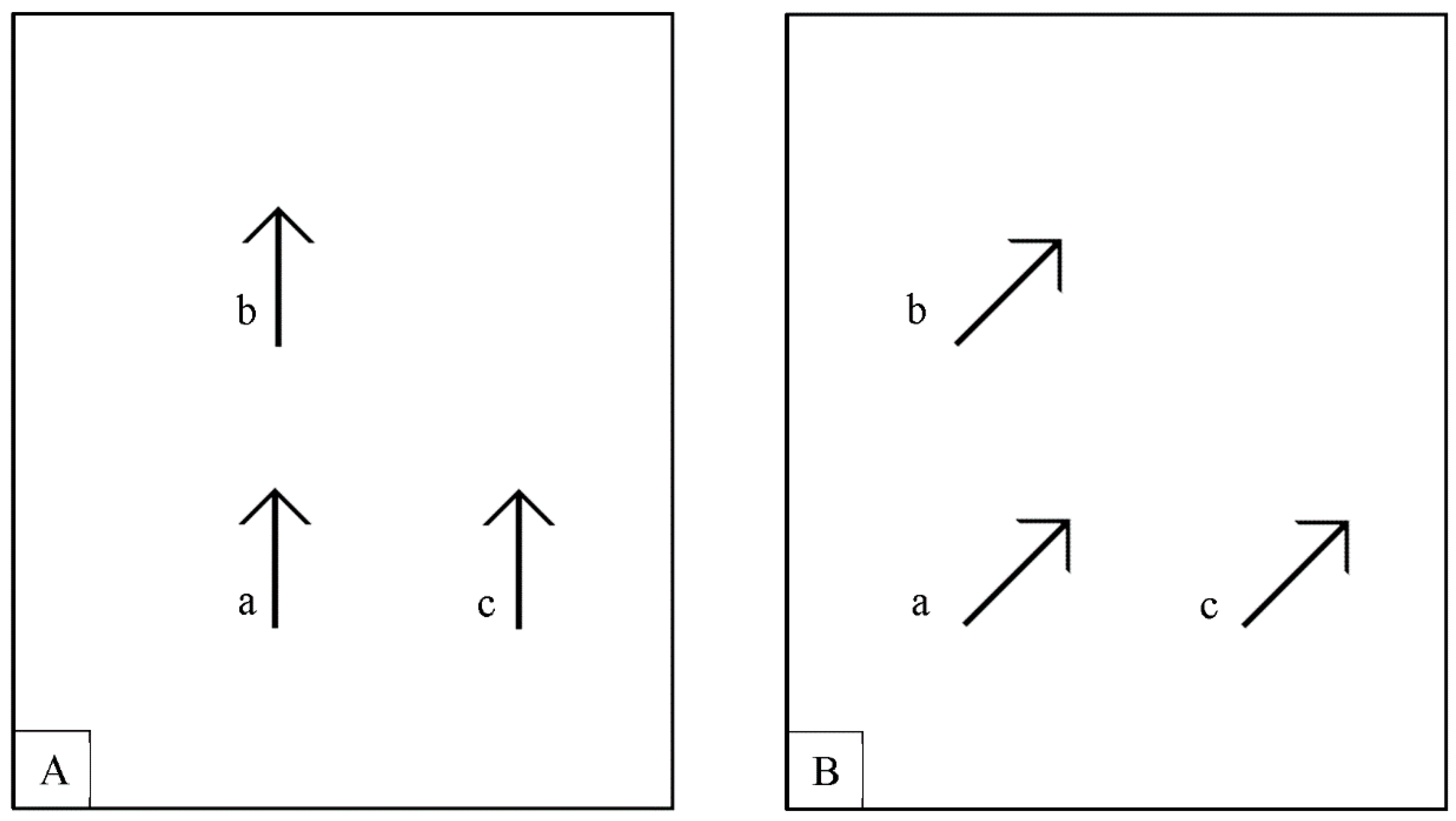

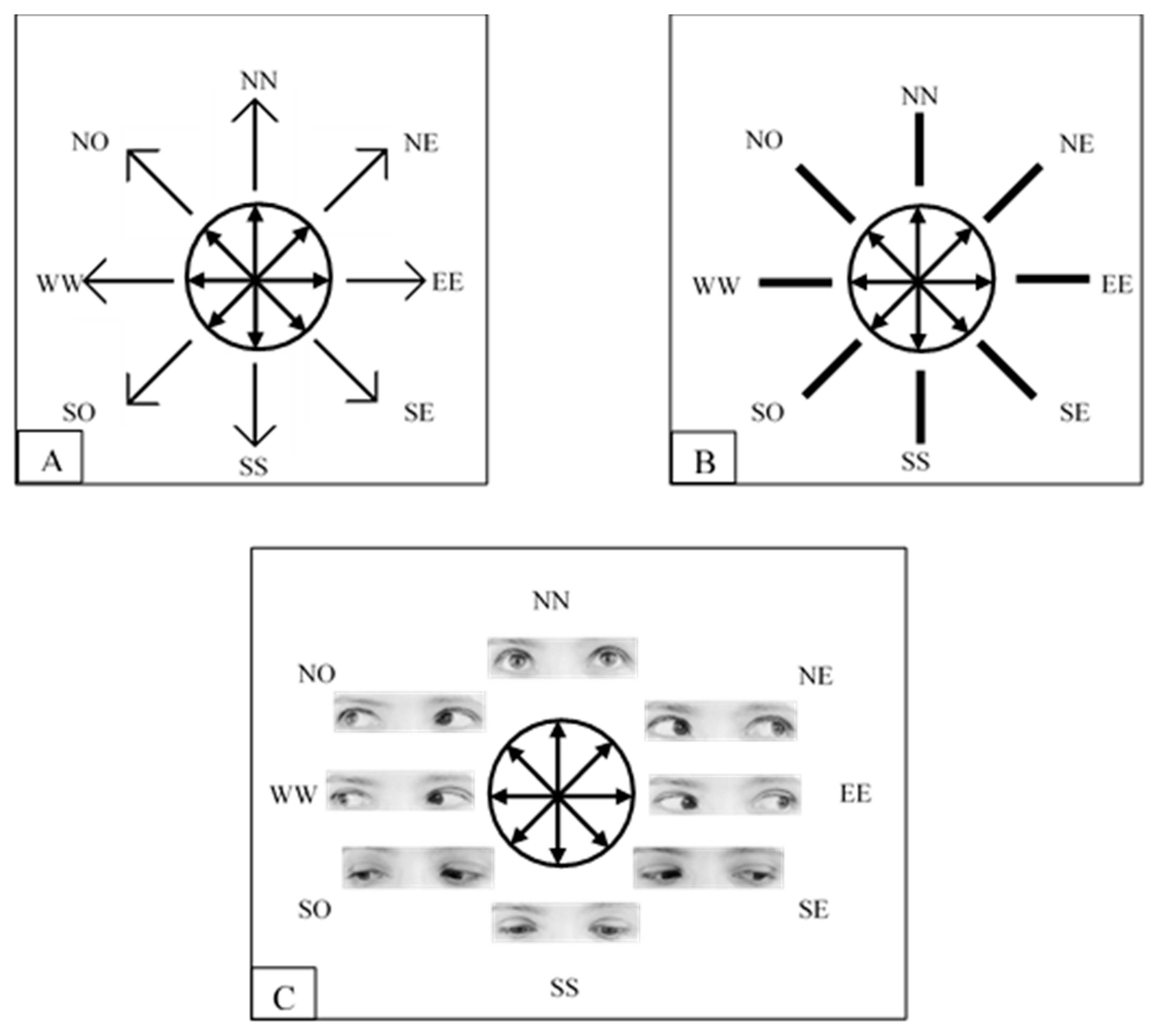

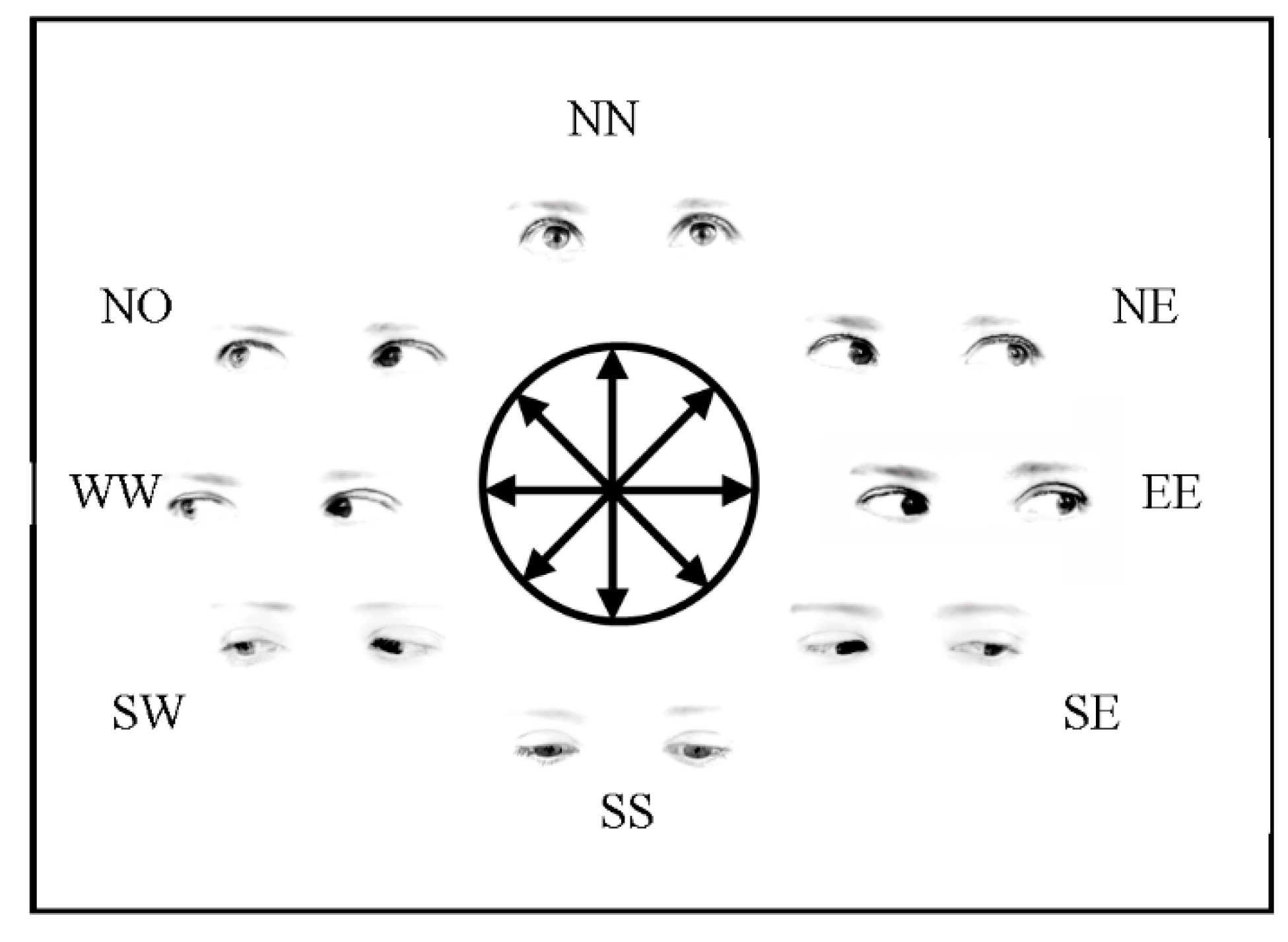

2.1.2. Stimuli, Procedure and Design

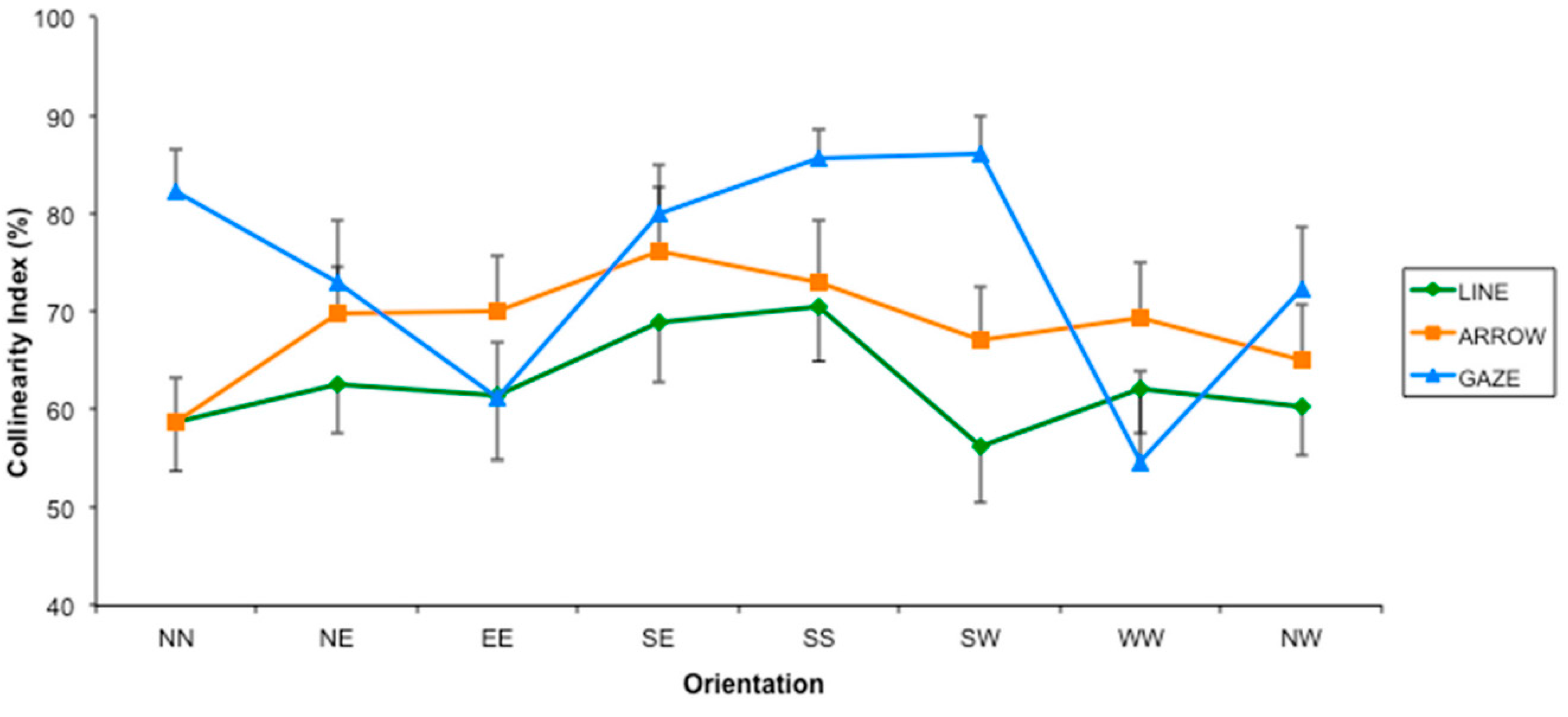

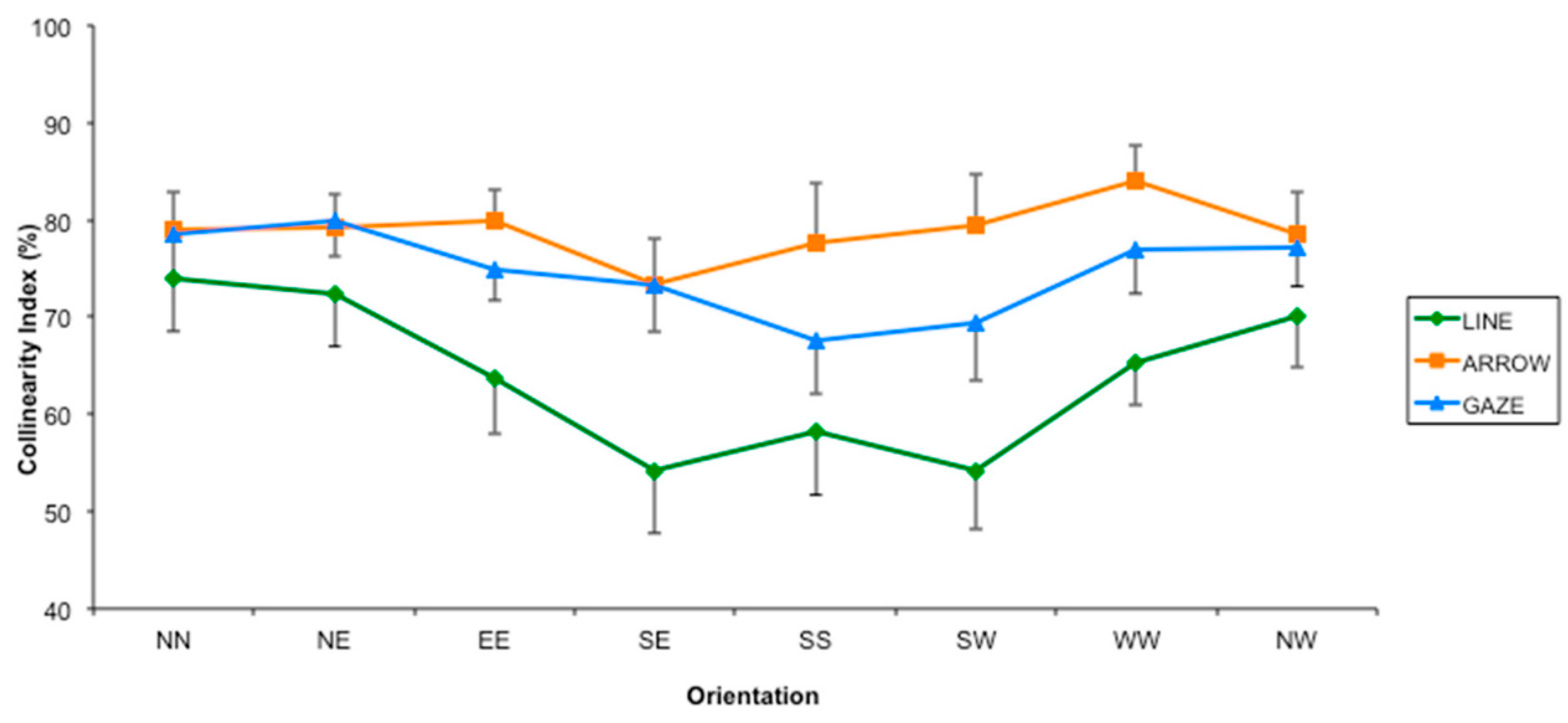

2.2. Results

2.3. Discussion

3. Experiment 2

3.1. Methods

3.1.1. Participants

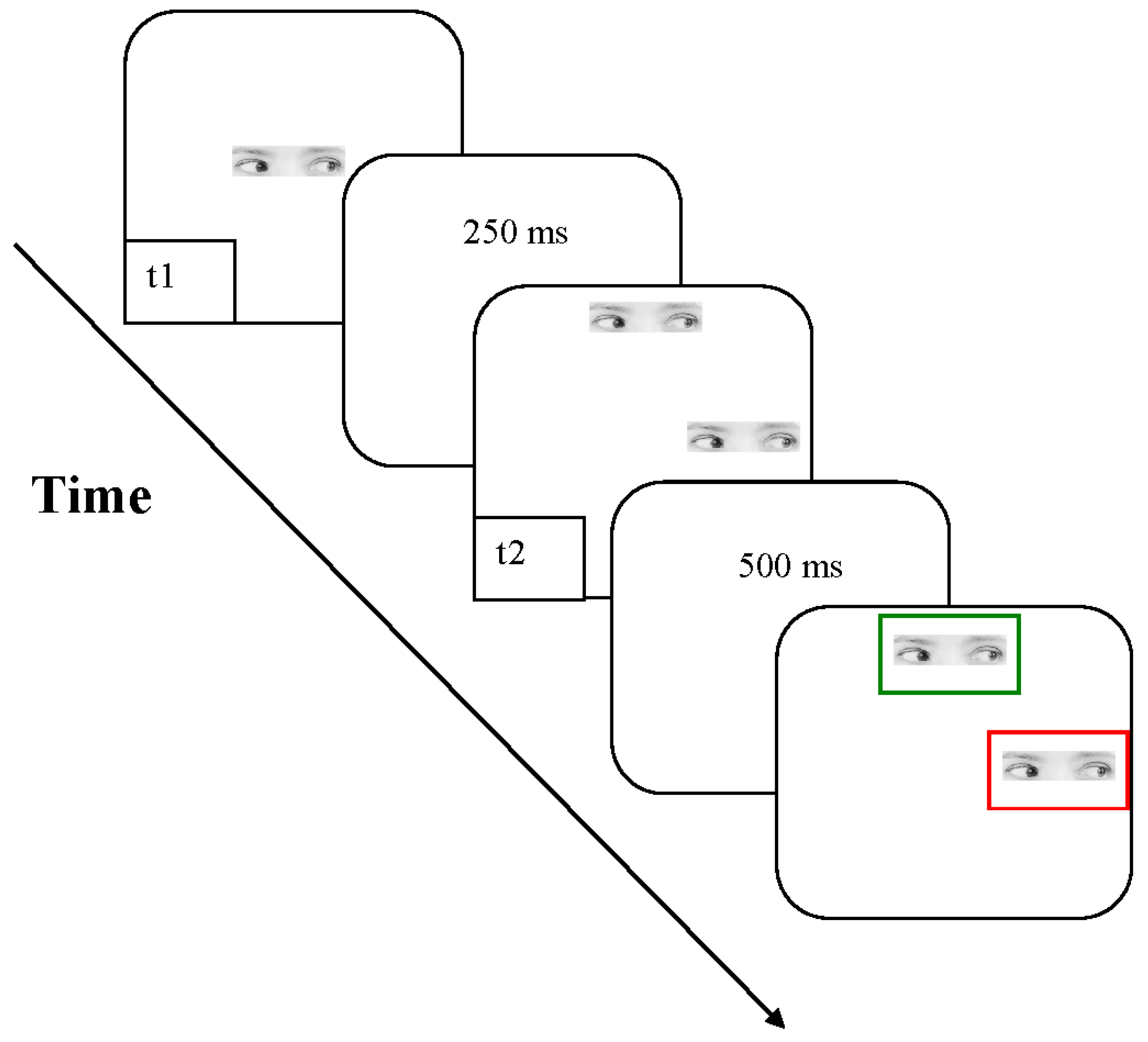

3.1.2. Apparatus, Stimulus, and Procedure

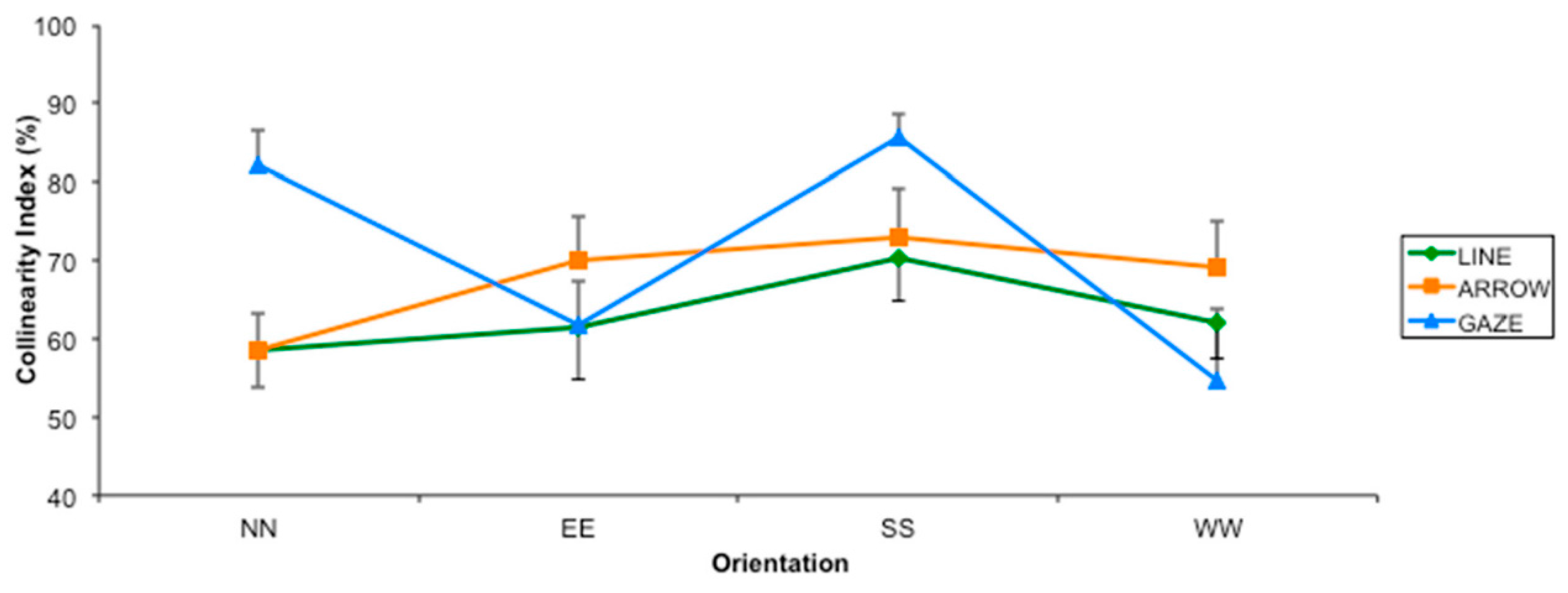

3.2. Results and Discussion

4. General Discussion

5. Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest


© 2017 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Actis-Grosso, R.; Ricciardelli, P. Gaze and Arrows: The Effect of Element Orientation on Apparent Motion is Modulated by Attention. Vision 2017, 1, 21. https://doi.org/10.3390/vision1030021
