Where Is Your Attention? Assessing Individual Instances of Covert Attentional Orienting in Response to Gaze and Arrow Cues

Abstract

1. Introduction

2. Results

2.1. Experiment 1

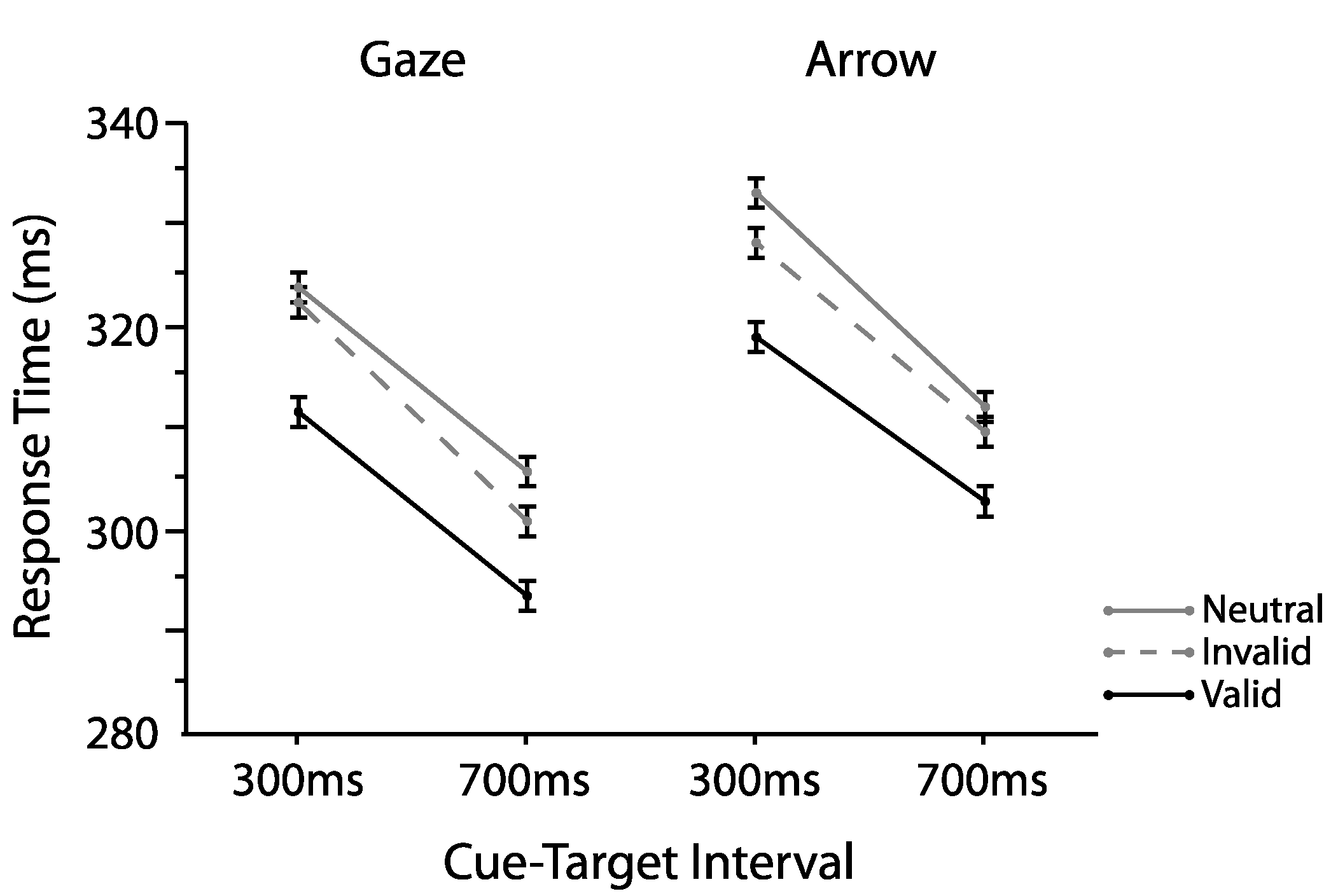

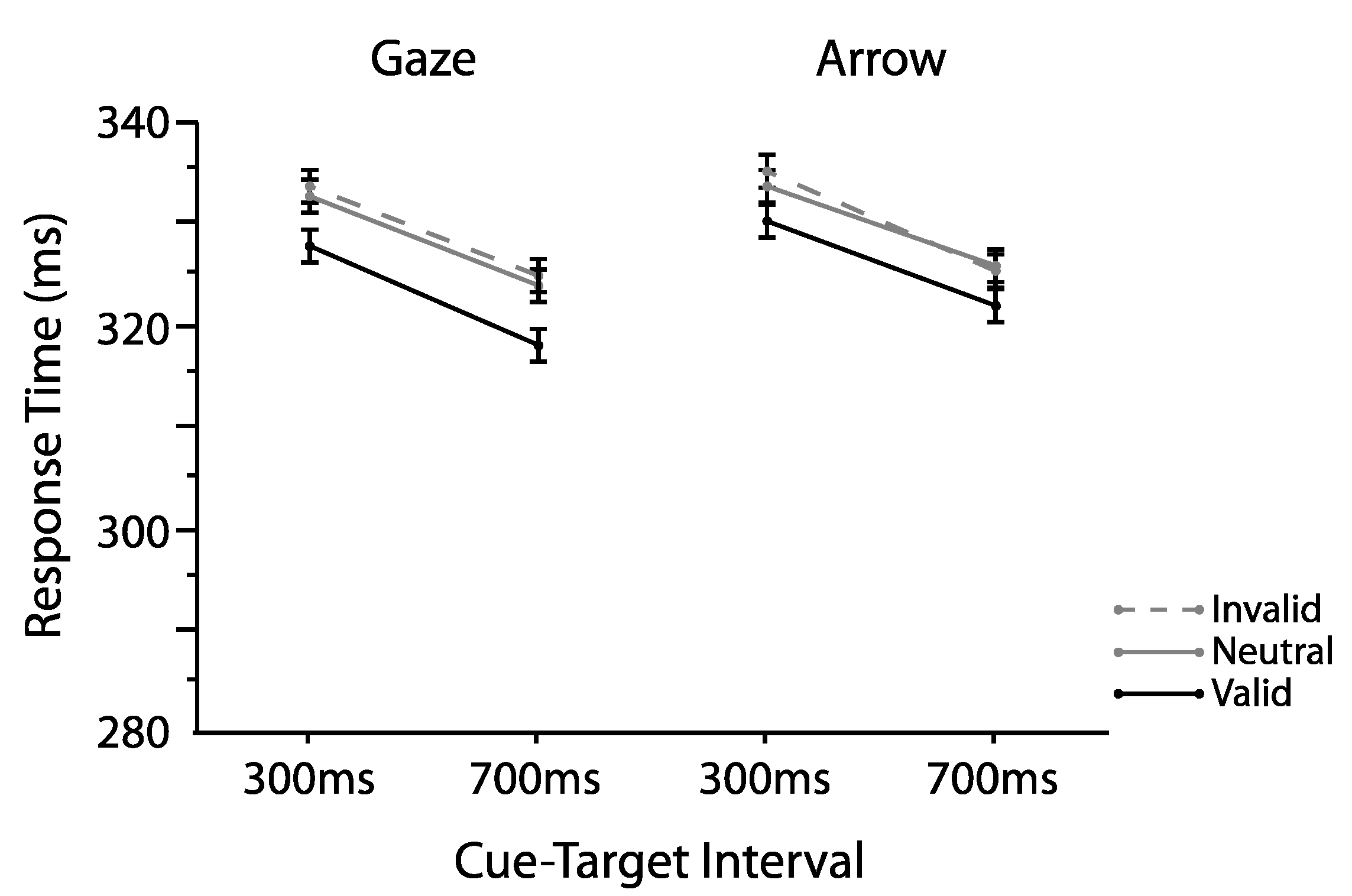

2.1.1. Omnibus Analyses

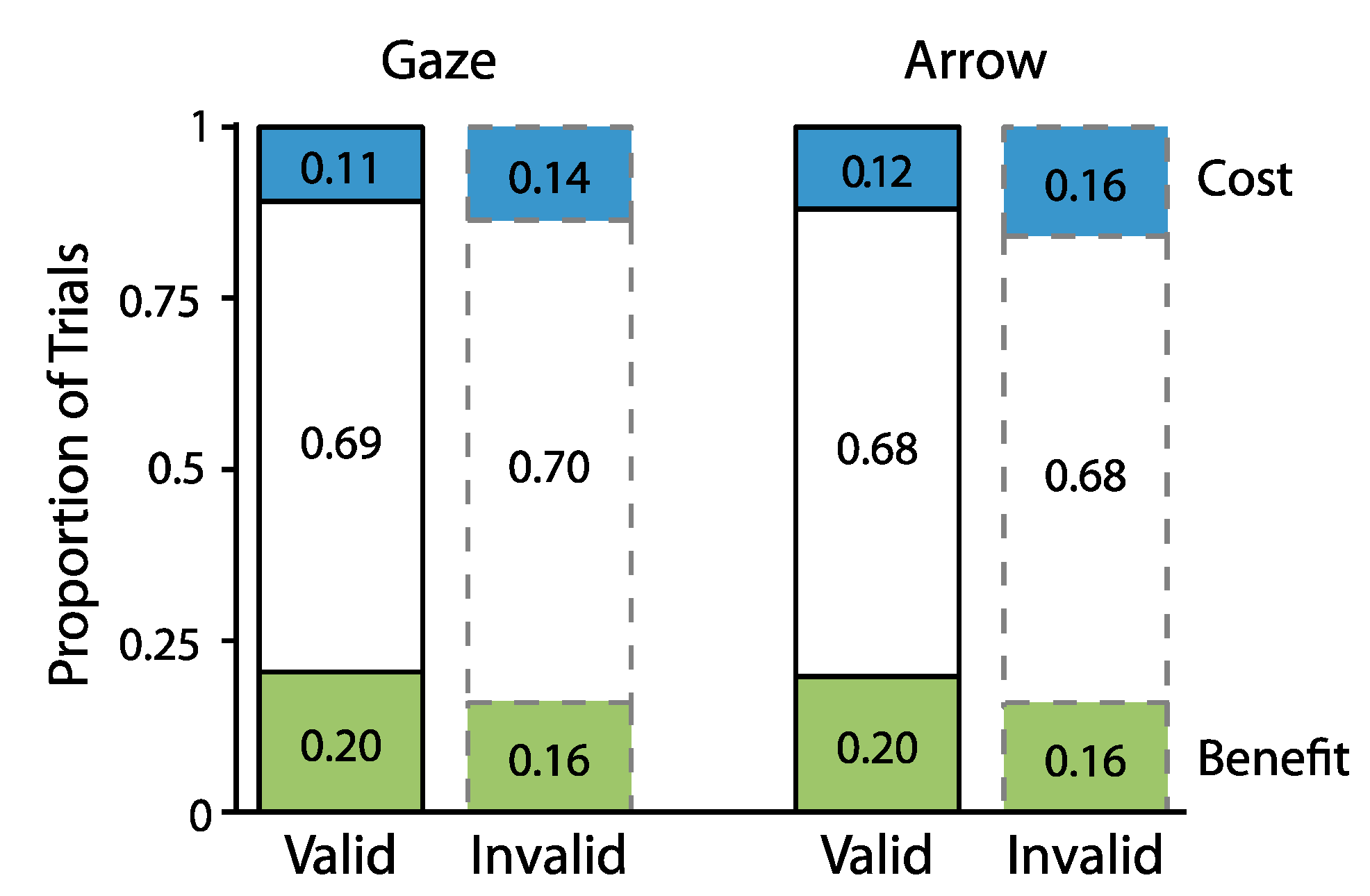

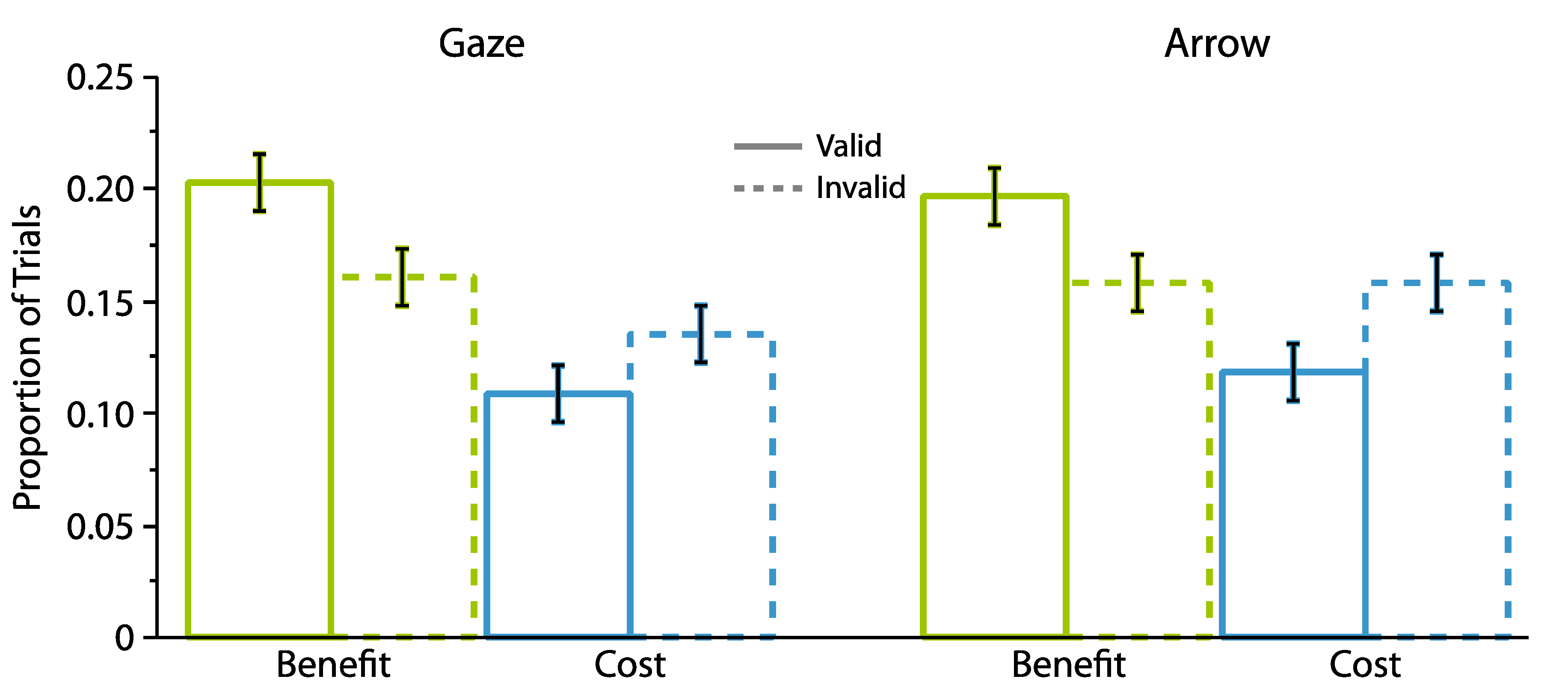

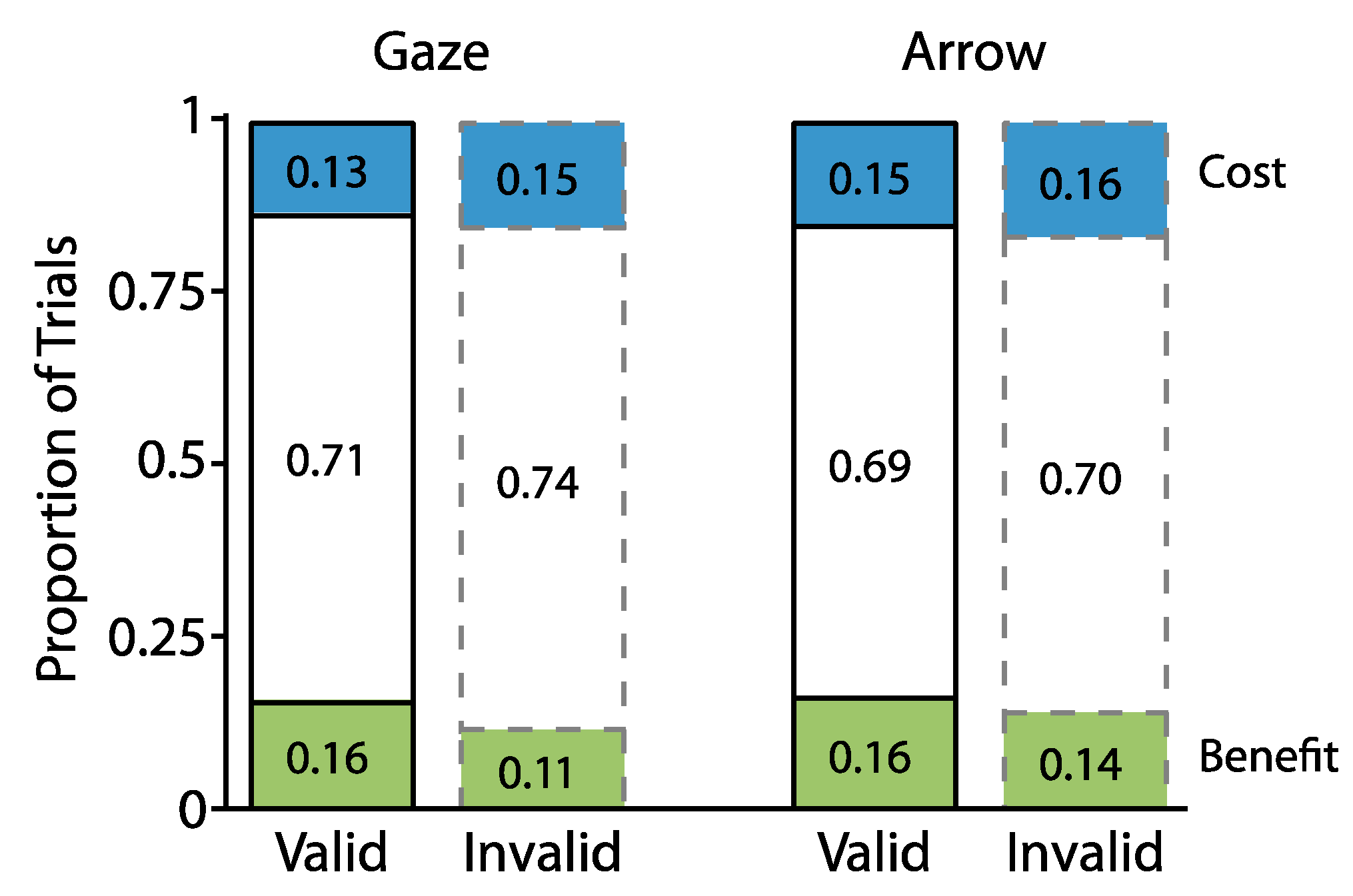

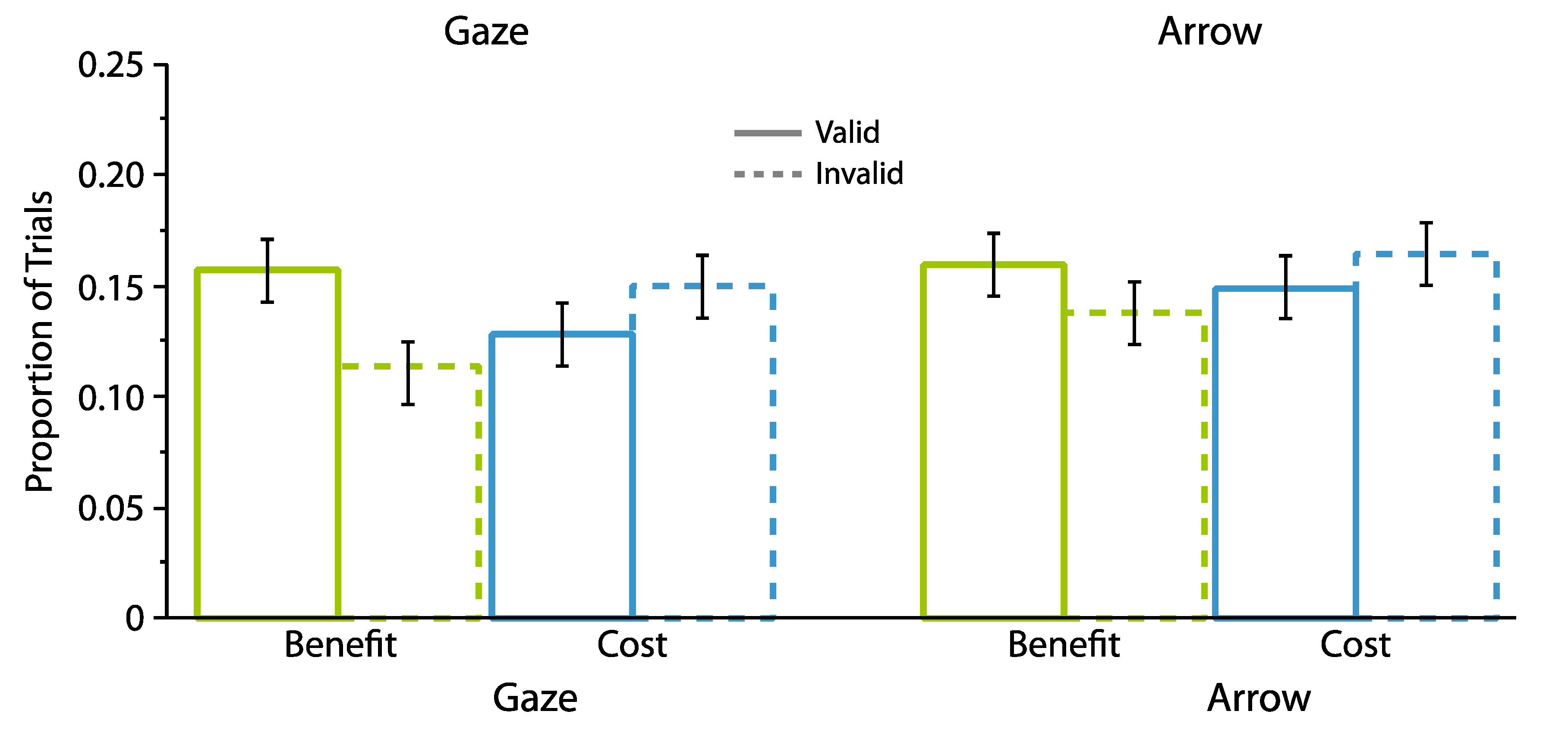

2.1.2. Proportion Analyses

2.2. Experiment 2

2.2.1. Omnibus Analyses

2.2.2. Proportion Analyses

3. Discussion

4. Materials and Methods

4.1. Experiment 1

4.1.1. Participants

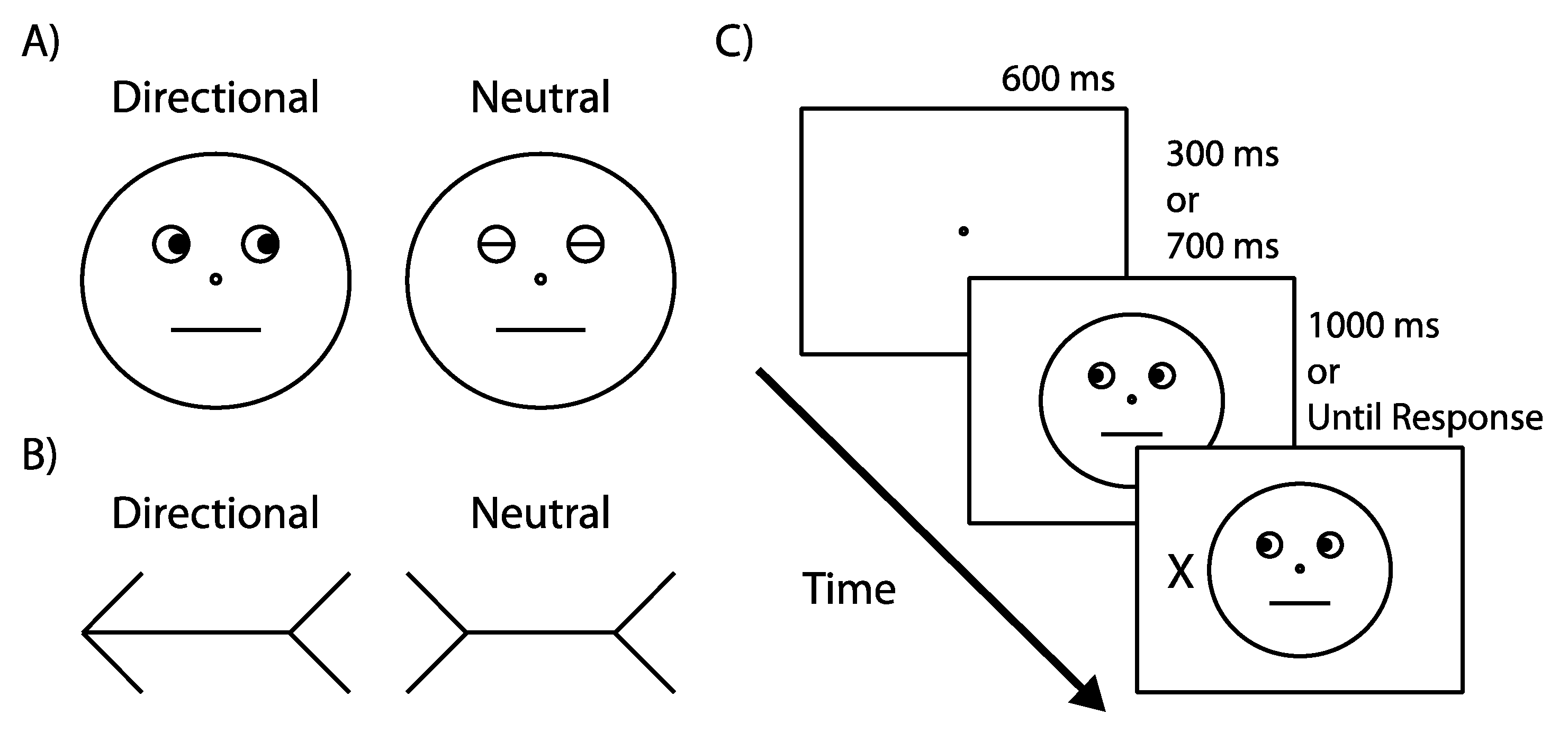

4.1.2. Apparatus & Stimuli

4.1.3. Design

4.1.4. Procedure

4.2. Experiment 2

Participants, Apparatus, Stimuli, Design, Procedure

5. Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest

© 2017 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Blair, C.D.; Capozzi, F.; Ristic, J. Where Is Your Attention? Assessing Individual Instances of Covert Attentional Orienting in Response to Gaze and Arrow Cues. Vision 2017, 1, 19. https://doi.org/10.3390/vision1030019