Dataset of Annotated Virtual Detection Line for Road Traffic Monitoring

Abstract

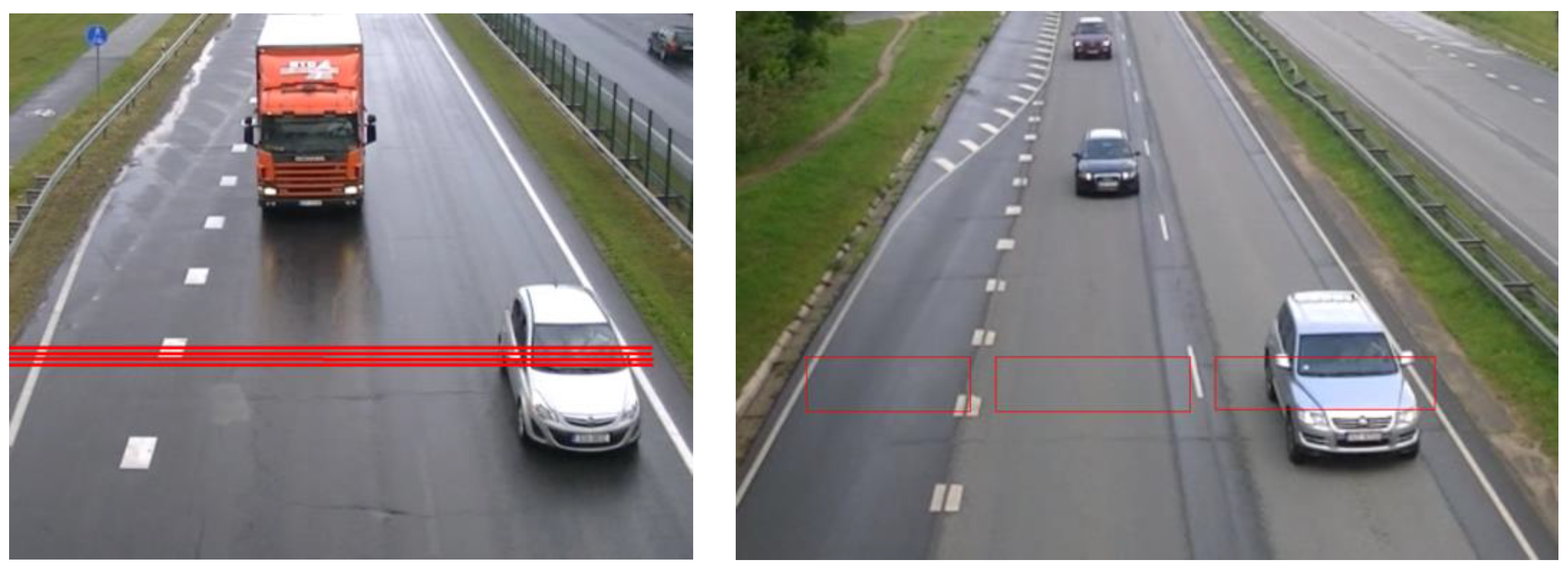

1. Summary

2. Data Collection

2.1. Video Data Collection

2.2. Video Data Annotation

2.3. Encoding Object Classes

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Available classes | Vehicle | Truck | Pedestrian | Bicycle | Motorcycle | Scooter |
|---|---|---|---|---|---|---|
| Example encoding vector when only one object of the class crosses the virtual line | [1, 0, 0, 0, 0, 0] | [0, 1, 0, 0, 0, 0] | [0, 0, 1, 0, 0, 0] | [0, 0, 0, 1, 0, 0] | [0, 0, 0, 0, 1, 0] | [0, 0, 0, 0, 0, 1] |
| Number of annotations | 56,134 | 5,540 | 9,073 | 2,534 | 756 | 71 |
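The table implies a simple label format. Each of the six vector positions corresponds to one class, in the column order shown, and a single object crossing the virtual line produces a one-hot vector. The sketch below illustrates this in Python and also totals the annotation counts to show how unevenly the classes are represented. It is a minimal sketch under stated assumptions, not the authors' annotation tooling. The names `CLASSES`, `ANNOTATION_COUNTS`, `encode_crossings`, and `class_shares` are hypothetical. The handling of several objects crossing at once, where they are summed into a per-class count vector, is also an assumption, because the table only documents the single-object case.

```python
from typing import Dict, List, Sequence

# Class order follows the column order of the "Available classes" table.
CLASSES: List[str] = ["Vehicle", "Truck", "Pedestrian", "Bicycle", "Motorcycle", "Scooter"]

# Annotation counts per class, copied from the table.
ANNOTATION_COUNTS: Dict[str, int] = {
    "Vehicle": 56134,
    "Truck": 5540,
    "Pedestrian": 9073,
    "Bicycle": 2534,
    "Motorcycle": 756,
    "Scooter": 71,
}


def encode_crossings(labels: Sequence[str]) -> List[int]:
    """Encode the objects crossing the virtual line as a per-class vector.

    For a single object this yields the one-hot vector shown in the table.
    ASSUMPTION (hypothetical): when several objects cross, each position
    holds the number of objects of that class, i.e. a count vector.
    """
    vector = [0] * len(CLASSES)
    for label in labels:
        if label not in CLASSES:
            raise ValueError(f"unknown class: {label!r}")
        vector[CLASSES.index(label)] += 1
    return vector


def class_shares(counts: Dict[str, int]) -> Dict[str, float]:
    """Return each class's fraction of all annotations."""
    total = sum(counts.values())
    return {name: counts[name] / total for name in CLASSES}


if __name__ == "__main__":
    print(encode_crossings(["Truck"]))  # [0, 1, 0, 0, 0, 0]
    # Hypothetical multi-object case under the count-vector assumption.
    print(encode_crossings(["Vehicle", "Vehicle", "Pedestrian"]))  # [2, 0, 1, 0, 0, 0]
    print(sum(ANNOTATION_COUNTS.values()))  # 74108
    for name, share in class_shares(ANNOTATION_COUNTS).items():
        print(f"{name}: {share:.2%}")
```

Summing the counts gives 74,108 annotations in total. The Vehicle class accounts for roughly 76% of them and the Scooter class for about 0.1%, so the classes are strongly imbalanced.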

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Namatēvs, I.; Kadiķis, R.; Zencovs, A.; Leja, L.; Dobrājs, A. Dataset of Annotated Virtual Detection Line for Road Traffic Monitoring. Data 2022, 7, 40. https://doi.org/10.3390/data7040040

Namatēvs I, Kadiķis R, Zencovs A, Leja L, Dobrājs A. Dataset of Annotated Virtual Detection Line for Road Traffic Monitoring. Data. 2022; 7(4):40. https://doi.org/10.3390/data7040040

Chicago/Turabian Style: Namatēvs, Ivars, Roberts Kadiķis, Anatolijs Zencovs, Laura Leja, and Artis Dobrājs. 2022. "Dataset of Annotated Virtual Detection Line for Road Traffic Monitoring." Data 7, no. 4: 40. https://doi.org/10.3390/data7040040

APA Style: Namatēvs, I., Kadiķis, R., Zencovs, A., Leja, L., & Dobrājs, A. (2022). Dataset of Annotated Virtual Detection Line for Road Traffic Monitoring. Data, 7(4), 40. https://doi.org/10.3390/data7040040