Machine Learning-Based Algorithms to Knowledge Extraction from Time Series Data: A Review

Abstract

1. Introduction

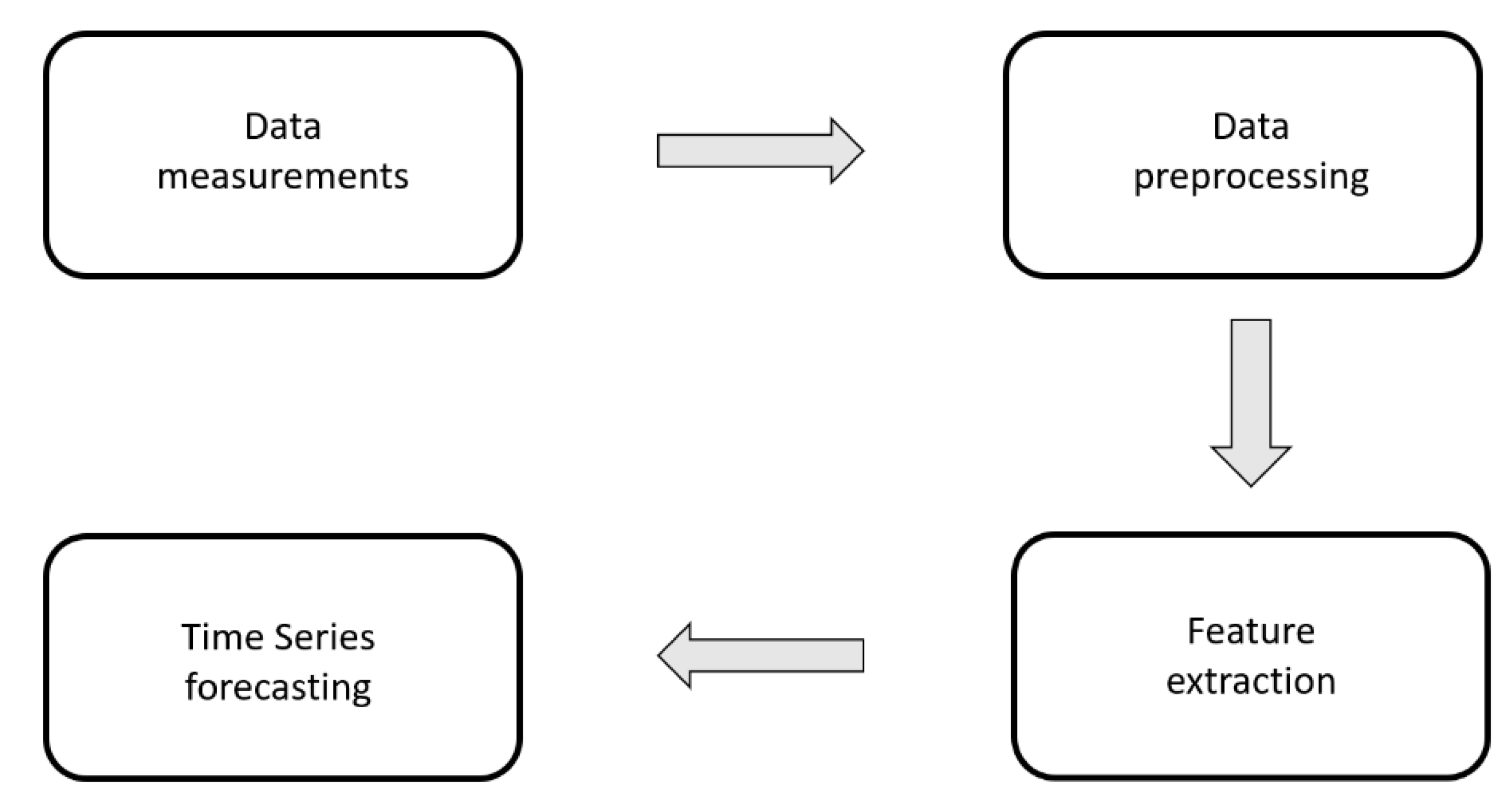

2. Time Series Analysis

2.1. Time Series Background

- f(t) is the deterministic component;

- w(t) is the random noise component.
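The two components listed above are usually combined additively. The relation below is a sketch under that assumption; the symbol Y(t) for the observed series is introduced here for illustration and does not come from the text.

```latex
Y(t) = f(t) + w(t)
```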

- Trend (T): the monotonic, long-term underlying movement, which reflects a structural evolution of the phenomenon due to causes that act on it systematically. For this reason, the trend values are usually assumed to be expressible through a sufficiently regular and smooth function, for example, a polynomial or exponential function. If the function is assumed to be monotonic, the trend is either an increasing or a decreasing function of the phenomenon over the analyzed period [48].

- Seasonality (S): the movements of the phenomenon within the year that tend to repeat in an almost identical manner in the same period (month or quarter) of subsequent years. These fluctuations originate from climatic factors (the alternation of seasons) and/or from social organization [49].

- Cycle (C): fluctuations that originate from the occurrence of more or less favorable conditions, of expansion and contraction, in the context in which the phenomenon under examination is located. The cycle consists of alternating upward and downward movements, attributable to the succession of ascending and descending phases in the phenomenon, generally connected with the expansion and contraction phases of the entire system. From a numerical point of view the cycle is treated like seasonality; the difference is the time scale: the cycle is in the order of years for long-term cycles, while seasonality is in the order of months or at most one year [50].

- Accidentality or disturbance component (E): the irregular or accidental movements caused by a series of circumstances, each of negligible extent. It represents the background noise of the time series, that is, the unpredictable demand component, given by the random fluctuation of the demand values around the average value of the series. The random fluctuation is detected after removing the three regular components of the time series, that is, after isolating the average demand. The random component is not statistically predictable. However, if it is numerically relevant, linear regression models can be applied in which several independent variables are tested on the time series containing only the random component. This is useful for identifying correlations between the random component and measurable input variables for which predictions of future values are also available [51].

- T is the trend component;

- C is the cycle component;

- S is the seasonality component;

- E is the accidentality component.
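As an illustration of how the components listed above can be separated in practice, the following is a minimal sketch of a classical additive decomposition. It assumes an additive combination of the components and an odd seasonal period, and it folds any cycle into the trend estimate; the function names and the synthetic series are hypothetical and chosen only for this example.

```python
import math
import random

def centered_moving_average(values, window):
    """Centered moving average with an odd window; edge positions stay None."""
    half = window // 2
    out = [None] * len(values)
    for i in range(half, len(values) - half):
        out[i] = sum(values[i - half:i + half + 1]) / window
    return out

def additive_decompose(values, period):
    """Split a series into trend, seasonal and residual parts (additive model).

    Assumes an odd `period`; any cycle is absorbed by the trend estimate.
    """
    trend = centered_moving_average(values, period)
    # Average the detrended values observed at each position within the period.
    buckets = [[] for _ in range(period)]
    for i, (y, t) in enumerate(zip(values, trend)):
        if t is not None:
            buckets[i % period].append(y - t)
    profile = [sum(b) / len(b) for b in buckets]
    # Center the profile so the seasonal effects sum to zero over one period.
    mean_effect = sum(profile) / period
    profile = [s - mean_effect for s in profile]
    seasonal = [profile[i % period] for i in range(len(values))]
    residual = [None if t is None else y - t - s
                for y, t, s in zip(values, trend, seasonal)]
    return trend, seasonal, residual

# Synthetic series: linear trend + weekly seasonality + small Gaussian noise.
random.seed(0)
period, n = 7, 70
true_trend = [0.5 * t for t in range(n)]
true_season = [3.0 * math.sin(2 * math.pi * t / period) for t in range(n)]
noise = [random.gauss(0.0, 0.1) for _ in range(n)]
series = [a + b + c for a, b, c in zip(true_trend, true_season, noise)]

trend, seasonal, residual = additive_decompose(series, period)
```

What remains in `residual` after removing the regular components plays the role of the accidentality component E.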

2.2. Time Series Datasets

3. Machine Learning Methods for Time Series Data Analysis

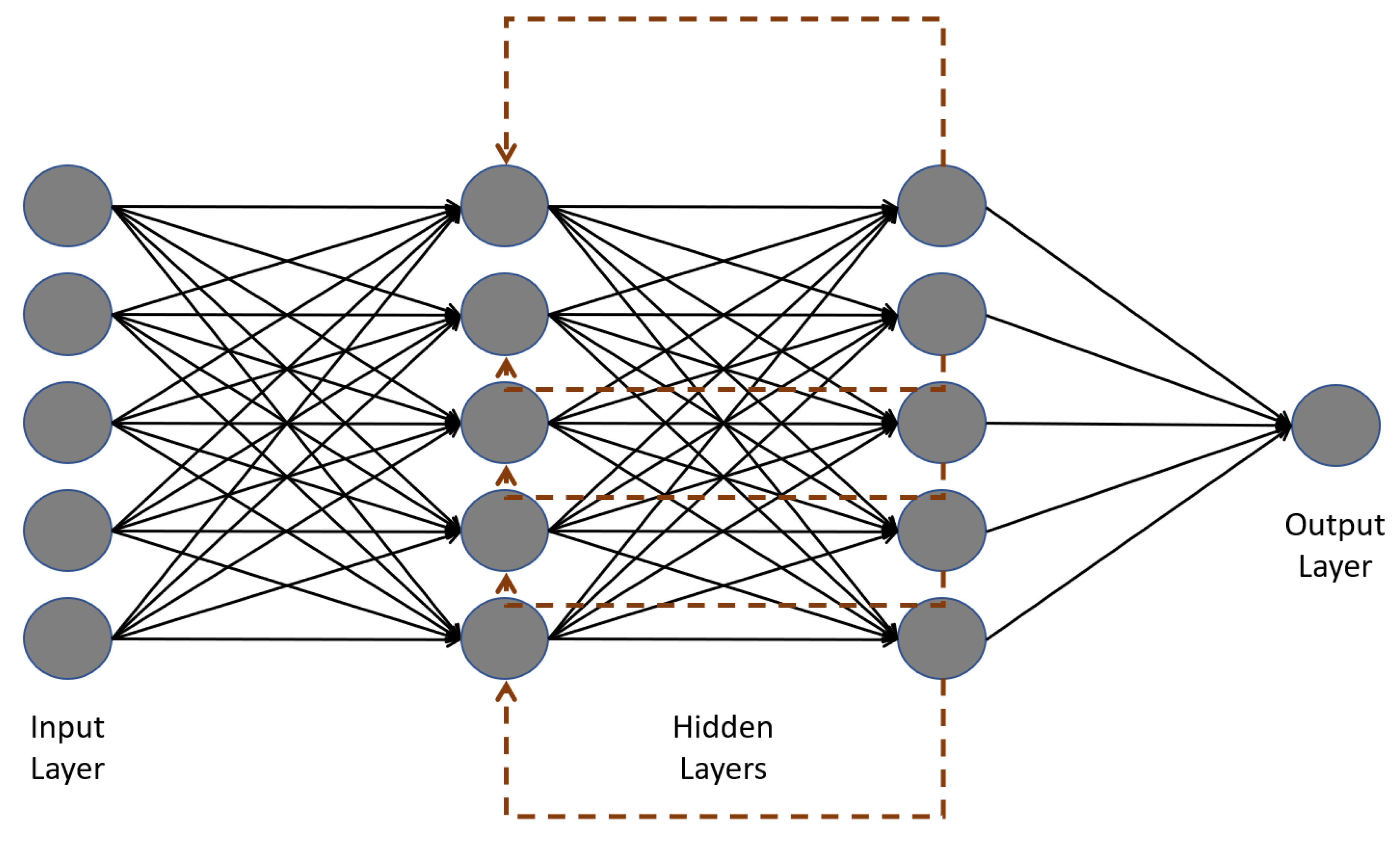

3.1. Artificial Neural Network-Based Methods

- Input neurons: they receive information from the environment;

- Hidden neurons: they are used by the input neurons to communicate with the output neurons or with other hidden neurons;

- Output neurons: they emit responses to the environment.

- = connection weights;

- = bias.
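To make the roles of the connection weights and the bias concrete, the sketch below computes the output of a single neuron and chains neurons into an input-hidden-output pass. The sigmoid activation, the function names, and the numeric weights are assumptions made for illustration only.

```python
import math

def neuron_output(inputs, weights, bias):
    """Weighted sum of the inputs plus the bias, passed through a sigmoid."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1.0 / (1.0 + math.exp(-z))

def forward(inputs, hidden_layer, output_layer):
    """Propagate inputs through one hidden layer and one output layer.

    Each layer is a list of (weights, bias) pairs, one pair per neuron.
    """
    hidden = [neuron_output(inputs, w, b) for w, b in hidden_layer]
    return [neuron_output(hidden, w, b) for w, b in output_layer]

# Two input values, two hidden neurons, one output neuron (arbitrary weights).
hidden_layer = [([0.5, -0.5], 0.0), ([1.0, 1.0], -1.0)]
output_layer = [([1.0, -1.0], 0.0)]
prediction = forward([1.0, 1.0], hidden_layer, output_layer)
```

Training would adjust the weights and biases to reduce the prediction error; that step is omitted here.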

3.2. Time Series Clustering Methods

- Agglomerative: the n units are merged in succession, starting from the basic situation in which each unit constitutes its own group and ending at stage (n − 1), in which a single group containing all the units is formed.

- Divisive: the set of n units is divided, in (n − 1) steps, into groups that are, at each step of the analysis, subsets of a group formed at the previous stage; the procedure ends when each group is composed of a single unit.

Nonhierarchical analysis techniques can be divided into the following:

- Partitions: mutually exclusive classes, such that, for a predetermined number of groups, each entity is classified into one and only one class.

- Overlapping classes: classes for which an entity may belong to more than one class at the same time.

- Model-free approaches, that is, methods that are based only on specific characteristics of the series;

- Model-based approaches, that is, methods that are based on parametric assumptions on time series;

- Prediction-based approaches, that is, methods that are based on forecasts of time series.
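The agglomerative procedure and the model-free approach described above can be sketched together: each series starts as its own group, and the two closest groups are merged until the requested number of groups remains. The single-linkage rule, the Euclidean distance on raw values, and all names below are assumptions chosen for illustration, not a method prescribed by the text.

```python
import math

def euclidean(a, b):
    """Model-free dissimilarity: Euclidean distance between raw series values."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def agglomerative(series, n_clusters=1):
    """Single-linkage agglomerative clustering of equal-length series.

    Returns the final clusters (lists of series indices) and the merge history.
    """
    clusters = [[i] for i in range(len(series))]
    merges = []
    while len(clusters) > n_clusters:
        best = None
        # Find the pair of clusters whose closest members are nearest.
        for i in range(len(clusters)):
            for j in range(i + 1, len(clusters)):
                d = min(euclidean(series[a], series[b])
                        for a in clusters[i] for b in clusters[j])
                if best is None or d < best[0]:
                    best = (d, i, j)
        d, i, j = best
        merges.append((clusters[i], clusters[j], d))
        merged = clusters[i] + clusters[j]
        clusters = [c for k, c in enumerate(clusters) if k not in (i, j)] + [merged]
    return clusters, merges

# Two rising and two falling series.
series = [
    [0.0, 1.0, 2.0, 3.0],
    [0.1, 1.1, 2.1, 3.1],
    [3.0, 2.0, 1.0, 0.0],
    [3.1, 2.1, 1.1, 0.1],
]
all_merged, history = agglomerative(series, n_clusters=1)
two_groups, _ = agglomerative(series, n_clusters=2)
```

Running the procedure down to one group takes n − 1 merges, and stopping at two groups separates the rising series from the falling ones. A model-based or prediction-based variant would replace `euclidean` with a distance between fitted parameters or between forecasts.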

3.3. Convolutional Neural Network for Time Series Data

- Sparse connectivity: a single element in the feature map is connected only to a small group of pixels.

- Parameter-sharing: the same weights are used for different groups of pixels of the main image.

- Input level: represents the set of numbers that make up the image to be analyzed by the computer.

- Convolutional level: the main level of the network. Its goal is to identify patterns, such as curves, angles, and squares. There can be more than one convolutional level, each focused on finding these characteristics in the initial image. The greater their number, the greater the complexity of the features they can identify.

- ReLU level (rectified linear units): its objective is to set to zero the negative values obtained in previous levels.

- Pool level: identifies whether the feature under study is present in the previous level.

- Fully connected level: connects all the neurons of the previous level in order to establish the various identifying classes according to a certain probability. Each class represents a possible final answer.
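For time series, the same levels operate along the time axis instead of over image pixels. The sketch below applies a one-dimensional convolution, a ReLU, and a max pooling step to a short signal; the kernel values, the pooling size, and the function names are assumptions chosen for illustration.

```python
def conv1d(signal, kernel):
    """Valid 1D convolution (cross-correlation form).

    The same kernel weights are reused at every position (parameter sharing),
    and each output depends only on len(kernel) neighboring inputs
    (sparse connectivity).
    """
    k = len(kernel)
    return [sum(kernel[j] * signal[i + j] for j in range(k))
            for i in range(len(signal) - k + 1)]

def relu(values):
    """Set negative activations to zero."""
    return [max(0.0, v) for v in values]

def max_pool(values, size):
    """Non-overlapping max pooling; a trailing partial window is dropped."""
    return [max(values[i:i + size])
            for i in range(0, len(values) - size + 1, size)]

signal = [0.0, 0.0, 1.0, 1.0, 1.0, 0.0, 0.0, 0.0, 1.0]
edge_kernel = [-1.0, 1.0]  # responds to an upward step in the signal

feature_map = conv1d(signal, edge_kernel)
activated = relu(feature_map)
pooled = max_pool(activated, 2)
```

Here the pooled output stays non-zero only in the windows that contain an upward step, which is the sense in which pooling reports whether the feature is present.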

3.4. Recurrent Neural Network

- Cell state: represents the element that carries the information that can be modified by the gates.

- Forget gate: represents the gate where information passes through the sigmoid function. The output values are between 0 and 1: a value closer to 0 means forget the information, and a value closer to 1 means keep it.

- Input gate: represents the element that updates the cell state. The sigmoid function is still present, but in combination with the tanh function. The tanh function squashes the input values to between −1 and 1. The output of tanh is then multiplied by the output of the sigmoid function, which decides what information from the tanh output is important to keep.

- Output gate: represents the element that decides what the next hidden state should be; the hidden state carries information on previous inputs to subsequent time steps. First, the previous hidden state and the current input pass through a sigmoid function. Then, the cell state just modified passes through the tanh function. The tanh output is multiplied by the sigmoid output to decide what information the hidden state should contain. The new cell state and the new hidden state are then carried over to the next time step.
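The gate descriptions above can be condensed into a single time step of a cell. The following is a minimal sketch for scalar inputs and states, using the standard LSTM update; the parameter layout, the names, and the numeric weights are hypothetical and serve only to show the data flow.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def lstm_step(x, h_prev, c_prev, p):
    """One time step of a scalar LSTM cell.

    `p` maps each gate name to (input weight, recurrent weight, bias).
    """
    def pre(name):
        w_x, w_h, b = p[name]
        return w_x * x + w_h * h_prev + b

    f = sigmoid(pre("forget"))       # near 0: forget, near 1: keep
    i = sigmoid(pre("input"))        # how much of the candidate to write
    g = math.tanh(pre("candidate"))  # candidate values in (-1, 1)
    o = sigmoid(pre("output"))       # how much of the cell state to expose
    c = f * c_prev + i * g           # updated cell state
    h = o * math.tanh(c)             # new hidden state
    return h, c

# Arbitrary illustrative parameters.
params = {
    "forget": (0.5, 0.5, 1.0),
    "input": (0.5, 0.5, 0.0),
    "candidate": (1.0, 1.0, 0.0),
    "output": (0.5, 0.5, 0.0),
}

# Carry the hidden state and the cell state across a short input sequence.
h, c = 0.0, 0.0
for x in [0.5, -0.2, 0.8]:
    h, c = lstm_step(x, h, c, params)
```

With a forget gate saturated near 1 and an input gate near 0, the cell state passes through a step unchanged, which is how the cell retains information over long spans.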

3.5. Autoencoders Algorithms in Time Series Data Processing

3.6. Automated Features Extraction from Time Series Data

4. Conclusions

Funding

Conflicts of Interest

References

- Wei, W.W. Time series analysis. In The Oxford Handbook of Quantitative Methods in Psychology; Oxford University Press: New York, NY, USA, 2006; Volume 2.

- Lütkepohl, H. New Introduction to Multiple Time Series Analysis; Springer Science & Business Media: Berlin/Heidelberg, Germany, 2005.

- Chatfield, C.; Xing, H. The Analysis of Time Series: An Introduction with R; CRC Press: Boca Raton, FL, USA, 2019.

- Hamilton, J.D. Time Series Analysis; Princeton University Press: Princeton, NJ, USA, 2020.

- Brillinger, D.R. Time Series: Data Analysis and Theory; Society for Industrial and Applied Mathematics: Philadelphia, PA, USA, 2001.

- Granger, C.W.J.; Newbold, P. Forecasting Economic Time Series; Academic Press: Cambridge, MA, USA, 2014.

- Cryer, J.D. Time Series Analysis; Duxbury Press: Boston, MA, USA, 1986; Volume 286.

- Box, G.E.; Jenkins, G.M.; Reinsel, G.C.; Ljung, G.M. Time Series Analysis: Forecasting and Control; John Wiley & Sons: Hoboken, NJ, USA, 2015.

- Madsen, H. Time Series Analysis; CRC Press: Boca Raton, FL, USA, 2007.

- Fuller, W.A. Introduction to Statistical Time Series; John Wiley & Sons: Hoboken, NJ, USA, 2009; Volume 428.

- Tsay, R.S. Analysis of Financial Time Series; John Wiley & Sons: Hoboken, NJ, USA, 2005; Volume 543.

- Harvey, A.C. Forecasting, Structural Time Series Models and the Kalman Filter; Cambridge University Press: Cambridge, UK, 1990.

- Kantz, H.; Schreiber, T. Nonlinear Time Series Analysis; Cambridge University Press: Cambridge, UK, 2004; Volume 7.

- Shumway, R.H.; Stoffer, D.S. Time Series Analysis and Its Applications; Springer: New York, NY, USA, 2000; Volume 3.

- Fahrmeir, L.; Tutz, G.; Hennevogl, W.; Salem, E. Multivariate Statistical Modelling Based on Generalized Linear Models; Springer: New York, NY, USA, 1990; Volume 425.

- Kirchgässner, G.; Wolters, J.; Hassler, U. Introduction to Modern Time Series Analysis; Springer Science & Business Media: Berlin/Heidelberg, Germany, 2012.

- Hannan, E.J. Multiple Time Series; John Wiley & Sons: Hoboken, NJ, USA, 2009; Volume 38.

- Brown, R.G. Smoothing, Forecasting and Prediction of Discrete Time Series; Courier Corporation: Chelmsford, MA, USA, 2004.

- Rao, S.S. A Course in Time Series Analysis; Technical Report; Texas A & M University: College Station, TX, USA, 2008.

- Schreiber, T.; Schmitz, A. Surrogate Time Series. Phys. D Nonlinear Phenom. 2000, 142, 346–382.

- Zhang, C.; Ma, Y. (Eds.) Ensemble Machine Learning: Methods and Applications; Springer Science & Business Media: Berlin/Heidelberg, Germany, 2012.

- Gollapudi, S. Practical Machine Learning; Packt Publishing Ltd.: Birmingham, UK, 2016.

- Paluszek, M.; Thomas, S. MATLAB Machine Learning; Apress: New York, NY, USA, 2016.

- Murphy, K.P. Machine Learning: A Probabilistic Perspective; MIT Press: Cambridge, MA, USA, 2012.

- Adeli, H.; Hung, S.L. Machine Learning: Neural Networks, Genetic Algorithms, and Fuzzy Systems; John Wiley & Sons, Inc.: Hoboken, NJ, USA, 1994.

- Bishop, C.M. Pattern Recognition and Machine Learning; Springer: Berlin/Heidelberg, Germany, 2006.

- Hutter, F.; Kotthoff, L.; Vanschoren, J. Automated Machine Learning: Methods, Systems, Challenges; Springer Nature: Berlin/Heidelberg, Germany, 2019; p. 219.

- Ciaburro, G.; Iannace, G.; Passaro, J.; Bifulco, A.; Marano, D.; Guida, M.; Branda, F. Artificial neural network-based models for predicting the sound absorption coefficient of electrospun poly (vinyl pyrrolidone)/silica composite. Appl. Acoust. 2020, 169, 107472.

- Lantz, B. Machine Learning with R: Expert Techniques for Predictive Modeling; Packt Publishing Ltd.: Birmingham, UK, 2019.

- Dangeti, P. Statistics for Machine Learning; Packt Publishing Ltd.: Birmingham, UK, 2017.

- Goodfellow, I.; Bengio, Y.; Courville, A. Deep Learning; MIT Press: Cambridge, MA, USA, 2016; Volume 1, No. 2.

- Koustas, Z.; Veloce, W. Unemployment hysteresis in Canada: An approach based on long-memory time series models. Appl. Econ. 1996, 28, 823–831.

- Teyssière, G.; Kirman, A.P. (Eds.) Long Memory in Economics; Springer Science & Business Media: Berlin/Heidelberg, Germany, 2006.

- Gradišek, J.; Siegert, S.; Friedrich, R.; Grabec, I. Analysis of time series from stochastic processes. Phys. Rev. E 2000, 62, 3146.

- Grenander, U.; Rosenblatt, M. Statistical spectral analysis of time series arising from stationary stochastic processes. Ann. Math. Stat. 1953, 24, 537–558.

- Alessio, E.; Carbone, A.; Castelli, G.; Frappietro, V. Second-order moving average and scaling of stochastic time series. Eur. Phys. J. B Condens. Matter Complex Syst. 2002, 27, 197–200.

- Klöckl, B.; Papaefthymiou, G. Multivariate time series models for studies on stochastic generators in power systems. Electr. Power Syst. Res. 2010, 80, 265–276.

- Harvey, A.; Ruiz, E.; Sentana, E. Unobserved component time series models with ARCH disturbances. J. Econom. 1992, 52, 129–157.

- Nelson, C.R.; Plosser, C.R. Trends and random walks in macroeconmic time series: Some evidence and implications. J. Monet. Econ. 1982, 10, 139–162.

- Shephard, N.G.; Harvey, A.C. On the probability of estimating a deterministic component in the local level model. J. Time Ser. Anal. 1990, 11, 339–347.

- Duarte, F.S.; Rios, R.A.; Hruschka, E.R.; de Mello, R.F. Decomposing time series into deterministic and stochastic influences: A survey. Digit. Signal Process. 2019, 95, 102582.

- Rios, R.A.; De Mello, R.F. Improving time series modeling by decomposing and analyzing stochastic and deterministic influences. Signal Process. 2013, 93, 3001–3013.

- Franzini, L.; Harvey, A.C. Testing for deterministic trend and seasonal components in time series models. Biometrika 1983, 70, 673–682.

- Time Series Data Library. Available online: https://pkg.yangzhuoranyang.com/tsdl/ (accessed on 24 March 2021).

- Granger, C.W.J.; Hatanaka, M. Spectral Analysis of Economic Time Series. (PSME-1); Princeton University Press: Princeton, NJ, USA, 2015.

- Durbin, J.; Koopman, S.J. Time Series Analysis by State Space Methods; Oxford University Press: Oxford, UK, 2012.

- Gourieroux, C.; Wickens, M.; Ghysels, E.; Smith, R.J. Applied Time Series Econometrics; Cambridge University Press: Cambridge, UK, 2004.

- Longobardi, A.; Villani, P. Trend analysis of annual and seasonal rainfall time series in the Mediterranean area. Int. J. Climatol. 2010, 30, 1538–1546.

- Hylleberg, S. Modelling Seasonality; Oxford University Press: Oxford, UK, 1992.

- Beveridge, S.; Nelson, C.R. A new approach to decomposition of economic time series into permanent and transitory components with particular attention to measurement of the ‘business cycle’. J. Monet. Econ. 1981, 7, 151–174.

- Adhikari, R.; Agrawal, R.K. An introductory study on time series modeling and forecasting. arXiv 2013, arXiv:1302.6613.

- Maçaira, P.M.; Thomé, A.M.T.; Oliveira, F.L.C.; Ferrer, A.L.C. Time series analysis with explanatory variables: A systematic literature review. Environ. Model. Softw. 2018, 107, 199–209.

- Box-Steffensmeier, J.M.; Freeman, J.R.; Hitt, M.P.; Pevehouse, J.C. Time Series Analysis for the Social Sciences; Cambridge University Press: Cambridge, UK, 2014.

- Box, G. Box and Jenkins: Time series analysis, forecasting and control. In A Very British Affair; Palgrave Macmillan: London, UK, 2013; pp. 161–215.

- Hagan, M.T.; Behr, S.M. The time series approach to short term load forecasting. IEEE Trans. Power Syst. 1987, 2, 785–791.

- Velasco, C. Gaussian semiparametric estimation of non-stationary time series. J. Time Ser. Anal. 1999, 20, 87–127.

- Dau, H.A.; Bagnall, A.; Kamgar, K.; Yeh, C.C.M.; Zhu, Y.; Gharghabi, S.; Keogh, E. The UCR time series archive. IEEE CAA J. Autom. Sin. 2019, 6, 1293–1305.

- Dau, H.A.; Keogh, E.; Kamgar, K.; Yeh, C.M.; Zhu, Y.; Gharghabi, S.; Ratanamahatana, C.A.; Chen, Y.; Hu, B.; Begum, N.; et al. The UCR Time Series Classification Archive. 2019. Available online: https://www.cs.ucr.edu/~eamonn/time_series_data_2018/ (accessed on 24 March 2021).

- Kleiber, C.; Zeileis, A. Applied Econometrics with R; Springer Science & Business Media: Berlin/Heidelberg, Germany, 2008.

- Kleiber, C.; Zeileis, A.; Zeileis, M.A. Package ‘AER’, R package version 1.2, 9; R Foundation for Statistical Computing: Vienna, Austria, 2020; Available online: http://www.R-project.org/ (accessed on 24 May 2021).

- Graves, S.; Boshnakov, G.N. ‘FinTS’ Package, R package version 0.4-6; R Foundation for Statistical Computing: Vienna, Austria, 2019; Available online: http://www.R-project.org/ (accessed on 24 May 2021).

- Croissant, Y.; Graves, M.S. ‘Ecdat’ Package, R package version 0.3-9; R Foundation for Statistical Computing: Vienna, Austria, 2020; Available online: http://www.R-project.org/ (accessed on 24 May 2021).

- ANES Time Series Study. Available online: https://electionstudies.org/data-center/ (accessed on 23 March 2021).

- Schlittgen, R.; Sattarhoff, C. 9 Regressionsmodelle für Zeitreihen. In Angewandte Zeitreihenanalyse mit R; De Gruyter Oldenbourg: Berlin, Germany, 2020; pp. 225–246.

- Harvard Dataverse. Available online: https://dataverse.harvard.edu/ (accessed on 24 March 2021).

- Data.gov. Available online: https://www.data.gov/ (accessed on 24 March 2021).

- Dua, D.; Graff, C. UCI Machine Learning Repository; University of California, School of Information and Computer Science: Irvine, CA, USA, 2019; Available online: http://archive.ics.uci.edu/ml (accessed on 24 March 2021).

- Cryer, J.D.; Chan, K. Time Series Analysis with Applications in R; Springer: Berlin/Heidelberg, Germany, 2008.

- Chan, K.S.; Ripley, B.; Chan, M.K.S.; Chan, S. Package ‘TSA’, R package version 1.3; R Foundation for Statistical Computing: Vienna, Austria, 2020; Available online: http://www.R-project.org/ (accessed on 24 May 2021).

- Google Dataset Search. Available online: https://datasetsearch.research.google.com/ (accessed on 24 March 2021).

- Shumway, R.H.; Stoffer, D.S. Time Series Analysis and Its Applications: With R Examples; Springer: New York, NY, USA, 2017.

- Stoffer, D. Astsa: Applied Statistical Time Series Analysis, R package version 1.7; R Foundation for Statistical Computing: Vienna, Austria, 2016. Available online: http://www.R-project.org/ (accessed on 24 May 2021).

- Kaggle Dataset. Available online: https://www.kaggle.com/datasets (accessed on 23 March 2021).

- Hyndman, M.R.J.; Akram, M.; Bergmeir, C.; O’Hara-Wild, M.; Hyndman, M.R. Package ‘Mcomp’, R package version 2.8; R Foundation for Statistical Computing: Vienna, Austria, 2018; Available online: http://www.R-project.org/ (accessed on 24 May 2021).

- Makridakis, S.; Andersen, A.; Carbone, R.; Fildes, R.; Hibon, M.; Lewandowski, R.; Winkler, R. The accuracy of extrapolation (time series) methods: Results of a forecasting competition. J. Forecast. 1982, 1, 111–153.

- Makridakis, S.; Hibon, M. The M3-Competition: Results, conclusions and implications. Int. J. Forecast. 2000, 16, 451–476.

- BenTaieb, S. Package ‘M4comp’, R package version 0.0.1; R Foundation for Statistical Computing: Vienna, Austria, 2016; Available online: http://www.R-project.org/ (accessed on 24 May 2021).

- Ciaburro, G. Sound event detection in underground parking garage using convolutional neural network. Big Data Cogn. Comput. 2020, 4, 20.

- Mohri, M.; Rostamizadeh, A.; Talwalkar, A. Foundations of Machine Learning; MIT Press: Cambridge, MA, USA, 2018.

- Caruana, R.; Niculescu-Mizil, A. An empirical comparison of supervised learning algorithms. In Proceedings of the 23rd International Conference on Machine Learning, Pittsburgh, PA, USA, 25–29 June 2006; pp. 161–168.

- Celebi, M.E.; Aydin, K. (Eds.) Unsupervised Learning Algorithms; Springer International Publishing: Berlin, Germany, 2016.

- Sutton, R.S.; Barto, A.G. Reinforcement Learning: An Introduction; MIT Press: Cambridge, MA, USA, 2018.

- Abiodun, O.I.; Jantan, A.; Omolara, A.E.; Dada, K.V.; Mohamed, N.A.; Arshad, H. State-of-the-art in artificial neural network applications: A survey. Heliyon 2018, 4, e00938.

- Ciaburro, G.; Iannace, G.; Ali, M.; Alabdulkarem, A.; Nuhait, A. An Artificial neural network approach to modelling absorbent asphalts acoustic properties. J. King Saud Univ. Eng. Sci. 2021, 33, 213–220.

- Da Silva, I.N.; Spatti, D.H.; Flauzino, R.A.; Liboni, L.H.B.; dos Reis Alves, S.F. Artificial neural network architectures and training processes. In Artificial Neural Networks; Springer: Cham, Switzerland, 2017; pp. 21–28.

- Fabio, S.; Giovanni, D.N.; Mariano, P. Airborne sound insulation prediction of masonry walls using artificial neural networks. Build. Acoust. 2021.

- Alanis, A.Y.; Arana-Daniel, N.; Lopez-Franco, C. (Eds.) Artificial Neural Networks for Engineering Applications; Academic Press: Cambridge, MA, USA, 2019.

- Romero, V.P.; Maffei, L.; Brambilla, G.; Ciaburro, G. Modelling the soundscape quality of urban waterfronts by artificial neural networks. Appl. Acoust. 2016, 111, 121–128.

- Walczak, S. Artificial neural networks. In Advanced Methodologies and Technologies in Artificial Intelligence, Computer Simulation, and Human-Computer Interaction; IGI Global: Hershey, PA, USA, 2019; pp. 40–53.

- Al-Massri, R.; Al-Astel, Y.; Ziadia, H.; Mousa, D.K.; Abu-Naser, S.S. Classification Prediction of SBRCTs Cancers Using Artificial Neural Network. Int. J. Acad. Eng. Res. 2018, 2, 1–7.

- Wang, L.; Wang, Z.; Qu, H.; Liu, S. Optimal forecast combination based on neural networks for time series forecasting. Appl. Soft Comput. 2018, 66, 1–17.

- Gholami, V.; Torkaman, J.; Dalir, P. Simulation of precipitation time series using tree-rings, earlywood vessel features, and artificial neural network. Theor. Appl. Climatol. 2019, 137, 1939–1948.

- Vochozka, M.; Horák, J.; Šuleř, P. Equalizing seasonal time series using artificial neural networks in predicting the Euro–Yuan exchange rate. J. Risk Financ. Manag. 2019, 12, 76.

- Olawoyin, A.; Chen, Y. Predicting the future with artificial neural network. Procedia Comput. Sci. 2018, 140, 383–392.

- Adeyinka, D.A.; Muhajarine, N. Time series prediction of under-five mortality rates for Nigeria: Comparative analysis of artificial neural networks, Holt-Winters exponential smoothing and autoregressive integrated moving average models. BMC Med. Res. Methodol. 2020, 20, 1–11.

- Azadeh, A.; Ghaderi, S.F.; Sohrabkhani, S. Forecasting electrical consumption by integration of neural network, time series and ANOVA. Appl. Math. Comput. 2007, 186, 1753–1761.

- Miller, R.G., Jr. Beyond ANOVA: Basics of Applied Statistics; CRC Press: Boca Raton, FL, USA, 1997.

- Hill, T.; O’Connor, M.; Remus, W. Neural network models for time series forecasts. Manag. Sci. 1996, 42, 1082–1092.

- Zhang, G.P. Time series forecasting using a hybrid ARIMA and neural network model. Neurocomputing 2003, 50, 159–175.

- Contreras, J.; Espinola, R.; Nogales, F.J.; Conejo, A.J. ARIMA models to predict next-day electricity prices. IEEE Trans. Power Syst. 2003, 18, 1014–1020.

- Jain, A.; Kumar, A.M. Hybrid neural network models for hydrologic time series forecasting. Appl. Soft Comput. 2007, 7, 585–592.

- Tseng, F.M.; Yu, H.C.; Tzeng, G.H. Combining neural network model with seasonal time series ARIMA model. Technol. Forecast. Soc. Chang. 2002, 69, 71–87.

- Chen, C.F.; Chang, Y.H.; Chang, Y.W. Seasonal ARIMA forecasting of inbound air travel arrivals to Taiwan. Transportmetrica 2009, 5, 125–140.

- Khashei, M.; Bijari, M. An artificial neural network (p, d, q) model for timeseries forecasting. Expert Syst. Appl. 2010, 37, 479–489.

- Chaudhuri, T.D.; Ghosh, I. Artificial neural network and time series modeling based approach to forecasting the exchange rate in a multivariate framework. arXiv 2016, arXiv:1607.02093.

- Aras, S.; Kocakoç, İ.D. A new model selection strategy in time series forecasting with artificial neural networks: IHTS. Neurocomputing 2016, 174, 974–987.

- Doucoure, B.; Agbossou, K.; Cardenas, A. Time series prediction using artificial wavelet neural network and multi-resolution analysis: Application to wind speed data. Renew. Energy 2016, 92, 202–211.

- Lohani, A.K.; Kumar, R.; Singh, R.D. Hydrological time series modeling: A comparison between adaptive neuro-fuzzy, neural network and autoregressive techniques. J. Hydrol. 2012, 442, 23–35.

- Chicea, D.; Rei, S.M. A fast artificial neural network approach for dynamic light scattering time series processing. Meas. Sci. Technol. 2018, 29, 105201.

- Horák, J.; Krulický, T. Comparison of exponential time series alignment and time series alignment using artificial neural networks by example of prediction of future development of stock prices of a specific company. In SHS Web of Conferences; EDP Sciences: Les Ulis, France, 2019; Volume 61, p. 01006.

- Liu, H.; Tian, H.Q.; Pan, D.F.; Li, Y.F. Forecasting models for wind speed using wavelet, wavelet packet, time series and Artificial Neural Networks. Appl. Energy 2013, 107, 191–208.

- Wang, C.C.; Kang, Y.; Shen, P.C.; Chang, Y.P.; Chung, Y.L. Applications of fault diagnosis in rotating machinery by using time series analysis with neural network. Expert Syst. Appl. 2010, 37, 1696–1702.

- Xu, R.; Wunsch, D. Clustering; John Wiley & Sons: Hoboken, NJ, USA, 2008; Volume 10.

- Rokach, L.; Maimon, O. Clustering methods. In Data Mining and Knowledge Discovery Handbook; Springer: Boston, MA, USA, 2005; pp. 321–352.

- Gaertler, M. Clustering. In Network Analysis; Springer: Berlin/Heidelberg, Germany, 2005; pp. 178–215.

- Gionis, A.; Mannila, H.; Tsaparas, P. Clustering aggregation. ACM Trans. Knowl. Discov. Data 2007, 1, 4-es.

- Vesanto, J.; Alhoniemi, E. Clustering of the self-organizing map. IEEE Trans. Neural Netw. 2000, 11, 586–600.

- Jain, A.K. Data clustering: 50 years beyond K-means. Pattern Recognit. Lett. 2010, 31, 651–666.

- Mirkin, B. Clustering: A Data Recovery Approach; CRC Press: Boca Raton, FL, USA, 2012.

- Forina, M.; Armanino, C.; Raggio, V. Clustering with dendrograms on interpretation variables. Anal. Chim. Acta 2002, 454, 13–19.

- Hirano, S.; Tsumoto, S. Cluster analysis of time-series medical data based on the trajectory representation and multiscale comparison techniques. In Proceedings of the Sixth International Conference on Data Mining (ICDM’06), Hong Kong, China, 18–22 December 2006; IEEE: Piscataway, NJ, USA, 2006; pp. 896–901.

- Caraway, N.M.; McCreight, J.L.; Rajagopalan, B. Multisite stochastic weather generation using cluster analysis and k-nearest neighbor time series resampling. J. Hydrol. 2014, 508, 197–213.

- Balslev, D.; Nielsen, F.Å.; Frutiger, S.A.; Sidtis, J.J.; Christiansen, T.B.; Svarer, C.; Law, I. Cluster analysis of activity-time series in motor learning. Hum. Brain Mapp. 2002, 15, 135–145.

- Mikalsen, K.Ø.; Bianchi, F.M.; Soguero-Ruiz, C.; Jenssen, R. Time series cluster kernel for learning similarities between multivariate time series with missing data. Pattern Recognit. 2018, 76, 569–581.

- Corduas, M.; Piccolo, D. Time series clustering and classification by the autoregressive metric. Comput. Stat. Data Anal. 2008, 52, 1860–1872.

- Otranto, E.; Trudda, A. Classifying Italian pension funds via GARCH distance. In Mathematical and Statistical Methods in Insurance and Finance; Springer: Milano, Italy, 2008; pp. 189–197.

- Gupta, S.K.; Gupta, N.; Singh, V.P. Variable-Sized Cluster Analysis for 3D Pattern Characterization of Trends in Precipitation and Change-Point Detection. J. Hydrol. Eng. 2021, 26, 04020056.

- Iglesias, F.; Kastner, W. Analysis of similarity measures in times series clustering for the discovery of building energy patterns. Energies 2013, 6, 579–597.

- Gopalapillai, R.; Gupta, D.; Sudarshan, T.S.B. Experimentation and analysis of time series data for rescue robotics. In Recent Advances in Intelligent Informatics; Springer: Cham, Switzerland, 2014; pp. 443–453.

- Wismüller, A.; Lange, O.; Dersch, D.R.; Leinsinger, G.L.; Hahn, K.; Pütz, B.; Auer, D. Cluster analysis of biomedical image time-series. Int. J. Comput. Vis. 2002, 46, 103–128.

- Guo, C.; Jia, H.; Zhang, N. Time series clustering based on ICA for stock data analysis. In Proceedings of the 2008 4th International Conference on Wireless Communications, Networking and Mobile Computing, Dalian, China, 12–14 October 2008; IEEE: Piscataway, NJ, USA, 2008; pp. 1–4.

- Stone, J.V. Independent component analysis: An introduction. Trends Cogn. Sci. 2002, 6, 59–64.

- Lee, D.; Baek, S.; Sung, K. Modified k-means algorithm for vector quantizer design. IEEE Signal Process. Lett. 1997, 4, 2–4. [Google Scholar]

- Shumway, R.H. Time-frequency clustering and discriminant analysis. Stat. Probab. Lett. 2003, 63, 307–314. [Google Scholar] [CrossRef]

- Elangasinghe, M.A.; Singhal, N.; Dirks, K.N.; Salmond, J.A.; Samarasinghe, S. Complex time series analysis of PM10 and PM2. 5 for a coastal site using artificial neural network modelling and k-means clustering. Atmos. Environ. 2014, 94, 106–116. [Google Scholar] [CrossRef]

- Möller-Levet, C.S.; Klawonn, F.; Cho, K.H.; Wolkenhauer, O. Fuzzy clustering of short time-series and unevenly distributed sampling points. In International Symposium on Intelligent Data Analysis; Springer: Berlin/Heidelberg, Germany, 2003; pp. 330–340. [Google Scholar]

- Rebbapragada, U.; Protopapas, P.; Brodley, C.E.; Alcock, C. Finding anomalous periodic time series. Mach. Learn. 2009, 74, 281–313. [Google Scholar] [CrossRef]

- Paparrizos, J.; Gravano, L. Fast and accurate time-series clustering. ACM Trans. Database Syst. 2017, 42, 1–49. [Google Scholar] [CrossRef]

- Paparrizos, J.; Gravano, L. K-shape: Efficient and accurate clustering of time series. In Proceedings of the 2015 ACM SIGMOD International Conference on Management of Data, Melbourne, Australia, 31 May–4 June 2015; pp. 1855–1870. [Google Scholar]

- Sardá-Espinosa, A. Comparing time-series clustering algorithms in R using the dtwclust package. R Package Vignette 2017, 12, 41.

- Zhang, Q.; Wu, J.; Zhang, P.; Long, G.; Zhang, C. Salient subsequence learning for time series clustering. IEEE Trans. Pattern Anal. Mach. Intell. 2018, 41, 2193–2207.

- Chen, T.; Shi, X.; Wong, Y.D. A lane-changing risk profile analysis method based on time-series clustering. Phys. A Stat. Mech. Appl. 2021, 565, 125567.

- Steinmann, P.; Auping, W.L.; Kwakkel, J.H. Behavior-based scenario discovery using time series clustering. Technol. Forecast. Soc. Chang. 2020, 156, 120052.

- Kuschnerus, M.; Lindenbergh, R.; Vos, S. Coastal change patterns from time series clustering of permanent laser scan data. Earth Surf. Dyn. 2021, 9, 89–103.

- Motlagh, O.; Berry, A.; O’Neil, L. Clustering of residential electricity customers using load time series. Appl. Energy 2019, 237, 11–24.

- Hallac, D.; Vare, S.; Boyd, S.; Leskovec, J. Toeplitz inverse covariance-based clustering of multivariate time series data. In Proceedings of the 23rd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, Halifax, NS, Canada, 13–17 August 2017; pp. 215–223.

- McDowell, I.C.; Manandhar, D.; Vockley, C.M.; Schmid, A.K.; Reddy, T.E.; Engelhardt, B.E. Clustering gene expression time series data using an infinite Gaussian process mixture model. PLoS Comput. Biol. 2018, 14, e1005896.

- LeCun, Y.; Boser, B.E.; Denker, J.S.; Henderson, D.; Howard, R.E.; Hubbard, W.E.; Jackel, L.D. Handwritten digit recognition with a back-propagation network. In Advances in Neural Information Processing Systems (NIPS 1989); Morgan Kaufmann: Denver, CO, USA, 1990; Volume 2, pp. 396–404.

- Han, J.; Shi, L.; Yang, Q.; Huang, K.; Zha, Y.; Yu, J. Real-time detection of rice phenology through convolutional neural network using handheld camera images. Precis. Agric. 2021, 22, 154–178.

- Chen, T.; Sun, Y.; Li, T.H. A semi-parametric estimation method for the quantile spectrum with an application to earthquake classification using convolutional neural network. Comput. Stat. Data Anal. 2021, 154, 107069.

- Ciaburro, G.; Iannace, G.; Puyana-Romero, V.; Trematerra, A. A Comparison between Numerical Simulation Models for the Prediction of Acoustic Behavior of Giant Reeds Shredded. Appl. Sci. 2020, 10, 6881.

- Han, J.; Miao, S.; Li, Y.; Yang, W.; Yin, H. Faulted-Phase classification for transmission lines using gradient similarity visualization and cross-domain adaption-based convolutional neural network. Electr. Power Syst. Res. 2021, 191, 106876.

- Yildiz, C.; Acikgoz, H.; Korkmaz, D.; Budak, U. An improved residual-based convolutional neural network for very short-term wind power forecasting. Energy Convers. Manag. 2021, 228, 113731.

- Ye, R.; Dai, Q. Implementing transfer learning across different datasets for time series forecasting. Pattern Recognit. 2021, 109, 107617.

- Perla, F.; Richman, R.; Scognamiglio, S.; Wüthrich, M.V. Time-series forecasting of mortality rates using deep learning. Scand. Actuar. J. 2021, 1–27.

- Ciaburro, G.; Iannace, G. Improving Smart Cities Safety Using Sound Events Detection Based on Deep Neural Network Algorithms. Informatics 2020, 7, 23.

- Yang, C.L.; Yang, C.Y.; Chen, Z.X.; Lo, N.W. Multivariate time series data transformation for convolutional neural network. In Proceedings of the 2019 IEEE/SICE International Symposium on System Integration (SII), Paris, France, 14–16 January 2019; IEEE: Piscataway, NJ, USA, 2019; pp. 188–192.

- Stoian, A.; Poulain, V.; Inglada, J.; Poughon, V.; Derksen, D. Land cover maps production with high resolution satellite image time series and convolutional neural networks: Adaptations and limits for operational systems. Remote Sens. 2019, 11, 1986.

- Anantrasirichai, N.; Biggs, J.; Albino, F.; Bull, D. The application of convolutional neural networks to detect slow, sustained deformation in InSAR time series. Geophys. Res. Lett. 2019, 46, 11850–11858.

- Wan, R.; Mei, S.; Wang, J.; Liu, M.; Yang, F. Multivariate temporal convolutional network: A deep neural networks approach for multivariate time series forecasting. Electronics 2019, 8, 876.

- Ni, L.; Li, Y.; Wang, X.; Zhang, J.; Yu, J.; Qi, C. Forecasting of forex time series data based on deep learning. Procedia Comput. Sci. 2019, 147, 647–652.

- LeCun, Y.; Bengio, Y. Convolutional networks for images, speech, and time series. In The Handbook of Brain Theory and Neural Networks; MIT Press: Cambridge, MA, USA, 1995.

- Zhao, B.; Lu, H.; Chen, S.; Liu, J.; Wu, D. Convolutional neural networks for time series classification. J. Syst. Eng. Electron. 2017, 28, 162–169.

- Chen, Y.; Keogh, E.; Hu, B.; Begum, N.; Bagnall, A.; Mueen, A.; Batista, G. The UCR Time Series Classification Archive. 2015. Available online: https://www.cs.ucr.edu/~eamonn/time_series_data/ (accessed on 4 April 2021).

- Liu, C.L.; Hsaio, W.H.; Tu, Y.C. Time series classification with multivariate convolutional neural network. IEEE Trans. Ind. Electron. 2018, 66, 4788–4797.

- PHM Data Challenge. 2015. Available online: https://www.phmsociety.org/events/conference/phm/15/data-challenge (accessed on 4 April 2021).

- Cui, Z.; Chen, W.; Chen, Y. Multi-scale convolutional neural networks for time series classification. arXiv 2016, arXiv:1603.06995.

- Borovykh, A.; Bohte, S.; Oosterlee, C.W. Conditional time series forecasting with convolutional neural networks. arXiv 2017, arXiv:1703.04691.

- Oord, A.V.D.; Dieleman, S.; Zen, H.; Simonyan, K.; Vinyals, O.; Graves, A.; Kavukcuoglu, K. WaveNet: A generative model for raw audio. arXiv 2016, arXiv:1609.03499.

- Yang, J.; Nguyen, M.N.; San, P.P.; Li, X.; Krishnaswamy, S. Deep convolutional neural networks on multichannel time series for human activity recognition. IJCAI 2015, 15, 3995–4001.

- Roggen, D.; Calatroni, A.; Rossi, M.; Holleczek, T.; Förster, K.; Tröster, G.; Millan, J.D.R. Collecting complex activity datasets in highly rich networked sensor environments. In Proceedings of the 2010 Seventh International Conference on Networked Sensing Systems (INSS), Kassel, Germany, 15–18 June 2010; IEEE: Piscataway, NJ, USA, 2010; pp. 233–240.

- Bulling, A.; Blanke, U.; Schiele, B. A tutorial on human activity recognition using body-worn inertial sensors. ACM Comput. Surv. 2014, 46, 1–33.

- Le Guennec, A.; Malinowski, S.; Tavenard, R. Data Augmentation for Time Series Classification Using Convolutional Neural Networks. ECML/PKDD Workshop on Advanced Analytics and Learning on Temporal Data. 2016. Available online: https://halshs.archives-ouvertes.fr/halshs-01357973 (accessed on 24 May 2021).

- Hatami, N.; Gavet, Y.; Debayle, J. Classification of time-series images using deep convolutional neural networks. In Proceedings of the Tenth International Conference on Machine Vision (ICMV 2017), Vienna, Austria, 13–15 November 2017; International Society for Optics and Photonics: Bellingham, WA, USA, 2018; Volume 10696, p. 106960Y.

- Marwan, N.; Romano, M.C.; Thiel, M.; Kurths, J. Recurrence plots for the analysis of complex systems. Phys. Rep. 2007, 438, 237–329.

- Sezer, O.B.; Ozbayoglu, A.M. Algorithmic financial trading with deep convolutional neural networks: Time series to image conversion approach. Appl. Soft Comput. 2018, 70, 525–538.

- Hong, S.; Wang, C.; Fu, Z. Gated temporal convolutional neural network and expert features for diagnosing and explaining physiological time series: A case study on heart rates. Comput. Methods Programs Biomed. 2021, 200, 105847.

- Lu, X.; Lin, P.; Cheng, S.; Lin, Y.; Chen, Z.; Wu, L.; Zheng, Q. Fault diagnosis for photovoltaic array based on convolutional neural network and electrical time series graph. Energy Convers. Manag. 2019, 196, 950–965.

- Han, L.; Yu, C.; Xiao, K.; Zhao, X. A new method of mixed gas identification based on a convolutional neural network for time series classification. Sensors 2019, 19, 1960.

- Gao, J.; Song, X.; Wen, Q.; Wang, P.; Sun, L.; Xu, H. RobustTAD: Robust time series anomaly detection via decomposition and convolutional neural networks. arXiv 2020, arXiv:2002.09545.

- Kashiparekh, K.; Narwariya, J.; Malhotra, P.; Vig, L.; Shroff, G. ConvTimeNet: A pre-trained deep convolutional neural network for time series classification. In Proceedings of the 2019 International Joint Conference on Neural Networks (IJCNN), Budapest, Hungary, 14–19 July 2019; IEEE: Piscataway, NJ, USA, 2019; pp. 1–8.

- Tang, W.H.; Röllin, A. Model identification for ARMA time series through convolutional neural networks. Decis. Support Syst. 2021, 146, 113544.

- Mikolov, T.; Karafiát, M.; Burget, L.; Černocký, J.; Khudanpur, S. Recurrent neural network based language model. In Proceedings of the Eleventh Annual Conference of the International Speech Communication Association, Chiba, Japan, 26–30 September 2010.

- Zaremba, W.; Sutskever, I.; Vinyals, O. Recurrent neural network regularization. arXiv 2014, arXiv:1409.2329.

- Mikolov, T.; Kombrink, S.; Burget, L.; Černocký, J.; Khudanpur, S. Extensions of recurrent neural network language model. In Proceedings of the 2011 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Prague, Czech Republic, 22–27 May 2011; IEEE: Piscataway, NJ, USA, 2011; pp. 5528–5531.

- Hopfield, J.J. Neural networks and physical systems with emergent collective computational abilities. Proc. Natl. Acad. Sci. USA 1982, 79, 2554–2558.

- Gregor, K.; Danihelka, I.; Graves, A.; Rezende, D.; Wierstra, D. DRAW: A recurrent neural network for image generation. In Proceedings of the International Conference on Machine Learning, Lille, France, 6–11 July 2015; pp. 1462–1471.

- Saon, G.; Soltau, H.; Emami, A.; Picheny, M. Unfolded recurrent neural networks for speech recognition. In Proceedings of the Fifteenth Annual Conference of the International Speech Communication Association, Singapore, 14–18 September 2014.

- Goodfellow, I.; Bengio, Y.; Courville, A. Deep Learning; MIT Press: Cambridge, MA, USA, 2016.

- Kag, A.; Zhang, Z.; Saligrama, V. RNNs incrementally evolving on an equilibrium manifold: A panacea for vanishing and exploding gradients? In Proceedings of the International Conference on Learning Representations, New Orleans, LA, USA, 6–9 May 2019.

- Hochreiter, S.; Schmidhuber, J. Long Short-Term Memory. Neural Comput. 1997, 9, 1735–1780.

- Fischer, T.; Krauss, C. Deep learning with long short-term memory networks for financial market predictions. Eur. J. Oper. Res. 2018, 270, 654–669.

- Bao, W.; Yue, J.; Rao, Y. A deep learning framework for financial time series using stacked autoencoders and long-short term memory. PLoS ONE 2017, 12, e0180944.

- Soni, B.; Patel, D.K.; Lopez-Benitez, M. Long short-term memory based spectrum sensing scheme for cognitive radio using primary activity statistics. IEEE Access 2020, 8, 97437–97451.

- Connor, J.T.; Martin, R.D.; Atlas, L.E. Recurrent neural networks and robust time series prediction. IEEE Trans. Neural Netw. 1994, 5, 240–254.

- Qin, Y.; Song, D.; Chen, H.; Cheng, W.; Jiang, G.; Cottrell, G. A dual-stage attention-based recurrent neural network for time series prediction. arXiv 2017, arXiv:1704.02971.

- Che, Z.; Purushotham, S.; Cho, K.; Sontag, D.; Liu, Y. Recurrent neural networks for multivariate time series with missing values. Sci. Rep. 2018, 8, 1–12.

- Chandra, R.; Zhang, M. Cooperative coevolution of Elman recurrent neural networks for chaotic time series prediction. Neurocomputing 2012, 86, 116–123.

- Elman, J.L. Finding structure in time. Cogn. Sci. 1990, 14, 179–211.

- Hüsken, M.; Stagge, P. Recurrent neural networks for time series classification. Neurocomputing 2003, 50, 223–235.

- Hermans, M.; Schrauwen, B. Training and analysing deep recurrent neural networks. Adv. Neural Inf. Process. Syst. 2013, 26, 190–198.

- Hua, Y.; Zhao, Z.; Li, R.; Chen, X.; Liu, Z.; Zhang, H. Deep learning with long short-term memory for time series prediction. IEEE Commun. Mag. 2019, 57, 114–119.

- Song, X.; Liu, Y.; Xue, L.; Wang, J.; Zhang, J.; Wang, J.; Cheng, Z. Time-series well performance prediction based on Long Short-Term Memory (LSTM) neural network model. J. Pet. Sci. Eng. 2020, 186, 106682.

- Yang, B.; Yin, K.; Lacasse, S.; Liu, Z. Time series analysis and long short-term memory neural network to predict landslide displacement. Landslides 2019, 16, 677–694.

- Sahoo, B.B.; Jha, R.; Singh, A.; Kumar, D. Long short-term memory (LSTM) recurrent neural network for low-flow hydrological time series forecasting. Acta Geophys. 2019, 67, 1471–1481.

- Benhaddi, M.; Ouarzazi, J. Multivariate Time Series Forecasting with Dilated Residual Convolutional Neural Networks for Urban Air Quality Prediction. Arab. J. Sci. Eng. 2021, 46, 3423–3442.

- Kong, Y.L.; Huang, Q.; Wang, C.; Chen, J.; Chen, J.; He, D. Long short-term memory neural networks for online disturbance detection in satellite image time series. Remote Sens. 2018, 10, 452.

- Lei, J.; Liu, C.; Jiang, D. Fault diagnosis of wind turbine based on Long Short-term memory networks. Renew. Energy 2019, 133, 422–432.

- Tschannen, M.; Bachem, O.; Lucic, M. Recent advances in autoencoder-based representation learning. arXiv 2018, arXiv:1812.05069.

- Myronenko, A. 3D MRI brain tumor segmentation using autoencoder regularization. In International MICCAI Brainlesion Workshop; Springer: Cham, Switzerland, 2018; pp. 311–320.

- Yang, X.; Deng, C.; Zheng, F.; Yan, J.; Liu, W. Deep spectral clustering using dual autoencoder network. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 15–20 June 2019; pp. 4066–4075.

- Ashfahani, A.; Pratama, M.; Lughofer, E.; Ong, Y.S. DEVDAN: Deep evolving denoising autoencoder. Neurocomputing 2020, 390, 297–314.

- Semeniuta, S.; Severyn, A.; Barth, E. A hybrid convolutional variational autoencoder for text generation. arXiv 2017, arXiv:1702.02390.

- Mehdiyev, N.; Lahann, J.; Emrich, A.; Enke, D.; Fettke, P.; Loos, P. Time series classification using deep learning for process planning: A case from the process industry. Procedia Comput. Sci. 2017, 114, 242–249.

- Corizzo, R.; Ceci, M.; Zdravevski, E.; Japkowicz, N. Scalable auto-encoders for gravitational waves detection from time series data. Expert Syst. Appl. 2020, 151, 113378.

- Yang, J.; Bai, Y.; Lin, F.; Liu, M.; Hou, Z.; Liu, X. A novel electrocardiogram arrhythmia classification method based on stacked sparse auto-encoders and softmax regression. Int. J. Mach. Learn. Cybern. 2018, 9, 1733–1740.

- Rußwurm, M.; Körner, M. Multi-temporal land cover classification with sequential recurrent encoders. ISPRS Int. J. Geo-Inf. 2018, 7, 129.

- Zdravevski, E.; Lameski, P.; Trajkovik, V.; Kulakov, A.; Chorbev, I.; Goleva, R.; Garcia, N. Improving activity recognition accuracy in ambient-assisted living systems by automated feature engineering. IEEE Access 2017, 5, 5262–5280.

- Christ, M.; Braun, N.; Neuffer, J.; Kempa-Liehr, A.W. Time series feature extraction on basis of scalable hypothesis tests (tsfresh–a Python package). Neurocomputing 2018, 307, 72–77.

- Caesarendra, W.; Pratama, M.; Kosasih, B.; Tjahjowidodo, T.; Glowacz, A. Parsimonious network based on a fuzzy inference system (PANFIS) for time series feature prediction of low speed slew bearing prognosis. Appl. Sci. 2018, 8, 2656.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Ciaburro, G.; Iannace, G. Machine Learning-Based Algorithms to Knowledge Extraction from Time Series Data: A Review. Data 2021, 6, 55. https://doi.org/10.3390/data6060055
