Russian–German Astroparticle Data Life Cycle Initiative

Abstract

1. Introduction

- KCDC extension: the existing data centre has released an initial dataset with the parameters of more than 400 million extensive air showers from the concluded KASCADE-Grande experiment. The initiative extends KCDC with scientific data from the TAIGA experiment (i.e., current, up-to-date data), enabling on-the-fly multi-messenger analysis. Our goal is to extend and improve KCDC and make it more attractive to a broader user community.

- Big Data science software: extending the data centre so that it offers not only access to the data but also the possibility of developing specific analysis methods and corresponding simulations in one environment requires a move to the most modern computing, storage, and data access concepts. This is only possible through close co-operation between the participating groups from both physics and information technology. One possible concept for reaching this goal is the installation of a dedicated “data life cycle lab”, which this project is aiming for. Dedicated access, storage, and interface software has to be developed.

- Reliability tests: specific analyses of the data provided by the new data centre will be performed to test the entire concept. The results will contribute to the project and build confidence in it as a valuable scientific tool.

- Go for the public: the full outreach aspect is an important goal of the project. It includes sample applications for all user levels, from pupils to directly involved scientists to theoreticians, with detailed tutorials and documentation.

- Distributed data storage algorithms and techniques with a common metadata catalog to provide a common information space of the distributed repository;

- Data transmission algorithms, as well as simultaneous data transmission from several data repositories to significantly reduce load time;

- Deep-learning techniques for identifying mass groups of impinging cosmic particles and their properties in a fully remote access mode;

- A KCDC-based prototype system for Big Data analysis and for exporting experimental data from KASCADE-Grande and TAIGA, to test the technology of efficient data life cycle management;

- An educational system based on the HUBzero platform dedicated to astroparticle physics.
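The distributed-storage and simultaneous-transmission items in the list above can be illustrated with a minimal sketch. It is not the project's implementation: `Replica`, `MetadataCatalog`, and `fetch` are hypothetical names, and each replica is an in-memory byte string standing in for a remote repository. The sketch only shows the general idea of a common catalog that maps a logical file name to its replicas, and a download that reads different chunks from different replicas at the same time.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Replica:
    """One copy of a file at a storage site (hypothetical stand-in)."""
    site: str
    payload: bytes  # in-memory substitute for a remote file

    def read_range(self, start: int, stop: int) -> bytes:
        # A real repository would answer this with a ranged network read.
        return self.payload[start:stop]


class MetadataCatalog:
    """Common catalog: logical file name -> (size in bytes, replicas)."""

    def __init__(self) -> None:
        self._sizes: Dict[str, int] = {}
        self._replicas: Dict[str, List[Replica]] = {}

    def register(self, name: str, replica: Replica) -> None:
        size = len(replica.payload)
        # All replicas of one logical file must have the same size.
        if self._sizes.setdefault(name, size) != size:
            raise ValueError("replica size differs from catalog entry")
        self._replicas.setdefault(name, []).append(replica)

    def lookup(self, name: str) -> Tuple[int, List[Replica]]:
        return self._sizes[name], list(self._replicas[name])


def fetch(catalog: MetadataCatalog, name: str, chunk_size: int = 1024) -> bytes:
    """Download a file by reading chunks from all replicas in parallel."""
    size, replicas = catalog.lookup(name)
    ranges = [(start, min(start + chunk_size, size))
              for start in range(0, size, chunk_size)]

    def load(job: Tuple[int, Tuple[int, int]]) -> bytes:
        index, (start, stop) = job
        # Round-robin assignment spreads the chunks over the replicas.
        return replicas[index % len(replicas)].read_range(start, stop)

    with ThreadPoolExecutor(max_workers=len(replicas)) as pool:
        # map() yields results in submission order, so chunks stay sorted.
        return b"".join(pool.map(load, enumerate(ranges)))
```

In this sketch the caller only needs the logical name, for example `fetch(catalog, "run.dat")`, and never addresses a storage site directly. Round-robin chunk assignment is the simplest possible policy; a production system would presumably also weigh replica bandwidth and handle failed reads.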

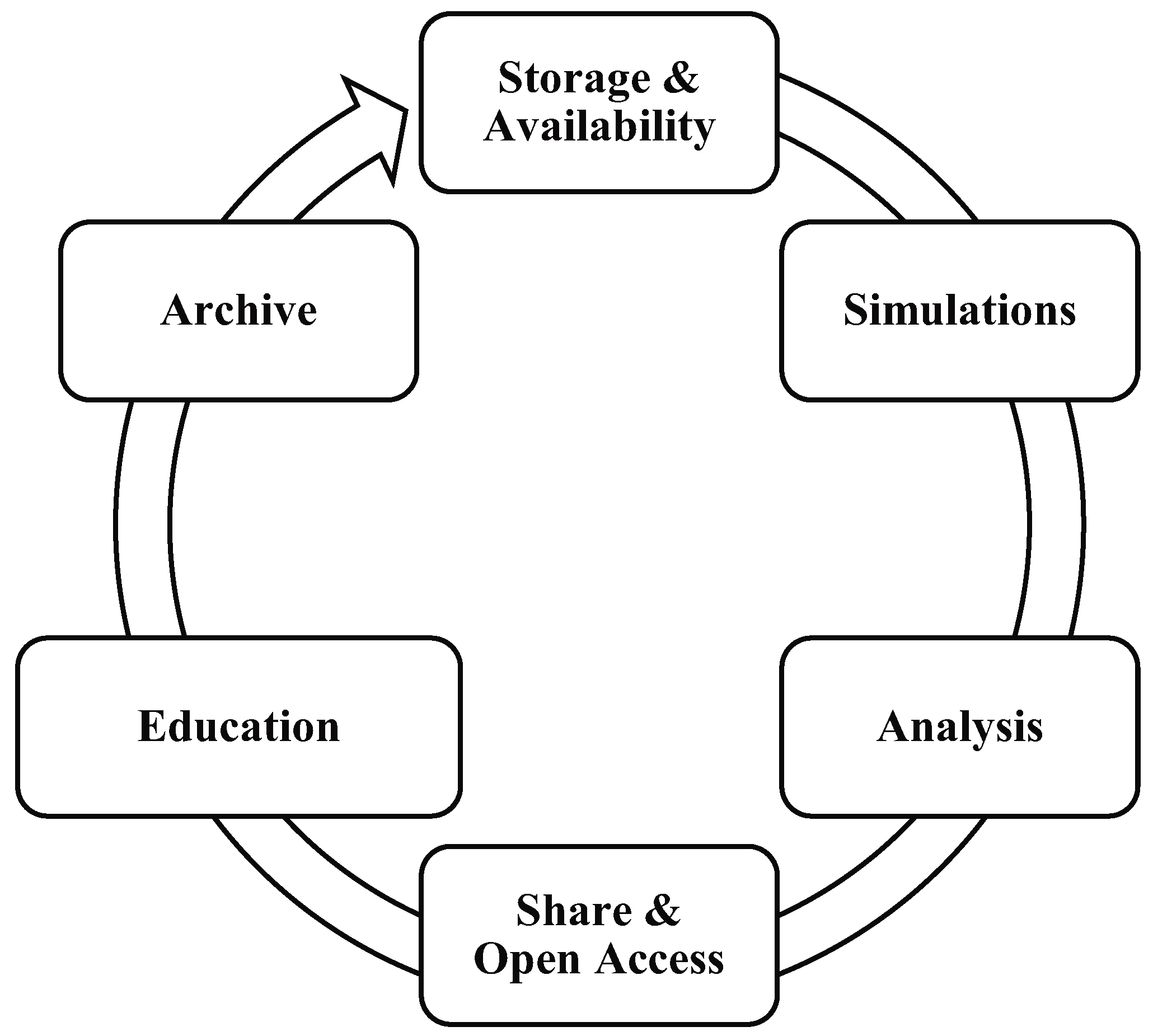

- We defined the concept of an astroparticle data life cycle, covering the following aspects: storage, simulation, analysis, education, open access, and archiving of astroparticle data (Section 2).

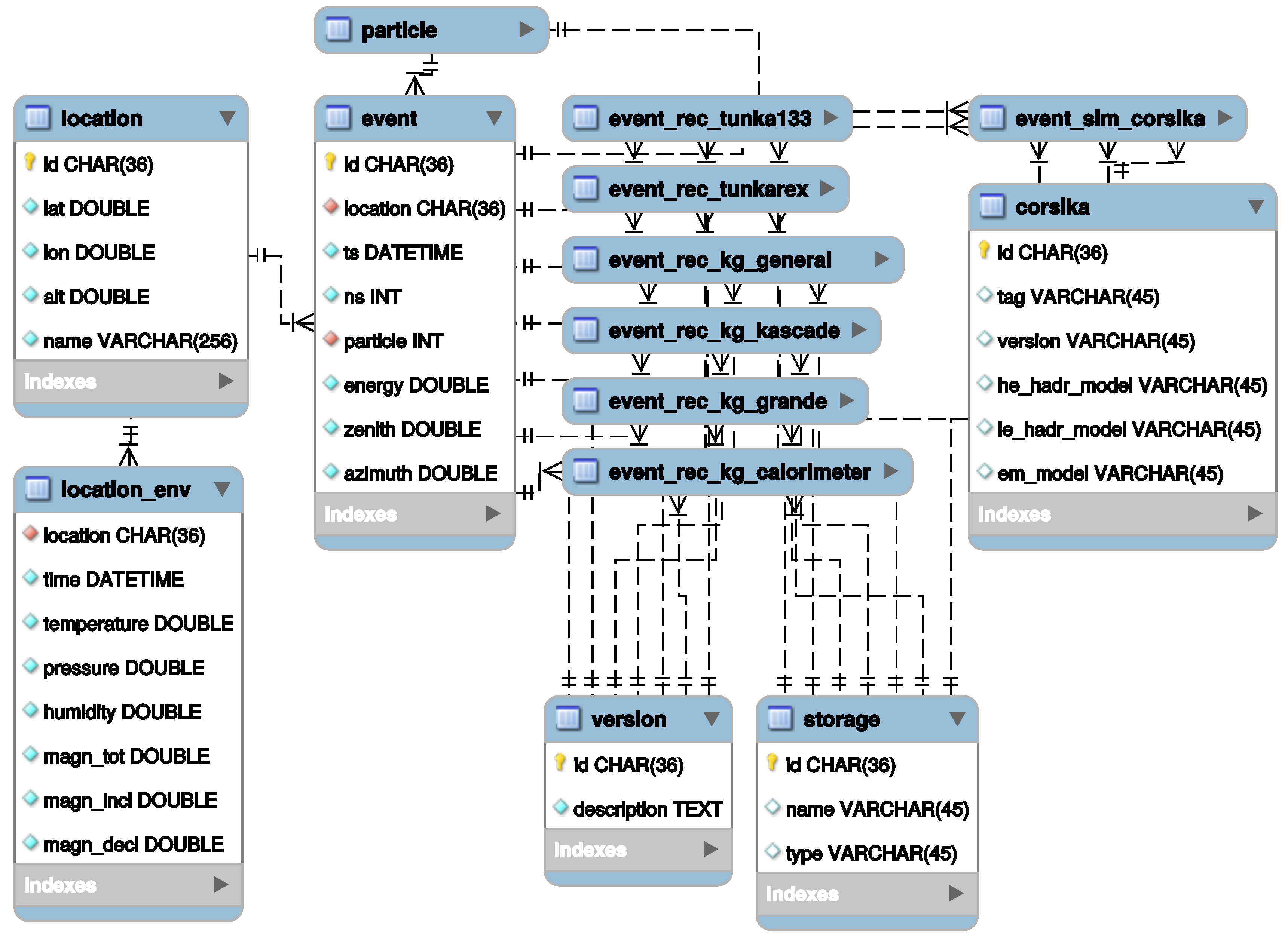

- We introduced a metadata architecture for cosmic ray experiments that aims at describing and searching for all events from the KASCADE-Grande and TAIGA experiments in a centralized database (Section 3.1).

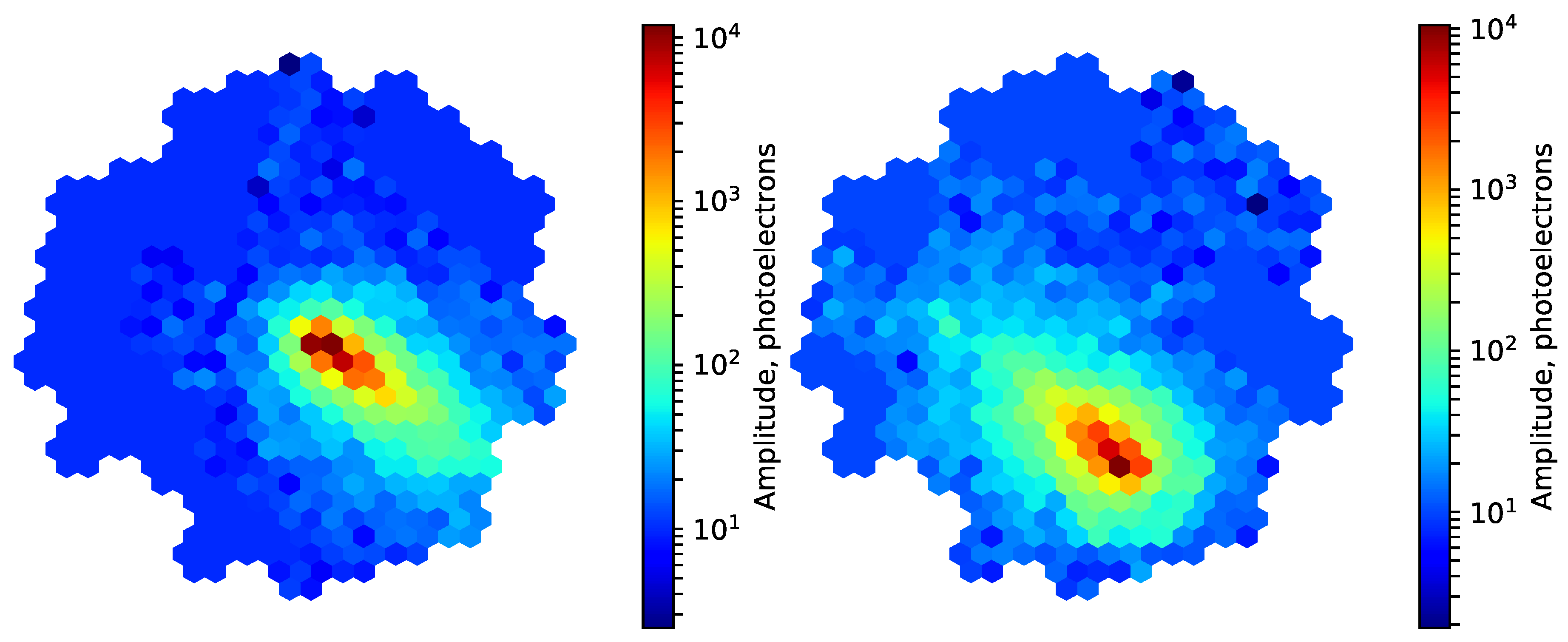

- We proposed and evaluated a novel technique for particle identification in imaging air Cherenkov telescopes based on deep learning. The technique was implemented with two well-known deep learning platforms: PyTorch and TensorFlow (Section 3.2).

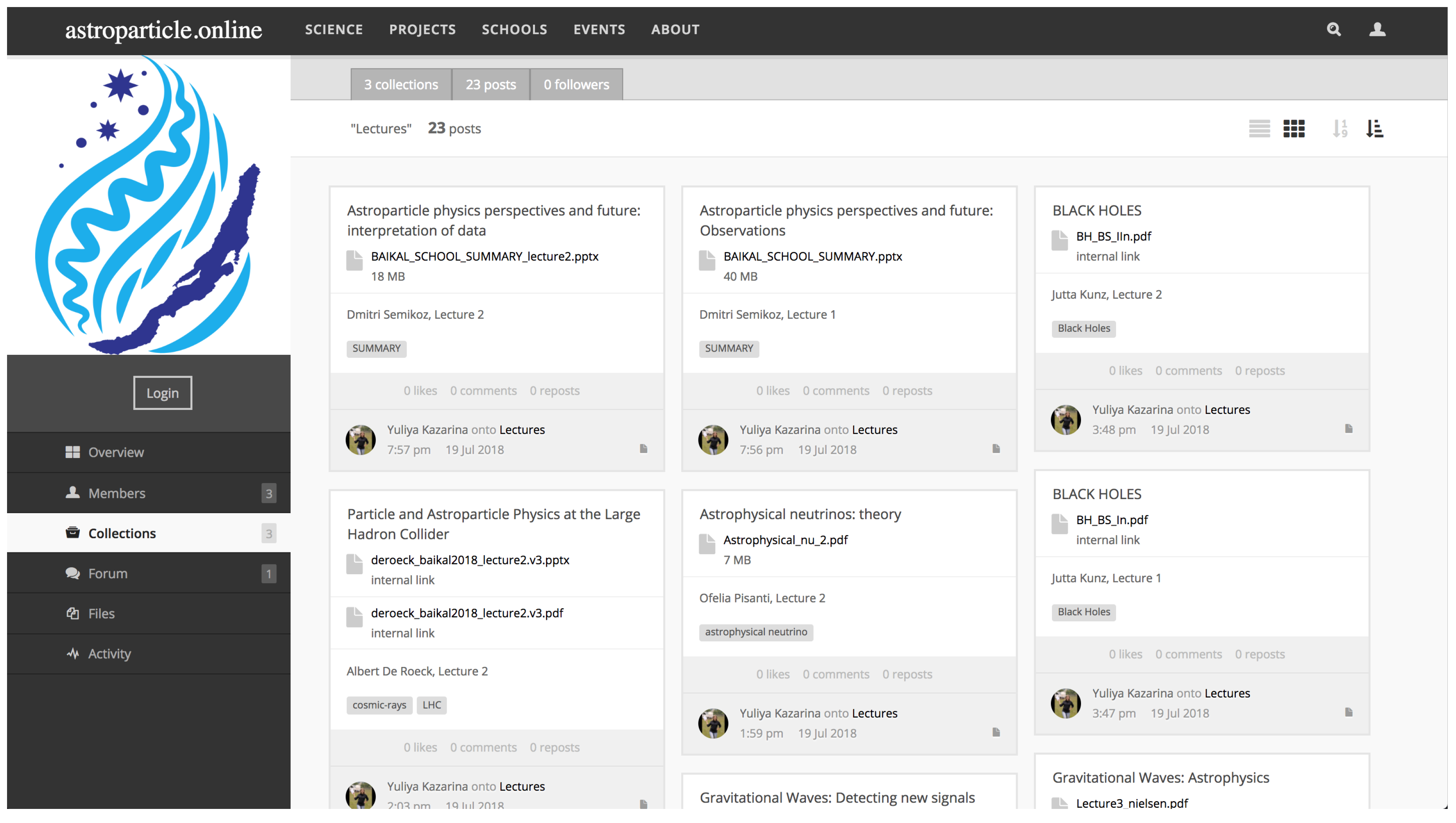

- We developed educational resources for teaching students in the field of astroparticle physics. The resources were implemented with HUBzero, an open-source software platform for building scientific collaboration websites (Section 3.3).

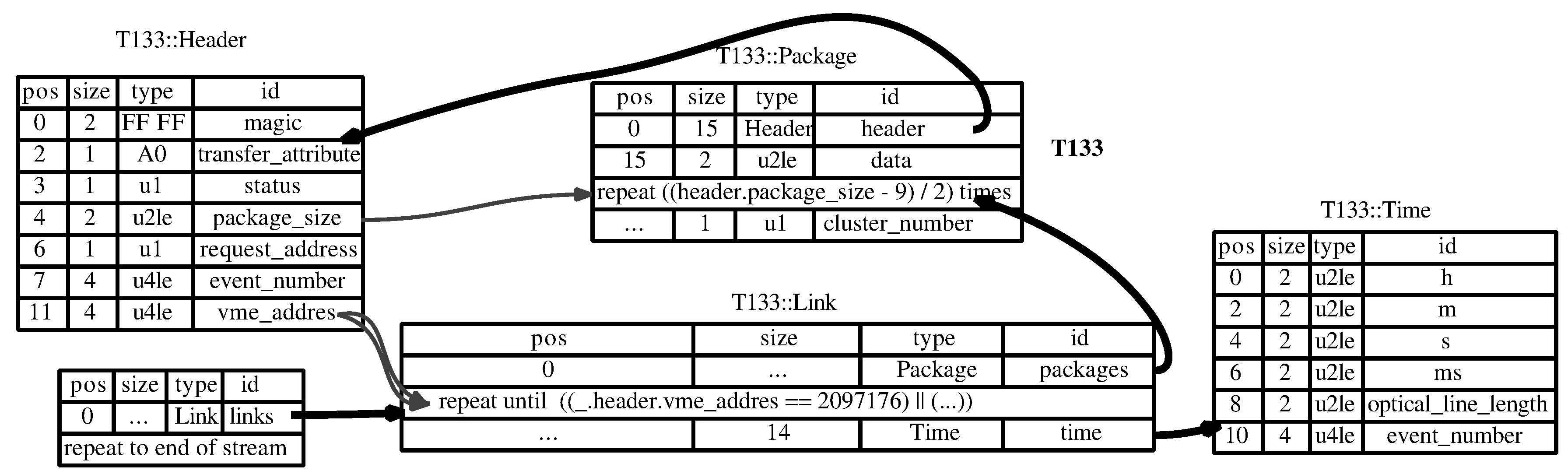

- We examined the applicability of some data format description languages for documenting, parsing, and verifying raw binary data produced in both experiments. The implemented formal specifications of all file formats allowed source code to be automatically generated for data reading libraries in target programming languages (Section 3.4).
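The idea behind the last item, deriving a reader from a formal description of a binary layout, can be sketched in a few lines. The example below does not use a data format description language or code generation as the authors did; it substitutes a hand-written field table and Python's standard `struct` module. The record layout in `EVENT_FIELDS` is entirely hypothetical and is not the real TAIGA or KASCADE-Grande format.

```python
import struct
from typing import Dict, Iterable, Iterator, List, Tuple

# Hypothetical record layout (NOT a real experiment format).
# Each entry is (field name, struct format code); values are little-endian.
EVENT_FIELDS: List[Tuple[str, str]] = [
    ("event_id", "I"),   # unsigned 32-bit event counter
    ("timestamp", "Q"),  # unsigned 64-bit time stamp
    ("station", "H"),    # unsigned 16-bit station number
    ("amplitude", "f"),  # 32-bit float signal amplitude
]


def build_struct(fields: List[Tuple[str, str]]) -> struct.Struct:
    """Turn the declarative field table into a packed, unpadded layout."""
    return struct.Struct("<" + "".join(code for _, code in fields))


def read_records(blob: bytes,
                 fields: List[Tuple[str, str]] = EVENT_FIELDS
                 ) -> Iterator[Dict[str, object]]:
    """Parse a byte string into one dictionary per record."""
    layout = build_struct(fields)
    # Verification step: reject data that is not a whole number of records.
    if len(blob) % layout.size != 0:
        raise ValueError("data length is not a whole number of records")
    names = [name for name, _ in fields]
    for values in layout.iter_unpack(blob):
        yield dict(zip(names, values))


def write_records(records: Iterable[Dict[str, object]],
                  fields: List[Tuple[str, str]] = EVENT_FIELDS) -> bytes:
    """Serialize records using the same field table (useful for tests)."""
    layout = build_struct(fields)
    names = [name for name, _ in fields]
    return b"".join(layout.pack(*(rec[n] for n in names)) for rec in records)
```

Because the layout lives in one table, the same description documents the format, drives the parser, and supports a basic length check. Changing the format means editing `EVENT_FIELDS` rather than the reading code, which is the property a generated reader library would also have.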

2. Concept of an Astroparticle Data Life Cycle

- Data availability: all participating researchers of the individual experiments or facilities need fast and simple access to the relevant data.

- Simulations and methods development: to prepare the analysis of the data, researchers need an environment with substantial computing power for producing the relevant simulations and developing new methods (e.g., deep learning).

- Analysis: fast access to the (possibly distributed) Big Data from measurements and simulations is needed.

- Education in data science: handling the data centres and processing the data both require specialized education in “Big Data science”.

- Open access: it is increasingly important to provide the scientific data not only to the internal research community but also to the interested public.

- Data archive: the valuable scientific data need to be preserved for later reuse.

2.1. Storage and Availability

2.2. Simulations

2.3. Analysis

2.4. Sharing and Open Access

2.5. Education in Data Science

2.6. Archive

2.7. KCDC Extension

3. First Results

3.1. Metadata Architecture for Cosmic Ray Experiments

3.2. Data Analysis

3.3. Educational Resources

3.4. Raw Binary Data Sharing and Reuse

4. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Meaning |
|---|---|
| KASCADE | KArlsruhe Shower Core and Array DEtector |
| TAIGA | Tunka Advanced Instrument for cosmic rays and Gamma Astronomy |
| IACT | Imaging Atmospheric Cherenkov Telescope |
| HiSCORE | High-Sensitivity Cosmic ORigin Explorer |
| KCDC | KASCADE Cosmic-ray Data Centre |
| SCC KIT | Steinbuch Centre for Computing, Karlsruhe Institute of Technology |
| SINP MSU | Skobeltsyn Institute of Nuclear Physics, Lomonosov Moscow State University |



© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Bychkov, I.; Demichev, A.; Dubenskaya, J.; Fedorov, O.; Haungs, A.; Heiss, A.; Kang, D.; Kazarina, Y.; Korosteleva, E.; Kostunin, D.; et al. Russian–German Astroparticle Data Life Cycle Initiative. Data 2018, 3, 56. https://doi.org/10.3390/data3040056


Chicago/Turabian Style: Bychkov, Igor, Andrey Demichev, Julia Dubenskaya, Oleg Fedorov, Andreas Haungs, Andreas Heiss, Donghwa Kang, Yulia Kazarina, Elena Korosteleva, Dmitriy Kostunin, et al. 2018. "Russian–German Astroparticle Data Life Cycle Initiative" Data 3, no. 4: 56. https://doi.org/10.3390/data3040056

APA Style: Bychkov, I., Demichev, A., Dubenskaya, J., Fedorov, O., Haungs, A., Heiss, A., Kang, D., Kazarina, Y., Korosteleva, E., Kostunin, D., Kryukov, A., Mikhailov, A., Nguyen, M.-D., Polyakov, S., Postnikov, E., Shigarov, A., Shipilov, D., Streit, A., Tokareva, V., ... Zhurov, D. (2018). Russian–German Astroparticle Data Life Cycle Initiative. Data, 3(4), 56. https://doi.org/10.3390/data3040056