Emerging Technologies Based on Artificial Intelligence to Assess the Quality and Consumer Preference of Beverages

Abstract

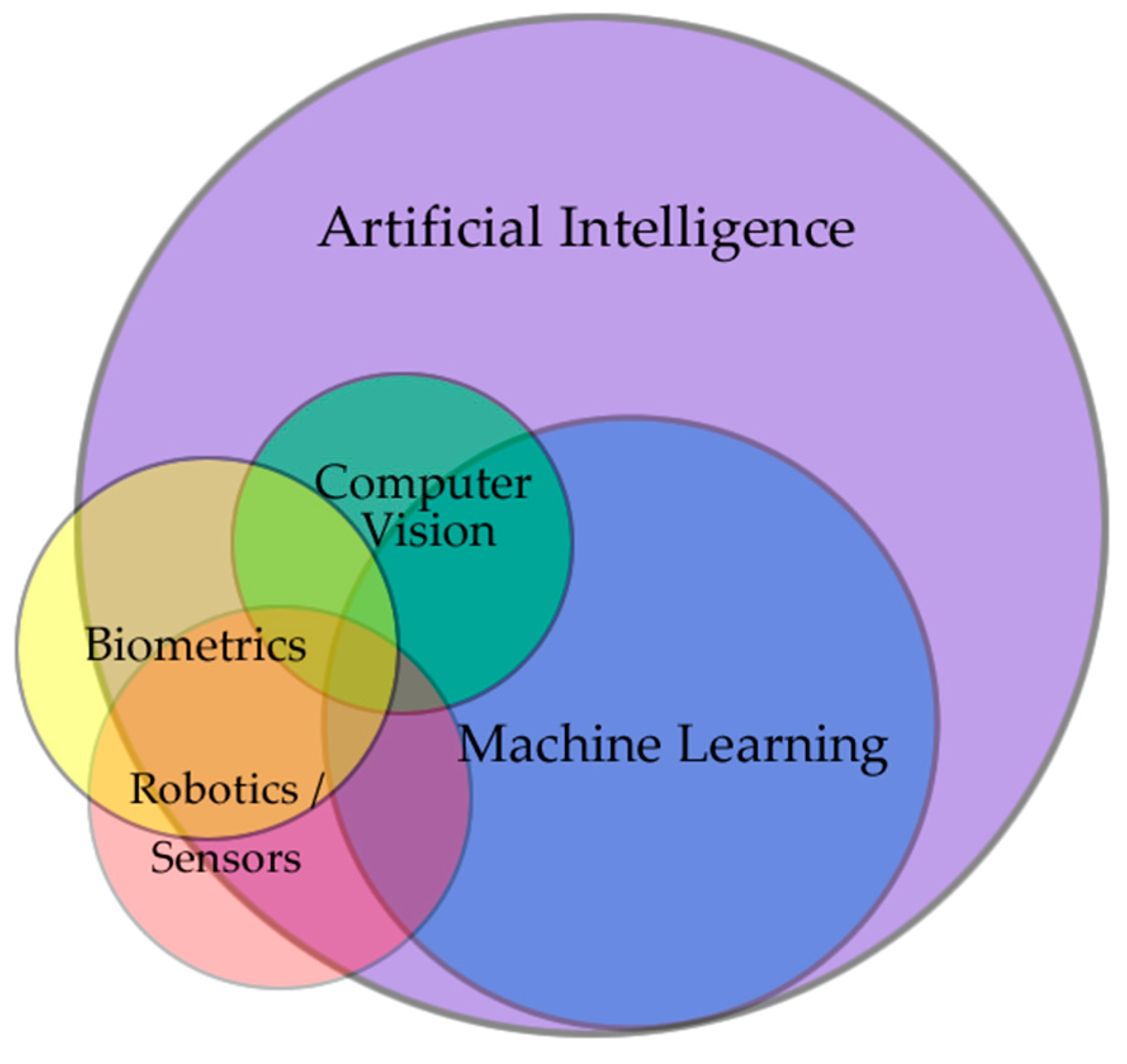

1. Introduction

2. Robotics

2.1. Robotics in Alcoholic Beverages

2.2. Robotics in Hot Beverages

2.3. Robotics in Non-Alcoholic Beverages

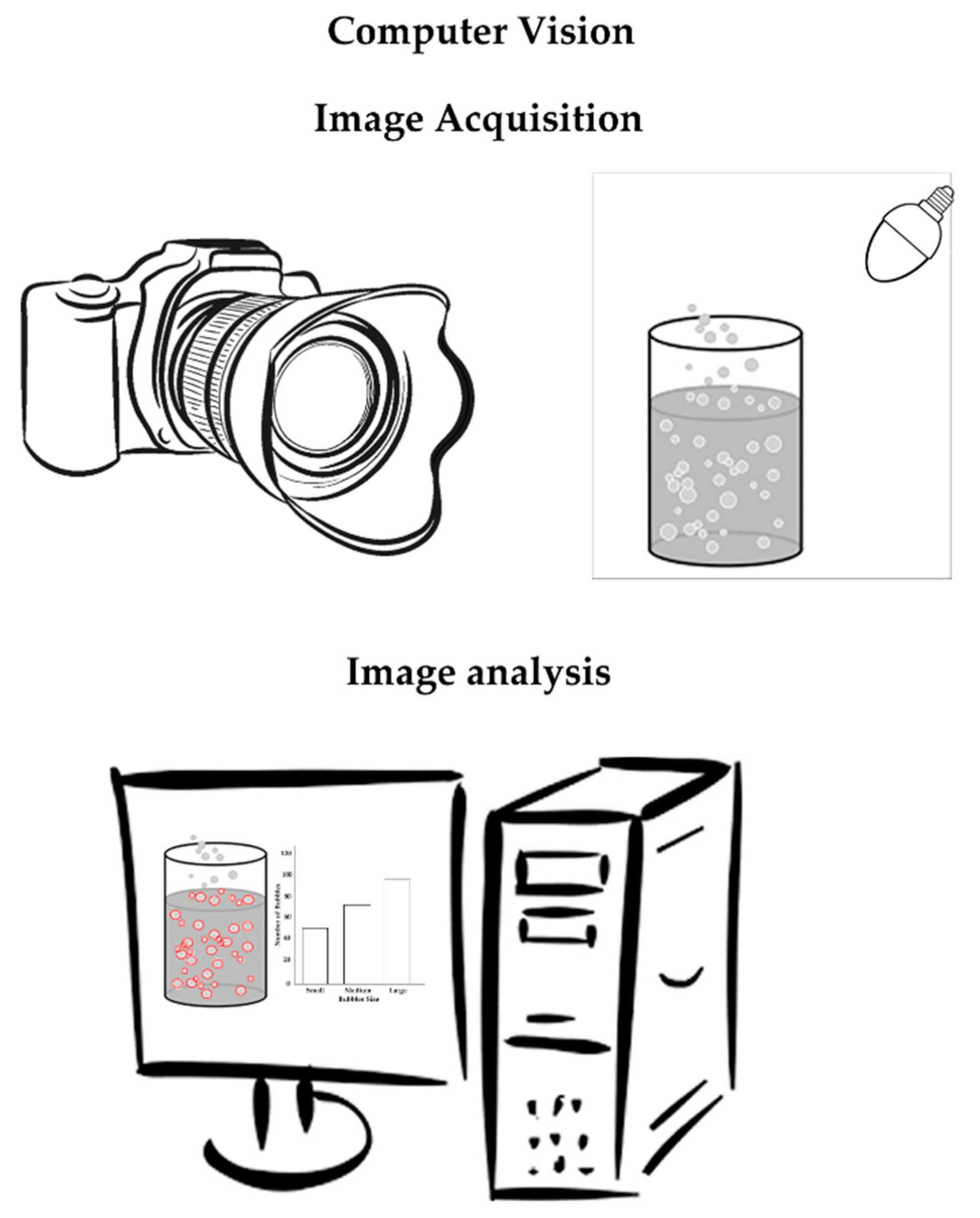

3. Computer Vision Techniques

3.1. Computer Vision in Alcoholic Beverages

3.2. Computer Vision in Hot Beverages

3.3. Computer Vision in Non-Alcoholic Beverages

4. Machine Learning

4.1. Machine Learning in Alcoholic Beverages

4.2. Machine Learning in Hot Beverages

4.3. Machine Learning in Non-Alcoholic Beverages

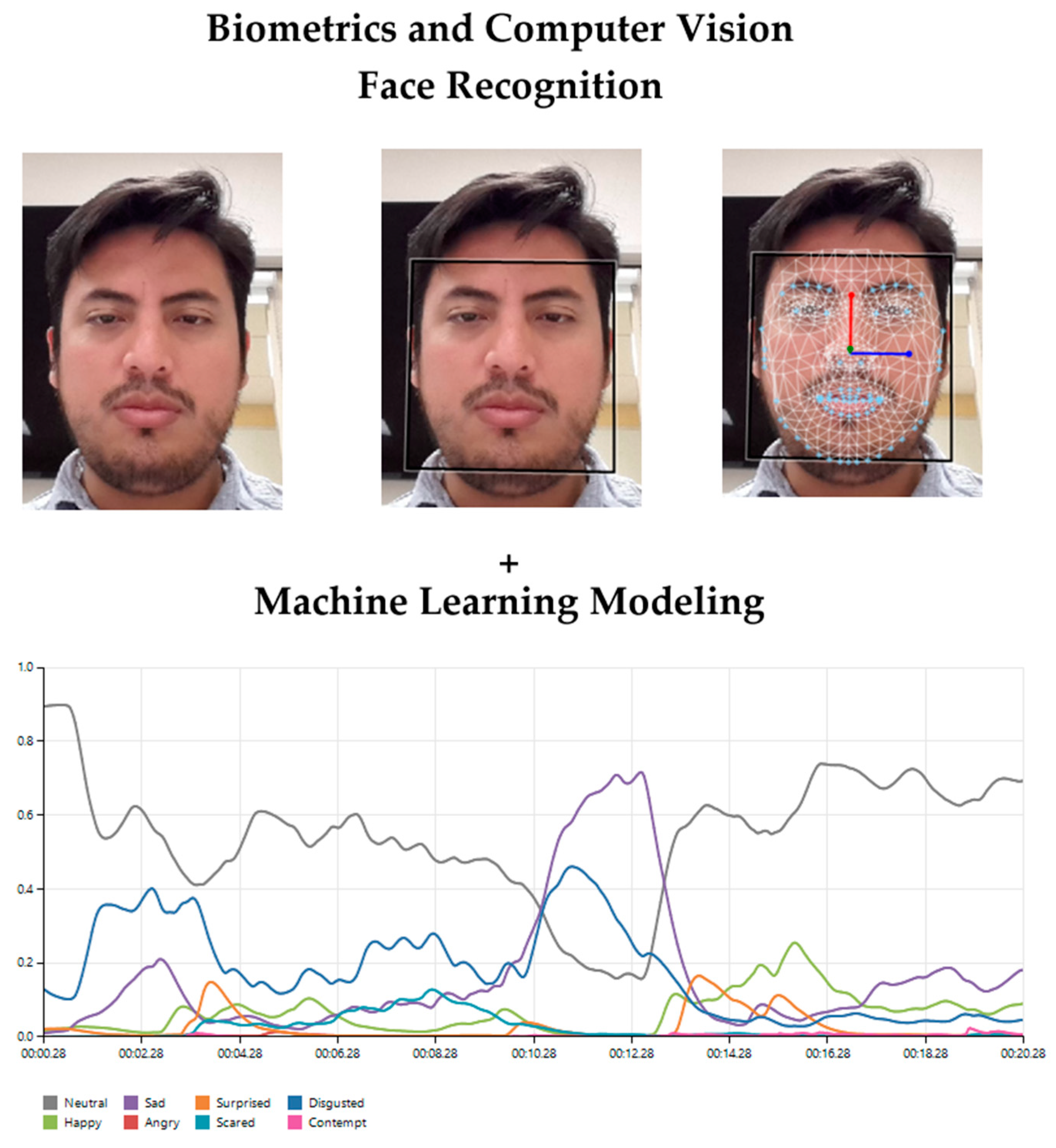

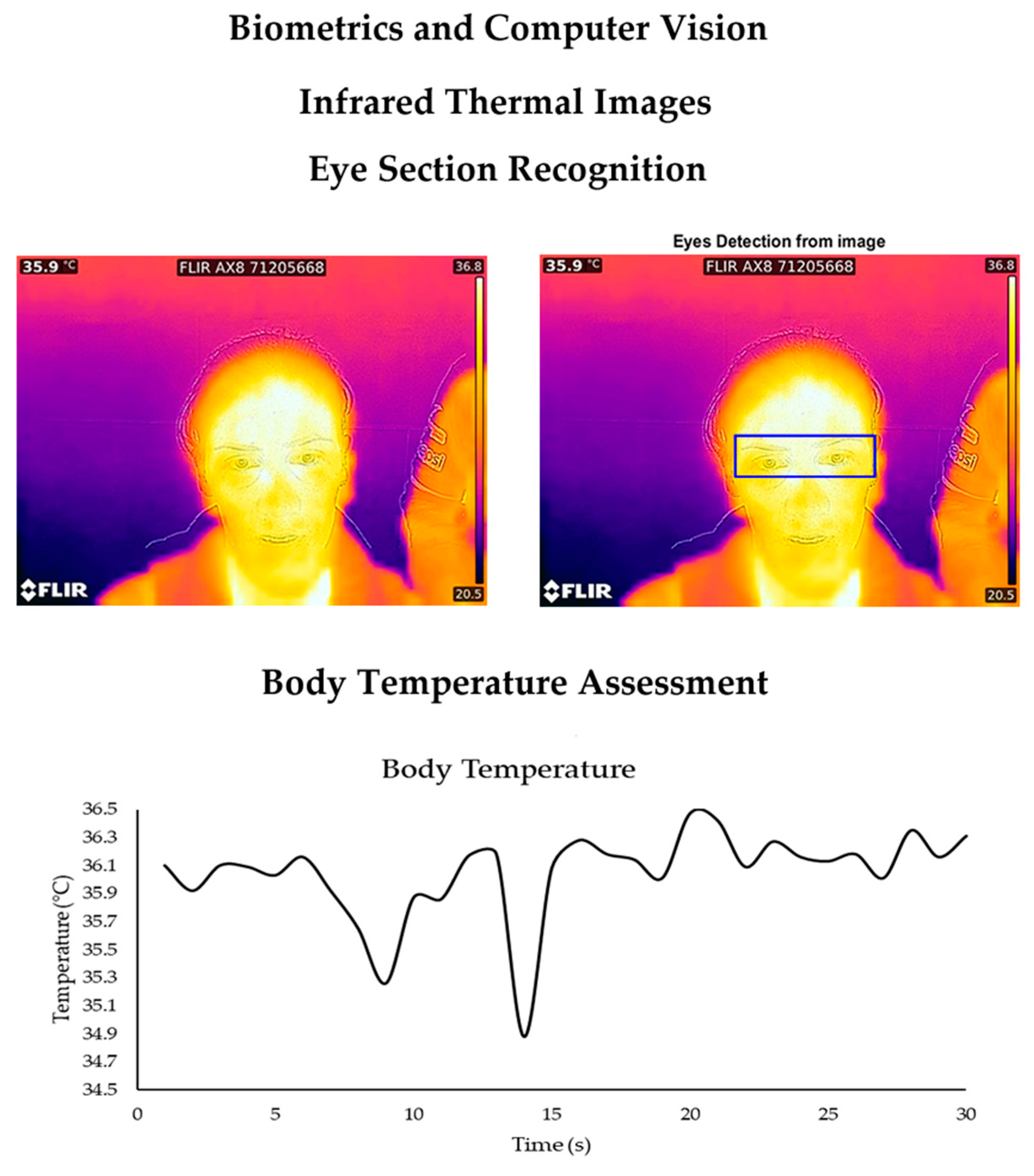

5. Biometrics

5.1. Biometrics in Alcoholic Beverages

5.2. Biometrics in Hot Beverages

5.3. Biometrics in Non-Alcoholic Beverages

6. Artificial Intelligence

7. Key Findings and Future Trends

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Glossary of Quality Indicators

| Quality indicator | Definition |
|---|---|
| Aroma | Volatile aromatic compounds detected via the retronasal olfactory system |
| Bubble growth | Rate at which a bubble increases in size |
| Bubble haze | Large number of micro-bubbles that form when the beer is poured and circulate through the liquid before reaching the surface |
| Bubble size distribution | Number of small, medium and large bubbles |
| CIELab | Color parameters in which L* = lightness, a* = green (negative) to red (positive), and b* = blue (negative) to yellow (positive) |
| Clarity | Level of transparency of the liquid due to the absence of suspended particles |
| Collar | Array of bubbles that remains at the edge of the glass |
| Color | Visual attribute produced when light that strikes an object is reflected to the eye |
| Density | Mass per unit volume of a liquid |
| Flavor | Perception of basic tastes, aromas and trigeminal sensations during mastication |
| Foamability | Capacity to form foam |
| Foam drainage | Excess liquid that drains from wet foam, leaving a drier foam |
| Foam stability | Lifetime of the foam |
| Foam thickness | Viscosity of the foam |
| Foam volume | Amount of foam, in mL |
| Foam velocity | Rate at which the foam collapses |
| Off-odors | Odors that are not characteristic of the product and are considered faults |
| pH | Measure of acidity or alkalinity based on the concentration of hydrogen ions |
| RGB | Color expressed on red, green and blue scales |
| Taste | Perception through the receptor cells found in the papillae |
| Texture | Sensory characteristic of the solid or rheological state of a product |
| Viscosity | Consistency of a liquid, which may vary from thin to thick |
| Water hardness | Concentration of dissolved minerals, mainly calcium and magnesium, in water |
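
The RGB and CIELab entries in the glossary are related by a standard conversion: camera-based systems usually record color as RGB, while beverage color is often reported as CIELab. The following is a minimal sketch of that conversion for one pixel or averaged region, using the published sRGB and CIE formulas with a D65 white point. It assumes the camera output is true sRGB under D65 lighting, which in practice normally requires calibration against a color reference chart; the function name `srgb_to_lab` is illustrative and not taken from any of the studies reviewed here.

```python
def srgb_to_lab(r, g, b):
    """Convert an 8-bit sRGB color (0-255 per channel) to CIELab (L*, a*, b*), D65 white."""

    def to_linear(c):
        # Undo the sRGB gamma encoding
        c = c / 255.0
        return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4

    rl, gl, bl = to_linear(r), to_linear(g), to_linear(b)

    # Linear sRGB -> CIE XYZ (D65)
    x = 0.4124 * rl + 0.3576 * gl + 0.1805 * bl
    y = 0.2126 * rl + 0.7152 * gl + 0.0722 * bl
    z = 0.0193 * rl + 0.1192 * gl + 0.9505 * bl

    # D65 reference white, with Y normalized to 1
    xn, yn, zn = 0.95047, 1.0, 1.08883

    def f(t):
        # CIE nonlinearity, with its linear segment near zero
        delta = 6 / 29
        return t ** (1 / 3) if t > delta ** 3 else t / (3 * delta ** 2) + 4 / 29

    fx, fy, fz = f(x / xn), f(y / yn), f(z / zn)
    lightness = 116 * fy - 16
    a_star = 500 * (fx - fy)  # positive = red, negative = green
    b_star = 200 * (fy - fz)  # positive = yellow, negative = blue
    return lightness, a_star, b_star


if __name__ == "__main__":
    for name, rgb in [("white", (255, 255, 255)), ("black", (0, 0, 0)), ("red", (255, 0, 0))]:
        L, a, b = srgb_to_lab(*rgb)
        print(f"{name}: L*={L:.1f} a*={a:.1f} b*={b:.1f}")
```

White maps to L* close to 100 with a* and b* close to 0, black to L* of 0, and pure red to a strongly positive a*, matching the axis directions in the glossary.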
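
The bubble size distribution entry counts bubbles in small, medium and large classes. In image-based methods, each segmented bubble has an area in pixels that can be converted to an equivalent circular diameter and then binned. The sketch below illustrates that step only; it assumes a known millimetre-per-pixel scale, and the 0.5 mm and 1.0 mm class boundaries (`SMALL_MAX_MM`, `MEDIUM_MAX_MM`) are hypothetical values, since each published method defines its own classes.

```python
import math
from collections import Counter

# Hypothetical class boundaries (mm); replace with those of the method being reproduced.
SMALL_MAX_MM = 0.5
MEDIUM_MAX_MM = 1.0


def equivalent_diameter_mm(area_px, mm_per_px):
    """Diameter (mm) of a circle with the same area as the segmented bubble."""
    area_mm2 = area_px * mm_per_px ** 2
    return 2.0 * math.sqrt(area_mm2 / math.pi)


def size_class(diameter_mm):
    """Assign a bubble to the small, medium or large class."""
    if diameter_mm < SMALL_MAX_MM:
        return "small"
    if diameter_mm < MEDIUM_MAX_MM:
        return "medium"
    return "large"


def bubble_size_distribution(diameters_mm):
    """Count bubbles per size class, reporting zero for empty classes."""
    counts = Counter(size_class(d) for d in diameters_mm)
    return {cls: counts[cls] for cls in ("small", "medium", "large")}


if __name__ == "__main__":
    print(bubble_size_distribution([0.2, 0.4, 0.7, 1.5]))
    # {'small': 2, 'medium': 1, 'large': 1}
```

Keeping the thresholds as named constants makes it easy to match the class definitions of a particular study without touching the counting logic.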
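
Foam velocity (rate of collapse) and foam stability (lifetime) can both be derived from a time series of foam height, for example measured frame by frame from a video of the poured glass. The sketch below estimates the collapse rate as the negative slope of an ordinary least-squares line of height against time, and the lifetime as the first time after the peak at which height falls to a chosen fraction of that peak. Both definitions are simplifying assumptions rather than the procedure of any specific study: foam decay is often modelled as exponential rather than linear, and the default 10% threshold is hypothetical.

```python
def collapse_rate(times_s, heights_mm):
    """Collapse rate in mm/s: negative slope of a least-squares line of height on time."""
    n = len(times_s)
    if n < 2 or n != len(heights_mm):
        raise ValueError("need at least two paired (time, height) observations")
    mean_t = sum(times_s) / n
    mean_h = sum(heights_mm) / n
    sxx = sum((t - mean_t) ** 2 for t in times_s)
    if sxx == 0:
        raise ValueError("time values must not all be equal")
    sxy = sum((t - mean_t) * (h - mean_h) for t, h in zip(times_s, heights_mm))
    return -sxy / sxx


def foam_lifetime(times_s, heights_mm, fraction=0.1):
    """First time after the peak at which height is at or below `fraction` of the peak.

    Returns None if the foam never decays that far within the recording.
    """
    peak_idx = max(range(len(heights_mm)), key=lambda i: heights_mm[i])
    threshold = fraction * heights_mm[peak_idx]
    # Only search after the peak, so low readings during pouring are ignored
    for t, h in zip(times_s[peak_idx:], heights_mm[peak_idx:]):
        if h <= threshold:
            return t
    return None


if __name__ == "__main__":
    times = [0, 10, 20, 30, 40]
    heights = [40, 30, 20, 10, 0]
    print(collapse_rate(times, heights))  # 1.0
    print(foam_lifetime(times, heights))  # 40
```

With heights falling linearly from 40 mm to 0 mm over 40 s, the sketch reports a collapse rate of 1.0 mm/s and a lifetime of 40 s.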

References

- Pang, X.-N.; Li, Z.-J.; Chen, J.-Y.; Gao, L.-J.; Han, B.-Z. A Comprehensive Review of Spirit Drink Safety Standards and Regulations from an International Perspective. J. Food Prot. 2017, 80, 431–442. [Google Scholar] [CrossRef] [PubMed]

- Pushpangadan, P.; Dan, V.M.; Ijinu, T.; George, V. Food, Nutrition and Beverage. Indian J. Tradit. Knowl. 2012, 11, 26–34. [Google Scholar]

- Mise, J.K.; Nair, C.; Odera, O.; Ogutu, M. Factors influencing brand loyalty of soft drink consumers in Kenya and India. Int. J. Bus. Manag. Econ. Res. 2013, 4, 706–713. [Google Scholar]

- Schwarz, B.; Bischof, H.-P.; Kunze, M. Coffee, tea, and lifestyle. Prev. Med. 1994, 23, 377–384. [Google Scholar] [CrossRef] [PubMed]

- Plutowska, B.; Wardencki, W. Application of gas chromatography–olfactometry (GC–O) in analysis and quality assessment of alcoholic beverages–A review. Food Chem. 2008, 107, 449–463. [Google Scholar] [CrossRef]

- Viejo, C.G.; Fuentes, S.; Torrico, D.D.; Godbole, A.; Dunshea, F.R. Chemical characterization of aromas in beer and their effect on consumers liking. Food Chem. 2019, 293, 479–485. [Google Scholar] [CrossRef]

- Viejo, C.G.; Fuentes, S.; Li, G.; Collmann, R.; Condé, B.; Torrico, D. Development of a robotic pourer constructed with ubiquitous materials, open hardware and sensors to assess beer foam quality using computer vision and pattern recognition algorithms: RoboBEER. Food Res. Int. 2016, 89, 504–513. [Google Scholar] [CrossRef]

- Bamforth, C.; Russell, I.; Stewart, G. Beer: A Quality Perspective; Academic press: Cambridge, MA, USA, 2011. [Google Scholar]

- Gonzalez Viejo, C.; Fuentes, S.; Torrico, D.; Howell, K.; Dunshea, F.R. Assessment of beer quality based on foamability and chemical composition using computer vision algorithms, near infrared spectroscopy and artificial neural networks modelling techniques. J. Sci. Food Agric. 2018, 98, 618–627. [Google Scholar] [CrossRef]

- Belitz, H.-D.; Grosch, W.; Schieberle, P. Coffee, tea, cocoa. In Food Chemistry; Springer: Berlin/Heidelberg, Germany, 2009; pp. 938–970. [Google Scholar]

- Cullen, P.; Cullen, P.J.; Tiwari, B.K.; Valdramidis, V. Novel Thermal and Non-Thermal Technologies for Fluid Foods; Academic Press: San Diego, CA, USA, 2011. [Google Scholar]

- Wang, L.; Sun, D.-W.; Pu, H.; Cheng, J.-H. Quality analysis, classification, and authentication of liquid foods by near-infrared spectroscopy: A review of recent research developments. Crit. Rev. Food Sci. Nutr. 2017, 57, 1524–1538. [Google Scholar] [CrossRef]

- Piper, D.; Scharf, A. Descriptive Analysis: State of the Art and Recent Developments; ForschungsForum eV: Göttingen, Germany, 2004. [Google Scholar]

- Gonzalez Viejo, C.; Fuentes, S.; Torrico, D.D.; Howell, K.; Dunshea, F.R. Assessment of Beer Quality Based on a Robotic Pourer, Computer Vision, and Machine Learning Algorithms Using Commercial Beers. J. Food Sci. 2018, 83, 1381–1388. [Google Scholar] [CrossRef]

- Kemp, S.; Hollowood, T.; Hort, J. Sensory Evaluation: A Practical Handbook; Wiley: Oxford, UK, 2011. [Google Scholar]

- Stone, H.; Bleibaum, R.; Thomas, H.A. Sensory Evaluation Practices; Elsevier: Amsterdam, The Netherlands; Academic Press: Cambridge, MA, USA, 2012. [Google Scholar]

- Gonzalez Viejo, C.; Fuentes, S.; Howell, K.; Torrico, D.; Dunshea, F.R. Robotics and computer vision techniques combined with non-invasive consumer biometrics to assess quality traits from beer foamability using machine learning: A potential for artificial intelligence applications. Food Control 2018, 92, 72–79. [Google Scholar] [CrossRef]

- Gonzalez Viejo, C.; Fuentes, S.; Howell, K.; Torrico, D.D.; Dunshea, F.R. Integration of non-invasive biometrics with sensory analysis techniques to assess acceptability of beer by consumers. Phys. Behav. 2019, 200, 139–147. [Google Scholar] [CrossRef] [PubMed]

- Gill, G.S.; Kumar, A.; Agarwal, R. Monitoring and grading of tea by computer vision–A review. J. Food Eng. 2011, 106, 13–19. [Google Scholar] [CrossRef]

- Ceccarelli, M. Fundamentals of Mechanics of Robotic Manipulation; Springer: Dordrecht, The Netherlands, 2004. [Google Scholar]

- Dell Technologies. The Difference Between AI, Machine Learning, and Robotics. Available online: https://www.delltechnologies.com/en-us/perspectives/the-difference-between-ai-machine-learning-and-robotics/ (accessed on 15 August 2019).

- Nayik, G.A.; Muzaffar, K.; Gull, A. Robotics and food technology: A mini review. J. Nutr. Food Sci. 2015, 5, 1–11. [Google Scholar]

- Iqbal, J.; Khan, Z.H.; Khalid, A. Prospects of robotics in food industry. Food Sci. Technol. 2017, 37, 159–165. [Google Scholar] [CrossRef]

- Caldwell, D.G. Robotics and Automation in the Food Industry: Current and Future Technologies; Elsevier: Amsterdam, The Netherlands, 2012. [Google Scholar]

- MAKR SHAKR Srl. MAKR SHAKR. Available online: https://www.makrshakr.com/ (accessed on 15 August 2019).

- Wilson, A. Vision and Robots Team up for Wine Production. 2016. Available online: https://www.vision-systems.com/non-factory/article/16736826/vision-and-robots-team-up-for-wine-production/ (accessed on 17 August 2019).

- Condé, B.C.; Fuentes, S.; Caron, M.; Xiao, D.; Collmann, R.; Howell, K.S. Development of a robotic and computer vision method to assess foam quality in sparkling wines. Food Control 2017, 71, 383–392. [Google Scholar] [CrossRef]

- Peano, D.; Chiaberge, M. Innovative Beer Dispenser Based on Collaborative Robotics. Ph.D. Thesis, Politecnico di Torino, Torino, Italy, 2018. [Google Scholar]

- Yasui, K.; Yokoi, S.; Shigyo, T.; Tamaki, T.; Shinotsuka, K. A customer-oriented approach to the development of a visual and statistical foam analysis. J. Am. Soc. Brew. Chem. 1998, 56, 152–158. [Google Scholar] [CrossRef]

- Gonzalez Viejo, C.; Torrico, D.D.; Dunshea, F.R.; Fuentes, S. Development of Artificial Neural Network Models to Assess Beer Acceptability Based on Sensory Properties Using a Robotic Pourer: A Comparative Model Approach to Achieve an Artificial Intelligence System. Beverages 2019, 5, 33. [Google Scholar] [CrossRef]

- Marr, B. How Artificial Intelligence Is Used to Make Beer. Forbes. 2019. Available online: https://www.forbes.com/sites/bernardmarr/2019/02/01/how-artificial-intelligence-is-used-to-make-beer/#35b077d070cf (accessed on 1 February 2019).

- Hutson, M. Beer-slinging robot predicts whether you’ll give that brew a thumbs up—or down. Science 2018. [Google Scholar] [CrossRef]

- Kayaalp, K.; Ceylan, O.; Süzen, A.A.; Yildiz, Z. Internet Controlled Smart Tea Machine Design with Arduino and Tea Consumption Analysis. Uluborlu Mesl. Bilimler Derg. 2018, 1, 29–37. [Google Scholar]

- Buhr, S. Meet Teforia, A Tea Brewing Robot For The Home. Techcrunch. 30 October 2015. Available online: https://techcrunch.com/2015/10/29/meet-teforia-a-tea-brewing-robot-for-the-home/ (accessed on 1 February 2019).

- Buhr, S. Taste Testing With teaBOT, The Robot That Brews Up Loose Leaf Tea In Under 30 Seconds. Techcrunch. 24 July 2015. Available online: https://techcrunch.com/2015/07/23/taste-testing-with-teabot-the-robot-that-brews-up-loose-leaf-tea-in-under-30-seconds/ (accessed on 18 June 2019).

- Coward, C. Mugsy, the Raspberry Pi-Powered Coffee Maker, Is Nearing Production. Medium Corporation. 2019. Available online: https://www.hackster.io/news/mugsy-the-raspberry-pi-based-robotic-coffee-maker-is-now-on-kickstarter-8a24f38ffbe6 (accessed on 15 July 2019).

- Budds, D. Can a $25,000 robot make better coffee than a barista? Curbed; Vox Media, Inc., 23 February 2018. Available online: https://www.curbed.com/2018/2/23/17041842/cafe-x-automated-coffee-robot-ammunition-design (accessed on 15 July 2019).

- Do, C.; Burgard, W. Accurate pouring with an autonomous robot using an RGB-D camera. In Proceedings of the International Conference on Intelligent Autonomous Systems, Singapore, 1–3 March 2018; pp. 210–221. [Google Scholar]

- Morita, T.; Kashiwagi, N.; Yorozu, A.; Walch, M.; Suzuki, H.; Karagiannis, D.; Yamaguchi, T. Practice of multi-robot teahouse based on printeps and evaluation of service quality. In Proceedings of the 2018 IEEE 42nd Annual Computer Software and Applications Conference (COMPSAC), Tokyo, Japan, 23–27 July 2018; pp. 147–152. [Google Scholar]

- Cai, D.C.; Chen, J.F.; Chang, Y.W. Design and Development of Beverage Maker. Appl. Mech. Mater. 2014, 590, 581–585. [Google Scholar] [CrossRef]

- Albrecht, C. Alberts Brings Robot Smoothie Stations to Europe. The Spoon. 18 April 2018. Available online: https://thespoon.tech/alberts-brings-robot-smoothie-stations-to-europe/ (accessed on 15 July 2019).

- Awwad, S.; Tarvade, S.; Piccardi, M.; Gattas, D.J. The use of privacy-protected computer vision to measure the quality of healthcare worker hand hygiene. Int. J. Qual. Health Care 2018, 31, 36–42. [Google Scholar] [CrossRef] [PubMed]

- Wu, D.; Sun, D.-W. Colour measurements by computer vision for food quality control–A review. Trends Food Sci. Technol. 2013, 29, 5–20. [Google Scholar] [CrossRef]

- Lukinac, J.; Mastanjević, K.; Mastanjević, K.; Nakov, G.; Jukić, M. Computer Vision Method in Beer Quality Evaluation—A Review. Beverages 2019, 5, 38. [Google Scholar] [CrossRef]

- Solem, J.E. Programming Computer Vision with Python: Tools and Algorithms for Analyzing Images; O’Reilly Media, Inc.: Sebastopol, CA, USA, 2012. [Google Scholar]

- Marques, O. Practical Image and Video Processing Using MATLAB; John Wiley & Sons: Hoboken, NJ, USA, 2011. [Google Scholar]

- Sun, D.-W. Inspecting pizza topping percentage and distribution by a computer vision method. J. Food Eng. 2000, 44, 245–249. [Google Scholar] [CrossRef]

- Sarangi, P.; Mishra, B.; Majhi, B.; Dehuri, S. Gray-level image enhancement using differential evolution optimization algorithm. In Proceedings of the 2014 International Conference on Signal Processing and Integrated Networks (SPIN), Noida, India, 20–21 February 2014; pp. 95–100. [Google Scholar]

- Vala, H.J.; Baxi, A. A review on Otsu image segmentation algorithm. Intern. J. Adv. Res. Comput. Eng. Technol. (IJARCET) 2013, 2, 387–389. [Google Scholar]

- Fuentes, S.; Hernández-Montes, E.; Escalona, J.; Bota, J.; Viejo, C.G.; Poblete-Echeverría, C.; Tongson, E.; Medrano, H. Automated grapevine cultivar classification based on machine learning using leaf morpho-colorimetry, fractal dimension and near-infrared spectroscopy parameters. Comput. Electron. Agric. 2018, 151, 311–318. [Google Scholar] [CrossRef]

- Szeliski, R. Computer Vision: Algorithms and Applications; Springer Science & Business Media: London, UK, 2010. [Google Scholar]

- Sun, D.-W. Computer Vision Technology for Food Quality Evaluation; Academic Press: Cambridge, MA, USA, 2016. [Google Scholar]

- Rodrigues, B.U.; da Costa, R.M.; Salvini, R.L.; da Silva Soares, A.; da Silva, F.A.; Caliari, M.; Cardoso, K.C.R.; Ribeiro, T.I.M. Cachaça classification using chemical features and computer vision. Procedia Comput. Sci. 2014, 29, 2024–2033. [Google Scholar] [CrossRef]

- Pessoa, K.D.; Suarez, W.T.; dos Reis, M.F.; Franco, M.d.O.K.; Moreira, R.P.L.; dos Santos, V.B. A digital image method of spot tests for determination of copper in sugar cane spirits. Spectrochim. Acta Part A: Mol. Biomol. Spectrosc. 2017, 185, 310–316. [Google Scholar] [CrossRef]

- Wang, Y.; Zhou, B.; Zhang, H.; Ge, J. A vision-based intelligent inspector for wine production. Intern. J. Mach. Learn. Cybern. 2012, 3, 193–203. [Google Scholar] [CrossRef]

- Martin, M.L.G.-M.; Ji, W.; Luo, R.; Hutchings, J.; Heredia, F.J. Measuring colour appearance of red wines. Food Qual. Preference 2007, 18, 862–871. [Google Scholar] [CrossRef]

- Pérez-Bernal, J.L.; Villar-Navarro, M.; Morales, M.L.; Ubeda, C.; Callejón, R.M. The smartphone as an economical and reliable tool for monitoring the browning process in sparkling wine. Comput. Electron. Agric. 2017, 141, 248–254. [Google Scholar] [CrossRef]

- Arakawa, T.; Iitani, K.; Wang, X.; Kajiro, T.; Toma, K.; Yano, K.; Mitsubayashi, K. A sniffer-camera for imaging of ethanol vaporization from wine: The effect of wine glass shape. Analyst 2015, 140, 2881–2886. [Google Scholar] [CrossRef] [PubMed]

- Cilindre, C.; Liger-Belair, G.; Villaume, S.; Jeandet, P.; Marchal, R. Foaming properties of various Champagne wines depending on several parameters: Grape variety, aging, protein and CO2 content. Anal. Chim. Acta 2010, 660, 164–170. [Google Scholar] [CrossRef] [PubMed]

- Crumpton, M.; Rice, C.J.; Atkinson, A.; Taylor, G.; Marangon, M. The effect of sucrose addition at dosage stage on the foam attributes of a bottle-fermented English sparkling wine. J. Sci. Food Agric. 2018, 98, 1171–1178. [Google Scholar] [CrossRef]

- Silva, T.; Godinho, M.S.; de Oliveira, A.E. Identification of pale lager beers via image analysis. Lat. Am. Appl. Res. 2011, 41, 141–145. [Google Scholar]

- Fengxia, S.; Yuwen, C.; Zhanming, Z.; Yifeng, Y. Determination of beer color using image analysis. J. Am. Soc. Brew. Chem. 2004, 62, 163–167. [Google Scholar] [CrossRef]

- Hepworth, N.; Varley, J.; Hind, A. Characterizing gas bubble dispersions in beer. Food Bioprod. Process. 2001, 79, 13–20. [Google Scholar] [CrossRef]

- Hepworth, N.; Hammond, J.; Varley, J. Novel application of computer vision to determine bubble size distributions in beer. J. Food Eng. 2004, 61, 119–124. [Google Scholar] [CrossRef]

- Cimini, A.; Pallottino, F.; Menesatti, P.; Moresi, M. A low-cost image analysis system to upgrade the rudin beer foam head retention meter. Food Bioprocess Technol. 2016, 9, 1587–1597. [Google Scholar] [CrossRef]

- Dong, C.-w.; Zhu, H.-k.; Zhao, J.-w.; Jiang, Y.-w.; Yuan, H.-b.; Chen, Q.-s. Sensory quality evaluation for appearance of needle-shaped green tea based on computer vision and nonlinear tools. J. Zhejiang Univ. Sci. B 2017, 18, 544–548. [Google Scholar] [CrossRef] [PubMed]

- Singh, G.; Kamal, N. Machine vision system for tea quality determination-Tea Quality Index (TQI). IOSR J. Eng. 2013, 3, 46–50. [Google Scholar] [CrossRef]

- Kumar, A.; Singh, H.; Sharma, S.; Kumar, A. Color Analysis of Black Tea Liquor using Image Processing Techniques. Int. J. Electron. Commun. Technol. 2011, 2, 292–296. [Google Scholar]

- Akuli, A.; Pal, A.; Bej, G.; Dey, T.; Ghosh, A.; Tudu, B.; Bhattacharyya, N.; Bandyopadhyay, R. A Machine Vision System for Estimation of Theaflavins and Thearubigins in Orthodox Black Tea. Int. J. Smart Sens. Intell. Syst. 2016, 9, 709–731. [Google Scholar] [CrossRef]

- Oblitas Cruz, J.; Castro Silupu, W. Computer vision system for the optimization of the color generated by the coffee roasting process according to time, temperature and mesh size. Ingeniería y Universidad 2014, 18, 355–368. [Google Scholar] [CrossRef]

- Várvölgyi, E.; Werum, T.; Dénes, L.; Soós, J.; Szabó, G.; Felföldi, J.; Esper, G.; Kovács, Z. Vision system and electronic tongue application to detect coffee adulteration with barley. Acta Aliment. 2014, 43, 197–205. [Google Scholar] [CrossRef]

- De Oliveira, E.M.; Pereira, R.G.F.A.; Leme, D.S.; Barbosa, B.H.G.; Rodarte, M.P. A computer vision system for coffee beans classification based on computational intelligence techniques. J. Food Eng. 2016, 171, 22–27. [Google Scholar] [CrossRef]

- Chu, B.; Yu, K.; Zhao, Y.; He, Y. Development of Noninvasive Classification Methods for Different Roasting Degrees of Coffee Beans Using Hyperspectral Imaging. Sensors 2018, 18, 1259. [Google Scholar] [CrossRef]

- Piazza, L.; Bulbarello, A.; Gigli, J. Rheological interfacial properties of espresso coffee foaming fractions. In Proceedings of the 13th World Congress of Food Science & Technology 2006, Nantes, France, 17–21 September 2006; p. 873. [Google Scholar]

- Buratti, S.; Benedetti, S.; Giovanelli, G. Application of electronic senses to characterize espresso coffees brewed with different thermal profiles. Eur. Food Res. Technol. 2017, 243, 511–520. [Google Scholar] [CrossRef]

- Damasceno, D.; Toledo, T.G.; Soares, A.D.S.; De Oliveira, S.B.; De Oliveira, A.E. CompVis: A novel method for drinking water alkalinity and total hardness analyses. Anal. Methods 2016, 8, 7832–7836. [Google Scholar] [CrossRef]

- Barker, G.; Jefferson, B.; Judd, S.; Judd, S. The control of bubble size in carbonated beverages. Chem. Eng. Sci. 2002, 57, 565–573. [Google Scholar] [CrossRef]

- Viejo, C.G.; Torrico, D.D.; Dunshea, F.R.; Fuentes, S. The Effect of Sonication on Bubble Size and Sensory Perception of Carbonated Water to Improve Quality and Consumer Acceptability. Beverages 2019, 5, 58. [Google Scholar] [CrossRef]

- Aliabadi, R.S.; Mahmoodi, N.O. Synthesis and characterization of polypyrrole, polyaniline nanoparticles and their nanocomposite for removal of azo dyes; sunset yellow and Congo red. J. Clean. Prod. 2018, 179, 235–245. [Google Scholar] [CrossRef]

- Botelho, B.G.; De Assis, L.P.; Sena, M.M. Development and analytical validation of a simple multivariate calibration method using digital scanner images for sunset yellow determination in soft beverages. Food Chem. 2014, 159, 175–180. [Google Scholar] [CrossRef] [PubMed]

- Sorouraddin, M.-H.; Saadati, M.; Mirabi, F. Simultaneous determination of some common food dyes in commercial products by digital image analysis. J. Food Drug Anal. 2015, 23, 447–452. [Google Scholar] [CrossRef]

- Hosseininia, S.A.R.; Kamani, M.H.; Rani, S. Quantitative determination of sunset yellow concentration in soft drinks via digital image processing. J. Food Meas. Charact. 2017, 11, 1065–1070. [Google Scholar] [CrossRef]

- Heredia, F.; González-Miret, M.; Álvarez, C.; Ramírez, A. DigiFood® (Análisis de imagen). Registro No. SE 1298. 2006. Available online: http://www.https.com//digifood.com/ (accessed on 1 November 2019).

- Fernández-Vázquez, R.; Stinco, C.M.; Hernanz, D.; Heredia, F.J.; Vicario, I.M. Colour training and colour differences thresholds in orange juice. Food Qual. Preference 2013, 30, 320–327. [Google Scholar] [CrossRef]

- Fernandez-Vazquez, R.; Stinco, C.M.; Melendez-Martinez, A.J.; Heredia, F.J.; Vicario, I.M. Visual and instrumental evaluation of orange juice color: A consumers’preference study. J. Sens. Stud. 2011, 26, 436–444. [Google Scholar] [CrossRef]

- Vélez-Ruiz, J.; Barbosa-Cánovas, G. Flow and structural characteristics of concentrated milk. J. Texture Stud. 2000, 31, 315–333. [Google Scholar] [CrossRef]

- dos Santos, P.M.; Pereira-Filho, E.R. Digital image analysis–an alternative tool for monitoring milk authenticity. Anal. Methods 2013, 5, 3669–3674. [Google Scholar] [CrossRef]

- Goodfellow, I.; Bengio, Y.; Courville, A. Deep Learning; MIT press: Cambridge, MA, USA, 2016. [Google Scholar]

- Mathworks Inc. Mastering Machine Learning: A Step-by-Step Guide with MATLAB; Mathworks Inc.: Sherborn, MA, USA, 2018. [Google Scholar]

- Romero, M.; Luo, Y.; Su, B.; Fuentes, S. Vineyard water status estimation using multispectral imagery from an UAV platform and machine learning algorithms for irrigation scheduling management. Comput. Electron. Agric. 2018, 147, 109–117. [Google Scholar] [CrossRef]

- Pedregosa, F.; Varoquaux, G.; Gramfort, A.; Michel, V.; Thirion, B.; Grisel, O.; Blondel, M.; Prettenhofer, P.; Weiss, R.; Dubourg, V. Scikit-learn: Machine learning in Python. J. Mach. Learn. Res. 2011, 12, 2825–2830. [Google Scholar]

- Abadi, M.; Barham, P.; Chen, J.; Chen, Z.; Davis, A.; Dean, J.; Devin, M.; Ghemawat, S.; Irving, G.; Isard, M. Tensorflow: A system for large-scale machine learning. In Proceedings of the 12th {USENIX} Symposium on Operating Systems Design and Implementation ({OSDI} 16), Savannah, GA, USA, 2–4 November 2016; pp. 265–283. [Google Scholar]

- Witten, I.H.; Frank, E.; Hall, M.A.; Pal, C.J. Data Mining: Practical Machine Learning Tools and Techniques; Morgan Kaufmann: Burlington, MA, USA, 2016. [Google Scholar]

- Hornik, K.; Buchta, C.; Zeileis, A. Open-source machine learning: R meets Weka. Comput. Stat. 2009, 24, 225–232. [Google Scholar] [CrossRef]

- Buss, D. Food Companies Get Smart About Artificial Intelligence. Food Technol. 2018, 72, 26–41. [Google Scholar]

- Srivastava, N.; Hinton, G.; Krizhevsky, A.; Sutskever, I.; Salakhutdinov, R. Dropout: A simple way to prevent neural networks from overfitting. J. Machine Learn. Res. 2014, 15, 1929–1958. [Google Scholar]

- Martino, J.C.R. Hands-On Machine Learning with Microsoft Excel 2019: Build complete data analysis flows, from data collection to visualization; Packt Publishing: Birmingham, UK, 2019. [Google Scholar]

- Backhaus, A.; Ashok, P.C.; Praveen, B.B.; Dholakia, K.; Seiffert, U. Classifying Scotch Whisky from near-infrared Raman spectra with a Radial Basis Function Network with Relevance Learning. In Proceedings of the 20th European Symposium on Artificial Neural Networks, Computational Intelligence and Machine Learning, ESANN, Bruges, Belgium, 25–27 April 2012; pp. 411–416. [Google Scholar]

- Ceballos-Magaña, S.G.; Jurado, J.M.; Muñiz-Valencia, R.; Alcázar, A.; de Pablos, F.; Martín, M.J. Geographical authentication of tequila according to its mineral content by means of support vector machines. Food Anal. Methods 2012, 5, 260–265. [Google Scholar] [CrossRef]

- Andrade, J.M.; Ballabio, D.; Gómez-Carracedo, M.P.; Pérez-Caballero, G. Nonlinear classification of commercial Mexican tequilas. J. Chemom. 2017, 31, e2939. [Google Scholar] [CrossRef]

- Rodrigues, B.U.; Soares, A.d.S.; Costa, R.M.d.; Van Baalen, J.; Salvini, R.; Silva, F.A.d.; Caliari, M.; Cardoso, K.C.R.; Ribeiro, T.I.M.; Delbem, A.C. A feasibility cachaca type recognition using computer vision and pattern recognition. Comput. Electron. Agric. 2016, 123, 410–414. [Google Scholar] [CrossRef]

- Er, Y.; Atasoy, A. The classification of white wine and red wine according to their physicochemical qualities. Int. J. Intell. Syst. Appl. Eng. 2016, 4, 23–26. [Google Scholar] [CrossRef]

- da Costa, N.L.; Castro, I.A.; Barbosa, R. Classification of cabernet sauvignon from two different countries in South America by chemical compounds and support vector machines. Appl. Artif. Intell. 2016, 30, 679–689. [Google Scholar] [CrossRef]

- Perrot, N.; Baudrit, C.; Brousset, J.M.; Abbal, P.; Guillemin, H.; Perret, B.; Goulet, E.; Guerin, L.; Barbeau, G.; Picque, D. A decision support system coupling fuzzy logic and probabilistic graphical approaches for the agri-food industry: Prediction of grape berry maturity. PLoS ONE 2015, 10, e0134373. [Google Scholar] [CrossRef] [PubMed]

- Lvova, L.; Yaroshenko, I.; Kirsanov, D.; Di Natale, C.; Paolesse, R.; Legin, A. Electronic tongue for brand uniformity control: A case study of apulian red wines recognition and defects evaluation. Sensors 2018, 18, 2584. [Google Scholar] [CrossRef] [PubMed]

- Cetó, X.; Gutiérrez, J.M.; Moreno-Barón, L.; Alegret, S.; Del Valle, M. Voltammetric electronic tongue in the analysis of cava wines. Electroanalysis 2011, 23, 72–78. [Google Scholar] [CrossRef]

- Fuentes, S.; Tongson, E.J.; De Bei, R.; Gonzalez Viejo, C.; Ristic, R.; Tyerman, S.; Wilkinson, K. Non-Invasive Tools to Detect Smoke Contamination in Grapevine Canopies, Berries and Wine: A Remote Sensing and Machine Learning Modeling Approach. Sensors 2019, 19, 3335. [Google Scholar] [CrossRef]

- Navajas, M.P.S.; del Teso, S.F.; Romero, M.; Dario, P.; Díaz, D.; González, V.F.; Zurbano, P.F. Modelling wine astringency from its chemical composition using machine learning algorithms. OENO ONE 2019, 53, 498–510. [Google Scholar]

- Fuentes, S.; Gonzalez Viejo, C.; Wang, X.; Torrico, D.D. Aroma and quality assessment for vertical vintages using machine learning modelling based on weather and management information. In Proceedings of the 21st GiESCO International Meeting, Thessaloniki, Greece, 23–28 June 2019. [Google Scholar]

- Cetó, X.; Gutiérrez-Capitán, M.; Calvo, D.; del Valle, M. Beer classification by means of a potentiometric electronic tongue. Food Chem. 2013, 141, 2533–2540. [Google Scholar] [CrossRef]

- Alcázar, Á.; Jurado, J.M.; Palacios-Morillo, A.; de Pablos, F.; Martín, M.J. Recognition of the geographical origin of beer based on support vector machines applied to chemical descriptors. Food Control 2012, 23, 258–262. [Google Scholar] [CrossRef]

- Rousu, J.; Elomaa, T.; Aarts, R. Predicting the speed of beer fermentation in laboratory and industrial scale. In Proceedings of the International Work-Conference on Artificial Neural Networks, Alicante, Spain, 2–4 June 1999; pp. 893–901. [Google Scholar]

- Santos, J.P.; Lozano, J. Real time detection of beer defects with a hand held electronic nose. In Proceedings of the 2015 10th Spanish Conference on Electron Devices (CDE), Aranjuez-Madrid, Spain, 11–13 February 2015; pp. 1–4. [Google Scholar]

- Gardner, J.W.; Bartlett, P.N. A brief history of electronic noses. Sens. Actuators B Chem. 1994, 18, 210–211. [Google Scholar] [CrossRef]

- Voss, H.G.J.; Mendes Júnior, J.J.A.; Farinelli, M.E.; Stevan, S.L. A Prototype to Detect the Alcohol Content of Beers Based on an Electronic Nose. Sensors 2019, 19, 2646. [Google Scholar] [CrossRef]

- Zhang, Y.; Jia, S.; Zhang, W. Predicting acetic acid content in the final beer using neural networks and support vector machine. J. Inst. Brew. 2012, 118, 361–367. [Google Scholar] [CrossRef]

- Yu, H.; Wang, J.; Yao, C.; Zhang, H.; Yu, Y. Quality grade identification of green tea using E-nose by CA and ANN. LWT-Food Sci. Technol. 2008, 41, 1268–1273. [Google Scholar] [CrossRef]

- Chen, Q.; Zhao, J.; Chen, Z.; Lin, H.; Zhao, D.-A. Discrimination of green tea quality using the electronic nose technique and the human panel test, comparison of linear and nonlinear classification tools. Sens. Actuators B Chem. 2011, 159, 294–300. [Google Scholar] [CrossRef]

- Cimpoiu, C.; Cristea, V.-M.; Hosu, A.; Sandru, M.; Seserman, L. Antioxidant activity prediction and classification of some teas using artificial neural networks. Food Chem. 2011, 127, 1323–1328. [Google Scholar] [CrossRef]

- Guo, Z.; Chen, L.; Zhao, C.; Huang, W.; Chen, Q. Nondestructive estimation of total free amino acid in green tea by near infrared spectroscopy and artificial neural networks. In Proceedings of the International Conference on Computer and Computing Technologies in Agriculture, Beijing, China, 29–31 October 2011; pp. 43–53. [Google Scholar]

- Zhu, H.; Ye, Y.; He, H.; Dong, C. Evaluation of green tea sensory quality via process characteristics and image information. Food Bioprod. Process. 2017, 102, 116–122. [Google Scholar] [CrossRef]

- Messias, J.A.; Melo, E.d.C.; Lacerda Filho, A.F.d.; Braga, J.L.; Cecon, P.R. Determination of the influence of the variation of reducing and non-reducing sugars on coffee quality with use of artificial neural network. Engenharia Agrícola 2012, 32, 354–360. [Google Scholar] [CrossRef][Green Version]

- Domínguez, R.; Moreno-Barón, L.; Muñoz, R.; Gutiérrez, J. Voltammetric electronic tongue and support vector machines for identification of selected features in Mexican coffee. Sensors 2014, 14, 17770–17785. [Google Scholar] [CrossRef]

- Romani, S.; Cevoli, C.; Fabbri, A.; Alessandrini, L.; Dalla Rosa, M. Evaluation of coffee roasting degree by using electronic nose and artificial neural network for off-line quality control. J. Food Sci. 2012, 77, C960–C965. [Google Scholar] [CrossRef]

- Thazin, Y.; Pobkrut, T.; Kerdcharoen, T. Prediction of acidity levels of fresh roasted coffees using e-nose and artificial neural network. In Proceedings of the 2018 10th International Conference on Knowledge and Smart Technology (KST), Chiangmai, Thailand, 31 January–3 February 2018; pp. 210–215. [Google Scholar]

- Bucak, I.O.; Karlik, B. Detection of drinking water quality using CMAC based artificial neural Networks. Ekoloji 2011, 20, 75–81. [Google Scholar] [CrossRef]

- Camejo, J.; Pacheco, O.; Guevara, M. Classifier for drinking water quality in real time. In Proceedings of the 2013 International Conference on Computer Applications Technology (ICCAT), Sousse, Tunisia, 20–22 January 2013; pp. 1–5.

- Chatterjee, S.; Sarkar, S.; Dey, N.; Sen, S.; Goto, T.; Debnath, N.C. Water quality prediction: Multi objective genetic algorithm coupled artificial neural network based approach. In Proceedings of the 2017 IEEE 15th International Conference on Industrial Informatics (INDIN), Emden, Germany, 24–26 July 2017; pp. 963–968.

- Qiu, S.; Gao, L.; Wang, J. Classification and regression of ELM, LVQ and SVM for E-nose data of strawberry juice. J. Food Eng. 2015, 144, 77–85.

- Hong, X.; Wang, J.; Qi, G. E-nose combined with chemometrics to trace tomato-juice quality. J. Food Eng. 2015, 149, 38–43.

- Qiu, S.; Wang, J. The prediction of food additives in the fruit juice based on electronic nose with chemometrics. Food Chem. 2017, 230, 208–214.

- Nandeshwar, V.J.; Phadke, G.S.; Das, S. Classification of orange juice adulteration using LDA, PCA and ANN. In Proceedings of the 2016 IEEE 1st International Conference on Power Electronics, Intelligent Control and Energy Systems (ICPEICES), Delhi, India, 4–6 July 2016; pp. 1–5.

- Rácz, A.; Bajusz, D.; Fodor, M.; Héberger, K. Comparison of classification methods with “n-class” receiver operating characteristic curves: A case study of energy drinks. Chemom. Intell. Lab. Syst. 2016, 151, 34–43.

- Rácz, A.; Héberger, K.; Fodor, M. Quantitative determination and classification of energy drinks using near-infrared spectroscopy. Anal. Bioanal. Chem. 2016, 408, 6403–6411.

- Mamat, M.; Samad, S.A. Classification of beverages using electronic nose and machine vision systems. In Proceedings of the 2012 Asia Pacific Signal and Information Processing Association Annual Summit and Conference, Hollywood, CA, USA, 3–6 December 2012; pp. 1–6.

- Balabin, R.M.; Smirnov, S.V. Melamine detection by mid- and near-infrared (MIR/NIR) spectroscopy: A quick and sensitive method for dairy products analysis including liquid milk, infant formula, and milk powder. Talanta 2011, 85, 562–568.

- Jain, A.; Flynn, P.; Ross, A.A. Handbook of Biometrics; Springer: New York, NY, USA, 2007.

- Viola, P.; Jones, M. Rapid object detection using a boosted cascade of simple features. In Proceedings of the 2001 IEEE Computer Society Conference on Computer Vision and Pattern Recognition (CVPR 2001), Kauai, HI, USA, 8–14 December 2001; Volume 1, pp. I-511–I-518.

- McDuff, D.; Mahmoud, A.; Mavadati, M.; Amr, M.; Turcot, J.; el Kaliouby, R. AFFDEX SDK: A cross-platform real-time multi-face expression recognition toolkit. In Proceedings of the 2016 CHI Conference Extended Abstracts on Human Factors in Computing Systems, San Jose, CA, USA, 7–12 May 2016; pp. 3723–3726.

- Loijens, L.; Krips, O.; van Kuilenburg, H.; den Uyl, M.; Ivan, P. FaceReader™ Version 6.1 Reference Manual; Noldus Information Technology B.V.: Wageningen, The Netherlands, 2015.

- Fuentes, S.; Gonzalez Viejo, C.; Torrico, D.; Dunshea, F. Development of a biosensory computer application to assess physiological and emotional responses from sensory panelists. Sensors 2018, 18, 2958.

- de Wijk, R.A.; He, W.; Mensink, M.G.; Verhoeven, R.H.; de Graaf, C. ANS responses and facial expressions differentiate between the taste of commercial breakfast drinks. PLoS ONE 2014, 9, e93823.

- He, W.; Boesveldt, S.; de Graaf, C.; de Wijk, R.A. Dynamics of autonomic nervous system responses and facial expressions to odors. Front. Psychol. 2014, 5, 110.

- Frelih, N.G.; Podlesek, A.; Babič, J.; Geršak, G. Evaluation of psychological effects on human postural stability. Measurement 2017, 98, 186–191.

- Jain, M.; Deb, S.; Subramanyam, A. Face video based touchless blood pressure and heart rate estimation. In Proceedings of the 2016 IEEE 18th International Workshop on Multimedia Signal Processing (MMSP), Montreal, QC, Canada, 21–23 September 2016; pp. 1–5.

- Carvalho, L.; Virani, M.H.; Kutty, M.S. Analysis of Heart Rate Monitoring Using a Webcam. Analysis 2014, 3, 6593–6595.

- Gonzalez Viejo, C.; Fuentes, S.; Torrico, D.D.; Dunshea, F.R. Non-Contact Heart Rate and Blood Pressure Estimations from Video Analysis and Machine Learning Modelling Applied to Food Sensory Responses: A Case Study for Chocolate. Sensors 2018, 18, 1802.

- Torrico, D.D.; Fuentes, S.; Gonzalez Viejo, C.; Ashman, H.; Gurr, P.A.; Dunshea, F.R. Analysis of thermochromic label elements and colour transitions using sensory acceptability and eye tracking techniques. LWT 2018, 89, 475–481.

- Kamboj, S.K.; Joye, A.; Bisby, J.A.; Das, R.K.; Platt, B.; Curran, H.V. Processing of facial affect in social drinkers: A dose–response study of alcohol using dynamic emotion expressions. Psychopharmacology 2013, 227, 31–39.

- Beyts, C.; Chaya, C.; Dehrmann, F.; James, S.; Smart, K.; Hort, J. A comparison of self-reported emotional and implicit responses to aromas in beer. Food Qual. Preference 2017, 59, 68–80.

- Garcia-Burgos, D.; Zamora, M.C. Exploring the hedonic and incentive properties in preferences for bitter foods via self-reports, facial expressions and instrumental behaviours. Food Qual. Preference 2015, 39, 73–81.

- Danner, L.; Haindl, S.; Joechl, M.; Duerrschmid, K. Facial expressions and autonomous nervous system responses elicited by tasting different juices. Food Res. Int. 2014, 64, 81–90.

- Danner, L.; Sidorkina, L.; Joechl, M.; Duerrschmid, K. Make a face! Implicit and explicit measurement of facial expressions elicited by orange juices using face reading technology. Food Qual. Preference 2014, 32, 167–172.

- McPherson, S.S. Artificial Intelligence: Building Smarter Machines; Twenty-First Century Books: Minneapolis, MN, USA, 2018.

- Joshi, N. How Far Are We from Achieving Artificial General Intelligence? Forbes, 10 June 2019. Available online: https://www.forbes.com/sites/cognitiveworld/2019/06/10/how-far-are-we-from-achieving-artificial-general-intelligence/#2edd17436dc4 (accessed on 17 July 2019).

- Mohammadi, V.; Minaei, S. Artificial Intelligence in the Production Process. In Engineering Tools in the Beverage Industry; Elsevier: Amsterdam, The Netherlands, 2019; pp. 27–63.

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Gonzalez Viejo, C.; Torrico, D.D.; Dunshea, F.R.; Fuentes, S. Emerging Technologies Based on Artificial Intelligence to Assess the Quality and Consumer Preference of Beverages. Beverages 2019, 5, 62. https://doi.org/10.3390/beverages5040062
