1. Introduction

Colorectal cancer (CRC) remains a major global health concern, ranking among the most common malignancies and leading causes of cancer-related mortality worldwide [1]. Colonoscopy is widely recognized as the gold standard for CRC screening, allowing for direct visualization of the gastrointestinal mucosa, early detection, and removal of precancerous lesions, thereby reducing CRC incidence and mortality [2,3].

Adequate bowel preparation is a prerequisite for high-quality colonoscopy, directly impacting the adenoma detection rate (ADR) [4,5,6]. To standardize quality reporting, the Boston Bowel Preparation Scale (BBPS) has emerged as the most widely validated metric, requiring endoscopists to assign segment-specific scores (0–3) based on mucosal visibility. However, the clinical utility of BBPS is currently compromised by two fundamental limitations: subjectivity and inefficiency. In daily practice, scoring is highly dependent on the observer’s experience, leading to significant inter-observer variability in which junior endoscopists may exhibit “optimism bias” compared with stricter senior experts [7]. Furthermore, manual assessment is prone to fatigue and distraction, making it impractical for senior experts to review every procedure in high-volume centers [4,5,6]. Therefore, there is an urgent need for an automated, objective system that rivals expert accuracy and reproduces BBPS-like assessments through interpretable, rule-based heuristics derived from clinical consensus rather than inventing novel metrics. Such a system would integrate seamlessly into existing clinical workflows and provide a conservative, safety-oriented net for quality control.

Recent advances in artificial intelligence (AI) have opened new avenues for automating and standardizing colonoscopic image analysis. AI tools offer the potential to assist endoscopists in quality control and improve overall efficiency [8,9,10]. Consequently, numerous research groups have developed AI algorithms to objectively assess bowel preparation adequacy [8]. Convolutional neural networks trained on large datasets of colonoscopic images have achieved substantial agreement with human raters [6,7,8,9,10,11]. Notably, Cold et al. developed the Open-Source Automatic Bowel Preparation Scale (OSABPS), which quantifies cleanliness using a pixel-level fecal-to-mucosa ratio [12]. Similarly, the e-BBPS system defined by Yu et al. has demonstrated validity for bowel preparation assessment [7]. While these metrics offer high scientific precision and correlate with ADR, they often introduce novel quantitative indices that differ from the categorical BBPS (total score 0–9) used in standard clinical guidelines. This discrepancy requires clinicians to ‘translate’ AI outputs into standard reports, potentially hindering seamless integration into routine workflows. A gap therefore remains for a fully automated system that not only processes continuous video streams but also directly replicates the standard BBPS scoring logic, serving as a ‘drop-in’ surrogate for expert assessment without altering established reporting standards.

The development of a simple, user-friendly, and highly generalizable segmental bowel cleanliness assessment tool would enhance the standardization and clinical applicability of colonoscopy. The present study aims to design and validate such a software solution, providing a novel approach for the objective evaluation of bowel preparation quality in clinical practice.

To address this gap, we propose EndoClean, a fully automated and generalizable framework that processes colonoscopy videos to compute the BBPS score of the entire colon. EndoClean consists of three components: a frame selection model that discards non-diagnostic frames, a segment classification model that divides the video into right, middle, and left colon segments, and a frame-level scoring model that evaluates cleanliness per BBPS criteria. By aggregating segment-wise scores, EndoClean outputs a complete BBPS score for the entire bowel, thus facilitating standardized, objective, and efficient bowel preparation assessment.

2. Methods

2.1. Datasets

This study was approved by the Ethics Committee of Zhongshan Hospital, Fudan University (Approval No. B2025-145R). The construction of EndoClean involved three distinct datasets for training the frame selection model, the frame-level scoring model, and the video-level segmentation model, respectively, followed by an independent validation set for the final system evaluation. To prevent data leakage, dataset splitting was strictly performed at the patient level, ensuring that no images or video frames from the same patient appeared in both training and validation sets.
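The patient-level splitting described above can be illustrated with a minimal sketch. The record layout `(patient_id, image_path)`, the function name, and the 20% validation fraction are assumptions made for illustration and are not taken from the study's code.

```python
import random
from collections import defaultdict

def patient_level_split(records, val_fraction=0.2, seed=0):
    """Split (patient_id, image_path) records so that no patient spans both sets.

    The split is drawn over patients, not images, which is what prevents
    frames from one patient leaking from training into validation.
    """
    by_patient = defaultdict(list)
    for patient_id, image_path in records:
        by_patient[patient_id].append(image_path)

    patient_ids = sorted(by_patient)            # deterministic order before shuffling
    random.Random(seed).shuffle(patient_ids)
    n_val = max(1, round(len(patient_ids) * val_fraction))

    val = [(p, img) for p in patient_ids[:n_val] for img in by_patient[p]]
    train = [(p, img) for p in patient_ids[n_val:] for img in by_patient[p]]
    return train, val
```

Because the fraction is applied to patients, the resulting image counts only approximate the requested proportion when patients contribute different numbers of frames.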

(1) Frame Selection Dataset:

To train the model responsible for filtering out non-diagnostic frames, a specific dataset of 5670 colonoscopy images was curated. These images were annotated as either “valid” (clear visualization of mucosa) or “invalid” (blur, stool obstruction, or poor lighting). The dataset was randomly split at the patient level into a training set (4537 images) and a validation set (1133 images). This ensures the system learns to exclude low-quality inputs based on generalizable visual features rather than patient-specific artifacts.

(2) Frame-level BBPS Scoring Dataset:

The training dataset for the cleanliness assessment model was prospectively collected and annotated. A total of 35,940 colonoscopy images were curated. Senior endoscopists provided frame-level annotations according to the standard BBPS criteria (scores 0–3) or marked frames as invalid. The dataset was divided into a training set of 31,078 valid images distributed across BBPS scores 0 to 3, and a validation set of 4862 images. Invalid images were excluded from this stage to ensure the model focused solely on fine-grained cleanliness scoring, as non-diagnostic frames are pre-filtered by the Stage 1 model. Data augmentation techniques were applied to the training set to enhance model robustness against variations in endoscopic devices.

(3) Video-level Anatomical Segmentation Dataset:

The training dataset for the anatomical segmentation model was prospectively collected and annotated. A total of 27,066 valid colonoscopy images were curated for this stage. Unlike the Stage 1 dataset, all images in this subset underwent a rigorous pre-screening process to exclude non-diagnostic frames.

Crucially, to address the challenge of precise anatomical localization and flexure detection, our annotation protocol was aligned with the rigorous anatomical definitions proposed in recent research [13]. Specifically, we adopted their granular landmark criteria for identifying the hepatic flexure (visualizing the liver shadow/bluish hue) and splenic flexure (transition to descending colon). Based on these standardized landmarks, experts annotated frames into six anatomical classes (cecum, ascending colon, transverse colon, descending colon, sigmoid colon, and rectum). This alignment with state-of-the-art anatomical standards ensures that our model focuses on topologically accurate mucosal cleanliness patterns. The dataset was divided into a training set of 22,204 images and a validation set of 4862 images.

(4) System Evaluation Dataset:

To evaluate the performance of the fully integrated EndoClean framework, an independent test set consisting of 314 colonoscopy videos was collected consecutively from January 2023 to December 2024, a period subsequent to the training data collection. These videos were strictly isolated from the training and validation processes of the component models. Each video was independently scored by 5 junior endoscopists.

To establish a robust ground truth, a rigorous consensus protocol was implemented involving 5 senior endoscopists, each with 10 years of experience. To eliminate ‘groupthink’ or authority bias, all five experts reviewed the videos independently in a blinded manner, without access to patient metadata or peer ratings. Given that BBPS scores are ordinal variables, the median value of the five experts’ scores was calculated for each colonic segment to serve as the final ground truth. This statistical approach was selected to minimize the impact of individual inter-observer variability and outlier ratings.
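As a small worked illustration of the median-based consensus, the sketch below computes a per-segment ground truth from five independent ratings. The dictionary layout, segment names, and example scores are hypothetical.

```python
from statistics import median

def consensus_scores(ratings):
    """Per-segment median of independent expert ratings.

    ratings: list of dicts, one per expert, mapping segment name -> BBPS score (0-3).
    With an odd number of raters the median is always one of the observed scores.
    """
    segments = ratings[0].keys()
    return {seg: int(median(r[seg] for r in ratings)) for seg in segments}

# Hypothetical ratings from five experts for a single video.
experts = [
    {"right": 2, "transverse": 3, "left": 1},
    {"right": 2, "transverse": 3, "left": 2},
    {"right": 3, "transverse": 2, "left": 2},
    {"right": 1, "transverse": 3, "left": 0},
    {"right": 2, "transverse": 2, "left": 3},
]
ground_truth = consensus_scores(experts)  # {'right': 2, 'transverse': 3, 'left': 2}
```

In this example the single rating of 0 for the left colon does not move the consensus, which is the outlier robustness the protocol relies on.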

(5) Exclusion Criteria for Patients and Videos:

To ensure the quality and applicability of the dataset for automated BBPS assessment, the following exclusion criteria were applied to all collected videos:

History of Colorectal Resection: Patients with a history of partial or total colectomy were excluded, as altered anatomical structures could compromise the accuracy of the anatomical segmentation model.

Inflammatory Bowel Disease (IBD) or Severe Stenosis: Patients with active IBD (ulcerative colitis or Crohn’s disease) or severe luminal stenosis were excluded, as the mucosal features in these conditions differ significantly from routine screening populations.

Incomplete Procedures: Colonoscopies where the cecum was not reached (intubation failure) were excluded to ensure full-length video analysis.

Technical Video Defects: Videos with severe data corruption, missing segments, or extreme image artifacts that prevented human expert review were excluded.

(6) Data Preprocessing:

All colonoscopy videos were recorded using high-definition endoscopy systems (Evis Lucera Elite CV-290, Olympus Medical Systems, Tokyo, Japan) with a native resolution of 1920 × 1080 pixels. To ensure patient privacy, all protected health information (PHI), including patient ID, name, and examination date, was automatically cropped and removed from the video frames.

To balance computational efficiency with the need for temporal continuity required by the HMM-based segmentation model, videos were sampled at a rate of 5 frames per second (fps). Extracted frames were then resized to 224 × 224 pixels using bilinear interpolation to match the input dimensions of the ResNet-50 backbone. Standard normalization was applied using the mean and standard deviation of the ImageNet dataset to facilitate transfer learning.
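The sampling and normalization steps can be sketched as follows. The native frame rate of the recordings is not stated here, so `source_fps` is an assumed input, and resizing to 224 × 224 is omitted because it requires an image library. The mean and standard deviation are the standard ImageNet channel statistics.

```python
IMAGENET_MEAN = (0.485, 0.456, 0.406)
IMAGENET_STD = (0.229, 0.224, 0.225)

def sampled_frame_indices(n_frames, source_fps, target_fps=5.0):
    """Indices of the frames kept when down-sampling a video to target_fps.

    Assumes source_fps >= target_fps; source_fps is not given in the text.
    """
    step = source_fps / target_fps
    indices = []
    k = 0
    while int(k * step) < n_frames:
        indices.append(int(k * step))
        k += 1
    return indices

def normalize_pixel(rgb):
    """Scale an 8-bit RGB pixel to [0, 1], then standardize each channel."""
    return tuple((c / 255.0 - m) / s
                 for c, m, s in zip(rgb, IMAGENET_MEAN, IMAGENET_STD))
```

For example, a 25 fps recording would keep every fifth frame under this scheme.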

2.2. Models

In this study, we propose EndoClean, an automated system designed to compute the BBPS score from colonoscopy videos. The system consists of three distinct models to process the video in a sequence of steps, ensuring an accurate and efficient assessment of bowel preparation quality (Figure 1).

We apply a frame selection model to evaluate each frame of the colonoscopy video individually, identifying clear and assessable frames for subsequent scoring. This model is based on a convolutional neural network and performs binary classification to detect the presence of visual artifacts that may compromise scoring accuracy, such as air bubbles, blurriness, or motion blur. Only frames that are free from significant occlusions and clearly display the colonic mucosa are retained, while all others are discarded. This filtering step significantly improves the quality of inputs for the downstream models, preventing the scoring process from being affected by invalid frames. In parallel, a colon segmentation model is introduced to classify each valid frame into one of three anatomical segments: the right colon, middle colon, or left colon.

The model adopts a hybrid approach that integrates a deep neural network with a probabilistic sequence model to assign anatomical segment labels to each frame in a colonoscopy video. Unlike the other two models, this model processes entire frame sequences and formulates the segmentation task as a temporal sequence labeling problem.

In the first stage, we trained a ResNet-50-based classifier to perform six-class frame-level classification, corresponding to six colon segments (cecum, ascending colon, transverse colon, descending colon, sigmoid colon, and rectum). Given a sequence of frames, the model outputs, for each frame, six probabilities representing the likelihood that the frame belongs to each of the six colon segments.

Since the frame-level classification by ResNet may contain noise or abrupt transitions, we further model the task as a Hidden Markov Model (HMM) to refine the predictions by enforcing temporal continuity, thereby inferring the true underlying anatomical segment of each frame and denoising as much as possible. Specifically, we assume that the observed class probabilities produced by the ResNet are noisy manifestations of a latent, temporally smooth sequence of anatomical states. To construct the HMM, we first estimate the emission probability matrix by computing the class-wise confusion statistics of the ResNet predictions on the validation set. Then, we define a custom transition matrix that reflects the expected anatomical progression during colonoscopy, and heavily penalizes biologically implausible transitions.

Given the observation sequence, emission matrix, and transition matrix, we apply the Viterbi algorithm to compute the most likely sequence of hidden states—that is, the anatomically accurate colon segment assigned to each video frame. This HMM-based refinement enables temporally coherent, anatomically consistent segmentation, correcting noisy frame-wise predictions and producing a final sequence suitable for downstream scoring.

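In detail, let $o_t$ denote the ResNet model’s prior estimate of the anatomical site for the $t$-th frame. Let $s_t$ denote the anatomical site assigned to the $t$-th frame by the HMM as the final decision, and let $j$ index a particular anatomical site. We have:

$$\delta_t(i) = P(o_t \mid s_t = i)\,\max_{j}\left[\delta_{t-1}(j)\,P(s_t = i \mid s_{t-1} = j)\right],$$

where $\delta_t(i)$ is the probability of the most likely state sequence ending at site $i$ on frame $t$. The term $P(o_t \mid s_t = i)$ represents the probability that the ResNet model does not misclassify, which can be obtained from the emission matrix. The term $P(s_t = i \mid s_{t-1} = j)$ denotes the probability of the current frame being assigned to a given site conditioned on the previous frame being site $j$, which can be obtained from the transition matrix.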
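The refinement step can be sketched as a standard log-space Viterbi decoder. This is a minimal illustration, not the study's implementation: it assumes the observation for each frame is the classifier's arg-max class, and the three-state emission and transition matrices in the usage example are invented, with backward transitions set to zero to mimic the penalty on anatomically implausible jumps.

```python
import math

def _log(p):
    return math.log(p) if p > 0 else float("-inf")

def viterbi(observations, emission, transition, initial):
    """Most likely hidden-site sequence for a sequence of observed classes.

    observations: observed class index per frame (e.g., classifier arg-max)
    emission[i][k]: P(observed class k | true site i)
    transition[j][i]: P(site i at frame t | site j at frame t-1)
    initial[i]: P(site i at the first frame)
    """
    n_states = len(initial)
    score = [_log(initial[i]) + _log(emission[i][observations[0]])
             for i in range(n_states)]
    backptr = []
    for obs in observations[1:]:
        prev, score, ptr = score, [], []
        for i in range(n_states):
            best_j = max(range(n_states),
                         key=lambda j: prev[j] + _log(transition[j][i]))
            ptr.append(best_j)
            score.append(prev[best_j] + _log(transition[best_j][i])
                         + _log(emission[i][obs]))
        backptr.append(ptr)

    # Trace back from the best final state.
    state = max(range(n_states), key=lambda i: score[i])
    path = [state]
    for ptr in reversed(backptr):
        state = ptr[state]
        path.append(state)
    return path[::-1]

# Illustrative (assumed) matrices for three ordered sites.
emission = [[0.8, 0.1, 0.1], [0.1, 0.8, 0.1], [0.1, 0.1, 0.8]]
transition = [[0.9, 0.1, 0.0], [0.0, 0.9, 0.1], [0.0, 0.0, 1.0]]
initial = [1.0, 0.0, 0.0]
noisy = [0, 0, 1, 0, 0, 1, 1, 1, 2, 2]
smoothed = viterbi(noisy, emission, transition, initial)
```

In the usage example the isolated label 1 at the third frame is overruled, because accepting it would require a forbidden return from site 1 to site 0.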

This segmentation is crucial for the BBPS scoring, as the scale requires separate cleanliness assessments for each colon segment. The segmentation model assigns each frame to its appropriate segment based on spatial features. Although the HMM refines the frame-level predictions, the resulting sequence may still exhibit boundary ambiguity or residual noise, making it difficult to directly segment the colon based on labels. Therefore, we designed a post-processing algorithm that automatically and explicitly detects the transition time points between colon segments based on the stability and trend of predicted labels across the frame sequence, thereby converting sequential information into precise temporal boundaries: the transition time points between the right-to-middle and middle-to-left colon segments. This algorithm refines the boundaries between the colon segments, which is essential for accurate scoring.

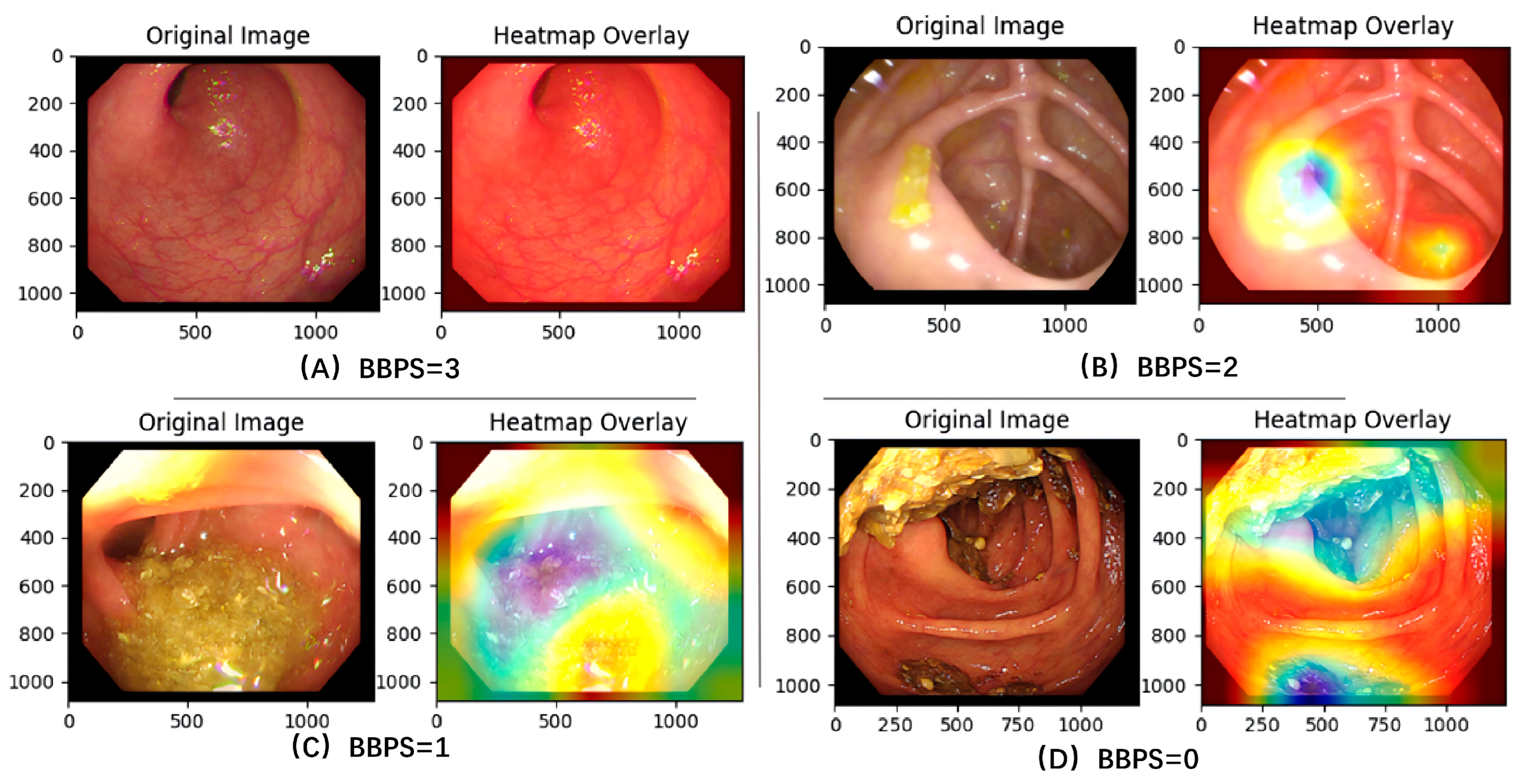

In the final step, the selected valid frames are fed into the BBPS scoring model, which assigns a cleanliness score ranging from 0 to 3 to each frame based on the BBPS criteria. This model is built upon a deep convolutional neural network and is trained to capture key visual features. As illustrated in Figure 2, the Class Activation Mapping (CAM) visualization confirms that the model’s attention is correctly focused on discriminative regions, such as areas of mucosal visibility or obscuring stool, to generate the cleanliness score. It outputs a probability distribution over the BBPS score levels for each frame. After scoring frames individually, the system aggregates the frame-level scores within each anatomically segmented region, based on the corresponding time intervals, using a weighted average to compute segment-level scores. These are then combined to generate the final total BBPS score for the entire colonoscopy video.

The entire system operates at the frame level. It first uses the frame selection model to retain valid frames, after which the colon segmentation model and the BBPS scoring model run in parallel: the colon segmentation model determines the temporal boundaries of the three colon segments within the video, while the BBPS scoring model assigns a cleanliness score to each valid frame. Finally, the scores of valid frames within each segment are aggregated using a weighted average to obtain the overall BBPS score for the colonoscopy video. By combining these three models (frame selection, colon segmentation, and BBPS scoring), EndoClean provides a fully automated, objective, and consistent method for evaluating bowel preparation quality, improving both the efficiency and accuracy of colonoscopy evaluations.

2.3. Algorithms

Time Points Regression for Boundary Definition

Following the probabilistic smoothing performed by the HMM (as described in Section 2.2), we obtain a coherent sequence of anatomical labels. However, to consolidate this fine-grained six-class sequence into the conventional three anatomical segments (Right, Transverse, and Left Colon) required for BBPS scoring, we need to pinpoint the exact temporal boundaries. Therefore, we designed a Time Points Regression algorithm to accurately identify the transition frames corresponding to the Hepatic and Splenic flexures.

The algorithm begins by grouping all video frames according to their HMM-predicted segment labels. Since the frame-wise predictions—even after HMM smoothing—may contain minor temporal outliers, a statistical filtering step is applied. This filtering excludes frames whose indices lie beyond two standard deviations from the mean index of the group, effectively refining the temporal distribution of each segment.

After filtering, the algorithm determines a representative median frame index for each of the six fine segments. This median serves as a temporal anchor, summarizing where that segment predominantly occurs within the video. We choose the median instead of the mean because it is more robust to outliers and accurately reflects the central distribution of labels within the temporal sequence.
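The filtering and anchoring steps for one fine-grained segment can be sketched as below. Whether the population or the sample standard deviation is used is not specified; the population form is assumed here, and the function name is hypothetical.

```python
from statistics import mean, median, pstdev

def median_anchor(frame_indices, n_sd=2.0):
    """Median frame index of one segment after discarding temporal outliers.

    frame_indices: indices of all frames the HMM assigned to this segment.
    Frames farther than n_sd standard deviations from the mean index are dropped.
    """
    mu = mean(frame_indices)
    sd = pstdev(frame_indices)  # population SD (assumption)
    kept = [i for i in frame_indices if abs(i - mu) <= n_sd * sd]
    return median(kept or frame_indices)

# Nine contiguous frames plus one stray label far later in the video.
anchor = median_anchor([100, 101, 102, 103, 104, 105, 106, 107, 108, 900])
```

Here the stray frame at index 900 lies more than two standard deviations from the mean and is removed, so the anchor falls at the centre of the contiguous run.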

Between every pair of adjacent median frames, the algorithm searches exhaustively for an optimal transition frame that best separates the two corresponding fine segments. For each candidate transition frame $m$ within this interval, a composite score $S_m$ is calculated based on how well the frames on either side conform to their expected segment labels. Mathematically, the score is defined as:

$$S_m = \frac{n_L}{N_L} + \frac{n_R}{N_R} - \lambda\left|\frac{n_L}{N_L} - \frac{n_R}{N_R}\right|$$

After obtaining frame-wise anatomical-site probabilities from the HMM, we identify six median frames, one for each of the six anatomical sites. We then determine five boundary points within the five segments defined by these six median frames. Here, $S_m$ denotes the likelihood score that the $m$-th frame within a segment serves as a boundary point, where a larger value indicates a higher likelihood. $N_L$ is the total number of frames to the left of frame $m$ within the current segment, and $n_L$ is the number of frames on the left side that are predicted as the anatomical site corresponding to the median frame at the start of the segment. Similarly, $N_R$ and $n_R$ are defined for the right side of frame $m$. We set $\lambda = 0.8$ as a balancing coefficient to discourage excessively imbalanced numbers of frames on the two sides of frame $m$. The scoring function consists of three components: the first two terms encourage high classification accuracy on both sides, and the third term penalizes large discrepancies in accuracy between the two sides. The candidate frame with the highest score is selected as the optimal transition point between the two fine-grained segments.
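The exhaustive search can be sketched as follows. The functional form used here is an assumption read from the verbal description: the left-side accuracy plus the right-side accuracy, minus 0.8 times the absolute gap between the two. Function names are hypothetical, and the returned index is relative to the start of the interval between two adjacent median frames.

```python
def boundary_score(labels, m, left_label, right_label, lam=0.8):
    """Score for splitting `labels` at m (frames [0, m) left, [m, end) right).

    ASSUMED form: acc_left + acc_right - lam * |acc_left - acc_right|.
    """
    left, right = labels[:m], labels[m:]
    acc_left = sum(l == left_label for l in left) / len(left)
    acc_right = sum(l == right_label for l in right) / len(right)
    return acc_left + acc_right - lam * abs(acc_left - acc_right)

def best_transition(labels, left_label, right_label, lam=0.8):
    """Candidate split with the highest score; ties resolve to the earliest frame."""
    candidates = range(1, len(labels))  # keep at least one frame on each side
    return max(candidates,
               key=lambda m: boundary_score(labels, m, left_label, right_label, lam))

# HMM labels between the median frames of site 0 and site 1 (illustrative).
split = best_transition([0, 0, 0, 1, 0, 0, 1, 1, 1, 1], left_label=0, right_label=1)
```

In the example, the stray label at the fourth frame lowers the left-side accuracy slightly, but the split that places all four trailing frames on the right still scores highest.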

Clinical Landmark Identification

To accurately delineate the three broad colon segments, our model leverages the six fine-grained anatomical classes (Cecum, Ascending Colon, Transverse Colon, Descending Colon, Sigmoid Colon, and Rectum). By applying the temporal boundary detection algorithm described above, we identify the five anatomical transition frames. Among them, the second and third boundaries—corresponding to the Hepatic Flexure (between Ascending and Transverse Colon) and Splenic Flexure (between Transverse and Descending Colon)—are selected as the clinically meaningful transition points. This two-stage strategy (HMM smoothing followed by Time Points Regression) leverages temporal context to precisely localize key anatomical landmarks, thereby reducing frame-level noise and enhancing the accuracy of the downstream BBPS assessment.
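Collapsing the five fine-grained boundaries into the three BBPS segments can be sketched as below. The sketch assumes frames are ordered from cecum to rectum, so that the second and third boundaries are the hepatic and splenic flexures. The function name and the example indices are illustrative.

```python
def assign_broad_segments(n_frames, boundaries):
    """Label each frame as right, transverse, or left colon.

    boundaries: five sorted frame indices separating the six fine-grained sites,
    assuming cecum-to-rectum frame order. Only the second (hepatic flexure)
    and third (splenic flexure) boundaries are used.
    """
    hepatic, splenic = boundaries[1], boundaries[2]
    segments = []
    for t in range(n_frames):
        if t < hepatic:
            segments.append("right")
        elif t < splenic:
            segments.append("transverse")
        else:
            segments.append("left")
    return segments

labels = assign_broad_segments(10, [1, 3, 6, 8, 9])
```

The remaining three boundaries (cecum/ascending, descending/sigmoid, sigmoid/rectum) fall inside a single broad segment and therefore do not affect the result.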

BBPS estimation

Once the temporal boundaries are established, the system integrates the frame-level BBPS predictions (generated in parallel as described in Section 2.2) to estimate the overall score for each segment. We developed a rule-based aggregation algorithm that prioritizes the detection of inadequate bowel preparation. Specifically, using the two identified transition frames (Hepatic and Splenic flexures), the colonoscopy video is divided into three anatomically meaningful regions. For each segment, the algorithm collects the BBPS scores of all valid frames and computes the frequency of each score level (0, 1, 2, and 3). Based on these statistics, the final segmental score is determined according to the following rules:

- If the proportion of frames scored as 0 exceeds 10%, indicating a substantial presence of unprepared mucosa, the segment score is set to 0;

- Otherwise, if the proportion of frames scored as 1 exceeds 20%, suggesting generally poor preparation, the segment score is set to 1;

- If neither condition is met, the average BBPS score of all frames in the segment is computed and rounded to the nearest integer to obtain the final score.

From a clinical perspective, these thresholds reflect a ‘safety-oriented’ scoring strategy. Score 0 represents solid stool or obstruction that prevents visualization, which poses the highest risk for missed lesions; therefore, a stricter threshold (10%) was applied to ensure high sensitivity for inadequate preparation. Score 1 represents minor staining or liquid that allows some visualization, justifying a slightly more lenient threshold (20%). This proportion-based quantification method aligns with recent benchmarks in automated bowel preparation assessment, ensuring that the aggregated video-level score accurately reflects the worst-case scenario observed within the segment.

After computing the segmental scores for all three regions, the system aggregates them to derive the total BBPS score for the entire colonoscopy video, providing a quantitative and objective assessment of bowel preparation quality for clinical evaluation.
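The three aggregation rules and the final summation can be sketched as follows. The thresholds follow the text. Rounding exact halves upward is an assumption, since the tie-breaking rule is not stated, and each segment is assumed to contain at least one valid frame.

```python
from collections import Counter

def segment_score(frame_scores, zero_threshold=0.10, one_threshold=0.20):
    """Rule-based BBPS score for one segment from its valid frame-level scores."""
    counts = Counter(frame_scores)
    n = len(frame_scores)
    if counts[0] / n > zero_threshold:   # more than 10% of frames scored 0
        return 0
    if counts[1] / n > one_threshold:    # more than 20% of frames scored 1
        return 1
    # Mean rounded to the nearest integer; halves round up (assumption).
    return int(sum(frame_scores) / n + 0.5)

def total_bbps(scores_by_segment):
    """Sum of the three segmental scores (range 0-9)."""
    return sum(segment_score(s) for s in scores_by_segment.values())

video = {
    "right": [3] * 10,
    "transverse": [2] * 10,
    "left": [1] * 3 + [2] * 7,   # 30% of frames scored 1 -> segment score 1
}
total = total_bbps(video)
```

Note that a segment with exactly 10% of frames scored 0 does not trigger the first rule under this reading, because the text specifies that the proportion must exceed the threshold.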

2.4. Implementation Details

Frame Selection Model

The model is built upon the ResNet-18 architecture. We adopted the pre-trained weights from ImageNet to initialize the model. The dataset described above (4537 training and 1133 validation images) was used to fine-tune the network. During training, we applied data augmentation techniques, including color jitter, random affine, and random rotation. The model was optimized using the Adam optimizer with a learning rate of 1 × 10⁻³ and a batch size of 512. The weighted binary cross-entropy loss function was used to address class imbalance. The model checkpoint exhibiting the lowest loss on the validation set was selected for integration into the final EndoClean system.
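For readers unfamiliar with the loss, a weighted binary cross-entropy can be written out as below. The class weights used in the study are not reported, so `pos_weight` and `neg_weight` are placeholder parameters, and this plain-Python form only illustrates the quantity that a deep learning framework would compute.

```python
import math

def weighted_bce(probs, targets, pos_weight=1.0, neg_weight=1.0, eps=1e-7):
    """Mean weighted binary cross-entropy.

    probs: predicted probability of the positive class per sample
    targets: 0/1 labels
    pos_weight / neg_weight: per-class weights (values are assumptions)
    """
    total = 0.0
    for p, y in zip(probs, targets):
        p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
        total += -(pos_weight * y * math.log(p)
                   + neg_weight * (1 - y) * math.log(1.0 - p))
    return total / len(probs)
```

Raising the weight of the rarer class increases the penalty for misclassifying it, which is how the loss counteracts imbalance between valid and invalid frames.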

Colon Segmentation Model

In the first stage, the ResNet-50 architecture was adapted for six-class classification and initialized with ImageNet pre-trained weights. The model was trained using the 22,204 images from the segmentation training set. Data augmentation techniques were applied to enhance robustness. The model was trained using categorical cross-entropy loss, optimized with the Adam optimizer (learning rate 1 × 10⁻³, weight decay 1 × 10⁻²). Training was monitored using the validation set (9790 images), and the optimal model was retained to generate observation probabilities for the subsequent HMM stage.

BBPS Scoring Model

The BBPS Scoring Model employs a customized architecture integrating ResNet-50 with a dedicated attention mechanism. The model was trained from scratch on the dataset of 31,078 images (Training Set) described in the dataset section. It outputs a probability distribution across the BBPS score levels for each frame. Performance was monitored on the validation set of 4862 images to prevent overfitting and ensure generalization capability.

2.5. Statistical Analysis

Continuous variables were expressed as mean ± standard deviation (SD) or median with interquartile range (IQR), depending on the data distribution. Categorical variables were presented as frequencies and percentages. Differences in baseline characteristics were evaluated using the Student’s t-test or Mann–Whitney U test for continuous variables, and the Chi-square test or Fisher’s exact test for categorical variables.

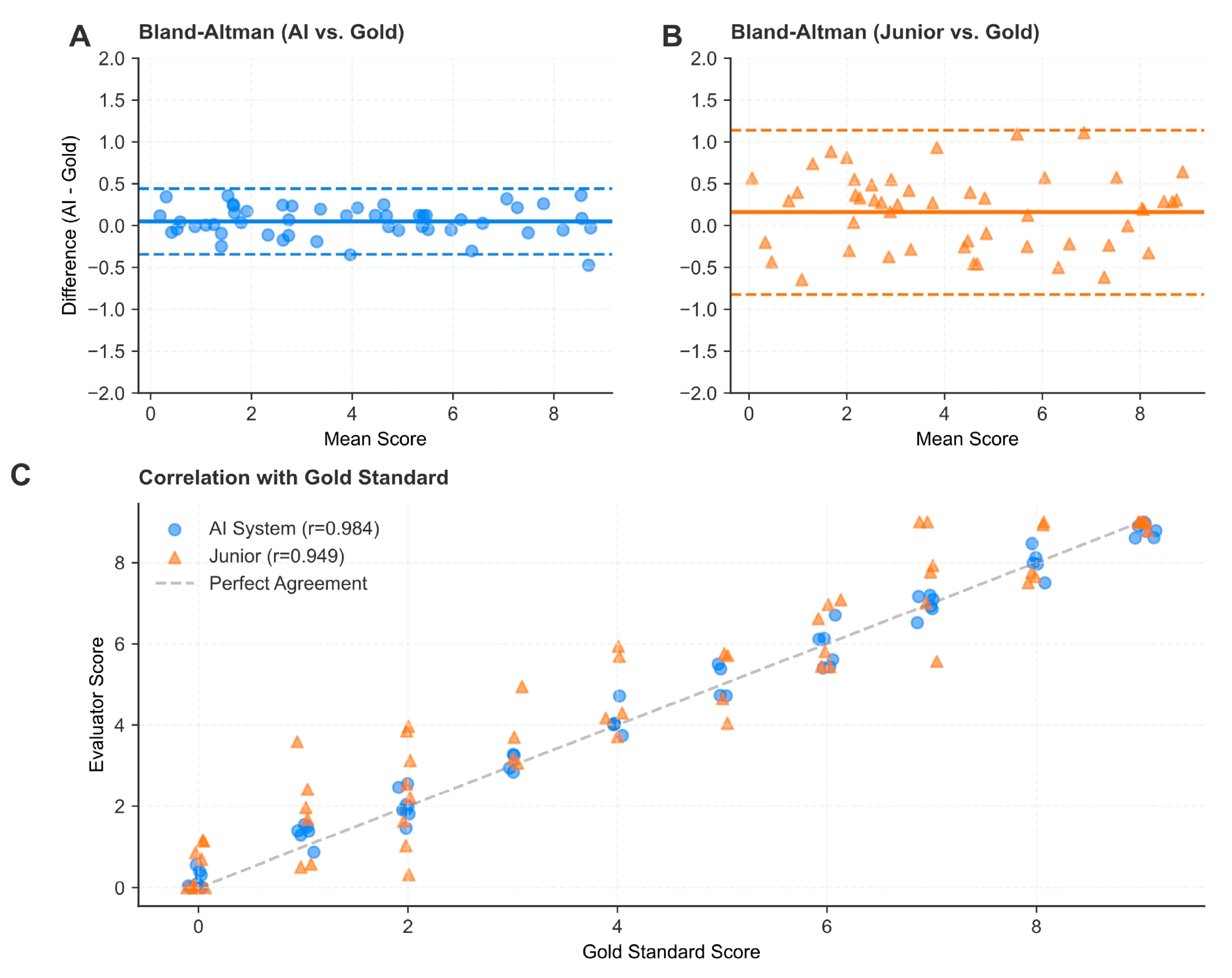

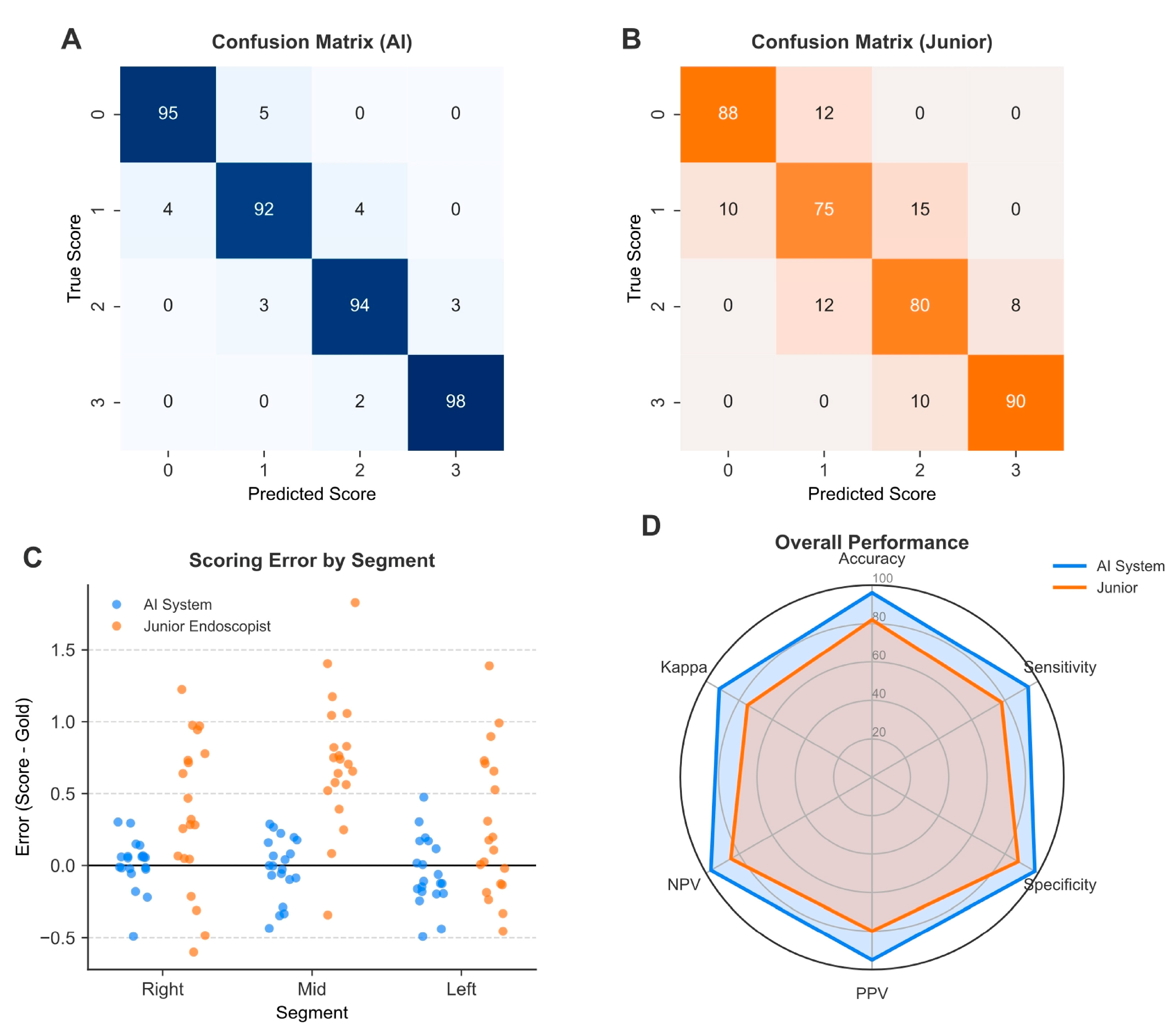

The primary performance metrics for the AI system and endoscopists included sensitivity, specificity, accuracy, positive predictive value (PPV), and negative predictive value (NPV). 95% confidence intervals (CIs) for all performance metrics were calculated using either the Wilson score interval or the bootstrap method (1000 resamples).
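The Wilson score interval for a proportion such as sensitivity or accuracy can be computed directly, as in the minimal sketch below. The function name is illustrative, and the example counts are not study results.

```python
import math

def wilson_interval(successes, n, z=1.959964):
    """Wilson score confidence interval for a binomial proportion.

    z = 1.959964 corresponds to a two-sided 95% interval.
    """
    p = successes / n
    denom = 1 + z * z / n
    centre = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return centre - half, centre + half

low, high = wilson_interval(50, 100)  # approximately (0.404, 0.596)
```

Unlike the simple normal approximation, the interval stays within [0, 1] even when the observed proportion is at or near 0 or 1.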

Inter-rater agreement between the AI system/junior endoscopists and the gold standard (senior experts) was assessed using the quadratic weighted Kappa (κ) coefficient and the Intraclass Correlation Coefficient (ICC, two-way random effects model, absolute agreement). The strength of agreement was interpreted as follows: 0–0.20 (slight), 0.21–0.40 (fair), 0.41–0.60 (moderate), 0.61–0.80 (substantial), and 0.81–1.00 (almost perfect).
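The quadratic weighted Kappa can be written out explicitly, as in the sketch below. It assumes scores are integers from 0 to `n_categories - 1` and that the two raters do not both assign a single identical category to every item, since the expected disagreement would then be zero.

```python
def quadratic_weighted_kappa(rater_a, rater_b, n_categories):
    """Cohen's kappa with quadratic disagreement weights for ordinal scores."""
    n = len(rater_a)
    observed = [[0] * n_categories for _ in range(n_categories)]
    for a, b in zip(rater_a, rater_b):
        observed[a][b] += 1
    row = [sum(r) for r in observed]
    col = [sum(observed[i][j] for i in range(n_categories))
           for j in range(n_categories)]

    num = den = 0.0
    for i in range(n_categories):
        for j in range(n_categories):
            w = (i - j) ** 2 / (n_categories - 1) ** 2  # quadratic penalty
            num += w * observed[i][j]                   # observed disagreement
            den += w * row[i] * col[j] / n              # chance-expected disagreement
    return 1.0 - num / den
```

Because the penalty grows with the squared distance between scores, a disagreement of two BBPS points costs four times as much as a disagreement of one point.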

To statistically compare the performance differences between the AI system and junior endoscopists, McNemar’s test was used for paired nominal data (accuracy, sensitivity, and specificity). The difference in Kappa coefficients between the two groups was tested for statistical significance using a bootstrap-based test for equality of dependent kappa coefficients. The relationship between the AI scores and the gold standard was further analyzed using Pearson correlation coefficients (r) and Bland–Altman analysis to assess systematic bias.
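As an illustration of the paired comparison, the sketch below computes a two-sided exact McNemar p-value from the two discordant counts. The text does not state whether the exact or the chi-square form was used, so the exact binomial variant is an assumption here.

```python
from math import comb

def mcnemar_exact(b, c):
    """Two-sided exact McNemar p-value.

    b: cases the AI classified correctly and the endoscopist incorrectly
    c: cases the endoscopist classified correctly and the AI incorrectly
    Under the null hypothesis, b follows Binomial(b + c, 0.5).
    """
    n = b + c
    if n == 0:
        return 1.0
    k = min(b, c)
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return min(1.0, 2 * tail)
```

Only the discordant pairs enter the test; videos on which both raters are right or both are wrong carry no information about the difference between them.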

All statistical tests were two-sided, and a p-value of <0.05 was considered statistically significant. Statistical analyses were performed using Python (scikit-learn library, version 1.0.2) and SPSS software (version 21.0, IBM Corp., Armonk, NY, USA).

5. Limitations and Future Directions

Despite the promising results, our study is not without limitations. First, as a retrospective single-center study, there is an inherent risk of selection bias. The training and validation datasets, while large, may not fully capture the visual diversity of bowel preparations encountered in different populations or with different endoscopic equipment manufacturers. Multi-center validation is the necessary next step to confirm the robustness of our algorithm across varying clinical settings.

Second, regarding the technical architecture, our current framework employs a hybrid CNN-HMM. While the HMM effectively leverages the fixed anatomical sequence to smooth predictions, we acknowledge that this approach may not capture long-range temporal dependencies as effectively as emerging deep learning architectures. As suggested by recent literature, Video Transformers represent a promising direction for future work, with their ability to model complex temporal dynamics via self-attention mechanisms potentially improving segmentation precision, particularly in procedures with erratic camera movements or loops.

Concurrently, the core cleanliness assessment is primarily based on frame-level feature extraction using 2D-CNNs. Although this approach achieves high accuracy by aggregating scores across segments, it may not fully capture complex spatiotemporal dynamics—such as the rapid movement of fluid or debris that an endoscopist dynamically visualizes. Future iterations will therefore aim to incorporate 3D-CNNs or Transformer-based architectures to better leverage spatiotemporal continuity, enabling a more robust, real-time dynamic assessment that mirrors the continuous cognitive process of a human expert.

Finally, while we have established that EndoClean correlates well with expert BBPS scoring, the ultimate litmus test for any bowel preparation assessment tool is its association with clinical outcomes, specifically the ADR. Future studies should investigate whether AI-derived BBPS scores are as predictive of ADR as human-derived scores. If the EndoClean system can reliably flag inadequate preparation that leads to missed lesions, its value shifts from a mere documentation tool to a critical quality assurance instrument that directly impacts patient safety.