1. Introduction

Brain tumors represent one of the most severe and life-threatening forms of cancer due to their aggressive growth, complex pathology, and disruption of critical neurological functions. Globally, they rank among the top ten causes of cancer-related mortality in both adults and children, contributing substantially to the overall disease burden. According to the World Health Organization (WHO), marked regional disparities exist in incidence and mortality rates, with Europe and North America experiencing the highest burden, while Asia and Africa report comparatively lower figures [1]. Nevertheless, across all regions, the persistent gap between incidence and mortality highlights the limited survival gains achieved even in well-resourced healthcare systems. Importantly, brain tumors disproportionately affect younger populations and are associated with one of the highest standardized mortality ratios (SMRs) among all cancers, often persisting decades after diagnosis [2]. These trends underscore the urgent need for improved diagnostic strategies, earlier intervention, and more equitable neuro-oncology care worldwide.

Brain tumors arise from abnormal and uncontrolled proliferation of cells within the brain and its surrounding structures, including the meninges, cranial nerves, and pituitary gland. They are broadly classified into primary tumors, originating within the brain, and secondary or metastatic tumors that spread from other organs. Among primary tumors, gliomas, meningiomas, and pituitary tumors are the most prevalent, with gliomas exhibiting particularly invasive and aggressive behavior [3]. Risk factors associated with brain tumor development include genetic predisposition, exposure to ionizing radiation, carcinogenic chemicals, viral infections, and inherited syndromes such as Li–Fraumeni and neurofibromatosis. Clinically, patients often present with non-specific neurological symptoms (such as headaches, seizures, visual disturbances, or cognitive impairment), which complicate early and accurate diagnosis [3]. As a result, reliable and efficient diagnostic tools are critical for improving clinical outcomes.

Medical imaging plays a central role in the diagnosis and management of brain tumors. Among available modalities, magnetic resonance imaging (MRI) offers superior soft-tissue contrast and detailed visualization of intracranial structures, making it the gold standard for tumor detection, localization, and treatment planning [4]. Despite its diagnostic value, manual interpretation of MRI scans remains time-intensive and subject to inter-observer variability. Subtle tumor boundaries, infiltrative growth patterns, and class-specific visual similarities may not always be readily apparent, potentially delaying treatment decisions. To mitigate these challenges, artificial intelligence (AI)-driven approaches, particularly deep learning, have gained increasing attention for automated tumor detection, classification, and segmentation. By learning discriminative features directly from imaging data, deep learning models have demonstrated notable improvements in diagnostic accuracy, reproducibility, and efficiency [5].

Among AI techniques, convolutional neural networks (CNNs) have emerged as the backbone of modern medical image analysis due to their ability to learn hierarchical spatial representations [6,7]. CNN-based models have been successfully applied to brain tumor classification, grading, and segmentation, often outperforming traditional machine-learning approaches reliant on hand-crafted features [8]. The adoption of transfer learning, in which CNNs pretrained on large-scale natural image datasets are fine-tuned on medical images, has further accelerated progress by reducing data requirements and training time while improving generalization [9]. However, conventional CNN architectures remain limited by their inherently local receptive fields, which restrict their ability to capture long-range contextual relationships, an important factor when distinguishing tumors with heterogeneous morphology or diffuse boundaries [10].

To address these limitations, recent studies have explored architectures inspired by Vision Transformers (ViTs), which leverage self-attention mechanisms to model global dependencies [11,12]. While ViTs have demonstrated promising results in medical imaging, they typically require large datasets and substantial computational resources, posing practical challenges for routine clinical use. This has motivated interest in hybrid and next-generation convolutional architectures that retain the efficiency of CNNs while incorporating design principles inspired by transformers.

Despite substantial progress in deep learning-based brain tumor classification, several gaps remain in the current literature. Many studies report strong performance on a single dataset but do not assess robustness under cross-dataset evaluation, limiting confidence in real-world generalization. Statistical validation of performance differences is often omitted, making it unclear whether reported gains are meaningful or incidental. Furthermore, computational efficiency and deployment feasibility are rarely analyzed, even though these factors strongly influence clinical adoption. Finally, interpretability is frequently addressed in isolation or not at all, leaving uncertainty about whether model decisions align with radiologically meaningful regions.

Within this context, the present study investigates ConvNeXt Base as a modernized convolutional architecture that combines the representational strength of transformer-inspired designs with the efficiency of classical CNNs. ConvNeXt modernizes the ResNet design by integrating large-kernel depthwise convolutions, inverted bottlenecks, and layer normalization. These design choices enable ConvNeXt to capture both local and global image features while preserving computational efficiency.
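As a rough illustration of why these design choices keep the block inexpensive, the following sketch counts the learnable parameters of one ConvNeXt-style block and compares them with a dense convolution of the same kernel size. It is a back-of-the-envelope calculation under stated assumptions, not the configuration used in this study: the 7 x 7 kernel, the expansion ratio of 4, the per-channel layer-scale vector, and the width of 128 (the first-stage width reported for the Base variant in the original ConvNeXt paper) are taken from the public ConvNeXt design, and the function names are hypothetical.

```python
def convnext_block_params(dim: int, kernel: int = 7, expansion: int = 4) -> dict:
    """Count learnable parameters in one ConvNeXt-style block of width `dim`.

    Assumed layout: depthwise k x k conv -> LayerNorm -> pointwise expand
    -> GELU -> pointwise project -> per-channel layer scale -> residual add.
    """
    hidden = expansion * dim
    return {
        # One k x k filter per channel, plus one bias per channel.
        "depthwise_conv": dim * kernel * kernel + dim,
        # LayerNorm scale and shift.
        "layer_norm": 2 * dim,
        # Inverted bottleneck: widen to `hidden` channels, then project back.
        "pointwise_expand": dim * hidden + hidden,
        "pointwise_project": hidden * dim + dim,
        # Per-channel scaling applied to the residual branch.
        "layer_scale": dim,
    }


def dense_conv_params(dim: int, kernel: int = 7) -> int:
    """Parameters of a standard (non-depthwise) k x k conv with `dim` in/out channels."""
    return dim * dim * kernel * kernel + dim


block = convnext_block_params(128)
block_total = sum(block.values())
dense_total = dense_conv_params(128)
print(block, block_total, dense_total)
```

Under these assumptions, the large-kernel depthwise layer accounts for only a small share of the block, and most parameters sit in the two pointwise layers of the inverted bottleneck.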

While ConvNeXt has shown strong performance in natural image benchmarks, its potential for brain tumor MRI classification remains underexplored. This work addresses that gap by investigating the effectiveness of the ConvNeXt Base architecture for multi-class brain tumor classification using MRI images across four tumor categories. The choice of ConvNeXt is motivated by its ability to combine transformer-inspired global contextual modeling with the robustness and efficiency of convolutional operations, making it well suited for complex medical imaging tasks. We rigorously evaluate its performance across multiple independent datasets, examine its generalization capability, and assess interpretability using post-hoc explainability techniques.

The scope of this work is the reliability and robustness of brain tumor classification systems; tumor segmentation, grading, and molecular characterization fall outside it. The potential of ConvNeXt in medical imaging remains relatively underexplored [13]. The novelty of this study lies not in proposing a new architecture, but in delivering a comprehensive and evidence-driven evaluation of ConvNeXt Base under clinically relevant conditions.

Specifically, we address the critical issue of external generalization by training and validating the model across three independent datasets, a protocol that remains uncommon in prior studies [14,15]. Furthermore, ConvNeXt Base is benchmarked against a diverse set of established transfer-learning architectures, including lightweight, scalable, residual, and classical deep CNNs, enabling a fair and holistic comparison that many existing works do not emphasize [16]. In addition, extensive ablation experiments are conducted to disentangle the contributions of data augmentation strategies, optimizer choices, and architectural parameters, thereby offering practical design insights for future medical imaging research [17]. Interpretability is enhanced through a dual explainability framework that integrates Grad-CAM++ and Gradient SHAP, a combination rarely explored together in brain tumor analysis. Finally, reproducibility and deployment feasibility are reinforced through rigorous statistical validation, resource-efficient augmentation, and efficiency profiling.

Although three-dimensional (3D) and multimodal MRI analyses can capture richer spatial and contextual information, this study adopts a two-dimensional (2D) slice-based formulation by design. This choice reflects practical constraints commonly encountered in clinical and public datasets, including heterogeneous acquisition protocols, inconsistent volumetric coverage, and limited availability of fully annotated 3D data. A 2D formulation enables uniform preprocessing across datasets and supports computationally efficient training and inference, which is essential for scalable clinical deployment. Moreover, prior studies have demonstrated that well-optimized 2D deep learning models can achieve diagnostic performance comparable to volumetric approaches for tumor classification tasks. Extensions to 3D and multimodal MRI inputs are therefore positioned as a logical continuation of this work rather than a prerequisite for its core objectives.

The main contributions of this study are summarized as follows:

Adaptation and optimization of ConvNeXt Base for medical imaging, demonstrating strong generalization without architectural over-complexity.

Comprehensive cross-dataset validation across three independent MRI datasets to assess robustness beyond single-domain evaluation.

Extensive benchmarking against modern and classical CNN architectures under identical experimental settings.

Detailed ablation studies examining the effects of transfer learning, attention mechanisms, data augmentation, and input resolution.

Rigorous statistical significance testing using Friedman, Holm, Wilcoxon, and Kendall’s W analyses with critical difference diagrams.

Computational efficiency profiling, including inference time, resource utilization, and power consumption.

Multi-perspective interpretability analysis using Grad-CAM++ and Gradient SHAP to confirm tumor-relevant decision-making.

Clinically oriented evaluation using sensitivity (recall), specificity, precision, F1-score, AUC, Kappa metrics, and confusion-matrix visualization.
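Most of the clinical metrics named in the last contribution can be derived from a single confusion matrix. The sketch below shows one way to compute per-class sensitivity, specificity, precision, and F1-score in a one-vs-rest fashion, together with Cohen's kappa. The 4 x 4 matrix is a made-up toy example (rows are true classes, columns are predicted classes), not a result from this study, and the function names are hypothetical.

```python
def per_class_metrics(cm):
    """One-vs-rest metrics for each class of a square confusion matrix.

    cm[i][j] is the number of samples of true class i predicted as class j.
    """
    n = len(cm)
    total = sum(sum(row) for row in cm)
    results = []
    for c in range(n):
        tp = cm[c][c]
        fn = sum(cm[c]) - tp                       # true class c, predicted otherwise
        fp = sum(cm[r][c] for r in range(n)) - tp  # predicted c, true class otherwise
        tn = total - tp - fn - fp
        sensitivity = tp / (tp + fn) if tp + fn else 0.0   # identical to recall
        specificity = tn / (tn + fp) if tn + fp else 0.0
        precision = tp / (tp + fp) if tp + fp else 0.0
        denom = precision + sensitivity
        f1 = 2 * precision * sensitivity / denom if denom else 0.0
        results.append({"sensitivity": sensitivity, "specificity": specificity,
                        "precision": precision, "f1": f1})
    return results


def cohen_kappa(cm):
    """Cohen's kappa: agreement between predictions and labels beyond chance."""
    n = len(cm)
    total = sum(sum(row) for row in cm)
    observed = sum(cm[i][i] for i in range(n)) / total
    row_sums = [sum(cm[i]) for i in range(n)]
    col_sums = [sum(cm[r][c] for r in range(n)) for c in range(n)]
    expected = sum(row_sums[i] * col_sums[i] for i in range(n)) / (total * total)
    return (observed - expected) / (1 - expected)


# Toy confusion matrix for four classes (illustrative values only).
toy_cm = [
    [9, 1, 0, 0],
    [1, 8, 1, 0],
    [0, 0, 10, 0],
    [0, 1, 0, 9],
]
metrics = per_class_metrics(toy_cm)
kappa = cohen_kappa(toy_cm)
print(metrics[0], kappa)
```

AUC is omitted here because it requires predicted class probabilities rather than hard labels.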

The remainder of this paper is organized as follows:

Section 2 reviews related work in deep learning-based brain tumor classification.

Section 3 describes the ConvNeXt Base architecture, experimental setup, datasets, preprocessing pipeline, and evaluation metrics.

Section 4 presents the results, including performance analysis, ablation studies, explainability, efficiency assessment, and statistical validation.

Section 5 discusses the findings and their clinical implications.

Section 6 outlines study limitations and future research directions, and

Section 7 concludes this paper.

2. Related Work

Prior studies on MRI-based brain tumor classification have explored a wide range of deep learning strategies, differing substantially in architectural design, evaluation rigor, and validation scope. Early works primarily relied on classical convolutional backbones trained via transfer learning, while more recent studies have introduced attention mechanisms, transformer variants, and hybrid CNN–transformer architectures. Despite reporting high classification accuracies, many of these studies are limited by single-dataset evaluation, incomplete metric reporting, or a lack of statistical validation and interpretability analysis. This section critically reviews representative approaches in the literature, focusing on how architectural choices, training strategies, validation protocols, and explainability practices influence reported performance and real-world applicability.

2.1. Traditional CNN-Based Approaches

CNNs have long been the cornerstone of automated brain tumor analysis, demonstrating high sensitivity and specificity in diagnostic tasks. For instance, Raza et al. [18] developed an automated CNN framework for early detection that integrated advanced preprocessing and achieved superior classification over conventional approaches. Similarly, Ahamed and Sadia [19] proposed multimodal MRI augmentation using residual learning, effectively addressing vanishing gradients and enhancing robustness. Stathopoulos et al. [20] benchmarked multiple MRI modalities and CNN backbones on 1646 slices, achieving up to 98.6% accuracy, while also providing modality-specific insights for clinical screening. These works underline the role of CNNs as foundational yet still limited in their capacity to capture long-range dependencies.

2.2. Transfer Learning and Pre-Trained CNNs

Given the persistent challenges of limited labeled data and heterogeneity across MRI acquisitions, transfer learning (fine-tuning ImageNet-pretrained backbones) has become the de facto strategy for brain tumor classification and related tasks. Several comparative studies confirm the utility of this approach: Aggarwal et al. [21] empirically showed that fine-tuning established backbones such as VGG16, ResNet50, and InceptionV3 yields consistent gains in both accuracy and training efficiency, while Pravitasari et al. [22] directly contrasted pre-training with random initialization and identified EfficientNet-B5 (with pretrained weights) as particularly effective for both binary and multi-class tumor problems. Building on these baseline findings, many works explored both architectural choices and hybridizations to squeeze extra performance: Nayak et al. [23] fused DenseNet and EfficientNet into a hybrid pipeline to capture complementary representations, and Mathivanan et al. [9] performed a head-to-head evaluation of ResNet152, VGG19, DenseNet169, and MobileNetV3 on a balanced corpus, reporting that MobileNetV3 offered the best accuracy–efficiency tradeoff in their setting.

Other studies targeted optimizer and training refinements: Polat & Güngen [24] found ResNet50 with the Adadelta optimizer effective on the Figshare MRI benchmark, and Mehnatkesh et al. [25] leveraged metaheuristic search to tune transfer-learning pipelines for improved generalization. Practical concerns of data scarcity and robustness also motivated non-standard pretraining and augmentation strategies: Ghassemi et al. [26] used a GAN discriminator as a pre-trained classifier and combined heavy augmentation with dropout to better resist overfitting, while Salama & Shokry [27] (and related synthetic-augmentation studies) reported that synthetic samples can help rebalance small or skewed MRI collections.

Several groups focused on architectural tweaks and attention mechanisms to localize tumor cues more effectively: Shaik & Cherukuri [28] proposed MANet, a multi-level attention model built on Xception that concentrates on salient tumor regions, Sharif et al. [29] applied DenseNet201 to classify four tumor types, and Sharma et al. [30] enhanced ResNet50 with extra layers and careful fine-tuning to boost multi-class discrimination. Complementary empirical studies, namely Alanazi et al. [31] on cross-device subclassification and Vimala et al. [32] fine-tuning EfficientNet variants with Grad-CAM visualizations, underscore that transfer learning is not only effective but can be made more interpretable and device-robust with suitable training and visualization choices.

Additional studies have extended transfer learning pipelines with large-scale datasets and multi-class frameworks: Zarina et al. [33] applied a hierarchical multiscale deformable attention module (MS-DAM) to 14 tumor types, achieving >96.5% accuracy, while Wong et al. [34] leveraged pretrained VGG16 with augmented datasets of 17,136 MRI images, reporting 99.24% accuracy and deploying a web-based diagnostic interface. Rasa et al. [35] compared six pretrained CNNs (VGG16, ResNet50, MobileNetV2, DenseNet201, EfficientNetB3, and InceptionV3) for both binary and multi-class tasks, achieving up to 99.96% accuracy with cross-validation and demonstrating the efficiency of optimized preprocessing and augmentation strategies. Finally, large-scale comparative and optimization efforts such as those by Prabha et al. [36], Korani et al. [37], and Kumar et al. [38] demonstrate that thoughtful pooling strategies, hybrid backbones, and streamlined networks (Inception/Xception variants) often close the performance gap to heavier architectures while reducing computation.

2.3. Transformers for Brain Tumor Classification

The application of transformers to brain tumor analysis represents a methodological shift from purely convolutional designs toward architectures capable of capturing long-range dependencies and global context. Early CNN-based approaches often struggled to model the complex heterogeneity of tumor regions, but transformer modules have mitigated these limitations through self-attention mechanisms.

Wang et al. [39] laid the foundation with TransBTS, which embedded a transformer within a 3D CNN framework. By converting volumetric CNN features into tokens, the network achieved global context modeling while maintaining local spatial fidelity, yielding performance on par with or better than state-of-the-art methods on BraTS 2019–2020. Building on this hybridization strategy, Jiang et al. [40] introduced SwinBTS, which employs a 3D Swin Transformer as both encoder and decoder within an encoder–decoder pipeline. This hierarchical design enables effective contextual extraction while convolutional blocks refine local details, leading to consistent improvements across BraTS 2019–2021 benchmarks. In parallel, Hatamizadeh et al. [10] presented Swin UNETR, reframing segmentation as a sequence-to-sequence prediction problem. By leveraging shifted-window attention in a hierarchical encoder and connecting features to a CNN-based decoder via skip connections, Swin UNETR achieved top performance in the BraTS 2021 challenge.

While hybrid models integrate transformers with CNNs, Vision Transformers (ViTs) attempt a more radical departure by treating medical images as sequences of patches, enabling end-to-end modeling of local–global interactions. Their patch-wise representation, combined with self-attention, has proven highly effective for both classification and segmentation.
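To make the patch-sequence view concrete, the short sketch below computes how many tokens a ViT sees for a given patch size and how many pairwise entries a single self-attention map then contains. The 224 x 224 input resolution is an assumption chosen for illustration (it is a common ViT setting, not one stated in the studies reviewed here), the class token is ignored, and the function name is hypothetical.

```python
def vit_sequence_stats(image_size: int, patch_size: int) -> dict:
    """Token count and attention-map size for a square image split into square patches."""
    if image_size % patch_size != 0:
        raise ValueError("image_size must be divisible by patch_size")
    per_side = image_size // patch_size
    tokens = per_side * per_side  # one token per non-overlapping patch
    return {
        "patches_per_side": per_side,
        "tokens": tokens,
        # Self-attention compares every token with every token.
        "attention_entries": tokens * tokens,
    }


stats_p16 = vit_sequence_stats(224, 16)
stats_p32 = vit_sequence_stats(224, 32)
print(stats_p16, stats_p32)
```

Halving the patch side quadruples the number of tokens and multiplies the attention map by sixteen, which is one reason ViTs tend to be more resource-intensive than convolutional models at fine patch granularity.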

Several studies highlight the robustness of ViTs for classification tasks. Tummala et al. [41] fine-tuned multiple ViT variants (B/16, B/32, L/16, L/32) on the Figshare dataset, reporting that model ensembles significantly improved performance, achieving 98.7% accuracy. Asiri et al. [42] conducted a comparative evaluation of five pre-trained ViTs (R50-ViT-l16, ViT-l16, ViT-l32, ViT-b16, ViT-b32), confirming their adaptability across configurations, while Asiri et al. [43] introduced FT-ViT, a refined framework that integrates patch processing and multi-stage fine-tuning, improving detection accuracy in CE-MRI scans. Similarly, Reddy et al. [44] benchmarked fine-tuned ViTs against CNN backbones such as ResNet-50 and EfficientNet-B0, showing that ViTs consistently surpassed CNNs in classification accuracy.

Efforts have also extended ViTs to segmentation. Qiu et al. [45] proposed MMMViT, a multiscale multimodal design that decouples intra- and inter-modal fusion, enhancing adaptability to tumors of varying sizes and achieving strong results on BraTS 2018. Zeng et al. [46] advanced this further with DBTrans, a dual-branch architecture combining shifted-window self-attention and shuffle cross-attention with channel-attention refinements, enabling robust feature capture in both encoder and decoder stages. More recently, Poornam and Angelina [47] introduced VITALT, a composite framework integrating ViT with a Split Bidirectional Feature Pyramid Network, linear transformation, and soft quantization modules, demonstrating enhanced feature representation and robustness.

2.4. ConvNeXt for Brain Tumor Classification

Recent work has highlighted the promise of ConvNeXt, a modern CNN architecture inspired by ViTs, for brain tumor classification and segmentation. These studies emphasize its ability to achieve state-of-the-art performance while addressing challenges such as data scarcity, feature representation, and computational efficiency.

Bhatti et al. [48] and Mehmood & Bajwa [13] independently proposed ConvNeXt-based pipelines for brain tumor grade classification using MRI data from the BraTS 2019 dataset. Both approaches extract discriminative features from pre-trained ConvNeXt models and employ fully connected neural networks for final classification. By leveraging transfer learning, they mitigated the overfitting issues common in medical imaging with limited data. Their models achieved an accuracy of 99.5%, particularly when multi-sequence MRI inputs were fused as three-channel images, demonstrating the potential of ConvNeXt for robust and non-invasive tumor grading.

Extending ConvNeXt with attention mechanisms, Fırat & Üzen [49] introduced a hybrid model that integrates a spatial attention mechanism (SAM) with ConvNeXt to classify glioma, meningioma, and pituitary tumors. The combination of the enhanced receptive field of ConvNeXt and the region-focused learning of SAM improved the model’s discriminative ability, yielding 99.39% and 98.86% accuracy on the BSF and Figshare datasets, respectively. These results underscore the advantages of selectively emphasizing informative regions when classifying complex tumor structures.

Further hybridization strategies have been explored to exploit complementary architectures. Panthakkan et al. [50] proposed a concatenated EfficientNet–ConvNeXt model, demonstrating superior accuracy, sensitivity, and specificity compared to standalone models. Their framework achieved 99% predictive accuracy across multiple tumor types, while also showing robustness to variations in tumor morphology and image quality, an essential factor for clinical applicability.

Other studies have compared ConvNeXt against a broad spectrum of deep architectures. Reyes & Sánchez [16] evaluated ConvNeXt alongside VGG, ResNet, MobileNet, and EfficientNet on two MRI datasets encompassing over 3000 images. Although ConvNeXt achieved competitive accuracy (up to 98.7%), it was slower to train and had the lowest image throughput (97.35 images/s), reflecting a trade-off between accuracy and computational efficiency. Notably, transfer learning combined with fine-tuning consistently outperformed training from scratch, reinforcing the importance of leveraging pre-trained weights in medical imaging tasks.

Beyond tumor phenotype classification, ConvNeXt has also been adapted to integrate radiogenomic analysis. Cui et al. [51] developed a pyramid channel attention-enhanced ConvNeXt for glioma gene classification (CDKN2A/B homozygous deletion). By combining group convolution with attention-based recalibration, their model achieved improved performance over baseline ConvNeXt, reporting 83.34% accuracy, 81.12% AUC, and 84.62% F1-score on multimodal data from TCIA and TCGA. This work demonstrates the adaptability of ConvNeXt to genomics-informed neuro-oncology, bridging imaging with molecular profiling.

2.5. XAI for Brain Tumor Classification

As deep learning models grow increasingly complex, explainable AI (XAI) has become indispensable for building trust in automated brain tumor diagnosis. Techniques such as Grad-CAM, SHAP, and LIME are now commonly integrated to expose model reasoning, highlight salient tumor regions, and align predictions with clinical expectations. These methods not only enhance transparency but also address the “black-box” nature of CNN- and transformer-based frameworks.

Several recent studies demonstrate how XAI augments tumor classification pipelines. Nahiduzzaman et al. [52] proposed a lightweight PDSCNN with hybrid RRELM for multiclass classification, where efficient preprocessing and feature extraction achieved superior accuracy over conventional approaches. Panigrahi et al. [53] advanced this direction with a DenseTransformer hybrid that combines DenseNet201 and attention mechanisms, while incorporating Grad-CAM and LIME to visualize model focus areas on the Br35H dataset. Similarly, Bhaskaran and Datta [54] employed transfer learning with InceptionV3, integrating Grad-CAM, LIME, and SHAP for post hoc analysis; their results suggested SHAP aligned most closely with clinical reasoning, explaining ~60% of cases versus <50% for other techniques.

Segmentation-oriented work also highlights the role of XAI. Abd-Elhafeez et al. [55] evaluated five deep models, showing EfficientNet-B7 achieved the best Dice score on TCGA-LGG, with Grad-CAM explanations clarifying region-level predictions. Ullah et al. [56] combined DeepLabv3+ segmentation with inverted residual bottleneck classifiers, using Bayesian optimization and LIME visualizations to achieve higher segmentation and classification accuracy.

Beyond conventional pipelines, interpretability has been extended to ensemble and customized CNN systems. Moodely et al. [57] compared ConvNet and SeparableConvNet on >7000 MRI scans, reporting 96.64% accuracy with LIME explanations of discriminative features. Singh et al. [58] introduced an ensemble of CNN, ResNet-50, and EfficientNet-B5 with adaptive weighting to handle class imbalance, integrating Grad-CAM, SHAP, SmoothGrad, and LIME for multi-faceted interpretability. Their framework achieved 99.4% accuracy with robust cross-dataset generalization, further incorporating entropy-based uncertainty analysis to flag ambiguous cases.

2.6. Observation and Research Scope

Early CNN-based approaches, such as VGG, ResNet, and custom ConvNets, laid the foundation for brain tumor detection and classification, offering strong baselines but suffering from limited receptive fields, overfitting on small datasets, and weak robustness to domain shifts. Transfer learning with pre-trained CNNs (e.g., EfficientNet, MobileNet, DenseNet) improved accuracy and efficiency, but most studies focused narrowly on backbone swapping without systematic ablations or external validations.

Transformer-based methods (e.g., TransBTS, SwinBTS, Swin UNETR) brought significant gains by modeling long-range dependencies and contextual features in volumetric MRI. Despite achieving state-of-the-art results, these models require heavy computational resources and remain largely benchmark-driven, with little focus on generalization across institutions. ConvNeXt, a modernized CNN inspired by vision transformers, has recently shown promising accuracy and compatibility with attention mechanisms. However, its application in brain tumor research is still nascent, with most studies limited to single datasets and lacking rigorous analysis of robustness, efficiency, or interpretability.

In parallel, XAI methods such as Grad-CAM, SHAP, and LIME are increasingly used to improve transparency, but most works employ them in isolation and only for qualitative visualization. Few attempt quantitative evaluation of explanation reliability, integration of complementary XAI techniques, or systematic error analysis. Additionally, across all categories, practical deployment considerations, such as inference time, memory efficiency, and reproducibility, are rarely addressed.

Despite rapid progress, the existing literature reveals clear gaps. Most studies report strong within-dataset accuracy but neglect external cross-dataset generalization, leaving real-world robustness uncertain. Component-level contributions remain underexplored due to limited ablation studies, and explainability efforts are shallow, relying on single methods without deeper validation. The potential of ConvNeXt in brain tumor analysis is promising but underexamined, with little evidence of its performance under domain shift or its integration with advanced XAI. Furthermore, statistical rigor, reproducibility, and deployment-focused evaluations are often overlooked.

To address these shortcomings, our study conducts a systematic, cross-dataset evaluation of ConvNeXt Base, supported by exhaustive ablations, dual XAI integration (Grad-CAM++ and SHAP), and statistical validation across multiple runs. We further analyze model efficiency, scalability, and deployment feasibility, providing one of the first comprehensive and reproducible assessments of ConvNeXt for brain tumor classification.

ConvNeXt introduced a modernized ConvNet design that borrows effective training and architectural practices from Vision Transformers while retaining the efficiency of convolutions [59]. The ConvNeXt family demonstrated strong ImageNet results and competitive performance on downstream tasks, making it a compelling backbone for transfer learning in medical imaging.

Several recent studies have applied modern backbones and hybrid architectures to brain-MRI classification and detection. Mehmood et al. applied ConvNeXt variants to brain tumor grade classification on BraTS data, reporting strong performance but restricted to a single dataset and without extensive ablation or multi-method XAI analysis. Other works have explored hybrid ViT+RNN pipelines and EfficientNet-family models for classification, with varying degrees of interpretability and dataset scope. These studies show the promise of modern backbones but typically do not test cross-dataset generalization rigorously [13,33].

Explainability has become an essential complement to performance reporting. Grad-CAM++ (an improved gradient-based saliency method) and SHAP (Shapley-based feature attributions) are two well-established techniques widely used to visualize and explain CNN decisions. While many neuroimaging studies include Grad-CAM visualizations, only a few combine multiple complementary explainability methods to strengthen clinical interpretability. Our work integrates Grad-CAM++ and SHAP to provide both class-discriminative localization and pixel-level attribution [60,61].
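The idea behind gradient-based Shapley attribution can be sketched on a toy model. The example below approximates attributions by averaging (input minus baseline) times the gradient at random points on the straight line between a baseline and the input, which is the expected-gradients estimator that Gradient SHAP builds on. It is a simplified illustration rather than the pipeline used in this study: the linear model, its weights, the all-zero baseline, and all function names are hypothetical, and the input noise that Gradient SHAP implementations typically add is omitted.

```python
import random


def expected_gradients(grad_fn, x, baselines, n_samples=200, seed=0):
    """Monte Carlo estimate of per-feature attributions.

    grad_fn(point) must return the gradient of the model output with respect
    to each input feature, evaluated at `point`.
    """
    rng = random.Random(seed)
    attributions = [0.0] * len(x)
    for _ in range(n_samples):
        baseline = rng.choice(baselines)   # sample a reference input
        alpha = rng.random()               # sample a position on the path
        point = [b + alpha * (xi - b) for xi, b in zip(x, baseline)]
        grad = grad_fn(point)
        for i in range(len(x)):
            attributions[i] += (x[i] - baseline[i]) * grad[i]
    return [a / n_samples for a in attributions]


# Hypothetical toy model: f(x) = w . x, whose gradient is w everywhere.
weights = [2.0, -1.0, 0.5]


def model(x):
    return sum(w * xi for w, xi in zip(weights, x))


def model_grad(_point):
    return list(weights)


x_input = [1.0, 3.0, 4.0]
zero_baseline = [[0.0, 0.0, 0.0]]
attr = expected_gradients(model_grad, x_input, zero_baseline)
print(attr, sum(attr), model(x_input) - model(zero_baseline[0]))
```

For this linear case the attributions sum exactly to the difference between the model output at the input and at the baseline, the completeness property that makes such attributions interpretable as per-pixel contributions when applied to images.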

Public benchmarks and multi-institutional datasets such as the BraTS series remain central to method comparison and reproducibility in the field [62,63]. Much prior work reports high performance on a single benchmark or mixed internal datasets; only a small subset attempts explicit cross-dataset validation. This gap motivates external generalization studies that evaluate a model’s robustness on entirely independent test sets, an objective we pursue here.

None of the papers above perform strong external dataset evaluation in the sense of training on one dataset and testing on fully independent, unseen datasets from different sources. For example, Mehmood et al. [13] used only BraTS 2019, and Ahmed et al. [64] combined their own dataset with a Kaggle subset but did not run full external domain-shift tests. This gap motivates our use of three independent test datasets.

The depth of explainability analysis varies. Ahmed et al. [64] integrate several methods (attention maps, SHAP, LIME), which is more than many studies; however, most papers still rely on a single XAI method or none. Combining Grad-CAM++ with SHAP remains rare in the published literature.

Few papers deeply examine how architectural or training components (augmentation strategy, kernel sizes, normalization, etc.) affect performance. Nassar et al. [65] compared pre-trained models and included dropout and GAP layers, but the comparison is not exhaustive. Our exhaustive ablation across these dimensions distinguishes our methodology.

Among the ConvNeXt studies reviewed above, Mehmood et al. [13] is the most directly comparable to this work. They show strong performance on tumor grading but do not test across datasets, do not deeply ablate components, and do not provide a dual XAI framework. This positions our study as the first systematic evaluation of ConvNeXt Base for multi-class tumor classification with generalization, interpretability, and reproducibility.

4. Results and Performance Analysis

This section presents a comprehensive evaluation of the proposed ConvNeXt Base model for multi-class brain tumor classification using MRI images. The model is benchmarked against four established deep learning architectures—ResNet152, EfficientNetV2-B0, VGG19, and MobileNetV3Large—across three independent test datasets (D2, D3, and D4). The analysis encompasses quantitative performance comparison, class-wise reliability, learning behavior, diagnostic sensitivity, and computational efficiency.

Beyond standard performance evaluation, the section includes an ablation study to analyze the contribution of architectural and training components, followed by explainability assessments using Grad-CAM++ and Gradient SHAP to interpret model decisions. Statistical validation is conducted using Friedman’s aligned ranks test, Holm’s and Wilcoxon post hoc analyses, and Kendall’s W, supported by critical difference diagrams and TOPSIS-based multi-criteria ranking. Finally, the performance of ConvNeXt Base is contrasted with recent state-of-the-art methods to contextualize its effectiveness, generalization, and clinical applicability.

4.1. Classification Results and Assessment

To establish a baseline comparison, the overall classification performance of ConvNeXt Base was evaluated against four widely adopted CNN architectures across the three test datasets. This comparison provides a foundational understanding of how the proposed model performs relative to existing approaches under identical experimental conditions.

The selected baseline models represent distinct design philosophies within CNN development. MobileNetV3Large reflects lightweight and resource-efficient architectures designed for deployment in constrained environments. EfficientNetV2-B0 exemplifies compound scaling strategies that balance accuracy and efficiency. ResNet152 represents deep residual learning, a long-standing benchmark in medical image analysis, while VGG19 serves as a classical deep CNN that, despite its computational cost, remains a reference point in many imaging studies. By including these architectures, the evaluation spans multiple generations of CNN design, ensuring that the performance of ConvNeXt Base is assessed against both modern and traditional baselines.

Across all three datasets, ConvNeXt Base consistently achieved the highest classification performance among the five compared models, with near-perfect scores for all four classes (Glioma, Meningioma, Pituitary, and No Tumor), as summarized in Table 6. Importantly, this superiority was not limited to aggregate accuracy but extended to balanced class-wise precision, recall, and F1-scores.

On dataset D2, ConvNeXt Base delivered precision, recall, and F1-scores exceeding 99.6% across all classes, with perfect classification achieved for Glioma and No Tumor cases (F1 = 1.0). The model attained an overall accuracy, precision, recall, and F1-score of 99.83%, with an AUC of 1.0 and a Cohen’s kappa of 0.9977, indicating near-perfect agreement beyond chance. EfficientNetV2-B0 ranked second on this dataset, achieving 99.24% accuracy and a kappa of 0.9887. In contrast, ResNet152, MobileNetV3Large, and VGG19 exhibited slightly lower performance, with minor reductions in recall and F1-scores, particularly for Meningioma and Pituitary classes.

A similar performance pattern was observed on dataset D3. ConvNeXt Base again achieved near-perfect metrics across all evaluation criteria, recording an accuracy of 99.69%, precision of 99.67%, recall of 99.69%, and an F1-score of 99.68%, along with an AUC of 0.9998 and a kappa of 0.9959. Notably, the model maintained well-balanced precision and recall across all classes, including No Tumor and Pituitary, where competing models often showed modest sensitivity drops. EfficientNetV2-B0 and MobileNetV3Large performed competitively, achieving mean accuracies in the range of 98.6–98.7%, but with slightly reduced recall and kappa values. VGG19 and ResNet152 demonstrated consistent, albeit smaller, performance deficits, primarily driven by reduced recall for Meningioma.

On dataset D4, ConvNeXt Base achieved the highest overall performance, with a mean accuracy of 99.86% and nearly identical precision and recall values. All four class-wise F1-scores ranged between 99.75% and 100%, including perfect classification of Pituitary and Glioma cases. While EfficientNetV2-B0 and MobileNetV3Large also exhibited strong performance on this dataset, their average scores remained marginally lower. ResNet152 showed a more pronounced decline, with an accuracy of 97.76% and a kappa of 0.9477, primarily due to reduced sensitivity for Glioma and Meningioma.

When performance was averaged across all classes within each dataset (Table 7), ConvNeXt Base consistently outperformed the comparison models, achieving the highest accuracy, precision, recall, and F1-scores on D2 (99.84%), D3 (99.68%), and D4 (99.86%). In contrast, the remaining architectures generally achieved average scores within the 98–99% range. This consistent margin, although numerically small, reflects a meaningful improvement in diagnostic reliability, particularly in reducing class-specific errors that are clinically consequential.

4.2. Confusion Matrix Analysis

While aggregate metrics summarize overall performance, confusion matrices provide a class-level view of prediction behavior. Figure 3 presents the confusion matrices for ConvNeXt Base on the three test datasets (D2, D3, and D4), allowing examination of correct classifications and residual errors across tumor categories.

Across all datasets, the confusion matrices exhibit strong diagonal dominance, indicating close agreement between predicted and true labels. Off-diagonal entries are sparse and isolated, suggesting that misclassifications are infrequent and not systematic.

For the D2 dataset, the confusion matrix shows near-complete correspondence between ground truth and predicted classes. Glioma and No Tumor cases are classified without error, while Meningioma and Pituitary categories exhibit only one or two isolated misclassifications. These errors are consistent with the reported accuracy of approximately 99.8%. No confusion is observed between tumor and non-tumor classes; when misclassifications occur, they are limited to anatomically or radiologically similar tumor types. The D3 dataset follows a comparable pattern. Most samples are correctly classified along the diagonal, with perfect or near-perfect recognition of the No Tumor and Pituitary classes. A minimal number of samples—typically one or two—are interchanged between Meningioma and Glioma. The distribution of correct predictions remains balanced across classes, indicating no observable class-specific bias. For the D4 dataset, the confusion matrix shows a cleaner structure. Glioma and Pituitary samples are classified without error, while Meningioma and No Tumor show only negligible misassignments. These observations are consistent with the highest quantitative performance reported for this dataset, including an accuracy of approximately 99.86% and an F1-score close to 99.87%. The limited misclassifications are not concentrated in any specific class pair.

Across all three datasets, the confusion matrices show consistent class separation and limited cross-class confusion. The observed patterns indicate stable decision boundaries across tumor categories and datasets, with misclassifications occurring infrequently and without systematic trends.
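As a minimal sketch of how the aggregate metrics relate to a confusion matrix, the following Python function derives accuracy, class-wise precision, recall, and F1-score, and Cohen's kappa from raw counts. The matrix values and the class ordering below are hypothetical and are not taken from Figure 3; the function name `confusion_metrics` is our own.

```python
def confusion_metrics(cm):
    """Return accuracy, per-class (precision, recall, F1), and Cohen's kappa.

    cm[i][j] is the number of samples with true class i predicted as class j.
    """
    k = len(cm)
    total = sum(sum(row) for row in cm)
    row_sums = [sum(cm[i]) for i in range(k)]                       # true counts
    col_sums = [sum(cm[i][j] for i in range(k)) for j in range(k)]  # predicted counts

    accuracy = sum(cm[i][i] for i in range(k)) / total

    per_class = []
    for c in range(k):
        tp = cm[c][c]
        precision = tp / col_sums[c] if col_sums[c] else 0.0
        recall = tp / row_sums[c] if row_sums[c] else 0.0
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        per_class.append((precision, recall, f1))

    # Agreement expected by chance, from the marginal distributions
    p_e = sum(row_sums[c] * col_sums[c] for c in range(k)) / total ** 2
    kappa = (accuracy - p_e) / (1 - p_e) if p_e < 1 else 1.0
    return accuracy, per_class, kappa


# Hypothetical counts; assumed order: Glioma, Meningioma, No Tumor, Pituitary
cm = [
    [300, 0, 0, 0],
    [1, 304, 0, 1],
    [0, 0, 405, 0],
    [0, 0, 0, 300],
]
accuracy, per_class, kappa = confusion_metrics(cm)
```

Because kappa subtracts the agreement expected from the class marginals, it sits slightly below accuracy when classes are roughly balanced, which matches the relationship between the accuracy and kappa values reported above.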

4.3. Accuracy and Loss of ConvNeXt Base

While the confusion matrices demonstrate the classification reliability of ConvNeXt Base across individual tumor classes, it is equally important to examine how the model’s learning behavior evolved during training. This subsection therefore presents the training and validation accuracy and loss trends over successive epochs, providing insight into the model’s convergence stability, generalization behavior, and overall learning efficiency.

The training and validation curves of ConvNeXt Base over 20 epochs, as shown in Figure 4, illustrate a stable and consistent learning process. The training accuracy increases sharply during the initial epochs and plateaus near 99.8% by around the 10th epoch, maintaining a steady trajectory through the remaining epochs. The validation accuracy follows a closely aligned trend, rising rapidly in the early phase and stabilizing around 99.7–99.8% by the final epochs. The near overlap between the training and validation accuracy curves indicates that the model generalizes well to unseen data without significant overfitting.

Similarly, the training loss decreases sharply during the first few epochs and gradually converges to a minimal value close to 0.002–0.003 by the 20th epoch. The validation loss shows a nearly identical downward pattern, stabilizing around the same range with only minimal fluctuation after the mid-training phase. The close alignment between training and validation losses further supports convergence stability and strong generalization capability.

4.4. ROC Characteristics of ConvNeXt Base

The training behavior observed in the previous subsection motivates an examination of ConvNeXt Base under varying decision thresholds. To this end, Receiver Operating Characteristic (ROC) curves are used to assess class-wise sensitivity and specificity in a threshold-independent manner across datasets D2, D3, and D4.

As shown in Figure 5, the ROC curves for ConvNeXt Base exhibit consistently strong class separability for all four categories: Glioma, Meningioma, Pituitary, and No Tumor. Across datasets, the curves closely track the upper-left region of the ROC space, indicating high true positive rates at very low false positive rates. The corresponding AUC values are saturated, reaching 1.000 for D2 and D4, and 0.9998 for D3, in agreement with the aggregate performance metrics.

For the D2 dataset, the class-specific ROC curves overlap almost entirely, indicating uniform discrimination between tumor and non-tumor categories. The curves rise sharply toward the maximum true positive rate at negligible false positive rates, reflecting minimal ambiguity in class separation. On D3, the ROC profiles remain steep and tightly clustered near unity. A slightly smoother transition is observed for the Meningioma class, consistent with the marginal reduction in recall reported earlier; however, AUC values remain above 0.999 for all classes. For the D4 dataset, the ROC curves show an immediate ascent to the maximum true positive rate followed by a flat plateau across all classes, yielding AUC values of 1.0 and indicating complete separability within this dataset.

Across all three datasets, the ROC curves show limited variation between classes and no evidence of systematic divergence. The observed AUC values and curve shapes indicate consistent discrimination performance across tumor types and confirm that classification behavior remains stable under threshold variation.
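To illustrate what a class-wise AUC measures, the sketch below computes a one-vs-rest AUC in plain Python using the rank-sum (Mann-Whitney) identity: the AUC equals the probability that a randomly chosen positive sample receives a higher score than a randomly chosen negative one. The function names and the probability values are hypothetical; the section does not state which library the authors used.

```python
def binary_auc(scores, labels):
    """AUC from the rank-sum identity; tied scores share their average rank."""
    order = sorted(range(len(scores)), key=lambda i: scores[i])
    ranks = [0.0] * len(scores)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and scores[order[j + 1]] == scores[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1  # 1-based average rank of the tie group
        for t in range(i, j + 1):
            ranks[order[t]] = avg_rank
        i = j + 1
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    rank_sum_pos = sum(r for r, y in zip(ranks, labels) if y == 1)
    # Undefined (division by zero) if a class has no positives or no negatives
    return (rank_sum_pos - n_pos * (n_pos + 1) / 2) / (n_pos * n_neg)


def one_vs_rest_auc(probs, y_true, n_classes):
    """Per-class AUC: class c is treated as positive, all others as negative."""
    return [
        binary_auc([p[c] for p in probs], [1 if y == c else 0 for y in y_true])
        for c in range(n_classes)
    ]


# Hypothetical softmax outputs for three samples and three classes
probs = [[0.90, 0.05, 0.05], [0.10, 0.80, 0.10], [0.20, 0.20, 0.60]]
y_true = [0, 1, 2]
aucs = one_vs_rest_auc(probs, y_true, n_classes=3)
```

An AUC of 1.0 for a class therefore means that every sample of that class is scored above every sample of the other classes, which is the sense in which the curves for D2 and D4 indicate complete separability.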

4.5. Computation Efficiency Comparison

Beyond classification accuracy, computational efficiency is a critical determinant of a model’s suitability for real-world clinical deployment. Following the confirmation of ConvNeXt Base’s diagnostic reliability, this subsection examines its computational efficiency in terms of inference speed, model size, memory usage, and power consumption, in comparison with the baseline architectures. The reported efficiency metrics are averaged across all three datasets and summarized in Table 8.

Among the evaluated models, ConvNeXt Base exhibits the most favorable overall efficiency profile. It achieves the highest inference speed of 370.88 frames per second (FPS), outperforming MobileNetV3Large (352.62 FPS), EfficientNetV2-B0 (353.94 FPS), ResNet152 (358.71 FPS), and VGG19 (362.73 FPS). This high throughput indicates that ConvNeXt Base can support real-time or high-volume inference scenarios without sacrificing diagnostic accuracy.

Despite its relatively deep architecture, ConvNeXt Base maintains moderate GPU memory requirements, allocating 1703.67 MB and reserving 9684 MB during inference. These values are substantially lower than those observed for VGG19, which requires 4476 MB of allocated memory and reserves nearly 19.8 GB, as well as ResNet152, which allocates 2956 MB and reserves approximately 16.3 GB. This balance between architectural depth and memory efficiency highlights the practical design of ConvNeXt Base.

In terms of model size, ConvNeXt Base occupies 334.18 MB, making it larger than lightweight architectures such as MobileNetV3Large (16.25 MB) and EfficientNetV2-B0 (22.75 MB), but considerably smaller than VGG19 (532.49 MB). Notably, ConvNeXt Base demonstrates the lowest CPU memory usage among all models at 2635.61 MB, compared to more than 3700 MB consumed by the other architectures. Power consumption remains moderate at 59.13 W, closely matching ResNet152 (59.12 W) and significantly lower than VGG19’s 72.31 W.

The efficiency measurements show that ConvNeXt Base achieves higher inference speed than the comparison models while maintaining moderate GPU and CPU memory usage and power consumption. Compared with deeper architectures such as VGG19 and ResNet152, it requires substantially less memory, and compared with lightweight models, it avoids the corresponding reductions in predictive capacity. These observations indicate that the computational cost of ConvNeXt Base remains proportionate to its architectural depth and observed performance.
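The measurement protocol behind the FPS figures is not described in this section. As a hedged illustration only, the sketch below assumes throughput is defined as images processed per second of wall-clock time after a short warm-up. The `predict` callable, the batch, and the default run counts are hypothetical placeholders rather than the authors' setup.

```python
import time


def measure_fps(predict, batch, batch_size, n_warmup=5, n_runs=20):
    """Return images per second for repeated calls of predict(batch)."""
    # Warm-up calls are excluded from timing (caches, lazy initialization)
    for _ in range(n_warmup):
        predict(batch)
    start = time.perf_counter()
    for _ in range(n_runs):
        predict(batch)
    elapsed = time.perf_counter() - start
    return (n_runs * batch_size) / elapsed


# Hypothetical stand-in for a model: sums each "image" in the batch
dummy_batch = [[0.0] * 16 for _ in range(8)]
fps = measure_fps(lambda b: [sum(x) for x in b], dummy_batch, batch_size=8)
```

On a GPU, kernels are launched asynchronously, so a real measurement would also need to synchronize the device before reading the timer (for example with `torch.cuda.synchronize()` in PyTorch); otherwise the reported throughput can be overstated.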

4.6. Ablation Study

Having established both the performance and efficiency of ConvNeXt Base, it is crucial to analyze which architectural or training components contribute most to its success. An ablation study was conducted to systematically evaluate the effects of removing or altering specific elements, to quantify their individual impact on performance across three datasets. Two independent experimental instances were performed, each producing a full set of results as shown in Table 9 and Table 10. This dual evaluation helps ensure that the observations are consistent and not artifacts of a single training run. Both tables include the same test conditions for comparison with the baseline model:

Table 9.

Ablation study of ConvNeXt Base under architectural and training variations (Instance 1) across D2, D3, and D4.


| Configuration | Precision D2 | Precision D3 | Precision D4 | Recall D2 | Recall D3 | Recall D4 | F1-Score D2 | F1-Score D3 | F1-Score D4 | Accuracy D2 | Accuracy D3 | Accuracy D4 | AUC D2 | AUC D3 | AUC D4 | Kappa D2 | Kappa D3 | Kappa D4 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Baseline model | 99.84 | 99.67 | 99.87 | 99.84 | 99.69 | 99.86 | 99.84 | 99.68 | 99.87 | 99.83 | 99.69 | 99.86 | 100 | 99.98 | 100 | 99.77 | 99.59 | 99.91 |
| Without data augmentation | 99.19 | 99.03 | 99.73 | 99.14 | 99.02 | 99.71 | 99.25 | 99.02 | 99.71 | 99.15 | 99.08 | 99.72 | 99.99 | 99.98 | 100 | 98.87 | 98.77 | 99.63 |
| Removing MHCA | 99.83 | 99.07 | 99.44 | 99.83 | 99.03 | 99.46 | 99.83 | 99.05 | 99.45 | 99.83 | 99.08 | 99.44 | 100 | 99.98 | 99.98 | 99.77 | 98.77 | 98.77 |
| No transfer learning | 79.19 | 74.84 | 79.01 | 77.67 | 74.08 | 78.37 | 77.29 | 73.99 | 78.16 | 77.33 | 74.52 | 78.46 | 94.07 | 92.65 | 94.71 | 69.83 | 65.83 | 71.29 |
| Changing input size | 99.66 | 98.87 | 99.64 | 99.66 | 98.87 | 99.66 | 99.66 | 99.66 | 99.65 | 99.66 | 98.93 | 99.65 | 100 | 98.85 | 99.97 | 99.55 | 98.57 | 99.53 |

Table 10.

Ablation study of ConvNeXt Base under architectural and training variations (Instance 2) across D2, D3, and D4.


| Configuration | Precision D2 | Precision D3 | Precision D4 | Recall D2 | Recall D3 | Recall D4 | F1-Score D2 | F1-Score D3 | F1-Score D4 | Accuracy D2 | Accuracy D3 | Accuracy D4 | AUC D2 | AUC D3 | AUC D4 | Kappa D2 | Kappa D3 | Kappa D4 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Baseline model | 99.84 | 99.67 | 99.87 | 99.83 | 99.69 | 99.86 | 99.83 | 99.68 | 99.86 | 99.83 | 99.69 | 99.86 | 100 | 99.98 | 100 | 99.77 | 99.59 | 99.81 |
| Without data augmentation | 98.03 | 98.08 | 98.92 | 97.79 | 97.94 | 98.87 | 97.85 | 97.96 | 98.88 | 97.80 | 98.09 | 98.88 | 99.99 | 99.92 | 99.95 | 97.07 | 97.44 | 98.51 |
| Removing MHCA | 99.32 | 98.74 | 99.52 | 99.32 | 98.61 | 99.51 | 99.32 | 99.67 | 99.51 | 99.32 | 98.70 | 99.51 | 100 | 99.92 | 99.93 | 99.10 | 98.26 | 99.35 |
| No transfer learning | 80.01 | 74.22 | 77.85 | 72.41 | 71.23 | 78.37 | 73.05 | 71.63 | 75.91 | 72.25 | 71.85 | 75.80 | 92.90 | 91.32 | 93.93 | 62.95 | 62.26 | 67.74 |
| Changing input size | 98.19 | 98.95 | 99.43 | 98.18 | 98.94 | 99.44 | 98.14 | 98.93 | 99.43 | 98.14 | 99.01 | 99.44 | 100 | 99.91 | 99.98 | 97.52 | 98.67 | 99.25 |

The ablation study was designed to isolate the contribution of key architectural and training components that are known to influence robustness and generalization in medical image classification. Data augmentation was ablated to assess the model’s dependence on artificial variability and to examine whether performance gains stem primarily from exposure to augmented samples or from intrinsic representational strength. MHCA was removed to evaluate its role in refining inter-channel feature interactions beyond the baseline ConvNeXt architecture. Transfer learning was excluded to quantify the extent to which pretrained representations contribute to convergence stability and performance under limited-data conditions typical of medical imaging. Finally, input size was varied to examine scale sensitivity and spatial robustness, which is clinically relevant given the variability in MRI acquisition protocols, resolution, and scanner configurations across institutions. Together, these ablations provide a controlled assessment of whether ConvNeXt Base relies on narrowly tuned design choices or maintains stable performance under realistic variations in training configuration and input characteristics.

The baseline model (the original ConvNeXt Base configuration with transfer learning, MHCA, and full data augmentation) retains the highest and most consistent performance across all metrics. For the first experimental instance, the baseline achieved precision, recall, F1-score, and accuracy of approximately 99.7–99.9% across all three datasets, with AUC values at or near 1.0 and Kappa values between 0.996 and 0.999. The second instance produced almost identical results, showing the model’s reproducibility and stability under repeated training conditions.

Removing data augmentation resulted in a small but consistent drop across all metrics. Accuracy fell to approximately 99.1–99.7% in the first instance and 97.8–98.9% in the second, reflecting a mild decline in generalization capability that is most visible on D2 and D3. Eliminating MHCA produced a similar slight degradation: accuracy ranged from 99.1% to 99.8% in the first run and from 98.7% to 99.5% in the second. This indicates that while MHCA improves fine-grained feature interactions, its absence does not drastically affect the model’s robustness.

By contrast, the “No transfer learning” configuration caused a substantial drop in performance across all datasets. Accuracy dropped to roughly 72–78% and AUC values fell to roughly 91–95% across the two instances, confirming that pretrained weights are critical for achieving high classification performance on limited medical data. Finally, changing the input size resulted in only minor variation from the baseline, with accuracy between 98.9% and 99.7% in the first instance and between 98.1% and 99.4% in the second. This suggests that ConvNeXt Base adapts well to modest changes in input resolution.

Across both experiments, the baseline model consistently outperformed all modified configurations. However, the close results across most ablation cases (except when transfer learning was removed) underscore the inherent stability and adaptability of the ConvNeXt Base architecture.
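The size of each ablation effect can be read off as a difference from the baseline. The short sketch below does this arithmetic for the accuracy columns of Table 9 (Instance 1); the numbers are copied from the table, while the variable names are our own.

```python
# Accuracy (%) on D2, D3, D4 from Table 9 (Instance 1)
baseline = [99.83, 99.69, 99.86]
ablations = {
    "Without data augmentation": [99.15, 99.08, 99.72],
    "Removing MHCA": [99.83, 99.08, 99.44],
    "No transfer learning": [77.33, 74.52, 78.46],
    "Changing input size": [99.66, 98.93, 99.65],
}

# Change in percentage points relative to the baseline, per dataset
deltas = {
    name: [round(a - b, 2) for a, b in zip(values, baseline)]
    for name, values in ablations.items()
}

for name, change in deltas.items():
    print(f"{name:28s} D2 {change[0]:+.2f}  D3 {change[1]:+.2f}  D4 {change[2]:+.2f}")
```

In this instance, removing transfer learning costs between 21.40 and 25.17 percentage points of accuracy, whereas every other ablation stays within 0.76 points of the baseline.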

4.7. Explainability Analysis Using Grad-CAM++ and Gradient SHAP

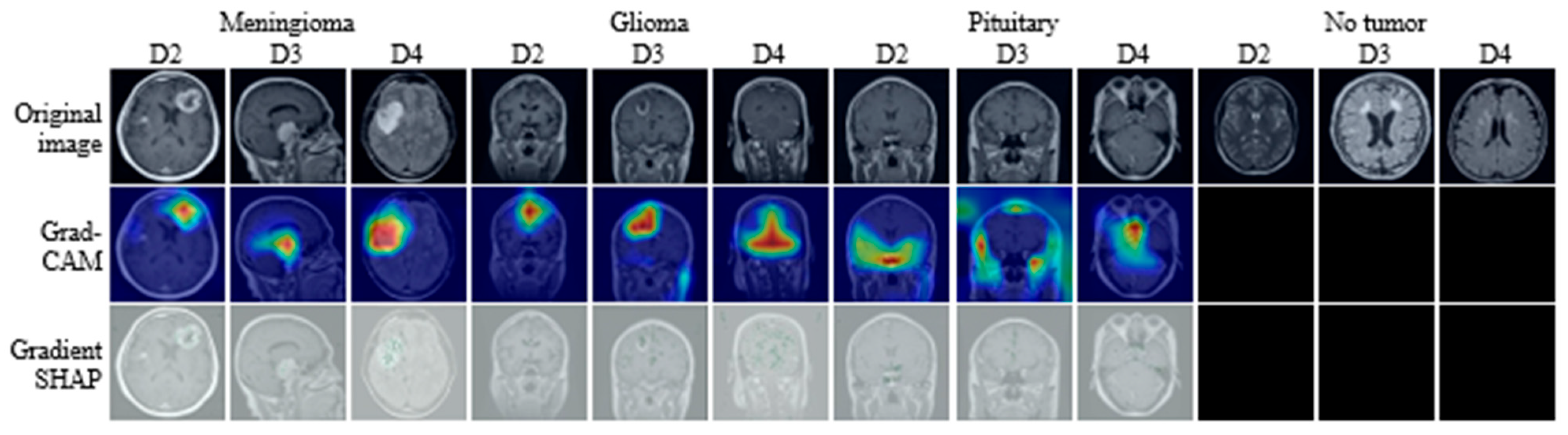

To examine the interpretability of ConvNeXt Base predictions, visual explanations were generated using Grad-CAM++ and Gradient SHAP for representative samples from each dataset, as shown in Figure 6. For illustration, representative examples are included for each tumor class (Glioma, Meningioma, Pituitary, and No Tumor) from all three datasets, with each case presented as a triplet consisting of the original MRI slice, the corresponding Grad-CAM++ map, and the Gradient SHAP attribution. This structured presentation enables systematic inspection of model attention across tumor types, datasets, and explanation methods. The examples shown are selected to be representative rather than exhaustive, with the goal of illustrating consistent interpretability behavior rather than individual outliers.

Grad-CAM++ visualizations produce spatially localized heatmaps that emphasize regions contributing most strongly to the predicted class. Across datasets, these heatmaps consistently concentrate activation within anatomically plausible tumor regions while suppressing surrounding normal tissue. For instance, in Meningioma cases in D2 and D4, activation is focused along lesion margins adjacent to the meninges, reflecting the characteristic extra-axial growth pattern. Similarly, in Glioma examples from D3, Grad-CAM++ highlights irregular, infiltrative regions within the brain parenchyma, aligning with known radiological features of gliomas. Pituitary samples show concentrated activation within the sellar region, while No Tumor cases exhibit diffuse low-intensity responses without focal hotspots, indicating the absence of spurious attention.

In contrast, Gradient SHAP visualizations exhibit smoother and more spatially distributed attribution patterns. Rather than sharply delineated boundaries, these maps assign relevance across broader regions encompassing both the lesion core and adjacent tissue. For tumor-bearing images, Gradient SHAP highlights not only the lesion itself but also peripheral regions that may encode contextual cues such as edema, intensity gradients, or structural displacement. In No Tumor examples, attribution remains weak and spatially diffuse, suggesting that predictions are not driven by localized artifacts or non-anatomical cues.

Across all datasets and tumor categories, the two explainability methods demonstrate distinct but complementary characteristics. Grad-CAM++ consistently emphasizes compact, class-discriminative regions, whereas Gradient SHAP provides a more global attribution reflecting cumulative pixel-level influence. The consistency of these patterns across datasets and classes indicates that ConvNeXt Base relies on anatomically meaningful regions for classification while also incorporating contextual information from surrounding tissue. This coherence between explanation methods supports the interpretability and clinical plausibility of the model’s decision-making behavior.

4.8. Statistical Analysis

The quantitative results reported in the previous sections already indicate clear performance differences among the evaluated models. The purpose of the statistical analysis is therefore not to introduce additional claims, but to verify whether these observed differences remain consistent under formal, non-parametric testing across datasets and evaluation conditions.

4.8.1. Friedman’s Aligned Ranks Test

The Friedman aligned ranks test was applied to determine whether performance variations among the five models are statistically distinguishable under repeated-measures evaluation. The aligned formulation mitigates dataset-level effects before ranking, making it suitable for cross-dataset comparison.
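For readers unfamiliar with the aligned variant, the following sketch implements the aligned ranks statistic as it is commonly stated in the nonparametric model-comparison literature. It assumes a matrix of scores with one row per block and one column per model; what the authors used as blocks (for instance, evaluation metrics within a dataset) is not stated here, and the example scores are hypothetical.

```python
def average_ranks(values):
    """1-based ascending ranks; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for t in range(i, j + 1):
            ranks[order[t]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def friedman_aligned_ranks(scores):
    """scores[i][j] is the score of model j in block i (n blocks, k models)."""
    n, k = len(scores), len(scores[0])
    # Align: subtract each block's mean so block-level difficulty is removed
    aligned = [[x - sum(row) / k for x in row] for row in scores]
    # Rank all n*k aligned observations jointly, not within each block
    ranks = average_ranks([x for row in aligned for x in row])
    rank_rows = [ranks[i * k:(i + 1) * k] for i in range(n)]
    block_totals = [sum(r) for r in rank_rows]
    model_totals = [sum(rank_rows[i][j] for i in range(n)) for j in range(k)]
    numerator = (k - 1) * (
        sum(t * t for t in model_totals) - (k * n * n / 4) * (k * n + 1) ** 2
    )
    denominator = (
        k * n * (k * n + 1) * (2 * k * n + 1) / 6
        - sum(t * t for t in block_totals) / k
    )
    return numerator / denominator, model_totals


# Hypothetical scores: 3 blocks (rows) by 3 models (columns)
stat, model_rank_totals = friedman_aligned_ranks(
    [[99.8, 99.2, 98.7], [99.7, 98.6, 98.9], [99.9, 99.3, 97.8]]
)
```

Because ranks are assigned in ascending order, a larger rank total corresponds to larger aligned scores, which is consistent with reading higher aligned ranks as stronger performance.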

As reported in Table 11, the test yields statistically significant results for all three datasets. The aligned Friedman statistics are 18.93 (p = 0.00081) for D2, 19.98 (p = 0.00050) for D3, and 18.47 (p = 0.00100) for D4, leading to rejection of the null hypothesis in each case. These results indicate that model performance differs beyond random variation across datasets.
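The reported p-values can be reproduced from the statistics under one assumption that the text does not state explicitly: that the statistic is referred to a chi-square distribution with k − 1 = 4 degrees of freedom for the five models. For even degrees of freedom the upper-tail probability has a closed form, so no statistics library is needed for this check.

```python
import math


def chi2_sf_even_df(x, df):
    """Upper-tail probability of a chi-square variable (even df only)."""
    if df % 2 != 0:
        raise ValueError("this closed form requires an even number of df")
    half = x / 2
    terms = sum(half ** j / math.factorial(j) for j in range(df // 2))
    return math.exp(-half) * terms


# Aligned Friedman statistics as reported for each dataset
reported = {"D2": 18.93, "D3": 19.98, "D4": 18.47}
p_values = {name: chi2_sf_even_df(stat, df=4) for name, stat in reported.items()}

for name, p in p_values.items():
    print(f"{name}: p = {p:.5f}")
```

Under that assumption the three values round to 0.00081, 0.00050, and 0.00100, in agreement with the reported figures.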

The aligned rank values shown in Table 12 display a consistent ordering pattern. ConvNeXt Base appears with the highest aligned rank values on D2, D3, and D4. This behavior follows from the rank-alignment procedure, where higher rank values reflect stronger aggregate performance across metrics rather than inferior model behavior. The persistence of this ranking pattern across datasets is the primary observation of interest.

4.8.2. Post-Hoc Analysis

To identify where the detected differences occur, post-hoc analyses were conducted using the Holm step-down correction and the Wilcoxon signed-rank test. These methods provide complementary views through multiple-comparison control and paired-sample testing.

The Holm-adjusted comparisons reported in Table 13 show that ConvNeXt Base is statistically distinguishable from at least one competing architecture on each dataset. On D2, significant separation is observed between ConvNeXt Base and VGG19, while differences with ResNet152 and MobileNetV3Large do not reach statistical significance. On D3, ConvNeXt Base differs significantly from both ResNet152 and VGG19. On D4, a significant difference is again observed relative to ResNet152, with other pairwise comparisons remaining non-significant. These results indicate that pairwise distinctions vary with dataset characteristics.
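The Holm step-down correction itself is simple to state in code. The sketch below is a generic implementation, not the authors' script, and the raw p-values in the example are hypothetical.

```python
def holm_adjust(p_values):
    """Holm step-down adjusted p-values, returned in the input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, idx in enumerate(order):
        # The smallest p-value is multiplied by m, the next by m - 1, and so on
        candidate = min(1.0, (m - rank) * p_values[idx])
        # Enforce monotonicity so adjusted values never decrease down the list
        running_max = max(running_max, candidate)
        adjusted[idx] = running_max
    return adjusted


# Hypothetical raw p-values for four control-versus-model comparisons
raw = [0.004, 0.030, 0.020, 0.200]
adjusted = holm_adjust(raw)
significant = [p <= 0.05 for p in adjusted]
```

Because the multiplier shrinks as the procedure steps down, Holm is uniformly less conservative than a plain Bonferroni correction while still controlling the family-wise error rate.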

The Wilcoxon signed-rank test results presented in Table 14 focus on paired comparisons between ConvNeXt Base and each competing model. For all three datasets, the null hypothesis is rejected for every comparison, with adjusted p-values ≤ 0.04217. This indicates consistent performance differences under matched-sample evaluation across datasets.
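As an illustration of the paired test, the sketch below computes an exact two-sided Wilcoxon signed-rank p-value by enumerating all sign assignments, which is feasible only for small samples. The paired values are hypothetical, and the section does not say whether the authors used an exact or an approximate p-value.

```python
from itertools import product


def average_ranks(values):
    """1-based ascending ranks; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for t in range(i, j + 1):
            ranks[order[t]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def wilcoxon_exact(x, y):
    """Return (W, exact two-sided p) for paired samples x and y."""
    diffs = [a - b for a, b in zip(x, y) if a != b]  # zero differences dropped
    n = len(diffs)
    ranks = average_ranks([abs(d) for d in diffs])
    total = sum(ranks)
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_obs = min(w_plus, total - w_plus)
    # Under H0 each difference is positive or negative with probability 1/2
    hits = 0
    for signs in product((0, 1), repeat=n):
        wp = sum(r for r, s in zip(ranks, signs) if s)
        if min(wp, total - wp) <= w_obs:
            hits += 1
    return w_obs, hits / 2 ** n


# Hypothetical scores of two models on six metrics
model_a = [99.83, 99.84, 99.84, 99.84, 100.0, 99.77]
model_b = [99.24, 99.20, 99.22, 99.21, 99.95, 98.87]
w, p = wilcoxon_exact(model_a, model_b)
```

With six pairs that all favor the same model, the exact two-sided p-value is 2/64 = 0.03125, which is the smallest value this test can produce for that sample size.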

Across the applied tests, the statistical outcomes align with the empirical performance trends reported earlier. The tests do not introduce additional claims, but verify that the observed performance differences associated with ConvNeXt Base are reproducible across datasets and testing frameworks rather than arising from isolated experimental conditions.

4.8.3. Critical Difference Analysis

Critical difference (CD) diagrams are used to visualize the relative ranking of models and the presence or absence of statistically significant differences identified by the Friedman and Holm tests. Figure 7 presents the CD diagrams for datasets D2, D3, and D4. In each diagram, models are positioned along a horizontal axis according to their average ranks, with connecting bars indicating groups of models that are not statistically distinguishable at the selected confidence level.

For the D2 dataset, ConvNeXt Base is positioned at the extreme end of the rank axis, reflecting the highest average rank among the evaluated models. VGG19 and ResNet152 occupy intermediate positions, while MobileNetV3Large and EfficientNetV2-B0 appear further away along the axis. The absence of a connecting bar between ConvNeXt Base and most other models indicates that its rank is statistically separated at the chosen significance level. A similar configuration is observed for the D3 dataset. ConvNeXt Base again appears at the leading position, followed by ResNet152 and VGG19 at closer proximity than in D2. The reduced spacing among the remaining models suggests smaller rank differences; however, the CD bar does not extend to include ConvNeXt Base, indicating that its separation from the other architectures remains statistically meaningful. For the D4 dataset, ConvNeXt Base retains the highest average rank. The remaining models form a comparable grouping to that observed in D3, with ResNet152 and VGG19 positioned centrally and EfficientNetV2-B0 and MobileNetV3Large appearing at lower ranks. As in the other datasets, ConvNeXt Base is not connected to the other models by a CD bar.

Across the three datasets, the CD diagrams show a consistent placement of ConvNeXt Base relative to the other architectures. The visual separation observed in each diagram aligns with the statistical tests reported earlier and reflects stable ranking behavior across datasets.

4.8.4. Kendall’s Coefficient of Concordance

Kendall’s coefficient of concordance (W) was used to quantify the level of agreement among the model rankings obtained from the Friedman test and subsequent post-hoc analyses. Unlike pairwise significance tests, Kendall’s W provides a single normalized measure, ranging from 0 to 1, that reflects how consistently models are ordered across datasets and evaluation metrics. Higher values indicate stronger agreement among rankings, while lower values indicate greater variability.

As shown in Figure 8, the Kendall’s W score computed for the comparison involving ConvNeXt Base and the remaining models is close to 1.0. This indicates a high level of agreement among the ranking outcomes derived from different metrics and datasets. The rank positions associated with ConvNeXt Base remain stable across D2, D3, and D4, despite differences in dataset composition and evaluation conditions.

The observed concordance suggests that the relative ordering of models does not fluctuate substantially across datasets or metrics. In this context, Kendall’s W serves as a consistency indicator, confirming that the ranking patterns identified by the Friedman and post-hoc analyses are aligned across the evaluated settings.
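Kendall's W has a compact closed form when there are no tied ranks. The sketch below implements that untied version; the rankings in the example are hypothetical and only illustrate how near-identical orderings yield a value close to 1.

```python
def kendalls_w(rankings):
    """Kendall's W for m rankings of n items, assuming no tied ranks.

    rankings[r][i] is the rank that ranking r assigns to item i.
    """
    m, n = len(rankings), len(rankings[0])
    totals = [sum(r[i] for r in rankings) for i in range(n)]
    mean_total = m * (n + 1) / 2
    # Sum of squared deviations of the rank totals from their mean
    s = sum((t - mean_total) ** 2 for t in totals)
    return 12 * s / (m ** 2 * (n ** 3 - n))


# Hypothetical ranks of five models (1 = best) under three evaluation settings
rankings = [
    [1, 2, 3, 4, 5],
    [1, 3, 2, 4, 5],
    [1, 2, 3, 5, 4],
]
w = kendalls_w(rankings)
```

W equals 1 only when every ranking is identical and falls to 0 when the rank totals are all equal, so values near 1 indicate that the model ordering is stable across the settings compared.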

4.9. TOPSIS-Based Multi-Criteria Ranking

In addition to statistical testing, a multi-criteria decision-making (MCDM) analysis was performed to examine model behavior when multiple performance metrics are considered simultaneously. For this purpose, the Technique for Order of Preference by Similarity to Ideal Solution (TOPSIS) was applied to derive a composite ranking of the five models across the three test datasets.

TOPSIS is an MCDM approach that identifies the best alternative by measuring its geometric closeness to an ideal solution. Mathematically, for a set of models $A_1, \dots, A_m$ evaluated over $n$ criteria, TOPSIS defines:

$$C_i = \frac{D_i^{-}}{D_i^{+} + D_i^{-}}$$

where $D_i^{+}$ is the Euclidean distance of model $A_i$ from the ideal best (maximum performance across all metrics), and $D_i^{-}$ is its distance from the ideal worst (minimum performance across all metrics).

The final closeness coefficient $C_i$ lies between 0 and 1, with higher values indicating greater proximity to the ideal solution and, therefore, a better overall ranking.
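A minimal TOPSIS sketch is given below. It takes the closeness coefficient as the distance from the ideal worst divided by the sum of the distances from the ideal best and the ideal worst, and it assumes vector normalization, equal criterion weights, and benefit-type criteria (larger is better); the section does not specify the normalization or weighting used in the study, and the score matrix is hypothetical.

```python
import math


def topsis(matrix, weights=None):
    """Closeness coefficients for alternatives scored on benefit criteria.

    matrix[i][j] is the value of criterion j for alternative i.
    """
    m, n = len(matrix), len(matrix[0])
    weights = weights or [1.0 / n] * n  # assumption: equal weights by default
    # Vector normalization of each criterion column, then weighting
    norms = [math.sqrt(sum(matrix[i][j] ** 2 for i in range(m))) for j in range(n)]
    v = [[weights[j] * matrix[i][j] / norms[j] for j in range(n)] for i in range(m)]
    best = [max(v[i][j] for i in range(m)) for j in range(n)]
    worst = [min(v[i][j] for i in range(m)) for j in range(n)]
    scores = []
    for i in range(m):
        d_best = math.sqrt(sum((v[i][j] - best[j]) ** 2 for j in range(n)))
        d_worst = math.sqrt(sum((v[i][j] - worst[j]) ** 2 for j in range(n)))
        # Undefined if all alternatives are identical (both distances are zero)
        scores.append(d_worst / (d_best + d_worst))
    return scores


# Hypothetical scores: rows are models, columns are accuracy, F1-score, kappa
scores = topsis([
    [99.8, 99.8, 99.7],
    [99.2, 99.1, 98.9],
    [98.0, 97.9, 97.5],
])
```

A model that is best on every criterion coincides with the ideal solution and receives a coefficient of exactly 1, while a model that is worst on every criterion receives exactly 0; this is why coefficients of 1.0 and 0.0 can both appear in a TOPSIS ranking.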

The TOPSIS results are summarized in Table 15. ConvNeXt Base attains a closeness coefficient of 1.0 on all three datasets (D2, D3, and D4), placing it first in each case. This reflects uniform proximity to the ideal solution across the evaluated metrics: accuracy, precision, recall, F1-score, AUC, and Kappa.

On D2, EfficientNetV2-B0 ranks second with a score of 0.8907, followed by MobileNetV3Large (0.8375), ResNet152 (0.7577), and VGG19 (0.0015). For D3, EfficientNetV2-B0 again occupies the second position (0.5083), closely followed by MobileNetV3Large (0.4528), while VGG19 (0.2481) and ResNet152 (0.0) appear lower in the ranking. On D4, MobileNetV3Large ranks second (0.8968), followed by VGG19 (0.7272), EfficientNetV2-B0 (0.7224), and ResNet152 (0.0).

Across datasets, the TOPSIS rankings show that ConvNeXt Base consistently maintains the highest closeness coefficient, while the remaining models exhibit variations in their relative positions depending on dataset characteristics and metric trade-offs. The MCDM analysis reflects how differences in performance balance influence composite rankings when multiple criteria are considered simultaneously.

4.10. Comparison with State-of-the-Art Methods

This section positions the proposed ConvNeXt Base model within the current landscape of MRI-based brain tumor classification. Table 16 compares its performance, validation scope, and analytical depth with recent state-of-the-art approaches, highlighting differences not only in accuracy but also in methodological rigor and clinical relevance.

Across all three benchmark datasets (D2, D3, and D4), ConvNeXt Base achieves consistently high performance across all primary evaluation metrics, including accuracy, precision, recall, F1-score, AUC, and Cohen’s Kappa. On the D2 dataset, ConvNeXt Base records 99.83% accuracy with an AUC of 1.0 and a Kappa of 0.9977. Several prior studies using similar datasets report accuracies in the range of 98.9–99.0% [66, 67]; however, most do not report AUC or Kappa values and lack formal statistical validation or ablation analysis. In contrast, the present study complements marginal accuracy gains with cross-dataset evaluation, interpretability analysis, efficiency profiling, and statistical confirmation, enabling a more reliable assessment of diagnostic behavior.

On the D3 dataset, ConvNeXt Base attains 99.69% accuracy with an AUC of 0.9998 and a Kappa of 0.9959. Comparable works report accuracies between 98.9% and 99.9% [68,69,70,71], but typically rely on single-dataset evaluation or isolated performance reporting. Several high-performing hybrid and transformer-based models achieve competitive accuracy but do not assess reproducibility across datasets or examine sensitivity to architectural and training choices. ConvNeXt Base differs in that performance consistency is demonstrated across three independent datasets using a unified protocol.

Results on the D4 dataset further reinforce this observation. ConvNeXt Base achieves 99.86% accuracy with an AUC of 1.0, while several studies that include external testing report noticeable performance degradation when evaluated beyond their original dataset [48,72]. The ability of ConvNeXt Base to maintain performance across datasets with differing characteristics addresses a key limitation of many existing approaches, particularly in the context of heterogeneous clinical imaging environments.

A notable distinction lies in metric completeness. Many prior studies emphasize accuracy alone, with limited or inconsistent reporting of precision, recall, and F1-score. ConvNeXt Base demonstrates uniformly high values across all class-wise metrics, reducing the likelihood that performance is driven by class imbalance or dominant categories. The inclusion of Cohen’s Kappa further provides an agreement-based assessment that is rarely reported in related work, offering additional insight into diagnostic reliability.
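For reference, Cohen's Kappa can be computed directly from a confusion matrix as the observed agreement corrected for the agreement expected by chance. The sketch below is a minimal pure-Python illustration; the function name `cohens_kappa` and the two-class counts are hypothetical and are not taken from the reported experiments.

```python
def cohens_kappa(confusion):
    """Cohen's Kappa from a square confusion matrix (rows: true, columns: predicted)."""
    total = sum(sum(row) for row in confusion)
    # Observed agreement: fraction of samples on the diagonal.
    observed = sum(confusion[i][i] for i in range(len(confusion))) / total
    # Chance agreement: product of matching row and column marginals.
    row_totals = [sum(row) for row in confusion]
    col_totals = [sum(col) for col in zip(*confusion)]
    expected = sum(r * c for r, c in zip(row_totals, col_totals)) / total ** 2
    if expected == 1.0:
        # Degenerate case (all samples in one class): Kappa is undefined.
        raise ValueError("Kappa is undefined when chance agreement equals 1")
    return (observed - expected) / (1.0 - expected)


# Hypothetical two-class counts, for illustration only.
toy_confusion = [
    [45, 5],
    [5, 45],
]
kappa = cohens_kappa(toy_confusion)
print(round(kappa, 4))
```

In this toy example, 90% raw agreement against 50% chance agreement yields a Kappa of 0.8, which shows why Kappa is a stricter summary than accuracy alone.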

Methodologically, the proposed framework differs from most existing studies in scope and analytical coverage. While some works incorporate explainability, ablation analysis, or efficiency evaluation in isolation, few integrate these elements within a single experimental design. As reflected in Table 16, only a limited subset of studies report partial ablation or explainability analysis [66,73,74], and none combine statistical hypothesis testing, efficiency benchmarking, multi-criteria ranking, and dual explainability methods within one framework. This integrated evaluation reduces uncertainty associated with dataset-specific effects and experimental bias.

Computational considerations further differentiate ConvNeXt Base from several recent transformer-based and hybrid architectures. Although models such as Swin-based or hybrid CNN–transformer networks report high accuracy [29,71], they often lack inference-time analysis or require substantial computational resources. ConvNeXt Base achieves comparable or higher accuracy while maintaining high inference throughput and moderate memory usage, enabling a clearer assessment of practical feasibility in routine imaging workflows.

From a clinical standpoint, the combination of cross-dataset validation, balanced class-wise performance, agreement-based reliability metrics, and interpretable visual explanations addresses several barriers to translational adoption. Many prior studies rely on curated or single-source datasets, limiting confidence in generalization across scanners, acquisition protocols, and patient populations. The validation strategy employed here provides early evidence of robustness under realistic variability, which is essential for clinical decision-support applications.

Finally, the architectural design of ConvNeXt Base contributes to its observed stability. By adopting convolutional structures informed by transformer-inspired design principles, the model captures both local and contextual features without incurring the computational overhead typical of pure transformer architectures. This design choice enables competitive performance while preserving efficiency, offering a practical alternative to increasingly complex yet less tractable models.

The state-of-the-art comparison therefore indicates that ConvNeXt Base differs from many existing approaches primarily in the breadth and consistency of its evaluation rather than in isolated peak accuracy values. The reported results demonstrate stable performance across multiple datasets, balanced behavior across evaluation metrics, inclusion of agreement-based reliability measures, and explicit consideration of computational efficiency and interpretability. These characteristics address several limitations commonly observed in prior studies and provide a more complete basis for assessing suitability in heterogeneous clinical imaging settings.

5. Critical Discussion, Clinical Relevance, and Practical Implications

The experimental findings across all three test datasets collectively demonstrate that ConvNeXt Base delivers a level of diagnostic performance and consistency that sets it apart from traditional convolutional neural networks and other transformer-inspired architectures used in brain tumor classification. The results indicate not only high quantitative accuracy but also the kind of behavioral stability, interpretability, and efficiency that are indispensable for translation into clinical practice.

5.1. Diagnostic Performance and Error Characterization

The class-wise behavior of ConvNeXt Base, as revealed through confusion matrix analysis, provides a clinically grounded view of its diagnostic reliability beyond aggregate accuracy metrics. Across all three datasets, the most notable observation is the near-total absence of confusion between the No Tumor category and tumor-bearing classes. From a clinical standpoint, this directly corresponds to an extremely low false-negative rate—arguably the most critical requirement in neuro-oncologic screening and triage, where missed lesions can delay intervention and worsen outcomes. Equally important, the rarity of false positives reduces the risk of unnecessary follow-up imaging, avoidable referrals, and patient distress.

The few misclassifications that do occur are concentrated almost exclusively between Meningioma and Pituitary tumors. This pattern is clinically interpretable rather than concerning. These entities arise in anatomically proximate regions and may share overlapping signal characteristics on routine MRI, particularly in single-sequence settings. Such errors likely reflect intrinsic radiological ambiguity rather than instability in model decision-making. Notably, no dataset exhibited instances where normal scans were classified as high-risk tumors, reinforcing the model’s high specificity and suitability for screening-oriented workflows.
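The screening-relevant quantities discussed here can be derived by collapsing a multi-class confusion matrix into a binary tumor versus No Tumor view. The following sketch assumes rows are true classes and columns are predicted classes; the class order, the function name `screening_rates`, and all counts are hypothetical and do not reproduce the study's confusion matrices.

```python
def screening_rates(confusion, labels, normal_label="No Tumor"):
    """Binary tumor-vs-normal rates from a multi-class confusion matrix."""
    k = labels.index(normal_label)
    n = len(labels)
    tn = confusion[k][k]                                    # normal read as normal
    fp = sum(confusion[k][j] for j in range(n) if j != k)   # normal flagged as tumor
    fn = sum(confusion[i][k] for i in range(n) if i != k)   # tumor read as normal
    # Any tumor predicted as any tumor class counts as a detected tumor.
    tp = sum(confusion[i][j] for i in range(n) for j in range(n)
             if i != k and j != k)
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "npv": tn / (tn + fn),
        "false_negative_rate": fn / (tp + fn),
    }


# Hypothetical class order and counts, for illustration only.
labels = ["Glioma", "Meningioma", "No Tumor", "Pituitary"]
toy_confusion = [
    [99, 0, 1, 0],    # one glioma read as No Tumor
    [0, 97, 0, 3],    # meningioma confused with pituitary
    [0, 0, 100, 0],   # all normal scans correct
    [0, 2, 0, 98],    # pituitary confused with meningioma
]
rates = screening_rates(toy_confusion, labels)
for name, value in rates.items():
    print(f"{name}: {value:.4f}")
```

In this toy example, the Meningioma and Pituitary confusions do not affect any of the binary rates because both are tumor classes; only the single tumor scan read as No Tumor lowers sensitivity and negative predictive value.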

The consistency of these error patterns across D2, D3, and D4 suggests that ConvNeXt Base does not rely on dataset-specific cues but instead learns stable, anatomically meaningful representations. This observation is reinforced by explainability analyses using Grad-CAM++ and Gradient SHAP, which show that model attention is consistently localized to tumor-relevant regions rather than spurious background structures. From a clinical integration perspective, this alignment between prediction and radiological reasoning supports use as a triage aid, second-reader system, or quality-control mechanism rather than a purely experimental classifier.

While residual ambiguities remain, they point to logical extensions rather than structural weaknesses. Incorporation of multi-sequence MRI inputs, finer-grained tumor subtyping, and uncertainty-aware outputs would likely address the remaining borderline cases. Within the scope of the present study, however, the observed class-wise behavior demonstrates that ConvNeXt Base meets core diagnostic safety requirements and operates within clinically interpretable error boundaries.

5.2. Quantitative Robustness and Cross-Dataset Stability

Beyond class-wise behavior, the quantitative consistency of ConvNeXt Base across independent datasets provides strong evidence of robustness under distributional variation. Across D2, D3, and D4, the model maintained accuracy above 99.6% with AUC values at or near unity, while precision, recall, and F1-scores remained closely aligned. This tight coupling between metrics indicates balanced decision-making rather than optimization toward a single performance objective.
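One way to check how closely precision, recall, and F1-score track each other is to compute them per class from the confusion matrix and then macro-average. The sketch below assumes rows are true classes and columns are predictions; the function names and the counts are illustrative assumptions, not values from this study.

```python
def per_class_metrics(confusion):
    """Return a list of (precision, recall, f1) tuples, one per class."""
    n = len(confusion)
    metrics = []
    for k in range(n):
        tp = confusion[k][k]
        predicted_k = sum(confusion[i][k] for i in range(n))  # column sum
        actual_k = sum(confusion[k])                          # row sum
        precision = tp / predicted_k if predicted_k else 0.0
        recall = tp / actual_k if actual_k else 0.0
        denom = precision + recall
        f1 = 2 * precision * recall / denom if denom else 0.0
        metrics.append((precision, recall, f1))
    return metrics


def macro_average(metrics):
    """Unweighted mean of each metric across classes."""
    return tuple(sum(m[i] for m in metrics) / len(metrics) for i in range(3))


# Hypothetical three-class counts, for illustration only.
toy_confusion = [
    [98, 2, 0],
    [1, 97, 2],
    [0, 1, 99],
]
class_metrics = per_class_metrics(toy_confusion)
macro_precision, macro_recall, macro_f1 = macro_average(class_metrics)
print(f"macro precision={macro_precision:.4f} "
      f"recall={macro_recall:.4f} f1={macro_f1:.4f}")
```

When the three macro-averaged values lie close together, as in this toy example, no single class is being favored at the expense of another.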

From a diagnostic safety perspective, the sustained near-perfect recall values are particularly significant. High recall ensures that true tumor cases—including subtle or early-stage lesions—are rarely missed, addressing a common limitation of automated systems deployed under real-world variability. At the same time, equally high precision limits false alarms, preventing unnecessary escalation of care and preserving clinician trust. This balance is especially relevant in high-throughput radiology environments, where both missed findings and excessive false positives carry tangible clinical and operational costs.

Performance on the No Tumor class further reinforces this reliability. Perfect or near-perfect classification across datasets indicates that the model can safely exclude pathology in normal scans, a prerequisite for any system intended for screening, workload triage, or preliminary review. Such stability is rarely observed when models are evaluated beyond a single curated dataset.

Comparative analysis highlights that this robustness is not uniformly shared across architectures. EfficientNetV2-B0 and MobileNetV3Large demonstrate strong performance but exhibit greater variance across datasets, while older models such as ResNet152 and VGG19 show class-specific degradation, particularly for Meningioma. These inconsistencies likely arise from limitations in capturing broader contextual cues and subtle textural differences. In contrast, ConvNeXt Base maintains consistent behavior across all tumor types and datasets, suggesting effective resistance to domain shift induced by scanner differences, acquisition protocols, or population heterogeneity.

An additional aspect of robustness emerges when considering dataset scale. As summarized in Table 1, dataset D2 represents a comparatively smaller cohort relative to D3 and D4 and can therefore be regarded as a practical proxy for low-data clinical settings. Despite its limited size, ConvNeXt Base achieved near-perfect accuracy, AUC, and class-wise reliability on D2, without signs of overfitting or instability. This behavior reflects the effectiveness of transfer learning in leveraging pretrained representations and the regularizing effect of data augmentation, enabling stable performance even when annotated data are scarce.

Preserving diagnostic reliability under dataset size constraints and distributional variation is essential for practical use, where institution-specific data and rare tumor subtypes often limit sample availability. The consistent performance of ConvNeXt Base across datasets of differing size and composition indicates robustness to such variability, supporting its use as a reliable component within routine neuroimaging workflows.

5.3. Training Dynamics and Model Reliability

The training behavior of ConvNeXt Base provides important insight into its reliability beyond headline performance metrics. The training and validation curves exhibit smooth, monotonic convergence with negligible separation, indicating a well-calibrated balance between model capacity, optimization strategy, and data variability. Rapid stabilization within a limited number of epochs suggests that the architecture benefits from strong inductive biases and effective transfer learning initialization, enabling efficient feature adaptation without prolonged fine-tuning.

The close alignment between training and validation loss across datasets indicates no evident overfitting, a frequent limitation in deep learning models trained on medical imaging data with constrained diversity. This convergence pattern suggests that the learned representations are adequately regularized and that the applied data augmentation strategies effectively expand the training distribution. The persistence of these trends across datasets further suggests that the learning dynamics are not tightly coupled to dataset-specific characteristics.
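A simple way to quantify the separation between training and validation curves is the mean difference between the two losses over the final epochs. The sketch below uses invented loss values and a hypothetical helper `mean_generalization_gap`; the window of three epochs is an arbitrary choice for illustration and does not reflect the study's training logs.

```python
def mean_generalization_gap(train_loss, val_loss, last_n=3):
    """Mean of (validation loss - training loss) over the last `last_n` epochs."""
    if len(train_loss) != len(val_loss):
        raise ValueError("loss histories must have the same length")
    pairs = list(zip(train_loss, val_loss))[-last_n:]
    return sum(v - t for t, v in pairs) / len(pairs)


# Invented per-epoch losses, for illustration only.
train_loss = [0.90, 0.40, 0.15, 0.06, 0.03, 0.02]
val_loss = [0.85, 0.42, 0.17, 0.07, 0.04, 0.03]

gap = mean_generalization_gap(train_loss, val_loss)
print(f"mean gap over last 3 epochs: {gap:.4f}")
```

A small, stable gap is consistent with limited overfitting, whereas a gap that widens while the training loss keeps falling would point the other way.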

These observations also provide indirect evidence of robustness to preprocessing and data handling choices. Although an exhaustive evaluation of alternative normalization and augmentation pipelines is beyond the scope of this study, the modest performance changes observed in the ablation experiments—particularly when data augmentation is removed—indicate that ConvNeXt Base does not depend on narrowly tuned preprocessing configurations. The near-identical convergence behavior across repeated training runs reinforces this interpretation, suggesting low sensitivity to the specific preprocessing and normalization strategy adopted.

From a clinical operations perspective, such predictable convergence behavior reduces maintenance complexity. Models that train stably can be retrained or adapted to new institutional data with limited computational overhead and minimal risk of performance instability. This property is particularly relevant for longitudinal deployment scenarios, where periodic updates are required to accommodate evolving acquisition protocols or population characteristics.

5.4. ROC Characteristics and Diagnostic Operating Profile