Assessment of Remote Vital Sign Monitoring and Alarms in a Real-World Healthcare at Home Dataset

Abstract

1. Introduction

2. Materials and Methods

2.1. Current Health (CH) Platform

2.2. HaH Program Dataset

2.3. Vital Sign Observations

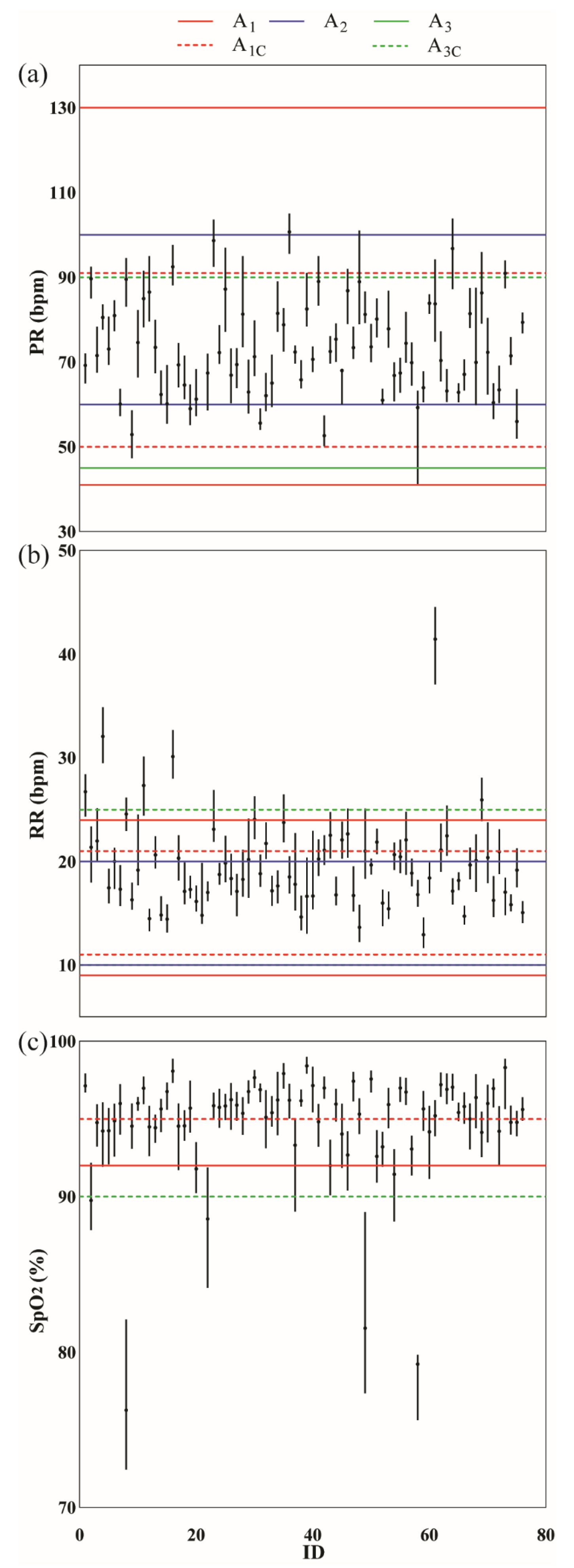

2.4. Vital Sign Alarms

2.5. Data Analysis

3. Results

3.1. Alarms

3.1.1. Vital Sign Observation Rate

3.1.2. Alarm Aggregation Window

3.1.3. Alarm Rule

3.1.4. Alarm Threshold

4. Discussion

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A

| AW | Minimum Observations (PR) | Minimum Observations (SpO2) | Minimum Observations (RR) |
|---|---|---|---|
| 5 min | 30 | 30 | 15 |
| 15 min | 90 | 90 | 45 |
| 1 h | 360 | 360 | 180 |
| 4 h | 1440 | 1440 | 720 |
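
The minimum-observation counts in the Appendix A table above scale linearly with window length: every row is consistent with 6 observations per minute for PR and SpO2 and 3 per minute for RR. The sketch below reproduces the table from those per-minute rates. The rates are inferred from the table values, not stated in the text, and the names `RATE_PER_MIN`, `WINDOW_MINUTES`, and `minimum_observations` are hypothetical.

```python
# Observations per minute implied by the Appendix A table.
# ASSUMPTION: these rates are inferred from the table values, not given in the source.
RATE_PER_MIN = {"PR": 6, "SpO2": 6, "RR": 3}

# Window lengths from the table, expressed in minutes.
WINDOW_MINUTES = {"5 min": 5, "15 min": 15, "1 h": 60, "4 h": 240}


def minimum_observations(window: str, vital_sign: str) -> int:
    """Return the minimum observation count for a window and vital sign."""
    return WINDOW_MINUTES[window] * RATE_PER_MIN[vital_sign]


# Rebuild the whole table as a nested dictionary: window -> vital sign -> count.
table = {
    window: {vs: minimum_observations(window, vs) for vs in RATE_PER_MIN}
    for window in WINDOW_MINUTES
}

if __name__ == "__main__":
    for window, counts in table.items():
        print(window, counts)
```

Writing the table as rate times duration makes it easy to check a row or to extend the table to another window length under the same assumed rates.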

| Alarm Ruleset | Alarm Rule | Respiratory Rate | Oxygen Saturation | Pulse Rate |
|---|---|---|---|---|
| A1 | A1-01 | RR < 9 | | |
| A1 | A1-02 | RR > 24 | | |
| A1 | A1-03 | | SpO2 ≤ 91 | |
| A1 | A1-04 | | | PR < 41 |
| A1 | A1-05 | | | PR > 130 |
| A1 | A1-06 * | 9 ≤ RR ≤ 11 | 92 ≤ SpO2 ≤ 93 | 111 ≤ PR ≤ 130 |
| A1 | A1-07 * | 21 ≤ RR ≤ 24 | 92 ≤ SpO2 ≤ 93 | 91 ≤ PR ≤ 110 |
| A1 | A1-08 * | 21 ≤ RR ≤ 24 | 94 ≤ SpO2 ≤ 95 | 111 ≤ PR ≤ 130 |
| A1 | A1-09 * | 21 ≤ RR ≤ 24 | 92 ≤ SpO2 ≤ 93 | 111 ≤ PR ≤ 130 |
| A1 | A1-10 * | 21 ≤ RR ≤ 24 | 92 ≤ SpO2 ≤ 93 | 41 ≤ PR ≤ 50 |
| A2 | A2-01 (Hypoxia) | | SpO2 < 92 | |
| A2 | A2-02 (Tachycardia) | | | PR > 100 |
| A2 | A2-03 (Bradycardia) | | | PR < 60 |
| A2 | A2-04 (Tachypnea) | RR > 20 | | |
| A2 | A2-05 (Bradypnea) | RR < 10 | | |
| A3 | A3-01 * | RR > 25 | | PR > 90 |
| A3 | A3-02 * | RR > 25 | SpO2 < 90 | |
| A3 | A3-03 * | RR < 10 | SpO2 < 90 | |
| A3 | A3-04 | | | PR < 45 |
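
As an illustration of how the threshold rules in the table above could be applied to a single set of vital sign values, the following sketch encodes rulesets A2 and A3 as predicates. It assumes that a rule listing conditions in more than one column (the rules marked *) fires only when all of its conditions hold at once; the table itself does not say this. The names `Observation`, `A2_RULES`, `A3_RULES`, and `triggered` are hypothetical and are not part of the CH platform.

```python
from typing import Callable, Dict, List

# One set of vital sign values: respiratory rate (RR), oxygen saturation (SpO2), pulse rate (PR).
Observation = Dict[str, float]
Rule = Callable[[Observation], bool]

# Ruleset A2: single-parameter thresholds taken from the rule table.
A2_RULES: Dict[str, Rule] = {
    "A2-01 (Hypoxia)": lambda o: o["SpO2"] < 92,
    "A2-02 (Tachycardia)": lambda o: o["PR"] > 100,
    "A2-03 (Bradycardia)": lambda o: o["PR"] < 60,
    "A2-04 (Tachypnea)": lambda o: o["RR"] > 20,
    "A2-05 (Bradypnea)": lambda o: o["RR"] < 10,
}

# Ruleset A3: thresholds taken from the rule table.
# ASSUMPTION: multi-column rules (marked * in the table) require every condition to hold.
A3_RULES: Dict[str, Rule] = {
    "A3-01": lambda o: o["RR"] > 25 and o["PR"] > 90,
    "A3-02": lambda o: o["RR"] > 25 and o["SpO2"] < 90,
    "A3-03": lambda o: o["RR"] < 10 and o["SpO2"] < 90,
    "A3-04": lambda o: o["PR"] < 45,
}


def triggered(rules: Dict[str, Rule], obs: Observation) -> List[str]:
    """Return the names of all rules whose condition is met by the observation."""
    return [name for name, rule in rules.items() if rule(obs)]


if __name__ == "__main__":
    sample = {"RR": 28, "SpO2": 88, "PR": 95}
    print(triggered(A2_RULES, sample))
    print(triggered(A3_RULES, sample))
```

This sketch evaluates a single observation only; it does not model the observation rates or aggregation windows reported in the results table.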

| Ruleset | Observation Rate | Aggregation Window | WAP | Patient Rate (%) | Alarm Rate | EDT (hour) |
|---|---|---|---|---|---|---|
| A1 | VS12 | AW0 | 235 | 64.47 | 0.33 ± 0.56 | 0 (0) |
| A1 | VS4 | AW0 | 683 | 85.53 | 0.96 ± 1.37 | 0 (0) |
| A1 | VS1 | AW0 | 1364 | 94.74 | 1.93 ± 1.83 | 3 (2.00) |
| A1 | VS15 | AW0 | 1899 | 98.68 | 2.68 ± 1.99 | 3.25 (1.75) |
| A1 | VSSD | - | 2251 | 100 | 3.18 ± 2.02 | 3.5 (1.52) |
| A1 | VSOD | AW15 | 1759 | 96.05 | 2.48 ± 2.02 | 3.41 (1.76) |
| A1 | VSOD | AW1 | 1195 | 76.32 | 1.69 ± 1.88 | 3.67 (1.68) |
| A1 | VSOD | AW4 | 791 | 65.79 | 1.12 ± 1.65 | 3.97 (1.45) |
| A2 | VS12 | AW0 | 609 | 93.42 | 0.86 ± 0.76 | 0 (0) |
| A2 | VS4 | AW0 | 1737 | 96.05 | 2.45 ± 1.89 | 0 (0) |
| A2 | VS1 | AW0 | 2548 | 98.68 | 3.60 ± 1.92 | 3 (1.00) |
| A2 | VS15 | AW0 | 2922 | 100 | 4.13 ± 1.78 | 3.75 (0.75) |
| A2 | VSSD | - | 3113 | 100 | 4.40 ± 1.68 | 3.96 (0.55) |
| A2 | VSOD | AW15 | 2855 | 100 | 4.03 ± 1.83 | 3.98 (0.74) |
| A2 | VSOD | AW1 | 2467 | 98.68 | 3.48 ± 2.04 | 4.00 (0.79) |
| A2 | VSOD | AW4 | 2121 | 93.42 | 3.00 ± 2.22 | 4.00 (0.28) |
| A3 | VS12 | AW0 | 65 | 30.26 | 0.09 ± 0.31 | 0 (0) |
| A3 | VS4 | AW0 | 185 | 50.00 | 0.26 ± 0.71 | 0 (0) |
| A3 | VS1 | AW0 | 435 | 61.84 | 0.61 ± 1.16 | 2.00 (2.00) |
| A3 | VS15 | AW0 | 712 | 65.79 | 1.01 ± 1.50 | 2.75 (2.00) |
| A3 | VSSD | - | 942 | 80.26 | 1.33 ± 1.66 | 3.05 (2.05) |
| A3 | VSOD | AW15 | 626 | 65.79 | 0.88 ± 1.45 | 3.04 (2.16) |
| A3 | VSOD | AW1 | 356 | 48.68 | 0.50 ± 1.13 | 3.25 (2.16) |
| A3 | VSOD | AW4 | 200 | 32.89 | 0.28 ± 0.88 | 3.84 (1.90) |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Zahradka, N.; Geoghan, S.; Watson, H.; Goldberg, E.; Wolfberg, A.; Wilkes, M. Assessment of Remote Vital Sign Monitoring and Alarms in a Real-World Healthcare at Home Dataset. Bioengineering 2023, 10, 37. https://doi.org/10.3390/bioengineering10010037
