Abstract

Academic conferences offer scientists the opportunity to share their findings and knowledge with other researchers. However, the number of conferences is rapidly increasing globally and many unsolicited e-mails are received from conference organizers. These e-mails take time for researchers to read and ascertain their legitimacy. Because not every conference is of high quality, there is a need for young researchers and scholars to recognize the so-called “predatory conferences” which make a profit from unsuspecting researchers without the core purpose of advancing science or collaboration. Unlike journals that possess accreditation indices, there is no appropriate accreditation for international conferences. Here, a bibliometric measure is proposed that enables scholars to evaluate conference quality before attending.

1. Introduction

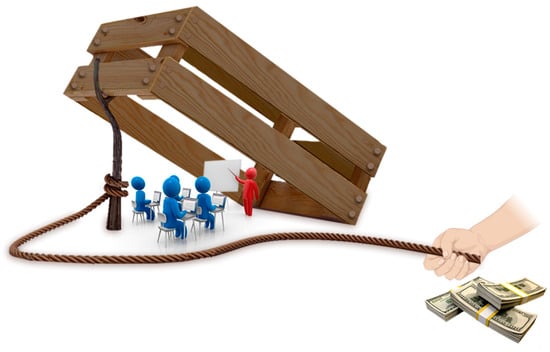

Academic conferences offer scientists the opportunity to share their findings and knowledge with other researchers. Conferences are organized by institutions or societies, and in rare cases, by individuals [1]. Researchers increasingly receive unsolicited invitations to conferences. The organizers of these so-called “predatory conferences” lure researchers, especially young scientists, to attend by sending one or more e-mails that invite them to be plenary speakers (Figure 1) [2,3,4]. Jeffrey Beall was the first person to use the term “predatory meetings”, in the same context as “predatory publications”. He explained that some companies organize conferences to invite researchers from all over the world to present their papers. These organizers exploit researchers’ need to publish papers in proceedings or affiliated journals by charging a significant conference attendance fee while using low-quality conference business models [2]. Interested readers are referred to excellent reference sources for conference enhancement tips [5,6] and the implications of predatory conferences [4,7]. Early-career academics and scholars from developing countries are the most vulnerable to these predatory meeting invitations. Readers can easily identify some of the introductions used in those electronic communications:

- It is with great pleasure that we welcome you to attend our conference as an invited speaker…

- We have gone through your recent study; it has been accepted to be given as an oral presentation…

- On behalf of the organizing committee, we are pleased to invite you to take part in the conference…

- I just wanted to check that if you have received my previous mail that I sent a couple of weeks back. We have not heard back and wanted to make sure it went through your inbox…

Figure 1. Predatory conferences target scientists. In practice, predatory conferences quickly accept even poor-quality submissions without peer review and without control of nonsensical content, while asking for high attendance fees. They may utilize conference names that are similar to the names of more established conferences to attract academics and promote meetings with unrelated images copied from the Internet.

Similar to invitations from predatory conferences, there is also a notable increase in the number of invitations from predatory journals [8,9,10]. Whereas reputable international journals possess accreditation indices such as impact factor [11,12], source normalized impact per paper (SNIP) [13,14], Scimago journal rank (SJR) [15], Eigenfactor Score (ES) or Hirsch index (h-index) [16], conferences do not have comparable accreditation indices. Although some conference ranking metrics are available (e.g., http://www.conferenceranks.com/; http://portal.core.edu.au/conf-ranks/, accessed in February 2021), not all reputable conferences can be found by searching them (e.g., European Society of Biomaterials Conference; Forbes Women’s Summit). Usually, the searchable conferences are restricted to specific fields. In addition, the number of conferences is growing at an exponential rate, which makes website updates on a daily or even a weekly basis virtually impossible.

There is a pressing need for a system that evaluates the academic quality of international conferences [17]. Prior work only discusses the harm caused by predatory conferences without offering a solution. The objective of the present letter is to address potential methods of evaluating conferences and to offer suggestions on conference evaluation. The authors propose a new accreditation scheme for conferences which may be useful for scientists, especially for young scholars, to identify high-level conferences.

2. Potential Solutions

Although some institutions do evaluate the credibility of conferences, such evaluations are not conducted on all conferences. In some instances, the conference organizer has to apply for the conference accreditation. This is not a mandatory process, unlike journal accreditation. The following are suggestions for enhancing the quality of conference accreditation:

(I) A conference must have a unique name with a registered International Standard Serial Number (ISSN). This is comparable with journal ISSNs, under which no two journals have identical names. The conference title should be devoid of a period descriptor that references it as part of an ongoing conference series. For example, “European Conference on Biomaterials” is preferred over “30th European Conference on Biomaterials”. If one utilizes a descriptor that represents a continued series, such as “30th European Conference on Biomaterials”, that edition of the conference will have its own ISSN. It follows that another ISSN will be issued for the “31st European Conference on Biomaterials”. This is not how journals are cited. Each journal has its individual ISSN but issues within the same journal do not have their own ISSNs. Therefore, each conference should have a unique name with a registered ISSN.

Avoiding citation for a conference: poster and slide presentations in conferences are not peer reviewed in depth and are rarely accessible to scholars. Consequently, citation of posters or slides should be avoided. The information below may be employed for referring to a conference abstract/paper.

Author names. Title of the study. Conference name. Series number. Year. City and Country. Publisher.

For example: P. Makvandi, F.R. Tay. Injectable antibacterial hydrogels for potential applications in drug delivery. European Conference on Biomaterials, 30th series, 2019, Dresden, Germany, Elsevier.
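The reference template above can be sketched as a small Python helper. This is only an illustration: the class name `ConferenceAbstract`, its field names, and the function `format_reference` are hypothetical, and the punctuation follows the field order listed in the template (conference name, series number, year, city and country, publisher).

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ConferenceAbstract:
    """Fields named in the suggested reference template (names are hypothetical)."""
    authors: List[str]
    title: str
    conference: str   # series-independent conference name
    series: str       # e.g. "30th series"
    year: int
    location: str     # city and country
    publisher: str


def format_reference(abstract: ConferenceAbstract) -> str:
    """Join the fields in the order given by the template."""
    authors = ", ".join(abstract.authors)
    return (
        f"{authors}. {abstract.title}. {abstract.conference}, "
        f"{abstract.series}, {abstract.year}, {abstract.location}, "
        f"{abstract.publisher}."
    )


example = ConferenceAbstract(
    authors=["P. Makvandi", "F.R. Tay"],
    title=("Injectable antibacterial hydrogels for potential "
           "applications in drug delivery"),
    conference="European Conference on Biomaterials",
    series="30th series",
    year=2019,
    location="Dresden, Germany",
    publisher="Elsevier",
)
print(format_reference(example))
```

Keeping the series number as a separate field, rather than as part of the conference name, mirrors the suggestion that the name itself stays constant across editions.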

It should be stressed that a conference title and a series number should only be used once for a particular conference, similar to the name of a journal. No two conferences should have the same title.

(II) If the original article has previously been published in a journal, this has to be mentioned in the conference abstract by referring to the electronic link of the published paper. Such a strategy helps to reduce redundant citation of one’s previously published research, because many researchers present their results at more than one conference.

3. How to Accredit

If there is a persistent handle or DOI of a previously published paper in the conference abstract, citation of the original paper may be used as an index to distinguish the quality of the presented paper at the conference. Accordingly, the h-index [18,19] of a conference may be used. In addition, the average number of the presented abstracts, including oral and poster presentations, may be employed along with other criteria such as CiteScore [20], impact factor [21,22], source normalized impact per paper (SNIP) [21], in conjunction with the conference h-index. In this manner, one does not need to know the number of accepted abstracts in the conference because such information is already expressed by the h-index. If a conference presentation is generated from more than one published paper (even from different journals), the average number of citations of the original published papers may be used.
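The averaging rule for presentations that draw on more than one published paper can be illustrated with a minimal Python sketch. The presentation labels, the citation counts, and the function name `citations_per_presentation` are hypothetical and are not taken from the letter; the sketch assumes that the citation counts of the linked papers have already been looked up through their DOIs.

```python
from statistics import mean

# Hypothetical input: for each presentation, the citation counts of the
# previously published papers linked from its abstract (via DOI or handle).
presentations = {
    "Presentation A": [12],      # based on one published paper
    "Presentation B": [4, 6],    # based on two published papers
    "Presentation C": [3],       # based on one published paper
}


def citations_per_presentation(linked):
    """Average the citation counts of the papers behind each presentation.

    Presentations without any linked paper are skipped, because only
    published studies can be measured.
    """
    return {title: mean(counts) for title, counts in linked.items() if counts}


scores = citations_per_presentation(presentations)
print(scores)
```

Each presentation then contributes a single value to the conference-level indices, regardless of how many papers it is based on.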

Because this type of accreditation depends on previously published papers, the term “secondary” may be added before the indices. For example, secondary CiteScore (SSC), secondary impact factor (SIF), secondary Hirsch index (Sh-index) may be used to differentiate between the previously published papers and the abstracts (Table 1).

Table 1. List of 3 presentations that come from 4 previously published papers to be introduced at a conference.

Some conferences accept findings that have not been published or were presented at other conferences. In this case, the abstract will not be linked to a previously published paper. It should be noted that presentations of the same findings to the same audience should be avoided. However, different parts of a previously published paper or different aspects of a clinical trial may be presented in different conferences.

Where the secondary CiteScore (SSC) for the conference is calculated based on the average:

In addition, the secondary h-index (Sh-index) is the maximum value of h such that the given conference has published h papers that have each been cited at least h times. In the example, the calculated Sh-index for the conference is 3 because there are three publications that each have at least three citations.
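A minimal Python sketch of these two conference-level calculations is given below. It uses the standard h-index definition (the largest h such that h items each have at least h citations) and assumes a plain arithmetic mean for the averaged index. The function names and the input values are hypothetical and are not the values of Table 1.

```python
def secondary_h_index(citation_counts):
    """Largest h such that h presentations each have at least h citations."""
    ranked = sorted(citation_counts, reverse=True)
    h = 0
    for rank, count in enumerate(ranked, start=1):
        if count >= rank:
            h = rank      # the top `rank` items all have >= rank citations
        else:
            break
    return h


def secondary_average(values):
    """Arithmetic mean of a per-presentation metric (assumed averaging rule)."""
    if not values:
        raise ValueError("at least one linked publication is required")
    return sum(values) / len(values)


# Hypothetical per-presentation citation counts for one conference.
counts = [12, 5, 3]
print(secondary_h_index(counts))            # Sh-index of the conference
print(round(secondary_average(counts), 2))  # averaged secondary index
```

With these hypothetical counts, three presentations each have at least three citations, so the sketch returns an Sh-index of 3.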

The present letter proposes a bibliometric measure that enables all academicians to evaluate conference quality before attending. Publishing an article may take a long time (e.g., more than one year) in some disciplines. During this period, there may be new publications that may be cited as references. Hence, presenting the paper is an excellent opportunity to identify the strength of an idea and additional research that has been accomplished in a particular field. It has to be mentioned, however, that there is no evaluation available for unpublished and non-peer-reviewed manuscripts. Hence, only published studies may be used for bibliometric measurement.

It has to be pointed out that popular accreditation systems such as “impact factor” have their own disadvantages. For instance, “impact factor” depends on the size of the field/discipline. A larger research community would draw more citations than one with a small number of publications. These limitations have motivated academics to introduce other bibliometric measurements. To date, there is no universal acceptance of the accreditation systems. Thus, it may not be possible to solve the issue completely until there is a new accreditation system for conferences. Our proposed bibliometric measurement therefore has its pros and cons. Nevertheless, the present letter will help researchers identify and avoid participating in predatory conferences. In our opinion, this letter brings predatory conferences to the attention of scholars to stimulate some thought about this issue.

Author Contributions

Writing—original draft preparation, P.M., A.N.; writing—review and editing, P.M., A.N., and F.R.T. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

No new data were created or analyzed in this study. Data sharing is not applicable to this article.

Conflicts of Interest

The authors declare no conflict of interest.

References

1. Richardson, J.; Zikic, J. The darker side of an international academic career. Career Dev. Int. 2007, 12, 164–186.

2. Beall, J. Predatory publishers are corrupting open access. Nature 2012, 489, 179.

3. Cobey, K.D.; De Costa E Silva, M.; Mazzarello, S.; Stober, C.; Hutton, B.; Moher, D.; Clemons, M. Is this conference for real? Navigating presumed predatory conference invitations. J. Oncol. Pract. 2017, 13, 410–413.

4. Heasman, P.A. Unravelling the mysteries of predatory conferences. Br. Dent. J. 2019, 226, 228–230.

5. Foster, C.; Wager, E.; Marchington, J.; Patel, M.; Banner, S.; Kennard, N.C.; Panayi, A.; Stacey, R. Good Practice for Conference Abstracts and Presentations: GPCAP. Res. Integr. Peer Rev. 2019, 4, 11.

6. Scherer, R.W.; Meerpohl, J.J.; Pfeifer, N.; Schmucker, C.; Schwarzer, G.; von Elm, E. Full publication of results initially presented in abstracts. Cochrane Database Syst. Rev. 2018, 2018, MR000005.

7. Cress, P.E. Are predatory conferences the dark side of the open access movement? Aesthetic Surg. J. 2017, 37, 734–738.

8. Kolata, G. Scientific Articles Accepted (Personal Checks, Too). New York Times 2013, 7, 6–9.

9. Strielkowski, W. Predatory journals: Beall’s List is missed. Nature 2017, 544, 416.

10. Keogh, A. Beware predatory journals. Br. Dent. J. 2020, 228, 317.

11. Abramo, G.; Andrea D’Angelo, C.; Felici, G. Predicting publication long-term impact through a combination of early citations and journal impact factor. J. Informetr. 2019, 13, 32–49.

12. Kumari, P.; Kumar, R. Scientometric Analysis of Computer Science Publications in Journals and Conferences with Publication Patterns. J. Scientometr. Res. 2020, 9, 54–62.

13. Bornmann, L.; Williams, R. Can the journal impact factor be used as a criterion for the selection of junior researchers? A large-scale empirical study based on researcherID data. J. Informetr. 2017, 11, 788–799.

14. Belkadhi, K.; Trabelsi, A. Toward a stochastically robust normalized impact factor against fraud and scams. Scientometrics 2020, 124, 1871–1884.

15. Walters, W.H. Do subjective journal ratings represent whole journals or typical articles? Unweighted or weighted citation impact? J. Informetr. 2017, 11, 730–744.

16. Villaseñor-Almaraz, M.; Islas-Serrano, J.; Murata, C.; Roldan-Valadez, E. Impact factor correlations with Scimago Journal Rank, Source Normalized Impact per Paper, Eigenfactor Score, and the CiteScore in Radiology, Nuclear Medicine & Medical Imaging journals. Radiol. Medica 2019, 124, 495–504.

17. Meho, L.I. Using Scopus’s CiteScore for assessing the quality of computer science conferences. J. Informetr. 2019, 13, 419–433.

18. Bornmann, L.; Daniel, H.D. What do we know about the h index? J. Am. Soc. Inf. Sci. Technol. 2007, 58, 1381–1385.

19. Therattil, P.J.; Hoppe, I.C.; Granick, M.S.; Lee, E.S. Application of the h-index in academic plastic surgery. Ann. Plast. Surg. 2016, 76, 545–549.

20. Teixeira da Silva, J.A.; Memon, A.R. CiteScore: A cite for sore eyes, or a valuable, transparent metric? Scientometrics 2017, 111, 553–556.

21. Moed, H.F. From Journal Impact Factor to SJR, Eigenfactor, SNIP, CiteScore and Usage Factor. In Applied Evaluative Informetrics; Springer: Berlin/Heidelberg, Germany, 2017; pp. 229–244.

22. Bornmann, L.; Marx, W. The journal Impact Factor and alternative metrics. EMBO Rep. 2016, 17, 1094–1097.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).