Abstract

The changing world of scholarly communication and the emerging new wave of ‘Open Science’ or ‘Open Research’ has brought to light a number of controversial and hotly debated topics. Evidence-based rational debate is regularly drowned out by misinformed or exaggerated rhetoric, which does not benefit the evolving system of scholarly communication. This article aims to provide a baseline evidence framework for ten of the most contested topics, in order to help frame and move forward discussions, practices, and policies. We address issues around preprints and scooping, the practice of copyright transfer, the function of peer review, predatory publishers, and the legitimacy of ‘global’ databases. These arguments and data will be a powerful tool against misinformation across wider academic research, policy and practice, and will inform changes within the rapidly evolving scholarly publishing system.

1. Introduction

Scholarly publishing evokes various positions and passions. For example, authors may spend hours struggling with diverse article submission systems, often converting document formatting between a multitude of journal and conference styles, and sometimes spend months waiting for peer review results. The drawn-out and often contentious societal and technological transition to Open Access and Open Science/Open Research, particularly across North America and Europe (Latin America has widely adopted ‘Acceso Abierto’ for more than two decades [1]), has led both Open Science advocates and defenders of the status quo to adopt increasingly entrenched positions. Much debate takes place on social media, where echo chambers can breed dogmatic partisanship and character limits leave little room for nuance. Against this backdrop, spurious, misinformed, or even deceptive arguments are sometimes deployed, intentionally or not. With established positions and vested interests on all sides, misleading arguments circulate freely and can be difficult to counter.

Furthermore, while Open Access to publications originated as a grassroots movement born in scholarly circles and academic libraries, policymakers and research funders have now taken on a prescriptive role in the area of (open) scholarly practices [2,3,4]. This brings in new stakeholders who introduce topics and arguments relating to career incentives, research evaluation, and business models for publicly funded research. In particular, the announcement of ‘Plan S’ in 2018 seems to have catalyzed a new wave of debate in scholarly communication, surfacing old and new tensions. While such discussions are by no means new in this ecosystem, this highlights a potential knowledge gap for key components of scholarly communication and the need for better-informed debates [5].

Here, we address ten key topics that are vigorously debated, but where pervasive misunderstandings often derail, undercut, or distort discussions1. We aim to develop a base level of common understanding concerning core issues, which can be leveraged to advance discussions on the current state and best practices of academic publishing. We summarize the most up-to-date empirical research and provide critical commentary, while acknowledging cases where further discussion is still needed. Numerous “hot topics” were identified through a discussion on Twitter2 and then distilled into ten by the authors of this article; they are presented in no particular order of importance. These issues overlap, and some are closely related (e.g., those on peer review). We have structured the discussion in this way to focus on the precise issues that need addressing. We, the authors, are an international group from a range of backgrounds, with a variety of experiences in scholarly communication (e.g., publishing, policy, journalism, multiple research disciplines, editorial and peer review, technology, advocacy). Finally, we are writing in our personal capacities.

2. Ten Hot Topics to Address

2.1. Topic 1: Will preprints get your research ‘scooped’?

A ‘preprint’ is typically a version of a research paper that is shared on an online platform prior to, or during, a formal peer review process [6,7,8]. Preprint platforms have become popular in many disciplines due to the increasing drive towards open access publishing and can be publisher- or community-led. A range of discipline-specific or cross-domain platforms now exist [9].

A persistent issue surrounding preprints is the concern that work may be at risk of being plagiarized or ‘scooped’ (meaning that the same or similar research will be published by others without proper attribution to the original source) if the original is publicly available without the stamp of approval from peer reviewers and traditional journals [10]. These concerns are often amplified as competition for academic jobs and funding increases, and are perceived to be particularly problematic for early-career researchers and other higher-risk demographics within academia.

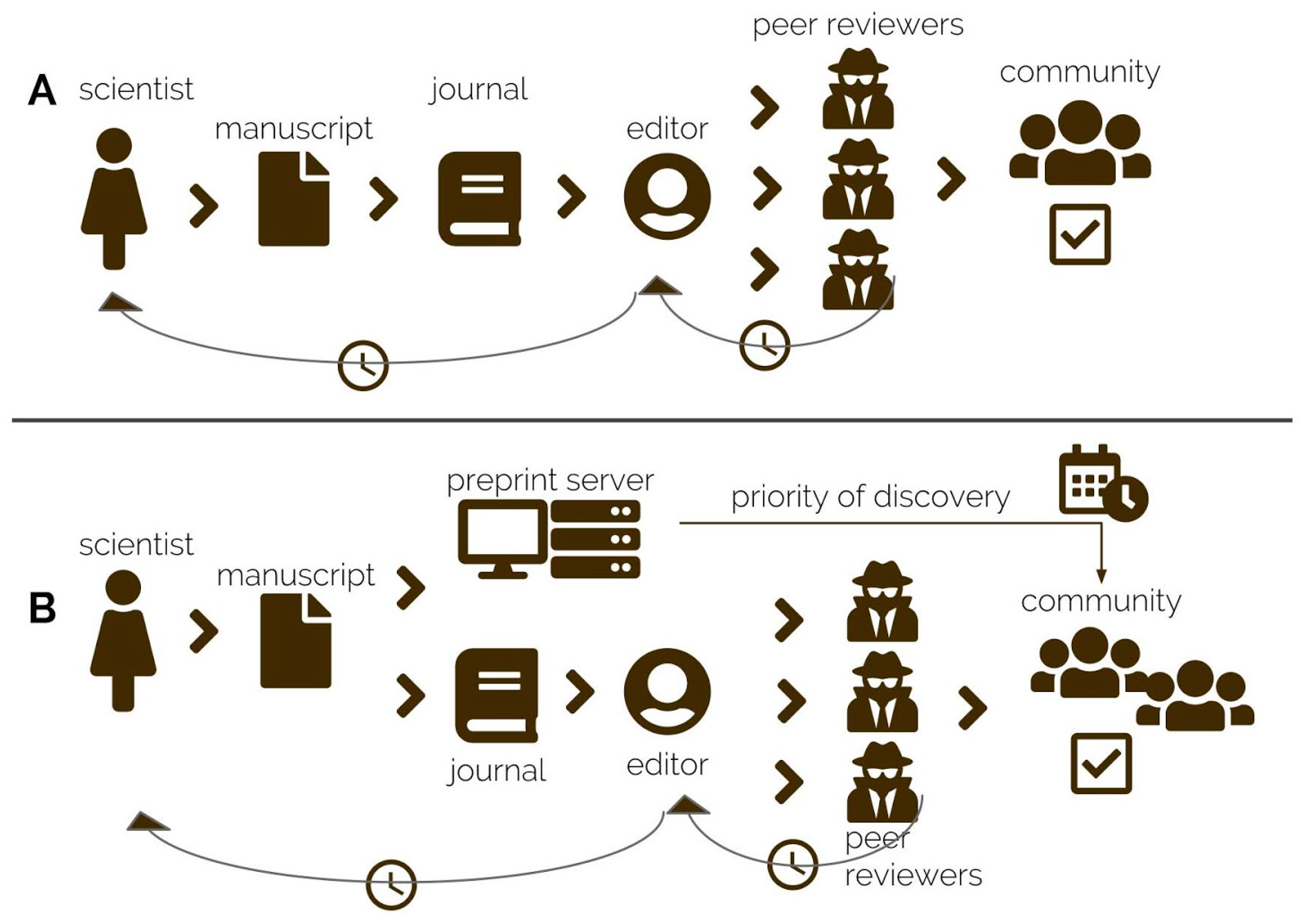

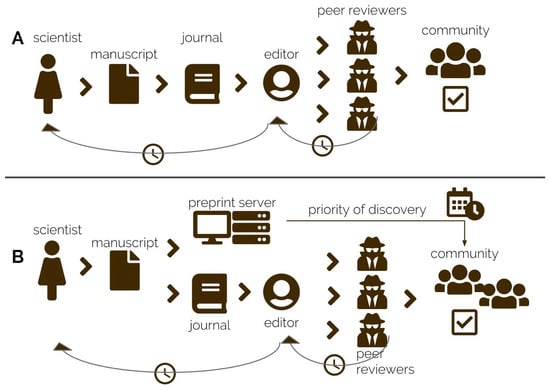

However, preprints protect against scooping [11]. Given the differences between traditional peer-review-based publishing models and the deposition of an article on a preprint server, ‘scooping’ is less likely for manuscripts first shared as preprints. In a traditional publishing scenario, the time from manuscript submission to acceptance and to final publication can range from a few weeks to years, and a manuscript may go through several rounds of revision and resubmission before final publication ([12], see Figure 1). During this time, the same work may be extensively discussed with external collaborators, presented at conferences, and read by editors and reviewers in related areas of research. Yet there is no official open record of that process (e.g., peer reviewers are normally anonymous and reports remain largely unpublished), and if an identical or very similar paper were published while the original was still under review, it would be impossible to establish provenance.

Figure 1.

(A) Traditional peer review publishing workflow. (B) Preprint submission establishing priority of discovery.

Preprints provide a time-stamp at the time of posting, which establishes the “priority of discovery” for scientific claims ([13], Figure 1). Thus, a preprint can act as proof of provenance for research ideas, data, code, models, and results [14]. Because the majority of preprints carry a persistent identifier, usually a Digital Object Identifier (DOI), they are also easy to cite and track, and articles published as preprints tend to accumulate more citations, and at a faster rate [15]. Thus, if one were ‘scooped’ without adequate acknowledgment, this could be pursued as a case of academic misconduct and plagiarism.

To the best of our knowledge, there is virtually no evidence that ‘scooping’ of research via preprints exists, not even in communities that have broadly used the arXiv server for sharing preprints since 1991. Should scooping emerge as the preprint system continues to grow, it can be dealt with as academic malpractice. ASAPbio includes a series of hypothetical scooping scenarios as part of its preprint FAQ and concludes that the overall benefits of using preprints vastly outweigh any potential issues around scooping3. Indeed, the benefits of preprints, especially for early-career researchers, seem to outweigh any perceived risk: rapid sharing of academic research, open access without author-facing charges, establishing priority of discoveries, receiving wider feedback in parallel with or before peer review, and facilitating wider collaborations [11]. That being said, in research disciplines that have not yet widely adopted preprints, scooping should still be acknowledged as a potential threat, and protocols should be put in place in case it occurs.

2.2. Topic 2: Do the Journal Impact Factor and journal brand measure the quality of authors and their research?

The journal impact factor (JIF) was originally designed by Eugene Garfield as a metric to help librarians decide which journals were worth subscribing to. For a given year, the JIF counts the citations received in that year by items the journal published in the two preceding years, and divides that count by the number of citable items the journal published in those two years. Since then, the JIF has come to be treated as a mark of journal ‘quality’ and is now widely used to evaluate research and researchers instead, even at the institutional level. It thus has a significant impact on steering research practices and behaviors [16,17,18].
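For concreteness, the standard two-year JIF of a journal for year Y can be written as follows. The symbols C and N are our own notation, introduced only for this illustration.

```latex
\mathrm{JIF}_{Y} = \frac{C_{Y}(Y-1) + C_{Y}(Y-2)}{N_{Y-1} + N_{Y-2}}
```

Here C_Y(y) is the number of citations received in year Y by items the journal published in year y, and N_y is the number of citable items it published in year y. Because the result is an arithmetic mean taken over all citable items, a handful of very highly cited papers can dominate it.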

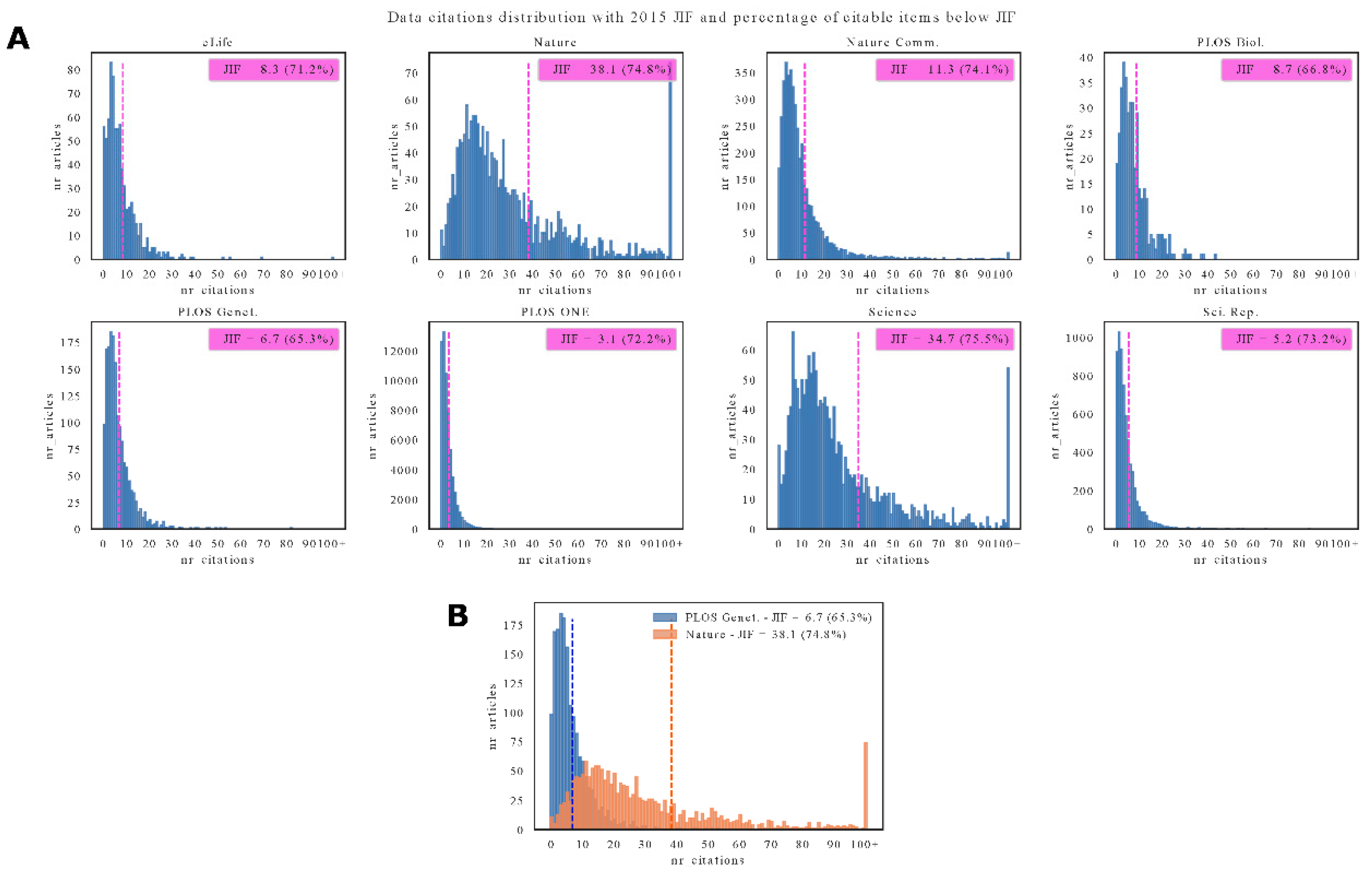

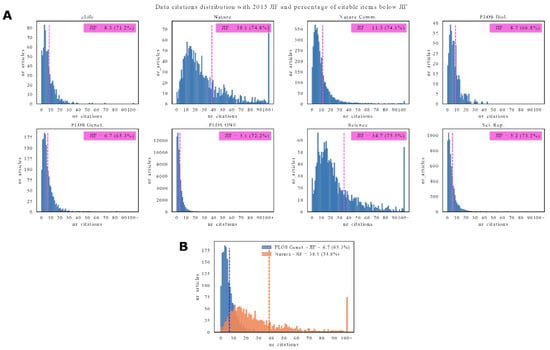

However, this usage of the JIF metric is fundamentally flawed: by the early 1990s it was already clear that using the arithmetic mean in its calculation is problematic, because citation distributions are skewed. Figure 2 shows citation distributions for eight selected journals (data from Larivière et al., 2016 [19]), along with their JIFs and the percentage of citable items below the JIF. The distributions are clearly skewed, making the arithmetic mean an inappropriate statistic for saying anything about individual papers (and the authors of those papers) within the distributions. More informative and readily available article-level metrics can be used instead, such as citation counts or ‘altmetrics’, along with other qualitative and quantitative measures of research ‘impact’ [20,21].

Figure 2.

(A) Citation distributions for eight selected journals. Each plot reports the 2015 journal impact factor (JIF) and, in parentheses, the percentage of citable items below the JIF. Data from https://www.biorxiv.org/content/10.1101/062109v2. (B) Detail of the citation distributions for two selected journals: PLOS Genetics and Nature. A few highly cited articles push the 2015 JIF of Nature to 38.1. All code and data needed to reproduce these figures are on Zenodo: http://doi.org/10.5281/zenodo.2647404.
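The statistical point behind Figure 2 can be illustrated with a minimal Python sketch. It computes a JIF-like arithmetic mean, the median, and the share of items cited less often than the mean for a small set of citation counts. The counts and the helper name `summarize_citations` are hypothetical, invented for illustration; they are not taken from Figure 2 or from any real journal.

```python
from statistics import mean, median


def summarize_citations(counts):
    """Return the mean (JIF-like), the median, and the share of items cited less than the mean."""
    if not counts:
        raise ValueError("need at least one citable item")
    avg = mean(counts)  # arithmetic mean, the statistic a JIF reports
    below = sum(1 for c in counts if c < avg)  # items cited less often than the mean
    return {
        "mean": avg,
        "median": median(counts),
        "share_below_mean": below / len(counts),
    }


# Hypothetical citation counts for 20 citable items (illustrative only, not real journal data).
citations = [0, 0, 0, 1, 1, 1, 2, 2, 2, 3, 3, 4, 4, 5, 6, 8, 10, 15, 40, 93]

stats = summarize_citations(citations)
print(f"mean (JIF-like): {stats['mean']:.1f}")
print(f"median: {stats['median']:.1f}")
print(f"share below mean: {stats['share_below_mean']:.0%}")
```

With these invented counts, the mean is 10.0 citations while the median is 3.0, and 80% of the items are cited less often than the mean; the two most-cited items alone account for 133 of the 200 citations. The median is used here only as one example of a statistic that is less sensitive to a few extreme values; a gap of this kind is what the percentage of citable items below the JIF in Figure 2 captures.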

About ten years ago, national and international research funding institutions pointed out that numerical indicators such as the JIF should not be deemed a measure of quality4. In fact, the JIF is a highly manipulated metric [22,23,24], and its continued widespread use beyond its original narrow purpose seems to stem from its simplicity (an easily calculated and compared number) rather than from any actual relationship to research quality [25,26,27].

Empirical evidence shows that the misuse of the JIF (and of journal ranking metrics in general) has negative consequences for the scholarly communication system. These include conflating the outreach of a journal with the quality of individual papers, and insufficient coverage of the social sciences and humanities, as well as of research outputs from Latin America, Africa, and South-East Asia [28]. Additional drawbacks include marginalizing research in vernacular languages and on locally relevant topics, inducing unethical authorship and citation practices, and fostering a reputation economy in academia based on publishers’ prestige rather than on actual research qualities such as rigorous methods, replicability, and social impact. Using journal prestige and the JIF to cultivate a competition regime in academia has had deleterious effects on research quality [29].

Despite its inappropriateness, many countries regularly use JIFs to evaluate research [18,30,31], creating a two-tier scoring system that automatically assigns a higher score (e.g., type A) to papers published in JIF-bearing or internationally indexed journals and a lower score (e.g., type B) to those published locally. Most recently, the organization that formally calculates the JIF released a report outlining its questionable use5. Nonetheless, outstanding issues remain around the opacity of the metric and the fact that it is often negotiated by publishers [32], and these integrity problems appear to have done little to curb its widespread misuse.

A number of regional focal points and initiatives now provide and suggest alternative research assessment systems, including key documents such as the Leiden Manifesto6 and the San Francisco Declaration on Research Assessment (DORA)7. Recent developments around ‘Plan S’ call for adopting and implementing such initiatives alongside fundamental changes in the scholarly communication system8. Thus, there is little basis for connecting JIFs with any quality measure, and inappropriately associating the two will continue to have deleterious effects. To provide appropriate measures of quality for authors and research, concepts of research excellence should be remodeled around transparent workflows and accessible research results [21,33,34].

2.3. Topic 3: Does approval by peer review prove that you can trust a research paper, its data and the reported conclusions?

Researchers have peer reviewed manuscripts prior to publication in a variety of ways since the 18th century [35,36]. The main goal of this practice is to improve the relevance and accuracy of scientific discussions by contributing knowledge, perspective, and experience. Even though experts often criticize peer review for a number of reasons, the process is still often considered the “gold standard” of science [37,38]. Occasionally, however, peer review approves studies that are later found to be wrong, and deceptive or fraudulent results are rarely discovered prior to publication [39,40]. Thus, there seems to be a discord between the ideology behind peer review and its actual practice. Because the imperfection of peer review is not effectively communicated, the message conveyed to the wider public is that studies published in peer-reviewed journals are “true” and that peer review protects the literature from flawed science. Yet many elements of peer review are the subject of well-established criticisms [41,42,43]. In the following, we describe cases of the wider impact of inappropriate peer review on public understanding of the scientific literature.

Examples from several areas of science show scientists elevating the importance of peer review for research that was questionable or corrupted. For instance, climate change skeptics have published studies in the journal Energy and Environment, attempting to undermine the body of research that shows how human activity impacts the Earth’s climate. Politicians in the United States who downplay the science of climate change have then cited this journal on several occasions in speeches and reports9.

At times, peer review has been exposed as a process that was orchestrated for a preconceived outcome. The New York Times gained access to confidential peer review documents for studies sponsored by the National Football League (NFL) that were cited as scientific evidence that brain injuries do not cause long-term harm to its players10. During the peer review process, the authors stated that all NFL players were included in the study, a claim that the reporters found to be false by examining the database used for the research. Furthermore, The Times noted that the NFL sought to legitimize the studies’ methods and conclusions by citing a “rigorous, confidential peer-review process” despite evidence that some peer reviewers seemed “desperate” to stop their publication. Such behavior reflects a tension between reviewers wishing to prevent the publication of flawed research and publishers who wish to publish highly topical studies on subjects such as the NFL. Recent research has also demonstrated that widespread industry funding for published medical research often goes undeclared, and that such conflicts of interest are not appropriately addressed by peer review [44,45].

Another problem that peer review often fails to catch is ghostwriting, a process by which companies draft articles for academics who then publish them in journals, sometimes with few or no changes [46,47]. These studies can then be used for political, regulatory, and marketing purposes. In 2010, the US Senate Finance Committee released a report that found this practice was widespread, that it corrupted the scientific literature, and that it increased prescription rates11. Ghostwritten articles have appeared in dozens of journals, involving professors at several universities12. Recent court documents have revealed that Monsanto ghostwrote articles to counter government assessments of the carcinogenicity of the pesticide glyphosate and to attack the International Agency for Research on Cancer13. Thus, peer review seems to be largely inadequate for exposing or detecting conflicts of interest and for mitigating their potential impact.

Scientists understand that peer review is a human process, with human failings, and that despite its limitations, we need it. But these subtleties are lost on the general public, who often hear only that publication in a peer-reviewed journal is the “gold standard” and can erroneously equate published research with the truth. Thus, more care must be taken over how peer review, and the results of peer-reviewed research, are communicated to non-specialist audiences, particularly at a time when a range of technical changes and a deeper appreciation of the complexities of peer review are emerging [48,49,50,51,52]. This will be especially important as the scholarly publishing system confronts wider issues such as retractions [39,53,54] and replication or reproducibility ‘crises’ [55,56,57].

2.4. Topic 4: Will the quality of the scientific literature suffer without journal-imposed peer review?

Peer review, without a doubt, is integral to scientific scholarship and discourse. Often, however, this central scholarly component is co-opted for administrative goals: gatekeeping, filtering, and signaling. Its gatekeeping role is believed to be necessary to maintain the quality of the scientific literature [58,59]. Furthermore, some have argued that without the filter provided by peer review, the literature risks becoming a dumping ground for unreliable results, researchers will not be able to separate signal from noise, and scientific progress will slow [60,61]. These beliefs can be detrimental to scientific practice.

The previous section argued that journal-imposed peer review is not an effective gatekeeper, and that ‘bad science’ frequently enters the scholarly record. One possible reaction is to think that the shortcomings of the current system can be overcome with more oversight, stronger filtering, and more gatekeeping. A common argument in favor of such initiatives is the belief that a filter is needed to maintain the integrity of the scientific literature [62,63]. But if the current model is ineffective, there is little rationale for doubling down on it. Instead of more oversight and filtering, why not less?

The key point is that if anyone has a vested interest in the quality of a particular piece of work, it is surely the author. Only the authors could have, as Feynman (1974)14 puts it, the “extra type of integrity that is beyond not lying, but bending over backwards to show how you’re maybe wrong, that you ought to have when acting as a scientist.” If anything, the current peer review process and academic system penalize, or at least fail to incentivize, such integrity.

Instead, the credibility conferred by the “peer-reviewed” label diminishes what Feynman calls the culture of doubt necessary for science to operate as a self-correcting, truth-seeking process [64]. The troubling effects of this can be seen in the ongoing replication crisis, hoaxes, and widespread outrage over the inefficacy of the current system [35,41]. This issue is further exacerbated by the fact that peer-reviewed research is rarely read or used by experts alone (see Topic 3), and the wider public impact of this problem remains poorly understood (although see, for example, the anti-vaccination movement). It is common to think that more oversight is the answer, but peer reviewers are not at all lacking in skepticism. The issue is not the skepticism shared by the select few who determine whether an article passes through the filter. It is the validation, and the accompanying lack of skepticism from both the scientific community and the general public, that comes afterwards15. Here again, more oversight only adds to the impression that peer review ensures quality, thereby further diminishing the culture of doubt and counteracting the spirit of scientific inquiry16.

Quality research—even some of our most fundamental scientific discoveries—dates back centuries, long before peer review took its current form [35,36,65]. Whatever peer review existed centuries ago, it took a different form than it does now, without the influence of large, commercial publishing companies or a pervasive culture of publish-or-perish [65]. Though in its initial conception it was often a laborious and time-consuming task, researchers took peer review on nonetheless, not out of obligation but out of duty to uphold the integrity of their own scholarship. They managed to do so, for the most part, without the aid of centralized journals, editors, or any formalized or institutionalized process. Modern technology, which makes it possible to communicate instantaneously with scholars around the globe, only makes such scholarly exchanges easier, and presents an opportunity to restore peer review to its purer scholarly form, as a discourse in which researchers engage with one another to better clarify, understand, and communicate their insights [51,66].

A number of measures can be taken towards this objective, including posting results to preprint servers, preregistration of studies, open peer review, and other open science practices [56,67,68]. In many of these initiatives, however, the role of gatekeeping remains prominent, as if it were a necessary feature of all scholarly communication. The discussion in this section suggests otherwise, but such a “myth” cannot be fully disproven without a proper, real-world implementation to test it. All of the new and ongoing developments around peer review [43] demonstrate researchers’ desire for more than what many traditional journals can offer. They also show that researchers can be entrusted to perform their own quality control independent of journal-coupled review. After all, the outcry over the inefficiencies of traditional journals centers on their inability to provide sufficiently rigorous scrutiny, and on the outsourcing of critical thinking to a concealed and poorly understood process. Thus, the strong coupling between journals and peer review, treated as a requirement to protect scientific integrity, seems to undermine the very foundations of scholarly inquiry.

To test the hypothesis that filtering is unnecessary for quality control, many traditional publication practices must be redesigned, editorial boards must be repurposed, and authors must be granted control over the peer review of their own work. Putting authors in charge of their own peer review serves a dual purpose. On the one hand, it removes the conferral of quality within the traditional system, thus eliminating the prestige associated with the simple act of publishing. Perhaps paradoxically, removing this barrier might actually increase the quality of published work, as it eliminates the cachet of publishing for its own sake. On the other hand, readers, both scientists and laypeople, know that there is no filter, so they must interpret anything they read with a healthy dose of skepticism, thereby naturally restoring the culture of doubt to scientific practice [69,70,71].

In addition to concerns about the quality of work produced by well-meaning researchers, there are concerns that a truly open system would allow the literature to be populated with junk and propaganda by vested interests. Though a full analysis of this issue is beyond the scope of this section, we once again emphasize how the conventional model of peer review diminishes the healthy skepticism that is a hallmark of scientific inquiry, and thus confers credibility upon subversive attempts to infiltrate the literature. As we have argued elsewhere, there is reason to believe that allowing such “junk” to be published makes individual articles less reliable but renders the overall literature more robust by fostering a “culture of doubt” [72].

We are not suggesting that peer review should be abandoned. Indeed, we believe that peer review is a valuable tool for scientific discourse and a proper implementation will improve the overall quality of the literature. One essential component of what we believe to be a proper implementation of peer review is facilitating an open dialogue between authors and readers. This provides a forum for readers to explain why they disagree with the authors’ claims. This gives authors the opportunity to revise and improve their work, and gives non-experts a clue as to whether results in the article are reliable.

Only a few initiatives have taken steps to properly test the ‘myth’ highlighted in this section; one among them is Researchers.One, a non-profit peer review publication platform featuring a novel author-driven peer review process [69]. Other similar examples include the Self-Journal of Science, PRElights, and The Winnower. While it is too early in these tests to conclude that the ‘myth’ in this section has been busted, both logic and empirical evidence point in that direction. An important message, and a key to advancing peer review reform, is optimism: researchers are more than capable of ensuring their own quality control; they just need the means and the authority to do so.

2.5. Topic 5: Is Open Access responsible for creating predatory publishers?

Predatory publishing does not refer to a homogeneous category of practices. The term itself was coined by American librarian Jeffrey Beall, who created a list of “deceptive and fraudulent” Open Access (OA) publishers that was used as a reference until it was withdrawn in 2017. The term has since been reused for a new for-profit database by Cabell’s International [73]. On the one hand, Beall’s list and Cabell’s International database do include truly fraudulent and deceptive OA publishers, which pretend to provide services (in particular quality peer review) that they do not implement, show fictive editorial boards and/or ISSNs, use dubious marketing and spamming techniques, or even hijack known titles [74]. On the other hand, they also list journals with subpar standards of peer review and linguistic correction [75]. The number of predatory journals thus defined has grown exponentially since 2010 [76,77]. Exposés of unethical practices in the OA publishing industry have also attracted considerable media attention [78].

Nevertheless, papers published by predatory publishers still represent only a small proportion of all papers published in OA journals. Most OA publishers ensure their quality by registering their titles in the DOAJ (Directory of Open Access Journals) and complying with a standardized set of conditions17. A recent study has shown that Beall’s criteria of “predatory” publishing were in no way limited to OA publishers and that, when they were applied to both OA and non-OA journals in the field of Library and Information Science, even top-tier non-OA journals could be classified as predatory ([79]; see Shamseer et al. [80] on the difficulties of demarcating predatory and non-predatory journals in Biomedicine). If a causal connection is to be made in this regard, it is thus not between predatory practices and OA. Instead, it is between predatory publishing and the unethical use of one of the many OA business models, adopted by a minority of DOAJ-registered journals [81]. This is the author-facing article-processing charge (APC) business model, in which authors, rather than readers, pay [82] (see also Topic 7). Such a model may indeed give publishers that behave unethically or unprofessionally an incentive to favor quantity over quality, in particular when combined with the often-unlimited space available online. APCs have gained increasing popularity in the last two decades as a business model for OA due to the guaranteed revenue streams they offer, as well as a lack of competitive pricing within the OA market, which allows vendors full control over how much they choose to charge [83]. However, subscription-based systems can also create an incentive to publish more papers and to use this as a justification for raising subscription prices, as demonstrated, for example, by Elsevier’s statement on ‘double-dipping’18.

Ultimately, quality control is not related to the number of papers published, but to editorial policies and standards and their enforcement. In this regard, it is important to note the emergence of journals and platforms that select purely on (peer-reviewed) methodological quality, often enabled by the APC model and the lack of space restrictions in online publishing. In this way, the APC model, when used responsibly, does not conflict with the publication of more high-quality articles.

Most predatory OA publishers, and the authors who publish with them, appear to be based in Asia and Africa, as well as in Europe and the Americas [84,85,86]. It has been argued that authors who publish in predatory journals may do so unwittingly and without unethical intent, owing to concerns that North American and European journals might be prejudiced against scholars from non-western countries, high publication pressure, or a lack of research proficiency [87,88]. Hence predatory publishing also raises questions about the geopolitical and commercial context of scholarly knowledge production. Nigerian researchers, for example, publish in predatory journals due to the pressure to publish internationally while having little to no access to Western international journals, or due to the often higher APCs charged by mainstream OA journals [89]. More generally, the criteria adopted by high-JIF journals, including the quality of the English language, the composition of the editorial board, or the rigor of the peer review process itself, tend to favor familiar content from the “center” rather than the “periphery” [90]. It is thus important to distinguish between exploitative publishers and journals, whether OA or not, and legitimate OA initiatives that have varying standards in digital publishing but may improve and disseminate epistemic content [91,92]. In Latin America, a highly successful system of OA publishing that is free of charge for authors has been in place for more than two decades, thanks to organizations such as SciELO and REDALYC19.

Openly published review reports are one of a few simple solutions that allow any reader or potential author to directly assess both the quality and the efficiency of a given journal's review system, as well as the value for money of the requested APCs, and thus to evaluate whether a journal has ‘deceptive’ or predatory practices [42,93]. Associating OA with predatory publishing is therefore misleading. Predatory publishing is better understood as one consequence of the economic structure that has emerged around many parts of Open Access20. The real issue lies in the unethical or unprofessional use of a particular business model, and it could largely be resolved with more transparency in the peer review and publication process. What remains clear is that black-and-white interpretations of the scholarly publishing industry should be avoided. A more careful and rigorous understanding of predatory publishing practices is still required [94].

2.6. Topic 6: Is copyright transfer required to publish and protect authors?

Traditional methods of scholarly publishing require complete and exclusive copyright transfer from authors to the publisher, typically as a precondition for publication [95,96,97,98,99]. This process transfers control and ownership over dissemination and reproduction from authors as creators to publishers as disseminators, with the latter then able to monetize the process [65]. The practice is deeply rooted in the pre-digital era of publishing, when publishers controlled distribution on behalf of authors. Today, the transfer and ownership of copyright represents a delicate tension between protecting the rights of authors and the interests, financial as well as reputational, of publishers and institutes [100]. With OA publishing, authors typically retain copyright to their work, and articles and other outputs are released under a variety of licenses depending on the type of output.

The timing of rights transfer is problematic for several reasons. Firstly, because publication usually requires copyright transfer, copyright is rarely transferred freely or acquired without pressure [101]. Secondly, authors have little choice but to sign a copyright transfer agreement, both because publishing drives career progression (publish or perish/publication pressure) and because refusing could waste the time already invested if the review and publication process had to be started afresh. There are power dynamics at play that do not benefit authors, and instead often compromise certain academic freedoms [102]. This might in part explain why authors in scientific research, in contrast to all other industries where original creators receive honoraria or royalties, typically do not receive any payment from publishers at all. It also explains why many authors seem to continue signing away their rights while simultaneously disagreeing with the rationale for doing so [103].

It remains unclear whether such copyright transfer is generally permissible. Research funders or institutes, public museums, or art galleries might have overriding policies stating that copyright over the research, content, or intellectual property that they employ or fund may not be transferred to third parties, commercial or otherwise. Usually a single author signs on behalf of all authors, perhaps without their awareness or permission [101]. Fully understanding copyright transfer agreements requires a firm grasp of legal language and copyright law in an increasingly complex licensing and copyright landscape21,22, and librarians and researchers face a steep learning curve in acquiring it [104,105]. Thus, in many cases, authors might not even have the legal right to transfer full rights to publishers, or agreements may have been amended to allow full texts to be made available in repositories or archives regardless of the subsequent publishing contract23.

This amounts to a fundamental discord between the purpose of copyright (i.e., to grant an author/creator full choice over the dissemination of their works) and its application, because authors lose these rights during copyright transfer. Such fundamental conceptual violations are highlighted by the popular use of sites such as ResearchGate and Sci-Hub for illicit file sharing by academics and the wider public [106,107,108,109,110,111]. Widespread, unrestricted sharing helps science advance faster than paywalled access does. Thus it can be argued that copyright transfer does a fundamental disservice to the entire research enterprise [112]. It is also highly counter-intuitive when learned societies such as the American Psychological Association actively monitor and remove copyrighted content they publish on behalf of authors24, as this is clearly not in the best interests of either authors or the reusability of published research. The fact that authors sharing their own work can constitute copyright infringement, with the possible threat of legal action, shows how counterproductive the system of copyright transfer has become (i.e., original creators lose all control over, and rights to, their own works).

Some commercial publishers, such as Elsevier, engage in ‘nominal copyright’, where they require full and exclusive rights transfer from authors to the publisher for OA articles while the copyright in name stays with the authors [113]. The assumption that this practice is a necessary condition for publication is misleading, since even works that are in the public domain can be repurposed, printed, and disseminated by publishers. Authors can instead grant a simple non-exclusive license to publish, which serves the same purpose. However, according to a 2013 survey by Taylor and Francis, almost half of the researchers surveyed would still be content with copyright transfer for OA articles [114].

Therefore, in scientific research, copyright appears not only largely ineffective in its proposed use, but also perhaps wrongfully acquired in many cases, and in practice it works against its fundamental intended purpose of helping to protect authors and further scientific research. Plan S requires that authors and their respective institutes retain copyright to articles without transferring it to publishers, a position also supported by OA202025. A number of Creative Commons licenses can also be applied to increase the dissemination and re-use of research while protecting authors within a strong legal framework. Thus, we are unaware of a single reason why copyright transfer is required for publication, or indeed of a single case where a publisher has exercised copyright in the best interest of the authors. While publishers might argue that copyright transfer enables them to defend authors against copyright infringements26, publishers can take on this responsibility even when copyright stays with the author, as is the policy of the Royal Society27.

2.7. Topic 7: Does gold Open Access have to cost a lot of money for authors, and is it synonymous with the APC business model?

Too often OA gets conflated with just one route to achieving it: the author-facing APC business model, whereby authors (or institutions or research funders, on their behalf) pay an APC to cover publishing costs [115]. Yet there are a number of routes to OA. These are usually labelled ‘gold’, ‘bronze’, ‘green’, or ‘diamond’, the latter two explicitly having no APCs. Green OA refers to author self-archiving of a near-final version of their work (usually the accepted manuscript or ‘postprint’) on a personal website or a general-purpose repository; the latter is usually preferable because it offers better long-term preservation. Diamond OA refers to availability on the journal website without payment of any APC, while gold OA often requires payment of additional APCs for immediate access upon publication (i.e., all APC-based OA is gold OA, but not all gold OA is APC-based). Bronze OA refers to articles made free to read on the publisher website, but without any explicit open license [116].

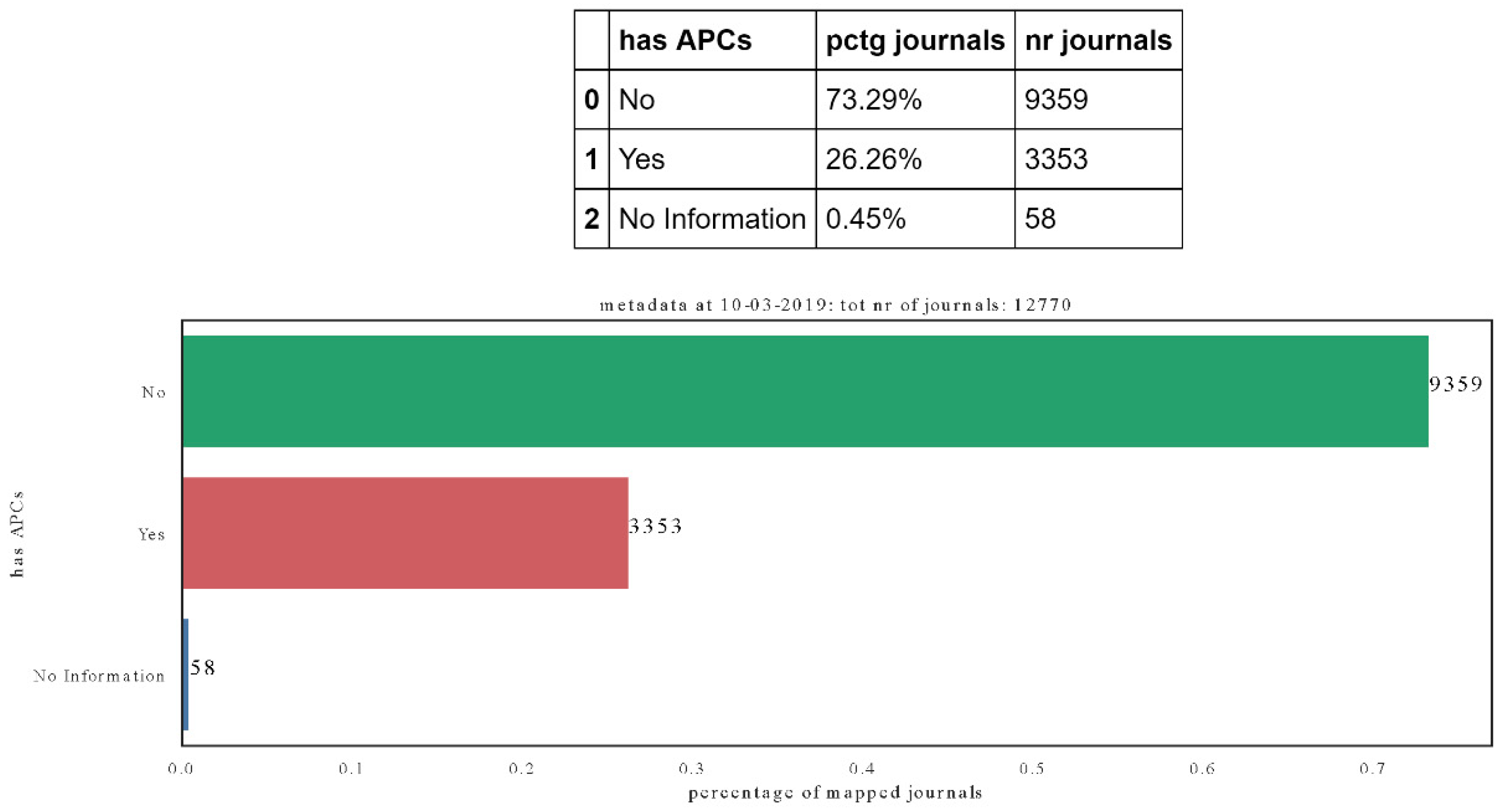

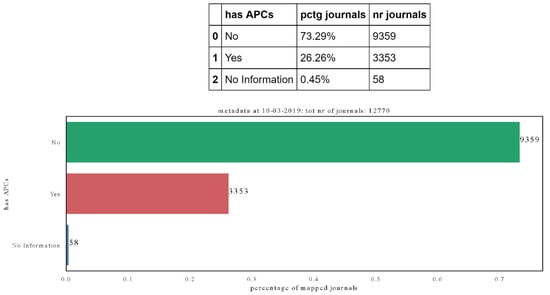

Data from the DOAJ indicate that, of the approximately 11,000 journals it indexes, 71% do not have an APC28 (i.e., are diamond OA), which means they are funded from a range of other sources, such as institutional grants. DOAJ metadata29 accessed on 10 March 2019 show that slightly more than 74% of indexed journals have no APCs (12,770 journals indexed in total; Figure 3). While many of these journals are smaller and more local or regional in scale, and their ‘free to publish’ aspects can be conditional on institutional affiliation30, these data show that the APC model is far from as hegemonic as it is often taken to be. For example, most APC-free journals in Latin America are funded by higher education institutions and do not make publication conditional on institutional affiliation. Measured in articles rather than journals, this equates to around a quarter of a million fee-free OA articles in 2017, based on the DOAJ data [81].

Figure 3.

Proportion of journals indexed in the DOAJ (Directory of Open Access Journals) that charge or do not charge APCs (article-processing charges). For a small portion, the information is not available. All code and data needed to reproduce these figures are on Zenodo: http://doi.org/10.5281/zenodo.2647404.
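The proportions in Figure 3 come down to a simple tally over journal-level metadata. The Python sketch below illustrates that tally under stated assumptions; it is not the code deposited on Zenodo. It assumes a CSV export with one row per journal and a yes/no APC column. The column name `APC` and the function `apc_shares` are hypothetical, because the DOAJ export format has changed over time. Rows with any other value are counted as ‘not available’, matching the small residual category in the figure.

```python
import csv
import io
from collections import Counter

# Hypothetical column name: check the header of the DOAJ export you actually use.
APC_COLUMN = "APC"
LABELS = ("Yes", "No", "Not available")


def apc_shares(csv_text: str, column: str = APC_COLUMN) -> dict:
    """Return the share of journals that do ('Yes'), do not ('No'), or do not
    report ('Not available') charging an APC."""
    counts = Counter()
    for row in csv.DictReader(io.StringIO(csv_text)):
        # Normalise values such as 'yes' or 'NO ' to 'Yes' / 'No'; anything else
        # (including a missing column) is treated as unknown.
        answer = (row.get(column) or "").strip().capitalize()
        counts[answer if answer in LABELS[:2] else "Not available"] += 1
    total = sum(counts.values())
    if total == 0:
        return {label: 0.0 for label in LABELS}
    return {label: counts[label] / total for label in LABELS}


# Toy input (not real DOAJ data): four journals, three without an APC.
sample = """Title,APC
Journal A,No
Journal B,no
Journal C,Yes
Journal D,No
"""
print(apc_shares(sample))  # {'Yes': 0.25, 'No': 0.75, 'Not available': 0.0}
```

Normalising case and whitespace before comparing avoids undercounting journals whose entries are recorded as ‘no’ or ‘NO’. Applied to a real export, the ‘No’ share corresponds to the proportion of APC-free journals discussed above.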

However, many of the larger publishers do levy very high APCs for OA (e.g., Nature Communications, published by Springer Nature, charges USD $5200 per article before VAT31). There is therefore a further potential issue: the high and unsustainable growth in subscription prices may be translated into ever-increasing APCs [117,118]. This would ultimately create a new set of obstacles for authors, as APCs already systematically discriminate against those with less financial privilege, irrespective of what countermeasures are proposed [119]. Therefore, the current implementation of APC-driven OA departs from the original intentions of OA, creating a new barrier for authors and leading to an OA system where ‘the rich get richer’. What remains unclear is how well these APCs reflect the true cost of publication and how they relate to the value added by the publisher. It has been argued that publishers to some extent take quality (as indicated by citation rates per paper) into account when pricing APCs [120], but the available evidence also suggests that some publishers scale their APCs according to external factors such as the JIF or the research discipline [83,121,122]. It is known that ‘hybrid OA’ (where specific articles in subscription journals are made OA for a fee) generally costs more than ‘gold OA’ and can offer a lower quality of service32.

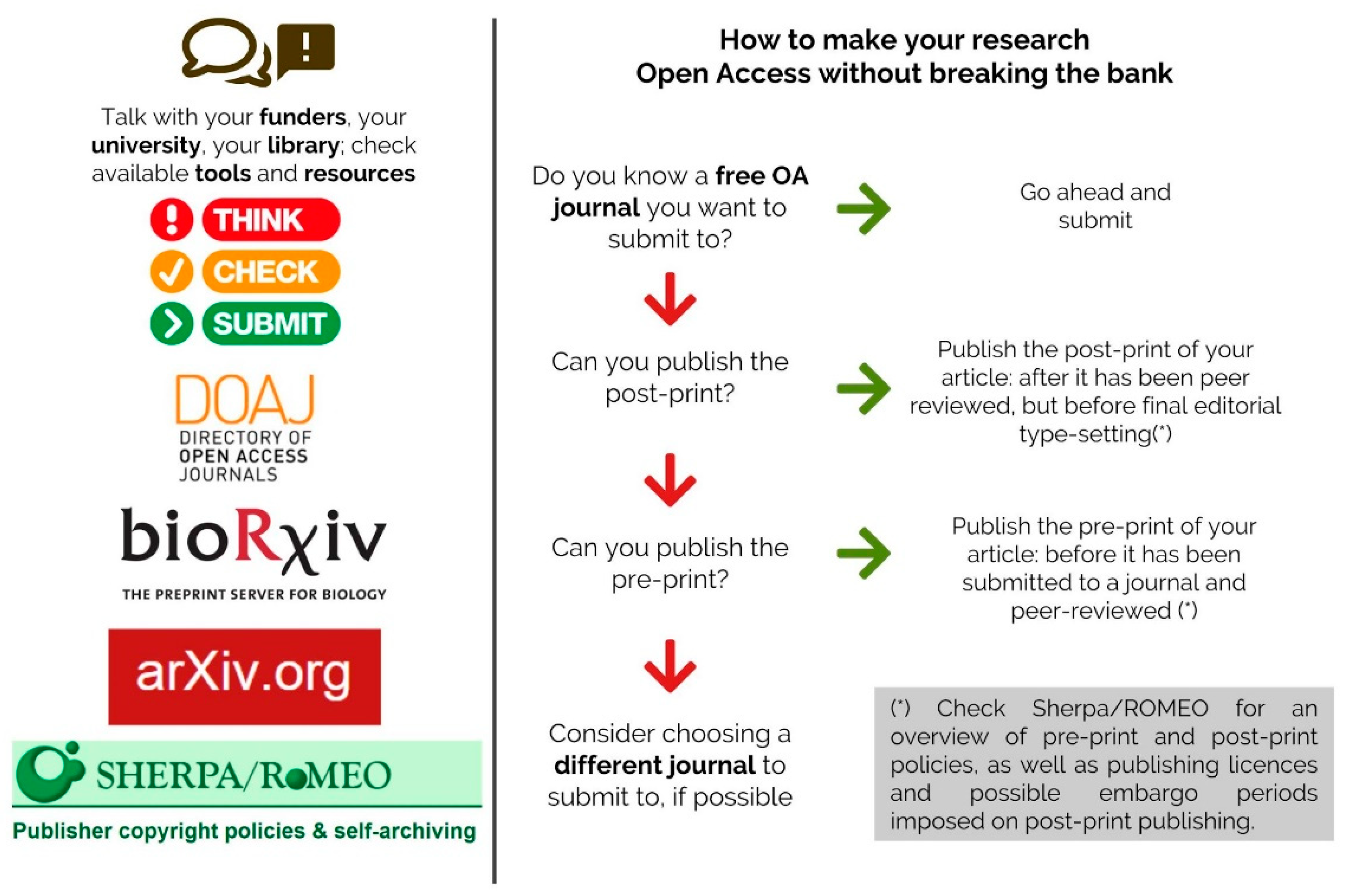

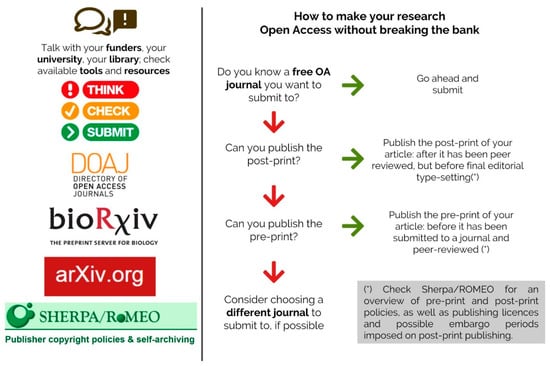

The current average expenditure via subscriptions for a single research article is estimated to be around USD $3500–$4000 (total amount spent divided by total number of articles published), but production costs are highly variable by publisher [123]. Some journals, such as the Journal of Machine Learning Research, which costs between $6.50 and $10 per article33, demonstrate that publication can cost far less than is often spent. Usually, the costs of publishing and the factors contributing to APCs are completely concealed. The publishers eLife and Ubiquity Press are transparent about their direct and indirect costs; the latter levies an APC of $50034. Depending on the funding available to authors, however, which is also contingent on factors such as institute, discipline, or country, even APCs at the lower end of the spectrum could still be unaffordable. This is why ‘green’ and ‘diamond’ OA help to level the playing field and encourage more equitable forms of OA publishing, as highlighted in the schematic in Figure 4. These more balanced, achievable and equitable forms of OA are becoming increasingly relevant, especially when synchronized with changes in the incentive and reward system that challenge the current journal-based ‘prestige economy’ [124]. Not only is there already more than enough money ‘within the system’ to enable a full and immediate transition to OA [123], but there is also enormous potential to do so in a cost-effective manner that promotes more equitable participation in publication.

Figure 4.

Some steps allowing free Open Access publishing for authors (vertical arrows imply ‘no’, and horizontal arrows imply ‘yes’). Inspired by https://figshare.com/collections/How_to_make_your_work_100_Open_Access_for_free_and_legally_multi-lingual_/3943972.

2.8. Topic 8: Are embargo periods on ‘green’ OA needed to sustain publishers?

As mentioned in the previous section, the ‘green’ route to OA refers to author self-archiving, in which a version of the article (often the peer-reviewed version before editorial typesetting, called ‘postprint’) is posted online to an institutional and/or subject repository. This route often depends on journal or publisher policies35, which can be more restrictive and complicated than the corresponding ‘gold’ policies regarding deposit location, license, and embargo requirements. Some publishers require an embargo period before deposition in public repositories [125], arguing that immediate self-archiving risks loss of subscription income.

Embargo periods currently in use (often 6–12 months in STEM and more than 12 months in the social sciences and humanities), however, do not appear to be based on empirical evidence about the effect of embargoes on journal subscriptions. As early as 2013, the UK House of Commons Select Committee on Business, Innovation and Skills concluded that “there is no available evidence base to indicate that short or even zero embargoes cause cancellation of subscriptions”36.

There are some data available37 on the median ‘usage half-life’ (the median time it takes for scholarly articles to reach half of their total downloads) and on how it differs across disciplines, but these data do not in themselves show that embargo length affects subscriptions38.
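As a rough illustration of the definition above, the usage half-life of a single article can be read off its cumulative download curve, and the median can then be taken across articles. The Python sketch below assumes monthly download counts over a fixed observation window; the function names and the data are hypothetical, and this is not the methodology behind the data cited above. Note that ‘total downloads’ here means downloads within the observed window, so a short window will understate the half-life of articles that keep being accessed for a long time.

```python
from statistics import median


def article_half_life(monthly_downloads: list[int]) -> int:
    """Number of months (counting from 1) until an article's cumulative
    downloads first reach at least half of its total within the window."""
    total = sum(monthly_downloads)
    if total == 0:
        raise ValueError("article has no recorded downloads")
    cumulative = 0
    for month, count in enumerate(monthly_downloads, start=1):
        cumulative += count
        if 2 * cumulative >= total:  # same as cumulative >= total / 2, without division
            return month
    raise AssertionError("unreachable: cumulative equals total in the last month")


def median_usage_half_life(articles: list[list[int]]) -> float:
    """Median of the per-article half-lives across a set of articles."""
    return median(article_half_life(downloads) for downloads in articles)


# Hypothetical monthly download counts for three articles.
articles = [
    [50, 30, 20],        # half of 100 reached in month 1
    [10, 20, 30, 40],    # half of 100 reached in month 3
    [5, 5, 5, 5, 80],    # half of 100 reached in month 5
]
print(median_usage_half_life(articles))  # 3
```

Taking the median rather than the mean keeps a few articles with unusually long or short half-lives from dominating the summary. Even so, a half-life measured this way describes reading behaviour only; it says nothing directly about whether an embargo of a given length would change subscription decisions.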

The argument that immediate self-archiving risks subscription revenue reveals an implicit irony, especially where the archiving of postprints is concerned. If the value publishers add to the publication process beyond peer review (e.g., in typesetting, dissemination, and archiving) were worth the price asked, people would still be willing to pay for the journal even if the unformatted postprint were available elsewhere. An embargo is, in effect, a statement that the prices levied for individual articles through subscriptions are not commensurate with the value added to a publication beyond organizing the peer review process.

Publishers have, in the past, lifted embargo periods for specific research topics in times of humanitarian crises, or have been asked to do so (e.g., outbreaks of Zika and Ebola39). While commendable in itself, this also serves as an implicit acknowledgement that embargoes stifle the progress of science and the potential application of scientific research, particularly when it comes to life-threatening pandemics. While arguably not all research is critical for saving lives, it is hard to imagine a discipline where fellow researchers and societal partners would not benefit from un-embargoed access to research findings.

Evidence suggests that traditional journals can peacefully coexist with zero-embargo self-archiving policies [126,127,128,129,130], and that the relative benefits to both publishers and authors via increased dissemination and citations outweigh any putative negative impacts. For publishers, the fact that most preprint repositories encourage authors to link to or upload the final published version of record (VOR) is effectively free marketing for the respective journal and publisher.

‘Plan S’ has zero-length embargoes on self-archiving as one of its key principles. Where publishers have already implemented such policies, such as the Royal Society, Sage, and Emerald40, there has been no documented impact on their finances so far. In a reaction to Plan S, Highwire suggested that three of their society publishers make all author manuscripts freely available upon submission and state that they do not believe this practice has contributed to subscription decline41. Therefore, there is little evidence or justification supporting the need for embargo periods.

2.9. Topic 9: Are Web of Science and Scopus global platforms of knowledge?

Clarivate Analytics’ Web of Science (WoS) and Elsevier’s Scopus platforms are synonymous with data on international research, and are considered the two most trusted or authoritative sources of bibliometric data for peer-reviewed global research knowledge across disciplines [22,131,132,133,134,135,136]. Both are also widely used for researcher evaluation and promotion, for assessing institutional impact (for example, the role of WoS in the UK Research Excellence Framework 202142), and for international league tables (bibliographic data from Scopus account for more than 36% of the assessment criteria in the THE rankings43). But while these databases are generally agreed to contain rigorously assessed, high-quality research, they do not represent the sum of current global research knowledge.

It is often stated in popular science articles that the research output of researchers in South America, Asia, and Africa is disappointingly low. Sub-Saharan Africa is often singled out and chastised for having “13.5% of the global population but less than 1% of global research output”44. This oft-quoted factoid is based on a 2012 World Bank/Elsevier report that relies exclusively on data from Scopus45. Similarly, many others have analyzed ‘global’ or international collaboration and mobility using the even more selective WoS database [137,138,139]. ‘Research output’ in these contexts refers specifically to papers published in peer-reviewed journals that are indexed in either Scopus or WoS.

Both WoS and Scopus are highly selective. Both are commercial enterprises whose standards and assessment criteria are mostly controlled by panels of gatekeepers in North America and Western Europe. The same is true for more comprehensive databases such as Ulrich’s Web, which lists as many as 70,000 journals [140]; crucially, Scopus covers fewer than 50% of these, and WoS fewer than 25% [131]. While Scopus is larger and geographically broader than WoS, it still covers only a fraction of journal publishing outside North America and Europe. For example, it reports coverage of over 2000 journals in Asia (“230% more than the nearest competitor”)46, which may seem impressive until one considers that Indonesia alone has more than 7000 journals listed on the government’s Garuda portal47 (of which more than 1300 are currently listed on DOAJ)48, while at least 2500 Japanese journals are listed on the J-Stage platform49. Similarly, Scopus claims to list about 700 journals from Latin America, compared with SciELO’s count of 1285 active journals50; but that is just the tip of the iceberg, judging by the 1300+ DOAJ-listed journals in Brazil alone51. Furthermore, the editorial boards of the journals in the WoS and Scopus databases are dominated by researchers from Western Europe and North America. For example, in the journal Human Geography, 41% of editorial board members are from the United States, and 37.8% from the UK [141]. Similarly, Wooliscroft and Rosenstreich [142] studied ten leading marketing journals in the WoS and Scopus databases and concluded that 85.3% of their editorial board members are based in the United States. It comes as no surprise that the research published in these journals is research that fits the editorial boards’ world view [142].

Comparison with subject-specific indexes has further revealed geographical and topic bias. For example, Ciarli, Rafols, and Llopis [143] compared the coverage of rice research in CAB Abstracts (an agriculture and global health database) with that of WoS and Scopus, and found that the latter “may strongly under-represent the scientific production by developing countries, and over-represent that by industrialized countries”; this is likely to apply to other fields of agriculture as well. Crucially, this under-representation of applied research in Africa, Asia, and South America may have an additional negative effect on framing research strategies and policy development in these countries [135]. The overpromotion of these databases diminishes the important role of ‘locally-focused’ and ‘regional’ journals for researchers who want to publish and read locally relevant content. In some regions, researchers who want to publish locally useful or important research deliberately bypass ‘high impact’ journals in favor of outlets that will reach their key audience more quickly, or that allow them to publish in their native language [144,145,146].

Furthermore, the odds are stacked against researchers for whom English is a foreign language. More than 95% of WoS journals are English-language [147]. Tietze and Dick [148] consider the use of English a hegemonic and unreflective linguistic practice. One consequence is that non-English speakers spend part of their budget on translation and correction, and invest a significant amount of time and effort in subsequent corrections, making publishing in English a burden [149,150]. A far-reaching consequence of the use of English as the lingua franca of science concerns knowledge production itself, because its use benefits “worldviews, social, cultural, and political interests of the English-speaking center” (Tietze and Dick [148], p. 123).

The small proportion of research from South East Asia, Africa, and Latin America that makes it into WoS and Scopus journals is not attributable to a lack of effort or research quality, but to hidden epistemic and structural barriers52. These are a reflection of “deeper historical and structural power that had positioned former colonial masters as the centers of knowledge production, while relegating former colonies to peripheral roles”53. Many North American and European journals’ editorial boards demonstrate conscious and unconscious bias against researchers from other parts of the world54. Many of these journals are called ‘international’ but represent interests, authors, and even references only in their own languages55 [151]. Therefore, researchers outside Europe and North America commonly get rejected because their research is said to be ‘not internationally significant’ or only of ‘local interest’ (the wrong ‘local’). This reflects the current concept of ‘international’ as limited to a Euro/Anglophone-centric way of knowledge production [147,152]. In other words, “the ongoing internationalization has not meant academic interaction and exchange of knowledge, but the dominance of the leading Anglophone journals in which international debates occur and gain recognition” (Minca [153], p. 8).

Clarivate Analytics have made some positive steps to broaden the scope of WoS, integrating the SciELO Citation Index (a move not without criticism56) and creating the Emerging Sources Citation Index (ESCI), which has allowed many more international titles to be included in the service. However, there is still a lot of work to be done to recognize and amplify the growing body of research literature generated outside North America and Europe. The Royal Society have previously identified that “traditional metrics do not fully capture the dynamics of the emerging global science landscape”, and that we need to develop more sophisticated data and impact measures to provide a richer understanding of the global scientific knowledge available to us [154].

As a global community, we have not yet been able to develop digital infrastructures that are truly equal, comprehensive, and multi-lingual, and that allow fair participation in knowledge creation [155]. One way to bridge this gap is with discipline- and region-specific preprint repositories such as AfricArXiv and InarXiv. In the meantime, we need to remain critical of ‘global’ research databases built in Europe or North America, and be wary of those who sell these products as a representation of the global sum of human scholarly knowledge. Finally, let us also be aware of the geopolitical impact that such systematic discrimination has on knowledge production, and on the inclusion and representation of marginalized research demographics within the global research landscape. This is particularly important when platforms such as Web of Science and Scopus are used for international assessments of research quality57.

2.10. Topic 10: Do publishers add value to the scholarly communication process?

There is increasing frustration among OA advocates with perceived resistance to change by many of the established scholarly publishers. Publishers are often accused of capturing and monetizing publicly-funded research, using free academic labor for peer review, and then selling the resulting publications back to academia at inflated profits [156]. Frustrations sometimes spill over into hyperbole, of which ‘publishers add no value’ is one of the most common examples.

However, scholarly publishing is not a simple process, and publishers do add value to current scholarly communication [157]. Kent Anderson has compiled a list of things that journal publishers do, which currently contains 102 items and has yet to be formally contested by anyone who challenges the value of publishers58. Many items on the list could be argued to be of value primarily to the publishers themselves, e.g., “Make money and remain a constant in the system of scholarly output”. However, others provide direct value to researchers and research in steering the academic literature. These include arbitrating disputes (e.g., over ethics or authorship), stewarding the scholarly record, copy-editing, proofreading, type-setting, styling of materials, linking articles to open and accessible datasets, and (perhaps most importantly) arranging and managing scholarly peer review. The latter is a task that should not be underestimated, as it effectively entails coercing busy people into giving their time to improve someone else’s work and maintain the quality of the literature. There are also the standard management processes of large enterprises, including infrastructure, people, security, and marketing. All of these factors contribute in one way or another to maintaining the scholarly record.

It could be questioned, though, whether these functions are actually necessary to the core aim of scholarly communication, namely the dissemination of research to researchers and other stakeholders such as policy makers, economic, biomedical and industrial practitioners, and the general public. Above, for example, we question the necessity of the current infrastructure for peer review, and whether a scholar-led crowdsourced alternative may be preferable. In addition, one of the biggest tensions in this space concerns whether for-profit companies (or the private sector) should be allowed to be in charge of the management and dissemination of academic output and to exercise their powers while serving, for the most part, their own interests. This is often considered alongside the value added by such companies, and the two are therefore closely linked as part of broader questions about the appropriate expenditure of public funds, the role of commercial entities in the public sector, and the privatization of scholarly knowledge.

Publishing could certainly be done at a lower cost than is common at present, as discussed above. Services that offer core publishing, long-term archiving, and digital preservation functions (e.g., bioRxiv, arXiv, DataCite) demonstrate that the most valuable services can often be provided at a fraction of the cost charged by some commercial vendors. There are significant researcher-facing inefficiencies in the system, including the common scenario of multiple rounds of rejection and resubmission to various venues, as well as the fact that some publishers profit beyond reasonable scale [158]. What is missing most from the current publishing market is transparency about the nature and quality of the services publishers offer. This would allow authors to make informed choices, rather than decisions based on indicators unrelated to research quality, such as the JIF. All of the above questions are being investigated, and alternatives could be considered and explored. Yet, in the current system, publishers still play a role in managing processes of quality assurance, interlinking, and findability of research. As the role of scholarly publishers within the knowledge communication industry continues to evolve, and as critical services continue to become ‘unbundled’ from them, it will remain paramount that they can justify their operation based on the intrinsic value they add [159,160], and counter the common perception that they add no value to the process.

3. Conclusions

We selected and addressed ten frequently debated issues in open scholarly publishing. This article is intended as a reference point for combating misinformation put forward in public relations pieces and elsewhere, as well as for journalists wishing to fact-check statements from stakeholder groups. Should these issues arise in policy circles, this article can help guide discussions. Overall, our intention is to provide a stable foundation for a more constructive and informed debate on open scholarly communication.

Author Contributions

J.P.T. conceived the idea for the manuscript. All authors contributed text and provided editorial oversight.

Funding

This research received no external funding.

Acknowledgments

For contributions to the preprint version of this manuscript, all authors would like to thank Janne-Tuomas Seppänen, Lambert Heller, Dasapta Erwin Irawan, Danny Garside, Valérie Gabelica, Andrés Pavas, Peter Suber, Sami Niinimäki, Mikael Laakso, Neal Haddaway, Dario Taraborelli, Fabio Ciotti, Andrée Rathemacher, Sharon Bloom, Lars Juhl Jensen, Giannis Tsakonas, Lars Öhrström, John A. Stevenson, Kyle Siler, Rich Abdill, Philippe Bocquier, Andy Nobes, Lisa Matthias, and Karen Shashok. Three anonymous reviewers also provided useful feedback.

Conflicts of Interest

Andy Nobes is employed by the International Network for the Availability of Scientific Publications (INASP). Bárbara Rivera-López is employed by Asesora Producción Científica. Tony Ross-Hellauer is employed by Know-Center GmbH. Jonathan Tennant, Johanna Havemann, and Paola Masuzzo are independent researchers with IGDORE. Tony Ross-Hellauer is Editor in Chief of Publications, and Jonathan Tennant and Bianca Kramer are also editors at the journal.

Glossary

| Altmetrics | Altmetrics are metrics and qualitative data that are complementary to traditional, citation-based metrics. |

| Article-Processing Charge (APC) | An APC is a fee which is sometimes charged to authors to make a work available Open Access in either a fully OA journal or hybrid journal. |

| Gold Open Access | Immediate access to an article at the point of journal publication. |

| Green Open Access | Where an author self-archives a copy of their article in a freely accessible subject-specific, universal, or institutional repository. |

| Directory of Open Access Journals (DOAJ) | An online directory that indexes and provides access to quality Open Access, peer-reviewed journals. |

| Journal Impact Factor (JIF) | A measure of the yearly average number of citations to recent articles published in a particular journal. |

| Postprint | Version of a research paper subsequent to peer review (and acceptance), but before any type-setting or copy-editing by the publisher. Also sometimes called a ‘peer reviewed accepted manuscript’. |

| Preprint | Version of a research paper, typically prior to peer review and publication in a journal. |

References

- Alperin, J.P.; Fischman, G. Hecho en Latinoamérica. Acceso Abierto, Revistas Académicas e Innovaciones Regionales; 2015; ISBN 978-987-722-067-4.

- Vincent-Lamarre, P.; Boivin, J.; Gargouri, Y.; Larivière, V.; Harnad, S. Estimating open access mandate effectiveness: The MELIBEA score. J. Assoc. Inf. Sci. Technol. 2016, 67, 2815–2828.

- Ross-Hellauer, T.; Schmidt, B.; Kramer, B. Are Funder Open Access Platforms a Good Idea? PeerJ Inc.: San Francisco, CA, USA, 2018.

- Publications Office of the European Union. Future of scholarly publishing and scholarly communication: Report of the Expert Group to the European Commission. Available online: https://publications.europa.eu/en/publication-detail/-/publication/464477b3-2559-11e9-8d04-01aa75ed71a1 (accessed on 16 February 2019).

- Matthias, L.; Jahn, N.; Laakso, M. The Two-Way Street of Open Access Journal Publishing: Flip It and Reverse It. Publications 2019, 7, 23.

- Ginsparg, P. Preprint Déjà Vu. EMBO J. 2016, e201695531.

- Neylon, C.; Pattinson, D.; Bilder, G.; Lin, J. On the origin of nonequivalent states: How we can talk about preprints. F1000Research 2017, 6, 608.

- Tennant, J.P.; Bauin, S.; James, S.; Kant, J. The evolving preprint landscape: Introductory report for the Knowledge Exchange working group on preprints. BITSS 2018.

- Balaji, B.P.; Dhanamjaya, M. Preprints in Scholarly Communication: Re-Imagining Metrics and Infrastructures. Publications 2019, 7, 6.

- Bourne, P.E.; Polka, J.K.; Vale, R.D.; Kiley, R. Ten simple rules to consider regarding preprint submission. PLOS Comput. Biol. 2017, 13, e1005473.

- Sarabipour, S.; Debat, H.J.; Emmott, E.; Burgess, S.J.; Schwessinger, B.; Hensel, Z. On the value of preprints: An early career researcher perspective. PLOS Biol. 2019, 17, e3000151.

- Powell, K. Does it take too long to publish research? Nat. News 2016, 530, 148.

- Vale, R.D.; Hyman, A.A. Priority of discovery in the life sciences. eLife 2016, 5, e16931.

- Crick, T.; Hall, B.; Ishtiaq, S. Reproducibility in Research: Systems, Infrastructure, Culture. J. Open Res. Softw. 2017, 5, 32.

- Gentil-Beccot, A.; Mele, S.; Brooks, T. Citing and Reading Behaviours in High-Energy Physics. How a Community Stopped Worrying about Journals and Learned to Love Repositories. arXiv 2009, arXiv:0906.5418.

- Curry, S. Let’s move beyond the rhetoric: it’s time to change how we judge research. Nature 2018, 554, 147.

- Larivière, V.; Sugimoto, C.R. The Journal Impact Factor: A brief history, critique, and discussion of adverse effects. arXiv 2018, arXiv:1801.08992.

- McKiernan, E.C.; Schimanski, L.A.; Nieves, C.M.; Matthias, L.; Niles, M.T.; Alperin, J.P. Use of the Journal Impact Factor in Academic Review, Promotion, and Tenure Evaluations; PeerJ Inc.: San Francisco, CA, USA, 2019.

- Larivière, V.; Kiermer, V.; MacCallum, C.J.; McNutt, M.; Patterson, M.; Pulverer, B.; Swaminathan, S.; Taylor, S.; Curry, S. A simple proposal for the publication of journal citation distributions. bioRxiv 2016, 062109.

- Priem, J.; Taraborelli, D.; Groth, P.; Neylon, C. Altmetrics: A Manifesto. 2010. Available online: http://altmetrics.org/manifesto (accessed on 11 May 2019).

- Hicks, D.; Wouters, P.; Waltman, L.; de Rijcke, S.; Rafols, I. Bibliometrics: The Leiden Manifesto for research metrics. Nat. News 2015, 520, 429.

- Falagas, M.E.; Alexiou, V.G. The top-ten in journal impact factor manipulation. Arch. Immunol. Ther. Exp. 2008, 56, 223.

- Tort, A.B.L.; Targino, Z.H.; Amaral, O.B. Rising Publication Delays Inflate Journal Impact Factors. PLOS ONE 2012, 7, e53374.

- Fong, E.A.; Wilhite, A.W. Authorship and citation manipulation in academic research. PLOS ONE 2017, 12, e0187394.

- Adler, R.; Ewing, J.; Taylor, P. Citation statistics. A Report from the Joint. 2008. Available online: https://www.jstor.org/stable/20697661?seq=1#page_scan_tab_contents (accessed on 11 May 2019).

- Larivière, V.; Gingras, Y. The impact factor’s Matthew effect: A natural experiment in bibliometrics. arXiv 2009, arXiv:0908.3177.

- Brembs, B. Prestigious Science Journals Struggle to Reach Even Average Reliability. Front. Hum. Neurosci. 2018, 12, 37.

- Brembs, B.; Button, K.; Munafò, M. Deep impact: Unintended consequences of journal rank. Front. Hum. Neurosci. 2013, 7, 291.

- Vessuri, H.; Guédon, J.-C.; Cetto, A.M. Excellence or quality? Impact of the current competition regime on science and scientific publishing in Latin America and its implications for development. Curr. Sociol. 2014, 62, 647–665.

- Guédon, J.-C. Open Access and the divide between “mainstream” and “peripheral”. Como Gerir E Qualif. Rev. Científicas 2008, 1–25.

- Alperin, J.P.; Nieves, C.M.; Schimanski, L.; Fischman, G.E.; Niles, M.T.; McKiernan, E.C. How Significant Are the Public Dimensions of Faculty Work in Review, Promotion, and Tenure Documents? 2018. Available online: https://hcommons.org/deposits/item/hc:21015/ (accessed on 11 May 2019).

- Rossner, M.; Van Epps, H.; Hill, E. Show me the data. J. Cell Biol. 2007, 179, 1091–1092.

- Owen, R.; Macnaghten, P.; Stilgoe, J. Responsible research and innovation: From science in society to science for society, with society. Sci. Public Policy 2012, 39, 751–760.

- Moore, S.; Neylon, C.; Eve, M.P.; O’Donnell, D.P.; Pattinson, D. “Excellence R Us”: University research and the fetishisation of excellence. Palgrave Commun. 2017, 3, 16105.

- Csiszar, A. Peer review: Troubled from the start. Nat. News 2016, 532, 306.

- Moxham, N.; Fyfe, A. The Royal Society and the Prehistory of Peer Review, 1665–1965. Hist. J. 2017.

- Moore, J. Does peer review mean the same to the public as it does to scientists? Nature 2006.

- Kumar, M. A review of the review process: Manuscript peer-review in biomedical research. Biol. Med. 2009, 1, 16.

- Budd, J.M.; Sievert, M.; Schultz, T.R. Phenomena of Retraction: Reasons for Retraction and Citations to the Publications. JAMA 1998, 280, 296–297.

- Ferguson, C.; Marcus, A.; Oransky, I. Publishing: The peer-review scam. Nat. News 2014, 515, 480.

- Smith, R. Peer review: A flawed process at the heart of science and journals. J. R. Soc. Med. 2006, 99, 178–182.

- Ross-Hellauer, T. What is open peer review? A systematic review. F1000Research 2017, 6, 588.

- Tennant, J.P.; Dugan, J.M.; Graziotin, D.; Jacques, D.C.; Waldner, F.; Mietchen, D.; Elkhatib, Y.; Collister, L.; Pikas, C.K.; Crick, T.; et al. A multi-disciplinary perspective on emergent and future innovations in peer review. F1000Research 2017, 6, 1151.

- Wong, V.S.S.; Avalos, L.N.; Callaham, M.L. Industry payments to physician journal editors. PLOS ONE 2019, 14, e0211495.

- Weiss, G.J.; Davis, R.B. Discordant financial conflicts of interest disclosures between clinical trial conference abstract and subsequent publication. PeerJ 2019, 7, e6423.

- Flaherty, D.K. Ghost- and Guest-Authored Pharmaceutical Industry–Sponsored Studies: Abuse of Academic Integrity, the Peer Review System, and Public Trust. Ann. Pharmacother. 2013, 47, 1081–1083.

- DeTora, L.M.; Carey, M.A.; Toroser, D.; Baum, E.Z. Ghostwriting in biomedicine: A review of the published literature. Curr. Med. Res. Opin. 2019.

- Squazzoni, F.; Brezis, E.; Marušić, A. Scientometrics of peer review. Scientometrics 2017, 113, 501–502.

- Squazzoni, F.; Grimaldo, F.; Marušić, A. Publishing: Journals Could Share Peer-Review Data. Available online: https://www.nature.com/articles/546352a (accessed on 22 April 2018).

- Allen, H.; Boxer, E.; Cury, A.; Gaston, T.; Graf, C.; Hogan, B.; Loh, S.; Wakley, H.; Willis, M. What does better peer review look like? Definitions, essential areas, and recommendations for better practice. Open Sci. Framew. 2018.

- Tennant, J.P. The state of the art in peer review. FEMS Microbiol. Lett. 2018, 365.

- Bravo, G.; Grimaldo, F.; López-Iñesta, E.; Mehmani, B.; Squazzoni, F. The effect of publishing peer review reports on referee behavior in five scholarly journals. Nat. Commun. 2019, 10, 322.

- Fang, F.C.; Casadevall, A. Retracted Science and the Retraction Index. Infect. Immun. 2011, 79, 3855–3859.

- Moylan, E.C.; Kowalczuk, M.K. Why articles are retracted: A retrospective cross-sectional study of retraction notices at BioMed Central. BMJ Open 2016, 6, e012047.

- Open Science Collaboration. Estimating the reproducibility of psychological science. Science 2015, 349, aac4716.

- Munafò, M.R.; Nosek, B.A.; Bishop, D.V.M.; Button, K.S.; Chambers, C.D.; du Sert, N.P.; Simonsohn, U.; Wagenmakers, E.-J.; Ware, J.J.; Ioannidis, J.P.A. A manifesto for reproducible science. Nat. Hum. Behav. 2017, 1, 21.

- Fanelli, D. Opinion: Is science really facing a reproducibility crisis, and do we need it to? Proc. Natl. Acad. Sci. USA 2018, 201708272.

- Goodman, S.N. Manuscript Quality before and after Peer Review and Editing at Annals of Internal Medicine. Ann. Intern. Med. 1994, 121, 11.

- Pierson, C.A. Peer review and journal quality. J. Am. Assoc. Nurse Pract. 2018, 30, 1.

- Siler, K.; Lee, K.; Bero, L. Measuring the effectiveness of scientific gatekeeping. Proc. Natl. Acad. Sci. USA 2015, 112, 360–365.

- Caputo, R.K. Peer Review: A Vital Gatekeeping Function and Obligation of Professional Scholarly Practice. Fam. Soc. 2018, 1044389418808155.

- Bornmann, L. Scientific peer review. Annu. Rev. Inf. Sci. Technol. 2011, 45, 197–245.

- Resnik, D.B.; Elmore, S.A. Ensuring the Quality, Fairness, and Integrity of Journal Peer Review: A Possible Role of Editors. Sci. Eng. Ethics 2016, 22, 169–188.

- Feynman, R. Cargo Cult Science. Available online: http://calteches.library.caltech.edu/51/2/CargoCult.htm (accessed on 13 February 2019).

- Fyfe, A.; Coate, K.; Curry, S.; Lawson, S.; Moxham, N.; Røstvik, C.M. Untangling Academic Publishing. A History of the Relationship between Commercial Interests, Academic Prestige and the Circulation of Research. 2017. Available online: https://theidealis.org/untangling-academic-publishing-a-history-of-the-relationship-between-commercial-interests-academic-prestige-and-the-circulation-of-research/ (accessed on 11 May 2019).

- Priem, J.; Hemminger, B.M. Decoupling the scholarly journal. Front. Comput. Neurosci. 2012, 6.

- McKiernan, E.C.; Bourne, P.E.; Brown, C.T.; Buck, S.; Kenall, A.; Lin, J.; McDougall, D.; Nosek, B.A.; Ram, K.; Soderberg, C.K.; et al. Point of View: How open science helps researchers succeed. eLife 2016, 5, e16800.

- Bowman, N.D.; Keene, J.R. A Layered Framework for Considering Open Science Practices. Commun. Res. Rep. 2018, 35, 363–372.

- Crane, H.; Martin, R. The RESEARCHERS.ONE Mission. 2018. Available online: https://zenodo.org/record/546100#.XNaj4aSxUvg (accessed on 11 May 2019).

- Brembs, B. Reliable novelty: New should not trump true. PLOS Biol. 2019, 17, e3000117.

- Stern, B.M.; O’Shea, E.K. A proposal for the future of scientific publishing in the life sciences. PLOS Biol. 2019, 17, e3000116.

- Crane, H.; Martin, R. In peer review we (don’t) trust: How peer review’s filtering poses a systemic risk to science. Res. ONE 2018.

- Silver, A. Pay-to-view blacklist of predatory journals set to launch. Nat. News 2017.

- Djuric, D. Penetrating the Omerta of Predatory Publishing: The Romanian Connection. Sci. Eng. Ethics 2015, 21, 183–202.

- Strinzel, M.; Severin, A.; Milzow, K.; Egger, M. “Blacklists” and “Whitelists” to Tackle Predatory Publishing: A Cross-Sectional Comparison and Thematic Analysis; PeerJ Inc.: San Francisco, CA, USA, 2019.

- Shen, C.; Björk, B.-C. ‘Predatory’ open access: A longitudinal study of article volumes and market characteristics. BMC Med. 2015, 13, 230.

- Perlin, M.S.; Imasato, T.; Borenstein, D. Is predatory publishing a real threat? Evidence from a large database study. Scientometrics 2018, 116, 255–273.

- Bohannon, J. Who’s Afraid of Peer Review? Science 2013, 342, 60–65.

- Olivarez, J.D.; Bales, S.; Sare, L.; vanDuinkerken, W. Format Aside: Applying Beall’s Criteria to Assess the Predatory Nature of both OA and Non-OA Library and Information Science Journals. Coll. Res. Libr. 2018, 79, 52–67.

- Shamseer, L.; Moher, D.; Maduekwe, O.; Turner, L.; Barbour, V.; Burch, R.; Clark, J.; Galipeau, J.; Roberts, J.; Shea, B.J. Potential predatory and legitimate biomedical journals: Can you tell the difference? A cross-sectional comparison. BMC Med. 2017, 15, 28.

- Crawford, W. GOAJ3: Gold Open Access Journals 2012–2017; Cites & Insights Books: Livermore, CA, USA, 2018.

- Eve, M. Co-operating for gold open access without APCs. Insights 2015, 28, 73–77.

- Björk, B.-C.; Solomon, D. Developing an Effective Market for Open Access Article Processing Charges. Available online: http://www.wellcome.ac.uk/stellent/groups/corporatesite/@policy_communications/documents/web_document/wtp055910.pdf (accessed on 13 June 2014).

- Oermann, M.H.; Conklin, J.L.; Nicoll, L.H.; Chinn, P.L.; Ashton, K.S.; Edie, A.H.; Amarasekara, S.; Budinger, S.C. Study of Predatory Open Access Nursing Journals. J. Nurs. Scholarsh. 2016, 48, 624–632.

- Oermann, M.H.; Nicoll, L.H.; Chinn, P.L.; Ashton, K.S.; Conklin, J.L.; Edie, A.H.; Amarasekara, S.; Williams, B.L. Quality of articles published in predatory nursing journals. Nurs. Outlook 2018, 66, 4–10.

- Topper, L.; Boehr, D. Publishing trends of journals with manuscripts in PubMed Central: Changes from 2008–2009 to 2015–2016. J. Med. Libr. Assoc. 2018, 106, 445–454.

- Kurt, S. Why do authors publish in predatory journals? Learn. Publ. 2018, 31, 141–147.

- Frandsen, T.F. Why do researchers decide to publish in questionable journals? A review of the literature. Learn. Publ. 2019, 32, 57–62.

- Omobowale, A.O.; Akanle, O.; Adeniran, A.I.; Adegboyega, K. Peripheral scholarship and the context of foreign paid publishing in Nigeria. Curr. Sociol. 2014, 62, 666–684.

- Bell, K. ‘Predatory’ Open Access Journals as Parody: Exposing the Limitations of ‘Legitimate’ Academic Publishing. TripleC 2017, 15, 651–662.