Abstract

In this paper, we study the multi-objective optimization of the viscous boundary condition of an elastic rod using a hybrid method combining a genetic algorithm and simple cell mapping (GA-SCM). The method proceeds with the NSGAII algorithm to seek a rough Pareto set, followed by a local recovery process based on one-step simple cell mapping to complete the branch of the Pareto set. To accelerate computation, the rod response under impulsive loading is calculated with a particular solution method that provides accurate structural responses with less computational effort. The Pareto set and Pareto front of a case study are obtained with the GA-SCM hybrid method. Optimal designs of each objective function are illustrated through numerical simulations.

1. Introduction

Structures with viscous boundaries have been applied to diverse areas for vibration reduction [1], sound absorption [2], and boundary control [3]. One recent example is the railway bridge design for high-speed trains where the soil interacting with the bridge has been modeled as mass–damper–spring terminations of the structure [4]. The best design of structures has always been the pursuit of engineers. The optimal structural design must usually accommodate multiple objectives such as the settling time of vibrations, the response amplitude, and the shaping of the frequency response, leading to multi-objective optimization problems (MOPs). This paper presents a study of the multi-objective optimal design of a one-dimensional elastic rod with a mass–damper–spring termination.

The multi-objective nature of the optimization problem leads to a set of optimal solutions called the Pareto set, making set-oriented methods such as simple cell mapping (SCM) [5] suitable for solving such problems. The cell mapping method was initially developed by Hsu [6] for investigating the global behavior of nonlinear dynamical systems, then extended by Sun and his coworkers [7,8,9] to MOPs. The method seeks optimal solutions by constructing cell mappings based on the local dominance relation of cells in the discretized design space until the optimal solutions are reached. Although the method is effective for low-dimensional problems, it suffers from the curse of dimensionality for high-dimensional problems because the search space grows exponentially with the number of dimensions.

For MOPs with relatively high dimensions, evolutionary algorithms such as the genetic algorithm (GA) [10], immune algorithm [11], particle swarm optimization (PSO) [12], and ant colony optimization [13] are the mainstream methods. Evolutionary algorithms are stochastic methods that mimic the biological evolutionary process using evolution laws defined on the Pareto dominance of fitness functions. Such methods can escape local optima and rapidly discover the domains containing the solutions. However, their results can be sensitive to the selection of hyperparameters.

Recently, Sun and colleagues [5,14] proposed a hybrid method that incorporates NSGAII and simple cell mapping (SCM). The method begins with NSGAII to generate a rough set from several generations such that the domains containing optimal solutions can be outlined. Using the rough set, SCM performs a local recovery method to complete the branches of the Pareto set through iterative refinement of the design space. With the power of NSGAII, the search domain of the simple cell mapping method is substantially reduced, making it possible to apply SCM to high-dimensional problems. Conversely, the SCM method complements the GA: outlining the optimal domains with the GA is not very sensitive to the selection of hyperparameters and is much easier than obtaining detailed Pareto optimal solutions with the GA alone. This reduces the burden of parameter tuning with the GA. This paper presents a new case study of MOPs solved by the hybrid GA-SCM method. For more discussion of the advantages of the GA-SCM method and a comparison with different methods, the reader is referred to [5] and the references therein.

To accelerate the MOP algorithms for structural design, a fast and accurate solver that can predict the structural response under external loading is needed. Traditional methods for calculating structural response, such as the finite-element method, can result in considerable computational load. However, obtaining a faster solver for structures with viscous terminations is not an easy task, because viscous boundary conditions lead to non-self-adjoint boundary value problems that cannot be solved by the traditional method of eigenvalue expansion. To address this issue, several analytical methods have been developed. Hull et al. [15] presented a method that applies modal expansion in the augmented spatial interval where orthogonal eigenmodes exist. Jayachandran and Sun [16] transformed the problem into a self-adjoint boundary value problem in Hilbert space. Oliveto et al. [17] proposed a complex modal expansion method, which requires formulating new orthogonality conditions. Jovanovic [18] formulated the steady-state solution in the form of Fourier series in the state space by reconstructing the differential operator of the equations of motion. Recently, Xing and Sun [19] applied a particular solution method to study the impulsive response of a 1D elastic rod subject to a mass–damper–spring termination.

In this study, we will continue the effort in [19] to optimize the viscous termination of a 1D elastic rod under impulsive loading using the GA-SCM method. The solution of this problem has many potential applications in structural and acoustic design. The dynamic response of the rod will be predicted by the particular solution method. Firstly, we will define the multi-objective optimization problem, followed by the introduction of the GA-SCM hybrid method. Then, we will formulate the impulse response of the structural problem using the particular solution method and introduce the multi-objective functions for the structural optimization problem. We will demonstrate the effectiveness of the GA-SCM method through a case study.

2. Multi-Objective Optimization

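A continuous multi-objective optimization problem (MOP) can be defined as

$$\min_{\mathbf{x}} \ \mathbf{F}(\mathbf{x}) \quad \text{subject to} \quad g_i(\mathbf{x}) \le 0,\ i = 1, \dots, l, \quad h_j(\mathbf{x}) = 0,\ j = 1, \dots, m, \tag{1}$$

where $\mathbf{x} \in \mathbb{R}^n$ is a variable of the design space and $g_i$ and $h_j$ are the design constraints. $\mathbf{F}$ is a map comprised of objective functions ($f_i$), i.e.,

$$\mathbf{F}: Q \to \mathbb{R}^k, \qquad \mathbf{F}(\mathbf{x}) = [f_1(\mathbf{x}), \dots, f_k(\mathbf{x})]^T,$$

where $f_i: Q \to \mathbb{R}$. Herein, $Q$ is the feasible set represented by

$$Q = \{\mathbf{x} \in \mathbb{R}^n \mid g_i(\mathbf{x}) \le 0,\ i = 1, \dots, l, \ \text{and} \ h_j(\mathbf{x}) = 0,\ j = 1, \dots, m\}.$$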

The optimal solution of the multi-objective problem is defined in the sense of Pareto optimality, which requires the introduction of the following definitions.

Definition 1.

(Dominance relation [5]).

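- (a) A vector $\mathbf{v} \in Q$ is called strictly dominated (or simply dominated) by a vector $\mathbf{w} \in Q$ ($\mathbf{w} \prec \mathbf{v}$) if $\mathbf{F}(\mathbf{w}) \le_p \mathbf{F}(\mathbf{v})$ and $\mathbf{F}(\mathbf{w}) \ne \mathbf{F}(\mathbf{v})$, where $\le_p$ is an elementwise less-than-or-equal-to relation.

- (b) A vector $\mathbf{v} \in Q$ is called weakly dominated by a vector $\mathbf{w} \in Q$ ($\mathbf{w} \preceq \mathbf{v}$) if $\mathbf{F}(\mathbf{w}) \le_p \mathbf{F}(\mathbf{v})$.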

The dominance relation defines a “good” solution in the sense of Pareto optimality. It is a strong requirement: a solution is dominated only if another solution is at least as good in every objective. Solutions that satisfy the inequality relations only partially are therefore regarded as equally “good”, which can lead to many optimal solutions. To define the sets of optimal solutions and their objective functions, we introduce the Pareto set and Pareto front.

Definition 2.

(Pareto point, Pareto set, Pareto front [5]).

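- (a) A point $\mathbf{x} \in Q$ is called Pareto optimal or a Pareto point of (1) if there is no $\mathbf{y} \in Q$ that dominates $\mathbf{x}$.

- (b) A point $\mathbf{x} \in Q$ is called locally (Pareto) optimal or a local Pareto point of (1) if there exists a neighborhood $N$ of $\mathbf{x}$ such that there is no $\mathbf{y} \in Q \cap N$ that dominates $\mathbf{x}$.

- (c) A point $\mathbf{x} \in Q$ is called a weak Pareto point or weakly optimal if there exists no $\mathbf{y} \in Q$ such that $\mathbf{F}(\mathbf{y}) <_p \mathbf{F}(\mathbf{x})$, where $<_p$ is the elementwise strict less-than relation.

- (d) The set of all Pareto optimal solutions is called the Pareto set, i.e., $P = \{\mathbf{x} \in Q : \mathbf{x} \text{ is a Pareto point of (1)}\}$.

- (e) The image $\mathbf{F}(P)$ of $P$ is called the Pareto front.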
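The dominance check and the Pareto set of a finite sample can be illustrated with a short Python sketch. It assumes minimization of every objective and works directly on objective vectors; the function names `dominates` and `pareto_filter` and the sample points are hypothetical and are not taken from the paper's implementation.

```python
from typing import List, Sequence, Tuple

Vector = Tuple[float, ...]


def dominates(fa: Sequence[float], fb: Sequence[float]) -> bool:
    """Return True if objective vector fa dominates fb (all objectives minimized)."""
    no_worse = all(a <= b for a, b in zip(fa, fb))        # elementwise <=
    strictly_better = any(a < b for a, b in zip(fa, fb))  # the vectors differ
    return no_worse and strictly_better


def pareto_filter(points: List[Vector]) -> List[Vector]:
    """Keep the non-dominated vectors of a finite set (brute force, O(n^2))."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


# Hypothetical bi-objective sample: (3, 3) is dominated by (2, 2).
candidates = [(1.0, 4.0), (2.0, 2.0), (3.0, 3.0), (4.0, 1.0)]
front = pareto_filter(candidates)
```

The three surviving vectors are mutually non-dominated: each is better than the others in one objective and worse in the other, which is why an MOP generally has a set of optimal solutions rather than a single one.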

3. GA-SCM Hybrid Method

We apply a hybrid method combining genetic algorithms (GAs) and cell mapping methods [14] to solve an MOP whose performance indices are defined in Section 4. The hybrid method starts with a genetic algorithm (NSGAII) that generates a rough Pareto set in the design space. A cell-mapping-based recovery method then uses this rough set to seek a complete branch of the Pareto set through iterative refinement of the cellular space of the design parameters, which is defined in Section 5. The pseudocode of the GA-SCM method is listed in Algorithm 1, and the pseudocode for recovering the Pareto optimal solutions is listed in Algorithm 2.

As shown in Algorithm 2, the recovery process first discretizes the design space and then iterates through the elements of the rough Pareto set from the GA or the previous cell partition, performing a one-step simple cell mapping to search for local Pareto points. If a cell is mapped to itself (i.e., a local sink is found), then the cell is pushed into the candidate set, followed by an operator to gather nearby solutions into the set to be visited () as long as they dominate some elements in the Pareto set . Otherwise, the destination cell of the cell mapping is pushed to . Then, the same iterative procedure is performed on the set until no new cells can be brought into . Finally, a dominance check is carried out to remove dominated points from the Pareto set. More details on the method can be found in [5].

Algorithm 1.

GA-SCM algorithm.

Algorithm 2.

SCM-based recovering algorithm.

The details of the one-step simple cell mapping algorithm are listed in Algorithm 3. The method finds the local optimal solution by checking the dominance relation between a cell and its neighbors. The optimal solution is defined as the most distant cell that dominates the source cell.

Algorithm 3.

Simple cell mapping algorithm.
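The one-step mapping described above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the paper's Algorithm 3: cells are integer index tuples on a regular grid, objectives are evaluated at cell centers, the neighborhood consists of all adjacent cells (including diagonals), and "most distant" is interpreted as the largest Euclidean distance in objective space. The names `one_step_scm`, `cell_center`, and `toy_objective`, as well as the grid constants, are hypothetical.

```python
import itertools
import math
from typing import Callable, Sequence, Tuple

Cell = Tuple[int, ...]


def dominates(fa: Sequence[float], fb: Sequence[float]) -> bool:
    """True if fa dominates fb (all objectives minimized)."""
    return all(a <= b for a, b in zip(fa, fb)) and any(a < b for a, b in zip(fa, fb))


def cell_center(cell: Cell, lower: Sequence[float], width: Sequence[float]) -> Tuple[float, ...]:
    """Coordinates of the center of a cell on a regular grid."""
    return tuple(lo + (i + 0.5) * h for i, lo, h in zip(cell, lower, width))


def one_step_scm(cell: Cell,
                 objective: Callable[[Sequence[float]], Tuple[float, ...]],
                 lower: Sequence[float],
                 width: Sequence[float],
                 shape: Sequence[int]) -> Cell:
    """Map a cell to its dominating neighbor that is farthest away in objective
    space (an assumed reading of "most distant"); a cell with no dominating
    neighbor maps to itself, i.e., it is a local sink."""
    f_src = objective(cell_center(cell, lower, width))
    best, best_dist = cell, 0.0
    for offset in itertools.product((-1, 0, 1), repeat=len(cell)):
        nb = tuple(i + d for i, d in zip(cell, offset))
        if nb == cell or any(i < 0 or i >= n for i, n in zip(nb, shape)):
            continue  # skip the cell itself and cells outside the grid
        f_nb = objective(cell_center(nb, lower, width))
        if dominates(f_nb, f_src):
            dist = math.dist(f_nb, f_src)
            if dist > best_dist:
                best, best_dist = nb, dist
    return best


def toy_objective(x: Sequence[float]) -> Tuple[float, float]:
    """Hypothetical bi-objective test function (not the rod problem)."""
    return (x[0] ** 2 + x[1] ** 2, (x[0] - 1.0) ** 2 + x[1] ** 2)


LOWER, WIDTH, SHAPE = (-1.0, -1.0), (0.25, 0.25), (8, 8)
image_of_corner = one_step_scm((0, 0), toy_objective, LOWER, WIDTH, SHAPE)
image_of_sink = one_step_scm((4, 4), toy_objective, LOWER, WIDTH, SHAPE)
```

On this toy grid the corner cell moves diagonally toward the Pareto set, while the cell `(4, 4)`, which lies next to the segment of optimal points, has no dominating neighbor and is therefore a sink.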

Given that the numerical computation of the impulse response of the rod is the most time-consuming subroutine in this problem, we record all visited cells in a dictionary whose keys are the cell indices and whose values are the corresponding multi-objective function values. This way, the algorithm can look up values in the dictionary with an average time complexity of $O(1)$, eliminating repeated computation for cells that have already been visited. In addition, the keys of a dictionary are unique, so pushing a visited cell to the dictionary automatically replaces the repeated entry. Therefore, our implementation, unlike that in [14], does not require combining the repeated cells in the visited set.
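A minimal Python sketch of this caching idea is given below. The class name `CellCache`, the counter `solver_calls`, and the stand-in function `fake_solver` are hypothetical; in the actual study the wrapped function would be the impulse-response solver that returns the performance indices of a cell.

```python
from typing import Callable, Dict, Tuple

Cell = Tuple[int, ...]
Objectives = Tuple[float, ...]


class CellCache:
    """Store the objective values of visited cells in a dict keyed by cell index."""

    def __init__(self, evaluate: Callable[[Cell], Objectives]) -> None:
        self._evaluate = evaluate
        self._visited: Dict[Cell, Objectives] = {}
        self.solver_calls = 0  # number of times the expensive solver actually ran

    def __call__(self, cell: Cell) -> Objectives:
        if cell not in self._visited:      # average O(1) hash lookup
            self.solver_calls += 1
            self._visited[cell] = self._evaluate(cell)
        return self._visited[cell]

    def __len__(self) -> int:
        return len(self._visited)          # keys are unique, so no duplicates


def fake_solver(cell: Cell) -> Objectives:
    """Stand-in for the expensive impulse-response computation."""
    return (float(sum(cell)), float(max(cell)))


cache = CellCache(fake_solver)
first = cache((1, 2, 3))
second = cache((1, 2, 3))   # served from the dictionary, solver not called again
other = cache((0, 0, 1))
```

Because the cell index tuple is the dictionary key, revisiting a cell never adds a second entry, so no separate merge step for repeated cells is needed.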

4. Multi-Objective Optimization of Mass–Damper–Spring Termination

4.1. Impulse Response

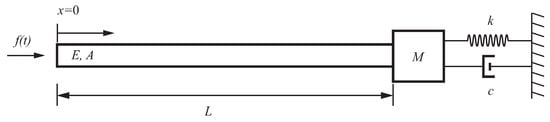

The one-dimensional elastic rod with a mass–damper–spring termination is shown in Figure 1. An impact loading is applied to its free end. Young’s modulus, the cross-section area, and the length of the rod are denoted by E, A, and L, respectively. We split the total response into the sum of rigid-body and elastic responses such that

where is the rigid-body response and is the elastic response. From [19], the equations of motion of the system in Figure 1 are in the form

where is the density and is the speed of the longitudinal stress wave. The corresponding boundary conditions are

Figure 1.

A uniform elastic rod with a mass–damper–spring termination. An impact loading is applied to the free end. The material coordinate system is fixed to the free end of the rod.

The non-homogeneous boundary condition of Equation (9) leads to a non-orthogonal eigenvalue problem. We address this problem with the particular solution method, which expresses the elastic motion in the form

where is the homogeneous solution with free–free boundary conditions such that

where

and is the particular solution such that

The formal solution of Equation (14) reads

where is the initial condition generated from the impulsive input (see Appendix A). The numerical error analysis of the method was performed in [19]. We incorporate this method into the GA-SCM method to optimize the termination of the structure.

4.2. Objective Functions

We define the multi-objective performance indices of terminal response as

where is the settling time of the third elastic mode, is the maximal absolute displacement at termination, and is the log decrement of the strain response at termination.

The settling-time index is an indirect indicator for the settling time of the rod response. The reason for using it is twofold. First, the settling time of higher modes produced by the model cannot properly capture the physical phenomenon that the response of high-frequency modes usually decays more rapidly than that of low-frequency modes. Second, identifying the settling time of the total response from the numerical simulation could impose a heavy computational load. Therefore, the settling time of the third elastic mode is used and defined in the form

where stands for the third elastic mode. The selection of the third mode is based on trial and error.
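To make the notion of a settling time concrete, the following Python sketch extracts it from a sampled signal. It is only an illustration under assumptions: the settling time is taken as the first sample time after which the signal stays within a tolerance band around zero, the 2% band relative to the peak is an assumed convention, and the damped cosine is a hypothetical stand-in for a modal response. The function name `settling_time` is hypothetical and the sketch is not the definition used in the paper.

```python
import math
from typing import Sequence


def settling_time(t: Sequence[float], q: Sequence[float], tol: float = 0.02) -> float:
    """First sample time after which |q| stays within tol * max|q| (assumed band)."""
    band = tol * max(abs(v) for v in q)
    last_outside = -1
    for i, v in enumerate(q):
        if abs(v) > band:
            last_outside = i           # remember the last excursion out of the band
    if last_outside == -1:
        return float(t[0])             # the signal never leaves the band
    if last_outside == len(q) - 1:
        return math.inf                # not settled within the simulated record
    return float(t[last_outside + 1])


# Hypothetical damped oscillation standing in for a single elastic mode.
times = [0.001 * i for i in range(20001)]
signal = [math.exp(-0.5 * ti) * math.cos(10.0 * ti) for ti in times]
ts = settling_time(times, signal)
```

Evaluating this on a single mode only requires that mode's time history, which is the practical motivation for using one mode as a proxy instead of the total response.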

The log-decrement index is also an indirect indicator, used to estimate the decay of the impact wave. After the impact load is applied, an impulsive wave is produced at the left terminal and a response wave due to the rigid-body motion is generated at the right terminal. The two waves propagate along the rod and are reflected at both ends. Although the strain response is the superposition of the two waves, the impact wave dominates the response the first few times it arrives at the right terminal. We define this index in the form

where and represent the first and n-th times at which the impulse wave arrives at the right end, respectively. The larger the log decrement is, the more the impact wave is suppressed. We let in this study.
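As a hedged illustration of a log-decrement measure, the sketch below uses the classical form, the logarithm of the ratio of two peak magnitudes divided by the number of intervals between them. Whether the paper normalizes by n − 1 in the same way is an assumption, and the function name `log_decrement` and the strain values are hypothetical.

```python
import math


def log_decrement(strain_first: float, strain_nth: float, n: int) -> float:
    """Classical log decrement between the first and n-th arrivals (assumed form):
    ln(|strain_first| / |strain_nth|) / (n - 1)."""
    if n < 2:
        raise ValueError("n must be at least 2")
    if strain_first == 0.0 or strain_nth == 0.0:
        raise ValueError("strain magnitudes must be nonzero")
    return math.log(abs(strain_first) / abs(strain_nth)) / (n - 1)


# Hypothetical terminal strain magnitudes at the first and third arrivals.
weak_suppression = log_decrement(1.0, 0.5, n=3)
strong_suppression = log_decrement(1.0, 0.1, n=3)
```

A stronger reduction of the strain between the two arrivals gives a larger value, consistent with the statement that a larger log decrement means a more strongly suppressed impact wave.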

5. A Case Study

We considered an elastic rod with Young’s modulus , density , length , cross-section area , and excitation force magnitude . The design space was chosen as

subject to a constraint

where is the tuple (). We calculated the first 15 s of the rod response under the impact loading through numerical integration of Equation (14), because the maximum displacement appears quickly after impact and the impact wave dominates the terminal response when it arrives at the right end during this time period. Thirty elastic modes were adopted, which, based on our observation, are sufficient to approximate the values of the performance indices within the design space.

We first discovered a rough Pareto set using the NSGAII algorithm with a population size of 1000, 10 generations, and a mutation rate of 0.05. Other configurations of NSGAII are listed in Table 1. With the numerical predictor, the NSGAII algorithm was completed in 66 s on a desktop with an Intel Core i7 CPU, producing a rough Pareto set as the input to the SCM method. In the SCM method, the design space was discretized into a cellular grid as shown in Table 2. The elements of the Pareto set are the cells in the design space. The local search and recovery algorithm was performed twice, the first time with the initial grid and the second time with the refined grid, which divides the initial grid by three. We stopped the program after the refinement because the desired resolution of 0.06 × 0.08 × 0.166 in the parameter space was achieved. The computational time was 36 s with the initial grid and 2000 s with the refined grid.
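The grid refinement step can be sketched in Python as follows, assuming that "dividing by three" means splitting every edge of a cell into three equal parts, so that each cell of the three-dimensional design space yields 27 sub-cells. The function names `refine_cell` and `refine_cells` and the example indices are hypothetical.

```python
import itertools
from typing import Iterable, List, Set, Tuple

Cell = Tuple[int, ...]


def refine_cell(cell: Cell, factor: int = 3) -> List[Cell]:
    """Indices, on the refined grid, of the sub-cells of one coarse cell."""
    offsets = itertools.product(range(factor), repeat=len(cell))
    return [tuple(factor * i + d for i, d in zip(cell, off)) for off in offsets]


def refine_cells(cells: Iterable[Cell], factor: int = 3) -> Set[Cell]:
    """Refine every cell of a coarse Pareto set; the result seeds the next SCM pass."""
    refined: Set[Cell] = set()
    for cell in cells:
        refined.update(refine_cell(cell, factor))
    return refined


children = refine_cell((1, 0, 2))
seed = refine_cells({(0, 0, 0), (0, 0, 1)})
```

Under this assumption the number of candidate cells grows by a factor of 27 per refinement, which is in line with the much longer run time reported for the refined grid.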

Table 1.

Configuration of NSGAII.

Table 2.

Configuration of SCM.

There are 5392 cells in the Pareto set. The Pareto set and front of the mass–damper–spring termination are presented in Figure 2. Generally, either larger stiffness or larger damping leads to a better design. The majority of optimal designs are achieved with either moderate or small mass. The Pareto front can be divided into three regions, labeled in Figure 2b. Region 1 minimizes displacement at the cost of a long settling time and moderate damping performance. Region 2 balances the performance of the three objective functions. Region 3 achieves premium damping performance at the expense of large displacement and moderate settling time.

Figure 2.

The Pareto set and front of the termination design of the elastic rod. (a) Pareto set. (b) Pareto front. Design parameters , , . The labels “1”, “2”, and “3” indicate the regions where optimal terminal displacement, balanced performance of objective functions, and optimal damping performance are achieved.

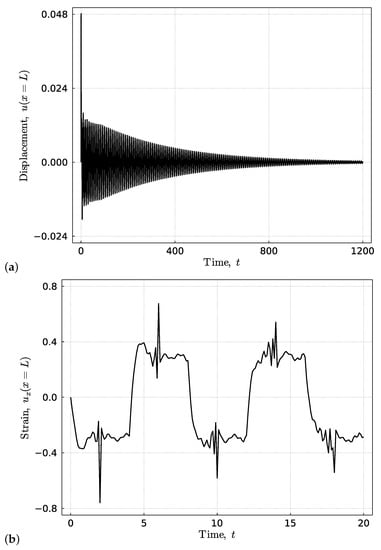

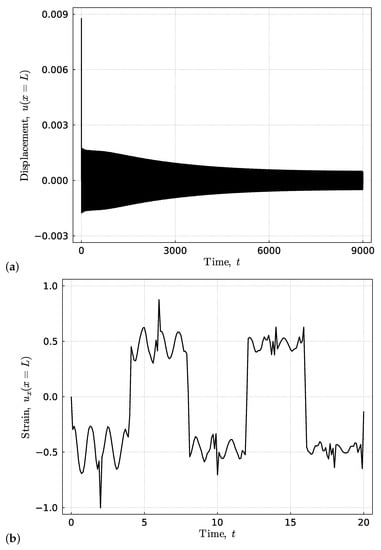

The optimal designs of each performance index are presented in Figure 3, Figure 4 and Figure 5. The corresponding design parameters, as well as performance indices are listed in Table 3.

Figure 3.

The optimal design of settling time. The corresponding (a) terminal displacement and (b) strain responses of the rod. The response is computed with .

Figure 4.

The optimal design of the decay of the impact wave. The corresponding (a) terminal displacement and (b) strain response of the rod. The response is calculated with .

Figure 5.

The optimal design of the maximal terminal displacement. The corresponding (a) terminal displacement and (b) strain responses of the rod. The response is calculated with .

5.1. Optimal Design: Minimal Settling Time

Figure 3 shows the optimal design of the settling time. The settling time of the total response is approximately 1200 s. While the performance index of the settling time is significantly smaller than this number, it still correctly reflects the trend of the settling time in comparison to other designs such as those in Figure 4 and Figure 5. The large mass in this design can increase the portion of energy transmitted to the mass after impact, which can be more effectively dissipated through the heavily damped boundary condition.

5.2. Optimal Design: Maximal Decay of Impact Wave

The time response of the optimal design maximizing the decay of the impact wave is presented in Figure 4. The impact wave propagates to the right end when . The suppression of the impact wave is evident. However, this comes at the cost of a settling time at least five times longer and a slight increase in the maximal displacement. When compared to the other two designs, this design considerably reduces the damping coefficient. This could be attributed to the velocity change of the mounted mass in response to the impact wave hitting the terminal. Such a change immediately alters the viscous force produced by the damper, which in turn can lead to higher strain at the terminal. A small damping coefficient can reduce the magnitude of the reflected impact wave.

5.3. Optimal Design: Minimal Peak Displacement at Termination

The optimal design of terminal peak displacement in Figure 5 has the same stiffness as the design in Figure 4, but a much smaller mass and larger damping. This makes sense because the terminal displacement is identical to the displacement of the mounted mass. Using small inertia and large stiffness and damping, one can effectively reduce the maximal terminal displacement. However, smaller inertia also leads to less energy distributed to the mass. Because the energy can only be dissipated through the damper attached to the mass, this choice can also significantly lengthen the settling time.

6. Conclusions

In this paper, a multi-objective optimization problem of the terminal response of an elastic rod with a viscous boundary condition was formulated. The terminal response of the rod was predicted through a computationally efficient and accurate particular solution method. The Pareto set and front of the MOP were obtained with the GA-SCM hybrid method. The proposed objective functions can effectively capture the dynamic response of the structure. The optimal design strategies were presented and analyzed. The amount of energy distributed to the terminal mass after impact was found to be significant for the optimization of the terminal design.

The computational load of this work was due to the repeated computations of the impulse response with different parameter sets. Although the solver adopted in this paper can be computationally more efficient and accurate than finite-element methods, it still requires a sufficient number of modes to capture the non-smooth impulsive response when highly accurate results are desired. The computational load can be further reduced using a surrogate model (metamodel) [20]. One future direction is to use neural operators such as DeepONet [21] to approximate the impulsive response, with the neural operator trained using data from the adopted solver.

Author Contributions

Conceptualization, methodology, and supervision, J.-Q.S.; software, formal analysis, investigation, and writing—original draft preparation, S.X.; writing—review and editing, J.-Q.S. All authors have read and agreed to the published version of the manuscript.

Funding

The first author would like to acknowledge the release time support from the Donald E. Bently Center for Engineering Innovation at California Polytechnic State University.

Conflicts of Interest

The authors declare no conflict of interest.

Appendix A

By uniformly sampling spatial points on the rod and applying the least-mean-squares method, the initial conditions of the particular solution and response of elastic modes can be obtained in the form

where , and

References

1. Feng, Q.; Shinozuka, M. Control of Seismic Response of Structures Using Variable Dampers. J. Intell. Mater. Syst. Struct. 1993, 4, 117–122.

2. Jayachandran, V.; Sun, J.Q. Impedance Characteristics of Active Interior Noise Control Systems. J. Sound Vib. 1998, 211, 716–727.

3. Udwadia, F.E. Boundary Control, Quiet Boundaries, Super-stability and Super-instability. Appl. Math. Comput. 2005, 164, 327–349.

4. Hirzinger, B.; Adam, C.; Salcher, P. Dynamic Response of a Non-classically Damped Beam with General Boundary Conditions Subjected to a Moving Mass-spring-damper System. Int. J. Mech. Sci. 2020, 185, 105877.

5. Sun, J.Q.; Xiong, F.R.; Schütze, O.; Hernández, C. Cell Mapping Methods—Algorithmic Approaches and Applications; Springer: New York, NY, USA, 2018.

6. Hsu, C.S. Cell-to-Cell Mapping—A Method of Global Analysis for Nonlinear Systems; Springer: New York, NY, USA, 1987.

7. Sardahi, Y.; Naranjani, Y.; Liang, W.; Sun, J.Q.; Hernandez, C.; Schuetze, O. Multi-objective Optimal Control Design with the Simple Cell Mapping Method. In Proceedings of the ASME International Mechanical Engineering Congress & Exposition, San Diego, CA, USA, 15–21 November 2013.

8. Xiong, F.R.; Qin, Z.C.; Xue, Y.; Schütze, O.; Ding, Q.; Sun, J.Q. Multi-objective Optimal Design of Feedback Controls for Dynamical Systems with Hybrid Simple Cell Mapping Algorithm. Commun. Nonlinear Sci. Numer. Simul. 2014, 19, 1465–1473.

9. Hernández, C.; Naranjani, Y.; Sardahi, Y.; Liang, W.; Schütze, O.; Sun, J.Q. Simple Cell Mapping Method for Multi-objective Optimal Feedback Control Design. Int. J. Dyn. Control 2013, 1, 231–238.

10. Deb, K.; Pratap, A.; Agarwal, S.; Meyarivan, T. A Fast and Elitist Multi-objective Genetic Algorithm: NSGA-II. IEEE Trans. Evol. Comput. 2002, 6, 182–197.

11. Khoie, M.; Salahshoor, K.; Nouri, E.; Sedigh, A.K. PID Controller Tuning Using Multi-objective Optimization Based on Fused Genetic-Immune Algorithm and Immune Feedback Mechanism. In Lecture Notes in Computer Science—Advanced Intelligent Computing Theories and Applications; Springer: Berlin, Germany, 2012; Volume 6839.

12. Poli, R.; Kennedy, J.; Blackwell, T. Particle Swarm Optimization. Swarm Intell. 2007, 1, 33–57.

13. Chiha, I.; Liouane, N.; Borne, P. Tuning PID Controller Using Multiobjective Ant Colony Optimization. Appl. Comput. Intell. Soft Comput. 2012, 2012, 11.

14. Naranjani, Y.; Hernández, C.; Xiong, F.R.; Schütze, O.; Sun, J.Q. A Hybrid Method of Evolutionary Algorithm and Simple Cell Mapping for Multi-objective Optimization Problems. Int. J. Dyn. Control 2017, 5, 570–582.

15. Hull, A.J.; Radcliffe, C.J.; Miklavcic, M.; Maccluer, C.R. State Space Representation of the Nonself-adjoint Acoustic Duct System. J. Vib. Acoust. 1990, 112, 483–488.

16. Jayachandran, V.; Sun, J.Q. The Modal Formulation and Adaptive-passive Control of the Nonself-adjoint One-dimensional Acoustic System with a Mass-spring Termination. J. Appl. Mech. 1999, 66, 242–249.

17. Oliveto, G.; Santini, A.; Tripodi, E. Complex Modal Analysis of a Flexural Vibrating Beam with Viscous End Conditions. J. Sound Vib. 1997, 200, 327–345.

18. Jovanovic, V. A Fourier Series Solution for the Longitudinal Vibrations of a Bar with Viscous Boundary Conditions at Each End. J. Eng. Math. 2013, 79, 125–142.

19. Xing, S.Y.; Sun, J.Q. Impulse Response of an Elastic Rod with a Mass-damper-spring Termination. J. Vib. Test. Syst. Dyn. 2023; accepted.

20. Chugh, T.; Sindhya, K.; Hakanen, J.; Miettinen, K. A Survey on Handling Computationally Expensive Multiobjective Optimization Problems with Evolutionary Algorithms. Soft Comput. 2019, 23, 3137–3166.

21. Lu, L.; Jin, P.; Pang, G.; Karniadakis, G.E. Learning Nonlinear Operators via DeepONet Based on the Universal Approximation Theorem of Operators. Nat. Mach. Intell. 2021, 3, 218–229.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).